Abstract

Scholarly interest in multimodal learning, an emerging field that integrates a variety of informational forms to enhance learning, and in its ethical considerations has increased significantly over the past ten years. This paper aims to identify the conceptual foundations and development trends of multimodal learning through bibliometric research. By examining patterns, trends, and linkages within the published literature, using a sizable collection of articles from the Scopus database, this research maps the scientific landscape of multimodal learning. According to our study, multimodal learning is becoming more popular across a variety of academic disciplines. Key publications and influential authors are identified to highlight the major works and new trends in the subject. Emerging trends and recurrent research topics were determined using keyword analysis, while the papers with the greatest influence on the field were identified using co-citation analysis. The collaboration network analysis also revealed an active academic community with expanding global ties, which is conducive to further multimodal learning research. With its insights into the past, present, and likely future paths of multimodal learning, this study is a useful resource for academics, teachers, and decision-makers.

Introduction

Learning is no longer limited to the pages of a textbook or the boundaries of a classroom in our increasingly connected society [1]. With the introduction of technology, and with the irreversible transition brought about by the COVID pandemic, a new era of education has begun, one in which knowledge is disseminated not only through conventional approaches but also through a rich tapestry of sensory experiences. This change in how we learn has sparked the development of the multimodal learning discipline [2, 3].

The significance of the latest multimodal pedagogy rests in its capacity to foster critical thinking, problem-solving abilities, and a lifelong love of learning [4]. At a time when information is constantly flowing, this engaged form of learning encourages people to actively investigate, inquire about, and apply knowledge, creating strong bonds between students and the subjects they are studying [5, 6].

Multimodal learning is an approach to education that takes into account the many ways in which individuals absorb, process, and integrate information [7, 8]. By combining artificial intelligence capabilities with a range of modalities, including text, graphics, music, video, and interactive content, it offers a comprehensive opportunity for experiential learning [9, 10]. Research on multimodal language learning, which combines text, images, audio, and video, shows how language acquisition is enhanced in a range of systems and applications. These courses employ cutting-edge techniques for student-centered assessment [11, 12]. Students who engage both their visual and auditory senses are better able to comprehend the content they are learning and to pronounce words appropriately [13]. Multimodal language assessment makes use of technologies such as sensors, augmented reality, and virtual reality to assess spoken, written, and aural skills. Beyond traditional education, these advancements have an impact on sectors including artificial intelligence and healthcare [14, 15, 16, 17].

Through its ability to accommodate a range of student capacities, inclusive education demonstrates how multimodal learning enhances the efficacy of AI systems [18]. The study highlights equity, openness, and inclusion in light of the ethical dilemmas raised by multimodal learning [19]. Multimodal learning is increasingly widespread in education, technology, and other fields [20].

This study explores the history, major contributors, and thematic developments in multimodal learning using bibliometric analysis. Bibliometric analysis is a quantitative method for analyzing patterns in scientific literature that aids in understanding the characteristics and development of a subject [21, 22, 23, 24, 25, 26, 27, 29, 30, 31]. The R bibliometrix package provides comprehensive techniques for quantitative bibliometric and scientometric research, and Biblioshiny's graphical user interface makes these techniques accessible to individuals who are not familiar with R programming [32, 33, 34, 35, 36, 37].

VOSviewer software is widely used in bibliometric and scientometric research for constructing and visualizing bibliometric networks. Such networks help in understanding the relationships between scientific entities and key contributors, and in analyzing and illustrating the structure and growth of scientific areas [38, 39, 40, 41, 42, 43, 44, 45, 46].

The objectives of the bibliometric examination of multimodal learning are:

Charting the Evolution: To identify the major shifts in focus and the turning points through which multimodal learning research has evolved and expanded over time.

Finding Critical Journals and Publications: To determine which journals and publications have contributed most to the field of multimodal learning.

Identifying Collaboration Networks: To identify and visualize collaboration networks in order to determine cooperation trends and whether nations, organizations, or authors regularly collaborate on multimodal learning research.

Keyword and Topic Analysis: To identify and investigate the most widely used terms and subjects, providing insight into the main concepts, methods, and resources associated with multimodal learning.

Citation Analysis: To identify the papers that have received the most citations, as they represent the multimodal learning research that has had the greatest impact and influence.

Geographical Distribution: To map the geographical distribution of research on multimodal learning in order to identify the nations or regions at the forefront of this field.

Assessing Research Gaps: To discover multimodal learning topics that are underexplored or only beginning to be explored.

Understanding Interdisciplinary Connections: To examine how multimodal learning research crosses academic boundaries in order to highlight its interdisciplinary nature.

Multimodal learning is a rapidly developing field that includes a range of methods for combining and utilizing different informational media, such as text, images, audio, video, and sensor data, for purposes such as computer vision, natural language processing, speech recognition, and education [47]. The combination of AI technologies and multimodal learning has emerged as a recent research area in which the capabilities of AI are used to enhance learning [48, 49]. The following is a survey of the research on multimodal learning with an emphasis on the field of education.

The definition of multimodal learning and the steps to be followed for designing such a learning environment was introduced by Moreno, R., and Mayer, R. in 2007. They suggested a cognitive-affective theory of learning with media from which instructional design principles are derived. Then, they identified empirical support for five design principles: guided activity, reflection, feedback, control, and pre-training. These principles laid the foundation of multimodal learning in education [3].

The Transformer is an advanced neural network architecture that excels at a variety of multimodal applications and at learning from Big Data. Recent advancements in Transformer-based multimodal learning and Big Data are a prominent area of AI research. Xu, P. et al. presented a thorough analysis of Transformer approaches targeted at multimodal data [50]. The background of multimodal learning, the Transformer ecosystem, and the multimodal Big Data era are the primary topics of this survey. The survey also includes a systematic review of the Vanilla Transformer, Vision Transformer, and multimodal Transformers from a geometrically topological standpoint.

Ouhaichi, H., Spikol, D., and Vogel, B. analyze and present the research trends and technologies required to support the development of multimodal learning analytics [20]. They carried out a systematic mapping analysis to identify multimodal learning analytics research types, methodologies, and emerging research subjects. This study was based on well-established systematic literature review practices. The majority of mapped articles offered various solutions and employed evaluation-based research techniques, indicating a growing interest in multimodal learning analytics technologies. They also highlighted 14 subjects that can advance multimodal learning analytics, grouped under the four categories of learning context, learning process, systems and modalities, and technologies.

The study of direct interactions with learning management systems can be utilized to optimize and comprehend the learning process, as shown by studies in learning analytics. However, learning doesn’t always happen when a student is interacting with such systems directly. The application cases for learning analytics can be expanded by using sensors to collect data from students and their surroundings everywhere. Due to this, Schneider, J et al. [51] created the Multimodal Learning Hub (MLH), a system that collects and incorporates multimodal data from adaptable configurations of ubiquitous data providers in order to improve learning in ubiquitous learning scenarios.

Electroencephalography (EEG) was first used to capture physiological data from online learners by Han, I., Obeid, and Greco in 2023 [52]. Recent technical developments make it possible to capture real-time brain signals while students are working on online assignments. In the context of multimodal learning analytics, it is an impressive accomplishment that this new technology may be used to collect exact information on students’ levels of concentration. Neural data can be analyzed and utilized to forecast self-regulated behavior during online learning when paired with other learner data.

For students to enhance their academic and social skills while learning in groups, a live teacher must be present and actively guiding the group. In order to help teachers comprehend students' activities in an inquiry-based learning environment, Segal, A. et al. proposed a multimodal learning approach using data-mining and visualization technologies [53]. They employ supervised learning to identify salient states of activity in the group's work, such as solving a problem, being idle, or facing difficulties with technology. Because these "critical" moments are visualized for teachers in real time, teachers are able to watch many groups concurrently and take appropriate action to guide each group as necessary.

The learning analytics research community has been paying more attention to multimodal learning analytics (MMLA) [4]. The Cross MMLA Special Interest Group and MMLA workshops are periodically held at the Learning Analytics Summer Institute (LASI) by the Society of Learning Analytics Research. Worsley, M. et al. propose a group of 12 commitments that they consider essential for producing successful MMLA learning environments [54]. This set of key commitments can serve as a guide for MMLA researchers and the larger learning analytics community.

Virvou et al. present a comprehensive exploration into Learning Analytics, detailing how the integration of information technologies and artificial intelligence is transforming education into a more engaging, personalized experience. Their collective research delves into enhancing student engagement, predicting performance, and creating dynamic tools for both synchronous and asynchronous e-learning environments [55].

Materials and methods

We obtained the scientific papers relevant to our research from the main collection of the Scopus database. On August 21, 2023, we carried out a search using specific keywords such as ‘multi-modal learning’ or ‘multimodal learning’. This search included all languages, and was limited to only journal articles and conference papers. In total, we collected 1985 articles from 878 different sources, covering the period from 1991 to 2023. To ensure accuracy, we carefully reviewed the Scopus entries to remove any duplicates. The results were saved in a ‘CSV’ file, and we performed a bibliometric analysis on the data using VOSviewer version 1.6.19 and Biblioshiny software. Figure 1 provides a visual depiction of our methodology, while Table 1 provides detailed information regarding the key elements and aspects of our research.
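The duplicate check described above can be illustrated with a minimal sketch. It is not the procedure the authors actually followed, which is described only as a careful review; it assumes records loaded from the exported CSV as dictionaries (for example with `csv.DictReader`) and assumes the column names `Title`, `Year`, and `DOI`. The function name and the toy records are hypothetical.

```python
import re


def drop_duplicate_records(records):
    """Keep the first occurrence of each record, matching on DOI or on normalized title plus year."""
    seen_dois = set()
    seen_titles = set()
    kept = []
    for rec in records:
        doi = (rec.get("DOI") or "").strip().lower()
        # Normalize the title so that case, punctuation, and spacing differences do not hide duplicates.
        title_key = re.sub(r"[^a-z0-9]+", " ", (rec.get("Title") or "").lower()).strip()
        key = (title_key, str(rec.get("Year") or "").strip())
        if (doi and doi in seen_dois) or key in seen_titles:
            continue  # Duplicate of a record that was already kept.
        if doi:
            seen_dois.add(doi)
        seen_titles.add(key)
        kept.append(rec)
    return kept


# Toy records (hypothetical, for illustration only).
toy_records = [
    {"Title": "Paper A", "Year": "2011", "DOI": "10.0000/abc"},
    {"Title": "Paper A.", "Year": "2011", "DOI": "10.0000/ABC"},  # same DOI, different case
    {"Title": "Paper B", "Year": "2020", "DOI": ""},
    {"Title": "paper  b", "Year": "2020", "DOI": ""},             # same title, no DOI
    {"Title": "Paper C", "Year": "2021", "DOI": "10.0000/xyz"},
]
deduplicated = drop_duplicate_records(toy_records)
print(len(deduplicated))
```

Matching on the DOI first and falling back to the normalized title is a design choice for this sketch; records flagged this way would still warrant a manual check before removal.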

The methodology phases.

Essential aspects of the investigation

Annual scientific production

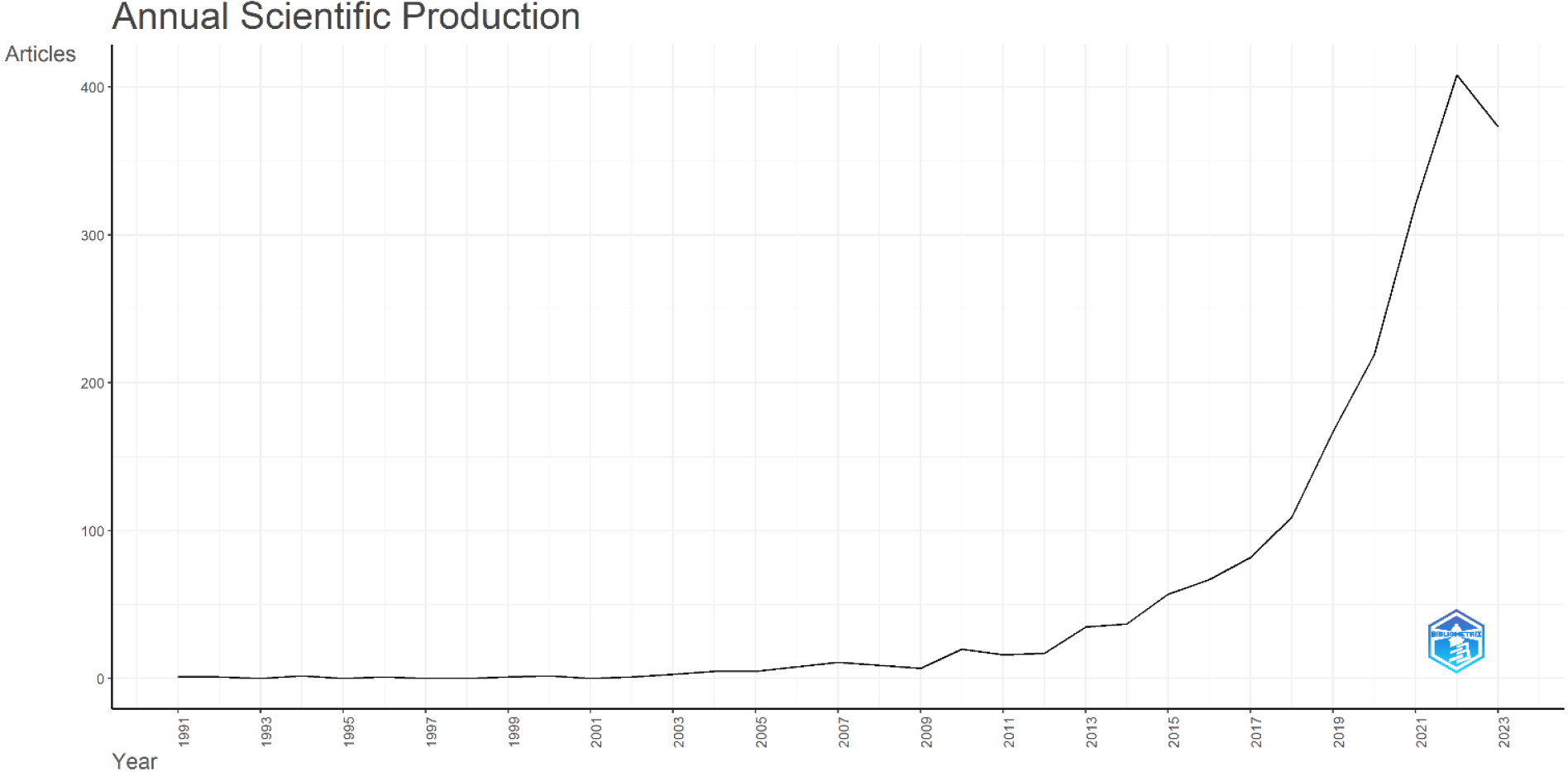

Figure 2 illustrates the annual count of scientific papers from 1991 to 2023, as presented by Biblioshiny. Between 1991 and 2002, the number of publications remained relatively flat. From 2003 to 2022, however, there was a notable surge in the number of papers. The highest count was recorded in 2022 with 408 publications, followed by 373 in 2023 and 321 in 2021; because the search was carried out on August 21, 2023, the 2023 count covers only part of the year. The chart shows the rising significance of multimodal learning in recent times. Over the span of 32 years, the overall trend in scientific output is upward. The average annual growth rate stands at 20.33%, possibly due to improved research techniques, enhanced funding, or an expanding community of researchers.
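As a worked illustration of the growth figure, the sketch below computes a compound annual growth rate from the first and last yearly counts. The text does not report the 1991 count, so the value of 1 used here is an assumption; with that assumption and the reported 373 publications in 2023, the formula reproduces the stated 20.33%. Whether Biblioshiny uses exactly this formula is also an assumption.

```python
def annual_growth_rate(first_count: float, last_count: float, n_years: int) -> float:
    """Compound annual growth rate, in percent, between the first and last yearly counts."""
    return ((last_count / first_count) ** (1 / n_years) - 1) * 100


# first_count=1 for 1991 is an assumption; 373 is the reported 2023 count.
rate = annual_growth_rate(first_count=1, last_count=373, n_years=2023 - 1991)
print(f"{rate:.2f}%")  # 20.33%
```

A compound rate depends only on the two endpoint years, so it is sensitive to the partial 2023 count and to a very small starting value.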

Yearly scientific output from 1991 to 2023 depicted through Biblioshiny.

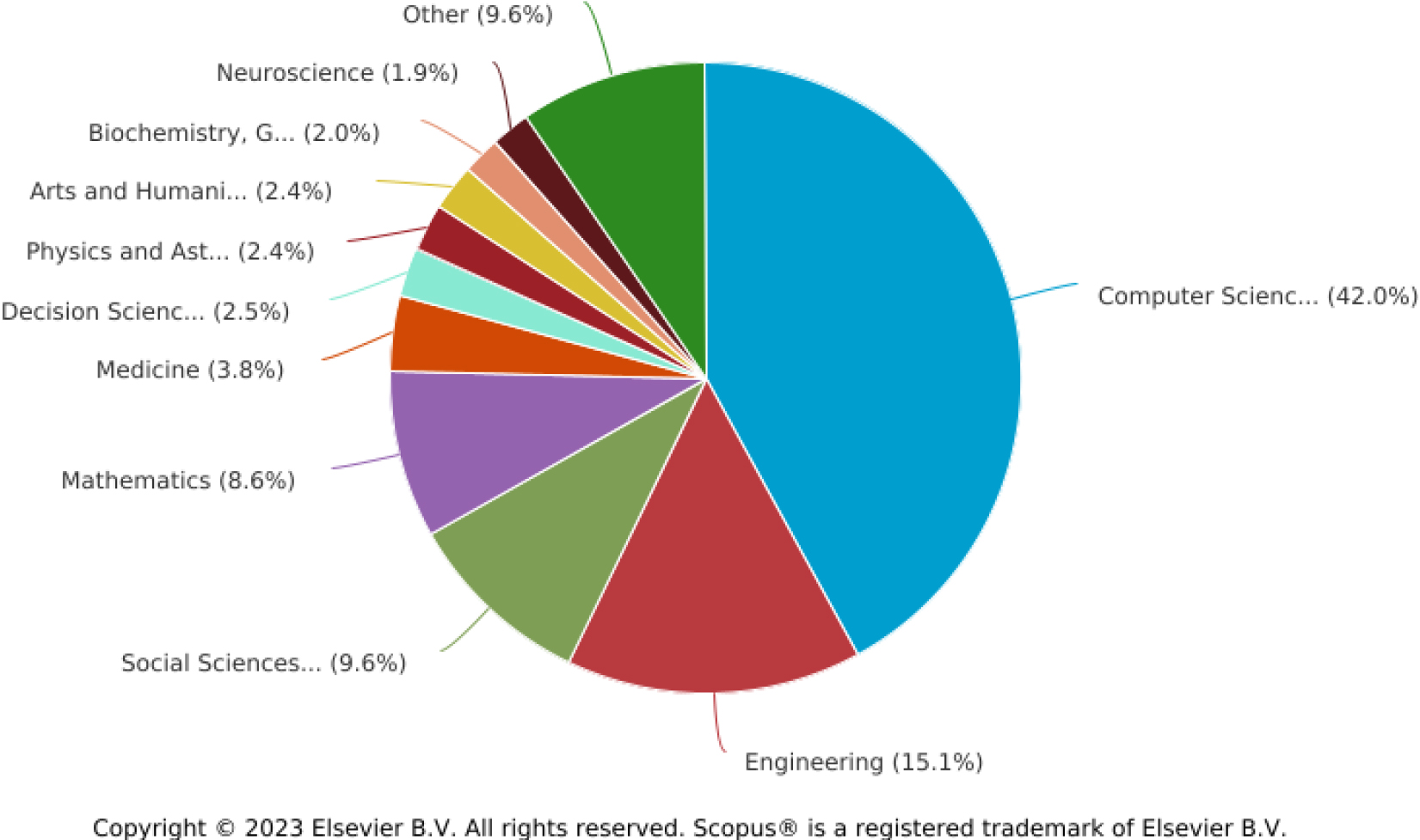

Figure 3 presents a pie chart illustrating the breakdown of subject domains. Computer Science dominates with 1,528 papers, constituting 42.0%. Engineering follows with 550 publications, accounting for 15.1%. Other notable fields where multimodal learning is prevalent include Social Sciences (350), Mathematics (312), Medicine (140), Decision Sciences (90), Physics and Astronomy (89), Arts and Humanities (87), Biochemistry, Genetics and Molecular Biology (71), Neuroscience (70), Materials Science (53), Psychology (51), Health Professions (42), Business, Management and Accounting (39), Chemistry (30), Earth and Planetary Sciences (29), Energy (19), Agricultural and Biological Sciences (15), Nursing (14), Chemical Engineering (13), Environmental Science (13), Multidisciplinary (12), Pharmacology, Toxicology and Pharmaceutics (9), Dentistry (5), Immunology and Microbiology (5), and Economics, Econometrics and Finance (1). The listed counts sum to 3,637, which exceeds the 1985 papers in the dataset because a paper can be assigned to more than one subject area; the percentages are therefore shares of all subject-area assignments.
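The percentages can be checked directly from the counts listed above. The short sketch below sums the subject-area counts and computes each share; the variable names are illustrative only.

```python
# Subject-area counts as listed in the text for Figure 3.
subject_counts = {
    "Computer Science": 1528, "Engineering": 550, "Social Sciences": 350,
    "Mathematics": 312, "Medicine": 140, "Decision Sciences": 90,
    "Physics and Astronomy": 89, "Arts and Humanities": 87,
    "Biochemistry, Genetics and Molecular Biology": 71, "Neuroscience": 70,
    "Materials Science": 53, "Psychology": 51, "Health Professions": 42,
    "Business, Management and Accounting": 39, "Chemistry": 30,
    "Earth and Planetary Sciences": 29, "Energy": 19,
    "Agricultural and Biological Sciences": 15, "Nursing": 14,
    "Chemical Engineering": 13, "Environmental Science": 13,
    "Multidisciplinary": 12, "Pharmacology, Toxicology and Pharmaceutics": 9,
    "Dentistry": 5, "Immunology and Microbiology": 5,
    "Economics, Econometrics and Finance": 1,
}

total_assignments = sum(subject_counts.values())
# Share of each subject area among all subject-area assignments, in percent.
shares = {area: round(100 * n / total_assignments, 1) for area, n in subject_counts.items()}

print(total_assignments)            # 3637
print(shares["Computer Science"])   # 42.0
print(shares["Engineering"])        # 15.1
```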

Most significant authors

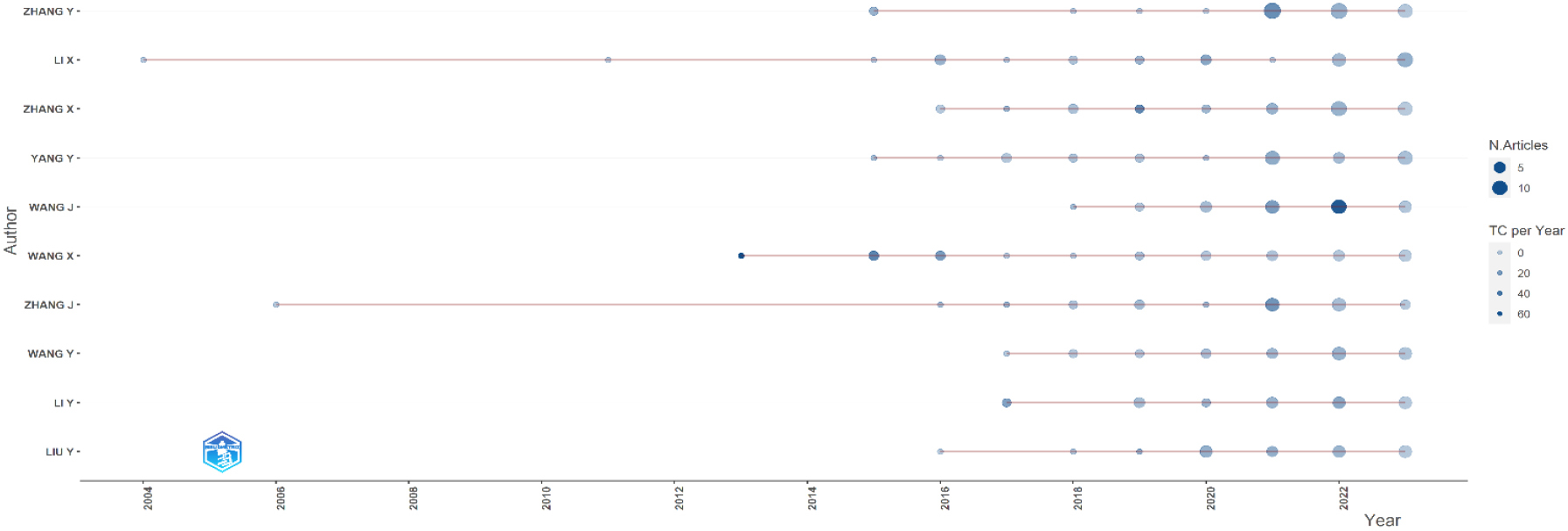

A total of 5069 authors contributed articles on Multimodal Learning. The impact of these authors was gauged by the volume of their publications. Zhang Y emerged as the leading contributor with 41 articles, followed by Li X with 36 and Zhang X with 35. Table 2 offers a summary of the publication numbers for the top ten contributors. Figure 4 shows the publication trajectory of these authors, highlighting their article counts over the years.

The top ten authors

Documents by subject area.

Authors’ production over time.

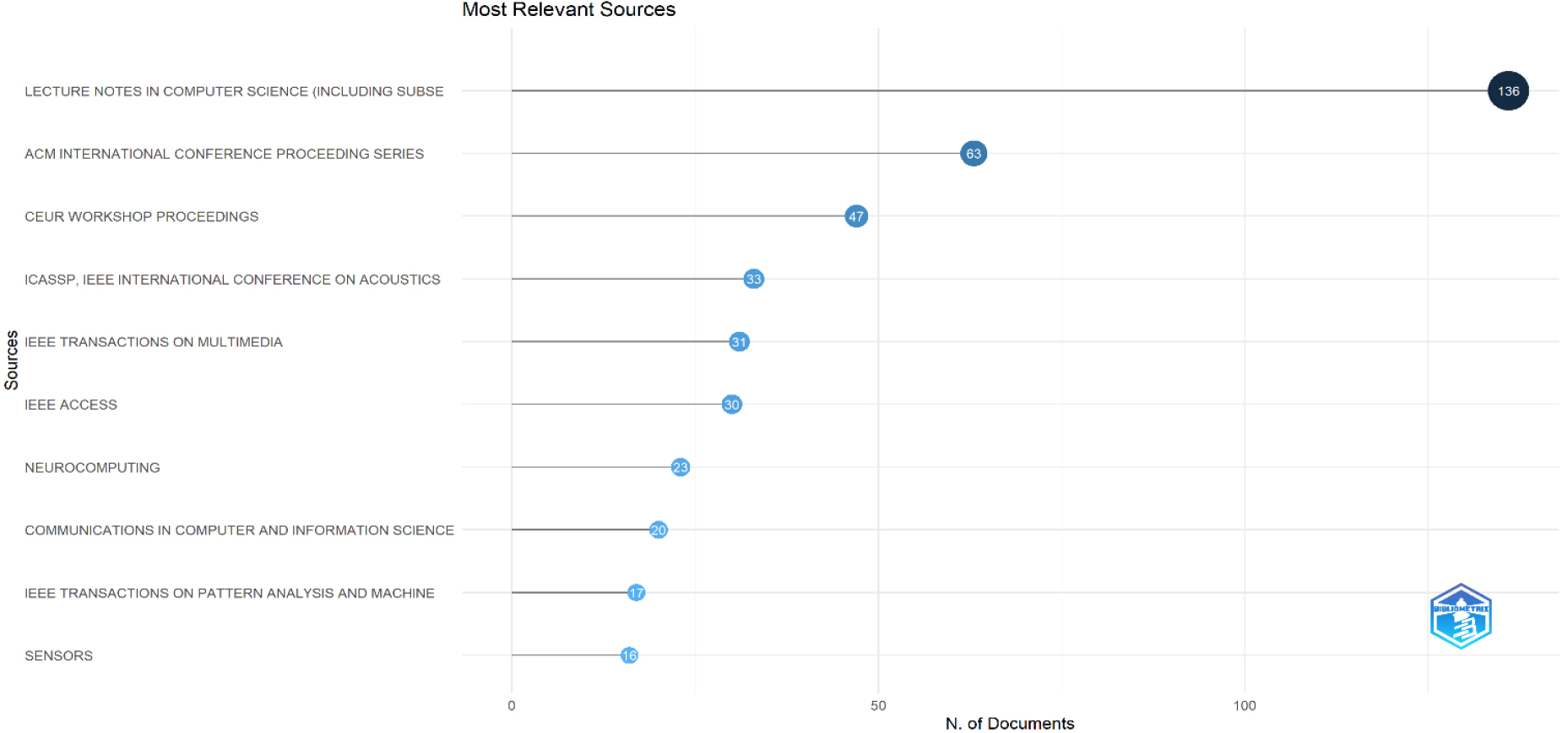

We reviewed 878 sources and collected 1985 articles. Among them, the most prolific source was Lecture Notes in Computer Science (including its subseries on Artificial Intelligence and Bioinformatics), with a contribution of 136 articles. Next in line was the ACM International Conference Proceeding Series with 63 articles. Figure 5 presents the top 10 sources with the most research papers on Multimodal Learning.

The ten leading sources based on the number of publications.

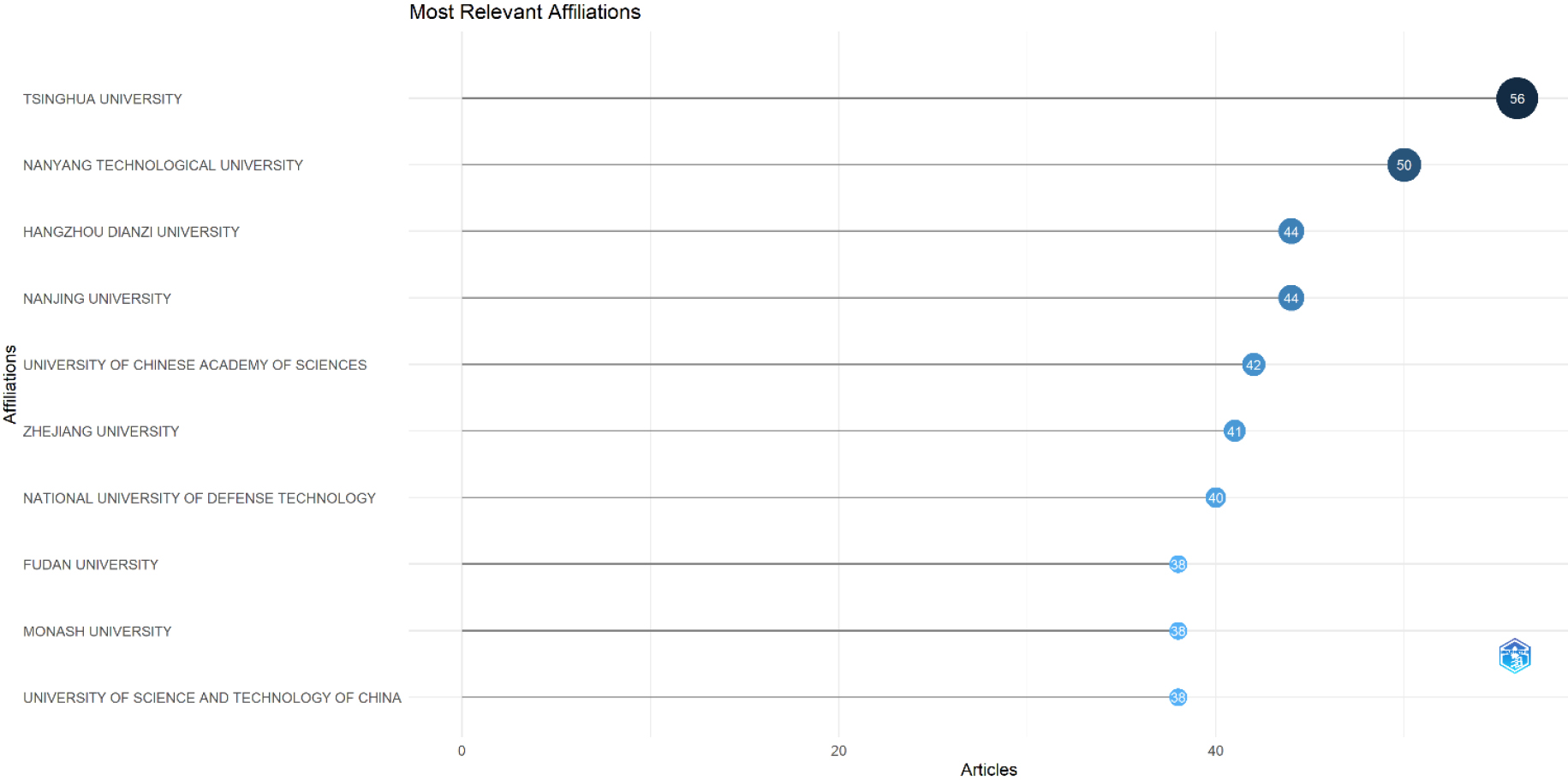

Most significant affiliations based on the number of publications.

Figure 6 showcases the leading institutions in Multimodal Learning research. Tsinghua University tops the chart with 56 papers, followed closely by Nanyang Technological University with 50 papers. Other prominent universities in this field are Hangzhou Dianzi University (44), Nanjing University (44), University of Chinese Academy of Sciences (42), Zhejiang University (41), National University of Defense Technology (40), Fudan University (38), Monash University (38), and University of Science and Technology of China (38), among others.

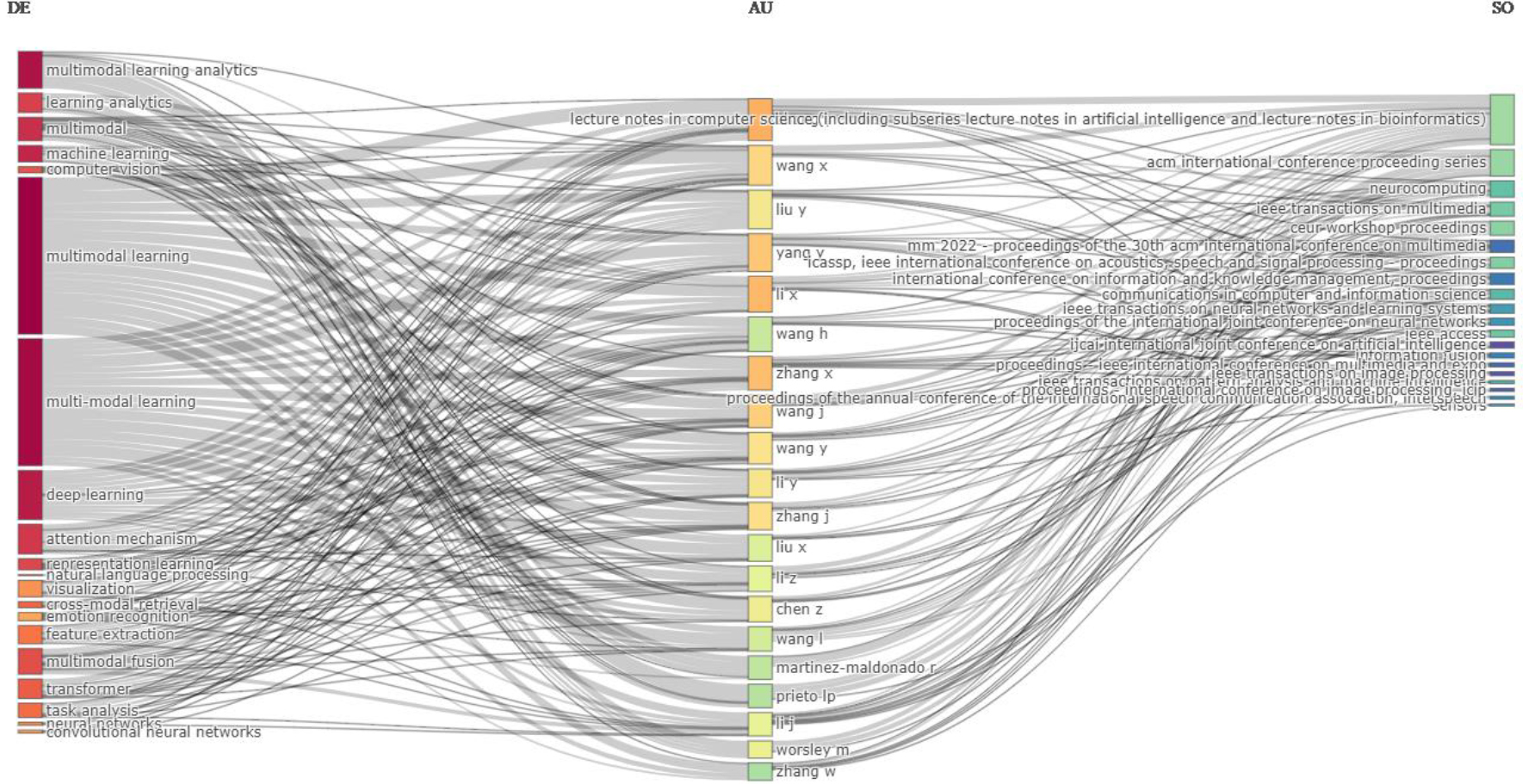

Figure 7 showcases a diagram that delves into the relationship among keywords (on the left side), authors (centered), and publications (on the right) within the Multimodal Learning domain. The study sought to pinpoint commonly used terms by various authors in published articles. A review of the predominant keywords, authors, and journals highlighted terms like “multimodal learning,” “multi-modal learning,” “deep learning,” and “multimodal learning analytics.” Notably, several authors, including Zhang Y, Wang X, Liu Y, and others, frequently used these terms and shared their findings in publications such as Lecture Notes in Computer Science (and its related series on Artificial Intelligence and Bioinformatics), ACM International Conference, Neurocomputing, and more.

Three-field plot generated using Biblioshiny, with keywords on the left, authors in the middle, and sources on the right.

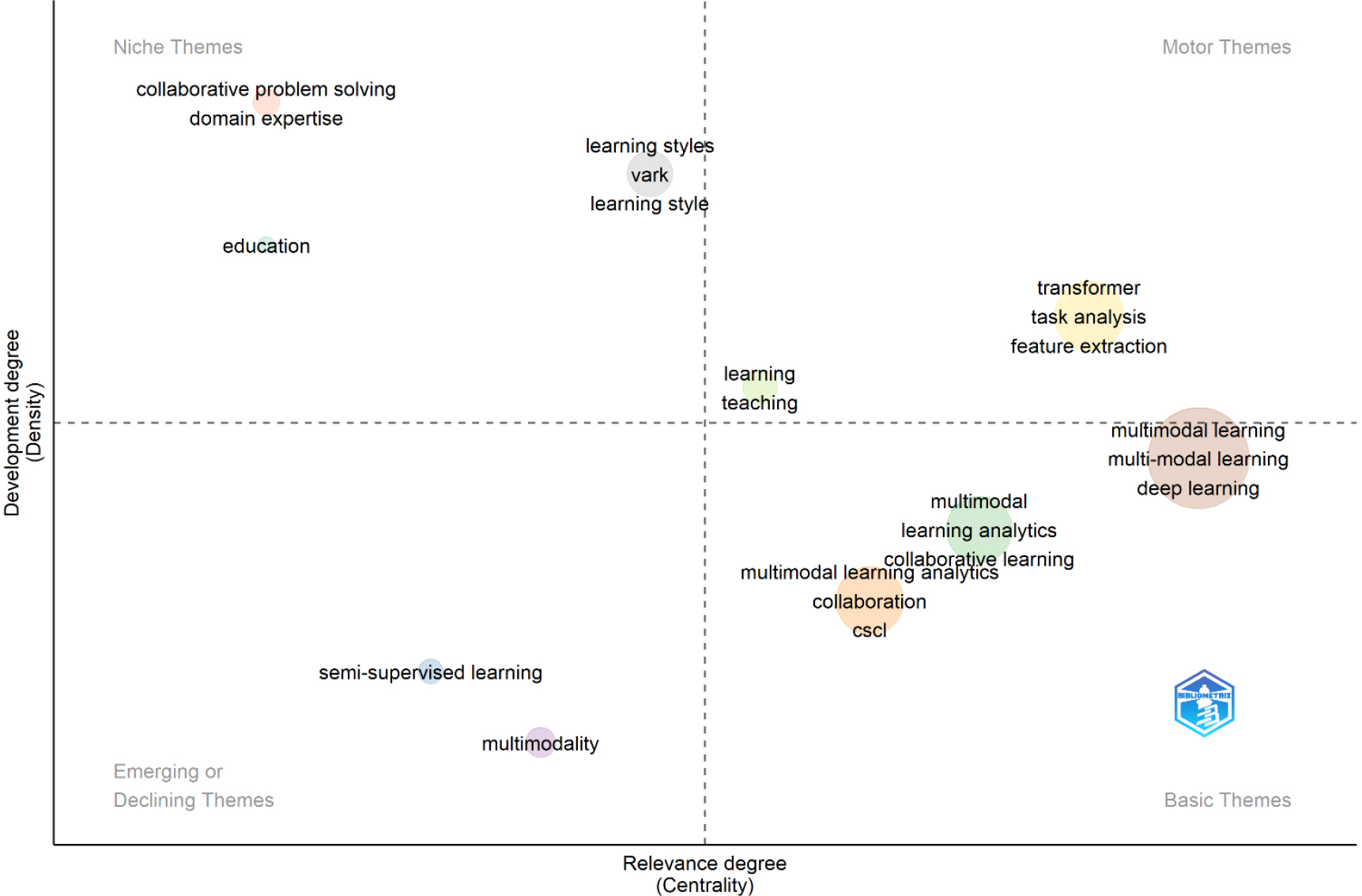

The diagram in Fig. 8 displays various bubbles, each symbolizing a unique theme. A bubble's size denotes the number of publications, and the closeness of bubbles signifies the relatedness of the themes. This thematic map is plotted against two primary dimensions: density (y-axis) and centrality (x-axis). Centrality measures a theme's significance within the field, while density gauges its level of development. The map is segmented into four areas. The bottom-left section contains themes seen as emerging or declining, like “semi-supervised learning” and “multimodality”. These themes might grow in importance or lose their significance in the research domain. The bottom-right area holds basic or cross-cutting themes. Their low density indicates that they are not yet well developed internally, but their high centrality shows their foundational nature and broad relevance. The top-left section holds niche themes, which are extensively developed (high density) but somewhat detached from the rest of the field because of their lower centrality. The top-right section encompasses themes with both high density and high centrality, termed motor themes. These are crucial and well established, serving as pillars of the research area. In this study, motor themes include “learning”, “teaching”, “transformer”, “task analysis”, and “feature extraction”.
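The quadrant logic described above can be sketched as a small classification routine. The centrality and density values below are hypothetical placeholders, not values from Fig. 8, and splitting the axes at the median is an assumption made for this sketch; Biblioshiny may place the axes differently.

```python
from statistics import median


def classify_themes(themes):
    """Map each theme name to a quadrant label, given (centrality, density) pairs."""
    centrality_cut = median(c for c, _ in themes.values())
    density_cut = median(d for _, d in themes.values())
    labels = {}
    for name, (centrality, density) in themes.items():
        if centrality >= centrality_cut and density >= density_cut:
            labels[name] = "motor"                  # top-right
        elif centrality < centrality_cut and density >= density_cut:
            labels[name] = "niche"                  # top-left
        elif centrality < centrality_cut and density < density_cut:
            labels[name] = "emerging or declining"  # bottom-left
        else:
            labels[name] = "basic"                  # bottom-right
    return labels


# Hypothetical (centrality, density) values for illustration only.
toy_themes = {
    "transformer": (0.8, 0.9),
    "semi-supervised learning": (0.2, 0.1),
    "hypothetical niche theme": (0.3, 0.7),
    "hypothetical basic theme": (0.7, 0.3),
}
quadrants = classify_themes(toy_themes)
for theme, quadrant in quadrants.items():
    print(f"{theme}: {quadrant}")
```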

Thematic map using Biblioshiny.

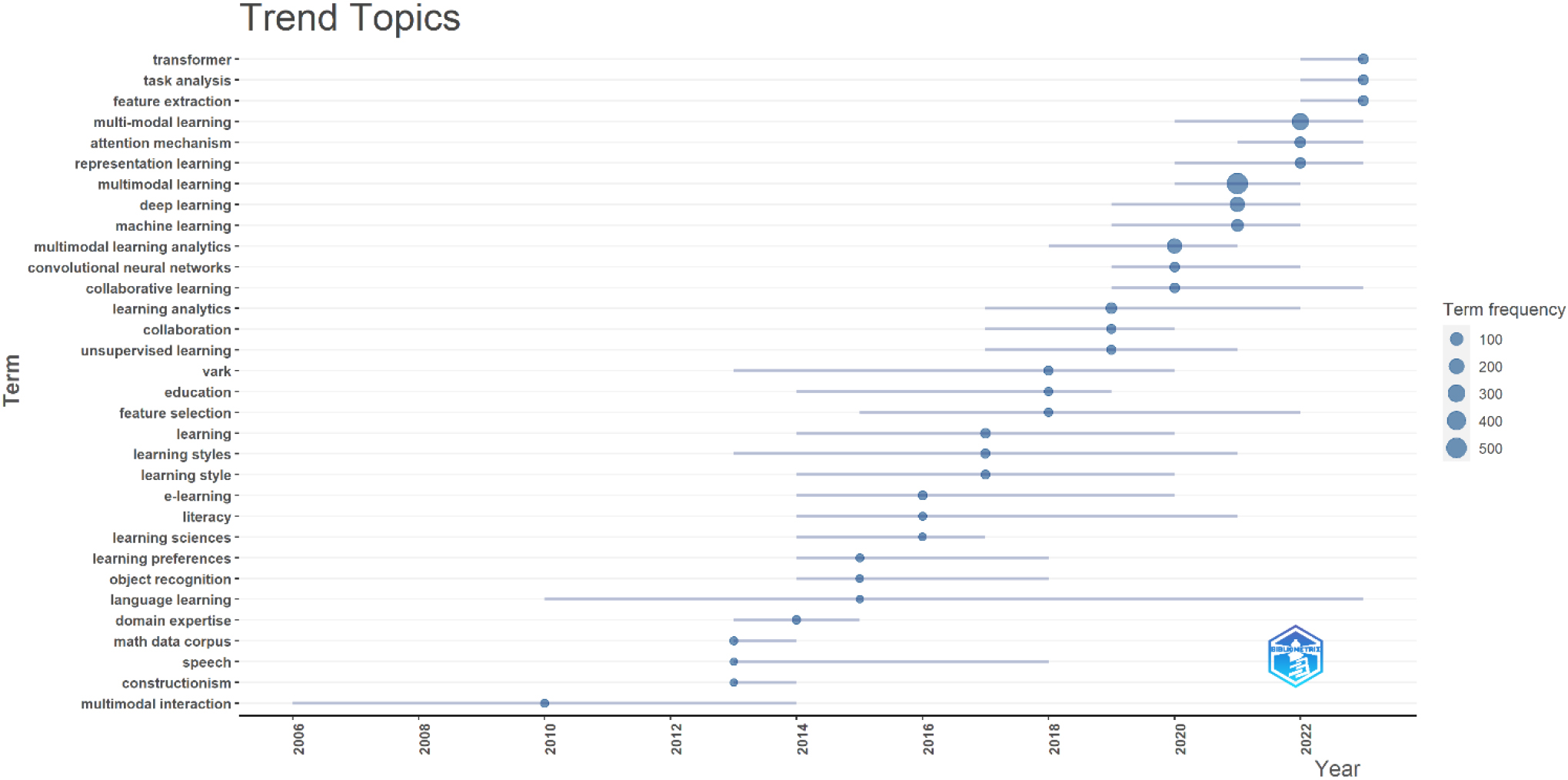

Trend topics were examined by analyzing the keywords selected by authors in the dataset. The analysis used the following settings: it covered the years 2010 to 2023, keywords had to be mentioned at least five times, three keywords were picked for each year, and a word label size of five was used. Generally, the keywords authors choose reflect the core content of their work, giving a clear picture of the main themes in the field. This analysis sheds light on the dominant themes in the multimodal learning literature over the years. Figure 9 graphically displays the structure of these keywords, highlighting the aspects of multimodal learning that researchers discussed each year. For example, “multimodal learning analytics” was the top topic in 2020 with 175 mentions, while in 2021, “multimodal learning” took the lead with 579 mentions.
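The selection settings above (time span, minimum frequency, keywords per year) can be illustrated with a simplified sketch. It is not Biblioshiny's implementation: it assumes that each keyword is assigned to the median year of its occurrences and that the most frequent keywords are then kept for each year. The function name and the toy records are hypothetical.

```python
from collections import defaultdict
from statistics import median


def trend_topics(records, min_freq=5, per_year=3, first_year=2010, last_year=2023):
    """Return {year: [keywords]} with the most frequent keywords assigned to each year."""
    years_by_keyword = defaultdict(list)
    for year, keywords in records:
        # Count each keyword once per paper.
        for keyword in {k.strip().lower() for k in keywords}:
            years_by_keyword[keyword].append(year)

    candidates = defaultdict(list)
    for keyword, years in years_by_keyword.items():
        if len(years) < min_freq:
            continue  # Below the minimum frequency threshold.
        assigned_year = int(median(years))  # Assumption: place the keyword at its median year.
        if first_year <= assigned_year <= last_year:
            candidates[assigned_year].append((len(years), keyword))

    result = {}
    for year in sorted(candidates):
        ranked = sorted(candidates[year], key=lambda item: (-item[0], item[1]))
        result[year] = [keyword for _, keyword in ranked[:per_year]]
    return result


# Toy input: (publication year, author keywords) per paper; counts are illustrative only.
toy_papers = (
    [(2020, ["Multimodal learning analytics"])] * 6
    + [(2021, ["multimodal learning"])] * 7
    + [(2021, ["rare keyword"])] * 2
)
topics = trend_topics(toy_papers)
print(topics)
```

In the toy input, the keyword with two occurrences is dropped by the minimum-frequency setting, which mirrors the role of the five-mention threshold in the analysis.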

Trend topics identified using Biblioshiny from 2010 to 2023.

Table 3 showcases the most referenced articles in Multimodal Learning. These papers have garnered considerable recognition in scholarly circles. The paper “Multimodal deep learning” by Ngiam J. et al. from 2011 is particularly notable, with 2274 citations. Another significant work is “Multimodal learning with Deep Boltzmann Machines” by Srivastava N. and Salakhutdinov R. from 2012, which has received 925 citations.

The top cited papers

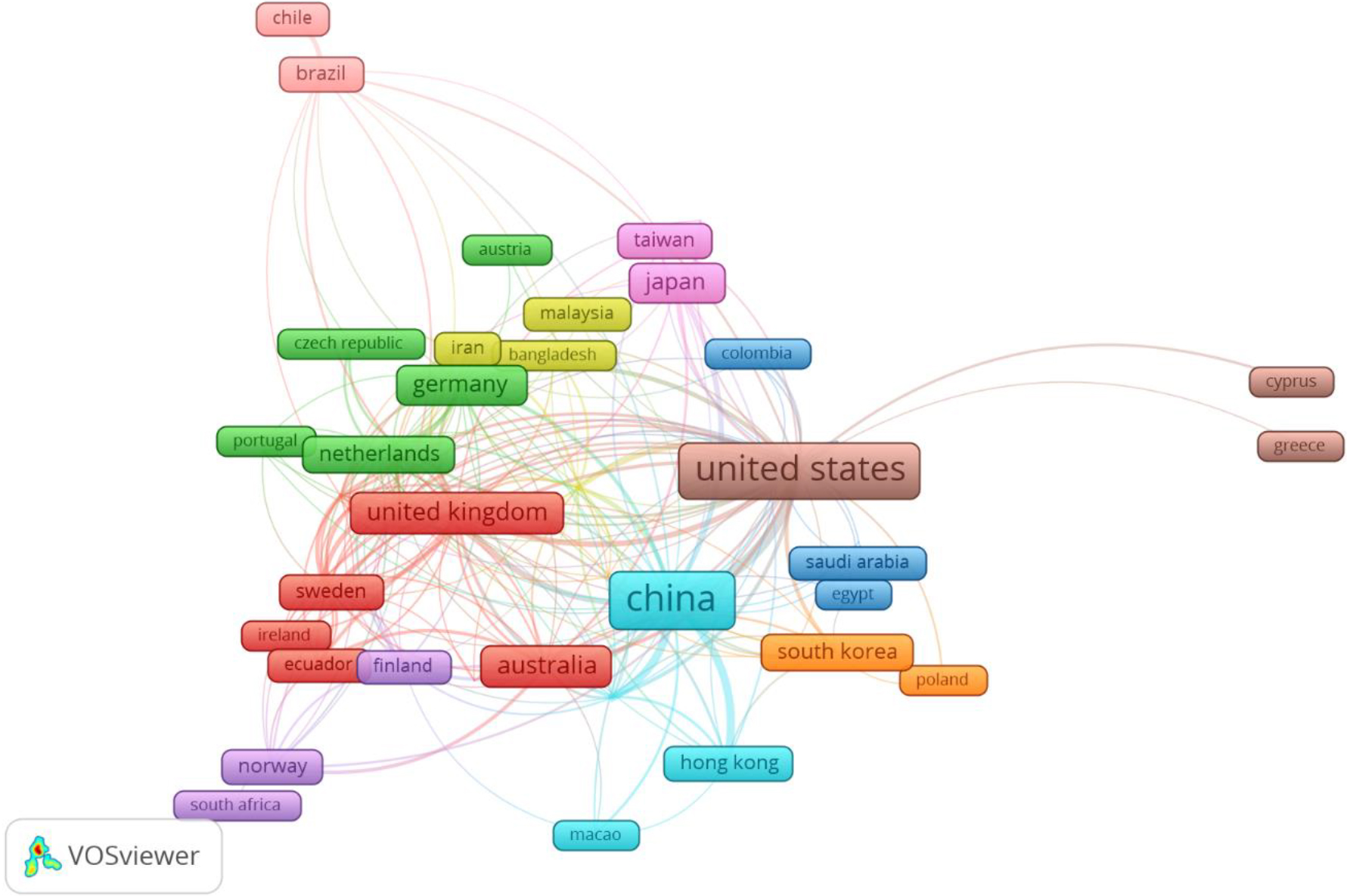

Country co-authorship analysis looks at the influence and collaboration of countries within a specific research domain. For Multimodal Learning, Fig. 10 visually maps out this co-authorship among nations. The node sizes highlight the most influential countries, and the links show collaborations between institutions from different nations. The closeness of two nodes and the thickness of the link between them indicate the depth of their collaboration. Different colors on the map indicate the clusters into which the countries are grouped. China (616), the US (509), the UK (118), and Australia (116) lead in publication numbers. In terms of citations, the US (10398), China (5516), and Canada (3985) are notable, reflecting their significant impact. Moreover, China (245), the US (229), and the UK (126) have the highest total link strength values, emphasizing their central roles in the co-authorship network.
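To make the notion of total link strength concrete, the sketch below counts country co-authorship links from a list of papers. It assumes full counting, in which every co-authored paper adds 1 to the link between each pair of countries on it; VOSviewer also offers other counting options, so this is only one possible reading. The country names and papers are hypothetical toy input.

```python
from collections import Counter
from itertools import combinations


def country_link_strengths(papers):
    """Return (link strength per country pair, total link strength per country)."""
    link_strength = Counter()
    for countries in papers:
        # Each unordered pair of distinct countries on a paper adds one to their link.
        for a, b in combinations(sorted(set(countries)), 2):
            link_strength[(a, b)] += 1

    total_link_strength = Counter()
    for (a, b), weight in link_strength.items():
        total_link_strength[a] += weight
        total_link_strength[b] += weight
    return link_strength, total_link_strength


# Toy input: the set of author countries for each paper (hypothetical).
toy_papers = [
    ["Country A", "Country B"],
    ["Country A", "Country B", "Country C"],
    ["Country D"],                      # single-country paper, creates no link
    ["Country A", "Country C"],
]
links, totals = country_link_strengths(toy_papers)
print(links[("Country A", "Country B")])
print(totals["Country A"], totals["Country D"])
```

A country can therefore publish many papers and still have a low total link strength if most of its papers involve no international co-authors.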

The network visualization of country co-authorship analysis through the use of VOSviewer.

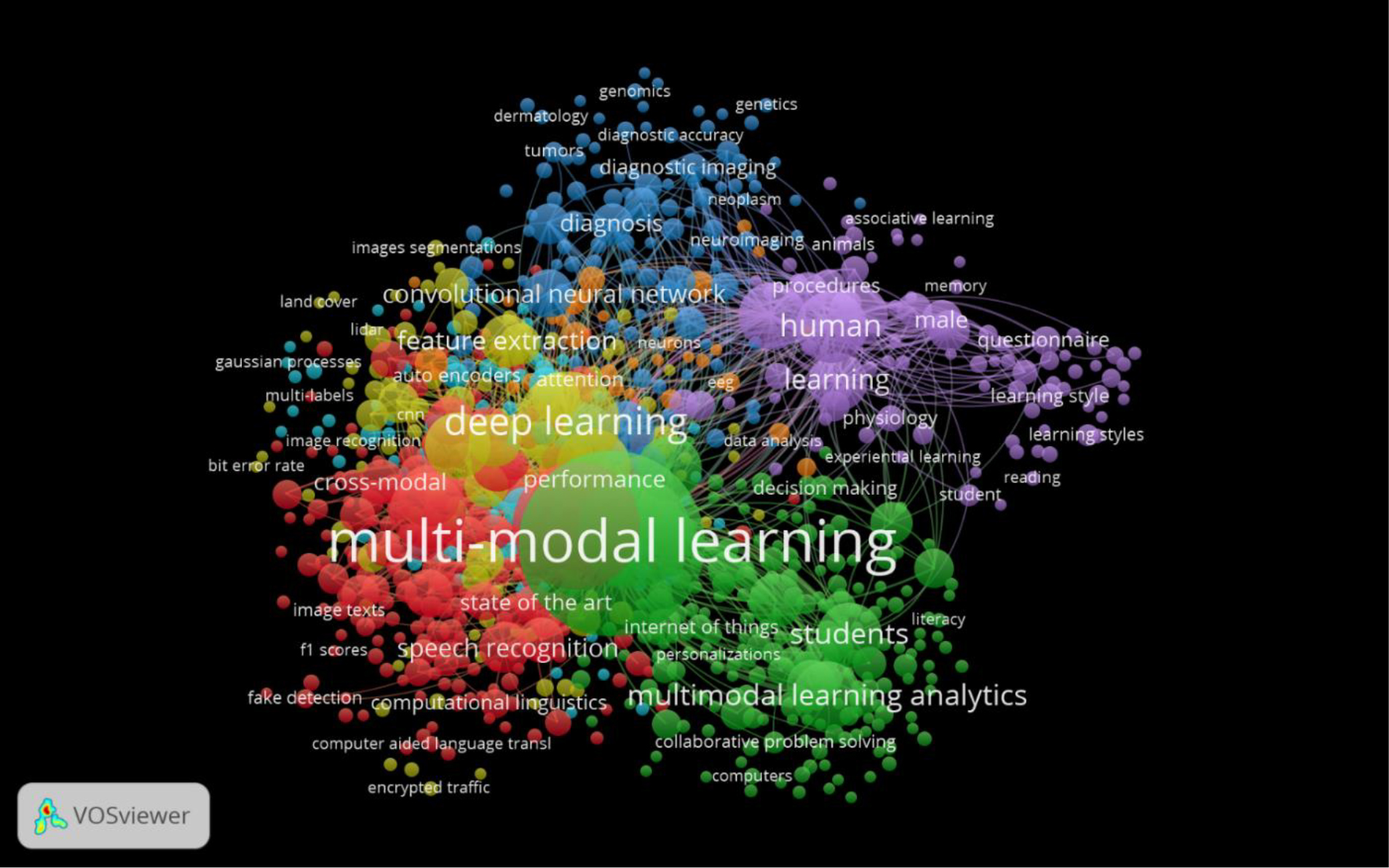

The VOSviewer software was used to visually display groups of frequently occurring keywords related to Multimodal Learning. From a total of 11,134 keywords, a subset of 880 keywords that appeared a minimum of 5 times was chosen for this analysis. Figure 11 shows the results. In this depiction, the size of each circle and of its label is based on the frequency of the keyword's appearance. Thus, bigger circles and labels indicate more common keywords. The links between circles in the figure show the relationship between keywords, with the line thickness representing the strength of their association. A thicker line means the keywords often appear together. The analysis in Fig. 11 identified seven distinct clusters. The first cluster has 229 keywords, the second has 224, the third has 110, the fourth has 105, the fifth has 89, the sixth has 84, and the seventh has 39. The keyword “multi-modal learning” stands out in the network, appearing 1,250 times.

The network visualization of keyword co-occurrence using VOSviewer.

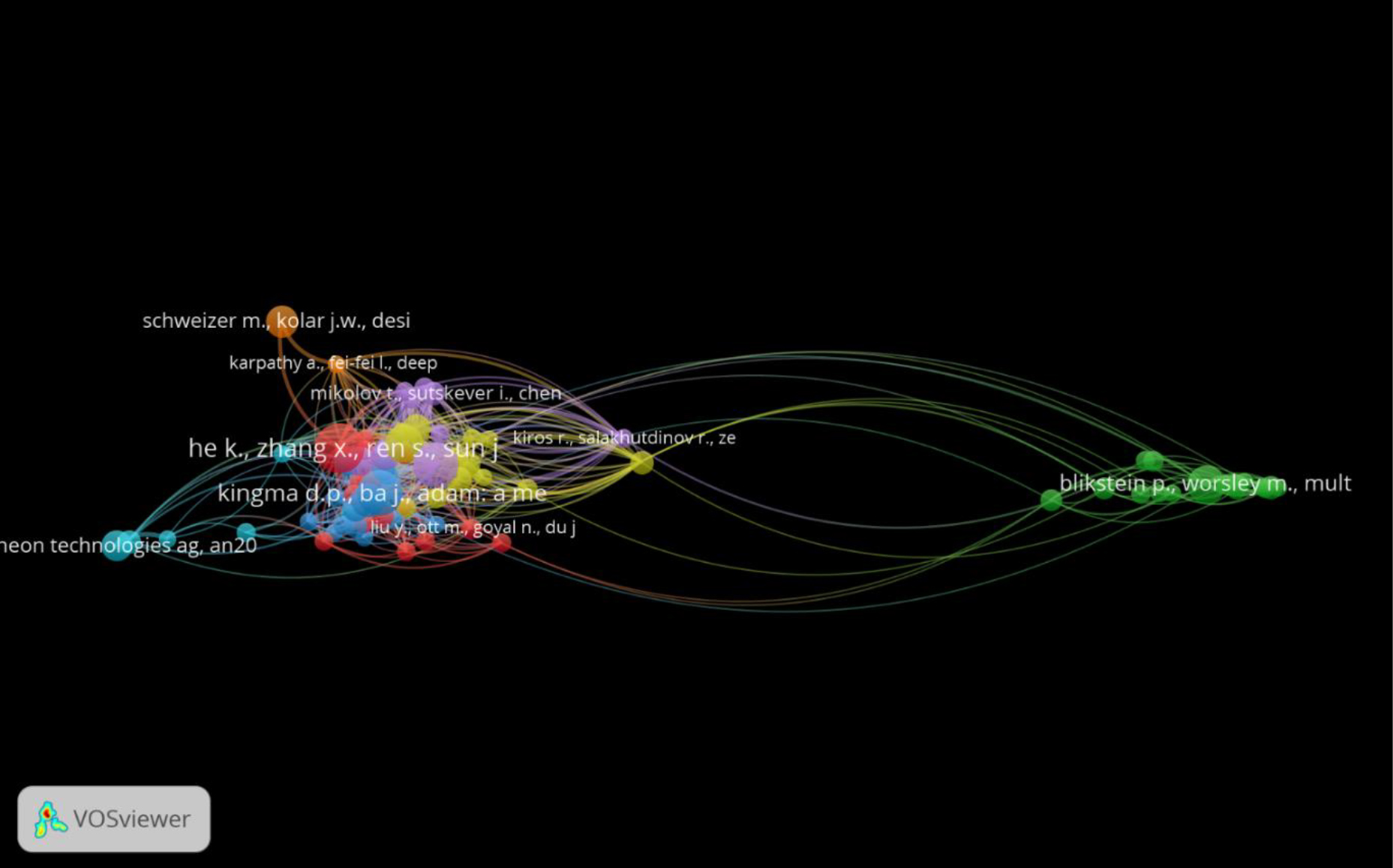

Co-citation analysis, a widely used bibliometric technique, studies how often two references are jointly cited in a specific group of documents. This approach aids in discerning the connections between different publications and pinpointing clusters of interrelated works. We set a minimum threshold of 10 citations, and out of the 67,859 cited references, 80 met this threshold. Figure 12 reveals the strongest link, with a total link strength of 149, for the paper “Deep Residual Learning for Image Recognition” by Kaiming He, Xiangyu Zhang, Shaoqing Ren, and Jian Sun from 2015. This was closely followed by “Adam: A Method for Stochastic Optimization” by Diederik P. Kingma and Jimmy Ba from 2014, which had a link strength of 146.
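The thresholding and pair counting behind a co-citation analysis can be sketched as follows. This is a minimal illustration rather than VOSviewer's implementation: it assumes that a reference is kept when at least `min_citations` papers in the dataset cite it, and that each paper citing two kept references adds 1 to their co-citation count. The function name and reference labels are hypothetical.

```python
from collections import Counter
from itertools import combinations


def cocitation_counts(reference_lists, min_citations=10):
    """Return (kept references, co-citation count per pair of kept references)."""
    # Number of citing papers per reference.
    citation_counts = Counter(ref for refs in reference_lists for ref in set(refs))
    kept = {ref for ref, n in citation_counts.items() if n >= min_citations}

    pair_counts = Counter()
    for refs in reference_lists:
        # Two references are co-cited when they appear in the same reference list.
        for a, b in combinations(sorted(set(refs) & kept), 2):
            pair_counts[(a, b)] += 1
    return kept, pair_counts


# Toy reference lists, one per citing paper (hypothetical labels).
toy_reference_lists = [
    ["ref_a", "ref_b"],
    ["ref_a", "ref_b", "ref_c"],
    ["ref_a"],
]
# A threshold of 2 is used only because the toy input is tiny.
kept_refs, pairs = cocitation_counts(toy_reference_lists, min_citations=2)
print(sorted(kept_refs))
print(pairs[("ref_a", "ref_b")])
```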

Network Visualization of co-citation analysis of references generated using VOSviewer.

A total of 1985 articles were collected from 878 different sources. This suggests that there is a sizable body of literature on the subject that spans more than three decades, from 1991 to 2023.

The number of scientific publications remained roughly flat between 1991 and 2002. This suggests that there were few developments during this period that would have encouraged an increase in publications. Between 2003 and 2022, there was a considerable increase in the number of scientific publications. This might be a sign of an uptick in research activity, potentially brought on by improvements in research methodologies, greater funding, or a growing researcher community. With 408 articles, 2022 had the most publications, marking a peak in scientific productivity. Overall, output has trended upward over the past 32 years.

Multimodal learning is used in various academic areas, including the sciences, arts, and humanities. This demonstrates the adaptability and wide application of multimodal learning strategies. Fewer than 15 articles have been published in fields including Economics, Econometrics and Finance, Immunology and Microbiology, Dentistry, and a few others, whereas Computer Science and Engineering lead the way in multimodal learning adoption and study. This may mean that the adoption of multimodal learning is still in its infancy in certain fields or that its applicability is more limited there.

Research on Multimodal Learning has drawn contributions from a total of 5069 authors. This indicates a high level of interest and collaborative effort within the academic community towards understanding and advancing Multimodal Learning. Among the contributors, Zhang Y, Li X, and Zhang X emerged as the leading figures with 41, 36, and 35 articles respectively. Their output marks them as major contributors to the field of Multimodal Learning.

After reviewing 878 sources in total, 1985 papers about multimodal learning were gathered. This suggests that the field of multimodal learning is the subject of much research and attention. With 136 contributions, “Lecture Notes in Computer Science” (including its subseries on Bioinformatics and Artificial Intelligence) is the most active source of research articles on Multimodal Learning. This implies that it serves as a major forum for scholars working in this field. With 63 publications, the ACM International Conference Proceeding Series ranks as the second most prolific source, demonstrating its significance in the subject. With 56 articles published, Tsinghua University is the top university for research on multimodal learning. This demonstrates the university's dedication to and proficiency in this field of study. Most of the top-ranked contributing universities are in Asia, especially in China. This implies that Asian academic institutions, particularly Chinese universities, are leading the way in multimodal learning research.

In the discipline of multimodal learning, various authors regularly use certain keywords. These include “deep learning,” “multimodal learning analytics,” “multi-modal learning,” and “multimodal learning”. Authors Zhang Y, Wang X, and Liu Y are identified as frequent users of these terms in their published works. The highlighted keywords are commonly seen in publications pertaining to multimodal learning. Journals and conference proceedings where these keywords are likely to appear include ACM International Conference, Lecture Notes in Computer Science (and related series on Artificial Intelligence and Bioinformatics), and Neurocomputing.

Thematic maps give researchers a visual depiction of the research domain's topography and aid in identifying key concepts, regions of interest, developing trends, and fundamental tenets of the discipline. Scholars wishing to explore fundamental topics may concentrate on the bottom-right quadrant. The bottom-left quadrant may interest those who follow new trends. The top-left quadrant is relevant to specialized or niche fields. To grasp the central and established domains of the research, it is necessary to consider the top-right quadrant with its motor themes. The study offers insights into the recurring themes and changing trends in the field of multimodal learning over time. By examining the frequency and choice of keywords, one can determine the areas of interest and focus for researchers in this field.

A standout work is “Multimodal deep learning” by Ngiam J. et al. (2011). With more than 2000 citations, it has clearly had a major impact on bringing together deep learning and multimodal methods. Another significant contribution is “Multimodal learning with Deep Boltzmann Machines” by Srivastava and Salakhutdinov (2012). Although it was published shortly after the work of Ngiam et al., it has also received considerable attention, with 925 citations. The early publication dates of both papers indicate that they were pioneering works in this area.

The number of citations these papers have received shows how foundational they remain, and many recent studies build on them in some way. This suggests that the combination of multimodal learning and deep neural networks remains an active field that continues to advance AI. Their influence indicates that merging different data types through deep architectures holds considerable promise.

The US, the UK, and China stand out for driving multimodal learning research and for collaborating with one another. Based on citations, the US likely plays an especially large role. Mapping who collaborates, and where the centers of innovation are, helps researchers who are looking for partners or who wish to contribute to the field. It shows which countries are the main contributors to multimodal learning research globally.

The term “multi-modal learning” stands out as particularly significant to the field, with 1,250 occurrences. Both “deep learning” and “multi-modal learning analytics” are also prominent concepts, and their appearance alongside it suggests that they are closely related to one another within the broader multimodal learning context. Co-citation analysis can be used to identify sets of related publications and establish connections between different publications. The high density of links between the articles indicates that they are fundamental works in their respective disciplines, as they are frequently cited jointly by other publications. This highlights their importance and their influence on scholars.

Conclusion

This bibliometric analysis provides a synopsis of the developments, approaches, and significant contributions in the field of multimodal learning. Based on the findings of the study, we were able to map the corpus of knowledge and identify important papers that have shaped the multimodal learning discussion. The growing volume of scholarly literature suggests that multimodal learning is emerging as a prominent area of inquiry. This growth reflects a broader shift in training and instruction methods towards a more comprehensive and integrated approach to education. The leading multimodal learning researchers from different universities, organizations, and nations are highlighted in this article. Their work is crucial to our understanding of multimodal approaches and to training in them. By definition, multimodal learning covers a wide range of academic disciplines. This interdisciplinary collaboration has not only advanced the discipline but also yielded innovative solutions to difficult learning problems. Co-citation and keyword analysis helped us identify new and developing subjects. Future studies seeking to improve the field's capacity to adapt to students' ever-changing requirements can draw on these outcomes. A few publications that had a significant influence on the field attracted a great deal of discussion and citation. Understanding these works facilitates understanding of the basic concepts and advancements in multimodal learning. By charting the historical development of multimodal learning, this bibliometric research also points to future directions. The study offers information that academics, teachers, and policymakers can use to capitalize on multimodal learning, which is becoming increasingly integrated with technology as educational landscapes change.