Abstract

Objective:

This discipline-based educational research (DBER) project was designed to longitudinally study the influence of high-fidelity computer-based sonography simulators (HFCBSSs) on student learning. The research question was: Can the implementation of HFCBSS improve clinical skills for sonography students, beyond traditional methods?

Materials and Methods:

HFCBSSs were shipped to three educational sites for implementation. A quasi-experimental design was utilized across the three educational sites. Educational materials, simulator guides, and assessment templates were developed for implementation. The educational sites revised their standard curricula to allow students to develop skills with HFCBSS, low-fidelity simulation (e.g., phantoms), and sonographic evaluation of peer classmates. A control group of graduating sonography students with no HFCBSS experience was assessed. Those assessment scores were used as an educational threshold. Successive cohorts of students across the educational sites were assessed as they progressed through their first year.

Results:

First-year sonography students, exposed to the revised simulation curricula, demonstrated higher median assessment scores, on most sonographic examinations, than the control-group student scores. Those student cohorts that were exposed to the revised simulation curricula but demonstrated a lower median assessment score were remediated to make sure they were ready for clinical placement. Students, across all three educational sites, rated their experience with the HFCBSS as second only to gaining patient examinations in their sonography clinical placements.

Conclusion:

This educational research project spanned three educational sites, with varied curricula, but it did demonstrate HFCBSS is a useful and effective educational tool. It also demonstrated how preclinical sonographic skill assessments can ensure students get lab-based remediation prior to clinical placement. These results are exclusive to this cohort and should be replicated at other sites, for concurrence.


Based on the previous use of computer-based simulation in health professional education, the background for conducting discipline-based educational research (DBER) needs to be contextualized. This work was informed by the highest level of evidence, and therefore, leveraged the recommendations from systematic reviews and meta-analyses. McGaghie et al. 1 conducted a review of the published literature from 1990 to 2010 on the comparative effectiveness of simulation-based medical education, with deliberate practice (DP). The results of their review were based on 14 quality studies that met their inclusion criteria (out of 3742 identified through their database search); they included a variety of simulated clinical skills and study designs. The conclusions of their review were that simulation coupled with DP was superior to standard clinical medical education. 1 The authors did underscore the need to implement simulation activities thoughtfully and evaluate the outcome rigorously at the training sites. 1 McGaghie et al. 1 followed several prior reviews from that group, which were synthesized in their 2010 publication. 2 In that synthesized publication, the group presented 12 best practices involving simulation-based medical education stemming from the original list of five features published by Issenberg et al. 3 The original five features included: (1) feedback, (2) DP, (3) curriculum integration, (4) outcome measurement, and (5) simulation fidelity. 3 Each of these simulation features contains a critical set of factors that should be part of planning a DBER project.

Perhaps the highest level of evidence published was the work by Cook et al. 4 They conducted a meta-analysis on technology-enhanced simulation for health professions education, starting from a set of 10 903 potentially relevant articles. Of the articles reviewed, only 609 studies met their inclusion criteria. Their meta-analysis determined that students who participated in computer-based simulation, compared to those with no intervention, consistently demonstrated better learning outcomes. 4 The authors also postulated that future studies need to address past limitations. To address these limitations, they suggested including a control group of students, providing a detailed explanation of learning activities, and providing simulation training that lasts longer than one day, for maximum effect. 4

Few studies exist that specifically address the use of technology-enhanced simulation in sonography. However, in a study by Gibbs, a high-fidelity computer-based sonography simulator (HFCBSS) was used as an educational activity, for 25 students. They completed both transabdominal (TA) and transvaginal (TV) sonography, with a Medaphor ScanTrainer. 5 The author conducted qualitative interviews with the students and found that their personal comments about using the simulator matched the original five best practices, posed by Issenberg et al. 3

A recent survey by Pessin et al. examined how Commission on Accreditation of Allied Health Education Programs (CAAHEP)-accredited sonography programs use simulation. Sixty percent of the 230 identified programs participated in the study; 75% of the programs that responded use some form of simulation. 6 Among those educators who responded, the majority felt that the simulator improved students’ ability to identify normal anatomy and that it improved their ability to manipulate a transducer. 6 The program directors noted that one of the benefits of simulation was the introduction of complex or sensitive examinations in a low-stress environment.

A systematic review conducted by Sidhu et al. 7 examined the evidence for “improvement in ultrasound practice competence” resulting from simulation-based sonography training of postgraduate physicians. From an initial pool of 371 papers, they identified 14 studies that met their inclusion criteria. 7 A close review of the 14 studies uncovered generally limited evidence, which was considered insufficient to support widespread adoption of simulation for enhancing medical education and improving sonography skills. This was primarily due to weaknesses or a lack of sufficient information in the reviewed studies. The authors suggested that future studies should have sufficient numbers of participants, include a control group, involve blinding, and meet a “standard of reporting to engender methodological rigor and make comparisons feasible.” 7

Based on this limited review of the evidence, the proposed study was designed to add to the body of knowledge on clinical mastery of sonography, with an HFCBSS. This DBER project relied heavily on the best practices proposed by McGaghie et al. 2 and Issenberg et al. 3 The research question was as follows: Can the implementation of HFCBSS improve clinical skills for sonography students beyond traditional methods?

Materials and Methods

Study Design, Educational Sites, and Participants

The goal of this study was to explore the implementation of an HFCBSS and its impact on the development of clinical skills among a cohort of sonography students. A multi-site approach was chosen to allow for a less-biased determination of the educational gains recorded across varied sonography programs. A quasi-experimental, pretest-posttest research design was used to evaluate sonography students’ clinical sonography scanning skills. This educational research was reviewed by the institutional review boards (IRBs) at all educational sites, and consent was obtained from each student who participated.

This study was conducted at three educational sites, each with a separate accredited baccalaureate sonography program. All students recruited for the study were enrolled in their respective baccalaureate CAAHEP-accredited sonography program for 2 years. Site 1 provided a curriculum that combined didactic, lab-based courses, and clinical rotations, which prepared students to become credentialed sonographers in the following specialties: abdominal-extended sonography (ABD), obstetrics and gynecology sonography (OB/GYN), and vascular sonography (VS). Sites 2 and 3 provided a curriculum that combined didactic, lab-based courses, and clinical rotations, which prepared students to become credentialed sonographers in the following specialties: ABD, OB/GYN, and adult cardiac sonography (ACS). Lab-based sessions were dedicated times for students to enhance their knowledge and psychomotor skills in sonography via scanning on simulators and peers. Prior to incorporating HFCBSS in labs, low-fidelity simulation was the primary method to provide hands-on practice for sensitive examinations, such as endocavitary pelvic sonography (GYN-TV).

Differences in the structure and delivery of the lab sessions were noted between educational sites. Site 1 predominantly provided structured lab sessions, where faculty were present at all times to observe individual students’ scans and provide immediate feedback. Open lab sessions were also used to give students an opportunity to use the lab space to continue developing their scanning skills on exams that had been covered during structured lab sessions. These open lab sessions did not require the presence of a faculty member to proctor the scans. Sites 2 and 3 used a combination of structured lab sessions, in which a faculty member provided immediate feedback, and independent labs. During independent labs, students were required to attend lab at a specified time and save images/cine clips of required anatomy. Later, the instructor reviewed these saved images/cine clips and provided students with feedback.

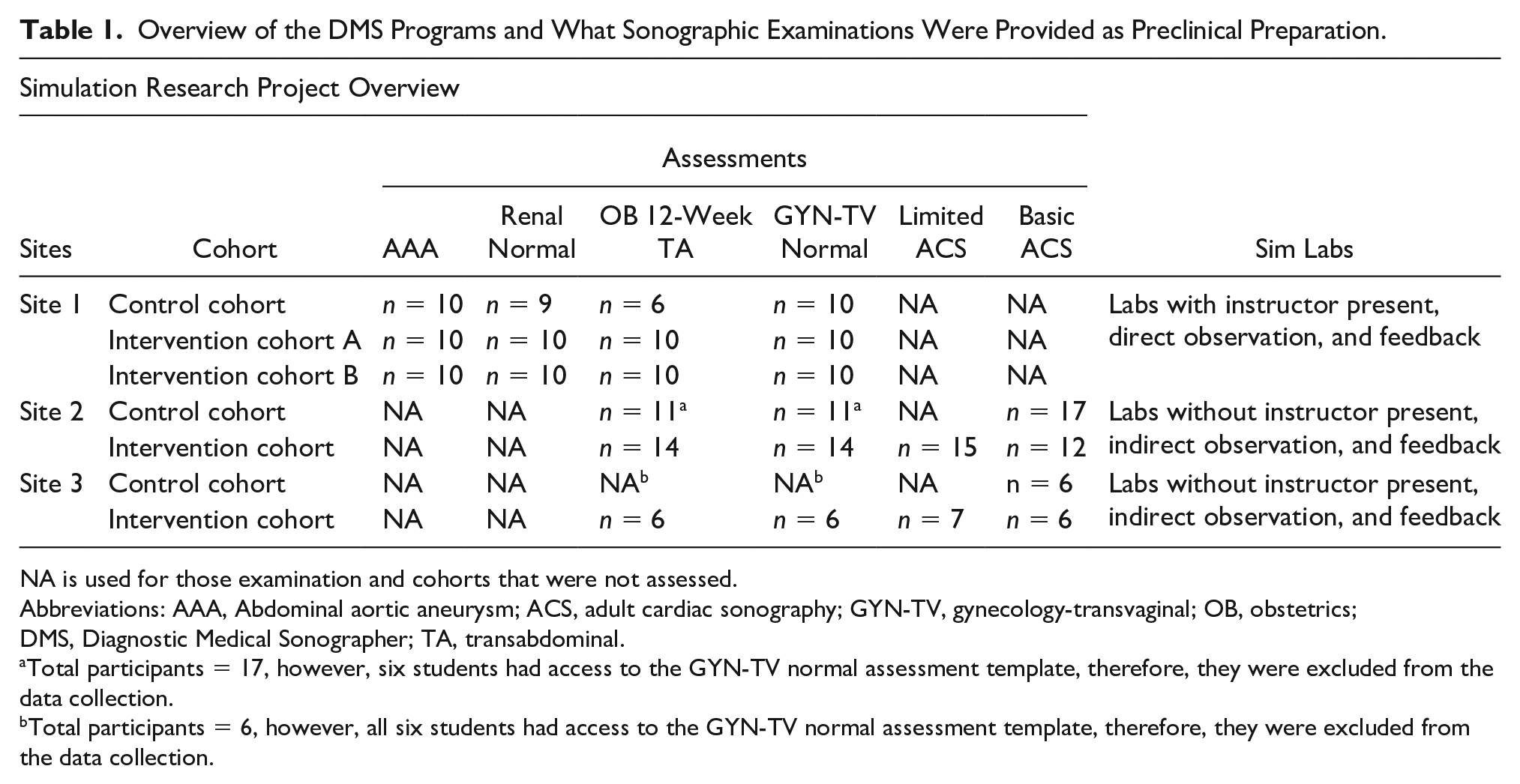

A total of 33 control-group students and 44 intervention-group students participated in the study, across the three sites (Tables 1–3). Students did not interact with students in the other participating programs. The control group at each site had remaining classes and clinical rotations that were scheduled on opposing days from the intervention group. The students may have been asked to complete volunteer hours as part of their admissions, but otherwise had no prior sonography experience.

Overview of the DMS Programs and What Sonographic Examinations Were Provided as Preclinical Preparation.

NA is used for those examinations and cohorts that were not assessed.

Abbreviations: AAA, Abdominal aortic aneurysm; ACS, adult cardiac sonography; GYN-TV, gynecology-transvaginal; OB, obstetrics; DMS, Diagnostic Medical Sonographer; TA, transabdominal.

Total participants = 17; however, six students had access to the GYN-TV normal assessment template and were therefore excluded from the data collection.

Total participants = 6; however, all six students had access to the GYN-TV normal assessment template and were therefore excluded from the data collection.

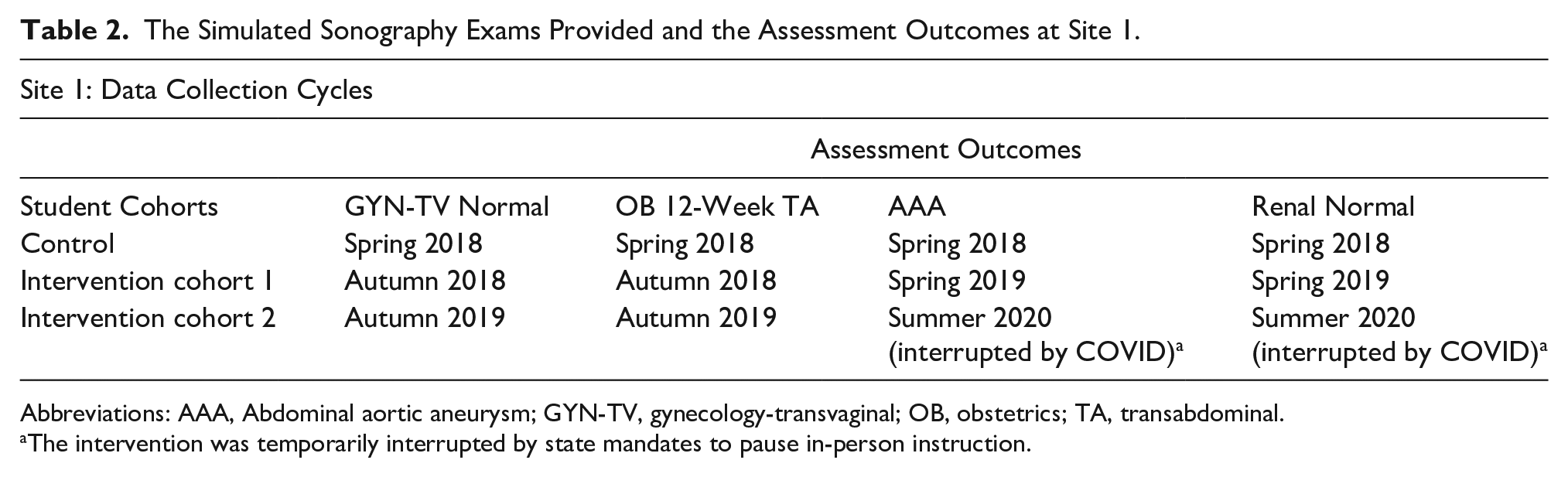

The Simulated Sonography Exams Provided and the Assessment Outcomes at Site 1.

Abbreviations: AAA, Abdominal aortic aneurysm; GYN-TV, gynecology-transvaginal; OB, obstetrics; TA, transabdominal.

The intervention was temporarily interrupted by state mandates to pause in-person instruction.

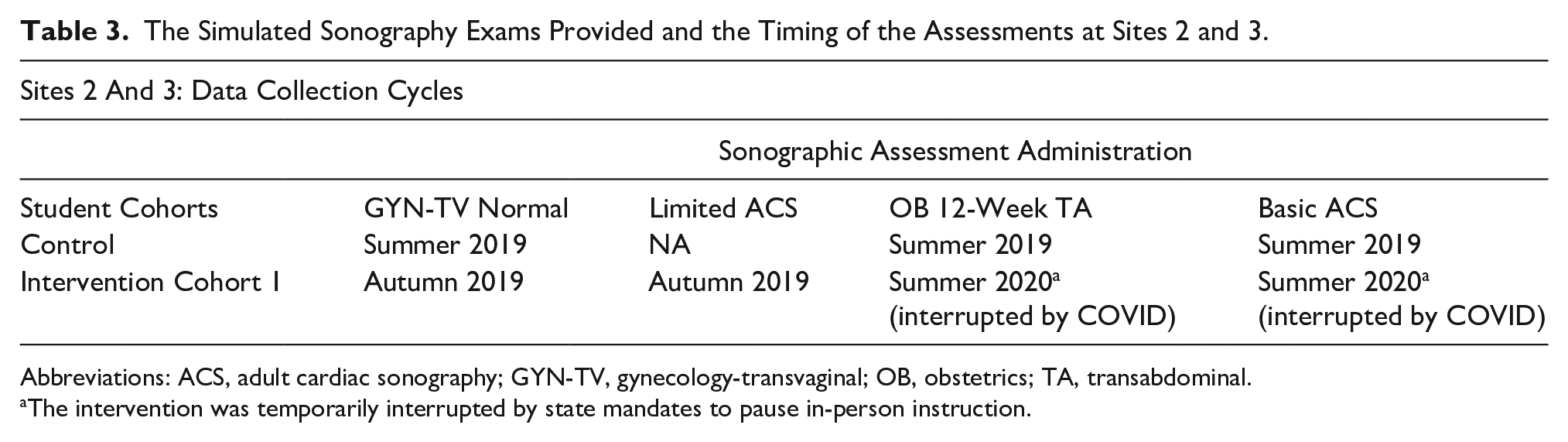

The Simulated Sonography Exams Provided and the Timing of the Assessments at Sites 2 and 3.

Abbreviations: ACS, adult cardiac sonography; GYN-TV, gynecology-transvaginal; OB, obstetrics; TA, transabdominal.

The intervention was temporarily interrupted by state mandates to pause in-person instruction.

Each site provided its own control group to which its intervention group was compared. The control group consisted of senior students who had no exposure to high-fidelity simulation, but for whom phantoms and peer scanning were part of their training. Site 1 assessed the control and two consecutive interventional cohorts on a variety of sonographic examinations (see Table 1). Site 2 assessed their control and interventional cohorts on a similar set of sonographic examinations (see Table 1). In addition, the interventional cohort in site 2 completed a limited ACS at the end of their first semester. Site 3 assessed their control group of students on similar sonograms (see Table 1). However, assessments completed for the control group for OB 12 weeks, TA, and GYN-TV were excluded due to students being provided the assessment templates. In addition, the interventional group at site 3 was assessed on a set of sonographic examinations (see Table 1).

Equipment

CAE Vimedix HFCBSS units were provided to the educational sites participating in the study. CAE is a company that was founded on creating flight simulators. The transition into medical education and providing simulation is a recent endeavor. The Vimedix HFCBSS included a male manikin simulator that provided abdomen and cardiac case studies, and a female manikin simulator for OB/GYN case studies, both TA and TV. The simulators included a mock phased array transducer and a curvilinear transducer, and had either a separate central processing unit (CPU) and computer monitor (site 1) or a laptop computer (sites 2 and 3). However, all equipment was running the same software version (v1.17.1.10) to ensure consistency of cases and computer functions. The study coordinator spent a month reviewing, with the company, the installation and the various case studies for curricular implementation.

Development of Assessment Tools and Scoring Guides

In reviewing the cases available on the simulators, researchers worked alongside instructors, from each of the participating sites, to select cases that could be integrated into their existing curricula and augment students’ learning. Once cases were selected, they were carefully vetted by the host program’s faculty for realism, image quality, and anatomic correctness. It was through this vetting process that the liver and right upper quadrant (RUQ) examinations were removed from the project. As such, it was determined that students should scan peers or utilize low-fidelity sonography simulation (LFSS), such as an abdominal tissue-mimicking phantom, for RUQ examinations in the lab.

Given the need to standardize the assessment of selected normal and abnormal simulation cases, it became clear that the research team would need to provide specific, detailed, and compatible assessment templates. It would also be important to provide guidance on what points to assign for each required element. This process began with a draft of an assessment template that the study coordinator created for the examinations and translated to the university curriculum. A compatible scoring guide was also created for each assessment template that provided a detailed breakdown of all the points and the list of components that must be met to receive those points. The scoring guides were added to help standardize and verify the points awarded for each image acquired. In addition, range values for appropriate measurements of the 12-week fetal crown rump length (CRL) and bilateral renal lengths were incorporated in the assessment tools to further provide objective components within the templates. Furthermore, any critical actions that might affect the diagnostic accuracy of acquired images/cine clips needed to be documented and reflected in the student’s score. Critical actions included the following: nondiagnostic images and/or sweeps due to inappropriate depth, and assessment sweeps and/or images obtained with the notch oriented incorrectly. Critical actions were addressed as follows:

If a critical action is made, the participant will receive a score of 0 for the image(s) and/or sweep(s) acquired.

If a critical action is resolved and corrected within the established time (end of case), it is no longer considered a critical action.
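
The two critical-action rules above can be sketched in code. This is a minimal illustration, not the study's scoring guide: the `ImageScore` record, its field names, and the example point values are hypothetical. Only the two rules themselves (an unresolved critical action scores 0; a critical action corrected before the end of the case no longer counts) come from the text.

```python
from dataclasses import dataclass


@dataclass
class ImageScore:
    """One scored image or sweep (hypothetical record layout)."""
    label: str
    points: int                      # whole-number points awarded by the rater
    critical_action: bool = False    # e.g., inappropriate depth or wrong notch orientation
    resolved_in_time: bool = False   # corrected before the end of the case


def effective_points(item: ImageScore) -> int:
    """Apply the critical-action rules to a single image or sweep."""
    if item.critical_action and not item.resolved_in_time:
        return 0  # unresolved critical action: the image/sweep scores 0
    return item.points  # no critical action, or it was corrected in time


def total_score(items: list[ImageScore]) -> int:
    """Sum the effective points across all images and sweeps in one assessment."""
    return sum(effective_points(item) for item in items)


# Hypothetical assessment with three items (not study data).
example = [
    ImageScore("sagittal sweep", points=4),
    ImageScore("transverse image", points=3, critical_action=True),
    ImageScore("measurement image", points=5, critical_action=True, resolved_in_time=True),
]
print(total_score(example))  # 9
```

In this hypothetical case, the transverse image contributes 0 because its critical action was never corrected, while the measurement image keeps its 5 points because the error was resolved before the case ended.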

As the study coordinator created each assessment template and scoring guide, the faculty trialed exams and checked to determine the accuracy of the selected simulation, as well as the points that were to be awarded. The host sonography program offered their graduating sonography seniors (control group for site 1) the chance to complete each assessment and two faculty members scored the examinees to check for inter-rater reliability for scoring.

Pilot of Assessment Tools & Scoring Guides

A pilot study was conducted to ensure consistency of the research methods, tools, and protocols. This pilot study was conducted by the host site and further assessed by the partnering locations, and evaluated by a biostatistician to ensure quality and reliability. Revisions were made in a formative way with all changes being completed by the study coordinator. An example of a change was faculty initially inquiring about giving partial credit of 0.5 or 1.5 points during assessments. After consultation with a biostatistician, it was determined that only whole numbers should be awarded. This was part of the process that allowed these formative changes to be made and documented in the scoring guides. At the point where the scoring guides were close to a summative version, feedback was requested from faculty at sites 2 and 3 on the templates and scoring guides when they began assessing their graduating seniors (control groups).

HFCBSS Assessment Examinations Implemented Across Educational Sites

The educational research was designed so that HFCBSS could be added as it naturally fit into each program’s schedule of content and laboratory practice sessions. For this reason, the three educational sites were able to assess their graduating sonography seniors (control group), and incorporate the HFCBSS and assessments into their upcoming semester curriculum. Based on the sonography program faculty’s ability to add the HFCBSS and assessments, these were the sonography examinations implemented and data collected: site 1: GYN-TV, OB 12 weeks, abdominal aortic aneurysm, and renal; sites 2 and 3: OB 12 weeks, limited echocardiogram (interventional group only), and basic echocardiogram. The limited echocardiogram assessment consisted solely of 2D images and cine clips of the adult heart through specific “windows” (e.g., apical, subcostal) with no measurement recordings. The basic echocardiogram template expanded on the limited echocardiogram template to incorporate measurements in 2D, M-mode tracings, and Doppler (color and spectral). Tables 1 and 2 provide the educational intervention plan for the programs, cohorts, exams, and cycles of assessment. All exams were assessed at the end of a specific semester.

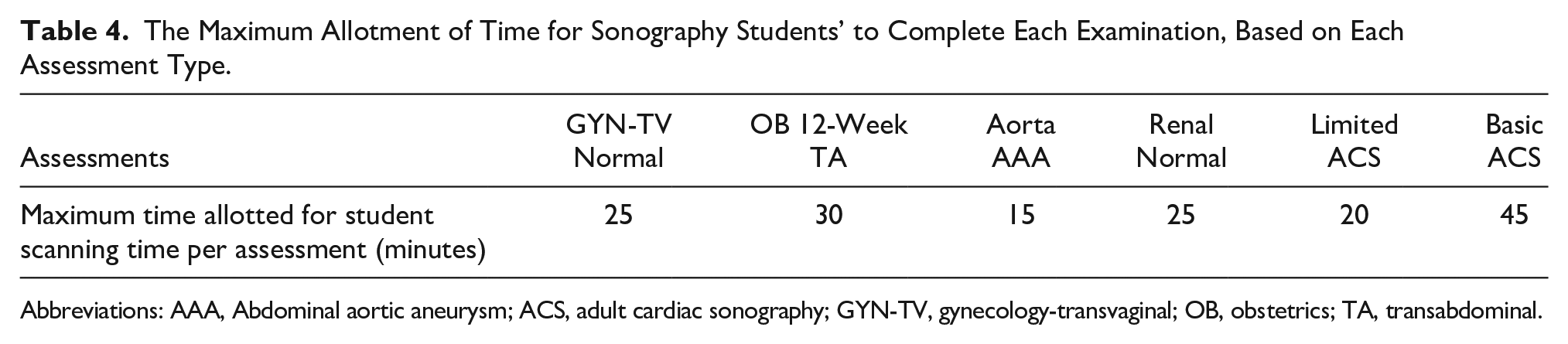

Once the graduating sonography students established a median threshold of assessment scores, the first intervention cohort of incoming sonography students were consented to provide assessment data. All incoming sonography students, across all three educational programs, were provided regularly scheduled didactics and scan labs with HFCBSS, LFSS, and peer scanning opportunities. The time allotted for scan labs varied based on whether the sessions were independent or instructor led. They also went to their first scheduled clinical rotation as was planned at the start of the semester. Students in these programs were geographically separated and did not share any of the same clinical sites or clinical preceptors. After spending time in the simulation lab with the simulators, phantoms, and peer scanning opportunities, students were assessed on their scanning protocols on each examination (see Tables 2 and 3) as follows: (1) ability to ask intake questions of a mock patient, prior to starting the simulated examination (this was primarily done in site 1), (2) ergonomic adjustment of the workspace, and (3) completion of the examination within a time allotment that was adjusted depending on the type of sonographic examination being assessed (see Table 4). The preface to the examinations was done to provide the student with a context for examining a patient and also to portray the entirety of a sonographic examination. The scoring was specific to the images created by the student. In addition, the examination time was determined by instructors to ensure that the students had enough time to complete each examination. At the conclusion of the assessment, a debriefing session was held with each student to review the saved images and any post-examination instructions.

Students in the intervention cohorts were also asked to complete a usability and usefulness questionnaire (see Supplemental Appendix 1), with 5-point Likert scale and Likert-type response options, on their perceptions of the various types of learning modes they experienced. They were presented with the questionnaire at the end of the first semester and the end of the second semester at all sites, and also at the first-semester preclinical point at site 1.

The Maximum Allotment of Time for Sonography Students to Complete Each Examination, Based on Each Assessment Type.

Abbreviations: AAA, Abdominal aortic aneurysm; ACS, adult cardiac sonography; GYN-TV, gynecology-transvaginal; OB, obstetrics; TA, transabdominal.

Statistical Analysis

The data were examined for normality and equality of variance. Levene’s test was used to check for homogeneity of variance. Very few of the assessment outcome samples (i.e., data for a sonographic examination at a particular educational site) were normally distributed or had equal variance. In addition, the disruptions due to the pandemic affected student instruction and data collection at some points during the study. As such, the advice from the biostatistician was to report only the descriptive statistics that were gathered.
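
For readers unfamiliar with the homogeneity-of-variance check, the following sketch computes the Levene test statistic W using only the Python standard library. It is an illustration under stated assumptions, not the analysis performed in the study: the function name and the example scores are hypothetical, the sketch uses the mean-centered form of the statistic, and it stops short of a p-value, which would require the F distribution with (k - 1, N - k) degrees of freedom.

```python
from statistics import mean


def levene_w(*groups):
    """Return Levene's W statistic (mean-centered) for two or more groups."""
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    if k < 2 or n_total <= k:
        raise ValueError("need at least two groups and more observations than groups")
    # Absolute deviation of each score from its own group mean.
    deviations = [[abs(x - mean(g)) for x in g] for g in groups]
    group_means = [mean(z) for z in deviations]
    grand_mean = sum(sum(z) for z in deviations) / n_total
    # Spread of the group-level mean deviations around the grand mean.
    between = sum(len(z) * (m - grand_mean) ** 2 for z, m in zip(deviations, group_means))
    # Spread of individual deviations around their own group mean.
    within = sum((v - m) ** 2 for z, m in zip(deviations, group_means) for v in z)
    if within == 0:
        raise ValueError("W is undefined when deviations do not vary within groups")
    return (n_total - k) / (k - 1) * between / within


# Hypothetical scores (not study data): the second group is more spread out.
w = levene_w([1, 2, 3], [0, 2, 4])
print(round(w, 3))  # approximately 0.8
```

A larger W suggests less plausible equality of variance across cohorts. With samples as small as those in this study, such a test has limited power, which is consistent with the decision to report descriptive statistics only.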

Results

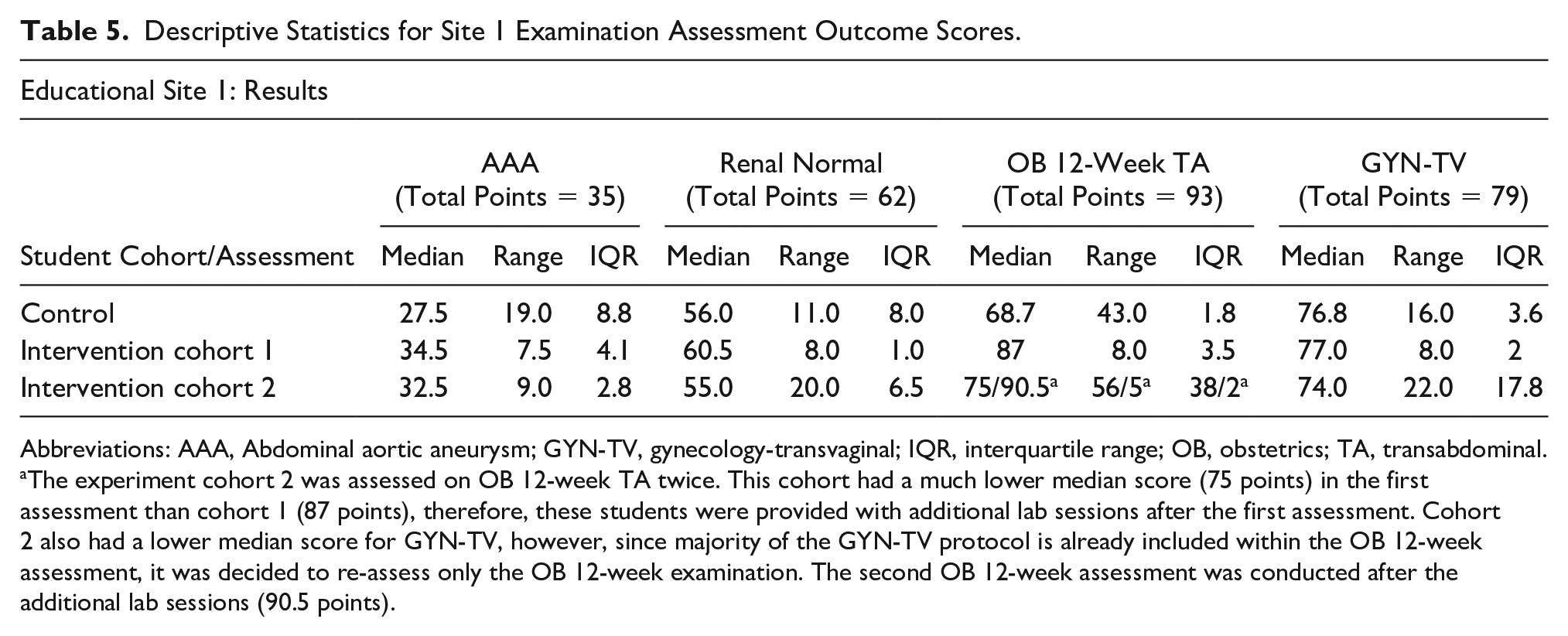

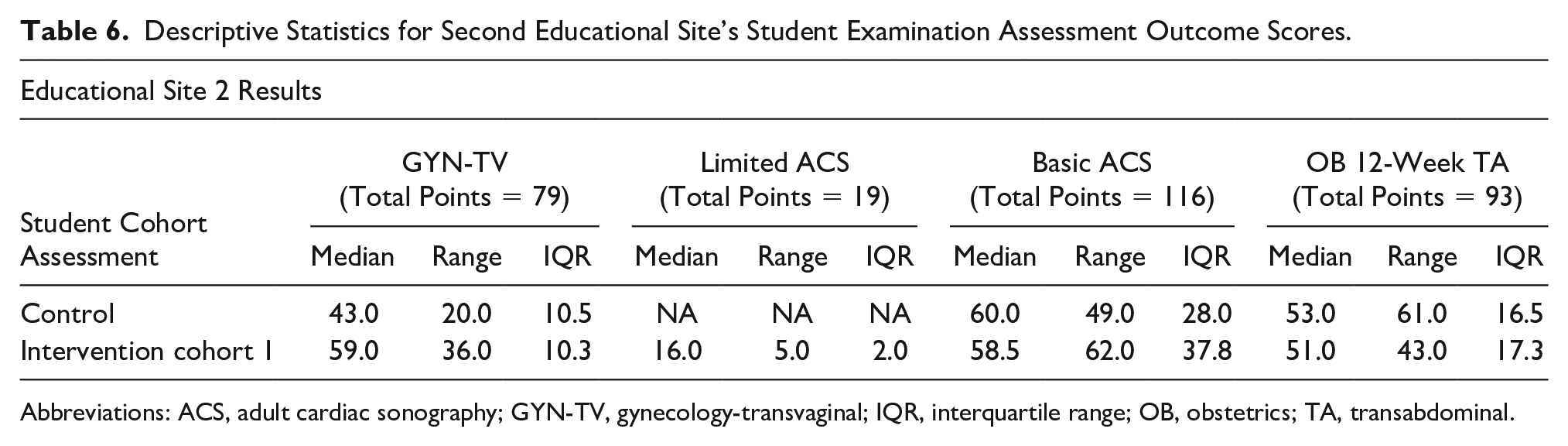

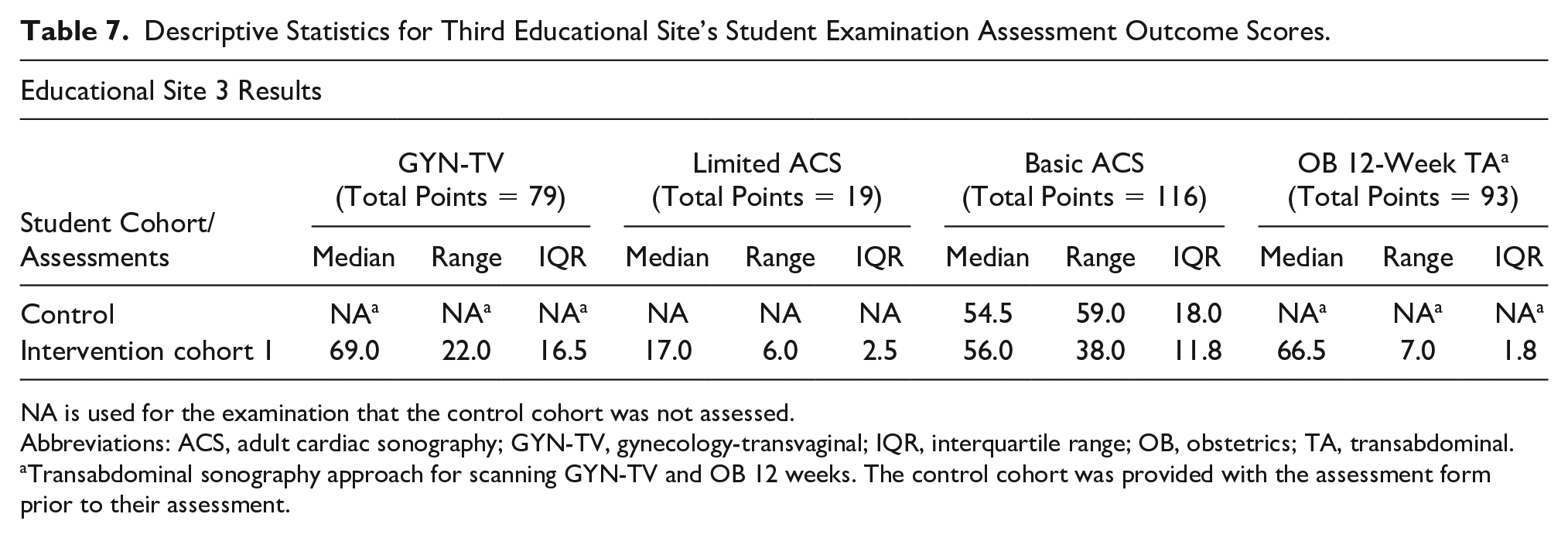

The results from this study are best considered as three separate case studies; the HFCBSS assessment outcome score medians/cohort are provided and organized by the educational site. Descriptive statistics for the assessment outcome scores are presented in Tables 5–7. Important educational insights can also be gleaned from examining the descriptive data collected.
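
As an illustration of the descriptive statistics reported for each cohort, the sketch below computes a median and an interquartile range (IQR) and compares an intervention cohort's median against a control-group median used as a threshold. The scores and function name are hypothetical and are not study data. The quartile convention (the "inclusive" method in Python's `statistics` module) is also an assumption, since the source does not state how quartiles were computed, and other conventions can give slightly different IQR values.

```python
from statistics import median, quantiles


def describe(scores):
    """Return (median, IQR) for one cohort's assessment scores."""
    q1, _, q3 = quantiles(scores, n=4, method="inclusive")
    return median(scores), q3 - q1


# Hypothetical scores, not study data.
control = [10, 20, 30, 40, 50]
intervention = [30, 40, 50, 60, 70]

control_median, control_iqr = describe(control)
cohort_median, cohort_iqr = describe(intervention)

# A cohort whose median falls below the control median could be flagged
# for additional lab sessions before clinical placement.
below_threshold = cohort_median < control_median
print(control_median, control_iqr, cohort_median, cohort_iqr, below_threshold)
```

The median and IQR are used here rather than the mean and standard deviation because they do not assume normally distributed scores.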

Descriptive Statistics for Site 1 Examination Assessment Outcome Scores.

Abbreviations: AAA, Abdominal aortic aneurysm; GYN-TV, gynecology-transvaginal; IQR, interquartile range; OB, obstetrics; TA, transabdominal.

Intervention cohort 2 was assessed on OB 12-week TA twice. This cohort had a much lower median score (75 points) in the first assessment than cohort 1 (87 points); therefore, these students were provided with additional lab sessions after the first assessment. Cohort 2 also had a lower median score for GYN-TV; however, since the majority of the GYN-TV protocol is already included within the OB 12-week assessment, it was decided to re-assess only the OB 12-week examination. The second OB 12-week assessment was conducted after the additional lab sessions (median of 90.5 points).

Descriptive Statistics for Second Educational Site’s Student Examination Assessment Outcome Scores.

Abbreviations: ACS, adult cardiac sonography; GYN-TV, gynecology-transvaginal; IQR, interquartile range; OB, obstetrics; TA, transabdominal.

Descriptive Statistics for Third Educational Site’s Student Examination Assessment Outcome Scores.

NA is used for the examination on which the control cohort was not assessed.

Abbreviations: ACS, adult cardiac sonography; GYN-TV, gynecology-transvaginal; IQR, interquartile range; OB, obstetrics; TA, transabdominal.

Transabdominal sonography approach for scanning GYN-TV and OB 12 weeks. The control cohort was provided with the assessment form prior to their assessment.

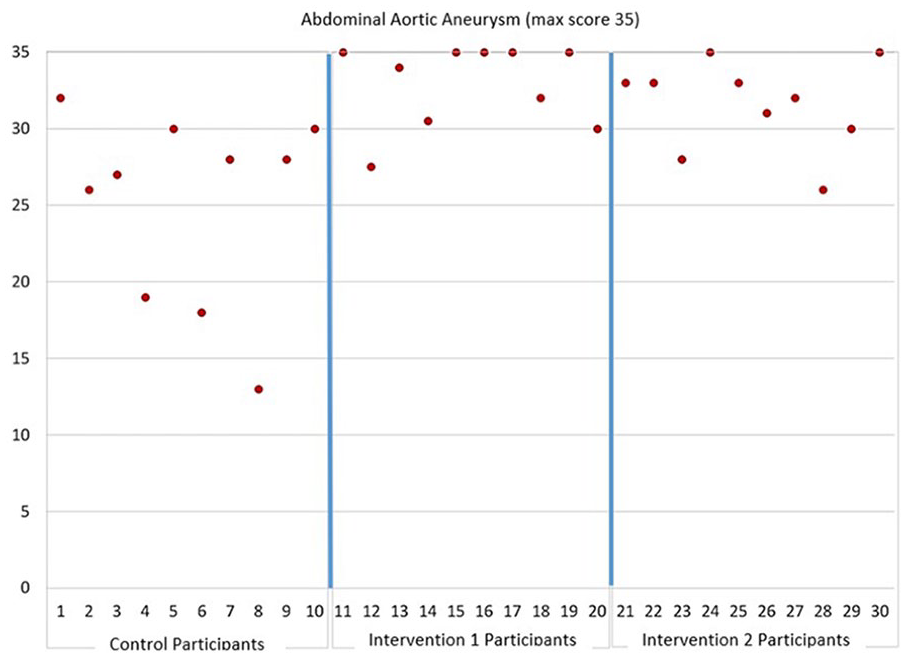

Educational Site 1

Table 5 provides descriptive statistics for the four examinations for which assessments were performed for site 1. Data are provided for each of the three cohorts. Figure 1 illustrates a noticeable difference in the overall assessment outcome scores for the abdominal aortic aneurysm (AAA) exam between the intervention and the control cohorts. Several students in the intervention cohorts received full credit for the examination, whereas no students earned full credit in the control group. Three of the 10 students in the control group earned less than 20 points, whereas no students scored less than 26 points in the intervention cohorts.

The abdominal aortic aneurysm sonographic assessment results from site 1. The graph is divided into separate areas for assessment data on control, intervention 1, and intervention 2.

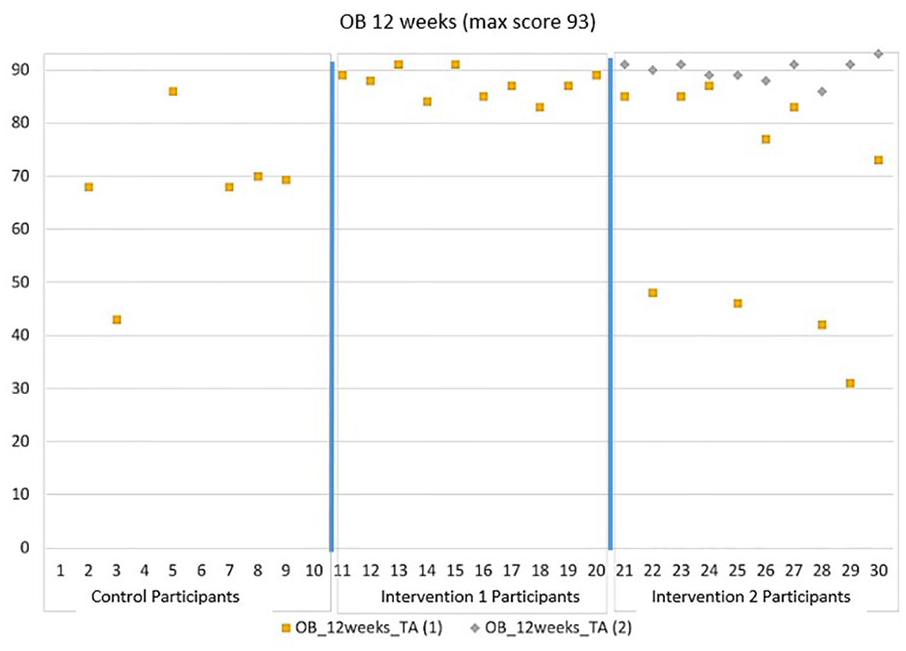

Figure 2 illustrates marked differences in performance between the intervention 1 cohort and the control cohort on the OB 12-week examination. It also illustrates the improvement demonstrated by the intervention 2 cohort following the additional training that was deemed necessary after the cohort initially performed poorly on the OB 12-week assessment. The performance of each student in the cohort improved following the additional training.

The OB 12-week TA assessment results for site 1. The graph is divided into separate areas for assessment data on control, intervention 1, and intervention 2. The intervention 2 cohort was assessed twice, with the second time occurring after additional training was provided. OB, obstetrics; TA, transabdominal.

Students were also invited to provide feedback about using the HFCBSS in the lab sessions. At site 1, the students’ average score was 4.9 out of 5, which indicated strong agreement that the simulator supported their learning. Nine out of ten students strongly agreed that the simulator was a helpful learning exercise (score = 5), and one student rated the experience at 4, a rating of moderately helpful in learning. Students also provided debriefing feedback with regard to the use of the simulator:

The simulator gives me the chance to scan pathologies I have never seen before so I can become familiar with them. It also provides me with a variety of exams to practice on. That is especially nice when I want to stay fresh on OB/GYN scanning when I am in an abdominal clinical site rotation.

I think the SIM is a very useful tool for students. It gave me the opportunity to master basic scanning techniques before even stepping into clinical. It allowed me to conceptualize and understand things in a stress free environment.

I like that we have the opportunity to do scans that we would not normally be able to practice on each other (OB, transvaginal, etc.). I think it is great too that there are so many different options and different exams options to utilize and explore.
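
The reported site 1 average follows directly from the ratings described above, as this short check shows. The variable names are illustrative; the ratings themselves (nine scores of 5 and one score of 4) are taken from the text.

```python
from statistics import mean

# Ratings as reported for site 1: nine students chose 5 and one chose 4.
ratings = [5] * 9 + [4]
average = mean(ratings)
print(round(average, 1))  # 4.9
```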

Educational Site 2

Table 6 provides descriptive statistics for the four examinations for which assessments were performed for site 2. Data are provided for both cohorts. The program includes echocardiography. Two assessments were conducted for the intervention cohort, a preliminary one (limited adult echo) and the basic adult echo (which the control group was also assessed on).

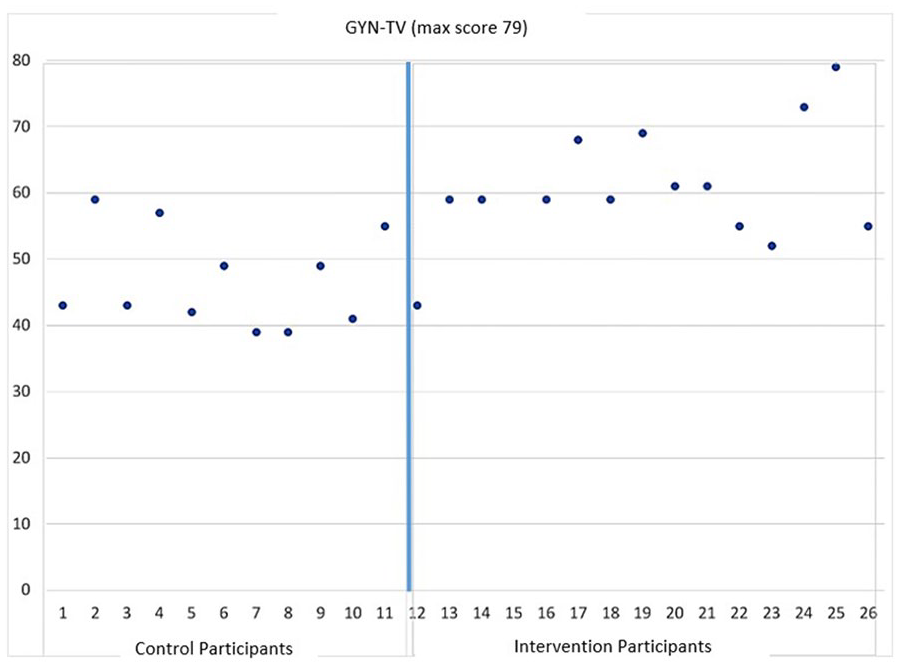

Figure 3 graphically illustrates a noticeable difference in the overall assessment outcome scores for the GYN-TV examination, between the intervention and the control cohorts for site 2. While only one control student scored as high as 59, 10 of 14 students in the intervention cohort scored 59 or above and one received full marks (79).

The GYN-TV assessment results from site 2. The graph is divided into separate areas for assessment data on control and intervention participants. GYN-TV, gynecology-transvaginal.

As mentioned in the methods section, critical actions were noted when students made errors during the examination, and at this site, the control group had five critical actions for OB 12-week TA and also three critical actions during assessments that were being conducted on GYN-TV. One common critical action was that students obtained images during the assessments at an inappropriate depth. Another critical action made by a student performing the GYN-TV examination was having the notch oriented incorrectly for a portion of the examination. The use of critical actions is important to note as these also likely contributed to the lower median scores for the control groups, at this site.

The students at this site were also provided with the chance to give debriefing feedback. As examples, three responses are provided below to the question: “Do you believe the simulator is a useful tool to have as a student? Why or why not?”

Yes, it helps us gain confidence in ourselves and is a good way to practice when there is not a peer there.

Yes! They are great for learning structures and transducer orientation and manipulation. It helped me to understand more in clinic, and see how they are achieving good images. I feel like I would have been lost at clinic without these Sims to practice on. They also helped understand class concepts that we learned.

It was very helpful. I scanned the sim heart before I ever scanned a real human heart, and it gave me more confidence in myself to scan cardiac. Most importantly, I was able to “lock-in” the positioning of the transducer (because the sim’s body is so simple); which helped me learn what fine-tuned motions could improve the heart position and clarity.

Educational Site 3

Table 7 provides descriptive statistics for the four examinations for which assessments were performed for site 3. Data are provided for both cohorts. The program includes echocardiography. Two assessments were conducted for the intervention cohort, a preliminary one (limited adult echo) and the basic adult echo (which the control group was also assessed on).

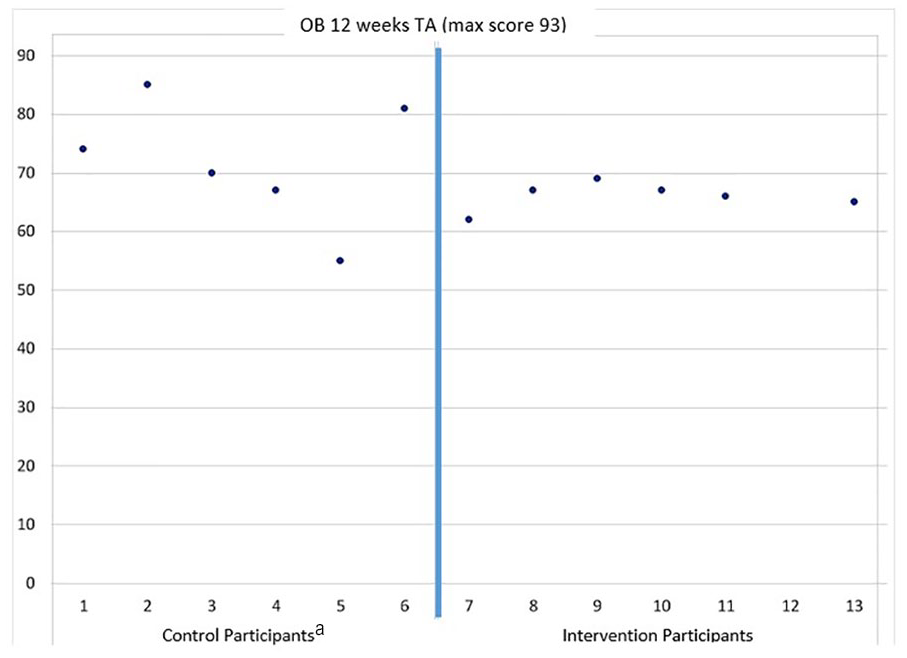

Figure 4 graphically illustrates the overall difference in performance between the intervention and the control cohorts for the OB 12-week examination at site 3. Unfortunately, there was some confusion about how to handle the control assessment form, and those students did get an advance look at the tool. For the purposes of the research, the control data were not reported; however, the intervention cohort assessment was administered without an advance look at the tool. In practical terms, the faculty at that site used the data to judge the readiness of the intervention cohort. The intervention students received a score of 6/14 on the sweep for the left ovary and for the sweep of the right ovary, whereas the average among the control students was 11, with some control students scoring 14/14 on one or both sweep criteria. Fetal measurement was also poorer in the intervention cohort (average = 4.6 pts/13) in comparison to the control cohort (average = 8.2 pts/13). This resulted in faculty spending more time reviewing with the intervention students and providing them with more information prior to clinical placement.

The OB 12-week TA assessment results from site 3. The graph is divided into separate areas for assessment data on control and intervention participants. The control students did get an advance look at the assessment tool; however, the data still allowed faculty to determine the intervention cohort’s preclinical readiness for conducting an OB 12-week examination. OB, obstetrics; TA, transabdominal.

Below is some of the site 3 students’ debriefing feedback, in response to the question “Do you believe the simulator is a useful tool to have as a student? Why or why not?”

Yes. As I stated before, the simulator can make it easy to get an early understanding of what to look for when scanning structures that way be new to us. Even though the images may not be totally true to what we’ll see in the human body, having a general idea of what an image looks like and an understanding that the general idea will vary in patients is very helpful.

Absolutely. It is great to practice on to build confidence. Just the amount of practice using the transducer alone is beneficial. I am more likely to scan when I have this simulator available because of the availability and option to practice on at my own pace.

I believe the simulator is a useful tool to have as a student. Simulators provide learning opportunities in an unpressured environment, which allow students to learn without feeling rushed. It is also a convenient method for students because they can scan the simulator whenever they want without having to wait for a real model to scan on.

Discussion

This educational project was conceived as a multi-site data collection that specifically addressed the importance of implementing an HFCBSS into three educational sites with sonography programs. It was also important not to require that HFCBSS be used exclusively, but to allow the faculty at those sites to integrate computer-based simulation alongside the other lab options being used for preclinical training. The educational sites were selected because of their ranking and the commitment of the faculty to continually prepare sonography students to better utilize the clinical experiences that hospitals and clinics provide for hands-on training. Even prior to the COVID pandemic, the faculty in all three educational sites were concerned about new sonography students getting the maximum benefit of their time in each hospital or clinic rotation. The unforeseen challenge to this project was the local and state mandates that stopped in-person sessions for classroom meetings, laboratory activities, and clinical rotations, due to the pandemic. Although these mandates differed across the three educational sites, it was hard to capture the time lost by students in those programs. Nevertheless, the project provided the added educational resource of an HFCBSS, which allowed those students affected by the mandates to remediate their novice skills, prior to being reassigned to clinical sites that reopened.

Although this educational project had been designed as a longitudinal study, the interruption of the pandemic did delay some of the data collection. Rather than end the project due to the disruption, the educational sites agreed to make use of the equipment and the data that were collected. The faculty at those educational sites used the collected data to determine whether students, who had their educational progress halted, were skilled enough to rejoin their assigned clinical sites. The limited control data, within each educational site, informed faculty as to the students’ readiness for some sonographic examinations and could be used as a threshold for specific student remediation. Because some educational sites did not complete all the assessments, they were more limited in making judgments about specific sonographic examination readiness. Without the assessment outcome data that were collected at educational sites, the faculty would have had to make many more subjective decisions about whether students were meeting the preclinical threshold to attend their next clinical assignment, after restrictions were eased.

This project was also designed with little published evidence available on the utilization of an HFCBSS within a sonography educational program, or on how it might influence the clinical readiness of sonography students. Limited publications exist on implementing HFCBSS and they tend to focus on educating medical students. In physical therapy (PT), simulation has been more aggressively pursued, as a means of preparing students to demonstrate clinical readiness and accelerate competency. A systematic review by Mori et al. 8 found that in PT, there was evidence that simulated learning environments could replace a portion of a full-time 4-week clinical rotation. Interestingly, the educational sites in the present study added HFCBSS to their existing labs that provided preclinical experience to the students prior to entering clinical rotations. The implementation of HFCBSS into the scanning labs allowed for a boost in educational training given that the labs depended heavily on LFSS and the peer scanning of colleagues. Inserting the high-fidelity simulators into labs not only added time to the scheduled labs but also enhanced the students’ preclinical experience. It was through their recorded feedback that we noted that students ranked the HFCBSS as a highly valued educational experience, across all educational sites.

Another strength of this educational project was that it captured assessment outcome data from three different educational sites that had BS sonography programs and were located in geographically separate areas. The sonography programs had similarities, such as providing preclinical laboratory experiences, and also had differences in how those labs were conducted (supervised vs independent). These differences are considered a strength since each program may choose to hold scan labs in varied formats. Therefore, the assessment data and the outcomes of cohorts of BS sonography students who utilized HFCBSS, LFSS, and peer scanning of colleagues demonstrate that HFCBSS has the potential to be effective in a variety of sonography programs.

Preclinical sonography students rated HFCBSS as a highly preferred learning tool upon completing the academic year. Although the HFCBSS was a highly rated learning option, it took on a lower rating once students began clinical rotations. In some ways, the difficulty in pushing on the mannequin to get an image was not lifelike and the clarity of the images was unlike those obtained from an actual patient. These features of the early experience using the HFCBSS may have been less noticeable to a beginning student, but once clinical rotations began, the difference was noticeable. Initially, students indicated the importance of using HFCBSS to allow for practicing their examinations, at their own pace. This certainly was not possible during a clinical rotation.

Peer scanning is often highly rated given that it allows for experiencing the natural feel of pushing the transducer against the skin and using the postprocessing functions on an ultrasound equipment system. It is important to underscore that peer scanning has an important place in the preclinical educational setting. It is especially important as the main educational method for gaining experience with examinations of the thyroid, carotid, and so on, given that the HFCBSS did not provide these. This choice by the educational programs to retain labs that only had peer scanning and LFSS may have contributed to students’ higher ratings, based on those required scanning sessions. Physiotherapy students who engaged in simulated peer evaluations provided similar ratings. Students in that study ranked the use of colleagues for training purposes as valuable prior to clinical placement. 9

In a study by Canty et al., 10 50 medical students were given pre- and posttest exams on a CAE HFCBSS for cardiac imaging and were surveyed about their educational experience. The majority of the medical students, in that study, indicated that simulation raised their interest in learning anatomy, as well as sonography. Those students also indicated that they would have preferred to have more exposure to the technology, as it applied to other anatomical regions, such as the abdomen and pelvis (the lab was cardiac only). 10 In the present study, the sonography students were provided with preclinical simulator training and this was so early in their education that it was hard for them to compare the experience to other options available. Weidenbach et al. 11 also conducted a survey of five students, using an echocardiography computer simulator, with the goal of mastering 3D cardiac anatomy. The faculty in that study compared simulation with traditional education resources and noted an improvement in the students’ handling of transducers and mastering spatial orientation. 11 This is another important facet of this type of educational research: obtaining feedback from the faculty and clinical instructors on the preparedness of students who truly engage with simulation opportunities (both instructor-led and independent sessions).

An important facet of this multi-site educational research was that faculty were able to use the HFCBSS and the assessment tools as best suited their respective curricula. The control cohort of students in each educational site provided median assessment outcome scores for comparison. Across the programs, the outcome data were collected in a similar manner, but the student labs were conducted slightly differently. Most of the nuances in providing DBER were due to the faculty-to-student ratio, which was challenging but also reflects staffing situations that can impact sonography programs. A recent meta-analysis of implementing simulation across curricula examined the effectiveness of different methods of adding simulation and how those methods were implemented across a variety of programs in assisting students in mastering complex skills. 12 Some of the strongest effects were found for the use of high-fidelity simulators, using mixed methods (simulators and other learning methods), using live models, and providing scaffolding support from an instructor. 12 Furthermore, the researchers ensured that debrief and feedback sessions were provided for select labs and at the conclusion of all assessments, as this is an essential part of the research. It is also important to emphasize the significance of the feedback and debrief sessions for both educators and learners, as this was highlighted as a critical factor by Issenberg et al. 3 In a study by Mohammad et al., 13 residents who were trained on simulators noted that feedback and debrief were an important part of their learning process and clinical mastery for OB/GYN sonography.

Although some of the data collection was disrupted by the COVID-19 pandemic, it did fortuitously identify that junior students in two of the educational sites were not meeting the expected OB 12-week and GYN-TV median assessment scores. This is important information for educators to discover so that they can provide appropriate remediation when necessary. At site 1, these initial assessment scores allowed faculty to make the determination that students needed an educational intervention. The re-evaluation of students in site 1 demonstrated that they were, subsequently, able to achieve the assessment “benchmark” of the previous cohorts. Instructors were able to reference assessment templates to identify where students were not meeting expectations. Once this had been identified, additional guided practice was implemented. This has likewise been addressed by faculty training medical students in sonography. In an essay on ways to integrate sonography training in medical school, a specific point was made about remediation of skills. The authors stated that HFCBSS, LFSS, and peer scanning of a colleague allow students to perfect or remediate sonographic skills while providing patient safety. 14 Comparing students’ assessment outcome scores helps to prevent missed abnormalities by flagging deficiencies that the faculty can address. 14 Preparing these students for what resulted in a clinical disruption, due to the pandemic, provided added lab time and re-assessments that were critical for these students to achieve clinical mastery.

Limitations

The research design was quasi-experimental, which carries inherent threats to internal and external validity. Educational research, by its nature, is hard to conduct since all students should be afforded the educational treatment. The control students were provided instruction after they were assessed on the HFCBSS. It was indeed unfortunate that assessment outcome data were missing for some sonographic examinations, across different educational sites. These issues, as well as the interruption caused by the pandemic, were unavoidable. This work cannot be generalized beyond the student groups enrolled in the study.

Conclusion

This educational research project was conducted across three different educational sites, with sonography programs that had varied curricula, and it demonstrated that high-fidelity simulation was a valuable asset to their preclinical training of students. The use of HFCBSS, LFSS, and peer scanning of a colleague had an impact on sonography students enrolled in this research. The use of preclinical simulation activities has the potential to aid in faculty assessment of sonography students’ preclinical readiness. Although these results are exclusive to the students in this research, this type of research should be repeated in an effort to seek concurrence.

Supplemental Material

sj-docx-1-jdm-10.1177_87564793221123020 – Supplemental material for Utilizing Simulation as a Means to Teach Diagnostic Medical Sonography: A Multi-Site Discipline-Based Educational Research Project

Supplemental material, sj-docx-1-jdm-10.1177_87564793221123020 for Utilizing Simulation as a Means to Teach Diagnostic Medical Sonography: A Multi-Site Discipline-Based Educational Research Project by Kevin D. Evans, Sundus H. Mohammad, Nicole Stigall-Weikle, Qian Yang and Carolyn M. Sommerich in Journal of Diagnostic Medical Sonography

Footnotes

Acknowledgements

The authors acknowledge the hard work and commitment to educational excellence of Dora DiGiacinto, Robin White, Jennifer Bagley, Rachel Pargeon, Oxana Gishyan, and Angela Butwin. The authors also thank their biostatistician Menglin Xu, PhD.

Ethics Approval

The protocol was reviewed by each university’s Institutional Review Board (IRB) and both boards determined that the project was exempt as Category 1 research, which is research conducted in established or commonly accepted educational settings, involving normal educational practices, which, in this case, was research on the effectiveness of different instructional methods.

Informed Consent

Verbal consent was obtained from each participant.

Animal Welfare

Guidelines for humane animal treatment did not apply to the present study.

Trial Registration

Not applicable.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was funded by a grant from Inteleos/ARDMS/APCA.

Peer Reviewer Guarantee Statement

The Editor/Associate Editor of JDMS is an author of this article; therefore, the peer review process was managed by alternative members of the Board and the submitting Editor/Associate Editor had no involvement in the decision-making process.
