Abstract

Amid rapid advancement and ongoing debate, Generative Artificial Intelligence (GenAI) is reshaping the design field. This paper explores how AI impacts various aspects of user experience (UX) design, from creative tasks to research activities. To clarify and analyze these developments, the paper introduces the AI4UX framework, which captures the complex relationships and multiple scenarios of interaction between design practitioners and AI. This framework highlights the dual role of GenAI as both a material and a tool, examining its implications in creative design and research contexts. Through the AI4UX lens, we further explore how to view and assess the integration of GenAI into UX design, along with the opportunities and challenges it presents. First, GenAI is explored as a design material, with a focus on end-users’ perceptions of intelligence. Next, we highlight the importance of protecting human creativity when AI is used as a design tool. For researchers, the need for meaningful transparency is emphasized when using AI as a research tool. We also discuss GenAI’s potential as an intelligent evaluation agent that supplements real users. Following this, we consider the future possibilities of AI-empowered UX research. Lastly, based on the AI4UX framework, we explore the heightened competency requirements for design practitioners in the era of AI, aiming to capture emerging design paradigms. This framework provides a foundation for understanding how the design industry and academia adapt to these transformations. We aim to empower design students and practitioners to better understand how to navigate AI’s evolving role in design, while ensuring the core principle of human-centered design is consistently upheld.

Introduction

The design industry is undergoing a major shift as GenAI (Generative Artificial Intelligence) increasingly permeates every stage of the design process—from ideation to execution (Fang et al., 2025; Figoli et al., 2025; Yu, 2025). Both specialized design tools and general-purpose AI platforms are deeply involved in this ongoing transformation. Figma introduced the tagline “Pushing Design Further” and launched Figma Make at Config 2025, an AI-powered tool that transforms ideas and existing files into interactive prototypes (Field, 2025). Meanwhile, OpenAI introduced a new integration that turns ChatGPT brainstorms directly into FigJam diagrams (Zhang, 2025). As these developments unfold, a more robust and well-established environment for human–AI collaboration in design is beginning to take shape.

In fact, for modern designers, the process of generating creative ideas and the medium for implementing them have continuously evolved. Traditionally, designers relied on craftsmanship and embodied experience, while the computational era introduced digital tools for problem-solving (Maeda, 2017). With the rise of AI and Large Language Models (LLMs), the design industry is undergoing yet another evolution. Amid these changes, what remains constant for designers is design thinking, focusing on understanding user needs to create innovative solutions (Dorst, 2011). These human-centered principles transcend design disciplines. This paper focuses on the “user-centered” design paradigm, specifically in the User Experience (UX) design domain, exploring how AI impacts the process while avoiding overly broad or vague discussions.

This article provides an overview of how AI is evolving within the design process and shaping new design patterns. We introduce the AI4UX framework by addressing the questions of what to design and how to design. The framework considers how this transformation is reshaping both the objects being designed and the design process itself, including design research methods. Design evaluation is as crucial a stage as design generation, and it becomes even more critical when evaluating GenAI-based design, such as AIGC (AI-Generated Content). By discussing human–AI collaborative design, this work ultimately emphasizes the augmentation of human capabilities. We examine new patterns emerging from the collaboration between human designers and AI, and we aim to lay a theoretical foundation for how design capabilities can be redefined in the new era.

Introducing the AI4UX Framework

Theoretical Perspective and Framework Construction

As GenAI increasingly participates in the design process and is frequently the very subject being designed (Yu, 2025), epistemological questions about human–AI collaboration are no longer obscure philosophical concerns but practical issues. Across industry and academia, increasing attention has been paid to how understanding of human–AI collaboration is systematically constructed.

Existing frameworks have made important contributions by pragmatically structuring human–AI collaboration into distinct design stages and examining it across each stage. Some draw on established models such as the Double Diamond (Fang et al., 2025), while others adopt industry-aligned UX workflows (Li et al., 2024), with both examining the division of task allocation between human designers and AI throughout each stage. In other words, these studies are grounded in epistemological assumptions and frame human–AI collaboration within predefined stages, delineating the respective roles of designers and AI in specific tasks from brainstorming to concept expression. While such analyses provide valuable insights, they are less suited to discussing how the influx of GenAI is reconfiguring design practice itself, where design objects, creative processes, and evaluative practices are continuously constructed and reshaped.

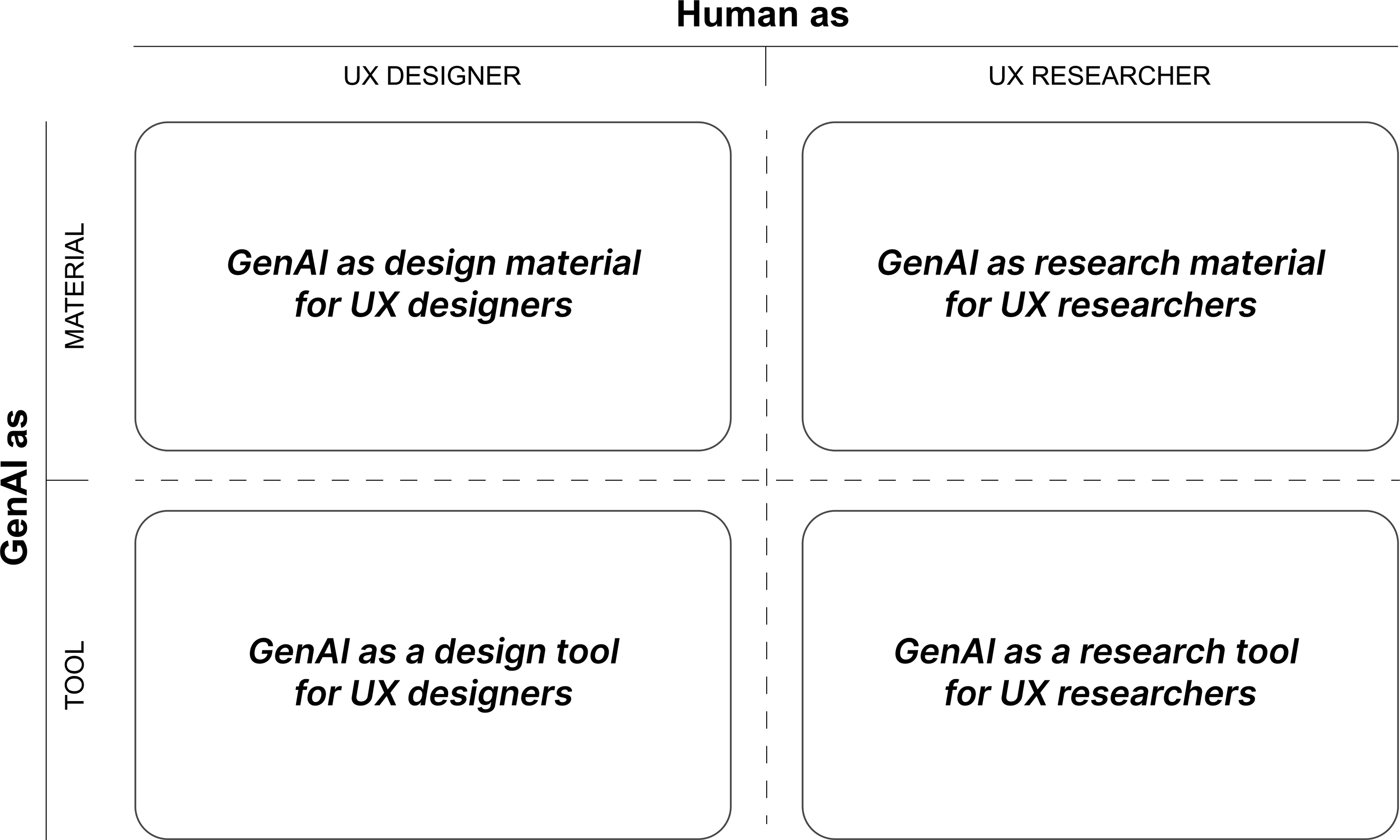

We therefore propose a framework grounded in a constructivist perspective (Creswell, 2009) to support the problematization (Alvesson & Sandberg, 2011) of AI-mediated UX practice. This study addresses the dual positioning of GenAI as both a design material and a design tool. While these notions are not new in isolation, their coexistence creates a distinctive challenge in the design domain. Specifically, both the tools of work and the objects of design are undergoing simultaneous transformation (Yu, 2025). Furthermore, we address a critical gap in current discourse by explicitly distinguishing between the practices of UX designers and UX researchers. UX research, though foundational to the field, is often marginalized in current discussions, as related frameworks tend to prioritize creative design processes. However, the importance of UX research in the tech industry is increasing dramatically as GenAI and AIGC become pervasive.

GenAI Attributes and UX Roles

GenAI as a Design Material

As a new design material, GenAI has become deeply embedded in everyday products and services, from smartphones to robots. With the rapid evolution of AI competencies, designing with AI as a material has extended beyond simply embedding AI features into existing devices to constructing new product species from the AI ecosystem. A new wave of AI-native products is emerging, such as the Ai Pin and Rabbit R1 (Figure 1). Even though it will take a while for us to figure out the proper place of these emerging artifacts (Norman, 2010; Pierce, 2024a, 2024b), they represent a growing range of possibilities in terms of form, functionality, and interfaces of human–AI interaction.

Figure 1. New product species from the AI ecosystem (images sourced from publicly available product pages)

AI services and products have become an integral part of daily life. Alongside basic AI features like the AI-enabled photo eraser, major phone manufacturers have introduced unique AI functionalities. Apple’s Genmoji allows users to create custom emojis. Huawei’s AI Air Transfer and Samsung’s Instant Circle Search both feature gesture-based interactions for seamless functionality (Figure 2). In addition to software, the global AI-enabled hardware market continues to grow, particularly in consumer electronics (Global Market Insights, 2025). Products such as NOMI, LOVOT, and BubblePal, which offer emotional companionship, are gaining popularity. Efficiency tools such as PLAUD.AI NOTE (AI note taker) and health management devices like RingConn are also emerging (Figure 1). With the improvement of user experience, more and more AI-enabled products have achieved commercial success.

Figure 2. AI-enabled applications in smartphones (images sourced from publicly available manufacturer pages)

Compared with wood, plastic, and metal in the industrial era, or pixels, wireframes, and flows in the digital era, AI is a challenging new design material to work with because of the uncertainty around its capabilities, the complexity of its outputs, and the maturity of the technology in markets (Dove et al., 2017; Yang et al., 2020). For example, designers face challenges in understanding AI’s limitations, explaining its behavior to users, and determining accountability for errors with appropriate solutions (Yang et al., 2020).

GenAI as a Design Tool

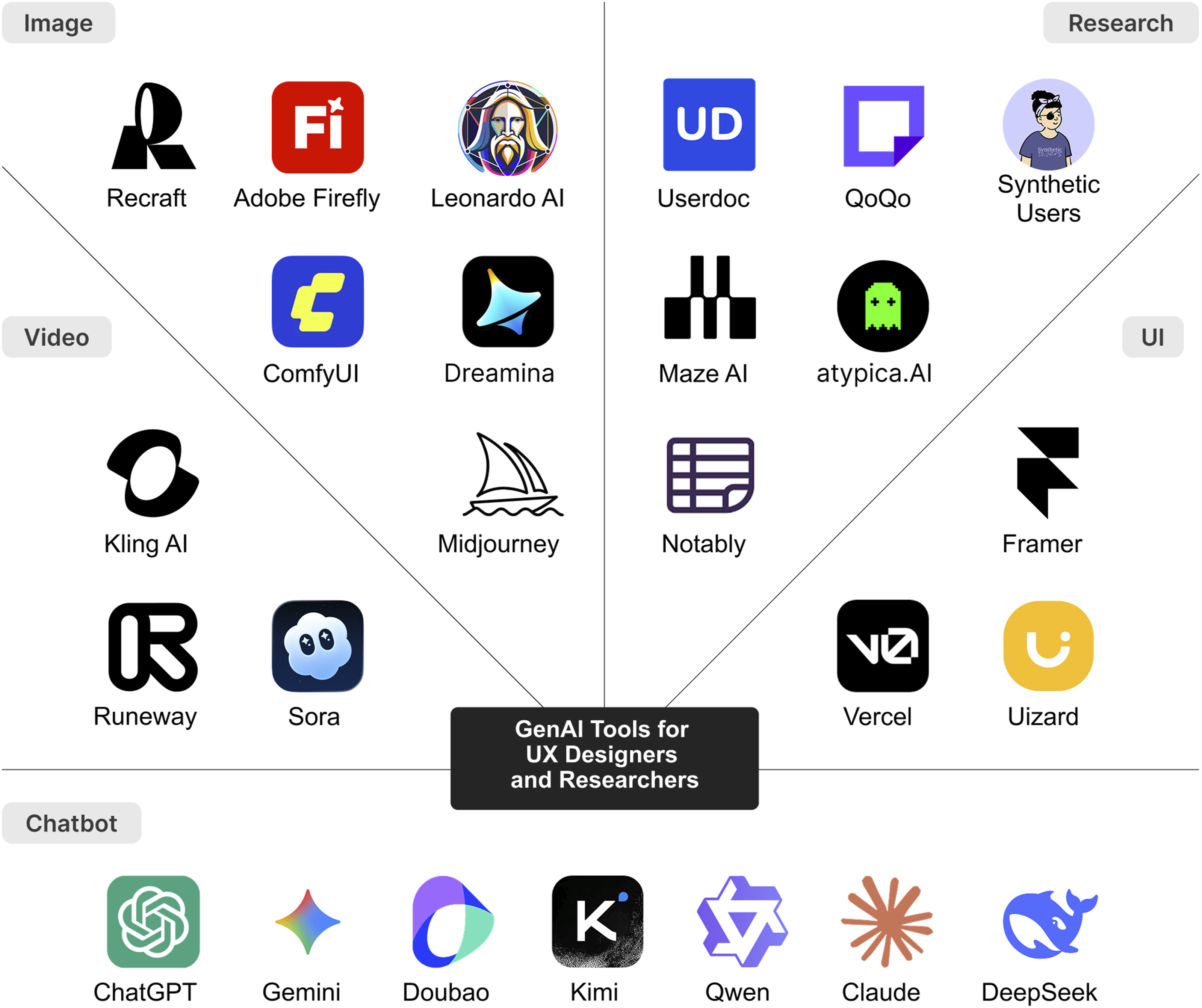

The rise of AI is also gradually reshaping how design is done. Since the boom of GPT, AI-enabled tools and plug-ins have become essential in the design industry. As shown in Figure 3, AI chatbots, such as ChatGPT, Gemini, and Doubao, focus on text-based interactions. There are also platforms dedicated to image generation, such as Midjourney and Dreamina. In the meantime, existing digital creative tools, such as Adobe products, integrate AI-powered features through Adobe Firefly. Additionally, AI research platforms are proposed to generate insights and even final reports. Specialized tools for video and UI generation are also emerging. From general-purpose to specialized platforms, UX designers and researchers have a broad range of tools at their disposal.

Figure 3. The spectrum of GenAI tools (images sourced from relevant public websites)

Much research increasingly positions AI as a co-creator during the design process by exploring the mechanisms of AI-supported creativity (Figoli et al., 2022; Guo et al., 2023; Yu, 2025). For example, by leveraging vast knowledge bases, AI systems can generate novel, often unconventional design suggestions that spark inspiration (Chandrasekera et al., 2024). Especially in visual outputs, the uncertainty in AI-generated images can offer serendipitous cues that stimulate divergent thinking (Chandrasekera et al., 2024; Liu et al., 2024). Therefore, it is not surprising that design practitioners have begun to view AI as a creative partner, or even a team member (Knearem et al., 2023; Li et al., 2024). Within this context, recent research has further examined various aspects of human–AI collaboration, including specific interaction modalities and potential impacts on design workflows (Kuang et al., 2023; Kwon et al., 2023).

Although “human–AI collaboration” is widely used, some researchers question its accuracy. One critic argues the term erases the exploitative relationship between AI producers (such as the data labeler) and end users (Sarkar, 2023). Furthermore, whether AI output constitutes genuine creativity or merely pseudo-creativity remains under debate (Ivcevic & Grandinetti, 2024; Runco, 2023).

We believe that design practitioners who describe AI as a creative assistant often do so not out of naivety or exaggeration. Rather, their views stem from lived experiences—moments when unexpected insights emerge through interaction with AI. At the same time, there is a widespread recognition within the design community that AI tools cannot be relied on entirely. Such positive effects, improving efficiency or boosting creativity, are limited and may also involve certain risks, such as design fixation, hallucination, and bias (Hu et al., 2024; Sun et al., 2024; Wadinambiarachchi et al., 2024). Recent empirical evidence highlights a simultaneous enhancement and erosion of creative skills facilitated by AI. GenAI can boost creative performance in the short term, while diminishing collective diversity (Doshi & Hauser, 2024) and potentially hindering individual creativity once assistance is withdrawn (Kumar et al., 2025). These findings represent a structural trade-off between immediate creativity enhancement and the long-term development of creative capacity.

In this paper, we refer to AI as a design tool, rather than a co-creator. This is not a denial of AI’s value, but a call for more measured expectations about its role in design, particularly in the long-term evolution of human intelligence and augmented humanity. Moreover, we aim to approach AI-enhanced design from a broader, systemic perspective. After all, design is a creative endeavor—but not only that (Fallman, 2008; Lebuda & Benedek, 2023).

Distinguishing UX Designers and Researchers

Different UX roles and tasks require different types of human–AI collaboration, and the similarities and differences between UX designers and UX researchers in the design industry need further exploration. For more than two decades, UX has been used as an umbrella term for various ways of understanding and studying the quality of interaction with products or services (Bargas-Avila & Hornbæk, 2011). In the design industry, jobs related to UX design vary widely. Some, including Interaction Designer, Experience Architect, and even UX/UI Front End Developer (Lauer & Brumberger, 2014), are closer to creating products, services, and systems. Others, including Usability Engineer, User Researcher, and Experience Specialist, are more inclined toward understanding how people interact with these artifacts. One survey gathered 114 valid responses from UX professionals who self-identified their roles: 74% were UX designers, 16% were UX researchers, and the remainder held roles such as UX writer and UX manager (Feng et al., 2023). UX designers make up the largest group in related industries, while UX researchers, though fewer, play a crucial role in the user-centered design process. UX designers focus on proposing innovative solutions, while UX researchers offer strategic guidance and evaluate solutions. The two roles form a loop, driving the iteration of design. However, the two roles are increasingly overlapping. With the arrival of AIGC and AGI (Artificial General Intelligence), more and more designers may find they need to analyze user personas or test their work with GenAI support. We believe that in discussing how AI integrates with UX design, it is necessary to consider not only how AI enhances design innovation but also its role in enhancing user research.

The AI4UX Framework

Based on the above, we propose the conceptual AI4UX framework (see Figure 4), where “4” represents the four domains arising from AI as both material and tool, intersecting with UX designers and researchers. AI4UX could offer a broader perspective to capture new patterns and investigate the evolution of the designer–AI relationship.

Figure 4. The AI4UX framework

GenAI as a Material for Designers

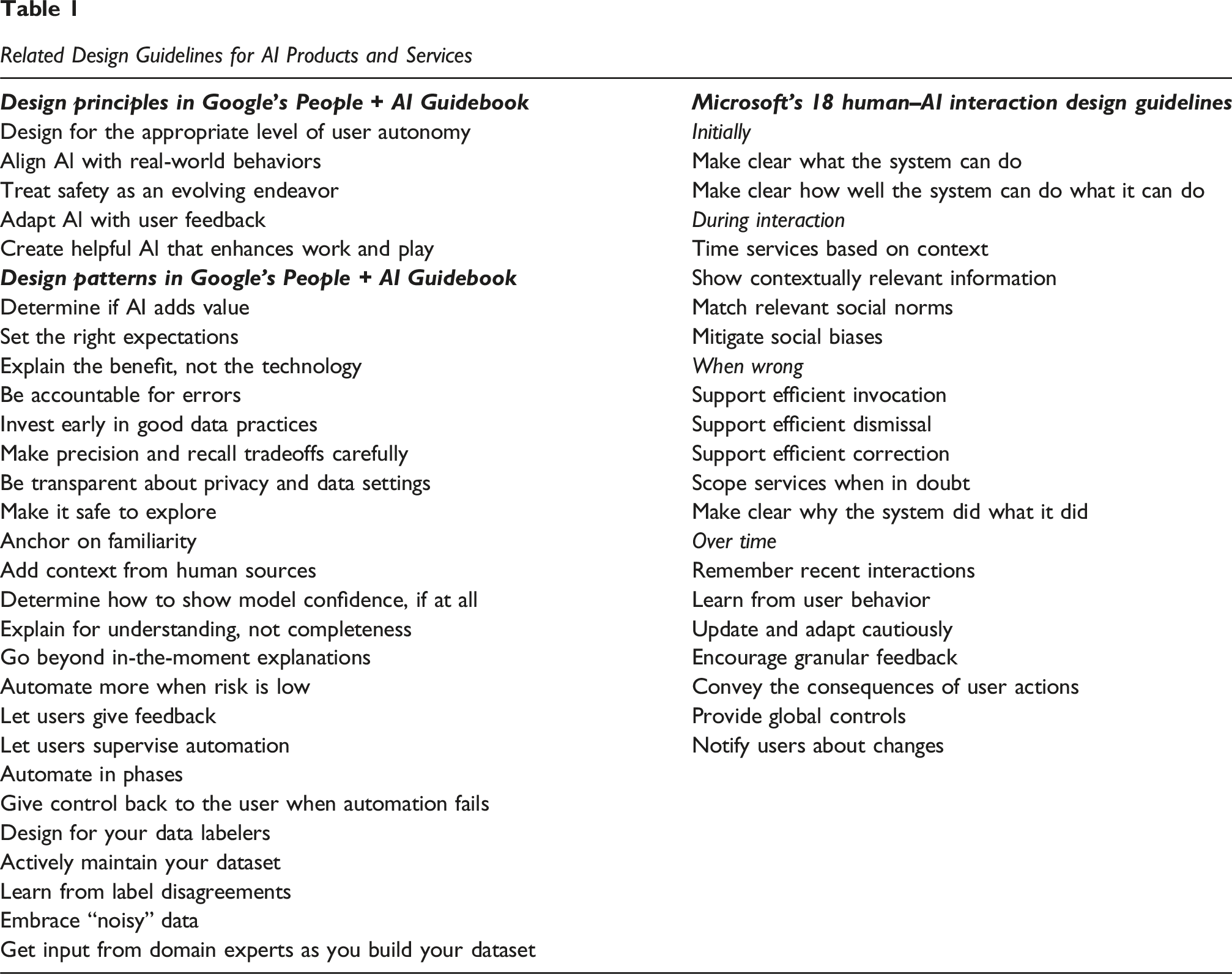

To help design practitioners navigate AI as a new design material, one line of research outlines what AI systems are fundamentally capable of (Jin et al., 2021; Yildirim, Oh, et al., 2023). In industry, resources from tech giants, such as Google’s People + AI Guidebook (Yildirim, Pushkarna, et al., 2023) and Microsoft’s 18 human–AI interaction design guidelines (Amershi et al., 2019), also offer actionable guidance for real-world AI product development (see Table 1 in the Appendix).

In academia, there is a growing exploration of how AI technology can promote diversity and inclusion. Some research examines the potential of AI-infused digital art therapy and music therapy (Du et al., 2024; Sun et al., 2024). Other studies focus on the needs of specific, often overlooked groups, such as older adults (Huang et al., 2025), left-behind children (Shi et al., 2025), and people with disabilities (Adnin & Das, 2024), discussing their demand for AI services. AI is increasingly becoming a common design material for UX designers, bringing both challenges and opportunities. We are witnessing a future full of possibilities, where designers leverage AI to create a better world.

GenAI as a Tool for Designers

Compared to practitioners in other fields, designers need to handle multimodal information, including text, images, videos, and even 3D models, relying on various digital design tools that are frequently updated and iterated. With the advent of AI, this diversity and the pace of iteration have intensified. In addition to general-purpose LLM platforms such as ChatGPT and image-generation tools like Midjourney, tools specifically tailored for UI design, such as V0, are emerging. Even established design tool vendors, such as Adobe and Figma, have incorporated AI-powered features through Adobe Firefly and Figma Make, respectively. Moreover, these tools are becoming increasingly interconnected, with MCP (Model Context Protocol) enabling integration between AI applications and external systems.

This vibrant and rapidly evolving ecosystem is driving transformation in the design field, with many more specialized tools catering to specific designer groups and design tasks. Examples include ProductMeta for metaphorical product designs (Zhou et al., 2025), Inkspire for sketching in product design processes (Lin et al., 2025), and PlantoGraphy for iterative landscape rendering (Huang et al., 2024).

GenAI as a Material for Researchers

Recently, the use of image-generative AI (IGAI) in participatory design for future living spaces has been further explored. Instead of merely having users assess designers’ concepts, these studies allowed users to directly use generative AI to imagine their own future communities. In a study with remote Australian co-housing residents, IGAI acted as “a catalyst for imagination,” enabling individuals to create visualizations that provoke questions through a diverse, context-driven dialogue (Tao & Vyas, 2025). Similarly, another study focused on residents’ involvement in upgrading Los Angeles’ River Garden Park. IGAI was seen as a “boundary object,” with imperfect images found to better spark discussions, bridge cultural gaps, and foster a shared design language (Guridi et al., 2025). In our own Future Kitchen Appliance Design practice in 2022, we asked consumers to describe their vision for future living scenarios in the kitchen and then used AI to quickly generate corresponding images for concept evaluation. This allowed designers to receive faster feedback on their concepts in the early stages. In this context, AI-generated content served as “situational stimuli” to evoke and observe user reactions and perceptions. The above cases demonstrate a turn in participatory design, showcasing how AI, as a form of design material, can be utilized by UX researchers.

GenAI as a Tool for Researchers

LLMs have been widely applied to streamline classic user research methods. According to the Nielsen Norman Group (NN/g), AI can accelerate interview research across planning (e.g., drafting interview questions), conducting (e.g., notetaking during interviews), and analysis (e.g., transcribing, summarizing, and cleaning data) (Moran & Rosala, 2024). Furthermore, some studies explore the potential of GenAI in simulating users based on synthetic data. For example, it can facilitate the creation of personas (Schuller et al., 2024) and journey maps (Mei et al., 2025). It can even be used to directly predict user behavior (Akbari et al., 2023). In addition, conversational AI agents, such as chatbots for online informed consent (Xiao et al., 2023), can foster a more comfortable and effective experiment process.

However, much like how designers use AI-powered design tools, both industry and academia recognize AI’s growing potential as a UX research tool, alongside its limitations. No single platform covers all needs; a mix-and-match approach is often necessary for UX researchers. Additionally, concerns such as accuracy, bias, and accountability remain critical, regardless of the tools used (Liu & Moran, 2025; Mei et al., 2025; Zhang et al., 2025).

Capturing the New Patterns

Within the AI4UX framework, evaluation becomes a crucial part of designing with GenAI. This section takes the lens of mutual evaluation to discuss how to assess the integration of GenAI into the UX field. First, we examine how humans evaluate GenAI products or services, with evaluation metrics expanding beyond traditional usability. Second, we explore how GenAI evaluates users and design solutions, analyzing whether its impact on the evaluation process pushes boundaries or oversteps them. Lastly, we discuss what is essential in human–AI collaborative design.

How Humans Evaluate GenAI Products or Services: Not Limited to Usability

Evaluating GenAI as a Design Material: Perceived Intelligence

Usability is the foundation of user experience, typically referring to the ability of users to use a product or system efficiently, effectively, and pleasantly (ISO, 1998; Nielsen, 1994). Related standard questionnaires, such as SUS (System Usability Scale), have been widely used to assess users’ overall perception of a product’s usability (Bangor et al., 2008). However, concerns about the limitations of common usability measures persist, with new proposals constantly emerging (Hornbæk, 2006). For specific product categories, researchers often explore more targeted evaluation metrics. For example, fluency is considered a key factor in the user experience of smartphone applications (Ge et al., 2024). In human–robot interaction (HRI), beyond performance metrics like task completion, the human perception of robots is gaining increasing attention, such as whether a robot appears human-like, lifelike, approachable, intelligent, and safe (Bartneck et al., 2009).
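To make the SUS measure mentioned above concrete, the following is a minimal sketch of the standard SUS scoring rule: ten items rated from 1 to 5, odd-numbered items contributing the rating minus 1, even-numbered items contributing 5 minus the rating, and the sum scaled by 2.5. The function name `sus_score` and the sample ratings are illustrative assumptions and do not come from the studies cited here.

```python
def sus_score(responses):
    """Return the 0-100 SUS score for one respondent's ten 1-5 ratings.

    `responses` is ordered by questionnaire item (item 1 first).
    The function name and input format are illustrative assumptions.
    """
    if len(responses) != 10:
        raise ValueError("SUS needs exactly 10 item ratings")
    if any(r < 1 or r > 5 for r in responses):
        raise ValueError("each rating must be between 1 and 5")

    total = 0
    for index, rating in enumerate(responses):
        if index % 2 == 0:
            # Items 1, 3, 5, 7, 9 are positively worded: higher is better.
            total += rating - 1
        else:
            # Items 2, 4, 6, 8, 10 are negatively worded: lower is better.
            total += 5 - rating
    # The raw sum ranges from 0 to 40; scaling by 2.5 maps it to 0-100.
    return total * 2.5


# Hypothetical respondent: agrees with positive items, disagrees with negative ones.
print(sus_score([4, 2, 4, 2, 4, 2, 4, 2, 4, 2]))
```

A single aggregate score like this summarizes overall perceived usability only, which is one reason the text argues for complementary metrics such as perceived intelligence.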

Beyond usability and fluency, we believe that perceived intelligence is becoming an increasingly crucial core metric for evaluating the user experience of intelligent products and services. For end users, the intelligent features embedded in products or services must translate into a genuinely perceptible intelligent experience. However, this description remains rather broad and requires further definition within specific contexts. First, user expectations of intelligence vary with individual experience, cultural background, and education level. Second, AI-driven products and services are diverse and present intelligent experiences in distinct ways, making it worth exploring both their commonalities and differences. Some UX research teams have begun to address this. For instance, at the ODC2024 Developer Conference, OPPO introduced a new metrics framework for perceived intelligence. It outlines primary indicators and separately interprets secondary indicators for different AI features in ColorOS, their mobile operating system, ensuring a more effective process for identifying UX problems.

We argue that evaluating AI products or services for general users goes beyond usability, though usability remains foundational. In other words, only when usability is sufficiently met can we further discuss perceived intelligence. Once functional efficiency is ensured, higher cognitive factors come into play, enhancing users’ overall satisfaction.

Evaluating GenAI as a Design Tool: Creativity Support

As previously mentioned, UX practitioners are confronted with a rapidly evolving and expanding market of AI-powered design tools. The diversity of this market is reflected not only in the number of tools but also in the variety of interaction interfaces. The optimal interaction paradigms for AI-native design tools have yet to be definitively established. Text-based AI chatbots (such as ChatGPT) primarily use conversational interfaces, and UI design tools (such as Figma Make) traditionally rely on canvas-based operations, while image generation tools adopt various forms, including conversational (e.g., Midjourney), plugin-based (e.g., Photoshop’s Generative Fill), and node-based interfaces (e.g., ComfyUI). Thus, from an operational perspective, designers face the challenge of quickly mastering new AI-enhanced tools. At the same time, they also struggle with the difficulty of switching between AI-enhanced and traditional design tools in their workflow.

Additionally, key challenges for designers using GenAI tools arise from a cognitive perspective. One concern is design fixation, where AI outputs become implicit references, causing designers to fall back on established patterns of thinking (Chen et al., 2025; Wadinambiarachchi et al., 2024). There is also a risk of content homogeneity. Research indicates that this effect intensifies over time, with prolonged reliance on AI diminishing independent problem-solving abilities (Zhou et al., 2026). Furthermore, designers may experience a diminished sense of ownership over their design work, with reduced control and reflection during the creative process, potentially leading to the underdevelopment of foundational design skills (Li et al., 2024; Naqvi et al., 2025).

Essentially, both discussions on the operational and cognitive aspects highlight concerns about the weakening, damage, or even replacement of human creativity. The most immediate benefit of AI-enhanced tools is efficiency. However, it is important to recognize that efficiency gains are meant to unleash creativity. A key priority for designers is maintaining creative control while leveraging AI’s capabilities (Khan et al., 2025). In related studies, researchers have measured Perception of Creativity Support, alongside the traditional SUS, to evaluate their proposed AI-enhanced design tools (X. Shi et al., 2025; Q. Zhou et al., 2025). This metric is typically measured through the Creativity Support Index, a psychometric survey (Cherry & Latulipe, 2014). Originally developed for general creativity support tools, this metric remains relevant for AI-enhanced design tools.
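As an illustration of how a Creativity Support Index score is typically computed, the sketch below follows our reading of the scoring described by Cherry and Latulipe (2014): six factors, two agreement statements per factor, and weights taken from how often each factor is chosen across 15 paired comparisons. The 10-point rating scale, the dictionary layout, and the factor key names are assumptions made for this example and should be checked against the original instrument before use.

```python
# Factor names follow Cherry & Latulipe (2014); the key spellings are assumptions.
CSI_FACTORS = (
    "Collaboration", "Enjoyment", "Exploration",
    "Expressiveness", "Immersion", "ResultsWorthEffort",
)


def csi_score(ratings, counts):
    """Return a CSI score for one participant.

    ratings: factor -> (statement_1, statement_2), each assumed to be
             rated on a 10-point agreement scale.
    counts:  factor -> number of times the factor was preferred in the
             15 paired-factor comparisons (0 to 5 per factor).
    """
    if sum(counts[f] for f in CSI_FACTORS) != 15:
        raise ValueError("paired-comparison counts must sum to 15")

    weighted_sum = 0
    for factor in CSI_FACTORS:
        factor_score = sum(ratings[factor])          # at most 20 per factor
        weighted_sum += factor_score * counts[factor]
    # Maximum weighted sum is 20 * 15 = 300, so dividing by 3 gives at most 100.
    return weighted_sum / 3.0


# Hypothetical participant data, for illustration only.
example_ratings = {f: (8, 7) for f in CSI_FACTORS}
example_counts = dict(zip(CSI_FACTORS, (1, 3, 5, 4, 2, 0)))
print(csi_score(example_ratings, example_counts))
```

Because the weights come from each participant's own factor preferences, two tools with identical raw ratings can still receive different scores, which suits the document's point that creativity support depends on what designers value in a given task.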

In creative contexts, the true value of AI lies in safeguarding and stimulating human creativity. Consequently, the interface layouts and interaction modalities of GenAI tools should strive to protect, stimulate, and amplify this creative potential. This fosters a context where human ingenuity is enhanced, not overshadowed, by the technology itself.

How GenAI Evaluates Users and Design: Breakthrough or Overstep

GenAI as a Research Tool: Meaningful Transparency

As summarized by NN/g, AI can assist in processing user interview data (Moran & Rosala, 2024). This is echoed by numerous studies that explore the application of ChatGPT within the grounded theory approach, comparing its coding results with manual coding. These empirical studies not only demonstrate that LLMs hold significant potential for qualitative data analysis, but also provide practical guidance for implementation (Yue et al., 2025; Y. Zhou et al., 2024). While AI can enhance coding efficiency in qualitative analysis, UX practitioners continue to question its impact on quality and consistency (Jiang et al., 2021; H. Zhang et al., 2025). To address such concerns, PaTAT was introduced as a human–AI collaborative tool for qualitative coding, using an explainable interactive approach to help users understand the model’s status and rationale (Gebreegziabher et al., 2023). Another study conducted a hands-on workshop where participants used ChatGPT for qualitative coding (H. Zhang et al., 2025). During the workshop, researchers helped optimize prompts and shared effective strategies. After the workshop, participants shifted from a negative to a more positive and inclusive attitude. This shift is attributed to increased transparency and a better understanding of LLMs’ potential (H. Zhang et al., 2025).
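One common way to quantify how closely LLM coding matches manual coding is Cohen's kappa, which corrects raw agreement for agreement expected by chance. The sketch below is offered as one possible measure, not as the method used in the cited studies; the function name and the sample code labels are hypothetical.

```python
from collections import Counter


def cohen_kappa(labels_a, labels_b):
    """Cohen's kappa for two coders who labeled the same items.

    labels_a and labels_b are equal-length sequences of category labels,
    for example one from a human coder and one from an LLM.
    """
    if len(labels_a) != len(labels_b) or len(labels_a) == 0:
        raise ValueError("both coders must label the same non-empty item set")

    n = len(labels_a)
    # Observed agreement: share of items given the same code by both coders.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n

    # Chance agreement: product of each coder's marginal label frequencies.
    freq_a = Counter(labels_a)
    freq_b = Counter(labels_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)

    if expected == 1.0:
        # Both coders used a single identical label; kappa is undefined,
        # so this sketch reports perfect agreement by convention.
        return 1.0
    return (observed - expected) / (1.0 - expected)


# Hypothetical codes assigned to four interview excerpts.
human_codes = ["trust", "trust", "control", "control"]
llm_codes = ["trust", "control", "control", "control"]
print(cohen_kappa(human_codes, llm_codes))
```

Reporting a chance-corrected statistic alongside the prompts and coded excerpts is one small, traceable step toward the kind of accountability that the discussion of transparency calls for.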

These examples prompt us to reconsider what transparency truly means for UX researchers and stakeholders. While there is widespread advocacy for transparency, such calls often remain vague and superficial. We argue that Meaningful Transparency should be emphasized, as it goes beyond information disclosure to ensure understanding and accountability for LLM-generated content (Norval et al., 2022; Suzor et al., 2019). Researchers have highlighted limitations of the transparency ideal, noting that “Transparency does not necessarily build trust” (Ananny & Crawford, 2018). In other words, for GenAI as a research tool, transparency must be critically examined and paired with accountability mechanisms. The UX industry needs traceable, measurable standards and guidelines when adopting GenAI tools, similar to design specifications.

GenAI as a Research Material and Tool: A Supplement to Real Users

Beyond assisting researchers with qualitative data coding, platforms such as Atypica.AI and Synthetic Users now offer fully automated interviews and analysis. These platforms can generate personas or reports entirely without human participants. Atypica.AI builds dynamic consumer models based on social media content and claims 81% response consistency with real users (BMRLab, 2025). Synthetic Users claims that, compared to ChatGPT, its advantages lie in its multi-agent architecture, enhanced contextual understanding, and sustained interaction, resulting in more accurate, contextually rich outputs (Synthetic Users, 2025).

During the concept validation and user testing stage, LLM-based systems can simulate user interactions and feedback to predict potential UX issues, enabling rapid iteration. An example is MOCKLENS, an automated UX research tool that enhances GUI understanding and generates feedback based on synthetic user data (Wang, 2025). Its process and output align with professional UX research standards, aiming to make UX research more convenient and agile. Similarly, there is research on using LLMs for automatic feedback on UI mockups via a Figma plugin, integrated into the design workflow (Duan et al., 2024). Furthermore, SimUser, an LLM-based tool, generates usability feedback by simulating user interactions through two sub-agents: the Mobile Application Agent (MA) and the User Agent (UA) (Xiang et al., 2024). User characteristics and contextual factors influence its outputs.

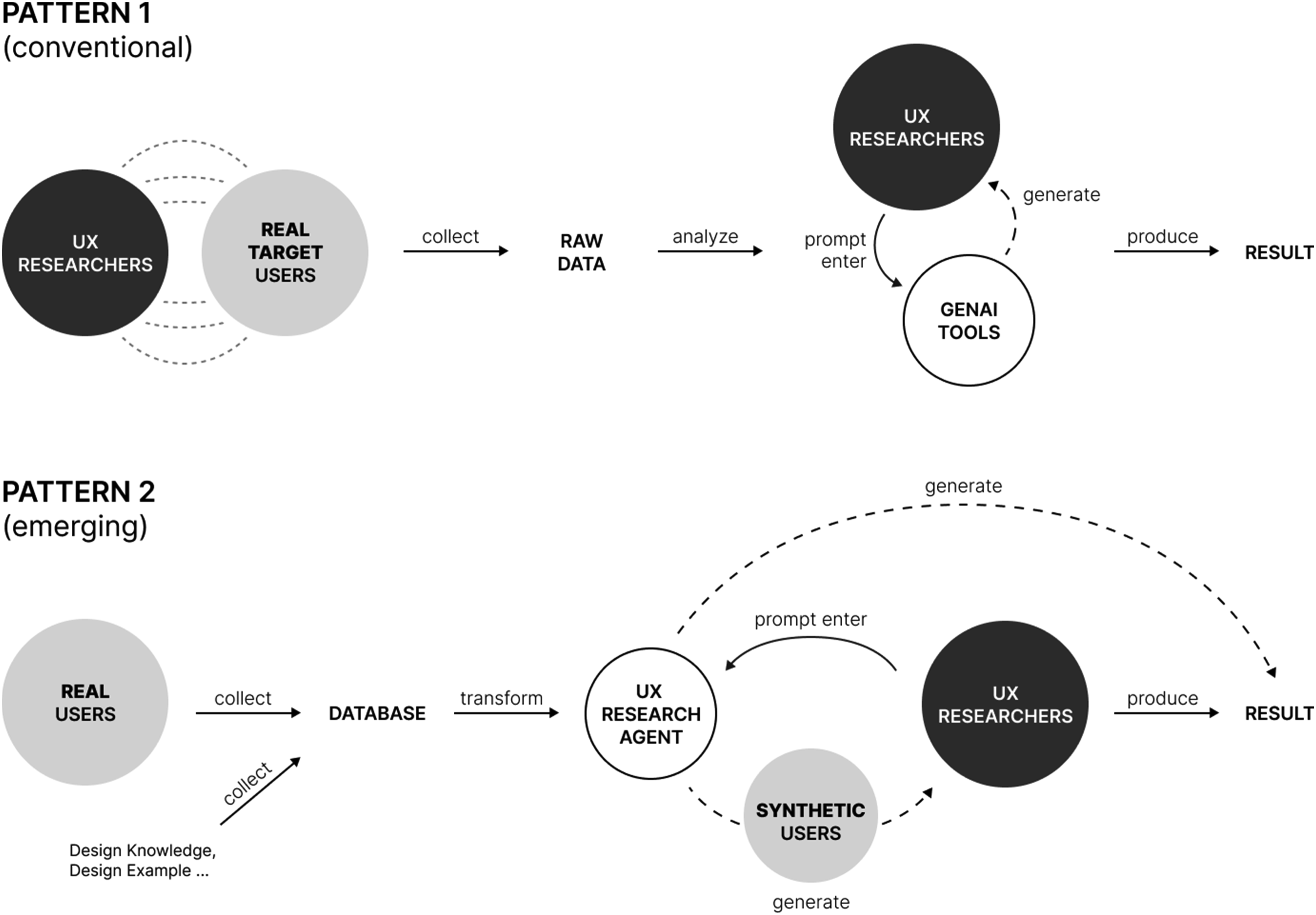

Based on the above, AI has evolved from a supportive tool for processing user data to an intelligent UX evaluation agent. The conventional Pattern 1 organizes and analyzes data collected from real users, while the emerging Pattern 2 mimics real user behavior and cognition to generate results (see Figure 5). In Pattern 2, where GenAI can simulate real users and generate analyzable experience data, or even directly provide insights, the boundary between GenAI as a research tool and material becomes blurred. GenAI is not only integrated into platforms and plugins used by researchers, but also used as research material. Its generated feedback, ratings, images, reports, or virtual users become the data and results for further observation and reflection.

Figure 5. Comparison of patterns in AI-enabled user research

Pattern 2 is highly controversial in the UX field, as it removes real users from the process and sometimes even undermines the influence of UX researchers. Some argue that these practices violate core values of UX research with human participants, such as representation, inclusion, and equity (Agnew et al., 2024). NN/g analyzed synthetic user responses generated by ChatGPT and concluded that they remain superficial and that “Imagined Experiences Are Not Reliable” (Rosala & Moran, 2024). The researchers behind SimUser also acknowledge that while AI-generated and human feedback may overlap to some extent, AI has limitations in replicating human aesthetic sense, experiential knowledge, and understanding of emerging trends (Xiang et al., 2024). From a long-term perspective, potential risks include reinforcing certain stereotypes and oversimplifying identity groups (Wang et al., 2025).

However, this automated UX research indeed offers low-cost and time-saving advantages, allowing for the coverage of a wide user base and diverse usage scenarios in a short time. Some propose using these outputs as preparatory material or rehearsal for future research (Rosala & Moran, 2024). Others suggest leveraging LLMs, acting as synthetic users, to develop and refine hypotheses in the early concept stages (Dillion et al., 2023). Interviews with UX professionals emphasize that this approach is well-suited for training novices or basic error checks, such as spellchecking (Duan et al., 2024). In other words, AI-generated user feedback should be supplementary, not a replacement for real-user experiments. Under these circumstances, research comparing LLMs with human judgments is encouraged, as it helps to clarify consistency, identify strengths and weaknesses, and provide insights into the ethical decision-making of LLMs (Dillion et al., 2023). This will enable UX researchers and stakeholders to use the UX evaluation agent more responsibly.

GenAI for Future UX Research: Mirroring Tomorrow’s Users

There are intense debates within the UX field about whether simulating real users with AI for experiments is a breakthrough in paradigms or an ethical overstep. Research on creating digital identities from personal data to simulate human behavior, thinking, and emotions continues to advance. For example, Second Me is an AI-driven personal agent that organizes and utilizes user-specific knowledge to handle redundant tasks, such as managing browser credentials, autofill data, and unified authentication systems (J. Wei et al., 2025). Similarly, Future You allows users to chat with an AI-powered virtual version of their future selves, tailored to their goals and personal qualities, helping to improve future self-continuity and well-being (Pataranutaporn et al., 2024). Furthermore, generative agents are instantiated to populate an interactive environment, Smallville, a virtual town where 25 AI agents live, displaying realistic individual and social behaviors (Park et al., 2023).

As such, we might envision a new form of UX research that interlaces future user experience with speculative design. Researchers could generate digital avatars of user groups’ “future selves” or “second selves,” placing them in a predefined future community to observe their behavioral responses and emotional feedback. This approach might provide deeper insights into users’ potential needs, acceptance, and experience issues in future technological and social contexts. Alternatively, by engaging in dialogues with these future users, researchers might gain a reflective perspective on the present. Rather than debating whether AI is a substitute or supplement for real users, perhaps we should shift our focus to how AI can create opportunities for experiential vision that were previously beyond our reach. We expect a more innovative application, in which human users and their AI-generated counterparts serve as complementary subjects in the next design creation and evaluation cycle.

In a broader methodological sense, the notion of mirroring tomorrow’s users may serve as a method for future design research. By creating and engaging with future users, or other digital counterparts, designers and researchers are not merely envisioning future UX scenarios, but experimenting with the enactment, negotiation, and affordance of future human capacities within complex socio-technical systems. Such speculative methods can help explore long-term behavior patterns, emerging norms in human–AI interactions, and latent needs that may remain invisible in present-oriented evaluations. In this sense, researchers can engage in exploratory and reflective thinking about how technology might transform and enhance human capacities and well-being in the future.

Designing for Humans and Humanity

Recognizing GenAI’s dual role as both a material and a tool leads to a bold prediction. While not necessarily preferable, it is a plausible future (Dunne & Raby, 2024): AI could simulate and even influence humans’ evaluations of designs created by AI itself. However, we believe that no matter how deeply AI becomes involved in UX design, we cannot risk losing sight of the fact that design ultimately serves human emotions and experiences. In other words, no matter how advanced AI becomes in automating design and user research, real human value remains the baseline.

In this section, we discuss perceived intelligence for general users, the protection of creativity for UX designers, and meaningful transparency for UX researchers. Ultimately, we emphasize one key point: human-centered AI and design for humans. Achieving reliability, safety, and trustworthiness will greatly improve human performance while also fostering self-efficacy, mastery, creativity, and responsibility (Shneiderman, 2022). Furthermore, we consider the potential of AI to evolve into an intelligent evaluation agent, along with its impact on real users and future UX research. This may go beyond human-centered design to humanity-centered experience. It reminds us that we are part of a complex system, where our actions can impact people worldwide and have lasting effects (Norman, 2024). In exploring the innovative use of GenAI within the UX field, we must critically assess whether it oversteps boundaries. The deployment of such innovations in the real world requires utmost caution. The future of UX design should be navigated and shaped in a responsible and impactful manner. However, we must also recognize that AI presents a significant ontological challenge for individuals in the design community. In the next part, we will further explore how UX students and practitioners can equip themselves to address emerging challenges.

Implications for Education and Profession

Implications for Design Education

Design education has been dramatically changed by AI. The Design + Environmental Analysis (DEA) program at Cornell University has introduced a Design Challenge as a required component for all DEA applicants. Applicants must first identify a real-world issue and design a product, space, tool, service, or experience to improve it. Then, they are asked to generate an alternative solution using AI, highlighting the differences between the AI-generated design and their original solution. Additionally, they need to explain how AI’s outputs impact their own design, whether by improving or limiting it (College of Human Ecology, 2025). In this context, AI seems to be positioned as a competitor or counterpart.

In contrast, a more common approach involves using AI in creative activities. The Continuing Education program at Parsons School of Design offers a course titled “AI for Creativity and Leadership,” which covers the fundamentals of AI and its application in creative environments (The New School, 2025). Beyond continuing education, some higher education institutions are exploring the impact and challenges of integrating AI into art and design programs through short-term courses or interdisciplinary workshops (Hwang & Wu, 2025; Yang & Shin, 2025; Yin et al., 2023). However, research indicates that most design schools are adopting a wait-and-see approach to AI integration (Yang & Shin, 2025). Another study echoes this finding: although there have been many practical attempts, a structural integration of AI education into the design curriculum has not yet been achieved (Flechtner & Stankowski, 2023).

The relationship between AI and design students is sometimes antagonistic, other times harmonious. Even in harmonious cases, a foundational methodology for the widespread application of AI in design education is still lacking. However, it must be clear that this AI-enabled shift is inevitable in the industry. We stand at a crossroads of transformation, where resistance to AI creates reflective tension, yet coexistence with AI fosters a new order.

Evolving Competencies for the UX Profession

The ACM Interactions journal published an article titled “So You Want to Be an AI Designer?” in 2017 (Wei, 2017). At the time, an AI designer was a novelty in the design field. The author, Nina Wei, a product designer at Baidu AI Lab, argued that the core of an AI designer’s work is similar to that of other designers: solving problems to improve human life (Wei, 2017). Her methods, brainstorming, sketching, and user research, mirror those of other designers. The key difference lies in how to successfully apply AI technology to products, which primarily involves using AI as a design material.

Recently, HP IQ’s job posting for a “Lead Intelligent System Designer” listed among its expectations the ability to “create new paradigms for information architecture in AI-first systems” and “experience building with AI technologies (particularly LLMs) in products or substantial side projects” (HP Development Company, 2025). We must recognize that the industry’s expectations for AI designers are growing and becoming more demanding. Designers are now required to understand and apply AI as a design material. This means transforming AI into practical, user-friendly intelligent products. Meanwhile, they must master AI tools and integrate them into the design process. The same holds for UX researchers, who are expected to form a more efficient closed-loop workflow with AI, from needs identification to validation. The trend toward embracing AI in practice is inevitable. As AI saves time on tedious tasks and assists in creative work, stakeholders, whether in industry or education, have also raised expectations for the quality, efficiency, and even the scope of design practitioners’ output.

Amid the current wave of AI emergence, the value of UX design itself has unfortunately become increasingly blurred. When we talk about “AI and design,” we are ultimately talking about people, both those who use it and those who create it. AI is not an independent agent. It remains a material and a tool shaped by human intentions, needs, and limitations. Even in contexts where AI can generate, optimize, predict, and simulate, it is still guided by human values, individual preferences, and the enduring need for creative expression. No matter how advanced AI becomes, design remains fundamentally rooted in human experience, such as emotions, aesthetics, everyday needs, and cultural context.

Redefining Expertise in Designing With AI

We have discussed the current situation faced by design students and professionals, focusing specifically on curriculum adjustments for students and job recruitment for professionals. For students, we must recognize that AI is inevitably integrated into their foundational training, even as they are still acquiring basic design skills and knowledge. In contrast, professionals, with years of skill development and experience, are only now starting to incorporate AI tools into their practice. Therefore, while students represent the future of the profession, it is important to consider them separately from established professionals.

Research has shown that attitudes and strategies for using AI differ between design students and experts. Interviews reveal that students are more eager to embrace AI in design, with some even regarding it as a form of co-authorship. In comparison, professionals are more cautious, citing concerns about the loss of creativity and core skills, as well as issues such as plagiarism and intellectual property (Naqvi et al., 2025). Another empirical study shows that experts and students differ in their use of AI-enhanced search platforms. Experts are more open to unexpected AI-generated results, while students tend to reject those that fall short of their initial expectations (Kwon et al., 2023). This raises concerns about whether using GenAI tools may hinder the development of core design skills in students (Li et al., 2024).

In other words, it has become harder for future design talent to grow into widely recognized design experts in the way earlier generations did throughout modern design history. The rise of AI has democratized design, lowering entry barriers. However, this also makes it more challenging to cultivate design experts, as the criteria for expertise have blurred, shifted, and escalated. This challenge extends beyond the UX design field and affects all industries in the AI era. As a result, many veteran designers must continually acquire new expertise, while design schools have yet to undergo major reforms, with only small-scale explorations underway. We believe design experts’ roles will become more complex, relying not only on collaboration with AI tools but also on interdisciplinary thinking and, still most importantly, a deeper understanding of human needs.

From an augmented humanity perspective, design education should prioritize protecting students’ sense of control, agency, and responsibility when working with GenAI tools. Rather than treating AI as an autonomous problem solver, students should remain cognitively and ethically engaged, maintaining ownership over decisions and outcomes. When AI is approached as a material, whether for designers or for researchers, it requires understanding its technical affordances while critically considering its broader social implications. Ultimately, intelligent products, services, and research practices should remain grounded in serving human needs, ensuring that technological capability does not overshadow human values and lived experience.

Limitations

This work provides a conceptual lens to examine the interplay and evolution of designer–AI relationships. It is not intended to provide a comprehensive classification of human–AI collaboration, but rather a novel perspective for interrogating emerging design practices and their future. In the interest of scientific rigor, we acknowledge several constraints that define the boundaries of this research.

First, the diversity and fluidity of AI-integrated design workflows challenge rigid categorization. In UX research, the distinction between GenAI as a tool versus a material requires deeper unpacking, particularly to understand the overlapping spaces where both roles blur and shift dynamically. As GenAI agency and autonomy evolve, future research must integrate philosophical, psychological, and computational perspectives to map these shifting and ambiguous boundaries.

Second, as a conceptual scaffold, the AI4UX framework does not claim to provide a comprehensive account of the downstream implications of AI-mediated configurations. Consequently, ethical concerns, such as the displacement of real participants by synthetic users, are acknowledged but not examined in depth. Similarly, translating the emerging themes in Section Capturing the New Patterns into actionable design patterns and refining the pedagogical guidance in Section Implications for Education and Profession require further research to bridge the gap between theoretical insights and practical implementation.

Lastly, GenAI is evolving through very rapid technical cycles, while its adoption varies widely across industries and regions. Most of the evidence and cases examined in this study have emerged in the last five years. We believe the broad socio-technical context may change dramatically in the coming years with the massive spread of AI technology in our daily lives. Thus, the AI4UX framework will need to be updated accordingly.

Conclusion

The integration of GenAI into UX design is not just a technological trend. It represents a transformation in how design is executed and evaluated. Designers now find themselves working in a new space where AI serves as an innovative material, a powerful tool, and sometimes a replacement, fundamentally altering the design process and its outcomes. This shift requires designers to adapt their skills to fully harness AI’s potential while preserving a humanity-centered approach. The AI4UX framework introduced in this paper offers a conceptual viewpoint to examine these intersecting relationships, identifying the four domains where AI acts as both a material and a tool for designers and researchers.

Based on the AI4UX framework, the integration of GenAI into UX design requires a more critical perspective to understand AI’s expanding role. For UX designers, when designing AI products or services, we highlight the notion of perceived intelligence, extending the boundaries of usability. As a design tool, AI undeniably boosts designers’ productivity, yet maintaining creative control remains essential. Moreover, AI is also transforming UX research. While AI-assisted qualitative data analysis is widely used, it requires clearer guidelines and transparency to ensure reliability. AI-powered tools that simulate real users to provide feedback raise ethical concerns. To better preserve representation and inclusion across diverse user groups, AI should supplement, not replace, real participants. We also envision the potential of GenAI in future UX research, particularly in mirroring tomorrow’s users. Beyond serving as UX evaluation concerns, these themes point to mechanisms that augment human judgment, agency, and responsibility and articulate a vision for enhancing human inclusivity and foresight.

As AI becomes increasingly integrated into design workflows, we further discuss its impact on individuals within the design community. For design students, growing and competing alongside AI means navigating a relationship that is both confrontational and symbiotic. Current design practitioners must not only understand the fundamentals of LLMs but also learn to effectively leverage GenAI tools. From an industry perspective, this necessitates a redefinition of expertise, where the traditional focus on technical skills and creativity expands to include the ability to collaborate with AI. Under these circumstances, we emphasize that the future of design with AI must be approached with care, ensuring that design remains rooted in human values, ethics, and lived experience.

Acknowledgments

The authors acknowledge the continued support of colleagues at the School of Design, Hunan University, and Dr. Nick Bryan-Kinns from the University of the Arts London.

Author Contributions

Wei Wang contributed to conceptualization, supervision, funding acquisition, and manuscript review and editing. Yijing Yang contributed to conceptualization, conducted the investigation, prepared the visualizations, and wrote the original draft as well as the revised manuscript. Zhilong Luan contributed to manuscript review and editing. All authors approved the final manuscript.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was sponsored by the National Social Science Fund of China Art Project (25BG176), the Research Fund for Humanities and Social Sciences of the Ministry of Education (22YJA760082), the Hunan Provincial Degree and Graduate Teaching Reform Research Project (2023JGYB063), Yuelushan Industrial Innovation Center, Lushan Lab Research Funding, and the Fundamental Research Funds for the Central Universities.

Declaration of Conflicting Interests

The authors declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: The first author of this paper is a member of the journal’s editorial board. This author was not involved in the peer review or editorial decision-making process for this manuscript and had no access to information regarding its peer review.

Appendix

Related Design Guidelines for AI Products and Services

Design for the appropriate level of user autonomy
Align AI with real-world behaviors
Treat safety as an evolving endeavor
Adapt AI with user feedback
Create helpful AI that enhances work and play

Initially:
Make clear what the system can do
Make clear how well the system can do what it can do

During interaction:
Time services based on context
Show contextually relevant information
Match relevant social norms
Mitigate social biases

When wrong:
Support efficient invocation
Support efficient dismissal
Support efficient correction
Scope services when in doubt
Make clear why the system did what it did

Over time:
Remember recent interactions
Learn from user behavior
Update and adapt cautiously
Encourage granular feedback
Convey the consequences of user actions
Provide global controls
Notify users about changes

Determine if AI adds value
Set the right expectations
Explain the benefit, not the technology
Be accountable for errors
Invest early in good data practices
Make precision and recall tradeoffs carefully
Be transparent about privacy and data settings
Make it safe to explore
Anchor on familiarity
Add context from human sources
Determine how to show model confidence, if at all
Explain for understanding, not completeness
Go beyond in-the-moment explanations
Automate more when risk is low
Let users give feedback
Let users supervise automation
Automate in phases
Give control back to the user when automation fails
Design for your data labelers
Actively maintain your dataset
Learn from label disagreements
Embrace “noisy” data
Get input from domain experts as you build your dataset