Abstract

Artificial intelligence has reshaped journalism by enabling automated content creation, data analysis, news verification, content personalization, and multilingual translation, enhancing efficiency and accessibility. Tools like the Associated Press’s Wordsmith and Bloomberg’s Cyborg exemplify these advancements. However, artificial intelligence lacks human creativity, ethical judgment, and interpersonal skills critical to journalism. This study addresses the research question: How can media organizations ethically and effectively integrate artificial intelligence into journalistic practices to enhance efficiency while preserving creativity, originality, and ethical integrity? By examining artificial intelligence’s opportunities and challenges, particularly in intellectual property, transparency, and content diversity, this article proposes a framework for responsible artificial intelligence integration. Human oversight remains essential to ensure ethical journalism, balancing technological innovation with journalistic values.

Introduction

Artificial intelligence (AI) has profoundly transformed journalism, reshaping workflows and expanding capabilities across media organizations worldwide (Broussard et al., 2019). AI-driven tools enable automated content creation, data analysis, news verification, content personalization, and multilingual translation, significantly enhancing efficiency and audience reach (Asad et al., 2024; Fuchs, 2024). For example, the Associated Press employs Wordsmith to generate thousands of financial reports simultaneously, allowing journalists to focus on in-depth, complex stories (Rojas Torrijos, 2021). Similarly, Bloomberg’s Cyborg processes vast datasets to produce accurate financial news, while Reuters’ News Tracer scans social media platforms to verify news authenticity, ensuring rapid and reliable reporting (Duin & Pedersen, 2023; Opdahl et al., 2023). The Washington Post’s Heliograf personalizes content to align with individual reader preferences, boosting engagement, and the BBC leverages AI for multilingual translations to make news accessible to global audiences (Lao & You, 2024; Serdouk & Bessam, 2023). These advancements demonstrate AI’s potential to streamline repetitive tasks, improve scalability, and broaden the accessibility of journalistic content.

However, AI’s capabilities are not without limitations. While it excels in data-driven tasks, AI lacks the human creativity, ethical judgment, and interpersonal skills essential for nuanced storytelling, investigative reporting, and ethical decision-making (Ferrucci & Hopp, 2023). Existing literature underscores AI’s contributions to journalism but highlights significant gaps in understanding how to balance its efficiency with the preservation of originality, ethical oversight, and cultural sensitivity (Fuchs, 2024; Tran & Diep, 2024). For instance, concerns about content homogenization, intellectual property disputes, and the ethical implications of AI-generated content remain underexplored (Agarwal et al., 2025; Munoriyarwa et al., 2023). These gaps raise critical questions about how journalism can harness AI’s benefits without compromising its core values.

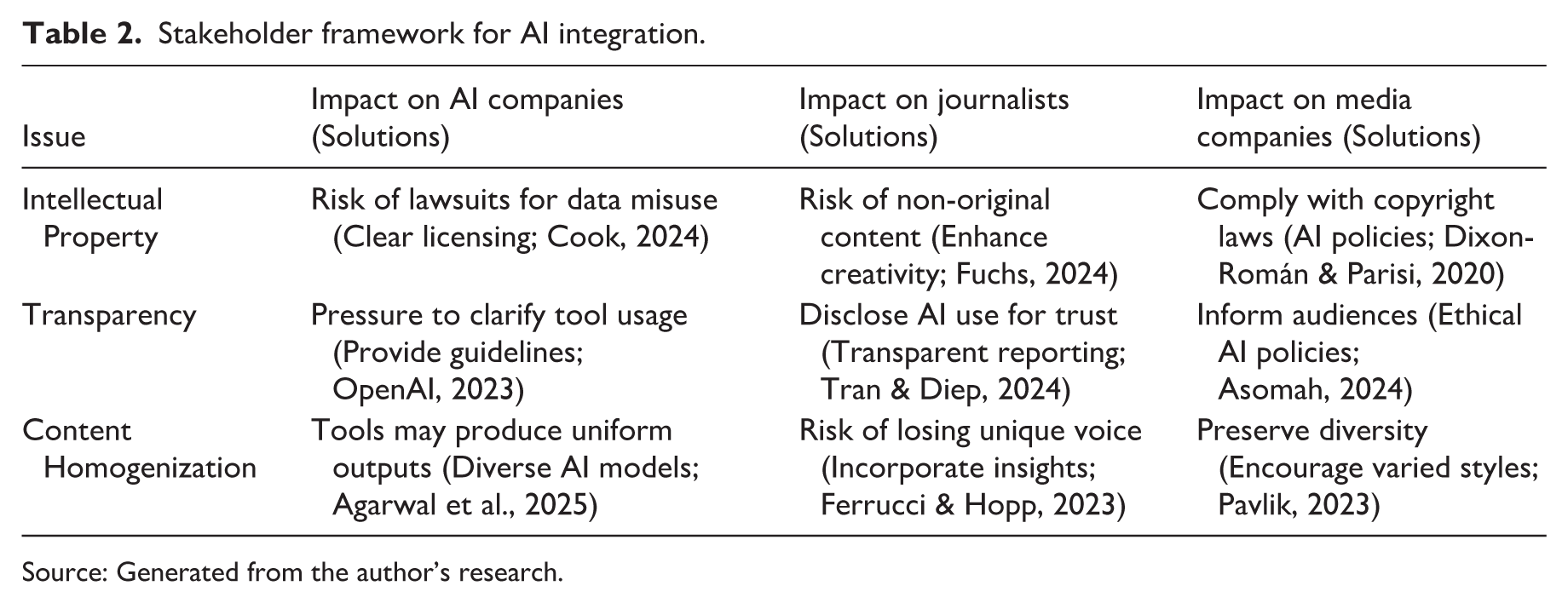

This study addresses the research question: How can media organizations ethically and effectively integrate AI into journalistic practices to enhance efficiency while preserving creativity, originality, and ethical integrity? By examining AI’s opportunities (such as automation and personalization) and its challenges, including intellectual property concerns, transparency, and risks of content homogenization, this article contributes to the discourse on responsible AI integration. Drawing on a comprehensive review of literature, it identifies a need for frameworks that guide media organizations in leveraging AI while maintaining journalistic standards (Dixon-Román & Parisi, 2020). The study proposes a stakeholder-focused framework (Table 2) that synthesizes solutions for AI companies, journalists, and media organizations, addressing issues like copyright compliance, transparent AI use, and diversity in content creation (Asomah, 2024; Tran & Diep, 2024). This framework emphasizes the importance of human oversight, ethical guidelines, and journalist training to ensure AI complements rather than replaces human contributions.

Ultimately, this article argues that a balanced integration of AI, grounded in ethical principles and human creativity, can enhance journalism’s efficiency and impact while preserving its foundational values of accuracy, authenticity, and societal relevance. By addressing these issues, the study aims to provide actionable insights for media organizations navigating the evolving landscape of AI-driven journalism.

Opportunities and benefits of AI in journalism

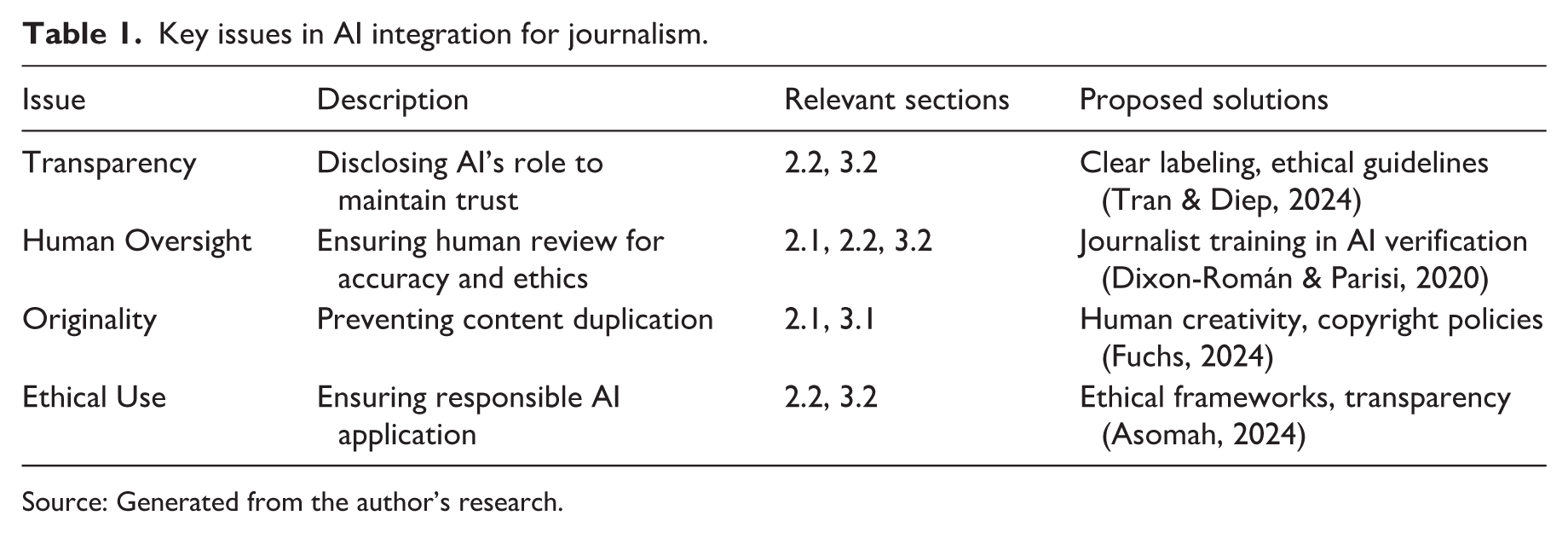

AI offers transformative opportunities for journalism, revolutionizing workflows by automating tasks, enhancing efficiency, and boosting audience engagement, though human oversight remains critical to ensure quality (Fuchs, 2024). Table 1 summarizes key issues in AI integration, their relevance, and proposed solutions to balance technological advancements with journalistic integrity.

Table 1. Key issues in AI integration for journalism.

Source: Generated from the author’s research.

AI streamlines content creation, data analysis, and personalization, enabling journalists to focus on investigative and creative work (Aissani et al., 2023). However, over-reliance on AI risks content duplication, necessitating human creativity to ensure originality and compliance with copyright standards (Fuchs, 2024; Trapova & Mezei, 2022). Transparency in AI use, as emphasized in Table 1, fosters audience trust through clear labeling and ethical guidelines (Tran & Diep, 2024). Journalist training in AI verification, as suggested by Dixon-Román and Parisi (2020), ensures accuracy and ethical alignment, particularly for sensitive topics. By integrating AI’s efficiency with human expertise, journalism can maintain credibility and produce innovative, diverse content (Yue et al., 2024). This synergy enhances storytelling while upholding the profession’s core values.

Enhancing efficiency and creativity

AI significantly enhances journalistic efficiency by automating repetitive tasks such as content creation and data analysis, allowing journalists to focus on in-depth reporting (Aissani et al., 2023). These advancements enable media organizations to operate at scale, meeting the demands of fast-paced news cycles while maintaining high output (Goodnight & Wei, 2024). By automating data-heavy tasks, AI empowers journalists to dedicate more time to creative and analytical work, enhancing the overall quality of reporting.

However, over-reliance on AI risks content duplication, which can undermine originality and raise intellectual property concerns (Trapova & Mezei, 2022). For instance, AI-generated content may produce similar outputs across platforms, potentially homogenizing journalistic voices and diminishing diversity (Agarwal et al., 2025). To address this, journalists must enhance AI outputs with unique perspectives, ensuring compliance with ethical and legal standards (Fouda et al., 2024). This synergy between AI’s efficiency and human creativity fosters innovative storytelling that preserves authenticity and individuality (Tsao & Nogues, 2024). By integrating personal insights and contextual nuance, journalists can transform AI-generated drafts into compelling narratives that reflect diverse viewpoints. Training in ethical AI use, as recommended by Dixon-Román and Parisi (2020), equips journalists to verify outputs and maintain journalistic integrity. This balanced approach, supported by the solutions in Table 1, ensures AI serves as a tool to amplify creativity rather than replace it, safeguarding the profession’s commitment to originality and ethical responsibility.

Supporting professionalism and trust

AI supports journalists by automating repetitive tasks, such as data aggregation and initial content drafting, allowing them to focus on high-value investigative and analytical work (Lee et al., 2020). However, maintaining credibility requires human expertise to ensure AI-generated content is accurate and engaging (Chen, 2024). Without rigorous oversight, AI outputs risk errors or biases, which could erode public confidence in journalism (Ferrucci & Hopp, 2023). Journalists must verify AI-generated content, particularly for complex topics requiring contextual understanding, to uphold professional standards.

Transparency about AI’s role in content creation, as highlighted in Table 1, is critical for fostering audience trust (Tran & Diep, 2024). Clear labeling of AI use, such as disclosing when tools like Wordsmith or Heliograf contribute to articles, ensures accountability and aligns with ethical guidelines (Asomah, 2024). This openness reassures audiences that human oversight drives editorial decisions, preserving journalism’s authenticity. Continuous skill development is equally vital, enabling journalists to master AI tools while prioritizing investigative work (Yue et al., 2024). Training programs, as recommended by Dixon-Román and Parisi (2020), equip journalists to critically assess AI outputs, mitigating risks like misinterpretation of data or cultural nuances. By blending AI’s efficiency with human judgment, journalists can produce compelling, trustworthy content that resonates with diverse audiences. This synergy strengthens the profession’s value, ensuring AI enhances rather than undermines journalistic integrity. Media organizations should invest in ongoing education to keep journalists adept at navigating AI’s evolving role, maintaining public trust while leveraging technological advancements to deliver impactful reporting (Goodnight & Wei, 2024).

Challenges and ethical considerations

AI’s integration into journalism presents significant challenges, including intellectual property disputes, transparency issues, and content homogenization, requiring robust ethical and legal frameworks (Fuchs, 2024). Lawsuits against AI companies for using copyrighted articles to train models highlight the need for clear licensing agreements (Cook, 2024; Munoriyarwa et al., 2023). Transparency in disclosing AI’s role in content creation is essential to maintain audience trust (Tran & Diep, 2024). In addition, AI’s tendency to produce uniform outputs risks diminishing diverse journalistic voices, necessitating human creativity to ensure originality (Agarwal et al., 2025). Ethical guidelines and journalist training, as outlined in Table 1, are critical to address these concerns responsibly.

Intellectual property and originality

The integration of AI into journalism introduces significant challenges concerning intellectual property and originality, as AI-generated content risks producing overlapping or derivative outputs, raising copyright concerns (Fuchs, 2024). High-profile lawsuits, such as those against AI companies like OpenAI for using copyrighted news articles to train models without consent or compensation, underscore the urgent need for clear licensing agreements (Cook, 2024; Munoriyarwa et al., 2023). These legal disputes highlight ethical and legal complexities, as unauthorized use of journalistic content for AI training can undermine the intellectual property rights of creators and news organizations (Trapova & Mezei, 2022). To address this, media organizations must advocate for transparent agreements with AI providers to ensure ethical data sourcing and compliance with copyright laws.

Journalists play a critical role in mitigating these risks by enhancing AI outputs with human creativity to ensure originality (Fuchs, 2024). AI tools, while efficient in generating drafts or analyzing data, often produce content lacking unique perspectives or cultural nuance, which can lead to homogenized outputs (Agarwal et al., 2025). By infusing AI-generated content with personal insights and contextual depth, journalists can create distinctive narratives that align with ethical and legal standards (Fouda et al., 2024). Transparent copyright policies, as emphasized by Tran and Diep (2024), are essential to clarify ownership and usage rights of AI-assisted content, protecting both journalists and media organizations from legal pitfalls.

Training journalists in ethical AI use is vital to navigate these challenges effectively (Dixon-Román & Parisi, 2020). Such training equips journalists to critically assess AI outputs, ensuring they meet originality standards and comply with copyright regulations. Media organizations should implement robust policies, as outlined in Table 1, to guide the responsible use of AI, fostering collaboration between journalists and AI systems (Asomah, 2024). By prioritizing human creativity and ethical oversight, journalism can leverage AI’s efficiency while safeguarding intellectual property and maintaining the authenticity and diversity of content. This balanced approach ensures that AI enhances, rather than compromises, the profession’s commitment to originality and legal accountability.

Transparency and ethical standards

Transparency in disclosing AI’s role in content creation is paramount for maintaining audience trust and upholding journalistic credibility (Tran & Diep, 2024). As AI tools like Wordsmith and Heliograf become integral to newsrooms, clearly labeling their contributions (such as in automated financial reports or personalized content) ensures audiences understand the balance between human and machine input (Rojas Torrijos, 2021; Serdouk & Bessam, 2023). Failure to disclose AI use risks eroding public confidence, as audiences may perceive a lack of authenticity or accountability (Asomah, 2024). Ethical frameworks, as outlined in Table 1, provide clear guidelines for responsible AI integration, emphasizing transparent communication to foster trust (Dixon-Román & Parisi, 2020).

AI’s sentiment analysis, while powerful for gauging public opinion, struggles with nuanced expressions like sarcasm or irony, which rely on cultural and contextual cues, leading to potential misclassification (Sharma et al., 2024). For example, a sarcastic comment whose literal wording is positive may be classified as positive despite its critical intent, risking inaccurate reporting, particularly in politically or socially sensitive contexts (Ferrucci & Hopp, 2023). Human judgment is essential to verify AI outputs, ensuring they align with journalistic standards and accurately reflect complex narratives. This oversight is critical in maintaining fairness and avoiding biases that could distort public discourse.
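The misclassification risk described above can be illustrated with a minimal sketch. It assumes a deliberately naive, word-counting classifier; the word lists and the function name `naive_sentiment` are hypothetical and do not represent any tool named in this article. Production systems are more sophisticated, but the example shows why surface wording alone can mislead.

```python
# Toy lexicon-based sentiment scorer (illustrative assumption only).
# The word lists are hypothetical and far smaller than any real lexicon.
POSITIVE = {"great", "love", "wonderful", "perfect"}
NEGATIVE = {"terrible", "hate", "awful", "delays"}


def naive_sentiment(text: str) -> str:
    """Label text by counting positive and negative words, ignoring context."""
    words = [w.strip(".,!?\"'").lower() for w in text.split()]
    score = sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


# A sincere complaint is labeled as expected.
sincere = "I hate these awful delays."
# A sarcastic complaint uses positive words, so the scorer reads it literally.
sarcastic = "Oh great, another perfect day of train cancellations."

print(naive_sentiment(sincere))    # negative
print(naive_sentiment(sarcastic))  # positive, although the intent is critical
```

In a newsroom workflow, output like the second label is the kind of result that would need to be checked by a journalist before it informs reporting on public opinion.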

Partnerships, such as OpenAI’s collaboration with Axel Springer, highlight AI’s role in analyzing documents and summarizing news, but they also raise accountability concerns for errors in AI-generated content (OpenAI, 2023). Robust editorial oversight is necessary to address these issues, ensuring errors are caught and corrected before publication (Fouda et al., 2024). Ethical frameworks, as noted in Table 1, guide media organizations in implementing policies that prioritize accuracy and accountability (Asomah, 2024). Journalist training in AI literacy, as recommended by Dixon-Román and Parisi (2020), equips professionals to critically assess AI tools, mitigating risks of misinformation. By fostering transparency and combining AI’s capabilities with human oversight, media organizations can safeguard journalistic integrity, ensuring AI enhances rather than undermines the profession’s commitment to ethical, trustworthy reporting (Tran & Diep, 2024). This balanced approach strengthens public confidence and supports responsible innovation in journalism.

Ethical and legal responsibilities in AI use

The integration of AI into journalism raises profound ethical and legal questions about accountability, particularly in the domains of intellectual property and content creation (Asomah, 2024). As AI tools like Wordsmith and News Tracer become embedded in newsrooms, journalists bear the primary responsibility for ensuring accuracy, objectivity, and ethical integrity in their work (Goodnight & Wei, 2024). AI provider policies, such as those from OpenAI and Meta, clearly delineate acceptable practices, placing accountability squarely on users for any misuse or breaches (Cook, 2024). These policies typically disclaim provider liability for generated content, emphasizing that journalists and media organizations must ensure compliance with ethical and legal standards.

Transparency in disclosing AI’s role in content creation is a fundamental ethical obligation, as failures to do so can erode journalistic integrity and public trust (Tran & Diep, 2024). For instance, when AI tools contribute to articles, such as generating drafts or analyzing data, clear labeling ensures audiences understand the extent of human versus machine involvement (Rojas Torrijos, 2021). This transparency aligns with ethical frameworks outlined in Table 1, fostering accountability and maintaining credibility (Asomah, 2024). Without such disclosure, audiences may question the authenticity of reporting, particularly in sensitive contexts where trust is paramount (Ferrucci & Hopp, 2023).

Legal responsibilities also arise, particularly concerning intellectual property. Lawsuits against AI companies for using copyrighted journalistic content to train models highlight the need for clear licensing agreements (Cook, 2024; Munoriyarwa et al., 2023). Journalists must navigate these complexities by ensuring AI-generated content adheres to copyright laws, often by enhancing outputs with original insights to avoid duplication (Fuchs, 2024). While counter-lawsuits by AI companies against journalists for misuse are theoretically possible, they are unlikely to succeed unless clear unethical practices, such as non-disclosure of AI use or violation of provider policies, are evident (Cook, 2024). Ethical transparency, as emphasized in Table 1, significantly minimizes such risks by ensuring accountability remains with users (Asomah, 2024).

To address these challenges, media organizations should implement robust training programs on ethical AI use, equipping journalists to critically evaluate outputs and navigate legal frameworks (Dixon-Román & Parisi, 2020). Such training ensures journalists can leverage AI’s efficiency while safeguarding against biases or errors that could compromise objectivity. In addition, ethical guidelines must be established to standardize transparency practices across newsrooms, reinforcing public trust (Tran & Diep, 2024). By fostering a culture of accountability and ethical responsibility, journalists and media organizations can harness AI’s potential while upholding the profession’s core values of accuracy, fairness, and integrity in an evolving technological landscape.

Framework for AI integration

The integration of AI into journalism requires a structured approach to address challenges such as intellectual property, transparency, and content homogenization. Table 2 presents a stakeholder-focused framework that synthesizes these issues, their impacts, and tailored solutions for AI companies, journalists, and media organizations, ensuring responsible AI adoption while preserving journalistic values.

Table 2. Stakeholder framework for AI integration.

Source: Generated from the author’s research.

This framework emphasizes collaboration among stakeholders to balance AI’s efficiency with journalistic integrity. For AI companies, addressing intellectual property concerns involves establishing clear licensing agreements to prevent lawsuits over data misuse, as seen in cases against firms like OpenAI (Cook, 2024). Developing diverse AI models can mitigate content homogenization, ensuring outputs reflect varied perspectives (Agarwal et al., 2025). Providing transparent usage guidelines, as demonstrated by OpenAI’s partnership with Axel Springer, fosters ethical adoption (OpenAI, 2023).

Journalists must enhance AI-generated content with creativity to avoid non-original outputs, preserving their unique voice and adhering to copyright standards (Fuchs, 2024). Transparent reporting of AI’s role in content creation, such as labeling automated drafts, builds audience trust and aligns with ethical guidelines (Tran & Diep, 2024). Training in AI literacy, as recommended by Dixon-Román and Parisi (2020), equips journalists to critically evaluate outputs, ensuring accuracy and cultural sensitivity, particularly in sensitive reporting contexts (Ferrucci & Hopp, 2023).

Media organizations are tasked with implementing AI usage policies to comply with copyright laws and inform audiences about AI’s role, reinforcing trust (Asomah, 2024). Encouraging diverse reporting styles through training and editorial oversight preserves journalistic vibrancy, countering homogenization risks (Pavlik, 2023). By fostering AI-human collaboration, media organizations can ensure content is accurate, contextually rich, and ethically sound (Goodnight & Wei, 2024). Regular assessments and ethical frameworks, as outlined in Table 1, support ongoing adaptation, enabling journalism to leverage AI’s potential while upholding its core values of authenticity and accountability in an evolving digital landscape.

Conclusion

AI presents transformative opportunities for journalism, enhancing efficiency in content creation, data analysis, and personalization while broadening global accessibility (Fuchs, 2024). Tools like Wordsmith and Heliograf streamline workflows and engage diverse audiences, yet challenges such as intellectual property disputes, transparency, and content homogenization demand careful navigation (Agarwal et al., 2025; Cook, 2024). Integrating AI’s capabilities with human creativity and ethical oversight enables media organizations to enrich storytelling without compromising authenticity (Ferrucci & Hopp, 2023). Transparency in disclosing AI’s role, as emphasized by Tran and Diep (2024), fosters audience trust, ensuring accountability in news production. Prioritizing originality through human-driven creativity safeguards journalistic integrity, preventing homogenized outputs that risk diluting diverse voices (Asomah, 2024).

The stakeholder-focused framework in Table 2 guides responsible AI integration by addressing the roles of AI companies, journalists, and media organizations. It advocates for clear licensing agreements to resolve copyright concerns, transparent reporting to maintain trust, and diverse reporting styles to counter homogenization (Cook, 2024; Pavlik, 2023; Tran & Diep, 2024). Training programs are essential to equip journalists with AI literacy, enabling them to critically assess outputs and uphold ethical standards (Dixon-Román & Parisi, 2020). Clear organizational policies further ensure AI complements human contributions, balancing innovation with journalistic principles (Goodnight & Wei, 2024). By fostering AI-human collaboration, media organizations can produce accurate, contextually rich content that resonates with audiences while maintaining credibility. This approach ensures journalism remains impactful and ethically sound in an AI-driven era, leveraging technology to enhance, not replace, the human elements of creativity, judgment, and accountability that define the profession’s societal role.

Footnotes

Acknowledgements

The author would like to acknowledge the financial support of the International School—Vietnam National University Hanoi Foundation for Science and Technology Development for the research.

Funding

The author disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the International School—Vietnam National University Hanoi Foundation for Science and Technology Development.

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.