Abstract

A common goal of researchers using intensive longitudinal data is to develop models that predict emotions or behaviors, often using passively collected data from smartphone sensors or wearable devices. A frequent use case for such models is the development of just-in-time adaptive interventions (JITAIs). However, their real-world effectiveness depends on rigorous evaluation, and previous research has highlighted challenges in selecting appropriate evaluation methods. To address these challenges, we review key pitfalls in predictive modeling and provide recommendations for avoiding them. We focus on a common problem, the mismatch among model development, evaluation, and application, and use simulations to illustrate three pitfalls. First, although models may perform well under group-level validation (area under the curve [AUC] = .82), they may fail to predict within-persons change (mean AUC = .54, SD = .13). For JITAIs, such a model cannot identify moments for intervention delivery and discriminates only between individuals. Second, ensuring adequate variability in the outcome variable is critical: If outcomes remain stable, frequent prediction may offer little practical benefit. Third, selecting appropriate baseline models is essential; models that appear effective may underperform compared with simple baselines (e.g., AUC = .82 vs. AUC = .96). To address these pitfalls, we present recommendations for matching validation and evaluation strategies to the intended use-case scenario and provide a tool that can help researchers investigate whether their strategy and goal are misaligned. These recommendations can help improve the effectiveness of predictive models and increase their utility in real-world applications.

Keywords

Intensive longitudinal data have gained substantial attention in psychological and behavioral research because of their ability to capture the dynamic and evolving nature of human behavior. These data consist of repeated measurements from the same individuals, such as daily surveys or questionnaires, an approach commonly referred to as the “experience-sampling method” (ESM; Kubey et al., 1996). The term “ESM” is often used interchangeably with “ecological momentary assessment” (EMA) or “ambulatory assessment.” However, EMA and ambulatory assessment are broader concepts that also include longitudinal data collected passively through smartphones and wearable devices such as smartwatches, an approach also called “digital phenotyping” (Torous et al., 2016; Trull & Ebner-Priemer, 2009). Combining passive sensor data with ESM allows researchers to obtain a more comprehensive view of individuals’ daily lives, capturing both behavioral patterns and emotional states in real time (Rehg et al., 2017; Velozo et al., 2024).

ESM is a broad research field encompassing a wide range of objectives. A common goal among researchers using ESM and passive measures is to develop prediction models capable of forecasting emotions, cognitions, or behaviors based on (often passively collected) data¹ (Czyz et al., 2023; Epstein et al., 2009; Fried et al., 2023; Jacobson & Chung, 2020; Langener, Bringmann, et al., 2024; Niemeijer et al., 2022; Presseller et al., 2023; Schick et al., 2023; Thakur et al., 2022). These models offer diverse potential applications. One frequently emphasized use case is facilitating just-in-time adaptive interventions (JITAIs), that is, personalized, real-time interventions aimed at enhancing patient care during daily life (Nahum-Shani et al., 2018). In addition, if successful, these models could be used to replace or adapt daily questionnaires, reducing the burden on participants (Eisele et al., 2022; Hart et al., 2022). However, the utility of these models in any of these scenarios depends on evaluation and validation that show they perform accurately in real-world contexts.

To evaluate prediction models, the data set is typically split into a training set, which is used to build the model, and a test set, which is used to assess its performance. Various strategies exist for splitting the data into these sets. The choice of an appropriate validation method depends on several factors, including the specific use case of the model and the characteristics of the data (Hewamalage et al., 2023; Little et al., 2017; Saeb et al., 2017). For intensive longitudinal data collected via passive measures and ESM, this choice can be particularly challenging because of the inherent dependencies in the data (multiple time points from the same participant) and the temporal order of observations.

Previous research has highlighted significant challenges in choosing correct validation and evaluation methods, with incorrect approaches often leading to biased and overly optimistic results (e.g., Chaibub Neto et al., 2019; DeMasi et al., 2017; Little et al., 2017; Saeb et al., 2017); we discuss these issues in detail in later sections of the article. Nevertheless, these challenges are rarely addressed in the current literature, particularly in a way tailored to intensive longitudinal data.

At the core of this article, we focus on issues that can emerge when evaluating prediction models and use simulated data and empirical examples to make these challenges explicit. In the first section, we review common use-case scenarios and their associated deployment strategies. We then introduce three widely used validation techniques and show how they align with different use-case scenarios. In addition, we provide four key recommendations and a tool that researchers can use to examine whether their chosen evaluation strategy and intended use-case scenario are misaligned. Our overarching goal is to improve the use of evaluation strategies, making prediction models based on passive measures more effective and applicable in real-world settings.

Common Use-Case Scenarios

Prediction models using intensive longitudinal data can be employed for various purposes. Two key considerations for researchers are (a) whether the goal is to track individuals over time, to differentiate between them, or both and (b) whether the model will be applied to the same sample in which it was developed or to an entirely new sample.

When the primary goal is to track individuals over time, the model typically aims to predict an individual’s behavior, mood, or symptoms at future time points based on past data (Czyz et al., 2023; Epstein et al., 2009; Jacobson & Chung, 2020; Langener, Bringmann, et al., 2024; Thakur et al., 2022). In this case, the outcome of interest is dynamic and varies within each individual over time. The main focus here is on capturing those fluctuations over time. This approach is especially relevant for developing JITAIs. For instance, a mobile app designed to monitor real-time mood and behavioral data could predict when a user’s stress level is likely to exceed a certain threshold and automatically trigger coping strategies or relaxation techniques.

On the other hand, when the aim is to differentiate between individuals, such as distinguishing between individuals with different health conditions, a different approach is required. In this scenario, the model seeks to classify or predict characteristics that do not necessarily change frequently over time but, rather, are relatively static within each individual (Saeb et al., 2017). For example, in medical research, intensive longitudinal data might be used to identify individuals at risk of developing Alzheimer’s disease (Lee et al., 2019). This outcome is relatively static and involves classifying individuals into categories (at risk vs. not at risk) rather than predicting an outcome that changes over time. Although this approach is valuable in contexts such as diagnosis, it is less relevant for applications such as JITAIs, in which the model focuses on real-time predictions and dynamic changes over time. Because this article concerns prediction models that forecast dynamic changes within individuals, we mention this use-case scenario only briefly, to provide a complete overview.

Another important consideration is whether the prediction model will be applied to a new, independent sample or to the same individuals who contributed the original data (Little et al., 2017). For example, in a study that predicts the risk of depression relapse based on daily smartphone data, the model might be trained on data from one group of participants and later applied to a new set of patients (i.e., nomothetic research). Alternatively, the model can be used to monitor and predict the behavior of the same individuals over time, a common goal in idiographic research (Conner et al., 2009; Molenaar, 2004; Molenaar & Campbell, 2009). Likewise, researchers have to decide whether it is feasible to update a model over time or whether they aim to develop a single model that can be used in the future without updating.

Commonly Used Modeling and Validation Strategies

A first step in designing a prediction model is deciding whether the goal is to develop individualized (i.e., person-specific) models, nonindividualized (i.e., group-level) models, or a hybrid approach. Prior work shows mixed results: Some studies have found that individualized models perform better because they capture person-specific patterns (Benoit et al., 2020; Cai et al., 2018), whereas others have reported stronger performance for group-level models that might be driven by similar characteristics shared across participants (Leenaerts et al., 2024; Soyster et al., 2022). One limitation of individualized models is that they require sufficient data per participant to be effective. When individual-level data are limited, researchers may consider hybrid approaches that combine individualized and nonindividualized models, which have shown promising predictive performance (Jacobson & Chung, 2020). In this article, we discuss both approaches, with particular emphasis on nonindividualized prediction models because these are more challenging to evaluate accurately.

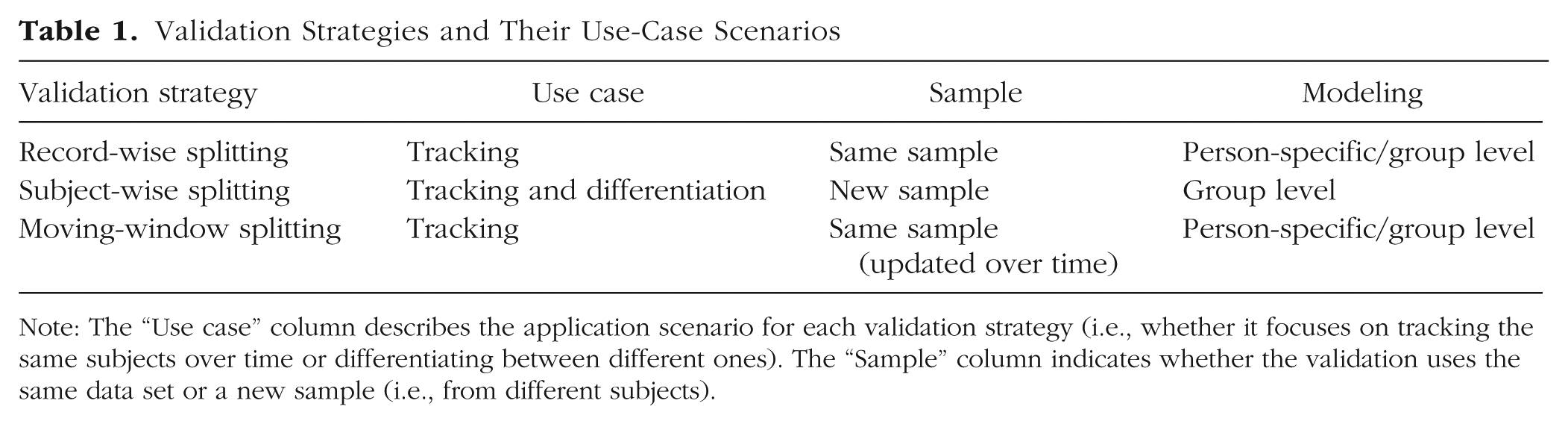

Once this modeling goal is established, researchers must choose an appropriate validation strategy. Three validation strategies are commonly used for building prediction models with longitudinal data: (a) record-wise splitting, (b) subject-wise splitting, and (c) moving-window splitting. We provide an overview of these methods and specify for which of the previously discussed use-case scenarios and modeling approaches they are useful (for a summary, see Table 1). Importantly, as discussed in previous work, the validation strategy has to match the use-case scenario to provide a fair evaluation (Little et al., 2017; Saeb et al., 2017).

Validation Strategies and Their Use-Case Scenarios

Note: The “Use case” column describes the application scenario for each validation strategy (i.e., whether it focuses on tracking the same subjects over time or differentiating between different ones). The “Sample” column indicates whether the validation uses the same data set or a new sample (i.e., from different subjects).

We present three commonly used validation strategies; however, the principles discussed here apply broadly to other validation approaches. For instance, leave-one-out cross-validation is a common method that can take different forms depending on the data-partitioning scheme: It constitutes record-wise splitting when a single time point is left out and subject-wise splitting when an entire subject and all associated data points are excluded. Likewise, rather than relying solely on a single train-test split, researchers often employ cross-validation methods, which involve multiple iterations of data partitioning (e.g., k-fold cross-validation; Fushiki, 2011). In cross-validation, the same key considerations apply: Researchers must determine how to split their data and clearly define their intended use case before selecting a validation strategy. In particular, careful attention should be paid to whether the same subjects appear in both the training and test sets and whether the model is updated over time.
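To make the partitioning question concrete, here is a minimal sketch in Python, assuming scikit-learn is available; the toy data (5 participants with 4 time points each) and the helper `shared_participants` are hypothetical. For each fold, it counts the participants who appear in both the training and test sets, once for ordinary shuffled k-fold cross-validation (a record-wise scheme) and once for `GroupKFold`, which keeps all rows of a participant in the same fold (a subject-wise scheme).

```python
import numpy as np
from sklearn.model_selection import GroupKFold, KFold

# Hypothetical long-format data: 5 participants x 4 time points, one row each.
participant = np.repeat(np.arange(5), 4)  # participant ID for every row
X = np.zeros((participant.size, 1))       # placeholder feature matrix


def shared_participants(splitter, **split_kwargs):
    """Per fold, count participants present in both the training and test rows."""
    counts = []
    for train_idx, test_idx in splitter.split(X, **split_kwargs):
        shared = set(participant[train_idx]) & set(participant[test_idx])
        counts.append(len(shared))
    return counts


# Record-wise: rows are shuffled into folds, so a participant's rows are scattered.
record_wise = shared_participants(KFold(n_splits=5, shuffle=True, random_state=0))
# Subject-wise: all rows of a participant stay together in one fold.
subject_wise = shared_participants(GroupKFold(n_splits=5), groups=participant)
print("record-wise:", record_wise)
print("subject-wise:", subject_wise)
```

Because there are as many folds as participants here, the `GroupKFold` run amounts to leaving one subject out per fold; scikit-learn's `LeaveOneGroupOut` expresses that case directly.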

Record-wise splitting

Record-wise splitting (see Fig. 1) is one of the simplest and most straightforward validation methods. In this approach, the researcher randomly selects a proportion of individual time points (or rows) from the entire data set to form the test set, and the remaining data points are used as the training set (Saeb et al., 2017). For example, in a study in which participants provide daily data over a month, the researcher might randomly select 30% of all daily responses to form the test set, leaving the remaining 70% for training. In this method, the training and test sets contain data points from the same participants, but the specific time points are uniquely assigned to either the training set or test set (Little et al., 2017; Saeb et al., 2017).

Record-wise splitting (group-level modeling). In record-wise splitting, a subset of time points from the entire data set is designated as the testing set (pink dots), and the remaining time points are allocated to the training set (green dots).

This type of split is the default behavior in most general-purpose machine-learning packages that are not specifically designed for time-series predictions. For example, in scikit-learn (Python), the train_test_split() function performs a random record-wise split by default (Pedregosa et al., 2011). Likewise, in caret (R), the createDataPartition() function also conducts a record-wise split unless explicitly modified (Kuhn, 2008). In addition, a review of 62 studies using longitudinal data found that 45% employed a record-wise split, highlighting its widespread use even in longitudinal analyses (Saeb et al., 2017).
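A minimal sketch of this default behavior, assuming Python with NumPy and scikit-learn; the data layout (10 participants with 30 daily rows each) is hypothetical. `train_test_split` assigns each row to exactly one set, but because rows are drawn at random, the same participants end up in both sets.

```python
import numpy as np
from sklearn.model_selection import train_test_split

# Hypothetical daily data: 10 participants x 30 days, one row per day.
n_participants, n_days = 10, 30
participant = np.repeat(np.arange(n_participants), n_days)
rows = np.arange(participant.size)

# Default behavior: rows are shuffled and 30% are drawn for the test set.
train_rows, test_rows = train_test_split(rows, test_size=0.3, random_state=1)

# Each row is used exactly once ...
assert np.intersect1d(train_rows, test_rows).size == 0
# ... but the same participants contribute rows to both sets.
shared = np.intersect1d(participant[train_rows], participant[test_rows])
print(f"{test_rows.size} test rows; {shared.size} of {n_participants} participants in both sets")
```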

This approach ensures that each time point is used exclusively for either training or validation, preventing any overlap between the two sets. As a result, this strategy may be appropriate when the goal is to track individuals over time and apply the model within the same sample, provided certain assumptions hold (Little et al., 2017; Saeb et al., 2017). One key assumption is stationarity in the distribution of both the outcome and predictor variables and their relationship. Because this validation strategy does not account for the temporal order of the data (Hewamalage et al., 2023), it can be problematic if the time series is nonstationary—that is, if the data distribution shifts over time (Bringmann et al., 2018; Ryan et al., 2025). For example, if the data have seasonal trends that are no longer captured because of the train-test split, the model will not be able to learn them (Hewamalage et al., 2023). Likewise, if the relationship between the outcome and predictors evolves over time, the model may fail to capture these changes, potentially limiting its predictive performance.

Importantly, performance estimates from record-wise splitting might be overly optimistic when the model is applied to a new sample (Saeb et al., 2017), so this strategy is less relevant for studies that aim to build a model to diagnose people based on a static outcome.

Subject-wise splitting

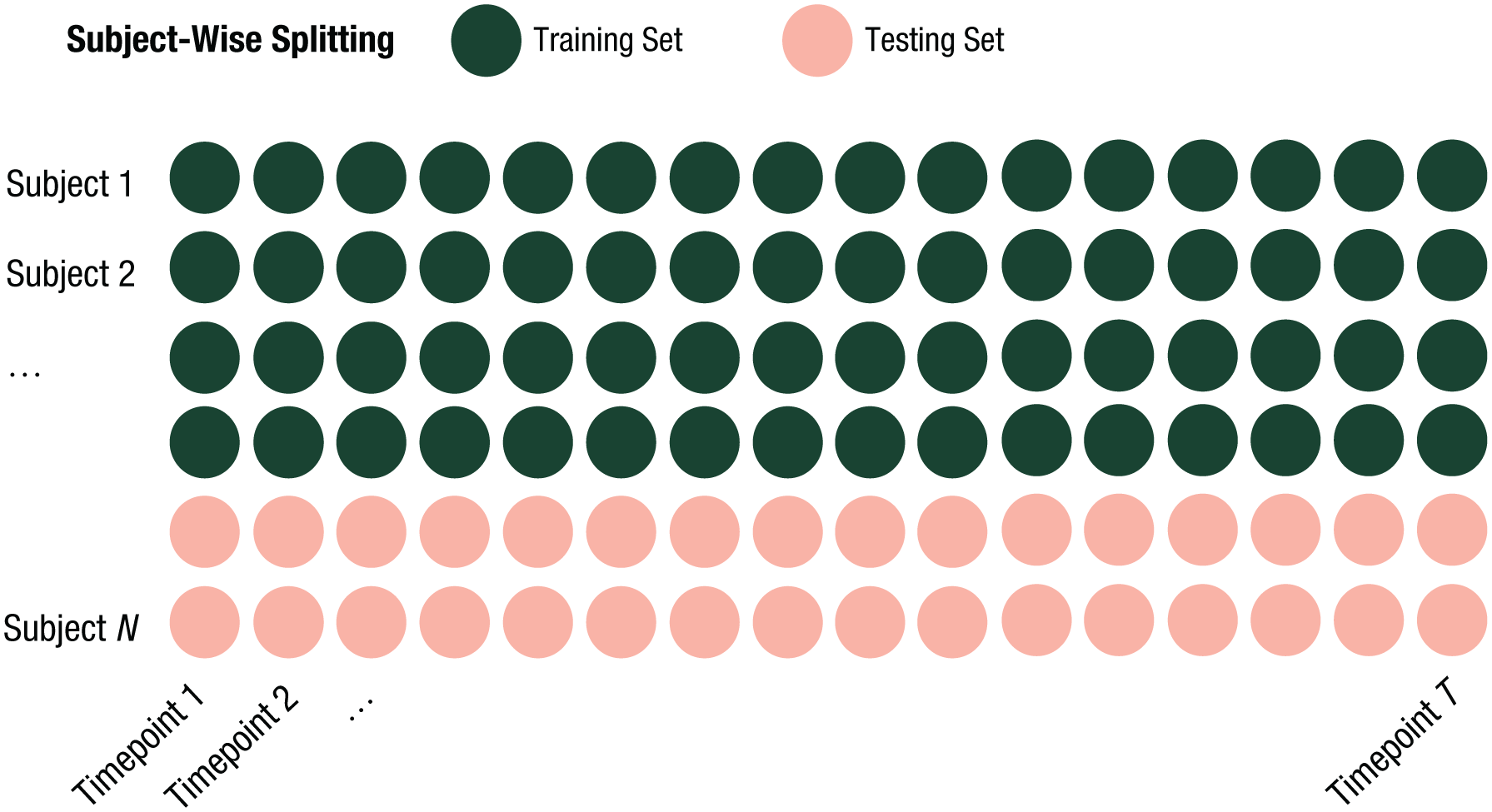

Subject-wise validation (see Fig. 2) involves splitting the data based on participants rather than individual time points and can therefore be used only when a group-level modeling approach is chosen. In this method, a proportion of participants are selected for the test set, along with all of their corresponding time points (Saeb et al., 2017). For instance, in a data set with 100 participants providing data across multiple days, the researcher might randomly select 20 participants for the test set and use all of their time points as the test data, leaving the remaining 80 participants and their data as the training set.

Subject-wise splitting (group-level modeling). In subject-wise splitting, a subset of participants and their corresponding time points form the testing set (pink dots), and the remaining participants and their corresponding time points are allocated to the training set (green dots).

This method ensures that the model is tested on data from entirely new participants (Saeb et al., 2017). Thus, it can be used both to track individuals over time and to differentiate between individuals. It is particularly useful in scenarios in which the model will be applied to new participants. However, when the model is intended for use with the same participants, this method is less suitable because a model trained on other participants might perform worse than one trained and validated on data from the same participants. Moreover, because this split does not account for time either, it can run into problems when there are nomothetic regime changes, that is, shifts that affect the whole population. For example, a model trained before COVID would likely perform poorly during the pandemic. Likewise, a model trained during COVID would probably not perform well in the postpandemic period.
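Subject-wise splitting can be sketched with scikit-learn's `GroupShuffleSplit`, which samples participants (groups) rather than rows. The sketch mirrors the 100-participant example above, with a hypothetical 14 days per participant and placeholder features; it is an illustration under these assumptions, not the setup used in our analyses.

```python
import numpy as np
from sklearn.model_selection import GroupShuffleSplit

# Hypothetical panel: 100 participants x 14 days, one row per day.
participant = np.repeat(np.arange(100), 14)
X = np.zeros((participant.size, 1))  # placeholder feature matrix

# Draw 20% of the participants (groups) for the test set, with all their rows.
splitter = GroupShuffleSplit(n_splits=1, test_size=0.2, random_state=0)
train_idx, test_idx = next(splitter.split(X, groups=participant))

train_ids = np.unique(participant[train_idx])
test_ids = np.unique(participant[test_idx])
print(train_ids.size, "training participants;", test_ids.size, "test participants")
print("overlap:", np.intersect1d(train_ids, test_ids).size)
```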

Moving-window splitting

The moving-window technique (see Fig. 3) is specifically designed to account for the temporal nature of longitudinal data. In this approach, the data set is divided into “windows” of time, which move forward as time progresses (Hewamalage et al., 2023; Tashman, 2000). For example, the first training window might use data from Days 1 to 30 to predict the outcome for Day 31. The second window would use data from Days 2 to 31 to predict the outcome for Day 32, and so on. This approach ensures that the model is continuously trained and validated on a rolling basis: More recent data are incorporated into the training set as time moves forward, and older data might be excluded. As with record-wise splitting, the training and test sets contain the same participant(s), but the time points in the two sets are distinct. Thus, it can be seen as a form of record-wise splitting. This method allows the model to learn from more recent data and adapt its predictions as new information becomes available (Hewamalage et al., 2023; Tashman, 2000). The size of the windows can be either fixed or dynamically adjusted depending on the research design and goals of the study (Langener, Stulp, et al., 2024). Likewise, the prediction horizon can vary; for instance, instead of predicting a single future time point, one can predict multiple time points ahead. Another variation of this approach is block-wise splitting, in which the training and test windows in each split do not overlap as the moving window advances (Snijders, 1988).

Moving-window splitting (group-level modeling). In moving-window splitting, the data set is sequentially divided into multiple training (green dots) and testing (pink dots) splits that move forward as time progresses.

Similar to record-wise splitting, this method is useful when the goal is to track individuals within the same sample. A key advantage is that it preserves the temporal structure of the data, making it better suited for longitudinal analyses. However, a potential drawback is the need for continuous updates over time, requiring ongoing data collection. That said, if the distribution of the outcome and predictors and their relationship remain stationary or if the model is validated using predictions far enough into the future, frequent updates may not be necessary.
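The moving-window procedure can be sketched in plain NumPy. In this minimal sketch, the function name `moving_window_splits` and its parameters are our own hypothetical choices: training rows come from a fixed-size window of days pooled across participants (as in the group-level setup of Fig. 3), test rows come from the `horizon` days that follow, and `step` controls how far the window advances, with `step = window + horizon` giving the non-overlapping, block-wise variant.

```python
import numpy as np


def moving_window_splits(day, window=30, horizon=1, step=1):
    """Yield (train_idx, test_idx) row indices for a moving-window split.

    Training rows fall in days [start, start + window); test rows fall in the
    `horizon` days right after the window. step=1 slides the window by one day;
    step = window + horizon gives non-overlapping (block-wise) splits.
    """
    day = np.asarray(day)
    start, last_day = day.min(), day.max()
    while start + window + horizon - 1 <= last_day:
        train = np.flatnonzero((day >= start) & (day < start + window))
        test = np.flatnonzero((day >= start + window) & (day < start + window + horizon))
        yield train, test
        start += step


# Hypothetical panel: 3 participants observed on Days 1 to 40.
day = np.tile(np.arange(1, 41), 3)
splits = list(moving_window_splits(day, window=30, horizon=1))
first_train, first_test = splits[0]
print(len(splits), "splits; first split trains on Days",
      day[first_train].min(), "to", day[first_train].max(),
      "and tests on Day", np.unique(day[first_test]))
```

An expanding-window variant would keep the start of the training window fixed at the first day instead of advancing it.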

Description of Empirical Example and Simulation Setup

Empirical example: Qwantify-app data set

In the remainder of the article, we use data from the openly available Qwantify-app data set (Wilson-Mendenhall et al., 2022) to illustrate common pitfalls in evaluating prediction models. The Qwantify-app data set was created to investigate the dynamics of desire in daily life. The data set includes a range of binary and continuous variables capturing individuals’ experiences of desire alongside their social, physical, and emotional states. We chose this data set as an empirical real-world example because it is openly available and already includes a variety of binary and continuous variables, eliminating the need for additional preprocessing, such as binarization (for more information, see Wilson-Mendenhall et al., 2022). This study incorporates 27 binary and six continuous variables, capturing a broad spectrum of within- and between-persons variability—which are common characteristics of longitudinal data. Thus, it serves as a strong case for illustrating potential pitfalls in model validation. For a comprehensive overview of all variables used, see Table 2. We did not preregister our analyses before conducting them.

Overview of Variables Included in This Article

Note: Bracketed terms represent individual response options drawn from multi-select (“check all that apply”) items assessing desired feelings, physical states, and emotions. Each option was operationalized as an independent binary indicator (endorsed vs. not endorsed) for analysis.

More than 600 participants downloaded the Qwantify app, and approximately 40% of them completed responses to more than 50 alerts. In this article, we included only participants with at least 50 alerts (N = 241), referred to hereafter as the “full data set.” For several analyses, we also examined three different subsets of the data: (a) 20 participants with at least 150 alerts, (b) 30 participants with at least 100 alerts, and (c) 100 participants with at least 60 alerts. Data collection occurred between October 2016 and July 2018. Participants received between two and five alerts per day, each prompting brief momentary assessments of their current experiences and context.
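Inclusion criteria of this kind are straightforward to apply to long-format EMA data. A minimal pandas sketch, assuming one row per completed alert and a hypothetical `participant_id` column (the Qwantify-app data set may use different names):

```python
import pandas as pd


def keep_participants_with_min_alerts(df, min_alerts, id_col="participant_id"):
    """Return only the rows of participants with at least `min_alerts` completed alerts."""
    alerts_per_participant = df[id_col].value_counts()
    n_alerts = df[id_col].map(alerts_per_participant)  # each row gets its participant's count
    return df[n_alerts >= min_alerts]


# Hypothetical long-format data: one row per completed alert.
toy = pd.DataFrame({"participant_id": ["a"] * 3 + ["b"] * 1 + ["c"] * 2})
kept = keep_participants_with_min_alerts(toy, min_alerts=2)
print(kept["participant_id"].unique())
```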

Simulation setup and machine-learning method

In this section, we describe the simulation setup that we use in the remainder of the article to illustrate common pitfalls in evaluating prediction models. We simulate a data set with a binary outcome variable that varies both between and within participants over time. First, we specify the number of participants N and time points T for which the data will be simulated. In addition, we define the number of features S to be included in the data set. The simulation was conducted using R (Version 4.4.2). The full code that we used to run the simulation is available at https://github.com/AnnaLangener/Justintime_Paper. We also provide a Shiny app (online: https://annalangener.shinyapps.io/Justintime/; local: https://github.com/AnnaLangener/Justintime) that allows researchers to run their own simulations and to make informed decisions about their actual data (see also Main Takeaways and Recommendations). This study was not preregistered.

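To simulate the outcome for each participant, we first assigned each participant n a probability $p_n$ of the outcome being present, drawn from a beta distribution with shape parameters α and β:

$$p_n \sim \mathrm{Beta}(\alpha, \beta) \qquad (1)$$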

The beta distribution is flexible and allows one to control the overall prevalence and variability of the outcome probability across participants. For this reason, we selected it over other distributions, such as the uniform distribution. The mean of the beta distribution, denoted μ, is given by

$$\mu = \frac{\alpha}{\alpha + \beta},$$

where α and β are its two shape parameters.

This mean represents the overall probability of the outcome being present (coded 1) across participants and time points. A higher mean results in more participants having a high probability of the outcome being present, leading to greater overall prevalence. Conversely, a lower mean results in more participants with a low probability of the outcome, reducing the overall prevalence. In addition, we can specify the standard deviation σ:

$$\sigma = \sqrt{\frac{\alpha\beta}{(\alpha + \beta)^2(\alpha + \beta + 1)}}$$

This standard deviation controls the variability of the outcome probability across participants. A larger standard deviation indicates greater heterogeneity, meaning there is a wider range of probabilities across participants. A smaller standard deviation reflects more homogeneity, with most participants having similar probabilities.

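In our simulation setup, we aimed to regulate these two aspects. To achieve this, we calculated the shape parameters α and β from the chosen mean μ and standard deviation σ, which requires $0 < \sigma^2 < \mu(1 - \mu)$:

$$\alpha = \mu\left(\frac{\mu(1 - \mu)}{\sigma^2} - 1\right), \qquad \beta = (1 - \mu)\left(\frac{\mu(1 - \mu)}{\sigma^2} - 1\right)$$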
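The conversion from a chosen mean and standard deviation to the beta shape parameters can be sketched in Python as follows. This is a method-of-moments calculation; the function name is hypothetical, and the authors' own R code may be organized differently.

```python
def beta_params_from_mean_sd(mu, sigma):
    """Return shape parameters (alpha, beta) of the beta distribution with mean mu and SD sigma."""
    var = sigma ** 2
    # Valid beta distributions need 0 < mu < 1 and a variance below mu * (1 - mu).
    if not 0 < mu < 1 or not 0 < var < mu * (1 - mu):
        raise ValueError("requires 0 < mu < 1 and 0 < sigma**2 < mu * (1 - mu)")
    nu = mu * (1 - mu) / var - 1  # nu equals alpha + beta
    return mu * nu, (1 - mu) * nu


alpha, beta = beta_params_from_mean_sd(0.3, 0.1)
print(alpha, beta)
```

For example, μ = .3 and σ = .1 give α = 6 and β = 14 (up to floating-point rounding).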

Once a probability was assigned to each participant (sampled from the beta distribution, see Equation 1), we simulated the binary outcome for each participant n at time point t using a Bernoulli distribution. The success probability for each Bernoulli trial was the participant’s assigned probability, so that within each participant, the outcome fluctuates from one time point to the next around that participant’s own probability.
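A minimal sketch of this two-stage process with NumPy, assuming illustrative shape parameters α = 6 and β = 14 (mean .3, SD .1) rather than the values used in our simulations; the function name is hypothetical. Each participant receives one probability, and every time point is then an independent Bernoulli draw with that probability.

```python
import numpy as np


def simulate_binary_outcome(n_participants, n_timepoints, alpha, beta, seed=0):
    """Draw one probability per participant from Beta(alpha, beta), then
    independent Bernoulli outcomes for every time point of that participant."""
    rng = np.random.default_rng(seed)
    p = rng.beta(alpha, beta, size=n_participants)
    # A Bernoulli draw is a binomial draw with a single trial; p[:, None] broadcasts
    # each participant's probability across that participant's time points.
    y = rng.binomial(1, p[:, None], size=(n_participants, n_timepoints))
    return p, y


p, y = simulate_binary_outcome(150, 90, alpha=6.0, beta=14.0)
print(y.shape, round(y.mean(), 3))
```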

To simulate predictor variables, we adopted a similar approach to Saeb et al. (2017). However, it is important to highlight a key difference: Whereas Saeb and colleagues employed a static outcome for participants—in which each participant consistently either had the outcome present across all time points or not—we simulated an outcome that fluctuates within participants over time. This approach better reflects dynamic processes, which are often of interest in longitudinal data, particularly when the goal is to track individuals over time. We employed a comparable equation to simulate s features across t time points and for n participants (

In constructing the features, we therefore based our simulations on the following components: first, the varying outcome (

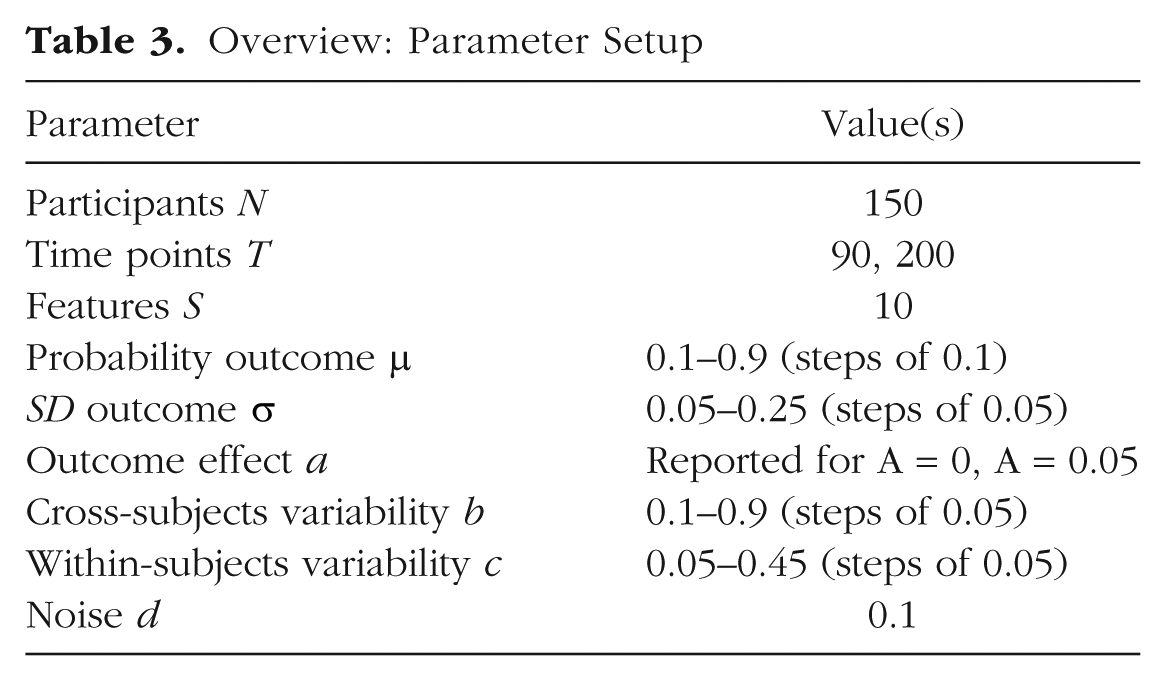

Throughout this article, we use this simulation to showcase and illustrate various pitfalls and report performance metrics across several simulation setups (summarized in Table 3). We fixed the following parameters: participants N = 150, time points T = 90, features S = 10, and proportion of feature noise d = 0.1. These specific values for the number of participants N and time points T were selected for illustrative purposes and based on our previous work. The values for S and d follow those used by Saeb et al. (2017), ensuring methodological comparability. We did not systematically explore other parameter values because of the high computational cost; however, we provide a Shiny app that allows users to examine alternative configurations interactively and have included other combinations in our empirical examples. Unless stated otherwise, presented results were generated by varying the outcome probability

Overview: Parameter Setup

For illustrative purposes, we employ a standard random-forest model to detect the outcome at a given time point using simulated features from that same time point. Thus, our initial simulation setup does not include temporal dependencies, and our random-forest model does not incorporate features from previous time points. To check whether our results also hold in the presence of temporal dependencies, we have included empirical examples and added simulated temporal dependencies in the Supplemental Material available online.

Potential Pitfalls and Recommendations on How to Avoid Them

So far, we have described common use-case scenarios and deployment strategies and outlined which validation strategies are suitable for different contexts. However, even when a validation strategy is selected that matches the use-case scenario, problems and challenges can arise when evaluating a prediction model. In this section, we describe and illustrate three potential pitfalls and provide recommendations on how to avoid them.

Lack of within-persons evaluation and biased overall performance estimates

Need for within-persons evaluation

A common pitfall in predictive modeling, particularly for studies aiming to track individual behavior or emotions over time, is the lack of within-persons evaluation and biased overall performance estimates. This issue is especially relevant for applications such as JITAIs if a group-level modeling approach is chosen and the primary objective is to make accurate predictions within individuals rather than merely distinguishing between individuals.

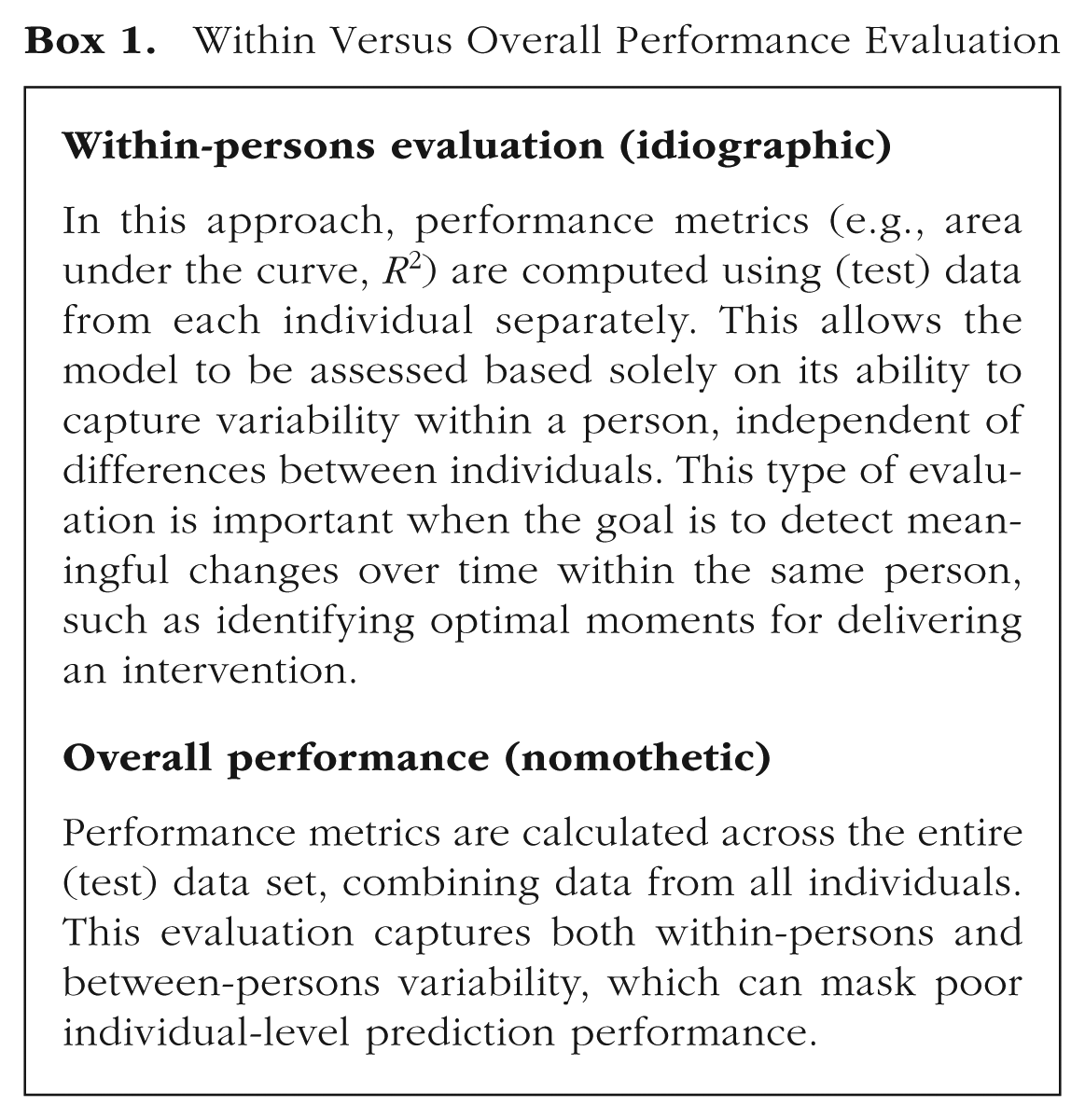

Thus, even though some researchers are used to thinking mainly about between-subjects effects, in such contexts, it is crucial to evaluate model performance not only across the entire data set (which includes both between- and within-persons variability if a group-level modeling approach is chosen) but also within each person (see Box 1). It is important to have an idiographic focus here because the model is designed to predict changes in individuals over time at deployment. Failing to assess within-persons performance can be misleading: A model might exhibit strong overall performance yet fail to provide accurate predictions for individual participants. For JITAIs, this means that the model would not be able to identify critical time points for intervention delivery, limiting its practical utility. Despite this, within-persons performance assessment remains rare in current research, even in studies explicitly focused on JITAIs.
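The following sketch (Python with NumPy and scikit-learn; all values hypothetical) illustrates how this can happen in an extreme case. The "model" knows only which participant a row belongs to and predicts that participant's stable outcome probability at every time point. Because outcome probabilities differ between participants, the pooled AUC is above chance, yet within each participant all scores are tied, so every within-persons AUC equals .5.

```python
import numpy as np
from sklearn.metrics import roc_auc_score

rng = np.random.default_rng(42)
n_participants, n_timepoints = 150, 90

# Hypothetical data: a stable outcome probability per participant and an
# outcome that fluctuates around it over time.
p = rng.beta(2.0, 5.0, size=n_participants)
y = rng.binomial(1, p[:, None], size=(n_participants, n_timepoints))

# A "model" that only knows who the participant is: it outputs p at every time point.
score = np.repeat(p[:, None], n_timepoints, axis=1)

# Pooled AUC mixes between- and within-persons variability.
overall_auc = roc_auc_score(y.ravel(), score.ravel())

# Within-persons AUC: one AUC per participant whose outcome varies.
within_aucs = [
    roc_auc_score(y[i], score[i])
    for i in range(n_participants)
    if 0 < y[i].sum() < n_timepoints
]
print(f"overall AUC = {overall_auc:.2f}; mean within-persons AUC = {np.mean(within_aucs):.2f}")
```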

Within Versus Overall Performance Evaluation

Different results between within-persons evaluation and overall performance

In a prior study (Langener et al., 2025), we aimed to predict variability in depressive symptoms using a deep-learning model and observed that overall performance metrics, such as the area under the curve (AUC), appeared small to moderate (AUC = .59, SD = .06)² when evaluated across the entire data set. However, when performance was assessed within persons, the AUC was no better than chance level (within-persons AUC: M = .46, SD = .23).

To further demonstrate this issue, we provide an illustrative example from our simulation study using one specific parameter setup. In this setup, we simulated 150 participants with 200 time points each. We increased the number of time points from 90 to 200 to ensure there were enough observations for the moving-window split and within-persons evaluation. The mean prevalence of the outcome was relatively low (

The results of our simulation (see Table 4) further demonstrate the potential difference between overall and within-persons performance, particularly when a validation setup splits data by time point (i.e., record-wise and moving-window) rather than by participant. For the record-wise evaluation, the overall AUC was .91 when using a random-forest model to predict the outcome, suggesting good model performance. However, when performance was assessed within persons, the mean within-persons AUC dropped to .56, with a high standard deviation (SD = .19),³ indicating substantial variability in predictive performance across participants. The subject-wise evaluation revealed a similar mean within-persons AUC of .57 (overall AUC = .62). Likewise, the moving-window evaluation demonstrated good overall performance (AUC = .82), but the mean within-persons AUC remained low (.54), with variability across participants (SD = .13).

Illustrative Performance Using One Simulation Setup (Binary Outcome)

Note: When predicting a binary outcome, we can evaluate the prediction performance only if there is variation within the outcome. Thus, when calculating within-persons AUC, we excluded all participants who did not exhibit any variation in the test split (see “N included within persons”). AUC = area under the curve.
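A within-persons evaluation of this kind can be sketched as follows (Python with NumPy and scikit-learn; the function name is hypothetical). It computes one AUC per participant, skips participants whose test outcomes do not vary, and reports the number included, the mean and SD (here with ddof = 1, an assumption), and the share of participants above chance (.5).

```python
import numpy as np
from sklearn.metrics import roc_auc_score


def within_person_auc(y_true, y_score, participant):
    """Per-participant AUC; participants whose test outcomes do not vary are excluded."""
    y_true, y_score, participant = map(np.asarray, (y_true, y_score, participant))
    aucs = {}
    for pid in np.unique(participant):
        mask = participant == pid
        if np.unique(y_true[mask]).size < 2:  # only 0s or only 1s: AUC undefined
            continue
        aucs[pid] = roc_auc_score(y_true[mask], y_score[mask])
    values = np.array(list(aucs.values()))
    return {
        "n_included": values.size,
        "mean_auc": values.mean() if values.size else np.nan,
        "sd_auc": values.std(ddof=1) if values.size > 1 else np.nan,
        "share_above_chance": (values > 0.5).mean() if values.size else np.nan,
        "per_person": aucs,
    }


# Hypothetical test split: A is ranked perfectly, B inversely, C has no variation.
res = within_person_auc(
    y_true=[0, 1, 0, 1, 0, 0, 1, 1, 0, 0, 0],
    y_score=[0.1, 0.9, 0.2, 0.8, 0.9, 0.8, 0.2, 0.1, 0.3, 0.4, 0.5],
    participant=["A"] * 4 + ["B"] * 4 + ["C"] * 3,
)
print(res["n_included"], res["mean_auc"], res["share_above_chance"])
```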

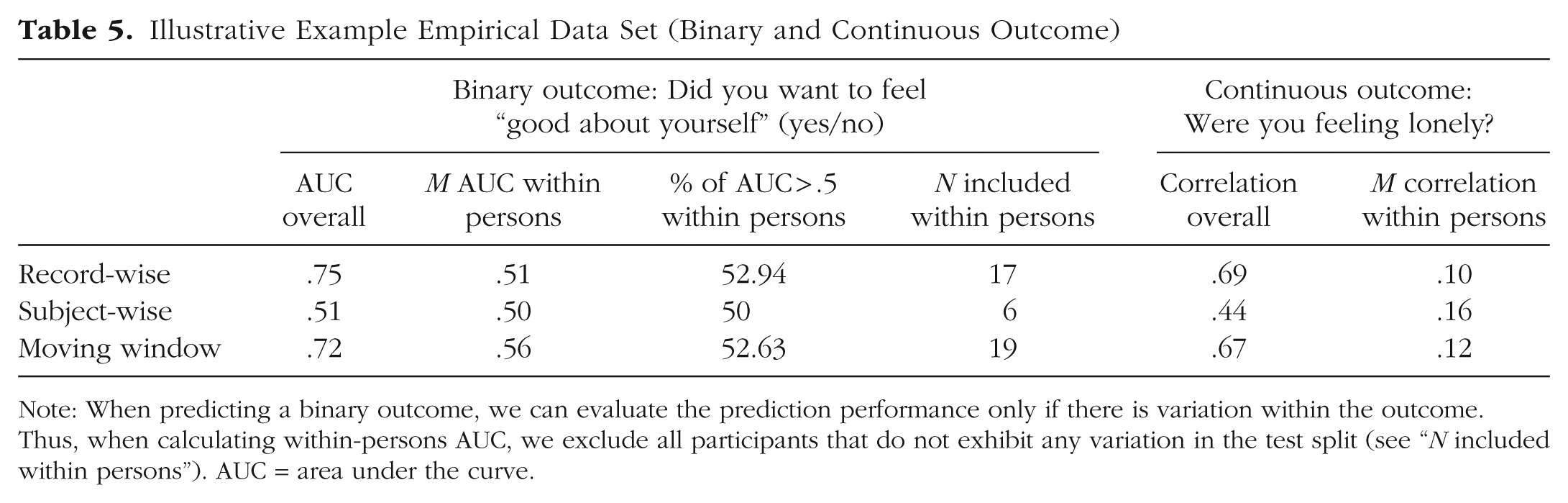

We further investigated the distinction between within-persons and overall predictive performance using an empirical example from the Qwantify-app data set. To ensure a fair comparison, we selected a subset of 20 participants who had provided data over a period of at least 150 days. This selection ensured that each individual contributed a sufficient number of observations to support reliable estimation of within-persons model performance.

For the binary outcome, we predicted whether participants wanted to “feel good about themselves” using a random-forest model. Predictors were taken from the previous EMA time point and included seven continuous variables: how they were feeling (bad to good), energy, overthinking, lonely, self-worth, appreciating, and stressed. The intraclass correlation (ICC) for the binary outcome was .34, and the average ICC across predictors was .48.

For the continuous outcome, we predicted how lonely participants felt at a given time point using the same set of predictors from the preceding EMA entry, excluding lonely (because it was the outcome variable). The ICC for the continuous outcome was .62, and the mean ICC for the predictors was .46.
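Using predictors from the preceding EMA entry requires lagging them within each participant so that values never carry over from one participant to the next. A minimal pandas sketch, with hypothetical column names `participant_id` and `timestamp`:

```python
import pandas as pd


def add_lagged_predictors(df, predictors, id_col="participant_id", time_col="timestamp"):
    """Add each predictor's value from the same participant's previous EMA entry."""
    df = df.sort_values([id_col, time_col]).copy()
    lagged = []
    for col in predictors:
        # groupby(...).shift(1) restarts for every participant, so no cross-person leakage.
        df[f"{col}_lag1"] = df.groupby(id_col)[col].shift(1)
        lagged.append(f"{col}_lag1")
    # A participant's first entry has no preceding entry, so it cannot be predicted.
    return df.dropna(subset=lagged)


toy = pd.DataFrame({
    "participant_id": [1, 1, 1, 2, 2],
    "timestamp": [1, 2, 3, 1, 2],
    "stressed": [5.0, 3.0, 4.0, 2.0, 6.0],
    "lonely": [1.0, 2.0, 2.0, 4.0, 3.0],
})
out = add_lagged_predictors(toy, ["stressed"])
print(out[["participant_id", "timestamp", "lonely", "stressed_lag1"]])
```

This simple lag ignores the time elapsed between entries (e.g., overnight gaps); how to treat such gaps is a design decision the sketch leaves open.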

Table 5 presents the model performance across the three validation schemes: record-wise, subject-wise, and moving-window cross-validation. For the binary outcome, record-wise validation yielded a high overall AUC of .75 but a substantially lower mean within-persons AUC of .51, with only 53% of participants achieving an AUC above chance (.5). Subject-wise validation produced a low overall AUC of .51 and a similar mean within-persons AUC of .50, with 50% of individuals having a within-persons AUC above .5. In contrast, the moving-window approach provided an overall AUC of .72, a mean within-persons AUC of .56, and 53% of participants demonstrating above-chance performance.

Illustrative Example Empirical Data Set (Binary and Continuous Outcome)

Note: When predicting a binary outcome, we can evaluate the prediction performance only if there is variation within the outcome. Thus, when calculating within-persons AUC, we excluded all participants who did not exhibit any variation in the test split (see "N included within persons"). AUC = area under the curve.

For the continuous outcome, record-wise validation produced a relatively high overall correlation of .69, but the average within-persons correlation was low (.10), indicating limited within-persons predictive power. Subject-wise validation resulted in an overall correlation of .44 and a mean within-persons correlation of .16, based on only three participants with sufficient data. The moving-window approach again provided a more balanced view, yielding an overall correlation of .67 and a mean within-persons correlation of .12.

These results thus illustrate the limitations of relying solely on overall performance metrics when the goal is to track individuals over time. Although overall AUC values may suggest acceptable performance, the weak within-persons performance limits the model's practical utility for tracking changes within individuals over time.
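To make the distinction concrete, the minimal Python sketch below computes both quantities from a set of test-set predictions: a pooled (overall) AUC across all rows and per-person AUCs averaged across participants, skipping participants whose outcome does not vary in the test split (as described in the table notes). The function names and toy data are illustrative assumptions, not the authors' code. AUC is computed directly as the probability that a randomly chosen positive case receives a higher score than a randomly chosen negative case, with ties counted as one half. In the toy data, the scores carry only person-level information (each person receives a constant score), so the pooled AUC is .75 while each within-persons AUC is exactly .5.

```python
import numpy as np

def auc(y_true, score):
    """AUC as P(random positive outranks random negative); ties count 1/2."""
    y_true = np.asarray(y_true)
    score = np.asarray(score, dtype=float)
    pos, neg = score[y_true == 1], score[y_true == 0]
    greater = (pos[:, None] > neg[None, :]).mean()
    ties = (pos[:, None] == neg[None, :]).mean()
    return greater + 0.5 * ties

def overall_and_within_auc(ids, y_true, score):
    """Pooled AUC plus summary of per-person AUCs (hypothetical helper)."""
    ids, y_true, score = map(np.asarray, (ids, y_true, score))
    per_person = []
    for pid in np.unique(ids):
        mask = ids == pid
        if np.unique(y_true[mask]).size < 2:
            continue  # AUC is undefined when a person shows only one outcome value
        per_person.append(auc(y_true[mask], score[mask]))
    per_person = np.array(per_person)
    return {
        "overall_auc": auc(y_true, score),
        "mean_within_auc": per_person.mean(),
        "sd_within_auc": per_person.std(ddof=1) if per_person.size > 1 else float("nan"),
        "n_included": int(per_person.size),
        "share_above_chance": (per_person > 0.5).mean(),
    }

# Toy example: scores only distinguish person 0 (high) from person 1 (low)
ids = [0, 0, 0, 0, 1, 1, 1, 1]
y = [1, 1, 1, 0, 0, 0, 0, 1]
score = [0.9] * 4 + [0.1] * 4
result = overall_and_within_auc(ids, y, score)
```

The same structure carries over to continuous outcomes by swapping the AUC for a per-person correlation and skipping persons whose observed or predicted values are constant.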

Biased overall performance measures demonstrated via simulation study (binary outcome)

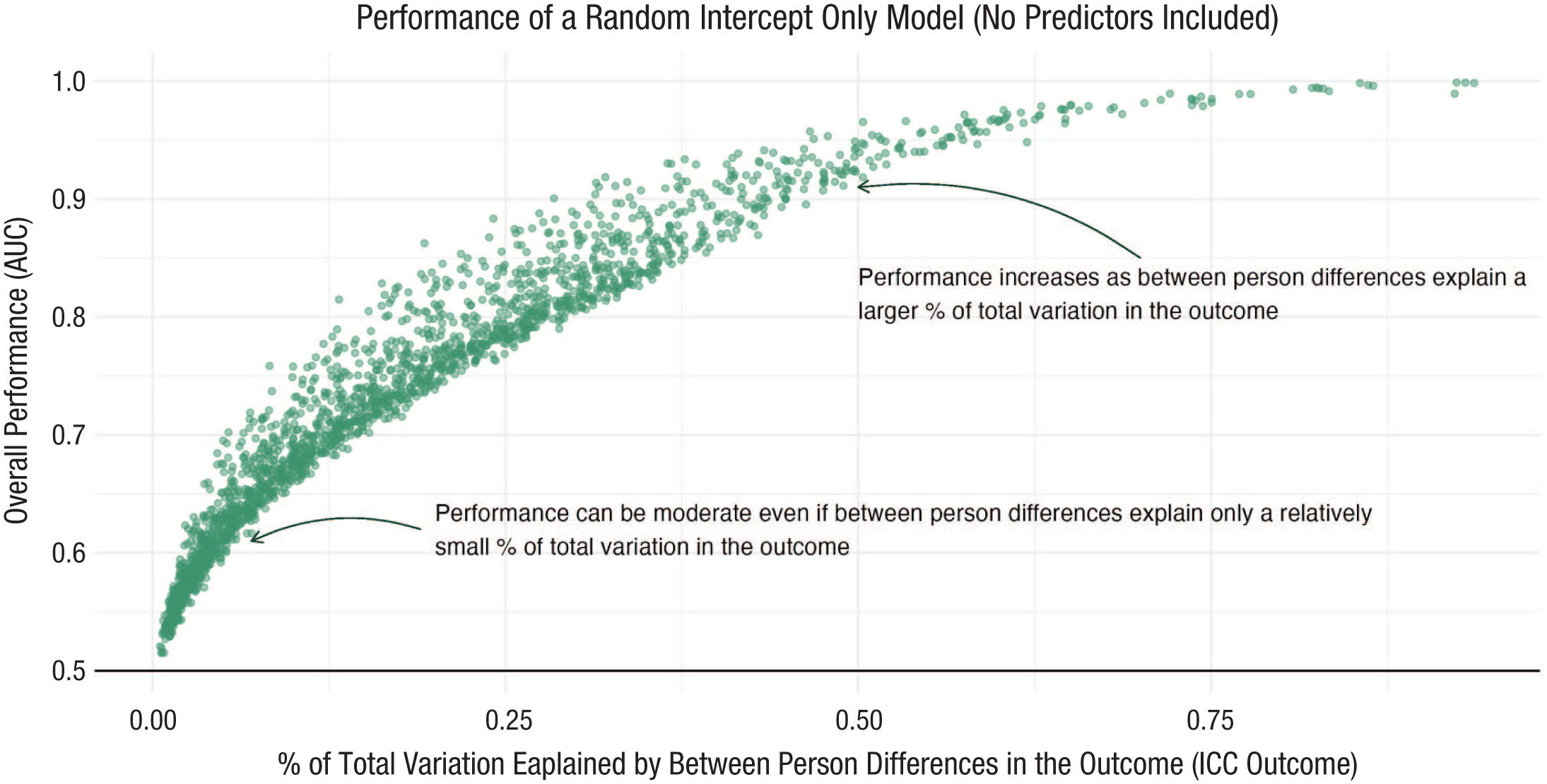

To further illustrate the importance of within-persons evaluation and the potential biases in overall performance metrics, we present another example demonstrating that overall performance can appear high even when the true relationship between the predictor and outcome is zero. Figure 4 depicts the results of various simulation setups in which we varied the overall prevalence of the outcome, the standard deviation of the outcome, cross-subjects variability, and within-subjects variability (for an overview, see Table 3; A = 0) and used a random-forest model with a record-wise split to predict the outcome.

Bias in performance estimates across different simulation setups when no relationship exists: expected AUC = .5. Bias increases if a large percentage of total variation is explained by between-persons differences in both the outcome and predictors. AUC = area under the curve.

The results show that the overall AUC can substantially exceed chance levels when the ICC of the predictors and outcomes is high, which means that the variation in outcome and predictors is largely explained by differences between individuals. Such elevated ICC values are frequently observed in empirical research across various psychological and behavioral domains. For example, in the context of social interactions among children and adolescents, nine studies reported within-persons ICC estimates that ranged from .43 to .89 (Mölsä et al., 2022). Likewise, studies on daily resilience in response to life stressors reported an average ICC of .52 (SD = .15, range = .29–.80; Zietse et al., 2025). Affect-related measures have shown ICC values between .20 and .39 (Eisele et al., 2021), and in samples including individuals with current or remitted major depressive disorder (MDD), negative affect and positive affect both showed ICCs of approximately .42 to .43 (Thompson et al., 2021). In addition, ICCs for responses to the Mobile Patient Health Questionnaire-9 in individuals diagnosed with MDD ranged from .38 to .67 (Haddox et al., 2024).
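Because this pitfall hinges on the ICC, it may help to see how a simple ICC estimate is obtained from long-format data. The sketch below implements the one-way random-effects ICC(1) from ANOVA mean squares, using the standard adjusted group size when persons contribute different numbers of observations. This is one common estimator chosen for illustration; the article does not specify the estimator behind its reported ICCs, and ICCs for binary outcomes are often computed on a latent scale instead. The function name `icc1` and the toy values are hypothetical.

```python
import numpy as np

def icc1(ids, values):
    """One-way random-effects ICC(1): share of variance due to between-person differences."""
    ids = np.asarray(ids)
    values = np.asarray(values, dtype=float)
    persons = np.unique(ids)
    n_total, n_groups = values.size, persons.size
    grand_mean = values.mean()
    sizes = np.array([np.sum(ids == p) for p in persons])
    means = np.array([values[ids == p].mean() for p in persons])
    # Between-person and within-person sums of squares from a one-way ANOVA
    ss_between = np.sum(sizes * (means - grand_mean) ** 2)
    ss_within = sum(np.sum((values[ids == p] - m) ** 2) for p, m in zip(persons, means))
    ms_between = ss_between / (n_groups - 1)
    ms_within = ss_within / (n_total - n_groups)
    # Adjusted average group size (equals the common group size for balanced data)
    k0 = (n_total - np.sum(sizes ** 2) / n_total) / (n_groups - 1)
    return (ms_between - ms_within) / (ms_between + (k0 - 1) * ms_within)

# Two persons whose values barely overlap: most variance lies between persons
example_icc = icc1([1, 1, 1, 2, 2, 2], [1, 2, 3, 7, 8, 9])
```

Here the estimate is 53/56, or about .95. Note that this estimator can become negative when within-person variance clearly dominates.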

In such cases, the model may learn to differentiate between individuals, capitalizing on cross-subjects variability rather than capturing meaningful within-subjects relationships. This effect is particularly likely when the data are unbalanced, as in our Simulation Example 1, or when the outcome variable exhibits a large standard deviation. Consequently, relying solely on overall metrics can give a misleading impression of model performance, masking the model's inability to make accurate within-persons predictions, which were around chance level across all simulation setups displayed in Figure 4 (M = .50, SD = .01). Such a model would therefore be of no use because there is no meaningful relationship between predictions and outcomes. A simpler model (e.g., a random-intercept model) would be easier to use and would probably have better predictive performance (see Weak Benchmark and Missing Baseline Comparison).
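The mechanism described above can be reproduced in a few lines. The sketch below is a simplified stand-in for the article's simulation, not a reimplementation of it: instead of a random forest it uses a plain k-nearest-neighbor score (the share of positive outcomes among the k closest training rows), which makes the "identify the person, then predict their usual outcome" behavior easy to see. Predictors have large person-level offsets (a predictor ICC of roughly .96), each person's outcome prevalence is drawn independently of the predictors (true effect of zero), and rows are split record-wise. All parameter values are arbitrary illustrative choices.

```python
import numpy as np

def auc(y_true, score):
    """Probability that a random positive outranks a random negative (ties count 1/2)."""
    pos, neg = score[y_true == 1], score[y_true == 0]
    return (pos[:, None] > neg[None, :]).mean() + 0.5 * (pos[:, None] == neg[None, :]).mean()

rng = np.random.default_rng(2024)
n_persons, n_times, n_pred, k = 50, 50, 3, 10

# Predictors: person-level offsets (SD 5) plus occasion noise (SD 1),
# so roughly 25 / (25 + 1) = .96 of predictor variance lies between persons
offsets = rng.normal(0.0, 5.0, size=(n_persons, 1, n_pred))
X = (offsets + rng.normal(0.0, 1.0, size=(n_persons, n_times, n_pred))).reshape(-1, n_pred)

# Outcome: person-specific prevalence drawn independently of X (true effect is zero)
prevalence = rng.uniform(0.05, 0.95, size=n_persons)
y = (rng.random((n_persons, n_times)) < prevalence[:, None]).astype(int).reshape(-1)
ids = np.repeat(np.arange(n_persons), n_times)

# Record-wise split: rows (not persons) are assigned to training or test data
test = rng.random(y.size) < 0.2
X_tr, y_tr = X[~test], y[~test]
X_te, y_te, ids_te = X[test], y[test], ids[test]

# k-NN score: share of positive outcomes among the k nearest training rows
dist = ((X_te[:, None, :] - X_tr[None, :, :]) ** 2).sum(axis=2)
nearest = np.argsort(dist, axis=1)[:, :k]
score = y_tr[nearest].mean(axis=1)

overall_auc = auc(y_te, score)
# Within-persons AUC, skipping persons whose test outcomes do not vary
within = [auc(y_te[ids_te == p], score[ids_te == p])
          for p in np.unique(ids_te)
          if np.unique(y_te[ids_te == p]).size == 2]
mean_within_auc = float(np.mean(within))
print(f"overall AUC = {overall_auc:.2f}; mean within-persons AUC = {mean_within_auc:.2f} "
      f"({len(within)} persons)")
```

With a true effect of zero, the pooled AUC should land well above .5 because nearby training rows mostly belong to the same person, whereas the mean within-persons AUC should stay close to .5. Exact values depend on the seed.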

Biased overall performance measures demonstrated via empirical example (binary and continuous outcomes)

In our empirical example, we also investigated the prediction performance of a random-forest model on a data set in which no relationship exists between predictors and outcomes. We created this data set following an approach similar to that proposed by Chaibub Neto et al. (2019), who examined a similar issue in the context of diagnostic algorithms involving static outcomes and time-varying predictors. Specifically, we applied a data-shuffling procedure that disrupts the original data structure in two key ways. First, subject identifiers were randomly reassigned, effectively permuting the between-subjects structure. Second, within each (now permuted) subject, the outcome variable was independently shuffled to remove any within-subjects patterns. This approach generates a null model in which the predictive relationships are eliminated while preserving the ICC structure of both predictors and outcome variables.
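A minimal sketch of such a shuffling procedure follows, assuming a balanced panel (every subject has the same number of time points) stored as an outcome array of shape (subjects, time points) that stays aligned with an unchanged predictor array. We read "randomly reassigning subject identifiers" as attaching each subject's outcome series to another, randomly chosen subject's predictors; this interpretation, the helper name `make_null_outcome`, and the balanced-panel assumption are ours, and the authors' repository remains the reference implementation. Because each outcome series is only moved and reordered, every person's outcome distribution (and hence the outcome ICC) is unchanged, and the predictors are untouched. `Generator.permuted` requires NumPy 1.20 or later.

```python
import numpy as np

def make_null_outcome(y, rng):
    """Return a null outcome array plus the subject permutation used (hypothetical helper).

    y: outcome array of shape (n_subjects, n_timepoints), aligned row by row
    with an unchanged predictor array; a balanced panel is assumed.
    """
    # Step 1: reassign whole outcome series to randomly chosen subjects,
    # which breaks any between-subjects link with the predictors
    perm = rng.permutation(y.shape[0])
    y_reassigned = y[perm]
    # Step 2: shuffle the outcome over time within each subject (each row
    # independently), which removes any within-subjects pattern
    y_null = rng.permuted(y_reassigned, axis=1)
    return y_null, perm

rng = np.random.default_rng(7)
y = rng.integers(0, 2, size=(5, 20))  # toy binary outcomes: 5 subjects x 20 time points
y_null, perm = make_null_outcome(y, rng)
```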

Figure 5 presents the predictive performance (i.e., AUC values) for binary outcomes using the shuffled data set and a random-forest model. We predicted multiple binary outcomes (for a full overview, see Table 2), using both the full data set and three different subsets. As predictors, we included seven continuous variables (see Table 2). Because of variation in outcomes and participant samples, the ICC values of both outcomes (presented on the x-axis) and predictors varied across models. Thus, in Figure 5, each panel represents a distinct data subset differing in the number of participants and the number of time points per participant. Consistent with our simulation findings, we observed that model performance was biased when the ICC of the outcome and predictors was high, suggesting that strong between-persons variability can artificially inflate perceived predictive performance.

Predictive performance of the permuted data set for binary outcomes (empirical example). Each panel illustrates a separate data subset varying in the number of participants and the number of time points per participant. In each panel, prediction performance on a shuffled data set is shown for two validation strategies that split data by time point (i.e., moving window and record-wise). Binary outcomes are displayed along the x-axis in order of increasing intraclass correlation (ICC), and the y-axis shows the area under the curve (AUC) evaluated across the entire test set.

Figure 6 shows the predictive performance (measured as the correlation between predicted and observed values) for continuous outcomes using the shuffled data set and a random-forest model. We applied the models to several continuous outcomes using all other continuous variables as predictors (for a complete list, see Table 2), evaluating performance across three participant subsets and the full data set. Consistent with the findings for binary outcomes, model performance appeared inflated in many cases because of high ICC values across individuals.

Predictive performance of the permuted data set for continuous outcomes (empirical example). Each panel illustrates a separate data subset varying in the number of participants and the number of time points per participant. In each panel, prediction performance on a shuffled data set is shown for two validation strategies that split data by time point (i.e., moving window and record-wise). Continuous outcomes are displayed along the x-axis in order of increasing intraclass correlation (ICC), and the y-axis shows the correlation between predicted and observed scores evaluated across the entire test set.

Mismatch between chosen validation strategy and actual observations

As we have discussed so far, when developing a prediction model using longitudinal data, researchers must ensure that their chosen validation strategy aligns with their intended use-case scenario (see Table 1). However, another pitfall arises when these methodological choices do not match the actual characteristics of the data, in particular the actual variability of the outcome over time. For example, if the chosen strategy assumes fluctuations in an outcome that in reality remains relatively stable, the predictive model may lack meaningful application.

This again becomes particularly important if the goal is to track individuals over time. If the goal is to predict an outcome at a fine-grained temporal scale, it is crucial to ensure that the outcome exhibits sufficient variation in this period. When an outcome remains mostly stable over time, frequent measurement and prediction may have limited practical value (Myin-Germeys & Kuppens, 2022). For example, in a binary case, outcomes that are almost always present or absent provide little meaningful variation for within-persons prediction. If a person consumes alcohol only once in 5 years, attempting to predict this rare event and designing a JITAI around it may not be a meaningful goal (DeMasi et al., 2017).

To illustrate this issue, we provide an example from the simulation study using a specific parameter setup. In this scenario, the mean prevalence of the outcome is relatively low (

Within-persons variability over time.

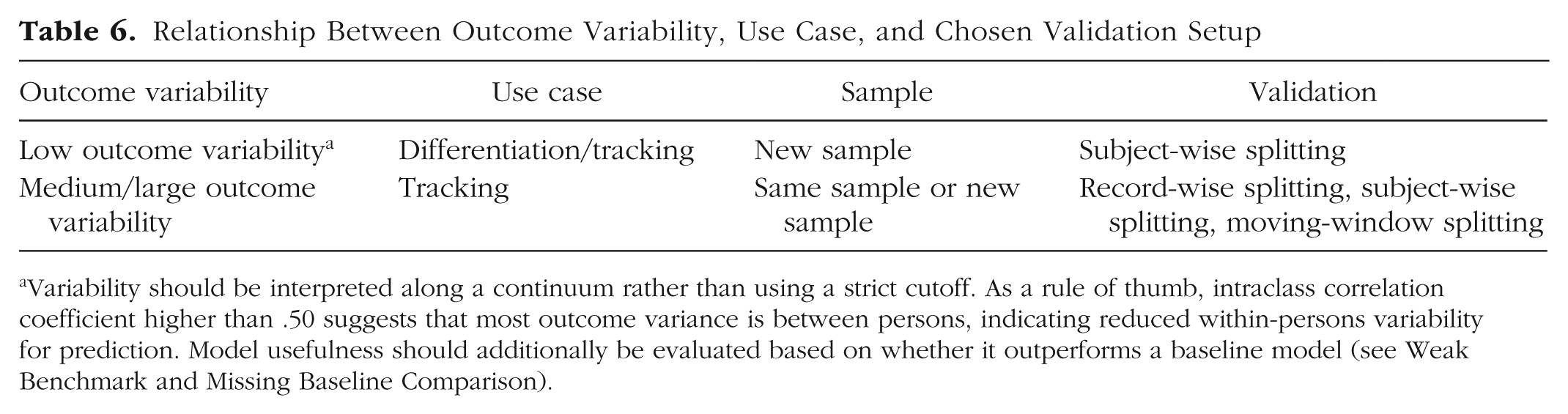

Determining when an outcome is too stable to serve as a viable target for a JITAI can be challenging. For instance, even if an outcome is rare but has significant consequences—such as a suicide attempt—it may still be valuable to attempt its prediction. In such cases, as demonstrated earlier, selecting an appropriate evaluation strategy is crucial for assessing model performance. Overall performance metrics alone may not be meaningful when the chosen validation strategy includes the same participants in both the training and testing sets. In addition, if the outcome is very rare, within-persons assessment may not be feasible because of its infrequent occurrence. To address these challenges, researchers can opt for a validation approach that splits data between participants (i.e., subject-wise validation; see Table 6). This becomes especially important if the variability in predictor variables is mainly explained by between-persons differences.

Relationship Between Outcome Variability, Use Case, and Chosen Validation Setup

Variability should be interpreted along a continuum rather than using a strict cutoff. As a rule of thumb, an intraclass correlation coefficient (ICC) higher than .50 suggests that most outcome variance lies between persons, leaving less within-persons variability to predict. Model usefulness should additionally be evaluated based on whether the model outperforms a baseline model (see Weak Benchmark and Missing Baseline Comparison).

That said, predicting infrequent events (i.e., imbalanced data) is a research field in its own right, and numerous methods have been developed to address this challenge. For instance, anomaly-detection approaches and methods such as isolation forests and one-class support vector machines are specifically designed to detect rare events. In addition, techniques such as oversampling or undersampling can be used to mitigate class imbalance. A comprehensive overview of methods for rare-event prediction was provided by Shyalika et al. (2024). Likewise, performance metrics have been developed that are appropriate for imbalanced-data scenarios. For example, precision and recall measure the proportion of correctly identified positive instances relative to the predicted and actual positives, respectively, whereas the F1 score provides a harmonic mean of precision and recall. Another important consideration in imbalanced data sets is the selection of a meaningful baseline for comparison, typically the majority class, which is discussed in more detail in the following section.
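As a worked illustration of why these metrics and the majority-class baseline matter, the sketch below scores two sets of predictions on a hypothetical test set with 5 positive cases among 100: a majority-class baseline that always predicts 0 and a hypothetical model that flags 10 cases, 4 of them correctly. The data are invented for illustration, and setting precision to 0 when no positive predictions are made is a convention we adopt here (the quantity is otherwise undefined).

```python
import numpy as np

def classification_metrics(y_true, y_pred):
    """Accuracy, precision, recall, and F1 for binary labels coded 0/1."""
    y_true, y_pred = np.asarray(y_true), np.asarray(y_pred)
    tp = int(np.sum((y_pred == 1) & (y_true == 1)))
    fp = int(np.sum((y_pred == 1) & (y_true == 0)))
    fn = int(np.sum((y_pred == 0) & (y_true == 1)))
    accuracy = float(np.mean(y_pred == y_true))
    precision = tp / (tp + fp) if tp + fp > 0 else 0.0  # convention when nothing is flagged
    recall = tp / (tp + fn) if tp + fn > 0 else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall > 0 else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}

y_true = np.array([1] * 5 + [0] * 95)                  # 5% prevalence
majority = np.zeros(100, dtype=int)                     # always predicts the majority class (0)
model = np.array([1, 1, 1, 1, 0] + [1] * 6 + [0] * 89)  # 4 hits, 1 miss, 6 false alarms

baseline_scores = classification_metrics(y_true, majority)
model_scores = classification_metrics(y_true, model)
```

The baseline reaches higher accuracy (.95 vs. .93) even though it never detects a single event, whereas precision, recall, and F1 (.40, .80, and about .53 for the model; 0 for the baseline) reveal the difference.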

Given these considerations, we recommend that researchers carefully conceptualize their use case before building and evaluating a prediction model based on longitudinal data. It is essential to assess whether the chosen approach is theoretically meaningful and appropriate for the data at hand (for an overview, see Table 6). Although theory development is always crucial (Bringmann et al., 2022), it becomes even more critical when working with high-resolution behavioral data (Cohen et al., 2020). If the outcome exhibits little variation over time, researchers may need to reconsider their strategy—for example, by focusing on distinguishing between individuals rather than predicting within-persons fluctuations.

In addition, after data collection, visualization and descriptive statistics are valuable tools for evaluating whether the outcome varies meaningfully within individuals (e.g., see Fig. 7). Ensuring that theoretical expectations align with empirical observations can refine predictive modeling strategies and enhance the overall usefulness of the research.
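A minimal sketch of such descriptive checks for a binary outcome, assuming long-format data sorted by time within each person: for every person, it reports the prevalence, how often the outcome switches between consecutive observations, and whether it varies at all. The helper name and the chosen statistics are our own illustrative choices; plotting each person's series (as in Fig. 7) complements these numbers.

```python
import numpy as np

def within_person_variability(ids, y):
    """Per-person summary of a binary outcome (rows assumed sorted by time within person)."""
    ids, y = np.asarray(ids), np.asarray(y)
    summary = {}
    for pid in np.unique(ids):
        yi = y[ids == pid]
        summary[pid.item()] = {
            "n": int(yi.size),
            "prevalence": float(yi.mean()),
            # number of changes between consecutive observations
            "n_switches": int(np.sum(yi[1:] != yi[:-1])),
            "varies": bool(np.unique(yi).size > 1),
        }
    return summary

summary = within_person_variability(
    ids=[1, 1, 1, 1, 2, 2, 2, 2],
    y=[0, 1, 0, 1, 1, 1, 1, 1],
)
```

Person 2 in this toy example never varies, so within-persons prediction is not meaningful for that person, whatever the model.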

Weak benchmark and missing baseline comparison

Another common issue in evaluating predictive model performance is the absence of an appropriate baseline for comparison (DeMasi et al., 2017). Although metrics such as AUC indicate whether a model performs better than random chance, they provide limited insight into its practical utility. To assess a model effectively, its performance must be compared with a meaningful baseline. For instance, a baseline model might predict the mean value or, in binary classification, always predict the majority class. If a model cannot outperform such simple baselines, its practical value is questionable because using the baseline model would be more efficient. This issue is particularly important for metrics such as accuracy, which high class imbalance can artificially inflate, and for metrics that provide limited information on their own, such as the mean squared error.

When the goal is to track individuals over time and the same individuals appear in both training and testing sets, selecting an appropriate baseline becomes even more critical. In such cases, the baseline should not be the overall mean/majority class across all individuals but rather the mean within each individual (DeMasi et al., 2017). This consideration is particularly important in moving-window setups, in which the correct baseline is the mean calculated within each individual's (moving) training set rather than the overall data-set mean (regardless of whether person-specific or group-level models were built). Because the training set changes as the window moves, within-persons performance should also be compared with this baseline model.
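The following sketch shows what a person-specific baseline looks like under a moving-window split for a single person's series in time order. It assumes a sliding training window of fixed length followed by a one-step-ahead test point; the window length, the test horizon, and the helper name are illustrative assumptions rather than the article's exact configuration. For every test point, the baseline prediction is simply the mean of that person's current training window, so the baseline changes as the window moves.

```python
import numpy as np

def moving_window_person_baseline(y, window):
    """Hypothetical helper: person-specific mean baseline for one person's series in time order.

    Each test point t (from `window` onward) is predicted by the mean of the
    preceding `window` observations, i.e., the person's current training window.
    """
    y = np.asarray(y, dtype=float)
    preds, targets = [], []
    for t in range(window, y.size):
        training_window = y[t - window:t]
        preds.append(training_window.mean())  # baseline prediction for time t
        targets.append(y[t])                  # observed value at time t (the test point)
    return np.array(preds), np.array(targets)

preds, targets = moving_window_person_baseline([0, 0, 1, 1, 0, 1], window=3)
# preds -> [0.333..., 0.666..., 0.666...]; targets -> [1., 0., 1.]
```

A fitted model's within-persons performance can then be compared with the same metric computed on these baseline predictions, person by person.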

In a previous study, we aimed to predict variability in depressive symptoms using a deep-learning model (Langener et al., 2025). Although our model achieved moderate overall performance (AUC = .59), it failed to outperform a simple random-intercept baseline model (AUC = .83). Thus, our model would have been useless in any practical application (Langener et al., 2025). To further illustrate this issue, we conducted a simulation using the same setup as in Simulation Example 1 (see Table 7). Under a record-wise validation strategy, our random-forest model achieved an overall AUC of .91, yet a random-intercept baseline model trained on the same data performed even better (AUC = .98). Likewise, under a moving-window validation strategy, our random-forest model was again outperformed by the random-intercept baseline (AUC = .82 vs. AUC = .96). For within-persons evaluation, our random-forest model also performed worse than the random-intercept baseline (AUC = .47 vs. AUC = .58).

Overall Performance and Within-Persons Performance Compared With Baseline Performance for Each Validation Strategy

Note: As a machine-learning model, we used a random forest; as a baseline model, we used a random-intercept-only model. AUC = area under the curve.

Because subject-wise validation separates participants between training and testing sets, there is no participant-specific majority class in the training data, making a direct baseline comparison based on participants’ majority class infeasible. Likewise, within-persons performance can be compared only under the moving-window validation split using each participant’s baseline majority class because the training sets differ across time in this setup. Nevertheless, the population-level majority class can still be used as a baseline for comparison. This approach predicts the same value for all participants, which generally results in an AUC of .5 when the outcome fluctuates; if the outcome does not fluctuate, the AUC cannot be calculated. Despite this limitation, using the population-level majority class can still provide a useful baseline for other metrics, such as accuracy, or metrics for continuous outcomes.

To gain a clearer understanding of the general performance of a random-intercept baseline model, we conducted a simulation following the setup outlined in Table 3. The simulation was run across varying outcome probabilities

Performance of a random-intercept-only baseline model (record-wise).

We further evaluated whether this strong baseline performance holds in our empirical example. We compared the predictive performance (measured by AUC) of a random-forest model against a random-intercept-only baseline model across multiple binary outcomes and data subsets (as in our previous example, see Table 2). As shown in Figure 9, each panel represents a different subset of the data varying in the number of participants and number of time points per participant. In each subset, we observe a range of outcome ICCs because of differences in the binary outcomes examined. Across most subsets, the random-intercept-only model outperforms the random-forest model under both record-wise and moving-window validation strategies, particularly when the outcome ICC is high.

Comparison of predictive performance (AUC) across validation strategies and baseline models for binary outcomes as a function of outcome ICC. Each panel represents a distinct data subset differing in the number of participants and the number of time points per participant. In each panel, prediction performance is shown for both a random-intercept baseline model and a random-forest machine-learning model using different validation strategies. Binary outcomes are arranged along the x-axis in order of increasing intraclass correlation (ICC), and the y-axis indicates the area under the curve (AUC) computed across the full test set.

We observe similar results for continuous outcomes. As shown in Figure 10, the random-intercept-only baseline model consistently achieves higher or comparable correlation performance compared with the random-forest model across most subsets, particularly when outcome ICC is high. These findings highlight the strong baseline performance of simple person-level models and underscore the importance of selecting appropriate baseline models and validation strategies.

Comparison of predictive performance (correlation) across validation strategies and baseline models for continuous outcomes as a function of outcome ICC. Each panel represents a distinct data subset differing in the number of participants and the number of time points per participant. In each panel, prediction performance is shown for both a random-intercept baseline model and a random-forest machine-learning model using different validation strategies. Continuous outcomes are arranged along the x-axis in order of increasing intraclass correlation (ICC), and the y-axis indicates correlation between observed and predicted scores across the full test set.

Finally, we compared the performance of person-specific models using a moving-window cross-validation split applied to the same empirical example. To ensure sufficient data per participant, we used Subset 1 (N = 20; 150 time points per person). For the binary outcome, the mean within-persons AUC across all outcomes was .68 (SD = .08; minimum = .52, maximum = .84). In contrast, the baseline model, which used the mean of each training set, achieved a mean AUC of .52 (SD = .03; minimum = .44, maximum = .58). Likewise, for continuous outcomes, the mean correlation was .51 (SD = .10; minimum = .38, maximum = .65), and the baseline mean correlation was .20 (SD = .04; minimum = .16, maximum = .26). Together, these results indicate that in this example, the person-specific models outperformed the baseline approach.

Main Takeaways and Recommendations

Based on the three common pitfalls discussed, we offer four key recommendations to help researchers assess whether their use-case scenario and validation strategy are well aligned (Box 2). When designing a study, it is important to (a) clearly define the use-case scenario and select a validation approach that is appropriate (see Table 1). In addition, researchers should (b) evaluate the feasibility of the strategy by confirming that the outcome exhibits sufficient variability for the intended use case—this can also be assessed after data collection (see Mismatch Between Chosen Validation Strategy and Actual Observations, Table 6, and Fig. 7). During the data analysis and interpretation of the prediction performance, it is helpful to (c) choose meaningful baselines for performance comparison (see Weak Benchmark and Missing Baseline Comparison and Figs. 8–10) and (d) incorporate within-persons evaluation if the goal is to track individuals over time (see Lack of Within-Persons Evaluation and Biased Overall Performance Estimates and Figs. 4–6). Following these steps can help ensure alignment between study objectives and validation strategy and reduce the risk of common pitfalls.

Main Recommendations for the Evaluation of Prediction Models Based on Longitudinal Data

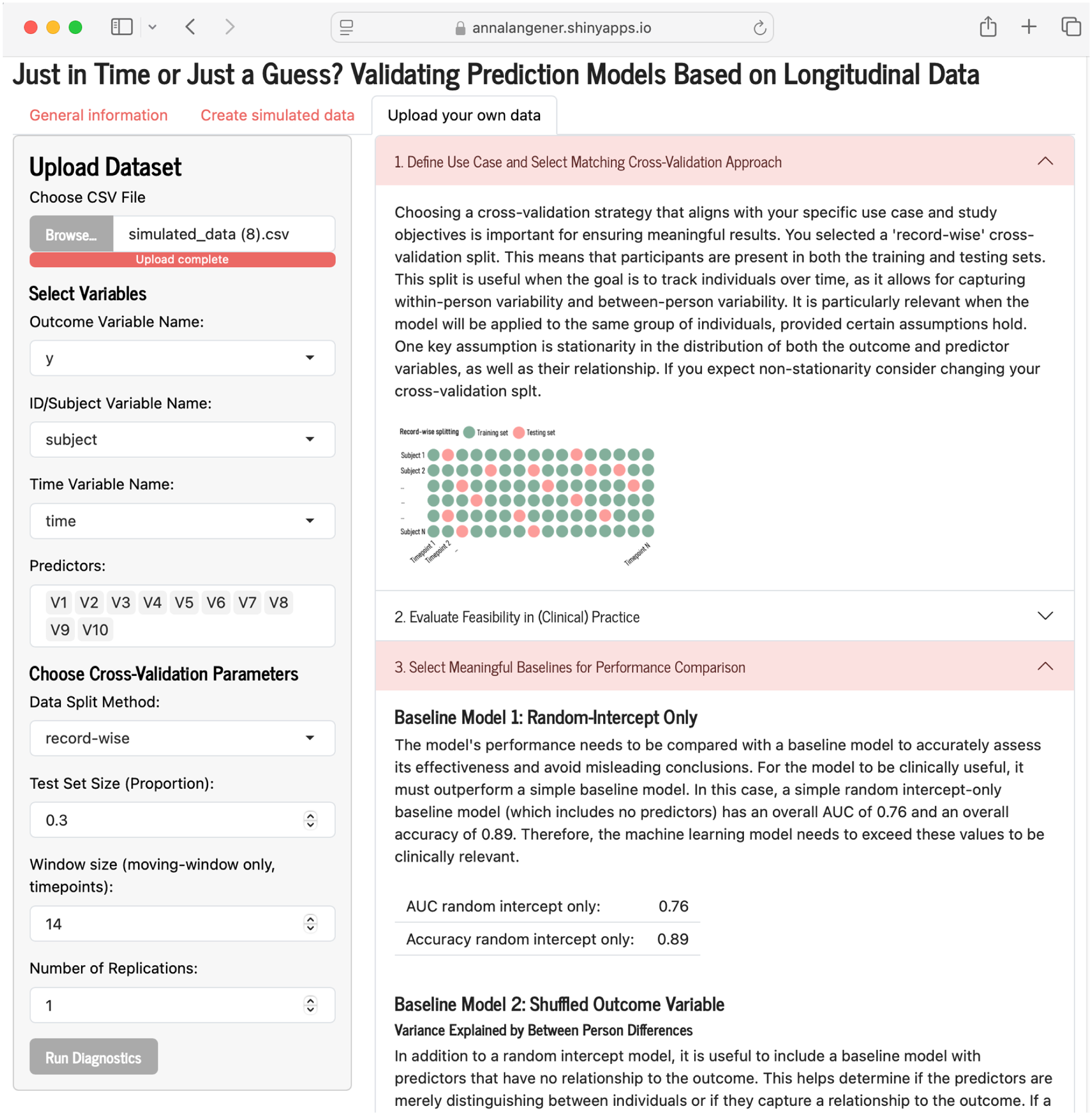

To further guide researchers in choosing an appropriate strategy, we developed a tool that helps assess whether their strategy aligns with their goals (run it online: https://annalangener.shinyapps.io/Justintime/; run it locally: https://github.com/AnnaLangener/Justintime). Researchers can upload their own data or simulate new data if they have not collected any yet. Once the data are uploaded, the app walks the researcher through the four main recommendations, offering descriptive statistics, visualizations, and baseline-model comparisons to help avoid common pitfalls (for a screenshot of the app, see Fig. 11).

Example screenshot of tool to guide researchers on whether their chosen validation strategy is in line with their research objectives (https://annalangener.shinyapps.io/Justintime/).

Discussion

Summary and conclusions

The use of longitudinally collected data has become increasingly prevalent in research, particularly for developing models that predict emotions or behaviors, often from passively gathered data. These predictive models hold significant promise for diverse applications across different fields. However, their practical effectiveness depends on careful evaluation to ensure that they perform reliably in real-world settings.

In this article, we offer guidance to researchers on how to select the most suitable validation strategy based on their specific research objectives and the context of their use case. We also identify three common pitfalls that can arise during the development of predictive models using longitudinal data. Through simulated and empirical examples, we demonstrate that overlooking these pitfalls can lead to overly optimistic performance estimates, which may undermine the utility of the models when applied in practice.

To address these challenges, we propose four key recommendations that support researchers in selecting the right validation strategy and avoiding the identified pitfalls. In addition, we introduce a tool designed to help researchers assess whether their chosen strategy aligns with their research goals. By incorporating these recommendations and using the provided tool, researchers can improve the effectiveness of their predictive models and enhance their real-world applicability.

Limitations and future outlook

Our results indicate that simple baseline models can often achieve strong predictive performance. Therefore, if the goal is to track individuals over time, future research should prioritize developing algorithms that better account for within-persons differences and individual variability, such as multilevel models (Peugh, 2010). In multilevel models, a common practice is to person-mean center predictor variables to better isolate within-persons effects (Wang & Maxwell, 2015). When predictors exhibit high ICCs, centering may be especially beneficial because it can help the model distinguish true within-persons relationships from between-persons variation, ultimately improving within-persons predictive performance. Combining baseline information and predictor variables may also enhance predictive performance. For example, recently developed algorithms, such as mixed-effects random forests (e.g., Capitaine et al., 2021), explicitly account for nested data structures, allowing models to capture both within- and between-persons variation more effectively, which may lead to improved model performance.
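A minimal sketch of person-mean centering for predictive use follows, assuming long-format data and a boolean mask marking training rows. To avoid leaking test information, the person means are computed from training rows only and then applied to all of that person's rows; the resulting within-person deviations and person means could then be supplied to a model as separate features. The helper name is hypothetical, and persons without training rows (as in a subject-wise split) receive missing values because no person mean is available for them.

```python
import numpy as np

def person_mean_center(ids, x, train_mask):
    """Hypothetical helper: split predictors into person means and within-person deviations.

    Person means are estimated from training rows only and applied to all rows of
    that person; persons without training rows get NaN (no mean is available).
    """
    ids = np.asarray(ids)
    x = np.asarray(x, dtype=float)
    train_mask = np.asarray(train_mask, dtype=bool)
    means = np.full_like(x, np.nan)
    for pid in np.unique(ids):
        rows = ids == pid
        train_rows = rows & train_mask
        if train_rows.any():
            means[rows] = x[train_rows].mean(axis=0)
    return x - means, means

ids = [1, 1, 1, 2, 2, 2, 3]
x = [1.0, 2.0, 3.0, 10.0, 11.0, 12.0, 5.0]
train_mask = [True, True, False, True, True, False, False]
within, person_means = person_mean_center(ids, x, train_mask)
```

The function also accepts a two-dimensional predictor matrix (rows = observations), in which case each column is centered separately.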

In this article, we have primarily focused on approaches for predicting either within-persons variation or between-persons variation. However, as mentioned earlier, researchers may be interested in predicting both. In such cases, we recommend reporting both within-persons and between-persons performance. In addition, researchers could consider developing two separate models: one that focuses on within-persons changes and another that aims to capture between-persons differences. These models can then be combined into a joint model.

Note that if a record-wise split is used and the variation in outcomes and predictors is largely explained by between-persons differences, the combined model may yield overly optimistic results, as demonstrated in this article. In such cases, a subject-wise split is necessary to obtain a more reliable assessment of model performance. To help researchers determine whether this applies to their data, they can use our Shiny app to examine how much variance is explained by between-persons differences and compare their results with a baseline model that assumes no relationship between predictors and outcomes, similar to the approach proposed by Chaibub Neto et al. (2019). This can help in selecting the appropriate strategy when both within-persons and between-persons variation are of primary interest.

Passive-sensing studies involve many researcher degrees of freedom; therefore, we strongly encourage researchers to preregister their study plans (ESM template: Kirtley et al., 2021; passive-sensing template: Langener, Siepe, et al., 2024). In this article, we recommended assessing outcome variability after data collection is complete. However, to enhance transparency and study rigor, we also suggest that researchers consider expected outcome variability in advance and specify in their preregistration how they will handle cases in which the expected variability is insufficient. Likewise, researchers should consider in advance what level of baseline performance they expect and what level of performance they would consider acceptable. This approach allows researchers to maintain the benefits of preregistration while ensuring that all methodological decisions are clearly documented and transparently reported.

In this article, we discussed that for predicting within-persons changes, the outcome must vary sufficiently within each individual. Importantly, when using a moving-window split, the outcome also needs to exhibit sufficient variability within each training window; otherwise, a simple baseline model would likely perform better. Therefore, researchers should check for temporal trends in their data. For example, if the outcome is consistently present in the first part of the time series but absent in the second part, there may be overall variability in the outcome but not enough within each split to make meaningful predictions that outperform a simple baseline model. Our Shiny app can help visualize the data, enabling researchers to assess whether this issue is present in their data sets. In addition, conducting a stationarity test can be useful to further check for this problem.
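A simple complement to visual inspection is to count how many moving training windows contain only one outcome value. The sketch below does this for one person's binary series in time order, using a fixed-length sliding window that precedes each test point (an illustrative assumption). It is a descriptive check, not a formal stationarity test; for the latter, statsmodels provides, for example, the augmented Dickey-Fuller test (`statsmodels.tsa.stattools.adfuller`). The toy series has an overall prevalence of .5, yet most of its windows are constant.

```python
import numpy as np

def share_constant_windows(y, window):
    """Share of sliding training windows (length `window`, each followed by a test point)
    in which the outcome takes only one value (hypothetical helper)."""
    y = np.asarray(y)
    windows = [y[t - window:t] for t in range(window, y.size)]
    return float(np.mean([np.unique(w).size == 1 for w in windows]))

# Outcome present for the first 10 observations and absent for the last 10
series = np.array([1] * 10 + [0] * 10)
share = share_constant_windows(series, window=5)
```

Here 11 of the 15 windows are constant, so in most windows a person-specific mean baseline would already predict the next observation perfectly or nearly so.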

Conclusion

Researchers often aim to develop predictive models using a combination of questionnaires and passively collected data. However, to ensure these models are practically applicable, it is crucial for researchers to define a clear use-case scenario and select a validation strategy that aligns with it. In addition, there are several common pitfalls that must be avoided to enhance the practical utility of these models. To improve their effectiveness, we recommend that researchers actively check for these pitfalls, for example, by using our Shiny app and following our proposed guidelines. Ultimately, we believe that addressing these factors is essential for ensuring that predictive models are truly useful in real-world applications.

Supplemental Material

sj-docx-1-amp-10.1177_25152459261418960 – Supplemental material for Just in Time or Just a Guess? Addressing Challenges in Validating Prediction Models Based on Longitudinal Data

Supplemental material, sj-docx-1-amp-10.1177_25152459261418960 for Just in Time or Just a Guess? Addressing Challenges in Validating Prediction Models Based on Longitudinal Data by Anna M. Langener and Nicholas C. Jacobson in Advances in Methods and Practices in Psychological Science

Footnotes

Acknowledgements

We thank all members of the Jacobson Lab for their valuable input and constructive feedback on this article during lab meetings. No ethical approval was required because existing openly available and simulated data were used. See https://github.com/AnnaLangener/Justintime_Paper. In this study, we analyzed existing data rather than collecting new data; therefore, we used the available sample size and measures (note that different subsets of the data were included). We reported data exclusions, specifically, removing participants with fewer than 50 time points. In our simulation study, we varied the sample size based on values commonly used in practice. The study was not preregistered before conducting it.


Transparency

Action Editor: David A. Sbarra

Editor: David A. Sbarra

Author Contributions