Abstract

The introduction of smart virtual assistants (VAs) and corresponding smart devices brought a new degree of freedom to our everyday lives. Voice-controlled and Internet-connected devices allow intuitive device control and monitoring from all around the globe and define a new era of human–machine interaction. Although VAs are especially successful in home automation, they also show great potential as artificial intelligence-driven laboratory assistants. Possible applications include stepwise reading of standard operating procedures (SOPs) and recipes, recitation of chemical substance or reaction parameters to a control, and readout of laboratory devices and sensors. In this study, we present a retrofitting approach to make standard laboratory instruments part of the Internet of Things (IoT). We established a voice user interface (VUI) for controlling those devices and reading out specific device data. A benchmark of the established infrastructure showed a high mean accuracy (95% ± 3.62) of speech command recognition and revealed high potential for future applications of a VUI within the laboratory. Our approach shows the general applicability of commercially available VAs as laboratory assistants and might be of special interest to researchers with physical impairments or low vision. The developed solution enables hands-free device control, which is a crucial advantage within the daily laboratory routine.

Introduction

Not long ago, the concept of a virtual assistant (VA) was something one would have surely imagined being set into the territory of science fiction, where artificial intelligence (AI)-driven entities support comic superheroes saving the day and help TV spaceship crews exploring places “no man has gone before.” In recent years, natural language processing (NLP)—the foundation for voice-based VAs—has become more and more sophisticated and reliable. 1 Lately, many big information technology (IT) companies have started to work on VAs, which use speech recognition technology, AI, and speech synthesis in order to understand and answer questions and execute specific tasks. 2 Today smart personal assistants are commercially available and have found their way into our homes and pockets. When Apple released one of the first widely available VAs, Siri, to the public in late 2011, the masses were thrilled, although privacy and security concerns were raised immediately. 3 Soon other smart assistants were released: Google’s Google Assistant, Microsoft’s Cortana, Amazon’s Alexa, and Samsung’s Bixby, just to name the most popular.4,5 Some VAs allow the extension of their capabilities by the installation of custom-developed applications, so-called “skills” or “actions.” Besides use for entertainment and home automation purposes, there is also a demand to use the current VA technology in a professional environment. With speech being one of the most natural and intuitive means of interaction, machine control or data access using a voice interface offers advantages over conservative device interaction methods that are provided by human interface devices (HIDs). 6 A hands-free machine control while pursuing other tasks, an intuitive interaction that does not require extensive training, and an easy device interaction for operators with visual or physical impairments are just a few benefits of a voice user interface (VUI).

So far, the use of VAs has been evaluated in different professional fields. Allen et al. used an Amazon Echo with a custom skill for a hands-free request of visual support on a tablet within a clinical study, while Miehle et al. presented a concept for VAs as a support in surgical operating rooms.7,8 A smart cabinet with a VUI deploying an Amazon Echo in a custom service environment was developed by Ennis et al. as a support for the elderly in everyday situations. 9

Also, a skill for assistance in chemistry labs was developed recently. “Helix” is an Amazon Echo-based skill that can look up chemical reaction information and chemical-associated data. 10 While the introduction of VAs into natural sciences just recently gained the interest of the scientific community, which is most probably due to the recent emergence of the technology and software, other innovative automation concepts and smart applications found their way into laboratories some time ago. Smart devices are already used to support scientists within their experiments. Current research topics include the use of smartphones, tablets, and smartglasses as inexpensive substitutions for some laboratory devices, for documentation tasks, and for data accession and analysis.11–13 Novel automation efforts comprise the fully automated design, execution, and evaluation of experiments, which could lead to a tremendous efficiency enhancement of lab work in the near future.14,15 The digital transformation of science has just started, but it seems to hold great potential to ease the work in all parts of scientific research.

The aim of this study was to establish a laboratory VUI for controlling laboratory instruments. This was achieved using commercially available hardware, cloud services, and open-source solutions. The setup is modular and allows the integration of older laboratory instruments. It showed high accuracy in the execution of speech commands. Using open communication protocols and data formats allows integration into available digital laboratory infrastructures.

Materials and Methods

Device IoT Retrofitting Approach

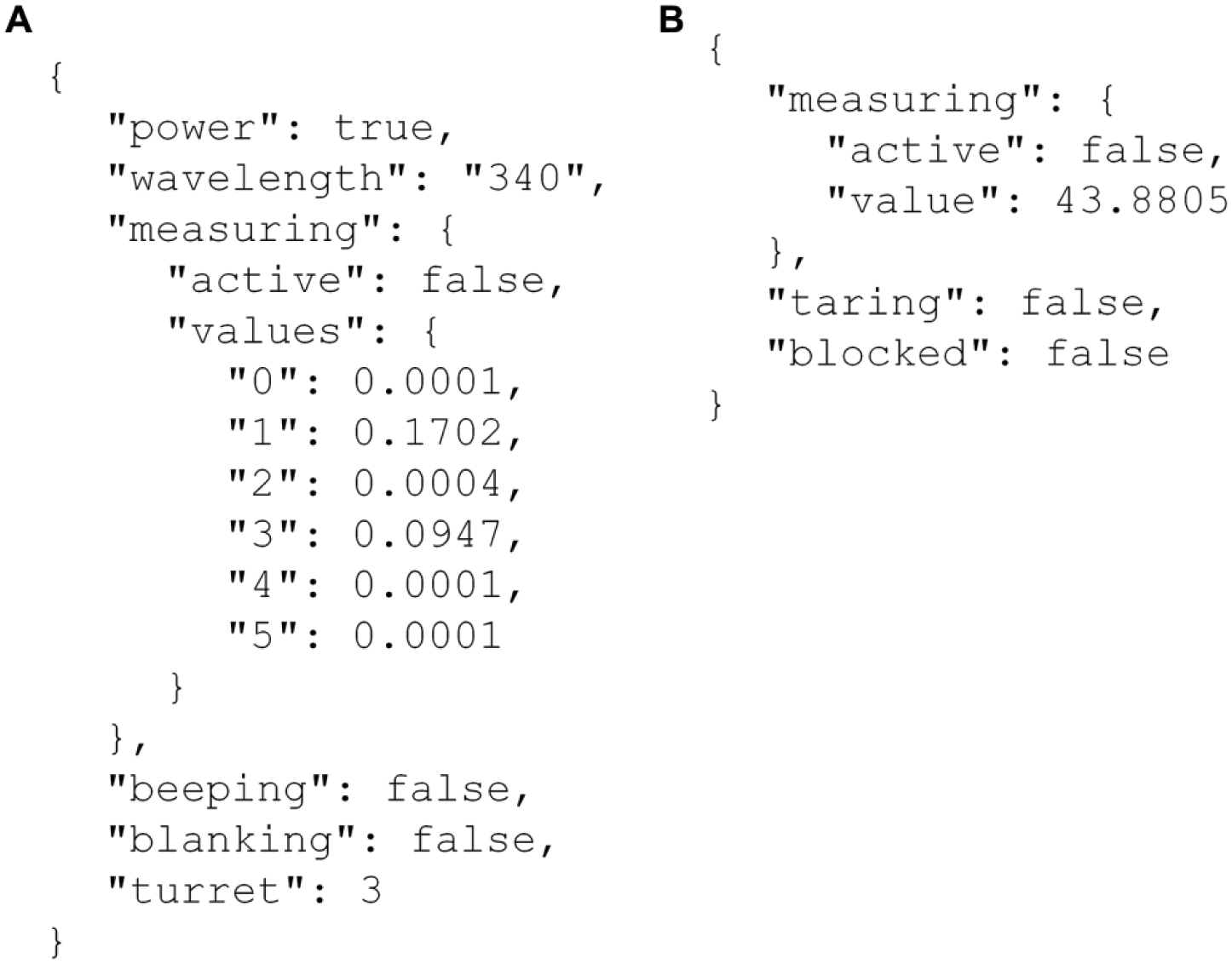

To integrate standard laboratory instruments into a modern Internet of Things (IoT) environment, the visual programming tool Node-RED 0.15.2 16 (IBM, Armonk, NY) deployed on a desktop PC (Intel i5 6500T CPU, 8 GB of RAM) running Windows 7 Professional 64-Bit OS (Microsoft Corporation, Redmond, WA) was used. Both laboratory devices provide an RS232 interface for serial communication, which was connected to the PC using an RS232-USB adapter. To realize an IoT capability, the proprietary port commands for device interaction were synchronized with the cloud-based IoT broker AWS IoT 17 (Amazon Web Services, Seattle, WA) that hosts a digital representation, a so-called device shadow, of the specific laboratory instrument. Port commands of the high-precision balance ED224S (Sartorius AG, Göttingen, Germany) were taken from the manufacturer’s manual. Port commands and drivers for the spectrophotometer GENESYS 10S UV-Vis (Thermo Fisher Scientific Inc., Waltham, MA) were kindly provided by the manufacturer. The serial port/device shadow synchronization was implemented using JavaScript and standard function nodes in Node-RED. The bidirectional device shadow communication was realized using the MQTT (Message Queue Telemetry Transport) messaging protocol. 18 A secured MQTT connection between the device server and the specific device shadow was established using X.509 certificates over the designated secure MQTT TCP (Transmission Control Protocol) port 8883. 19 Node-RED standard nodes were used for MQTT-RS232/RS232-MQTT communication and string manipulation. Integration of a Wi-Fi socket (Edimax SP-2101W, Edimax Computer Company, Santa Clara, CA) for instrument power control was achieved with the custom node “node-red-contrib-smartplug 2.0.0.” The device shadows were designed to perform all basic device interactions. The underlying JavaScript Object Notation (JSON) format is modular, which allows the addition of further device parameters at a later time (see Fig. 2 ).
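The serial port/device shadow synchronization can be illustrated with a minimal sketch in plain JavaScript, written in the style of a Node-RED function node. It is not the flow used in this study: the thing name `photometer`, the port command strings (`GOTO`, `MEAS`), and the keys `wavelength` and `absorbance` are hypothetical placeholders, because the proprietary commands are not reproduced here. Only the `$aws/things/<thingName>/shadow/...` topic layout and the `state.desired`/`state.reported` document structure follow the AWS IoT device shadow convention.

```javascript
// Hypothetical thing name; AWS IoT shadow topics follow this layout.
const THING = 'photometer';
const DELTA_TOPIC = '$aws/things/' + THING + '/shadow/update/delta';
const UPDATE_TOPIC = '$aws/things/' + THING + '/shadow/update';

// Translate a shadow delta message into serial port commands.
// The command strings are placeholders, not the vendor's real protocol.
function deltaToPortCommands(msg) {
  if (msg.topic !== DELTA_TOPIC) return [];
  const delta = (msg.payload && msg.payload.state) || {};
  const commands = [];
  if (typeof delta.wavelength === 'number') {
    commands.push('GOTO ' + delta.wavelength + '\r\n');
  }
  if (delta.measuring && delta.measuring.active === true) {
    commands.push('MEAS\r\n');
  }
  return commands;
}

// Wrap a reading returned by the instrument into a shadow update message.
// Resetting desired.measuring.active to false clears the pending request.
function readingToShadowUpdate(absorbance) {
  return {
    topic: UPDATE_TOPIC,
    payload: {
      state: {
        reported: { absorbance: absorbance, measuring: { active: false } },
        desired: { measuring: { active: false } }
      }
    }
  };
}
```

In a Node-RED flow, the first function would sit between an MQTT input node and a serial output node, and the second between a serial input node and an MQTT output node.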

Setup of a Custom Skill for Laboratory Instrument Interaction

An on-demand computing service 20 (AWS Lambda, Amazon Web Services) was utilized to host a custom skill that interacts with the laboratory device shadows and reacts to processed speech command inquiries. Node.js 6.10 was used as a runtime environment, and custom intents were implemented to realize specific device interactions. The Alexa Skills Kit (Amazon Web Services), which comprises the so-called “utterances” (custom phrases that invoke a specific device action), was enabled as a trigger for the hosted skill. An audio response model was implemented to give feedback to specific phrases.
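The way a hosted skill dispatches intents can be sketched as follows. This is a simplified illustration under stated assumptions, not the skill code of this study: the intent names `MeasureIntent` and `ReadValueIntent` are hypothetical, and the device shadow is replaced by an in-memory stub so that the example runs without cloud access. The request fields (`request.type`, `request.intent.name`) and the response envelope follow the Alexa Skills Kit JSON interface.

```javascript
// In-memory stand-in for the device shadow; a real skill would call
// the AWS IoT shadow service instead (omitted here).
const shadowStub = { desired: {}, reported: { absorbance: 0.123 } };

// Build a plain-text Alexa response in the standard response envelope.
function speak(text) {
  return {
    version: '1.0',
    response: {
      outputSpeech: { type: 'PlainText', text: text },
      shouldEndSession: true
    }
  };
}

// Hypothetical intent names mapped to their handlers.
const intentHandlers = {
  MeasureIntent: function () {
    shadowStub.desired.measuring = { active: true };
    return speak('Starting measurement.');
  },
  ReadValueIntent: function () {
    return speak('The last absorbance is ' + shadowStub.reported.absorbance + '.');
  }
};

// Entry point in the callback style of the Node.js 6.10 Lambda runtime.
// In Lambda, this function would be assigned to exports.handler.
function handler(event, context, callback) {
  const request = event.request || {};
  if (request.type === 'IntentRequest') {
    const intentHandler = intentHandlers[request.intent.name];
    if (intentHandler) return callback(null, intentHandler(request.intent));
  }
  callback(null, speak('Sorry, I did not understand that command.'));
}
```

Keeping the handlers in a lookup table means that a further device interaction only requires one new entry, which matches the modular design described in the text.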

Creation of a Voice Interaction Interface

The VUI was designed using the Alexa Skills Kit. The invocation names for the specific skills were defined as “photometer” and “balance,” respectively. The corresponding endpoints were set to the specific Lambda application resource. The interaction model was designed redundantly to have a selection of different phrases triggering a specific intent and resulting in an instrument action. The underlying speech recognition algorithms are part of Amazon’s Alexa Voice Service 21 (AVS, Amazon Inc., Seattle, WA). An Amazon Echo first-generation device was used as a VA-enabled device. The primary language of the system was set to English.
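A redundant interaction model of this kind might look roughly like the following sketch, written as a JavaScript object in the JSON layout of the Alexa Skills Kit interaction model schema. The intent names, the slot name `wavelength`, and the phrases marked as hypothetical are illustrative assumptions; only the invocation name and the two phrases marked "from the text" are taken from this article.

```javascript
// Sketch of an interaction model for the "photometer" skill.
const interactionModel = {
  interactionModel: {
    languageModel: {
      invocationName: 'photometer',
      intents: [
        {
          name: 'MeasureIntent', // hypothetical intent name
          slots: [],
          samples: [
            'to measure current value', // from the text
            'to take a measurement',    // hypothetical variant
            'to start measuring'        // hypothetical variant
          ]
        },
        {
          name: 'SetWavelengthIntent', // hypothetical intent name
          slots: [{ name: 'wavelength', type: 'AMAZON.NUMBER' }],
          samples: [
            'to set wavelength to {wavelength} nanometers', // from the text
            'to change the wavelength to {wavelength}'      // hypothetical
          ]
        }
      ]
    }
  }
};

// Count how many phrases trigger each intent.
function phrasesPerIntent(model) {
  const counts = {};
  model.interactionModel.languageModel.intents.forEach(function (intent) {
    counts[intent.name] = intent.samples.length;
  });
  return counts;
}
```

Calling `phrasesPerIntent(interactionModel)` gives a quick check that every intent is reachable through more than one phrase.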

Results and Discussion

Virtual Assistant Ecosystem

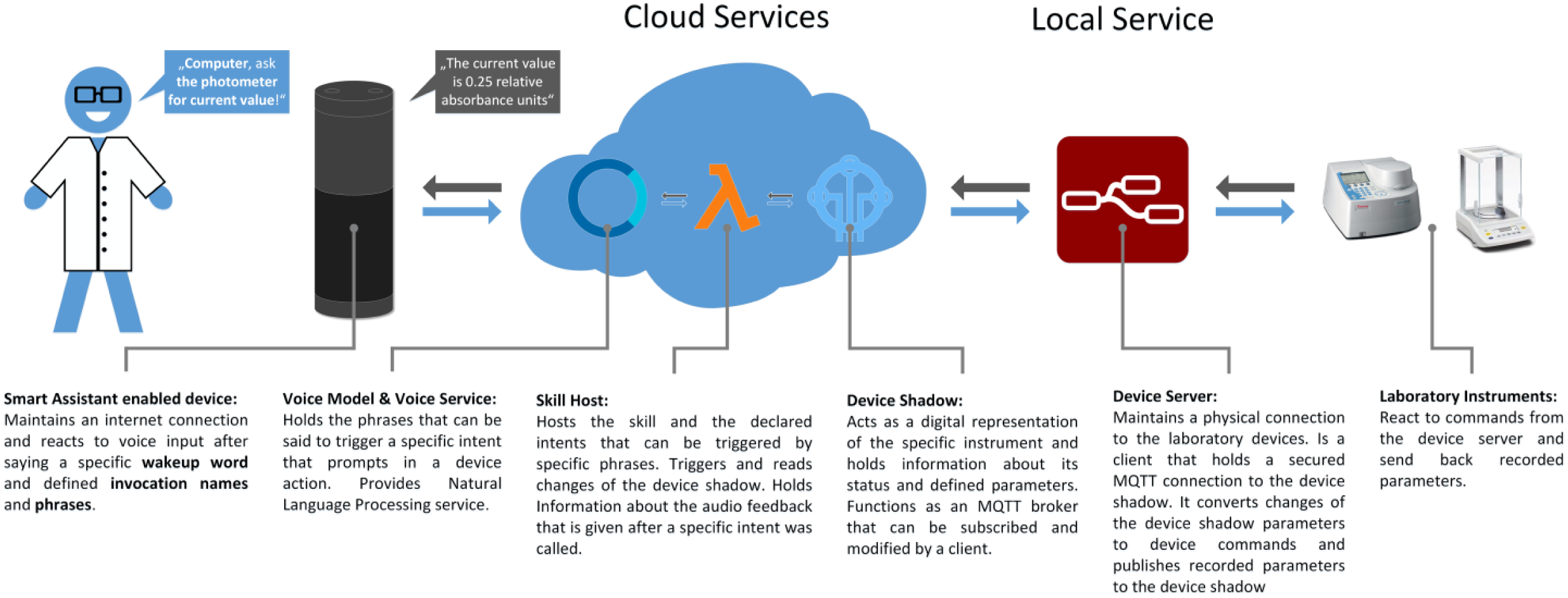

The voice model holds information about the skill’s invocation name, for example, “photometer,” which needs to be said to call a skill. The model also contains defined phrases, for example, “to measure current value” or “to set wavelength to XXX nanometers.” Specific phrases invoke an intent, which, regarding the abovementioned examples, causes a photometric measurement or sets the spectrophotometer to a desired wavelength (see Fig. 1 for a dataflow scheme). This so-called interaction model uses an Internet-accessible skill hosting service as an endpoint and uses commercial NLP algorithms (Amazon) to process speech inquiries and generate speech output. The skill accepts requests based on the interaction model and performs actions upon these, for example, providing audio feedback and/or interacting with the device shadow, which is a digital representation of the current device state. The device shadow is essentially a JSON document of predefined key–value pairs describing the device’s conditions and states; it is hosted by the IoT broker and accessed via MQTT (see Fig. 2 for the JSON layout of the used device shadows).

Figure 1. Established VA ecosystem. The figure shows the dataflow of the system. First, a defined speech command is given by the operator that gets recognized and recorded by a VA-enabled device and is then processed by a cloud voice service. Afterwards, a mapped intent within a cloud-hosted skill is called that modifies the device shadow of an integrated laboratory instrument. Changes of a device shadow get recognized by a local service and result in a device action. Taken measurements or parameter changes get recorded, and the device shadow gets changed by a local service that functions as a client of the device shadow service. This changed information is called by the skill, which provides an audio feedback that is processed by the voice service and given out by the VA-enabled device.

Figure 2. Specified device shadows of the integrated laboratory devices.

This shadow can be accessed and modified by the skill. For example, the skill can set the value of the subkey “active” to “true” within the “measuring” key after the specific intent is called. The shadow can also be accessed by the local service, which can update the device shadow values for a defined key, for example, by writing a measured absorbance value to a defined turret position key after an absorption measurement or by setting “measuring” to “false” after a successful measurement. Limits were defined for specific values; for example, the spectrophotometer wavelength can only be set between 190 and 1100 nm. If the operator requests a wavelength outside this range, the VA-enabled device returns an error message and states the valid range.
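The range check described above can be sketched as a small helper that a skill might call before touching the device shadow. The function name, the key `wavelength`, and the wording of the spoken messages are assumptions for illustration; only the limits of 190 and 1100 nm come from the text.

```javascript
// Limits stated in the text for the spectrophotometer wavelength.
const MIN_NM = 190;
const MAX_NM = 1100;

// Return a shadow update document for a valid wavelength, or an error
// message for the voice output when the value is missing or out of range.
function requestWavelength(slotValue) {
  const nm = Number(slotValue);
  if (!Number.isFinite(nm) || nm < MIN_NM || nm > MAX_NM) {
    return {
      ok: false,
      speech: 'The wavelength must be between ' + MIN_NM + ' and ' +
        MAX_NM + ' nanometers.'
    };
  }
  return {
    ok: true,
    // "wavelength" is a hypothetical shadow key.
    shadowUpdate: { state: { desired: { wavelength: nm } } },
    speech: 'Setting wavelength to ' + nm + ' nanometers.'
  };
}
```

Validating on the skill side means that an invalid request never reaches the instrument, and the operator immediately hears which values are accepted.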

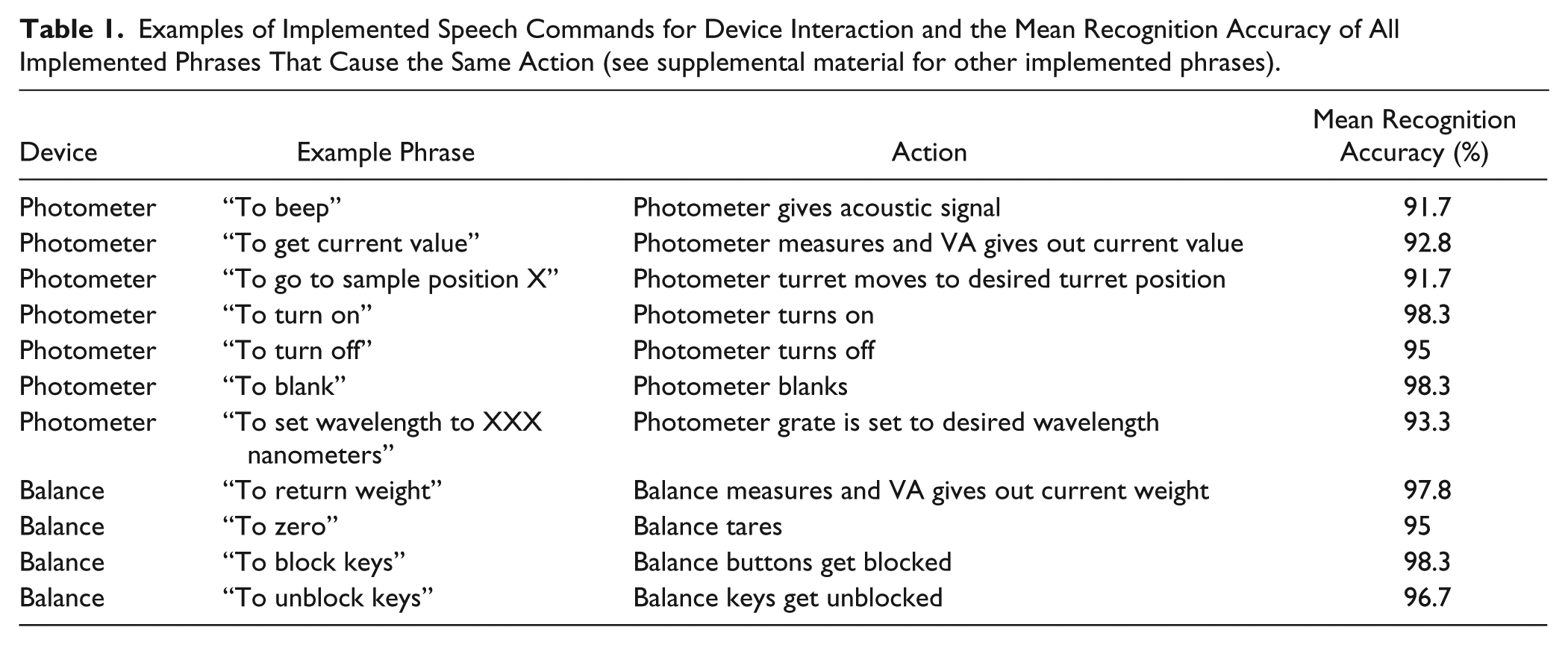

A complete speech command consists of a wake-up word, which is “computer” in our setup; the name of the skill, which is either “the photometer” or “the balance”; and a phrase that is mapped to an action. A total of 18 phrases were defined for seven different interactions with the photometer, whereas 9 phrases for four different balance interactions were implemented (see Table 1 for example phrases).

Table 1. Examples of Implemented Speech Commands for Device Interaction and the Mean Recognition Accuracy of All Implemented Phrases That Cause the Same Action (see supplemental material for other implemented phrases).

Due to its modular design, the developed environment allows for easy integration of additional speech commands by only adding compatible code on the cloud side of the system. The integration of a similar device from another vendor is possible. Only the specific proprietary interaction commands and the communication standard (depending on the installed data exchange interface) within the local service need to be changed. The integration of other laboratory device types is also possible. A reasonable device shadow design needs to be worked out for every new device type, and a new voice model and custom skill need to be implemented. The use of an open communication protocol (MQTT) and an open and compact data format (JSON) allows the integration of accruing data into laboratory information management systems (LIMSs) or enterprise resource planning (ERP) systems. Transport Layer Security (TLS) encryption protects data transmissions from the cloud to the local service environment and vice versa.

Since data security is always a major topic in science, engineering, and research, the established infrastructure might be seen as critical. The speech recognition is done by an external service provider, and all arising data are stored on an external service provider’s servers, which can always be targeted by attacks. From the privacy point of view, the smart-enabled device records voice after a specific wake-up word is said. The device can potentially be compromised, which can grant unauthorized access to the connected device’s capabilities.4,22 The use of the system by unauthorized personnel could potentially be avoided by using voiceprint identification, which can limit the VA features to a specific group of users.23,24 A function like this might be implementable in the not too distant future. 25

Voice User Interface Accuracy

To determine the accuracy of the VUI and the speech command recognition capability of the underlying cloud algorithm, each of the particular phrases for the device control of the spectrophotometer or the balance was said 30 times in a row by a male scientist, who was already familiar with the developed system and the defined phrases. It was then checked whether the given speech commands resulted in the expected device actions. The evaluation was done in a laboratory with active exhaust ventilation that caused ambient noise in the range of 50–55 dB, which is equivalent to the sound level of a normal conversation. The speech commands were given 1.5 m from the VA-enabled device. Possible evaluation outcomes were that the system did not map the phrase to the correct intent, possibly due to an ambiguity error; that it could not connect the said phrase to any intent, due to not understanding the phrase; or that it mapped the specific phrase to the correct intent. The first two scenarios were both graded as “not correctly recognized,” while the last was marked as “correctly recognized.” The command recognition accuracy analysis resulted in an overall mean recognition accuracy of 95% ± 3.62 (see Table 1 for details and supplemental material for a detailed listing of all implemented phrases and video material of the specific device interactions). This accuracy seems to be adequate, taking into account that the operator has a German accent, which might influence the voice recognition success rate. 26 It has to be mentioned that the analyzed setup had already been established for 4 months at the time of the accuracy determination, and that it was trained by male as well as female users, with no perceptible differences in voice recognition accuracy between the sexes. The experiences from this training process suggest that the recognition accuracy might be similar with different users, at least if they have the same accent. The NLP service used is trained continuously with data from speech interactions, which means that the data shown here are just a snapshot, as the service becomes more efficient over time. The high efficiency of the cloud service used demonstrates the capability of commercially available NLP algorithms and highlights that an application in professional environments is already imaginable.
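The accuracy figures can be reproduced from raw counts with a few lines of arithmetic. The following sketch assumes that the accuracy of each phrase is the share of correctly recognized commands out of 30 repetitions, and that the reported spread is a sample standard deviation across phrases; the latter is an assumption, since the estimator is not stated in the text. The example counts are hypothetical and are not the data of this study.

```javascript
const REPETITIONS = 30; // each phrase was said 30 times

// Convert per-phrase counts of correct recognitions into percentages.
function accuracies(correctCounts) {
  return correctCounts.map(function (c) { return (c / REPETITIONS) * 100; });
}

function mean(values) {
  return values.reduce(function (a, b) { return a + b; }, 0) / values.length;
}

// Sample standard deviation (n - 1 denominator); an assumption, see above.
function sampleSd(values) {
  const m = mean(values);
  const sumSq = values.reduce(function (acc, v) {
    return acc + (v - m) * (v - m);
  }, 0);
  return Math.sqrt(sumSq / (values.length - 1));
}

// Hypothetical counts for illustration only, not the study's data.
const exampleCounts = [30, 29, 28, 27];
const acc = accuracies(exampleCounts);
console.log(mean(acc).toFixed(2) + ' % +/- ' + sampleSd(acc).toFixed(2));
```

Because both misrecognized and unmapped phrases are graded as “not correctly recognized,” a single count of correct outcomes per phrase is sufficient input.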

The digital transformation of industry and everyday life is imminent and will pervade most facets of society. This new era of smart devices and services offers tremendous possibilities to support engineers and scientists. As demonstrated in this report, utilizing virtual voice-based assistants in the laboratory might be a powerful tool to improve the efficiency of laboratory processes.

We were able to integrate existing laboratory devices into an IoT environment in an affordable way. By using a combination of commercially available and open-source solutions, a virtual laboratory assistant was implemented. It acted as a voice-based user interface to laboratory instruments. Although all data transfer within this system is encrypted, potential data security and privacy threats might arise due to the external processing and generation of speech data. NLP is a hardware-demanding task but can potentially be established as an in-house solution to bypass potential security concerns. This would require substantial know-how and maintenance expenses, though, whereas the current generation of skills requires Internet-accessible endpoints. Also, a stable and fast Internet connection is needed to access the cloud services used, which might not always be available.

The use of voice commands and the VA voice output adds to the general cacophony in the lab and might be distracting to other laboratory staff. The pairing with Bluetooth headphones can already be accomplished with some Echo hardware versions (e.g., the Echo Dot), which might minimize noise annoyance caused by VA voice output. Amazon recently announced the “Alexa Mobile Accessory Kit” to bring Alexa to on-the-go devices like headsets, headphones, and smartwatches. 27 This offers the chance to add mobility to the VA and decrease the noise level within the laboratory.

VA technology and software are not limited to the presented VA-enabled device class. AI-enabled smartglasses were recently announced that might form a fruitful symbiosis between safety goggles, which are mandatory in the laboratory; a virtual laboratory assistant; and augmented reality elements. 28 This combination could be a great addition to the laboratory workflow (e.g., to interactively display device parameters, standard operating procedures [SOPs], and safety-relevant notifications in the experimenter’s field of view). Since cloud NLP is not as limited as the integrated voice recognition technology that most current smartglasses use for voice handling, even complex voice commands could be processed. Current hardware and battery limitations of smartglasses still need to be overcome before the technology can be integrated into everyday working practice, but smartglasses offer another way to bring AI to the lab. 29

In conclusion, VUIs hold great potential for applications within the laboratory, not only as a means for instrument control, but also as a system to retrieve and save experimental data and protocols. The proof-of-concept setup shown in this study is surely only one of the first steps toward an AI-assisted laboratory. It indicates that present digitalization and automation concepts have the potential to improve the workflows and efficiency in the laboratory.

Current efforts of laboratory instrument manufacturers are focused on the development of standardized formats and protocols for laboratory device communication. This might pave the way to a plug-and-play IoT capability of future laboratory instruments. 30 The shown concept seems to be a useful addition to the laboratory. In order to find a place for a VA in the lab environment of the future, however, security solutions that reflect common privacy and confidentiality rules need to be developed.

Our ongoing research is focused on implementing further instrument types and the integration of the virtual laboratory assistant into a functional laboratory information and management system. Also, the implementation of SOPs supported by a VA is planned.

Supplemental Material

Supplemental material, Supplemental_Material_Austerjostetal.pdf for Introducing a Virtual Assistant to the Lab: A Voice User Interface for the Intuitive Control of Laboratory Instruments by Jonas Austerjost, Marc Porr, Noah Riedel, Dominik Geier, Thomas Becker, Thomas Scheper, Daniel Marquard, Patrick Lindner and Sascha Beutel in SLAS Technology

Footnotes

Supplemental material is available online with this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was financially supported by the Bavarian Ministry of Economic Affairs and Media, Energy and Technology within the Information and Communications Technology program (grant number IUK-1504-0006//IUK470/001).
