Abstract

Study Design:

Cross-sectional and longitudinal validation study.

Objective:

Development and validation of a short, reliable, and valid questionnaire for the assessment of low back pain–related disability.

Methods:

The iDI was created in a stepwise procedure: (1) its development was based on the literature and theoretical consideration; (2) outcome data were collected and evaluated in a pilot study; (3) final validations were performed based on an international multicenter spine surgery outcome study including 514 patients; (4) the iDI was programmed for a tablet computer (iPad) and tested for its clinical practicability.

Results:

The final version of the iDI comprises 8 simple questions related to different aspects of disability with a 5-point Likert-type answer scale. The iDI compared very well to the Oswestry Disability Index in terms of reliability and validity. The iDI was demonstrated to be suitable for data assessment on a tablet computer (iPad).

Conclusions:

The iDI is a short, valid, and practicable tool that facilitates routine quality assessment in terms of low back pain–related disability.

Introduction

Routine quality measurement of spinal treatments and their documentation must become part of our daily clinical practice if we want to improve the care for our patients. 1 Additionally, health care stakeholders will increasingly scrutinize us to justify the amount of money spent in an area of medicine that predominantly focuses on improving health-related quality of life rather than long-term survival. 2 Although the need for quality assessment in spinal surgery has been recognized for many years, we are far from routine outcome assessment in our daily care. Widespread use of quality management in daily clinical practice depends not only on data validity and reliability but also on the simplicity of data collection and handling. In particular, the basic principle of “less is more” applies in this context. 3 Recent studies argued that the length of a questionnaire influences both patients’ response rates and data quality. 4,5 A small, comprehensive outcome data set for each patient is of more value than a set of sophisticated data with many missing values. The validity and usability of very short scales, for example, neurological stroke scales, have already been demonstrated in other clinical disciplines such as emergency neurology, where every second counts. Collecting missing data is very cumbersome and often not possible in retrospect.

The purpose of this project was to generate a valid, reliable, and simple outcome tool for daily clinical application that uses modern information technology and a minimum of questions. A further goal was to make data assessment as simple as possible. Because of the wealth of data collected in our multinational study in 3 large German-speaking spine centers, this article is limited to the results on the self-reported disability domain of this new outcome tool. Specifically, we asked whether a score for self-reported disability can be generated that reduces completion time and improves data consistency without compromising reliability and validity when compared with the most widely used instrument, the Oswestry Disability Index (ODI). 6,7 This article therefore describes the development and evaluation of an outcome instrument that we named the iDI (Internet-suitable disability index).

Methods

Design

The cross-sectional and longitudinal validation comprised a 3-step process covering a total of 514 patients. First, iDI questions were created according to recommendations from international guidelines 8 and addressed aspects of back-related disability similar to those used by the ODI, which was considered the gold standard. After development and refinement, 3 data collection waves were conducted to measure and validate the psychometric properties.

Measures

The iDI comprised 8 questions covering walking, sitting, standing, lifting, self-care, sleeping, social life, and traveling. The response format was a 5-point Likert-type scale with the answer options “not at all,” “somewhat,” “moderately,” “strongly,” and “extreme.” A 5-point Likert-type scale was chosen because of its usability and its validity. 9 For each of the 8 items, a maximum of 4 points was attainable (no disability = 0, maximum item-specific disability = 4). The total sum was divided by the total possible sum (ie, 32) and multiplied by 100 to yield a percentage score. In our multicenter study, ODI, 7,10 Roland & Morris Disability Questionnaire (RMDQ), 11,12 and EuroQol-5 Dimensions Index (EQ-5D) 13,14 were used as reference scales. All instruments were validated in the German language.
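As a worked illustration of this scoring rule, the percentage score can be written as follows. The symbols $x_i$ (score of item $i$) and $S_{\mathrm{iDI}}$ are notation introduced here for illustration only, and the example assumes that the response options are coded 0 to 4 in the order listed above.

```latex
S_{\mathrm{iDI}} = \frac{\sum_{i=1}^{8} x_i}{8 \times 4} \times 100,
\qquad x_i \in \{0, 1, 2, 3, 4\}

% Example: a patient answering "moderately" (x_i = 2) on all 8 items
S_{\mathrm{iDI}} = \frac{16}{32} \times 100 = 50
```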

Data Collection and Participants

Pilot Study

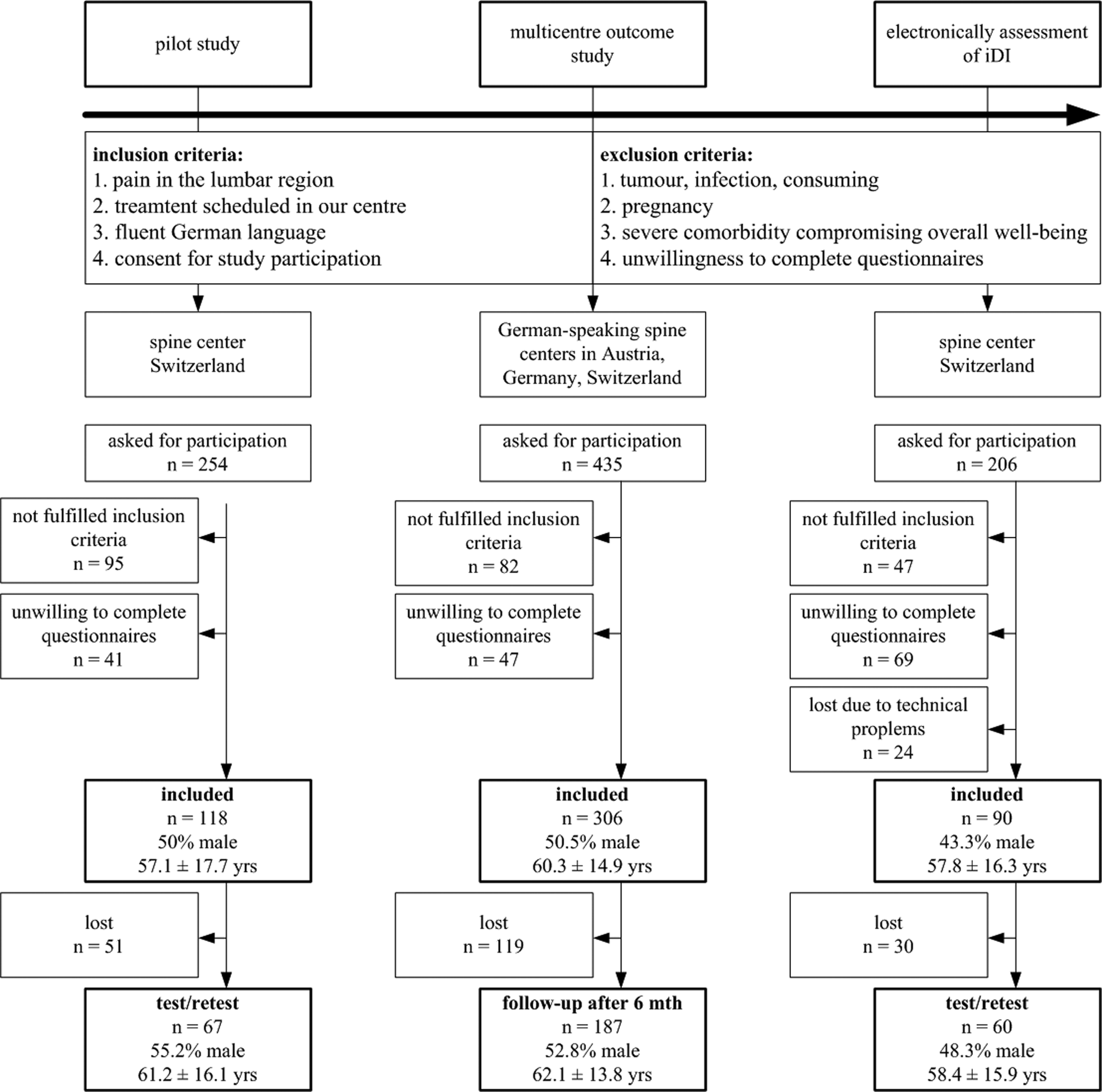

Participants included in the pilot project (n = 118) suffered from low back pain and were undergoing nonoperative or operative treatment in a spine center in Switzerland (Figure 1). Exclusion criteria were pregnancy, tumor, infection, severe comorbidity compromising overall well-being (marked by the treating physician as an activity-limiting comorbidity in the Sangha-Index 15 ), and unwillingness to complete questionnaires. All participants completed both questionnaires on the spot (paper-and-pencil version): participants randomly received either the iDI or the ODI first. After returning the completed questionnaire, they were given the other questionnaire. Test-retest reliability was assessed by asking participants to fill out a second copy of the iDI or ODI, respectively, within 24 hours 16 and to return the questionnaire the following day in a preaddressed envelope.

Figure 1. Flow of participants.

Multicenter Outcome Study

All participants (n = 306) in this multinational study suffered from degenerative lumbar spinal disorders and attended 1 of 3 spine centers in Germany, Austria, and Switzerland (Figure 1). Inclusion and exclusion criteria were identical to the pilot study. After giving informed consent, all participants responded to an entire questionnaire set before treatment (baseline) and after 6 months (follow-up). Each participating center sent the completed questionnaire sets to our data assessment center. Six months later, a study coworker contacted all participants by telephone and asked if they still agreed to participate in the follow-up assessment as initially consented. If they agreed, the patients received the questionnaire by mail (paper-and-pencil version) with a preaddressed answer envelope. If patients did not respond within 2 to 4 weeks, they were contacted and reminded again by phone. Thereafter, no further attempt was made. The study protocol was approved by the institutional review boards of the participating hospitals.

Electronic Assessment of iDI

The electronic version was custom programmed as a web-based application for use on an iPad mini running iOS. On the starting page, the hospital staff filled in patient identification number, sex, and date of birth. The next page contained general information on the use of the program. At this stage, the iPad was handed to the patient, who could then advance to the next page. All following pages displayed only one iDI question per page with the 5 response options. The next page appeared only after the question had been answered, which ensured a complete data set. A forward/backward option allowed patients to correct an answer if needed. After the final question had been answered, the last page appeared with thanks for study participation. The data were automatically transferred to a server. A study coworker was available to assist patients if needed. The number of patients needing help as well as intervention reasons and questionnaire completion time were recorded. After the electronic data collection, the ODI paper-and-pencil version was handed to the patient. Again, the completion time was recorded. For the test-retest assessment, the study participants received an email within 24 hours with a link to the iDI to be completed for retest measures.

Statistical Analysis

To gain an overview of the test qualities of the iDI, statistical analyses were conducted on all 3 data sets. All analyses were performed using SPSS version 22.0 (IBM SPSS Inc, Chicago, IL).

Data Quality

The paper-and-pencil versions of the iDI and ODI were not considered in the analyses if more than 2 responses were missing. If 1 or 2 items were missing, the denominator was adapted to the total possible sum of the answered items. Floor and ceiling effects were acknowledged if more than 15% of all patients reported the lowest or highest possible score. 17
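The data quality rules above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the routine used in the study (the analyses were run in SPSS): the function names `idi_percent` and `end_effect_present` are hypothetical, unanswered items are represented by `None`, and items are assumed to be coded 0 to 4.

```python
from typing import Optional, Sequence

N_ITEMS = 8            # the iDI has 8 items
MAX_ITEM_POINTS = 4    # each item is scored 0 (no disability) to 4
MAX_MISSING = 2        # more than 2 missing answers: questionnaire not analyzed


def idi_percent(responses: Sequence[Optional[int]]) -> Optional[float]:
    """Return the iDI percentage score, or None if the questionnaire is excluded.

    `responses` holds the 8 item scores; None marks an unanswered item
    (an assumed encoding, chosen for this sketch).
    """
    if len(responses) != N_ITEMS:
        raise ValueError(f"expected {N_ITEMS} responses")
    answered = [r for r in responses if r is not None]
    if any(r < 0 or r > MAX_ITEM_POINTS for r in answered):
        raise ValueError("item scores must lie between 0 and 4")
    if N_ITEMS - len(answered) > MAX_MISSING:
        return None
    # With 1 or 2 missing items the denominator shrinks to the total
    # possible sum of the answered items (32 when nothing is missing).
    return 100.0 * sum(answered) / (MAX_ITEM_POINTS * len(answered))


def end_effect_present(scores: Sequence[float], extreme: float,
                       threshold: float = 0.15) -> bool:
    """True if more than `threshold` of patients report the extreme score.

    Pass the lowest possible score to check for a floor effect and the
    highest possible score to check for a ceiling effect.
    """
    if not scores:
        raise ValueError("no scores given")
    share = sum(1 for s in scores if s == extreme) / len(scores)
    return share > threshold
```

For example, a questionnaire with 2 unanswered items and a sum of 12 on the remaining 6 items is scored as 12/24 × 100 = 50, whereas a questionnaire with 3 unanswered items is not scored.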

Reliability Measures

Internal consistency was assessed using Cronbach’s α. 17 A value between 0.8 and 0.9 was considered acceptable, and a value of more than 0.9 was considered high. 18 A test-retest analysis was used to determine the extent to which the same results were obtained on repeated measures when no changes were expected. The differences in mean values for repeated trials were checked with the intraclass correlation coefficient (ICCagreement) and the standard error of the mean (SEM). 17 If there is perfect agreement between the 2 measures, the ICC is 1 and the SEM is 0. The SEM was also used to indicate the minimum detectable change, MDC95%. At P < .05, it is calculated with the formula 1.96 × √2 × SEM and represents the smallest score change that can be interpreted as a real change. Furthermore, expecting a simple structure with one underlying factor, we conducted an exploratory factor analysis with an orthogonal rotation method in the form of Varimax. 19
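Two of the reliability measures described above can be illustrated with a short Python sketch. It is not the SPSS procedure used in the study. `cronbach_alpha` applies the standard textbook formula for Cronbach’s α, `mdc95` applies the formula 1.96 × √2 × SEM stated in the text, and both function names are hypothetical. How the SEM itself was estimated is not detailed here, so the sketch takes it as an input.

```python
import math
from statistics import variance
from typing import Sequence


def cronbach_alpha(item_scores: Sequence[Sequence[float]]) -> float:
    """Cronbach's alpha; rows are patients, columns are questionnaire items."""
    n_patients = len(item_scores)
    n_items = len(item_scores[0])
    if n_patients < 2 or n_items < 2:
        raise ValueError("need at least 2 patients and 2 items")
    # Sample variance of each item across patients
    item_vars = [variance([row[j] for row in item_scores])
                 for j in range(n_items)]
    # Sample variance of the patients' sum scores
    total_var = variance([sum(row) for row in item_scores])
    if total_var == 0:
        raise ValueError("sum scores have zero variance")
    return (n_items / (n_items - 1)) * (1 - sum(item_vars) / total_var)


def mdc95(sem: float) -> float:
    """Minimum detectable change at the 95% level: 1.96 * sqrt(2) * SEM."""
    return 1.96 * math.sqrt(2) * sem
```

With an SEM of 0, that is, perfect agreement, `mdc95` returns 0, so any score change would count as a real change.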

Validation Measures

Convergent and divergent validity were assessed by testing the correlations between the ODI, the iDI, and matching reference scales. 17 For convergent validity, that is, the comparison with a reference scale measuring an analogous construct, ODI and iDI were compared to the RMDQ. 11,12 For divergent validity, that is, the comparison with a scale measuring the opposite construct, ODI and iDI were compared to the EQ-5D. 13,14
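The correlation analysis can be sketched as follows. The text above does not name the coefficient; the footnote to Table 5 mentions Spearman’s rho for some entries, so a rank correlation is used here as an assumption. The helper names are hypothetical, and the strength labels follow the cut-offs given in the Table 5 footnote (<0.3 low, 0.3 to 0.6 moderate, >0.6 high).

```python
from statistics import mean
from typing import List, Sequence


def average_ranks(values: Sequence[float]) -> List[float]:
    """Rank values from 1 to n; tied values share the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared = (i + j) / 2 + 1  # mean of the 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = shared
        i = j + 1
    return ranks


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson product-moment correlation of two equally long sequences."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sum((a - mx) ** 2 for a in x) ** 0.5
    sy = sum((b - my) ** 2 for b in y) ** 0.5
    if sx == 0 or sy == 0:
        raise ValueError("correlation is undefined for constant data")
    return cov / (sx * sy)


def spearman_rho(x: Sequence[float], y: Sequence[float]) -> float:
    """Spearman's rho: the Pearson correlation of the average ranks."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equally long sequences with at least 2 values")
    return pearson(average_ranks(x), average_ranks(y))


def strength(rho: float) -> str:
    """Label a coefficient using the cut-offs from the Table 5 footnote."""
    r = abs(rho)
    if r < 0.3:
        return "low"
    if r <= 0.6:
        return "moderate"
    return "high"
```

Under the hypotheses tested here, a disability score would be expected to yield a positive coefficient against the RMDQ and a negative coefficient against the EQ-5D.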

Usability

For the usability of the electronic version, completion time (in seconds) was recorded and iPad handling problems were rated on a 5-point Likert-type scale (ie, 1 = without problems, 2 = without problems after short instruction, 3 = with little support, 4 = only with support, 5 = not at all). The completion times were compared using a paired t test, ICCagreement, and SEM. 17
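The paired comparison of completion times can be sketched as below. This is an illustration of the standard paired t statistic only, not the SPSS output reported in this study; the function name `paired_t` is hypothetical, and the P value would still have to be looked up in a t distribution with the returned degrees of freedom.

```python
import math
from statistics import mean, stdev
from typing import Sequence, Tuple


def paired_t(first: Sequence[float], second: Sequence[float]) -> Tuple[float, int]:
    """Return the paired t statistic and its degrees of freedom.

    `first` and `second` hold the two completion times (in seconds) of the
    same patients, in the same order.
    """
    if len(first) != len(second) or len(first) < 2:
        raise ValueError("need two equally long samples with at least 2 pairs")
    diffs = [a - b for a, b in zip(first, second)]
    sd = stdev(diffs)  # sample standard deviation of the differences
    if sd == 0:
        raise ValueError("all differences are identical; t is undefined")
    standard_error = sd / math.sqrt(len(diffs))
    return mean(diffs) / standard_error, len(diffs) - 1
```

A large positive t value for ODI times minus iDI times indicates that the ODI took consistently longer to complete in the same patients.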

Results

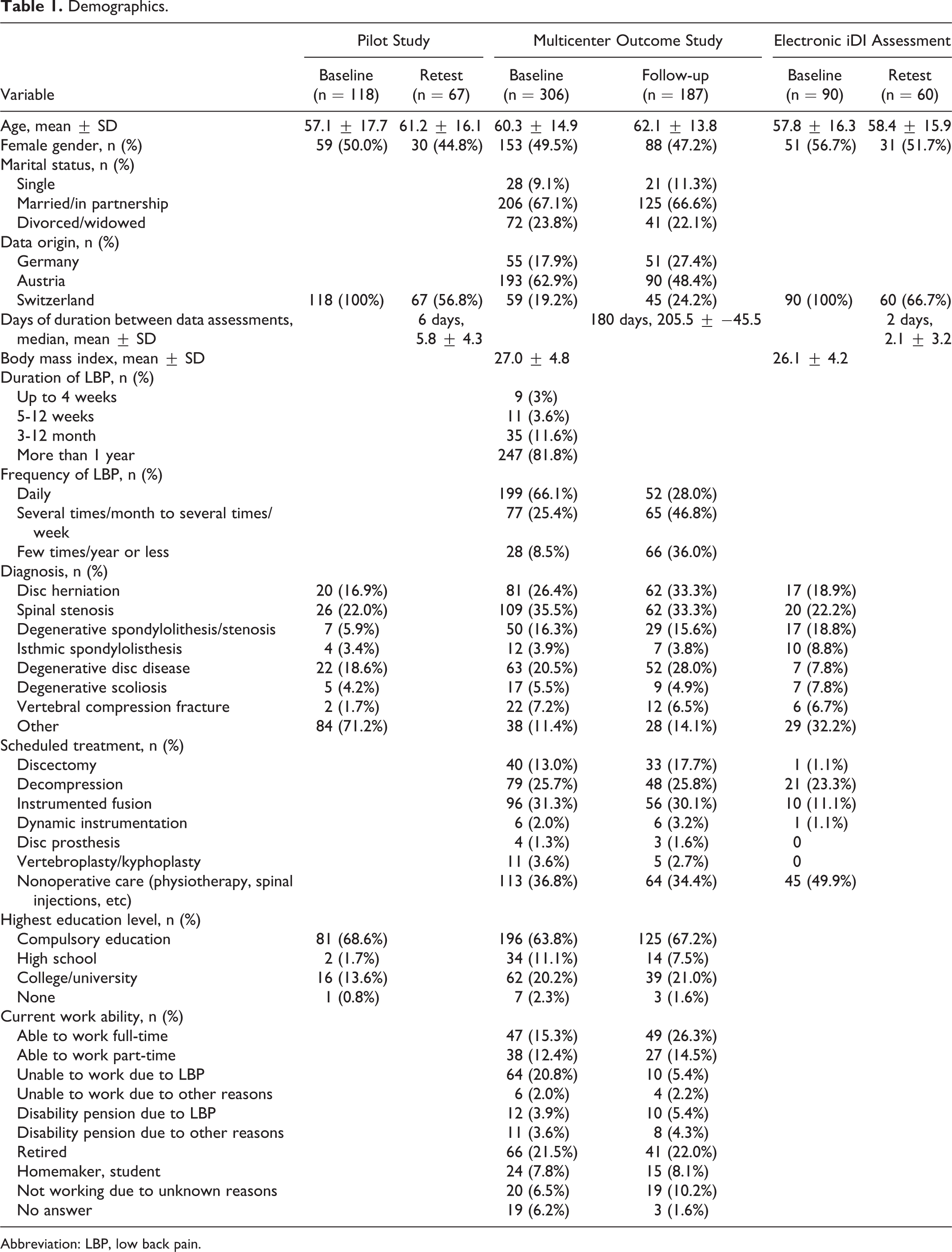

Overall, 514 patients were included in the 3 studies. One hundred eighteen participated in the pilot study (test-retest 56.8%), 306 in the multicenter outcome study (follow-up 61.1%), and 90 in the electronic iDI assessment (test-retest 66.7%; Figure 1). Study sample characteristics are shown in Table 1.

Table 1. Demographics.

Abbreviation: LBP, low back pain.

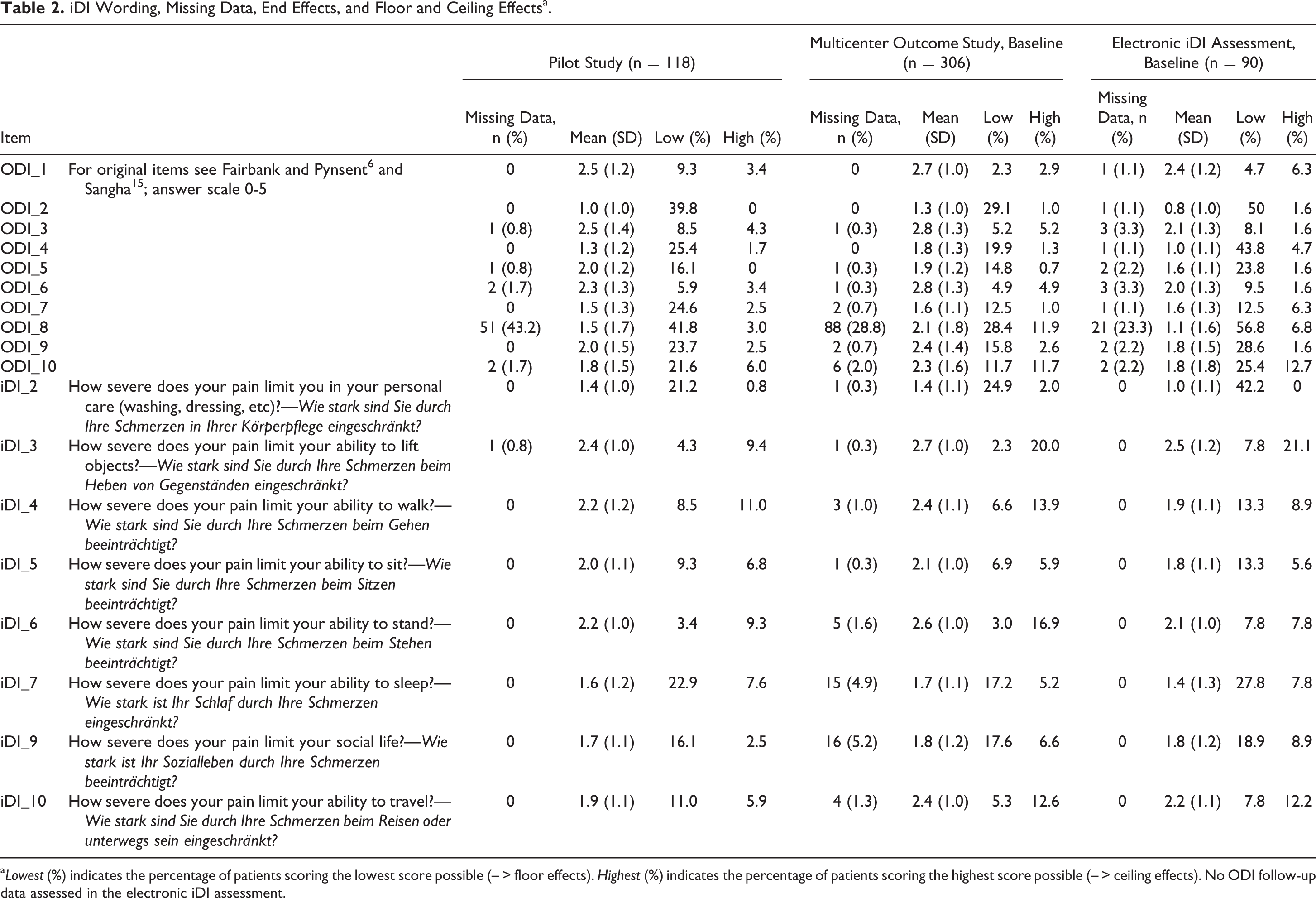

Missing Data and Normality of Score Distribution

Missing data occurred in all 3 studies (Table 2) and most frequently affected ODI item 8 regarding sex life (31.8%). All other items were missing at random. Participants lost to test-retest or follow-up did not differ significantly from the participants included in the longitudinal analysis. Overall, the ODI scored lower and showed more and higher floor effects than the iDI.

Table 2. iDI Wording, Missing Data, End Effects, and Floor and Ceiling Effects.a

a Lowest (%) indicates the percentage of patients scoring the lowest score possible (→ floor effects). Highest (%) indicates the percentage of patients scoring the highest score possible (→ ceiling effects). No ODI follow-up data were assessed in the electronic iDI assessment.

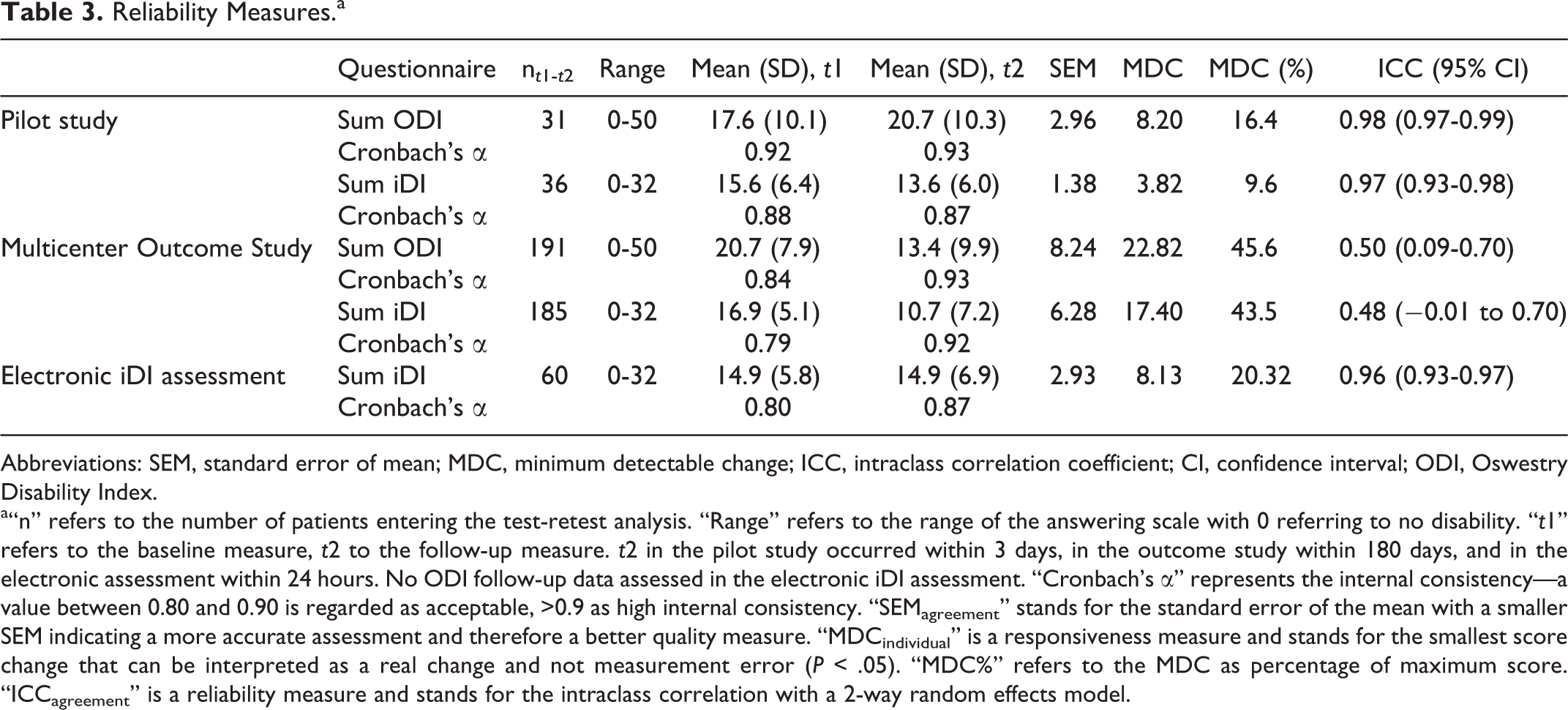

Item Quality and Reliability Measures

In total, 49 of 306 data sets had to be excluded from the multicenter outcome study because more than 2 item answers were missing. The item means were comparable for all 3 data collections (Table 3). With regard to item quality, most item difficulties lay in the medium range (r diff = 0.2-0.8), indicating that most patients answered the items correctly, and the discriminatory power of all items was above r = 0.5.

Table 3. Reliability Measures.a

Abbreviations: SEM, standard error of mean; MDC, minimum detectable change; ICC, intraclass correlation coefficient; CI, confidence interval; ODI, Oswestry Disability Index.

a “n” refers to the number of patients entering the test-retest analysis. “Range” refers to the range of the answering scale, with 0 referring to no disability. “t1” refers to the baseline measure, “t2” to the follow-up measure. t2 in the pilot study occurred within 3 days, in the outcome study within 180 days, and in the electronic assessment within 24 hours. No ODI follow-up data were assessed in the electronic iDI assessment. “Cronbach’s α” represents the internal consistency: a value between 0.80 and 0.90 is regarded as acceptable, >0.9 as high internal consistency. “SEMagreement” stands for the standard error of the mean, with a smaller SEM indicating a more accurate assessment and therefore a better quality measure. “MDCindividual” is a responsiveness measure and stands for the smallest score change that can be interpreted as a real change and not measurement error (P < .05). “MDC%” refers to the MDC as a percentage of the maximum score. “ICCagreement” is a reliability measure and stands for the intraclass correlation with a 2-way random effects model.

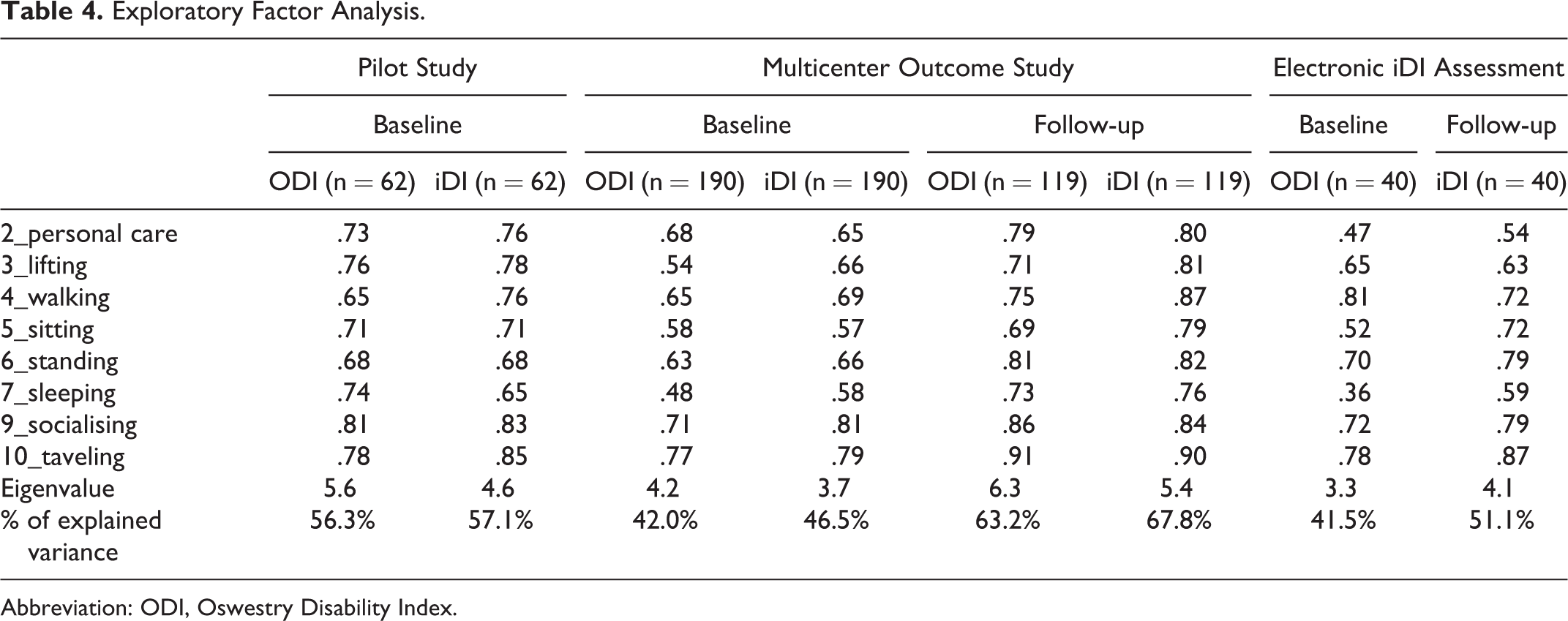

Furthermore, Cronbach’s α revealed that the strength of the relationship between the items within the test instrument was comparable for both questionnaires (Table 3). The extent to which the same results are obtained on repeated measures when no changes are expected was analyzed with a test-retest analysis: ICCagreement demonstrated comparable values for both questionnaires, while the SEM was slightly higher for the ODI. MDC95% exceeded 20% in the multicenter outcome study for both questionnaires. With regard to the exploratory factor analysis, a simple structure was detected as expected in both questionnaires (ODI and iDI). Explained variance was slightly higher for the iDI than for the ODI, whereas item loadings (Table 4) were comparable for both questionnaires. The simple structure was identified by 2 methods: first by scree plot and second by eigenvalues greater than 2. Considering that a factor loading is a correlation coefficient, a factor loading above 0.6 (equivalent to a correlation coefficient of 0.6) is commonly accepted. 18

Table 4. Exploratory Factor Analysis.

Abbreviation: ODI, Oswestry Disability Index.

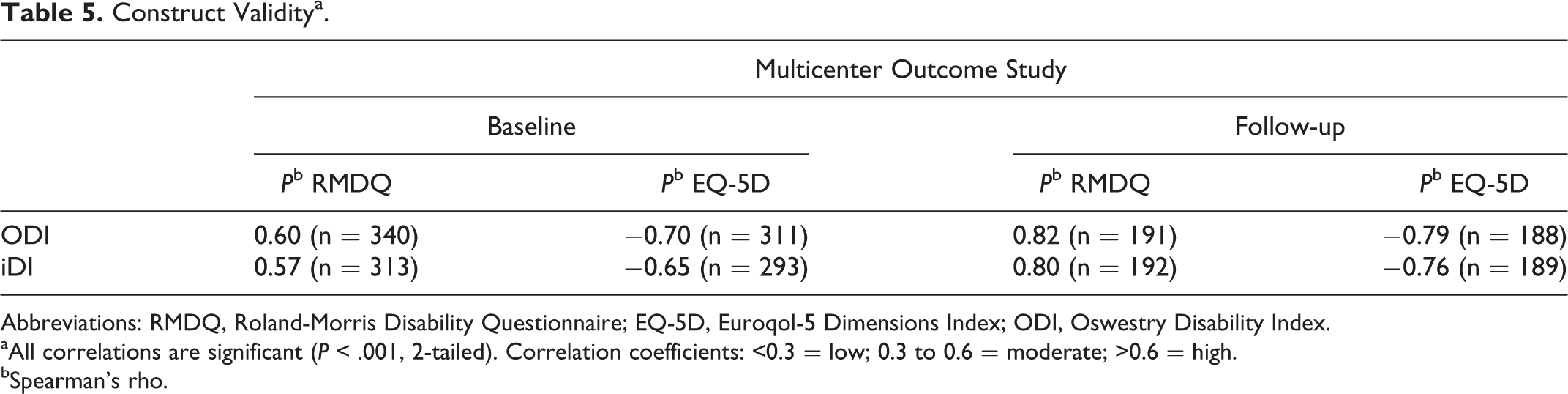

Construct Validity

The hypothesis regarding convergent validity (ie, both questionnaires are expected to correlate moderately to highly in a positive manner to the RMDQ) was confirmed (Table 5). Similarly, the hypothesis regarding divergent validity (ie, both questionnaires are expected to correlate moderately in a negative manner to the EQ-5D) was confirmed.

Table 5. Construct Validity.a

Abbreviations: RMDQ, Roland-Morris Disability Questionnaire; EQ-5D, Euroqol-5 Dimensions Index; ODI, Oswestry Disability Index.

a All correlations are significant (P < .001, 2-tailed). Correlation coefficients: <0.3 = low; 0.3 to 0.6 = moderate; >0.6 = high.

b Spearman’s rho.

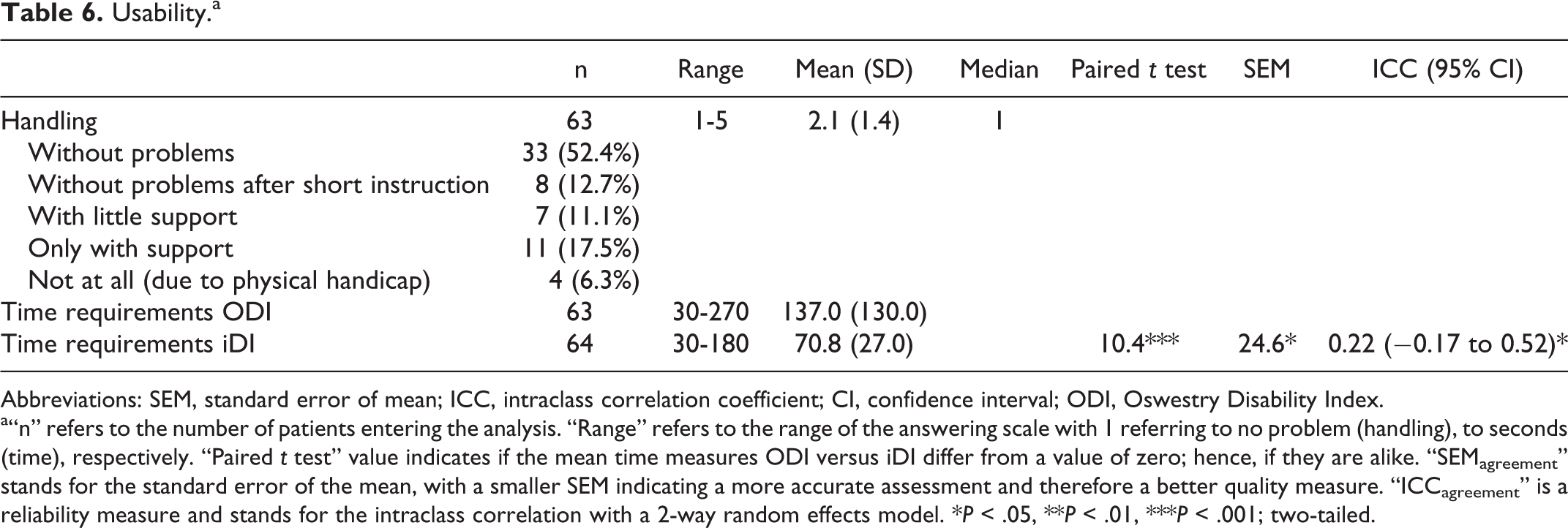

Usability

With regard to completion time in the electronic assessment, a sample of n = 63 completed the ODI in a mean of 137.0 seconds (SD ±53.3) and the iDI in a mean of 70.8 seconds (SD ±27.0; t = 10.4, P < .001). The SEM was high (SEM = 24.6), whereas a low value would be desirable, and the ICC demonstrated low agreement (ICCagreement = 0.22; 95% confidence interval = −0.17 to 0.52). The mean gain in time was 66.3 seconds (Table 6). Regarding problems handling the electronic device, the mean value of 2.1 (SD ±1.4) and the median value of 1 indicated unproblematic handling. Only 3 of 63 patients could not handle the electronic device because of peripheral neuropathies and needed full assistance from our staff. Sixteen elderly patients required some help in the beginning but managed on their own afterwards.

Table 6. Usability.a

Abbreviations: SEM, standard error of mean; ICC, intraclass correlation coefficient; CI, confidence interval; ODI, Oswestry Disability Index.

a “n” refers to the number of patients entering the analysis. “Range” refers to the range of the answering scale, with 1 referring to no problem (handling), or to seconds (time), respectively. The “Paired t test” value indicates whether the mean difference in completion time between ODI and iDI differs from zero, that is, whether the two times are alike. “SEMagreement” stands for the standard error of the mean, with a smaller SEM indicating a more accurate assessment and therefore a better quality measure. “ICCagreement” is a reliability measure and stands for the intraclass correlation with a 2-way random effects model. *P < .05, **P < .01, ***P < .001; two-tailed.

Discussion

The prerequisite common to all well-functioning industries is competition. In healthy competition, product and service quality rise steadily, innovation leads to new and better approaches, uncompetitive providers eventually leave the market, and costs are driven down while quality improves. 20 In medicine, the strategy for improving health care must likewise be linked to a value-based approach, that is, health outcome achieved for the dollars spent. 21-23 However, outcome assessment in medicine, particularly in spinal medicine, is rather complex. The vast majority of spinal disorders are not life-threatening conditions but are associated with a compromised quality of life. Therefore, a simple (dichotomous) outcome endpoint, for example, survived/not survived or prosthesis in situ/removed, is not applicable. Outcome assessment with regard to an improvement of health-related quality of life in spinal medicine predominantly relies on 5 pillars, that is, reduction of pain, self-reported disability, and pain medication as well as an improvement of quality of life and work capacity. 24,25 Reduction of pain and self-reported disability are the most important domains related to a good outcome. 24 The assessment of pain by a visual analogue or numeric scale (10 points) is widely recommended and has meanwhile become standard in most institutions. 26 In terms of the assessment of self-reported disability related to lumbar disorders, most centers use the ODI, 6 RMDQ, 11 or the North American Spine Society Score (NASS 27 ). The ODI is still the most frequently used instrument. Its main advantages are its simplicity and its availability in multiple languages. However, awkward phrasing as well as multiconceptual response categories are its main disadvantages. 28 With regard to simplicity, the RMDQ is comparable to the ODI. Due to its dichotomous response categories, however, only little information can be gathered per item. 28 The NASS is useful in measuring back pain, disability, and neurogenic symptoms. Its limitations include a rather narrow validation range. 28 When used in the context of routine quality management rather than scientific assessments of competing treatment methods, a questionnaire must be short, simple yet valid and reliable, and self-administered, and it must allow for easy electronic data sampling and management. Regarding these characteristics, the cited questionnaires leave room for improvement. In our study, we intended to develop an outcome tool that facilitates disability assessment and allows for easy use on any touchscreen computer.

In 2011, the World Health Organization characterized disability as problems in human functioning that can be located in 3 areas: impairments, activity limitations, and participation restriction. 29 Hence, problems can be located in body function or structure, can cause difficulties in executing a task, or can hinder a person’s involvement in life situations. Measurement of disability is clearly different from measurement of pain, and these 2 constructs should not be confounded. The items walking, sitting, standing, and lifting are part of most back pain disability assessment tools. 28,30 The ODI, for example, additionally encompasses self-care, sleeping, social life, traveling, and sex life, which cover activity limitations and participation restriction. However, the last item frequently leads to nonresponses. Many study participants do not answer this question because it intrudes on their privacy and/or because of cultural and religious reasons. In our study, an average of 31.8% of participants did not answer this specific question across all data assessment waves. From a methodological point of view, missing data that are not random but reflect disagreement or reluctance to answer should be avoided because they bias the results. 31 Rather than reinventing the wheel, we opted to use aspects of disability similar to those of the ODI but omitted pain and sex life for the aforementioned reasons.

With regard to reliability, validity, and usability measures, the iDI is somewhat superior to the ODI. In contrast, Cronbach’s α of the ODI is slightly higher than that of the iDI. However, an in-depth comparison of ODI versus iDI item quality, validity, and responsiveness needs to be addressed in a subsequent study.

Unlike other ODI alterations, 30 not only the number of items but also the wording was thoroughly changed with the aim of creating equidistance, since disability measurements should have sufficient gradations. 32 The drawback of a semantic differentiation of item expression is that intervals between statements cannot be presumed equal, neither within the clusters nor between their items. 33 Therefore, the response options are often not equidistant (equal interval data level). To reach equidistance in response options, a scale should be symmetrical, have an odd number of response options, and have a neutral center. Otherwise, response options cannot be equidistant. 34 As response format, we therefore opted for a 5-point Likert-type scale. This further leads to improved usability: the iDI was completed on average more than a minute faster than the ODI. A gain of 66 seconds may not seem clinically relevant. However, the mean duration for answering the ODI is about twice as long, which might make a difference in the patients’ perception and willingness to complete a questionnaire, considering that disability is only one of the domains being assessed. In this context, a recent randomized controlled trial argued that the length of a questionnaire influenced patients’ response rates. 4

New outcome tools should restrict nonresponses through software solutions, which improves the accuracy of data analysis. 35 In our iPad outcome tool application, nonresponses were prevented because the questionnaire could not be completed unless all questions were answered. However, if software is applied that eliminates missing values, it would be unethical to force responses that the respondent is not willing to give, for example, to a question on sex life. Therefore, we included only items that respondents can reasonably be expected to answer. As expected, only very few patients (n = 3/63) had problems using the electronic device because of a physical handicap. All other individuals could handle the device without significant problems and/or assistance. This seems to be in line with other reports on data assessment with electronic devices in the elderly. 36,37

Some limitations have to be mentioned and discussed. At the beginning of the study, we encountered a technical problem: loss of Internet connectivity resulted in program shutdowns. However, this was overcome by improving the WLAN connectivity in the treatment center.

When compared with the ODI, the iDI shows a potential upward bias due to its 5-point Likert-type scale instead of a 6-point ordinal scale. Hence, the total possible sum is 32 (iDI) instead of 50 (ODI). This distortion is only relevant when calculating percentages instead of using absolute values and can be overcome by using a correction factor. When a comparison to the ODI is intended, the iDI percentage value can be divided by 1.56. Using this correction factor, the differences in percentages between the 2 scores are substantially less than the minimal clinically important difference for lumbar spine surgery patients. 38
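The correction can be illustrated with a short sketch. It assumes that the factor of 1.56 is the rounded ratio of the two total possible sums (50/32 = 1.5625), which the text implies but does not state explicitly; the constant and function names are hypothetical.

```python
ODI_MAX_SUM = 50   # total possible ODI sum, as stated in the text
IDI_MAX_SUM = 32   # total possible iDI sum (8 items x 4 points)

# Assumption: the published factor of 1.56 is this ratio, rounded.
CORRECTION_FACTOR = ODI_MAX_SUM / IDI_MAX_SUM  # 1.5625


def idi_percent_for_odi_comparison(idi_percent: float) -> float:
    """Divide an iDI percentage score by the correction factor."""
    if not 0 <= idi_percent <= 100:
        raise ValueError("iDI percentage must lie between 0 and 100")
    return idi_percent / CORRECTION_FACTOR
```

Under this assumption, an iDI percentage score of 50 corresponds to a corrected value of 32 for comparison with the ODI.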

Comparing the electronic iDI with the paper-and-pencil ODI version is a point of methodological criticism. The evidence on the comparability of online versus paper assessments is mixed, with studies reporting favorable results in both directions. 39 Yet most of the studies included in the systematic review and meta-analysis found no differences. 39

Furthermore, participation rates differed in the different phases of the project. The data of step 1 (participation rate: 46%) and step 3 (participation rate: 43%) were collected in a different way than in step 2 (international multicenter outcome study, participation rate of 70%). In steps 1 and 3, patients were approached by a research assistant during their waiting time for a medical consultation in a spine clinic. In step 2, patients were recruited by the treating surgeons. This highlights that a good physician-patient relationship enhances the willingness to respond to outcome instruments.

Finally, in order to clear up possible cultural barriers with regard to the original version of the iDI in German and this article in English, a cross-cultural research design should be included in a further study to evaluate possible differences between the iDI in German and the iDI in English.

Overall, this study demonstrates that the iDI compares very well to the “gold standard” ODI regarding item quality, reliability, and validity measures in patients with spinal disorders. The comparability is demonstrated in 3 different longitudinal German-speaking samples using a paper-and-pencil version as well as an electronic version. Three results highlight its strengths. First, although the iDI has fewer items than the ODI, reliability as well as validity measures are comparable. Second, factor analysis repeatedly revealed higher item loadings as well as higher percentages of explained variance for the iDI. Third, we demonstrated the simple application and programming of the iDI on a tablet computer (iPad) in a way that prevents missing data and thereby improves overall data quality. In this context, the outcome tool iDI exhibits advantageous features and can be seen as an alternative for the assessment of self-reported disability.

Footnotes

Declaration of Conflicting Interests

The author(s) declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: Michael Ogon reports other from AOSpine, other from ISASS, personal fees from Medtronic, other from DePuySynthes, other from Globus Medical, other from EuroSpine, other from Vivantes, outside the submitted work; Norbert Boos reports grants from AOSpine, during the conduct of the study.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported in part by a grant from AO Spine Europe (Grant # ORD 08-03).