Abstract

There is evidence that online hate speech is increasing significantly on social media pages related to sport, but less research on how sport and media organisations are managing it. This research explored the management of content moderation in Australian sport media through 16 qualitative interviews with social media and communications staff in Australian sport and media organisations. It found that content moderation, or the moderating and removal of comments under social media posts, happened mostly as an addition to content creation work. Strategies for dealing with online hate and incivility were mostly the same across media and sport organisations: interviewees used some automated filters, but mostly manually hid, deleted and blocked comments and users to ‘clean’ their spaces. Overall, there was a lack of formal guidelines and policies to direct moderation. Instead, the work of content moderation was reliant on the actions of individuals, who took it on with a significant level of personal responsibility and developed individual coping mechanisms to deal with the work. With its focus on communication staff in sport and media organisations, this research contributes a different and important perspective to the growing field of research on online hate in sport.

Introduction

There is evidence that online hate speech is particularly prevalent on sport social media, with examples of racial vilification, gendered abuse of women athletes, homophobia and even death threats (Proszenko, 2021; Rugari, 2022; Toffoletti et al., 2024). While some of these posts have triggered legal action from organisations and individuals (Robertson, 2020), there is anecdotal evidence that Australian sport and media organisations are using content moderation strategies to manage online hate or uncivil comments, removing or blocking comments below posts on their social media pages (Savage, 2020). For example, a club social media staff member in Australia’s largest professional league, Australian Rules Football, posted to Twitter (now X) on the international day against LGBTQIA+ discrimination in 2022:

I just spent an hour moderating comments like these so that the content on our social platforms aligns with our club values at @CollingwoodFC. I deleted 333 comments on Facebook alone. It's sad that as a content producer, I'm scared to post content that promotes inclusivity (Quay, 2022).

Some of the public comments on the Collingwood account that Quay posted alongside his Tweet included: ‘whats [sic] next players wearing stilettos on game day’ and ‘stop forcing pride crap on us’ (Quay, 2022). But this abuse is also happening in private. In a submission to a state parliamentary inquiry into serious vilification and hate crimes, the Brisbane Lions AFL club included screenshots from their Facebook inbox that showed users calling an Indigenous athlete ‘a little useless ape’ and saying to ‘chuck him in the zoo’ (Kleyn & McKenna, 2021). The Brisbane club said that their players were consistently exposed to hate speech and vilification and called for more overt regulation (Kleyn & McKenna, 2021). A FIFA report found that almost one in five players at the 2023 FIFA Women’s World Cup was targeted with online abuse, much of it homophobic (Lewis, 2023).

In the most widely used definition, boyd and Ellison (2007, p. 211) describe a social network as ‘a web-based service that allows individuals to (1) construct a public or semi-public profile within a bounded system, (2) articulate a list of other users with whom they share a connection and, (3) view and traverse their list of connections and those made by others in the system’. The first recognised social media networks started in the late 1990s, with both AOL messenger and sixdegrees.com allowing users to create a profile, share conversations and connect with others. Friendster was the first version of the ‘new’ social network in 2002, followed by MySpace in 2003 (Nicholson et al., 2015). Facebook, arguably the world’s largest and most successful social network, launched in 2004. As well as individual profiles, Facebook allowed organisations or individuals to create pages where they could post news and content, allowing fans to engage directly with this content and the organisation through liking or commenting. Sport organisations and individual athletes were early adopters of social media, and research has established that social media is now used extensively in sport media for fan engagement, marketing, sponsorship and general revenue generation (Abeza, 2023; Filo et al., 2015). As detailed above, there is anecdotal evidence that content moderation is happening as part of these social media managers’ work, but while content moderation has been studied in other contexts (Gillespie et al., 2020; Roberts, 2019), there is little research that examines it in sport.

The moderation of comments on social media platforms in Australia is also unique given the legal landscape. In 2019, the High Court of Australia ruled that some of Australia’s major media companies could be held liable for comments under social media pages (Meade, 2022). The case in question, Fairfax Media Publications Pty Ltd v Voller, came after an Indigenous Australian man, Dylan Voller, was interviewed on the Australian Broadcasting Corporation (ABC), Australia’s public broadcaster. A program on the ABC detailed Voller’s abuse in Australia’s juvenile justice system, which eventually led to a Royal Commission. Other media outlets ran stories about Voller, and the social media posts about these stories elicited comments that Voller’s legal team claimed were defamatory. As detailed in Rolph’s (2021) analysis of the case and the judge’s ruling, ‘his Honour reasoned that, because the media company can prevent some or all of the comments from being made public, through moderation, hiding or blocking, ‘the extended publication of the comment is wholly in the hands of the media company that owns the public Facebook page’.’ (Rolph, 2021, p. 124). As Rolph notes, the effect of the Voller verdict is not limited to Facebook specifically but extends to any social media platform that permits third-party interactivity, such as Twitter (now X), Instagram and LinkedIn (Rolph, 2021, p. 131). Together, this context of increasing online abuse in sport and an increasingly complicated legal environment indicates that it is a critical time to examine content moderation in Australian sport media.

Literature Review

Online Hate, Social Media and Sport

The symbiotic relationship between sport and media has intensified through the advance of digital and social media. Sport is a constant daily presence across social and digital platforms, with content created by media organisations, sport organisations, athletes and fans. But these spaces have become flashpoints for online hate (Kearns et al., 2023), which can be defined as ‘spreading, inciting, or promoting hatred, violence and discrimination against an individual or group based on their protected characteristics; which include “race”, ethnicity, religion, gender, sexual orientation, disability, among other social demarcations’ (Kilvington, 2021). As Kavanagh, Jones & Sheppard-Marks (2016) note, ‘virtual environments provide an outlet for a variety of types of hate to occur and in many ways ‘enable’ abuse rather than act to prevent or control it.’ In response, online hate has become a critical developing field of research in sport media (Kearns et al., 2023). Kavanagh et al.’s (2016) conceptual typology of virtual maltreatment established four broad types of abuse in online environments: physical, sexual, emotional and discriminatory. Research has indicated that online hate continues to manifest in sport across different events and contexts (Burch et al., 2023; Sanderson et al., 2020). While racism, gendered abuse and homophobia have been found to be the most common types of discrimination present (Burch et al., 2024), it is important to note that online hate is not one-dimensional. To take just one example, Serena Williams has been subject to intersectional forms of abuse online (Litchfield et al., 2018).

The qualitative research that delves deeper into the content of these comments is also critical to illustrate the impact of online hate on both those it targets and wider public discourse. For example, McCarthy’s (2022) analysis of comment sections under women’s professional skateboarding videos on YouTube argued that many of these comments were ‘virtual manhood acts’ (VMAs) that attempted to delegitimise women as athletes, promote the idea that men are the victims of gender equality, and reinforce the domination of cisnormative men online. Phipps’ (2023) interviews with competitive women athletes found that VMAs were utilised frequently against them in their online spaces, and sought to undermine women’s inclusion in strength sport. This research that speaks to women in sport is a small but growing field that emphasises the significant impact of this online abuse. Toffoletti et al. (2024) surveyed elite sportswomen in Australia and found that nine out of 10 had experienced gendered online harm, and an overwhelming majority (85%) said they had changed their behaviour in response to it. Interviews with elite sportswomen in England found conflicted responses to social media use: the women had experienced gendered abuse, but also felt they needed to be on social media as role models for the next generation of women athletes (Pocock & Skey, 2024). To deal with this conflict, they had developed a strategy of ‘appropriate distance’, so they could engage in social media but minimise harm.

Overall, this research indicates that online hate is a significant problem in sport. As Kavanagh et al. (2023) note, these elements can pose a significant risk to individual emotional and psychological safety, particularly for athletes, who are the main targets. But there is less research that examines how online hate is dealt with in an organisational context, although there is anecdotal evidence it is being addressed (Quay, 2022). Kilvington and Price’s (2019) interviews with advocacy bodies and clubs in English football did find that clubs were not investing resources in stopping online abuse at that time, with one stating they mostly ‘try to ignore it’ (Kilvington & Price, 2019, p. 75). This study aims to explore the context of moderation in sport and media organisations, and how this moderation responds to online hate.

Content Moderation Context

A key function of social media platforms is for users to share content with others. However, not all user-generated content is necessarily made public. Content moderation is defined as ‘the organised practice of screening user generated content (UGC) posted to internet sites, social media and other online outlets, in order to determine the appropriateness of the content for a given site, locality, or jurisdiction’ (Roberts, 2017, p. 44). Moderation is essential to platforms, because they promise a better experience of the Internet (Gillespie, 2018), but it is also hidden, because its invisibility is critical to the illusion of an open platform (Roberts, 2016). As Gerrard (2022) states, content moderation was one of ‘tech’s best-kept secrets’ up until the 2010s, but it is now apparent that content moderation happens on different levels and in different contexts, from paid workers employed by the platforms, to paid staff in organisations that have pages on those platforms, to individuals involved in online communities in a volunteer capacity. Roberts’ (2016, 2019) work on commercial content moderators employed by sites like Facebook has highlighted that these staff are mostly marginalised, low-paid workers on freelance or contract rates who must make content decisions in as little as 10 seconds (Roberts, 2019). These workers are likely to suffer high rates of burnout and mental health complaints (Gerrard, 2022), which is not surprising considering commercial content moderators are ‘asked to make increasingly sophisticated decisions and will often be asked to do so under challenging productivity metrics that demand speed, accuracy, and resiliency of spirit, even when confronted by some of humanity’s worst expressions of itself’ (Roberts, 2019, p. 209).
Ruckenstein and Turunen’s (2020) interviews with Finnish moderators at organisations with online platforms also found significant emotional impact. For example, some interviewees became stricter about removing content towards the end of a work shift than at the start, as ‘emotionally, the work has a numbing effect’ (Ruckenstein & Turunen, 2020). Mostly, research that has examined content moderators’ motivations and work practices, in both paid and volunteer settings, indicates that moderation is highly individualised, even in the presence of group or organisational guidelines (Malinen, 2021; Seering et al., 2019, 2022).

Online Hate, Incivility and Hierarchy of Influences

This study has utilised two key frameworks to examine the work of content moderation in Australian sport in the context of online hate: the framework of incivility and the hierarchy of influences model. In contrast to online hate, incivility is not necessarily outright hate, but the ‘violation of social norms’ (Muddiman, 2017). As Muddiman (2017) notes, though, scholars have disagreed on what these social norms are. Their proposed framework of incivility indicates that most definitions could fit into two levels, personal and public. Personal-level incivility targets individuals and violates politeness norms, while public-level incivility refers to disrespecting deliberative and democratic norms, for example through misinformation (Muddiman, 2017). Research on content moderation has found that online hate, as defined by Kilvington (2021) earlier in this paper, and incivility are often dealt with in different ways. In addition to this model of incivility, Shoemaker and Reese’s (2013) hierarchy of influences model is useful to explore content moderation, as it indicates that the work of moderators is shaped by the interplay of five different forces: (1) values and attitudes of the individual, (2) work routines, (3) organisational influences, (4) social institutions and (5) cultural influences (Shoemaker & Reese, 2013). Together, these frameworks help to explain how online hate is managed through content moderation and what influences that moderation in this context.

Moderation Work, Online Hate and Uncivil Comments

While there is less research that deals with content moderation work in sport media directly, there is research on moderation in related fields, such as news media and political communication. News organisations were early adopters of reader comments, both on their own digital sites and on social media platforms (Reimer et al., 2023). While the inclusion of audience feedback has not always been welcome (Canter, 2013), with some journalists describing the comment section as a ‘fetid swamp’ (Wolfgang, 2018), journalists now mostly find engaging in comments and moderating them to be part of their job (Chen & Pain, 2017). Research on how content moderators deal with uncivil content and online hate in news media comments has found different approaches. Stockinger et al.’s (2023) interviews with professional moderators in German news organisations found clear cases of online hate were pre-filtered, deleted or blocked, but moderation of ‘gray area’ comments was more likely to be approached with preventative or motivational strategies. These included the moderators engaging in the comments themselves, referencing community guidelines, promoting positive comments and encouraging other community members to self-moderate, which others have found to be the best strategy for more civil engagement (Masullo et al., 2020). There is evidence that individual and organisational levels have the most influence on moderation styles (Frischlich et al., 2019; Wintterlin et al., 2020), but others have found it is a combination of factors in Shoemaker and Reese’s (2013) model. Paasch-Colberg and Strippel (2022) found that individual beliefs, socioeconomic status and individual work practices impacted moderation, but so did the size, purpose and routines of an organisation. For example, interviewees working in public media were likely to state they were more hands-off in moderation because of their public mandate (Paasch-Colberg & Strippel, 2022).
Political communication has also provided some important detailed case studies of moderation work (Kalsnes & Ihlebæk, 2021; Tenove et al., 2023). Kalsnes and Ihlebæk’s (2021) qualitative interviews with political staff in Norway found they had developed policies, guidelines and training to help direct staff on how to engage and moderate on Facebook. The main strategy the communication advisers used to deal with negative content was ‘hiding’ comments rather than deleting them, as hiding a comment was generally less disruptive to the comment section than deleting, which alerted the user (Kalsnes & Ihlebæk, 2021, p. 337).

Overall, moderation of content or comments within organisations has been found to be unpredictable, marked by a lack of transparency about policies, inconsistency in applying rules when they do exist, differing resources, and a reliance on individual moderators’ personal beliefs and practices (Boberg et al., 2018; Gillespie, 2018). The management of social media is now a key work function in sport organisations (Abeza et al., 2019; Naraine & Parent, 2017; Pate & Bosley, 2020), and there is increasing evidence that sport spaces in social media have become a hotbed for online hate (Kavanagh et al., 2023; Kearns et al., 2023). Content moderation in sport organisations and media organisations that cover sport plays into public discourse, and yet we know little about the unseen work of moderation, including how online hate is defined and managed. The key research questions of this study are therefore:

RQ1: How does content moderation fit into everyday work in Australian sport media?

RQ2: How is online hate identified and what are the strategies used to deal with online hate and uncivil comments in Australian sport media?

Methodology

The key aim of this study was to understand the everyday work of content moderators in Australian sport media, including both sport organisations and media that cover sport, and how they addressed online hate and uncivil comments through content moderation. Given that the study was asking about a relatively new phenomenon, semi-structured qualitative interviews were employed, as this method ‘can provide detailed and complex insight into people’s decisions, values, motivations, beliefs, perceptions, motivations, feelings and emotion’ (Smith & Sparkes, 2016), and it has already been employed in studies of content moderation in organisations. A purposive sample was used to find relevant research participants in two key areas: Australian sport organisations and Australian media organisations that are focused on sport. Given the specific nature of this work, a purposive sample was considered the best approach to gain access to people within sport and media organisations who undertook it. Both sport organisations and media organisations were included given the significant size of social media audiences for both types of organisation, and the presence of online hate across sport. Participants were recruited in two ways: through public social media posts, and through the researcher’s own personal networks. All invitations indicated that the study was completely voluntary, that answers would be confidential and that the study had received ethics approval from La Trobe University. Participant inclusion criteria are detailed below:

• Employed in either an Australian sport organisation, or a media organisation in Australia that covers sport, at the time of the study. This employment could be full-time, part-time, contract or casual.

• Part of their work involves moderating comments on behalf of the above organisation, on social or digital platforms.

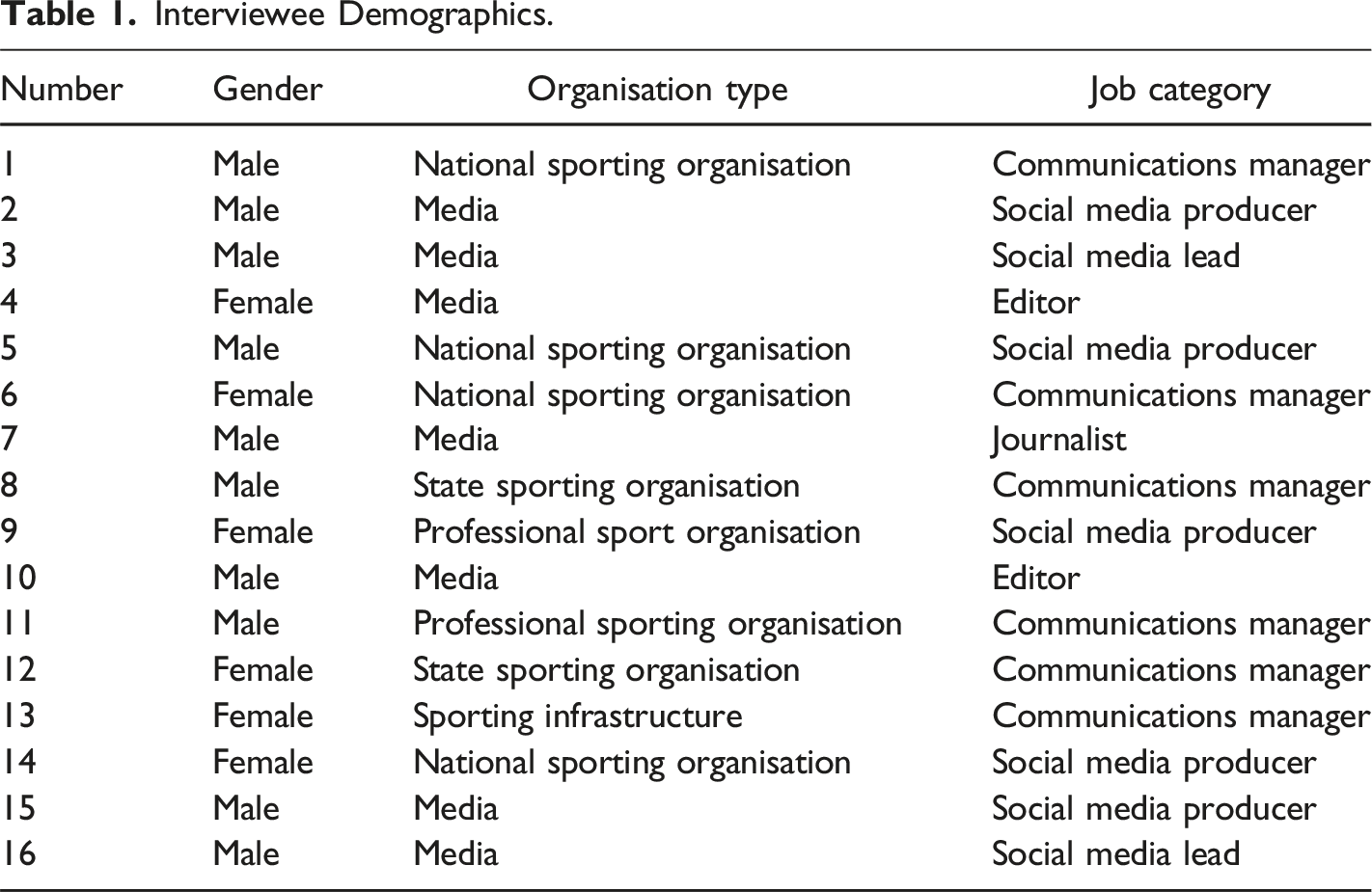

Interviewee Demographics.

Interviews ranged from 35 minutes to 55 minutes, and were conducted online via teleconferencing software. A semi-structured interview guide, developed from existing literature, was used, and it included questions about the interviewees’ everyday work and content moderation. To start with, interviewees were asked if they could describe a recent day in their work, which helped to uncover where content moderation fit as part of their everyday work. Participants were asked to describe what triggered them to moderate a post, including their individual approach and whether their organisation had guidelines or directions on how to moderate, and also whether they used the platform tools for reporting online hate. They were also asked to describe the impact of content moderation and online hate on their work practices and themselves. Handwritten notes were taken by the researcher during each interview. Interviews were recorded, and transcripts were developed from the recordings and sent back to the interview participants for member-checking. Once checked, transcripts and notes were de-identified, with aliases put in place for individual names and employer organisations to ensure participant confidentiality, and then imported into NVivo. Although different contexts were included in the sample, similar themes had emerged after eight interviews. Interviews were extended to 12 to confirm saturation, and further interviews were then conducted in 2022 to examine the updated landscape.

Data analysis commenced with multiple readings of the interview transcripts and interview notes to identify potential concepts, patterns or insights (Saldaña, 2013). Using NVivo, the first round of inductive coding of the interview transcripts followed two methods: in-vivo coding to capture participants’ experiences, and process coding to explore participants’ actions (Saldaña, 2013). Process coding included developing codes such as ‘work routines’, where responses around everyday work and routines were collected and then analysed for themes and patterns. For example, the main code that developed from here was ‘content first’, which indicated that these participants’ work roles were focused firstly on creating and posting content, and content moderation was only a small part of their everyday work.

Results

This study aimed to explore where content moderation was occurring within Australian sport and media organisations, how online hate and uncivil comments were moderated within this, and how this impacted these staff. It found that content moderation mostly happened as an addition to content creation work, and that uncivil comments were easy to identify at one level, but at other levels sat on a sliding scale of grey that relied heavily on individual opinion. There were a number of strategies that these workers used to moderate comments they deemed to be either hate speech or uncivil, including automated functions, hiding, deleting and blocking comments and users, but overall the moderation process was heavily guided by the individuals undertaking it. This individual responsibility for dealing with online hate also has implications for the ongoing health and safety of these staff.

RQ1: The Mechanics of Moderation in Australian Sport and Media Organisations

Content first

The analysis revealed that these participants’ roles were mostly defined by a ‘content first’ strategy; that is, content moderation in Australian sport and media organisations is mostly a task that happens as an addendum to the main task of content creation. The participants’ workplaces varied from large media organisations with thousands of employees to small member-based sport organisations with fewer than 10, yet none had a full-time content moderation role. The majority of these staff held roles focused on creating and posting content on their organisations’ social media or digital platforms, including but not limited to Facebook, Instagram, X (formerly Twitter), YouTube, Snapchat and TikTok, and organisational websites. Moderating content and comments under social media posts had become an

Day to day it’s all content, all the time. We have many social media accounts covering sport across Facebook, Twitter, Instagram, YouTube, Snapchat and TikTok. At every minute of the day there's something going on in one of the sport that needed to be covered.

For staff who worked in NSOs or SSOs, their roles were likely to encompass a wider variety of communication tasks, including content creation, but also media strategy, media management and stakeholder communication. Overall, while the volume of content moderation could depend on the news cycle, for most participants it was a regular but small part of their work, as this quote from Interview 3 shows:

Maybe 95% of my day goes into creating things and making sure things are working. It's a lot working on the fly, so seeing what's working at any given time and then responding…then when I get gaps in my day, it's going through and making sure all the comments are ok... or everyone's playing nice.

RQ2: Identifying Online Hate or Uncivil Comments

‘It’s a gut feeling’

Participants found it difficult to define what constituted online hate beyond clear classifications, or comments that were overtly racist, homophobic or sexist. For example, Interview 15 said, ‘basically, if it’s nakedly abusive it’s out. If it’s racist or sexist, it’s out. If it’s homophobic, it’s out.’ Beyond this, the main theme found was that there was a ‘grey area’ where participants had freedom to exercise their own judgment, as Interview 5 explained: ‘I think it’s pretty well-known amongst us that it's anything that is racist or sexist or anything, that’s definitely got to go. But anything below that, it’s kind of a gut feeling of what action we want to take.’ The intent and context of a comment were also taken into account, using elements such as emojis, tone, swearing and phrases that wouldn’t necessarily be understood as uncivil without context. As the quote from Interview 14 below shows, sport organisations generally welcomed opinion from fans to help generate discussion and engagement, but also emphasised that opinion needed to be expressed in a respectful way:

If it's defamatory, racist, homophobic, anything like that, delete and ban straight away. If it's just an opinion, leave it because we don't want to be blocking people from sharing their opinions. But it is definitely seeing how it’s written, the level of respect in it. I removed a comment the other day that was just like all capitals, someone's swearing that someone else wasn't put into a squad. And we were…just take that one down.

Lack of guidelines

Another key theme identified was that very few organisations represented in this study had official written guidelines to direct how moderation should occur, whether internal guidelines showing moderators what to leave in and what to remove, or guidelines posted on social media pages or accounts to guide members of the public commenting there. Instead, moderation knowledge and practice were built up through both formal and informal training. For example, Interview 10 said ‘there was less policy, more guidance on how to react and people charged with best practice.’ Almost all participants indicated that content and comment moderation was not mentioned as part of the initial job description or the hiring process. The exceptions were one media organisation that said it included moderation in the job description for a social producer and provided formal training, and two sport organisations that noted in an interview that it was part of the match-day duties for the role. Where moderation was not explicitly mentioned, participants mostly added it to their role through ‘on the job’ learning. For some, this was explicitly raised by managers or other staff in the course of their work; others realised they needed to undertake it once they began posting content on social platforms. Some interviewees indicated their training had been a discussion with a manager at first, after which responsibility was handed over, but there was also evidence of ongoing active collaboration within organisations. In these cases moderation wasn’t undertaken by just one person; instead, screenshots were shared among a team and conversations held on how to proceed, which were then used to inform future moderation.

RQ2: Strategies for Acting on Online Hate and Uncivil Comments

Easier to hide

The analysis found that there were four main approaches used by participants to deal with comments that met their definition of online hate and uncivil comments: automatic removal with inbuilt platform or third-party tools, and then manual hiding of comments, deleting of comments, or blocking of users. Most organisations in this research had some pre-set automated filters applied, particularly through Facebook, covering swear words and common racist, sexist and homophobic phrases. Two media organisations also used third-party apps to remove unwanted comments on Facebook and Instagram. However, the interviewees with automation all agreed it was not enough, as commentators would come up with ways to get around the filters, such as spelling out swear words with full stops after each letter or using emojis. Filters would also not necessarily pick up a word in context. For example, Interview 2 said of a comment on a story on an Indigenous athlete, ‘our filter hasn’t picked up slave, but that’s obviously a very racist comment.’ The most common strategy for dealing with examples of hate speech was to hide comments, followed by deleting them. The interviewees explained that hiding comments was mostly preferred as it wouldn’t notify the commentator, as explained by Interview 13:

When you delete comments, people will then comment back saying where's my comment gone? And I guess we don't really have at the moment a policy on our channels or anything that says… we reserve the right to delete comments. We find it's easy just to hide because, then it kind of eliminates that chance for them to go back and say ‘Why can't I see my comment?’.

Official reporting channels through the social media platforms themselves were very rarely used, because they were both slow to react and ineffective in adjudicating content. For example, Interview 3 said of a string of racist comments they reported, ‘I think in every single instance, I got a message back saying ‘it doesn’t breach the community guidelines of Facebook’.’ Interview 1 said that it was difficult to decide whether to delete, block or report, but that speed was often the deciding factor: ‘sometimes deleting it is your only practical option, because that’s the only way to get the get the negativity out, which is a real hole in the system.’ Banning was also used, particularly for repeat offenders. However, there was also an acknowledgement that the current approach was not the best ongoing strategy for curating more positive spaces, as this extended quote from Interview 3 indicates:

There needs to be more of a process in getting people banned because hiding, deleting comments is just a band aid. You need real action to say, no, this is not okay. If I hide a comment, the guy doesn't even know that I've hidden the comment. If I delete a comment… they need to know that what they're doing isn't right. And I think the only way that's going to happen is if Facebook and the other platforms start saying, ‘You have breached the guidelines and you're getting banned for it or suspended for it.’

One strategy for all

Unlike other research that has examined moderation for organisations, though, in this research there was not much demarcation between online hate and uncivil comments, or between the strategies used to deal with them. Essentially, if a comment didn’t meet the criteria participants judged as appropriate for their pages or platforms, it would be hidden or deleted, or the user blocked. These participants in Australian sport media did not typically use engagement moderation strategies such as pointing to guidelines, responding to commentators ‘below the line’ or using the organisational voice to respond to commentators publicly. For example, Interview 5, from a sport organisation, said their preference was to leave the conversation rather than call it out. Others mostly noted that it was a question of time and resources, which they did not have, as this quote from Interview 2 explains:

I'd really like to keep our comments healthy and looking perfectly fine, but I simply don't have the time or the ability with my role to make that happen. So you can argue as much as you like about whether that should be the case. But I think more people need to understand that the social media roles, in a fairly low paying industry, there just isn't a role for someone to moderate these sections, these comments sections properly. That’s just not the reality.

One potentially unique strategy, though, was preventative moderation through omission. In this strategy, mostly used by media organisations, staff simply would not post content on their social channels that could be ‘risky’. This included stories that may have legal implications, which they avoided publishing due to the real legal risks that came with Australia’s current defamation precedent. However, two participants also noted that their media outlets had published less content on women’s sport because they knew it would attract gendered abuse. Interview 7 said of a recent period in a women’s sport league: We didn't do any stories on it at all. Part of that is we're understaffed a little bit at the moment…. But part of it was also, well, some people would read it, but as many people would be making terrible jokes and we'd have to hide them and delete them. So it's spending more time than it's worth for us. Because of those problems, it also harms these sports, these athletes who deserve more coverage otherwise.

RQ2: Hierarchy of Influences: Content Moderation in Sport and Media Organisations

Drawing on Shoemaker and Reese’s (2013) hierarchy of influences in media, differences in moderating online hate and uncivil comments in this study could mostly be attributed to the individual level and the organisational level.

Individual influence

The reliance on the individual in the content moderation process became clear when participants discussed the process of moderation in action. Most participants simply did not have time to consult with others as part of the process, as they moved to remove hate speech or uncivil comments quickly. They therefore relied on their own judgement, particularly in the absence of formal moderation guidelines. While in many cases the ‘gut feeling’ they used to make quick calls about whether comments should be hidden, blocked or deleted was socially constructed through their work with others, these participants all emphasised that they had the power within their organisations to make judgements, or that they were charged with keeping their comment spaces ‘nice’. Interview 12 said ‘it was 100% common sense. I guess my boss left it down to me as my perspective of what the organisation should be.’

In many cases this had morphed into a level of personal responsibility, in that they took on responsibility for the content on their organisations’ pages at all times. This led them to monitor stories or content on their phones outside of work hours. For example, Interview 2 said ‘I am personally invested in the content that I post to the pages. It is very difficult to separate work and personal life in that regard.’ Participants were aware that their inability to shut off from platforms and the increasing amount of online hate were not ideal, but saw this as a necessary part of the role, as Interview 6 explains in the quote below: I never looked at it as, ‘How is this impacting my mental health?’ For me, it was, ‘This is just a job. I just have to put up with it. I just have to suck it up…I used to say, ‘You put your bullet proof vest and your hard hat on and you go to work,’ because you're dealing with people online that you can't see, or you can't confront.

Interview 14 stated that they had access to mental health training and support through their employer, but it wasn’t necessarily helpful for their particular role: ‘I think there’s a lot in place for athletes and training for athletes, but no one really behind the scenes and kind of how to deal when you’re logging onto social media and people are just bagging your work or bagging the organisation like 24/7.’ Instead, most participants indicated that their coping strategy was simply that they had developed a ‘thicker skin’ over time in the role. Interview 15 said, ‘I wouldn’t say it doesn’t affect me, but certainly it’s not as confronting as it was when I first started doing it.’ This desensitisation over time often led them to take a harder line on blocking content, and others reported that their work in social media had led them to disengage from their own personal social media as a way of coping. These are all indications of changed behaviour as a result of engaging with online hate through their work.

Organisational influence

There was a clear difference between sport organisations and media organisations around the legal implications that could be related to comments. Study participants who worked at Australian media organisations were aware of the potential legal implications of defamatory comments on their pages. Interview 15 said ‘so yes, it’s a thing from above because we have to…we can’t afford to be getting sued for something someone says on Facebook.’ In this way the organisational and social levels intertwined, as there was a clear organisational response to Australia’s legal framework. Organisations with more resources in the content creation team were also likely to share more collaborative routines around content moderation. Even where there were no formal guidelines to steer moderation, the ongoing conversations and workshopping with other staff members allowed for consensus building, even if informal. There was also a difference between how participants in media organisations and sport organisations conceived of their comment sections. Interviewees from media organisations mostly regarded them as a ‘necessary evil’, whereas those from sport organisations were more likely to say that the ability of fans to comment on digital platforms was critical to their organisations’ communication strategy.

Discussion

This study has implications for research in two key areas: research on content moderation work and research on online hate in sport. First, it establishes that the moderation of online hate is a small but regular part of working in social media and communication roles in Australian sport and media organisations. Second, these staff need to be considered as critical stakeholders in the management and research of online hate in sport.

In terms of content moderation work, this research indicates moderation work practices are remarkably similar to those undertaken by staff in news organisations and political communication roles: automatic removal with in-built platform or third-party tools, followed by the manual hiding of comments, deleting of comments or blocking of users (Kalsnes & Ihlebæk, 2021; Stockinger et al., 2023; Tenove et al., 2023). As for the political communications staff in Norway, hiding comments on Facebook was the quickest way of fixing the comment sections, as the original user wasn’t necessarily notified, but the comment could no longer be viewed by others (Kalsnes & Ihlebæk, 2021). This research indicates there are strikingly similar uses of Facebook’s moderation tools across different contexts and countries, where users are being ‘hidden’ but have no way of knowing that they have been. This is potentially another form of what Gillespie (2022) calls ‘content reduction’, where platforms are not deeming content ‘bad’ enough for removal, just making it difficult to find, though this time it is an organisation rather than the platform enacting it. This research further highlights how the public posts on social media networks are not necessarily a reliable measure of public sentiment, given the manipulation by different users and parties. It also indicates that the use of AI technologies is not necessarily enough to stamp out the issue of online hate, as manual moderation was still used on top of automatic filters and third-party companies.

Unlike other research in news and politics, though, this study found two major but related differences. The first was a lack of formal guidelines and policies on how moderation should be undertaken. The second was little differentiation between outright online hate and uncivil comments when actioning moderation. This lack of guidelines, both public and private, means that much moderation of content in Australian sport media happens without context for the users, and is primarily influenced by individual moderators. This may also explain why Australian sport media staff were less likely to use engagement strategies, such as pointing users to guidelines or reminding them of group rules (Masullo et al., 2020; Stockinger et al., 2023; Wintterlin et al., 2020): these resources simply didn’t exist. While guidelines do not always mean consistent moderation in other contexts (Gillespie, 2018; Gillespie et al., 2020; Roberts, 2019), the lack of these resources indicates that content moderation is still a developing function in Australian sport and media compared to other industries and contexts.

As other research has extensively established, the moderation of content is significantly influenced at the individual level (Boberg et al., 2018; Frischlich et al., 2019; Gerrard & Thornham, 2020; Roberts, 2019), and this study is no different. With the lack of both industry and organisation guidelines and training, content moderation in Australian sport media is highly influenced by the individuals undertaking it. However, there were differences at the organisational level between media and sport organisations. Generally, this research found media organisations had developed more resources and had more formal guidelines and training, which is likely a response to Australia’s legal context. This also means that media organisations are potentially more equipped to deal with online hate through organisational structures, whereas sport organisations and the staff that moderate within them are likely more vulnerable, even though they are likely to be direct targets of online hate (Kavanagh et al., 2022, 2023; Kleyn & McKenna, 2021). These staff in Australian sport media acknowledged the increasing issue of online hate and wished for more resources to deal with it. In one way, then, this study shows that there has been progress since Kilvington’s study of English football (2019), because there is evidence that social media staff are actively aware of online hate and attempting to rectify it through moderation.

This research also helps establish that staff who moderate social media in media and sport organisations should be considered critical stakeholders in research on online hate, as there is clear evidence that these staff play an important, active and ongoing role in the response to it in sport. This study found work practices in social media management were similar to those of organisations in North America, in that it is a ‘content first’ role that is primarily about creating and posting content (Naraine & Parent, 2017; Pate & Bosley, 2020). As in professional sport organisations in North America, there was significant personal responsibility placed on these participants for curating their organisations’ brand through social accounts. An NBA manager in Abeza and Seguin’s (2019:92) research said: Managing social is, first and foremost, an art, supported by scientific data. It is a human doing it, it cannot be an algorithm or an agency. You have to trust the people who you put as the front face of the teams.

This research helps to underscore that the trust and responsibility placed in the hands of these staff can also turn into a level of personal responsibility that tips over into personal life. Some participants indicated that they found it very hard to separate work and personal social media use, and were often logged in and checking accounts at all hours, as they felt personally responsible for the content on their pages. In addition, they mentioned that they had changed their behaviour in these roles, becoming stricter on moderating online hate and developing specific coping strategies. These strategies included developing a ‘thicker skin’ and disengaging from personal social media use, which are also present among professional and volunteer moderators in other contexts (Roberts, 2019; Ruckenstein & Turunen, 2020). These strategies are also similar to the notion of ‘appropriate distance’ that Pocock and Skey (2024) found elite sportswomen in the UK engaged in. In this model, women athletes wanted to use social media to be role models for the next generation, but leveraged it at an ‘appropriate distance’ to try to minimise the harm it could also bring them. There were also some examples of pre-emptive moderation, where posts were simply not published. While this was largely related to stories that could have legal implications, two participants also noted their media outlets had posted fewer stories about women’s sport due to the sexist and homophobic comments they attracted. This particular finding emphasises how the gendered nature of abuse online is reinforcing the hegemonic structure of sport media (McCarthy, 2022; Phipps, 2023).

The impact of online hate is well recognised in research on athletes in sport and moderators in other contexts. The patterns around coping behaviour and social media use found here indicate that staff who engage in moderation in sport and media organisations should be considered when examining the impacts of online hate. Kavanagh et al. (2023) emphasised that athlete voices should not be ‘lost in a narrative which privileges the financial and managerial benefits of social media,’ and we argue that this article provides ample evidence that the staff charged with managing social media platforms, and the implications for their mental health, also need to be considered. Overall, this study indicates the sport industry could work more collaboratively to develop and deliver guidelines that could support staff undertaking content moderation. In addition, social media platforms need to create and enforce better reporting tools and more effective takedown procedures for both posts and members of their networks that continue to post online hate, and governments need to exert more regulatory pressure on platforms to do this.

Conclusion

The aim of this study was to provide a broad overview of how content moderation was occurring in Australian sport media, given the context of increasing online hate. It found that content moderation was occurring, but that it was an associated job function rather than a primary one in both sport and media organisations. It offers an insight into content moderation at an important point in time, within the context of increasing online hate but also when sport and media organisations are engaging in comments in order to drive engagement and communication. Overall it contributes to the literature on social media in sport, providing some important insights into the work practices that are behind the social content that is more often the focus of research.

This research does have several limitations that should be acknowledged. Sport and media organisations were using multiple social media and other digital media platforms, which were likely to have different moderation techniques and tools available. Therefore, future research that examines individual platform moderation strategies and details would be valuable. These interviews were also conducted before Australia’s Online Safety Act came into force, which included expedited takedown laws applied to social media platforms. Updated interviews that explore whether this Act and the e-Safety office’s greater regulatory powers have made a difference would be valuable.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by a La Trobe University internal grant for some of the research, authorship, and/or publication of this article.