Abstract

Professional content moderators are responsible for limiting the negative effects of online discussions on news platforms and social media. However, little is known about how they adjust to platform and company moderation strategies while viewing and dealing with uncivil comments. Using qualitative interviews (

Online discussions are widely recognized as a promising tool for shaping public opinion through the exchange of diverse viewpoints from members of all sectors of society (Dahlberg, 2011; Rowe, 2015). However, recent studies indicate that the tone of user comments is becoming increasingly uncivil, posing a threat to democracy (Theocharis et al., 2020). Incivility has been linked to harmful consequences, including political polarization (Kim and Kim, 2019), societal apathy (Masullo et al., 2020a), and reduced political trust (Van’t Riet and Van Stekelenburg, 2021). An important measure to counteract destructive online discussions is employing professional “content moderators”. Along with screening, deleting, and moderating hostile discussions, they use various tools to ensure civil and free-flowing conversations (Jhaver et al., 2022).

As moderators’ workloads have grown dramatically over the years, (semi-)automated moderation systems have emerged to improve the speed and efficiency of content moderation, for example by training AI to identify and flag problematic content (Jhaver et al., 2022). However, human intervention remains essential to appropriately screen online comments (Roberts, 2019), particularly those that deviate from clearly defined concepts, such as hate speech, threats, or online stalking, and are therefore protected by freedom of expression laws (Jørgensen and Zuleta, 2020). What is more, perceptions of incivility are subjective (Otto et al., 2020), and in some cases, the use of swear words may even increase engagement (Brooks and Geer, 2007) or foster political interest (Masullo Chen et al., 2019). As a result, defining which comments are problematic enough to be moderated and how to intervene involves complex decision-making processes.

Accordingly, content moderation is viewed as a novel act of “gatekeeping,” shaping who can see what, when, and where (Boberg et al., 2018). Similar to traditional gatekeepers such as journalists and politicians, moderators are subject to several individual, organizational, institutional, and cultural factors that shape their decision-making processes, as evidenced by research using Shoemaker and Reese’s (2014) hierarchy of influences model as a theoretical framework (e.g. Paasch-Colberg and Strippel, 2021). Yet, the question of how factors on different levels influence moderators’ perceptions of actionable comments and their work routines to combat them is still largely unexplored.

Therefore, this study involved semi-structured interviews with professional moderators from various organizations (e.g. political parties and media outlets) in Germany and Austria who screen comments on news forums or social media platforms (e.g. Facebook, Instagram, Twitter, and TikTok). Our study has three primary objectives: (1) to identify the specific types of comments professional moderators view as problematic enough to be moderated; (2) to determine which moderation strategies they select for which types of comments, including the (desired) integration of technological tools into daily moderation practices; and (3) to examine the underlying reasons for variations in the levels of automation and the use of AI, by applying the hierarchy of influences model proposed by Shoemaker and Reese (2014). By outlining the reasoning behind and execution of moderators’ decisions, now and in the near future, our findings not only contribute to the literature on online incivility and content moderation but are also of practical importance. Given the growing efforts by academics and industry to create methods that automatically address uncivil online comments (Talat et al., 2021), this study provides recommendations for further technological advances tailored to professional moderators’ needs.

Perceptions of online incivility

In recent years, scholars, journalists, politicians, and citizens have expressed concerns about the quality of online discourses (Jaidka et al., 2021; Masullo et al., 2020a), characterizing them as problematic, negative, outrageous, and toxic—in one word: uncivil (Theocharis et al., 2020). Regarding the definition of incivility, Muddiman (2017) differentiates between two types of incivility:

However, although those definitions imply that identifying content as uncivil can be guided by objective categories, the outcome of this process strongly depends on the content in question, the individual characteristics of the person evaluating the content, and context factors (Otto et al., 2020; Su et al., 2018). The subjective nature of incivility is especially challenging for professional content moderators responsible for screening and handling content posted by users. They face the tasks of (1) deciding whether user comments must be moderated and (2) selecting an adequate moderation strategy. Thereby, they must balance the norms of a discussion that can be considered civil with the users’ right to freely express their opinions (Jørgensen and Zuleta, 2020).

Shoemaker and Reese’s (2014) hierarchy of influences model suggests that decision-making in the media sphere is shaped by a dynamic interplay of various forces at the individual, organizational, institutional, and cultural levels. For example, on an

In summary, the perception of incivility (Otto et al., 2020) may depend more on a comment’s context or a commenter’s intentions than on certain objective features, such as swear words (Chen, 2017). Against this background, the question arises of how content moderators, who are trained professionals, navigate the complex task of distinguishing between acceptable and unacceptable speech while being embedded in organizational routines. Therefore, this study asks,

The role of human moderators in fighting uncivil online discussions

The rise of uncivil online comments has prompted news outlets and social media sites to take action (Theocharis et al., 2020). While one response has been to deactivate comment sections, considering both the lack of value of uncivil comments and the potential harm to a news outlet’s reputation as an unbiased news source (Williams and Sebastian, 2022), others have argued that when users are not allowed to discuss certain topics, they may feel disconnected from other readers and spend less time on the platform (Stroud et al., 2020). As a result, many outlets have invested extensively in content moderation solutions, including various strategies to address problematic content.

Those strategies can be (1)

Due to the sheer number of user comments in online discussions, it has become difficult for moderators to keep pace with their tasks to screen and address inappropriate content. Consequently, AI and machine learning technologies are increasingly adopted. Moderators can implement technological tools by engaging (AI-based) word filters or blocklists, skin filters suggesting pornography, and tools designed to match copyrighted material (Brøvig-Hanssen and Jones, 2021; Jhaver et al., 2022). However, AI tools face limitations in comprehending contexts, human cultures, and power dynamics, which impair their ability to address subtle forms of incivility (Roberts, 2019). There are concerns that AI perpetuates gender inequality and silences marginalized groups (Gerrard and Thornham, 2020; Munn, 2020). Moreover, users can bypass automated moderation by devising new ways to post prohibited content, such as using creative permutations of racial epithets (Roberts, 2019).

Currently, studies investigating the use of technology for content moderation are predominantly conducted by computer scientists focusing on the technological capabilities of automated content moderation (e.g. Rieder and Skop, 2021). Little research has been done on moderators’ perceptions and evaluations of different technological tools for moderating uncivil comments on different platforms. This is a significant oversight since moderators are the ones mainly selecting moderation strategies for different types of comments. Without the trust or willingness of moderators to use AI-based moderation tools, the potential advantages of these technologies may remain unrealized. Therefore, questions arise about the tools moderators currently use to adequately handle different types of user comments and the kind of assistance they would like from a (semi-)automated moderation system in the near future. This leads to the second research question:

Determinants of content moderation strategies

Following the hierarchy of influences model, the work of professional moderators is shaped by a complex interplay of five forces, including (1) values and attitudes on an individual level, (2) work routines, (3) organizational influences, (4) social institutions, and (5) cultural influences (Shoemaker and Reese, 2014). Several studies confirm the role of these forces in moderation processes. Concerning

Last,

However, although these studies confirm that the forces described in the hierarchy of influences model shape moderation decisions by human moderators, it has not been considered how they influence the implementation and perception of technology in these processes. For example, individual traits of moderators or organizational structures might shape not only what moderators perceive as uncivil but also which AI-based technological tools they consider appropriate to identify and address problematic content. Since the use of AI is becoming increasingly important in moderation processes (Jhaver et al., 2022), questions on the determinants of the perception of those tools mark an important research gap. Accordingly, we investigate the extent to which particular levels of the hierarchy of influences model may explain differences in evaluating and using AI for content moderation practice. Thus, we ask,

Method

To investigate the research questions, we conducted a qualitative interview study with professional content moderators in Austria and Germany. Both countries have similar laws to regulate the comment sections of online platforms, namely, the Austrian “Kommunikationsplattformen-Gesetz” (KoPl-G) and the German “Netzwerkdurchsetzungsgesetz” (NetzDG). As part of these laws, certain types of comments that violate the constitutional protection of human dignity, defame or threaten individuals, promote hate speech, incite violence or crime, or propagate Nazi ideology are considered unlawful and must be removed from online platforms within 24 h. To mitigate the risk of

Procedure

The research design was preregistered with the Open Science Framework on 7 June 2022 and approved by the Institutional Review Board of the University of Vienna on 13 June 2022. As the aim was to explore how uncivil political online discussions are handled in different contexts, we sought participants with daily experience in moderating comments for different media outlets or political parties. To ensure a diverse sample, we considered factors such as the type of organization, platform(s), political orientations, target audiences, and financing (Flick, 2017). We compiled a comprehensive list that included Austria’s 10 major newspapers (Kronen Zeitung, Der Standard, Die Presse, Kurier, NÖN, OEN, Kleine Zeitung, Heute, Österreich, Falter), five government parties (ÖVP, SPÖ, FPÖ, Grüne, NEOS), two private media outlets (ProSiebenSat1Puls4, ATV), and one public media outlet (ORF), as well as Germany’s four major public media outlets (3sat, ARD, Arte, ZDF), and three current government parties (SPD, Bündnis90/Die Grünen, FDP). To identify potential interviewees, we conducted thorough online research and utilized personal contacts, specifically targeting social media managers, community managers, and journalists. Potential participants were mainly recruited through their openly accessible business email addresses and a snowballing technique, resulting in a convenience sample. In four cases, organizations selected interview partners themselves after we approached them with a request for participation. Ultimately, we contacted 25 individuals responsible for moderating online comments.

Sample

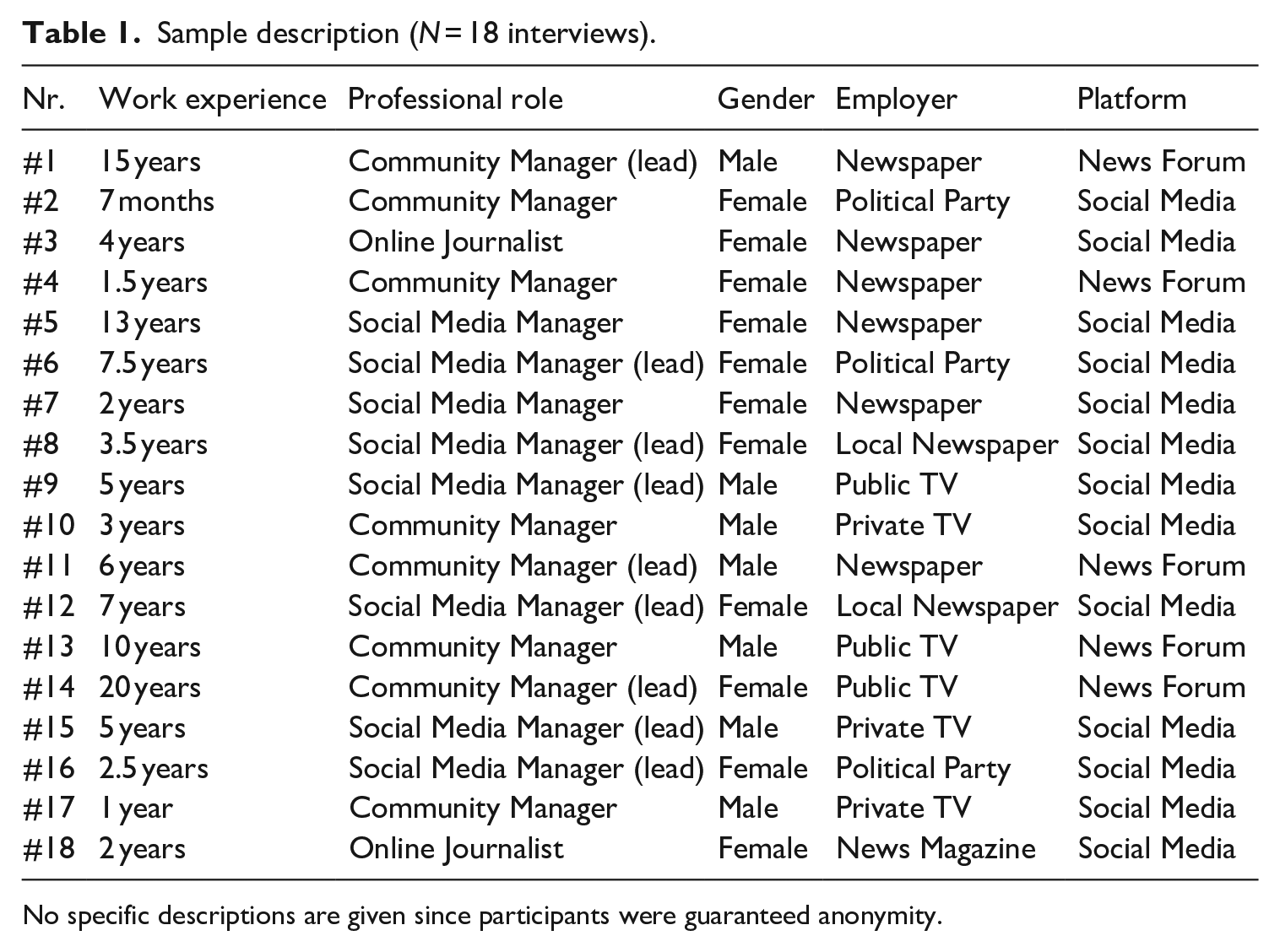

The final sample consisted of 18 professional moderators (11 female and 7 male). When the interviews took place, 13 were employed by news organizations, three by political parties, and two were online journalists who were also entrusted with moderation tasks. Overall, five interviewees moderated comments for their media outlet’s news forum, while 13 moderated comments on social media, namely Facebook, Instagram, TikTok, Twitter, YouTube, and LinkedIn. Moderators in our sample were either social media managers or news forum moderators; no moderator oversaw both. It is worth noting that while social media managers usually had additional responsibilities like content creation, news forum moderators focused solely on handling the high workload of moderating user comments.

The sample also comprises nine participants who, in addition to their content moderation tasks, lead a moderation team and develop content moderation strategies for their news forums or social media. Thus, information on the background of moderation procedures could be gathered in addition to practical experience with user comments. Interviews were held between 30 June and 28 September 2022, lasted 26 to 44 min, and were conducted via online video conferencing software (Zoom or Microsoft Teams) or telephone. Altogether, theoretical saturation was sufficiently reached. The interviewees in this paper are labeled #1 to #18 (see Table 1).

Sample description (

No specific descriptions are given since participants were guaranteed anonymity.

Interview guide

All participants were interviewed in a semi-structured manner to ensure comparability and remain open to new insights. The interview guide comprised 12 primary dimensions, each of which had several sub-questions, covering three key areas: (1) professional profile and moderation guidelines provided by the organization; (2) discussion climate and perceptions of uncivil online comments; and (3) (potential) methods of addressing different types of uncivil online comments. The full interview guide (in German) and an English translation are provided in the Supplemental Appendix.

Analysis

After data collection, all interviews were transcribed and read multiple times. In the next step, the software MAXQDA was used to conduct a thematic qualitative text analysis of the transcripts following the seven steps provided by Kuckartz (2014): familiarization, theme identification, coding, categorization, interpretation, verification, and reporting. This method enabled an explorative process employing both data-driven (inductive) and theory-driven (deductive) approaches, which was useful for classifying content into pre-established categories while remaining open to emerging new insights offered by the interviewees. In line with Shoemaker and Reese’s (2014) hierarchy of influences model, the pre-defined categories were

Results

Our study aimed to gain a comprehensive understanding of moderators’ perceptions of actionable online comments and their strategies for addressing them, as well as the internal and external factors influencing their moderation practices. In addition to providing direct answers to our research questions, moderators extensively described their challenges in determining the threshold for what constitutes an actionable comment. Furthermore, the issue of mental health frequently emerged as a topic, with interviewees stressing the importance of having a reliable support system. Despite the challenges faced by moderators, including limited resources, interviewees unanimously deemed the closure of comment sections unfeasible for both deliberative and financial reasons. Our findings in response to RQ1 to RQ3 are outlined in the following sections.

A distinction between “clear cases” and a “gray area” (RQ1)

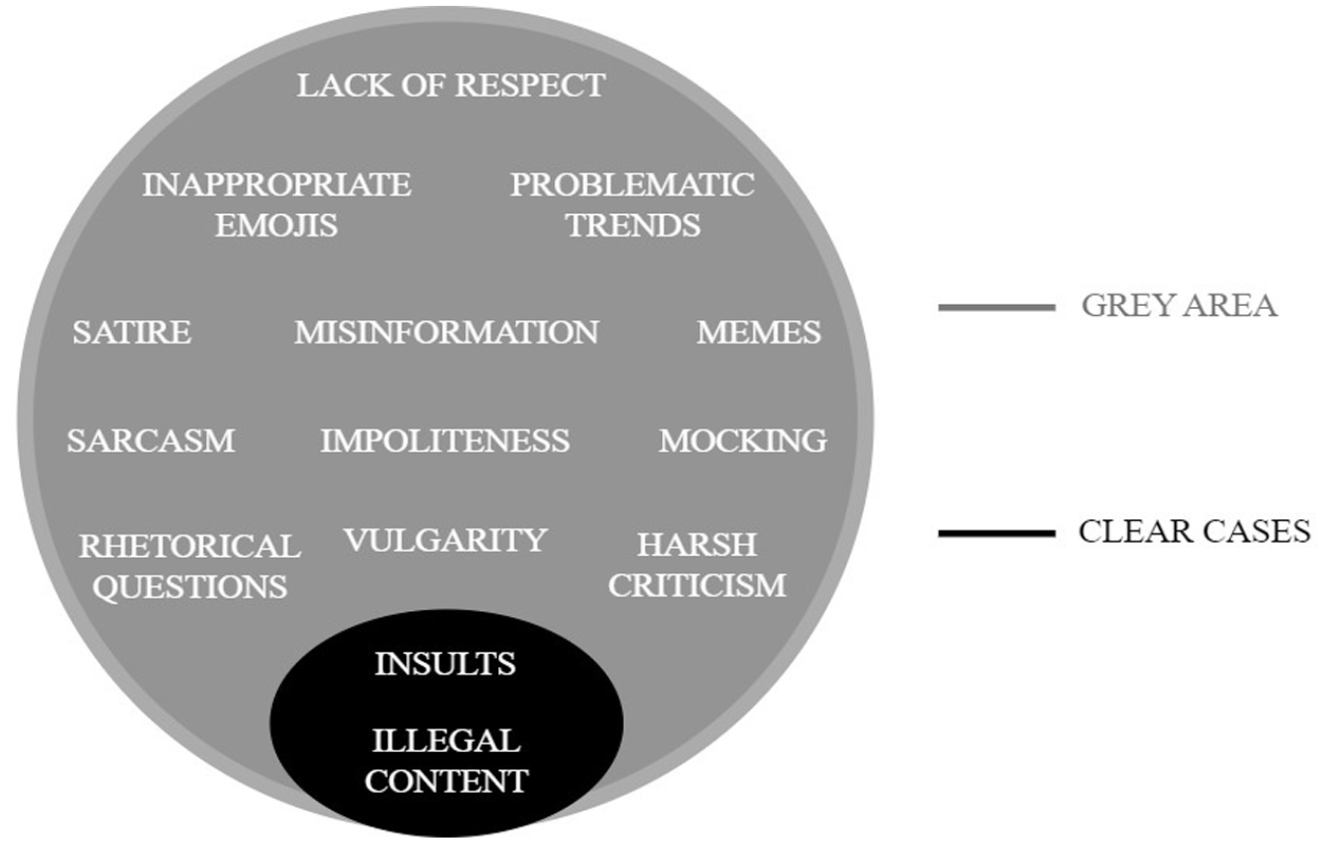

Addressing the first research question, the moderators’ perceptions of problematic online content that requires intervention can be roughly divided into two categories: clearly problematic comments covered by the community guidelines, and comments that are also problematic but cannot be clearly assigned to the standard cases mentioned in these guidelines. In the moderation process, moderators identify and regulate the clear cases before spending more time on comments that fall into a gray area. The interviewees described this dichotomy as follows.

Regarding clearly problematic comments, moderators stated they were increasingly confronted with insults against other users, journalists, editors, politicians, or minorities, as well as with unlawful comments (e.g. hate speech, incitements, and death threats), noting that they “have to delete them quickly and report to the police, which increases the work pressure enormously” (#6). These types of obviously harmful comments are usually covered by the organizations’ community guidelines, which serve as work guides for most of the interviewed moderators. However, the moderators emphasized that most problematic comments fall outside the above-mentioned concepts and stated that their community guidelines leave room for interpretation, which they described as a “gray area” (#3). Examples of gray area content are “satire, sarcasm, or memes with the intention to hurt people” (#2), “impolite remarks disguised as personal opinions” (#4), lack of respect (e.g. victim blaming), vulgarity, harsh criticism, rhetorical questions, inadequate reaction emojis, misinformation (including links to untrustworthy pages), (potentially) harmful trends among young users, and intentions to disrupt a discourse, for example, if “users from other political parties organize themselves to write under our postings” (#15).

In response to RQ1, which asked about the moderators’ perspective on actionable comments, our research revealed that professional moderators make a distinction between two categories of comments: clear and gray area comments (see Figure 1). Moderators have demonstrated a strong awareness and understanding of both community guidelines and national laws on hate speech and defamation, which typically cover clear comments. In contrast, gray area comments require moderators to rely on their intuition to decide whether a comment might disrupt an ongoing online discussion, considering various factors such as their employer’s goals and values, the target audience, and their own personal beliefs.

Moderators’ perceptions of actionable uncivil online comments.

How professional moderators respond to uncivil online comments (RQ2)

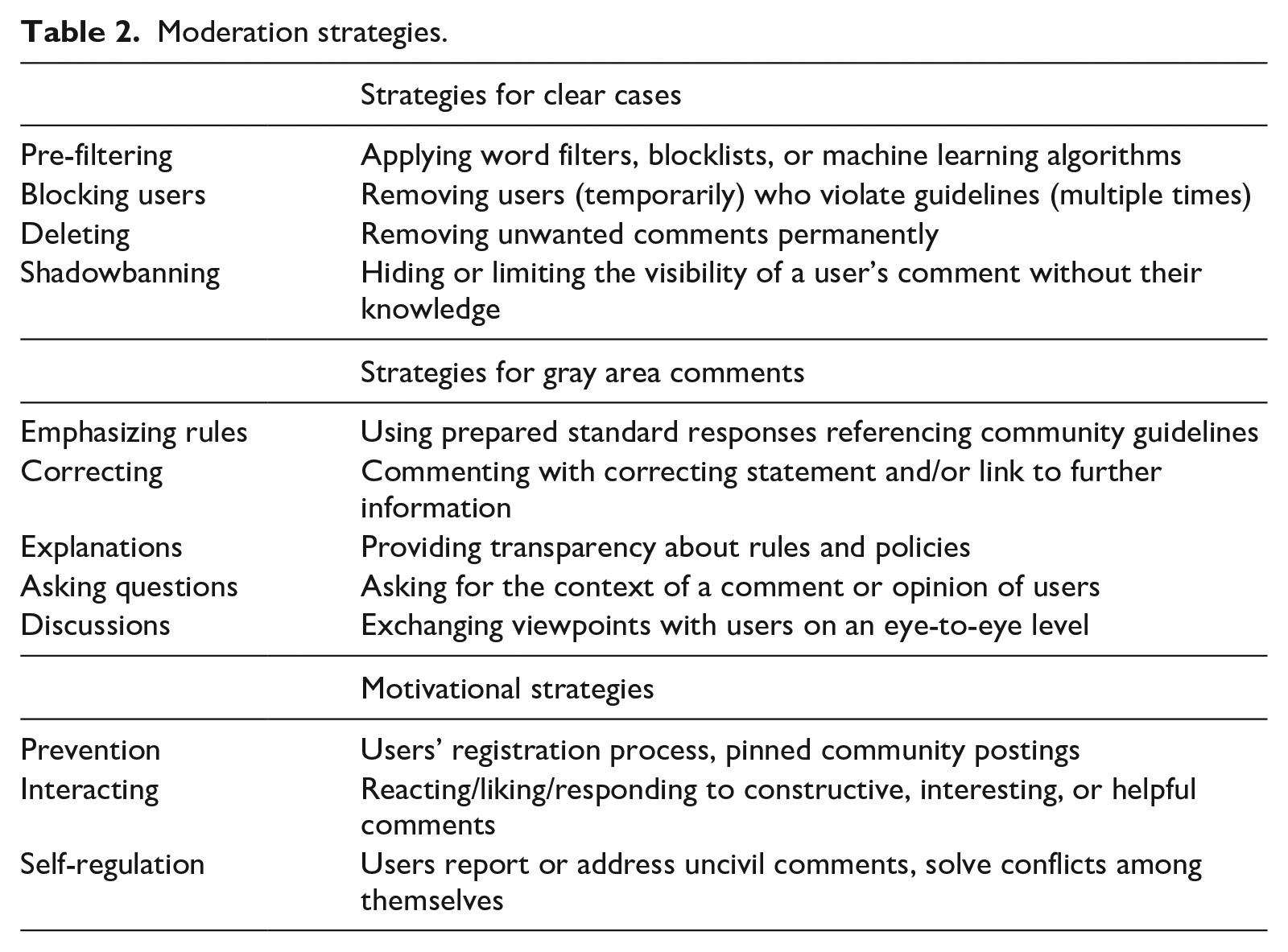

A distinction is made between clearly problematic and gray area comments when not only recognizing but also determining appropriate interventions. Correspondingly, moderation strategies range from automated pre-filtering to interactions with users, including discussions and explanations, or motivational strategies emphasizing constructive comments (see Table 2). Altogether, the following moderation strategies were identified from the moderators’ answers.

Moderation strategies.

Seven of the 18 professional moderators reported using word filters, blocklists, or AI to pre-filter hostile content. Respondents also mentioned the options of blocking hateful content, spam, and fake profiles. When asked which comments they typically delete or shadowban, many moderators explained that they “take those actions against comments that clearly violate our rules” (#15). Depending on the intentions, objectives, and resources of their outlet, some moderators said that “the main guideline is the applicable law” (#18), while others stated that they have “a lot of leeway to delete comments” (#4). One explained his decision-making as follows: “I ask myself: Is it perhaps a little offensive but provides a fresh perspective to the conversation, or is it just too much?” (#1)

The most reported strategy for addressing gray area comments was direct responses citing the community guidelines “to illustrate the limit of what is acceptable” (#2). In uncertain cases, moderators also tend to ask questions “to understand the context of a comment” (#14). For comments containing misinformation, opinions on adequate response strategies varied greatly. While some moderators mentioned that they “provide corrections to educate users” (#1), others reported that “the policy is to delete false statements completely to reduce their visibility” (#8). Interviewees also had differing views on the extent to which moderators should participate in a conversation; statements ranged from “we never discuss with users” (#15) to “it is important to enter into dialogue, even with troublesome users” (#4). However, they agreed that it is difficult to find the appropriate point to step in, as “the main goal is to add value to an ongoing discourse, not to disturb or even destroy it” (#14).

To prevent uncivil comments before they arise, users are required to agree to the community guidelines during the registration process. Moreover, when moderators anticipate heated debates due to a sensitive topic, they “craft a pinned community posting requesting users to maintain civility and respect diverse opinions” (#4). Interviewees further mentioned encouraging desirable comments by replying, liking, or reacting to them with emojis. Some moderators stated that their users “report comments” (#5), “address problematic users directly” (#3), or “solve conflicts among themselves” (#18); others explained that their community instead tends to be provocative, which “might be due to our target group, as our clientele is more right-wing” (#11).

Answering RQ2a, the moderation strategies considered appropriate by professional moderators differ for content clearly violating the platform’s rules and gray area content. While the former is mainly addressed by (technologically supported) dogmatic strategies, gray area comments are approached either authoritatively-interactively or discursively-interactively (Wintterlin et al., 2020), depending on the specific case. Although moderators did not always agree regarding the effectiveness of the various tactics, most interviewees emphasized that it is crucial to make their decision-making transparent to users by justifying the moderation approaches they apply, especially for gray area comments. Moderators also highlighted the growing significance of preventive and motivating strategies and expressed a need for more time and resources to interact, using encouraging remarks to support the development of a community that regulates itself. Overall, human moderators use AI only for simple and straightforward tasks, such as filtering and detecting uncivil spam, and make the final decisions on more complex content themselves.

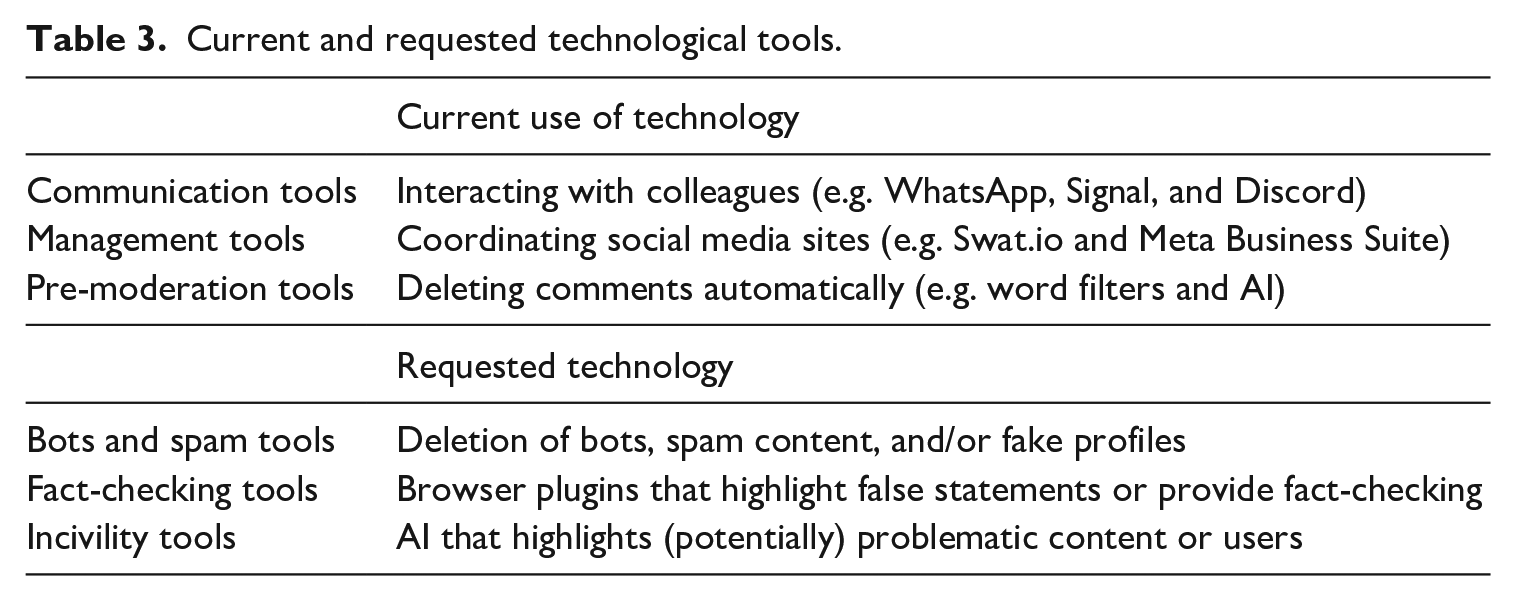

Concerning RQ2b, divergent viewpoints emerged in response to questions regarding feasible technological approaches against uncivil online comments. Table 3 provides an overview of the categories.

Current and requested technological tools.

Almost all moderators mentioned that they used communication tools, such as chat apps, to debate moderation decisions with their colleagues or management tools to manage large amounts of content on many platforms simultaneously (e.g. in the form of “tickets” assigned to different moderators). However, the technology used for the moderation process differed considerably between moderators. While some interviewees “disagree with the idea of automatically deleting or withholding postings” (#13), others stated, “without AI, we would be unable to function” (#11). Overall, the moderators reported that the level of automation ranged from “none” (#2) to “almost 80%” (#11). It is noteworthy that one interviewee’s media company had previously tried using artificial intelligence but had been dissatisfied because “it simply did not recognize many things, for example, irony” (#9). Accordingly, moderators revealed various difficulties with AI: “You can see a decrease in the quality of AI when our human collaborators disagree on certain issues” (#1), “AI has significant problems dealing with different regional dialects” (#15), and “AI is unable to understand the context of certain things, such as a cheerful emoji under a post about the murder of a Jewish boy” (#9). Correspondingly, most interviewees agreed that “AI should not make decisions in gray areas” (#18).

Moderators expressed the need for technological tools to keep up with the fast-changing online landscape, especially for clearly problematic content, as “it is exhausting to constantly remove spam, bots, or fake profiles that our word filters fail to identify” (#7). The increasing sophistication of hostile content has resulted in frequent requests for “tools that are capable of recognizing variations of banned words” (#15). Interviewees also desired tools that can flag potentially false statements or provide educational links to help with fact-checking, “which can be resource-intensive” (#1). The most frequently requested tool was one that could identify potentially problematic content or users but not automatically delete them. Moderators also suggested using sentiment analysis tools to alert them “to a high number of uncivil comments under a post” (#9) or “identify discussions that may be of interest or relevance” (#16). It is worth noting that although some of these tools may already exist, interviewees reported that they did not have access to them yet or that they were unsatisfied with the current solutions available.
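To make the request for “tools that are capable of recognizing variations of banned words” more concrete, the following is a minimal illustrative sketch, not a tool used or evaluated by the interviewees. It normalizes common obfuscations (accents, digit or symbol substitutions, inserted punctuation, stretched letters) and only flags matches for a human moderator instead of deleting anything. The blocklist, the substitution table, and the function names are hypothetical assumptions made for illustration.

```python
import re
import unicodedata

# Hypothetical blocklist; an organization would supply its own terms.
BLOCKLIST = {"idiot", "scum"}

# Illustrative (not exhaustive) table of substitutions used to evade word filters.
SUBSTITUTIONS = str.maketrans(
    {"0": "o", "1": "i", "3": "e", "4": "a", "5": "s", "7": "t", "@": "a", "$": "s"}
)


def normalize(token: str) -> str:
    """Reduce a token to a canonical form so that simple obfuscations collapse."""
    # Decompose accented characters and drop the combining marks.
    decomposed = unicodedata.normalize("NFKD", token.lower())
    no_accents = "".join(ch for ch in decomposed if not unicodedata.combining(ch))
    # Undo digit and symbol substitutions such as "1d10t".
    mapped = no_accents.translate(SUBSTITUTIONS)
    # Remove everything that is not a letter, e.g. the dots in "s.c.u.m".
    letters = re.sub(r"[^a-z]", "", mapped)
    # Collapse runs of the same letter, e.g. "idiooot" becomes "idiot".
    return re.sub(r"(.)\1+", r"\1", letters)


# Blocklist terms are normalized the same way so both sides are comparable.
NORMALIZED_BLOCKLIST = {normalize(term): term for term in BLOCKLIST}


def flag_comment(comment: str) -> list[str]:
    """Return blocklist terms the comment appears to contain; nothing is deleted."""
    hits = []
    for token in comment.split():
        term = NORMALIZED_BLOCKLIST.get(normalize(token))
        if term is not None and term not in hits:
            hits.append(term)
    return hits


if __name__ == "__main__":
    print(flag_comment("You are an 1d10t and s.c.u.m!"))
    print(flag_comment("Thanks for the thoughtful reply"))
```

A sketch like this addresses only surface-level variations of clear cases. It cannot judge irony, dialect, or context, which is why its output is a flag for review and not a moderation decision.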

In answer to RQ2b, the potential of (AI-based) technology for content moderation was evaluated as higher for comments categorizable as clear cases. Technological tools are currently hardly applied to gray area content, and moderators expressed doubts regarding their use for ambiguous comments. Despite these concerns, moderators expressed a desire for advanced tools that can identify and remove clearly hostile comments or flag potentially problematic content or users and notify moderators about them. However, they are hesitant to delegate the final decision-making authority for gray area comments to AI.
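The division of labor that moderators described (automation for clear cases, flagging for potentially problematic content, and human decisions for gray area comments) can be sketched as a simple routing rule. This is an illustration under stated assumptions, not a system reported by the interviewees: the classifier score, the thresholds, and all names below are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_REMOVE = "auto_remove"    # clear case handled by the tool
    HUMAN_REVIEW = "human_review"  # flagged; a moderator makes the final call
    PUBLISH = "publish"            # no indication of a problem


@dataclass
class Assessment:
    clear_violation_score: float  # hypothetical classifier output in [0, 1]
    user_reports: int             # number of user reports on the comment


def route(
    assessment: Assessment,
    remove_threshold: float = 0.95,  # assumed value, not taken from the study
    flag_threshold: float = 0.5,     # assumed value, not taken from the study
) -> Route:
    """Automate only very confident clear cases; send everything uncertain to a human."""
    if assessment.clear_violation_score >= remove_threshold:
        return Route.AUTO_REMOVE
    if assessment.clear_violation_score >= flag_threshold or assessment.user_reports > 0:
        return Route.HUMAN_REVIEW
    return Route.PUBLISH


if __name__ == "__main__":
    for example in (Assessment(0.99, 0), Assessment(0.6, 0), Assessment(0.1, 2), Assessment(0.1, 0)):
        print(example, route(example).value)
```

An organization that rejects automated deletion altogether, as some interviewees did, could set `remove_threshold` above 1.0 so that every flagged comment reaches a human moderator.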

Explaining differences in the implementation of (AI-based) technology (RQ3)

Regarding the (potential) use of AI, respondents referred explicitly and implicitly to various explanatory reasons at different levels of the hierarchy of influences model.

On the

On the

On the For colleagues who work very conscientiously, the process of building trust in AI has taken much longer, or is even still ongoing, as they hardly ever use it. (#11)

However, moderators focusing on “just getting rid of problematic stuff” (#12) are more open to using AI.

In summary, the findings for RQ3 revealed that differences have emerged in the potential application of AI in the content moderation process. Technological approaches differ due to different platforms’ rules and policies, design decisions, technical possibilities, and user demographics. Besides the characteristics of the business model and the platform’s generic rules and policies, the workload appears to be a major deciding factor at the organizational level, while on a personal level, professional self-perception and work ethics affect the willingness to implement and trust (AI-based) moderation tools.

Discussion

The impact of online comments has been central to the discussion regarding meaningful democratic discourse (Bormann, 2022; Jungherr et al., 2020). However, although the effects of incivility in user comments have been intensively studied (e.g. Chen, 2017; Kim and Kim, 2019), little is known about how the humans tasked with mitigating the damaging impact of uncivil debates—professional content moderators—view and react to uncivil online comments, how they implement technology in their daily moderation practice, and how differences in these perceptions and strategies can be explained (Paasch-Colberg and Strippel, 2021).

Our results suggest that, in their daily routine, moderators divide content requiring intervention into two groups: (1) clearly problematic comments violating the community guidelines and comprising aspects overlapping with the definition of hate speech, intolerance, and person-based incivility in the literature; (2) a larger group of gray area comments that are unwanted, violate established norms of communication (Muddiman, 2017; Rossini, 2020), but are not regulated by laws or guidelines. This implies that deciding to intervene in a gray area discussion is highly subjective and mainly based on how moderators judge a comment’s potential repercussions, its context, or the users’ intentions rather than the words or phrases it may contain. Hence, while moderators apply (AI-based) dogmatic methods for cases obviously violating laws or community guidelines, they must adjust their moderation strategies for gray area comments depending on their personal judgments of adequate interventions, mainly using interactive and motivational approaches.

Regarding technology implementation, content moderators in this study agreed that AI is not (yet) capable of addressing nuanced and complex gray area content. They emphasized that technological tools should not replace human moderators but support their decisions. However, the results also revealed differences in the use and evaluation of moderation strategies, the level of automation, and the desire to integrate AI into the moderation process. These differences could be attributed to explanatory factors at the organizational (e.g. workload and business model), platform (e.g. technical abilities and user demographics), and individual levels (e.g. professional self-perception and work ethics).

Our findings have important theoretical and practical implications for scholarship on online incivility, content moderation, and (semi-)automated detection models alike. First, the results indicate that professional moderators have clear ideas about actionable gray area comments that cannot always be translated into policies in the form of handbooks, community guidelines, or legal frameworks. At an international level, the Digital Services Act (“DSA”) of the European Union, coming into force this year, is the first major legislation establishing rules for detecting, flagging, and removing illegal content, while prioritizing vital principles such as transparency, user rights protection, and cooperation with authorities (Leerssen, 2023). It aims to harmonize different national laws in the European Union (EU) that have emerged to address illegal content. While the DSA was published after our initial data collection, the regulation is closely aligned with existing national laws and recognizes the diverse cultural backgrounds of individual member states by delegating the definition of problematic comments to them (Leerssen, 2023). As Germany’s NetzDG and Austria’s KoPl-G are stricter than the laws of most Western countries (Tworek, 2021), they may continue to serve as the main legal frameworks within Austrian and German moderators’ organizations, providing the basis for developing categories of clearly problematic comments that need to be deleted. However, neither legislation nor organizations offer specific definitions or strategies for addressing comments that fall outside of the clearly defined parameters. Accordingly, although research on incivility detection has made significant strides in analyzing content characteristics (e.g. Rieder and Skop, 2021), it often fails to consider the broader context of a discussion, the potential consequences of a comment, or the intentions of the users involved, thereby overlooking the subtleties of gray areas.
For example, while insults, mocking, and vulgarity are all considered uncivil (Muddiman, 2017), insults are typically intended to harm another person’s feelings or lower their self-esteem, which was considered clearly problematic by moderators. Yet, the acceptability of mocking (e.g. making fun of someone) and vulgarity (e.g. using swear words or explicit language) is highly dependent on context. This distinction between hateful or discriminating comments and uncivil comments that can either disrupt the quality of a discussion or generate interest and reveal new perspectives is in line with previous research (e.g. Rossini, 2020) and highlights the need to arrive at an operational definition for actionable comments, making moderation less arbitrary and providing transparency and justification for interventions. Therefore, policymakers on institutional or organizational levels should collaborate with both incivility scholars and moderators to provide more nuanced definitions of which types of gray area comments can be viewed as particularly problematic and therefore need specific regulations. Here, scholars can help organizations create best-case examples for learning purposes by assessing the impacts of different (automated) moderation approaches on discussion quality and user engagement tailored to the resources, goals, and work routines of organizations.

Second, the influence that moderators hold in shaping public opinion emphasizes the need for a thorough understanding of their role in online discourse and the decision-making process. By implementing the hierarchy of influences model as a comprehensive theoretical framework and examining a diverse sample of moderators across different organizations, we have gained valuable insights into the complex interplay of factors that impact their work. Our study demonstrated that organizational, platform, and individual factors not only shape moderators’ perceptions of actionable comments, as found by Paasch-Colberg and Strippel (2021), but also impact their decisions on content moderation, such as the use of specific approaches and technological tools. In their daily work routine, moderators must constantly weigh the potentially detrimental effects of uncivil comments (on their organization, other users, or even society) against restricting their users’ rights to freedom of expression and select the intervention they consider most appropriate according to their working conditions and role conceptions. For example, while moderators of public broadcasting news forums have been shown to be hesitant to delete legal comments or use automated moderation approaches due to the fear of being accused of censorship, moderators working for private TV social media accounts prioritized page views and follower counts over discussion quality and used tools like word filters very generously. Future research can make use of these findings to see moderation as a multifaceted, complex process influenced by a number of factors identified in the hierarchy of influences model. Further exploration, organization, and comparison of outcomes can lead to a more nuanced understanding of content moderators as “new moral gatekeepers” (Boberg et al., 2018) and their critical role in journalistic and political environments.

Third, while AI can provide several benefits in content moderation, it is important to acknowledge the associated challenges. Even though it is unlikely that AI-based systems will match the performance of human moderators in the near future (Jhaver et al., 2022), many moderators expressed interest in utilizing AI to remove clearly harmful content automatically and implement semi-automated solutions for content in gray areas that allow moderators to take corrective action. Furthermore, moderators see a lot of potential in motivational approaches, but lack of time prevents them from implementing them. This means that AI tools have the potential to effectively moderate negative comments while also highlighting beneficial ones, allowing moderators to identify areas where their input could further encourage productive discussions among users. However, while previous research has extensively studied users’ understanding and trust in algorithmic content moderation (e.g. Molina and Sundar, 2022), little is known about moderators’ perspectives on AI-based moderation tools. Their potential benefits may remain unrealized if moderators are hesitant to use them. Our findings indicate that this may be especially true for moderators who work very consciously or have had negative experiences with AI in the past. To enhance the application of AI-based content moderation, it is crucial to establish consistent feedback channels between professional moderators, users, and semi-automated detection models to improve and customize the models to meet their specific needs, ultimately fostering trust between all parties involved (Molina and Sundar, 2022). In addition, AI systems for content moderation must prioritize ethical considerations, including fairness and non-discrimination (Gerrard and Thornham, 2020). 
For this reason, interviewees with leading roles suggested employing a diverse team and providing in-depth training that increases their understanding of technology and facilitates critical reflection on their decision-making processes. Future research should seek to better understand moderators’ perspectives on AI-based content moderation tools and address their concerns, while also ensuring that ethical and practical considerations are taken into account.

Naturally, this study does not come without limitations. As the interviews were conducted during the COVID-19 pandemic, moderators were likely faced with increased levels of user interaction, potentially changing the prevalence and nature of problematic and harmful comments. Consequently, a replication of this study in non-pandemic times is required to learn about the robustness of the findings. Furthermore, the observed perceptions of uncivil user content and applied moderation strategies were identified based on the moderators’ self-reports, which may not accurately reflect their work routine. Thus, we do not know whether, for example, social desirability biased the moderators’ answers. This must be considered when interpreting our findings. Furthermore, our findings cannot be generalized to other countries, especially if they differ from Austria and Germany regarding journalistic self-perceptions or political and legal regulations for online discussions (Hanitzsch et al., 2010). Austria and Germany have more rigid laws on online comments compared to most Western democracies, which may lead content moderators in these countries to have a heightened sense of responsibility when making content moderation decisions (Tworek, 2021). The potential legal consequences of making incorrect moderation decisions may contribute to a more cautious approach by human moderators. This, in turn, could create skepticism toward new technologies in content moderation, as moderators may view AI as insufficiently equipped to make nuanced judgments that align with legal requirements. Cross-national examinations are required to comprehend influences on moderation practices in a broad context. Finally, this study only reflects the viewpoints of moderators. 
Therefore, future research should consider the perceptions of users, legal scholars, and machine learning experts to develop broadly accepted, technically feasible, and effectively functioning moderation strategies that benefit both society and democracy.

Supplemental Material

Supplemental material, sj-pdf-1-nms-10.1177_14614448231190901 for Navigating the gray areas of content moderation: Professional moderators’ perspectives on uncivil user comments and the role of (AI-based) technological tools by Andrea Stockinger, Svenja Schäfer and Sophie Lecheler in New Media & Society

Footnotes

Authors’ note

All authors have agreed to the submission, and the article is not currently being considered for publication by any other print or electronic journal.

Data availability statement

The data that support the findings of this study are available on request from the corresponding author, University of Vienna. The data are not publicly available due to containing information that could compromise the privacy of research participants.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Supplemental material

Supplemental material for this article is available online.
