Abstract

This article focuses on the critical limitations in current undergraduate major evaluation systems within higher education, notably the scarcity of systematic evaluation at the major level and the inadequacy of singular methodologies in comprehensively reflecting complex quality dimensions. To overcome these challenges, the study constructs a multi-dimensional evaluation framework encompassing teaching resources, faculty caliber, student development, and societal impact, organized hierarchically across four first-level, nine second-level, and forty third-level indicators. The core methodological innovation lies in a combined weighting approach that integrates the Analytic Hierarchy Process (AHP) for subjective expert judgment, the Entropy Weight Method (EWM) for objective data-driven variability analysis, and the Mean-Variance Method (MVM) for statistical dispersion evaluation. This approach optimally synthesizes the strengths of these individual methods, effectively mitigating subjective bias, data noise sensitivity, and discrete deviation inherent in single-method evaluations. Empirical validation using data from a science- and technology-focused university demonstrates that the combined weighting results exhibit superior consistency with external authoritative accreditations (National First-Class Undergraduate Majors and Engineering Education Accreditation), as rigorously confirmed by significant Spearman's rank correlation analysis. The research provides a scientifically rigorous and practically viable solution for higher education institutions seeking to establish robust, multi-dimensional evaluation systems, optimize major structures, and enhance the quality and effectiveness of undergraduate talent cultivation within the context of national higher education reform initiatives.

Plain Language Summary

Purpose: This study developed a more balanced approach to evaluating undergraduate majors by combining subjective expert judgment with objective data analysis, addressing the limitations of single-method evaluations.

Methods: We constructed an evaluation indicator system covering four first-level indices (Faculty Team Indicators, Student-Related Indicators, Major Construction Indicators, and Teaching Quality and Curriculum Indicators), which were further divided into 40 third-level indicators. Indicator weights were determined by integrating expert scoring, data variability analysis, and statistical dispersion assessment. This combined weighting method was validated using data from 44 majors at a science- and technology-focused university in China.

Conclusions: Majors receiving high comprehensive scores consistently aligned with those recognized as National First-Class Undergraduate Majors or accredited under Engineering Education Accreditation. This strong concordance with external authoritative accreditations confirms the method's reliability in identifying high-quality majors and diagnosing areas for improvement.

Implications: This research provides universities with a practical tool to enhance major quality through evidence-based resource allocation, major structure optimization, and support for national higher education reform initiatives.

Keywords

Introduction

The quality of higher education (HE) and the level of major development constitute core determinants influencing the efficacy of national innovation systems and international competitiveness. Within the policy context of the ongoing “Double First-Class initiative” and the comprehensive implementation of the “Double Ten-Thousand Plan,” China's higher education evaluation system is undergoing a paradigm shift from scale-driven expansion to quality-driven enhancement. Consequently, establishing a scientific, objective, and comprehensive evaluation framework for higher education is of paramount importance (Mok & Marginson, 2021; Xiong et al., 2022). However, traditional singular evaluation models often fall short of addressing contemporary governance demands. Critical research by Ajjawi et al. (2024) on “authentic assessment” theory highlights a widespread lack of innovation in current higher education evaluation methodologies and underscores the urgent need to establish a multidimensional, quantitative evaluation system—particularly at the major level (Ajjawi et al., 2024). As the fundamental units of talent cultivation in higher education, majors require quality evaluations that integrate multidimensional data spanning teaching resources, faculty competence, student development, and societal impact. Yet, existing research predominantly focuses on the institutional or individual teacher levels (Bergsmann et al., 2015; X. Yang et al., 2022), creating a significant gap in systematic major-level evaluation. This gap is particularly critical to address, as major-level evaluation introduces distinct managerial challenges, such as guiding intra-institutional resource allocation, managing competition among majors, and balancing standardization with disciplinary uniqueness. The complexity of evaluating major development levels is manifested in three aspects. 
First, evaluations must encompass diverse elements like student development, societal demands, and major distinctiveness. Second, a multidimensional framework is needed, concurrently considering inputs, processes, outputs, and impact. Furthermore, research shows systematic variations in educational attributes across majors, making uniform criteria problematic (Thanassoulis et al., 2017). This necessitates hierarchical indicator structures and flexible weighting mechanisms. Finally, data heterogeneity requires integrating subjective qualitative evaluations and objective quantitative metrics. This inherent complexity renders any singular evaluation methodology inadequate for comprehensively and accurately reflecting a program’s overall standing. More critically, it challenges prevailing mainstream evaluation paradigms on a global scale. For instance, international large-scale assessments (e.g., PISA, TIMSS), while providing comparable data, often reduce multidimensional educational quality to a single dimension through their ranking logic, attracting widespread critique of “assessment reductionism” and “data fetishism” (Addey et al., 2017; Appels et al., 2024). At the national level, two examples reveal a profound disconnection between rigid evaluation frameworks and diverse local practices: the selective compliance observed in Portugal when aligning with the European Standards and Guidelines (ESG) (Cardoso et al., 2015), and the strategic responses adopted by disadvantaged community schools in Chile to ratings from the national educational quality assessment system (SIMCE) that were applied unfairly because of contextual disparities (Contreras et al., 2024). This disconnection leads to evaluations that are either superficial or produce perverse incentives.
Therefore, developing a new evaluation framework requires a core mission beyond methodological integration: to construct an evaluation mechanism capable of accommodating pluralistic values, adapting to different institutional contexts, and achieving a dynamic balance between standardization and contextualization.

Literature Review

Through systematic retrieval of the Web of Science and CNKI core journals using keywords such as “higher education quality” and “undergraduate major evaluation,” seminal works were identified to synthesize relevant research findings concerning the construction of evaluation indicator systems and the application of evaluation methodologies. A comprehensive summary of the 29 studies included in this literature review is presented in Table 1.

Table 1. Summary of Included Reviews.

Research on Evaluation Indicator Systems

As synthesized in Table 1, research on evaluation indicator systems has evolved from single-dimensional to multi-dimensional approaches. Early studies primarily focused on singular dimensions, such as teaching effectiveness or student satisfaction (Carle, 2009; Milsom & Coughlin, 2015). In contrast, recent research tends to construct comprehensive indicator systems encompassing inputs, processes, and outputs (Jiang & Cao, 2021; Wang, 2022; Zhang et al., 2023). Meta-analytic research by Harrison et al. (2022) indicates that high-quality educational evaluation should encompass multiple facets, including teaching practices, learning outcomes, resource conditions, and societal impact. This perspective resonates with the “authentic assessment” framework proposed by Ajjawi et al. (2024).

Regarding the structure of indicator hierarchies, researchers commonly adopt a hierarchical design approach. Do et al., in their research on performance evaluation of university faculty in Vietnam, established a multi-layered evaluation framework encompassing main criteria and sub-criteria (Do et al., 2024). This hierarchical methodology has also been applied by Wang and Dai et al. in the evaluation of smart learning environments and higher education systems (Dai et al., 2021; Ding et al., 2023). Furthermore, the teacher professional ethics performance evaluation indicator system constructed by Xia and the professional competence training evaluation system for police colleges proposed by Wang et al. both employed a three-level indicator structure (Wang, 2022; Xia, 2021), demonstrating the effectiveness of multi-level indicator systems in addressing complex evaluation problems. Nevertheless, existing indicator systems still exhibit three shortcomings in professional evaluation applications: (1) balancing comprehensiveness and practicality in the number of indicators remains difficult; (2) the integration of qualitative and quantitative indicators is often inadequate; and (3) correlations between indicators receive insufficient consideration.

Research on the Application of Evaluation Methods

As summarized in Table 1, evaluation methodologies have progressed from singular approaches to integrated frameworks. Numerous researchers have explored the applicability of single methodologies in evaluating higher education. The Analytic Hierarchy Process (AHP) method has gained widespread adoption due to its explicit hierarchical structure. For instance, Deng et al. utilized AHP to determine the weights for dimensions encompassing Moral, Intellectual, Physical, Aesthetic, and Labor Education (“Five Educations in Parallel”) within their constructed postgraduate evaluation system (Deng et al., 2024). However, Melón et al. (2008) found that the pure AHP approach, when applied in group decision-making contexts, can be susceptible to instability stemming from divergences in expert opinions (Melón et al., 2008). Additionally, the Entropy Weight Method (EWM) has been introduced into higher education major evaluation for its characteristic objectivity (Zhang, 2021). However, Bai and Wan (2025) observed that this method, when applied to innovation and entrepreneurship education evaluation, exhibits an excessive reliance on the degree of data dispersion (Bai & Wan, 2025). While each singular method offers distinct advantages, their significant limitations have motivated a shift toward integrated methodological approaches.

To overcome the limitations of single-method models, researchers are increasingly developing integrated evaluation frameworks that combine multiple technical approaches. For example, Cao et al. combined Principal Component Analysis (PCA) with the EWM to construct a quantitative model assessing higher education quality and sustainability (Cao et al., 2023). This approach reduced the dimensionality of the indicators while preserving their core informational content. Similarly, Qi et al. employed a hybrid of the AHP and the Technique for Order of Preference by Similarity to Ideal Solution (TOPSIS) to conduct stratified evaluations of higher education institutions within the Yangtze River Delta region (Qi et al., 2022). Indicator weights were first determined using the AHP, followed by multi-dimensional comparative ranking via TOPSIS. Other studies in this line of research integrate machine learning and big data techniques. For instance, Gu fused Backpropagation (BP) Neural Networks with a stochastic matrix algorithm to develop a dynamic evaluation model for teaching quality (Gu, 2022). The model’s reported prediction accuracy of 94.9% supported the value of the hybrid algorithmic approach. Furthermore, Xin et al. (2022) established a three-part, closed-loop big data evaluation system covering teaching evaluation, learning evaluation, and effectiveness evaluation. Through multi-source data fusion, this system addressed the data silos inherent in traditional singular evaluation methods.

Recent research advances demonstrate that integrated subjective-objective weighting methods exhibit significant advantages in multi-faceted evaluation scenarios. Within the engineering and technical domain, Y. Yang et al. (2024) developed an integrated AHP-TOPSIS-RSR model for evaluating distributed photovoltaic grid integration (Y. Yang et al., 2024). This approach incorporated subjective weighting to reflect expert judgments regarding grid stability, while objective weighting captured the inherent characteristics of photovoltaic output data. The research empirically validated that this methodology effectively handles multi-dimensional indicators within complex systems. Analogously, Chen et al. integrated PCA and the AHP for power distribution equipment evaluation (Chen et al., 2019). By optimizing weight assignment using the relative entropy principle, they successfully resolved the typical issues of “excessive subjective bias” or “loss of data information” prevalent in traditional evaluations. These cross-disciplinary cases illustrate that the combination weighting method can systematically integrate domain-specific expertise with data-driven features.

Within the domain of higher education evaluation, this innovative model demonstrates unique value. Wu et al., addressing the inherent ambiguity in learning evaluation, employed an AHP-Fuzzy Comprehensive Evaluation (AHP-FCE) method to construct a multi-level indicator system (Wu et al., 2022). Their hierarchical weighting mechanism significantly enhanced the precision of undergraduate student learning outcome evaluation. Similarly, Xiao et al. (2024) integrated the Plan-Do-Check-Act (PDCA) cycle framework with combined subjective-objective weighting for teaching quality evaluation in vocational colleges. Their model incorporated a dynamic calibration mechanism, offering a novel solution for handling time-sensitive indicators in educational evaluation. Together, these applications demonstrate the promise of integrated weighting methods in higher education. Such approaches help overcome the limitations of traditional models, which often rely on fragmented indicators and uniform standards. Importantly, they preserve expert knowledge from subjective evaluation while leveraging the objectivity of data-driven algorithms, effectively addressing the complexity of multi-dimensional indicators. This dual capability provides robust methodological support for building multi-level major evaluation systems in higher education. It is especially suitable for integrating and analyzing the diverse data—such as teaching inputs, outputs, and efficacy outcomes—that are central to undergraduate major evaluation.

Research Content

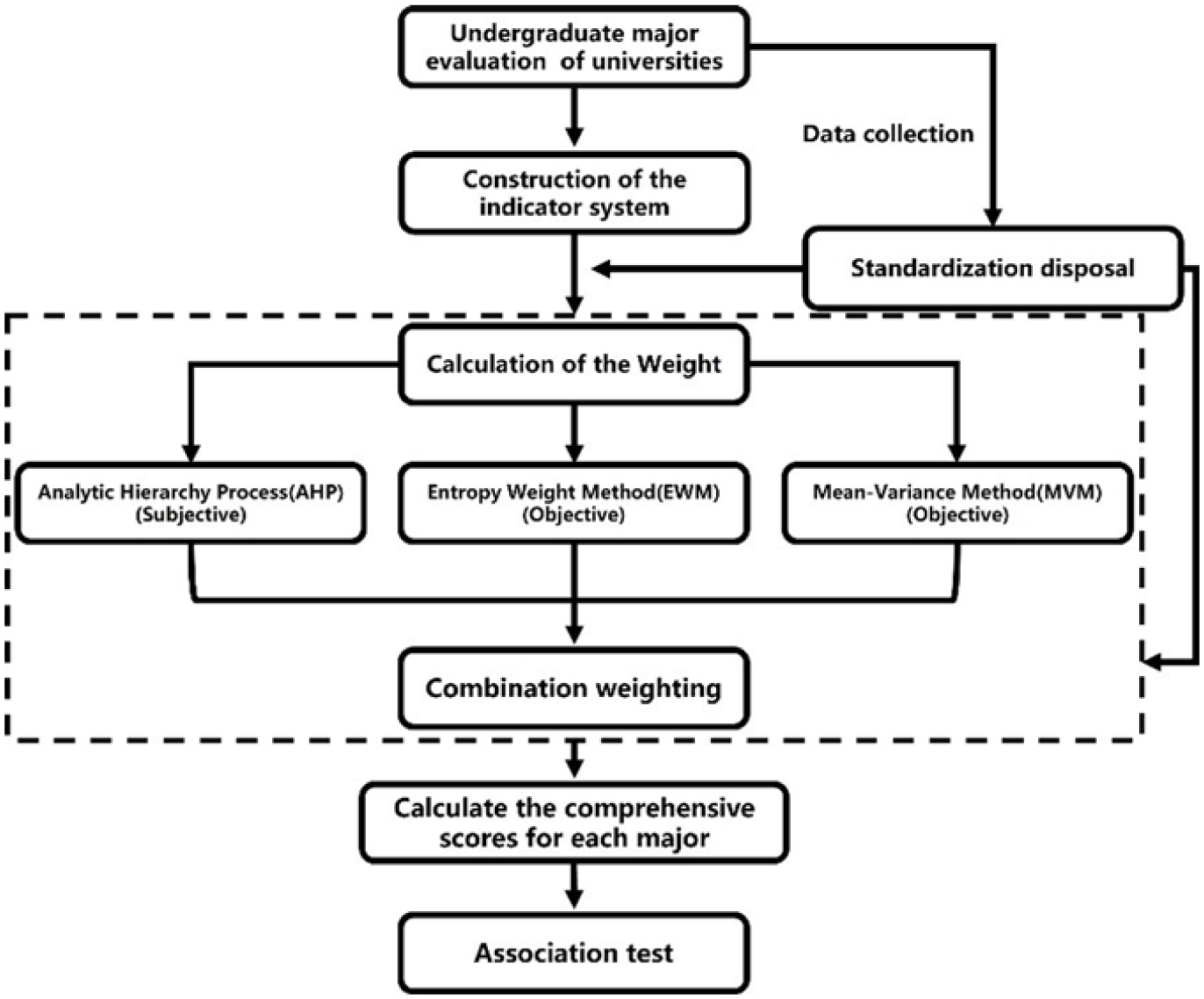

As shown in Figure 1, the technical roadmap of this research centers on the evaluation of undergraduate majors within the broader framework of higher education evaluation. To address two limitations identified in existing research, namely the scarcity of systematic evaluation at the undergraduate major level and the inadequacy of singular evaluation methods in comprehensively and accurately reflecting the overall quality of undergraduate majors, we construct a multi-level evaluation system comprising 4 first-level indices, 9 second-level indices, and 40 third-level indices. These indicators span teaching resources, faculty quality, student development, and societal impact, and are designed to handle the inherent complexity of multifaceted evaluation elements, diverse dimensions, and heterogeneous data types. Weights for the indicators at each level are calculated separately using the AHP, the EWM, and the Mean-Variance Method (MVM). This three-method fusion is proposed to overcome observed limitations of existing hybrid models. For instance, while the AHP-EWM combination integrates subjectivity and objectivity, its EWM component remains sensitive to data noise; meanwhile, the prevalent AHP-TOPSIS model relies heavily on the initial AHP weights, potentially amplifying subjective bias. Our AHP-EWM-MVM model adds MVM’s statistical dispersion perspective to establish a more robust triangular validation mechanism. A combination weighting method then synthesizes the three sets of weights into an integrated weight for each indicator. Using empirical data, comprehensive scores for individual majors are computed, which allows a systematic comparison of the weight distributions derived from the different weighting methods and of the resulting variations in the majors’ composite scores.
Simultaneously, we verify whether majors with higher composite scores have obtained the status of national first-class undergraduate majors and passed the engineering education accreditation. This serves to examine the correlation between evaluation outcomes and the effectiveness of major development. This research proposes determining indicator weights for higher education major evaluation through a combination weighting method, integrating domain expertise with data-driven features. The core proposition is formalized as the following testable hypothesis: H1: The evaluation system constructed based on the combined weighting method demonstrates high concordance with external authoritative accreditations of undergraduate major quality. This approach mitigates subjective bias in traditional single-method evaluations and addresses data information loss, thereby providing methodological references and practical pathways for optimizing undergraduate major structures and establishing a scientific, comprehensive evaluation system.
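One way to operationalize the concordance check behind H1 is a Spearman rank correlation between composite scores and accreditation status. The sketch below is only an illustration under stated assumptions: accreditation is coded as 1 (accredited) or 0 (not accredited), ties receive average ranks, and the function names and sample values are hypothetical rather than taken from the study.

```python
import math
from typing import List, Sequence


def average_ranks(values: Sequence[float]) -> List[float]:
    """Return 1-based ranks in ascending order; tied values share their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j to the end of the current run of tied values.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared_rank = (i + j) / 2.0 + 1.0
        for k in range(i, j + 1):
            ranks[order[k]] = shared_rank
        i = j + 1
    return ranks


def spearman_rho(x: Sequence[float], y: Sequence[float]) -> float:
    """Spearman's rho computed as the Pearson correlation of the rank vectors."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must have the same length (at least 2)")
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(rx)
    mean_x, mean_y = sum(rx) / n, sum(ry) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(rx, ry))
    var_x = sum((a - mean_x) ** 2 for a in rx)
    var_y = sum((b - mean_y) ** 2 for b in ry)
    if var_x == 0 or var_y == 0:
        raise ValueError("rho is undefined when one variable is constant")
    return cov / math.sqrt(var_x * var_y)


# Hypothetical composite scores for five majors and their accreditation status.
scores = [0.82, 0.75, 0.60, 0.41, 0.35]
accredited = [1, 1, 0, 1, 0]
rho = spearman_rho(scores, accredited)
```

A positive `rho` indicates that higher-scoring majors tend to be the accredited ones. A significance test would still be needed before drawing conclusions from a real sample.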

Figure 1. Technical roadmap of this research.

Research Framework and Methodology

Construction of the Evaluation Indicator System for Undergraduate Majors

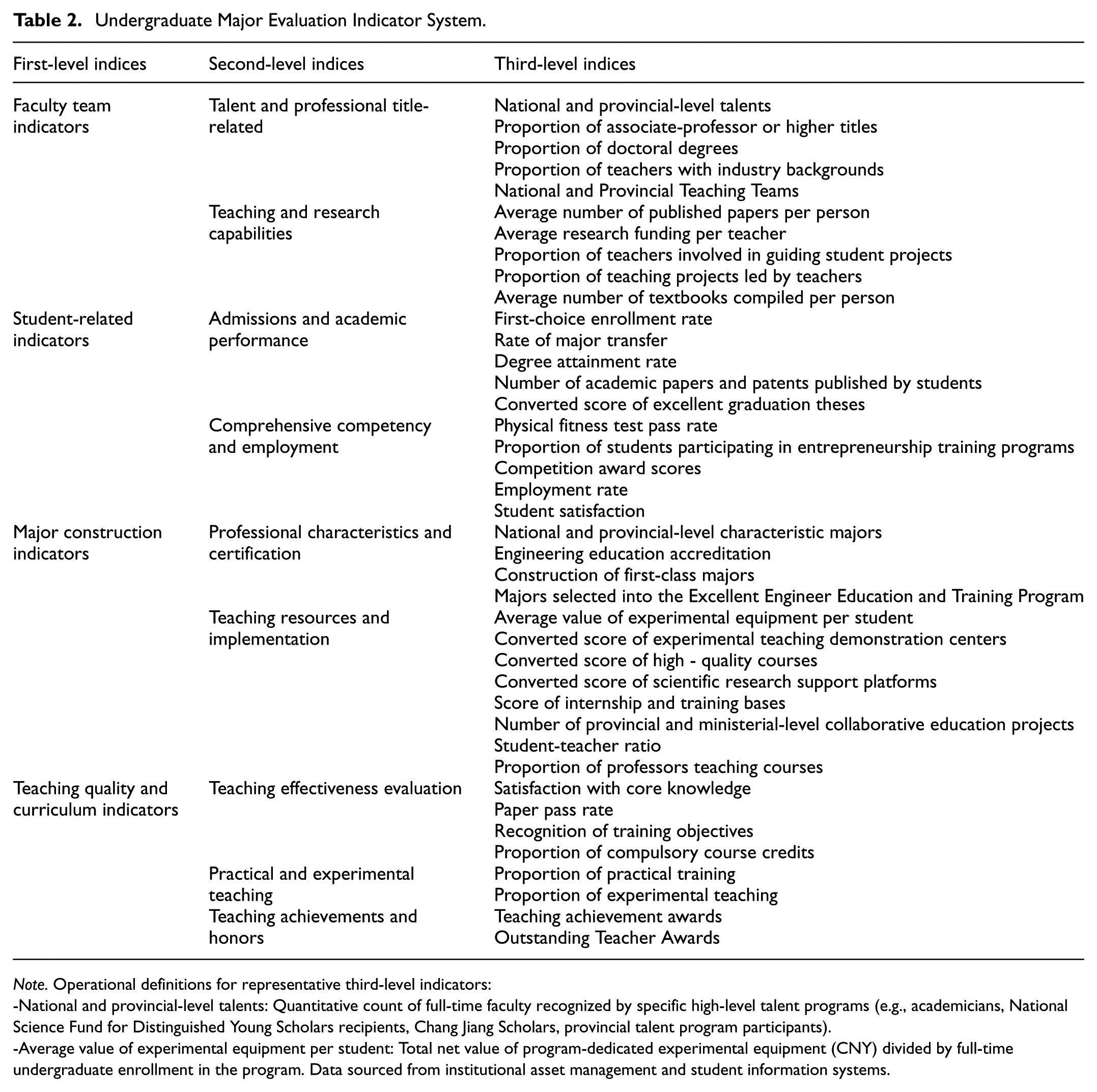

This research integrates relevant educational theories and practical requirements to construct a specialized evaluation indicator system for undergraduate majors. The overall objective is the evaluation of university undergraduate majors. The criteria level comprises four primary indicators: Faculty Team Indicators, Student-Related Indicators, Major Construction Indicators, and Teaching Quality and Curriculum Indicators. These primary indicators are further subdivided into nine secondary indicators. For instance, Faculty Team Indicators include “Talent and Professional Title-related” and “Teaching and Research Capabilities”, while Student-Related Indicators include “Admissions and Academic Performance” and “Comprehensive Competency and Employment.” Each secondary indicator is then divided into specific tertiary indicators, totaling 40 measurable elements. This number was determined through systematic literature analysis, expert consultation, and feasibility evaluation to balance comprehensiveness with practical data accessibility and operational cost. Examples include “National and Provincial-level Talents” and “Proportion of associate-professor or higher titles” under “Talent and Professional Title-related,” as detailed in Table 2. Data sources encompass the university's teaching management systems, professional development reports, student performance databases, and relevant educational statistics, ensuring the comprehensiveness, accuracy, and reliability of the data.

Table 2. Undergraduate Major Evaluation Indicator System.

Note. Operational definitions for representative third-level indicators:

- National and provincial-level talents: Quantitative count of full-time faculty recognized by specific high-level talent programs (e.g., academicians, National Science Fund for Distinguished Young Scholars recipients, Chang Jiang Scholars, provincial talent program participants).

- Average value of experimental equipment per student: Total net value of program-dedicated experimental equipment (CNY) divided by full-time undergraduate enrollment in the program. Data sourced from institutional asset management and student information systems.

Dataset and Evaluation Methodology

This research selected 44 majors from a science- and technology-focused university in China as the research subjects, establishing a comprehensive evaluation indicator system and conducting a systematic major evaluation. The data for this research were sourced from the university’s “2019 to 2022 University Undergraduate Teaching Quality Report” and its “Undergraduate Major Evaluation Center Database.” Subsequently, the aforementioned data were collated and standardized using the following formula:

Following the determination of indicator weights, the composite score
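As an illustration of the standardization and scoring steps in this subsection, the sketch below assumes min-max scaling to [0, 1] and a composite score defined as the weighted sum of standardized indicator values. Both are common choices in this kind of evaluation, but they are assumptions here. The function names, the benefit/cost flag, and the sample data are hypothetical.

```python
from typing import List


def min_max_standardize(column: List[float], benefit: bool = True) -> List[float]:
    """Scale one indicator column to [0, 1].

    benefit=True  -> larger raw values are better (assumed default).
    benefit=False -> smaller raw values are better, so the scale is flipped.
    """
    lo, hi = min(column), max(column)
    if hi == lo:
        # A constant indicator cannot distinguish majors; map it to zeros.
        return [0.0 for _ in column]
    if benefit:
        return [(x - lo) / (hi - lo) for x in column]
    return [(hi - x) / (hi - lo) for x in column]


def composite_scores(matrix: List[List[float]], weights: List[float]) -> List[float]:
    """Weighted sum of standardized indicator values for each major (one row per major)."""
    return [sum(w * x for w, x in zip(weights, row)) for row in matrix]


# Hypothetical raw data: 3 majors (rows) x 2 indicators (columns).
raw = [[10.0, 0.30], [20.0, 0.10], [30.0, 0.20]]
columns = [list(col) for col in zip(*raw)]
standardized_columns = [
    min_max_standardize(columns[0], benefit=True),
    min_max_standardize(columns[1], benefit=False),  # assumed cost-type indicator
]
standardized = [list(row) for row in zip(*standardized_columns)]
scores = composite_scores(standardized, weights=[0.6, 0.4])
```

In practice the weights passed to `composite_scores` would be the combined indicator weights, and every indicator would need an explicit decision on whether it is benefit-type or cost-type.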

Determination of Subjective Indicator Weights Based on AHP

Principle of the Analytic Hierarchy Process

The Analytic Hierarchy Process (AHP), proposed by the American operational researcher T. L. Saaty, determines the subjective weights of indicators by decomposing a complex problem into multiple hierarchical levels, such as the objective level, criteria level, and indicator level. It involves constructing judgment matrices, calculating the relative importance weights of elements at each level, and conducting a consistency check to ensure the rationality of the weights. The formula for the judgment matrix consistency check is as follows:

Where

Table 3. The Values of IR.

Construction of Judgment Matrices

This research constructed a total of 14 judgment matrices: one for the four subsystems (first-level indices), four for the second-level indices beneath each of the four subsystems, and nine for the third-level indicators beneath each of the nine second-level indices (1 + 4 + 9 = 14). The expert panel was selected based on three criteria: (1) over ten years of experience in higher education evaluation, (2) familiarity with the characteristics of science and engineering majors, and (3) substantial experience in major development and management. For the indicators at each hierarchical level, these experts were invited to evaluate the relative importance of the indicator elements in pairs using Saaty’s 1 to 9 scale, thereby constructing the judgment matrices. Taking the four subsystems (first-level indices) as an example, experts compared and scored the relative importance of the Faculty Team Indicators, Student-Related Indicators, Major Construction Indicators, and Teaching Quality and Curriculum Indicators. This process yielded a comprehensive judgment matrix for these first-level indices.

Consistency Test

Consistency tests were performed on each judgment matrix using the consistency test formula to ensure that the matrices met the required consistency standards. The computational results demonstrate that the

Determination of Subjective Weights

Following the aforementioned procedures, the subjective weights for the criteria level and the various indicators within the undergraduate major evaluation system were determined.
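The AHP steps above (judgment matrix, weight vector, consistency check) can be sketched as follows. This is a minimal illustration, not the computation used in the study. It assumes weights are taken from the principal eigenvector (approximated by power iteration) and uses the conventional consistency index CI = (λ_max − n)/(n − 1) and consistency ratio CR = CI/RI, with Saaty's commonly cited random index values and the usual acceptance threshold CR < 0.1. The function name and the sample judgment matrix are hypothetical.

```python
from typing import List, Tuple

# Commonly cited random index values (Saaty) for matrix orders 1 to 9.
RANDOM_INDEX = {1: 0.0, 2: 0.0, 3: 0.58, 4: 0.90, 5: 1.12,
                6: 1.24, 7: 1.32, 8: 1.41, 9: 1.45}


def ahp_weights(matrix: List[List[float]],
                iterations: int = 100) -> Tuple[List[float], float, float]:
    """Return (weights, lambda_max, consistency_ratio) for a pairwise judgment matrix."""
    n = len(matrix)
    if n not in RANDOM_INDEX or any(len(row) != n for row in matrix):
        raise ValueError("expected a square matrix of order 1 to 9")
    weights = [1.0 / n] * n
    for _ in range(iterations):
        # Power iteration: multiply by the matrix, then renormalize to sum to 1.
        product = [sum(matrix[i][j] * weights[j] for j in range(n)) for i in range(n)]
        total = sum(product)
        weights = [value / total for value in product]
    # Estimate the largest eigenvalue as the mean of (A w)_i / w_i.
    product = [sum(matrix[i][j] * weights[j] for j in range(n)) for i in range(n)]
    lambda_max = sum(product[i] / weights[i] for i in range(n)) / n
    consistency_index = (lambda_max - n) / (n - 1) if n > 1 else 0.0
    random_index = RANDOM_INDEX[n]
    consistency_ratio = consistency_index / random_index if random_index > 0 else 0.0
    return weights, lambda_max, consistency_ratio


# Hypothetical judgment matrix for four first-level indices (Saaty 1 to 9 scale).
first_level_matrix = [
    [1.0, 1.0, 0.5, 2.0],
    [1.0, 1.0, 1.0, 2.0],
    [2.0, 1.0, 1.0, 2.0],
    [0.5, 0.5, 0.5, 1.0],
]
weights, lambda_max, cr = ahp_weights(first_level_matrix)
is_consistent = cr < 0.1  # conventional acceptance threshold
```

When several experts score the same comparison, their judgments have to be aggregated into a single matrix before this step. The aggregation rule is a separate design choice that this sketch does not cover.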

Determination of Objective Indicator Weights Based on the Entropy Weight Method

Principle of the Entropy Weight Method

The Entropy Weight Method is grounded in information entropy theory, which measures the degree of disorder or uncertainty in a system. In the context of undergraduate major evaluation, an indicator with high variability across majors possesses strong discriminatory power, corresponding to lower information entropy and a higher assigned weight. Conversely, an indicator with low variability carries less useful information, resulting in higher entropy and a lower weight.

Calculation Procedure

1) Data Normalization

Owing to the differing dimensions and scales among the various evaluation indicators, data normalization is essential to ensure comparability. We apply the following normalization formula:

where

2) Calculate the Weight of Each Indicator

After standardization, calculate the weight

3) Calculate the Entropy Value of Each Indicator

According to information entropy theory, information entropy measures the degree of disorder or uncertainty in information. In multi-indicator evaluation, the information entropy

4) Calculate the Divergence Coefficient of Each Indicator

To more intuitively reflect the relative importance of each indicator, the divergence coefficient

5) Calculate the Weight of Each Indicator

Based on the divergence coefficient, calculate the weight
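The five steps above can be sketched in code using the textbook form of the Entropy Weight Method. The sketch assumes the input matrix has already been normalized to non-negative values in step 1, uses the constant 1/ln(m) for m majors so that entropy lies in [0, 1], and treats 0·ln(0) as 0. The function name and the sample data are hypothetical.

```python
import math
from typing import List


def entropy_weights(matrix: List[List[float]]) -> List[float]:
    """Entropy weights for a normalized, non-negative matrix (rows: majors, columns: indicators)."""
    m, n = len(matrix), len(matrix[0])
    if m < 2:
        raise ValueError("at least two majors are required")
    scale = 1.0 / math.log(m)
    divergences = []
    for j in range(n):
        column = [row[j] for row in matrix]
        total = sum(column)
        if total == 0:
            # An all-zero indicator carries no information: maximum entropy.
            entropy = 1.0
        else:
            # Step 2: share of each major in the indicator total.
            shares = [x / total for x in column]
            # Step 3: information entropy, skipping zero shares (0 * ln 0 := 0).
            entropy = -scale * sum(p * math.log(p) for p in shares if p > 0)
        # Step 4: divergence coefficient.
        divergences.append(1.0 - entropy)
    total_divergence = sum(divergences)
    if total_divergence <= 0:
        # No indicator discriminates between majors; fall back to equal weights.
        return [1.0 / n] * n
    # Step 5: normalize divergence coefficients into weights.
    return [d / total_divergence for d in divergences]


# Hypothetical normalized data: the first indicator is identical across majors,
# the second varies, so the second should receive essentially all the weight.
normalized = [[0.5, 0.0], [0.5, 0.5], [0.5, 1.0]]
ewm_weights = entropy_weights(normalized)
```

The example also shows the sensitivity discussed in the literature review: an indicator's weight depends only on how unevenly its values are spread, regardless of its substantive importance.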

Determination of Objective Indicator Weights Based on the Mean-Variance Method

Principle of the Mean-Variance Method

The fundamental concept of the Mean-Variance Method (MVM) stems from statistical variance theory. Variance acts as a metric to measure the extent of differences among data values. In the evaluation of undergraduate majors, when the data of an indicator vary widely among different majors, it implies that this indicator can effectively distinguish the strengths and weaknesses of various majors. As a result, such an indicator with a higher variance is deemed more informative and is assigned a greater weight in the evaluation system. On the contrary, if the data for an indicator shows minimal variation, it contributes little to differentiating majors, leading to a lower weight assignment. By calculating and normalizing these variances, MVM produces an objective, scientifically grounded weighting system. This process minimizes subjective influence, supporting more accurate and reliable evaluation outcomes.

Calculation Procedure

1) Calculate the Indicator Mean: For the j-th indicator, its mean value

2) Calculate the Indicator Standard Deviation: The standard deviation

3) Calculate Indicator Weights: Based on the standard deviations, calculate the weight
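The three MVM steps can be sketched as follows, assuming each indicator's weight is its standard deviation divided by the sum of standard deviations over all indicators. The population form of the standard deviation is used here. Using the sample form would give the same weights, because the common factor cancels in the normalization. The function name and the sample data are hypothetical.

```python
import math
from typing import List


def mean_variance_weights(matrix: List[List[float]]) -> List[float]:
    """Weights proportional to each indicator's standard deviation (rows: majors)."""
    m, n = len(matrix), len(matrix[0])
    deviations = []
    for j in range(n):
        column = [row[j] for row in matrix]
        # Step 1: indicator mean.
        mean = sum(column) / m
        # Step 2: indicator standard deviation (population form).
        deviations.append(math.sqrt(sum((x - mean) ** 2 for x in column) / m))
    total = sum(deviations)
    if total == 0:
        # Every indicator is constant; fall back to equal weights.
        return [1.0 / n] * n
    # Step 3: normalize standard deviations into weights.
    return [d / total for d in deviations]


# Hypothetical normalized data: the first indicator is five times as dispersed
# as the second, so it receives five times the weight.
normalized = [[0.0, 0.4], [0.5, 0.5], [1.0, 0.6]]
mvm_weights = mean_variance_weights(normalized)
```

Because the weights scale directly with dispersion, the indicators should be on a common scale before this step. Otherwise differences in units would dominate the result.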

Calculation of Combined Weights

In undergraduate major evaluation, the scientific nature of the weighting system directly influences the reliability of the evaluation results. However, the exclusive use of subjective weighting methods, such as the AHP, while capable of incorporating expert experience and capturing logical relationships between indicators, is susceptible to subjective human biases, resulting in weights lacking objectivity and proving inflexible across differing national policy contexts. Conversely, objective weighting methods like the EWM and the MVM, while reliant on data and capable of determining weights based on indicator variability, may overlook the practical significance of indicators and their relevance to professional evaluation needs, leading to weights misaligned with talent development objectives. Therefore, this research integrates subjective weighting (AHP) and objective weighting methods (EWM, MVM) to assign combined weights to each evaluation indicator. This combined approach inherently provides a conflict-resolution mechanism. When significant discrepancies arise between subjective and objective weights for an indicator, the minimum entropy principle does not simply prioritize one source. Instead, it seeks an optimal compromise by minimizing the total information loss relative to both weight sets. This process effectively mitigates the influence of potential subjective bias or extreme data noise, resulting in a more balanced and robust weighting system.

Its adaptive logic operates at two levels. First, at the indicator level, the hierarchical system functions as an open architecture adaptable to context. For instance, within Germany's strong competency-based education tradition, tertiary indicators under Teaching Process Quality could be enhanced accordingly (Zlatkin-Troitschanskaia, 2021). In Nordic countries, where educational equity is a pronounced priority, observation points related to outcome disparities among student groups could be incorporated (Corral-Granados et al., 2025). Second, at the weighting level, the AHP-EWM-MVM mechanism serves as an adaptive engine. The AHP module internalizes locally relevant values by translating regional priorities (such as the emphasis on research impact versus teaching innovation) into weighted judgments through structured expert consultation. The EWM and MVM modules respond to the local data ecology by automatically reflecting the actual distribution and variation of institutional performance data within the region. The final composite weights, derived via the minimum entropy principle, thus represent a context-sensitive optimization that reconciles normative priorities with empirical evidence. Consequently, the same methodological framework could yield a weight structure emphasizing student satisfaction and employment outcomes in one national context, and curricular rigor and faculty research achievement in another, enabling a form of contextually intelligent evaluation.

Calculation Method

After obtaining the subjective weights (
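A common closed form for combining weights under the minimum (relative) entropy principle is the normalized geometric mean of the individual weight vectors. It follows from minimizing the summed relative entropy between the combined weights and each method's weights, subject to the combined weights summing to one. The sketch below assumes that form. It is an illustration rather than a reproduction of the exact formula used in this research, and the function name and sample weights are hypothetical.

```python
import math
from typing import List, Sequence


def combine_weights(*weight_sets: Sequence[float]) -> List[float]:
    """Normalized geometric mean of several weight vectors of equal length."""
    if not weight_sets:
        raise ValueError("at least one weight vector is required")
    n = len(weight_sets[0])
    if any(len(ws) != n for ws in weight_sets):
        raise ValueError("all weight vectors must have the same length")
    if any(w <= 0 for ws in weight_sets for w in ws):
        # A zero weight in any method would force the combined weight to zero.
        raise ValueError("all weights must be strictly positive")
    k = len(weight_sets)
    geometric_means = [
        math.prod(ws[j] for ws in weight_sets) ** (1.0 / k) for j in range(n)
    ]
    total = sum(geometric_means)
    return [g / total for g in geometric_means]


# Hypothetical AHP, EWM, and MVM weights for three indicators.
ahp = [0.50, 0.30, 0.20]
ewm = [0.20, 0.30, 0.50]
mvm = [0.30, 0.40, 0.30]
combined = combine_weights(ahp, ewm, mvm)
```

With this form, an indicator rated high by one method and low by another lands between the two, which matches the compromise behavior described above for conflicting subjective and objective weights.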

Results Analysis

Evaluation Indicator Weight Analysis

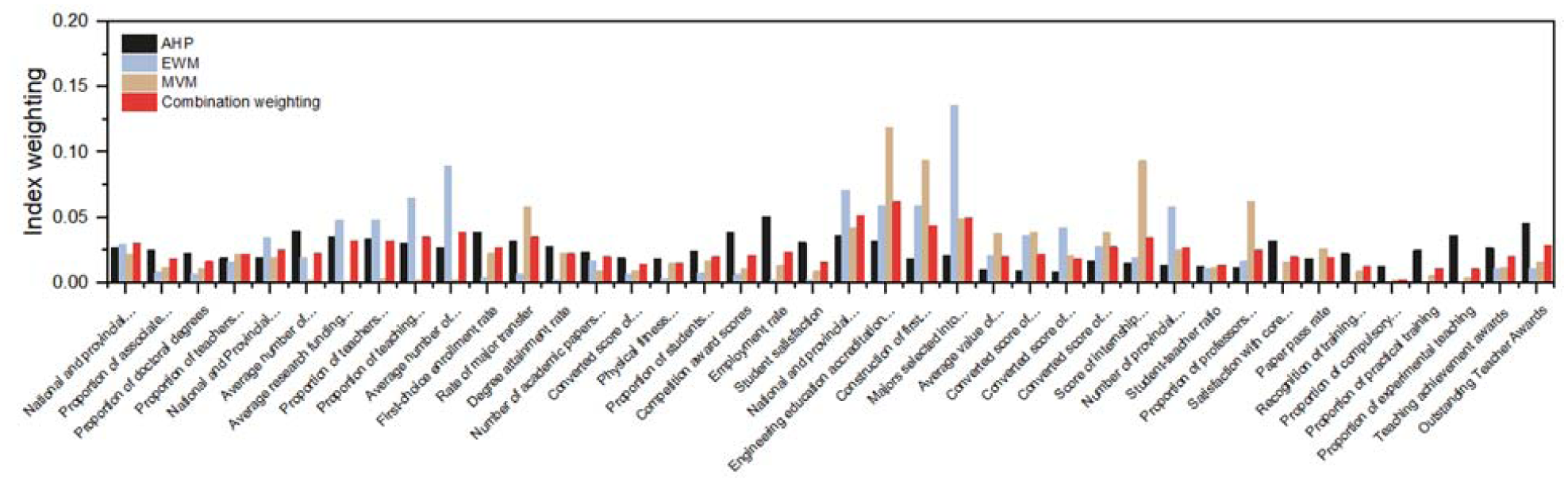

Based on the aforementioned evaluation indicator system, weights were calculated using three methods: the Analytic Hierarchy Process (AHP), the entropy weight method (EWM), and the Mean-Variance Method (MVM). Combined weights were then computed employing the minimum entropy method. The resultant weights for each indicator are presented in Tables 4 to 6 and Figures 2 to 4.

Table 4. Different Method Weight Values of the First-Level Indices of the Major Evaluation.

Table 5. Different Method Weight Values of the Second-Level Indices of the Major Evaluation.

Table 6. Different Method Weight Values of the Third-Level Indices of the Major Evaluation.

Figure 2. The weight distributions of the first-level indices of the major evaluation.

Figure 3. The weight distributions of the second-level indices of the major evaluation.

Figure 4. The weight distributions of the third-level indices of the major evaluation.

The computational results show that each of the three individual weighting methods has inherent limitations: subjective bias, sensitivity to data noise, and discrete deviation. AHP relies on subjective expert judgment, and it assigned “Major Construction Indicators” a weight of 0.2229, notably below the combined weight of 0.2886; discrepancies among expert evaluations can thus distort the valuation of key indicators. EWM derives weights from the degree of data variability, so it is susceptible to data noise and may fail to reflect the true importance of an indicator. This is evident in the weight of “Average number of textbooks compiled per person” (0.0895), which is clearly anomalous relative to the values produced by the other methods. MVM is constrained by discrete deviation in the data and can therefore allocate weights unevenly, as shown by the large gap between the weights of the third-level indicators “Engineering education accreditation” (0.1192) and “Proportion of teachers involved in guiding student projects” (0.0029).

In contrast, the combination weighting method acts as a conflict-resolution mechanism: through mathematical optimization, it balances subjective and objective weights. The combined weight for “Engineering education accreditation” (0.0620) illustrates this. The AHP weight (0.0314) reflects the strategic importance that experts assign to the indicator, while the high MVM weight (0.1192) captures its large dispersion in the dataset. The combined value lies between the two and respects both expert judgment and the objective characteristics of the data. When weights diverge sharply or take extreme values, this approach reflects the actual importance of each indicator in undergraduate major evaluation more accurately. Obtaining indicator weights through combination weighting is therefore important for improving the quality of undergraduate major evaluation.
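The interplay among the three weighting perspectives can be illustrated with a minimal Python sketch. It is not the optimization procedure used in this study: the entropy and dispersion formulas are common textbook forms that may differ from the study's exact formulation, the equal combination coefficients are an assumption standing in for the optimized ones, and the indicator values, the AHP weights, and all function names are hypothetical.

```python
import math

def min_max(column):
    """Rescale one indicator column to the interval [0, 1]."""
    lo, hi = min(column), max(column)
    if hi == lo:
        return [0.0] * len(column)
    return [(x - lo) / (hi - lo) for x in column]

def normalize(values):
    """Scale non-negative values so that they sum to 1."""
    total = sum(values)
    return [v / total for v in values]

def entropy_weights(columns):
    """Entropy-style weights: lower entropy (more variability) gives more weight."""
    n = len(columns[0])
    divergences = []
    for col in columns:
        total = sum(col)
        if total == 0:
            divergences.append(0.0)  # a constant column carries no information
            continue
        probs = [x / total for x in col]
        entropy = -sum(p * math.log(p) for p in probs if p > 0) / math.log(n)
        divergences.append(1.0 - entropy)
    return normalize(divergences)

def dispersion_weights(columns):
    """Dispersion-style weights proportional to each column's standard deviation."""
    deviations = []
    for col in columns:
        mean = sum(col) / len(col)
        deviations.append(math.sqrt(sum((x - mean) ** 2 for x in col) / len(col)))
    return normalize(deviations)

def combine(weight_sets, coefficients):
    """Convex combination of several weight vectors (assumed, not optimized)."""
    k = len(weight_sets[0])
    mixed = [sum(c * w[j] for c, w in zip(coefficients, weight_sets)) for j in range(k)]
    return normalize(mixed)

# Hypothetical raw data: three indicators observed for five majors.
raw = {
    "textbooks_per_person": [0.12, 0.30, 0.18, 0.25, 0.09],
    "first_choice_rate": [0.90, 0.88, 0.93, 0.91, 0.89],
    "accredited": [0, 1, 0, 1, 1],
}
columns = [min_max(col) for col in raw.values()]

ahp = [0.5, 0.3, 0.2]               # hypothetical expert weights
ewm = entropy_weights(columns)      # data-driven, entropy-based
mvm = dispersion_weights(columns)   # data-driven, dispersion-based
combined = combine([ahp, ewm, mvm], [1 / 3, 1 / 3, 1 / 3])

for name, a, e, m, c in zip(raw, ahp, ewm, mvm, combined):
    print(f"{name}: AHP={a:.4f} EWM={e:.4f} MVM={m:.4f} combined={c:.4f}")
```

Because the sketch uses a convex combination, each combined weight necessarily falls between the smallest and largest of the three input weights for that indicator, which mirrors the balancing behavior described above for “Engineering education accreditation.”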

The combined weighting results reveal a clear hierarchical structure of indicator weights. Among the first-level indices, the weight of “Faculty Team Indicators” is 0.2565, that of “Student-Related Indicators” is 0.2509, and that of “Major Construction Indicators” is 0.2886. These weights are relatively high and close to one another, indicating that all three aspects occupy a core position in undergraduate major evaluation. The slightly higher weight of “Major Construction Indicators” reflects its leading role in the overall development of a major. Among the second-level indices, “Teaching and Research Capabilities” (0.1338) carries the highest weight among the faculty-related metrics, highlighting the close link between faculty pedagogical and research competencies and major development. Likewise, among the second-level indices concerning student development, the weight of “Comprehensive Competency and Employment” (0.1181) confirms the substantial emphasis placed on cultivating students’ holistic competencies and tracking graduate employment outcomes. Among the third-level indices, “Average number of textbooks compiled per person” (0.0386) and “Proportion of teaching projects led by teachers” (0.0351) carry significant weight within the faculty development sub-dimensions, which identifies them as key leverage points for raising faculty quality. Among the third-level indices pertaining to student quality, “First-choice enrollment rate” (0.0267) and “Rate of major transfer” (0.0350) are considered effective measures of a major’s attractiveness.

Data analysis indicates that the development of majors in higher education institutions must be grounded in a high-caliber teaching faculty. Efforts should be directed toward introducing and cultivating high-level talents at the national and provincial levels, optimizing the structure of professional titles and academic degrees, and enhancing faculty members’ capabilities in teaching, research, and industry engagement. Concurrently, it is imperative to focus on the entire process of student development: improving the quality of student recruitment by enhancing the attractiveness of academic programs, strengthening academic guidance, practical training, and comprehensive quality cultivation, so as to promote students’ all-around development and high-quality employment.

Regarding major construction, institutions should prioritize the establishment of distinctive features through authoritative accreditation, increase investment in teaching resources, and optimize laboratory/practical training facilities alongside faculty allocation. The enhancement of teaching quality hinges critically on constructing an effective evaluation system, strengthening core curricula and practical teaching components, and stimulating the production of high-quality teaching outcomes. Overall planning must scientifically allocate resources based on assigned indicator weights, promote the coordinated advancement of faculty development, student growth, major development, and teaching improvement, and establish a dynamic adjustment mechanism to accommodate evolving societal demands.

In summary, this research employs a combined weighting method to optimize indicator weight assignment, thereby supporting institutional efforts to continuously refine quality assurance systems and to innovate dynamic regulation mechanisms for major evaluation. Higher education institutions should adopt strategic foresight, grounding their efforts in a high-caliber teaching faculty and prioritizing student development. By strengthening major construction and quality enhancement through scientific planning and dynamic adjustment, they can effectively contribute to the high-quality development of higher education.

Association Test

Self-evaluation serves as a critical instrument for monitoring the quality of major development. Through quantitative analysis of indicators encompassing teaching resources, student development, faculty caliber, and major construction, it systematically delineates the current state of major advancement, enabling the identification of strengths, diagnosis of weaknesses, and provision of evidence-based rationale for resource allocation. However, the validity of self-evaluation outcomes necessitates verification through external authoritative evaluation to ascertain whether they accurately reflect the actual effectiveness of major development. To establish objective benchmarks, this research utilizes two external authoritative certifications: “National First-Class Undergraduate Majors” and “Engineering Education Accreditation.” The composite scores for each major, calculated per the methodology in Section “Dataset and Evaluation Methodology,” are then subjected to association tests and comparative analysis.

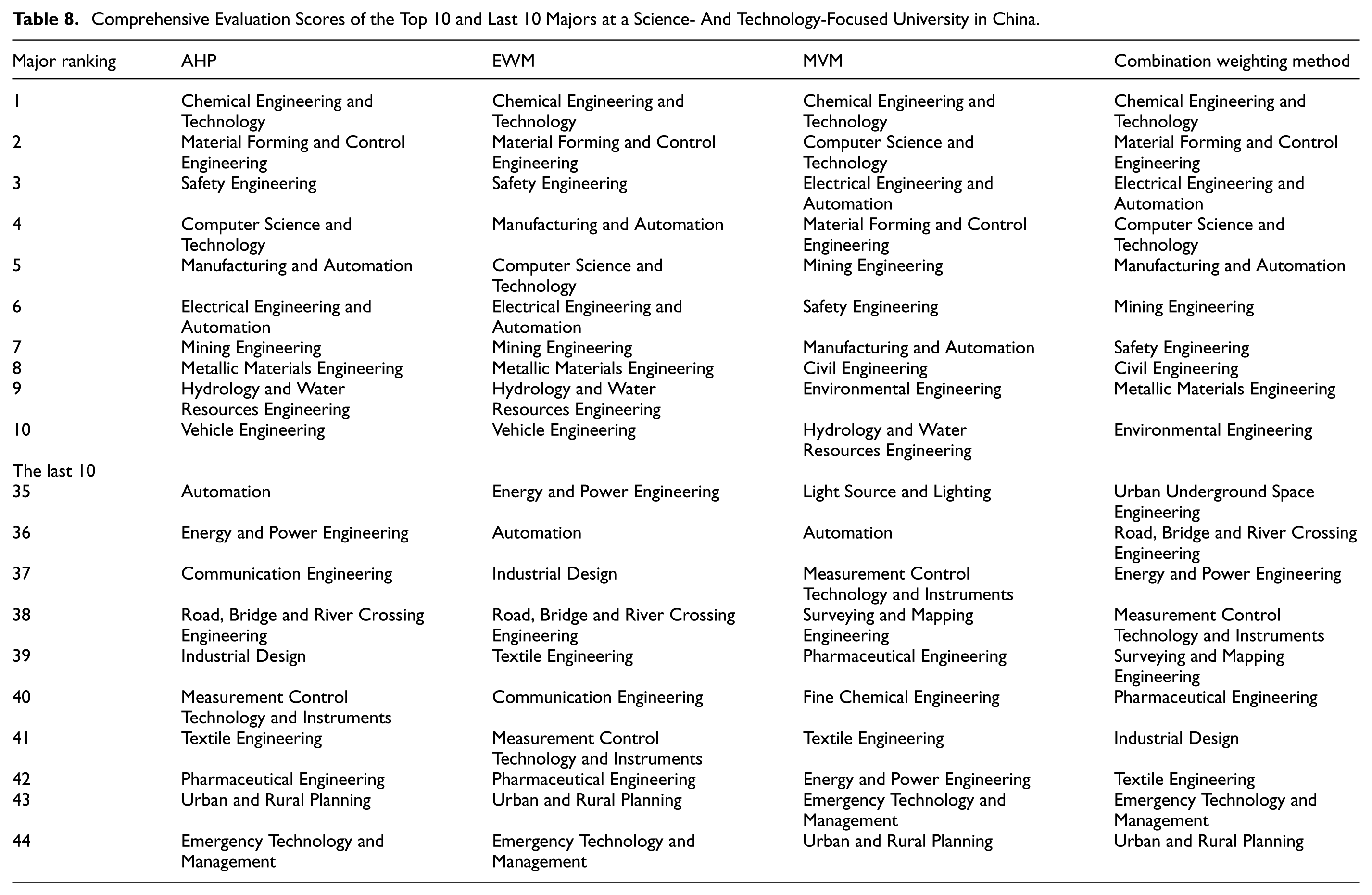

The evaluation results (Table 7) indicate that majors such as Chemical Engineering and Technology, Materials Forming and Control Engineering, and Safety Engineering consistently rank highly across the various methodologies. Notably, Chemical Engineering and Technology secured the top position under all four evaluation methods. This preeminence aligns well with its dual-accredited status as both a National First-Class Undergraduate Major and an Engineering Education Accreditation recipient. Conversely, majors including Urban and Rural Planning and Emergency Technology and Management consistently ranked lowest across all four methodologies. These majors generally lack authoritative external accreditation and show conspicuous deficiencies in critical indicators such as “Faculty Team Indicators” and “Student-Related Indicators.”

Comprehensive Evaluation Scores for Selected Majors at a Science- and Technology-Focused University in China.

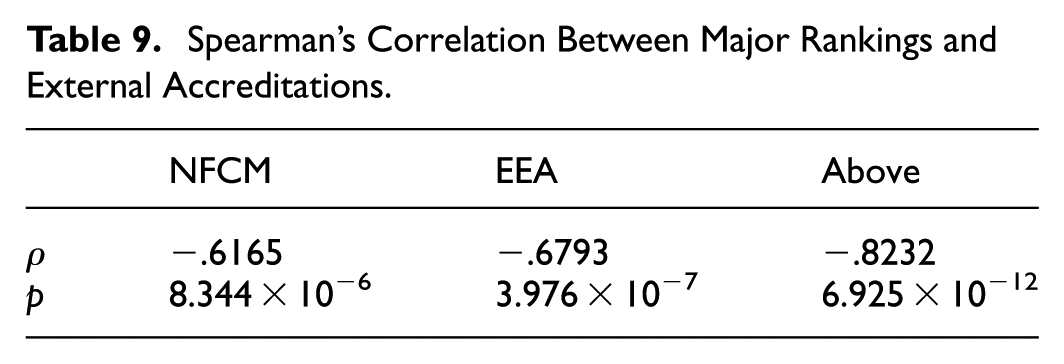

The data in Table 8 and the subsequent quantitative analysis demonstrate the consistency between the evaluation results obtained via the combined weighting method and external accreditations. To quantify this alignment, we computed Spearman’s correlation coefficients between the rankings produced by the evaluation methods in this study and the authoritative external certifications within the established indicator system: National First-Class Undergraduate Majors (NFCM), Engineering Education Accreditation (EEA), and the composite of these two certifications (Above). The results are presented in Table 9 and support the following observations: (1) A consistent trend is evident between the major rankings and external certifications, with higher absolute values of ρ indicating a stronger degree of correlation and agreement. (2) All ρ values are negative (ranging from −.82 to −.62), and a larger absolute value indicates that certified majors occupy more favorable ranking positions (i.e., a higher level of major quality). With p-values far below .001, these results demonstrate a strong concordance with external evaluations, supporting the claim that the evaluation results of this study align well with objective reality. (3) When comparing different external certifications, the composite measure incorporating both certifications exhibits the largest absolute ρ value (−.82) and the smallest p-value (6.92

Comprehensive Evaluation Scores of the Top 10 and Last 10 Majors at a Science- and Technology-Focused University in China.

Spearman’s Correlation Between Major Rankings and External Accreditations.

To further validate the efficacy of the combination weighting model in evaluating the student development dimension, a supplementary analysis was conducted using two key metrics: “Employment Rate” and “Student Satisfaction.” The results show a significant positive correlation between the comprehensive rankings derived from the combination weighting method and these two indicators. Among the top 10 ranked majors, the average employment rate was 95.2% and the average student satisfaction was 92.4%. The bottom 10 ranked majors had corresponding averages of 83.1% and 79.5%, a gap of 12.1 and 12.9 percentage points, respectively. This clear gradient indicates that the combination weighting method captures core information reflecting student achievement and experience. The evaluation results are thus not only highly consistent with external accreditations but also diagnostic of the intrinsic quality of majors with respect to student development, further supporting the model’s advantage in integrating multi-dimensional information.

Synthesizing the above analysis, the self-evaluation results derived from the combined weighting method employed in this research demonstrate a high degree of concordance with external authoritative accreditations, as rigorously validated by significant Spearman’s rank correlations. Most importantly, these results provide strong empirical support for our research hypothesis (H1). This validates the reliability of the proposed method and offers an efficient and robust solution for calculating indicator weights in major evaluation under multi-source information fusion.
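The sign convention behind the negative ρ values reported in this section can be illustrated with a minimal Python sketch. It assumes that rankings are coded so that position 1 is the best major and that a certification is coded 1 when held and 0 otherwise; this coding, the eight-major example data, and the function names are hypothetical and are not taken from the study's dataset.

```python
import math

def average_ranks(values):
    """Rank values from smallest to largest, giving tied values their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared = (i + j) / 2 + 1  # mean of the 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = shared
        i = j + 1
    return ranks

def pearson(x, y):
    """Pearson correlation of two equally long sequences."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)

def spearman_rho(x, y):
    """Spearman's rho: Pearson correlation of the tie-averaged ranks."""
    return pearson(average_ranks(x), average_ranks(y))

# Hypothetical example: ranking positions (1 = best) and certification status.
rank_position = [1, 2, 3, 4, 5, 6, 7, 8]
accredited = [1, 1, 1, 0, 1, 0, 0, 0]

rho = spearman_rho(rank_position, accredited)
print(f"rho = {rho:.2f}")  # negative: certified majors cluster at small rank numbers
```

Under this coding, a strongly negative ρ means that certified majors sit near the top of the ranking. Correlating composite scores (where higher is better) with the same certification indicator would give the same magnitude with a positive sign.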

Conclusions and Recommendations

Conclusions

This study addresses the complex and multidimensional challenge of evaluating undergraduate major quality by developing a combined weighting model that integrates subjective expertise with objective data. The proposed methodology tackles the universal difficulty of balancing expert judgment with quantitative evidence, offering a robust solution applicable across diverse international contexts. Empirical evidence indicates that the AHP-EWM-MVM-based evaluation framework effectively synthesizes expert knowledge with data characteristics, and its results demonstrate significant consistency with external authoritative accreditations.

The deeper methodological contribution of this study lies in the transferability embedded in its design. Global higher education evaluation faces pervasive tensions, such as those between quality and equity and between standardization and contextualization, along with the potential distortive effects of high-stakes evaluation. In response, this framework provides a flexible and modular foundation for generating solutions. Its modular indicator system allows indicators to be replaced and reprioritized according to national contexts. Its dynamic combined weighting mechanism grounds evaluation weights in the specific educational ecology by enabling a transparent, mathematical negotiation between local value judgments and local data.

The findings demonstrate that the combined weighting method effectively mitigates the limitations inherent in single-method evaluations. By synthesizing the domain-knowledge logic of AHP with the data-driven features of EWM and MVM, it provides a scientifically rigorous yet practical approach. Furthermore, the model implements the principles of authentic assessment. Its multidimensional indicator system—encompassing teaching resources, faculty caliber, student development, and societal impact—reflects a comprehensive view of educational quality. The integration of subjective and objective weighting embodies the principle of synthesizing multiple sources of evidence, while its capacity for contextualized evaluation aligns with the situated nature of authentic assessment.

Beyond systematically representing core quality dimensions, the model proves effective in diagnosing major development outcomes. Moreover, the methodological framework is inherently generalizable, allowing for the development of customized evaluation schemes tailored to various institutional and disciplinary settings. Consequently, the value of this research extends beyond providing an evaluation tool for Chinese universities; it contributes to the international higher education community a design rationale and an implementation pathway for constructing contextually adaptive evaluation systems in a scientific and systematic manner, thereby establishing a solid methodological foundation for institutions to optimize their major structures and enhance quality assurance systems.

Recommendations

To advance undergraduate major evaluation and improve program quality, this study proposes the following evidence-based recommendations. First, institutions should establish a classification-based evaluation mechanism, using the combined weighting model to build a closed-loop quality governance system of evaluation, diagnosis, and improvement. For example, longitudinal application of the model at one university identified persistently low-performing majors—such as Textile Engineering and Urban and Rural Planning—which showed clear weaknesses in core areas like Faculty Team and Student Development. These data-informed insights supported the decision to reduce enrollment in those majors, optimizing institutional resource allocation.

Second, the model should be applied dynamically through regular data and weight updates, enabling longitudinal tracking and timely major adjustments. Third, intelligent evaluation platforms that integrate a unified framework with disciplinary adaptability should be developed to embed combined weighting results into dynamic major management. Moreover, strategic resource allocation should prioritize high-weight indicators identified in our analysis. In the studied institution, for instance, resources should focus on supporting majors pursuing authoritative accreditations such as Engineering Education Accreditation, and on enhancing core faculty competencies under Teaching and Research Capabilities.

By implementing these steps, higher education institutions can concentrate resources on building distinctive, competitive major clusters; rationally optimize major structures in response to developmental and societal needs; and obtain evidence-based support for regional differentiation within initiatives such as China’s “Double First-Class” program. Collectively, these advancements facilitate the comprehensive enhancement of the overall quality of higher education, contributing to the global pursuit of excellence in teaching and learning.

Limitations and Ethical Considerations

While this study provides a robust methodological framework for major evaluation, it is essential to acknowledge its limitations and the ethical considerations inherent in implementing such a data-intensive system. First, the empirical validation was conducted within a single science- and technology-focused university. This validates the method’s effectiveness in that context but calls for caution when generalizing the specific indicator weights to other institutional types. More critically, the deployment of any quantitative evaluation system carries the risk of unintended consequences. A primary concern is the potential for “gaming” the indicators, where departments might prioritize improving metrics over genuine educational quality. Furthermore, the system is inherently biased toward easily quantifiable outputs, potentially marginalizing essential but harder-to-measure educational values such as critical thinking, ethical reasoning, and creativity.

To mitigate these risks, we propose several strategies. The evaluation results should be positioned primarily as a diagnostic tool for continuous quality improvement rather than a final judgment. For programs with anomalous results, a qualitative-quantitative verification mechanism, incorporating methods like peer review and site visits, should be activated. Finally, the indicator system itself must undergo dynamic evolution to better capture a more holistic representation of educational quality and adapt to shifting educational paradigms. Acknowledging these limitations and implementing these safeguards are crucial for the responsible and effective application of the proposed evaluation model.

Footnotes

Acknowledgements

We are very grateful to all those who have helped with the article.

Ethical Considerations

This article does not contain any studies with human participants performed by any of the authors.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was sponsored by the Humanities and Social Science Research Project of the Ministry of Education of China (Grant No. 20YJA880072) and the Special Project on Science and Technology Strategy Research of Shanxi (202404030401047).

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The data that support the findings of this study are available on request from the corresponding author.