Abstract

This study explores the construction of an evaluation index system for undergraduate engineering majors based on the Context, Input, Process, Product (CIPP) model under the Outcome-Based Education (OBE) approach, using the Delphi method and the Analytic Hierarchy Process. The index system was trialed with 986 entrepreneurs, engineering technicians, and teachers in the field of electronic information, who were recruited online. Data were collected with a consultation questionnaire developed from the indicators at all levels. Principal Component Analysis was then used to verify the rationality of the indicator design, and the corresponding weights were adjusted. The resulting index system comprises 4 first-level, 11 second-level, and 42 third-level indicators, each weighted according to the extent of its influence on the quality of undergraduate training. The evaluation system reflects, to some extent, the actual relationships among the indicators and provides a reference for the quality evaluation of engineering education.

Keywords

Introduction

The quality of higher education can serve as an effective indicator of a country’s economic growth (Becket, 2008). The concept of quality in education is associated with the concept of product quality in industry, that is, the ability to produce high-quality products according to the requirements of producers; in education, it is associated with selecting the best input materials for teaching in order to enhance students’ skills and basic qualities (Susilana & Asra, 2013). Improving the quality of education requires an effective evaluation approach. Quality evaluation methods include internal and external evaluation, both of which emphasize quality assurance as well as the importance of feedback and retrospective evaluation. The evaluation of teaching quality aims to provide feedback for improvement and to address individual and social challenges (Brown, 2004). Quality assurance is a systematic method for evaluating educational programs to ensure that they meet acceptable standards of instruction, academics, and infrastructure (UNESCO, 2004). In recent years, quality assurance systems for higher education have increasingly emphasized the quality of undergraduate education.

Undergraduate education spans a long period, requires the most resources, and is directly related to the quality of all types of master’s and doctoral programs (Ministry of Education of the People’s Republic of China, 2021b). Assessing the quality of undergraduate education involves a comprehensive examination of students (Lin & Geng, 2019). While educational evaluation has extended to cover higher education, professional (program-level) evaluation has become independent of general educational evaluation and has played an important part in the assessment of higher education institutions in recent years (Wang, 2016). Professional evaluation refers to the evaluation of the educational quality of individual majors in higher or secondary specialized schools: a comprehensive, cyclic, and improvement-oriented process in which information is collected and analyzed to generate professional judgments and which ultimately leads to self-improvement (Cheyney University of Pennsylvania, 2009). The system and development of professional evaluation vary by country. For example, American universities have updated their program structures in line with social development and improved their curricula and teaching models so that students’ professional knowledge and comprehensive abilities keep pace with the times (Wang & Tang, 2010). In the United Kingdom, the quality assurance system for higher education consists of two components, an internal quality assurance system within each university and an external quality monitoring and evaluation system, which together provide quality assurance in various ways (Wu, 2008). German higher education assessment generally consists of four stages: internal assessment, external assessment, implementation of assessment results, and follow-up assessment (Li, 2005).
In China, the Higher Education Evaluation Center of the Ministry of Education has launched several assessment initiatives since 2013, such as the report on the quality of undergraduate teaching in colleges and universities (Higher Education Evaluation Center of the Ministry of Education, 2020). Eighteen new undergraduate universities in China have participated in the conformity assessment, and 305 majors have obtained professional certification for engineering education, 262 for teacher training, and 269 for medicine (Ministry of Education of the People’s Republic of China, 2021a). Such certification is considered to improve the quality of China’s engineering talent cultivation (Zhao et al., 2020). Undergraduate education at present therefore places great emphasis on evaluating university majors and undergraduate education (Zhang & Guo, 2009).

In China, studies on evaluation systems have been conducted in certain fields and professions, such as architecture and engineering cost. In terms of specific operations, however, professional guidance is lacking, evaluation systems are imperfect, and evaluation indicators have weak operability, so these systems fail to play an effective role in safeguarding the quality of professional programs (Lu, 2011). The focus of China’s higher education policy has shifted from the macro to the micro level, but the corresponding evaluation system lags behind. The evaluation of student competence at the undergraduate level has become a weak link in the evaluation system of higher education (Xie et al., 2021).

Engineering education is a multi-faceted process that requires students to have high levels of cognitive ability, problem-solving skills, and information processing skills (Alabdulkarem et al., 2021; Winberg et al., 2016). National reports from around the world, including Europe and the United States, stress the need to increase the number and diversity of engineering graduates to meet critical societal needs (American Society for Engineering Education, 2013; Prieto et al., 2009). Electronic information is one of the most popular engineering majors, with high employment rates and an emphasis on both theory and practice (Teaching Guidance Committee of Ministry of Education, 2018). To better cultivate students’ literacy and abilities in all respects, this study takes electronic-information majors as an example and applies the CIPP model to construct an evaluation index system for undergraduate engineering majors under the Outcome-Based Education (OBE) approach, with the aim of achieving a multi-faceted, comprehensive evaluation.

OBE approach

In the early 1990s, William G. Spady proposed OBE as a means of ensuring the quality of the American school system. Eventually, it was extended to the higher education system as well. OBE is a system of education that prioritizes goals, achievements, and outcomes, implying that all students are expected to leave the college demonstrating clearly oriented outcomes that focus and organize the college’s entire programmatic and instructional efforts, requiring administrators, faculty, and students to focus on desired educational outcomes (Spady, 1994).

OBE is currently used worldwide as part of quality assurance strategies, and decisions on curriculum and instruction are driven by the exit learning outcomes that students should display at the end of a program or course. OBE is underpinned by three basic premises: all students can learn and succeed, but not all at the same time or in the same way; successful learning promotes even more successful learning; and schools control the conditions that determine whether students succeed at school learning (Killen, 2000). OBE can accommodate different learning styles and instructional innovations, allowing a more effective approach to teaching that emphasizes student competencies. It also incorporates innovative teaching and learning practices, such as problem-based learning, oral presentations, professional lectures, and on-site visits (Basir et al., 2019). Effective OBE implementation relies on appropriate, strongly interconnected teaching, learning, and assessment activities and on the constructive mapping of outcomes onto student attributes (Taras, 2015). The literature suggests that the OBE framework collectively emphasizes system-level change, observable and measurable outcomes, and the belief that all students can learn and succeed within a specified time (Faouzi et al., 2003). OBE has been associated with more positive learning attitudes among students (An, 2014) and has promoted the development of scholarship, teaching, and attitudes among knowledgeable faculty who have contributed significantly to institutional and programmatic goals (Borsoto et al., 2014; Mercado & Lagto, 2018; Tungpalan & Antalan, 2021).

CIPP model

Stufflebeam’s (2003) Context, Input, Process, and Product (CIPP) evaluation model is an integrated framework of controlled projects, guided programs, and systematic evaluation that has been widely adopted for educational evaluation. Specifically, the basic idea of the CIPP evaluation model is that the most important purpose of evaluation is not to prove, but to improve (Stufflebeam & Shinkfield, 2007). In a departure from other evaluation models, it comprises four dimensions—Context, Input, Process, and Product evaluation. Using this evaluation theory as the basis of constructing evaluation scales, it is possible to make value judgments on evaluation objects from multiple dimensions.

Context evaluation assesses and optimizes the activity plan before the activity begins and judges whether the activity objectives and environment meet participants’ needs; in other words, it assesses needs and lays the foundation for carrying out the activity efficiently (Stufflebeam & Shinkfield, 2007). Input evaluation screens and examines the resources used in the activity, such as time and human resources, and determines whether they are conducive to achieving the goals. It also assesses the degree to which the plans, strategies, and programs used are responsive to customer needs. Process evaluation monitors the progress of the activity: it determines whether unexpected events occur and whether the activity is proceeding as planned, records problems as they arise so that improvements can be made in time, and tracks the degree to which results are being achieved. Product evaluation is the final evaluation of the activity and its participants; it not only measures and judges whether participants have achieved the activity objectives but also summarizes and improves the activity plan. Context and Input evaluation are formative and proactive in nature, while Process and Product evaluation are summative and retrospective, allowing decision-makers to obtain the wide range of information needed to guide activities better. The CIPP model has recently been widely used in education, particularly for evaluating school policies, school programs, and curriculum implementation, and provides a theoretical basis for evaluating the quality of higher education (Basaran et al., 2021; Hurmaini, 2015; Jumari & Suwandi, 2020; Markus & Philipp, 2018; Powell & Conrad, 2015). The first-level indicators in this study are constructed from the four elements of the CIPP model.

OBE is a structural approach that organizes, implements, and evaluates education in terms of student learning outcomes and outputs, enabling higher education to shift from a teaching paradigm to a learning paradigm that enhances the effectiveness and quality of teaching (Barr & Tagg, 1995). The goals, curriculum, materials, assessment, and graduation requirements of OBE focus on outcomes rather than prescribed processes (Kaliannan & Chandran, 2012). CIPP, a decision-oriented evaluation model, emphasizes the evaluation process and diagnostic feedback rather than the outcome-based assessment favored by traditional educational assessment models. It holds that educational evaluation should not be limited to checking whether expected goals are achieved but should also collect information on how educational programs are implemented, so as to provide a basis for decision-making; it thus combines formative and outcome evaluation. In this respect, the CIPP model complements OBE by adding diagnostic feedback. The CIPP model can evaluate each stage individually or treat the four stages as a whole for systematic evaluation; by linking the four stages and evaluating each, it makes the evaluation system more comprehensive and rigorous and provides information for educational decision-making. Both approaches emphasize the dynamic nature of evaluation, the process, diagnostic feedback, and the needs of society, and both seek to improve education and teaching through evaluation: the purpose of evaluation is not to prove, but to improve (Stufflebeam, 2003). In this regard, OBE and CIPP are internally consistent.
OBE provides decision-making guidelines for optimizing the education and talent development system, while CIPP quantifies every aspect of OBE through implementation, diagnosis, and improvement, making evaluation decisions more scientific and rigorous. The two are therefore consistent in their core principles and complementary in their evaluation processes.

In this study, the CIPP model is used to construct the evaluation index system for engineering majors under the OBE approach; the construction process is shown in Figure 1. First, evaluation indexes are constructed from the requirements of the engineering professional training program, combined with the CIPP model, OBE, and the professional accreditation standards for engineering education, using the Delphi method. The indexes are determined by coding the research literature, policy documents, and interview data, followed by three rounds of expert consultation questionnaires in which experts score the rationality of the indexes and assign weights to each index. Next, the indicator weights are calculated with the Analytic Hierarchy Process, which decomposes the problem into a hierarchy and judges the relative importance of indicators through pairwise comparisons. After the weights are determined, a questionnaire is prepared from the third-level indicators, and evaluation samples are collected for an empirical analysis using Principal Component Analysis. The extracted common factors are used to verify the rationality of the evaluation indicators, and necessary additions and deletions are made. Finally, an objective and reasonable evaluation system is obtained.

Figure 1. The process of constructing the quality evaluation system for engineering majors.

Purposes of this Study

Based on the four elements of the CIPP model, combined with OBE and the requirements of the engineering education accreditation standards, this study constructs an evaluation index system and determines its weights in four aspects (Context, Input, Process, and Product); designs a strategy for evaluating engineering majors through both qualitative and quantitative analysis; and, taking the training program of engineering majors as the core, offers ideas for optimizing the evaluation system. The research question of this study is therefore: Is the undergraduate engineering evaluation system based on the CIPP model operational?

Method

Evaluation Approach

The Delphi method involves iterative rounds of anonymous questionnaire surveys of experts from related fields who have a certain number of years of teaching experience and are representative and authoritative. The questionnaire gauges the experts’ opinions and suggestions, and their summarized views are then used to decide which content of the study should be modified or retained (Linstone & Turoff, 1975).
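Delphi rounds are often summarized with simple consensus statistics before the next round is sent out. The text does not state which statistics were used, so the sketch below assumes three common ones: the mean rating and coefficient of variation (CV) per indicator, and Kendall's coefficient of concordance W across experts. The function names and the ratings are hypothetical, and W is computed without its tie-correction term.

```python
import numpy as np

def indicator_consensus(scores):
    """Mean rating and coefficient of variation per indicator.

    scores: array of shape (n_experts, n_indicators), e.g. 1-5 importance ratings.
    """
    x = np.asarray(scores, dtype=float)
    mean = x.mean(axis=0)
    cv = x.std(axis=0, ddof=1) / mean  # lower CV = stronger agreement among experts
    return mean, cv

def _average_ranks(row):
    """Rank values in ascending order; tied values share their average rank."""
    order = np.argsort(row, kind="mergesort")
    ranks = np.empty(len(row))
    i = 0
    while i < len(row):
        j = i
        while j + 1 < len(row) and row[order[j + 1]] == row[order[i]]:
            j += 1
        ranks[order[i:j + 1]] = (i + j) / 2 + 1
        i = j + 1
    return ranks

def kendalls_w(scores):
    """Kendall's coefficient of concordance (no tie-correction term)."""
    x = np.asarray(scores, dtype=float)
    m, n = x.shape  # m experts rating n indicators
    ranks = np.vstack([_average_ranks(row) for row in x])
    rank_sums = ranks.sum(axis=0)
    s = ((rank_sums - rank_sums.mean()) ** 2).sum()
    return 12 * s / (m ** 2 * (n ** 3 - n))

# Hypothetical ratings from 3 experts on 4 indicators (not the study's data)
ratings = [[5, 4, 3, 2], [5, 4, 4, 2], [4, 5, 3, 1]]
mean, cv = indicator_consensus(ratings)
print(mean.round(2), cv.round(3), round(kendalls_w(ratings), 3))
```

W ranges from 0 (no agreement) to 1 (identical rankings); a rising W across rounds is one way to show that expert opinions are converging.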

The Analytic Hierarchy Process is mainly used for evaluation or decision-making involving multiple levels or multiple objectives. It decomposes a complex multi-factor comparison problem into a series of pairwise comparisons that are synthesized mathematically, which simplifies the complex thinking process that multiple comparisons would otherwise require, reduces decision errors to a certain extent, and has been widely used in decision-making (Saaty, 1999).

Principal Component Analysis is commonly used as an extraction method in Factor Analysis. Its basic principle is to extract common factors from a set of variables through dimensionality reduction, so that the data structure of a complex index system can be represented by a few extracted common factors. The initial factor loading matrix can also be rotated to redistribute the relationship between the factors and the original variables, so that the extracted common factors have substantive meaning and the empirical results can be interpreted soundly (Spearman, 1904).
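A minimal sketch of the extraction step, assuming the items are standardized (which working from the correlation matrix does implicitly) and components are retained by the eigenvalue-greater-than-1 rule reported in the Results. The function name and the simulated data are hypothetical, and the signs of individual loadings may differ from SPSS output.

```python
import numpy as np

def principal_components(data):
    """PCA on the item correlation matrix with the Kaiser (eigenvalue > 1) rule.

    data: (n_respondents, n_items) array of scores.
    Returns all eigenvalues, % variance explained per component, and the
    unrotated loadings of the retained components.
    """
    x = np.asarray(data, dtype=float)
    r = np.corrcoef(x, rowvar=False)           # correlations = standardized items
    eigvals, eigvecs = np.linalg.eigh(r)       # symmetric matrix, ascending order
    order = np.argsort(eigvals)[::-1]          # sort components by eigenvalue, descending
    eigvals, eigvecs = eigvals[order], eigvecs[:, order]
    keep = eigvals > 1.0                       # Kaiser criterion
    loadings = eigvecs[:, keep] * np.sqrt(eigvals[keep])
    explained = 100 * eigvals / eigvals.sum()  # sums to 100 over all components
    return eigvals, explained, loadings

# Hypothetical data: 6 items driven by 2 latent factors (not the study's sample)
rng = np.random.default_rng(0)
f = rng.normal(size=(500, 2))
items = np.repeat(f, 3, axis=1) + 0.3 * rng.normal(size=(500, 6))
eigvals, explained, loadings = principal_components(items)
print(loadings.shape, explained[:2].sum().round(1))
```

Because the eigenvalues of a correlation matrix sum to the number of items, an eigenvalue above 1 means a component explains more variance than a single standardized item.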

Sample

Participants were 986 entrepreneurs, engineers, and teachers in the field of electronic information, selected online to receive the questionnaire. The sample was approximately balanced by gender (48.8% male, 51.2% female), and participants’ ages ranged from 30 to 45 years. All participants took part voluntarily; before the study, they were informed of the study methods and signed consent forms.

Procedure

The study began in January 2022 and lasted five months. In line with the requirements of the OBE approach and the engineering professional accreditation standards, the construction of the evaluation indexes for engineering majors was organized into the following five steps.

Step 1: The literature on engineering program evaluation, relevant policy documents, and transcripts of faculty and student interviews were coded to distill the constituent elements of the evaluation system at three levels. The third-level indicators were determined from national-level documents and the content of the interviews with teachers and students; the second-level indicators were established by further condensing the third-level indicators; and the first-level indicators were determined by summarizing the components of the second-level indicators.

Step 2: By summarizing and organizing the indicators selected from the literature, policy documents, and in-depth interviews, deleting duplicate indicators, combining and condensing similar ones, and using the CIPP model as the basic framework, we derived a preliminary dimensional structure of evaluation indicators for engineering majors (Table 1).

Step 3: The rationality and validity of the selected indicators were tested with the Delphi method. Fifteen authoritative entrepreneurs and business-related experts were selected, and three rounds of expert consultation were conducted. The first two rounds mainly collected the experts’ opinions on the evaluation indexes and their rationality, using identical questions (see Appendix 1). In the third round, after the indicators had been revised according to the experts’ opinions from the first two rounds, the experts scored the relative importance of pairs of elements, so as to arrive at a relatively reliable and reasonable evaluation system for engineering majors. The three rounds of expert consultation questionnaires were administered from January 10 to March 8, 2022, as shown in Table 2.

Step 4: After the evaluation system for engineering majors had been constructed with the Delphi method, the weight of each index was determined with the Analytic Hierarchy Process. First, the hierarchical structure of the evaluation system was constructed; second, the judgment matrices were created; third, the single hierarchical ranking and its consistency test were conducted; finally, the relative importance weights of all factors at each level with respect to the overall goal (the total hierarchical ranking) were calculated.
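The single-ranking and consistency steps can be sketched as follows. This assumes the common eigenvector method: priorities are the normalized principal eigenvector, CI = (lambda_max - n) / (n - 1), CR = CI / RI with Saaty's tabulated random indices, and CR < 0.10 treated as acceptable. The total ranking multiplies each local weight by its parent's weight. The matrix is hypothetical, not one of the experts' judgments.

```python
import numpy as np

# Saaty's random consistency index (RI) for matrix orders 1..9
RANDOM_INDEX = {1: 0.0, 2: 0.0, 3: 0.58, 4: 0.90, 5: 1.12,
                6: 1.24, 7: 1.32, 8: 1.41, 9: 1.45}

def ahp_priorities(matrix):
    """Local weights, lambda_max and consistency ratio of one judgment matrix."""
    a = np.asarray(matrix, dtype=float)
    n = a.shape[0]
    eigvals, eigvecs = np.linalg.eig(a)
    k = int(np.argmax(eigvals.real))       # principal (Perron) eigenvalue
    lam_max = float(eigvals[k].real)
    w = np.abs(eigvecs[:, k].real)
    w /= w.sum()                           # normalize so the weights sum to 1
    ci = (lam_max - n) / (n - 1) if n > 2 else 0.0
    cr = ci / RANDOM_INDEX[n] if RANDOM_INDEX[n] > 0 else 0.0
    return w, lam_max, cr

def global_weights(parent_weight, local):
    """Total hierarchical ranking: child weight = parent weight x local weight."""
    return parent_weight * np.asarray(local, dtype=float)

# Hypothetical 3x3 matrix for three second-level indicators (not the study's data)
judgments = [[1, 2, 4],
             [1 / 2, 1, 2],
             [1 / 4, 1 / 2, 1]]
w, lam_max, cr = ahp_priorities(judgments)
print(w.round(3), round(lam_max, 3), "consistent" if cr < 0.10 else "revise")
```

The example matrix is perfectly consistent (every a_ij equals w_i / w_j), so lambda_max equals n and CR is zero; real expert matrices will show some inconsistency.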

Step 5: After the weights of the indicators at all levels had been determined, a questionnaire based on the third-level indicators was prepared for Principal Component Analysis. The pilot major of electronic information was used to examine whether the evaluation system for engineering majors has good reliability and validity and whether the perceived importance of the indicators differs significantly among groups, so that the evaluation system could be improved.

Table 1. Evaluation Indicators for Engineering Majors.

Table 2. Implementation of Expert Consultation Questionnaire.

Calculation of index weights

After the evaluation system had been determined, the Analytic Hierarchy Process was applied to calculate the weights of indicators at all levels. Based on the opinions of the 15 consulting experts, Saaty’s 1-to-9 scale was used to construct each expert’s pairwise comparison matrices for the elements at every level. The validity of each matrix was first judged by whether it had been completely filled in, then by the random consistency test, and finally the matrices were calculated and analyzed (see Appendix 3).
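The text does not say how the 15 experts' matrices were combined. One common option, assumed here, is to screen each matrix for completeness, reciprocity, and the 1/9 to 9 range, and then take the element-wise geometric mean of the valid ones, which keeps the aggregated matrix reciprocal; the consistency test can then be run on the result. The function names are hypothetical.

```python
import numpy as np

def is_valid_judgment(matrix, tol=1e-6):
    """Completeness, reciprocity and range check for one Saaty-scale matrix."""
    a = np.asarray(matrix, dtype=float)
    if a.ndim != 2 or a.shape[0] != a.shape[1] or np.isnan(a).any():
        return False                                  # incomplete or not square
    if not np.allclose(np.diag(a), 1.0):
        return False                                  # self-comparisons must be 1
    if not np.allclose(a * a.T, 1.0, atol=tol):
        return False                                  # a_ji must equal 1 / a_ij
    return bool(((a >= 1 / 9 - tol) & (a <= 9 + tol)).all())  # within 1/9..9

def aggregate_judgments(matrices):
    """Element-wise geometric mean of the valid expert matrices."""
    valid = [np.asarray(m, dtype=float) for m in matrices if is_valid_judgment(m)]
    if not valid:
        raise ValueError("no valid judgment matrices to aggregate")
    return np.exp(np.log(np.stack(valid)).mean(axis=0))

# Two hypothetical experts comparing the same pair of indicators
experts = [[[1, 3], [1 / 3, 1]], [[1, 5], [1 / 5, 1]]]
print(aggregate_judgments(experts))
```

The geometric mean is used rather than the arithmetic mean because the geometric mean of a_ij values is exactly the reciprocal of the geometric mean of the corresponding a_ji values.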

Applying the Analytic Hierarchy Process to assign weights to the first-level and second-level indicators, the results showed that the judgment matrix’s

Table 3. The Weights and Scores of Indicators at All Levels of the Evaluation System of Engineering Majors.

Instrument

To verify the reasonableness of the indicators at each level, an empirical study was conducted using Principal Component Analysis. A 5-point Likert scale containing 42 required items was developed from the content of the third-level indicators to test the reasonableness of the evaluation system (see Appendix 2). Each item was scored as 1 (completely disagree), 2 (strongly disagree), 3 (fair), 4 (strongly agree), or 5 (completely agree). To test whether the items were suitable for Principal Component Analysis, the collected data were first standardized into dimensionless form, and the Kaiser-Meyer-Olkin (KMO) measure of sampling adequacy and Bartlett’s test of sphericity were then computed. A KMO value below 0.500 indicates that the items in the scale are not suitable for Factor Analysis.
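Both suitability checks can be computed directly from the item correlation matrix. This sketch assumes the standard overall KMO formula (sum of squared correlations relative to squared correlations plus squared partial correlations) and Bartlett's chi-square approximation. The data are simulated, and the p-value is left to a chi-square survival function such as SciPy's.

```python
import numpy as np

def kmo(data):
    """Overall Kaiser-Meyer-Olkin measure of sampling adequacy."""
    r = np.corrcoef(np.asarray(data, dtype=float), rowvar=False)
    inv = np.linalg.inv(r)
    scale = np.sqrt(np.outer(np.diag(inv), np.diag(inv)))
    partial = -inv / scale                     # partial (anti-image) correlations
    off = ~np.eye(r.shape[0], dtype=bool)      # off-diagonal entries only
    r2, p2 = (r[off] ** 2).sum(), (partial[off] ** 2).sum()
    return r2 / (r2 + p2)

def bartlett_sphericity(data):
    """Bartlett's test that the item correlation matrix is an identity matrix."""
    x = np.asarray(data, dtype=float)
    n, p = x.shape
    _, logdet = np.linalg.slogdet(np.corrcoef(x, rowvar=False))
    chi2 = -(n - 1 - (2 * p + 5) / 6) * logdet
    df = p * (p - 1) // 2
    # p-value: upper tail of chi-square(df), e.g. scipy.stats.chi2.sf(chi2, df)
    return chi2, df

# Hypothetical responses: 6 items driven by 2 latent factors
rng = np.random.default_rng(1)
f = rng.normal(size=(300, 2))
items = np.repeat(f, 3, axis=1) + 0.5 * rng.normal(size=(300, 6))
print(round(kmo(items), 3), bartlett_sphericity(items))
```

KMO approaches 1 when partial correlations are small relative to raw correlations, that is, when the items share common variance that a few factors can capture.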

Results

Validating the Reasonableness of the Index

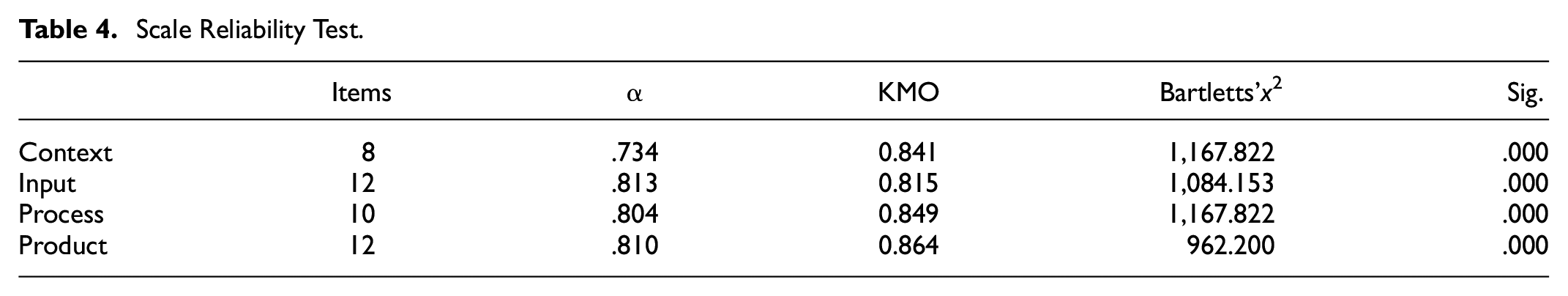

The suitability of the data for Principal Component Analysis was determined with KMO values and Bartlett’s test; Table 4 shows the KMO values of the scales and the coefficients of the reliability tests. The KMO value for each dimension was greater than 0.500, and the significance level of Bartlett’s test for each dimension was below 0.001, indicating that the indicators were sufficiently correlated for Principal Component Analysis to be performed.

Table 4. Scale Reliability Test.
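The text reports reliability coefficients in Table 4 without naming them. Assuming they are Cronbach's alpha, the usual choice for Likert-type scales, the computation for one dimension can be sketched as follows; the function name and the responses are hypothetical.

```python
import numpy as np

def cronbach_alpha(items):
    """Cronbach's alpha for one scale; items: (n_respondents, k_items)."""
    x = np.asarray(items, dtype=float)
    k = x.shape[1]
    sum_item_var = x.var(axis=0, ddof=1).sum()  # sum of individual item variances
    total_var = x.sum(axis=1).var(ddof=1)       # variance of the summed scale score
    return k / (k - 1) * (1 - sum_item_var / total_var)

# Hypothetical 5-point responses of 4 respondents to a 3-item dimension
responses = [[4, 5, 4], [3, 3, 4], [5, 5, 5], [2, 3, 2]]
print(round(cronbach_alpha(responses), 3))
```

Alpha rises as the items in a dimension covary more strongly, because the variance of the total score then exceeds the sum of the item variances.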

The data were entered into the Statistical Package for the Social Sciences (SPSS) 23.0 for Principal Component Analysis to obtain the variance contribution of each factor. Eleven factors with eigenvalues greater than 1 were retained as common factors, denoted F1 to F11, as shown in Table 5.

Table 5. Eigenvalues and Variance Contribution of Each Indicator.

As Table 5 shows, the eigenvalues of all 11 factors are greater than 1. This indicates that Context, Input, Process, and Product evaluation each have corresponding common factors that describe the specific meanings of the 42 third-level indicators well, and that the number of factors matches the number of second-level indicators, supporting the design of the third-level indicators.

Taking Process evaluation as an example, the factors were rotated with the varimax (maximum variance) method, and three components were extracted, with a cumulative explained variance of 65.296%. The rotated loading matrix for Process evaluation is shown in Table 6; it shows how strongly each variable loads on each factor. Items with absolute loadings greater than .500 were used to identify the common factors, which were then compared with the evaluation content of the second-level indicators to test the rationality of the second-level indicator design.
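The rotation and the .500 loading rule can be sketched as below. This is a plain varimax rotation using the usual SVD-based iteration, without the Kaiser row normalization that SPSS typically reports using, so loadings may differ slightly from SPSS output. The loading matrix is a hypothetical two-factor example, not Table 6, and the item labels only mimic the C-codes in the text.

```python
import numpy as np

def varimax(loadings, gamma=1.0, max_iter=100, tol=1e-6):
    """Orthogonal varimax rotation (no Kaiser row normalization)."""
    phi = np.asarray(loadings, dtype=float)
    p, k = phi.shape
    rotation = np.eye(k)
    d = 0.0
    for _ in range(max_iter):
        lam = phi @ rotation
        target = lam ** 3 - (gamma / p) * lam @ np.diag(np.diag(lam.T @ lam))
        u, s, vt = np.linalg.svd(phi.T @ target)
        rotation = u @ vt
        d_old, d = d, s.sum()
        if d_old != 0 and d / d_old < 1 + tol:
            break  # varimax criterion no longer improving
    return phi @ rotation

def group_items(rotated, names, threshold=0.5):
    """Assign each item to the factor with its largest |loading|, if > threshold."""
    groups = {}
    for name, row in zip(names, rotated):
        j = int(np.argmax(np.abs(row)))
        if abs(row[j]) > threshold:
            groups.setdefault(j, []).append(name)
    return groups

# Hypothetical simple-structure loadings, deliberately mixed by a 0.5 rad rotation
simple = np.array([[0.9, 0], [0.8, 0], [0.85, 0], [0, 0.9], [0, 0.8], [0, 0.85]])
cos_t, sin_t = np.cos(0.5), np.sin(0.5)
mixed = simple @ np.array([[cos_t, -sin_t], [sin_t, cos_t]])
rotated = varimax(mixed, tol=1e-10, max_iter=500)
print(group_items(rotated, ["C21", "C22", "C23", "C24", "C25", "C26"]))
```

Because the rotation is orthogonal, each item's communality (row sum of squared loadings) is unchanged; only the distribution of loadings across factors becomes easier to interpret.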

Table 6. Rotated Loading Matrix for Process Evaluation in Principal Component Analysis.

Table 6 shows that the loadings of common factor F7 on C21, C22, and C23 are .896, .877, and .922, respectively. These items mainly reflect curriculum construction and talent training objectives, the selection of teaching mode, and the implementation of the teaching plan, so F7 was named the “teaching and training program factor.” The loadings of F8 on C27, C28, C29, and C30 are .637, .685, .756, and .726, respectively, reflecting the supervision and feedback mechanism of professional development; F8 was therefore named the “teaching management and feedback factor.” The loadings of F9 on C24, C25, and C26 are .761, .780, and .796, respectively, mainly concerning the use of teaching methods, teaching content and social practice, and evaluation methods; F9 was therefore named the “teaching methods and reforms factor.” The three second-level indicators as designed are thus consistent with the data analysis, supporting the reasonableness of the design.

The same method was used to determine the common factors underlying the scores of the third-level indicators for Context, Input, and Product evaluation. The loadings of the third-level indicators on the corresponding common factors and the factor names are shown in Appendix 4. The common factors in the sample data fall into four groups matching the four elements of the CIPP evaluation system: three factors each for Context, Input, and Process evaluation, and two factors for Product evaluation. Their content is consistent with the initially constructed second-level indicators, supporting the reasonableness of the second-level indicators in this evaluation system. In addition, the scores of the student samples on the 11 common factors were used as variables and their dimensionality was reduced again, yielding the score of each second-level indicator on its first-level indicator; these scores serve as the objective weights of the 11 second-level indicators, as shown in Table 7.

Table 7. Objective Weights of Second-Level Indicators.

Taking Process evaluation as an example, the objective weights of its three second-level indicators are 0.274, 0.263, and 0.463, respectively. These are close to the subjective weights derived with the Analytic Hierarchy Process (0.270, 0.330, and 0.400), suggesting that the experts’ earlier subjective judgments were reasonable. Following the subjective-objective weighting method proposed by Song and Wang (2003), the combined weight is defined as the arithmetic mean of the subjective and objective weights, so the weights of the second-level indicators of Process evaluation are adjusted to (0.272, 0.297, 0.432). Using the same method, the combined weights of the other second-level indicators are (0.164, 0.383, 0.453) for Context evaluation, (0.476, 0.267, 0.258) for Input evaluation, and (0.730, 0.270) for Product evaluation. These adjustments were applied to Table 3, yielding a more objective and reasonable evaluation system.
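The combination rule is simple enough to check against the reported numbers. A minimal sketch using the Process-evaluation weights from the text; the function name is hypothetical.

```python
def combine_weights(subjective, objective):
    """Combined weight = arithmetic mean of subjective (AHP) and objective (PCA) weights."""
    if len(subjective) != len(objective):
        raise ValueError("weight vectors must have the same length")
    return [(s + o) / 2 for s, o in zip(subjective, objective)]

# Process evaluation, second-level indicators B7, B8, B9 (values from the text)
ahp = [0.270, 0.330, 0.400]
pca = [0.274, 0.263, 0.463]
combined = combine_weights(ahp, pca)
print([round(w, 4) for w in combined], round(sum(combined), 6))
```

The unrounded means are 0.272, 0.2965, and 0.4315, which match the reported (0.272, 0.297, 0.432) after rounding. Because both input vectors sum to 1, the combined weights also sum to 1 and need no renormalization.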

Discussion

The purpose of this study was to construct a quality evaluation index system for engineering majors based on the CIPP model under the OBE approach; the system consists of 4 first-level, 11 second-level, and 42 third-level indicators. The rationality of the evaluation indicators was verified with Principal Component Analysis, and the weights of the indicators were adjusted accordingly.

Our results showed that, among the three second-level indicators of Context evaluation, B3 (0.453) has the greatest weight, followed by B2 (0.383) and B1 (0.164); that is, the “training goal” index carries the most weight in Context evaluation. The training goals of engineering majors provide a platform for fostering engineering talent and measuring the quality of engineering education, and are an important part of engineering education at higher levels (Zhao & Feng, 2016). Shulman (2005) argued that engineering education must meet the degree standards set by the college, including the requirements of accreditation, as well as the needs of industry. Fromm (2003) argued that engineering students need strong verbal and written communication skills in addition to content knowledge and skills. For Input evaluation, the weights of the three second-level indicators are B4 (0.476) > B5 (0.267) > B6 (0.258): teacher input has the highest weight, and practice and training the lowest. Optimizing the faculty is imperative for improving practice teaching, as it improves both disciplinary coverage and the smooth operation of the proposed practice teaching system (Zhao et al., 2020). Teachers need customized training to improve specific skills or acquire new ones, which implies a growing need for professional development programs for teachers (Bayar, 2014; Eun, 2008; Quick et al., 2009). Through internships, students gain innovative and practical skills and expand their professional knowledge base (Bothe & Putnik, 2015).

The weights of the three second-level indicators of Process evaluation are B9 (0.432) > B8 (0.297) > B7 (0.272). Student feedback is vital for quality teaching and student learning and can have a considerable impact on student achievement (Hattie, 2009). According to Sadler (1989), the purpose of feedback is to clarify what is needed for learning and to close gaps in individual understanding. Involving students in the feedback process can improve their engagement in learning (O’Donovan et al., 2016). Teaching methods, the implementation of training programs, and teaching management and feedback mechanisms are important components of engineering majors for cultivating highly skilled personnel who have mastered facilities and equipment and for developing students’ abilities (Zhang, Tan et al., 2022). Product evaluation includes two second-level indicators: students’ basic quality and satisfaction evaluation. In our study, the weight of students’ comprehensive quality (0.730) is greater than that of satisfaction evaluation (0.270). Student evaluations serve both as a periodic diagnosis of students’ overall abilities and as a basis for students’ self-reflection and self-improvement (Higher Education Evaluation Center of the Ministry of Education, 2020). Product evaluation makes it possible to track the development of students’ abilities at any time, obtain continuous feedback on teaching and training plans, and provide a reference for revising training plans and improving teaching methods (Sancar Tokmak et al., 2013). Principal Component Analysis supported the soundness of the design of these indicators, which is also consistent with the literature.

Conclusions

This study explored evaluation indexes for the quality of undergraduate engineering training, using electronic-information majors as a case and the CIPP model as the framework, and used the Delphi method and the Analytic Hierarchy Process to design the indexes at all levels and calculate the weights of the first-level and second-level indexes. Empirical analysis further supported the content and weights of the second-level indicators, and their weights were adjusted accordingly. The index system largely reflects the factors affecting the quality of undergraduate training in engineering majors and provides a reference for training undergraduate students in this major.

This study has certain limitations. The weights of the second-level indicators are influenced both by the experts’ subjective perceptions and by the selected research samples, so their coefficients cannot be applied directly to program evaluation at other universities. In practice, the objective weights should be recalculated for each school’s actual situation and combined with the subjective weights to obtain the final weights of the second-level indicators. Data from more samples are also needed to verify how well the designed second-level and third-level indicators match the actual situation, so that the evaluation can be more accurate.

Footnotes

Appendix 1

Appendix 3

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was funded by Jiangsu Normal University Graduate Training Quality Project-Education Teaching Reform and Research Key Project Research on Quality Evaluation of Engineering Graduate Training Based on CIPP Model (Grant No. JGKTZ201921). This research was also supported by the Educational Research Project of the Teaching Guidance Sub-committee of Optoelectronic Information Science and Engineering Specialty of the Teaching Guidance Committee of Electronic Information Specialty in Colleges and Universities of the Ministry of Education Fund of P.R. China (Grant Nos. 2020XGK26 and 2020SYL41).