Abstract

Faculty instructional development programs aim to enhance faculty members’ instructional quality and promote student learning. Despite their promise, these programs do not always yield improved outcomes, making evaluation crucial to understanding their effectiveness and the factors that influence it. Although many studies have investigated the effectiveness of faculty instructional development programs by measuring program participants’ changes in teacher self-efficacy and teaching approaches, little research has investigated how and why instructional development programs affect these critical program outcomes. This study examined the outcomes of a faculty instructional development program for early-career faculty, offered by the college teaching resource center at a U.S. public university. Using a mixed-methods evaluation design, the study aimed to comprehensively understand the program’s effects on participants’ teacher self-efficacy and teaching approaches. The quantitative survey results revealed a statistically significant increase in participants’ teacher self-efficacy and teaching approach scores after the program. The qualitative interview data further supported these findings, revealing key themes that contribute to the program’s effectiveness: providing opportunities to learn various teaching aspects, building a supportive learning community through active strategies, giving feedback and opportunities to reflect on teaching, offering college-level collaborative support for teaching, and addressing confounding factors of program effects. These findings illuminate critical features of effective instructional development programs. We propose recommendations to better support faculty needs and offer suggestions for evaluating faculty instructional development programs.

Plain Language Summary

Faculty instructional development programs are designed to help university faculty members improve their teaching skills and support better learning outcomes for students. However, these programs do not always achieve their goals, which is why evaluating them is important. Understanding what makes these programs successful can help institutions improve how they support faculty. This study evaluated the impact of a faculty development program at a U.S. public university on participants’ confidence in their teaching and their teaching methods. The study used a combination of surveys and interviews to explore the program’s effects. Survey results showed that participants felt more confident in their teaching and improved their teaching methods after completing the program. Interviews provided additional insights into why the program worked well. Participants highlighted several factors that contributed to their growth, including opportunities to learn about different teaching strategies, being part of a supportive learning community, receiving feedback and reflecting on their teaching, and getting support from their colleagues and the institution. These findings show what makes faculty development programs successful and offer suggestions for strengthening them. Recommendations include addressing challenges that might affect the program’s outcomes and improving support for faculty participants. This study provides useful information for designing and evaluating similar programs at other institutions.

Introduction

The quality of faculty members’ teaching positively affects students’ learning outcomes, including academic self-efficacy, sense of belonging, retention, persistence, and graduation (Haras et al., 2017). However, university faculty members often receive little training in effective teaching or pedagogy (National Research Council [NRC], 2009). This is because many faculty members received their doctorate or professional degree from a research-oriented university, where teaching is often less of a priority than conducting research (Marineau, 2018), typically leading to a lack of teaching experience. Although students learn better through active engagement in the learning process and real-world contexts, faculty in higher education, including those in agricultural and life sciences, still rely on lecture-based teaching focused on knowledge acquisition (NRC, 2009).

To tackle this issue, many colleges and universities are providing faculty members with resources and support to enhance their teaching skills (NRC, 2009). Instructional development programs are specifically designed to improve the quality of faculty instructional approaches (Ilie et al., 2020) and encourage faculty to adopt more learner-centered teaching practices that promote student learning (Haras et al., 2017; NRC, 2009). However, despite their good intentions and promising interventions, these programs do not always lead to professional growth or improved outcomes (Darling-Hammond et al., 2017; Rossi et al., 2019). Therefore, it is essential to evaluate these programs to understand their effectiveness and the factors that influence them (Rossi et al., 2019).

To identify the effectiveness and value of faculty instructional development programs, researchers have commonly measured program participants’ changes in teacher self-efficacy and approaches to teaching (Connolly et al., 2018; Ebert-May et al., 2011; Emery et al., 2020; Gibbs & Coffey, 2004; Ibrahim et al., 2020; Levesque-Bristol et al., 2019; Postareff et al., 2007; Trigwell & Prosser, 2004). Teacher self-efficacy is “a judgment of his or her capabilities to bring about desired outcomes of student engagement and learning” (Tschannen-Moran & Hoy, 2001, p. 783). Given that the current study’s context is higher education, teacher self-efficacy was defined as faculty members’ belief in their ability to teach successfully (Ibrahim et al., 2020).

Studies on the impact of instructional development programs on teacher self-efficacy have shown mixed results (Connolly et al., 2018; Fabriz et al., 2020; Ibrahim et al., 2020; Pippins et al., 2023; Postareff et al., 2007; Rahman, 2023; Saikia et al., 2024). Connolly et al. (2018) found a positive correlation between program participation and self-efficacy among early-career scholars, with greater engagement leading to higher self-efficacy, even after controlling for variables like gender and teaching experience. Additionally, multiple studies (Fabriz et al., 2020; Ibrahim et al., 2020; Pippins et al., 2023; Rahman, 2023; Saikia et al., 2024) have demonstrated that participation in instructional development programs significantly enhances participants’ teaching-related self-efficacy, with improvements attributed to effective program design and implementation strategies. However, Postareff et al. (2007) found that only participants in year-long programs showed increased self-efficacy, while those in shorter programs saw no gains or even decreases in their teacher self-efficacy scores. The study suggested that shorter programs may create uncertainty, whereas longer programs provide sustained improvements in teacher self-efficacy.

Approaches to teaching are closely tied to students’ learning approaches and outcomes (Postareff et al., 2007). In teacher-centered teaching, students are seen as passive recipients of information, with little regard for their prior knowledge or experiences. This approach often leads students to adopt surface learning strategies, focusing on memorization (Postareff et al., 2007). On the other hand, a student-centered approach to teaching focuses more on students and their learning (Postareff et al., 2007). Thus, student-centered teaching emphasizes students building their own understanding, with instructors guiding rather than lecturing (Kober, 2015). This approach encourages deep learning, fostering a more profound comprehension of the content (Postareff et al., 2007).

Faculty instructional development programs have been shown to promote student-centered teaching approaches (Gibbs & Coffey, 2004; Levesque-Bristol et al., 2019; Postareff et al., 2007; Saikia et al., 2024). Gibbs and Coffey (2004) found that participants in such programs adopted more student-centered teaching approaches, leading their students to use deeper learning strategies compared to a control group. Similarly, Levesque-Bristol et al. (2019) reported that participants in a cohort-based instructional development program increasingly used student-centered approaches, resulting in higher student satisfaction and more positive course ratings when instructors provided more student-centered, autonomy-supportive learning environments. Saikia et al. (2024) found that participation in faculty development programs significantly enhanced faculty members’ self-efficacy and teaching competencies, particularly in fostering self-directed reflective learning among students and improving classroom communication.

While previous studies offer valuable insights into the effects of faculty instructional development programs, there remains a lack of research employing mixed-methods designs. A mixed-methods approach enables a more comprehensive understanding of program effectiveness by assessing both the outcomes, such as changes in teacher self-efficacy and teaching approaches, and the underlying mechanisms driving these changes (Creswell & Plano Clark, 2018). Moreover, limited research has focused specifically on instructional development programs for faculty in agricultural and life sciences. Addressing these gaps, the current study contributes to the literature by providing robust evidence on both the outcomes and processes of a faculty instructional development program targeting this population. The findings can inform the design, implementation, and evaluation of future initiatives, ultimately enhancing teaching effectiveness and improving educational quality for students.

Theoretical and Conceptual Framework

This study was framed using social cognitive theory (Bandura, 1986). The theory emphasizes that most human learning occurs in a social environment (Schunk, 2016). Bandura (1986) explained human behavior within a framework of triadic reciprocity or reciprocal interactions among personal factors (e.g., self-efficacy, self-regulation), behaviors (e.g., achievement behavior), and environmental variables (e.g., learning environment, other people; Bandura, 1991; Schunk, 2016). In the triadic reciprocity model, persons, behavior, and environmental factors influence each other bidirectionally (Bandura, 1989, 1991).

Social cognitive theory posits that individuals learn not only through their own experiences but also by observing others’ actions and the results of those actions. In other words, learning can occur enactively through actual doing or vicariously by observing others (models) perform (Schunk, 2016). In terms of vicarious (observational) learning, peer modeling is particularly effective for influencing behavior because observers are more likely to perform a particular behavior when they perceive the models as similar to themselves (Bandura, 1986). Additionally, social cognitive theory posits that people aspire to control the events that affect their lives and perceive themselves as agents (Bandura, 1997). Individuals acquire a greater sense of personal agency by self-regulating important aspects of their lives (Schunk, 2016). The foundation of personal agency is self-efficacy (Bandura, 1991). Self-efficacy is essential to a person’s sense of agency, that is, the feeling that they can influence their own lives (Bandura, 1997).

As operationalized in this study, the personal factor was teacher self-efficacy, the behavioral factor was an approach to teaching, and the environmental factor was the instructional development program, Teacher’s College. This study posited that Teacher’s College plays a critical role in influencing participants’ teacher self-efficacy and approach to teaching by offering an effective professional learning environment (see Figure 1).

Figure 1. Conceptual Model of the Relationship Among Teacher’s College, Teacher Self-Efficacy, and Approach to Teaching.

Purpose and Objectives

This study aimed to identify the outcomes of the University of Florida (UF) College of Agricultural and Life Sciences (CALS) Teacher’s College on faculty participants’ teacher self-efficacy and approach to teaching. The following two objectives guided this study:

1. Identify changes in the mean scores for faculty participants’ teacher self-efficacy and approach to teaching after attending Teacher’s College.

2. Describe how Teacher’s College affected participants’ teacher self-efficacy and approach to teaching.

Methodology

Description of the Program

Teacher’s College is an 11-week instructional development program offered to faculty members by the UF CALS Teaching Resource Center (TRC). The program is designed to enhance faculty members’ teaching knowledge and skills while fostering a community of practice centered on learner-centered teaching (Roberts et al., 2019). Although Teacher’s College has been offered annually since 2007, its outcomes have not been systematically documented. The program incorporates a range of activities to support its goals, covering topics such as learning principles, active learning strategies, instructional design, teaching methods, student learning assessment, distance education, the scholarship of teaching and learning, teaching in laboratory settings, and the promotion and tenure process at UF (Roberts et al., 2019). Faculty participants are encouraged to adopt a student-centered teaching approach, emphasizing the active construction of knowledge by students. Key stakeholders include the CALS Teaching Resource Center, the CALS Dean’s Office, department chairs, UF administrators, faculty participants, and students.

Research Approach

We used an explanatory sequential mixed-methods approach (Creswell & Plano Clark, 2018), which consists of a quantitative phase and a qualitative follow-up phase, to gain a comprehensive understanding of the effectiveness of Teacher’s College. In the quantitative phase, we used pre- and post-surveys to determine whether there were statistically significant differences in the scores of teacher self-efficacy and approach to teaching before and after the program. Then, we reviewed the quantitative results to guide the development of the qualitative strand (Creswell & Plano Clark, 2018). Quantitative data indicated a statistically significant increase in the scores of teacher self-efficacy and approach to teaching after the program. In the qualitative phase, we then used interviews to explore how and why Teacher’s College influenced participants’ teacher self-efficacy and teaching approaches to help explain the quantitative results (Creswell & Plano Clark, 2018).

Target Population and Sample

The target population of this study was UF CALS faculty members who had participated in Teacher’s College since 2008 (N = 266). We employed a purposive, non-probability sampling method. Study participants were selected based on the following criteria: (a) participation in the Teacher’s College program between Fall 2014 and Fall 2020, (b) current faculty membership at UF CALS, and (c) holding a teaching appointment. Faculty members who participated in the program between 2008 and 2013 were excluded because they likely had less accurate memories of their program experience. During the seven years from 2014 to 2020, 143 faculty members participated in the program. After excluding those who had left UF or were serving as unit leaders, 127 faculty members met the study criteria and constituted the study sample.

Quantitative Phase

Quantitative Study Participants

A total of 61 faculty participants from 18 departments within UF CALS completed the quantitative portion of this study, resulting in a 48% survey response rate. To address nonresponse error, we compared early respondents to late respondents following recommendations by Ary et al. (2014) and Lindner et al. (2001). The first half of the respondents (n = 31) were classified as the early respondent group. Late respondents were defined as those who responded to relatively late reminders. Lindner et al. (2001) suggested that a minimum of 30 participants should be included in the late respondent group. Based on this recommendation, the second half of the respondents, who completed the survey after the last two reminders (n = 30), were categorized as the late respondent group. We conducted an independent-samples t-test to compare the teacher self-efficacy scores between early and late respondents. The results showed no statistically significant difference in scores between early respondents (M = 3.60, SD = 0.63) and late respondents (M = 3.42, SD = 0.59), t(59) = 1.21, p = .23, d = 0.31. These findings suggest that nonresponse bias was unlikely to be a serious threat (Ary et al., 2014; Lindner et al., 2001). However, we acknowledge possible nonresponse error as a limitation of the study and recommend caution in generalizing the findings beyond the study sample. Table 1 presents the selected characteristics of the survey respondents.

Table 1. Selected Characteristics of the Survey Respondents (n = 61).

Percent of program participants in this study.
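The early versus late respondent comparison described above can be approximated from the reported summary statistics alone. The following is a minimal sketch, assuming a pooled-variance (equal variances) independent-samples t-test, which is consistent with the reported 59 degrees of freedom; the function name `pooled_t_from_summary` is hypothetical and not part of the original analysis, which was run in SPSS. Because the inputs are rounded means and standard deviations, the output will be close to, but not necessarily identical to, the reported t and d values.

```python
import math


def pooled_t_from_summary(m1, sd1, n1, m2, sd2, n2):
    """Pooled-variance independent-samples t and Cohen's d from summary statistics.

    Hypothetical helper for illustration; assumes equal population variances.
    """
    df = n1 + n2 - 2
    # Pooled variance weights each group's variance by its degrees of freedom.
    pooled_var = ((n1 - 1) * sd1 ** 2 + (n2 - 1) * sd2 ** 2) / df
    pooled_sd = math.sqrt(pooled_var)
    # Standard error of the difference between the two group means.
    se_diff = pooled_sd * math.sqrt(1 / n1 + 1 / n2)
    t = (m1 - m2) / se_diff
    d = (m1 - m2) / pooled_sd
    return t, df, d


# Rounded values reported in the text: early (n = 31) vs. late (n = 30) respondents.
t, df, d = pooled_t_from_summary(3.60, 0.63, 31, 3.42, 0.59, 30)
print(f"t({df}) = {t:.2f}, d = {d:.2f}")
```

A p-value would additionally require the t distribution, which the Python standard library does not provide; a statistics package would be needed for that step.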

Quantitative Study Instrument

This study is part of a broader evaluation of an instructional development program at UF. The survey instrument for this study comprised three major sections: (a) College Teaching Self-Efficacy (CTSE), (b) Approaches to Teaching Inventory (ATI), and (c) Demographic and Background Questionnaire.

College Teaching Self-Efficacy (CTSE)

We used the college teaching self-efficacy (CTSE) instrument developed by Connolly et al. (2018) to measure participants’ teacher self-efficacy. The CTSE was chosen for this study because it was designed and validated specifically in the context of higher education. The instrument consists of six constructs regarding postsecondary teaching: (a) course planning, (b) using teaching methods, (c) creating learning environments, (d) assessing student learning, (e) interacting with students, and (f) mastering subject knowledge. Each construct had three to five items, measured using 5-point Likert-type scales ranging from 1 (not at all confident) to 5 (extremely confident). The instrument originally consisted of 23 items, but one double-barreled question was split into two questions to avoid inaccuracies in the survey results. Thus, 24 items were used in this study. Cronbach’s alpha values for the pre- and post-surveys were .95 and .94, respectively, which were considered excellent reliability estimates (Pallant, 2016).
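For readers unfamiliar with the reliability estimate reported above, Cronbach’s alpha can be computed from an item-by-respondent score matrix as k/(k − 1) × (1 − Σ item variances / variance of total scores), where k is the number of items. The sketch below is illustrative only: the function name `cronbach_alpha` and the five-respondent, four-item data set are hypothetical and are not drawn from the study’s data.

```python
from statistics import variance


def cronbach_alpha(rows):
    """Cronbach's alpha for a list of respondents, each a list of k item scores."""
    k = len(rows[0])
    # Sample variance of each item across respondents.
    item_vars = [variance([row[i] for row in rows]) for i in range(k)]
    # Sample variance of each respondent's total (summed) score.
    total_var = variance([sum(row) for row in rows])
    return k / (k - 1) * (1 - sum(item_vars) / total_var)


# Hypothetical 5-point Likert-type responses (5 respondents x 4 items).
responses = [
    [4, 4, 5, 4],
    [3, 3, 3, 2],
    [5, 4, 5, 5],
    [2, 3, 2, 2],
    [4, 5, 4, 4],
]
alpha = cronbach_alpha(responses)
print(f"alpha = {alpha:.2f}")
```

Values closer to 1 indicate that the items vary together, which is the sense in which the reported estimates of .95 and .94 reflect high internal consistency.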

Approaches to Teaching Inventory (ATI)

The Approaches to Teaching Inventory (ATI; Trigwell et al., 2005) was used to measure respondents’ teaching approaches. The ATI was developed by Prosser and Trigwell (1999) and has been broadly used to measure the impact of faculty instructional development programs on teachers’ approaches to teaching (Postareff et al., 2007; Trigwell & Prosser, 2004). The ATI consists of 22 items across two main constructs, the conceptual change/student-focused (CCSF) approach to teaching (11 items) and the information transfer/teacher-focused (ITTF) approach to teaching (11 items), measured using a 5-point Likert-type scale (from “rarely if ever” to “almost always”; Trigwell et al., 2005). The ATI was chosen because it has been shown to be a valid and reliable instrument for assessing a teacher’s approach to teaching in a higher education context (Postareff et al., 2007). According to Trigwell et al. (2005), both scales had acceptable reliability (CCSF approach [α = .85] and ITTF approach [α = .83]). In this study, the Cronbach’s alpha for the pre-survey was .84 (CCSF approach [α = .85] and ITTF approach [α = .81]). Furthermore, the Cronbach’s alpha for the post-survey was .76 (CCSF approach [α = .80] and ITTF approach [α = .77]), indicating good internal consistency (Pallant, 2016).

Demographic and Background Questionnaire

In the last section of the instrument, survey respondents were asked about demographic information, including sex, race/ethnicity, highest degree, position, discipline, level of instruction, and year of program participation. To establish validity, a panel from UF consisting of three faculty members in agricultural education, three faculty members specializing in program evaluation, and one doctoral student in agricultural education evaluated the face and content validity of the instruments used in this study.

Before administering the survey to the study group, participants in the Teacher’s College program who were not included in this study were invited to complete the survey and provide feedback on the readability and understandability of the questions. Based on their feedback, the researcher made minor modifications to the survey instrument to improve clarity. Overall, the pilot survey indicated that the survey instrument was appropriate for use.

Quantitative Data Collection and Analysis

Respondents were invited to participate in an online survey via Qualtrics. To encourage responses and ensure data quality, we followed Dillman’s Tailored Design Method (Dillman et al., 2014). The UF CALS Dean sent the initial survey invitation in October 2020 to encourage faculty members to participate. Following this, the lead researcher sent personalized reminder emails to each faculty member at 2-, 4-, and 8-week intervals, resulting in four solicitations in total to increase the response rate (Dillman et al., 2014). The final reminder was sent in December 2020.

We utilized the Statistical Package for the Social Sciences (SPSS) to compute both descriptive and inferential statistics. Descriptive statistics were used to calculate means and standard deviations. Then, before conducting paired-samples t-tests, we used the Kolmogorov–Smirnov and Shapiro-Wilk tests to test the normality of the data (Pallant, 2016), which confirmed that all constructs of the teacher self-efficacy and teaching approach data were normally distributed. Paired-samples t-tests were conducted to assess any statistically significant changes in respondents’ teacher self-efficacy and approach to teaching. Then, effect sizes were calculated using Cohen’s d (Cohen, 1988).
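The paired-samples t-test described above compares each respondent’s post-program score with that same respondent’s pre-program score. The sketch below illustrates the computation under stated assumptions: the function name `paired_t` and the six pairs of scores are hypothetical, not the study’s data, and the original analysis was performed in SPSS rather than with this code. The normality checks mentioned above are not reproduced here because they require a statistics package.

```python
import math
from statistics import mean, stdev


def paired_t(pre, post):
    """Paired-samples t statistic and degrees of freedom for matched pre/post scores."""
    # Work on within-person change scores, not on the two group means separately.
    diffs = [after - before for before, after in zip(pre, post)]
    n = len(diffs)
    # Standard error of the mean difference.
    se = stdev(diffs) / math.sqrt(n)
    return mean(diffs) / se, n - 1


# Hypothetical mean scale scores for six respondents before and after a program.
pre_scores = [3.0, 3.5, 4.0, 2.5, 3.5, 3.0]
post_scores = [3.5, 4.0, 4.0, 3.5, 4.0, 3.5]

t_stat, df = paired_t(pre_scores, post_scores)
print(f"t({df}) = {t_stat:.2f}")
```

Respondents missing either score drop out of the paired comparison, which is one plausible reason the degrees of freedom reported for different scales in a study like this one can differ.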

Qualitative Phase

Qualitative Study Participants

At the end of the survey, respondents were asked if they would be willing to participate in follow-up interviews. A total of 14 faculty members participated in the qualitative phase. The interview participants comprised seven assistant professors (50%) and seven lecturers (50%). Regarding discipline areas, seven were in natural and applied sciences (50%), and seven were in social sciences (50%). As for sex, 10 were female (71.4%), and 4 were male (28.6%). In terms of race/ethnicity, 10 participants were White (71.4%), and 4 were non-White (28.6%). Interview participants were assigned a code (F1, F2, F3, etc.) based on the alphabetical order of names to maintain confidentiality.

Qualitative Study Instrument

A semi-structured interview protocol was developed to gather in-depth insights into the impact of Teacher’s College on faculty participants’ teacher self-efficacy and teaching approaches (Table 2). The protocol also included questions to elicit participants’ overall reflections on the program. For the purposes of this study, only responses specifically related to teacher self-efficacy and teaching approaches were analyzed. To ensure the face and content validity of the interview instrument, a panel of experts reviewed the questions. The panel consisted of three faculty members in agricultural education, three faculty members with expertise in program evaluation, and one doctoral student in agricultural education.

Table 2. Interview Questions Focused on Participants’ Perceptions of Teacher’s College.

Qualitative Data Collection and Analysis

Interviews were conducted via Zoom from December 2020 to January 2021, video-recorded, and transcribed verbatim. The interview transcripts were processed using the QSR NVivo software. Data from the semi-structured interviews were analyzed using the constant comparative method (Corbin & Strauss, 2008), a fundamental technique in qualitative data analysis that involves continuously comparing and contrasting data (Harding, 2019). This method helps identify similarities and differences between cases in a dataset (Harding, 2019). In this study, the coding process of the constant comparative method involved three levels of analysis: (a) open coding, (b) axial coding, and (c) selective coding (Corbin & Strauss, 2008). During open coding, researchers read each transcription line-by-line and utilized a descriptive coding procedure (Corbin & Strauss, 2008). In the axial coding phase, main categories and subcategories were developed by collapsing open codes into broad categories and comparing these categories (Ary et al., 2014). Finally, selective coding was used to identify relationships between the categories (Ary et al., 2014).

To ensure trustworthiness, several strategies were employed to meet the standards of credibility, dependability, confirmability, and transferability (Lincoln & Guba, 1985). Following Ary et al. (2014), the lead researcher held regular meetings with another team member for peer debriefing to ensure the credibility of the data. Peer reviewers also examined interview transcriptions alongside the principal researcher’s interpretations to determine their reasonableness. Dependability was assessed through intrarater and interrater agreements (Ary et al., 2014). For intrarater agreement, the primary researcher used the code-recode strategy, coding an interview transcript, setting the analysis aside for 1 week, and recoding it. The two coding sets were compared for consistency, with discrepancies reviewed and resolved to refine the process. For interrater agreement, two coders discussed and reconciled coding discrepancies through consensus (Syed & Nelson, 2015). Confirmability was established using an audit trail documenting data analysis decisions and justifications. Lastly, detailed and thick descriptions of the context and participants were provided to ensure transferability (Ary et al., 2014).

Results

Objective 1: Identify Changes in the Mean Scores for Faculty Participants’ Teacher Self-Efficacy and Teaching Approaches after Attending Teacher’s College

Regarding the first objective, a paired-samples t-test was conducted to determine whether participants’ scores on the College Teaching Self-Efficacy (CTSE) scale changed significantly after Teacher’s College. The results indicated a statistically significant increase in CTSE scores from before the program (M = 3.51, SD = 0.59) to after the program (M = 3.97, SD = 0.46), t(55) = 7.96, p < .001. The mean increase in CTSE scores was 0.46, with a 95% confidence interval ranging from 0.35 to 0.58. The Cohen’s d statistic (0.88) indicated a large effect size. The results of CTSE are described in Table 3.

Table 3. Participants’ Changes in the Mean Scores of College Teaching Self-Efficacy (CTSE).

Note. n = sample size; M = mean; SD = standard deviation; t = t score; p = p-value; d = Cohen’s d statistic.

Next, we used paired-samples t-tests to determine whether participants’ scores on the Approaches to Teaching Inventory (ATI) changed significantly after Teacher’s College. The ATI consisted of two main constructs: the conceptual change/student-focused (CCSF) approach to teaching and the information transfer/teacher-focused (ITTF) approach. The tests indicated statistically significant differences after Teacher’s College in participants’ scores on both CCSF and ITTF. Regarding the CCSF approach, there was a statistically significant increase in CCSF scores from before the program (M = 3.39, SD = 0.55) to after the program (M = 3.74, SD = 0.46), t(58) = 7.39, p < .001. The mean increase in CCSF scores was 0.35, with a 95% confidence interval ranging from 0.26 to 0.45. The Cohen’s d statistic (0.70) indicated a medium effect size by Cohen’s (1988) benchmarks, which place the threshold for a large effect at 0.80. In terms of the ITTF approach, there was a statistically significant increase in ITTF scores from before the program (M = 3.67, SD = 0.52) to after the program (M = 3.82, SD = 0.47), t(54) = 3.67, p = .001. The mean increase in ITTF scores was 0.16, with a 95% confidence interval ranging from 0.07 to 0.24. The Cohen’s d statistic (0.31) indicated a small effect size (Cohen, 1988). The results of ATI are described in Table 4.

Table 4. Participants’ Changes in the Mean Scores of the Approaches to Teaching Inventory (ATI).

Note. n = sample size; M = mean; SD = standard deviation; t = t score; p = p-value; d = Cohen’s d statistic.
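The effect sizes reported for the CTSE, CCSF, and ITTF scales can be approximated from the published means and standard deviations. The sketch below assumes that Cohen’s d was computed as the mean difference divided by the root mean square of the pre- and post-program standard deviations; the text does not state which formula was used, so this is an assumption, and the function name `d_from_summary` is hypothetical. Small discrepancies from the reported values are expected because the inputs are rounded to two decimals.

```python
import math


def d_from_summary(m_pre, sd_pre, m_post, sd_post):
    """Cohen's d from summary statistics, standardized by the average of the two variances.

    Assumed formula for illustration; the original SPSS procedure is not specified.
    """
    avg_sd = math.sqrt((sd_pre ** 2 + sd_post ** 2) / 2)
    return (m_post - m_pre) / avg_sd


# Rounded values reported in the Results: (M pre, SD pre, M post, SD post).
reported = {
    "CTSE": (3.51, 0.59, 3.97, 0.46),
    "CCSF": (3.39, 0.55, 3.74, 0.46),
    "ITTF": (3.67, 0.52, 3.82, 0.47),
}

effect_sizes = {scale: d_from_summary(*stats) for scale, stats in reported.items()}
for scale, d_value in effect_sizes.items():
    print(f"{scale}: d = {d_value:.2f}")
```

Under this assumption, the recomputed values fall within a few hundredths of the reported effect sizes, which suggests, but does not confirm, that a pooled pre/post standard deviation was the standardizer.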

Objective 2: Describe how Teacher’s College Affected Faculty Participants’ Teacher Self-Efficacy and Teaching Approaches

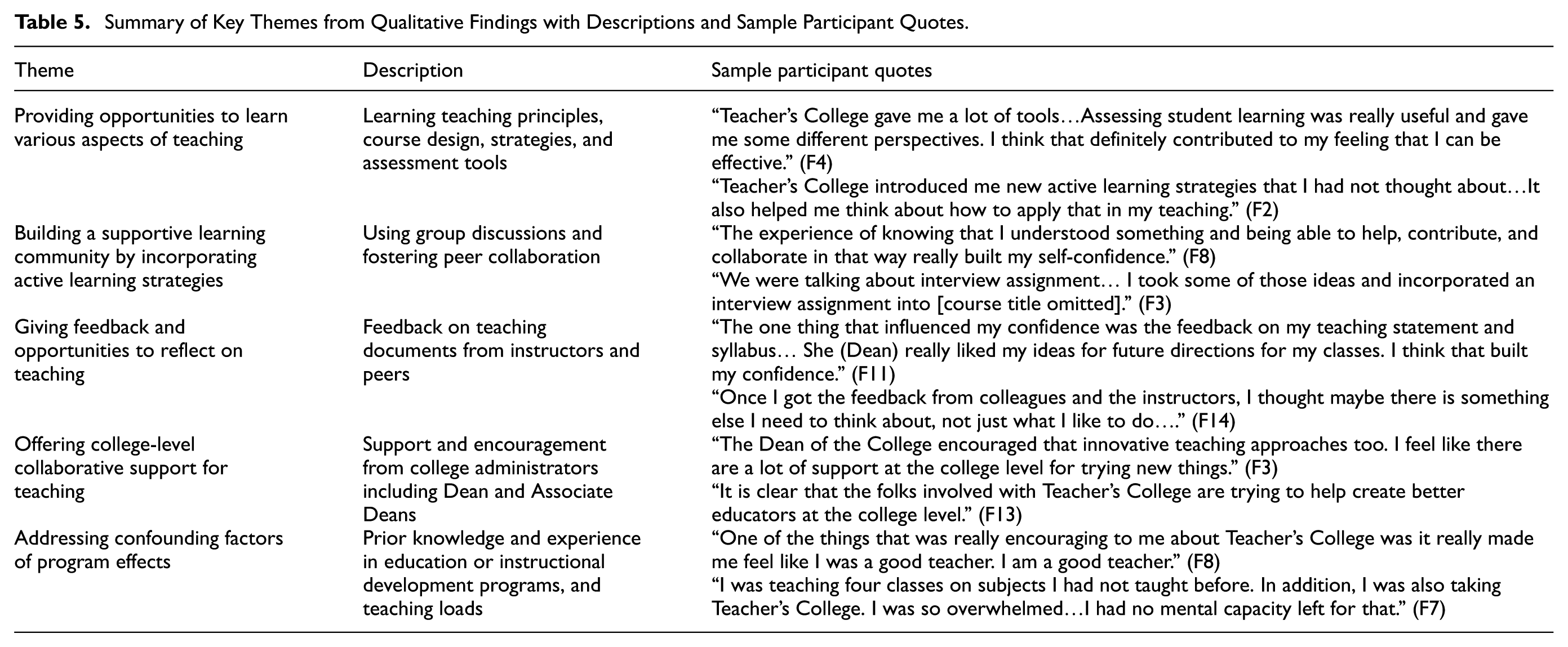

Expanding on the quantitative findings, the qualitative results described how Teacher’s College affected faculty participants’ teacher self-efficacy and teaching approaches. Five emergent themes from the qualitative data were identified: (a) providing opportunities to learn various aspects of teaching, (b) building a supportive learning community by incorporating active learning strategies, (c) giving feedback and opportunities to reflect on teaching, (d) offering college-level collaborative support for faculty teaching, and (e) addressing confounding factors of program effects.

Providing Opportunities to Learn Various Aspects of Teaching

Most participants (F2, F3, F4, F5, F6, F8, F9, F12, F13) stated that Teacher’s College significantly impacted their teacher self-efficacy and teaching approaches by offering extensive opportunities to learn various aspects of teaching. These teaching aspects included learning principles, course design, teaching strategies, student learning assessment, and educational terminology. For example, a lecturer (F4) noted that learning about various teaching tools and assessment methods boosted her confidence and creativity in evaluating student learning: “Teacher’s College gave me a lot of tools…Assessing student learning was really useful and gave me some different perspectives on how to assess learning. I think that definitely contributed to my feeling that I can be effective.” Other participants (F2, F9) also emphasized that learning new teaching strategies and tools, such as student assessment and course organization, enhanced their teacher self-efficacy.

Furthermore, several participants (F2, F3, F4, F5, F6, F9, F12, F13, F14) mentioned that their expanded knowledge in teaching also improved their teaching approaches. For instance, a lecturer (F4) shared that they learned various techniques to engage the classroom and facilitate discussions among students through the program. Along the same lines, an assistant professor (F6) appreciated learning different assessment tools, which broadened their perspective. Participants (F4, F5, F10, F11, F12) noted they had opportunities to apply what they learned during the program. For example, a lecturer (F4) mentioned that practicing classroom strategies with peer faculty members during the program was effective in understanding their applicability: “We talked about how to engage the classroom and how to structure it, then they actually also made us do it…I thought that was a really good way to show how these things work and how effective they are.”

Building a Supportive Learning Community by Incorporating Active Learning Strategies

Several participants (F1, F3, F5, F8, F9, F10, F13, F14) mentioned that Teacher’s College fostered a supportive and collaborative learning community by incorporating active learning strategies such as group discussions and think-pair-share activities. Participants reported that group discussion sessions in Teacher’s College were particularly helpful in enhancing their teacher self-efficacy by exposing them to new teaching ideas and skills. These sessions also allowed them to learn from other faculty members and reflect on their teaching practices. Participants with an academic background in education, such as F8 and F10, noted that small group discussions helped them realize their ability to provide valuable feedback to their colleagues, which helped build their teacher self-efficacy. F8 said, “The experience of knowing that I understood something and being able to help, contribute, and collaborate in that way really built my self-confidence.”

Additionally, observing colleagues navigate similar challenges had a positive impact on participants’ self-efficacy. One lecturer (F3) noted that the program offered an opportunity to see others facing comparable difficulties, which reassured them that, like other educators, they too could continue to improve. F3 shared: “They seem like young, brilliant teachers…then when they say, ‘Yeah, I really do not know if I am doing this right’… just realizing that everyone goes through that helped me feel a little more confident in my own abilities because if they are saying those things but are actually good teachers, maybe I am, too.”

Regarding the impacts of Teacher’s College on teaching approaches, eight faculty members (F1, F3, F5, F8, F9, F10, F13, F14) stated that group activities gave them opportunities to share teaching ideas and reflect on their teaching, which in turn helped enhance their teaching approaches. For instance, a lecturer (F3) incorporated a new teaching approach learned during the program into their course: “We were talking about interview assignments and how to use interview assignments and different strategies that we had considered. I took some of those ideas and incorporated an interview assignment into [course title omitted].” Along the same lines, an assistant professor (F1) shared that group discussion sessions helped them learn different teaching strategies, which enhanced their teaching approaches. This sentiment was echoed by F13, who stated: “The good thing about Teacher’s College was being in a class with other educators and early faculty on different topics. So, the way that I teach my course and the topics may differ, but some of the learning activities and concepts might be the same.”

Giving Feedback and Opportunities to Reflect on Teaching

During Teacher’s College, participants worked on several assignments, such as developing or revising teaching documents, including teaching philosophies, syllabi, and lesson plans. The program instructors, including the director of the Teaching Resource Center, CALS Dean, and Associate Deans, provided feedback on participants’ assignments, giving them opportunities to reflect on their teaching approaches. In terms of the program’s impacts on teacher self-efficacy, five participants (F3, F11, F12, F13, F14) reported that receiving positive and constructive feedback on their teaching documents helped them reflect on their teaching approaches and boosted their teacher self-efficacy. For example, an assistant professor (F11) mentioned that receiving positive feedback about their teaching philosophy and syllabus from the CALS Dean increased their self-efficacy: “The one thing that influenced my confidence was the feedback on my teaching statement and syllabus. I got mostly positive feedback. [Dean name omitted] said she really liked my ideas for future directions for my classes. I think that built my confidence.”

In addition, seven faculty participants (F3, F4, F6, F7, F12, F13, F14) noted that receiving constructive feedback from instructors and peers helped them improve their teaching documents and think more deeply about their teaching approaches. For instance, F3 stated that feedback from program facilitators and peer faculty members enabled them to develop better versions of their teaching philosophy and syllabus: “It was really nice and helpful to get some detailed comments on my syllabus and teaching philosophy… I picked up a lot of interesting ideas to enhance my syllabus and teaching philosophy through Teacher’s College.” This sentiment was echoed by another faculty member (F14), who said, “I thought I had a pretty well-written philosophy. But, once I got the feedback from colleagues and the instructors, I thought there is something else I need to think about, not just what I like to do…”

Offering College-Level Collaborative Support for Teaching

Three participants (F3, F9, F13) mentioned that college-level support for faculty teaching helped them adopt new teaching approaches. The involvement of college administrators, such as the CALS Dean and the Associate Deans, was perceived very positively. In particular, these administrators’ encouragement to use innovative teaching approaches in the classroom significantly boosted participants’ teacher self-efficacy. A lecturer (F3) stated, “It was really nice to see that the Dean of the College encouraged that (innovative teaching approaches) too. I feel like there are a lot of support at the college level for trying new things.” The active involvement of college administrators provided encouragement and validation for experimenting with and adopting new teaching methods.

Participants appreciated the supportive facilitators and the commitment of the CALS Dean and Associate Deans to developing and supporting faculty members’ teaching. F13 noted, “It is clear that the folks involved with Teacher’s College are trying to help create better educators at the college level.” F9 echoed this sentiment: “I really like the involvement of the dean and the associate deans…It was really good to see our dean taking so much interest in the program. The dean taught us one part of the program. I really love that.” Overall, the collaborative support from college administrators and teaching experts for the program played a crucial role in fostering an environment conducive to growth and innovation in faculty participants’ teaching approaches.

Addressing Confounding Factors of Program Effects

Several participants (F1, F2, F3, F5, F8, F9, F10, F11, F14) mentioned that they were already familiar with the majority of the content in Teacher’s College due to their educational background in education and social sciences and previous instructional development program experiences. This familiarity influenced their experience and the impact of the program. Some participants (F8, F14) stated that their familiarity with program content boosted their confidence in teaching. A lecturer with many years of teaching experience (F14) mentioned that realizing they already knew most of the content affirmed they were on the right track and boosted their confidence: “What they said was either I have already done, or I was planning to incorporate, which means I am doing the right job…I am using most of the tactics or strategies introduced through Teacher’s College” (F14). Similarly, an assistant professor (F8) noted that familiar topics in the program alleviated their anxiety and boosted their confidence: “One of the things that was really encouraging to me about Teacher’s College was it really made me feel like I was a good teacher. I am a good teacher.”

Participants with previous instructional development program experience (F2, F3, F5, F9) mentioned that Teacher’s College reinforced what they already knew about effective teaching and provided opportunities to learn new teaching approaches. Similarly, another participant (F1) with a degree in education and teaching experience mentioned that while Teacher’s College did not significantly influence their teacher self-efficacy or approach to teaching, they still gained new knowledge and teaching strategies through interactions with their cohort. F1 shared, “I do think some of the ideas I picked up from other faculty and what they do in their classes were very helpful and influenced me.”

A lack of time due to heavy teaching responsibilities was identified as a barrier to the program's effectiveness. One participant (F7), who had four classes to teach during their first semester as a new faculty member, mentioned that they could not engage in Teacher’s College as actively as they wanted due to their heavy teaching load. F7 shared, “I was so overwhelmed…. I had cognitive overload. I had no capacity left for that.”

Table 5 summarizes the five key themes from the qualitative analysis of Teacher’s College’s impact on faculty participants’ teacher self-efficacy and teaching approaches, including theme descriptions and sample participant quotes.

Table 5. Summary of Key Themes from Qualitative Findings with Descriptions and Sample Participant Quotes.

Discussion

This study aimed to identify the impacts of Teacher’s College on faculty members’ teacher self-efficacy and teaching approaches. The findings highlighted the program’s significant positive effects on these aspects, providing strong evidence of its value for faculty instructional development. Although previous research has noted such changes following instructional development programs, few studies have specifically examined how and why such programs influence faculty members’ teacher self-efficacy and teaching approaches. Social cognitive theory (Bandura, 1986), the theoretical framework for this study, offers a valuable lens for understanding the critical role that environmental factors, such as an instructional development program, play in shaping personal and behavioral factors. Furthermore, using a mixed methods approach, this study explored key features of the instructional development program that positively affect faculty members’ teacher self-efficacy and teaching approaches. The results contribute to understanding how such programs can enhance faculty teaching development.

The quantitative survey results indicated statistically significant increases in program participants’ teacher self-efficacy and student-centered teaching approaches. We used the College Teaching Self-Efficacy (CTSE) scale to measure faculty participants’ teacher self-efficacy, and scores increased significantly after the program. This finding is consistent with previous research (Connolly et al., 2018; Fabriz et al., 2020; Ibrahim et al., 2020; Pippins et al., 2023; Postareff et al., 2007; Rahman, 2023; Saikia et al., 2024) regarding the positive impact of faculty instructional development programs on faculty participants’ teacher self-efficacy. Furthermore, we utilized the Approaches to Teaching Inventory (ATI) to assess faculty participants’ teaching approaches. The findings revealed significant increases in student-centered teaching approaches, indicating that Teacher’s College effectively promotes participants’ adoption of such approaches. These results align with previous studies (Ebert-May et al., 2011; Gibbs & Coffey, 2004; Ibrahim et al., 2020), which found that instructors participating in teaching development programs were more likely to adopt student-centered teaching methods.

The follow-up qualitative results yielded information about how Teacher’s College positively affected participants’ teacher self-efficacy and teaching approaches. The results highlight several key themes that emerged from qualitative data. These themes include (a) providing opportunities to learn various aspects of teaching, (b) building a learning community through active learning strategies, (c) offering feedback and opportunities for reflection, (d) providing college-level collaborative support, and (e) addressing confounding factors of program effects. The results indicated that Teacher’s College incorporates most elements of effective professional development proposed by Darling-Hammond et al. (2017), demonstrating its effective design and implementation while also highlighting the critical features of instructional development that influence faculty participants’ teacher self-efficacy and teaching approaches.

Participants reported that the program offered various opportunities for them to learn different aspects of teaching, including teaching principles, course design, teaching strategies, student learning assessment, and more. Their increased understanding of pedagogical strategies was essential in facilitating participants’ adoption of student-centered teaching approaches and positively influenced their teacher self-efficacy. Teachers’ knowledge of pedagogical strategies and subject-matter content, along with their beliefs about that knowledge, plays a critical role in the development of self-efficacy (Palmer, 2011). The results of this study showed that participants became more self-efficacious in their teaching as their pedagogical knowledge increased through the program. This finding aligns with prior research indicating that knowledge of effective teaching practices can strengthen teacher self-efficacy (Morris et al., 2016), as well as with social cognitive theory’s assertion that the learning environment plays a critical role in shaping self-efficacy (Bandura, 1991; Schunk, 2016).

The findings also indicated that Teacher’s College facilitated building a supportive and collaborative learning community by incorporating active learning strategies, such as group discussions, where participants shared perspectives and teaching experiences. Incorporating active learning strategies allowed participants to share ideas, reflect on their practices, and gain new perspectives. Such features positively influenced faculty participants’ teacher self-efficacy and teaching approaches. The results are consistent with previous studies, such as Postareff et al. (2007), which found that faculty participants valued interacting with colleagues from other departments and discussing teaching with them. This interaction helped them learn different viewpoints and develop new teaching approaches. This supports Darling-Hammond et al.’s (2017) research, which emphasizes that spaces for collaboration and idea-sharing foster a sense of community among educators, enhancing teaching practices through mutual support and shared experiences.

These findings support the principles of social cognitive theory (Bandura, 1986), which proposes that individuals learn not only through their own experiences but also by observing others’ actions and the results of those actions. In particular, the theory explains that peer modeling is effective for facilitating vicarious learning and influencing behavior because reproduction occurs more commonly when observers perceive the models as similar to themselves (Bandura, 1986). Furthermore, recognizing others experiencing similar challenges helped participants feel relieved and supported, consequently improving their teacher self-efficacy.

The study highlighted the importance of providing feedback and opportunities for reflection on teaching. Participants reported that receiving constructive feedback from college administrators and program instructors on their teaching philosophy, syllabi, and lesson plans helped them reflect on their teaching approaches and promoted their teacher self-efficacy. This aligns with Palmer’s (2011) finding that verbal persuasion from respected experts can effectively enhance self-efficacy. Darling-Hammond et al. (2017) also argued that offering constructive feedback and time for instructors to reflect on their practice are critical components of effective professional development. Participants also appreciated the collaborative support from college administrators and teaching experts for the program, which validated their efforts and provided resources and encouragement to experiment with new teaching methods. These findings highlight the essential role of institutional support in the success of faculty instructional development programs.

The study also identified several confounding factors, such as previous educational background, teaching experience, and teaching responsibility loads, influencing the program’s effectiveness. Participants with extensive prior knowledge in teaching or previous instructional development programs found Teacher’s College more reinforcing than transformative. Additionally, heavy teaching loads were identified as a significant barrier to full engagement with the program. These findings suggest that workload management and tailored support are crucial for ensuring that all participants can maximize the benefit of the instructional development opportunities provided.

In conclusion, Teacher’s College has proven to be a valuable faculty instructional development program, significantly enhancing faculty participants’ teacher self-efficacy and teaching approaches. The findings indicated that the design and implementation of Teacher’s College were effective in influencing these positive changes. By building on its effective features and addressing the challenges identified in this study, the program can be further enhanced so that all participants can fully benefit and achieve transformative improvements in their teacher self-efficacy and teaching approaches.

Recommendations

This study provides strong evidence that Teacher’s College effectively enhances faculty members’ teacher self-efficacy and promotes student-centered teaching approaches. Based on these findings, we offer several practical recommendations to strengthen the program and suggestions to guide future studies on faculty instructional development program evaluation.

Recommendations for Practice

The findings highlight the important role of peer interaction and collaborative learning in faculty instructional development. Many participants reported that observing and engaging with colleagues contributed meaningfully to changes in their teaching practices and enhanced their teacher self-efficacy. These experiences align with social cognitive theory (Bandura, 1986), which emphasizes vicarious learning, where individuals learn by observing others, particularly those they perceive as similar to themselves. To build on this strength, we recommend that the program continue incorporating peer modeling opportunities and fostering interdepartmental dialogue, enabling participants to share teaching challenges and strategies across disciplines.

The study found that Teacher’s College’s effectiveness is shaped not only by its content but also by individual differences, such as prior teaching experience and educational background. While many participants acquired new teaching strategies through the program, some with extensive experience viewed the content as a review. Nonetheless, they still valued peer interaction and reflective discussions, which contributed to their teaching development. To further tailor the experience, differentiated learning tracks should be developed to address the specific needs of novice and experienced educators. Foundational modules can benefit newer faculty, while advanced workshops can challenge more seasoned instructors. Conducting pre-program needs assessments can inform content alignment with participants’ goals, interests, and experience levels (Dick et al., 2015).

Furthermore, the involvement of college administrators is highly valued. Ensuring their continuous engagement through teaching sessions, sharing insights, and offering feedback is crucial for the program’s success. Their active presence can motivate participants to enhance their teaching and reinforce the program’s importance. By implementing these recommendations, Teacher’s College can better support and meet the diverse needs of faculty members, maintaining a robust, collaborative, and supportive learning environment.

Recommendations for Research

This study employed a one-group pretest–posttest design, which was appropriate given the study’s context and the accessibility of participants. This design is commonly used in educational program evaluations when including a control or comparison group is not feasible (Cranmer, 2017), as it provides practical insights into program effectiveness by measuring changes within a single group over time. In the context of faculty instructional development, this approach has frequently been adopted due to logistical and ethical constraints that limit the use of experimental or quasi-experimental designs (Fabriz et al., 2020; Ibrahim et al., 2020; Saikia et al., 2024).

While practical and widely used, the one-group pretest–posttest design has limitations in establishing causality. Future research should consider incorporating control or comparison groups, when feasible, to strengthen causal inference. Such designs help mitigate internal validity threats, such as maturation, testing effects, and selection biases, and provide a more rigorous estimation of program impact by distinguishing program-related changes from external factors (Campbell & Stanley, 1963; Rossi et al., 2019).
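As a hedged illustration of how a comparison group can separate program-related change from external factors, one common estimator is the difference-in-differences of mean scores. This is an assumption offered for future designs, not a method used in this study. Here $\bar{Y}$ denotes a group's mean score on an outcome such as the CTSE, and the subscripts $P$ (program participants) and $C$ (comparison group) are hypothetical labels:

```latex
\hat{\delta} =
  \left( \bar{Y}_{P,\text{post}} - \bar{Y}_{P,\text{pre}} \right)
  - \left( \bar{Y}_{C,\text{post}} - \bar{Y}_{C,\text{pre}} \right)
```

A one-group pretest–posttest design yields only the first parenthesized term. Subtracting the comparison group's change removes shifts that both groups share, such as maturation or testing effects, under the assumption that the two groups would otherwise have changed in parallel.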

Additionally, this study utilized self-reported measures to assess changes in teacher self-efficacy and teaching approaches. The instruments used had been previously validated for evaluating the effects of faculty instructional development programs (Connolly et al., 2018; Postareff et al., 2007; Trigwell & Prosser, 2004). To reduce potential response bias, surveys were administered anonymously, which has been shown to help mitigate social desirability effects in self-report questionnaires (Joinson, 1999). However, the possibility of such bias cannot be entirely ruled out.

To enhance the validity of future research, the inclusion of complementary measures is recommended. Although prior studies have found positive correlations between self-reported teaching practices and those observed by independent raters (Emery et al., 2020), self-report data remain vulnerable to bias, particularly when participants respond in ways they perceive as socially desirable, potentially overstating program effects (Payumo et al., 2019). To address this limitation, future studies should consider triangulating self-report data with objective measures such as classroom observations, peer reviews, or student evaluations. These methods can more accurately capture teaching practices and provide stronger evidence of program effectiveness (Ebert-May et al., 2011).

Footnotes

Acknowledgements

The authors would like to acknowledge the support of the University of Florida CALS Dean’s Office for facilitating data collection for this study.

Ethical Considerations

All procedures in this study follow the ethical standards of the University of Florida IRB, with approval (IRB# 202002310) granted on September 21, 2020.

Consent to Participate

Informed consent was obtained from all participants in this study.

Author Contributions

Gangseok Hur: Conceptualization, methodology, formal analysis, investigation, project administration, resources, visualization, writing – original draft preparation, and writing – review & editing. T. Grady Roberts: Conceptualization, methodology, project administration, supervision, and writing – review & editing. David Diehl: Conceptualization, methodology, and writing – review & editing. James “J.C.” Bunch: Conceptualization, methodology, and writing – review & editing. John Diaz: Conceptualization, methodology, and writing – review & editing.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The participants in this study did not grant written consent for their data to be shared publicly. Therefore, the supporting data is not available.