Abstract

This study examines the impact of Artificial Intelligence (AI) chatbots on the loss of human connection and emotional support among higher education students. A quantitative research design is employed: an online survey questionnaire was distributed to a sample of 819 higher education students, assessing concerns about human connection, experiences with chatbots, and satisfaction levels. The findings reveal participants’ concerns about a diminished sense of human connection and emotional support when interacting with chatbots. However, participants also acknowledge the benefits of chatbots in terms of personalized assistance, enhanced learning experiences, and improved access to information and resources. The regression analysis shows that participants’ experiences with chatbots and their satisfaction with chatbot interactions are both significantly and positively associated with their concerns about human connection and emotional support: more experience and greater satisfaction go together with greater concern. The study highlights the need for a balanced approach to the integration of chatbots in educational settings, considering the potential impact on human connection and emotional support. It emphasizes the importance of developing policies and guidelines that address the ethical use of chatbots and promote meaningful interpersonal relationships among students. Practical strategies, such as incorporating collaborative activities and providing training and support for students and faculty, are recommended. In addition, instructional designers are encouraged to design chatbot interactions that go beyond information provision and focus on social presence and emotional support.

Introduction

Artificial Intelligence (AI) generative tools, such as chatbots like ChatGPT, have gained prominence in education for providing personalized assistance, engaging students, and offering instant feedback (Adiguzel et al., 2023; Ait Baha et al., 2023; Chen et al., 2022; Kazoun et al., 2022; Kooli, 2023; Medhi Thies et al., 2017; Rudolph et al., 2023; Smutny & Schreiberova, 2020; Tlili et al., 2023; Wang et al., 2021; Winkler & Söllner, 2018). Studies highlight the innovative conversational strategies and efficiency of human-to-chatbot communication compared to traditional human-to-human interaction (Ait Baha et al., 2023; Jiang et al., 2022; Kazoun et al., 2022; Meng & Dai, 2021; Sestino & D’Angelo, 2023; Wang et al., 2021). The growth of the mobile device market and the COVID-19 pandemic have further accelerated chatbot adoption across education, telemedicine, and other digital platforms (Almalki & Azeez, 2020; Jiang et al., 2022; Medhi Thies et al., 2017; Smutny & Schreiberova, 2020).

While developing and maintaining human relationships is crucial for overall well-being (Černý, 2023; Meng & Dai, 2021; Skjuve et al., 2021, 2022), chatbots, despite mimicking human conversation (Adamopoulou & Moussiades, 2020; Margalit, 2016), often lack the empathy and nuance of human interaction (Adamopoulou & Moussiades, 2020; Han, 2020; Margalit, 2016; Meng & Dai, 2021; Sands et al., 2021). Rooted in the Media Equation Theory, the recent focus on socially oriented chatbots with distinct “personalities” underscores how individuals respond to computer-mediated communication similarly to human interactions (Karyotaki et al., 2022; Mehra, 2021; Reeves & Nass, 1996). However, unmet expectations in chatbot interactions can lead to frustration and dissatisfaction (Černý, 2023; Margalit, 2016; Mehra, 2021; Reeves & Nass, 1996).

The reliance on AI has sparked concerns about the potential erosion of human connection and emotional support—critical elements for student well-being and educational experiences (Dong et al., 2020; Fügener et al., 2021; Meng & Dai, 2021; Natale, 2021; Ryan, 2020; Shneiderman, 2020; Suen et al., 2020; Wang et al., 2020; Xie & Pentina, 2022). While chatbots show promise in enhancing learning, it is essential to address emotional, cognitive, and social factors when integrating them into education (Gulz et al., 2011; Medhi Thies et al., 2017; Smutny & Schreiberova, 2020). Despite growing interest in social chatbots designed for education, companionship, and therapy (Kooli, 2023; Kuhail et al., 2023; Labadze et al., 2023), limited research explores how these interactions shape human-chatbot relationships and their social implications (Følstad et al., 2021; Margalit, 2016; Medhi Thies et al., 2017; Skjuve et al., 2021, 2022). Zahour et al. (2020: p. 554) note that “chatbots in academia have received only limited attention,” emphasizing the need to examine their impact on students’ sense of connection and satisfaction in higher education.

Given the growing prevalence of chatbots, there is a critical need for empirical research on their effects on interpersonal relationships and students’ sense of connection. As these tools increasingly act as social companions, understanding their relational dynamics and social impact is vital (Følstad et al., 2021; Skjuve et al., 2021, 2022; Zahour et al., 2020).

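This study addresses these gaps by exploring the influence of AI chatbots on human connection and emotional support among higher education students. It investigates the potential negative consequences of chatbot use on students’ sense of connection, experiences, and satisfaction within academic contexts. The study poses three research questions, which concern (RQ1) students’ concerns, experiences, and satisfaction levels regarding AI chatbots; (RQ2) the influence of students’ demographics on these perspectives; and (RQ3) the relationship between students’ experiences and satisfaction and their concerns about human connection.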

Context and Background

Chatbots, originally termed “chatterbots” by Michael Mauldin in 1994, are AI-driven conversational agents designed to mimic human dialog through text or voice interactions (Deryugina, 2010; Følstad et al., 2021; Smutny & Schreiberova, 2020). Utilizing Natural Language Processing (NLP) and Machine Learning (ML), chatbots analyze user inputs, adapt responses, and continuously enhance their performance, making interactions more personalized and efficient (Adiguzel et al., 2023; Wang et al., 2021). Modern chatbots, including those powered by AI, have moved beyond entertainment to serve as tools for education, healthcare, customer service, and personal assistance (Jiang et al., 2022; Rudolph et al., 2023; Smutny & Schreiberova, 2020). See Figure 1.

Figure 1. Chatbot workflow (adopted from Aleedy et al., 2022: p. 662).

The emergence of personal digital assistants, such as Apple’s Siri, Amazon’s Alexa, and Google Assistant, marked a turning point in chatbot development, showcasing capabilities for real-time voice interaction and task automation (Medhi Thies et al., 2017; Wang et al., 2021). Since the introduction of ChatGPT in 2022, advancements in generative AI have further accelerated the adoption of chatbots across industries, including education (Rudolph et al., 2023; Tlili et al., 2023). Chatbots today are deployed on platforms ranging from websites and mobile apps to instant messaging tools, offering a variety of input methods and facilitating seamless interaction (Belda-Medina & Calvo-Ferrer, 2022; Zahour et al., 2020). Figure 2 illustrates the generic architecture of chatbot systems.

Figure 2. The generic architecture of chatbot systems (adopted from Luo et al., 2022: p. 3).

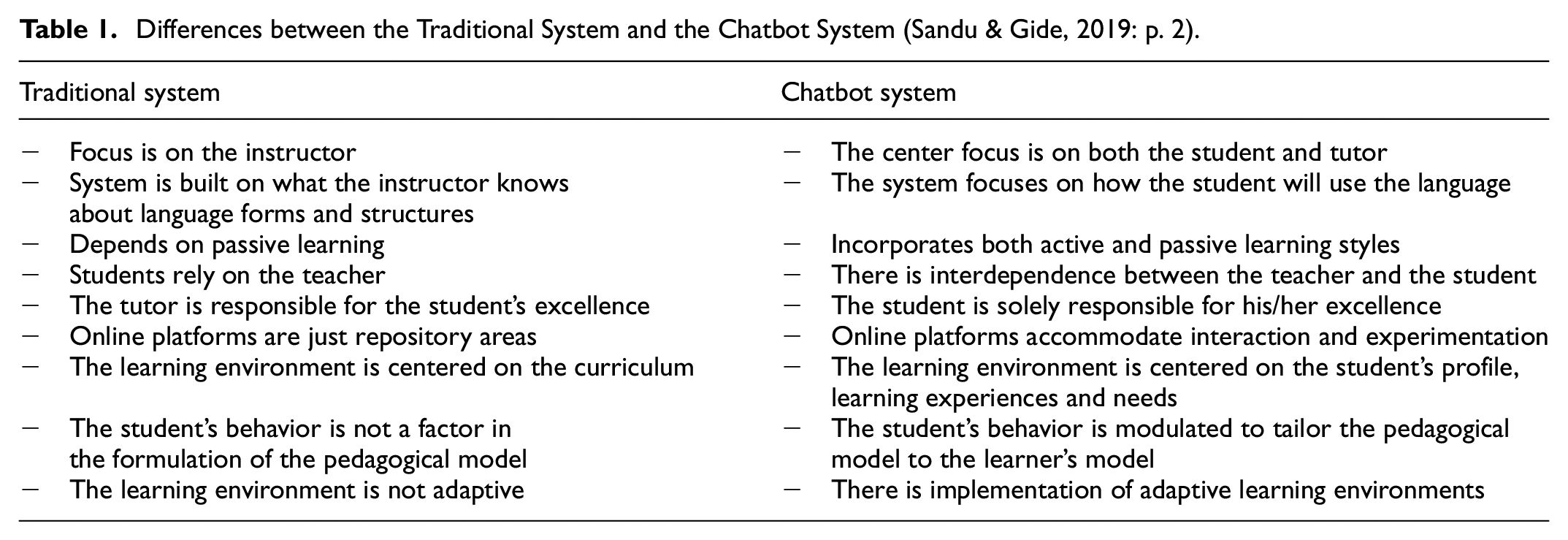

In education, chatbots have shown significant potential as pedagogical agents. They enhance personalized learning by providing tailored feedback, motivating students, and enabling one-on-one interactions in large-scale settings, such as MOOCs (Bozkurt & Sharma, 2023; Kuhail et al., 2023; Winkler & Söllner, 2018). By leveraging AI techniques, chatbots can assess behavior, adapt to individual needs, and reduce stress, thus transforming the learning process into a more interactive and efficient experience (Ait Baha et al., 2023; Luo et al., 2022; Wang et al., 2021). These systems also support administrative tasks, such as answering student queries and guiding career decisions, improving workflows for educators and staff (Kuhail et al., 2023; Zahour et al., 2020). See Table 1.

Table 1. Differences between the Traditional System and the Chatbot System (Sandu & Gide, 2019: p. 2).

However, the widespread adoption of chatbots raises concerns regarding their emotional and psychological impact. While they can simulate human-like interactions, chatbots often lack authentic empathy and nuanced understanding, essential for addressing complex emotional needs in higher education (Adamopoulou & Moussiades, 2020; Margalit, 2016). The convenience of chatbot use may inadvertently reduce real-life interpersonal interactions, with some students relying excessively on AI for emotional support, potentially leading to isolation and frustration when expectations are unmet (Černý, 2023; Xie & Pentina, 2022).

Research indicates that while chatbot interactions are initially engaging, their novelty often wears off over time, resulting in diminished feelings of connection and support (Croes & Antheunis, 2021). Although features like humor and “bot personalities” can enhance user experience, these interactions are no substitute for genuine human empathy, which remains crucial in managing stress and fostering well-being (Beattie et al., 2020; Meng & Dai, 2021).

Despite their advantages in streamlining educational processes, chatbots face limitations in fully addressing the emotional and psychological dimensions of learning environments. Concerns regarding privacy, bias, and the accuracy of chatbot responses further complicate their integration into education (Adiguzel et al., 2023; Kuhail et al., 2023). Additionally, faculty and administrative staff may view chatbots as a threat to their roles, creating resistance to adoption (Zawacki-Richter et al., 2019).

Existing literature underscores the transformative potential of chatbots in enhancing learning experiences but reveals critical gaps in understanding their impact on human connection and emotional well-being in higher education. This study seeks to bridge these gaps by examining how AI chatbots influence students’ relational dynamics, emotional support, and overall satisfaction, ultimately aiming to inform the development of more emotionally responsive chatbot systems.

Research Methodology

Research Design and Instrumentation

This study employed a quantitative research design to explore the impact of AI chatbots on the perceived loss of human connection among higher education students. The primary focus was on students’ concerns, experiences, and satisfaction levels with chatbot interactions.

An online survey questionnaire was developed to measure three core constructs: (1) perceptions of human connection, (2) experiences with chatbots, and (3) overall satisfaction with AI chatbots. The instrument consisted of nine items rated on a 5-point Likert scale, ranging from “Strongly Disagree” to “Strongly Agree” or “Very Dissatisfied” to “Very Satisfied.” Demographic data, including gender, subjective AI expertise, and AI usage frequency, were also collected. Items were informed by existing literature on AI-driven educational tools and human-computer interaction (Appendix 1). The survey introduction included a detailed consent form outlining the study’s aim, scope, and key definitions (e.g., “AI expertise” and “AI usage”), ensuring participants had a clear understanding of the context.

Validity and Reliability

To ensure validity, the initial survey items were reviewed by three higher education experts specializing in educational technology and survey research, and revisions were made based on their feedback. The reliability of the final nine-item questionnaire was tested using Cronbach’s alpha, which showed strong internal consistency (α = .87). Subscale reliability scores were:

Perceptions of Human Connection: α = .83

Experiences with Chatbots: α = .85

Overall Satisfaction: α = .72
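For reference, Cronbach’s alpha for a scale of k items is k/(k − 1) multiplied by (1 − sum of item variances / variance of the total score). The following is a minimal sketch of that computation in plain Python. It is not the SPSS procedure used in the study, and the responses shown are made up purely for illustration; the function name `cronbach_alpha` is likewise an assumption.

```python
from statistics import variance


def cronbach_alpha(items):
    """Cronbach's alpha for a list of items.

    `items` holds one list of responses per item; every list has one
    entry per respondent, in the same respondent order.
    """
    k = len(items)
    if k < 2:
        raise ValueError("alpha needs at least two items")
    n = len(items[0])
    if any(len(col) != n for col in items):
        raise ValueError("all items need the same number of responses")
    # Total score per respondent (sum across items).
    totals = [sum(scores) for scores in zip(*items)]
    item_variance_sum = sum(variance(col) for col in items)
    return (k / (k - 1)) * (1 - item_variance_sum / variance(totals))


# Hypothetical 5-point Likert responses (NOT the study's data):
# three items answered by six respondents.
responses = [
    [5, 4, 4, 3, 5, 2],
    [5, 4, 5, 3, 4, 2],
    [4, 4, 5, 2, 5, 1],
]
alpha = cronbach_alpha(responses)
print(f"alpha = {alpha:.2f}")
```

Values closer to 1 indicate that the items vary together, which is what the reported subscale coefficients summarize.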

Data were analyzed using SPSS (v. 26), with descriptive statistics, multivariate analysis of variance (MANOVA), and regression analysis employed to examine relationships among demographic factors, perceptions of human connection, chatbot experiences, and overall satisfaction.

While factor analysis was not conducted due to the brevity of the scale, future research may explore its factor structure using exploratory or confirmatory methods.

Sampling and Data Collection

The study targeted higher education students from various disciplines, academic levels, and universities in Saudi Arabia. A combination of random and snowball sampling methods was employed. Initial participants were selected through random sampling, and additional participants were recruited through email and personal networks using a snowball approach. A total of 819 students participated in the survey.

Ethical guidelines were strictly followed, including securing institutional review board approval and obtaining informed consent from all participants. Confidentiality and privacy were maintained throughout the study.

Results

Students’ Demographics

Table 2 presents the students’ demographics, including gender, subjective AI expertise, and frequency of AI usage. The sample consisted of 398 male students (48.6%) and 421 female students (51.4%). Regarding subjective AI expertise, 406 students (49.6%) rated their expertise as low, 348 (42.5%) as medium, and 65 (7.9%) as high. In terms of frequency of AI usage, 252 students (30.8%) reported rarely using AI, 139 (17.0%) reported monthly use, 168 (20.5%) weekly use, and 260 (31.7%), the largest group, daily use.

Table 2. Students’ Demographics (N = 819).
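The percentages reported for the demographic groups follow directly from the counts. The short check below recomputes them; the counts are taken from the text, while the dictionary layout and the function name `percentages` are illustrative assumptions.

```python
# Counts as reported in the text; N is the stated sample size.
N = 819
counts = {
    "gender": {"male": 398, "female": 421},
    "AI expertise": {"low": 406, "medium": 348, "high": 65},
    "AI usage": {"rarely": 252, "monthly": 139, "weekly": 168, "daily": 260},
}


def percentages(group, total):
    """Share of each category in percent, rounded to one decimal place."""
    return {label: round(100 * n / total, 1) for label, n in group.items()}


for name, group in counts.items():
    # Each demographic variable should account for every respondent.
    assert sum(group.values()) == N
    print(name, percentages(group, N))
```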

Students’ Concerns, Experiences, and Satisfaction Levels (RQ1)

Table 3 presents the mean scores and standard deviations for students’ concerns about human connection, experiences with chatbots, and satisfaction with chatbots. Regarding concerns related to human connection and emotional support, students strongly agreed with statements such as “interacting with chatbots has diminished my sense of human connection” (M = 4.42, SD = 0.85), “I don’t feel emotionally supported when interacting with chatbots” (M = 4.34, SD = 0.90), and “chatbots have reduced opportunities for meaningful social interactions” (M = 4.31, SD = 0.93). The overall mean score for concerns related to human connection was 4.36, with a standard deviation of 0.77.

Table 3. Students’ Perspectives (N = 819).

In terms of experiences with chatbots, students reported positive perceptions. They agreed that AI chatbots have been helpful in providing personalized assistance (M = 4.11, SD = 0.99), enhanced their learning experience (M = 4.17, SD = 0.97), and made it easier to access information and resources (M = 4.30, SD = 0.89). The total mean score for experiences with chatbots was 4.19, with a standard deviation of 0.84. Likewise, students expressed high levels of satisfaction with AI chatbots. They perceived chatbots as effective in addressing their needs (M = 4.24, SD = 0.94), easy to use and interact with (M = 4.28, SD = 0.90), and impactful on their learning outcomes (M = 4.35, SD = 0.88). The overall mean score for satisfaction with chatbots was 4.29, with a standard deviation of 0.72.
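The overall means reported for the three constructs are consistent with each composite being the unweighted mean of its three items. The sketch below checks this arithmetic under that assumption, together with the assumption of complete responses on every item; neither is stated explicitly in the text. The composite standard deviations cannot be recovered this way, because they also depend on how the items correlate.

```python
# Item means as reported in the text, grouped by construct.
item_means = {
    "concerns": [4.42, 4.34, 4.31],
    "experiences": [4.11, 4.17, 4.30],
    "satisfaction": [4.24, 4.28, 4.35],
}


def composite_mean(means):
    """Unweighted mean of item means (assumes no missing responses)."""
    return sum(means) / len(means)


composites = {name: round(composite_mean(m), 2) for name, m in item_means.items()}
print(composites)
```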

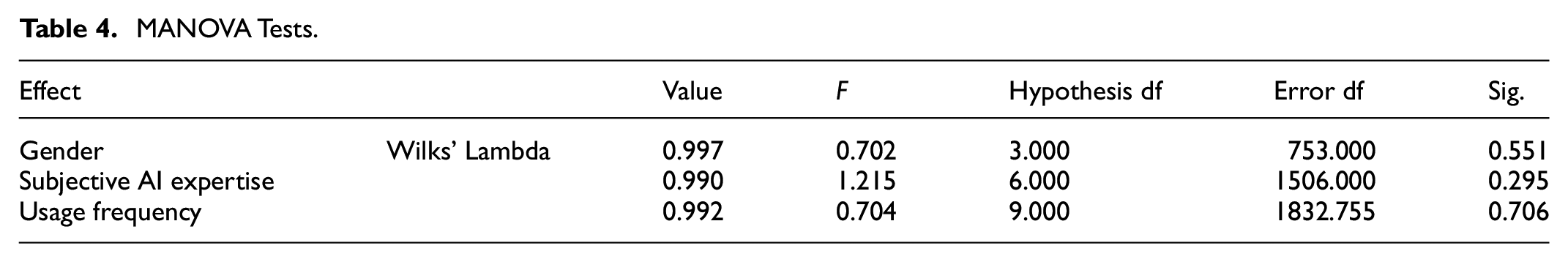

The Influence of Students’ Demographics on Their Perspectives (RQ2)

A MANOVA was used to test whether students’ demographics (gender, subjective AI expertise, and frequency of AI usage; the independent variables) were related to their perspectives on AI chatbots (concerns about human connection, experiences, and satisfaction; the dependent variables). As Table 4 shows, none of the tests is statistically significant at the conventional .05 level. This suggests that, in this sample, students’ demographic characteristics were not significantly associated with their perspectives on AI chatbots.

Table 4. MANOVA Tests.
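To make the logic of a MANOVA concrete, the sketch below computes Wilks’ lambda, one of the multivariate statistics commonly reported for this test, for a single grouping factor. Lambda is the ratio det(W) / det(T), where W is the within-group and T the total sums-of-squares-and-cross-products matrix; values near 1 mean the groups barely differ on the combined outcomes. This is an illustration only: the scores are hypothetical, the helper names are assumptions, and the text does not say which statistic Table 4 reports.

```python
def mean_vector(rows):
    """Column means of a list of equal-length rows."""
    n = len(rows)
    return [sum(col) / n for col in zip(*rows)]


def sscp(rows, center):
    """Sums of squares and cross-products of rows about `center`."""
    p = len(center)
    m = [[0.0] * p for _ in range(p)]
    for row in rows:
        d = [x - c for x, c in zip(row, center)]
        for i in range(p):
            for j in range(p):
                m[i][j] += d[i] * d[j]
    return m


def det(matrix):
    """Determinant via Gaussian elimination with partial pivoting."""
    a = [row[:] for row in matrix]
    n = len(a)
    result = 1.0
    for c in range(n):
        pivot = max(range(c, n), key=lambda r: abs(a[r][c]))
        if a[pivot][c] == 0:
            return 0.0
        if pivot != c:
            a[c], a[pivot] = a[pivot], a[c]
            result = -result  # a row swap flips the sign
        result *= a[c][c]
        for r in range(c + 1, n):
            factor = a[r][c] / a[c][c]
            for k in range(c, n):
                a[r][k] -= factor * a[c][k]
    return result


def wilks_lambda(groups):
    """Wilks' lambda for a dict mapping group label -> rows of outcome scores."""
    all_rows = [row for rows in groups.values() for row in rows]
    total = sscp(all_rows, mean_vector(all_rows))
    p = len(total)
    within = [[0.0] * p for _ in range(p)]
    for rows in groups.values():
        group_sscp = sscp(rows, mean_vector(rows))
        for i in range(p):
            for j in range(p):
                within[i][j] += group_sscp[i][j]
    return det(within) / det(total)


# Hypothetical composite scores (concerns, experiences, satisfaction)
# for two groups; NOT the study's data.
scores = {
    "male": [[4.3, 4.0, 4.3], [4.7, 4.3, 4.0], [4.0, 4.7, 4.7], [4.3, 3.7, 4.0]],
    "female": [[4.7, 4.3, 4.3], [4.0, 4.0, 4.7], [4.3, 4.7, 4.0], [4.7, 4.0, 4.3]],
}
lam = wilks_lambda(scores)
print(f"Wilks' lambda = {lam:.3f}")
```

A full analysis would convert lambda to an approximate F statistic and handle several factors at once, which statistical packages do automatically.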

The Relationship Between Students’ Experiences, Satisfaction and Their Concerns (RQ3)

Regression analysis was conducted to examine the relationship between participants’ concerns about human connection and emotional support, as the dependent variable, and their experiences with chatbots and satisfaction with chatbot interactions, as the independent variables. Table 5 presents the ANOVA results for the regression model predicting the dependent variable “concerns of human connection and emotional support” from the predictors “satisfaction with AI chatbots” and “experiences with chatbots.”

Table 5. ANOVA Test (Dependent Variable: Concerns of Human Connection).

The ANOVA shows that the regression model is statistically significant (F = 383.556, p < .001), indicating that the predictors jointly account for a significant share of the variability in participants’ concerns about human connection and emotional support. In other words, “satisfaction with AI chatbots” and “experiences with chatbots,” taken together, significantly predict these concerns.
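The F-value of a regression model can be related to the proportion of variance it explains. For an ordinary least squares model with k predictors and n observations, R² = kF / (kF + n − k − 1). The sketch below applies this identity under two assumptions that the text does not confirm: that all 819 responses entered the model, and that the two named predictors were the only ones. Under those assumptions, the reported F would correspond to an R² of roughly .48. The function names are illustrative.

```python
def r_squared_from_f(f_value, n_predictors, n_obs):
    """R^2 implied by the overall F test of an OLS regression."""
    df_resid = n_obs - n_predictors - 1
    explained = f_value * n_predictors
    return explained / (explained + df_resid)


def f_from_r_squared(r2, n_predictors, n_obs):
    """Inverse of the identity above, useful as a consistency check."""
    df_resid = n_obs - n_predictors - 1
    return (r2 / n_predictors) / ((1 - r2) / df_resid)


# F is the reported value; n_obs=819 and n_predictors=2 are ASSUMPTIONS
# (no missing cases, no additional predictors).
r2 = r_squared_from_f(383.556, n_predictors=2, n_obs=819)
print(f"implied R^2 = {r2:.3f}")
```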

Further, the regression analysis, as shown in Table 6, indicates that both “experiences with chatbots” (β = .64, p < .001) and “satisfaction with chatbots” (β = .11, p < .001) have statistically significant and positive coefficients in predicting participants’ concerns about human connection and emotional support. These findings suggest that students who report more positive experiences with chatbots and greater satisfaction with chatbot interactions also tend to express higher levels of concern regarding human connection and emotional support.

Table 6. Coefficients (Dependent Variable: Concerns of Human Connection).
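With two predictors, standardized regression coefficients can be written in terms of the three pairwise correlations: β₁ = (r_y1 − r_y2·r_12) / (1 − r_12²), and symmetrically for β₂. The sketch below implements this in plain Python on hypothetical composite scores. It illustrates how such coefficients arise and is not a reproduction of the SPSS analysis; the data and function names are assumptions.

```python
from math import sqrt


def pearson(x, y):
    """Pearson correlation between two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def standardized_betas(y, x1, x2):
    """Standardized OLS coefficients and R^2 for a two-predictor model."""
    r_y1, r_y2, r_12 = pearson(y, x1), pearson(y, x2), pearson(x1, x2)
    denom = 1 - r_12 ** 2  # zero only if the predictors are perfectly collinear
    beta1 = (r_y1 - r_y2 * r_12) / denom
    beta2 = (r_y2 - r_y1 * r_12) / denom
    r_squared = beta1 * r_y1 + beta2 * r_y2
    return beta1, beta2, r_squared


# Hypothetical composite scores for eight students (NOT the study's data).
experiences = [4.0, 4.3, 3.7, 5.0, 4.7, 3.3, 4.3, 4.0]
satisfaction = [4.3, 4.0, 4.0, 4.7, 5.0, 3.7, 4.3, 4.7]
concerns = [4.3, 4.3, 3.7, 5.0, 4.7, 3.7, 4.7, 4.0]

b_exp, b_sat, r2_demo = standardized_betas(concerns, experiences, satisfaction)
print(f"beta(experiences) = {b_exp:.2f}, beta(satisfaction) = {b_sat:.2f}")
```

Because each coefficient is adjusted for the other predictor, a predictor that correlates with the outcome mainly through its overlap with the other predictor receives a smaller coefficient.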

Discussion

The findings of this study contribute to the broader discourse on AI integration in education (Adiguzel et al., 2023; Chen et al., 2022; Kazoun et al., 2022; Kooli, 2023; Medhi Thies et al., 2017; Rudolph et al., 2023; Smutny & Schreiberova, 2020; Tlili et al., 2023; Wang et al., 2021; Winkler & Söllner, 2018) by illuminating how chatbots affect both cognitive (e.g., access to information) and socio-emotional (e.g., feelings of connection) dimensions of student life. In particular, participants reported concerns regarding the reduced sense of human connection and emotional support when interacting with AI chatbots, aligning with prior work suggesting that technology-mediated interactions may compromise interpersonal engagement (Croes & Antheunis, 2021; Li & Zhang, 2023; Sweeney et al., 2021). This highlights the importance of examining the psychological and relational impacts of AI-driven tools, especially in higher education contexts where students often rely on robust social and emotional networks for well-being and academic success (Dong et al., 2020; Fügener et al., 2021; Natale, 2021; Ryan, 2020; Shneiderman, 2020; Suen et al., 2020; Wang et al., 2020; Xie & Pentina, 2022).

Despite these reservations, students simultaneously emphasized the practical benefits of AI chatbots, such as personalized assistance, enhanced learning experiences, and streamlined access to information. These positive evaluations are in keeping with existing studies indicating that chatbots can promote efficiency and satisfaction in educational settings (Karyotaki et al., 2022; Kuhail et al., 2023; Li & Zhang, 2023; Sandu & Gide, 2019; Shoufan, 2023; Zahour et al., 2020). The contradictory nature of these viewpoints—concerns about diminished emotional connections alongside an appreciation for chatbots’ functional advantages—underscores the dual impact of AI chatbots: they can simultaneously empower and alienate students, depending on the dimension under consideration.

Crucially, the regression analysis revealed that greater experience and satisfaction with chatbots were positively associated with concerns about the loss of human connection. This finding suggests that, as students become more aware of chatbots’ capabilities and begin to rely on them, they also develop a heightened sensitivity to the limitations of AI in fulfilling emotional and relational needs (Adamopoulou & Moussiades, 2020; Han, 2020; Li & Zhang, 2023; Margalit, 2016; Sands et al., 2021). From a psychological standpoint, this dynamic may reflect a growing realization that chatbots, despite their convenience and effectiveness, cannot replicate the complexity and empathy inherent in human interactions (Sweeney et al., 2021; Xie & Pentina, 2022). Thus, the present study refines our understanding of how user familiarity with chatbots may paradoxically amplify rather than diminish concerns regarding emotional support and human connection.

Given these multifaceted findings, several strategies can help mitigate the negative outcomes of chatbot use while retaining its educational benefits:

Hybrid Interaction Models: Educators and university administrators could integrate AI chatbots into a hybrid communication model, where chatbots handle routine, low-stakes queries (e.g., administrative FAQs), freeing human instructors or counselors to focus on emotionally rich interactions. This approach could preserve essential opportunities for interpersonal connection.

Enhanced Social Presence: Developers can incorporate social cues (e.g., empathic responses, expressive language, or interactive elements) within chatbot interfaces to create a greater sense of social presence. While these enhancements may never fully match human empathy, they can help address students’ emotional needs more effectively (Pamungkas, 2019; Skjuve et al., 2021).

User Education and Training: Providing training sessions that clarify the capabilities, limitations, and appropriate uses of chatbots can help manage expectations, reducing the risk of over-reliance on AI tools for emotional support. Students should be encouraged to balance technology use with in-person interactions and campus resources (e.g., counseling services).

Institutional Guidelines and Policies: Higher education institutions should develop policies that encourage ethical and mindful use of AI, ensuring that chatbots complement rather than replace human-led support. Clear guidelines could outline scenarios where human intervention is necessary, particularly in cases involving emotional distress or complex problem-solving.

By implementing these strategies, higher education institutions may leverage the best aspects of chatbots—such as convenience and efficiency—while attenuating the potential pitfalls related to reduced human connection and emotional support.
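The hybrid interaction model and the escalation guidelines described above can be pictured as a simple routing rule: routine administrative queries go to the chatbot, while anything that signals distress, or that cannot be classified, goes to a person. The sketch below is a deliberately naive illustration of that idea. The keyword lists, class, and function names are hypothetical, and keyword matching is in no way a validated method for detecting emotional distress; a real deployment would need criteria set by the institution and its counseling staff.

```python
from dataclasses import dataclass

# Hypothetical keyword lists, for illustration only.
DISTRESS_TERMS = {"overwhelmed", "hopeless", "anxious", "lonely", "panic"}
ROUTINE_TERMS = {"deadline", "registration", "timetable", "library", "fee"}


@dataclass
class Routing:
    handler: str  # "chatbot" or "human"
    reason: str


def route_query(text: str) -> Routing:
    """Decide who should answer a student query (toy rule-based sketch)."""
    cleaned = text.lower()
    for mark in "?.,!":
        cleaned = cleaned.replace(mark, " ")
    words = set(cleaned.split())
    # Distress is checked first so it always overrides a routine match.
    if words & DISTRESS_TERMS:
        return Routing("human", "possible emotional distress")
    if words & ROUTINE_TERMS:
        return Routing("chatbot", "routine administrative query")
    # Anything unrecognized defaults to a person rather than the chatbot.
    return Routing("human", "unclassified query")


print(route_query("When is the registration deadline?"))
print(route_query("I feel overwhelmed and lonely this term."))
```

Defaulting unclassified queries to a human reflects the recommendation that chatbots complement rather than replace human-led support.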

Conclusions and Implications

Overall, this study illuminates the nuanced relationship between AI chatbots and higher education students’ well-being, revealing that increased satisfaction with and usage of chatbots can coexist with heightened concerns about human connection and emotional support. These dual findings extend our understanding of how AI-driven tools influence student experiences, reinforcing that chatbots offer tangible benefits—such as personalized learning pathways and immediate feedback—yet remain limited in replicating genuine human empathy and relational depth.

From a practical standpoint, the results underscore the importance of balanced chatbot integration within university curricula. While chatbots can enhance students’ academic performance and provide on-demand resources, meaningful interpersonal interaction remains critical for holistic student development. Administrators and educators must, therefore, craft guidelines and training initiatives that foreground human relationships, ensuring chatbots serve as supplements to, not substitutes for, essential social and emotional support systems.

Additionally, the findings have implications for policy and instructional design. Policymakers could revise existing directives to better regulate AI usage and emphasize ethical considerations, data privacy, and the safeguarding of emotional well-being. Instructional designers might incorporate social presence features into AI interfaces, develop personalized pathways that respect individual differences, and ensure that students have ready access to human facilitators when encountering complex emotional or pedagogical challenges.

Limitations and Future Directions

This study has several limitations. First, it focuses on a sample of higher education students in Saudi Arabia; thus, the generalizability of the findings may be limited to similar cultural and institutional contexts. Future research should target diverse populations across multiple regions and educational systems to validate and extend these conclusions.

Second, the study primarily relies on self-report measures, which can introduce bias or incomplete reporting. Incorporating qualitative methods, such as interviews, and objective data, such as log files of chatbot interactions, could provide a richer understanding of how and why students use chatbots, as well as how these tools might influence their emotional and social experiences.

Third, while the quantitative approach effectively identifies correlations between student concerns and chatbot use, a longitudinal design or experimental framework would better elucidate causation and changes over time. Future studies could track how students’ perceptions evolve as chatbots are refined to exhibit more adaptive, empathic behaviors.

Lastly, the study did not conduct a formal factor analysis for the scale due to its relatively small number of items. Future researchers may wish to perform exploratory or confirmatory factor analyses on larger samples to further validate the instrument and explore additional dimensions of chatbot impact (e.g., social presence, trust, mental health considerations).

All in all, while this study demonstrates that AI chatbots can enhance certain aspects of the educational experience, it equally underscores the essential role of authentic human connection in higher education. By attending to both the benefits and constraints of chatbot technology, stakeholders can create a more holistic, student-centered learning environment—one that effectively harnesses innovation while preserving the indispensable emotional support and interpersonal bonds that remain central to students’ academic and personal growth.

Footnotes

Appendix 1

Funding

The author received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The data that support the findings of this study are available upon reasonable request.