Abstract

Source evaluation is central to effective reading and decision making in digital environments. However, L2 students’ processes of source evaluation and their role in task performance are not well understood. To fill this gap, the study examined 25 Chinese EFL undergraduates’ online processes of source evaluation in an Internet environment. By analyzing data from pre-task interviews, screen recordings, stimulated recalls, and students’ selected texts and written products, the study identified four evaluation behaviours that students engaged in and six evaluation criteria that they applied. On average, students spent more time scanning search results than on other evaluation behaviours and applied the criterion of determining relevance to the task more often than other criteria. The follow-up Spearman correlation analysis showed that scanning search results was significantly and positively associated with the number of reliable texts, while the criteria of determining relevance to the task, examining source features and scrutinizing information accuracy were significantly and positively associated with total writing scores. Overall, the findings offer new insights into L2 students’ source evaluation, with implications for curriculum designs, teaching practices, and task designs aimed at helping EFL students become more critical readers in the digital age.

Introduction

Source evaluation refers to students’ use of key aspects of content- and source-based evaluation criteria (Macedo-Rouet et al., 2019), such as content relevance and usefulness and source reliability. The advent and development of the Internet and new technologies have given rise to distinct literacies and new activities, posing numerous challenges for students and necessitating the acquisition of different, additional strategies and skills (Cho, 2014; Leu et al., 2014). The ability to critically evaluate information and texts is an essential and higher-order strategy unique to online reading (Cho et al., 2018). Among various new, additional strategies and skills, source evaluation is highly important and central to effective reading and decision making in digital literacy contexts (Cho, 2014). Source evaluation, especially the processes of source evaluation, has thus been an emerging area of academic interest in L1 educational contexts over the past two decades (e.g., Brand-Gruwel et al., 2009; Cho, 2014; Cho & Afflerbach, 2015; Forzani, 2018; Kiili et al., 2017; Mason et al., 2018). L2 students, who have more limited grammar knowledge and linguistic repertoires than L1 students, may face greater difficulties when engaging in online research and comprehension, and may therefore require more pedagogical interventions for guidance and improvement. Thus, research that focuses on L2 students’ processes of source evaluation is important.

However, compared to the prevalence of L1 research on source evaluation, only a few studies have investigated L2 students’ processes of source evaluation (Sendur et al., 2021; Stapleton, 2005; Stapleton et al., 2006; Vorobel et al., 2021). Of the limited existing literature, most focused on L2 students’ evaluation criteria via indirect observations such as interviews and questionnaires (Nguyen & Buckingham, 2019; Stapleton, 2003, 2005; Stapleton et al., 2006; Thompson et al., 2013). Few of the studies have ever examined L2 students’ processes of source evaluation through direct observations (Sendur et al., 2021; Vorobel et al., 2021). Given the importance of source evaluation and L2 students’ challenges in online research and comprehension, more studies exploring how L2 students evaluate multiple online sources to use in academic tasks are needed to get a deeper understanding of the topic.

The present study, then, gives a detailed and comprehensive account of EFL undergraduates’ processes of source evaluation in an authentic, unconstrained Internet environment. It aims to investigate students’ specific evaluation behaviours and criteria when searching for, locating and reading multiple online sources to use in an L2 writing task. Such a study can deepen the knowledge and understanding of L2 students’ processes of source evaluation and is expected to contribute to crafting explicit instruction to guide and support L2 students’ development of digital literacy competence. Specifically, the purposes of the present study are threefold: to examine the specific behaviours involved in source evaluation, the criteria students adopt for source evaluation, and the relationship between evaluation behaviours, criteria, and task performance.

Literature Review

Theoretical Framework

Two models serve as the theoretical framework for the present study: the model of information problem solving (IPS) (Brand-Gruwel et al., 2009) and new literacies (Leu et al., 2007), which was later refined as new literacies of online research and comprehension (Leu et al., 2014). Both are concerned with source use in digital environments and involve a variety of processes, strategies and skills that students need for successful online research and comprehension.

The model of IPS regards students’ information problem solving as a series of processes and skills. It emphasizes five constituent processes and skills involved in solving information problems: defining the information problem, searching for information, scanning information, processing information, and organizing and presenting information. Each process or skill includes a variety of subprocesses or subskills. Additionally, reading skills, evaluating skills, and computer skills are important to the performance of these processes and skills. In online research and comprehension, students face a myriad of sources and complicated information problems. They must evaluate information and sources from numerous search results and nonlinear digital texts during their searching and navigation processes.

The second theoretical perspective is new literacies of online research and comprehension (Leu et al., 2014). This theoretical lens is associated with what occurs when people read online to learn and integrate sources to accomplish academic and non-academic assignments. As argued by Leu et al. (2014), the advent of new technologies requires “additional social practices, skills, strategies, and dispositions to take full advantage of the affordances each contains” (p. 1159). According to the theoretical framework, online research and comprehension requires new and emerging skills with technologies, strategies for searching, reading and integrating multiple online sources, and practices such as how to judge content quality, information reliability and source reliability (Leu et al., 2004, 2014). It comprises five components: identifying and defining important questions, locating online information, critically evaluating online information, synthesizing online information and communicating online information. Each component includes a range of abilities, strategies and skills that students need to acquire for academic success. For EFL learners, seeking information online for learning tasks can be an even heavier burden. They may not be adequately prepared to select appropriate and authoritative information and sources and may encounter difficulties. For example, L2 readers, especially low-performing L2 readers, have been found to seldom evaluate content quality and source reliability (Sendur et al., 2021; Stapleton, 2005; Stapleton et al., 2006; Vorobel et al., 2021).

L1 Students’ Processes of Source Evaluation: Behaviours and Criteria

Researchers in L1 educational contexts initiated research into students’ processes of source evaluation. Being an integral part and skill for new literacies of online research and comprehension (Leu et al., 2004, 2014), source evaluation has received much attention in L1 educational contexts over the past decades. Prior L1 research has focused on what criteria are employed by students to evaluate source content and sources of texts, what challenges they face, and what individual factors contribute to source evaluation (e.g., Brand-Gruwel et al., 2009; Bråten et al., 2017; Cho, 2014; Cho & Afflerbach, 2015; Forzani, 2018; Hämäläinen et al., 2021; Kiili et al., 2017; Mason et al., 2018; McCrudden et al., 2016; Walraven et al., 2009; Watson, 2014).

A strand of prior L1 studies examined students’ processes of source evaluation in an online setting via direct observations, such as think-aloud protocols and eye-tracking. These prior studies have documented a series of evaluation behaviours and criteria involved (Brand-Gruwel et al., 2005, 2017; Coiro et al., 2015; Goldman et al., 2012; Walraven et al., 2009; Watson, 2014). For example, Walraven et al. (2009) found students’ processes of source evaluation involved three behaviours: evaluating search results, evaluating information and evaluating sources. They also reported that students used various evaluation criteria, such as evaluating rank in hit lists, language, connection to task, with the criterion of evaluating title/summary of search results in the hit lists being the most frequently used.

Another strand of prior L1 studies focused on students’ challenges in their processes of source evaluation and has produced mixed results. The majority of prior studies have demonstrated that students often made superficial and shallow evaluation, focusing more on content usefulness or relevance, while rarely assessing aspects of source reliability, such as author expertise and currency (Brand-Gruwel et al., 2017; Coiro et al., 2015; Forzani, 2018; Goldman et al., 2012; Kiili et al., 2008, 2017; Rodicio, 2015; Walraven et al., 2009; Watson, 2014). In contrast, some studies have yielded inconsistent findings, showing that students were quite aware of the need to evaluate information quality and source reliability (Hämäläinen et al., 2021) and were confident in their abilities to evaluate sources (List et al., 2016; Paul et al., 2017). Specifically, students were capable of citing reasons for their source evaluation and were aware of benefits of source evaluation (List et al., 2016; Paul et al., 2017).

A third strand of prior L1 studies was comparative and focused on individual factors influencing source evaluation. Most of these prior studies have reported that epistemic beliefs, prior knowledge, topic familiarity, reading ability, and self-regulation played a role in students’ evaluation of information relevance and usefulness and source reliability (e.g., Bråten et al., 2017; Forzani, 2018; Hämäläinen et al., 2021; Kiili et al., 2017; Mason et al., 2018; McCrudden et al., 2016).

The prior L1 studies provide deep insights into students’ processes of source evaluation and make valuable contributions to our understanding of the topic. However, most of the prior studies were conducted in a constrained task environment (e.g., with limited access to online sources; Cho et al., 2018), focusing on students’ evaluation of information and sources based on several pre-selected webpages or sources. An authentic task environment, such as the Internet, may overwhelm students with a multitude of challenges. Yet little is known about students’ processes of source evaluation when engaging with unconstrained sources. Further research is needed to gain a more comprehensive understanding of how students interact with online sources and the challenges they face in authentic settings.

L2 Students’ Processes of Source Evaluation: Behaviours and Criteria

There has been little research on students’ processes of source evaluation in L2 educational contexts. Although limited in amount, the existing literature points mostly to the criteria that students adopt for source evaluation (Nguyen & Buckingham, 2019; Sendur et al., 2021; Stapleton, 2003, 2005; Stapleton et al., 2006; Thompson et al., 2013; Vorobel et al., 2021; Wette, 2017, 2018). For example, Wette (2018) and Nguyen and Buckingham (2019) both found that undergraduate EFL students and EFL master’s degree students perceived authoritativeness, relevance and recency as their major criteria for evaluating multiple sources. Vorobel et al. (2021) reported that students often evaluated information and sources based on relevance, interest, language of sources, the importance of sources and the reliability of information within sources.

Some of the prior studies have also reported L2 students’ difficulties in their processes of source evaluation. Specifically, some studies have demonstrated that L2 students paid more attention to content relevance than content accuracy and objectivity (Radia & Stapleton, 2008; Sendur et al., 2021; Stapleton, 2005; Stapleton et al., 2006; Vorobel et al., 2021), indicating that EFL students had difficulties in evaluating multiple texts, especially in evaluating key aspects of source reliability.

Insightful as the abovementioned research is, it has several limitations. First, to date, only a few studies have examined L2 students’ source evaluation, although researchers have claimed that source evaluation skills and critical evaluation literacy exert significant influences on students’ performance, such as academic L2 writing (Radia & Stapleton, 2008). Second, few studies have explored L2 students’ online processes of source evaluation in naturalistic task environments via direct observations (Vorobel et al., 2021), so a comprehensive understanding of L2 students’ evaluation behaviours and their challenges in the processes is still lacking. Additionally, few prior L2 studies have investigated whether students’ processes of source evaluation contributed to their task performance.

Taken together, our review of relevant L1 and L2 studies highlights the need for more in-depth investigations into L2 students’ processes of source evaluation in authentic task environments, such as the Internet. In other words, there is a pressing need for more comprehensive exploration to gain insights into how L2 students engage in source evaluation, how they judge multiple sources in an Internet environment, and to what extent these activities affect their task performance. Such studies could provide valuable insights into how these students navigate the complexities of evaluating online information and sources and the specific challenges they encounter. Furthermore, investigating the relationship between source evaluation and task performance could offer a more holistic understanding of how these skills contribute to overall academic success. Therefore, the present study aims to describe a group of EFL undergraduate students’ processes of source evaluation in a relatively uncontrolled online context. It is guided by the following research questions:

What evaluation behaviours do students engage in when searching for L2 texts in an Internet environment?

What criteria do students use for source evaluation when searching for L2 texts in an Internet environment?

To what extent are students’ evaluation behaviours and criteria associated with their task performance?

Methods

Context and Participants

The study was set at a public, comprehensive university in China. The university offers a four-year undergraduate program for English majors who specialize in English teaching or Commercial English. A significant portion of the courses for English majors, such as English Writing, Advanced English Writing, and British and American Literature, often use a combination of instructional lectures, reading materials and after-class writing assignments. Students often searched for, read, evaluated and used texts to conduct their after-class writing assignments.

Twenty-five undergraduate English majors volunteered to participate in the study. They were all female and aged from 19 to 21 years old. All participants spoke Mandarin as their first language and had learned English for more than 13 years. None of them had ever travelled abroad. When the study began, all participants were in the seventh semester of their undergraduate program and were preparing research proposals for their bachelor’s dissertations.

Based on their scores on two of China’s national standardized English tests, TEM 4 (Test for English Majors Band 4) and CET 6 (College English Test Band 6), the participants’ language proficiency fell into four levels: the lower-intermediate (four students), intermediate (11 students), upper-intermediate (six students), and advanced levels (four students).

Procedures and Data Collection

The study included multiple sources of data. They were pre-task interviews, screen recording videos, stimulated recalls and the participants’ selected sources and written products.

The study was reviewed and approved by an academic committee organized by the institution where the author works. The committee is responsible for examining important issues concerning research, including reviewing and approving research ethics. Prior to data collection, the participants were informed of the purpose, the voluntary nature and the anonymity of the present study. They were all assured that their responses would be kept confidential, stored safely and accessed only by the author. All these measures were taken to ensure participants’ privacy and data security.

Before conducting the task, the participants gave their written consent to participate in the task, stimulated recalls and interviews. They were offered a form and were asked to confirm whether they volunteered to participate in the study and allowed their responses to be used in the study.

Pre-task Interviews

The topic of the task concerned China’s measures to fight Covid-19 and the compliments it received. To gauge participants’ prior topical knowledge, the researcher asked each participant questions before the task began (e.g., What do you know about China’s measures to fight the pandemic? Do you find the need to search for texts to write the essay?). The participants’ responses showed that they were somewhat familiar with some aspects of the topic (e.g., measures such as city lockdown and quarantine) but did not have comprehensive knowledge of it. In particular, they did not have a clear idea of the international compliments that China has received and expressed the need to search for texts to complete the task. Therefore, the participants’ prior knowledge was not considered to be a factor of individual differences in their task performance in the study.

The Task

The task asked students to search for and read L2 texts in order to write an expository essay of about 500 words on a given topic, “China’s Fight against COVID-19 Winning International Fame”. The topic aimed to encourage students to read and use L2 texts concerning effective measures taken by China to fight the pandemic and international compliments that it has received.

The topic selected for the study is an important contemporary event. Selecting a topic of this type made it more likely that students would navigate and evaluate diverse types of online sources. As the spread and control of Covid-19 has raised great concern at home and abroad, there is a wealth of online and print sources for students to refer to. Besides, selecting such a topic gave students more opportunities to engage with a complex real-world event of current importance.

It was expected that students might be somewhat familiar with some aspects of the topic of the task (i.e., some measures taken by China to fight against Covid-19). On the other hand, it was also expected that students would not have a comprehensive understanding of the measures taken by China, as a variety of measures have been taken to tackle the different situations of the spread of the pandemic. Additionally, students would not have a clear idea of what kinds of international compliments China has received, or which countries have praised China for its efforts to fight the pandemic. The topic of the writing task, being somewhat familiar yet still challenging, was designed to engage students and prompt them to locate, scan, and evaluate information from multiple sources across various types of websites or venues, rather than relying solely on their prior knowledge and experience.

During data collection, the participants conducted the task two at a time, at a place and time that they selected. Once they had decided on the place and time, the participants contacted the researcher. When conducting the task, the participants were told that they had no time constraint. Moreover, they were allowed to search for texts and access various types of texts.

Screen Recordings

In the present study, screen recording was the key method to capture and observe students’ L2 source-based writing processes. It aimed to investigate students’ processes of source evaluation.

In the present study, screen recording was adopted for three purposes. First, it was used to record students’ L2 source-based writing processes, including their processes of source evaluation, and their time allocation to each behaviour. Based on these data, students’ processes of source evaluation were identified and coded, and their time allocation to each evaluation behaviour was then calculated and compared. Second, the screen recording videos served as the stimulus in stimulated recalls, to explore students’ retrospection on their use of evaluation criteria across their processes of source evaluation in L2 writing. To these ends, students’ screens were captured throughout each writing session. Third, the screen recordings were also a basis for the researcher to identify sources that students located, read, evaluated, and selected to use in their written products.

The participants were all asked to install a screen recorder program, EV Capture (Version 4.1.4), on their own laptop computers. EV Capture was chosen over traditional screen recording tools due to its ability to provide real-time, contextually rich data that aligns closely with the purposes of the present study. The tool can track mouse movements and capture all of students’ on-screen activities while they search for, locate and evaluate sources. It can also provide a window in the bottom-right of the screen and capture accompanying video and audio of the participants during their processes of evaluation, including their voices, faces, eye movements and hand gestures. These video and audio recordings were crucial for understanding how specific factors influence the participants’ behaviours in natural settings, forming a strong stimulus (Gass & Mackey, 2016) for the students to recall their engagement with specific behaviours and evaluation criteria. Furthermore, this tool is very common for screen recording in China, and many students were familiar with its functions, as they often used it in their daily lives. This familiarity helped them to be well prepared for the study and reduced the likelihood of technical issues, thereby facilitating a smoother data collection process.

The participants opened EV Capture the moment they received the task from the researcher and began to read it. Via the program, all of their processes of online research and comprehension displayed on their computers were captured and recorded as video files. All of these video files, together with the video and audio of the participants’ movements and gestures, were saved by the first researcher.

Stimulated Recalls

The method of stimulated recall is mainly used to elicit participants’ thought processes and/or strategies with the support of a stimulus such as video or eye movements. It has been used in previous research (e.g., Khuder & Harwood, 2019; Wingate & Harper, 2021) with the aim of observing and identifying students’ online research and comprehension.

In the study, students were asked to conduct stimulated recalls right after they finished the task. The interval between task performance and students’ reports of their reflections on evaluation criteria was thus much shorter than that of retrospective interviews. Students’ verbalizations of their memory of criteria use were stimulated by a replay of screen recordings of their online activities, together with their facial expressions, eye movements and hand gestures as well as mouse movements. These strong stimuli can reduce the risk of omissions and fabrications (Brand-Gruwel et al., 2017) while increasing the accuracy and validity of the students’ short-term memory (Gass & Mackey, 2016).

Lastly, the researcher strove to ensure the consistency of the stimulus and to minimise potential bias. During the stimulated recall sessions, the researcher consistently stopped the video where the participants were engaged in processes of source evaluation, such as scanning the search results. The participants were then asked to discuss their specific behaviours during these moments. By rooting the questions in concrete and specific behaviours related to source evaluation, the researcher aimed to reduce the risk of incomplete, faulty or overly simplified recalls (Prior, 2004).

Students’ Selected Sources

The students’ selected sources were collected and used as one of the main sources of data. They were used for judging the quality of students’ selected sources and exploring the students’ ability to search for, evaluate and select relevant, reliable and quality sources. Specifically, it was expected that students would search for, locate and read multiple sources with diverse genres, as they had done previously when conducting writing assignments after class. These sources of diverse genres would serve as an important source of data to evaluate students’ evaluation ability in terms of source usefulness and reliability.

Students’ Written Products

Students’ written products were also a source of data, used to examine students’ performance in L2 source-based writing.

After completing the writing task, the participants saved and sent their essays to the researcher. The researcher saved each participant’s essay in a new file named after the participant, in preparation for identifying the sources that they had used in their written products.

Data Analysis

Coding Data of Screen Recordings

In the study, source-based behaviours were the actions that students performed when engaged in multiple texts throughout their L2 source-based writing processes in an Internet environment. Students’ screen recording videos were first segmented into units of analysis, or source-based behaviours.

The coding of screen recordings was both inductive and deductive. The model of information problem solving (IPS) (Brand-Gruwel et al., 2009) and new literacies of online research and comprehension (Leu et al., 2014) served as a main point of departure for identifying diverse behaviours involved in students’ source-use processes in L2 writing in the present study. Each student’s screen recording data were segmented in an Excel spreadsheet, and the coding of each segment was conducted first for the subprocesses of students’ L2 source-based writing processes. Based on these two models, students’ screen recording data were analyzed and coded into five categories to capture the specific source-based subprocesses: source searching, evaluation, reading, integration and other. Subsequently, source-based behaviours that emerged across the data were identified and added. The identified source-based behaviours were labelled based on these two models and previous empirical studies.

During the data analysis, the researcher solved disagreements through discussion with an expert in the field of L2 writing. The researcher first analyzed all screen recording data and developed a draft coding scheme from this initial analysis. The expert examined the initial coding scheme and applied it to her analysis and coding of a subset of the data. Based on the previous literature and the replay of the videos, they discussed their coding results and revised and refined the developed coding scheme. To test intercoder agreement, they randomly selected one participant’s screen recording data (approximately 2% of the total data), coded the data individually and compared their results. The intercoder agreement rate was good in code identification (80.0%). They discussed the coding results and resolved disputes, based on which a refined coding scheme for L2 source-based writing processes was developed and used for another round of data coding. The final version of the coding scheme is shown in Table 1. Examples of these subprocesses are shown in Appendix Table A1. Additionally, evaluation behaviours across students’ processes of source evaluation were highlighted for further coding and analysis.
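The text does not state how the agreement rate was computed. As a minimal sketch, assuming simple percent agreement (the share of segments that both coders labelled identically), the calculation could look like the following. The function name and the ten coded segments are hypothetical illustrations, not the study's data.

```python
def percent_agreement(codes_a, codes_b):
    """Share of segments (in %) to which two coders assigned the same code."""
    if len(codes_a) != len(codes_b):
        raise ValueError("Both coders must code the same segments.")
    if not codes_a:
        raise ValueError("At least one coded segment is required.")
    matches = sum(a == b for a, b in zip(codes_a, codes_b))
    return 100.0 * matches / len(codes_a)


# Hypothetical codes for ten segments (NOT data from the study).
coder_1 = ["searching", "evaluation", "reading", "evaluation", "integration",
           "reading", "evaluation", "other", "searching", "reading"]
coder_2 = ["searching", "evaluation", "reading", "reading", "integration",
           "reading", "evaluation", "other", "evaluation", "reading"]

agreement = percent_agreement(coder_1, coder_2)
print(f"Intercoder agreement: {agreement:.1f}%")
```

Percent agreement is easy to interpret but does not correct for agreement expected by chance; a chance-corrected index such as Cohen's kappa would be an alternative.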

Coding Scheme for Students’ L2 Source-Based Writing Processes.

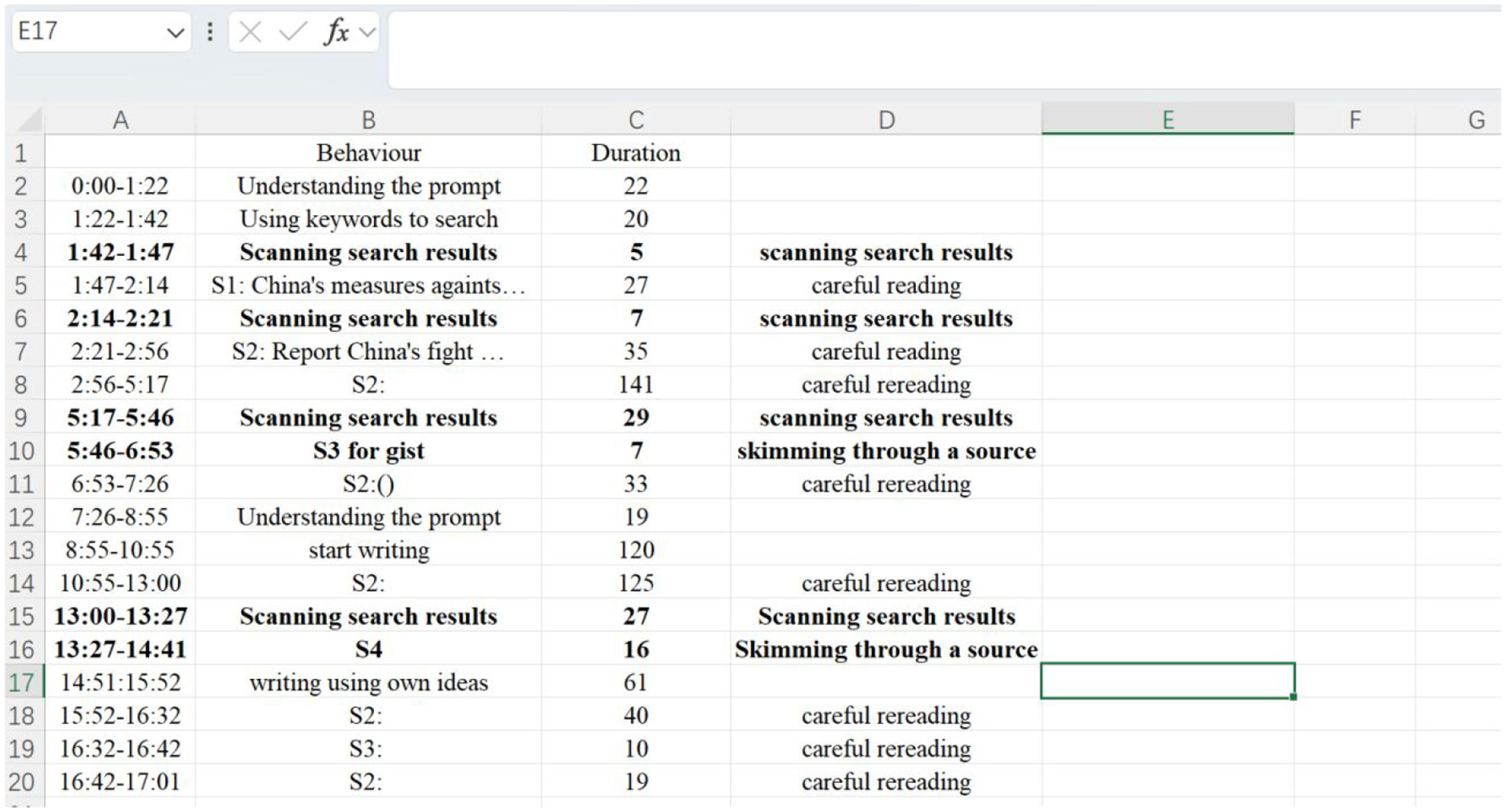

After the coding scheme was determined, students’ L2 source-based writing processes were listed in Column A of a Microsoft Excel spreadsheet, with the duration of each process calculated and listed in Column B. Each student’s source-based processes were then coded on a designated Excel sheet, with evaluation behaviours highlighted in bold. Figure 1 is an example of the coding of source-based processes.

Coding of student 6’s L2 source-based writing processes.

The researcher then calculated the time in seconds that the participants allocated to each L2 source-based writing process, emphasizing their time allocation to evaluation behaviours. As students’ composition time differed, the researcher calculated the percentage of time that students spent on each process and behaviour in relation to their total composition time. For the analysis of specific evaluation behaviours, the researcher produced a verbal description.
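The normalization described above can be sketched as follows: durations in seconds are summed per behaviour and divided by the student's total composition time. This is an illustrative sketch only; the function name, the behaviour labels other than scanning search results, and the durations are hypothetical.

```python
from collections import defaultdict


def time_shares(segments):
    """Return each behaviour's share (in %) of the total recorded time.

    `segments` is a list of (behaviour, duration_in_seconds) pairs,
    one pair per coded segment of a student's screen recording.
    """
    totals = defaultdict(float)
    for behaviour, seconds in segments:
        totals[behaviour] += seconds
    total_time = sum(totals.values())
    if total_time <= 0:
        raise ValueError("Total composition time must be positive.")
    return {b: 100.0 * s / total_time for b, s in totals.items()}


# Hypothetical coded segments for one student (NOT data from the study).
segments = [
    ("scanning search results", 120),
    ("reading", 300),
    ("scanning search results", 60),
    ("writing", 720),
]

shares = time_shares(segments)
for behaviour, share in shares.items():
    print(f"{behaviour}: {share:.1f}% of composition time")
```

Expressing time as a percentage rather than in raw seconds makes students with different total composition times comparable.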

Coding Data of Stimulated Recalls

Each student’s stimulated recalls were transcribed verbatim and segmented in a Microsoft Excel spreadsheet. Since the study focuses on students’ online evaluation of multiple L2 texts, the segments of stimulated recalls concerning evaluation criteria were the main focus of analysis.

The coding followed a partly inductive and partly deductive approach. The model of information problem solving (IPS) (Brand-Gruwel et al., 2009) and new literacies of online research and comprehension (Leu et al., 2014) were used as references for coding students’ stimulated recall data concerning source evaluation. The development of the coding scheme for analyzing the data of stimulated recalls was made via iterations and readjustments.

The researcher first coded all students’ stimulated recall transcripts based on reading and rereading of relevant literature and the transcripts. This phase helped him create an initial coding scheme (Table 2). The expert then applied the initial coding scheme to a subset of the data set. Through revisiting the stimulated recall transcripts, they further revised and refined the coding scheme. They then selected and coded one participant’s stimulated recalls and compared their coding results. The intercoder agreement was 87.5%. Later they discussed the coding results, resolved disputes and finally developed and determined a revised coding scheme for the evaluation criteria in the stimulated recall data. The final version of the coding scheme is shown in Table 3. A sample of the coded data is shown in Appendix Table A2.

Coding Scheme for Students’ Evaluation Criteria.

Time Spent on Evaluation Behaviours in Percentages of Composition Time.

Guided by the coding framework, the analysis of the stimulated recall data resulted in the coding of 377 instances of evaluation criteria across six criteria. Four of the six criteria, namely determining relevance to the task, examining source features, scrutinizing content accuracy and objectivity, and connecting to prior knowledge, were introduced and refined based on previous studies (Brand-Gruwel et al., 2017; List et al., 2016; Thompson et al., 2013; Vorobel et al., 2021; Walraven et al., 2009). The other two criteria, namely predicting ease of source integration and combining L2 writing needs, were developed, refined and added based on segments of students’ stimulated recall transcripts that did not fit the coding schemes used in previous studies.

After coding students’ evaluation criteria, the researcher calculated the number of each category of evaluation criteria. For the qualitative analysis of students’ evaluation criteria, the researcher created a written description of all evaluation criteria throughout their L2 source-based writing processes. In the descriptions, the researcher specified and interpreted the details and content of all evaluation criteria observed.

Analyzing Task Performance

Students’ task performance was measured in two parts. The first was the quality of the students’ selected texts, which was identified based on the following criteria: the reliability of the websites, and content accuracy and objectivity. The researcher visited the websites of the texts selected by the students and judged their reliability based on their domain (e.g., .com, .edu, .gov), reputation, publisher/author, and purpose of establishment. After deciding the reliability of the websites, the researcher read and reread the students’ selected texts to judge whether they contained biased opinions and/or inaccurate information. Based on the analysis, the researcher measured the percentages of quality texts selected by each student.

The second measure of students’ task performance was total writing scores. Students’ written products for the writing assignment comprised 25 expository essays. The total number of words was 14,279, with a mean essay length of 571.12 words (SD = 95.16). For each written product, the title, author and references were removed, following previous studies. The researchers, together with an English teacher who had been teaching English writing for more than 10 years, took part in assessing students’ essay quality. An established analytic scoring rubric for L2 source-based writing (Chan et al., 2015) was adapted in the present study.

Analyzing Quantitative Data with SPSS

For the third research question, measured variables of students’ evaluation behaviours, evaluation criteria, the quality of students’ selected texts and total writing scores were submitted to correlation analysis. Due to the small sample size and non-normal distribution of the data, Spearman correlation analysis was conducted to examine how students’ evaluation behaviours and criteria were associated with their task performance. This approach was selected because Spearman correlation analysis is rank-based, is appropriate for data that are not normally distributed, and does not assume a linear relationship, making it suitable for the conditions of this study.
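As a rough illustration of why a rank-based coefficient suits such data, the sketch below computes Spearman's ρ as the Pearson correlation of average ranks. The study itself used SPSS; the helper names and the toy data here are hypothetical and only show the technique.

```python
import math


def average_ranks(values):
    """Rank values from 1 to n, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with the value at position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1  # positions i..j are 0-based
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def pearson(x, y):
    """Pearson correlation; undefined (division by zero) if x or y is constant."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sd_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    sd_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (sd_x * sd_y)


def spearman_rho(x, y):
    """Spearman's rho: Pearson correlation computed on average ranks."""
    return pearson(average_ranks(x), average_ranks(y))


# Toy data (not from the study): y rises monotonically but non-linearly with x.
x = [1, 2, 3, 4, 5]
y = [1, 8, 27, 64, 125]
print(round(spearman_rho(x, y), 2))  # 1.0
```

Because only the ordering of the values matters, a monotonic but curved relationship still yields ρ = 1, whereas the Pearson coefficient on the raw values would be below 1.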

Results

Students’ Evaluation Behaviours

On average, students spent 2:16:40 completing the task (SD = 29:04). Students’ overall L2 source-based writing processes were divided into five types: source searching, evaluation, reading, integration, and other. The “other” category includes processes such as generating one’s own ideas, pausing, and solving technical problems that did not fall into the main categories.

Figure 2 provides an overview of students’ time allocation to the subprocesses of source searching, evaluation, reading, integration and other. As shown in this figure, students engaged in these subprocesses to differing extents. On average, students spent the fourth largest proportion of their composition time evaluating information and sources (M = 10.29%, SD = 5.95%).
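The percentages reported here express coded durations relative to each student's total composition time. A minimal sketch of that conversion follows, assuming durations are recorded as H:MM:SS or MM:SS strings; the helper names and the 14:21 evaluation time are hypothetical.

```python
def to_seconds(clock):
    """Convert an 'H:MM:SS' or 'MM:SS' string to a number of seconds."""
    seconds = 0
    for part in clock.split(":"):
        seconds = seconds * 60 + int(part)
    return seconds


def percent_of_task(subprocess_time, task_time):
    """Time spent on one subprocess as a percentage of total task time."""
    return 100.0 * to_seconds(subprocess_time) / to_seconds(task_time)


# The reported mean task time, 2:16:40, expressed in seconds.
print(to_seconds("2:16:40"))  # 8200

# Hypothetical student: 14:21 of evaluation within a 2:16:40 session.
print(round(percent_of_task("14:21", "2:16:40"), 2))  # 10.5
```

Note that a mean of per-student percentages, as reported in the study, need not equal the percentage obtained from mean durations.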

Time spent on L2 source-based processes in percentages of composition time.

Students’ processes of source evaluation consisted of four behaviours: scanning search results, skimming through a source, taking notes, and reviewing notes. The study calculated students’ time allocation to these evaluation behaviours. The results are shown in Table 3.

As shown in Table 3, students’ average time allocation to scanning search results was the largest in percentages of their composition time. On average, the students spent 5.64% (SD = 2.74%) of their total task time scanning search results. Students’ average time allocation to reviewing notes was the second largest (M = 3.44%, SD = 4.09%), followed by their average time allocation to taking notes (M = 2.23%, SD = 3.07%) and skimming through a source (M = 0.49%, SD = 0.53%).

When scanning search results, students typically browsed the titles on the hit lists generated and took a quick look at the brief introductions under these titles. Students primarily used two kinds of venues to search: search engines, such as Bing, and official news websites, such as China Daily, People’s Daily, Xinhua Net, and CGTN. These two types of venues often produced a myriad of search results ranked by relevance. The majority of query terms entered by students were single noun phrases. Typically, these noun phrases were specific and targeted, often representing the entire writing topic or its focus. Such search attempts frequently returned numerous search results, leading students to adopt what they believed to be effective methods for scanning them. Notably, most of the students relied too much on the search engine algorithm, assuming that it would prioritize and present the highest quality texts at the top of all search results. Consequently, students often made quick evaluations of search results and seldom scrolled down to the bottom to scan all of them. For example, some students spent less than six seconds scanning search results, while some even spent less than two seconds each time and immediately clicked on the first or second link of the hit lists.

After clicking into a website for the first time, most students first skimmed through a text to grasp the gist and made an initial evaluation. While skimming, they routinely read the title, the first paragraph, the topic sentences of body paragraphs, and the final paragraph. Students often scrolled up and down repeatedly and read words and sentences that were in boldface or highlighted. Such skimming often prompted them to make decisions about source evaluation, especially whether the text was relevant and which part of the text was useful.

Taking notes was an important step for students to determine source relevance and make initial decisions about source use in writing. Students mostly focused on what information and language to select and glean. Students’ notes were made up of two primary types of content: useful language and key information. To some extent, the content of these notes reflects students’ major attention to language use and ideation in L2 writing. In contrast, students seldom incorporated references to texts into their notes. Only three students (Student 16, Student 21, and Student 24) occasionally evaluated sources of information and took down references in their notes. The results indicate students’ limited attention to sources of information and author information when taking notes. They are in line with the findings of previous L1 studies (Coiro et al., 2015; Du & List, 2020; Walraven et al., 2009).

While reviewing notes, all students evaluated information within a text or texts for subsequent source use in writing. Students’ behaviour of reviewing notes was often guided by their dynamic content development in writing and interacted with their integration of information and language from texts into writing. Specifically, students’ information needs for the task sometimes changed during their writing processes, leading them to drop portions of the notes that they had previously thought to be important and useful when skimming through a text and taking notes. In addition, some students occasionally compared their notes with instances of source use that they had previously formulated based on the notes, in order to check accuracy and the similarities and differences in language use between the original sentences in the notes and their own source-based sentences. Taken together, the behaviour of reviewing notes was often driven by students’ L2 writing needs, indicating that students’ source evaluation was frequently entangled with their brainstorming for source integration and idea translation and with reviewing and revising source use in writing.

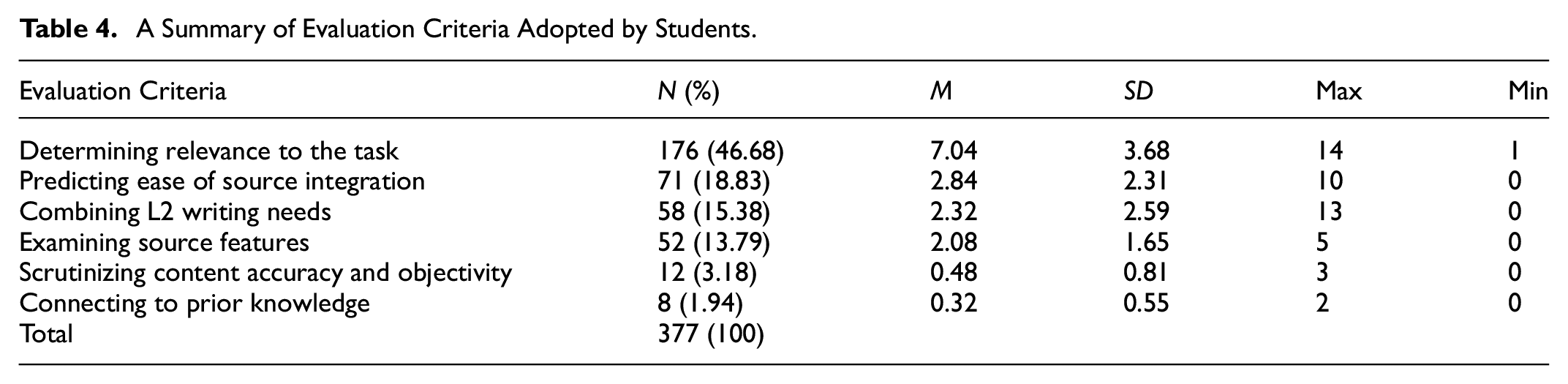

Students’ Evaluation Criteria

The analysis of the stimulated recalls showed that students’ evaluation criteria fell into six categories: determining relevance to the task, predicting ease of source integration, combining personal L2 writing needs, examining source features, scrutinizing content accuracy and objectivity, and connecting to prior knowledge. The results are shown in Table 4.

A Summary of Evaluation Criteria Adopted by Students.

As shown in Table 4, determining relevance to the task was used the most often. The result showed that the evaluation criteria used mostly concerned students’ evaluation of source relevance, that is, judging whether a source was useful for their L2 writing. The second and third most frequently used evaluation criteria were predicting ease of source integration and combining personal L2 writing needs. Compared with their frequent use of these three evaluation criteria, students seldom used the criteria of scrutinizing content accuracy and objectivity and connecting to prior knowledge.

All students used the criterion of determining relevance to the task for source evaluation. Students’ consideration of the relevance of a text to the task was mostly based on their clear task conceptualization and interpretation, highlighting their focus and goals when searching for and locating texts. It not only demonstrated students’ special attention to the main content of a text, but also reflected their significant concern about the amount of information.

Students expressed in their stimulated recalls a wide range of requirements for content, including “content fitting well with the plan of the writing” (Student 1, stimulated recalls), “specific measures taken by China” (Student 6, stimulated recalls), “positive comments by foreign countries or authorities” (Student 9, stimulated recalls), “the media’ reports on the information” (Student 5, stimulated recalls), “examples” (Student 13, stimulated recalls), “the timeline of the pandemic” (Student 6, stimulated recalls), “comments on the topic” (Student 3, stimulated recalls), “statistic data” (Student 10, stimulated recalls), and “remarks made by prestigious figures” (Student 17, stimulated recalls). Specifically, students often considered whether a text offered contents relevant to the task, what kinds of contents could satisfy information needs and whether a text was rich in substantive evidence and information. The results indicate that students tended to use multiple texts for the purpose of content-based learning (Thompson et al., 2013) and task fulfilment.

Predicting ease of source integration was a criterion for source evaluation specific to L2 students. It concerned students’ practical and economic considerations when engaged in source evaluation. As students intended to save time, effort and energy and reduce possible difficulties in subsequent processes of source integration, predicting whether a text or information within a text was easy to transfer and transform in writing became one of their priorities and major concerns.

For instance, students decided to discard a text that included “too many trivial details” (Stimulated Recalls, Student 2), “too much numerical data” (Stimulated Recalls, Student 12) or “abstract ideas” (Stimulated Recalls, Student 9), feeling it posed great challenges for integration into their essays. Additionally, students often mentioned abandoning information within a text that was presented in the form of an image, video, table or figure, believing that such information cost too much time and effort to interpret and use in their writing. Students’ use of this evaluation criterion reflects L2 writers’ language-related difficulties when engaged in source evaluation (Sendur et al., 2021).

In contrast, in their stimulated recalls, students mentioned their strong preferences for certain kinds of information or sources, perceiving that they were easy to understand and therefore easy to integrate in writing. By predicting ease of source integration, students tended to select sources with “moderate length” (Stimulated recalls, Student 4), “common and simple vocabulary” (Stimulated recalls, Student 24), “simple syntactical structures” (Stimulated recalls, Student 3), or “concise wording and descriptions” (Stimulated recalls, Student 5). Moreover, many students stressed that a source with moderate length was highly valued.

Students’ use of the criterion of predicting ease of source integration reflected L2 writers’ language-related difficulties when engaged in source evaluation subprocesses (Sendur et al., 2021). Such difficulties in source evaluation have not been documented in previous studies in L1 learning contexts. Given that L2 student writers were more limited in their linguistic repertoires than L1 students, L2 student writers paid much more attention to getting possible language support from multiple sources for their writing.

Combining L2 writing needs was another criterion for source evaluation specific to L2 students. It was closely associated with students’ difficulties and multiple practical needs in source use in L2 writing. Specifically, when evaluating a text or texts, students often considered their L2 writing needs in terms of language use, rhetorical effects, structure, and style. In their stimulated recalls, students stressed their personal difficulties in L2 writing and whether a source was conducive to their subsequent source integration in writing. As a result, students paid particular attention to texts with good L2 writing, supposing that such texts would serve as a writing model in language use, rhetorical effects, organizational structure or style.

For instance, with their own L2 writing needs in mind, students preferred sources that included a variety of useful language, especially word-level language like “various synonyms to help enrich my word choices in writing” (Stimulated recalls, Student 1), “vocabulary used to describe the measures against the pandemic” (Stimulated recalls, Student 5), or “vocabulary used to develop the introductory paragraph” (Stimulated recalls, Student 20). In addition, students often selected sources that were characterized by “clarity of organizational structure” (Stimulated recalls, Student 3), “academic style” (Stimulated recalls, Student 3), “formal language use” (Stimulated recalls, Student 9), “succinct and concise language use” (Stimulated recalls, Student 13), “elegant language use” (Stimulated recalls, Student 5), or “high-level coherence and logic” (Stimulated recalls, Student 15). Moreover, many students emphasized the value of a source with a clear organizational structure. The results are consistent with prior studies (Bai & Wang, 2023; Plakans & Gebril, 2012), indicating that students sought language support and organizational structures from multiple online sources.

Examining source features for source evaluation has been referred to as one of the “sourcing” strategies (Wineburg, 1991), or a source-related strategy (von der Mühlen et al., 2016), documented in prior L1 studies. In the study, students’ use of this criterion was somewhat limited. In their stimulated recalls, students demonstrated their examination of three surface features of a source, namely genre type, publisher/author, and date of publication, assuming them to be important indicators of source reliability and quality.

Some students mentioned their examination of genre types. Overall, through examining this surface feature of a source, students decided to select information from a news article, academic paper, or official report, believing that a source of such a genre sounded more reliable and formal. In contrast, they tended to reject interview transcripts, theoretical documents, commentaries and propaganda videos. Publisher/author was another source feature that students examined when evaluating a source. In their stimulated recalls, the students recalled that they would check whether a source was produced by an official publisher or a credible author, assuming that the official nature of a website or the prestige of its author was a guarantee of its reliability and quality. Date of publication was the third source feature that the students examined. Students would check how up to date a source was, supposing that recent sources included more comprehensive and valid information. For instance, in their stimulated recalls, students mentioned their examination of the date of publication of academic papers, as they believed it was “an integral part of source quality” (Stimulated recalls, Student 3).

Students’ use of the criterion of scrutinizing content accuracy and objectivity for source evaluation was also very limited. Only seven students occasionally used this criterion. Students’ use of this criterion highlights their critical thinking and awareness of opinions, beliefs, voice, and stance involved in a text. This required that students held firm beliefs about a specific topic, had an awareness of potential bias within texts and initiated critical evaluation, so as to differentiate and identify objective, unbiased, accurate, and in-depth opinions, beliefs, voice, and stance from those subjective, superficial or false ones. For instance, in their stimulated recalls, students mentioned their rejection of texts featuring “ambiguous and insincere tone” (Stimulated recalls, Student 3) or texts including “doubts and lashes without any foundation” (Stimulated recalls, Student 5).

Some students used the criterion of connecting to prior knowledge for source evaluation. Students used it for two main purposes: evaluating the novelty and distinctness of information and evaluating the importance of information. For some students, integrating novel information into writing would guarantee and enhance their originality and creativity in source use in writing, while selecting important information to use would help elevate their essays. For instance, in her stimulated recalls, Student 12 mentioned her associations with prior content knowledge of the topic, with the aim of “selecting a source characteristic of something new and special to use in writing” (Stimulated recalls, Student 12). Students tended to abandon texts that included “simple common sense” (Stimulated recalls, Student 2), “cliché” (Stimulated recalls, Student 8), or “trivial, unimportant details” (Stimulated recalls, Student 12), feeling that they were too common and simple to use in writing.

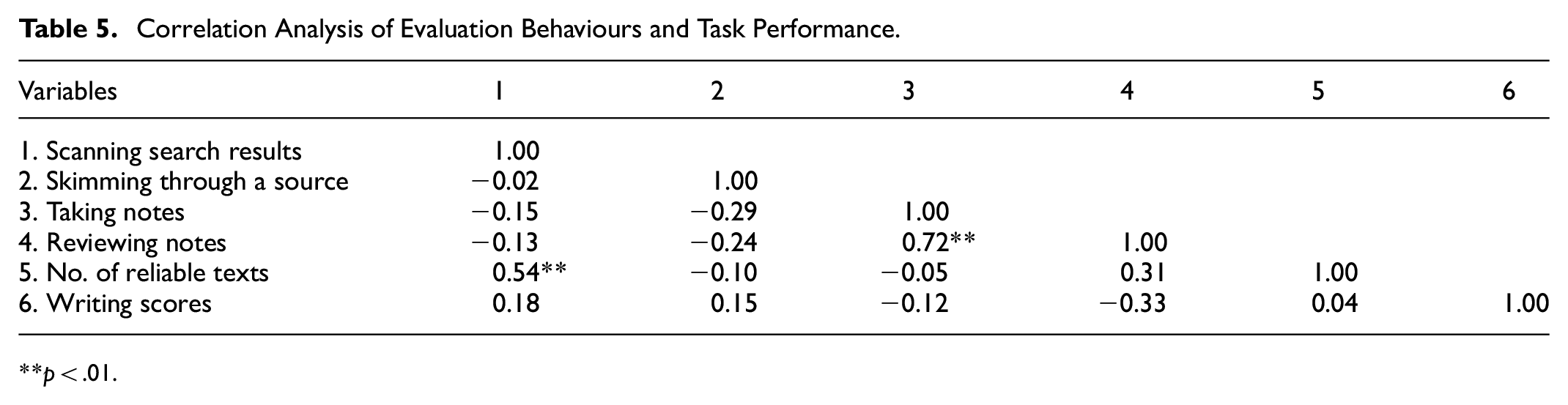

Correlation Analysis

The study then conducted correlational analysis among variables of evaluation behaviours, criteria and task performance including the number of reliable texts and total scores. The results are shown in Tables 5 and 6 respectively.

Correlation Analysis of Evaluation Behaviours and Task Performance.

**p < .01.

Correlation Analysis of Evaluation Criteria and Task Performance.

*p < .05. **p < .01.

As shown in Table 5, the evaluation behaviour of scanning search results was significantly and positively associated with the number of reliable texts (ρ = .54, p < .01), indicating a moderate correlation. This suggests that students who allocated more time to scanning search results were more likely to select a greater number of reliable texts to use in writing. The positive association implies that the scanning of search results is an effective behaviour in source evaluation, as it increases the likelihood of identifying credible sources. This finding highlights the importance of encouraging students to invest adequate time in scanning search results.
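The reported significance level for ρ = .54 with 25 participants can be checked approximately with the common t approximation, t = ρ·√((n − 2)/(1 − ρ²)), on n − 2 degrees of freedom. This is only a sketch: the p values in the study came from SPSS and may have been computed differently, and the function and constant names below are hypothetical.

```python
import math

# Two-tailed critical t values for df = 23 (n = 25), from standard t tables.
T_CRIT_DF23 = {0.05: 2.069, 0.01: 2.807}


def rho_to_t(rho, n):
    """Approximate t statistic for a correlation coefficient rho from n pairs."""
    return rho * math.sqrt((n - 2) / (1 - rho ** 2))


t_value = rho_to_t(0.54, 25)
print(round(t_value, 2))                # 3.08
print(t_value > T_CRIT_DF23[0.01])      # True
```

Under this approximation the statistic exceeds the two-tailed .01 critical value, which agrees with the reported p < .01.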

As shown in Table 6, significant associations were observed between evaluation criteria and task performance. To be specific, the evaluation criteria of determining relevance to the task (ρ = .44, p < .05), examining source features (ρ = .69, p < .05) and scrutinizing content accuracy and objectivity (ρ = .47, p < .05) were significantly and positively associated with writing scores. The results indicate that students who more often determined relevance to the task, examined source features and scrutinized information accuracy and objectivity tended to achieve higher writing scores. This suggests that these three evaluation criteria are effective in enhancing writing performance. These findings underscore the importance of incorporating these evaluation criteria into writing instruction to enhance students’ performance in L2 source-based writing.

Discussion

The study sought to examine EFL undergraduates’ processes of source evaluation during web search. The results showed that students engaged in four evaluation behaviours and used six categories of evaluation criteria across their L2 source-based writing processes. Moreover, the results of follow-up correlational analysis revealed the significant and positive associations between scanning search results and the number of reliable texts and the significant and positive associations between the criteria of determining relevance to the task, examining source features and scrutinizing content accuracy and objectivity and total writing scores.

Regarding evaluation behaviours, the results showed that students engaged in four evaluation behaviours to differing degrees. Students’ evaluation behaviours included scanning search results, skimming through a source, taking notes and reviewing notes. Across their processes of source evaluation, students laid greater emphasis on evaluating useful content and language than on evaluating search results. On the one hand, students mostly trusted default functions provided by search engines and their evaluation of search results tended to be superficial and casual, indicating a lack of thorough scrutiny when evaluating search results. On the other hand, when taking notes from multiple texts, students often focused on key information and useful language. In contrast, students paid limited attention to the websites where texts were located. The results altogether showed students’ tendency to prioritize source content and language over the sources of texts.

Such results concur with those of prior L1 studies (Hinostroza et al., 2018; Walraven et al., 2009), which found that students across different educational levels seldom evaluated search results and held a strong belief in the ranking of the hit lists provided by a search engine. The results suggest that both L1 and L2 students lacked an awareness of carefully and critically evaluating search results, indicating that they had limited proficiency in source evaluation and had not yet acquired an advanced level of source evaluation.

Regarding evaluation criteria, the results showed that students used evaluation criteria to varying extents. Students’ evaluation criteria involved determining relevance to the task, predicting ease of source integration, combining L2 writing needs, examining source features, scrutinizing content accuracy and objectivity, and connecting to prior knowledge. The three most commonly used evaluation criteria were determining relevance to the task, predicting ease of source integration, and combining L2 writing needs. These three evaluation criteria all concerned students’ evaluation of multiple texts to seek support and scaffolding in terms of content development, language use, and organizational structures. The results therefore highlight students’ frequent use of evaluation criteria for source relevance and usefulness, indicating their major concerns and L2 writing needs when evaluating multiple texts to use in writing.

The criterion of determining relevance to the task was confirmed in earlier work in L1 learning contexts (Brand-Gruwel et al., 2017; Goldman et al., 2012; Rodicio, 2015; Walraven et al., 2008, 2009) and L2 learning contexts (Thompson et al., 2013; Vorobel et al., 2021), while the criteria of examining source features, scrutinizing content accuracy and objectivity, and connecting to prior knowledge were documented in only a few L1 studies (Brand-Gruwel et al., 2017; Hämäläinen et al., 2021; List et al., 2016; Walraven et al., 2009). The other two evaluation criteria, including predicting ease of source integration and combining L2 writing needs, have seldom been reported in previous L1 and L2 studies. These two evaluation criteria are specific to L2 students and reflect L2 students’ distinct tendency when evaluating multiple texts. The results therefore add to our understanding of evaluation criteria, especially L2 students’ evaluation criteria for multiple texts. They indicate L2 students’ specific concerns and cast new light on research on undergraduate EFL students’ source evaluation.

Compared with their frequent use of the criterion of determining relevance to the task, students only occasionally examined source features and scrutinized content accuracy and objectivity. These two evaluation criteria were both essential for identifying content quality and source reliability. The results corroborate the findings of previous L1 research (Brand-Gruwel et al., 2017; Coiro et al., 2015; Forzani, 2018; Goldman et al., 2012; Kiili et al., 2017; List et al., 2016; Rodicio, 2015; Walraven et al., 2008, 2009) and L2 research (Radia & Stapleton, 2008; Stapleton, 2003, 2005; Stapleton et al., 2006). These prior studies all revealed that students, especially novices and unsuccessful students, had a low-level ability to evaluate source reliability. However, the results of the present study contrast with those of Hämäläinen et al. (2021), who found that participants were particularly good at using criteria for evaluating source reliability. In their study, Hämäläinen et al. (2021) offered prompts to remind students to judge the credibility of online sources, which might have helped guide students to evaluate sources based on reliability and therefore improved their performance in evaluating the reliability of multiple sources.

Moreover, the findings of the present study hold significant theoretical implications. The results on students’ engagement with evaluation behaviours and criteria lend support to the assumptions of the model of information problem solving (IPS) (Brand-Gruwel et al., 2009) and new literacies of online research and comprehension (Leu et al., 2014). They reaffirm the specific processes and skills that students need to engage in during web search, indicating that both L1 and L2 students share some common evaluation behaviours and criteria in online research and comprehension. More importantly, the present study offers new insights by revealing additional evaluation behaviours and criteria specific to L2 students, such as reviewing notes, predicting ease of source integration and combining L2 writing needs. Taken together, the results of the present study not only confirm the model of information problem solving (IPS) (Brand-Gruwel et al., 2009) and new literacies of online research and comprehension (Leu et al., 2014) in EFL students’ source evaluation in a natural environment, but also extend both models.

Regarding the associations, the results showed significant and positive associations between task performance and the behaviour of scanning search results and the criteria of determining relevance to the task, examining source features and scrutinizing content accuracy and objectivity. The results indicate the importance of source evaluation, including evaluation behaviours and criteria, to students’ performance in L2 source-based writing. Specifically, students who actively engaged in these evaluation behaviours and criteria tended to achieve higher task performance, suggesting that source evaluation practices may contribute to improved writing quality. The positive associations suggest that the behaviour of scanning search results helps students locate relevant sources and glean the information needed. Additionally, the criteria of determining relevance to the task, examining source features and scrutinizing content accuracy and objectivity may help safeguard the quality of sources selected for use in writing. These high-quality sources and information selected by students might inspire their ideation, organizational structure, and language use, ultimately improving the overall quality of their writing. In short, students’ engagement with these evaluation behaviours and criteria may not only enhance the credibility and coherence of their writing but also improve their ability to construct well-supported arguments.

Source evaluation is of paramount importance in assessing students’ information literacy and source-use ability (Cho, 2014; Kiili et al., 2017; Stapleton, 2003, 2005). Informed by the present study, it is suggested that EFL students need to be encouraged to make appropriate source evaluations. For one thing, the results of the present study demonstrated that students often made superficial evaluations of search results. The results therefore point to the need to give instruction on how to evaluate search results provided by search engines (Hinostroza et al., 2018; Wette, 2020). For another, the results showed that students made limited use of evaluation criteria related to source reliability. The results therefore indicate the great challenges that L2 students faced when evaluating information and texts to use in writing. Such results highlight the urgent need for explicit instruction on various evaluation criteria in L2 and foreign language classrooms.

For schools and curriculum designers, it is advisable that the instruction of source evaluation, especially the evaluation of source reliability, be included as an integral part of L2 reading and writing courses. The curriculum should demonstrate and highlight the importance of critical source evaluation to source use in writing (Wette, 2020), the value of using multiple aspects of source reliability to evaluate (Coiro et al., 2015) and the value of corroboration (Kohnen & Mertens, 2019). Specifically, it should help students foster Internet-specific beliefs in judging the authoritativeness of sources, using prior knowledge to evaluate sources, and corroborating multiple sources for consistency in information (Bråten et al., 2018; Hämäläinen et al., 2021). In particular, instruction should highlight the value of corroboration of opinions and information across multiple sources (Kohnen & Mertens, 2019), since corroborating information across sources is highly conducive to evaluating source reliability (Bråten et al., 2018).

Making students aware of the value of evaluating source reliability is far from enough. It is highly necessary to provide students with essential procedural knowledge about evaluating key aspects of source reliability (Braasch et al., 2011; Mason et al., 2014; Walraven et al., 2013; Wiley et al., 2009), including assessing authors’ level of expertise, inherent biases in content, quality of arguments in sources, sources of information, recency of information, stance objectivity, content accuracy and other aspects of reliability. More importantly, a single criterion for reliability evaluation is not helpful and often misleading (Hämäläinen et al., 2021). Students can benefit from getting to know a range of strategies for source evaluation and learning to apply a combination of multiple acceptable criteria for evaluating source reliability.

For teachers, they should attain training in source evaluation, raise alertness to students’ failure to evaluate source reliability, and apply explicit instruction and pedagogical approaches to foster and improve students’ abilities to evaluate source reliability. Teachers can give detailed feedback about students’ evaluation or lack of evaluation of one or some aspects of source reliability. Given that some students already acquire some knowledge of source evaluation, teachers could also encourage collaborative source evaluation activities (e.g., Hämäläinen et al., 2021; Kiili et al., 2017), and guide students to share effective strategies for source evaluation and discuss and analyze reasons for their failure to evaluate certain aspects of source reliability.

Task design is also important for developing students’ skills in evaluating source reliability. One option is to design tasks that use prompts and provide templates covering various aspects of source reliability (Hämäläinen et al., 2021). This type of task may help students stay alert to source evaluation and elicit careful, critical evaluation of multiple aspects of source reliability. Another option is to design tasks that contain controversial information within or across sources and that draw on sources from a range of websites with different formats (e.g., online newspapers, blogs, and social media). This type of task may enhance students’ awareness of the plausibility and bias of information and knowledge across websites and reduce their overreliance on the ranking of hit lists provided by search engines. It could also help students learn to apply strategies for source evaluation flexibly and reach informed, well-supported and logical conclusions about source reliability. Finally, tasks should vary in content area and include both domain-general and domain-specific topics. Such variety may enhance students’ knowledge and awareness of evaluating source reliability in their own disciplines.

The study has three limitations. First, the present study investigated processes of source evaluation among a specific group of students. Although this offered valuable insights into the topic, the study was not comparative in nature and did not consider potential individual variables, such as language proficiency, prior knowledge, and experience in source evaluation. An analysis of the effects of individual differences on processes of source evaluation might yield different findings. Future studies should consider conducting a comparative investigation of EFL students’ processes of source evaluation. It would be interesting to compare the evaluation behaviours and criteria of L2 students across language proficiency levels and/or with different levels of prior knowledge and experience in source evaluation. Second, the present study was a cross-sectional study of EFL undergraduates’ processes of source evaluation. Although it provided a range of findings on students’ evaluation behaviours and criteria, it could not track the development of students’ abilities in source evaluation as a longitudinal study would. Future studies can adopt a longitudinal approach to trace changes and developments in students’ evaluation behaviours and criteria, and to examine what factors contribute to students’ progress in source evaluation. Third, while Spearman’s correlation was appropriate for the study’s data characteristics, it has potential limitations, such as reduced sensitivity in detecting smaller effects. Future research could therefore use alternative analytic methods to validate the findings and achieve a more rounded analysis.

Conclusion

This study examined EFL undergraduate students’ processes of source evaluation in an Internet-based environment. Students were found to engage in four evaluation behaviours and to apply six evaluation criteria across their L2 source-based writing processes. Additionally, students’ evaluation behaviours and criteria were significantly associated with the number of reliable texts they selected and the quality of their writing. The study provides valuable insights into the challenges students face in evaluating the reliability of multiple online texts and into the association between source evaluation and task performance. These results shed new light on the complexities of source evaluation in EFL contexts. Future studies can build on these findings to further explore EFL students’ processes of source evaluation and deepen the understanding of the topic.

Appendix

Sample of Students’ Evaluation Criteria in the Data of Stimulated Recalls.

| Code | Example |
|---|---|
| Determining relevance to the task | I felt this source was useful. |
| Predicting ease of source integration | I thought it’s difficult to integrate the source in writing in logical ways. It would be just boring writing. |
| Combining L2 writing needs | Compared with the first source (I located), this one was well-written. It featured clear organizational structure. |
| Examining source features | I did read the source, for it was published by Xinhua Net. |
| Scrutinizing information accuracy and objectivity | The content here was a little subjective. |
| Connecting to prior knowledge | I thought the information was not new to me. |

Acknowledgements

The author thanks the editor and the reviewers for their insightful comments on earlier versions of this manuscript. He is also grateful to all the students who participated in this study. The author thanks Weihong Chen for assisting in checking the data analysis.

Ethical Considerations

The study was reviewed and approved by an academic research committee organized by the institution where the author works. The committee is responsible for examining important issues concerning research, including reviewing and approving research ethics.

Consent to Participate

All participants gave their written consent before data collection.

Author Contributions

Jianming Liu contributed to the conceptualization, data collection, data analysis, writing and revision of the study.

Funding

The author received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The data from this study are available upon reasonable request.