Abstract

The education faculty aims to assess departmental effectiveness by analyzing the relationship between service levels, output variables, and input variables. This objective is coupled with the formulation of faculty development strategies tailored to enhance efficiency while accommodating individual professionals’ unique requirements and aspirations. Furthermore, beyond the annual University Evaluation System, faculty members conduct thorough evaluations to identify discrepancies in departmental reports detailing service levels and input components (factors of production) over a three-year period. Through descriptive statistics and hypothesis testing, reliable data guides the decision-making process regarding the optimal level for data envelopment analysis (DEA). The study employs four simulated scenarios to evaluate the overall performance of five departments using DEA. Findings reveal varying efficacy ratings across departments, with the Department of Health and Physical Education achieving a moderate rating and the Educational Technology Department exhibiting the lowest efficacy. Conversely, departments such as Art Education, Music Education, and Business Education showcase exemplary efficacy levels. Insights gleaned from quantitative analysis and questionnaires contribute significantly to faculty development, enhancing knowledge, competencies, values, attitudes, and future endeavors. Recommendations provided advocate for the widespread implementation of efficacious approaches to bolster faculty efficacy. Moreover, the study underscores the potential to enhance the efficiency and quality of service across faculties through the adoption of best practices and initiatives.

Keywords

Introduction

In the rapidly changing world of higher education, universities must establish effective self-evaluation procedures. Conventional evaluation methods are often inefficient and fail to make full use of available data. This paper proposes a university self-evaluation technique inspired by the Plan-Do-Check-Act (PDCA) cycle widely used in quality management. Under this approach, every academic department uses freely available data from authoritative sources to evaluate its performance and identify areas for improvement. Because it relies on pre-existing data sources, the approach is more efficient and effective than conventional assessments, which demand considerable time and money. Data Envelopment Analysis (DEA) provides a robust quantitative framework for comparing university academic departments.

Like the PDCA cycle, this strategy is based on continual improvement. By integrating data-driven assessment into faculty operations, universities may foster quality, innovation, and accountability. The approach allows faculty members to take responsibility for their performance and enables institutions to make evidence-based choices. It is widely accepted that education can raise a nation’s human capital, and neoclassical growth theory establishes the role of human capital in economic development (See et al., 2022). Universities enhance economic growth by sharing information, developing human capital, and encouraging innovation.

Scholarly research into practical and theoretical concerns and educational opportunities can support this effort. Thailand’s Education and Research Development Plan aspires to make the country a regional leader in higher education. As a result, the number of higher education institutions has increased significantly. Their influence on national strategic planning makes assessing their effectiveness crucial (Si Na et al., 2020). However, performance measures vary across public higher education (Ahmed, 2020), and two major issues arise. First, non-profit institutions do not import or export. Second, higher education institutions use several inputs to attain a variety of outputs (Ahn et al., 1988).

The impetus for this research originates from the urgent requirement to confront the obstacles associated with performance evaluation and efficacy that higher education establishments, specifically public universities, must confront. Notwithstanding their pivotal function in nurturing the growth of human capital and bolstering economic advancement, the evaluation of their efficacy continues to be intricate and multifaceted. Therefore, the principal objective of this research endeavor is to utilize contemporary quantitative approaches, particularly Data Envelopment Analysis (DEA), in order to conduct a thorough assessment of the academic departments’ performance in a university environment. Through the utilization of DEA and quantitative analysis, one can obtain significant insights regarding the comparative efficiency and efficacy of various departments. These insights can then be applied to inform strategic planning endeavors and facilitate initiatives related to faculty development. The ultimate objective is to make a meaningful contribution to the perpetual enhancement of teaching, research, and administrative operations in institutions of higher education, thereby cultivating an environment that promotes excellence and ongoing progress.

The goal of the performance assessment process is to give the staff of the academic institution the opportunity to take on additional responsibilities and show a stronger commitment to faculty development. The assessment methods implemented by universities require the yearly evaluation of efficiency scores. As a result, research on university effectiveness using modern mathematical and computer modeling methods is a pressing challenge for obtaining quantitative and qualitative nonparametric assessments of higher education system performance (Lin & Yu, 2023). The DEA technique is a benchmarking tool that identifies the most efficient units. Therefore, this study aims to improve the understanding of total efficiency by including all relevant information from each department in the DEA. Linked data will play a crucial role in assessing the efficacy of educational institutions, since a multitude of factors must be considered in any comparative study (Abbott, 2003; Agasisti et al., 2012; Netanda et al., 2019).

The present study applied quantitative analysis (QA) and data envelopment analysis (DEA) to the University Evaluation System (UES) forms. Quantitative analysis, comprising both descriptive and inferential statistics, is used to assess the reliability of the variable data included in the model. The data must be valid, reliable, and generalizable. Researchers and practitioners commonly use DEA to assess performance by measuring the relative efficiency with which decision-making units turn multiple inputs into multiple outputs (Cooper et al., 2011a; Guotao & Ying, 2019). Efficiency scores serve as a metric for comparing the relative performance of different entities. The primary objective of this study is to conduct a comprehensive assessment of the departments that uses all the data provided in the annual reports, rather than evaluating each year separately.

Furthermore, the enhancement of faculty development can be achieved by the integration of evaluation outcomes with staff surveys. The opportunity exists for the initiation of an intentional process wherein both academic and non-academic personnel engage in collaborative efforts aimed at enhancing knowledge, skills, methodologies, and personal growth. The implementation of the DEA and the enhancement of faculty development initiatives are anticipated to facilitate improved administration of both teaching and research activities. Consequently, educational institutions are compelled to promptly adjust to evolving technological breakthroughs. The potential exists for this to significantly alter the general landscape of the educational system.

The main objective of this study is to provide the groundwork for the development of a comprehensive assessment system for teaching and learning within each department. This will be achieved by leveraging a network that incorporates input and output data from all decision-making units (DMUs). This system has the potential to serve as a comprehensive management tool for generating assessment outcomes in educational institutions. Additionally, it offers a range of features for facilitating communication, interaction, and the methodical development of educational management processes inside each educational institution. University departments utilize an efficiency score as a basis for designing faculty development initiatives. The objective is to enhance the academic accomplishments, knowledge, skills, values, and perspectives of the staff members. Utilizing scenario analysis, it was possible to demonstrate the impact of output variables on DMU efficiency and to identify the most significant factor influencing the efficacy scores of the various departments. In addition, a quantitative study employing interviews and surveys was conducted to determine which training staff members required in the areas of knowledge, skills, methodologies, and personal development. The overarching objective was to enhance work performance through the identification of academic and non-academic staff training needs, thereby enabling faculty to develop a strategy for support and professional development. The present study provides a concise summary of its contributions below.

University self-evaluation using the Plan-Do-Check-Act (PDCA) cycle from quality management and Data Envelopment Analysis (DEA) is shown in this study. Combining these methods creates a unique framework for analyzing higher education faculty department performance.

The research stresses speed and effectiveness in the review process, using current data sources to simplify and provide actionable insights for continual development. To determine input and output variables, quantitative analysis is used.

The study also emphasizes teacher involvement in assessment and empowers them to accept responsibility for their performance. Data-driven assessment by academics promotes accountability, innovation, and quality in institutions.

The paper also promotes evidence-based higher education decision-making. The strategy uses quantitative analysis and DEA to help colleges make strategic choices based on performance measures, improving effectiveness and competitiveness. Finally, the assessment method suggests faculty development for academic and non-academic personnel to enhance long-term teaching/research and non-academic performance.

The unique aspect of this research is that it disassembles efficiency models so as to locate areas of inefficiency within the operations of the department. Understanding the current state of department efficiency and identifying the top performers enables educational institutions to enhance their resource allocation processes. The results of such a study will enable each department to make the most of its resources and increase efficiency in an environment that is becoming increasingly competitive. The subsequent sections of the article are structured in the following manner. Section “Related Methods” of this paper provides an overview of pertinent methodologies, while sections “University Evaluation System (UES)” and “Numerical Results” delve into the essential principles of the University Evaluation System (UES) and present a numerical analysis of a case study focused on an educational faculty. The conclusions and discussions are reported with an outlook on future research directions in Sections “Conclusions” and “Discussions,” respectively.

Literature Reviews

The existing body of literature concerning efficiency assessment in higher education institutions (HEIs) encompasses a wide array of methodologies and implementations that are designed to assess performance and pinpoint opportunities for enhancement. These studies emphasize the significance of these evaluations in light of changing global conditions, technological progress, and the requirement for high-quality standards in education administration. Mikušová (2017) emphasizes the importance of institutional specialization when evaluating the efficacy of public higher education institutions (HEIs), recognizing the necessity for customized evaluation frameworks. The significance placed on institutional diversity is consistent with the conclusions drawn by Ding et al. (2021), which underscore the criticality of enhancing research performance across departments in order to optimize operational effectiveness. An additional scholarly contribution to this discourse is made by Ghimire et al. (2021), who illustrate the influence of output and input selection on institution rankings, with a particular focus on the significance of publications in augmenting assessment scores.

Ersoy (2021) broadens the applicability of efficiency assessment to distance education departments, emphasizing the increasing prevalence of online learning and the imperative for higher education institutions (HEIs) to adjust to evolving educational environments. In the same way, Villano and Tran (2021) emphasize the exponential expansion of studies utilizing data envelopment analysis (DEA), which reflects a larger tendency toward employing sophisticated methodologies to assess the performance of higher education institutions (HEIs). Regional variations in the efficacy of education systems and technological efficiency are examined in depth by Lesik et al. (2022) and Andersson and Sund (2022), respectively, highlighting the need for context-specific assessments. The aforementioned studies underscore the significance of taking into account regional variations and the caliber of inputs when assessing higher education institutions (HEIs). This sentiment is also reflected in the investigation of higher education system assessment methodologies conducted by See et al. (2022).

The works of Halásková et al. (2022) and Olariu and Brad (2022) offer valuable perspectives on the efficacy of secondary education services and higher education study programs in Romania, respectively. These studies underscore the wider ramifications that efficiency assessments can have on educational policy and the enhancement of program quality. Tran et al. (2023) illuminate the correlation between administrative intensity and operational efficiency in higher education institutions in Australia, highlighting the complex and multifaceted character of institutional performance evaluation. In support of this comprehensive approach to evaluating efficiency, Doğan (2023) employs a two-stage Network Data Envelopment Analysis (NDEA) model to examine the efficiency of research universities in Turkey. In doing so, he takes into account the influence of regional socioeconomic development and the utilization of shared inputs across sub-processes.

A novel input indicator for evaluating the efficacy of global institutions is introduced by Bornmann et al. (2023), making a contribution to the ongoing discourse surrounding standardized evaluation metrics. Likewise, Liu et al. (2023) examine the productivity and efficiency of Chinese higher education institutions (HEIs) in the midst of the COVID-19 pandemic, emphasizing the need to modify evaluation methodologies in response to shifting conditions. The studies conducted by Zuluaga-Ortiz et al. (2023) and Delahoz-Dominguez et al. (2023) respectively examine the significance of quality assurance mechanisms in improving institutional performance by focusing on quality management tools and compliance evaluation in Colombian higher education.

Chen et al. (2024) presented an all-encompassing two-stage network DEA model to evaluate the effectiveness of the human development system in sub-Saharan Africa. The authors emphasize the interdependence of economic, health, and education development. The importance of implementing integrated policy interventions to tackle technological barriers and inequalities in efficiency across various regions is emphasized by this comprehensive approach. In brief, the body of scholarly work concerning the evaluation of efficiency in higher education establishments demonstrates an increasing acknowledgment of the significance of implementing quality assurance mechanisms and context-specific assessments to improve institutional performance and further socioeconomic development objectives (Table 1).

Table 1. DEA and Its Applications.

Related Methods

Quantitative Analysis

Statistics is an academic discipline concerned with the systematic collection and analysis of data to derive meaningful insights, explain phenomena, answer questions, and solve problems. It applies statistical techniques to data obtained from several instances of a given event. The field has two main divisions: descriptive statistics and inferential statistics. Descriptive statistics provide a preliminary analysis that assesses how comprehensive the data-collection process has been. In contrast, inferential statistics examine data collected from a sample to make inferences and generalizations about the entire population.

Descriptive statistics are an essential component of every quantitative investigation. They characterize the overall features of the examined sample through measures of central tendency and dispersion. Inferential analysis connects the statistical values obtained from a sample to the values of one or more parameters in order to describe or assess a population. In this study, p-values are used to determine the statistical significance of the hypothesis tests. Because inferential statistics rely on incomplete data to draw conclusions about a population, it is crucial to establish whether errors were made and whether there is sufficient confidence in the results and in the decisions based on them.
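As a minimal sketch of the descriptive and inferential steps described above, the Python snippet below computes the mean and standard deviation of one variable and then a one-sample t statistic with its p-value. The three yearly values and the benchmark mean are hypothetical and are not taken from the study. The closed-form p-value is valid only for two degrees of freedom, which matches a three-observation sample; a statistics library would be the usual choice for other sample sizes.

```python
import math
import statistics

# Hypothetical yearly values of one variable for a single department
# over a three-year window (illustrative only, not data from the study).
yearly_values = [12.0, 15.0, 18.0]
hypothesized_mean = 10.0  # assumed benchmark for the null hypothesis

# Descriptive statistics: central tendency and dispersion.
sample_mean = statistics.mean(yearly_values)
sample_sd = statistics.stdev(yearly_values)  # sample standard deviation (n - 1)

# One-sample t statistic with n - 1 degrees of freedom.
n = len(yearly_values)
t_stat = (sample_mean - hypothesized_mean) / (sample_sd / math.sqrt(n))


def two_sided_p_df2(t):
    """Exact two-sided p-value for Student's t with 2 degrees of freedom.

    Uses the closed-form CDF F(t) = 1/2 + t / (2 * sqrt(t^2 + 2)),
    which holds only for df = 2 (that is, three observations).
    """
    return 1.0 - abs(t) / math.sqrt(t * t + 2.0)


p_value = two_sided_p_df2(t_stat)
print(f"mean={sample_mean:.2f}, sd={sample_sd:.2f}, t={t_stat:.3f}, p={p_value:.3f}")
```

With so few observations the test has little power, so a large p-value here should be read as inconclusive rather than as evidence that the variable matches the benchmark.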

Data Envelopment Analysis (DEA)

Data Envelopment Analysis (DEA) is a non-parametric approach for assessing the relative efficiency of decision-making units (DMUs) by comparing similar entities or organizations. It is frequently used in operations research, management science, and economics to establish benchmarks and assess progress. DEA was developed by Charnes, Cooper, and Rhodes in the late 1970s. Its initial applications focused on public sector service providers, such as universities, hospitals, and government institutions. The technique has since been applied in several other fields, including manufacturing, finance, healthcare, and the environment. In contrast to conventional econometric techniques, which depend on predetermined functional forms and assumptions about the data distribution, DEA makes no substantial a priori assumptions about the underlying data distribution or the functional form of the production process.

Decision-making units (DMUs), inputs and outputs, the efficiency frontier, relative efficiency, and categorization are the fundamental concepts of DEA. The DMUs examined can be organizations of many kinds, including enterprises, clinics, colleges, and schools. DEA evaluates how effectively each DMU converts inputs into outputs, considering a range of resources, production variables, and end products for each DMU. This flexibility in the choice of inputs and outputs allows DEA to capture a diverse array of performance variables. The efficiency frontier is used to compute the performance score (Johnes, 2006; Johnes & Yu, 2008).

The objective of DEA is to identify the production frontier, the boundary at which DMUs attain their optimal efficiency. Any DMU that lies on this frontier is classified as efficient, whereas any DMU that lies below it is classified as inefficient. Each DMU is assigned a score that indicates its efficiency relative to the other units in the dataset. A score of 1 indicates full efficiency, and any lower value signifies inefficiency. DEA therefore sorts DMUs into two groups: efficient and inefficient. To gain useful insights, inefficient DMUs can benchmark themselves against their more efficient counterparts.
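To make the idea of a relative efficiency score concrete, the sketch below treats the simplest possible case: one input and one output under constant returns to scale. In that special case a unit’s score reduces to its output-to-input ratio divided by the best ratio observed. The unit names and figures are hypothetical and do not come from the study.

```python
# Hypothetical units: name -> (input, output), e.g. staff count and publications.
units = {"A": (10.0, 20.0), "B": (8.0, 20.0), "C": (12.0, 18.0)}


def crs_scores(data):
    """Single-input, single-output CRS efficiency: own ratio / best ratio."""
    ratios = {name: out / inp for name, (inp, out) in data.items()}
    best = max(ratios.values())  # the best observed ratio defines the frontier
    return {name: ratio / best for name, ratio in ratios.items()}


scores = crs_scores(units)

# Units scoring 1 lie on the frontier; all others lie below it.
efficient = [name for name, score in scores.items() if abs(score - 1.0) < 1e-9]

for name, score in scores.items():
    print(f"{name}: {score:.3f}")
```

With several inputs and outputs no single ratio exists, which is why the general case is solved as a linear program for each unit.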

DEA, or Data Envelopment Analysis, can be used in a wide range of domains, including but not limited to performance evaluation, resource allocation, strategy creation, and policy analysis. This tool may be utilized by many entities such as enterprises, governments, and academia to enhance decision-making processes. Data Envelopment Analysis is widely regarded as a valuable asset across several domains due to its versatile nature, adeptness in handling diverse inputs and outputs, and capacity to provide valuable insights for optimizing operational effectiveness. The aforementioned technique is characterized by its continuous evolution since it undergoes regular iterations and adjustments to cater to the requirements of various academic disciplines and practical implementations.

DEA models can take one of two orientations: input or output. Output orientation maximizes the output produced from a given amount of input, whereas input orientation minimizes the input required to achieve a given level of output. This study employs an input orientation because departmental inputs are more controllable than departmental outputs. Department heads have the authority to determine the number of academic and non-academic staff, but the number of undergraduate and graduate students, the number of publications, and the internal quality evaluation are not within their control (Kuah & Wong, 2011; Tyagi et al., 2009).

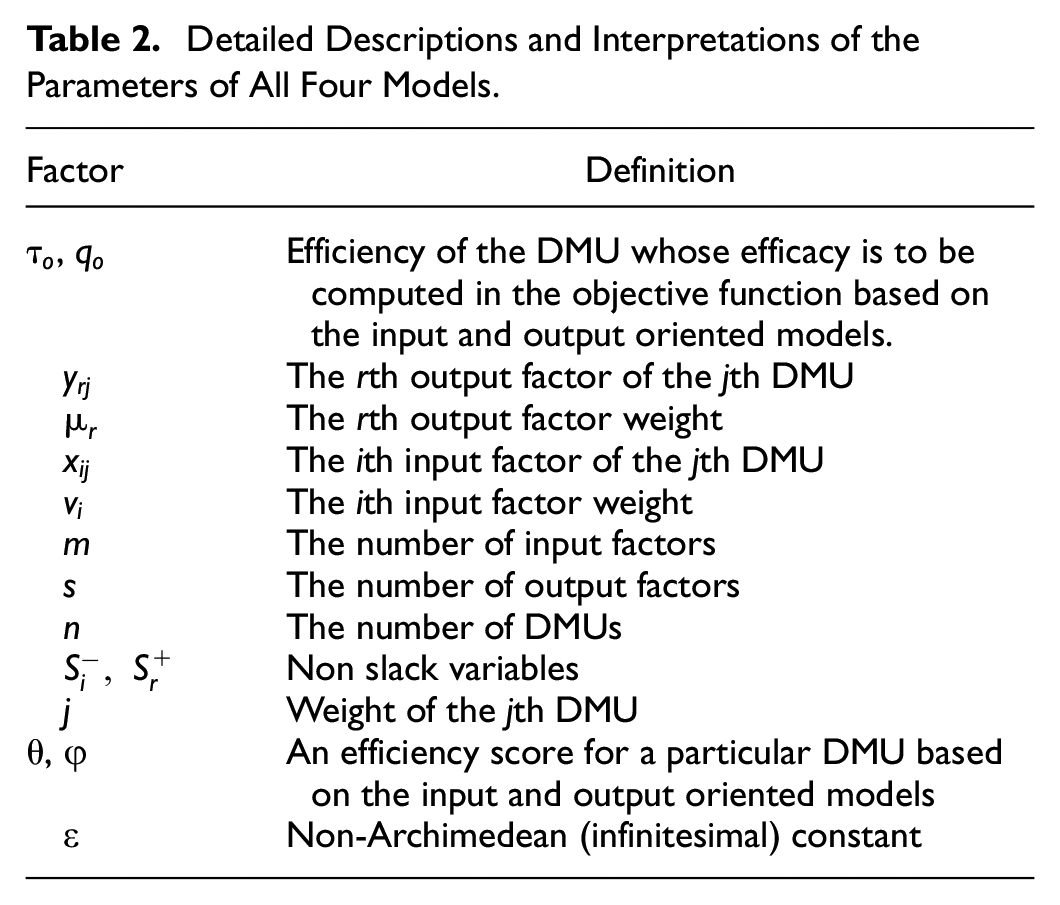

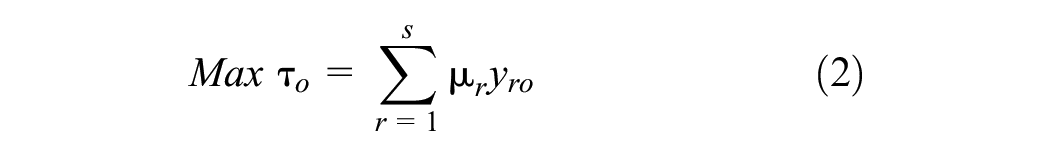

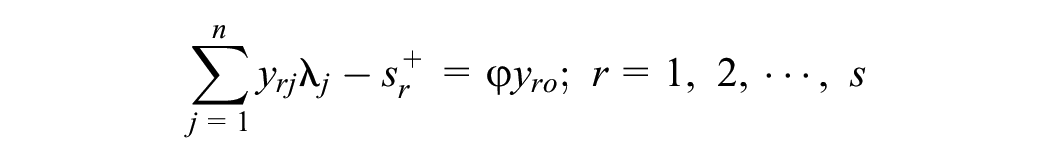

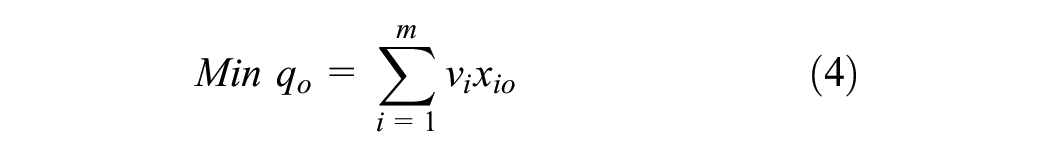

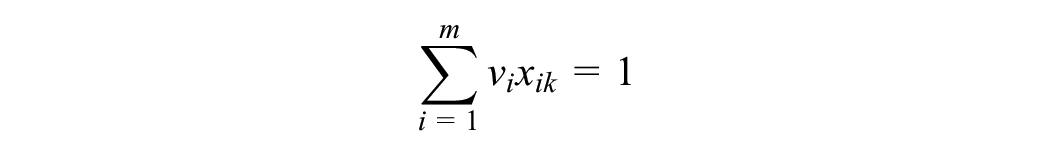

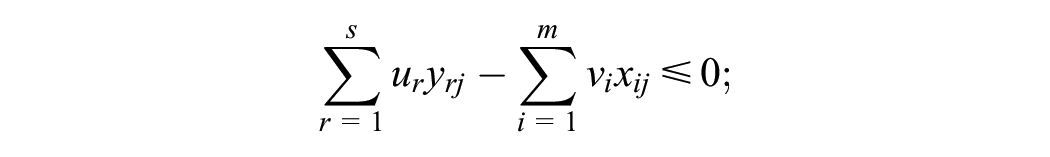

The input-oriented DEA model with constant returns to scale (CRS), given in equation (1), and its multiplier form, equation (2), assess the relative efficiency of decision-making units (DMUs), such as businesses, organizations, or departments, based on how they use their inputs. The CRS assumption means that, for efficient DMUs, a proportional increase in inputs yields the same proportional increase in outputs, so an efficient DMU operates at its optimal scale. Equation (3) and its multiplier form, equation (4), give the output-oriented counterpart: rather than minimizing inputs for a given level of output, this model maximizes outputs while holding inputs at the same level. In all four models, the index 0 denotes the DMU in the objective function whose efficiency is being calculated (Cooper et al., 2011b). Table 2 contains comprehensive descriptions and explanations of the parameters of all four models.
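The four CRS models described above are conventionally written as follows. This is the standard textbook notation, which may differ from the notation of equations (1) to (4). The symbols are assumptions introduced for illustration: $x_{ij}$ and $y_{rj}$ are input $i$ and output $r$ of DMU $j$, $\lambda_j$ are intensity weights, $v_i$ and $u_r$ are input and output multipliers, and the index 0 marks the DMU under evaluation, with $s$ inputs, $m$ outputs, and $n$ DMUs.

```latex
% Input-oriented CRS model, envelopment form
\min_{\theta,\lambda}\ \theta
\quad \text{s.t.} \quad
\sum_{j=1}^{n} \lambda_j x_{ij} \le \theta\, x_{i0}\ (i=1,\dots,s), \quad
\sum_{j=1}^{n} \lambda_j y_{rj} \ge y_{r0}\ (r=1,\dots,m), \quad
\lambda_j \ge 0 .

% Input-oriented CRS model, multiplier form
\max_{u,v}\ \sum_{r=1}^{m} u_r y_{r0}
\quad \text{s.t.} \quad
\sum_{i=1}^{s} v_i x_{i0} = 1, \quad
\sum_{r=1}^{m} u_r y_{rj} - \sum_{i=1}^{s} v_i x_{ij} \le 0\ (j=1,\dots,n), \quad
u_r, v_i \ge 0 .

% Output-oriented CRS model, envelopment form
\max_{\phi,\lambda}\ \phi
\quad \text{s.t.} \quad
\sum_{j=1}^{n} \lambda_j x_{ij} \le x_{i0}\ (i=1,\dots,s), \quad
\sum_{j=1}^{n} \lambda_j y_{rj} \ge \phi\, y_{r0}\ (r=1,\dots,m), \quad
\lambda_j \ge 0 .

% Output-oriented CRS model, multiplier form
\min_{u,v}\ \sum_{i=1}^{s} v_i x_{i0}
\quad \text{s.t.} \quad
\sum_{r=1}^{m} u_r y_{r0} = 1, \quad
\sum_{i=1}^{s} v_i x_{ij} - \sum_{r=1}^{m} u_r y_{rj} \ge 0\ (j=1,\dots,n), \quad
u_r, v_i \ge 0 .
```

Under constant returns to scale the two orientations give reciprocal scores, $\phi^{*} = 1/\theta^{*}$, so both classify the same DMUs as efficient.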

Table 2. Detailed Descriptions and Interpretations of the Parameters of All Four Models.


Within the DEA framework, entities engaged in producing or providing goods and services are commonly called Decision-Making Units (DMUs). Farrell (1957) introduced the notion of dividing efficiency into two categories. The first is allocative efficiency, which refers to the capacity of the unit to choose the appropriate mix of inputs given the input prices. The second is technical efficiency, which refers to the capacity of the unit either to maximize output from a given quantity of inputs (the output-oriented measure) or to reduce the quantity of inputs while maintaining the same outputs (the input-oriented measure).

University Evaluation System (UES)

As a component of the University Evaluation System, or UES (Figure 1), quantitative analysis and data envelopment analysis are applied across all academic departments of the university. These methods derive from the overall structure of the evaluations carried out by the various departments. Assessments under the UES must take four primary considerations into account, each described below. First, education should be the major focus of the evaluation, not only for the institutions and departments involved but also for the individual faculty members and department heads who implement it, because education is the most important factor in the success of any endeavor. Learning opportunities, such as dialogues, should be incorporated into the process of documenting the components that produce a department’s or faculty’s inputs and outputs.

Figure 1. Procedures of the UES.

Second, the assessment must provide a comprehensive view of how the situation develops. For teaching and learning systems to be of high quality, their designers need to analyze how well the various input components can carry out the demanding task at hand. This analysis yields several performance indicators showing how the input factors base their decisions on a growing understanding of their students and on the instructional strategies with the best track record. The evaluation may also be centered on a specific topic. A faculty or department that wishes to be evaluated by the UES must provide evidence that it can balance the essential teaching, learning, and research responsibilities in its field.

Third, data envelopment analysis should be used to assess both the inputs and the outputs of the system. Accordingly, plans were established that link the input components with the alternatives that may arise from the effects of these observable features on learning, teaching, and research. Fourth, the evaluation needs to provide both critical feedback, in the form of suggestions for improvement, and inspiration for ongoing growth on the part of the teaching staff and the institution as a whole.

There is a clear correlation between the human resources available to an organization and its overall level of success, which depends to a great extent on the talents of its members. Senior executives are therefore obliged to recognize the importance of human development and to give staff opportunities to improve their knowledge, capabilities, and job skills. As part of an exploratory research approach, ongoing investigations using questionnaires and quantitative analysis have been set up to identify the key issues affecting knowledge growth among both types of staff.

According to the definition provided by the University Evaluation System (UES), faculty development is the ongoing process of providing educational and coaching opportunities to academic staff members to help them improve their work performance, particularly in teaching and the evaluation of student learning, which includes research. It also refers to the process by which staff members build their capabilities in educational quality assurance management, educational technology, educational research skills, and personal development. The university can also assist individual faculty members in designing and carrying out community-engaged research projects.

A Case Study of Educational Faculty

Decision Making Units (DMUs)

DEA requires each DMU to satisfy the assumption of homogeneity. First, the DMUs must carry out similar activities that serve the same purpose. Second, all DMUs must use the same set of inputs and outputs. Third, as Agha et al. (2011) stated, the operating environment of each DMU needs to be the same. According to Aziza et al. (2013), the efficiency and operating costs of an academic institution are the same for both academic and non-academic staff. This is the case even though academic staff are the ones directly responsible for teaching and research.

When working with DEA, the outputs and inputs must be selected with great care. The relative efficiency of the DMUs depends strongly on the factors used in the evaluation, and the efficiency score changes with the inputs and outputs included in the calculation. The order in which these inputs and outputs are selected, however, is arbitrary. DEA has been applied in several studies of higher education institutions, which used a wide range of input and output variables. In this study, academic staff (V1) and non-academic staff (V2) are the human capital inputs. The academic staff are responsible for both teaching and research in each department of the university. The non-academic staff in each department interact with academics and students and give indirect support to academic research and related activities.

The output factors under consideration are internal quality evaluation (U1), the number of undergraduate enrollments (U2), the number of graduate enrollments (U3), and the number of research publications (U4). U1 is an effective tool for achieving the institution’s goals in a short amount of time. Every department makes it a top priority to increase U2 and U3, since enrollment indicates both the quality and the quantity of the education provided by its staff. While a department’s academic staff are responsible for teaching courses, U4 indicates the presence of research activity in the department. These publications typically appear in scholarly journals, book chapters, or proceedings.

Quantitative Analysis Procedures

At each of the three levels of the organization (department, faculty, and university), the information collected from the annual report is automatically retrieved and stored in the database. UES is used together with quantitative analysis to infer statistical values.

This method uses statistical values obtained from the sample. The subsequent stage of UES then employs point estimation, in which a single statistic computed from the sample is used to estimate a population parameter, together with a t-test, to establish the appropriate level of each input and output variable for the mathematical model.
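The estimation step can be illustrated with a minimal sketch that computes the sample mean and a t-based confidence interval from three yearly observations. The 95% confidence level, the function name `mean_confidence_interval`, and the staff counts are assumptions made for illustration; they are not taken from the study.

```python
import math
import statistics

# Two-sided 95% critical value of Student's t with 2 degrees of freedom
# (three yearly observations). The 95% level is an assumption.
T_CRIT_95_DF2 = 4.303


def mean_confidence_interval(values, t_crit=T_CRIT_95_DF2):
    """Return (mean, lower, upper) for a small sample of yearly values."""
    n = len(values)
    mean = statistics.mean(values)
    # Standard error of the mean from the sample standard deviation
    std_err = statistics.stdev(values) / math.sqrt(n)
    margin = t_crit * std_err
    return mean, mean - margin, mean + margin


# Hypothetical three-year counts of academic staff (V1) in one department
academic_staff = [20, 22, 24]
mean, lower, upper = mean_confidence_interval(academic_staff)
print(f"mean={mean:.2f}, interval=({lower:.2f}, {upper:.2f})")
```

The default critical value only applies to samples of three observations; a different sample size would need the matching t value passed as `t_crit`.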

Model Variables.

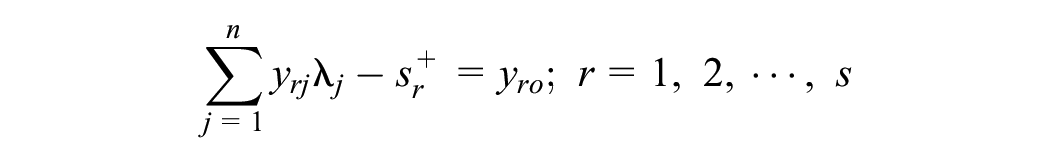

DEA Procedures

The CCR model, introduced by Charnes, Cooper, and Rhodes (Charnes et al., 1978), is the most basic form of the DEA model. According to Visbal-Cadavid et al. (2017), it was developed to compare the performance of homogeneous DMUs that have multiple inputs and outputs. Suppose that n DMUs are being evaluated and that each of them has s inputs and m outputs. The relative efficiency of a DMU is obtained by solving the fractional programming model shown below (equation (2)).

Subject to
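For reference, the ratio form of the CCR model is commonly written as follows. The symbols are assumptions introduced here for illustration: $x_{ij}$ and $y_{rj}$ denote the $i$-th input and $r$-th output of DMU $j$, $\nu_i$ and $\mu_r$ are the corresponding nonnegative weights, and $k$ is the DMU under evaluation, with $s$ inputs and $m$ outputs as defined above.

```latex
\begin{aligned}
\max_{\mu,\,\nu} \quad & \theta_k = \frac{\sum_{r=1}^{m} \mu_r\, y_{rk}}{\sum_{i=1}^{s} \nu_i\, x_{ik}} \\
\text{subject to} \quad & \frac{\sum_{r=1}^{m} \mu_r\, y_{rj}}{\sum_{i=1}^{s} \nu_i\, x_{ij}} \le 1, \qquad j = 1, \dots, n, \\
& \mu_r \ge 0, \quad r = 1, \dots, m, \\
& \nu_i \ge 0, \quad i = 1, \dots, s.
\end{aligned}
```

A DMU with $\theta_k = 1$ lies on the efficient frontier, while $\theta_k < 1$ indicates that some combination of the other DMUs performs better.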

Numerical Results

For this analysis, data were gathered from five academic departments of a public institution: the Department of Health and Physical Education (DMU1), the Department of Educational Technology (DMU2), the Department of Art Education (DMU3), the Department of Music Education (DMU4), and the Department of Business Education (DMU5). The data were drawn from official reports provided by the faculty, covering the academic years 2019 to 2021. The CCR model, solved with a standard computer software tool, was used to calculate the efficiency score of each department, which made it possible to compare performance across all departments.

Quantitative Analysis

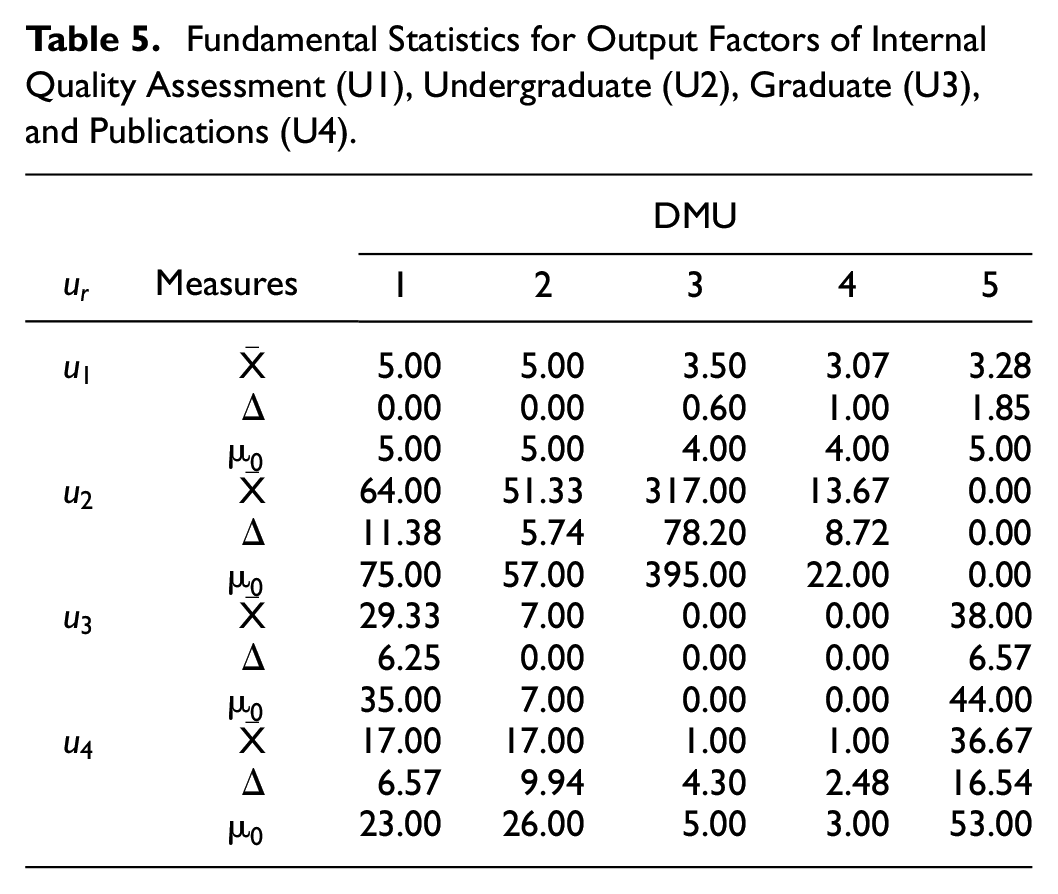

In the quantitative analysis, a t-test is used to check the reliability of the data and so to establish whether they are suitable for calculating a performance score. The output factors in each department will be evaluated by testing levels of

Fundamental Statistics for Input Factors of Academic Staff (V1) and Non-Academic Staff (V2).

Fundamental Statistics for Output Factors of Internal Quality Assessment (U1), Undergraduate (U2), Graduate (U3), and Publications (U4).

Hypotheses about the mean were tested for both the input and output factors. The resulting levels are used in the data envelopment analysis (DEA) computation, which uses a linear programming (LP) formulation to assess the efficiency of each department. An output-oriented model was chosen because it maximizes the output for a given input level. The CCR model is solved as a separate LP for each department. At the baseline level shown in Figure 2, the departments of Art Education (DMU3), Music Education (DMU4), and Business Education (DMU5) had the highest performance ratings, each with a score of 1. The Department of Health and Physical Education (DMU1) had a moderate efficiency score of 0.79773. The Department of Educational Technology (DMU2) had the lowest efficiency of all departments, with a score of 0.46197.
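The output-oriented CCR computation can be sketched as one LP per DMU in envelopment form, where the reported efficiency is the reciprocal of the maximal output expansion factor. This is a minimal illustration using `scipy.optimize.linprog`, not the software used in the study; the function name `ccr_output_oriented` and the toy data are hypothetical.

```python
import numpy as np
from scipy.optimize import linprog


def ccr_output_oriented(X, Y):
    """Return CCR efficiency scores (1 / phi) for every DMU.

    X has shape (n_dmus, n_inputs); Y has shape (n_dmus, n_outputs).
    All data are assumed to be strictly positive.
    """
    X = np.asarray(X, dtype=float)
    Y = np.asarray(Y, dtype=float)
    n, n_in = X.shape
    n_out = Y.shape[1]
    scores = np.empty(n)
    for k in range(n):
        # Decision variables: [phi, lambda_1, ..., lambda_n].
        # linprog minimizes, so maximizing phi means minimizing -phi.
        c = np.zeros(n + 1)
        c[0] = -1.0
        # Input rows: sum_j lambda_j * x_ij <= x_ik
        input_rows = np.hstack([np.zeros((n_in, 1)), X.T])
        # Output rows: phi * y_rk - sum_j lambda_j * y_rj <= 0
        output_rows = np.hstack([Y[k].reshape(-1, 1), -Y.T])
        result = linprog(
            c,
            A_ub=np.vstack([input_rows, output_rows]),
            b_ub=np.concatenate([X[k], np.zeros(n_out)]),
            bounds=[(0, None)] * (n + 1),
            method="highs",
        )
        if not result.success:
            raise RuntimeError(f"LP failed for DMU {k}: {result.message}")
        scores[k] = 1.0 / result.x[0]
    return scores


# Hypothetical toy data: three DMUs, one input and one output each
toy_inputs = [[1.0], [1.0], [2.0]]
toy_outputs = [[1.0], [0.5], [2.0]]
toy_scores = ccr_output_oriented(toy_inputs, toy_outputs)
print(toy_scores)
```

In the toy data the second DMU produces half the output of the first from the same input, so it is the only inefficient unit. The same function accepts the two inputs (V1, V2) and four outputs (U1 to U4) described in this study.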

Efficiency scores classified by five departments.

Scenario Analysis

Although DEA lacks formal significance tests for variable selection, scenario analysis can indicate the impact of output variables on DMU efficiency (Nguyena et al., 2015). The DEA model was therefore re-solved with one output factor removed at a time, and the efficiency ratings of each department were recalculated under each scenario. The four scenarios are summarized in Table 6.
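The leave-one-output-out procedure can be sketched as a loop that drops one output column at a time and re-solves the output-oriented CCR model. The helper names `ccr_scores` and `leave_one_output_out` and the two-DMU data set are hypothetical and serve only to show the mechanics.

```python
import numpy as np
from scipy.optimize import linprog


def ccr_scores(X, Y):
    """Output-oriented CCR efficiency (1 / phi) for each DMU."""
    n = X.shape[0]
    scores = np.empty(n)
    for k in range(n):
        # Variables: [phi, lambda_1..lambda_n]; maximize phi
        c = np.r_[-1.0, np.zeros(n)]
        a_ub = np.vstack([
            np.hstack([np.zeros((X.shape[1], 1)), X.T]),   # inputs
            np.hstack([Y[k].reshape(-1, 1), -Y.T]),        # outputs
        ])
        b_ub = np.r_[X[k], np.zeros(Y.shape[1])]
        res = linprog(c, A_ub=a_ub, b_ub=b_ub,
                      bounds=[(0, None)] * (n + 1), method="highs")
        scores[k] = 1.0 / res.x[0]
    return scores


def leave_one_output_out(X, Y, output_names):
    """Return baseline scores and scores with each output removed in turn."""
    X = np.asarray(X, dtype=float)
    Y = np.asarray(Y, dtype=float)
    results = {"baseline": ccr_scores(X, Y)}
    for r, name in enumerate(output_names):
        reduced = np.delete(Y, r, axis=1)  # drop output column r
        results[f"without {name}"] = ccr_scores(X, reduced)
    return results


# Hypothetical data: two DMUs, one input, two outputs
scenarios = leave_one_output_out(
    X=[[1.0], [1.0]],
    Y=[[2.0, 1.0], [1.0, 2.0]],
    output_names=["U1", "U2"],
)
for label, scores in scenarios.items():
    print(label, np.round(scores, 3))
```

A score that falls when an output is removed shows that the DMU relied on that output to reach its baseline efficiency; a score that stays the same shows that the output did not drive the rating.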

Four Scenarios of the Output Factors.

Scenario 1 evaluates the efficiency of the Education Faculty departments using all input and output factors except U1. This hypothetical scenario shows how Internal Quality Assessment (the U1 output factor) influences the performance score of each department and the organization’s overall performance score. The efficiency scores for Scenario 1 and for the other scenarios are given in Table 7.

Efficiency Scores for All Four Scenarios of the Output Factors

When Internal Quality Assessment (U1) was excluded, the efficiency scores of the Departments of Health and Physical Education (DMU1), Educational Technology (DMU2), and Music Education (DMU4) all decreased. However, the internal quality evaluation factor had no bearing on the performance scores of the Art Education (DMU3) or Business Education (DMU5) departments. Excluding the number of students enrolled in undergraduate programs (U2) lowered the efficiency ratings of the Health and Physical Education (DMU1), Educational Technology (DMU2), and Art Education (DMU3) departments. In contrast, undergraduate enrollment did not affect the efficiency ratings of the Music Education (DMU4) and Business Education (DMU5) departments (Figure 3).

Comparative efficiency scores for all DMUs on the baseline versus scenario 2

Graduate enrollment (U3) affected the overall efficiency score of the Department of Health and Physical Education (DMU1). In contrast, the number of master’s and doctoral students did not affect the Educational Technology (DMU2), Art Education (DMU3), Music Education (DMU4), or Business Education (DMU5) departments. When the number of research publications (U4) was excluded, the Department of Educational Technology (DMU2) received a lower efficiency score. The number of published papers had no effect on the performance ratings of the departments of Health and Physical Education (DMU1), Art Education (DMU3), and Business Education (DMU5).

Participants’ Views on the Importance of Faculty Development

Training and development programs for university faculty are essential for quality enhancement. Training bridges the gap between an individual’s current skill set and the competencies required by an organization. Rapid technological advancement demands renewed expertise and skills in numerous domains, so ongoing training is needed to keep personnel current, effective, and able to advance educational excellence. Faculty members are evaluated often for a wide range of decision-making purposes, including pay raises, contract extensions, insurance premium distribution, and faculty research funding. Effective performance programs and supportive environments can help employees carry out their responsibilities by improving long-term teaching, research, and non-academic performance measures. Therefore, a quantitative analysis was conducted using the survey-and-interview method to determine faculty members’ requirements in all topics for developing their knowledge, talents, techniques, and personal growth. The goals were to identify the training that academic and non-academic staff at the Faculty of Education needed for specific skills and then to rank these training needs by importance. This enables the faculty to devise a plan for providing staff members with support and professional development training, with the ultimate goal of enhancing their job performance.

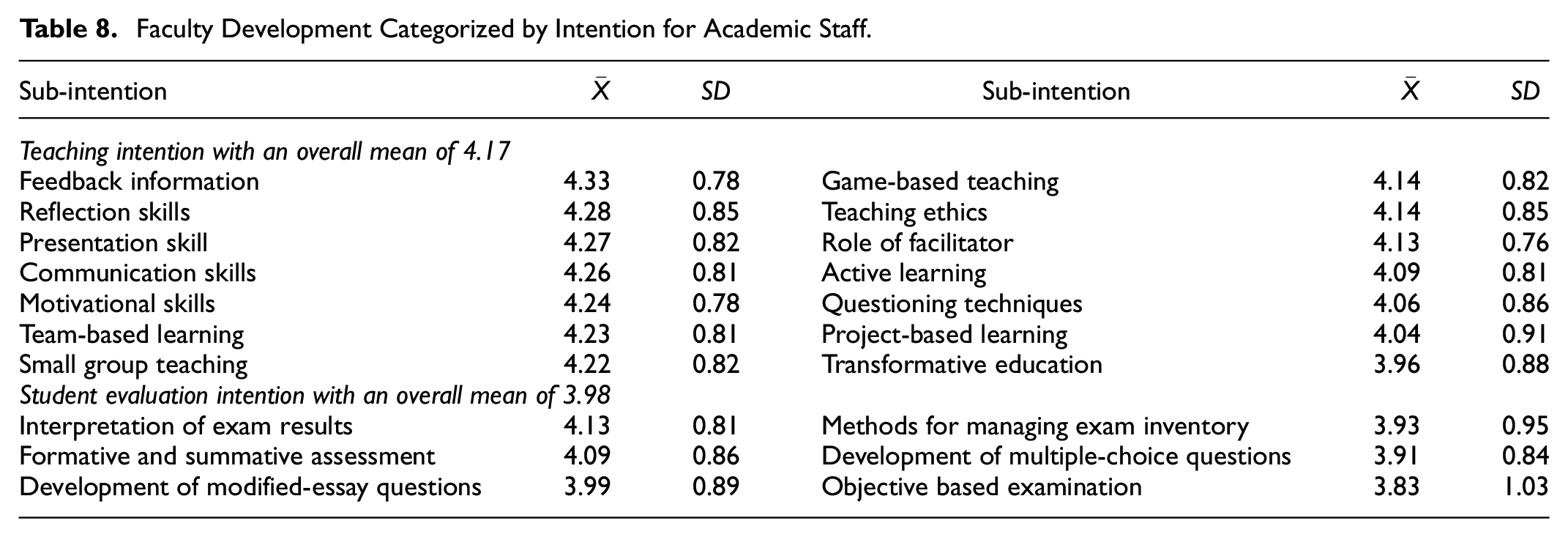

Respondents were asked to rate their training requirements on a 5-point Likert scale, where 1 meant “not at all,” 2 meant “to some extent,” 3 meant “relatively important,” 4 meant “important,” and 5 meant “very important.” Data were evaluated using descriptive statistics to determine competency means, which were then ranked by importance. A mean score between 4.50 and 5.00 denotes the maximum level of participation in the faculty’s development activities; scores between 3.50 and 4.49, 2.50 and 3.49, 1.50 and 2.49, and 1.00 and 1.49 indicate a high, moderate, low, and minimum level of engagement, respectively. The quantitative results for academic staff (Table 8) and non-academic staff (Table 9) show the areas in which staff members are motivated to enhance their technical expertise, competencies, and personal growth.
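The interpretation of mean scores can be sketched as a simple threshold lookup. This assumes the bands are read as 4.50 to 5.00 (maximum), 3.50 to 4.49 (high), 2.50 to 3.49 (moderate), 1.50 to 2.49 (low), and 1.00 to 1.49 (labeled "minimum" here as an assumption); the function name `engagement_level` is hypothetical.

```python
def engagement_level(mean_score):
    """Map a mean Likert score (1.00 to 5.00) to an engagement band."""
    if not 1.0 <= mean_score <= 5.0:
        raise ValueError("mean score must lie between 1.00 and 5.00")
    if mean_score >= 4.50:
        return "maximum"
    if mean_score >= 3.50:
        return "high"
    if mean_score >= 2.50:
        return "moderate"
    if mean_score >= 1.50:
        return "low"
    return "minimum"


# Mean ratings quoted in the text for the faculty development categories
for score in (4.17, 4.03, 3.98, 3.82):
    print(score, engagement_level(score))
```

Using lower thresholds rather than closed ranges avoids gaps between bands, so a mean such as 3.495 is still assigned a level.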

Faculty Development Categorized by Intention for Academic Staff.

Faculty Development Categorized by Intention for Non-Academic Staff.

According to the overall means in Table 8, members of the academic staff expressed a high level of need for training in both teaching and student evaluation. They favored topics in instructor evaluation (4.17) over topics in student evaluation (3.98). At the subtopic level, academic staff members gave the highest priority to feedback information (4.33), followed by the development of reflective abilities (4.28).

Table 9 presents a categorization of faculty development for non-academic staff by intention. Participants showed a preference for themes related to educational technology, with a mean rating of 4.17. This was followed by a relatively high level of interest in education research, with a mean rating of 4.03. Lastly, participants showed somewhat less interest in educational quality assurance management, with a mean rating of 3.82. The subtopic with the highest mean ranking was the use of Google Apps for educational purposes, which points to a need for enhanced training in developing educational applications.

Conclusions

Every faculty member must evaluate their department’s performance. This study examined input-output performance scores for the departments of the Faculty of Education using yearly report data. The faculty comprises five main departments, each with inputs and outputs. The inputs are the numbers of academic and non-academic staff. The outputs are internal quality evaluations, undergraduate and graduate enrollment, and research publications. The faculty performs an annual evaluation and a department-wide assessment. Comprehensive assessment is the focus of this inquiry.

The material in faculty reports varies each year. Therefore, the sample mean, median, standard deviation, and sample variance were used to assess the initial volatility of both the inputs and the outputs. Statistical hypotheses about the location parameter were then tested, and confidence intervals were formed. Next, the optimum level of each data set was picked for the aggregate assessment: inputs take the level closest to the lower bound of the interval, and outputs take the level closest to the upper bound. After gathering the data, the DEA approach was used to analyze each department’s performance score and to build a model that reveals which output variables affect it. The evaluation ended after data gathering.

The overall efficiency scores showed that the Educational Technology department had the lowest efficiency of all the departments. In addition, the study examined efficiency ratings under four scenarios, each of which excluded a different output factor. Comparing the outcomes of these scenarios showed which output factors had the greatest impact on the efficiency ratings of the various departments, and different output factors influenced the score of each department. Based on these hypothetical scenarios, the following observations indicate where each department can act to increase its efficiency:

(1) Internal quality evaluation (U1), undergraduate enrollments (U2), and the number of Master’s and Doctoral students (U3) all impacted the Department of Health and Physical Education’s efficacy score.

(2) Internal Quality Assessment (U1), undergraduate enrollment (U2), and publishing (U4) were the three factors that determined the Department of Educational Technology’s efficacy score.

(3) The efficiency score of the Art Education department was influenced solely by undergraduate enrollments (U2), whereas the efficiency score of the Music Education department was influenced solely by the internal quality evaluation (U1). For these two departments to continue achieving high-performance ratings, they must maintain their distinctive influencing variables.

The research indicates that in order to optimize their performance scores, departments ought to concentrate on preserving or enhancing the elements that have a positive impact. Departments can discern methods to enhance productivity through the analysis of the data. An illustration of this would be the Department of Health and Physical Education, which could enhance its efficacy score by concentrating on enhancing its internal quality evaluation and enrollment figures.

The DEA- and QA-based UES will provide programs, seminars, and workshops for faculty development, with the aim of boosting departmental effectiveness. For academic staff, knowledge development with an emphasis on teaching averaged 4.17. Academic instructors still value teaching above intellectual understanding. These findings call for the purposeful development of training in nontechnical skills, such as giving information and feedback, reflection, presentation, and communication. According to Sibtah et al. (2016), coaching, seminars, workshops, and development programs should provide skills, methods, tactics, and information.

The student evaluation development aim scored 3.98, ranking second. Williamson and Seewoodhary (2017) state that evaluations of academic success in higher education must include student assessment. Thus, well-designed tools and guidelines may help instructors. For non-academic staff, the top three needs were educational technology (4.17), education research (4.03), and educational quality assurance management (3.82). The Faculty of Education should therefore provide training in Moodle and online virtual classrooms.

Administrators and decision-makers may improve efficiency by improving and creating activities for interested parties, optimizing resource usage and supporting educational quality. These activities may involve a popular course or subject, an event format, and a suitable time for participants. Staff involvement may also be affected by the agency’s funding and human development needs. Target organizations are reached by tailoring courses and activities to each group.

Each faculty member may comprehend the department’s main goals by analyzing the performance score and its expected influences. The assessment of teaching and learning information, the issue solution, and the budget, which includes staff to support multiple policies, all boost efficiency. Other university faculties might utilize it as a management guideline to achieve high-performance ratings, competitiveness, and effective and sustained self-assessment to build a holistic image.

This research showed how data-driven assessment may improve university administration practices. The research showed how colleges might use data to improve faculty performance by using a PDCA-inspired strategy.

The results demonstrate the novelty and importance of this technique in addressing the urgent demand for efficient and effective higher education self-evaluation tools. This strategy opens new doors for development, innovation, and greatness for institutions, students, faculty, and stakeholders. Future research should validate and refine this strategy, examine its applicability across varied university environments, and evaluate its long-term influence on institutional performance. Continued innovation and collaboration may develop university administration and improve higher education.

The University Evaluation System (UES) combines the Plan-Do-Check-Act (PDCA) cycle, Data Envelopment Analysis (DEA), and quantitative analysis to make better use of current data and to support more efficient decisions. The PDCA cycle guides faculty members through planning, implementation, performance evaluation, and refinement. DEA, in turn, offers a complete benchmarking tool for evaluating the effectiveness of university departments, identifying best practices, and allowing comparative assessments. Additionally, quantitative analysis allows the systematic examination and integration of disparate information on faculty performance criteria, including instructional effectiveness, academic production, and administrative efficiency. These methods allow academic institutions to allocate resources effectively, prioritize faculty development, and tailor responses to specific challenges or opportunities. Together, PDCA, DEA, and quantitative analysis increase the efficiency and effectiveness of UES assessment, enabling data-driven decision-making and continual improvement of educational outcomes.

Discussions

The results of the research illuminate the effectiveness and efficiency of academic departments comprising the Faculty of Education. Disparities were apparent in the overall efficiency ratings, whereby the Educational Technology department demonstrated the least efficiency in comparison to the other departments. This observation highlights the importance of implementing focused interventions to address inefficiencies in particular departments, thereby refining the allocation of resources and improving the overall performance of the department. Further, the examination of efficacy ratings on various departments identified critical determinants that impact the effectiveness of each department. By employing efficacy evaluations to analyze various scenarios and conducting subsequent simulations, we were able to identify the principal output factors that influence the effectiveness of departments. These insights hold immense value for administrators and decision-makers who seek to enhance the efficiency and productivity of their departments.

The implications of the study’s findings for faculty development initiatives in academic institutions are explicit. Administrators have the ability to customize these initiatives in order to target particular areas for development within departments, using data-driven insights derived from our analysis. Departments that receive lower efficacy ratings, for instance, might find targeted training programs that improve undergraduate enrollment strategies or internal quality assessment to be advantageous. Furthermore, the results emphasize the criticality of continuous assessment and enhancement in academic departments. Through careful examination of performance scores and the identification of determining factors that impact them, faculty members can acquire more profound understandings of the objectives of the department and establish priorities for endeavors that aim to improve efficiency and efficacy. The implementation of this iterative evaluation process is vital in building an academic environment that promotes innovation and excellence.

Managerial and Practical Implications

The application of DEA and QA methodologies to assess departmental efficiency presents tangible benefits for administrators, faculty, and decision-makers operating in institutions of higher education. To begin with, administrators are able to allocate resources more efficiently by identifying the assets and deficiencies of each department. This process optimizes budgetary decisions and personnel strategies. Through the identification and prioritization of inefficient areas, faculty members have the ability to streamline operations and ultimately improve overall performance. Furthermore, the customized faculty development initiatives suggested in light of our discoveries can effectively target particular deficiencies in skills and areas requiring enhancement that have been identified within each academic division. This promotes the advancement of knowledge and abilities in both teaching and research.

Additionally, the focus of this research on the harmonization of resources and activities with the objectives of the department highlights the criticality of academic departments engaging in strategic planning and establishing objectives. The evaluation results can be utilized by administrators to establish attainable performance objectives and monitor advancements over a period of time, thereby promoting the implementation of evidence-based decision-making and ongoing improvement endeavors. Furthermore, the insights furnished by our evaluation model have the potential to guide strategic collaborations and partnerships among departments, capitalizing on their respective strengths and resources in order to more efficiently accomplish shared objectives.

In practice, the application of our suggestions may result in discernible enhancements in the caliber of instruction, efficiency of research, and overall efficacy of the department. In addition to contributing to broader societal objectives of educational excellence and innovation, educational institutions can bolster their standing and competitiveness by cultivating an environment that values accountability and ongoing development. This, in turn, will attract exceptional students and talent.

In addition to the theoretical implications, the numerical results should motivate department heads and managerial leaders to use DEA when developing strategic plans for benchmarking, efficiency evaluation, and efficient resource allocation. This research presents a systematic and unbiased approach to allocating resources with DEA in order to optimize effectiveness and attain superior rankings, particularly where financial resources are limited and departments are evaluated continuously. Additionally, universities in Thailand are interested in the information regarding efficacy. In an already competitive environment, universities must not only be aware of their standing relative to their peers but must also be given direction on how to enhance their performance if they are to succeed in obtaining additional funding from the government.

Limitations

The research focuses on deterministic data, which may not account for real-world uncertainties or fluctuations. DEA might be used with fuzzy, stochastic, and interval data methods in future studies. Researchers may improve efficiency assessment models by addressing these issues. Additionally, the research did not include external or contextual elements that may affect departmental efficiency. To better understand higher education efficiency drivers, future study might examine how financing, institutional culture, and leadership styles affect departmental performance.

The report also stresses the necessity of evaluation-based faculty development programs but does not provide detailed implementation advice. Future study might investigate effective methods for planning and executing faculty development programs to increase departmental effectiveness and address identified areas for improvement. In conclusion, the research paper provides valuable insights into academic department performance evaluation, but addressing these limitations in future studies will help higher education institutions better understand efficiency and effectiveness across diverse organizational contexts.

Future Directions

Further study may be needed to determine effective faculty development design and implementation based on assessment outcomes. Clear directions for executing these programs may help fix problems and boost department efficiency. It is also important to broaden the scope of analysis beyond the evaluation of a single faculty. By integrating a wider array of Data Envelopment Analysis (DEA) methodologies and collecting more extensive real-world datasets from multiple academic institutions and faculties, one can attain a more holistic understanding of the efficiency and efficacy of operations in various academic contexts. An investigation into hybrid methodologies that combine DEA and machine learning strategies may improve the assessment of Decision Making Units (DMUs). Sophisticated computational methods could help researchers optimize resource allocation decisions and enhance efficiency assessment processes. A comparison of the computational efficiency of hybrid approaches with conventional DEA methods can provide valuable insights into the viability and scalability of integrating machine learning methods into frameworks for evaluating efficiency.

Addressing the requirement for inputs and outputs in the evaluation model that are more pertinent and current is of utmost importance, especially in light of the data gathered throughout the COVID-19 pandemic, which may demonstrate significant variability. The study establishes a fundamental framework for evaluating the efficacy of faculty through the utilization of Data Envelopment Analysis (DEA). However, it is critical to emphasize the significance of integrating up-to-date and comprehensive data in order to improve the model’s precision and practicality. In order to overcome this constraint, forthcoming investigations will concentrate on identifying supplementary input and output variables that more accurately encompass the complex characteristics of faculty performance in institutions of higher education, taking into account the distinct obstacles and disturbances brought about by the pandemic. Potential determinants encompass student satisfaction ratings, rates of securing research grants, partnerships with industry entities, and involvement in community engagement initiatives. By integrating a wider array of inputs and outputs and considering the ramifications of the COVID-19 pandemic, the evaluation framework can furnish a more intricate and all-encompassing appraisal of faculty efficacy. This, in turn, would enable universities to engage in more informed strategic planning and decision-making.

It is imperative to rectify the shortcomings of DEA when it comes to managing ambiguous data types, including imprecise, stochastic, and interval data. Further investigation may be warranted into the incorporation of DEA with methodologies that are specifically designed to handle these data types. By integrating supplementary analytical methodologies, scholars have the ability to surmount the constraints linked to ambiguous or imprecise data and bolster the resilience and dependability of models utilized in efficiency evaluation. An assessment of departmental performance that takes into account external factors or contextual variables, including funding, institutional culture, and leadership styles, can yield significant insights. Further investigation is warranted to examine the impact of these variables on the efficacy and efficiency of institutions of higher education, so as to furnish a more comprehensive comprehension of the forces that propel efficiency.

Footnotes

Acknowledgements

For P.L., this work was supported by the Thammasat University Research Unit in Industrial Statistics and Operational Research. W.A. would like to express gratitude to the School of Science, King Mongkut’s Institute of Technology Ladkrabang, for its support of this research. P.A. wishes to thank the Faculty of Engineering, King Mongkut’s University of Technology North Bangkok, for the financial support.

Ethical Approval

Not applicable

Authors Contributions

P.L. contributed to the design, conceptualization, methodology, software, validation, and visualization of the research, to the analysis of the results, and the writing—review and editing of the manuscript. C.P. contributed to an experiment. W.A. contributed to the implementation, formal analysis, investigation, and data curation of the research, and the writing—review and editing of the manuscript. P.A. contributed to the design, conceptualization, methodology, and software. All authors examined and approved the final manuscript.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was funded by the Faculty of Engineering, Thammasat School of Engineering, Thammasat University (Contract No. 003/2566).

Data Availability Statement

The data that support the findings of this study are available from the corresponding author upon reasonable request.