Abstract

The rapid development of artificial intelligence has driven a shift in educational evaluation toward big data-driven approaches. This study used a systematic literature review to analyze 44 empirical research articles on big data education evaluation. First, the number of publications has increased year by year, and the articles appear mainly in thematic journals on educational technology, science education, and language teaching. Chinese and American researchers have contributed the most to this field. Second, the algorithmic models used in big data education evaluation research are diverse; the text modality is the most common; the evaluation subjects are mainly college students, with fewer primary and secondary school students; and science is the discipline that most often applies big data education evaluation. The evaluation objectives fall mainly into five categories: higher-order thinking analysis, learning performance prediction, learning emotion recognition, teaching management decision-making, and evaluation mode optimization. The text modality is widely used for data collection in higher-order thinking analysis; regardless of the evaluation objective, higher education students are the most frequently evaluated group; and science is the main discipline in which big data technology is used to support teaching evaluation. Third, current research topics in big data education evaluation focus mainly on the evaluation of online learning behavior and environmental participation, process assessment of learning motivation and emotion, the development and optimization of discipline-specific big data models, cognitive diagnosis and the evaluation of higher-order thinking skills, and the design of learning analysis frameworks based on data mining.

Introduction

Big data is a product of the development of information and intelligent technologies, and its emergence represents another major transformation in the course of the industrial revolution. Since the launch of the “Big Data” column in

Traditional educational methods have also been criticized as increasingly unable to meet the training goals for 21st-century talents. At the same time, data-driven emerging technologies have been widely applied in various aspects of educational evaluation, for example by applying machine learning and deep learning to exam services, question bank construction, data collection, and performance analysis. The advantages of big data-driven evaluation have been recognized by numerous international professional evaluation institutions (Wang et al., 2008). For example, the GRE (Graduate Record Examinations) program in the USA applied Multi-Stage Adaptive Test (MST) technology to achieve personalized evaluation. Different candidates can take the exam at any time and receive different questions, yet their results are expressed on the same measurement scale. Additionally, artificial intelligence (AI) scoring systems such as e-rater, c-rater, and m-rater have achieved significant results in scoring scientific writing, English writing, and mathematics. Research on education evaluation driven by big data continues to advance, but there are currently few systematic reviews of its empirical research. Therefore, this study aims to review the technology, methods, and content of big data education evaluation practice over the past decade, in order to provide guidance and reference for education evaluation.

Big Data-Driven Educational Evaluation

The development of modern science and technology has made data and computing the mainstream way to describe educational phenomena. The mining and analysis of massive educational data has served as an important basis for teaching interaction and decision-making (Wang et al., 2020). Numerous studies have also pointed out the characteristics and advantages of education evaluation based on big data. Liu et al. (2021) argued that the value of intelligent technology in data collection and data mining can revolutionize traditional educational evaluation and drive evidence-based decision-making. Hu et al. (2022) pointed out that AI, big data, and brain science enable refined, multi-modal, accompanying data collection, which can shift evaluation from students’ knowledge to procedural, dynamic, higher-order, and comprehensive abilities. Meanwhile, Luo et al. (2021) held that big data-driven educational evaluation refers to the application of emerging AI technologies to assess learners’ ability, personality, course risk, and learning process. This not only improves the efficiency and accuracy of evaluation and helps to achieve process-based and personalized evaluation, but also integrates multi-dimensional information for a comprehensive evaluation of individual students. In summary, big data-driven education evaluation mainly refers to personalized, procedural, comprehensive, and in-depth evaluation activities that draw on techniques such as machine learning, deep learning, and data mining and on multi-modal data from neuroscience, images, sound, and physiology, targeting student learning, teacher teaching, and education management within the education system.

Research Objective

This study aims to describe the current status of big data education evaluation through a systematic literature review, in response to the specific issues of “what to evaluate,” “whom to evaluate,” “what to use,” and “how to evaluate” in big data-driven education evaluation practice. The research questions are as follows:

What are the publishing trends, countries and regions, journals, and main contributors and institutions of empirical research on big data education evaluation?

What are the algorithm models, data modalities, discipline fields, evaluation subjects, and evaluation objectives of empirical research on big data education evaluation?

What are the main application scenarios and research themes of current big data education evaluation research?

Methodologies

This study was conducted using the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) method. Millions of educational studies are published every year, but even for the same specific question, research conclusions may be completely different. It is difficult for teachers, students, and government decision-makers to judge the credibility of this information. Literature reviews highlight the potential value of synthesizing research. In traditional narrative reviews, however, the author’s statements are mostly based on personal experience or preconceived notions; such reviews lack a unified search method, screen literature without strict and consistent standards, and give little consideration to the quality of the original literature. Therefore, narrative reviews inevitably incorporate a large amount of systematic error (bias), making it difficult to obtain sufficiently trustworthy results (Newman & Gough, 2019). Currently, there are numerous studies on the application of big data-driven evaluation technology in education, and researchers have proposed different perspectives on it. Relying only on narrative review methods would inevitably increase research error and reduce the credibility of the conclusions.

The systematic literature review method originated from research in the medical field in the 1980s and began to be applied in educational research in the 21st century (Diekemper, 2015; Page et al., 2021). By rigorously synthesizing relevant clinical studies, it allows clinicians to quickly, accurately, and conveniently understand the latest medical measures to guide clinical practice and improve medical quality (Moher et al., 2009). The conclusions of a systematic review are more trustworthy and reliable, and the method is recommended as the gold standard for efficacy evaluation. This method can also be applied to educational research, especially to decision-making in education.

A systematic literature review, as a clear and systematic literature research method, involves developing replicable retrieval strategies to retrieve, screen, and evaluate relevant literature, ensuring an accurate understanding of the status and trends of the research topic (Rudnicka & Owen, 2012). Moreover, the rigor and transparency of systematic literature review methods can help overcome the problems caused by researchers’ subjective biases in traditional literature reviews in educational research (Liberati et al., 2009).

Systematic Literature Review

According to Newman and Gough (2019), systematic reviews in educational research mainly include the following steps: (1) Determining the research theme. The theme of a systematic literature review in education mainly comes from teaching practice and involves important issues that are uncertain and controversial in teaching, in order to help teachers and government decision-makers make decisions. (2) Constructing selection criteria. Researchers must decide which studies to include in their review. To do this systematically and transparently, they need to establish criteria. The selection criteria (inclusion or exclusion criteria) delimit the scope of the review. (3) Developing retrieval strategies. The retrieval strategy is the search plan, mainly including the search sources and search strings. (4) Retrieving and screening literature. Search strings are used to search databases such as Web of Science (Science and Social Science), PsycINFO, and ERIC (Educational Resources Information Center). Afterward, the retrieved literature is screened in two steps. The first is preliminary screening, which removes literature whose titles and abstracts do not qualify. The second is to read the full text of the potentially eligible literature and determine, item by item, whether it qualifies. (5) Coding studies. Researchers need to systematically identify the characteristics of the research questions in the literature, and two or more researchers are invited to code the literature. (6) Appraising the quality of studies. The quality of a study refers to the degree to which it prevents or reduces systematic and random errors during design, implementation, and analysis. (7) Synthesis. Statistical analysis is performed on the coded literature data to synthesize the results. (8) Reporting findings. The report explains whether the conclusions can be applied to guide teaching practice and ultimately forms a paper.

Literature Screening Criteria

After determining the research theme of big data education evaluation, we constructed seven inclusion and exclusion criteria. Among them, criteria 1 to 4 are general screening criteria for systematic literature reviews. Criterion 5 screens for empirical research on big data education evaluation, excluding theoretical, review, and introductory articles. Criterion 6 excludes medicine and medical education studies. Because big data technologies were applied early in medical education, the number of studies on big data-driven medical education evaluation is very large, and a relatively mature research and application system with its own distinctive style has formed in the medical field. If research on medical education were included, it would be difficult to reflect the true status of big data technology application in other disciplinary fields and in basic education schools. Therefore, this study excluded research literature on big data-driven evaluation in medical education. Criterion 7 concerns detailed coding of the experimental design and specific process of the research on big data education evaluation (Table 1).

Inclusion/Exclusion Criteria.

Literature Retrieval Strategy

To obtain as many empirical studies on big data education evaluation as possible, this study conducted retrieval using the search string (big data OR artificial intelligence OR deep learning OR machine learning) AND (assessment OR evaluation) AND (education* OR coach* OR learn*) in five databases: Web of Science, SpringerLink, Wiley Online Library, Taylor & Francis Online, and ScienceDirect. These databases are commonly used in educational research and are an important source of literature in the social sciences. They support full-text retrieval and open-access literature, and include a large amount of empirical and quantitative research (Kitchenham et al., 2009).
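As a minimal sketch of how such a boolean search string can be assembled reproducibly, the following Python snippet joins keyword groups with OR and AND. The helper name `build_query` and the variable names are hypothetical and not part of the study; the term lists mirror the reported string (taking the second technology term to be artificial intelligence), and individual databases may require their own field tags or wildcard syntax.

```python
# Hypothetical helper (not from the study): assembles a boolean search string.
def build_query(groups):
    """OR the terms within each group, then AND the groups together."""
    clauses = ["(" + " OR ".join(terms) + ")" for terms in groups]
    return " AND ".join(clauses)


# Keyword groups mirroring the search string reported in the text.
TECHNOLOGY = ["big data", "artificial intelligence", "deep learning", "machine learning"]
ACTIVITY = ["assessment", "evaluation"]
CONTEXT = ["education*", "coach*", "learn*"]  # trailing * is a truncation wildcard

QUERY = build_query([TECHNOLOGY, ACTIVITY, CONTEXT])
print(QUERY)
```

Keeping the term groups as separate lists makes it straightforward to document or adjust one group without retyping the whole string for each database.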

Literature Screening Process

The search showed that there was very little literature related to big data education evaluation before 2010. Accordingly, this study limited literature retrieval to the period from 2010 to 2022. At the same time, the results were restricted to papers, the WOS Core Collection, the education and educational research category, and the English language. On this basis, this study initially retrieved a total of 5,729 articles. According to the PRISMA method, the literature screening process requires a clear presentation of the process and reasons for identifying, screening, including, or excluding literature to ensure the accuracy of systematic literature reviews or meta-analysis reports (Liberati et al., 2009). As shown in Figure 1, after deleting 894 duplicate articles, 4,835 articles remained. Subsequently, we conducted two rounds of screening by reading the title, abstract, and full text of the literature.

PRISMA diagram of literature selection.
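The counts reported in this section can be checked with simple bookkeeping. The sketch below is only an illustration: the function name `remaining` is hypothetical, the input figures are the ones reported in this section, and the number excluded across the two screening rounds is derived here rather than stated in the text.

```python
def remaining(records, removed):
    """Return how many records are left after a removal step."""
    if not 0 <= removed <= records:
        raise ValueError("removed must be between 0 and records")
    return records - removed


identified = 5729  # initial retrieval, as reported in the text
duplicates = 894   # duplicate articles removed, as reported in the text
included = 44      # final eligible articles, as reported in the text

screened = remaining(identified, duplicates)
# Derived figure (not reported in the text): records excluded over both screening rounds.
excluded_in_screening = remaining(screened, included)

print(screened)               # 4835, matching the text
print(excluded_in_screening)  # 4791
```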

Ultimately, this study obtained 44 eligible articles, including 10 from Web of Science, 20 from SpringerLink, 4 from Wiley Online Library, 3 from Taylor & Francis Online, and 7 from ScienceDirect. Moreover, 43 articles belong to the SSCI index, 1 article belongs to the SCI index, and 21 articles belong to both the SSCI and SCI indexes. For the 44 selected articles, this study conducted coding analysis along dimensions such as year, author, unit, country, journal, algorithm model, data modality, discipline field, evaluation objectives, and evaluation subject. A professor and two graduate students independently coded the 44 articles and resolved differences through discussion. Finally, the coding consistency was calculated, yielding a Cronbach’s α of 0.898. According to Cohen (1992), the internal consistency of the coding results was very high, so the coded data were suitable for further analysis. In the extraction and synthesis of the coded literature data, Excel was used for statistical analysis, and VOSviewer was used for keyword co-occurrence analysis.
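For readers unfamiliar with the consistency statistic, the following is a minimal sketch of Cronbach’s α, computed as k/(k−1) × (1 − Σ item variances / variance of totals), where each coder is treated as one “item”. It assumes the codes are expressed numerically; the function name `cronbach_alpha` and the toy ratings are hypothetical and are not the study’s data, so the sketch does not reproduce the reported value of 0.898.

```python
from statistics import variance


def cronbach_alpha(ratings):
    """Cronbach's alpha for a list of per-coder score lists.

    ratings[i][j] is the numeric code coder i assigned to article j.
    """
    k = len(ratings)
    if k < 2:
        raise ValueError("at least two coders are required")
    n = len(ratings[0])
    if any(len(r) != n for r in ratings):
        raise ValueError("every coder must rate the same articles")
    item_var_sum = sum(variance(r) for r in ratings)   # sample variance per coder
    totals = [sum(column) for column in zip(*ratings)]  # summed score per article
    return k / (k - 1) * (1 - item_var_sum / variance(totals))


# Hypothetical toy data: three coders, five articles, codes on a 1-4 scale.
coder_a = [1, 2, 3, 4, 2]
coder_b = [1, 2, 3, 4, 3]
coder_c = [2, 2, 3, 4, 2]
alpha = cronbach_alpha([coder_a, coder_b, coder_c])
print(round(alpha, 3))
```

When all coders agree perfectly, the statistic equals 1; disagreement lowers it. For purely categorical codes, an agreement statistic such as Cohen’s or Fleiss’ kappa may be a more natural choice.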

Results

Basic Overview of Research on Big Data-Driven Education Evaluation

Publication Trends and Journal Distribution

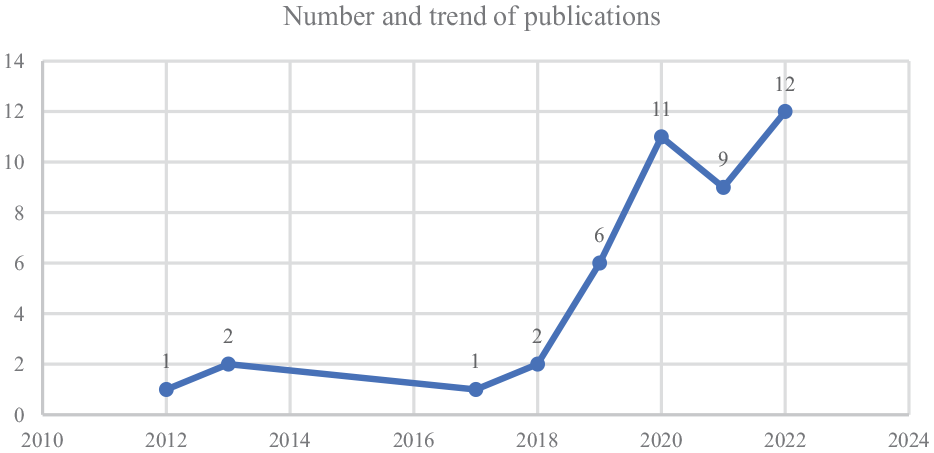

In terms of publication trends, the overall volume of empirical research on big data education evaluation has increased year by year over the past decade. Related research has surged since 2019 in particular (Figure 2), indicating that using educational big data to serve education evaluation has become a hot topic in the academic community. Research has also pointed out that, with the rapid development of machine learning and deep learning, promoting innovation in educational evaluation practice through intelligent technologies will become an important topic in educational research (Zhai, 2021). Moreover, the advantages of machine learning in predicting and evaluating complex cognitive structures make it well suited to the requirements of cultivating core disciplinary competencies.

Publication Trends from 2011 to 2022.

In terms of journal distribution, research on big data-driven education evaluation is widely dispersed, with the 44 articles published in 27 different journals. Educational research journals account for the largest number, with 36 articles (81.8%), followed by computer science journals with 5 articles (11.4%). In addition, one article each was published in educational research science journals, physics journals, and communication journals. As Figure 3 shows, the three journals with the most publications are Journal of Science Education and Technology (

Contributions of published journals.

Institutional Network and Regional Distribution

After analyzing the lead authors and institutions of the 44 articles, we found that the authors came from different institutions in 15 countries. By region, researchers from North America account for 36.4% (

Contributions of countries/regions.

It is worth noting that key research teams have gradually formed in this field, such as the Institute for Artificial Intelligence at the University of Georgia, Athens, Georgia, USA, which has the highest number of publications (

Contribution of the author(s)/institutions.

Practical Characteristics of Big Data-Driven Education Evaluation Research

Algorithm Model

The AI algorithm model is the internal logic that supports intelligent evaluation. Algorithm models mainly model text, audio, image, and physiological signal data through regression, decomposition, clustering, integration, prediction, and training methods, thereby helping to achieve more objective, scientific, and systematic evaluation (Baradaran & Ghorbani, 2020). A review of the included literature shows that naive Bayes (NB), linear regression (LinR), support vector machine (SVM), decision tree (DT), gradient boosting (GB), k-nearest neighbor (k-NN), random forest, GBDT, and other algorithms are commonly used. Alsariera et al. (2022) analyzed 39 studies published between 2015 and 2021 on different machine learning algorithms for predicting student performance and found that decision tree (DT), artificial neural network (ANN), support vector machine (SVM), k-nearest neighbor (k-NN), linear regression (LinR), and naive Bayes (NB) models are widely used in identifying and improving various aspects of academic performance. Moreover, different algorithm models have distinct advantages for different data types. For example, logistic regression can be used to solve binary probability problems; decision trees classify or regress a dataset by repeatedly splitting it according to decision rules; neural networks, which simulate the computation of neurons in the human brain, have significant advantages in image recognition; and convolutional neural networks can process data with grid-like structures, such as images and audio (Zhai et al., 2021b).
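To make the remark about logistic regression and binary probability problems concrete, the sketch below fits a one-feature logistic regression by batch gradient descent in plain Python. Everything in it is an illustrative assumption: the function names, the learning rate and epoch count, and the toy data (hours studied versus pass/fail) are hypothetical and are not drawn from any of the reviewed studies, which typically rely on established libraries.

```python
import math


def sigmoid(z):
    """Map a real-valued score to a probability in (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def fit_logistic(xs, ys, lr=0.1, epochs=500):
    """Fit p(y=1 | x) = sigmoid(w * (x - mean) + b) by batch gradient descent."""
    n = len(xs)
    mean = sum(xs) / n
    centered = [x - mean for x in xs]  # centering keeps the updates well conditioned
    w, b = 0.0, 0.0
    for _ in range(epochs):
        errors = [sigmoid(w * c + b) - y for c, y in zip(centered, ys)]
        w -= lr * sum(e * c for e, c in zip(errors, centered)) / n
        b -= lr * sum(errors) / n
    return w, b, mean


def predict_proba(x, model):
    """Predicted probability of the positive class for a single input."""
    w, b, mean = model
    return sigmoid(w * (x - mean) + b)


# Hypothetical toy data: hours studied and whether the student passed (1) or not (0).
hours = [1, 2, 3, 4, 5, 6, 7, 8]
passed = [0, 0, 0, 0, 1, 1, 1, 1]

model = fit_logistic(hours, passed)
print(predict_proba(2, model))  # below 0.5: predicted not to pass
print(predict_proba(7, model))  # above 0.5: predicted to pass
```

The output is a probability rather than a hard label, which is why the method suits binary prediction tasks such as pass/fail or at-risk/not-at-risk classification.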

Data Modality

The development of big data technologies means that classroom data are no longer limited to visible, explicit behavioral data; more multimodal data on thinking, physiology, and psychology are now captured in real time (L. L. Zhang & Gu, 2022). For example, researchers collect behavioral data such as students’ head, face, and body postures through video, use algorithmic models to identify and analyze students’ homework text data, and track students’ psychological characteristics with intelligent perception devices (eye trackers, brainwave monitors, smart glasses, etc.). This study classified the data modalities in the 44 studies into four categories: text modality, visual modality, speech modality, and physiological signal modality. Among them, text modality (online text and log data) accounted for 84.1% (

Secondly, visual modality data sources account for 9.1% (

Thirdly, the data sources of physiological signal modalities account for 4.5% (

Fourth, this study included one teaching evaluation study based on speech modality data. Shuo and Ming (2022) constructed an intelligent music teaching system based on machine learning and support vector machine algorithms, which classifies extracted audio features to innovate the evaluation practice of music teaching (Figure 6).

Distribution of data modality.

Evaluation Subject

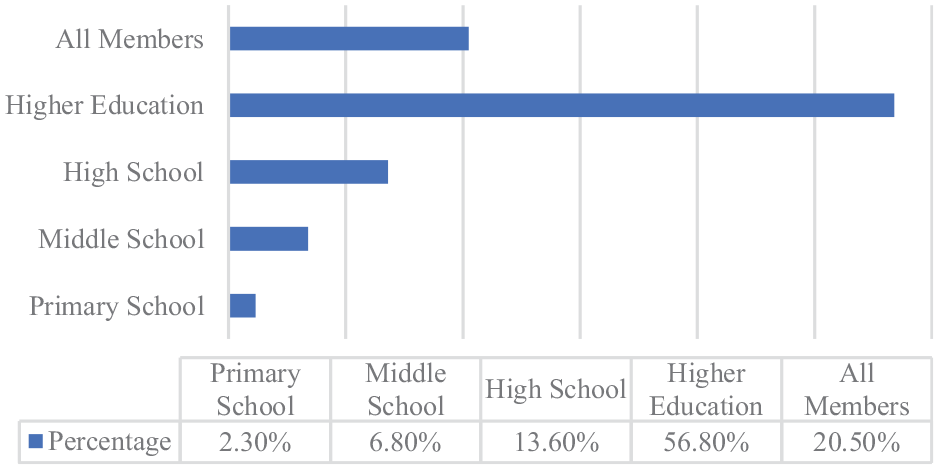

As for evaluation subject, in the past decade, empirical research on education evaluation based on big data has mostly focused on higher education students (pre-service teachers), accounting for 56.8% (

Distribution of evaluation subject.

Discipline Field

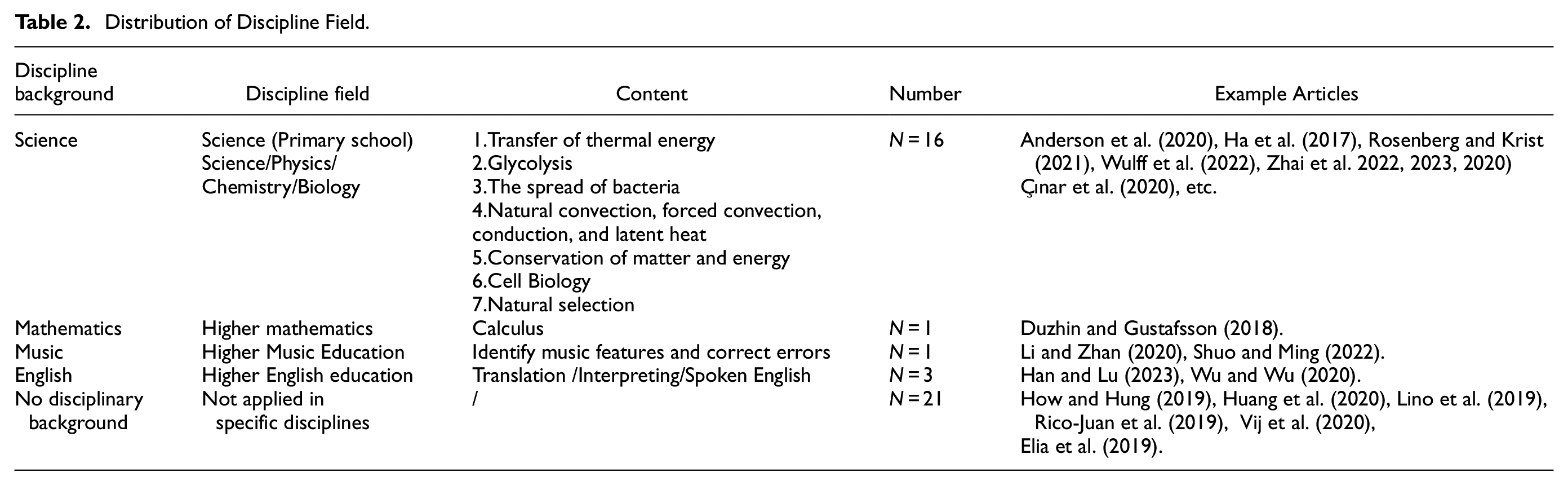

Research with a disciplinary background accounted for 52.3% (

Distribution of Discipline Field.

Evaluation Objectives

The evaluation objective sets the direction of big data education evaluation. After classifying and coding the 44 included empirical studies, we found that the application objectives can be divided mainly into five categories: higher-order thinking analysis, learning performance prediction, learning emotion recognition, teaching management decision-making, and evaluation mode optimization (Table 3). Among them, evaluation research targeting higher-order thinking analysis accounted for the largest proportion (

Distribution of Evaluation Objectives.

One of the most direct and basic purposes of education evaluation based on big data is to predict learners’ learning performance, freeing evaluators from a great deal of repetitive grading work. The proportion of evaluation research aimed at predicting learning performance is also relatively high (

In addition, data-driven teaching evaluation is also widely used in diagnosing learners’ emotions (

Meanwhile, research on optimizing teaching management decision-making and evaluation models accounted for 15.9% (

The Relationship of Data Modality, Evaluation Subject, Discipline Field, and Evaluation Objectives

According to Figure 8, regardless of the evaluation objective, text modality is the most widely used data collection method, especially for higher-order thinking analysis. Researchers often deconstruct learners’ higher-order thinking abilities through data mining and algorithmic modeling of students’ paper-based or online answer texts. For instance, Angeli and Valanides (2013) used educational data mining (EDM) methods to examine how Field-Dependent (FD) and Field-Independent (FI) learners actually interact with Model It (a computer modeling tool) to solve problems. Research on learning emotion recognition mainly collects physiological signal data such as students’ EEG, skin conductance, heartbeat, respiration, and eye movement. For example, Ninaus et al. (2019) applied machine learning methods to facial emotion detection data to evaluate the emotional engagement of adult participants who completed either game-based digital tasks or equivalent non-game-based tasks. The results indicate that emotional engagement in games contributes to students’ learning development.

The relationship of data modality and evaluation objectives.

The objectives of big data-driven education evaluation research also differ across groups of evaluation subjects. As shown in Figure 9, regardless of the evaluation objective, college and graduate students are the most studied group. Researchers use machine learning and data mining to evaluate their higher-order thinking, learning emotions, and learning performance, and to support talent selection and evaluation optimization. However, there is currently limited research on the application of big data technology in teaching evaluation among primary and secondary school students, especially primary school students. One example is Zhai (2021), who evaluated the scientific reasoning ability of fifth-grade students and found that they may differ in the specific cognitive skills required to complete an argument.

The relationship of evaluation subject and evaluation objectives.

The objectives of using big data technology for teaching evaluation vary among disciplines. As shown in Figure 10, the science discipline mainly applies big data technology to higher-order thinking analysis, learning prediction, and evaluation mode optimization. The English and music disciplines focus more on using big data technology to support talent selection and evaluation activities. In addition, talent recruitment analysis, graduate employment rate analysis, and campus resource utilization analysis are also major goals for researchers and practitioners who use big data technology for educational evaluation.

The relationship of discipline field and evaluation objectives.

Application Landscape of Big Data-Driven Education Evaluation Research

Theme 1: Behavioral Evaluation of Students’ Participation in Online Learning Environment

Driven by the wave of lifelong learning in the Fourth Industrial Revolution, remote online education plays an important role in the modern education system. In particular, the outbreak of COVID-19 at the end of 2019 led to a sharp increase in online learning behavior (OLB); the demand for online learning became prominent, while shortcomings in its application were also exposed. As a result, using data technology to improve the effectiveness and experience of online learning has become a focus of researchers’ exploration (Figure 11). Tsimaras et al. (2022) analyzed an open-ended evaluation questionnaire completed by 1,890 Greek learners using data mining-based text analysis. The researchers introduced a new metric called “Acceptance Grade” to cluster words in the text into 7 g tokens, and evaluated the first 10 sentences in each category (positive and negative). Verification of the results by two experts and data triangulation showed that the accuracy of the evaluation was 80%. Meanwhile, M. Zhang and Jia (2022) used data mining techniques to propose an analytical model, a sequence mining model, and an interaction model for OLB, and constructed a psychological state perception model based on OLB analysis to characterize students’ online learning. They also used locus of control (LoC) motivational models to stimulate students’ online learning motivation. A comparison of the fit of the GBRT, AdaBoost Regression, Linear SVR, and Linear Regression algorithms, using MAE and MSE as indicators, indicates that the constructed model has a certain ability to predict the psychological state that students perceive in OLB.

Application landscape of empirical research on big data driven education evaluation.
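The MAE and MSE indicators mentioned above are standard regression error measures: the mean absolute error averages |y − ŷ|, and the mean squared error averages (y − ŷ)². The sketch below computes both in plain Python; the function names and the toy values are hypothetical and are not data from the cited study.

```python
def mean_absolute_error(y_true, y_pred):
    """Average absolute difference between observed and predicted values."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def mean_squared_error(y_true, y_pred):
    """Average squared difference; penalizes large errors more heavily than MAE."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


# Hypothetical toy values: observed scores and one model's predictions.
observed = [3, 5, 2]
predicted = [2, 5, 4]

mae = mean_absolute_error(observed, predicted)
mse = mean_squared_error(observed, predicted)
print(mae)  # 1.0
print(mse)
```

When several regressors are compared on the same held-out data, lower values of both indicators point to a better fit; reporting both helps distinguish models that make many small errors from those that make a few large ones.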

Theme 2: Process Assessment of Learning Motivation and Emotion

Evaluation methods that measure students’ learning effectiveness against a single performance standard have increasingly been questioned by educational researchers and practitioners. As a result, the collection and analysis of process data on teaching and learning behavior has gradually gained consensus, and students’ emotional and motivational traits and tendencies have become one of the research focuses. For example, Onan (2021), a researcher in Türkiye, used 66,000 records from a MOOC review corpus as samples to train an effective sentiment classification scheme with high predictive performance. After labeling the reviews in the corpus as positive or negative, the study compared the predictive performance of traditional supervised learning methods, ensemble learning methods, and deep learning methods. The empirical analysis showed that deep learning-based architectures outperform ensemble learning methods and supervised learning methods, and that a long short-term memory (LSTM) network combined with a GloVe word embedding representation achieves the highest predictive performance. The role of emotional analysis based on formative assessment in subject-themed content teaching is also becoming increasingly evident. Guo (2022) argued that process-based emotional analysis can enhance the recognition and perception of emotional words in English and American literature teaching. By comparing emotional tendency analysis methods based on emotion dictionaries with those based on machine learning, Guo proposed that an inheritance classification model combining English and American literature teaching with hidden Markov models is relatively reasonable for text analysis.

Theme 3: The Development and Optimization of Big Data Models in Disciplines

In response to the shortcomings of traditional evaluation methods in different disciplines, designing, developing, or optimizing big data algorithm models that fit the characteristics of each discipline has also become an important research direction. Machine learning and natural language processing provide science education researchers with means of comprehensive ability assessment. Wulff et al. (2022) used machine learning to balance the trade-off between qualitative and quantitative assessment in science education research. They constructed response items through clustering methods based on pre-trained language models to improve the evaluation of complex constructs such as the open-ended science writing of pre-service physics teachers. In the social sciences, Zhong (2022) proposed an improved lightweight convolutional neural network (CNN) model for evaluating teaching quality in traditional Chinese culture and in curriculum-based ideological and political education. The model mainly evaluates students’ recognition and acceptance of teachers’ teaching content and methods by identifying student facial expressions, and its reliability and accuracy have been confirmed through object testing.

Theme 4: Cognitive Diagnosis and Higher-Order Thinking Assessment

The evaluation of higher-order thinking skills may become the dominant direction now and in the future. For example, the team at the Institute for Artificial Intelligence at the University of Georgia in Athens, Georgia, USA is committed to exploring the challenges of cultivating and evaluating higher-order thinking in science education. They developed a scoring model for students’ scientific modeling, drawing, and written descriptions using machine learning algorithms. Through six modeling and evaluation tasks, they combined the core ideas and crosscutting concepts of the discipline with modeling practice, and had human experts and trained machine learning models score the responses in parallel. The study further revealed five characteristics that may affect the accuracy of machine scoring, namely surrogate representation, chaotic labels, varying sizes, positions, and information redundancy (Zhai et al., 2022). The team has also paid attention to the potential of machine learning in evaluating students’ scientific reasoning ability. They developed machine learning algorithms to automatically grade students’ written arguments and applied cognitive diagnostic methods to test students’ cognitive models of scientific reasoning. The results confirmed the advantages of machine learning and cognitive diagnosis in evaluating students’ fine-grained cognition in scientific argumentation and other scientific practices (Zhai et al., 2023).

Theme 5: Design of a Learning Analysis Framework Based on Data Mining

Establishing a machine learning analysis framework can deconstruct the complex structure of large-scale, comprehensive, and long-term learning data, promoting the effective implementation of education evaluation based on data mining. For example, Zhai et al. (2020) developed an analytical framework to conceptualize the evolution of machine learning in science assessment. After comparing machine learning-based methods with traditional science assessment, they extracted 12 keyword features and mapped them into a three-dimensional framework comprising construction, functionality, and automation. Cluster analysis was then conducted on these three variables to identify four types of evaluation. L. Zhang (2021) proposed a machine learning-based English education evaluation system that is divided into four stages: environment, knowledge base, learning process, and implementation process. The entire machine learning system is divided from top to bottom into a user layer, a business layer, and a data layer to support the development of English teaching evaluation.

Discussion and Future Direction

This study provides a systematic review of the basic overview, practical characteristics, and application prospects of empirical research on big data education evaluation in the past decade. Below, the results of the systematic review and the future trends and directions they suggest are discussed.

Firstly, the application of big data technology to serve teaching evaluation in different disciplines has gradually become a trend. The research results indicated that more than half of the research on big data education evaluation has a disciplinary background. In the era of intelligence, the use of big data technology for deep mining and scientific analysis of subject data can transform subject evaluation from evaluation based on small samples or incomplete information into diversified scientific evaluation based on overall information. The evaluation of high-order thinking, such as scientific argumentation, scientific reasoning, and scientific modeling, through machine learning has been recognized and advocated by science education researchers. Similarly, English education researchers have emphasized the application of intelligent technologies such as machine learning to improve students’ learning of grammar, length, expression, and structure, and to address issues such as students’ pre-class preview efficiency, autonomous learning motivation, homework completion quality, and post-class reflective behavior. Moreover, utilizing the key information in disciplinary attribute data can target and help overcome the inherent challenges in disciplinary evaluation. Machine learning association rule methods can also explore the internal connections hidden between disciplinary domains, which is of great significance for collaborative innovation in interdisciplinary evaluation. Big data education evaluation technology has greatly reduced the inefficient and repetitive labor in traditional subject teaching, significantly improved teaching efficiency and quality, and promoted the transformation of teachers’ teaching thinking from traditional to modern education methods and from group-based to personalized education methods.
Supporting student evaluation with data, driving curriculum optimization with evaluation, and reinforcing student growth through curriculum optimization can help students truly understand themselves and clarify their strengths and weaknesses, enable every student to find a suitable path of growth, achieve a win-win situation between student development and school teaching management decision-making, and leverage the dual functions of comprehensive quality evaluation and education (Kong, 2021; Lin & Hou, 2024; Shin et al., 2022). Therefore, future work should continue to deepen the integration of intelligent technology into subject evaluation, so that data-driven precision can help overcome the inherent challenges of subject evaluation.

Secondly, the use of machine learning technology in K-12 classrooms for educational evaluation needs to be taken seriously. The results of this study indicated that current research on big data-driven education evaluation focuses more on students in higher education and less on those in the K-12 stage. In recent years, however, big data education evaluation in K-12 classrooms has gradually received attention. The K-12 stage, as the foundational stage for cultivating innovative talents in the new era, is also a crucial and decisive link in talent cultivation. The form and quality of classroom teaching evaluation in the K-12 stage directly determine the standards for talent cultivation. Under core literacy-oriented curriculum goals, the drawbacks of traditional evaluation are increasingly prominent. The deep integration of increasingly mature AI technology and data-driven, evidence-based practice with classroom teaching and evaluation is expected to solve the problems of classroom teaching and evaluation in primary and secondary schools. Researchers are also paying more attention to, and calling for, intelligent evaluation practices in primary and secondary school classrooms. Data-intensive educational evaluation also has important value for improving outcome evaluation, strengthening process evaluation, exploring value-added evaluation through multiple channels, and improving comprehensive evaluation through the integration of multiple technologies.

For example, a big data artificial intelligence system can cover the entire process before, during, and after class for K-12 students, collecting multimodal data on student learning processes, conducting correlation analysis between data, and examining the factors that influence student grades, in order to interact and provide feedback anytime, anywhere. Before class, the intelligent system marks the objective questions on the learning preview task list, while the teacher marks the subjective questions. The system then automatically generates an analysis report on the students’ knowledge mastery. In class, the teacher provides targeted explanations based on the preview report and selects the teaching tools required for the teaching activities. After class, the system analyzes the data generated during the homework process along multiple dimensions to help teachers identify and fill gaps in subsequent teaching and optimize instruction. In addition, students receive a “study report” that gives them a clearer understanding of their learning at each phase and facilitates timely adjustments (Guleria & Sood, 2023; Okoye et al., 2022). Therefore, future research needs to continue to promote the application and extension of data-intensive education evaluation in primary and secondary school classrooms, in order to effectively empower key links in talent cultivation.

Thirdly, the advantages of data-driven educational evaluation technology in higher-order thinking and emotion recognition cannot be ignored. The results of this study also indicate that the evaluation goals of big data education evaluation research mainly focus on higher-order thinking analysis, learning performance prediction, and learning emotion recognition. Traditional assessment methods are severely constrained in measuring abilities such as thinking and emotions, but the establishment of curriculum objectives for core subject competencies has moved thinking and emotions to the center of teaching and evaluation (Nehm et al., 2012). The application of machine learning in the evaluation of higher-order thinking in science education provides opportunities to measure and understand students’ various cognitive expressions. Machine learning-based scientific evaluation meets the evaluation criteria for complex and diverse cognitive structures, and can better meet the requirements of the three-dimensional science learning objectives in the Next Generation Science Standards. Machine learning methods also provide a better way to explain, observe, and infer “evidence” of students’ scientific thinking, which can support real-time and complex teaching decisions. Therefore, future research needs to actively leverage the advantages of intelligent assessment in higher-order thinking and emotion recognition, and implement the goal of cultivating core competencies in the discipline. At the same time, it is also necessary to pay attention to the effectiveness, fairness, and educational implications of evaluation, in order to better serve the teaching objectives of the subject.

Fourthly, research also needs to continuously improve the fit between intelligent technologies such as machine learning and teaching content, and to promote the co-evolution of algorithm innovation and teaching needs, in order to adapt to the attribute characteristics and dynamic changes of classroom teaching. Research has summarized six key issues in the application of intelligent technologies such as machine learning in subject evaluation: first, potential confounding factors arising from the interference of multiple variables in teaching; second, inconsistency between the interpretation and use of scores and teaching objectives; third, inconsistency between the interpretation of scores and actual learning quality; fourth, inconsistency between machine scoring and metrics; fifth, the limited generalization ability of machine algorithm models; and sixth, the limited extrapolation ability of machine algorithm models (Zhai et al., 2021).

Enhancing the transparency and interpretability of algorithms is an important issue for technological breakthroughs. Explanatory techniques mainly refer to local sensitivity analysis, impact measurement, and techniques used to detect the importance of input features. For example, when constructing a model, features with clear meanings can be selected, chaotic or irrelevant features should be avoided as far as possible, and models that are easy to understand and interpret (such as decision trees and logistic regression) should be preferred (Duchatelet & Donche, 2022; Lino et al., 2019; Oztekin et al., 2013). Therefore, while promoting innovative design of algorithm models, technology researchers and teaching practitioners should also take into account the inherent characteristics and laws of teaching design to promote the collaborative evolution of evaluation technology and teaching processes. In the process of technological change, it is necessary to continuously strengthen the effective “communication” between intelligent technology and teaching processes and, through the training and feedback of algorithm models, bring them more in line with the characteristics and needs of subject teaching.
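As a rough illustration of local sensitivity analysis on an interpretable model, the sketch below perturbs each input feature of a small logistic regression model and estimates how much the predicted probability changes for one student. Everything specific in it is assumed for the example: the feature names, the weights, the bias, and the function names `predict_probability` and `local_sensitivity` are hypothetical and are not taken from any of the reviewed studies.

```python
import math


def predict_probability(weights, bias, features):
    """Logistic regression: probability of the positive outcome (e.g. passing)."""
    z = bias + sum(weights[name] * value for name, value in features.items())
    return 1.0 / (1.0 + math.exp(-z))


def local_sensitivity(weights, bias, features, step=1e-4):
    """Central-difference estimate of d(probability)/d(feature) for one student."""
    sensitivities = {}
    for name in features:
        raised = dict(features)
        lowered = dict(features)
        raised[name] += step
        lowered[name] -= step
        difference = (
            predict_probability(weights, bias, raised)
            - predict_probability(weights, bias, lowered)
        )
        sensitivities[name] = difference / (2 * step)
    return sensitivities


# Hypothetical model and student record, for illustration only.
weights = {"homework_completion": 1.2, "forum_posts": 0.3, "late_submissions": -0.8}
bias = -0.5
student = {"homework_completion": 0.9, "forum_posts": 2.0, "late_submissions": 1.0}

scores = local_sensitivity(weights, bias, student)
# List features from most to least influential for this student.
for name, value in sorted(scores.items(), key=lambda item: -abs(item[1])):
    print(f"{name}: {value:+.4f}")
```

For a logistic model the sensitivity of each feature equals its weight multiplied by p(1 - p), so the finite-difference estimate can be checked against a closed form; for less transparent models, the same perturbation procedure still applies even when no such formula is available.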

Finally, research on big data education evaluation still needs to pay attention to data privacy and algorithm risks, and to continuously improve the ethical and security standards for big data education evaluation. Evaluation activities supported by intelligent technology raise security, privacy, and ethical issues. Given the inherent logic and nature of intelligent technology, the “black box” problem of score allocation in the evaluation process and the uncertainty in calculation and prediction are risks that call for vigilance and safeguards. At the same time, the privacy protection problems raised by multimodal data have been overlooked. Therefore, relevant ethical rules and application standards for big data education evaluation should be formulated and clarified, creating a favorable development environment for big data-driven education and teaching evaluation.

Limitations

As a systematic literature review, this study has limitations in three respects. Firstly, although we have tried our best to collect literature on big data education evaluation, we cannot guarantee that all studies on this topic were covered; some unpublished literature was not included. Secondly, this study limited the language to English, so studies in other languages may not be fully reflected. Thirdly, publication bias may also affect the results of this study: journals generally prefer to publish positive and effective findings, while negative and ineffective findings are harder to get published.

Conclusion

This study adopted a systematic literature review method to review and sort out 44 empirical studies in the field of education evaluation driven by big data in the past decade. Empirical research on big data education evaluation was analyzed from three aspects: research overview, practical models, and application prospects. Firstly, from the perspective of research overview, empirical research on big data education evaluation has shown an overall upward trend in the past decade. Research has been on the rise since 2019, which indicates that empowering educational evaluation with emerging intelligent technologies such as machine learning, deep learning, and data mining has become a global consensus. The relevant research is mainly published in journals related to education and educational research, with journals related to educational technology, science education, and language teaching having the largest number of articles. The distribution of authors and institutions shows that researchers from North America have made the greatest contribution in this field, with American scholars accounting for the highest proportion of research, indicating that research on big data education evaluation is still mainly concentrated among American scholars.

Secondly, from a practical perspective, naive Bayes (NB), linear regression (LinR), support vector machine (SVM), and decision tree (DT) are common algorithm models in big data education evaluation research, and different algorithm models exhibit distinct advantages for different data features and evaluation needs. Currently, the main data sources for big data education evaluation are paper-based or online textual data, while visual and voice data are also used, but relatively rarely. The evaluation subjects are mainly higher education students or members of society in general, and the application of evaluation among primary and secondary school students is relatively limited, which may be related to the difficulty of, and restrictions on, application in primary and secondary school classrooms. Nearly half of the studies have a disciplinary background, and nearly half do not. Among the studies with a disciplinary background, those related to scientific disciplines (physics/chemistry/biology) account for the highest proportion. The evaluation objectives of empirical research on big data education evaluation mainly focus on five aspects: high-order thinking analysis, learning performance prediction, learning emotion recognition, teaching management decision-making, and evaluation mode optimization. Among them, research targeting high-order thinking analysis accounts for the largest proportion, and relevant research has also demonstrated the potential and value of machine learning-based intelligent evaluation technology in evaluating high-order thinking skills with complex structures. Next, predicting learners’ grades by training scoring models to achieve automated evaluation is also one of the main goals of application, which is of great significance in reducing repetitive scoring work for teachers and improving the objectivity and accuracy of scoring.
In addition, the application of data-driven educational evaluation in emotion recognition and auxiliary diagnosis is also one of the main evaluation objectives. Mining data on emotional valence can help identify prominent factors that affect learning. Finally, although the proportion of research aimed at optimizing teaching management decision-making and evaluation models is relatively small, its role in talent selection and identification, as well as in optimizing teaching evaluation practice, should not be underestimated.

Thirdly, from the perspective of application landscape, the research fields and scenarios of international big data education evaluation mainly focus on five aspects: online learning behavior and environmental participation evaluation, process evaluation of learning motivation and emotional analysis, development and optimization of subject domain big data models, cognitive diagnosis and high-order thinking skills evaluation, and design of learning analysis frameworks based on data mining. The integration of multimodal data-driven evaluation forms into classroom and extracurricular educational activities is expected to reshape the morphological structure of educational evaluation and break through the deep-seated challenges of traditional educational evaluation.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Collaborative Evaluation Mechanism of Labor Education in Heilongjiang Universities under the Background of Artificial Intelligence in the Philosophy and Social Sciences Research Project of Heilongjiang Province of P.R. China (21EDC193); Research and Practice on the Continuous Improvement Mechanism of Teacher Training Quality in Higher Education Institutions in Heilongjiang Province of P.R. China (SJGY20220361); Design and Implementation of Embodied Teaching Mode for Class Management from the Perspective of Course Ideology and Politics of P.R. China (SJGY20210461).

Data Availability Statement

Data sharing not applicable to this article as no datasets were generated or analyzed during the current study.