Abstract

Automated Writing Evaluation (AWE) software has been viewed as a promising tool for assisting writing. This study integrated AWE and its combination with peer review discussion into writing practice in a large college writing class in the Asian context. Adopting a mixed-method approach, this study employed a quantitative questionnaire to investigate how students perceived the integration at different writing stages and a qualitative interview to examine what prompted their quantitative decisions. The results show that the integration of AWE and peer review feedback significantly reinforced the perceived usefulness of AWE at the revision stage, and students with different proficiency levels had notably different attitudes toward AWE. Their writing anxieties intensified as they became increasingly aware of their own weaknesses during the writing process. Therefore, student writing challenges involve not only language proficiency and writing skills but also psychological variables.

Introduction

Academic writing is a major teaching challenge faced by language instructors (Almubark, 2016). It requires more than linguistic basics; it encompasses considerations of style, flow, and organization, as well as demonstrations of skills such as critical thinking, persuasive power, and informational synthesis. In addition, teaching academic writing involves not only delivering content but also guiding students through the entire writing process, from brainstorming outlines to reaching final drafts. At every step of this process, providing regular and ample feedback is instrumental in scaffolding writing instruction (Bitchener & Ferris, 2012; Ferris, 2003; Hu, 2007; Lai & Tu, 2020). Feedback ranges from higher-order concerns, such as writing logic and argument development, to lower-order concerns, such as grammar, spelling, punctuation, or conciseness. Owing to this wide range, feedback provision is effort-intensive and time-consuming. Compounding this already daunting task, writing classes have been growing in size, and larger classes bring heavier workloads for writing instructors. In one study, a single writing instructor in a university English class in Hong Kong was expected to mark 1,128 written papers by 250 students within a 13-week semester (Tso & Ho, 2016). Without pedagogical support, the feedback quality of instructors in such settings is likely to deteriorate.

To extend such support, Automated Writing Evaluation (AWE) has received considerable attention. AWE software provides computer-generated feedback on the quality of a submitted text and generates automated scores via artificial intelligence, natural language processing, and latent semantic analysis (Dikli, 2006; Philips, 2007; Shermis & Burstein, 2003). Moreover, AWE software provides written feedback in the form of comments and/or corrections. Hence, all these features considered, the successful integration of AWE software may alleviate the feedback burden of writing instructors, redirecting their effort into teaching and maintaining teacher-student interaction in large class settings.

Research has been conducted to assess the potential of AWE application as a supplementary writing tool (e.g., Burstein et al., 2004; Chodorow et al., 2010) and one recent meta-analysis examines how AWE application improves L2 writing skills (Mohsen, 2022). Despite their promising results, it remains under-explored how an AWE system can be effectively incorporated into the writing classroom in tertiary education in the Asian context and in particular how AWE application helps learners with different proficiency levels address both higher-order and lower-order concerns.

Literature Review

Automated Writing Evaluation

AWE systems have been employed since the 1960s in the US to help score student essays (Page, 2003). In addition to this original assessment purpose, AWE is currently being used in high-stakes tests, such as the Test of English as a Foreign Language and the Graduate Management Admissions Test (Stevenson, 2016). AWE software, such as

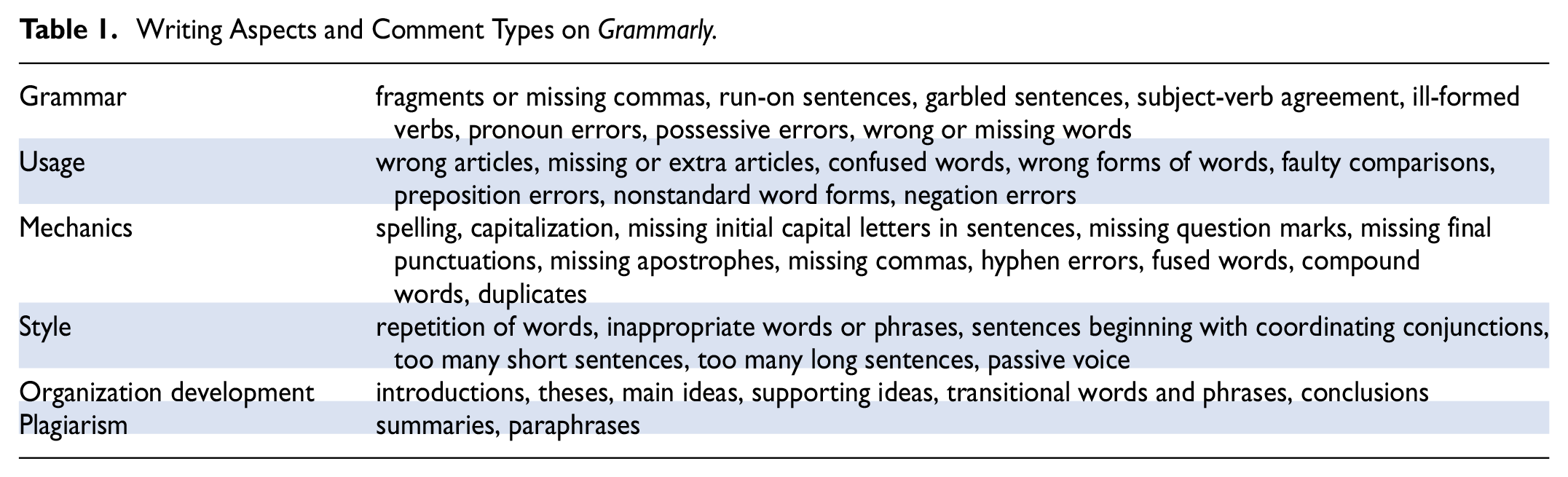

Writing Aspects and Comment Types on

AWE Classroom Applications

AWE has been explored at different levels of explicitness and depth regarding its roles in writing practice, feedback provision and classroom instruction. AWE integration into EFL and EAP writing practice has been documented in several studies (Chen & Cheng, 2008; Grimes & Warschauer, 2010). In one case, students were required to keep submitting and revising their papers through AWE software until they reached a threshold score set by the teachers, after which they would submit their papers to their instructors for feedback (Chen & Cheng, 2008). In another, students received feedback from both AWE and teachers simultaneously through the AWE system (Grimes & Warschauer, 2010); teachers would review and then modify AWE feedback. In these studies, AWE feedback was considered useful but required augmentation with human feedback, a point stressed in several studies (Bai & Hu, 2017; Miranty & Widiati, 2021; O’Neill & Russell, 2019a).

In AWE-EFL/EAP integration, assistance in feedback provision is a key component. Among different explorations of AWE as a means of feedback provision, Chen and Cheng (2008) examined AWE integration and perceived usefulness in three different classes. They found that the integration was multifold (e.g., AWE as an instructional tool during drafting and revising or throughout the entire writing process; AWE coupled with both teacher and peer feedback in later phases; AWE coupled only with teacher feedback) and that students adopted a positive attitude toward AWE when it was used in the initial stages and in combination with both peer and teacher feedback.

To scaffold students’ use of AWE, others have focused on how AWE can be employed or applied as part of classroom instruction. In these discussions, teachers tried to compensate for AWE’s limitations. In Ghufron and Rosyida’s (2018) quasi-experimental study of 40 EFL Indonesian students,

AWE Potential and Limitations

Potential

AWE assists students in assessing and enhancing writing quality, as evidenced in several studies (Bai & Hu, 2017; Cavaleri & Dianati, 2016; Chen & Cheng, 2008; Darayani et al., 2018; Miranty & Widiati, 2021; O’Neill & Russell, 2019a; Wang et al., 2013; Zhang & Hyland, 2018), as it provides timely, form-focused, and corrective feedback, such as grammatical accuracy, clarity, and mechanics. In addition to this perceived usefulness, AWE has been shown to strengthen writing motivation and facilitate autonomous learning (e.g., Liao, 2015; Miranty & Widiati, 2021; Nova, 2018; Parra G & Calero S, 2019) in that the quantitative and qualitative feedback enables students to “witness” their learning growth, a form of cognizance considered crucial for being an “autonomous learner” in Ghufron and Rosyida (2018, p. 128). Furthermore, AWE encourages self-reflection when students self-revise, and as a consequence of no involvement of others, students free themselves from judgment, hence decreasing writing anxiety (Dodgson et al., 2016).

Limitations

The adoption of AWE into classroom settings is restricted due to uncertainties over accuracy and usefulness. In terms of accuracy, research on AWE typically focuses on the methodological concerns of AWE developers, addressing only a few linguistic “micro-features” (e.g., Han et al., 2006) or preposition errors (e.g., Chodorow et al., 2010). These studies represent a “system-centric (i.e., focusing on the system performance) rather than a user-centric (i.e., focusing on the user’s interaction with the system) viewpoint” (Ranalli et al., 2017, p. 10). In system-centric studies, measurement focuses on

The uncertainty over usefulness is fourfold. First, AWE feedback is treated with ambivalence. In several studies, students had positive views of AWE feedback but preferred the feedback from their instructors (Dikli & Bleyle, 2014; Gao, 2021; Thi & Nikolov, 2022). Second, much AWE feedback goes unused, as indicated in research on revision behavior (e.g., Bai & Hu, 2017; Cavaleri & Dianati, 2016). In a study of

Given these limitations, much aforementioned AWE literature has suggested the incorporation of teacher language- and content-related feedback (Grimes & Warschauer, 2010). In addition, addressing higher-order concerns is effort-intensive and time-consuming; relying on only one approach may not effectively enhance meaning negotiation and lighten feedback burdens of writing instructors. Peer review may thus be put into play to consolidate AWE feedback.

Peer Review in Writing Practice: What & How

Peer review, an approach where students give one another constructive written or oral feedback in pairs or in groups, has been commonly implemented in writing classrooms (Alnasser, 2018; Anderson et al., 2020; Chaktsiris & Southworth, 2019; Cui et al., 2022; Hentasmaka & Cahyono, 2021; Kusumaningrum et al., 2019; Nusrat et al., 2019; Weissbach & Pflueger, 2018). The successful conduct of peer review requires careful designing (Cui et al., 2022; Hentasmaka & Cahyono, 2021; Kusumaningrum et al., 2019; Weissbach & Pflueger, 2018). To structure peer review sessions, approaches that provide specific guidance to students, such as worksheets with a set of guiding questions (Cui et al., 2022), benchmarks showing groups of writing features (Anderson et al., 2020) or grading rubrics in aid of assessment (Anderson et al., 2020; Jackson et al., 2018; Law & Baer, 2020; Mahmood & Jacobo, 2019; Nagoshi et al., 2019), are often adopted. Moreover, if students are well-trained, the quality of peer review feedback will improve (Jackson et al., 2018).

Potential

Peer review enhances the writing and learning performance of students. Peer feedback, comparable to teacher feedback, leads to writing improvements in higher education settings (Huisman et al., 2020), for structured sessions enable student writers to discern their individual strengths and weaknesses and evaluate their writing performance. Through the peer review process, student writers benefit from constructive criticism (Huisman et al., 2018; Weissbach & Pflueger, 2018), receive support that may reduce their writing anxiety, and develop into autonomous writers (Chaktsiris & Southworth, 2019). In addition, the use of sliding scale rubrics may offer less motivated students an incentive to continue learning tasks and seek help (Mahmood & Jacobo, 2019). With the help of explicit grading rubrics, students generally adopt positive attitudes toward notes anonymously graded by their peers (Nagoshi et al., 2019).

Limitations

Despite the abovementioned strengths, peer review as a sole form of feedback provision exhibits doubtful usefulness. Although Lundstrom and Baker (2009) shared with other studies their finding that feedback-givers gained more in writing performance, other studies found otherwise, as summarized in Yu and Lee (2016). Moreover, students were found to question the quality of peer feedback because of their presuppositions about the competence of their peers (Alnasser, 2018; Tsui & Ng, 2000) and demonstrate negative attitudes toward the review process (Mulder et al., 2014). Students also expressed preferences as to whether peer review should be conducted in groups or in pairs. In Mulder et al. (2014), students demonstrated diminishing expectations of peer review after being exposed to the process in courses taught in different disciplines, year levels and class sizes.

Purposes and Research Questions

The purpose of our study is to increase the compatibility of peer feedback with AWE; in particular, we assess the extent to which students change their attitudes when these two approaches are adopted. The research purpose (RP) is threefold: (1) to integrate AWE software into writing practice to explore its role during pre-writing, writing, and self-editing in post-writing in a large writing class; (2) to consolidate AWE software with peer review during post-writing; and (3) to investigate the perceived usefulness of different AWE integrations at four different writing stages through a mixed-methods approach. To this end, our study answers two research questions (RQs):

RQ1: How do L2 student writers perceive the integration of AWE software during pre-writing, writing, and self-editing in post-writing?

RQ2: How do L2 student writers perceive the combination of AWE software and peer review in writing practice and instruction?

Methodology

Research Design

The combination of methodological approaches is justified by the pragmatic and balanced approach, which aims to “improve communication among researchers from different paradigms as they attempt to advance knowledge” and “offer the best opportunities for answering important research questions” (Johnson & Onwuegbuzie, 2004, p. 16). It is argued that the mixed-methods design allows diverse views and standpoints while enriching a comprehensive understanding of the studied phenomenon (Creswell & Plano Clark, 2011). To address the research questions posited, this study adopted a mixed-method approach—a quantitative questionnaire instrument and a qualitative interview instrument—to first investigate the perceived usefulness of AWE from the perspective of students and then explore the reasons and evidence explicating their quantitative decisions. This adoption aims to involve a diversity of perspectives, enrich the understanding of the RQs, and facilitate inter-researcher communication (Ågerfalk, 2013).

AWE Integration: Functions & Application of Grammarly

This study employed

An elective academic English writing course with 82 enrolled students at an Asian university was studied. This writing class aimed to familiarize undergraduate students with fundamental writing skills. Topics included sentential structures and relationships, common rhetorical patterns, paragraph organizations, and idea development in argumentation. Students were assessed by their performance in collaborative writing tasks and four individual writing assignments. To investigate how

To realize the goal of AWE integration as a pedagogical technique, we (1) introduced

Participants

A class of 82 subjects from different academic backgrounds participated in this study. Their school year and English language proficiency are presented in Table 2. Among them, 51 (62%) had upper-intermediate or above levels of proficiency, and 31 (38%) had intermediate or below. Only 11 (13%) had used AWE in their writing before the time of the experiment. The teacher participant had taught academic English writing for 11 years and had 4 years of AWE user experience.

Description of Participant English Proficiency Level (

Upper-intermediate or above: (1) CEFR B2 or above; (2) TOEIC 750 or above; (3) IELTS 5.5 or above; or (4) TOEFL iBT 72 or above.
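As an illustrative aside, the grouping rule in the note above can be written as a small check. The sketch below is in Python purely for illustration; the function and parameter names are hypothetical and not part of the study's materials. It assumes that meeting any one of the four listed thresholds is sufficient for the upper-intermediate-or-above group, which is how the "or" in the note reads.

```python
# CEFR levels in ascending order, used to compare a reported level with B2.
CEFR_ORDER = ["A1", "A2", "B1", "B2", "C1", "C2"]


def is_upper_intermediate_or_above(cefr=None, toeic=None, ielts=None, toefl_ibt=None):
    """Return True if any reported score meets a threshold from the table note.

    Hypothetical helper: thresholds come from the note (CEFR B2, TOEIC 750,
    IELTS 5.5, TOEFL iBT 72); the "any one criterion" rule is an assumption.
    """
    checks = []
    if cefr is not None:
        checks.append(CEFR_ORDER.index(cefr.upper()) >= CEFR_ORDER.index("B2"))
    if toeic is not None:
        checks.append(toeic >= 750)
    if ielts is not None:
        checks.append(ielts >= 5.5)
    if toefl_ibt is not None:
        checks.append(toefl_ibt >= 72)
    if not checks:
        raise ValueError("at least one proficiency score is required")
    return any(checks)


# Example: a student reporting only a TOEIC score of 780.
print(is_upper_intermediate_or_above(toeic=780))
```

A student who meets none of the thresholds would fall into the other group under this reading; the exact criteria for that group are not restated here.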

Eighteen non-AWE users and two students with some AWE experience from the class were invited for a pilot test during Week 2 to ensure the clarity of the questionnaire items. All participants completed the four-stage questionnaires, and a total of 20 students (10 from the group of higher levels and 10 lower) were randomly selected and invited to participate in semi-structured group interviews.

Data Collection

Table 3 summarizes the research instruments used for data collection, the content of each instrument, the time of data collection, and the corresponding RP and RQ of each method.

Instrument Designs and Corresponding RPs and RQs.

5-Point Likert-Scale Questionnaires

A quantitative 5-point Likert-scale questionnaire was adopted and revised from Cavaleri and Dianati (2016) and Miranty and Widiati (2021). The original questionnaires comprised three domains: student perceptions about themselves in the writing process, student perceptions about

Each questionnaire comprised 34 items. To achieve the three RPs, we made four modifications. First, we added some new and revised statements into the three domains to address several challenges, such as language use and aspects (vocabulary, argument development, coherence and unity). Moreover, we substituted more specific and explicit descriptive statements (i.e., Domain 3, Statements 20–34) for some writing and revising behaviors overlooked in Cavaleri and Dianati (2016) and Miranty and Widiati (2021). The inclusion of the Domain 3 items examined how the integration of

Second, as per the pilot test results, we modified negative statements (5, 8, 9, 12, 16, 17, and 18) into affirmative ones to prevent confusion. We found that nine students had difficulties making judgments when statements were negative. For example, although four students verbally reported that Statement 8 (“I don’t always understand the feedback I get in my writing”) described their writing experience, they marked “2 Disagree,” as explained by one student subject, “I don’t always understand… so I don’t understand… so I disagreed.” This misconception may arise from the double negation in statements (e.g., “I don’t agree with the statement that I don’t always…”).

Third, we did not include statements regarding operating

Lastly, we focused the items in Domain 3 on Questionnaire 2 upon the implementation of IFM, instead of

The revised questionnaires were distributed during each of the four stages, and a total of 328 questionnaires, along with consent forms, were obtained.

Interviews

Semi-structured interviews, unlike a straightforward question-and-answer format between interviewers and interviewees, promote focused, conversational, two-way communication via guiding questions (Burgess, 1984). Semi-structured group interviews were conducted to probe into the causality of variables (i.e., writing difficulties, writing performance, revising behaviors, and

IQ1: How does

IQ2: Please name the benefits and disadvantages of using

IQ3: How does IFM enhance your writing? Please provide explanations or examples.

IQ4: Please name the benefits and disadvantages of implementing IFM.

IQ5: What would you recommend if IFM were to be implemented in a large-sized writing class?

There were five interviewee groups of four (20 students in total). Each group was interviewed for 1.5 hr, and each interview was audio-recorded. All the interviews were conducted in the same classroom after the course had ended. The guiding questions were sent to the interviewees 1 week beforehand.

Data Analysis

The quantitative results were calculated descriptively in R (version 4.1.0) to obtain the mean scores of each item, domain, and questionnaire. The one-way repeated measures analyses of variance (ANOVAs) using the
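For readers unfamiliar with the test named in this subsection, the following is a minimal sketch of the textbook F ratio for a one-way repeated measures ANOVA. The study itself used R; this Python version is an illustration only, not the authors' script. It assumes complete data (every participant rated at every stage), and the function name and toy ratings are made up for the example. It computes neither a p value nor a sphericity correction.

```python
def rm_anova_oneway(scores):
    """One-way repeated measures ANOVA F ratio.

    scores[i][j] is the rating of participant i at stage j.
    Assumes a complete table with no missing cells.
    """
    n = len(scores)       # number of participants
    k = len(scores[0])    # number of stages (within-subjects levels)
    grand = sum(sum(row) for row in scores) / (n * k)

    stage_means = [sum(row[j] for row in scores) / n for j in range(k)]
    subject_means = [sum(row) / k for row in scores]

    # Partition the total sum of squares into stage, subject, and error parts.
    ss_total = sum((x - grand) ** 2 for row in scores for x in row)
    ss_stage = n * sum((m - grand) ** 2 for m in stage_means)
    ss_subject = k * sum((m - grand) ** 2 for m in subject_means)
    ss_error = ss_total - ss_stage - ss_subject

    df_stage = k - 1
    df_error = (k - 1) * (n - 1)
    f_ratio = (ss_stage / df_stage) / (ss_error / df_error)
    return {"F": f_ratio, "df_stage": df_stage, "df_error": df_error}


# Made-up ratings for four participants across four stages (illustration only).
toy = [
    [3, 3, 4, 4],
    [2, 3, 3, 4],
    [4, 4, 4, 5],
    [3, 4, 4, 5],
]
print(rm_anova_oneway(toy))
```

Removing the between-participant sum of squares from the error term is what distinguishes this design from a between-groups ANOVA: each participant serves as his or her own control across stages.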

All interview data were transcribed and coded thematically and inductively following the thematic analysis approach to generate research question-related categories. NVivo 12 was used for organizing and coding the qualitative data, because, as suggested by Malawi, “[T]he presence of nodes in NVivo makes it more compatible with grounded theory and thematic analysis approaches. Moreover, the nodes provide ‘a simple to work with structure’ for creating codes and discovering themes” (2015, p. 14).

The audio recordings were transcribed, significant information was classified into nodes, and nodes were grouped into parent nodes (i.e., themes) that addressed the RQs. To be specific, transcriptions were read, reread, and analyzed line by line. Major points were first identified and coded with different nodes, such as accuracy uncertainty, useful tool, timely feedback, problems with explaining ideas, and formal expressions. After initial coding, all data were collated into groups by nodes. Among these nodes, patterns were identified to generate themes, such as enhancement of revision/language use/comprehension/writing quality, elevated anxiety, quality of feedback, psychological effects, and effectiveness with varied degrees. What emerged were the initial codes related to student perceptions about themselves during the writing process and their opinions regarding the usefulness and effectiveness of the AWE integration.

Furthermore, we conducted a coding consistency check to ensure credibility. After each of us had completed our own coding, we collaboratively deliberated on possible codes and explained and justified divergent codes to arrive at better categorization. We then compared the categorized data, highlighting notable similarities, differences, and recurring themes. We analyzed only the themes most relevant to the RQs for analytical convenience and simplicity (Creswell, 2007).

Procedures

Figure 2 outlines the experimental procedures. As illustrated, over the course of the 18-week semester, three types of data were collected, and four measures were taken. Student and teacher consent was obtained prior to the implementation of this research.

Procedures for data collection and intervention implementation.

The post-writing peer review comprised four steps. To begin, the students were divided into groups of four. Next, each team was given the peer review discussion sheet (Table 4), and each member then took turns to present his or her work and the

Peer Review Discussion Sheet.

Credibility

Cronbach’s alpha is a statistic used by researchers to demonstrate that a scale constructed or adopted for a study is fit for its intended purpose (Taber, 2018). As shown in Table 5, the Cronbach’s alpha values in this study indicate acceptable to good internal consistency. In addition, as the validity of a research instrument reflects the extent to which the instrument measures what it is designed to measure (Robson, 2011), the questionnaires used here were adapted from two previous studies, Cavaleri and Dianati (2016) and Miranty and Widiati (2021), and further revised to cover more holistically the writing challenges an L2 writer may encounter. The revised questionnaires were then piloted, and final revisions were made.
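To make the internal-consistency statistic concrete, the sketch below computes Cronbach’s alpha from its standard definition, alpha = k/(k - 1) × (1 - sum of item variances / variance of total scores), where k is the number of items. It is a Python illustration only, not the procedure used to produce Table 5; the function name and the toy responses are made up for the example.

```python
from statistics import variance


def cronbach_alpha(responses):
    """Cronbach's alpha for a respondents-by-items table of scores.

    responses: list of rows, one per respondent, each a list of item scores.
    Uses sample variances (n - 1 denominator) throughout.
    """
    k = len(responses[0])
    if k < 2:
        raise ValueError("alpha needs at least two items")
    items = list(zip(*responses))  # transpose: one tuple per item
    item_variance_sum = sum(variance(item) for item in items)
    total_variance = variance([sum(row) for row in responses])
    return k / (k - 1) * (1 - item_variance_sum / total_variance)


# Made-up 5-point Likert responses: five respondents, three items.
toy = [
    [4, 5, 4],
    [3, 4, 3],
    [5, 5, 4],
    [2, 3, 2],
    [4, 4, 4],
]
print(round(cronbach_alpha(toy), 3))
```

Alpha rises when respondents who score high on one item also tend to score high on the others, which is why it is read as an index of how consistently the items of a domain measure the same construct.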

Internal Consistency of the Questionnaires.

As for the qualitative instrument, the guiding questions were piloted and sent to the interviewees 1 week beforehand, and the transcriptions were then sent to the interviewees for confirmation. To ensure the credibility of the study, a coding consistency check was performed, in which the three authors coded the data independently and then collaboratively cross-examined their respective analyses.

Results and Analysis

This section comprises the presentation of quantitative questionnaire results clustered by domains and the explanation of the analysis of qualitative interview data.

Questionnaire Results

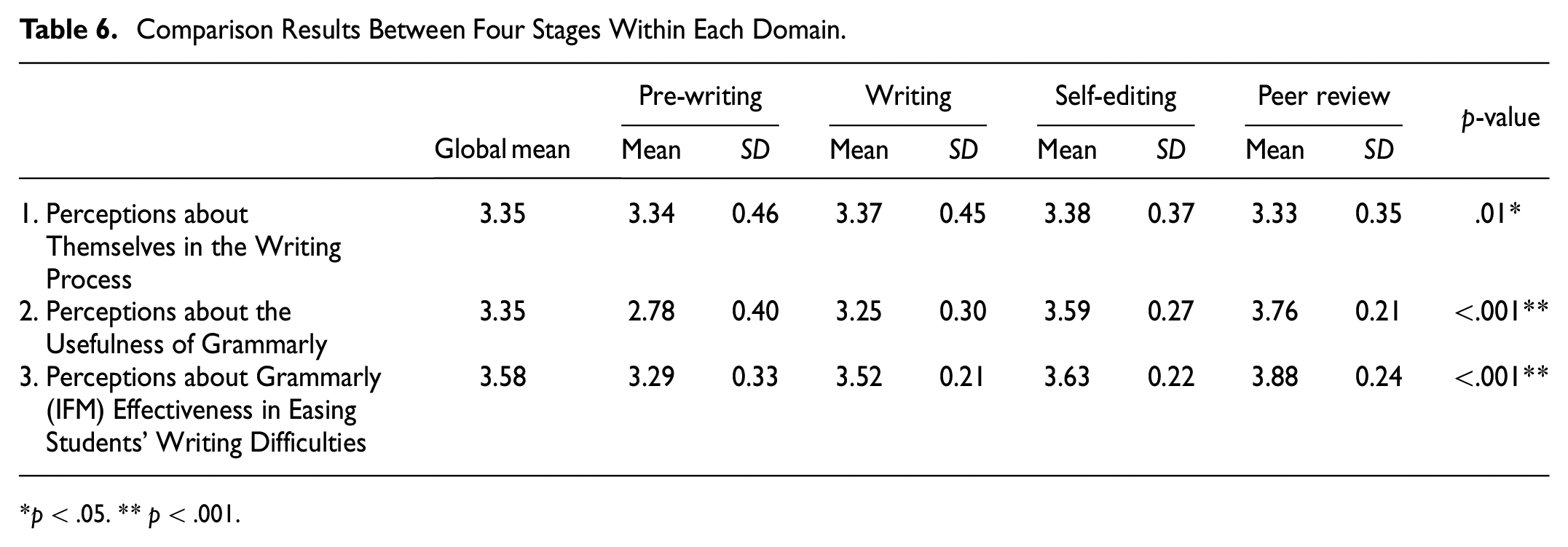

Tables 6 to 9 show the quantitative results gathered from the questionnaires. Results of domain comparison and item comparison are provided in Tables 6 and 7 and Tables 8 and 9, respectively.

Comparison Results Between Four Stages Within Each Domain.

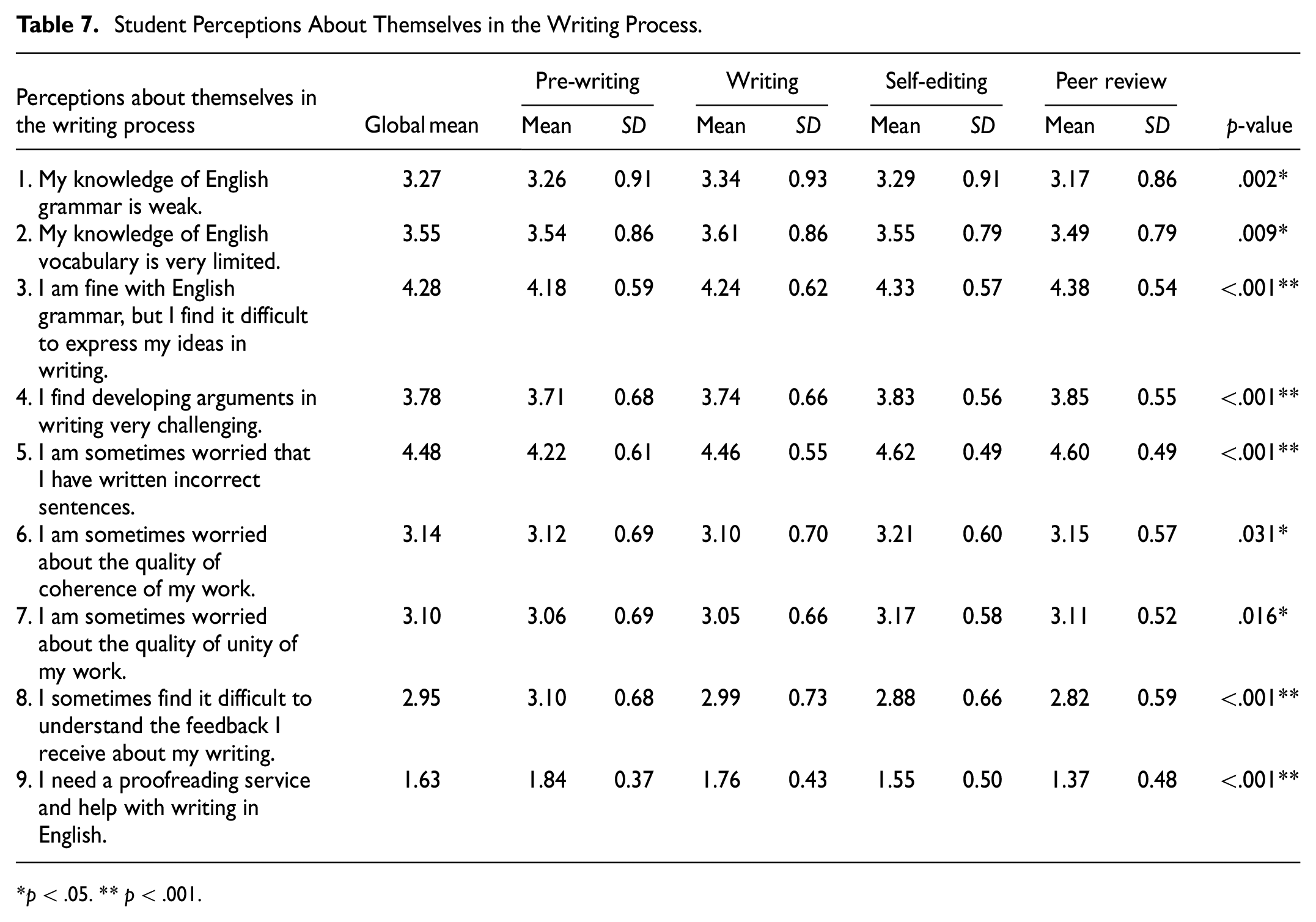

Student Perceptions About Themselves in the Writing Process.

Student Perceptions About the Usefulness of

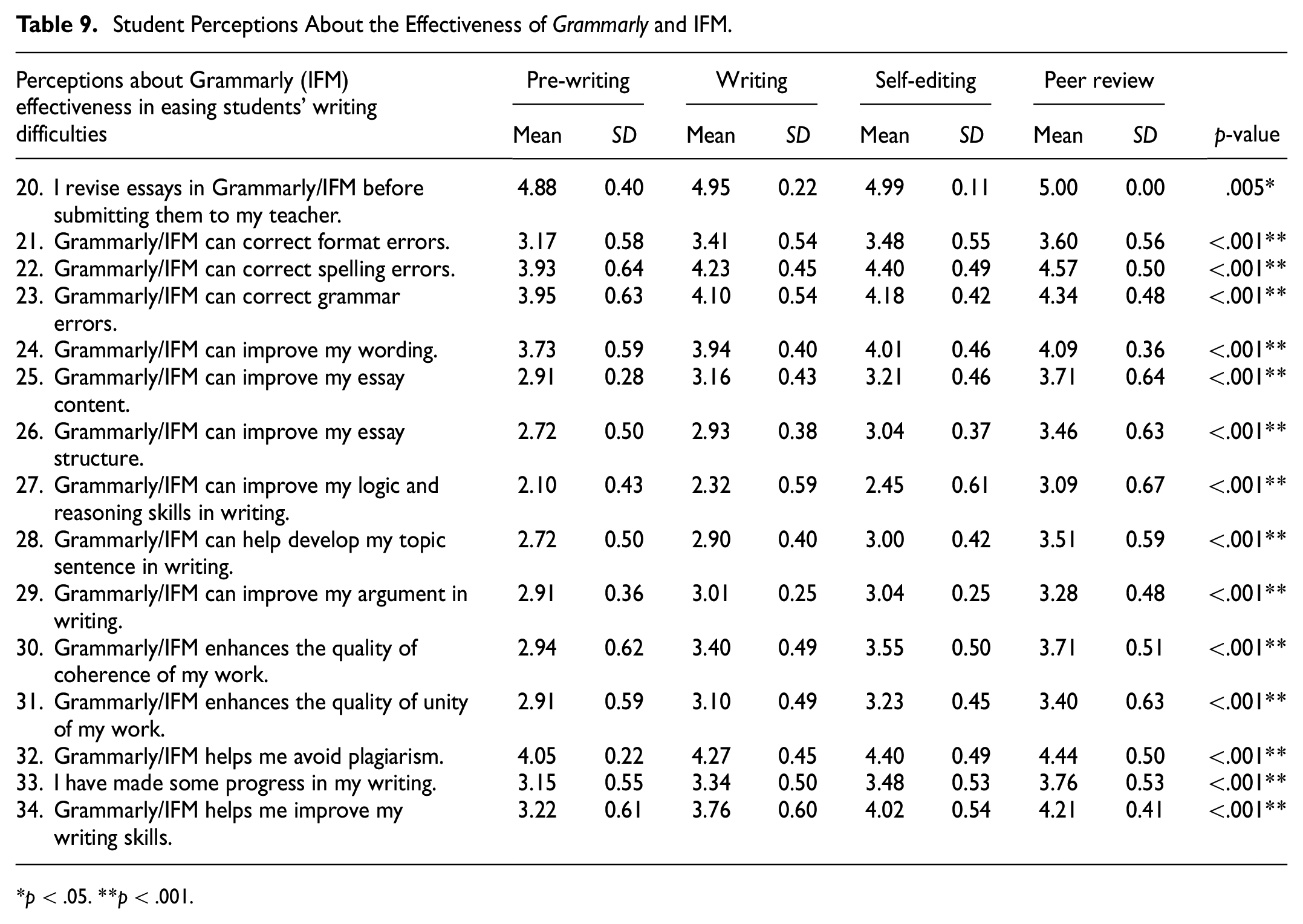

Student Perceptions About the Effectiveness of

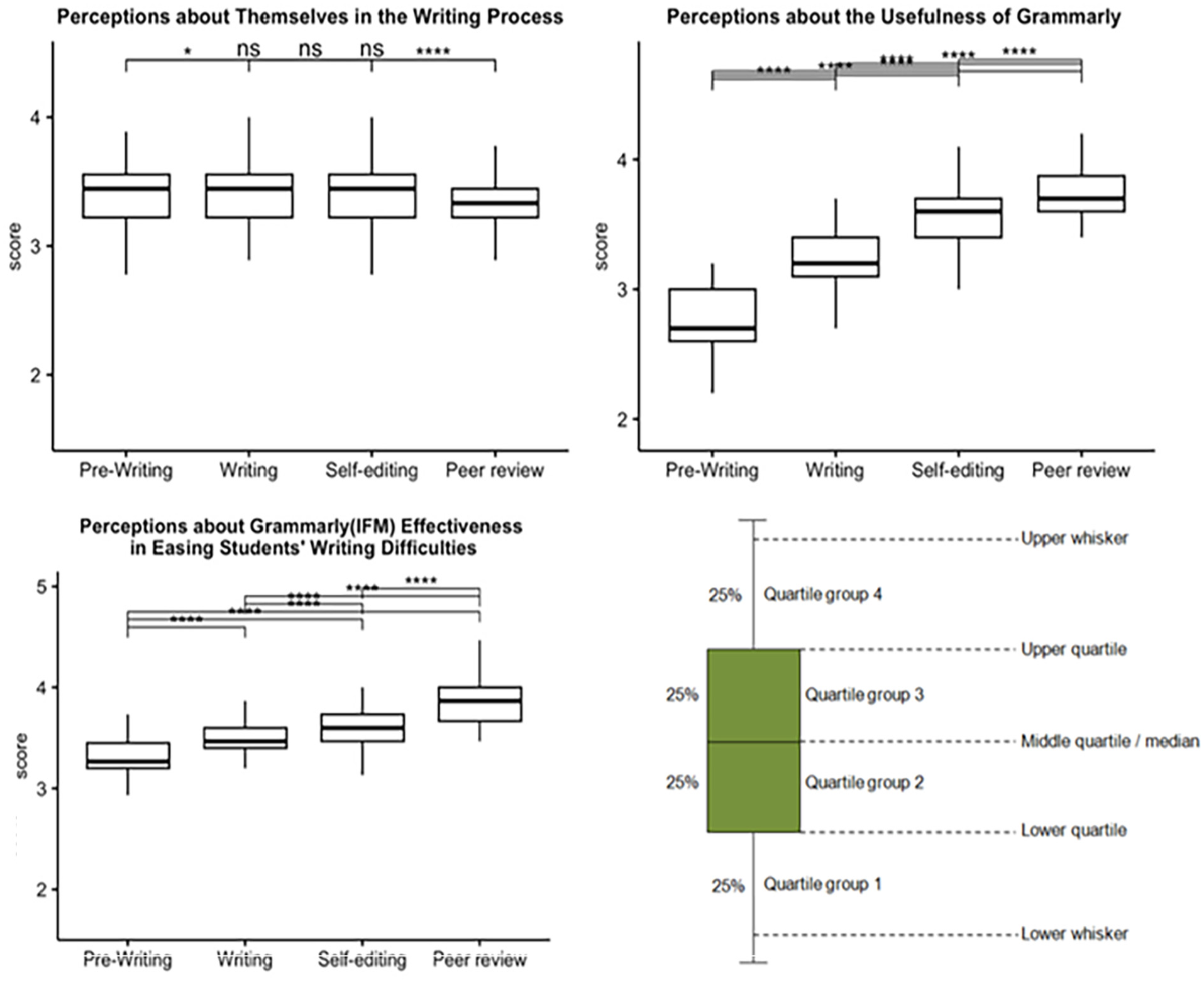

Table 6 presents the comparison results between four stages using one-way repeated measures ANOVAs and pairwise comparison. As shown, there were statistically significant differences between stages within each domain (

To better understand the differences between stages in each domain, pairwise comparisons were performed, and

Pairwise comparison between the stage of the within-subjects factor.

Table 7 shows the results of the mean scores and standard deviation values of each statement item at four stages to examine student perceptions about themselves in the writing process. They all showed statistical significance (

Most statements in Domain 1 were rated between “neutral” and “strongly agree,” except for Statements 8 and 9. The majority of the participants perceived Statements 3 and 5 to be the most agreeable at all stages (

Table 8 presents the results of student perceptions about the usefulness of the

As shown, Statement 11 received the lowest average mean score among all the Domain 2 items (

Statements 15 (the quality of

Positive perceptions of the

Table 9 presents student perceptions of the effectiveness of the two different methods of AWE integration in addressing writing challenges. The first three stages examined the efficacy of the

Moreover, Statement 20 received the highest rating at all stages, confirming that the students responded to these questionnaires based on their true experiences with the tools. The majority of the students gave positive responses to Statement 33, where their progress in writing was agreed on. Most ratings fell between “agree” for Statement 34 (

Items examining the effectiveness of IFM at the post-writing peer review stage all showed the highest ratings of all stages; in other words, the students considered the IFM feedback more effective than sole

Domain 3, where all stages were compared, showed higher mean scores on the items at the two post-writing stages, an indication of greater perceived efficacy during the revision stages. This observation was also evident in the result of Statement 33, where the students acknowledged more significant progress at the latter stages. Notably, the new function, a Plagiarism Checker (Statement 32), received consistently high ratings at four different stages. Less proficient participants rated “agree” or “strongly agree,” and many more proficient participants rated “agree” or “neutral.”

Interview Findings

Several themes emerged during the transcription coding. Here, to keep the paper focused and the analysis simple, only the themes most relevant to the RPs are presented.

Intensified Anxiety

Nine of the 20 interviewees reported intensified anxiety about being incorrect. As time progressed, the experience of AWE practice offered the students opportunities to assess their existing writing skills and knowledge, thereby providing insights into their own language needs and limits. Although it provided the students with some guidelines on what and how to improve, this new awareness could have intensified their writing anxieties, as described by one of the interviewees.

Two interviewees offered different insights. They praised the peer review discussion at the post-writing stage for facilitating peer support, described as the “emotional support needed” by Subject 4 in Group D and portrayed as “an integral element in reducing stress” by Subject 15 in Group C.

Constructive, Yet Inadequate Comments

Most students recognized to some extent the usefulness of

Providing unclear explanations could result in ambivalent attitudes toward the AWE feedback, as explained by one interviewee:

Grammarly Feedback as a Less Effective Tool at Earlier Stages

Although students appreciated the AWE feedback at the latter two stages, they were less impressed with it at the pre-writing and writing stages. This might result from the low frequency of

One more possible cause was the distinct disparity between the upper-intermediate level students (Groups A and C) and less proficient students (Groups B and D). The less proficient students perceived

Nevertheless, even though 11 interviewees considered

Enhanced Confidence

Because the students confirmed the efficacy of the AWE feedback in correcting surface-level errors, they demonstrated higher confidence in their writing quality. This was particularly evident in the post-writing stages, as explained by one interviewee:

IFM as a Comprehension Facilitator and a Writing Reinforcement

The students perceived the incorporation of the AWE feedback with peer review discussion as a facilitator. The incorporation improved their comprehension of the AWE feedback and strengthened their writing quality in terms of the organization and development of content, argument, and logic, as explained by two interviewees:

Notably, the higher appreciation for peer review discussion at the post-writing stage could provide some insights into why the students perceived

In addition to reinforcing writing, IFM promoted negotiation, reflection, perspective-taking, critical analysis and self-reflection, as evidenced in the sharing of learning actions and writing responses by two interviewees:

Discussion and Implications

Disparities in the Perceived Usefulness of Grammarly are Found at Different Stages

The frequency of AWE adoption can be a determinant of its value (Chen & Cheng, 2008). In the pre-writing stage,

Revision Behaviors and Performance are Enhanced at all Stages

Although Bai and Hu (2017) and Cavaleri and Dianati (2016) claim that much AWE feedback is not adopted by users, our findings compare favorably with those of Ghufron (2019), where students find the feedback helpful for revisions. This affirmative attitude is even firmer at the post-writing peer review stage, as peer support enables better comprehension of the AWE feedback and stimulates further exploration and negotiation of ideas.

Writing Quality is Enhanced by Addressing Lower-Order Concerns at Earlier Stages

This finding is consistent with previous literature examining the usefulness of

Writing Quality is Enhanced by Addressing Higher-Order Concerns at the Peer Review Stage

The integration of

Psychological Changes May Take Place Throughout the Grammarly Application

The more students practice writing, the more they realize their own limitations, and this awareness is fortified when they engage in critical peer review discussions, as argued by Zhang and Hyland (2018). Although students perceive the “user-friendly” nature of

IFM Helps Alleviate Writing Anxiety and Reinforce Student Confidence

Although a majority of students may experience anxiety when being constantly reminded of their own writing weakness and insufficiency, some individuals benefit from the inclusion of peer review. Providing peer support to students in writing practice serves as a pillar of support that may lead to the alleviation of their writing anxiety (Chaktsiris & Southworth, 2019).

IFM Encourages Higher Cognition Activities and Complements Grammarly

The AWE feedback enables students to spend more time and energy on more complex tasks, and peer review encourages them to perform higher cognitive skills to complete tasks. IFM fosters meaningful interactions requiring negotiation, reflection, perspective-taking, and critical processing—all of which involve higher cognitive skills and are crucial for fostering autonomous learning. Hence, the introduction of the

The Conjunction is a Complementary Combination

Content formation and argument development are two writing aspects in which most students need assistance. In addition to

This research does not directly measure the development of autonomous learning, but as shown in cases such as the “Butterfly versus Eagle” analogy in the student interview, the combination of AWE feedback with peer review better enables students to envision their learning growth, a finding that supports Miranty and Widiati (2021). In other words, IFM helps make up for the flaws of

Suggestions and Conclusion

This study explores in a large university writing class different methods of

The results suggest that the teacher feedback burden may be alleviated if IFM is successfully implemented. The success of IFM implementation hinges on the “preparedness of measures,” the availability of sufficient preliminary knowledge and practice of the tools (i.e.,

Footnotes

Acknowledgements

Special thanks to the faculty at the Academic Writing Education Center NTU, Mr. Marc Anthony, and Chong-Hsien Chiou for the kind and professional assistance.

Author Contributions

WYL, KK, and YJS contributed to the design and implementation of the research, to the analysis of the results and to the writing of the manuscript. All authors read and approved the final manuscript.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Ministry of Education, Taiwan (MOE) [Project NO. PGE1080405].

An Ethics Statement for Animal and Human Studies

This study was exempted from IRB Review because it was conducted in commonly accepted educational settings and only involved the use of survey and interview procedures.

Availability of Data and Materials Statement

The datasets used and/or analyzed during the current study are available from the corresponding author on reasonable request.