Abstract

This article designs a novel edge-centric hierarchical cloud architecture to optimize mobile learning (m-learning) performance when learners execute computation-intensive learning applications. The research draws on the potential of distributed computing paradigms, specifically mobile edge computing, to improve the effectiveness of m-learning in higher education. Edge computing enables computing at the network’s edge and helps avoid latency while processing learners’ computational requests. The envisioned architecture was designed on the ETSI MEC ISG protocols and deployed on the university mobile cloud infrastructure. Additionally, a use case focusing on edge computing’s latency-avoiding ability was designed and executed in a real-time environment involving sixteen students from an academic course. The execution results validated the architecture’s contributions: tasks executed on the local server, improved learner privacy, reduced latencies, instant access, lower bandwidth consumption, and continued task execution despite the failure of smart nodes. The results are expected to influence user acceptance and encourage designers to extend the architecture with machine learning algorithms (for learning analytics) and blockchain (to prevent malicious attacks) to improve the effectiveness of learning management system performance.

Keywords

Introduction

In the current era, mobile computing advances mobile learning (m-learning) performance and usage in educational environments. Mobile computing integrates mobile and cloud technologies (Islam et al., 2020), augments mobile device resources, and improves energy efficiency (Nawrocki & Reszelewski, 2017). It offers several promising affordances for improving m-learning performance. Mobile learning is a complete technological teaching model (Crompton & Burke, 2018). It provides access to learning resources and interactions among learners (Sung et al., 2019) in a mobile environment without time constraints. Mobile learners practice this pedagogy to achieve academic objectives (Göksu & Atici, 2013), experience efficient learning patterns, and observe improved learning performance. Notably, m-learning performance can be optimized by leveraging the computational abilities and resources of distributed computing paradigms (DCPs) (Shorfuzzaman et al., 2019). DCPs enable several modern instructional patterns for m-learning users, such as context-aware learning, synchronous sharing, and guided learning (Sung et al., 2019). Besides, seamless, connected, interactive, and cooperative learning have become a reality due to the integration of DCPs with learning management systems (LMS) (Aldiab et al., 2019). Indeed, an LMS deployed on mobile cloud architecture (M. Wang et al., 2014) offers learners situated and location-aware learning (Crompton & Burke, 2018) and provides quick content sharing (Göksu & Atici, 2013). Despite these advantages, m-learning users face challenges accessing resource-demanding and computation-intensive learning content. For instance, users encounter latency when they need seamless connectivity, video interaction, and access to resource-rich content. The DCPs mobile edge and mobile fog have the computational power and potential to address latency issues (Elazhary, 2019) and provide an efficient interactive learning environment. Therefore, the authors exploited these capabilities, investigated the feasibility of implementing them, and examined their logical integration with the m-learning system to overcome latency issues.

The latency issue arises when learners execute m-learning applications using a mobile cloud framework, and the latency depends on the connectivity of the learner’s device to the cloud (Islam et al., 2020). Since delays occur due to intermittent network connections to the cloud during execution, they are beyond the control of users. Usually, computation-intensive and resource-demanding tasks suffer delays while the learning system processes users’ computing requests. At the same time, users’ privacy is at risk because confidential information is vulnerable throughout the execution process (Atawneh et al., 2020). These concerns must be addressed to enhance m-learning system performance, and they have motivated the authors of this study to provide a potential solution. To the authors’ knowledge, none of the current architectures (see Table 1) treats latency as the core contributor to m-learning performance efficiency. Additionally, no available architecture (with a single MEC server) is developed exclusively on mobile edge computing to eliminate latency issues. Notably, the DCPs’ potential has given researchers a platform to design edge-based cloud architectures that process learners’ execution tasks in a distributed computing environment (Al-Qamash et al., 2018).

Table 1. m-Learning Effectiveness Ignored in Literature.

Note. m-Learning architectures and DCPs.

A research approach of this kind must utilize edge computing characteristics when designing new m-learning frameworks (Yousefpour et al., 2019). Emerging architectures (based on edge and fog) are able (Varghese & Buyya, 2018) to process computing requests locally and quickly (Elazhary, 2019). Further, adopting 5G will be an attractive means of reducing latencies and optimizing m-learning performance. The present study designs a novel edge-centric, cloud-layered architecture (Atawneh et al., 2020) following ETSI MEC ISG technical requirements (Sabella et al., 2016; Taleb et al., 2017) to eliminate latency and optimize m-learning performance. It designs an edge-centric use case, executes it in a real-time environment, and evaluates the execution performance in terms of latency and response time. The evaluation results exhibit ultra-low latency and quick response time. Furthermore, the study conducted a SWOT analysis comparing m-learning performance across different frameworks, which shows the effectiveness of the envisioned architecture. Finally, it answers the following research questions, which were formulated from the identified scientific gaps.

RQ1: How do the mobile cloud-based m-learning frameworks adopt distributed computing paradigms, such as mobile edge, fog, and the internet of things?

RQ2: How do the emerging architectures adopt distributed computing potential and improve the effectiveness of m-learning performance?

Methodology and Research Design

This research explored the characteristics of emerging computing paradigms for improving the effectiveness of m-learning performance. The study design was therefore threefold: (i) an investigative approach (Almaiah & Al-Khasawneh, 2020) was adopted to determine the potential features of emerging computing paradigms for m-learning effectiveness, (ii) a novel edge-centric cloud-layered architecture was designed to leverage distributed computing paradigms’ resources for improving m-learning performance effectiveness, and (iii) a real-time use case (learning assignment) (C. C. Wang et al., 2020) was executed using the simulation tools CloudSim and EdgeCloudSim. Additionally, a SWOT analysis was performed to evaluate the proposed architecture’s effectiveness and analyze the m-learning execution performance across other emerging architectures (Baccari et al., 2017).

The Design and Approach

(i) Determining DCPs’ potential characteristics: this study explores the DCPs’ characteristics and determines which features (see Section “DCPs Potentials and m-Learning Framework Effectiveness”) to incorporate in the proposed architecture to improve the effectiveness of m-learning and bridge the knowledge gaps.

(ii) Edge-centric architecture design: the authors discussed the idea of designing a novel architecture (Lee & Lee, 2018) with five experts in the field from two regional public universities. The discussion covered the functionalities the architecture requires and the performance desired to improve m-learning performance efficiency, which has been ignored in recent times (Sekaran et al., 2019). Section “The Novel Architecture Design” discusses the architecture design, the associated components, and the application execution setup.

(iii) Use case (edge-based) implementation: several use cases were considered from three academic curricula: computer science, architecture, and civil engineering. These use cases were designed considering the regular formative assignments given to students. Students were asked to execute one use case from each curriculum using their intelligent mobile devices and the institution’s LMS (Aldiab et al., 2019). Only one use case is included in this study, and its performance was evaluated for m-learning effectiveness (see Section “The Architecture’s Layers”). The following subsections describe DCPs’ characteristics considering the study’s objectives.

DCPs Potentials and m-Learning Framework Effectiveness

The rapid advancement of DCPs and increased use of m-learning have changed the pedagogy of academic learning in higher education (Crompton & Burke, 2018). Adopting DCPs for m-learning usage can extend the cloud-based m-learning architecture functionality and increase the effectiveness in multiple dimensions (Dede, 2011). Significantly, ECPs shape new m-learning architectures, enhance user communication efficiency during data processing, and address quality control (Parlakkılıç, 2019). Architectures developed using DCPs support robust performance, context-aware (C. C. Wang et al., 2020) processing, and low latency (Elazhary, 2019). Indeed, the integration of ECPs with m-learning gives phenomenal momentum (Raman, 2019) with tremendous benefits to m-learning actors (Parlakkılıç, 2019; Pinjari et al., 2018). Implementing m-learning frameworks developed on DCPs together with an LMS effectively optimizes m-learning systems’ performance (Aldiab et al., 2019). For instance, architectures using computing platforms such as mobile cloud, edge, and fog provide quick access to educational resources, minimize bandwidth issues, and reduce latency (Pinjari et al., 2018). Further, such architectures, when using AI, analyze educational data more intelligently (Cox, 2021), whereas architectures built on edge and fog in 5G networks process users’ data effectively and locally (Meng et al., 2020).

For m-learning effectiveness, DCPs are essential platforms (Elazhary, 2019) to eliminate traditional m-learning systems’ limitations and increase mobile cloud m-learning frameworks’ performance across educational disciplines. The following paragraphs determine the ECPs’ potentials that enable m-learning framework effectiveness (Sekaran et al., 2019).

M-Learning Frameworks Based on Mobile Cloud

This framework builds on mobile cloud computing (MCC) which offers promising features to eliminate traditional m-learning systems’ limitations and extends the framework to obtain ubiquitous learning (M. Wang et al., 2014). MCC is an integrated computing paradigm of mobile and cloud technologies (Islam et al., 2020), and it deploys mobile cloud architecture (MCA) for executing m-learning applications. The m-learning framework that deploys MCA augments the learner’s mobile device computing resources by migrating resource-demanding application execution tasks to the resource-rich distance cloud (Kuang et al., 2018). It is the most effective method for executing resource-demanding m-learning applications on learners’ devices. This framework is very effective for context-aware (i.e., learner-centered) mobile learning systems (C. C. Wang et al., 2020), multitenancy (collaborative learning), and anywhere-anytime access to learning resources by m-learning actors (Atawneh et al., 2020). Users can achieve learning resources scalability and their utilization in a mobile environment through this framework (Rimale et al., 2016). Besides, this m-learning framework offers enhanced processing power, learning content scalability, and storage capacity (Alghabban et al., 2016). However, these frameworks need to be more specific, such as augmented reality-based (Dinh et al., 2013), hybrid, and collaborative m-learning considering mobile agents (Atawneh et al., 2020).

LMS Based on Mobile Cloud Frameworks

LMSs based on the mobile cloud framework provide an efficient application execution environment during the learner’s instruction process and are particularly effective for multimedia learning content. Such LMSs enable wide, rich learning content availability and ease of use. For instance, Moodle (M. Wang et al., 2014) and Blackboard are widely used LMSs (Bb-learn, 2021) and offer excellent benefits for education systems across educational disciplines. The Moodle learning system is an open-source platform that helps academic institutions reduce IT infrastructure (hardware and software) costs (Elazhary, 2019; M. Wang et al., 2014). Blackboard Learn is another popular advanced LMS that provides a customizable open framework and is flexible in its pedagogical approach (Bb-learn, 2021). Besides, it is a fully responsive learning system with effective course delivery tools and supports learners in improving their learning effectiveness. Furthermore, there is a mobile intelligent (e.g., context-aware) tutoring system (ITS) based on the mobile cloud framework that offers a knowledge environment to learners (C. C. Wang et al., 2020). In addition, such LMSs provide learning skills videos, enable students to record their learning progress, and help teachers generate student performance reports (Elazhary, 2019).

M-Learning Frameworks Toward Edge Computing

Edge computing in a mobile environment (MEC) was first introduced by the ETSI ISG (Sabella et al., 2016) and enables cloud characteristics within the radio access network (RAN) (Lee & Lee, 2018). In recent times, the growth of intelligent end devices (e.g., IoT devices) and the heavy use of interactive applications have increased data volume (Taleb et al., 2017). The use of IoT devices is also growing in the m-learning environment, since edge computing (a decentralized computing paradigm) enables a practical framework to execute m-learning applications quicker and closer to users. The implementation of edge computing using IoT devices in a mobile environment (Sabella et al., 2016) can be a novel framework for the latest LMS. Such frameworks have the potential for decentralized data processing at multiple endpoints locally instead of centralized processing of m-learning application execution in the cloud or data centers (Al-Qamash et al., 2018; Yu et al., 2017). During execution, the edge node is the first hop for immediate processing of locally connected IoT devices (Yousefpour et al., 2019) in the framework. Edge nodes incorporated in such frameworks minimize latencies, improve performance efficiency during execution, and provide a better experience for learners. Indeed, such frameworks improve seamless connectivity and privacy, relieve network traffic congestion (Al-Qamash et al., 2018), and provide QoS in a real-time environment. Notably, these frameworks allow third parties (e.g., educational institutions) to deploy LMSs and emerging m-learning applications in an effective environment. The edge-centered m-learning frameworks are responsive LMSs that can scale up the capacity required for multimedia learning content delivery and cope with increasing network traffic to ensure uninterrupted, efficient performance (Collier, 2018). They are recognized as critical enablers for 5G and determine many vital uses of the 5G system (Mondal, 2021).

Fog-Based m-Learning Frameworks

Intensive m-learning applications (e.g., augmented reality) produce a large volume of data during execution, and the required back-and-forth communication creates delays between learner devices and the centralized cloud. Fog computing is a novel way to minimize these delays (Meng et al., 2020) and utilize such devices appropriately. Notably, Cisco introduced the fog computing paradigm in 2014 for real-time data processing, with execution occurring at the nearest server (Pecori, 2018). Most academic institutions have started implementing fog computing for their LMS data processing (Raman, 2019). During m-learning execution, these institutions do not send all the data to the central cloud but to the institution’s local caches, and they continue data processing in collaboration with the main cloud conglomerates. Such caches act as fog nodes; these nodes facilitate the m-learning application execution at local nodes (e.g., learner devices) and share the execution load with the centralized cloud. The execution load is distributed among the fog-m-learning framework’s components, such as local caches, users’ devices, and the central cloud (Pinjari et al., 2018), following the requirements of the execution requests. Fog-m-learning systems effectively execute resource-intensive applications, for example, virtual reality and mobile context-aware applications (Mohiuddin, Fatima, et al., 2022). Such systems are crucial when executing AI-based m-learning applications; they offer QoS (Parlakkılıç, 2019) and low latency, which is significant for resource-demanding m-learning applications, and they can integrate with 5G.

M-Learning Framework Using IoT Platforms

Using an IoT platform for the m-learning framework is still in its early stages, since most IoT devices, for example, smart sensors (Yu et al., 2017), have limited power and generate bulk data. However, the learners’ processing data from IoT applications must be integrated with the m-learning execution framework when the execution requests are generated. IoT-integrated m-learning systems enable a live execution environment in which users experience the execution of learning applications in reality (Elazhary, 2019). Such systems have been widely adopted for health education activities in recent times. Indeed, user devices are empowered with intelligent components, such as RFID tags and wearable sensors, for monitoring patients’ health conditions. On the other side, similar components are also fixed into patients’ bodies and communicate with the user (teacher and student) devices to determine the health condition of such patients (Mohiuddin, Fatima, et al., 2022). Notably, the integration of the IoT platform with the m-learning execution framework provides students with a real-time learning experience and also helps teachers evaluate students’ performance on given assignments (Elazhary, 2019). Such integrated systems are expected to extend the execution base of existing LMSs and enable the functional characteristics of these technologies to improve m-learning performance, for example, by identifying and monitoring student online learning activities using intelligent IoT devices.

M-Learning Applications, LMS, AI, and 5G Relevance

In the present era, artificial intelligence (AI) is the most sought-after discipline; its functional characteristics create intelligence in machines and are applied in almost every field of life (Q. Liu et al., 2010). It is also widely used to improve the performance of educational activities by utilizing its intelligent functional aspects (Cox, 2021). Indeed, m-learning performance with the integration of intelligent LMSs significantly impacts user acceptance (Han & Shin, 2016). In recent times, the utilization of AI-based LMSs has increased enormously since these intelligent systems, for example, intelligent tutoring systems (ITS), enable customized learning approaches considering learners’ aptitudes. Learners find opportunities to adopt innovative tutoring models that match their abilities. Google recently launched a voice-centric learning application, Socratic (Socratic by Google, 2021), which provides a voice-interactive platform for its users. Learners interact with this application by voice and get the best possible reply to their queries. The Socratic application applies AI-based algorithms to respond to learners’ requests and offers an intelligent learning pedagogy for students across educational disciplines (Mohiuddin, Fatima, et al., 2022).

With the implementation of 5G alongside decentralized computing paradigms, m-learning applications and mobile LMSs will be more effective for academic institutions. Notably, the 5G network is gaining popularity in many countries and has enabled an efficient platform (10–100 Gbps) for decentralized computing (Meng et al., 2020). The provision of 5G encourages developers of emerging architectures to design effective mobile cloud architectures (Mohiuddin, Islam, et al., 2022). Such architectures leverage cloud-based services (Taleb et al., 2017) and enable an effective environment for executing resource-intensive (Islam et al., 2020) applications (e.g., augmented reality and virtual reality) to enhance m-learning performance. For instance, they will be significantly effective for video lectures, heavy applications, and quick video interactions. Indeed, such architectures provide novel frameworks for decentralized computing and have been established as efficient platforms for m-learning performance efficiency.

The Novel Architecture Design

Cloud-based m-learning architectures execute resource-demanding applications, but their performance is affected by latency. Further, these architectures face challenges when migrating execution tasks and moving real-time data across the processing architectures (F. Liu et al., 2013) due to latency. However, these delays can be avoided by designing novel architectures that leverage the characteristics of ECPs or distributed computing paradigms, for example, mobile edge computing (MEC). MEC is one of the distributed computing paradigms that minimizes latency issues by placing the required computing resources at the edge of the network (Taleb et al., 2017). Notably, the ability of edge computing to overcome latency issues is the motivation behind designing the current novel architecture. Accordingly, a novel edge-based m-learning architecture design must consider the edge computing features for overcoming latency issues and be effective in obtaining performance efficiency.
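The latency argument above can be illustrated with a rough model. The following is a minimal sketch, assuming that response time is simply the sum of network round-trip time, payload transfer time, and server processing time. The function name `response_time_ms` and every numeric value are hypothetical placeholders chosen for illustration, not measurements from this study.

```python
def response_time_ms(rtt_ms: float, payload_mb: float,
                     bandwidth_mbps: float, processing_ms: float) -> float:
    """Estimate response time as round trip + transfer + processing (assumed model)."""
    # Convert megabytes to megabits, divide by link speed, express in milliseconds.
    transfer_ms = payload_mb * 8 / bandwidth_mbps * 1000
    return rtt_ms + transfer_ms + processing_ms


# Hypothetical inputs: a nearby edge server with a short round trip and a fast
# local link, versus a distant cloud with a longer round trip and a slower link.
edge = response_time_ms(rtt_ms=5, payload_mb=2, bandwidth_mbps=100, processing_ms=40)
cloud = response_time_ms(rtt_ms=80, payload_mb=2, bandwidth_mbps=20, processing_ms=20)
print(f"edge: {edge:.0f} ms, cloud: {cloud:.0f} ms")
```

Under these assumed inputs the edge path wins even though the cloud processes faster, because the round-trip and transfer terms dominate. Real deployments would need measured values for each term.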

The present study proposes a novel edge-based cloud-layered architecture, shown in Figure 1. The envisioned architecture leverages its computing resources hierarchically, considering the execution requirements of the m-learning application being processed across the architecture. It builds on three core nodes: (i) an edge device (learner device), (ii) the edge server, the infrastructure that manages application execution and network workloads, and (iii) the distance cloud, the infrastructure that supplies the resources for execution requirements. It performs the execution at the edge, processes real-time data, and passes execution insights through the hierarchy of layers in the architecture. It follows the protocols of the ETSI MEC ISG technical requirements and incorporates mobile edge APIs (Sabella et al., 2016; Taleb et al., 2017) and 4G mobile network standards. Significantly, it offers reliable ultra-low latency communication and enables predictable latency services in milliseconds. Additionally, it provides enhanced mobile broadband (eMBB) capacity for executing resource-demanding m-learning scenarios, for example, video lectures. The following paragraphs explain the architecture design, associated components, and its relevance.

Figure 1. The panoptic view of the novel architecture.

The Architecture Strategies

The proposed architecture is developed on the three core components; it must cope dynamically with the network requirements and follow the ETSI MEC ISG technical principles. The architecture was designed around principles such as instant content delivery, meeting growing bandwidth needs, supporting application execution requirements, and a MEC server capable of responding within milliseconds (Lee & Lee, 2018). Significantly, the design must provide a single platform that can integrate with learning systems, for example, an LMS, and adapt to institutional academic needs. The architecture must also be open to implementing the characteristics of other distributed computing paradigms (Yu et al., 2017).

The Architecture’s Layers

The architecture resources are significantly distributed in its layers. Usually, these layers are classified into physical (tangible resources model) and application layers (application execution framework) (Mohiuddin, Islam, et al., 2022). Additionally, a network layer is determined to facilitate communication between users and across the architecture. Indeed, an m-learning application execution requires integrating these layers to consume the layers’ resources.

Physical layer: This consists of the tangible computing resources, such as learners’ mobile devices and, from the ECPs, edge nodes, fog nodes, IoT devices, and the cloud infrastructure. This layer also holds the edge server and the associated components of the wireless communication network. The edge server is central: it stores the native mobile-edge applications and executes m-learning applications locally. During execution, the data generated by learners’ devices are sent through edge gateways to the edge servers and the higher layers, following the execution requirements.

Application layer: It is an interface between users and computing resources and consists of APIs for user interactions. It facilitates users with distributed educational modules and executes native mobile edge applications. It offers an environment for executing m-learning applications. It is more effective for resource-demanding applications: context-aware (C. C. Wang et al., 2020) and delay-sensitive applications.

Network layer: This layer is implemented in a network environment and manages wireless communication end-to-end. Usually, the network services are provided by the network operators and offered to the subscribers. For the current architecture, the authors used the university’s network infrastructure (e.g., switches and routers) for executing the m-learning applications.

Layers’ hierarchy: The layers are the architecture’s core components and are designed and configured following the ETSI MEC ISG standard protocols. Figure 2 illustrates the layers’ hierarchy and their contributing components. These components are classified into three levels (based on their specifications) and facilitate the layers to perform functional tasks (Taleb et al., 2017). For instance, the ME system enables user accessibility, the host level manages native applications, and the network level fulfils the network connectivity requirements, including the 3rd Generation Partnership Project (3GPP) cellular network (Lee & Lee, 2018). Regardless, effective network management contributes to the architecture’s overall functionality.

Figure 2. Architecture’s core layers.

The Architecture’s Effectiveness Evaluation

The current architecture is designed to make mobile learning systems more effective by implementing the characteristics of ECPs. To that end, the envisioned architecture delivers instant, learner-centric services and offers ultra-low latency, which contributes to performance efficiency while executing application tasks. Additionally, deploying the edge server closer to the learner devices enhances the architecture’s functionality and effectiveness.

Functional effectiveness evaluation: The architecture achieves its effectiveness by implementing the MEC execution principles, and this can be evaluated when an application is executed and while its tasks run (Mohiuddin, Miladi, et al., 2022). The learner’s execution request is sent to the edge server for quick processing, and the server computes the tasks locally. If the execution task is resource-intensive and needs robust computing, it is sent to higher layers using a layer profile request (LPR) message (Atawneh et al., 2020). To establish communication between the server and the upper layers, additional profile-based components contribute to functional efficiency: a requesting profile server hash value (RPSHV), a message field identifier (MFI), a transmission timestamp, and a profile acknowledge message (PAK). Besides, unique identification information, the layer profile message (LPM), and a service determiner indicate task definitions and enable intermediate services during the application execution.
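The escalation step described above can be sketched in code. This is only an illustrative sketch: the article names the LPR, RPSHV, MFI, timestamp, and PAK elements but does not specify their wire format, so the field layout, the use of SHA-256 for the hash value, the capacity units, and the names `build_lpr`, `dispatch`, and `acknowledge` are all assumptions.

```python
import hashlib
import time
from dataclasses import dataclass, field
from typing import Optional, Tuple


@dataclass
class LayerProfileRequest:
    """Hypothetical LPR message; the field layout is assumed, not from the source."""
    rpshv: str      # requesting profile server hash value
    mfi: int        # message field identifier
    task_id: str
    timestamp: float = field(default_factory=time.time)  # transmission timestamp


def build_lpr(server_profile: str, mfi: int, task_id: str) -> LayerProfileRequest:
    # SHA-256 is an assumed choice; the article does not name a hash function.
    rpshv = hashlib.sha256(server_profile.encode("utf-8")).hexdigest()
    return LayerProfileRequest(rpshv=rpshv, mfi=mfi, task_id=task_id)


def dispatch(task_id: str, required_units: int, edge_capacity_units: int,
             server_profile: str = "edge-server-01"
             ) -> Tuple[str, Optional[LayerProfileRequest]]:
    """Run locally when the edge server has capacity, otherwise escalate with an LPR."""
    if required_units <= edge_capacity_units:
        return ("edge", None)
    return ("upper-layer", build_lpr(server_profile, mfi=1, task_id=task_id))


def acknowledge(lpr: LayerProfileRequest) -> dict:
    """Hypothetical PAK from the upper layer, echoing the request identifiers."""
    return {"type": "PAK", "mfi": lpr.mfi, "rpshv": lpr.rpshv, "task_id": lpr.task_id}
```

For example, `dispatch("t1", 2, 4)` stays on the edge server, while `dispatch("t2", 8, 4)` returns an LPR that the upper layer could answer with `acknowledge`.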

Content delivery effectiveness: Content delivery is an essential requirement during m-learning application execution, and the proposed architecture was designed with an emphasis on it. The edge server receives the content request sent by the learner and examines it to determine the execution task requirements. It then looks up the requested content in its repository and, if the content is available, delivers it to the requesting device. Otherwise, the request is redirected to the higher layers to obtain the required content. Hence, the current architecture is effective in content delivery services.
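The lookup-then-redirect behavior described above resembles a simple cache with a fallback. The sketch below is one possible reading, not the authors' implementation: the class name `EdgeContentServer`, the callback `fetch_from_upper_layer`, and the decision to store redirected content locally for later requests are all assumptions added for illustration.

```python
from typing import Callable, Dict, Tuple


class EdgeContentServer:
    """Hypothetical edge server that serves content locally when it can."""

    def __init__(self, fetch_from_upper_layer: Callable[[str], bytes]):
        self.repository: Dict[str, bytes] = {}
        self._fetch_from_upper_layer = fetch_from_upper_layer

    def get(self, content_id: str) -> Tuple[bytes, str]:
        """Return the content and the layer that served it."""
        if content_id in self.repository:
            return self.repository[content_id], "edge"
        # Not available locally: redirect the request to the higher layers.
        content = self._fetch_from_upper_layer(content_id)
        # Keeping a local copy is an added assumption, not stated in the article.
        self.repository[content_id] = content
        return content, "upper-layer"


# Usage with a stand-in for the higher layers.
server = EdgeContentServer(lambda cid: f"content for {cid}".encode("utf-8"))
first = server.get("lecture-01")
second = server.get("lecture-01")
```

Here the first request is served by the upper layer and the second by the edge repository, which is the case where the learner avoids the longer path.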

Experimental Environment

The architecture and m-learning execution framework are built around the m-learning users, users’ edge devices (Android mobile phones), the MEC servers, the edge cloud, and the university LMS. For the initial execution, the university mobile cloud architecture was used to deploy the proposed execution framework. The edge server was configured in line with the existing system and deployed following the ETSI MEC ISG technical standard for the preliminary execution test. The system’s services were customized considering the computing needs of m-learning application execution. Indeed, the service effectiveness depends on the architecture’s design strategies and the components used for the architecture.

The System Model

The authors deployed the following components near the requesting edge devices in the university campus for executing the m-learning use case.

Learner’s devices: The authors made it mandatory that authorized students use the same model Android phones with almost similar configurations for the experiment. The communication between the server and the devices occurred following the execution requirements, such as native and edge computing task execution.

The system and application deployment: For the experiment, the authors used the university LMS and implemented the required learning tools, including the video lecture application. Usually, this application is used for outdoor student assignments. During execution, the server keeps track of each device’s execution requirements and offers the required computing resources by drawing on the upper layers of the architecture hierarchy.

The server and its relevance: The server and its associated nodes participate in computing tasks when the server receives requests from the learner devices. The server and other participating nodes (e.g., mobile devices and gateways) perform execution at the edge and are regarded as edge nodes. The server executes requested tasks and leverages computing resources from the architecture’s upper layers considering the task execution requirements. For instance, the server leverages distributed computing resources for resource-demanding applications, for example, video content. It manages the execution tasks and sends the task execution result to the requesting device. Notably, the server’s effectiveness varies based on its configuration. For the current architecture, the authors used the server with the following configuration:

[Model: Dell OptiPlex 9010/7010; Processor: Intel i7 3770, 3.4 GHz; RAM: 16 GB; Hard disk: 1 TB; Graphics card: AMD Radeon HD 7470; Network card: Intel 82579 LM Gigabit; Wireless device: DW 1530 Wireless NW LAN; Operating system: Windows 10 Professional].

Edge-mini cloud: It is an on-premises computing resource environment close to user edge devices that shares the computing workload with the university mini cloud. If the application execution requirement is beyond the mini cloud, the execution tasks are sent to the public cloud. It thus establishes a computing platform that decreases latencies and optimizes bandwidth performance. The mini cloud draws on communication services such as AWS and supports the execution framework with local resources and dashboards. The architecture’s orchestration aspects control the execution loads and enhance performance during the use case implementation.

The Use Case Description and Deployment

Description: In this use case, the course teachers gave a real-time group assignment to sixteen architecture students from the university bachelor program. The students were asked to design seating arrangements for two auditoriums (with capacities of 300 and 400) in two buildings on the university campus, following the given specifications. They were advised to form four groups of four students each and were instructed to (i) use the suggested mobile device camera, (ii) use the LMS tool and the app, (iii) communicate through video conference, (iv) measure and share the design, and (v) submit the final design to the teachers within the stipulated time.

Deployment: The authors deployed the MEC on-premises server on the university’s existing mobile cloud infrastructure using a private deployment option (Brown, 2016). An m-learning application similar to MagicPlan (iOS/Android) was installed on the server and served to the participating students using multiple MEC applications (APIs), 4G, and small cell networks. The application execution framework consumes the radio network information service (RNIS) and a VPN and disseminates video streaming among the participants. Proper deployment of the MEC resources on the preconfigured infrastructure enhances the MEC services and the effectiveness of the envisioned architecture.

The Use Case Execution Environment

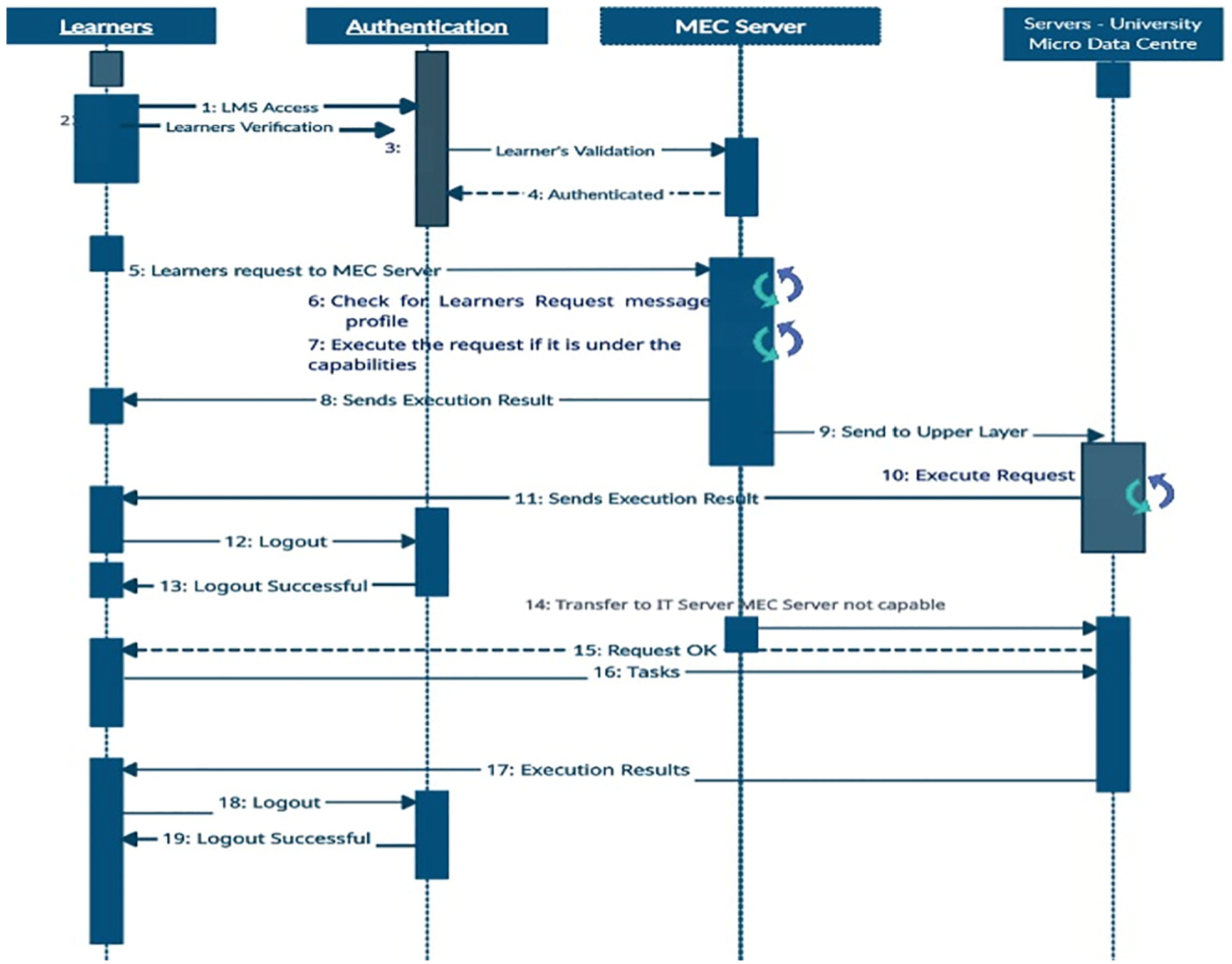

The successful execution of the use case was based on nineteen scenarios distributed across three phases: (1) learner authentication, (2) consuming MEC server services, and (3) leveraging distributed computing resources. Figure 3 illustrates the sequence of scenarios that contribute to the execution of the use case.

The 19 scenarios of the use case execution.

Phase 1: Learners access the MEC server through verification and validation. The learners’ data and computing needs are sent to the server using the request profile message.

Phase 2: The MEC server determines the computing needs requested by the learners’ devices and serves the requests according to the requirements in the request profile message. The server then sends the computed results to the requesting devices using the request profile messages. It repeats the entire process for every request received through a request profile message. However, if the computing needs of a received request are beyond the server’s capabilities, the server sends the request to the edge cloud (neighboring servers) or to the higher layers and leverages the distributed computing resources.

Phase 3: Requests with resource-demanding and computation-intensive needs are processed in this phase. Processing such a request consumes more resources and increases the average access time because of the request-result-received process (turnaround time).
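The three phases can be summarized as a small routing decision. The following Python sketch is illustrative only: the `RequestProfile` fields, the capacity thresholds, and the `Tier` names are assumptions introduced here and are not taken from the authors' implementation or from the ETSI MEC specifications.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    """Hypothetical execution tiers mirroring the phases described above."""
    MEC_SERVER = "mec_server"
    EDGE_CLOUD = "edge_cloud"
    UPPER_LAYER = "upper_layer"


@dataclass
class RequestProfile:
    """Hypothetical request profile message sent by a learner device."""
    device_id: str
    authenticated: bool
    gigacycles: float  # estimated compute demand of the task (assumed unit)


def route_request(profile, server_capacity, edge_cloud_capacity):
    """Return the tier that should execute the request.

    Phase 1: reject learners that fail verification.
    Phase 2: serve on the MEC server when the demand fits its capacity.
    Phase 3: otherwise escalate to the edge cloud, then to the upper layers.
    """
    if not profile.authenticated:
        raise PermissionError(f"device {profile.device_id} failed verification")
    if profile.gigacycles <= server_capacity:
        return Tier.MEC_SERVER
    if profile.gigacycles <= edge_cloud_capacity:
        return Tier.EDGE_CLOUD
    return Tier.UPPER_LAYER
```

In this sketch, capacity is reduced to a single number per tier; a real deployment would likely also consider current load, bandwidth, and content availability.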

The Use Case Execution Analysis

For the use case execution, the authors used CloudSim and EdgeCloudSim, after considering several other simulation tools (Ashouri et al., 2019; Bahwaireth et al., 2016). For the execution analysis and performance evaluation, the latency and response time metrics were chosen, following the ISO/IEC 25023 standard. Additionally, the authors executed the application in real time on the computing infrastructure with 16 students to validate the results.
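As a minimal sketch of how the two chosen metrics could be computed from per-request timestamps, consider the following Python example. The field names and the exact metric definitions (latency as time to the first byte of the result, response time as time to the complete result) are assumptions made for illustration; the article does not state the formulas the authors used.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class RequestTiming:
    """Hypothetical timestamps (in seconds) recorded for one request."""
    sent_at: float        # request leaves the student device
    first_byte_at: float  # first byte of the result arrives
    completed_at: float   # full result has been received


def average_latency(timings):
    """Mean time from sending a request to the first byte of its result."""
    return mean(t.first_byte_at - t.sent_at for t in timings)


def average_response_time(timings):
    """Mean time from sending a request to receiving the complete result."""
    return mean(t.completed_at - t.sent_at for t in timings)
```

Both functions raise `statistics.StatisticsError` on an empty input, which seems preferable to silently reporting a zero average.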

The execution MEC-network topology: The MEC-network topology was designed in three execution layers. Execution layer 1 consists of the MEC server, which was interlinked through several edge nodes (for example, a wireless router), with the bandwidth tuned between 80 and 100 Mbps. The server capacity was assumed to serve up to 200 migration requests while processing the execution requests received from the learners’ devices. Three assumptions were made for the execution: the CPU cycles were set according to the complexity of the computing operations, each task migrated to the server requires one gigacycle, and the execution time for each migration task is taken to be 0.4 seconds. The execution analysis was done across four MEC-network models: flat MEC, hierarchical MEC levels, mobile cloud, and the proposed architecture. The following are some sample requests (SR) generated by the student devices (SDs) to the edge server (ES):
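As a rough illustration of how the layer-1 assumptions above combine (this is a back-of-the-envelope sketch, not one of the authors' sample requests), the Python snippet below derives the processing rate implied by one gigacycle in 0.4 seconds and bounds the time for uploading and executing a single task over the 80 to 100 Mbps link. The payload size is a hypothetical input, and propagation delay, queueing, and result download are deliberately ignored.

```python
# Values stated in the text for execution layer 1.
CYCLES_PER_TASK_G = 1.0          # gigacycles per migration task
EXEC_TIME_S = 0.4                # seconds per migration task
BANDWIDTH_MBPS = (80.0, 100.0)   # tuned bandwidth range, megabits per second


def implied_rate_gcps():
    """Processing rate (gigacycles per second) implied by the assumptions."""
    return CYCLES_PER_TASK_G / EXEC_TIME_S


def task_time_bounds(payload_megabytes):
    """Return (best, worst) seconds for uploading and executing one task.

    payload_megabytes is a hypothetical request size; it is not given
    in the article.
    """
    megabits = payload_megabytes * 8.0
    low_bw, high_bw = BANDWIDTH_MBPS
    best = megabits / high_bw + EXEC_TIME_S
    worst = megabits / low_bw + EXEC_TIME_S
    return best, worst
```

For example, under these simplifications a 5 MB upload would take between roughly 0.8 and 0.9 seconds end to end, of which 0.4 seconds is execution time.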

The Architecture Latency Effectiveness

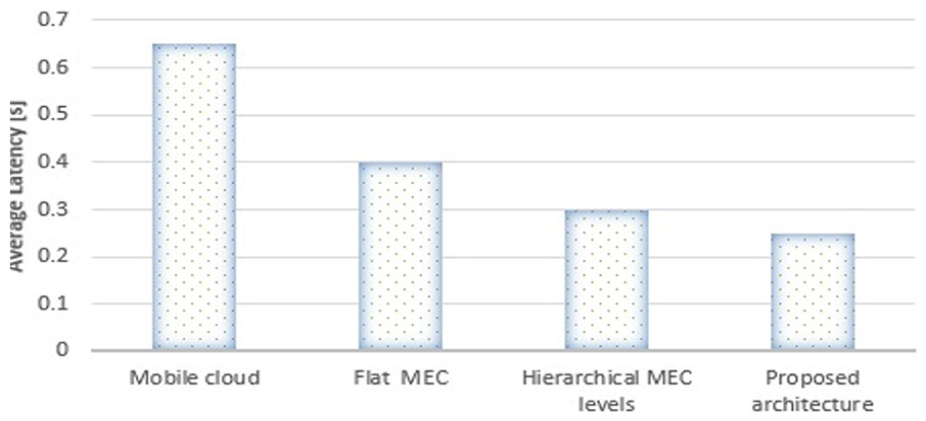

Use case performance versus latency: The use case execution performance was evaluated, and the average latency was measured in the EdgeCloudSim simulation environment across the four MEC frameworks. Figure 4 illustrates the average latency time (ALT) as a function of the SDs’ task execution requests. The proposed model outperformed the other models.

Latency performance versus use case execution (simulation).

The average latency was also evaluated by executing the m-learning application in a real-time mobile cloud environment. The execution was carried out on the university mobile cloud and the newly set up MEC-network framework with sixteen students. Figure 5 shows the average latency time of the four models. The mobile cloud consumed almost 0.7 seconds, whereas the proposed architecture showed less than 0.3 seconds. The m-learning application execution tasks were the same for all the architectures.

Latency performance versus real-time use case execution.

Flat MEC: it builds on several MEC servers that are logically connected at the same level to serve computing requests. For the current use case execution, the authors deployed one MEC server close to the data-generating devices (SDs). The architecture showed low latency, but the ALT increased with the growing number of execution requests. In that situation, requests move to the adjacent server to improve computational performance (not covered in this article).

Hierarchical MEC levels: it is an extended model of the flat MEC. Its latency performance is much better than that of the flat MEC because the hierarchical model has more computing resources and can perform more robust computation.

Mobile cloud: it is a generic mobile cloud architecture developed on mobile and cloud computing technical requirements. The cloud computing resources are comparatively far away, and consuming them is expensive in terms of latency. Therefore, the ALT is much higher than in the two MEC architectures described above.

Proposed architecture: this edge-centric cloud architecture offers ultra-low latency for the use case execution because it was developed on one MEC server. It addressed the latency issues and eliminated the round-trip time of the mobile cloud architecture. For computational effectiveness, resource-intensive execution tasks are sent to the architecture’s upper layers, where they leverage the resources of distributed computing paradigms. The distributed resources enhance computational abilities and create an effective computational environment in MEC.

The Architecture Response-Time Effectiveness

For response-time effectiveness, the authors evaluated the use case execution performance for content download: students and teachers shared and downloaded the video reports using the current architecture and the university LMS tool. Figure 6 illustrates the average response time (ART) against the content downloaded by the users and shows the response-time effectiveness during the use case execution.

Use case execution performance versus content download time.

Flat MEC: supports faster access time than the mobile cloud architecture. It builds on several MEC servers, which are logically connected. However, its ART increases with the growing number of computational requests or resource-intensive tasks; in such cases, the execution requests move to neighboring servers.

Hierarchical MEC levels: it receives requests from the lower level, that is, the flat MEC, and manages users’ execution tasks as long as the content transmission requests remain manageable. It faces limitations, and its performance degrades when the users’ content download requirements increase. Notably, the architecture’s response-time effectiveness depends on (i) the content download robustness, (ii) the users’ increasing content transmission requirements, and (iii) the computational capabilities of the MECs.

Mobile cloud: its response time varies based on the MCA framework and the potential of cloud infrastructure. For the current use case, the content download time performance was affected when the users’ content transmission increased.

Proposed architecture: the MEC server offers instant content download when the content is available because it has a functional caching capability. However, if the requested content is unavailable in its repository, the request moves to the next layer in the architecture hierarchy (see Figure 1). The current architecture is more effective than the other three because it offers instant response time for the requested content download.
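The caching behavior described for the proposed architecture can be sketched as a cache with a fallback to the next layer. The Python class below is a toy model under stated assumptions: the class name, the `fetch_from_upper_layer` callback, and the choice to store content locally after a miss are all hypothetical and are not confirmed by the article.

```python
class EdgeContentCache:
    """Toy model of an MEC content cache with fallback to an upper layer."""

    def __init__(self, fetch_from_upper_layer):
        # fetch_from_upper_layer: callable taking a content id and returning
        # the content from the next layer of the hierarchy (assumed interface).
        self._store = {}
        self._fetch = fetch_from_upper_layer
        self.hits = 0
        self.misses = 0

    def get(self, content_id):
        """Serve from the local repository, or fetch from the next layer."""
        if content_id in self._store:
            self.hits += 1
            return self._store[content_id]
        self.misses += 1
        content = self._fetch(content_id)
        # Assumption: fetched content is kept locally for later requests.
        self._store[content_id] = content
        return content
```

The `hits` and `misses` counters make it easy to relate the cache hit ratio to the observed average response time when experimenting with such a model.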

Result and Discussion

The presented study aims to determine how cloud-based m-learning frameworks integrate with distributed computing paradigms (RQ1). It also investigates the emerging computing architectures’ impact on the edge-centric m-learning frameworks regarding latency and response time (RQ2). Further, this study designed a novel edge-centric cloud architecture to improve m-learning effectiveness during tasks’ execution.

Cloud computing is a resource-rich environment that facilitates m-learning frameworks to enhance performance (M. Wang et al., 2014) by leveraging cloud resources. The m-learning execution framework is developed on an MCA and offers several benefits, such as rich learning resources, independent learning, content sharing, and low cost for its users (Alghabban et al., 2016). However, such frameworks experience high latency, long response time, and security issues while consuming cloud resources. High latency and response time are the most critical issues that affect m-learning system performance and user acceptance. The emergence of DCPs and their potential characteristics are very effective in addressing such issues (Collier, 2018; Pinjari et al., 2018; Raman, 2019). For instance, many educational institutions have already started implementing DCPs, such as edge and fog computing, for their educational computational needs (Raman, 2019). This study looks at the potential characteristics of DCPs, to enhance m-learning performance and achieve latency and response time effectiveness while executing m-learning applications (Sabella et al., 2016). The current study determines that edge computing is significant in a mobile environment and improves m-learning effectiveness.

The proposed decentralized architecture uses a MEC server and small power cell stations. It leverages the institution’s micro data center and the public cloud while executing resource-intensive m-learning applications. It lowers the demand for radio access bandwidth, improves response time, and eliminates latency issues. It minimizes network dependency and enables a secure environment for users across educational disciplines. It offers ultra-low latency and localized computing, minimizes data travel, bypasses the primary data center, copes with network traffic, positively influences users’ acceptance, and shows performance effectiveness.

Implementation Result Analysis

The architecture’s performance was measured and analyzed for latency and response-time effectiveness, as shown in Table 2. Its latency and response time outperformed those of the other architectures while computing learners’ requests. It assures quality performance and supports quality of experience (QoE) in interactive learning, personalized learning, and the given computation-intensive assignment. In addition, the teachers interactively control students’ activities using the LMS, which is incorporated with the orchestration of academic solutions (Han & Shin, 2016). The execution results encourage the authors to execute more computation-intensive use cases, such as intelligent video analytics and augmented reality applications. Energy efficiency performance is included in the table but was not considered in this paper.

Execution Result Analysis.

Note. Architecture performance in metrics.

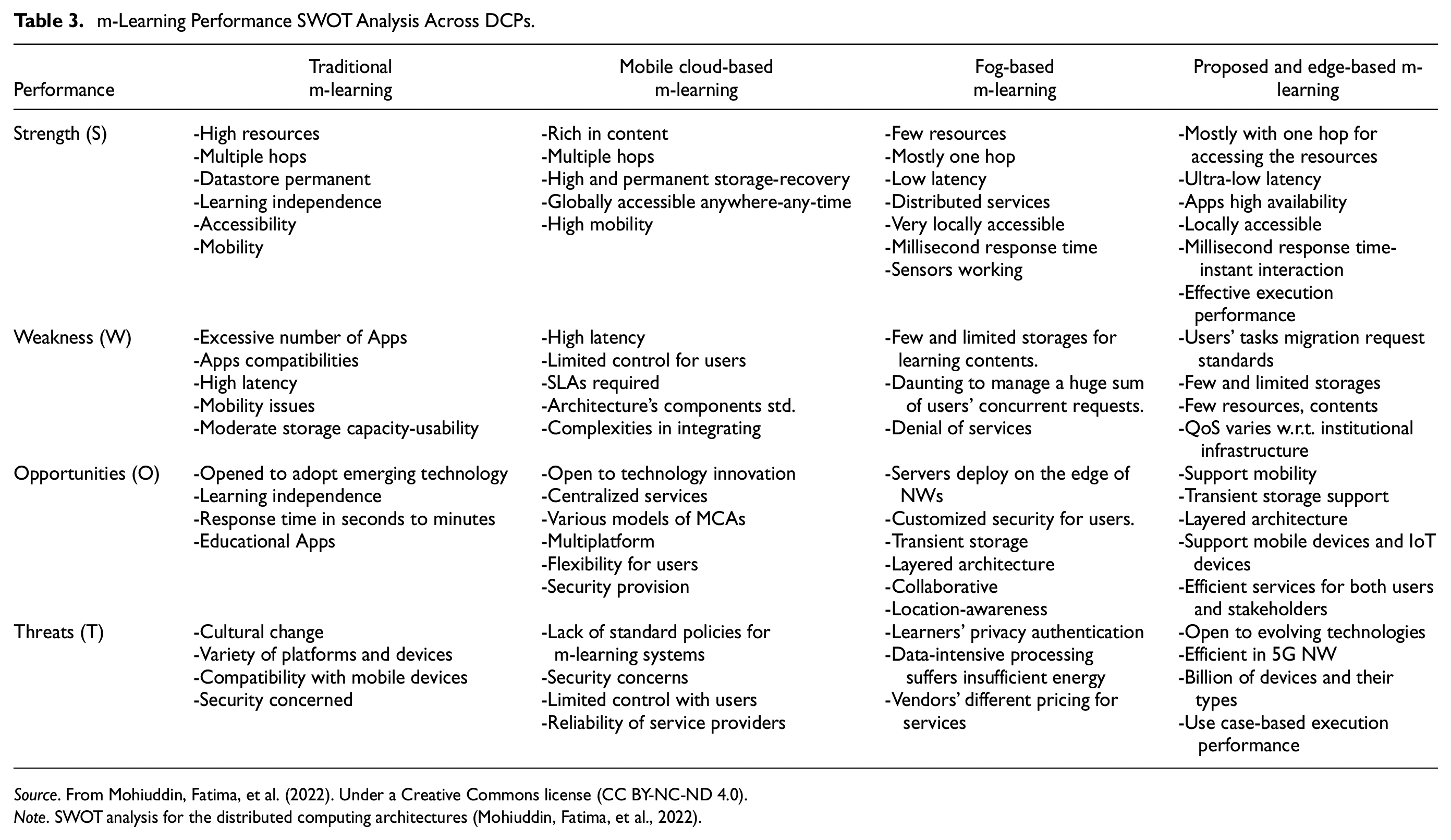

A SWOT Analysis for m-Learning Effectiveness

For m-learning users, it is important to know the essential characteristics of current m-learning systems. Users gain confidence when they learn about the effectiveness of the system they are using, and an effective system gives them a platform for better teaching and learning performance. Therefore, the authors carried out a SWOT analysis (see Table 3) of m-learning systems to help users and readers understand the potential of m-learning systems based on DCPs. The table shows that the current architecture and edge-based architectures show consistent performance.

m-Learning Performance SWOT Analysis Across DCPs.

Source. From Mohiuddin, Fatima, et al. (2022). Under a Creative Commons license (CC BY-NC-ND 4.0).

Note. SWOT analysis for the distributed computing architectures (Mohiuddin, Fatima, et al., 2022).

Architecture’s Novelty and Effectiveness

The novelty of the architecture lies in its edge-centric design strategies: it deploys one MEC server and leverages DCPs’ characteristics, whereas the flat MEC builds on several servers and takes more time to respond to the user (Lee & Lee, 2018). It also skips the search time that the flat MEC spends when executing users’ requirements. It offers the following advantages for its users, which support its performance effectiveness.

■ It overcomes mobile cloud-based m-learning issues, such as multiple hops, round trip time, security, bandwidth consumption (Islam et al., 2020; Rimale et al., 2016), localized computation, and QoE.

■ It enables a single integrated platform, facilitates interactive communications, and offers practical tools for accessing learning content instantly.

■ It is effective for video interaction, dynamically scalable, increases content repository utilization, and provides meaningful learning analytics.

■ It deploys on the institutional LMS, integrates with the existing IT infrastructure, follows common procedures, and is consistent with technical standards.

■ It is compatible with the university LMS applications and helpful in generating learning performance analytical reports for future reference.

■ It is cost-effective, minimizes security threats to confidential data, improves privacy by processing data locally, and is effective for overall system performance.

The Architecture Constraints and the Future Research

The architecture performance is efficient while executing the use case application and validates the study’s objectives. The execution framework confirms the scientific scrutiny and enables a platform for executing resource-demanding use cases. However, the architecture has faced certain constraints throughout the process.

The architecture was developed on the university mobile cloud infrastructure, and the use case was implemented using the LMS tools. The architecture consumes the computing power of the MEC server and leverages resources from the layers of the architecture’s hierarchy. It faced several constraints, including the location of the MEC at the edge and the integration of the architecture with the university IT infrastructure. Further, execution cohesiveness was required between the MEC server and the ETSI NFV environment. In addition, radio access bandwidth, connectivity, latency estimation, the overhead of data portability, failures of local network collectors, and the increasing number of learners during the execution remain potential constraints. Readers should also be aware of the implications associated with the architecture: performance efficiency was measured in terms of latency and response time by executing the use case. The performance efficiency depends on the architecture design and varies with the effective management of the small cells and robust network connectivity.

For future work, an approach should consider the round trip time of the current architecture and leverage different characteristics of DCPs (e.g., an edge data center for users’ learning analytics) for computationally complex use cases. The same use case could be executed with an enhanced server configuration or with more MEC servers deployed at the first layer, as in the flat MEC model. Further options are to use mobile orchestration, adopt other IoT devices (e.g., sensors), and efficiently manage cell zooming to balance users’ loads. A mobility model, interface-aware protocols, and an extended architecture base that includes an edge orchestrator, portable workloads, and a multi-tier model are a few significant aspects that could be more effective for optimizing the learning management system’s performance.

Conclusion

This research develops a novel edge-centric cloud architecture based on the ETSI MEC ISG standards and focuses on leveraging DCP resources to optimize m-learning performance across educational disciplines. The MEC server integrates with the university’s existing mobile cloud architecture, establishes coherence with the university LMS, and executes the use case using an iOS/Android application similar to MagicPlan, available on Google Apps. Students took part through video conferencing while executing the use case and completed the formative assignment using their mobile device cameras. They submitted the final reports to the teacher and shared them with other students during the process. The results showed that the architecture effectively eliminated latency issues and improved the response-time efficiency of downloading video content. It computes data locally and scales the computing resources according to the requirements of the users’ execution tasks. However, the authors evaluated the architecture’s performance in terms of users’ request time to the server and found that performance efficiency varies with the quality of users’ connectivity to the server and the proper management of the small cells. Integrating different interfaces and implementing the mobility model, including the edge orchestrator and the multi-tier model, are promising directions for future work.

Footnotes

Acknowledgements

The authors would like to express their gratitude to King Khalid University, Saudi Arabia for providing administrative and technical support.

Author Contributions

Dr. Khalid Mohiuddin: Conceptualization, writing original draft, funding acquisition; Dr. Huda Fatima: Investigation, resources; Dr. Mohiuddin Ali Khan: Supervision and review of the paper; Dr. Mohammad Abdul Khaleel: Software and validation; Mrs. Zeenat Begum: Investigation, project coordination; Mr. Sajid Ali Khan: Project administration; Mr. Omer Bin Hussain (added): Methodology (designed revised methodology), review, and editing; Mr. Mohammed Aminul Islam (removed): He helped complete the university scientific deanship application forms required at the start of the current project and made no contribution to the current paper.

Declaration of Conflicting Interests

The author(s) declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: The authors of the manuscript certify that they have NO affiliations with or involvement in any organization or entity with any financial interest (such as honoraria; educational grants; participation in speakers’ bureaus; membership, employment, consultancies, stock ownership, or other equity interest; and expert testimony or patent-licensing arrangements), or non-financial interest (such as personal or professional relationships, affiliations, knowledge, or beliefs) in the subject matter or materials discussed in this manuscript.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The authors extend their appreciation to the Deanship of Scientific Research at King Khalid University for funding this work through Small Groups Project under grant number RGP.1/26/43.

Ethics Statement

Not applicable.