Abstract

Learning approaches are regarded as one of the most important aspects of academic practice. By identifying students’ learning approaches, institutions can devise appropriate instructional strategies. Many models have been used to explore learning approaches and styles among students, but mostly in the developed world, and the literature is even scarcer in the Arab region. This study aimed to assess the construct validity and reliability of the revised Biggs Study Process Questionnaire-2-factor (R-SPQ-2F) in both English and Arabic. The study also explored the learning approaches among students at King Abdulaziz University, Jeddah, Kingdom of Saudi Arabia. This cross-sectional study comprised 556 participants and was carried out from October 15th, 2020, to January 15th, 2021. The complete scale and its subscales showed good reliability, with Cronbach’s α ranging from .881 to .925. Confirmatory factor analysis (CFA) showed no notable discriminant or convergent validity concerns, and all fit indices were well above threshold levels for both the English and Arabic versions. A significantly higher median deep learning score was observed in males and in students with a GPA ≥4.0 (p = .018 and .012, respectively).

Introduction

Different approaches to learning and the character traits of students require different but appropriate instructional strategies and assessment plans. The problem becomes even more difficult when students from different educational backgrounds enter the university. This complex transition may lead to discrepancies between the learning environment, students’ expectations, and existing instructional and assessment practices (Kassab et al., 2006; Reitmanova, 2011; Shukr et al., 2013). More importantly, concern about the teaching and learning environment and about curriculum changes has grown in recent decades as the number of colleges, universities, and subsequently students has increased. Therefore, exploring the learning approaches among university students can be considered a continuing obligation of relevant stakeholders in order to address the dynamic nature of learning environments.

Many models and tools have been used to explore learning approaches and styles among students, but mostly in the developed world (Coffield et al., 2004; Romanelli et al., 2009). The long-established work of Marton and Säljö (1976) categorizes students’ learning into “surface” and “deep” approaches. The surface approach is considered mostly assessment- or exam-driven. It usually requires more supervision and organization, and students intend to reproduce knowledge while giving little priority to the purpose of the learning materials or the links between them (Yong, 2010). In contrast, the deep approach is generally built on students’ intrinsic motivation and active interest. Students using it tend to follow the course materials objectively, carefully, and critically, making an effort to look for evidence and its application (Munshi et al., 2012).

Surface-based and deep-based learning approaches were explored further by Biggs et al. (2001). Many studies have measured learning approaches among students using different tools (Chaudhary et al., 2015; Rehman et al., 2013; Shukr et al., 2013). For exploring surface and deep learning approaches, the revised Biggs two-factor Study Process Questionnaire (R-SPQ-2F) is one of the major outcomes of Biggs et al. (2001). This tool has been used in different parts of the world to explore learning approaches in different settings (Freiberg Hoffmann & Fernández Liporace, 2016; Martinelli & Raykov, 2017; Shaik et al., 2017). In addition, the literature has pointed out that the R-SPQ-2F has cross-cultural sensitivity (Stes et al., 2013).

This study aimed to assess the reliability and validity of the R-SPQ-2F tool and to explore the learning approaches among undergraduate students in Jeddah, Kingdom of Saudi Arabia. Recently, there has been a continuous increase in new colleges along with more focus on education reforms across fields. The study findings are important because few published studies have probed learning approaches among students in the region, particularly in Saudi Arabia. This study also aimed to assess and compare the original English version (Biggs et al., 2001) and the Arabic version (Munshi et al., 2012) of the R-SPQ-2F. To our knowledge, it is the first study to use both the English and Arabic versions of the R-SPQ-2F together.

Methods

A cross-sectional study with a sample size of 556 was carried out from October 15th, 2020, to January 15th, 2021, at King Abdulaziz University, Jeddah, Kingdom of Saudi Arabia. Sample size adequacy was considered in terms of the ratio (N:q) of the overall sample size to the number of free parameter estimates (latent variable, indicator, variance, covariance, or any regression estimates) included in the model. Schreiber et al. (2006) suggest that the consensus for a sufficient N:q ratio is 10:1.
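The N:q rule can be illustrated with a short calculation. The sketch below is not taken from the study: it assumes a simple two-factor CFA with 20 indicators, correlated factors, and marker-variable identification (one loading per factor fixed to 1), and the function names are hypothetical. The models actually fitted, including those fitted separately by language on subsamples, may have a different number of free parameters.

```python
def free_parameters_cfa(n_items: int, n_factors: int = 2) -> int:
    """Count free parameters of a simple-structure CFA with correlated factors.

    Assumption: marker-variable identification, i.e. one loading per
    factor is fixed to 1 and is therefore not a free parameter.
    """
    free_loadings = n_items - n_factors
    residual_variances = n_items            # one per indicator
    factor_variances = n_factors
    factor_covariances = n_factors * (n_factors - 1) // 2
    return (free_loadings + residual_variances
            + factor_variances + factor_covariances)


def n_to_q_ratio(sample_size: int, free_params: int) -> float:
    """Ratio of sample size (N) to number of free parameters (q)."""
    return sample_size / free_params


q = free_parameters_cfa(n_items=20)   # 18 + 20 + 2 + 1 = 41
ratio = n_to_q_ratio(556, q)          # overall sample size reported above
print(q, round(ratio, 1))             # 41 13.6
```

Under these assumptions the overall sample gives roughly 13.6 participants per free parameter, which is above the 10:1 guideline.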

In the present study, data were collected using the R-SPQ-2F in its original English version (Biggs et al., 2001) and its Arabic version (Munshi et al., 2012). All students registered in the study institute were eligible for the distribution of questionnaires. The R-SPQ-2F is a validated and pretested tool with 20 items that yield two main scale scores, deep approach (DA) and surface approach (SA), and four subscale scores (deep motive, deep strategy, surface motive, and surface strategy); all scores were calculated according to Biggs et al. (2001). Responses to each item were given on a 5-point Likert scale and scored from 1 to 5. The Arabic version (Munshi et al., 2012) used in this study had been produced through an established forward–backward translation procedure and later pilot-tested independently for problems in acceptance and comprehension of the questionnaire content or phrasing.
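The scoring described above can be sketched as follows. The item-to-subscale key shown here is our understanding of the key published by Biggs et al. (2001) and should be verified against the original before use; the function name and the example responses are hypothetical.

```python
# Item numbers (1-based) per subscale; assumed to follow Biggs et al. (2001).
SUBSCALE_ITEMS = {
    "deep_motive": [1, 5, 9, 13, 17],
    "deep_strategy": [2, 6, 10, 14, 18],
    "surface_motive": [3, 7, 11, 15, 19],
    "surface_strategy": [4, 8, 12, 16, 20],
}


def score_rspq2f(responses):
    """Return subscale and main scale scores for one respondent.

    responses: list of 20 integers in 1..5, item 1 first.
    """
    if len(responses) != 20 or any(r not in (1, 2, 3, 4, 5) for r in responses):
        raise ValueError("expected 20 responses, each scored 1 to 5")
    scores = {
        name: sum(responses[i - 1] for i in items)
        for name, items in SUBSCALE_ITEMS.items()
    }
    # Each main scale is the sum of its motive and strategy subscales.
    scores["deep_approach"] = scores["deep_motive"] + scores["deep_strategy"]
    scores["surface_approach"] = (
        scores["surface_motive"] + scores["surface_strategy"]
    )
    return scores


# Hypothetical respondent answering 3 to every item.
example = score_rspq2f([3] * 20)
print(example["deep_approach"], example["surface_approach"])  # 30 30
```

With five items per subscale scored 1 to 5, each subscale ranges from 5 to 25 and each main scale from 10 to 50.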

Educationists and experts within and outside the study institute were consulted on the face and content validity of the tool. Furthermore, for this study, both the English and Arabic versions were pre-tested on 30 students at another institute in Jeddah with settings comparable to the study institute, to observe how the tool performed in the local context and to identify any required modifications. No issues were identified and no changes were required. The survey was created online and, after the required approvals, shared with all students during their classes with the support of tutors. Students were asked to choose either the English or the Arabic version according to their preference.

The study objectives and relevant information were shared with the students. Consent was acquired from all participants and they were also informed about their voluntary participation and the confidentiality of the data. Only completed questionnaires were included in the analysis.

IBM SPSS Statistics version 25 and IBM AMOS version 20 were used to analyze the data. The required descriptive and inferential statistics were computed. The main scale scores and subscale scores were calculated according to Biggs et al. (2001). Reliability was assessed by Cronbach’s alpha and validity by confirmatory factor analysis (CFA). CFA models were fitted to determine whether the latent variables (deep and surface) adequately describe the data, according to the guidelines given in Figure 1. Maximum likelihood estimation was performed to determine the standard errors for the parameter estimates. The significance of the CFA model was evaluated using the chi-square test, and the adequacy of the model was assessed by fit indices. Lastly, the discriminant and convergent validity of the CFA model were examined. To detect differential items, differential item functioning (DIF) analysis was performed using the R package “difNLR” (Hladká & Martinková, 2020). The Wilcoxon rank-sum test and the Kruskal-Wallis H test were used to compare scores of deep and surface learning approaches.

Guidelines to evaluate the CFA model.

Results

The mean age of the study participants was 22 years, with a range from 18 to 30 years, including around 56% males and 47% females, as shown in Table 1. Participants were further categorized into Medical, Science, and Other (all faculties other than the Medicine and Science faculties).

General Characteristics of Study Sample.

Internal consistency of the complete scale was assessed by McDonald’s ω (Hayes & Coutts, 2020) and that of its subscales by Cronbach’s α. The Cronbach’s alpha coefficient was evaluated using the guidelines suggested by George and Mallery (2018), where >.9 is excellent, >.8 good, >.7 acceptable, >.6 questionable, >.5 poor, and ≤.5 unacceptable. The overall scale and all its subscales had coefficients above .80 for both the Arabic and English versions, indicating good reliability of the scale. Details of the reliability analyses are given in Table 2.

Internal Consistency of Biggs Study Process Questionnaire 2-Factor (SPQ-2F).
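For reference, Cronbach’s α and the George and Mallery (2018) labels quoted above can be computed as in the minimal sketch below. It uses only the Python standard library, the function names are hypothetical, and the toy data are invented purely to exercise the formula; the study itself used SPSS.

```python
from statistics import variance


def cronbach_alpha(rows):
    """Cronbach's alpha for a respondents-by-items table.

    rows: list of respondents, each a list of k item scores (k >= 2).
    alpha = k/(k-1) * (1 - sum(item variances) / variance(total score))
    """
    k = len(rows[0])
    item_vars = [variance([row[j] for row in rows]) for j in range(k)]
    total_var = variance([sum(row) for row in rows])
    return k / (k - 1) * (1 - sum(item_vars) / total_var)


def george_mallery_label(alpha):
    """Verbal label using the cut-offs quoted in the text."""
    if alpha > 0.9:
        return "excellent"
    if alpha > 0.8:
        return "good"
    if alpha > 0.7:
        return "acceptable"
    if alpha > 0.6:
        return "questionable"
    if alpha > 0.5:
        return "poor"
    return "unacceptable"


# Invented toy data: three items that agree perfectly, so alpha is 1.
toy = [[1, 1, 1], [2, 2, 2], [3, 3, 3]]
print(round(cronbach_alpha(toy), 3))

# The two ends of the range reported in the abstract.
print(george_mallery_label(0.925), george_mallery_label(0.881))
```

Applied to the range reported in this study, the labels are "excellent" for .925 and "good" for .881.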

The results of the CFA model are presented in Table 3. All path coefficients are highly significant and well above the threshold of .5, except for items 16 and 20 in the Arabic version and item 16 in the English version, which showed relatively lower loadings.

Unstandardized, Standardized Loadings, Significance of Each Parameter in the CFA Model and Differential Item Analysis.

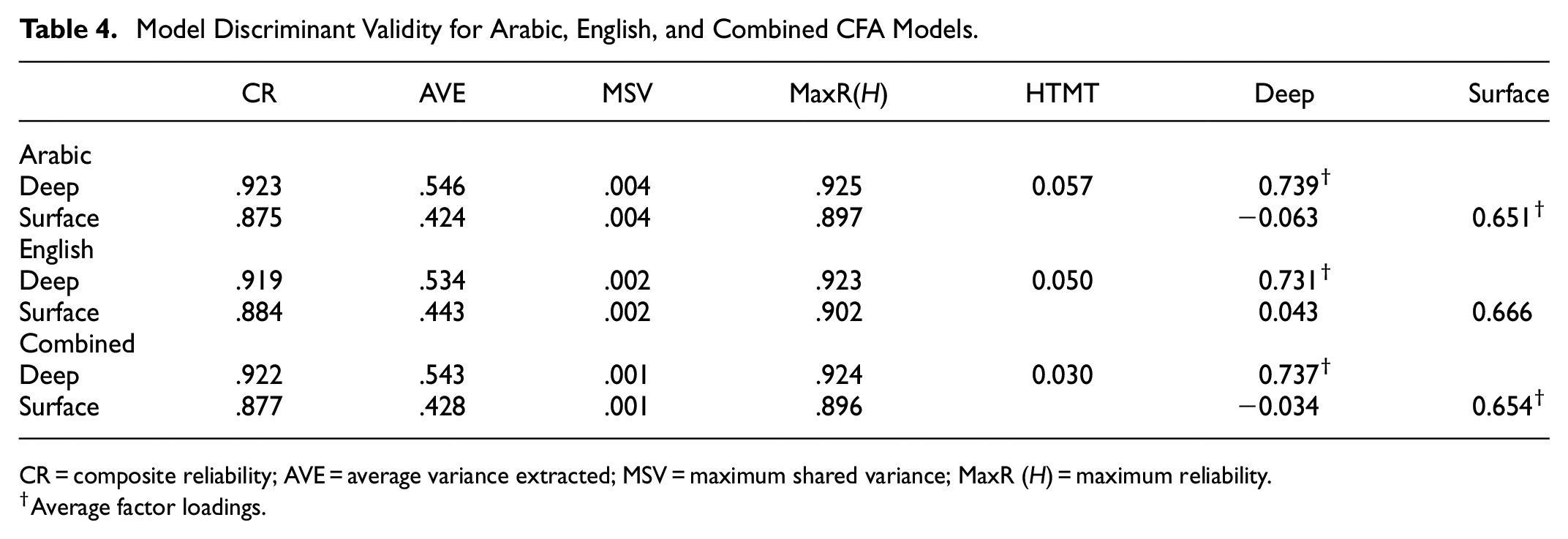

Discriminant and convergent validity results are presented in Table 4. All CR values are above .7 and CR > AVE, which satisfies the conditions for convergent validity; however, AVE for the surface construct is <.5, so removing item 16 from the construct may improve AVE. Malhotra and Dash (2019) have suggested that AVE is often too strict and that reliability can be established through CR alone. Discriminant validity was tested by comparing the Average Variance Extracted (AVE) with the Maximum Shared Variance (MSV); all AVEs exceeded the corresponding MSVs. The heterotrait-monotrait ratio of correlations (HTMT) was used as a second criterion to confirm discriminant validity. All HTMT ratios were below the 0.85 threshold of Henseler et al. (2015), so no discriminant validity concerns were observed. Differential item analysis revealed no significant differences between the English and Arabic versions of the scale items (p > .05).

Model Discriminant Validity for Arabic, English, and Combined CFA Models.

CR = composite reliability; AVE = average variance extracted; MSV = maximum shared variance; MaxR (H) = maximum reliability.

Average factor loadings.
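The convergent and discriminant validity checks above rest on two quantities computed from standardized loadings: composite reliability, CR = (Σλ)² / ((Σλ)² + Σ(1 − λ²)), and average variance extracted, AVE = Σλ² / n. The sketch below illustrates them with standard-library Python. The loadings and the MSV value are hypothetical and are not the study’s estimates; they were chosen only to show how a single weak item can pull AVE below .5 while CR stays above .7.

```python
def composite_reliability(loadings):
    """CR from standardized loadings, assuming uncorrelated errors."""
    total = sum(loadings)
    error = sum(1 - lam ** 2 for lam in loadings)
    return total ** 2 / (total ** 2 + error)


def average_variance_extracted(loadings):
    """AVE: mean of the squared standardized loadings."""
    return sum(lam ** 2 for lam in loadings) / len(loadings)


def validity_checks(loadings, msv):
    """Criteria named in the text, returned as booleans."""
    cr = composite_reliability(loadings)
    ave = average_variance_extracted(loadings)
    return {
        "CR > .7": cr > 0.7,
        "CR > AVE": cr > ave,
        "AVE > .5": ave > 0.5,
        "AVE > MSV": ave > msv,
    }


# Hypothetical loadings for one construct; the last item loads weakly.
surface = [0.75, 0.72, 0.70, 0.68, 0.35]
ave_all = average_variance_extracted(surface)
ave_trimmed = average_variance_extracted(surface[:-1])

print(round(composite_reliability(surface), 3))  # CR with all items
print(round(ave_all, 3), round(ave_trimmed, 3))  # AVE before/after trimming
print(validity_checks(surface, msv=0.30))        # msv is hypothetical too
```

In this invented example, dropping the weak item raises AVE from below .5 to above .5, which is the mechanism behind the suggestion that removing item 16 may improve AVE.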

Figure 2 shows the CFA model with standardized estimates and the model fit indices (CFI, TLI, RMSEA, CMIN/DF, and PClose) along with their criteria. An AMOS plugin was used to calculate all validity measures (Gaskin, 2016). All fit indices confirm the goodness of fit of the factor structure, as suggested by Hooper et al. (2008).

Node diagrams for revised Biggs learning approaches CFA model (Arabic, English, and Combined).

Table 5 compares deep and surface learning approaches by study variables (age, gender, faculty of study, and GPA). No significant difference was observed in students’ learning approaches in terms of age or faculty of study. However, a significantly higher median deep learning score was observed in males (p = .018) with a low effect size (Cohen’s ds = 0.2), and a significantly higher median deep learning score was observed among students with a GPA ≥4.0 (p = .012), also with a low effect size (Cohen’s ds = 0.21).

Comparison of Deep and Surface Learning Approaches by Study Variables.

Mann-Whitney test.

χ2 Kruskal-Wallis.

Cohen’s ds.

η2.
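The two-group comparisons in Table 5 combine a rank-based test statistic with a standardized mean difference. A minimal sketch of both quantities follows, using only the Python standard library. The function names and scores are hypothetical, and no p-value is computed; an established statistics package (for example `scipy.stats.mannwhitneyu` and `scipy.stats.kruskal`) would be the usual choice for inference.

```python
from math import sqrt
from statistics import mean, variance


def mann_whitney_u(x, y):
    """U statistic for group x: number of (x, y) pairs with x > y,
    counting ties as one half."""
    return sum(
        1.0 if a > b else 0.5 if a == b else 0.0
        for a in x
        for b in y
    )


def cohens_ds(x, y):
    """Cohen's d_s: mean difference divided by the pooled sample SD."""
    n1, n2 = len(x), len(y)
    pooled_var = (
        (n1 - 1) * variance(x) + (n2 - 1) * variance(y)
    ) / (n1 + n2 - 2)
    return (mean(x) - mean(y)) / sqrt(pooled_var)


# Hypothetical deep approach scores for two small groups.
group_a = [34, 38, 31, 40, 36]
group_b = [30, 33, 29, 37, 32]

u = mann_whitney_u(group_a, group_b)
print(u, u / (len(group_a) * len(group_b)))  # U and share of pairs won by A
print(round(cohens_ds(group_a, group_b), 2))
```

Dividing U by the number of pairs gives the proportion of cross-group pairs in which the first group scores higher, a rank-based effect size that some readers find easier to interpret alongside Cohen’s ds.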

Discussion

Educational settings have long been known to focus more on curricula, teaching, and assessment-related activities. By comparison, the effect of these efforts on students’ learning has been explored far less. In addition, most of the relevant literature is from the developed and Western world, with relatively fewer contributions from other parts of the world. This study aimed to determine and compare the learning approaches among undergraduate students at KAU, Jeddah, KSA, using the R-SPQ-2F questionnaire, and to assess the reliability and validity of the tool. Many studies have explored either the English version (Biggs et al., 2001) or translated versions such as Arabic (Munshi et al., 2012), Malay (Mokhtar et al., 2010), Dutch (Stes et al., 2013), Spanish (Zárate-Santana et al., 2021), and Norwegian (Zakariya, 2019). This study differs in that it assessed learning approaches using both the English and the Arabic translated versions among students in similar settings.

In our study, males had significantly higher deep learning scores than females, which is similar to other studies on students in Saudi Arabia and Ghana (Mogre & Amalba, 2014; Shaik et al., 2017). However, a few studies, such as one in Turkey, have reported higher mean deep learning scores in females than in males (Tetik et al., 2009). This shows that learning approaches vary across regions of the world, which may be due to cultural differences, distinct teaching strategies, or other factors that need to be explored further. A decrease in the use of the surface approach across the years of study was observed in our study. This was also seen in a study in the United Arab Emirates, where it was suggested to reflect the use of surface learning in high school and its decline as medical school progresses (Mirghani et al., 2014). The use of the surface approach by students is discouraged because it imparts only a partial understanding of the subject matter, resulting in greater test anxiety and poorer academic performance (Beattie et al., 1997; Owen, 2016).

The mean score for deep learning decreased somewhat over the years of study, which was also observed in Turkey (Tetik et al., 2009) and Nepal (Shah et al., 2016). The median test also shows decreasing deep learning scores across the years of study. This may be due to the increasingly vast curriculum as the years progress, which leads students to opt for a surface approach instead of a deep approach as they pass through subsequent years. In contrast, some studies found constant mean scores for deep and surface approaches across years of study (Cebeci et al., 2013; Mirghani et al., 2014). Furthermore, a study on medical students documented that deep learning was used more in the clinical years of study than in the pre-clinical years (Mehboob Ali & Rizvi, 2019), while another study found more deep learning among postgraduates than undergraduates (Sandover et al., 2015). Nevertheless, students in all years of study used a deep approach more than a surface approach, as found in many other studies on students in different settings (Mehboob Ali & Rizvi, 2019; Mirghani et al., 2014; Shah et al., 2016; Tetik et al., 2009).

Moreover, the mean score of deep learning was slightly lower in medical students than in non-medical students in our study. This was also shown in another study, in which law students scored higher on deep learning than medical students (Cebeci et al., 2013). The reason may be the enormous syllabus in medical school, which results in students spending less time on each aspect of the subject material and not being able to use the deep learning approach. This study also found that students with a GPA of 4 or higher had a higher deep learning score than students with a GPA lower than 4. Studies on medical students in Saudi Arabia (Shaik et al., 2017) and Pakistan (Akram et al., 2018) have also shown greater deep learning mean scores associated with higher GPAs. This may be because students who use deep learning end up with a greater understanding of the subject matter and thus perform better in later assessments. Deep learning is important for critical thinking and reasoning, which are paramount in the clinical setting; thus, emphasis should be given to encouraging deep learning in medical students, especially as they progress to the clinical years. The COVID-19 pandemic and the shift to digital education have raised concerns among educators about the effects of digitalization on students’ deep learning (Sollied Madsen et al., 2021). Thus, at this moment in time, strategies for modulating students’ learning approaches should be devised, with greater emphasis and closer monitoring.

The internal consistency of the subscales was measured by Cronbach’s α, with both the English and Arabic instruments scoring excellent or good according to the guidelines given by George and Mallery (2018). The Cronbach’s α of the Arabic scale was .925 for the deep approach and .880 for the surface approach, which is consistent with another study that assessed the reliability of the Arabic scale and reported Cronbach’s α values of .90 and .93 for the deep and surface approaches, respectively. The reliability of the subscales in a few other languages is as follows: .79 and .66 in Malay (Mokhtar et al., 2010), .84 and .81 in Dutch (Stes et al., 2013), and .77 and .77 in Spanish (Zárate-Santana et al., 2021). This comparison suggests that the reliability of the Arabic version is slightly better than that of some other translated versions, although further studies using the tool are needed to confirm this.

The Cronbach’s α values of the English scale, .921 and .883 for deep and surface learning respectively, compare favorably with other studies that used the English scale, which reported, for the deep and surface approaches respectively, .71 and .72 in Nepal (Shah et al., 2016), .737 and .746 in Saudi Arabia (Shaik et al., 2017), .79 and .72 in Pakistan (Malik et al., 2019), .80 and .76 in Ghana (Mogre & Amalba, 2014), and .79 and .66 in Turkey (Tetik et al., 2009).

All the goodness-of-fit indices (GFIs) for the Arabic, English, and combined scales were excellent in this study; only one item (item 16) had a loading below 0.4, which warrants further investigation in future studies. A study using the Dutch version showed that the model did not fit their data well (Stes et al., 2013). Another study of the English version among medical students in Pakistan showed that the GFIs were acceptable for their data (Malik et al., 2019). The GFIs in our study were thus better than those reported in these studies. The CFI for the Arabic version was 0.970, which was excellent and indicated a better fit than other language versions of the R-SPQ-2F: the Dutch version had a CFI of 0.80 (Stes et al., 2013) and the Spanish version a CFI of 0.88 (Zárate-Santana et al., 2021). Our CFI for the English version was 0.948, which was better than that of the English version employed in Pakistan, which had a CFI of 0.858 (Malik et al., 2019). These findings suggest that the R-SPQ-2F in English and Arabic is suitable for determining learning approaches among Saudi students.

Although the study was conducted in one of the biggest and oldest universities in Saudi Arabia, the limited generalizability of its findings to all students in Saudi Arabia should be considered a limitation. Further studies in other universities with similar and/or different settings need to be conducted, along with the exploration of qualitative aspects. More representation from other emerging subjects and specialities would also be worth exploring in the future.

Conclusion

This study identified the learning approaches among undergraduate students studying at different faculties in Saudi Arabia. It also observed the differences in gender and academic achievement (GPA). The R-SPQ-2F tool has shown appreciable validity and reliability among Saudi students and thus further studies using the Arabic or English version should be conducted in similar settings to continuously assess the status. Further studies will give researchers and policymakers more insight into the learning approaches of students in Saudi Arabia and help improve education.

Research Data

sj-sav-1-sgo-10.1177_21582440231181090 for Evaluation of Construct Validity and Reliability of the Arabic and English Versions of Biggs Study Process Scale Among Saudi University Students by Nadeem Shafique Butt, Muhammad Abid Bashir, Sami Hamdan Alzahrani, Zohair Jamil Gazzaz and Ahmad Azam Malik in SAGE Open

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

This project was funded by the Deanship of Scientific Research (DSR), King Abdulaziz University, Jeddah under grant no. G: 1498-828-1440. The authors, therefore, acknowledge with thanks DSR for technical and financial support.

References
