Abstract

This study investigated the effects of Plickers use on rural students’ learning outcomes through two case scenarios. In the first case scenario, the study adopted a preexperimental study method with a focus on comparing Plickers with traditional testing to examine rural students’ learning attitudes toward Plickers. The participants were 24 students in a small rural elementary school in Taiwan who completed 1 month of English learning. In the second case scenario, a quasi-experimental pretest and posttest design was employed to evaluate the instructional effectiveness of Plickers on students’ learning performances. Research participants were 60 students from two classes in a large rural elementary school in Taiwan. During 1 month of math instruction, students in the experimental group took Plickers-based quizzes, whereas students in the control group used small whiteboards to respond during quizzes. The results from the first case indicated that students’ overall attitudes toward Plickers in English class remained positive. The results from the second case revealed that Plickers may enable students to achieve greater learning performance and improvement during math instruction. However, students’ perceptions of Plickers were similar in both cases, indicating joyful learning and active engagement when Plickers was adopted as a formative assessment tool.

Background Information

Few Technology Resources in Rural Schools

In rural areas, because of limited educational budgets, schools often cannot invest in information technology infrastructure for teaching and learning (Chen & Lin, 2019; Croft & Moore, 2019). However, although technology access in rural schools remains limited, teachers in rural schools have demonstrated more positive attitudes toward technology integration in the classroom than their nonrural counterparts have (Howley et al., 2011). In addition, when technology resources were available, rural teachers held differing perceptions of how technology integration benefits student learning (J. Wang et al., 2019). Therefore, to promote equitable, quality education as emphasized by the United Nations (United Nations, 2020), more research on technology use in rural schools is needed.

Plickers as a Cost-Effective Mobile SRS

Student response systems (SRSs) have long been used as instructional tools to improve student engagement in class (Caldwell, 2007), which in turn often yields positive learning outcomes compared with traditional lectures (Chou & Chen, 2017). Because of the rapid development of mobile technologies, early SRS hardware (i.e., remote-controller-size clickers) has been gradually replaced by mobile devices (smartphones or tablet computers) on which students use applications (apps) to engage in similar interactive learning. A. I. Wang (2015) and Chou et al. (2017) reported that mobile SRSs might improve students’ learning performance.

Plickers is a relatively new mobile SRS. However, unlike common mobile SRSs such as Kahoot or Socrative, a major feature of Plickers is that students do not need any technological devices during instructional implementation (Mshayisa, 2020). When Plickers is adopted in the classroom, only one mobile device is needed, and it is used by the teacher. To respond to the questions, students use paper cards to engage in an interactive quiz activity (Tompkins et al., 2018). Thus, from a cost-effectiveness perspective, Plickers use in rural schools has the potential to reduce the economic burden of acquiring technology resources and to offer students a valuable opportunity for quality education.

Learning Benefits of Plickers

Plickers has been reported to offer instructional effectiveness comparable to that of traditional or mobile SRSs (Elmahdi et al., 2018). In instructional scenarios, Plickers is often used to facilitate students’ learning process through in-class formative assessment (Mshayisa, 2020). The learning benefits of Plickers use identified in previous related research often fall into two categories: students’ positive learning attitudes (or perceptions) and students’ increased learning outcomes. Overall, those findings showed a promising outlook for Plickers adoption in the current study.

Regarding learning attitudes, Wiyaka and Prastikawati (2021) integrated Plickers into an English class and reported that Plickers might advance students’ English learning. In Hassan and Hashim’s (2021) study, Plickers was adopted to support students’ vocabulary learning. The findings indicated that Plickers had a positive impact on students’ perceptions. As for learning outcomes, in Shana and Al Baki’s (2020) experimental study, Plickers enabled students to greatly enhance their learning outcomes and had a positive impact on student learning. However, an extensive review of related research revealed that few studies with an experimental approach had examined the effect of Plickers use on students’ learning performance. For this reason, the current study attempted to address this gap in the literature.

Purpose of the Study

Prior to the study, the research team collaborated with two in-service rural teachers who were eager to integrate Plickers into their classrooms for quality education. During a training program, the teachers received full instruction on Plickers use. The rationale for conducting two case studies was that each case covered only one learning construct and one teaching subject. Taken together, the two cases allowed the research team to observe how Plickers affected different learning domains (learning attitudes and outcomes) and supported student learning in different academic disciplines (English and math).

The current study investigated the effects of Plickers use on rural students’ learning attitudes and learning outcomes. Two case scenarios from different rural elementary schools in Taiwan were reported. The first case took place in a small rural school where an English teacher taught students using both traditional paper and Plickers testing for formative assessment. The second scenario focused on one large rural school, where a mathematics teacher compared learning performance between two groups of students who did and did not use Plickers. The research questions of the study were threefold:

What are rural students’ learning attitudes toward Plickers use (the first scenario)?

Do rural students who receive Plickers instruction obtain more positive learning outcomes than do their counterparts who receive traditional instruction (the second scenario)?

What are rural students’ perceptions of Plickers as a tool in the classroom (both scenarios)?

Literature Review

Theoretical Foundations of SRSs Use

Although past studies did not clearly state the theoretical foundations of SRS use, the current study drew on three theories to support SRS adoption in learning settings: multimedia learning, active learning, and formative assessment.

Multimedia learning: Both traditional and mobile SRSs have multimedia features, which comprise diverse and interactive technological elements. In the theory of multimedia learning, educational technologies serve as scaffolds that enable a meaningful link between short-term memory and long-term memory (Mayer, 2001). The assumption is that SRS use may facilitate students’ knowledge acquisition, which in turn affects their overall learning performance.

Active learning: Learners are always encouraged to take an active part in their learning. Learning scientists argue that active learning occurs when learners can actively construct knowledge based on their learning experiences or interactions in class (Krajcik & Blumenfeld, 2006). The assumption is that SRS use may enable students to actively engage in an interactive learning environment by responding to the quizzes.

Formative assessment: According to educational assessment theories, the goal of formative assessment is to allow students to diagnose their learning process and reflect on what they learn in class. Such a mechanism often prompts students to pay more attention to the class lecture (Nitko & Brookhart, 2007). The assumption is that SRSs are often adopted for formative assessment purposes, which constantly stimulates students’ thinking process and motivates students to engage with subject content.

SRSs for Formative Assessment Use

The interactive learning characteristics of SRSs make them useful for formative assessment in class. Through the implementation of SRS quizzes, students may immediately identify their learning outcomes, and the teacher may diagnose students’ retention of concepts for further instruction modification (Caldwell, 2007). In most prior research, students have reported high satisfaction with SRS use for the purpose of formative assessment. For instance, Fang (2009) employed a traditional SRS to assess college students’ engineering knowledge and reported that students perceived that the assessment process facilitated their concept comprehension. In Stowell’s (2015) study, both traditional and mobile SRSs were used in instructional assessment. The findings indicated that both tools may greatly enhance the student learning experience. Wood et al. (2017) integrated Plickers into different engineering classes and indicated that frequent class assessment encouraged students’ preclass preparation and postclass study. However, related SRS studies tended to focus on college students. Few investigated SRS adoption at the elementary level.

Plickers Use in the Classroom

Compared with other mobile SRSs, Plickers adoption in the classroom requires only one mobile device: that of the teacher. During Plickers instruction, students hold paper cards with a square-shaped code to represent their answers, which the teacher can scan using a specific app on the mobile device to obtain their responses (Tompkins et al., 2018). Through such an interactive learning environment, Plickers is often used for formative assessment in various academic subjects and at various student levels (Chng & Gurvitch, 2018). For example, Michael et al. (2019) examined how English teachers employed Plickers to assess elementary school students’ reading comprehension. In the results, teachers confirmed the role of Plickers in supporting student language learning. Wuttiprom et al. (2017) integrated Plickers in a college physics course and reported that Plickers increased student discussion. In Mshayisa’s (2020) study, Plickers adoption in a food science course enabled college students to demonstrate active engagement behaviors such as critical thinking and collaborative learning. Pearson (2020) compared the learning effectiveness of Plickers and a traditional SRS. The findings showed that both methods could achieve high learning satisfaction. Overall, previous related studies confirmed the learning benefits of Plickers in the classroom. However, of those Plickers studies, few focused on the elementary level.

Research Method of the First Case Scenario (English Class)

Research Design

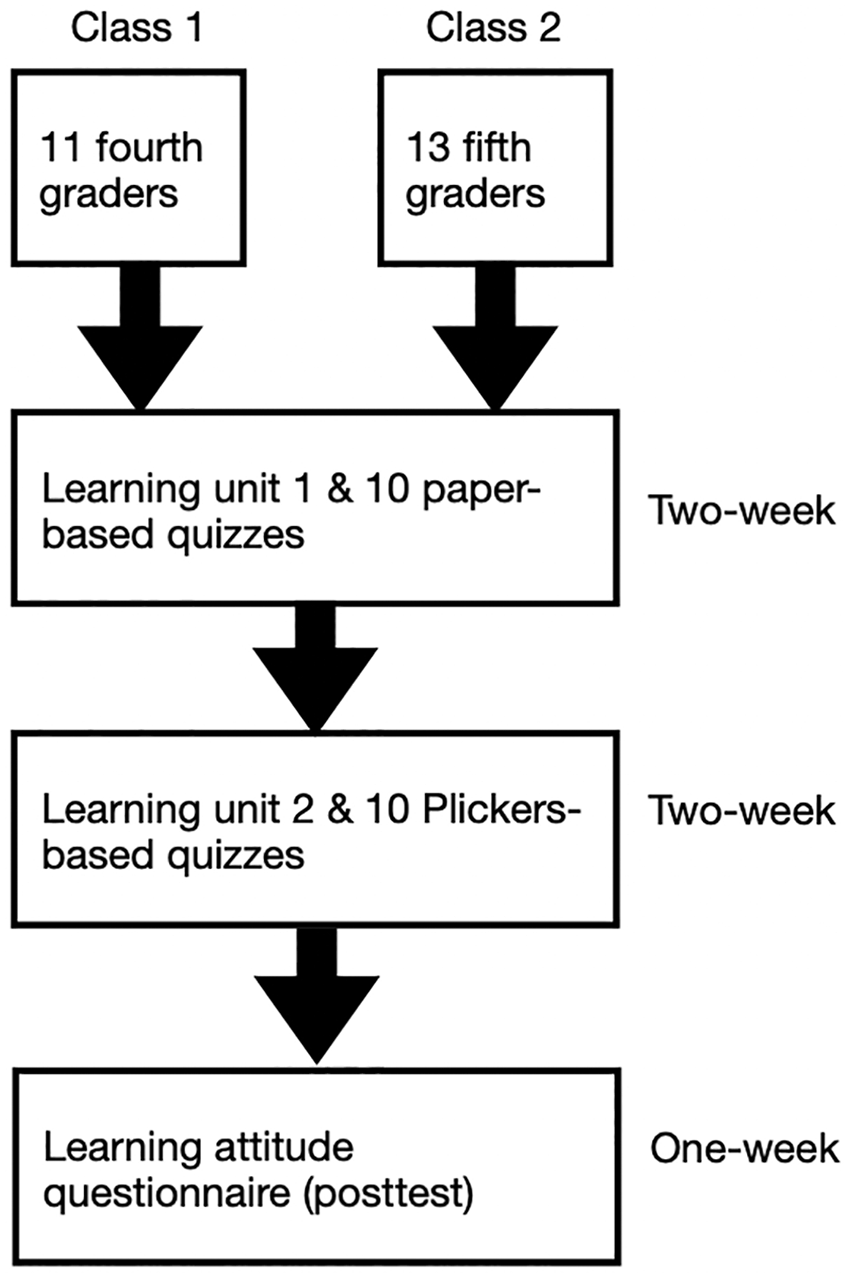

The first case adopted a pre-experimental method with a learning comparison approach to investigate rural students’ learning attitudes toward Plickers use. Prior to the study, students were administered paper-based tests in an English class. After 2 weeks of traditional testing, the students changed their formative assessment styles and began to use Plickers in the same English class. Upon completion of the 4-week educational experiment, a self-developed questionnaire (posttest) regarding students’ learning attitudes was administered to the participants. Subsequently, a semi-structured interview guide was designed to obtain students’ in-depth perspectives regarding the use of Plickers. The qualitative interview information was used to support the quantitative data in the survey stage. Figure 1 illustrates the research design of the first study.

Figure 1. Research design of the first study.

Research Participants

The first case adopted purposeful sampling, which focused on a small rural school with which the research team collaborated. Prior to the study, an English teacher in the school received educational training regarding Plickers use from the research team. Two of the teacher’s classes were involved in the study. Overall, 24 students participated in the study. Among them, 11 were fifth graders in one class, and 13 were fourth graders in another class. None of the students had prior experience using any type of SRS in class. After the completion of the educational experiment, based on the students’ final English grades in the previous semester, 12 students (6 in fifth grade and 6 in fourth grade) of different achievement levels were selected for an interview.

Tools for Obtaining Student Responses

Students’ learning attitude questionnaire

To ensure survey validity and reliability, the questionnaire was developed through three procedures. First, the study adapted A. I. Wang’s (2015) SRS questionnaire and developed 12 items on a 5-point Likert-type scale. The questionnaire measured students’ learning attitudes toward Plickers use through three major constructs: learning preference, learning engagement, and learning motivation. Next, four English teachers from other schools who had experience adopting Plickers in the classroom were invited to evaluate the question items. During this stage, two items were removed because of ambiguity in the item description, which might easily mislead students about their learning preference. In the final stage, the student learning attitude questionnaire draft was administered to 25 students who had used Plickers before. The reliability analysis of student responses revealed a reliability coefficient of .87.

Semi-structured interview guide

The semi-structured interview guide was developed to facilitate students’ thinking process during the 15-minute interview. The questions in the guide suggested by English teachers covered three elements: learning preference, learning engagement, and learning motivation. The questions were: (Q1) Did you like Plickers? Why? (Learning preference) (Q2) Would you pay more attention in class when using Plickers? Why? (Learning engagement) (Q3) Did you feel that using Plickers motivated you to learn English? Why? (Learning motivation) The interview in the study used a focus group approach to encourage more responses among students (Seidman, 2006).

Data Analysis

In the first case, t tests were used to examine differences in learning attitudes between the fourth and fifth graders. The significance level was set to .05.
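The comparison described above can be sketched as follows. This is a minimal illustration, assuming an independent-samples t test with pooled variance (the source does not state which t test variant was used). The function name `pooled_t_and_d` and the Likert scores are hypothetical and are not the study's data.

```python
import math
from statistics import mean, variance


def pooled_t_and_d(group_a, group_b):
    """Return (t, df, d) for an independent-samples t test with pooled variance.

    Assumption: equal-variance (Student) t test; d is Cohen's d based on
    the pooled standard deviation.
    """
    n_a, n_b = len(group_a), len(group_b)
    df = n_a + n_b - 2
    # Pooled variance weights each sample variance by its degrees of freedom.
    pooled_var = ((n_a - 1) * variance(group_a) + (n_b - 1) * variance(group_b)) / df
    diff = mean(group_a) - mean(group_b)
    t = diff / math.sqrt(pooled_var * (1 / n_a + 1 / n_b))
    d = diff / math.sqrt(pooled_var)
    return t, df, d


# Hypothetical 5-point Likert item scores, NOT the study's data.
fourth = [5, 5, 4, 5, 4, 5, 5, 4, 5, 5, 4, 5, 5]  # 13 fourth graders
fifth = [4, 4, 5, 3, 4, 5, 4, 3, 4, 4, 5]  # 11 fifth graders

t, df, d = pooled_t_and_d(fourth, fifth)
print(f"t({df}) = {t:.2f}, Cohen's d = {d:.2f}")
```

With 13 and 11 respondents, the test has 22 degrees of freedom. The resulting t value would then be compared against the critical value at the .05 level.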

Teaching Contents

English learning was the instructional theme in the first case. Four learning units were involved in the experiment. Prior to the implementation of the Plickers activity, the fourth graders studied a unit entitled “can you swim?” and the fifth graders studied a unit entitled “where are you going?”. During the Plickers assessment, the unit “what are these?” was taught to the fourth graders and the unit “how many lions are there?” was taught to the fifth graders.

Research Procedure

The research procedure consisted of two phases. In Phase 1, students in two classes were taught one English learning unit for each level (fourth and fifth grades) during a 2-week period. Ten minutes before the end of class, a paper-based quiz containing 10 multiple-choice questions was administered to students. In total, students completed 10 paper-based quizzes. In Phase 2, students in both classes began to study another English learning unit during a 2-week period. Plickers replaced the paper-based testing. Ten minutes before the end of class, the instructor presented 10 multiple-choice questions on the screen, and students held up paper-based cards to represent their answers. Students received a total of 10 Plickers-based quizzes. After students received 4-week instruction, a learning attitude questionnaire as a posttest was administered to all students. Figure 2 represents the research procedure of the first case.

Figure 2. Research procedure of the first case.

In the study, students participated in a two-phase learning scenario. The instructional model in Phase 1 was traditional paper-based testing, which served as a baseline before the new technology was introduced. After the completion of Phase 1 instruction, students began to engage in the Plickers assessment. At this point, while embracing a new educational technology, students might experience a major difference between the two testing methods. Therefore, students’ learning attitudes in light of this comparison were the target of the study.

Results of the First Case

Learning Attitudes Toward Plickers Use

The descriptive statistics and t tests are summarized in Table 1. The results indicated that regardless of grade level, students’ learning attitudes toward Plickers use remained positive (>3.5). In the fourth-grade class, students expressed high satisfaction with Plickers adoption in class (>4.0) on all items except for Item 8. Similar learning patterns were also identified for fifth graders (except for Item 4). In Factor 1 (learning preference), fourth graders’ learning preference (4.7) for Plickers was higher than that of fifth graders (4.51). In Factor 2 (learning engagement), fourth graders (4.28) demonstrated more active learning than their counterparts did (4.03). In Factor 3 (learning motivation), fourth graders’ motivation (4.31) to use Plickers was higher than that of fifth graders (4.25). Overall, the learning attitudes of fourth graders (4.42) surpassed those of fifth graders (4.26). However, a detailed examination of Item 8 revealed that fifth graders were more likely to review class knowledge when Plickers was implemented in class.

Table 1. Results of Descriptive Statistics and t-Tests.

p < .05 (Cohen’s d = 0.87).

Although the fourth graders showed higher learning scores in each factor and total survey items than the fifth graders did, inferential statistics using t tests still revealed no significant difference in learning preference (t = 0.59; p > .05), learning engagement (t = 0.59; p > .05), learning motivation (t = 0.13; p > .05), or overall learning attitudes (t = 0.44; p > .05) between the two groups of students. In other words, students in both grade levels had a similar perception of Plickers adoption in class. However, on Item 4, a significant difference (t = 2.13; p < .05; Cohen’s d = 0.87) was identified between the fourth and fifth graders. Fourth graders perceived that Plickers strengthened their engagement in English learning activities.
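As a rough consistency check on the Item 4 result, the reported effect size can be recovered from the reported t value. The sketch below assumes a pooled-variance independent-samples t test in which all 13 fourth graders and 11 fifth graders responded, in which case Cohen's d equals t multiplied by the square root of (1/n1 + 1/n2). The function name `cohens_d_from_t` is illustrative only.

```python
import math


def cohens_d_from_t(t, n1, n2):
    """Convert an independent-samples t statistic to Cohen's d.

    Assumption: pooled-variance t test, d based on the pooled SD.
    """
    return t * math.sqrt(1 / n1 + 1 / n2)


# Reported values for Item 4: t = 2.13, with 13 fourth and 11 fifth graders.
d_item4 = cohens_d_from_t(2.13, 13, 11)
print(round(d_item4, 2))  # 0.87
```

Under these assumptions, the computed value agrees with the reported Cohen’s d of 0.87.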

Students’ Perceptions of Plickers Use

Student interview responses with representative quotations are summarized in Table 2. For Q1, most students viewed Plickers as an interesting tool. One student disliked Plickers because he often failed the formative assessment. For Q2, most students perceived that Plickers would immediately report their responses, which prompted them to pay more attention in English class. Some students considered themselves always focused on English class regardless of technology use. For Q3, most students considered Plickers use in class to be a game-based activity, which led them to hope for its adoption in other subjects. A couple of students perceived that Plickers was still a test tool, which could not motivate their learning.

Table 2. Interview Results With Representative Quotations From Students.

Research Method of the Second Case Scenario (Math Class)

Research Design

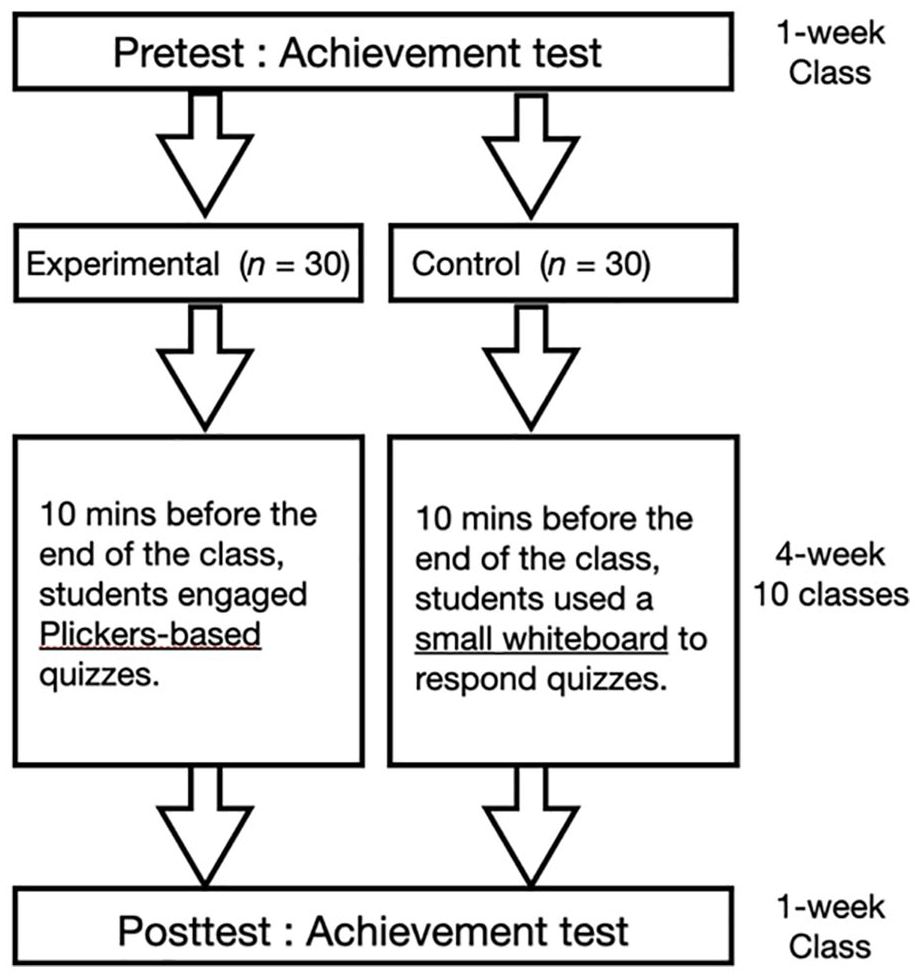

The second case adopted a quasi-experimental pretest and posttest design to investigate the instructional effect of Plickers on student learning performance. The educational experiment lasted 4 weeks. The independent variable was the type of instructional tool (with or without Plickers use for formative assessment); the dependent variable was student learning outcome (a standardized math test). Before the study began, a learning achievement test in math was used to measure students’ prior knowledge of course content. Upon completion of the experiment, students took the same learning achievement test. Table 3 depicts the research design of the second case.

Table 3. Quasi-Experimental Study Design.

Note. X1 = students using Plickers in class; X2 = students using a small whiteboard in class; O1 and O2 = learning achievement test in math (pretest); O3 and O4 = learning achievement test in math (posttest).

In the experimental group, students held up a sheet of Plickers code paper to answer the questions (Figure 3, left). By contrast, students in the control group wrote their answers on a small whiteboard to respond to the quizzes (Figure 3, right). After students in the experimental group completed the class quiz, the Plickers platform automatically revealed the correct answers to the test items. To give students in the control group the same feedback, the instructor also reported the correct answers to the test items.

Figure 3. Left: Plickers code paper. Right: Small whiteboard.

To prevent extraneous factors influencing the internal validity of the experimental design (McMillan, 2004), this study adopted the following experimental controls:

(a) Class setting: The same instructor taught math to both groups of students. The same amount of class time (40 minutes) was allocated in each class. Both groups received math instruction in similar classrooms.

(b) Instructional method: The instructor used the same learning materials and adopted the same lecture approach in both classes.

(c) Test implementation: Formative assessments were implemented in each group 10 minutes before the end of class. The pretest and posttest were administered on the same day of the week.

(d) Initial behavior: The study used the pretest as a covariate to control for differences in the two groups’ initial learning behaviors.

Research Participants

The second case also adopted purposeful sampling. Because the experimental study required more participants, the focus of the second study shifted to a large rural elementary school. A math teacher responsible for teaching two classes collaborated with the research team and received educational training regarding Plickers use. The math teacher randomly chose one of the two classes as the experimental group. Overall, 60 sixth graders from two classes (experimental: 30; control: 30) participated in the study. No student had used an SRS before, but they had often used small whiteboards to engage in math learning activities.

Tools for Measuring Learning Outcomes

Learning achievement test

A learning achievement test was developed to measure student understanding of math concepts for each learning unit. The test consisted of 20 multiple-choice questions. To confirm test validity, a draft of the test was administered to 28 students who were already familiar with the test content. Through an item analysis, some test items with a low discrimination index and difficulty index were removed. In addition, the KR-21 reliability analysis showed that the reliability coefficient of the test was .87.

Self-reported learning sheet

A self-reported learning sheet was offered to the students in the experimental group (Plickers adoption) after the completion of the educational experiment. This document contained only one open-ended question, which required students to report their feelings about the use of Plickers in instruction.

Data Analysis

In the second case, t tests were used to examine students’ learning progress between the pretest and posttest. One-way ANCOVA was adopted to examine the effect of Plickers on students’ learning outcomes while controlling for students’ pretest scores. A frequency analysis was performed to count students’ comments. The significance level for the inferential statistics was set to .05.
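The logic of the one-way ANCOVA can be sketched with the classical adjusted sums of squares: the posttest variation is adjusted for its linear relationship with the pretest, and the remaining between-group variation is tested against the adjusted within-group variation. This is a minimal sketch assuming a single covariate and a common within-group slope. The function name `ancova_f` and all scores are hypothetical, not the study's data or the authors' software.

```python
def ancova_f(groups):
    """One-way ANCOVA with one covariate.

    groups: list of (pretest_scores, posttest_scores) pairs, one per group.
    Returns (F, df_between, df_error) for the group effect on the posttest
    after adjusting for the pretest.
    """
    def sums(xs, ys):
        # Sums of squares and cross-products around the means.
        mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
        sxx = sum((x - mx) ** 2 for x in xs)
        syy = sum((y - my) ** 2 for y in ys)
        sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
        return sxx, syy, sxy

    all_x = [x for xs, _ in groups for x in xs]
    all_y = [y for _, ys in groups for y in ys]
    t_xx, t_yy, t_xy = sums(all_x, all_y)

    w_xx = w_yy = w_xy = 0.0
    for xs, ys in groups:
        sxx, syy, sxy = sums(xs, ys)
        w_xx, w_yy, w_xy = w_xx + sxx, w_yy + syy, w_xy + sxy

    adj_total = t_yy - t_xy ** 2 / t_xx    # total SS adjusted for the pretest
    adj_within = w_yy - w_xy ** 2 / w_xx   # error SS adjusted for the pretest
    adj_between = adj_total - adj_within   # adjusted group effect
    df_between = len(groups) - 1
    df_error = len(all_x) - len(groups) - 1
    f = (adj_between / df_between) / (adj_within / df_error)
    return f, df_between, df_error


# Hypothetical pretest/posttest scores, NOT the study's data.
experimental = ([8, 10, 9, 12, 7, 11], [15, 17, 15, 19, 13, 18])
control = ([9, 11, 8, 12, 10, 7], [12, 14, 10, 16, 13, 10])
f_value, df1, df2 = ancova_f([experimental, control])
print(f"F({df1}, {df2}) = {f_value:.2f}")
```

If all 60 students in the two classes contributed complete data, the error degrees of freedom for this design would be 60 − 2 − 1 = 57.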

Teaching Contents

Math learning was a major theme in the second case. During the educational experiment, all students received a learning unit entitled the greatest common divisor and least common multiple. Before and after the experiment, tests (pretest and posttest) related to the learning unit were administered to the student participants.

Experiment Scenario

The math learning unit in the experiment covered the greatest common divisor and least common multiple. During the 6-week experiment (4-week teaching and 2-week achievement test), the two groups of students were required to participate in 10-minute formative assessments in class. The students in the experimental group took Plickers-based quizzes, whereas the students in the control group used a small whiteboard to respond to quizzes. The quiz in each class contained five multiple-choice questions. During the quiz, the instructor projected the test items on the screen one at a time, and students answered the questions using the different tools. Upon completion of the quiz, the correct answer for each question was shown on the screen. Figure 4 shows the research procedure of the second case.

Figure 4. Research procedure of the second case.

Students in both groups had experience using a small whiteboard to engage in formative assessment activities. Prior to the study, all students used a small whiteboard to respond to quizzes in math classes. During the educational experiment, students in the experimental group replaced the whiteboard with the Plickers code paper. Our assumption was that the Plickers platform served as a learning scaffold, enabling students to actively participate in the assessment process. Such a mechanism might prompt students to gain more content knowledge, which in turn might indirectly influence their overall learning performance.

Results of the Second Case Scenario

Students’ Learning Outcomes

The results of t tests regarding students’ pretest and posttest are reported in Table 4. Statistical testing revealed that both groups of students significantly improved their learning after the 4-week educational experiment (experimental: t = 13.09, p < .01, Cohen’s d = 2.03; control: t = 6.45, p < .01, Cohen’s d = 1.11). From a learning gain perspective, students in the experimental group surpassed their counterparts (posttest–pretest: 5.57).

Table 4. Results of the t-Test.

p < .01.

Table 5 presents the results of one-way ANCOVA, indicating that the pretest influenced students’ learning performance (F = 30.60, p < .01). However, after the effect of the pretest was removed, a significant difference still existed in the posttest scores between the two groups (F = 16.26, p < .001, Cohen’s d = 0.69). In other words, students in the experimental group outperformed those in the control group.

Table 5. Results of One-Way ANCOVA.

p < .01.

Students’ Perceptions of Plickers Use

Table 6 summarizes student comments on Plickers use. Overall, students’ qualitative responses were grouped into three aspects (with respective frequency): learning engagement (37), learning motivation (15), and user behaviors (12). The total number of positive comments (52) surpassed that of negative comments (12), suggesting that Plickers could benefit student learning. In negative comments, students’ responses were related to their use behaviors rather than direct criticism of Plickers. However, of those self-reported comments, students did not clearly indicate if Plickers could enhance their learning performances.

Table 6. Results of Students’ Comments on Plickers Use.

Overall Discussion

Learning Attitudes Toward Plickers Use

In the first case scenario (the pre-experimental study), the findings suggest that rural students’ learning attitudes toward Plickers use were positive. The results were consistent with Wuttiprom et al.’s (2017) study, which revealed that students demonstrated positive attitudes when the instructor adopted the Plickers testing approach. In addition, positive results were found in three constructs: learning preference, learning engagement, and learning motivation. Such learning patterns support the findings of Michael et al. (2019) and Kent (2019), in which Plickers effectively motivated student language learning, and echo Mshayisa’s (2020) study, in which students exhibited active engagement during Plickers instruction.

Compared with the survey constructs, individual survey items revealed different findings. Regardless of grade level, students scored Item 8 the lowest on the survey. Plickers use in the classroom might not strongly encourage students to complete preclass reading. This result differs from that of Wood et al. (2017), who argued that consistent Plickers use encourages students to prepare before class. In addition, the inferential statistical analysis of Item 4 revealed that fourth graders showed more enthusiasm than fifth graders did for English learning when Plickers was implemented in class. This finding may be explained by the age of the students: younger students may be more likely to be interested in emerging educational technologies (Couch, 2018).

Students’ Learning Outcomes

In the second scenario (the quasi-experimental study), the inferential statistical analysis indicated that students in both the experimental and control groups exhibited significant improvement on their final learning achievement tests. The reason for the control group’s significant growth could be the phenomenon of statistical regression (McMillan, 2004), which may occur in any experimental research. However, in an examination of the difference between students’ initial learning behaviors (pretest scores) and final learning outcomes (posttest scores), those in the experimental group demonstrated greater improvement than did their counterparts in the control group. In other words, the learning growth curve for students using Plickers increased significantly throughout the 4-week educational experiment.

Students’ initial learning behaviors (pretest scores) created an unequal status between the two groups of students. The pretest indeed influenced the educational experiment. However, after the removal of the pretest effect, a significant difference still existed in students’ final learning outcomes between the two groups. In other words, students using Plickers to assist their formative assessment may exhibit superior performance in their summative learning outcomes. Because no previous experimental studies on Plickers have focused on mathematics, the research results can only be supported by findings in other academic disciplines. For example, Naqiyah and Wilujeng’s (2019) pre-experimental study reported significant improvement in physics learning among students using Plickers to engage in formative assessment activities.

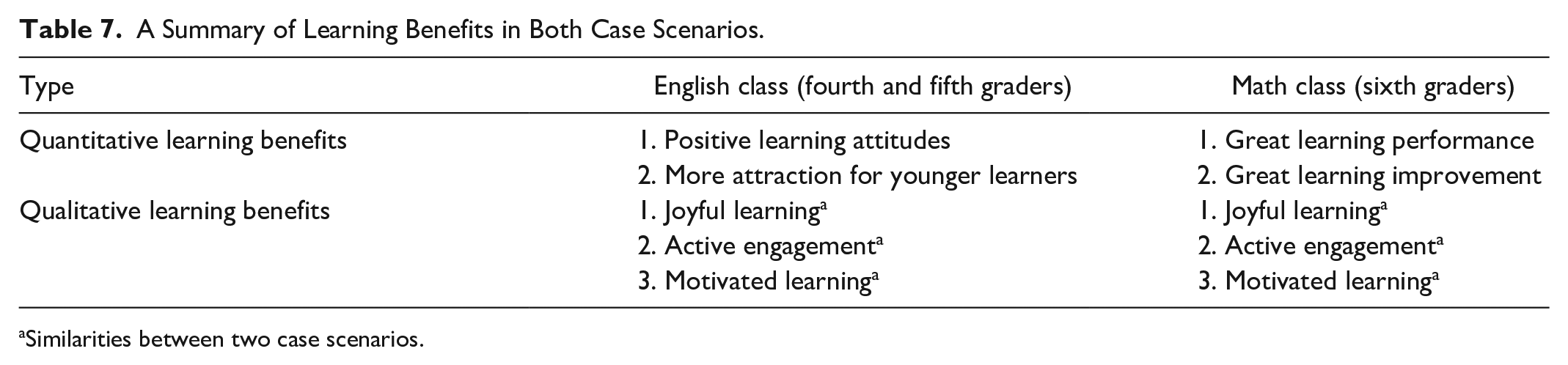

Students’ Perceptions of Plickers

A detailed examination of the negative comments in the first case scenario indicated that students’ personal preference (e.g., viewing Plickers as a test tool or not caring about test tools) determined their unfavorable impression of Plickers, which may not be an effective measure of the quality of Plickers’ integration in class. In addition, one negative comment stemmed from the student’s aversion to any formative assessment and was unrelated to Plickers use in particular. Regarding the positive comments, students perceived that integrating Plickers in English class could create three learning atmospheres: joyful learning, active engagement, and motivated learning.

In the second case scenario (the quasi-experimental study), students’ comments on Plickers use were predominantly positive. Most negative comments focused on students’ specific learning behaviors (e.g., the use of the Plickers card), which can be attributed to their lack of familiarity with the manipulation of Plickers. Some negative comments came from students’ aversion to all formative assessment in class, which cannot be considered the fault of Plickers specifically. Unlike the negative comments, the positive comments could be grouped into three themes: joyful learning, active engagement, and motivated learning, showing how Plickers brought learning benefits to the math class. Table 7 summarizes the quantitative and qualitative learning benefits yielded in both case scenarios.

Table 7. A Summary of Learning Benefits in Both Case Scenarios.

Similarities between two case scenarios.

Combination of Quantitative and Qualitative Learning Effects

In the first case scenario (the pre-experimental study), the quantitative data indicated positive results after students engaged in the Plickers assessment activity. In the subsequent interview, the qualitative findings were fully consistent with what students reported in the questionnaire. For example, qualitative learning benefits such as joyful learning or active engagement could also be identified in the survey items. This pattern suggests that Plickers use may advance students’ learning experiences in the social science-related curriculum. However, whether positive learning attitudes influence students’ overall learning outcomes deserves further investigation.

In the second case scenario (the quasi-experimental study), the quantitative analysis focused only on students’ learning performance. When Plickers was adopted as a formative assessment in class, the quantitative findings indicated substantial learning improvement and strong learning performance among students. However, in the qualitative part, students’ self-reported perceptions of Plickers use in class did not contain any comments related to learning outcomes. Their responses were similar to the qualitative results in the first case scenario. It is assumed that these affective factors might indirectly influence students’ learning performance.

Conclusions

The study investigated the instructional effectiveness of Plickers, fully integrated into classroom teaching, in two different academic disciplines. In the English class scenario, the research results confirmed that students’ overall attitudes toward Plickers in class remained positive. In addition, students’ perceptions of Plickers integration emphasized joyful learning, active engagement, and motivated learning. In the math class scenario, the research results suggested that Plickers may enable students to achieve greater learning performance and improvement. These students’ perceptions of Plickers were similar to those of students in the English class scenario.

The study confirmed the role of Plickers in math and English learning. In domains ranging from science to social science, a cost-effective mobile SRS significantly increased rural students’ learning performance and advanced their learning experiences. To promote equitable, quality education, these positive learning outcomes may offer some instructional implications for rural schools. First, rural school administrations may create a learning community in which teachers receive professional development on Plickers use. Second, because Plickers was a new tool for the students, a novelty effect may exist in educational settings. Rural school teachers need to combine Plickers with other learning strategies, such as teamwork challenges. Finally, students’ records on the Plickers platform may become important learning portfolios that allow rural school superintendents and parents to examine the need for remedial teaching.

Because of the nature of the research design, these findings from rural schools may not be generalisable to other class settings. Future studies with a focus on rural schools may consider some potential research themes to extend the scope of research and address the limitations of this study. First, because rural students are seldom in contact with educational technologies, they tend to express enthusiasm toward new learning tools. Future studies may investigate whether the novelty effect of Plickers gradually disappears when Plickers is adopted as a long-term assessment tool. Second, the two instructors in the study did not record students’ scores on the Plickers platform. Future studies may use students’ learning performance on the formative assessments as another variable to examine its relationship with summative assessment outcomes. Third, because both case scenarios were conducted in rural schools, the sample size was limited. Future studies may obtain larger samples in metropolitan schools. Finally, the math learning scenario in the study did not involve traditional paper-based testing. Because traditional testing enables students to easily engage in math calculations, future studies may use paper-based testing in an additional control group to compare its learning effectiveness with that of Plickers in the educational experiment.

Footnotes

Acknowledgements

The author would like to express his gratitude to the two rural school teachers (Ms. Li-Ping Chu and Mrs. Yun-Kuang Chung) who enthusiastically participated in the study and helped collect the related data.

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author received no financial support for the research, authorship, and/or publication of this article.