Abstract

Identifying the significant factors in teaching-learning performance that affect the efficiency of university teachers is indispensable to upholding the quality of teaching in English for Academic Purposes (EAP) courses. This study aims to evaluate teachers’ performance in EAP courses using the students’ course-instructor evaluation survey and the students’ final grades. For this purpose, the Performance Improvement Management software for Data Envelopment Analysis (PIM-DEA) is applied to evaluate the efficiency of EAP teachers. A sensitivity analysis is then applied to prioritize the significant indicators of teacher performance, followed by interviews with the teachers aimed at improving their performance. The results reveal that the degree of student satisfaction with assignments, exams, and grading systems is a significant factor related to teachers’ performance. In the researchers’ opinion, wider recognition of this finding is vital to the development of the educational sector.

Keywords

Introduction: The Importance of Quality in Higher Education

Quality has become increasingly prominent in Higher Education (HE), and in recent years educational institutions have sought to acquire knowledge and strategies to address quality issues. Crabbe (2003) holds that the concept of quality in language education is crucial both for those who invest in instruction and for those who undertake the performance, design, and implementation of the curricula. In other words, in HE the issue of quality attracts the interest of stakeholders both inside and outside the education system.

Evaluation of quality has also been considered a fundamental issue in the evaluation of educational systems (Cave, 1997; Cave et al., 1997). Therefore, establishing a system of quality audit and quality assessment requires consideration of the factors contributing to quality, which include the efficiency and effectiveness of teacher performance. Quality can be assessed at the macro level, in terms of the effective (or otherwise) deployment of available finances and the organizational efficiency of the faculties, and at the micro level, in terms of the efficiency and effectiveness of the teaching staff’s performance and of learners’ attainment, as reflected in final exam grades, class performance, and students’ feedback.

As mentioned earlier, one of the prominent factors affecting quality at the micro level is teacher performance in the classroom, and its evaluation is an integral part of quality assessment (Avalos-Bevan, 2018; Derrington & Campbell, 2018; Elstad & Christophersen, 2017; Flores & Derrington, 2018). In higher education, evaluating the performance of teachers and academics seems crucial to upholding quality, which directly influences learners’ performance and, most importantly, their satisfaction (Bini & Masserini, 2016; Gómez & Valdés, 2019). One of the most widely used measures for evaluating teaching quality in higher education is the Students’ Course-instructor Evaluation survey (SCE) (Vanacore & Pellegrino, 2019). The evaluation of teachers, including EAP teachers, has attracted considerable attention in the quality assessment of higher education; it can be carried out through several methods and from two aspects, effectiveness and efficiency, the two main concepts in quality evaluation.

In higher education, EAP teachers usually receive feedback on their performance from superiors, to a lesser extent from peers or colleagues, and from students. Students’ satisfaction, as defined by Weerasinghe and Fernando (2017), is a temporary attitude resulting from the educational experience, facilities, or services. Teachers consider students’ feedback a valuable indicator of the quality of their teaching performance and of the curriculum (Surujlal, 2014). Most research in higher education has evaluated the effectiveness of teacher performance from different perspectives, such as course content (Hsu, 2014), classroom observation (Garrett & Steinberg, 2015), novice teachers’ performance (Darling-Hammond et al., 2013), teachers’ in-classroom behaviors (Seidel & Shavelson, 2007), and teachers’ psychological characteristics in relation to teaching effectiveness (Klassen & Tze, 2014). Some research has also evaluated teacher performance in EAP courses in general (Avalos-Bevan, 2018; Flores, 2018; Flores & Derrington, 2018; Lejonberg et al., 2018; Su et al., 2017; Thanassoulis et al., 2017; Tuytens & Devos, 2017).

Although students’ satisfaction with teaching contributes significantly to improving teacher performance, limited research has focused on students’ feedback on the educational system at the micro level, which includes the efficiency of teacher performance, the designed curriculum, and class activities. Furthermore, most studies of student satisfaction in higher education rely on conventional descriptive statistics, such as variance and percentages, to evaluate EAP teacher performance.

Yet other methods may yield more objective results. In view of these issues, the SCE survey was used in the current study not only to gather information about the teaching quality of EAP teachers, but also to investigate the efficiency of their performance, that is, how well teaching is performed within a specific period of time. Such an investigation could contribute significantly to EAP evaluation studies and highlight which teaching indicators have a strong impact on teachers’ performance. The study is also significant in its method of data analysis: to calculate the efficiency of the teachers’ performance, it applies Data Envelopment Analysis (DEA) models. DEA is a commonly used mathematical approach for evaluating the efficiency of comparable units, such as groups of people working in institutions, universities, or other workplaces (Charnes et al., 1978). It allows efficiency to be analyzed using data gathered from student feedback on course content, teacher performance, class activities, and exams. In sum, this study aims to evaluate the efficiency of EAP teachers’ performance using DEA.

In line with the purpose of the study, the following questions were addressed:

What are the fundamental indicators of teaching-learning performance in EAP classes?

How can teachers’ performance be assessed in terms of the efficiency of EAP classes? (a) What percentage of EAP teachers’ performance achieves a full efficiency value? (b) Which indicators have a significant impact on teaching performance? (c) How can teaching indicators be prioritized according to their role in students’ learning performance?

What should be done to improve teachers’ performance in EAP courses?

Effectiveness, Efficiency, and Students’ Satisfaction

As mentioned earlier, the efficiency and effectiveness of teacher performance are two main factors in quality. Lindsay (1982) considers effectiveness and efficiency to be two dimensions of institutional performance. Effectiveness can be seen as the compatibility between outputs and the main goals, whereas efficiency relates outputs to other criteria, such as the inputs used to produce them.

Viljoen (1994, p. 9) described efficiency as relating to “how well an activity or operation is performed,” whereas effectiveness relates to performing the correct activity or operation. In other words, efficiency measures how well a university does what it does, while effectiveness raises value questions about what the university should be doing in the first place. If increased efficiency means doing more with less, then the evidence is clear that universities and the academics working in them have become more efficient (Kenny, 2008). In education, efficiency is achieved when outputs, such as test results or value added, are produced with a minimum level of resources, such as finances or the students’ innate ability (Johnes et al., 2017). That is, the aim of efficiency is to achieve the maximum result (output) with the minimum effort (inputs) within a restricted time. In this study, the outputs are students’ test results, or the improvement in their language skills (value added), and their satisfaction with exams and assignments.

Therefore, efficiency can be seen as expending the least amount of time, effort, or money to develop an acceptable product or accomplish a certain goal, in this case learners’ performance. Both efficiency and effectiveness are performance indicators. As indicated in Figure 1, efficiency focuses on obtaining the maximum output (students’ satisfaction with grades and assignments, and final grades) from the minimum input (teacher performance and course content). Effectiveness, in contrast, measures whether actual output meets the desired objectives (Bartuševičienė & Šakalytė, 2013); it reflects the policy objectives of the university, or the degree to which a university realizes its own goals (Zheng, 2005). Efficiency, on the other hand, measures the relationship between inputs and outputs, or how successfully the inputs have been transformed into outputs (Bartuševičienė & Šakalytė, 2013).

Figure 1. Efficiency and effectiveness.

Data Envelopment Analysis (DEA)

Data Envelopment Analysis (DEA) is a “data oriented” approach for evaluating the performance of a set of homogeneous units that consume the same types of multiple inputs to produce multiple outputs. It is a non-parametric assessment approach that has been applied in various fields for performance benchmarking and for measuring relative efficiency among the evaluated units, commonly called decision making units (DMUs). The definition of a DMU is generic and flexible; in this study, each EAP class is a DMU.
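To make the idea of relative efficiency concrete, the original CCR formulation (Charnes et al., 1978) can be written, in a simplified form, as a fractional program. The notation is ours and is not defined in this study: DMU o is the class under evaluation, x_{ij} is input i of DMU j, y_{rj} is output r of DMU j, and u_r, v_i are weights chosen by the model.

```latex
\max_{u,\,v}\; h_o = \frac{\sum_{r=1}^{s} u_r\, y_{ro}}{\sum_{i=1}^{m} v_i\, x_{io}}
\quad \text{s.t.} \quad
\frac{\sum_{r=1}^{s} u_r\, y_{rj}}{\sum_{i=1}^{m} v_i\, x_{ij}} \le 1, \quad j = 1, \dots, n;
\qquad u_r \ge 0,\; v_i \ge 0.
```

Each DMU receives the weights most favorable to it, subject to no DMU exceeding a ratio of 1; h_o = 1 marks DMU o as efficient relative to its peers. In this study, m = 2 inputs (C and T), s = 2 outputs (E and G), and n = 443 classes.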

DEA is an attractive tool because it can measure the performance of educational institutions, departments, courses, teachers, and students. It was initially introduced by Farrell (1957) and subsequently refined by Charnes et al. (1978), as the CCR model, and by Banker et al. (1984), as the BCC model.

DEA has also been used to provide new insights into activities (and entities) that had previously been evaluated by other methods. The advantages of DEA have been pointed out by Cooper et al. (2000). For example, there is no need to specify a mathematical form for the production function explicitly. Furthermore, it has proven useful in uncovering relationships that remain hidden in other methodologies (Cooper et al., 2011), because it can handle multiple inputs and outputs measured in different units. The sources of inefficiency can also be analyzed and quantified for every evaluated unit.

A significant number of studies have applied DEA to assess the efficiency of higher education in different countries. For example, Avkiran (2001) studied the relative efficiency of Australian universities, focusing on their performance indicators. Martín (2006) assesses the performance of the departments of the University of Zaragoza in Spain, using selected indicators concerning both the teaching and the research activities of departments.

Johnes (2006) examines efficiency measurement in higher education, exploring the advantages and drawbacks of various methods, and emphasizes that the ability of DEA to handle multiple inputs and multiple outputs makes it an attractive technique. In another study, Johnes and Yu (2008) use DEA to examine the relative efficiency of over 100 selected Chinese universities in 2003 to 2004 using various mathematical methods. These studies focused on the overall efficiency of universities, faculties, and departments on a large scale. Efficiency on a smaller scale was evaluated by Montoneri et al. (2012), who applied DEA to explore quantitative relative efficiency at a university in Taiwan. In that study, a diagram of teaching performance improvement mechanisms was designed to identify key performance indicators for evaluation, helping teachers concentrate their efforts on the formulated teaching suggestions.

Method

The present study follows a mixed-methods approach, using both quantitative and qualitative methods. It follows an explanatory design: the quantitative DEA analysis is followed by a qualitative investigation of the instructors’ answers to the interview questions. The qualitative component is a case study using semi-structured interviews to flesh out the results obtained from the questionnaire.

Context

The study was conducted in the northern part of Cyprus, a large island in the Mediterranean, where the researchers live and work. Nearly 30 universities, including private institutions, operate in this small region in a competitive environment. This is significant for the study, as assessing the quality of the learning experience here is vital to the development of the educational sector. The university where the study was carried out was founded in 1979, and 90% of its programs are accredited. In the Times Higher Education (THE) annual university ranking, the university is among the top 1,238 universities in the world. Among universities under 50 years of age, it is listed at rank 101, and its societal impact ranking in the THE list is 300.

The School of Foreign Languages of the above-mentioned university provides language services to the university’s 11 faculties and to the community. It offers a full range of English language courses for preparatory, undergraduate, and postgraduate students, as well as community programs. It is also an accredited training center for Cambridge ESOL and an accredited examination center for IELTS and TOEFL iBT. The Foreign Language Division, part of the School of Foreign Languages, is responsible for delivering these courses to undergraduate and postgraduate students across the university.

Participants

Multinational first-year undergraduates studying in English-medium departments are required to achieve the expected grade in the proficiency exam conducted by the Foreign Language and English Preparatory School of the institution. Alongside their faculty courses, these students take Communication in English I and Communication in English II, which develop the academic reading and writing skills they need. The courses are designed to help students further improve their English to B2 level, as specified in the Common European Framework of Reference for Languages. They aim to reconsolidate and develop students’ knowledge and awareness of academic discourse, language structures, and critical thinking, focusing on reading, writing, listening, speaking, and documentation, as well as on presentation skills in academic settings.

As Communication in English I and II focus on English for Academic Purposes, the researchers used the data retrieved from the SCE survey for these courses. Ten thousand first-year undergraduate students of English-medium faculties took these academic English courses under the supervision of this division over four consecutive years. Both autumn and spring semesters were included in the study.

For this purpose, permission to analyze the SCE data and to survey the teachers was first obtained from the School of Foreign Languages (where the teachers were to be appraised) and then officially approved by the Rectorate’s body responsible for supervising research-related issues. Formal letters outlining the interview were sent to full-time and part-time teachers, both novice and experienced. If a teacher agreed to participate, the survey was conducted at the teacher’s workplace. Fifteen native and non-native full-time teachers with more than 10 years of experience and five native and non-native part-time teachers with more than 5 years of experience teaching EAP courses participated in the interviews.

Data Analysis Tools

Sources of data, in this study, were:

Student course-instructor evaluation survey, filled in by students who attended the above-mentioned courses for four consecutive years

Students’ final scores

PIM-DEA software as the measurement tool

Data from an interview conducted with the instructors concerned

The SCE survey is an indispensable part of the evaluation process aimed at upholding the quality of education at a university in North Cyprus, and it has been in use since 2005. The validity and reliability of the questionnaire have been verified by accreditation bodies such as ABET (Accreditation Board for Engineering & Technology), AQAS (Agency for Quality Assurance), and City Angels. It is a bilingual questionnaire, in English and Turkish, comprising three sections in which the students give feedback on course content, teachers’ teaching skills, and assignments, exams, and grading procedures. Supplemental Appendix 2 contains the Student course-instructor evaluation survey.

Procedure

To apply the PIM-DEA software in the analysis, the study proceeded in the following phases:

Phase 1:

1.1. Classifying the items of the survey

1.2. Calculating the mean for the items in the questionnaire and the final grades of students

1.3. Computing the correlation coefficient to select appropriate indexes (inputs and outputs) to be used in the software

Phase 2:

2.1. Applying the Performance Improvement Management software (PIM-DEA) version 3 to assess the efficiency of EAP classes

2.2. Weight analysis

2.3. Sensitivity analysis to identify the significant indicators

Phase 3:

3.1. Interview

1.1. Classifying the items of the survey

The Student course-instructor evaluation survey (SCE) items can be classified into three groups as follows:

Items related to the richness of the course content, addressed by questions 1, 7, and 14.

Items related to the teaching skills of the teachers concerned, addressed by questions 2, 3, 4, 5, 6, 8, 9, 12, 15, and 17.

Items related to positive attitudes toward the teaching, addressed by questions 10, 11, 13, and 16, which aim to identify the learners’ attitudes toward assignments, exams, and grades.

1.2. Calculating the mean score

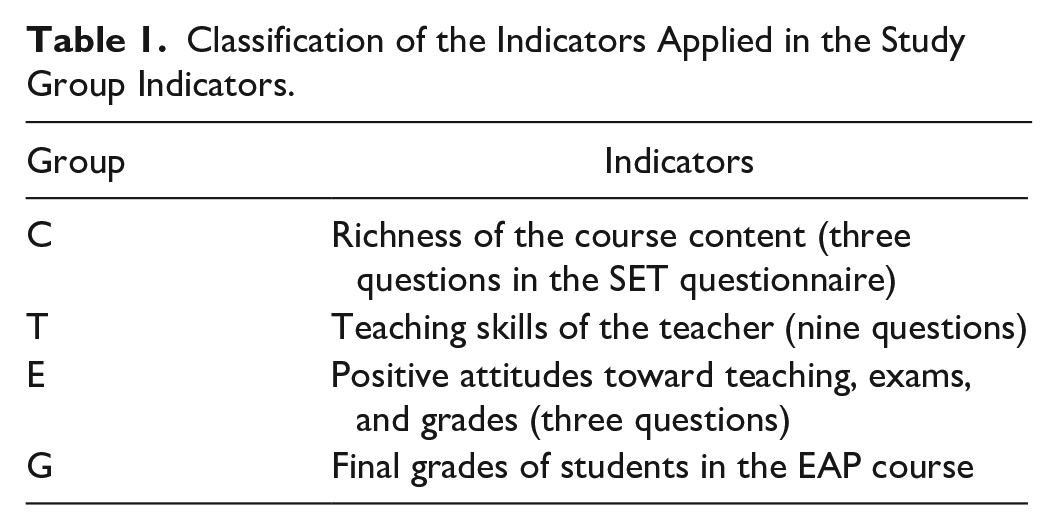

For each class, the mean scores of the above-mentioned questionnaire items and of the students’ final grades must be computed for use in the next part of the study. The study encompasses 443 classes in total. Together with the students’ final grades, the data obtained from the analysis of the raw scores were organized into four groups, each denoted by a letter: C (course content), T (teaching skills), E (assignments, exams, and grades), and G (final grades). Table 1 shows the classification of the questionnaire items.

Table 1. Classification of the Indicators Applied in the Study.

Table 2 illustrates a sample mean score calculated for one class with 20 students.

Table 2. Mean Score for Course ENGL 181, Fall 2010 to 2011.

G, the average final grade for the class, is 1.7789. To compute the mean score of C, for instance, let QS1, QS7, and QS14 (question scale) denote the scales of questions 1, 7, and 14 in the SCE questionnaire; the average of these three scales, (QS1 + QS7 + QS14) / 3, is 3.7436. The mean scores for T and E are likewise the averages of the question scales of the ten and four related questions in the SCE questionnaire, respectively.
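The per-class calculation above can be sketched in a few lines of Python. This is a minimal illustration, not the study's actual procedure: the function name `class_indicators`, the input layout (one class-average scale per question number), and the numbers in the usage example are assumptions. The question-to-group mapping follows the classification of the SCE items in step 1.1.

```python
from statistics import mean

# Question-to-group mapping taken from the classification of the SCE items
# (17 questions in total). Fixed here for illustration.
GROUPS = {
    "C": [1, 7, 14],                          # course content
    "T": [2, 3, 4, 5, 6, 8, 9, 12, 15, 17],   # teaching skills
    "E": [10, 11, 13, 16],                    # assignments, exams, grading
}


def class_indicators(question_scales: dict[int, float],
                     final_grades: list[float]) -> dict[str, float]:
    """Return the C, T, E and G mean scores for one class (one DMU).

    question_scales maps a question number to its class-average scale
    score (QS1, QS2, ...); final_grades lists each student's final grade.
    """
    scores = {group: mean(question_scales[q] for q in questions)
              for group, questions in GROUPS.items()}
    scores["G"] = mean(final_grades)  # average final grade of the class
    return scores


# Usage with made-up numbers (not the study's data):
qs = {q: 4.0 for q in range(1, 18)}
qs.update({1: 3.0, 7: 3.5, 14: 4.0})
print(class_indicators(qs, [1.0, 2.0, 3.0]))
# {'C': 3.5, 'T': 4.0, 'E': 4.0, 'G': 2.0}
```

Running this once per class yields the four-column table (C, T, E, G) that the later DEA steps consume.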

1.3. Computing the correlation coefficient to select appropriate indexes (inputs and outputs) to be used in the software

As already mentioned, to apply the PIM-DEA software, the indicators must be classified as inputs and outputs. As Martín (2006) points out, the reliability of the results depends on an appropriate selection of the indicators as inputs and outputs.

Therefore, to select inputs and outputs accurately from the above indicators, the correlations between them must be calculated; the inputs and outputs were then assigned according to the degree of correlation between the indicators. The degree of correlation between groups can be calculated by statistical methods such as regression analysis or a correlation coefficient test. This study uses the Pearson correlation coefficient.
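As a sketch of how the Pearson coefficients between indicator groups might be computed, the following self-contained Python computes the coefficient directly from its definition and then correlates every pair of groups. The function names and the per-class values in the usage example are hypothetical, not taken from the study.

```python
from math import sqrt


def pearson(x: list[float], y: list[float]) -> float:
    """Pearson product-moment correlation coefficient of two paired samples."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("samples must be paired and contain at least two values")
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for a constant sample")
    return sxy / sqrt(sxx * syy)


def correlation_table(groups: dict[str, list[float]]) -> dict[tuple[str, str], float]:
    """Correlate every pair of indicator groups; each list holds one mean per class."""
    names = list(groups)
    return {(a, b): pearson(groups[a], groups[b])
            for i, a in enumerate(names) for b in names[i + 1:]}


# Usage with hypothetical per-class means for four classes:
table = correlation_table({
    "C": [3.7, 3.9, 4.1, 4.3],
    "T": [3.8, 4.0, 3.9, 4.4],
    "E": [3.5, 3.6, 4.0, 4.2],
    "G": [1.8, 2.4, 2.1, 2.9],
})
for pair, r in table.items():
    print(pair, round(r, 3))
```

On Python 3.10 or later, `statistics.correlation` computes the same coefficient; the explicit version is shown so that each step of the formula is visible.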

As stated above, the third step in the data analysis was to calculate the correlations between indicators to ascertain which indicators could be selected as inputs and outputs. A correlation of .00 indicates that no relationship exists (Fraenkel & Wallen, 2006), in which case the indicators could not be considered as inputs or outputs. Both negative and positive correlations were accepted in this study.

In a similar study, Montoneri et al. (2012) also calculated correlation coefficients between output items and input items to ensure that the isotonicity principle was satisfied. A positive relationship is indicated when a high score on one instrument is accompanied by a high score on the other, or a low score on one by a low score on the other. A negative relationship is indicated when a high score on one instrument is accompanied by a low score on the other, and vice versa. All correlation coefficients fall between −1.00 and +1.00. Table 3 illustrates the correlation between the indicators.

Table 3. Correlation of Groups.

Based on the highly correlated groups, inputs and outputs were assigned for the analysis: group C (items related to the course syllabus and content) as input 1, group T (items related to teaching skills and performance) as input 2, group E (items related to assignments, exams, and grading) as output 1, and group G (the participants’ final grades) as output 2.

Therefore, the first question of the research regarding the indicators of teaching performance could be answered by the appropriate selection of inputs and outputs. If each EAP class is considered as one DMU, Figure 2 illustrates a typical DMU and the corresponding inputs and outputs.

Figure 2. A typical decision making unit and its inputs and outputs.

2.1. Applying the Performance Improvement Management software (PIM-DEA) version 3 to assess the efficiency of EAP classes

The software applied in this study was version 3, the latest version, of the Performance Improvement Management software for Data Envelopment Analysis (PIM-DEA) by Emrouznejad and Thanassoulis (2006). Following Ramanathan (2003), the performance of each decision making unit (DMU), here each class, was “evaluated in DEA by applying the concept of efficiency, the ratio of total outputs to total inputs” (p. 26). The efficiencies estimated by this method are relative, that is, relative to the best-performing class (DMU). The model applied in this study is one of the most widely used standard DEA models, the multiplier form of the output-oriented BCC model; output orientation means that the focus is on increasing the outputs. The multiplier form also computes the input and output weights used to obtain the efficiency value of the DMU under evaluation (discussed in the following section). If the efficiency value is 1.00 (100%), the DMU is considered efficient; otherwise, it is inefficient, and its efficiency value rates its performance. Applying the PIM-DEA software enables the researchers to evaluate the performance of EAP instructors from the efficiency angle.
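The model just described can be sketched as a linear program. The following is a minimal illustration of the output-oriented BCC model in multiplier form, solved with SciPy's `linprog`; it is not the PIM-DEA implementation. The function name, the plain nonnegativity bounds on the weights (no epsilon or weight restrictions), and the sign convention for the free variable `v0` are assumptions of this sketch. Efficiency is reported as 1/z*, so 1.0 corresponds to a score of 100.

```python
import numpy as np
from scipy.optimize import linprog


def bcc_output_multiplier(X: np.ndarray, Y: np.ndarray, o: int):
    """Output-oriented BCC model (multiplier form) for DMU o.

    X is an (n, m) array of inputs and Y an (n, s) array of outputs, one row
    per DMU (here, per class). The LP solved is

        min  v.x_o - v0
        s.t. u.y_o = 1
             u.y_j - v.x_j + v0 <= 0   for every DMU j
             u >= 0, v >= 0, v0 free

    Its optimum z* is >= 1, and the efficiency score is 1 / z* (1.0 = efficient).
    Returns (efficiency, input weights v, output weights u).
    """
    n, m = X.shape
    s = Y.shape[1]
    # Decision vector layout: [v_1..v_m, u_1..u_s, v0]
    c = np.concatenate([X[o], np.zeros(s), [-1.0]])
    A_ub = np.hstack([-X, Y, np.ones((n, 1))])
    b_ub = np.zeros(n)
    A_eq = np.concatenate([np.zeros(m), Y[o], [0.0]]).reshape(1, -1)
    bounds = [(0, None)] * (m + s) + [(None, None)]
    res = linprog(c, A_ub=A_ub, b_ub=b_ub, A_eq=A_eq, b_eq=[1.0],
                  bounds=bounds, method="highs")
    if not res.success:
        raise RuntimeError(res.message)
    return 1.0 / res.fun, res.x[:m], res.x[m:m + s]


# Usage: one constant input and one output, so each score is y_o / max(y).
X = np.array([[1.0], [1.0], [1.0]])
Y = np.array([[2.0], [4.0], [5.0]])
print([round(bcc_output_multiplier(X, Y, o)[0], 4) for o in range(len(X))])
```

With these toy data the three DMUs score 0.4, 0.8, and 1.0, because each class is compared with the largest output achievable from the same input. Optimal multiplier weights are often not unique, so weights from another solver or from PIM-DEA may differ even when the efficiency scores agree.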

2.2. Weight analysis

Applying the output-oriented BCC model yields not only the efficiency value for each DMU but also the weights, which signify the importance of each input and output in the efficiency value of the DMU under evaluation. In other words, the weights show which inputs or outputs play a significant role in the efficiency of each DMU, so the average of the weights gives an overall indication of the importance of each input and output index across all DMUs. Calculating the weights also enabled the researchers to discuss how to improve the efficiency of inefficient DMUs. The weights of the inputs and outputs for all 443 DMUs were computed by the software to identify the significant indicators affecting the efficiency value.
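The averaging and ranking step can be illustrated with a short sketch. It assumes that the per-DMU weights have already been exported from the DEA software as one record per DMU; the function name and the weight values below are hypothetical. The comparison is only meaningful if the indicators are on comparable scales, which is also an assumption of this sketch.

```python
from statistics import mean


def rank_by_average_weight(weights: list[dict[str, float]]) -> list[tuple[str, float]]:
    """Average each indicator's weight over all DMUs; sort from largest to smallest.

    weights holds one dict per DMU, e.g. as exported from the DEA software.
    """
    names = list(weights[0])
    averages = {name: mean(w[name] for w in weights) for name in names}
    return sorted(averages.items(), key=lambda item: item[1], reverse=True)


# Usage with hypothetical weights for three DMUs (not the study's values):
per_dmu = [
    {"input 1": 0.10, "input 2": 0.15, "output 1": 0.30, "output 2": 0.02},
    {"input 1": 0.12, "input 2": 0.14, "output 1": 0.25, "output 2": 0.04},
    {"input 1": 0.09, "input 2": 0.13, "output 1": 0.29, "output 2": 0.03},
]
for name, avg in rank_by_average_weight(per_dmu):
    print(f"{name}: {avg:.4f}")
```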

2.3. Sensitivity analysis to identify the significant indicators

Sensitivity analysis is an alternative method for identifying the significant indicators (Montoneri et al., 2012). In this method, the efficiency value of each DMU was recomputed with the inputs and outputs removed one at a time. Comparing the average of the new efficiency values with the original average showed how much the average efficiency decreased when each indicator was removed.
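The one-at-a-time removal procedure can be sketched as follows. To keep the block self-contained, it solves the output-oriented BCC model in its envelopment form, which by LP duality gives the same efficiency scores as the multiplier form. The function names and the random data for 20 hypothetical classes are illustrative assumptions, not the study's data or software.

```python
import numpy as np
from scipy.optimize import linprog


def bcc_output_scores(X: np.ndarray, Y: np.ndarray) -> np.ndarray:
    """Output-oriented BCC efficiency (envelopment form) for every DMU.

    For DMU o:  max eta  s.t.  X^T lam <= x_o,  eta*y_o <= Y^T lam,
                sum(lam) = 1,  lam >= 0.   Efficiency = 1 / eta*.
    """
    n, m = X.shape
    s = Y.shape[1]
    scores = np.empty(n)
    for o in range(n):
        c = np.concatenate([[-1.0], np.zeros(n)])             # maximise eta
        A_in = np.hstack([np.zeros((m, 1)), X.T])             # input limits
        A_out = np.hstack([Y[o].reshape(-1, 1), -Y.T])        # output expansion
        A_ub = np.vstack([A_in, A_out])
        b_ub = np.concatenate([X[o], np.zeros(s)])
        A_eq = np.concatenate([[0.0], np.ones(n)]).reshape(1, -1)  # VRS convexity
        res = linprog(c, A_ub=A_ub, b_ub=b_ub, A_eq=A_eq, b_eq=[1.0],
                      bounds=[(None, None)] + [(0, None)] * n, method="highs")
        if not res.success:
            raise RuntimeError(res.message)
        scores[o] = 1.0 / -res.fun                            # 1 / eta*
    return scores


def sensitivity(X, Y, input_names, output_names) -> dict[str, float]:
    """Drop one indicator at a time and report the fall in mean efficiency,
    ordered from the largest fall (most important) to the smallest."""
    base = bcc_output_scores(X, Y).mean()
    drops = {}
    for i, name in enumerate(input_names):
        drops[name] = base - bcc_output_scores(np.delete(X, i, axis=1), Y).mean()
    for r, name in enumerate(output_names):
        drops[name] = base - bcc_output_scores(X, np.delete(Y, r, axis=1)).mean()
    return dict(sorted(drops.items(), key=lambda item: item[1], reverse=True))


# Usage with random data for 20 hypothetical classes (2 inputs, 2 outputs):
rng = np.random.default_rng(1)
X = rng.uniform(3.0, 5.0, size=(20, 2))
Y = rng.uniform(1.0, 5.0, size=(20, 2))
print(sensitivity(X, Y, ["input 1", "input 2"], ["output 1", "output 2"]))
```

Removing an input or an output only deletes a constraint from the envelopment model, so each reported decrease should be zero or positive (up to solver tolerance). The indicator with the largest decrease ranks as the most important.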

3.1. The interview

On completion of the quantitative analysis, 20 instructors were interviewed individually. Dörnyei (2007) emphasizes that the semi-structured interview is appropriate when the researcher develops broad questions beforehand, which can lessen the likelihood of ready-made responses. A semi-structured interview was employed in this study to interpret the results of the data analysis. As noted by Fraenkel and Wallen (2006), the purpose of interviewing individuals is to find out “what is on their mind – what they think or how they feel about something” (p. 446). The interview consisted of pre-prepared, open-ended questions intended to encourage the interviewees to elaborate on the issues raised in the analysis, and it contained three sets of questions in accordance with the results of the analysis. According to the analysis, the indicators of output 1 had a significant impact on the teachers’ efficiency. As output 1 comprised items related to students’ satisfaction with grades, assignments, and exams, the first set of questions addressed the instructors’ perceptions of the exams and assignments and how these could be improved. In the second part of the interview, the researcher asked for the instructors’ opinions on how to run an efficient class, including the factors they believe affect EAP classes with regard to the management of time and energy. Four groups of instructors were selected for this section. Each interview lasted approximately 30 minutes, and all conversations were recorded. The findings of the interviews are discussed in the analysis and conclusion sections. The questions used in the study are given in Supplemental Appendix 5.

Analysis and results

The results of the quantitative analysis (Phase 2, step 2.1) for the 443 classes (DMUs) revealed that efficiency values ranged from 72 to 100. Nineteen of the 443 classes (4.29%) achieved an efficiency value of 100 and were therefore efficient over the period from the 2010 to the 2015 academic year. Fifty-four DMUs (12%) obtained efficiency values between 97 and 99, and the largest group (28%) obtained values between 91 and 96. Only 28 DMUs (6.3%) obtained efficiency values below 85. Figure 3 illustrates the efficiency values for all DMUs: the number on top of each column gives the number of DMUs (classes), and the numbers below give the efficiency values in percent. More than 65% (300) of the DMUs are efficient or almost efficient (above 90%). Results of the efficiency analysis are given in Supplemental Appendix 3. Figure 3 also shows the range of efficiency across the four academic years.

Figure 3. Efficiency value of 443 DMUs.
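A distribution like the one summarized in Figure 3 can be obtained from a list of efficiency scores by counting DMUs per band and converting the counts to percentages of all DMUs. In the sketch below, the band edges follow those mentioned in the text; the function name, the rounding rule, and the scores in the usage example are assumptions.

```python
def band_summary(scores: list[float],
                 bands: list[tuple[int, int]]) -> list[tuple[str, int, float]]:
    """Count DMUs per efficiency band and express each count as a % of all DMUs.

    scores are efficiency values on a 0-100 scale. Each is rounded to the nearest
    whole number before banding (an assumption, since results are reported as
    whole numbers); Python's round() sends exact halves to the even integer.
    bands are inclusive (low, high) ranges of whole numbers, e.g. (97, 99).
    """
    n = len(scores)
    rounded = [round(e) for e in scores]
    summary = []
    for low, high in bands:
        count = sum(1 for e in rounded if low <= e <= high)
        label = str(low) if low == high else f"{low}-{high}"
        summary.append((label, count, round(100 * count / n, 2)))
    return summary


# Usage with four hypothetical scores:
print(band_summary([100.0, 98.2, 95.6, 83.7], [(100, 100), (97, 99), (91, 96), (0, 84)]))
# [('100', 1, 25.0), ('97-99', 1, 25.0), ('91-96', 1, 25.0), ('0-84', 1, 25.0)]
```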

The weight analysis (Phase 2, step 2.2) revealed that output 1 (items related to student satisfaction with assignments, exams, and grades), with an average weight of 0.2818, was the most significant indicator, and input 2 (items on teaching performance and skills), with 0.1429, was the second most significant. Input 1 (items on the course syllabus) ranked third (0.1053), and output 2 (students’ final grades) was the least important (0.030). Table 4 shows the value for each input and output. Supplemental Appendix 3 contains the efficiency values and the related input and output weights for each DMU.

Table 4. Weights for Inputs and Outputs.

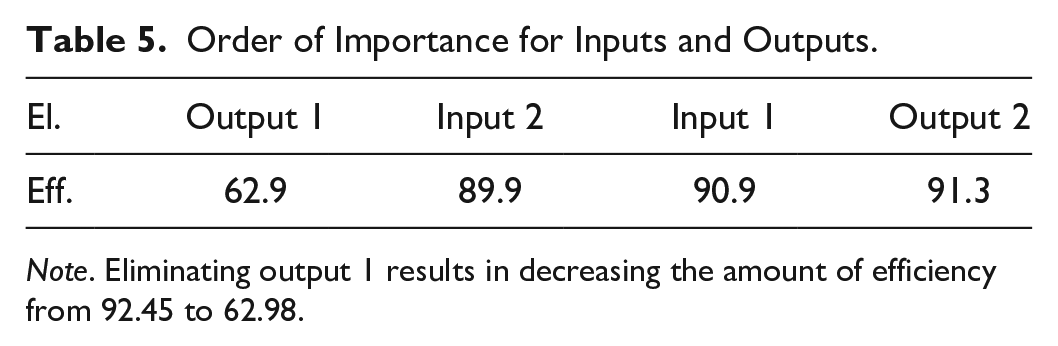

In the sensitivity analysis (Phase 2, step 2.3), removing the most significant indicator, output 1, had the greatest impact on the average efficiency value; that is, eliminating output 1 produced a significant decline in the efficiency values of all DMUs. Removing input 2, input 1, and output 2 produced progressively smaller decreases, in that order. Table 5 presents these results in order of importance.

Table 5. Order of Importance for Inputs and Outputs.

In all subsequent calculations, the order of importance of the four indices (2 inputs, 2 outputs) remained unchanged. As mentioned earlier, student satisfaction with assignments, exams, and grades (output 1) was the most significant indicator, followed by input 2 (teachers’ performance and punctuality in class) and input 1 (indicators related to course content), while output 2 (the students’ overall grades) contributed least to a teacher’s efficiency in class. Supplemental Appendix 4 shows the results of the sensitivity analysis. These results support the findings of the weight analysis in the previous section.

Following the analysis, the instructors were interviewed to interpret the results. On the first question, about assignments, all instructors considered assignments effective learning tools and favored plagiarism checks, as many essays are copied from other sources. Almost one third of the instructors thought that the assignments should be handed to the students as a full pack at the beginning of the semester and emphasized that the aims of the assignments need to be made clear to the students: “If the students know the aims behind the assignments, they do it eagerly.” “They should know the assignments are meaningful.” Almost all the instructors recommended building a good rapport between teachers and learners. On the second question, about the exam, the majority of the instructors agreed with the exam format but stressed that the writing section should be given more weight and included in the midterm exam as well: “You find out the mistakes of the students while correcting their essays in the final exam, which is too late and there is no chance of improving.” Almost all believed that students do not learn from their exams but learn for them. On the third question, about the grading system, all the teachers believed that informing students about the grading process gives them a clear understanding of the assignments and exams: “There should be a mutual understanding in grading between students and the teachers.” “If the students know about the grading process, they can accomplish the course objectives better.” The last part of the interview concerned teacher performance with regard to efficiency and how it can be improved. All the instructors considered it important, yet only 25% prepared a lesson plan before class; the others believed lesson plans are for novice teachers.
Almost all believed that all instructors should be involved in preparing the materials for assignments and exams, as this affects their teaching performance. Almost all also favored an annual meeting to evaluate course materials and stressed that students need clear course objectives.

Conclusion

Since efficiency is a major factor in delivering quality education, it is crucial to consider the concept of quality in teacher education. Evaluating teacher performance, especially from the efficiency angle, has not received adequate attention in research to date. The present study therefore explores this issue for teachers delivering EAP courses. The research combined two sources of data. The first was a course-instructor evaluation questionnaire, completed by 10,000 undergraduate students in English-medium faculties at a university in North Cyprus over four consecutive years. The second was an interview with the EAP instructors.

On the basis of the analyzed data, the results suggest the following points of interest. First, the study evaluated teachers’ performance from the learners’ point of view (the course-instructor evaluation survey) and in relation to the learners’ own performance (their final grades). Second, applying a different method of data analysis (DEA in this study) offered a distinct viewpoint on teaching. It first measured each teacher’s efficiency in class and then prioritized the indicators affecting that efficiency, using two methods of analysis (weighting and sensitivity analysis) to cross-check the results. Third, teachers were interviewed to elicit more information about EAP class management. The most important finding was that the majority of EAP instructors achieved efficiency values between 88% and 99%. Given that each EAP class had approximately 28 multinational students, this level of efficiency seems quite reasonable. Moreover, 19% of EAP instructors achieved full efficiency (100%).

Another important finding concerned the prioritization of the indicators affecting teachers’ efficiency. According to the quantitative analysis, students’ satisfaction with exams and assignments was the most significant indicator, and teachers’ performance and punctuality was the second most important. Student satisfaction with course content and the students’ final grades ranked third and fourth, respectively. Moreover, the higher the students’ satisfaction with exams, assignments, and the grading system, the more efficient the EAP instructors were found to be.

The quantitative analysis was followed by an interview with the instructors. It aimed to elicit information that would help interpret the data and to identify ways of improving teachers’ efficiency. According to the interview data, EAP instructors can run a more efficient class in several ways. They can conduct a needs analysis at the beginning of the course, clarify the course objectives to the students, and explain the aims of the assignments. They can also communicate the allocated time clearly and inform the students about the grading system.

This result, in which course content ranked only third, is at variance with Montoneri et al. (2012), who highlight the role of rich course content in determining efficiency.

This study adds to previous literature by evaluating the efficiency of instructors at the micro level. It contributes to the field of teacher evaluation, since the efficiency of universities in general, and of departments in particular, has not been widely investigated. Furthermore, it addresses a gap in the literature by studying the efficiency of teachers’ performance in class, rather than the types of effectiveness traditionally discussed in research. The interview with the instructors also yielded practical techniques for improving teachers’ efficiency.

In light of the findings of the present study, some implications for teacher education and training can be suggested, especially for those concerned with EAP courses. For instance, language teachers and instructors could consider introducing the criteria and grading system to their students, with an emphasis on how these relate to the aims and objectives of the course. Moreover, EAP teachers are advised to go through the grading procedure with the students to help them develop critical thinking skills in this respect. As learners become aware of the evaluation and grading system and practice critical thinking skills, they can become more autonomous learners. Furthermore, more group work is suggested in EAP classes, since this would save teachers’ time and improve their monitoring skills. As Allwright (2006) points out, given the idiosyncrasy of individual learners, the teacher can take one of two approaches. The teacher either tries to operate on a one-to-one basis, teaching each learner separately, or offers multiple learning opportunities from which learners select according to their perceived needs. In conclusion, since efficiency is an indispensable component of quality in class, teachers could improve the quality of their performance in EAP by attending to the indicators that affect their efficiency.

Further research is needed to examine how teachers’ experience influences their performance and thereby their efficiency. Future research could also evaluate the efficiency of EAP delivery while distinguishing between learners’ levels of performance. More studies should examine different types of rubrics for grading academic writing. The aim would be to raise learners’ awareness of their mistakes in writing and thus help them improve their academic writing skills.

Finally, more research is needed on the analysis of teaching performance. Applying methods of analysis such as DEA can reveal relationships between teaching and learning indicators that might otherwise remain hidden.

Supplemental Material

sj-docx-1-sgo-10.1177_21582440211050386 – Supplemental material for A Study of Teacher Performance in English for Academic Purposes Course: Evaluating Efficiency

Supplemental material, sj-docx-1-sgo-10.1177_21582440211050386 for A Study of Teacher Performance in English for Academic Purposes Course: Evaluating Efficiency by Solmaz Ghaffarian Asl and Necdet Osam in SAGE Open

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Supplemental Material

Supplemental material for this article is available online.

References
