Abstract

This study describes the development phases and initial psychometric evaluation of the Integrated Care Competency Scale (ICCS), which broadly assesses graduate counseling students’ perceived competencies in integrated care. Using quantitative methods with samples of graduate counseling students (main study n = 243), we refined the ICCS to 65 items over a three-phase process. Phase 1 reports on item generation and content validity, Phase 2 describes the results of a pilot study and preliminary psychometric properties, and Phase 3 presents the exploratory factor analysis and further psychometric analyses conducted to assess the usefulness and reliability of the ICCS items. Results from the three phases revealed satisfactory reliability, factor structure, and usefulness of the newly constructed ICCS in measuring integrated care competencies. Cronbach’s alpha coefficient for the overall scale was .98. Discussion, limitations, and implications for future research are presented.

Integrated care systematically coordinates physical and behavioral health care, usually by placing behavioral health practitioners, including professional counselors, in primary care environments or by placing primary care practitioners in specialty behavioral health facilities (Substance Abuse and Mental Health Services Administration–Health Resources and Services Administration [SAMHSA-HRSA] Center for Integrated Health Solutions [CIHS], n.d.). The importance of this type of care is rooted in its holistic approach, which (a) makes care accessible and continuous and increases the productivity of individuals in the community (World Health Organization [WHO], 2019a); (b) attends to the whole person (Aguirre & Carrion, 2013); and (c) reduces the cost and stigma associated with seeking help for mental health concerns (WHO, 2019b). Fundamentally, mental and physical health are interrelated, sharing many underlying causes and overarching consequences, and are best managed using integrated approaches (Allen, Balfour, Bell, & Marmot, 2014). This interdependence necessitates collaborative efforts to provide “one-stop” primary and mental health services, as exemplified by organizations such as Kaiser Permanente and Cherokee Health Systems.

This fundamental goal of collaborative health services presents several clinical and operational challenges to counseling students and health practitioners (Edwards & Patterson, 2006; Fox, Hodgson, & Lamson, 2012). Previous literature indicates that training students and professionals in the integrated care modality is necessary, especially when mental health professionals are placed in primary care facilities, because such training fosters competency and bridges the gap created by the traditionally siloed nature of professional training (Blount & Miller, 2009; Edwards & Patterson, 2006). Counselors working in integrated care settings have advocated for more training specific to this modality. Consistent with this, Gersh (2008) identified a need to train counselor trainees in integrated care, a need later corroborated by Glueck (2015).

Recently, training initiatives in integrated care have increased, in part because of SAMHSA-HRSA’s sponsored development of competency categories (Hoge, Morris, Laraia, Pomerantz, & Farley, 2014) and training grants for Behavioral Health Workforce Education and Training (BHWET; HRSA, 2019) programs. Data show that 34 counselor education programs have received BHWET grants, 32 of which are accredited by the Council for Accreditation of Counseling and Related Educational Programs (CACREP, 2018). These training initiatives call for a psychometrically sound and useful instrument to evaluate counselor trainees’ competencies in integrated care. At the time of writing, no instrument exists to measure integrated care competencies. Therefore, the purpose of this article is twofold: (a) to describe the process used in the initial development of the Integrated Care Competency Scale (ICCS) and (b) to assess the reliability and factor structure of the ICCS.

Conceptual Framework and Literature Review

Integrated care has its foundation in the biopsychosocial model (Engel, 1977), which holds that health treatment is most effective when biological and psychosocial problems are considered together in a holistic approach. Over the years, studies have reported an increased prevalence of mental and behavioral health problems across the life span (Centers for Disease Control and Prevention, 2011; Kataoka, Zhang, & Wells, 2002). Because 50% to 70% of consumers consult with and/or seek treatment from primary care practitioners (Curtis & Christian, 2012; Gatchel & Oordt, 2003), integrated care has become necessary. However, health professional education has remained largely siloed, prompting calls for competency training (Blount & Miller, 2009; Edwards & Patterson, 2006; Gersh, 2008; Glueck, 2015).

A therapist’s competence is described as “the extent to which a therapist has the knowledge and skill required to deliver a treatment to the standard needed for it to achieve its expected effects” (Fairburn & Cooper, 2011, p. 374). Self-efficacy theory is an often-used approach for determining counselor trainees’ competency and self-confidence in a domain of behavior (Watson, 2012). According to Bandura (1986, 1997), self-efficacy, sometimes referred to as perceived ability, is the confidence people have in their ability to complete a required task. Although self-efficacy does not necessarily equate to competence, it motivates people to attempt behaviors they might otherwise avoid.

Published studies of counselor trainees’ self-efficacy have identified self-efficacy as an important factor in training outcomes and developmental milestones in counselor competencies (Easton, Martin, & Wilson, 2008; Larson & Daniels, 1998; Mullen, Uwamahoro, Blount, & Lambie, 2015). For the purpose of this study, we defined perceived competency as the extent to which individuals perceive themselves to be skilled and effective in integrated care. In professional counseling and related mental health professions, competence is considered a complex construct to define because of the multifaceted nature of the standards often used as benchmarks for one’s knowledge, skills, and attitudes (Sommer-Flanagan, 2015). Therefore, we adapted the SAMHSA-HRSA core competencies (Hoge et al., 2014), which provide organizations and individual professionals a “gold standard” for the skill set needed to deliver integrated care services, as the framework for the initial development of the ICCS.

The SAMHSA-HRSA core competencies are “intended to serve as a resource for providers to shape job descriptions, orientations programs, supervision, and performance reviews for workers delivering integrated care . . . and as a resource for educators as they shape curricula and training programs” (Hoge et al., 2014, p. 5). In addition, Hoge and colleagues indicated that the core competencies can be incorporated into assessment tools and used as a reference for shaping workforce training, with the primary goal of guiding the integration of behavioral health into primary care.

The SAMHSA-HRSA core competencies are organized into nine components: (a) interpersonal communication—ability to establish rapport and communicate effectively with consumers and practitioners; (b) collaboration and teamwork—ability to function effectively in an interprofessional team; (c) screening and assessment—ability to use brief, evidence-based assessment tools to determine consumer needs; (d) care planning and care coordination—ability to develop and implement integrated care treatment plans, ensuring access to services and information by all stakeholders; (e) intervention—ability to provide brief, focused prevention and recovery treatment, as well as long-term treatment for consumers who have chronic illnesses; (f) cultural competence and adaptation—ability to provide services that are culturally relevant to consumers; (g) system-oriented practice—ability to function within the organizational and financial structure of the system; (h) practice-based learning and quality improvement—ability to assess and continually improve services as an individual or in an interprofessional team; and (i) informatics—ability to use information technology to support integrated care (Hoge et al., 2014).

Because no instrument exists to measure competencies in integrated care, this study aimed to develop the ICCS based on the nine SAMHSA-HRSA core competencies and to evaluate its reliability and factor structure. In Phase 1, we discuss item generation and content validity. In Phase 2, we describe the pilot study and the preliminary psychometric properties of the ICCS. In Phase 3, we report on the main study and further psychometric analyses of the ICCS with a larger sample. The following sections describe each phase of developing a psychometrically sound instrument.

Phase 1: Item Development

Item Generation and Format

The purpose of Phase 1 was to develop, and provide content validity evidence for, a potential set of items constituting a scale that evaluates graduate counseling students’ competencies in integrated care. Phase 1 comprised the first five steps of scale development proposed by DeVellis (2012): (a) determining what to measure, (b) generating items, (c) deciding on the measurement format, (d) having experts review the items, and (e) including reliable items. Based on current and relevant literature and an examination of the SAMHSA-HRSA standards, the researchers generated an initial pool of 95 items covering the nine major components of integrated care competency. One of the researchers is well versed in current trends and educational programs in integrated care. Competence was conceptualized as “the extent to which a therapist has the knowledge and skill required to deliver a treatment to the standard needed for it to achieve its expected effects” (Fairburn & Cooper, 2011, p. 374).

The scale was designed as a self-report survey with items generated from established competencies that reflect the construct of interest (DeVellis, 2012). Items were rated on a 6-point Likert-type scale on which participants indicated their level of agreement or disagreement. We chose an even-numbered 6-point format because it captures a wide range of responses (DeVellis, 2012), suits the purpose of this study, and has the potential to discriminate between participants. In addition, because a 6-point scale has no neutral midpoint, it forces a choice, avoids an “easy out,” and yields agreement and disagreement groupings that are easier to interpret and discuss. Responses were coded as follows: strongly disagree = 1, disagree = 2, slightly disagree = 3, slightly agree = 4, agree = 5, and strongly agree = 6.
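As an illustration of the coding scheme above, the following Python sketch maps each response label to its numeric code. The dictionary and function names are hypothetical and do not describe the authors’ data-processing code; the sketch simply makes explicit that the format has six ordered options and no neutral midpoint.

```python
# Numeric codes for the ICCS 6-point agreement scale (no neutral midpoint).
LIKERT_CODES = {
    "strongly disagree": 1,
    "disagree": 2,
    "slightly disagree": 3,
    "slightly agree": 4,
    "agree": 5,
    "strongly agree": 6,
}


def code_response(label):
    """Convert a response label to its 1-6 code; unknown labels raise an error."""
    key = label.strip().lower()  # tolerate stray spaces and capitalization
    if key not in LIKERT_CODES:
        raise ValueError(f"unrecognized response label: {label!r}")
    return LIKERT_CODES[key]


print([code_response(x) for x in ["Agree", "Slightly disagree", "strongly agree"]])
# → [5, 3, 6]
```

Because there is no midpoint code, a label such as “neutral” is rejected rather than silently mapped.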

Content Validity

After generating the initial items, we convened a panel of experts to reach consensus on the content areas and the structured items proposed to measure integrated care competencies. Four experts (two practitioners and two research methodologists) participated, and each provided feedback that helped revise the items. The experts and the researchers met to decide which items to include on the scale based on the SAMHSA-HRSA standards and theoretical relevance, reaching consensus on the final set of items after two iterations. In addition, we conducted cognitive interviews with four students who met the criteria for potential Phase 2 participants. In these interviews, students read the items, took the survey, and then provided feedback on readability, clarity, and conciseness. The expert reviews and cognitive interviews reduced the scale from 95 to 73 items. The 22 removed items were redundant, overrepresented a single subscale, or lacked theoretical relevance. The 73 retained items were based on the conceptual definition of the construct and on the SAMHSA-HRSA standards that identify the dispositions graduate counseling students need in integrated care settings.

Phase 2: Pilot Study and Preliminary Psychometrics

The content validity and item development work described in Phase 1 produced a potential set of items intended to assess the integrated care competencies of graduate counseling students. These items were developed in consultation with leading experts and research methodologists with research and practical expertise in survey development and integrated care counseling. The next logical step was therefore to explore the psychometric properties of these 73 items. Because the items were based on the nine SAMHSA-HRSA core competencies, the scale was designed with nine subscales, or factors. The expert panel and one potential participant reviewed this second version of the scale before Phase 2. Phase 2 then explored whether the items developed in Phase 1 could reliably measure the factors of the ICCS as proposed by the SAMHSA-HRSA standards for an integrated care model.

Research Questions

The research questions that guided the Phase 2 study were as follows:

Method

Site and Participants

Participants were recruited from graduate counseling degree programs at three CACREP-accredited universities in a Midwestern state of the United States. After institutional review board (IRB) approval was obtained, a recruitment email containing a Qualtrics survey link was sent to liaisons in the selected CACREP programs. Of the 40 responses received, four contained no usable data and were excluded during data cleaning and coding, leaving 36 graduate counseling students who voluntarily completed the 73-item Likert-type survey. According to Converse and Presser (1986), a sample of 25 to 75 participants is sufficient for a pilot study, and Johanson and Brooks (2010) indicated that, when a pilot sample size must be specified, 30 participants are adequate. The sample of 36 was therefore deemed appropriate.

Phase 2 used purposeful sampling, which allowed the researchers to choose respondents who best fit the study. All graduate counseling students at the three universities, excluding school counseling and student affairs students (n = 187), were eligible to participate in this pilot study. The 36 participants yielded a response rate of about 19%. Most participants were master’s degree students (n = 30, 83.3%), clinical mental health students (n = 32, 88.9%), and female (n = 28, 77.8%). Half of the participants (n = 18, 50.0%) had no training in integrated care. Participants’ mean age was 35.06 years (SD = 11.14), ranging from 22 to 56 years. Responses to each ICCS item were coded from 1 (strongly disagree) to 6 (strongly agree).

Data Collection and Analysis

Data were collected through a web-based, self-administered questionnaire containing the structured items developed from the SAMHSA-HRSA conceptualization of integrated care and the conceptual definition of competency. The survey link was distributed to participants via email, and responses were collected and downloaded through Qualtrics. Checks for item redundancy, descriptive statistics, item–total correlations, and reliability analysis were used to identify and evaluate the potential set of items. Specifically, Cronbach’s alpha was used to estimate internal consistency reliability.
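For reference, the standard formula for Cronbach’s alpha is shown below, where $k$ is the number of items, $\sigma^2_{Y_i}$ is the variance of item $i$, and $\sigma^2_X$ is the variance of the total (summed) score. The notation is ours, not the authors’.

$$
\alpha = \frac{k}{k-1}\left(1 - \frac{\sum_{i=1}^{k} \sigma^2_{Y_i}}{\sigma^2_X}\right)
$$

Because the total-score variance equals the sum of the item variances plus twice the sum of the interitem covariances, alpha rises as items covary more strongly relative to their individual variances.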

Results

Item Analysis

Item analyses were performed on the coded data to evaluate the reliability and construct validity of the items. The analyses examined the correlation matrix, item–total correlations, and Cronbach’s alpha for each subcategory and the overall scale. Items with low correlations with the other items were deleted. In addition, items with item–total correlations lower than .30 were deleted (Field, 2018; Osterlind, 2010). Items that lowered the overall Cronbach’s alpha were also removed if their deletion was conceptually appropriate and did not affect the content validity of the construct. Ultimately, seven items were removed because (a) some redundant items were highly correlated and thus conceptually interrelated, (b) others had low item–total correlations, and (c) some had statistically and conceptually similar interitem correlations, suggesting that they were measuring the same construct. Together, these deletions increased the overall Cronbach’s alpha.
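The item-analysis rules described above (flag items whose item–total correlation falls below .30 or whose removal would raise alpha) can be sketched in Python with NumPy. This is a minimal illustration, not the authors’ procedure: it assumes the corrected item–total correlation (each item correlated with the sum of the remaining items), and the function names and simulated data are hypothetical.

```python
import numpy as np


def cronbach_alpha(items):
    """Cronbach's alpha for a 2-D array: rows = respondents, columns = items."""
    items = np.asarray(items, dtype=float)
    k = items.shape[1]
    sum_item_var = items.var(axis=0, ddof=1).sum()  # sum of item variances
    total_var = items.sum(axis=1).var(ddof=1)       # variance of total score
    return k / (k - 1) * (1 - sum_item_var / total_var)


def item_analysis(items, min_r=0.30):
    """Corrected item-total r and alpha-if-deleted for each item, with flags."""
    items = np.asarray(items, dtype=float)
    overall = cronbach_alpha(items)
    report = []
    for j in range(items.shape[1]):
        rest = np.delete(items, j, axis=1)  # all items except item j
        r_it = np.corrcoef(items[:, j], rest.sum(axis=1))[0, 1]
        alpha_del = cronbach_alpha(rest)
        report.append({
            "item": j,
            "item_total_r": r_it,
            "alpha_if_deleted": alpha_del,
            # Flag weak items, or items whose removal would raise alpha.
            "flag": bool(r_it < min_r or alpha_del > overall),
        })
    return overall, report


# Hypothetical data: four items driven by one trait plus one unrelated item.
rng = np.random.default_rng(42)
trait = rng.normal(size=(150, 1))
demo = np.hstack([0.8 * trait + 0.6 * rng.normal(size=(150, 4)),
                  rng.normal(size=(150, 1))])
alpha, rows = item_analysis(demo)
print(round(alpha, 3), [r["item"] for r in rows if r["flag"]])
```

In practice, flagged items would still be reviewed for theoretical relevance before deletion, as the text describes, rather than removed automatically.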

The purpose of Phase 2 was to provide evidence supporting the development of the ICCS, a self-report scale designed to assess graduate counseling students’ competency in integrated care settings. The 36 completed surveys on the 65 remaining items yielded a Cronbach’s alpha of .975, suggesting that the survey provided reliable measurement of integrated care competencies. In summary, a total of 65 items (89%) were retained in the initial prototype of the ICCS for the main study in Phase 3. Table 1 reports Cronbach’s alpha for each subcategory and for the overall scale, both for the initial items and after item deletion.

Table 1. Cronbach’s Alpha for Each Subcategory and the Overall ICCS: Pilot Study (n = 36).

Note. ICCS = Integrated Care Competency Scale.

Phase 3: Main Study and Further Psychometrics

Preliminary findings from Phase 2 yielded a potential set of items intended to measure graduate counseling students’ perceived competency in integrated care. These items were developed through the consensus of leading experts and scholars with research and practical expertise in counseling, integrated care, and scale development. The next logical step was to further explore the psychometric properties of these 65 items. Therefore, in Phase 3, the researchers examined whether the 65 items retained from Phase 2 could reliably operationalize the factors of integrated care competency captured by the ICCS.

Research Question

The research question that guided Phase 3 in this study was as follows:

Method

Site and Participants

Participants were recruited nationally from CACREP-accredited counseling programs in the United States. A request to CACREP yielded a list of 349 institutions with accredited counseling programs. To be included, participants needed to be (a) at least 18 years old, (b) enrolled in a CACREP-accredited counseling program, (c) working toward a degree in counselor education, and (d) enrolled in a clinical mental health, addiction, clinical rehabilitation, college, or “marriage, couple, and family therapy counseling” program. Participation was not restricted by field experience or training; students with and without field experience and/or training in integrated care were eligible. However, students in school counseling and student affairs programs were excluded because of the operational definition used for the SAMHSA-HRSA integrated care competencies.

We used both probability and nonprobability sampling to identify participants in Phase 3. Purposeful sampling was used to identify counseling programs that had received HRSA-BHWET integrated care grants, which promote the placement of graduate counseling students in integrated care environments. To identify these programs, we searched the HRSA-BHWET data warehouse for counseling programs that had received the grant. The search showed that nine of the 349 institutions with CACREP-accredited programs had received HRSA-BHWET integrated care grants at the time of data collection; however, only six of these nine programs agreed to participate in the study.

Of the remaining 340 institutions, three were excluded because they had participated in Phase 2. The remaining 337 institutions were randomly sampled using Microsoft Excel. Of the institutions selected, nine CACREP-accredited programs voluntarily agreed to participate. In total, 15 CACREP-accredited graduate counseling programs participated in Phase 3. After the data were screened for normality and outliers, 243 participants were retained in the final data set for analysis and interpretation. The usable data represented a response rate of about 18.6%, which was relatively low but adequate for the planned analyses. Participants were graduate counseling students in all specialties except school counseling and student affairs. Most participants were master’s degree students (n = 225, 92.6%), in the clinical mental health specialty (n = 187, 75.7%), female (n = 211, 86.8%), not trained in integrated care (n = 186, 76.5%), and interested in working in an integrated care environment (n = 224, 92.2%). Participants with training in integrated care (n = 57) reported coursework (n = 45), workshops (n = 21), conferences (n = 19), and certificate training (n = 5) as their modes of training in integrated care.
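The Phase 3 sampling frame can be illustrated with a short Python sketch that uses the standard `random` module in place of Microsoft Excel. The institution IDs and the number of institutions drawn are hypothetical (the text does not state how many institutions the randomization selected); only the counts 349, 9, 3, and 337 come from the study.

```python
import random


def draw_random_sample(frame, excluded, k, seed=None):
    """Simple random sample of k institutions, without replacement, after exclusions."""
    excluded = set(excluded)
    pool = [inst for inst in frame if inst not in excluded]  # remaining sampling frame
    rng = random.Random(seed)                                # seeded for reproducibility
    return pool, rng.sample(pool, k)


# Hypothetical IDs standing in for the 349 CACREP-accredited institutions.
frame = [f"INST-{i:03d}" for i in range(1, 350)]
grant_recipients = frame[:9]   # hypothetical: 9 HRSA-BHWET recipients (sampled purposefully)
pilot_sites = frame[9:12]      # hypothetical: 3 Phase 2 institutions
pool, invited = draw_random_sample(frame, grant_recipients + pilot_sites, k=30, seed=2019)
print(len(pool), len(invited))
# → 337 30
```

Sampling without replacement guarantees that no institution is invited twice, and excluding the grant recipients before the draw keeps the purposeful and random portions of the sample separate.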

Because participants could select more than one mode of training, these counts are not mutually exclusive and sum to more than 57. There were 129 (53.1%) participants with no field experience and 114 (46.9%) participants with field experience. Of those with field experience, 38 (33%) had been placed in an integrated care setting, and 76 (67%) had been placed in other field settings that were not integrated care settings. Participants’ mean age was 30.24 years (SD = 10.13), ranging from 21 to 70 years.

Data Collection and Analysis

We emailed the recruitment invitation containing the survey URL to liaisons at the participating universities, who forwarded it to their students. Data were collected via Qualtrics and analyzed using SPSS Version 22. Scores for negatively worded items were reverse-coded so that all items were scored in the same direction. Item analysis, item–total correlations, internal consistency reliability analysis, and exploratory factor analysis (EFA) were used to identify and evaluate the potential set of items. In particular, Cronbach’s alpha was used to estimate the internal consistency reliability of each subscale and of the overall ICCS.
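On a 1-to-6 scale, reverse coding maps a response x to 7 − x (that is, minimum + maximum − x), so 1 and 6, 2 and 5, and 3 and 4 swap. The Python sketch below is a hypothetical illustration: the article does not identify which ICCS items are negatively worded, so the item IDs are invented.

```python
SCALE_MIN, SCALE_MAX = 1, 6  # ICCS codes: 1 = strongly disagree ... 6 = strongly agree


def reverse_code(score):
    """Reverse-code a 1-6 response so that 1 <-> 6, 2 <-> 5, 3 <-> 4."""
    if not SCALE_MIN <= score <= SCALE_MAX:
        raise ValueError(f"score {score} is outside {SCALE_MIN}-{SCALE_MAX}")
    return SCALE_MIN + SCALE_MAX - score


def score_responses(responses, negative_items):
    """Return a copy of {item_id: score} with negatively worded items reversed."""
    return {item: reverse_code(s) if item in negative_items else s
            for item, s in responses.items()}


# Hypothetical respondent; item IDs and the negatively worded set are assumptions.
print(score_responses({"Q1": 5, "Q2": 2, "Q3": 6}, negative_items={"Q2"}))
# → {'Q1': 5, 'Q2': 5, 'Q3': 6}
```

Applying the reversal twice returns the original score, which is a quick check that no item was reversed by mistake.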

Results

Item Analysis

An item analysis was performed to evaluate the reliability of the items. Items with item–total correlations lower than .30 were to be deleted (Field, 2018; Osterlind, 2010), and items that lowered the overall Cronbach’s alpha were to be removed if they also lacked theoretical relevance. However, all items were retained because (a) all were theoretically relevant, (b) none had an item–total correlation lower than .30, and (c) removing any item would not have increased the overall Cronbach’s alpha. The 243 completed surveys on the 65 items yielded a Cronbach’s alpha of .98, indicating high internal consistency reliability for the ICCS items.

Factor Analysis

An EFA was conducted to determine the appropriate number of common factors and to reveal, through the magnitude of the factor loadings, which measured variables were reasonable indicators of the ICCS subcategories (Brown, 2015). A principal factor analysis (PFA) with varimax rotation, an orthogonal rotation method, was applied to the 65 items. The Kaiser–Meyer–Olkin (KMO) measure indicated that the sample was adequate for the analysis (Field, 2018), with KMO = .95. The KMO statistic describes how small the partial correlations are relative to the original zero-order correlations; a value close to 1, reflecting small partial correlations, indicates that the variables share common factors. In addition, Bartlett’s test of sphericity was significant (p < .001), indicating that the correlation matrix differed from an identity matrix and that the items were sufficiently correlated to conduct an EFA with a PFA approach.
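To show what the KMO and Bartlett statistics compute, the following NumPy sketch implements the standard overall KMO formula (squared zero-order correlations relative to squared zero-order plus squared partial correlations, with partial correlations taken from the inverse correlation matrix) and Bartlett’s chi-square statistic, −[(n − 1) − (2p + 5)/6] ln|R| with p(p − 1)/2 degrees of freedom. It is an illustration on simulated data, not a reproduction of the authors’ SPSS output; the p value would come from the chi-square distribution (for example, `scipy.stats.chi2.sf(stat, df)`).

```python
import numpy as np


def kmo(data):
    """Overall Kaiser-Meyer-Olkin measure of sampling adequacy."""
    r = np.corrcoef(data, rowvar=False)           # zero-order correlations
    s = np.linalg.inv(r)                          # inverse correlation matrix
    d = np.sqrt(np.outer(np.diag(s), np.diag(s)))
    partial = -s / d                              # partial (anti-image) correlations
    off = ~np.eye(r.shape[0], dtype=bool)         # off-diagonal mask
    r2 = np.sum(r[off] ** 2)
    p2 = np.sum(partial[off] ** 2)
    return r2 / (r2 + p2)


def bartlett_sphericity(data):
    """Bartlett's chi-square statistic and df (H0: R is an identity matrix)."""
    n, p = data.shape
    r = np.corrcoef(data, rowvar=False)
    _, logdet = np.linalg.slogdet(r)              # numerically stable ln|R|
    stat = -(n - 1 - (2 * p + 5) / 6) * logdet
    df = p * (p - 1) // 2
    return stat, df


# Hypothetical one-factor data: 300 respondents, 6 items.
rng = np.random.default_rng(0)
factor = rng.normal(size=(300, 1))
items = 0.8 * factor + 0.6 * rng.normal(size=(300, 6))
print(round(kmo(items), 3), bartlett_sphericity(items))
```

Strongly intercorrelated items leave little unique covariance for the partial correlations, which is why KMO approaches 1 when a common factor is present.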

Upon evaluating the extracted components, the researchers found no clear discrepancy between the number of factors suggested by Kaiser’s eigenvalue criterion (> 1.00) and by the scree plot. Nevertheless, we conducted a parallel analysis (Goodrich, Selig, & Trahan, 2012; O’Connor, 2000) for further evidence. Parallel analysis is a statistical procedure intended to guide factor retention in EFA: random data sets are generated with the same number of cases and variables as the original data, eigenvalues are extracted from the random data, and these are compared with the eigenvalues of the original data. According to O’Connor (2000), factors are retained as long as the ith eigenvalue from the original data is greater than the ith eigenvalue from the random data. The parallel analysis produced a nine-factor solution that explained about 67.5% of the total variance.
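Parallel analysis as described above can be sketched in a few lines of NumPy. This version compares eigenvalues of the observed correlation matrix with the 95th percentile of eigenvalues from normally distributed random data of the same size. Using principal-component eigenvalues, 100 replications, and the 95th percentile are assumptions of the sketch (the mean of the random eigenvalues and principal-axis variants are also used), not a description of the authors’ exact settings.

```python
import numpy as np


def parallel_analysis(data, n_iter=100, percentile=95, seed=0):
    """Horn's parallel analysis on correlation-matrix eigenvalues (PCA-based sketch)."""
    rng = np.random.default_rng(seed)
    n, p = data.shape
    observed = np.sort(np.linalg.eigvalsh(np.corrcoef(data, rowvar=False)))[::-1]
    random_eigs = np.empty((n_iter, p))
    for i in range(n_iter):
        sim = rng.normal(size=(n, p))  # random data: same n cases, same p items
        random_eigs[i] = np.sort(np.linalg.eigvalsh(np.corrcoef(sim, rowvar=False)))[::-1]
    threshold = np.percentile(random_eigs, percentile, axis=0)
    # Retain factors while the ith observed eigenvalue exceeds the ith random one.
    n_factors = 0
    for obs, thr in zip(observed, threshold):
        if obs <= thr:
            break
        n_factors += 1
    return n_factors, observed, threshold


# Hypothetical two-factor data: items 0-3 load on factor 1, items 4-7 on factor 2.
rng = np.random.default_rng(1)
scores = rng.normal(size=(400, 2))
loadings = np.zeros((2, 8))
loadings[0, :4] = 0.8
loadings[1, 4:] = 0.8
items = scores @ loadings + 0.6 * rng.normal(size=(400, 8))
print(parallel_analysis(items)[0])
```

Stopping at the first observed eigenvalue that fails to beat its random counterpart implements the “as long as” rule attributed to O’Connor (2000).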

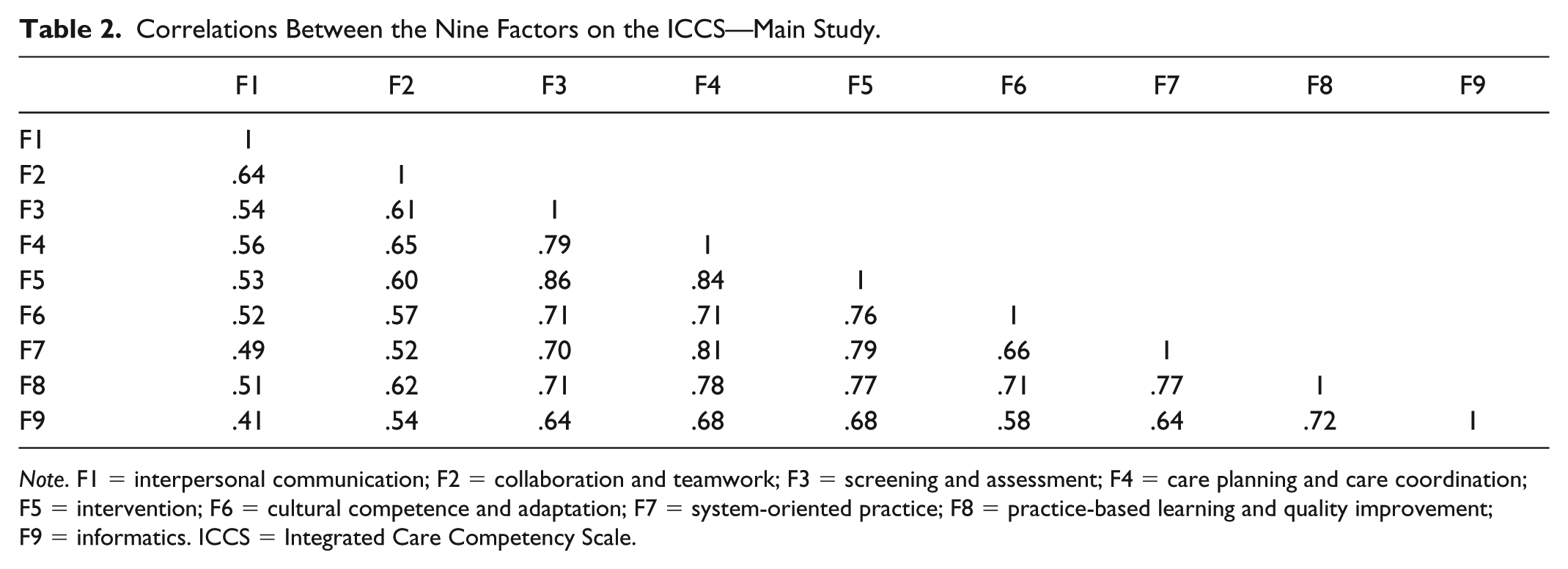

Varimax rotation was employed to simplify the factor structure and ease interpretation of the nine retained factors. To assess double loadings and cross-loadings, we applied one of Tabachnick and Fidell’s (2019) criteria: a separation of at least .15 between an item’s loadings can help researchers decide whether to retain an item and to which factor to assign it. Items that double loaded or cross-loaded with a separation of less than .15 were to be removed because such items do not clearly represent a single construct. However, no item met this criterion, so no items were deleted. An item is considered cross-loading if it loads at .32 or higher on two or more factors (Costello & Osborne, 2005; Tabachnick & Fidell, 2019), and no such items appeared in this analysis; every item loaded on its predicted factor, with loadings below .32 on all other factors. As shown in Table 2, correlations between the nine factors were moderate rather than high, suggesting that the factors are related but distinct. In addition, all factor loadings were at least .30, the threshold used to interpret an item as loading on a factor. Table 3 presents the factor loadings from the main study in Phase 3. Therefore, all 65 items were retained in the nine-factor solution.
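The two retention rules described above (a secondary loading of .32 or higher marks a cross-loading, and a cross-loading item whose two largest loadings differ by less than .15 is removed) can be expressed as a small Python function. The loadings below are invented for illustration and are not values from Table 3; applying the .15 gap rule only to items that cross-load is our reading of the procedure.

```python
def screen_loadings(loadings, cross_cutoff=0.32, min_gap=0.15):
    """Classify items from a rotated loading matrix (one row per item)."""
    results = []
    for item, row in enumerate(loadings):
        ranked = sorted((abs(v) for v in row), reverse=True)
        primary, secondary = ranked[0], ranked[1]
        cross_loads = secondary >= cross_cutoff            # loads >= .32 on 2+ factors
        remove = cross_loads and (primary - secondary) < min_gap
        home = max(range(len(row)), key=lambda j: abs(row[j]))  # largest-loading factor
        results.append({"item": item, "factor": home,
                        "cross_loads": cross_loads, "remove": remove})
    return results


# Invented 3-item, 3-factor example (not the ICCS data).
example = [
    [0.71, 0.12, 0.05],  # clean loading on factor 0
    [0.60, 0.35, 0.10],  # cross-loads, but gap of .25 >= .15, so retained on factor 0
    [0.45, 0.38, 0.02],  # cross-loads with a gap of .07, so removed
]
for result in screen_loadings(example):
    print(result)
```

Absolute values are used so that large negative loadings are treated the same as large positive ones.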

Table 2. Correlations Between the Nine Factors on the ICCS: Main Study.

Note. F1 = interpersonal communication; F2 = collaboration and teamwork; F3 = screening and assessment; F4 = care planning and care coordination; F5 = intervention; F6 = cultural competence and adaptation; F7 = system-oriented practice; F8 = practice-based learning and quality improvement; F9 = informatics. ICCS = Integrated Care Competency Scale.

Table 3. Standardized Factor Loadings on the ICCS: Main Study.

Note. All factor loadings in the table were at least .30. ICCS = Integrated Care Competency Scale.

Factor Structure

Each factor was then analyzed further and labeled according to what its items had in common, using the integrated care framework presented in the nine SAMHSA-HRSA competency standards (see sample ICCS items). Following the recommendations of Preacher and MacCallum (2003), we used multiple criteria to determine the number of factors to retain: visual inspection of the scree plot and the results of the parallel analysis (Costello & Osborne, 2005; Watson, 2017). For all tested solutions, we inspected the item content of each factor to ensure that the extracted factors were meaningful. To interpret the factors derived from the EFA, we treated items with loadings greater than .3 as loading on a factor (Tabachnick & Fidell, 2019). The scree plot for the 65 items declined steeply until the ninth factor and leveled off thereafter, supporting the nine-factor solution indicated by the parallel analysis. Moreover, as shown in Table 4, Cronbach’s alpha values for the nine factors ranged from .85 to .94, with an overall scale coefficient of .98.

Table 4. Descriptive and Reliability Statistics for the Nine-Factor Solution on the ICCS.

Note. n = 243. Overall Cronbach’s alpha value was .98; Kaiser–Meyer–Olkin measure = .950; Bartlett’s test = 12,970.6 (p < .001). ICCS = Integrated Care Competency Scale.

Total variance explained in the data = 67.5%.

Discussion

In this study, we created and provided initial validation evidence for a comprehensive scale of integrated care competencies (ICCS) that incorporates the major dimensions of the construct. The scale construction followed the process recommended by DeVellis (2012) and Fowler (2014). ICCS items were generated from three sources: a comprehensive literature review, the SAMHSA-HRSA standards, and suggestions from counseling researchers and experts. The results of the major analyses provided evidence that the construct (perceived competence in integrated care based on the SAMHSA-HRSA’s nine components) and its dimensions were estimated adequately by the ICCS. Feedback from expert reviewers, cognitive interviewees, and pilot study participants provided initial support for the content validity of the measure. The PFA results and the resulting factor structure provided evidence for the construct validity of the measure. The moderate correlations between factors provided further support for the relative distinctiveness of each dimension. Tests of internal consistency revealed adequate support for the reliability of the measure with graduate counseling students.

This self-report instrument was designed to assess graduate counseling students’ knowledge and skills in the integrated care modality. Data from this study provided evidence of the usefulness and reliability of the 65 items in measuring aspects of the nine competency categories proposed in the SAMHSA-HRSA integrated care competency standards (Hoge et al., 2014). The steps undertaken proved useful in developing this initial version of the ICCS. For example, the underlying model and the nine conceptual–theoretical constructs were derived from research and relevant literature addressing competencies in integrated care settings. The potential set of items was then refined with input from a panel of experts and cognitive interviewees, which provided content validity evidence for the original 73 items; these were later honed to 65 items through item analysis and EFA, which provided initial statistical support for the ICCS. In addition, all items loaded well on their predicted factors and were retained because they supported the content validity of the construct and their loadings on the other factors were lower than .32 (Costello & Osborne, 2005; Tabachnick & Fidell, 2019). Finally, the reliability statistics and the nine-factor structure (see Tables 3 and 4) support the usefulness of the 65 items, with an overall Cronbach’s alpha for the ICCS of .98, well above .80.

Limitations

Although the results are promising for the development of the scale, the data represent the experiences of a small group of graduate counseling students from CACREP-accredited programs and should be viewed with caution. Several limitations require researchers to be circumspect in interpreting the results. The low response rate and limited diversity of the sample must be considered when explaining the findings, and researchers and counselor educators should take the nature of the sample into account when designing future studies. Response tendencies and bias should also be considered when refining this prototype scale, because it is unclear whether social desirability influenced responses to ICCS items.

Sampling, instrumentation, and time were additional factors that limited Phase 3 of the study. Although the KMO statistic (.95) indicated that the sample was adequate for factor analysis, a larger and more diverse sample, together with techniques such as confirmatory factor analysis (CFA), bifactor analysis, and dimensionality analysis, could have produced a more robust factor solution and stronger validity evidence for the nine factors. These limitations are being addressed in an ongoing study that is collecting additional evidence on the psychometric properties of the ICCS. Despite these limitations, the development process (i.e., generating items and having them examined by content and subject matter experts, and then exploring a potential factor structure with EFA) provides a solid methodological foundation that speaks to the integrity of the data and results.
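The KMO statistic cited above compares the size of the observed correlations among items with the size of their partial correlations; values near 1, such as the .95 reported here, indicate that partial correlations are small relative to zero-order correlations. A minimal standard-library sketch of the overall KMO follows, assuming a full item correlation matrix is available. The helper names `invert` and `kmo` are hypothetical; in practice the statistic would be reported by the statistical package used for the EFA.

```python
def invert(matrix):
    """Invert a square matrix with Gauss-Jordan elimination (partial pivoting)."""
    n = len(matrix)
    aug = [list(map(float, row)) + [1.0 if i == j else 0.0 for j in range(n)]
           for i, row in enumerate(matrix)]
    for col in range(n):
        # Swap in the row with the largest pivot for numerical stability
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        if abs(aug[pivot][col]) < 1e-12:
            raise ValueError("matrix is singular")
        aug[col], aug[pivot] = aug[pivot], aug[col]
        p = aug[col][col]
        aug[col] = [v / p for v in aug[col]]
        # Eliminate this column from every other row
        for r in range(n):
            if r != col:
                factor = aug[r][col]
                aug[r] = [a - factor * b for a, b in zip(aug[r], aug[col])]
    return [row[n:] for row in aug]


def kmo(corr):
    """Overall Kaiser-Meyer-Olkin measure of sampling adequacy.

    KMO = sum(r_ij^2) / (sum(r_ij^2) + sum(p_ij^2)), summed over i != j,
    where p_ij = -inv_ij / sqrt(inv_ii * inv_jj) are partial correlations
    obtained from the inverse of the correlation matrix.
    """
    n = len(corr)
    inv = invert(corr)
    r2 = p2 = 0.0
    for i in range(n):
        for j in range(n):
            if i != j:
                r2 += corr[i][j] ** 2
                partial = -inv[i][j] / (inv[i][i] * inv[j][j]) ** 0.5
                p2 += partial ** 2
    return r2 / (r2 + p2)


# Toy matrix: three items with equal intercorrelations of .5
r = 0.5
print(round(kmo([[1, r, r], [r, 1, r], [r, r, 1]]), 3))  # 0.692
```

With only three moderately correlated items the value is modest; a value as high as .95 typically requires many items with substantial shared variance.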

Implications for the Counseling Profession

Findings from the current study suggest that the counseling profession and training programs can use the ICCS for competency development and assessment. Counselor education programs seeking to advance students’ competencies beyond traditional mental health settings could use the ICCS to establish a baseline for knowledge and skill development. Baseline assessments can help counselor educators determine which areas need greater emphasis during instruction. By comparing pre- and post-assessment results, counselor educators can then assess students’ growth after exposure to integrated care concepts, competencies, and practices.
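At its simplest, the pre- and post-assessment comparison described above could be summarized with a paired mean change and a paired t statistic. The sketch below assumes each student has a total or subscale ICCS score at both time points, listed in the same order; the function name and the scores are hypothetical. Because the ICCS has so far been examined at a single time point, such change scores should be interpreted cautiously.

```python
from math import sqrt
from statistics import mean, stdev


def paired_change(pre, post):
    """Mean change, SD of change, and paired t statistic for matched scores.

    pre[i] and post[i] must belong to the same student.
    """
    if len(pre) != len(post) or len(pre) < 2:
        raise ValueError("need two equal-length lists with at least two students")
    diffs = [after - before for before, after in zip(pre, post)]
    d_mean = mean(diffs)
    d_sd = stdev(diffs)
    if d_sd == 0:
        raise ValueError("all change scores are identical; t is undefined")
    t = d_mean / (d_sd / sqrt(len(diffs)))
    return d_mean, d_sd, t


# Hypothetical subscale means for five students before and after instruction
pre_scores = [2.8, 3.1, 2.5, 3.4, 2.9]
post_scores = [3.5, 3.6, 3.0, 3.8, 3.1]
change, sd, t = paired_change(pre_scores, post_scores)
print(f"mean change = {change:.2f}, SD = {sd:.2f}, t({len(pre_scores) - 1}) = {t:.2f}")
```

The t statistic would be compared against a t distribution with n − 1 degrees of freedom; an effect size for the change could be reported alongside it.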

Counselor education programs that have received grants to train students can use the ICCS for program evaluation. In addition, because the CACREP (2016) standards call on programs to expose students to integrated behavioral health care systems, the development and utility of the ICCS are critical to the profession. Counselor educators may use the ICCS to supplement the integrated care objectives of their counselor preparation programs, helping counselors in training conceptualize integrated care as a fluid, participatory process that challenges the traditional expert-driven caregiving paradigm. The ICCS can also serve as a self-exploration tool for counseling students to reflect on their current and future needs related to integrated care. Finally, in clinical practice, professional counselors and supervisors may incorporate the ICCS into training and professional development.

Implications for Research

This study represents the first empirical examination of the ICCS as a measure of counseling students’ competency in integrated care. To date, no researchers beyond the instrument’s creators have examined the psychometric properties and behavior of the scale, so further studies are warranted. Researchers interested in using the ICCS should test the nine-factor solution with other populations (e.g., different racial groups, behavioral health professions, and geographic locations) to determine whether it holds across groups. Such data would help validate the tenets of integrated care with more inclusive samples. In addition, the ICCS assessed counseling students’ competency at a single point in time; evidence of its psychometric properties over time is required before it can be supported as a strong measure of change in integrated care competency. Therefore, we suggest that future researchers also conduct longitudinal studies using the ICCS.

As with other validation efforts, researchers should further validate the scale with a CFA and/or a convergent validity study to ensure that it measures what it purports to measure. Convergent validity could be examined using related integrated care competency assessment tools to provide robust evidence. The methods employed in this research could be combined with rigorous analyses such as CFA, bifactor analysis, and dimensionality analysis to provide further validation evidence, which would strengthen how the results are interpreted and applied in educational research (Lewis, 2017). Future research should use larger samples and examine how the subscales can identify the unique contributions of different factors in explaining graduate counseling students’ competencies in integrated care.

Regarding the psychometric properties of the ICCS, the empirical evidence supports the proposed dimensionality, internal consistency reliability, and construct validity of the scale. However, this is only the first step in a long-term investigation of counseling students’ competencies in integrated care. To support broader applicability, the ICCS was developed with an acceptable sample drawn from various universities across the United States. Further validation is required to assess construct validity in other cross-cultural settings using CFA, the multitrait–multimethod matrix, and the direct product model (Brown, 2015; Costello & Osborne, 2005; Watson, 2017). In the initial stages of scale development, EFA can be a satisfactory technique, whereas CFA, a special case of structural equation modeling, is effective for validating hypothesized relationships between a construct and its indicators and for modifying instruments to improve their psychometric properties.

Conclusion

This study aimed to contribute to the counseling literature by validating one of the first integrated care instruments for use with graduate counseling students. Based on the information gathered, the ICCS appears useful for exploring important research questions and generating reliable knowledge that can expand the scope of integrated care in counseling and in counselor education programs. The findings from this line of research may also help graduate counseling students develop a strong perspective on how to approach integrated care situations. Although further research is needed, the results are promising and suggest that the ICCS can be a valid and reliable self-report instrument for counselor educators. The study provided sufficient statistical evidence for the reliability and usefulness of the integrated care model and its measure, the ICCS. However, although some construct validity evidence was obtained through item and factor analysis, convergent and discriminant validity could not be assessed because no integrated care competency scales were available for comparison with the ICCS.

The final version of the ICCS consists of 65 items across nine subscales that measure the nine key components of integrated care proposed by SAMHSA-HRSA. Overall, the internal consistency reliability and multidimensionality results strongly support the nine-factor solution as the preferred structure for the ICCS. Given the need in counselor education to promote integrated health care, such a useful and reliable instrument is timely, important, and appropriate. Future use of the ICCS may offer a greater understanding of how counseling students conceptualize integrated care competencies, and the scale shows promise as an assessment tool for trainers, policy makers, counselor educators, and academic researchers. We hope that the findings and the measure contribute broadly to the study of integrated care competency in counselor education and provide counselor educators, clinicians, and researchers with a simple yet robust tool for evaluating integrated care competencies.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.