Abstract

The 2020 US election was accompanied by an effort to spread a false meta-narrative of widespread voter fraud. This meta-narrative took hold among a substantial portion of the US population, undermining trust in election procedures and results, and eventually motivating the events of 6 January 2021. We examine this effort as a domestic and participatory disinformation campaign in which a variety of influencers—including hyperpartisan media and political operatives—worked alongside ordinary people to produce and amplify misleading claims, often unwittingly. To better understand the nature of participatory disinformation, we examine three cases of misleading claims of voter fraud, applying an interpretive, mixed method approach to the analysis of social media data. Contrary to a prevailing view of such campaigns as coordinated and/or elite-driven efforts, this work reveals a more hybrid form, demonstrating both top-down and bottom-up dynamics that are more akin to cultivation and improvisation.

Introduction

The 2020 US election was accompanied by a sustained effort to construct and propagate a false meta-narrative of widespread voter fraud. This meta-narrative, an amalgamation of many distinct narratives featuring claims of fraud, took root among a substantial portion of the US population, undermining trust in election procedures and results, and eventually motivating a violent political protest—for many, an attempt to prevent certification of what they believed to be fraudulent election results—at the US Capitol on 6 January 2021 (U.S. House, 2022).

Prior to the election, Benkler and colleagues referred to the effort to delegitimize the mail-in voting process as a “disinformation campaign” (Benkler et al., 2020). Our research builds upon this framing. We expand the window of analysis from mail-in ballots to include broader efforts to sow doubt in the election—leading up to the election, on election day, and afterwards. While Benkler and colleagues highlighted the role that elites and mass media played in perpetrating this campaign, our work seeks to uncover the interplay between elites and their online audiences in seeding, producing, and spreading the misleading narratives comprising this campaign.

Contrasting with descriptions of disinformation that emerged from 2016, which focused on coordinated and foreign dimensions, we conceptualize the disinformation campaign to discredit the 2020 election as a domestic and participatory one. In this article, we explore both its top-down and bottom-up dynamics, and the roles that different kinds of influencers—from long-time conservative political operatives, to self-described “journalists” at hyper-partisan media outlets, to social media all-stars, to members of the Trump campaign—played in its spread.

Focusing on three misleading “voter fraud” narratives, we employ a mixed method approach to the analysis of social media data, leveraging a primary data collection (Twitter) and following traces out to other social media platforms. Throughout our analysis, we focus on the role of influencers in shaping and amplifying these misleading narratives—differentiating between verified and unverified influencers with different audience sizes and describing their activities during pivotal moments in each narrative’s production and spread.

Background and Related Work

The Disinformation Campaign to Delegitimize the 2020 US Election

In October 2021, a national survey (NPR/PBS News Hour/Marist, 2021) showed that 75% of US Republicans believed former President Trump’s claims that voter fraud had compromised the integrity of the 2020 US presidential election. Researchers (Benkler et al., 2020; Center for an Informed Public [CIP] et al., 2021) have argued that those views were influenced by a disinformation campaign—an intentional effort to spread misleading content for strategic gain—that sought to sow doubt in election procedures and results. The campaign’s foundations were laid as far back as 2016, when then President-Elect Donald Trump claimed that “millions of people who voted illegally” had caused him to lose the popular vote (Wootson, 2016). In 2020, President Trump and allies renewed the campaign preemptively, alleging early in the race that massive fraud would once again occur and, after Election Day, insisting that the fraud had occurred. The exact nature of that fraud remained vague throughout: it manifested as hundreds of false and misleading narratives, from claims that machines were changing votes from Trump to Biden, to assertions that large numbers of dead people had voted (CIP et al., 2021). In contrast to most research on disinformation around the 2016 US election, which highlighted foreign involvement and inauthentic coordination (Bastos & Farkas, 2019; Lukito, 2020), here we surface the domestic and participatory nature of the disinformation campaign to sow distrust in the 2020 US election.

Online Disinformation as Participatory

Disinformation can be defined as false or misleading content, intentionally seeded and/or spread, for a specific purpose—often for political gain (Jack, 2017; Starbird et al., 2019). Disinformation often works not just through a single piece of content or a single narrative, but as a campaign. Bittman (1985), a former practitioner, explains that though disinformation campaigns are typically set in motion by witting actors or “agents,” they often incorporate the work of “unwitting agents” who may not fully recognize the role they play. Building from that understanding, Rid highlights how disinformation campaigns can leverage and become integrated into otherwise organic political activism (Rid, 2020). Recent work has additionally highlighted the participatory nature of modern propaganda (Asmolov, 2019; Wanless & Berk, 2017), and conceptualized online disinformation as taking place through collaborations between witting agents and unwitting crowds (Starbird et al., 2019).

In this article, we seek to unpack those participatory dynamics—both the top-down (elite-driven) dynamics stressed by Benkler et al. (2020) and others, and the bottom-up (collaborative) dynamics noted by Starbird et al. (2019), as well as the interplay between them. Through this work, we aim to better understand the roles of political and media elites in setting the frames and spreading messages to their vast audiences, the role of various influencers in moving content from audiences to elites (and back again), and how “unwitting agents” come to participate in these efforts.

From Citizen Reporters to Social Media Influencers

Digital and social media have drastically changed news production and information dissemination. As greater numbers of citizens receive and produce information online, new classes of media have emerged, including “citizen journalists” (Gillmor, 2006) and hyper-partisan digital news sites (Rae, 2021). Many members of this new class of highly online, self-defined journalists and media outlets sit between traditional journalism and activism (Wall, 2015) and command increasing influence in the public sphere.

Online influencers operate within the expanded media horizon created by these digital disruptions, amid the blurred boundaries of media, celebrity, and marketing. Many position themselves not as journalists but as ordinary people, employing a first-person, low-production communication style meant to convey authenticity, relatability, and shared membership in a common identity (Hearn & Schoenhoff, 2016; Marwick & Boyd, 2011). Research on the social media influencer has examined the figure’s power to sell products, shape public opinion on culture, mobilize activists, and intervene in politics (Goodwin et al., 2020). Political campaigns, for example, have sought to coordinate campaign messaging with influencers, working with them to identify what resonates with the candidate’s base, what might trend on social media, and how trends might inform subsequent media coverage (McGregor, 2020).

Marketing research, which was among the first disciplines to identify and characterize the influencer, was intrigued by the figure’s power to shape consumer decision-making, devising metrics such as “reach, relevance, and resonance” (Solis & Webber, 2012) and coining new terms such as “nano-influencer” and “micro-influencer” to differentiate influence on the basis of follower count.

Here, we adapt a classification scheme derived from the world of marketing (see Figure 1) to scaffold the exploration of the roles of different types of social media influencers, from individuals that sit on the boundaries of journalism, to emergent political activists, to micro-celebrities that build their audiences online, to more established political operatives who carry their real-world visibility over to social media.

Figure 1. Audience size classification, design adapted from Mediakix (2019).

Data and Methods

Three Case Studies

The disinformation campaign to discredit the 2020 US Election took shape through hundreds of false or misleading claims of election fraud. We selected three cases that (1) emerged from different periods of this campaign, (2) received significant participation (> 25,000 tweets), and (3) were familiar to our research team because we had documented them in depth as they were occurring:

Case 1: Sonoma Ballots. A claim from September 2020 alleging that over 1,000 mail-in ballots had been found in a dumpster in Sonoma, California.

Case 2: SharpieGate. A claim from Election Day 2020 alleging that felt-tip pens were bleeding through ballots and invalidating them. Versions of the claim appeared in multiple locations, but achieved greatest traction in Arizona, where the framing expanded to assert that Trump supporters had been specifically targeted.

Case 3: Maidengate. A claim from 9 November 2020, alleging that “political predators” had committed voter fraud by casting inauthentic votes using voters’ maiden names.

Although not necessarily representative of all narratives, these three cases demonstrate different kinds of narratives (opportunistic amplification, collective sensemaking, and conspiracy theorizing) and reveal the participatory dynamics within this campaign.

Data

To understand how these stories spread, we look to the digital record, that is, data generated through the use of social media and other digital platforms. We center our analysis on Twitter, but follow traces in that data over to other platforms.

Our primary data set consists of over 1 billion tweets, gathered contemporaneously from August 2020 to January 2021 using the Twitter Streaming API. Our initial collectors captured tweets that contained voting-related terms (such as “vote,” “voter,” “voting,” and “ballot”), terms related to claims of voter or election fraud, and terms related to potentially salient locations. We also identified emergent false and misleading stories in real time, occasionally adding conspiracy-specific terms (like #SharpieGate) to the collectors. From this broad data set, we then curated a data set specific to each case study—using keyword-based search strings to create a comprehensive, low-noise sample of related tweets.
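The curation step described above can be sketched as a keyword filter over the broad collection. This is a minimal illustration, not the study's pipeline: the search terms, the `text` field, and the function names are hypothetical stand-ins, and the actual search strings are the ones documented in the article's supplemental material.

```python
import re

# Hypothetical search terms for one case; the study's real search strings differ.
CASE_TERMS = ["sharpie", "sharpiegate"]


def build_case_pattern(terms):
    """Compile one case-insensitive pattern that matches any of the terms."""
    # Longer terms first so the alternation prefers the most specific match.
    escaped = sorted((re.escape(t) for t in terms), key=len, reverse=True)
    return re.compile("|".join(escaped), re.IGNORECASE)


def curate_case(tweets, terms):
    """Return the tweets whose text matches at least one case term."""
    pattern = build_case_pattern(terms)
    return [t for t in tweets if pattern.search(t["text"])]


# Toy records standing in for the broad election collection.
sample = [
    {"id": 1, "text": "Bring your own pen, the Sharpies bleed through!"},
    {"id": 2, "text": "Long lines at my polling place this morning"},
    {"id": 3, "text": "#SharpieGate is trending"},
]
case_tweets = curate_case(sample, CASE_TERMS)
```

In practice a filter like this would be tuned by inspecting false positives and false negatives, which is one way to approach the "comprehensive, low-noise" goal stated above.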

Our data collections did experience rate limiting from the Twitter Streaming API on Election Day, which impacted data coverage, especially for the SharpieGate case (~30% of tweets lost). However, we were able to use retweets to recreate the original content and understand the spread of highly retweeted content (our primary focus here).
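One way to picture the recovery step is shown below. The sketch assumes each collected retweet carries a copy of the tweet it amplifies under a `retweeted_status` key, as retweet objects did in the Twitter v1.1 payload format; the record layout and function name are otherwise simplified and hypothetical, and the authors' actual procedure may differ.

```python
def recover_originals(collected):
    """Split originals into those captured directly and those rebuilt from retweets."""
    # Originals that the collector captured directly.
    captured = {t["id"]: t for t in collected if "retweeted_status" not in t}
    # Originals that were missed but are embedded inside a captured retweet.
    recovered = {}
    for t in collected:
        original = t.get("retweeted_status")
        if original and original["id"] not in captured:
            recovered.setdefault(original["id"], original)
    return captured, recovered


# Toy records: tweet 99 was never captured, but one of its retweets was.
collected = [
    {"id": 10, "text": "original A"},
    {"id": 11, "text": "RT: original A", "retweeted_status": {"id": 10, "text": "original A"}},
    {"id": 12, "text": "RT: original B", "retweeted_status": {"id": 99, "text": "original B"}},
]
captured, recovered = recover_originals(collected)
```

This only recovers originals that were retweeted at least once within the captured data, which is why it suits an analysis focused on highly retweeted content.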

For each account that shaped the spread of a narrative, we recorded its audience size category (see Figure 1), its verified status, whether it had been suspended, how its owner credentialed themselves, and the role it played in seeding, shaping, and/or amplifying the narrative. In addition to data collected directly from Twitter, we also follow links in the data to content on other platforms, including other social media platforms (e.g., YouTube, Parler, Facebook), external websites, and a Google document relevant to Case 3.
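The audience-size coding can be sketched as a simple threshold lookup. The tier names follow the article's vocabulary, but the follower cutoffs below are placeholders, chosen only so that the individual accounts described in the text land in the tiers the text assigns them; Figure 1 remains the authoritative scheme, and `TIER_FLOORS` and `audience_tier` are hypothetical names.

```python
from bisect import bisect_right

# Placeholder cutoffs (assumption): lower bound of each tier above "low-follower".
TIER_FLOORS = [10_000, 25_000, 100_000, 500_000, 1_000_000]
TIER_NAMES = ["low-follower", "nano", "micro", "meso", "macro", "mega"]


def audience_tier(followers):
    """Map a follower count to an audience-size tier using the placeholder cutoffs."""
    # bisect_right counts how many tier floors the account has reached.
    return TIER_NAMES[bisect_right(TIER_FLOORS, followers)]


# Follower counts of the kind reported for individual accounts in the article.
examples = {n: audience_tier(n) for n in (3_000, 22_500, 41_500, 302_000, 850_000, 5_600_000)}
```

Keeping the cutoffs in one list makes it easy to swap in the exact boundaries from the classification figure.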

Note on Anonymization

To protect the identities of users who may not understand that their online activities can be seen as part of the public record, we anonymize accounts (and content from accounts) that are unverified, have fewer than 100,000 followers, and are not public figures.
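Read literally, the rule above is a conjunction of three conditions. The sketch below encodes that reading; the `Account` fields, the placeholder label, and the assumption that "public figure" is a manually coded flag are all illustrative choices rather than details taken from the study.

```python
from dataclasses import dataclass


@dataclass
class Account:
    handle: str
    followers: int
    verified: bool
    public_figure: bool  # assumed to be coded by hand


def should_anonymize(account, follower_cap=100_000):
    """True only when all three conditions of the anonymization rule hold."""
    return (
        not account.verified
        and account.followers < follower_cap
        and not account.public_figure
    )


def display_name(account):
    """Return the handle, or a generic label for accounts that must be anonymized."""
    return "[anonymized account]" if should_anonymize(account) else "@" + account.handle


# Toy accounts with invented handles.
voter = Account("example_voter", 1_700, verified=False, public_figure=False)
outlet = Account("example_outlet", 302_000, verified=True, public_figure=True)
```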

Methodology

We employ a grounded, interpretative approach, building upon Charmaz’s (2014) approach for constructing grounded theory to integrate both qualitative and quantitative methods as we build understandings of complex social phenomena. This approach, described in detail in the study by Starbird et al. (2019), draws upon methods from crisis informatics (Palen & Anderson, 2016) and has previously been applied to studies of online rumors (Maddock et al., 2015). Here, we focus on specific rumors or stories, using visualizations and descriptive statistical analysis to identify high-level patterns and anomalies and then applying deep qualitative analysis to understand what those patterns and anomalies mean. We also borrow here from the trace ethnography approach outlined by Geiger and Ribes (2011), following the collaborative “work” of creating and spreading these misleading narratives across different platforms—from Twitter and Parler to Facebook and Google Docs.

This research emerged from data uncovered through the Election Integrity Partnership (EIP), a coalition of research entities who worked during the 2020 election to detect, analyze, and respond to election-related misinformation in real time (CIP et al., 2021). We initially began studying these cases as they were “going viral.” Later, we created temporal and network graphs and used those graphs to guide qualitative analysis of specific posts or accounts; closely analyzed hundreds of social media posts to identify “influential” posts and accounts as well as broader patterns and themes; and produced extensive memos synthesizing insights. The cases presented here attempt to distill the richness of those analyses into coherent accounts that both provide context for understanding how each narrative took shape and spread, and allow us to explore the role of influencers and the collaborative dynamics within each case.

For more detail about our selection of case studies, how we identified tweets related to each case study, our criteria for anonymizing accounts, and limitations of our methods, please see Appendix A (Supplemental material).

Background: Establishing Expectations of Voter Fraud

Benkler et al. (2020) described how the effort to undermine trust in the mail-in voting process was driven by elites and spread through mass media outlets, including Fox News and hyper-partisan media outlets. A primary actor in this campaign was President Trump, who repeatedly used his social media accounts and public speaking opportunities to spread false, misleading, and unsubstantiated claims of voter fraud (see Figure 2).

Figure 2. Tweet posted by President Trump claiming the 2020 election would be rigged.

The tweet above, posted by President Trump’s official account in June 2020, was one of numerous social media posts by @realDonaldTrump promoting the false meta-narrative of massive voter fraud. These messages resonated with Trump’s followers, setting, for some, a false expectation of election fraud.

Although initially focused on mail-in voting, the effort to sow doubt in the election evolved to include other allegations of electoral malfeasance. As Election Day approached, the Trump campaign encouraged its followers to join the “Army for Trump” and collect evidence of fraud, providing instructions for serving as poll observers and online forms for supporters to submit evidence of election issues. Our data suggest that, likely mobilized by the “Army for Trump” messaging and repeated claims of voter fraud from pro-Trump political and media elites, many Trump supporters arrived at the polls (and went online) actively searching for evidence to support the election fraud narrative.

Case 1: Sonoma Ballots

In the weeks leading up to the election, several stories of mail-in ballots being discarded or destroyed went viral. Some were based on genuine instances of ballot misplacement or improper disposal, although their potential impact on the election was exaggerated. Others were based on willful misinterpretations or misleading framings of standard election administrative processes, opportunistically amplified for political gain. The Sonoma Ballots case belonged to the latter category.

The Sonoma Ballots rumor, which first emerged in the tweet below, featured photos of election materials discovered in a dumpster in Sonoma, California, claiming that these “ballots” demonstrated the vulnerability of mail-in voting in the 2020 election (see Figure 3). The tweet’s author, a self-described journalist for right-wing media outlet The Blaze, concluded his exposition with the words, “Big if true.”

Figure 3. First tweet claiming that ballots had been found in a dumpster in Sonoma CA, posted by @ElijahSchaffer on 25 September 2020 at 12:52 a.m. Pacific (7:52 a.m. UTC).

It was not true. The photo depicted ballot envelopes received and processed during the 2018 election, which were being discarded according to guidelines, 22 months after that election (Reuters, 2020). But lack of veracity did not stop the misleading claim from spreading widely—45,000 tweets in the span of about 36 hr.

Initially, the story spread almost exclusively through retweets and quote tweets (and retweets of quote tweets) of @ElijahSchaffer’s original tweet. Schaffer’s account, which Twitter had verified with a blue-check, had 245,000 followers at the time of this tweet—a “meso” influencer-sized account.

Approximately 5 hr after Schaffer’s tweet, the Gateway Pundit posted an article and accompanying tweet featuring the same photos and claims:

The Gateway Pundit is a hyper-partisan, right-wing, online micro-media outlet. In advance of and following the 2020 election, Gateway Pundit repeatedly pushed false and misleading narratives of voter fraud through both its social media accounts and its website (CIP et al., 2021). Its verified Twitter account had 302,000 followers (“meso” level) on 25 September and would grow to ~460,000 followers before being suspended on 10 January 2021. Gateway Pundit’s article and tweet about the Sonoma Ballots contributed to a rapid surge in engagement with the narrative, which persisted for several hours.

As Figure 4 shows, the vast majority of tweets (75%) about this narrative were either retweets/quote tweets of Elijah Schaffer or contained links to the Gateway Pundit’s article. These dynamics are consistent with the characterization of disinformation by Benkler et al. (2020) as driven by media and political elites from the top-down, but in this case the “elites” were mid-sized influencers from hyper-partisan media outlets using a digital-first approach.

Figure 4. Temporal graph of the Sonoma Ballots story (black line). The salmon area consists of retweets and quote tweets of @ElijahSchaffer’s original tweet. The purple area consists of tweets linking to the Gateway Pundit’s article.
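A share like the one reported for this case can be computed by flagging each tweet that either amplifies a given source tweet or links to a given article. The sketch below is illustrative only: the `amplified_id` and `urls` fields, the example URL, and the toy records are hypothetical and do not reproduce the study's data.

```python
def share_from_sources(tweets, source_tweet_id, article_url_fragment):
    """Fraction of tweets that amplify the source tweet or link to the article."""

    def from_source(t):
        amplifies = t.get("amplified_id") == source_tweet_id
        links = any(article_url_fragment in u for u in t.get("urls", []))
        return amplifies or links

    hits = sum(1 for t in tweets if from_source(t))
    return hits / len(tweets) if tweets else 0.0


# Toy records: one retweet of the source, one link to the article, two unrelated.
toy = [
    {"id": 1, "amplified_id": 100},
    {"id": 2, "urls": ["https://example.com/article?ref=tw"]},
    {"id": 3, "amplified_id": 555},
    {"id": 4},
]
share = share_from_sources(toy, source_tweet_id=100, article_url_fragment="example.com/article")
```

A tweet that both amplifies the source and links to the article is counted once, so the two shaded areas of a stacked graph would need a tie-breaking rule that this sketch does not attempt.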

Both Schaffer and the Gateway Pundit note that the photos had been sent to them by a source, which the Gateway Pundit refers to as a “reader.” Although we do not know the identity of this source, we assume—from the absence of public evidence of these claims prior to Schaffer’s tweet—that the source did not have enough visibility to spread the claims organically from his or her own account, and thus contacted influencers for assistance. Krafft and Donovan (2020) refer to this dynamic as “trading up,” a technique for moving content from low-visibility accounts on the periphery to higher visibility accounts. This example demonstrates how participatory audiences of hyper-partisan media collaborate in the co-creation of stories that buttress existing frames and narratives.

Eventually the Sonoma Ballots narrative would reach the account of mega influencer Donald Trump Jr., President Trump’s son, who retweeted Schaffer’s original tweet about 10 hr after it was first posted. The verified account of @DonaldJTrumpJr, which had over 5.6 million followers at the time, was a noted “repeat spreader” of misleading voter fraud claims (Kennedy et al., 2022), often acting as an accelerant rather than a catalyst, amplifying and sustaining an already-viral claim.

Figure 5, a graph that shows both the cumulative spread of the narrative and the position of specific tweets within that spread, reveals a progression from micro- to meso- and eventually to mega-influencers. Early amplifiers include the verified accounts of pro-Trump influencer Ian Miles Cheong (@stillgray) and entrepreneur Michael Coudrey. Both figures repeatedly tweeted misleading claims about election fraud.

Figure 5. Cumulative graph of Sonoma Ballots tweets. The y-axis represents the total number of tweets. The x-axis is time. Individual tweets of influencers (> 10,000 followers) are plotted, sized by follower count. The view is focused on the first 10 hr of propagation (aligned with the gray box in Figure 4).
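The data behind a cumulative graph of this kind can be assembled in a few lines. The sketch below is a plausible reconstruction under stated assumptions, not the authors' plotting code: the `created_at` and `followers` fields and the toy records are hypothetical, and the plotting itself is left out.

```python
from datetime import datetime, timezone


def cumulative_series(tweets, min_followers=10_000):
    """Return the cumulative line and the influencer markers that sit on it."""
    ordered = sorted(tweets, key=lambda t: t["created_at"])
    line, markers = [], []
    for count, t in enumerate(ordered, start=1):
        # Each tweet raises the running total by one.
        line.append((t["created_at"], count))
        # Accounts above the follower threshold get a marker sized by audience.
        if t["followers"] > min_followers:
            markers.append((t["created_at"], count, t["followers"]))
    return line, markers


def utc(hour, minute):
    """Build a toy UTC timestamp on an arbitrary day."""
    return datetime(2020, 1, 1, hour, minute, tzinfo=timezone.utc)


toy = [
    {"created_at": utc(8, 30), "followers": 500},
    {"created_at": utc(7, 50), "followers": 245_000},
    {"created_at": utc(9, 0), "followers": 12_000},
]
line, markers = cumulative_series(toy)
```

Plotting `line` as a step curve and `markers` as scatter points scaled by follower count would show where large accounts sit relative to changes in slope.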

Another notable account is @Timcast, a micro-media journalist whose work has found increasing traction with right-wing audiences. His tweet precipitated a surge around 12:20 UTC. That surge was also assisted by @EyesOnQ, a now-suspended account that gained influence through tweets about the QAnon conspiracy theory. After @EyesOnQ’s tweet, a large number of micro-influencers (accounts with 10,000–250,000 followers) helped to sustain the spread of this narrative. Strikingly, 32% of all Sonoma Ballots tweets, including six of the top-10 most-retweeted tweets, were posted by now-suspended accounts. Suspensions are especially concentrated in the micro-influencer group. Both trends persisted across our case studies.

The Sonoma Ballots controversy occurred primarily on Twitter. After an official correction by Sonoma County and enforcement action by Twitter, engagement with the rumor faded dramatically. However, claims persisted on the Gateway Pundit and experienced a brief second life on Facebook a few days later.

Case 2: SharpieGate

Our second case study, SharpieGate, encompasses several claims that were woven together to form a false “voter fraud” narrative, exhibiting bottom-up and top-down dynamics, and demonstrating the roles of several different kinds of influencers.

SharpieGate’s core assertion was that Sharpie pens given to in-person voters on Election Day were bleeding through ballots (true) and that this bleed-through was causing ballots to be rejected (misleading; these ballots were rarely rejected), thus disenfranchising those voters (false; rejected ballots were counted using alternative methods). According to officials (Citizens Clean Elections Commission), Sharpie pens were recommended for in-person voting in certain locations (including Arizona), because they dry faster than ink pens, which can smear vote-reading devices. Unfortunately, many voters—and others who joined the online chorus of voices about this case—may have genuinely misunderstood this electoral process.

SharpieGate unfolded in five distinct stages.

Stage 1: Sensemaking and Motivated Amplification

On Election Day, the earliest wave of voter concerns about Sharpies occurred in Chicago. At 6:31 a.m. local time, a low-follower Chicago voter of indeterminate political affiliation tweeted concern that his precinct’s ballot reader struggled with his Sharpie-marked ballot. His tweet received no engagements.

Thirty minutes later, a conservative media personality in Chicago, @AmyJacobson (22,500 followers, a nano-sized audience), echoed concern about Sharpies and encouraged voters to bring their own pens. Later in the day, Jacobson’s tone grew more alarmist, quoting her original tweet and adding that ballots were being placed in a BOX (Figure 7).

Jacobson’s tweets were highly retweeted and quoted (578 amplifying engagements), constituting 46% of all Sharpie-related tweets on Election Day. Quote tweets became an escalatory vector. Several users explicitly framed Jacobson’s tweet as evidence of voter suppression or voter fraud. Others attempted to trade up—calling attention to large-following, pro-Trump accounts by mentioning their handles alongside claims about Sharpies and fraud.

Although most tweets about Sharpies on Election Day focused on Chicago, a parallel conversation was emerging in Arizona. The first tweet to connect Arizona and Sharpies was posted at 14:17 UTC (7:17 a.m. AZ time, minutes after the polls opened) by an account with ~3,000 followers:

The tweet was posted as a reply to a highly retweeted (> 8,500) thread, initiated by a columnist at partisan outlet Newsmax, that became a site for aggregating right-wing claims about Election Day issues. The AZ reply tweet shared a similar narrative to Jacobson’s: accusing poll workers of indifference to Sharpie bleed-through. The leading question of “Electioneering?” implied that Sharpies may have intentionally disenfranchised voters. The embedded image contains a clearly marked Trump vote, signaling to its audience the assumed target of this potential conspiracy.

This first tweet about Sharpies in Arizona saw limited engagement (19 retweets). Less than 10 min later, another established account (1,700 followers) tweeted out a warning, seemingly motivated by legitimate concern, to voters to bring their own pens. That tweet got more traction, with 140 amplifying engagements. Overall, however, the numbers remained small—those two tweets constituted most of the Arizona-related spread of Sharpie claims on Twitter on Election Day. Throughout the day, @MaricopaVote (the official account of the Maricopa County election department) and several local, meso-sized traditional media outlets in both Arizona and Chicago attempted to fact-check the claim, but received very little amplification on Twitter.

Facebook hosted parallel conversations about Sharpies around the same time. At 15:15 UTC (8:15 a.m. AZ time), a Republican party official posted an image encouraging voters in Maricopa County to bring their own pens to the polls, receiving 39 engagements and 10 shares. At 21:50 UTC (2:50 p.m. AZ time), a Republican candidate for office in Maricopa County incorrectly asserted that ballots were being “canceled” due to Sharpie pen use. The post received 70 comments, though the conversation was localized—primarily people from Arizona sharing their own experiences with a tone of concern and/or anger.

Stage 2: Development and Growth of the Voter Fraud Narrative

As the polls closed, conversation around Sharpies simmered. Online warnings to voters to bring their own pens had led to clashes within polling places as poll workers attempted to distribute county-provided Sharpie pens. At 3:52 UTC on 4 November (8:53 p.m. AZ on 3 November), a right-wing political activist in Arizona posted a Facebook video featuring a woman claiming election officials were forcing people to use Sharpie pens and causing invalidated votes, tying the woman’s claims to a larger conspiracy against Trump voters. The video has accumulated over 4 million views and 27,000 engagements on Facebook. Although we cannot determine exactly when those views and engagements occurred, the post received over 100 comments in the subsequent 24 hr, including many from people in AZ who voted with Sharpies and expressed anxiety about whether their vote had counted. One asked the original poster to make the video shareable—which he did at 4:01 UTC (9:01 p.m. AZ time).

About an hour after the Facebook video was posted, at 5:20 UTC (10:20 p.m. AZ time), Fox News declared Biden the winner of Arizona. The conversation around Sharpies on Twitter, which had gone silent after the polls closed, began to revive shortly thereafter (see Figures 6 to 8). Between 06:00 and 17:00 UTC, the tweet rate increased steadily and the narrative began to converge upon explicit accusations of voter fraud.

Figure 6. Temporal graph of SharpieGate tweets. Shaded areas indicate the five stages: (1) collective sensemaking, (2) development of the voter fraud narrative, (3) viral spread through macro/mega influencers, (4) correction by mainstream media, and (5) resurgence among partisan media with connection to the broader election fraud meta-narrative.

Figure 7. Jacobson’s two tweets about Sharpies bleeding through ballots in Chicago.

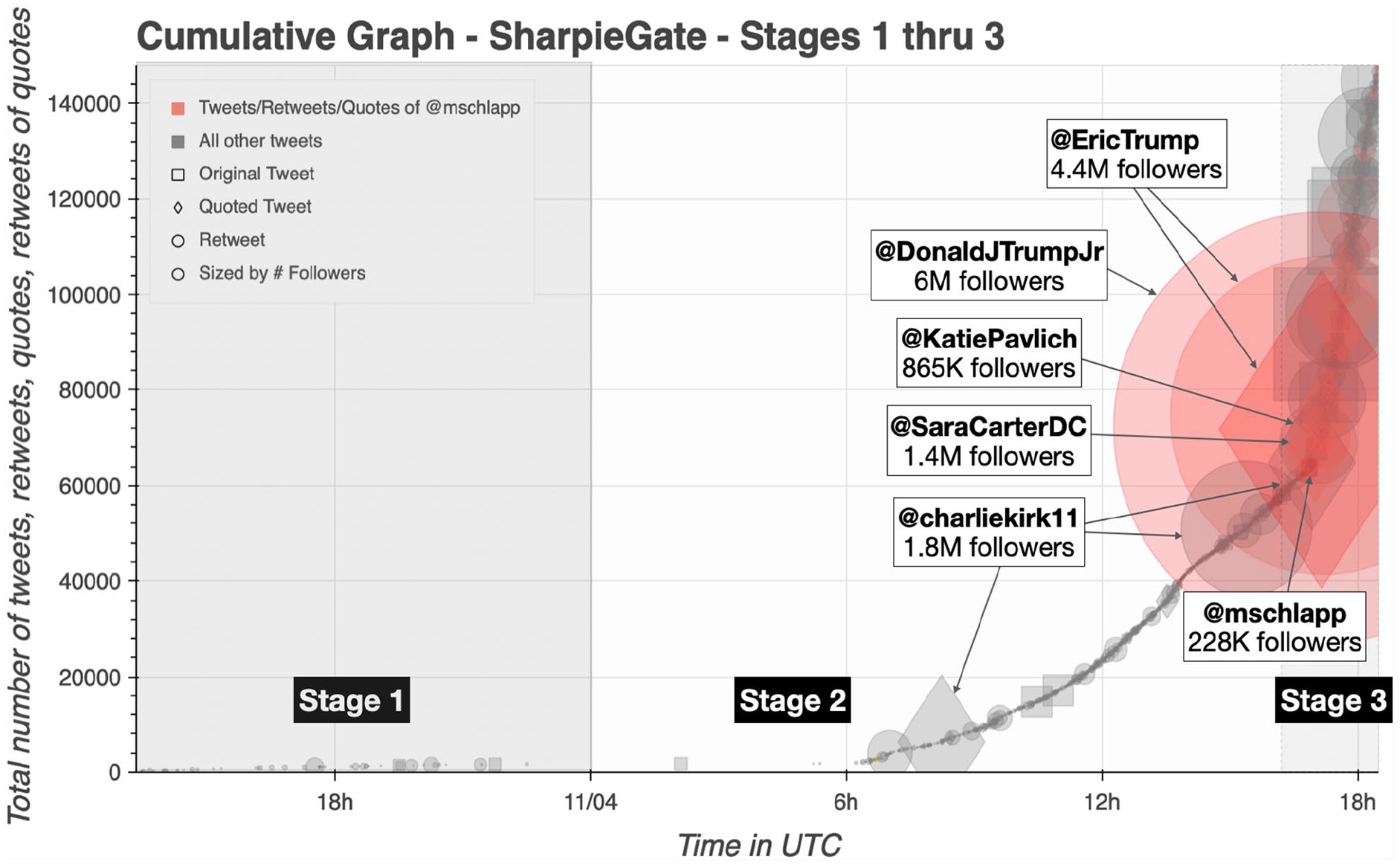

Figure 8. Cumulative graph of Stages 1–3 of SharpieGate. The y-axis represents the total number of tweets. The x-axis is time. Individual tweets of influencers (> 20,000 followers) are plotted, sized by follower count. Points are colored red if they are tweets by @mschlapp or retweets or quote tweets of his tweets.

The first prominent tweet in this surge made an explicit claim connecting Sharpies to Democrat-driven voter fraud in Arizona:

The account that posted this tweet, an unverified micro-influencer with 41,500 followers, was operated by a conservative activist. The tweet received 1,932 amplifying engagements—gaining momentum through retweets and quotes from pro-Trump, #MAGA, and QAnon networks—and was still accelerating when Twitter suspended the account at 08:15 UTC (1:15 a.m. AZ time).

The election night Facebook video reached Twitter around the same time as Tweet 2a, initially within textual posts echoing its claims, then within 674 tweets linking to the video on Facebook. Eventually users embedded the video directly into tweets. The first highly retweeted tweet with the embedded video was posted by an unverified micro-influencer who has since been suspended from Twitter:

That tweet received 18,268 amplifying engagements. One quote tweet was from an unverified low-follower account of a person in Arizona:

This tweet was retweeted 8,941 times and quoted 1,461 times. Over the next few hours, the tweet’s author traded up on the claim, attempting to reach more influential accounts—including @kelliwardaz (Kelli Ward, chair of the Arizona Republican Party), @PressSec (then Press Secretary Kayleigh McEnany), and a now-suspended high-follower QAnon account. The tweet record suggests the author of this highly viral quote tweet was legitimately concerned about the issue. Hours later, she noted that she deleted her previous tweets after reading a correction from a local news website quoting the Maricopa County elections department.

Altogether, retweets and quotes of just five tweets constitute half of the growth in the SharpieGate narrative that takes place in Stage 2. Interestingly, three of these tweets originated from unverified, low-follower accounts, while the other two originated with unverified micro-influencers that have since been suspended from Twitter. All but one of the tweets’ authors had markers in their profile indicating support for conservative politics generally or President Trump specifically, suggesting that the authors were, at least in part, politically motivated to share this content. Amplification during this time occurred primarily through Trump-supporting accounts with nano-, micro-, and a few macro-sized audiences—including some verified accounts. Of particular note is the role of mega influencer @charliekirk11. Kirk is the founder and president of Turning Point USA, a conservative political organization, and a “repeat spreader” of false and misleading claims of election fraud in 2020 (Kennedy et al., 2022). Between midnight and 17:00 UTC on 4 November, as the SharpieGate narrative began to gain steam, Kirk posted two SharpieGate-related tweets—a quote tweet of Tweet 2a and a retweet of Tweet 2c.
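The concentration claim at the start of the preceding paragraph (a handful of tweets accounting for half of the growth) corresponds to a top-k share calculation. The sketch below assumes each retweet or quote tweet has been reduced to the ID of the tweet it amplifies. The function name and toy IDs are hypothetical, and the study's own denominator (all growth in the stage, possibly including original tweets) may differ from the one used here.

```python
from collections import Counter


def top_k_share(amplified_ids, k):
    """Share of amplifying tweets that point at the k most-amplified originals."""
    counts = Counter(amplified_ids)
    total = sum(counts.values())
    top = sum(n for _, n in counts.most_common(k))
    return top / total if total else 0.0


# Toy data: ten amplifications spread over four original tweets.
toy_ids = ["a"] * 5 + ["b"] * 3 + ["c", "d"]
share_top2 = top_k_share(toy_ids, k=2)
```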

Stage 3: Mass Amplification by Conservative/Pro-Trump Influencers

On the day after the election, between 17:00 and 18:30 UTC, the #SharpieGate narrative began to “go viral” on Twitter—moving through a series of accounts with meso-, macro-, and mega-sized audiences and garnering more than 80,000 tweets in an hour and a half, receiving more amplification during that interval than in the preceding 24 hr combined. Figure 8 reveals that the surge was, in part, catalyzed by the following tweet from conservative operative Matt Schlapp:

The tweet—which couched the claims about Sharpie pens in uncertainty—received 15,242 amplifying engagements, echoing through the accounts of macro- and mega-influencers and media accounts including Fox News contributor @SaraCarterDC, conservative news outlet TownHall’s editor @KatiePavlich, and President Trump’s sons, @EricTrump and @DonaldJTrumpJr. Schlapp served as an influencer’s influencer, helping to move the developing conspiracy theory from its origins in low-follower accounts into the awareness of massive influencers in hyper-partisan media and within the Trump campaign.

Other accounts with highly quoted and retweeted tweets at this time include Charlie Kirk who posted another quote tweet asking “What’s going on here?,” conservative author @DineshDSouza (1.8 million followers) who similarly asked “What’s this I’m hearing about Sharpies?,” the founder of “Students for Trump” @RyanAFournier (1.1 million followers), and Arizona GOP congressman @DrPaulGosar (49,000 followers).

Stage 4: Fact-checking by Mass Media Accounts

At the end of Stage 3 and through the beginning of Stage 4, a series of attempted fact-checks of the SharpieGate narrative emerged—first by mega-sized accounts such as anti-Trump political pundit @gtconway3d, BlackLivesMatter activist @deray, and left/center-left media outlets @thedailybeast, @BuzzfeedNews, and @ViceNews, and subsequently by mass media outlets with mega-sized audiences such as @ABC, @HuffPost, and @WashingtonPost. Following common patterns in online rumoring (Maddock et al., 2015), these corrective tweets were not highly retweeted (relative to those pushing the misleading claims), but instead accompanied a decrease in engagement around the narrative.

However, persistent chatter remained headed into the second day following the election—mostly retweets of viral tweets posted earlier.

Stage 5: Conservative Macro- and Mega-Influencers Drive a Resurgence

On Twitter, another resurgence of SharpieGate occurred on 5 November at 16:20 UTC, precipitated by this tweet from Fox News host Maria Bartiromo, a macro-influencer with 850,000 followers:

Bartiromo’s tweet pulled together four different claims about voting irregularities into a single post, situating the SharpieGate claims within the broader “election fraud” narrative. Interestingly, her post hinted toward conspiracy without providing a coherent theory. The tweet spread widely from the outset, but accelerated about 10 min later when it was quote-tweeted by @EricTrump, who explicitly articulated the “fraud” framing and issued a call to action to the Federal Bureau of Investigation (FBI) and Department of Justice (DOJ).

Bartiromo’s tweet was quoted by several other verified, high-follower accounts in the conservative and pro-Trump media sphere, including conservative activist and president of Judicial Watch @TomFitton and Trump-supporting lawyer @RudyGiuliani; and retweeted by @DonaldJTrumpJr, Trump lawyer @JennaEllisEsq, and former GOP Speaker of the House @NewtGingrich. Bartiromo’s tweet generated a surge of ~25,000 SharpieGate tweets in its first hour and eventually received 67,000 amplifying engagements.

In subsequent days, #SharpieGate faded and merged into other controversies, evolving into one among many supporting claims for the #StopTheSteal movement’s meta-narrative of a stolen election. The pattern of its bottom-up emergence, elite propagation, and unheralded decline would repeat itself in new theories to come—including MaidenGate (CIP et al., 2021).

Case 3: MaidenGate

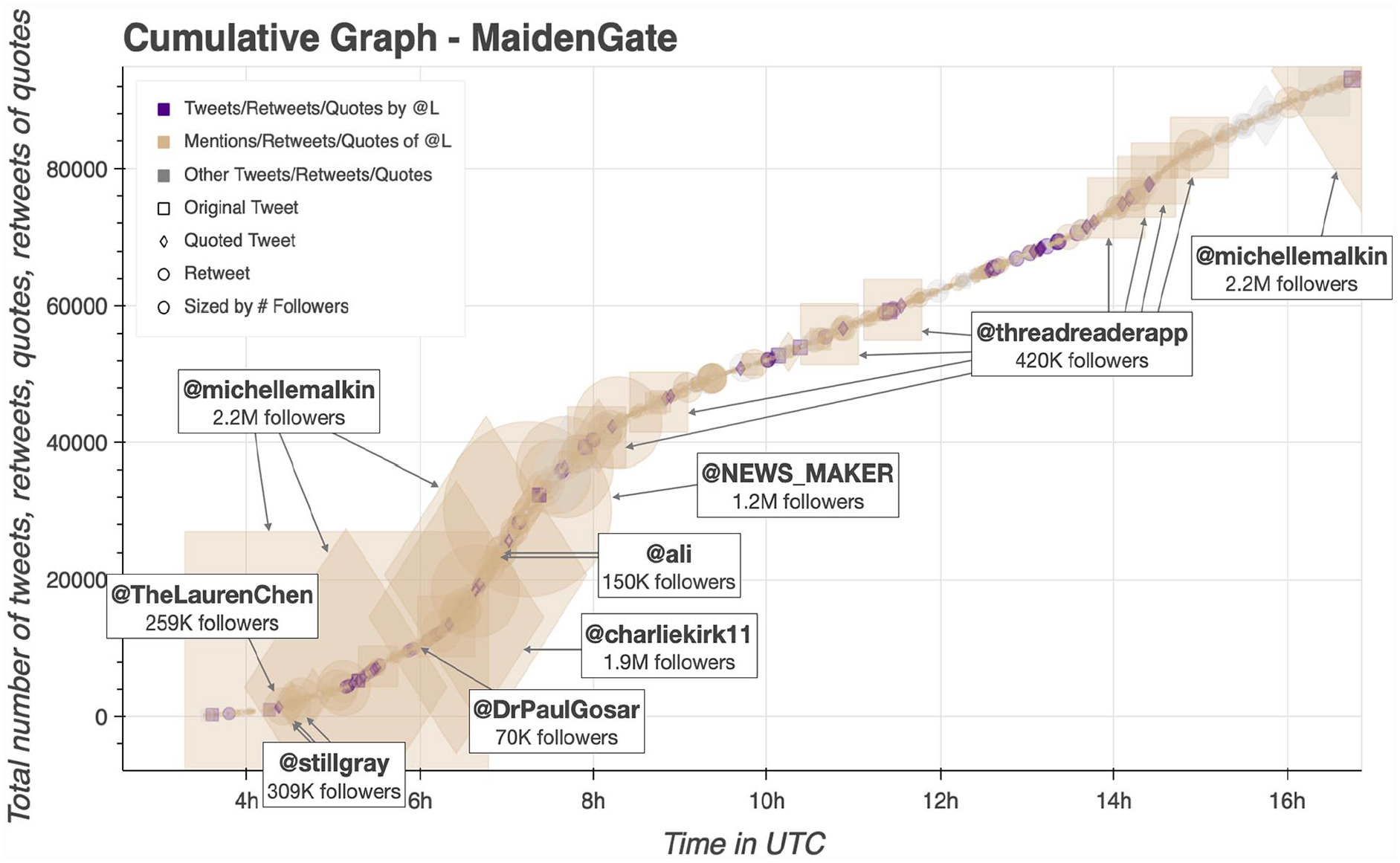

The MaidenGate conspiracy asserted that fraudulent Biden votes were cast in swing states using women’s prior legal (“maiden”) names. This theory, which supported the larger meta-narrative of the stolen election, emerged as a direct result of agitation by online political influencers—and especially the work of one particular user (L, a pseudonym). The majority (70%) of all tweets about MaidenGate were retweets, quote tweets, or mentions of @L (see Figure 9 below). At the time, L was an aspiring political micro-influencer (unverified, 86,000 followers).

Figure 9. Temporal graph of #MaidenGate. Total tweets per minute in black. Tweets per minute retweeting, quoting, or mentioning @L shaded in tan.
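The two series in this temporal graph, and the share of tweets that reference @L, can be derived from a tweet table with a few lines of code. The following is a minimal sketch, not the authors' pipeline: the record fields (`created_at`, `retweet_of`, `quote_of`, `mentions`) and the toy records are assumptions made for illustration.

```python
from collections import Counter
from datetime import datetime


def per_minute_counts(tweets, focal="L"):
    """Count tweets per minute, overall and for those referencing `focal`.

    Field names are hypothetical; they stand in for whatever schema the
    collected tweet data actually uses.
    """
    total, focal_counts = Counter(), Counter()
    for t in tweets:
        # Truncate the timestamp to the minute to form the time bin.
        minute = t["created_at"].replace(second=0, microsecond=0)
        total[minute] += 1
        # A tweet references the focal account if it retweets, quotes,
        # or mentions that account.
        refs = {t.get("retweet_of"), t.get("quote_of"), *t.get("mentions", [])}
        if focal in refs:
            focal_counts[minute] += 1
    n = sum(total.values())
    share = sum(focal_counts.values()) / n if n else 0.0
    return total, focal_counts, share


# Toy records, invented for illustration only (not from the study's data).
toy = [
    {"created_at": datetime(2020, 11, 10, 4, 24, 5), "retweet_of": "L"},
    {"created_at": datetime(2020, 11, 10, 4, 24, 40), "mentions": ["L"]},
    {"created_at": datetime(2020, 11, 10, 4, 25, 10), "quote_of": "L"},
    {"created_at": datetime(2020, 11, 10, 4, 25, 30)},
]
total, focal_counts, share = per_minute_counts(toy)
```

Plotting `total` and `focal_counts` against their minute keys would give the black and tan series respectively, and `share` corresponds to the kind of proportion reported in the text (70% for the full MaidenGate dataset).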

The digital record indicates that L had been active across several platforms, successfully working to gain followers for months prior to the election. At the beginning of the COVID-19 pandemic, @L had only 345 Twitter followers. She styled herself a “data analyst” and authored a political blog. Her earliest articles discuss rumors about the origins of the coronavirus, including conspiracy theories about Bill Gates’ alleged involvement. Over the summer, @L focused on the Epstein trial; in October 2020, she investigated Hunter Biden. During this time, her account grew to the level of micro-influencer.

Between 4 and 10 November, @L posted hundreds of tweets highlighting perceived issues with the election and promoting the meta-narrative of voter fraud. Many were retweets of conservative and pro-Trump influencers, several of whom appeared in this article’s earlier case studies (@TomFitton, @stillgray, @JackPosobiec, and @DonaldJTrumpJr), along with others such as conservative mega-influencer @michellemalkin, and multiple retweets of @realDonaldTrump. Her original tweets (131) received some engagement (7,730 retweets) during this time—but none from verified meso-, macro-, or mega-influencers (> 100,000 followers).

On 10 November, @L posted a flurry of tweets that ignited the MaidenGate conspiracy theory:

The tweets, which explicitly claimed fraud, received about 600 amplifications over the next 45 min. Meanwhile, @L posted a steady stream of tweets related to this and other election concerns, including a reply to mega-influencer and political pundit @michellemalkin (2.2 million followers). Next, @L followed with a call to action:

@L began to retweet and quote-tweet others who replied to her tweets claiming that they or someone they know encountered additional registrations in their name. Soon another micro-influencer (unverified, now suspended) began to collaborate with her, amplifying replies with similar stories. This collaborative work of collecting and amplifying other similar stories continued throughout the MaidenGate discourse.

Less than an hour after her first tweet and minutes after her call to action, @L’s claims started to gain traction among micro- to macro-influencers—and eventually mega-influencers. At 4:24 UTC, meso-influencer @TheLaurenChen, host at hyper-partisan BlazeTV, retweeted @L’s call to action. Shortly thereafter, meso-influencer @stillgray, who had been tagged into conversation minutes earlier, posted a series of quotes and retweets of @L. At 5:02 UTC, mega-influencer @michellemalkin, who @L had tried to interact directly with earlier, posted the first of two quotes and two mention tweets promoting @L and her work. GOP congressman @DrPaulGosar, who had helped amplify #SharpieGate and whose follower count had grown considerably through his #StopTheSteal tweeting, quote-tweeted one of @L’s calls to action. At 6:24 UTC, conservative activist @charliekirk11, who had also helped amplify #SharpieGate, joined the echo of voices with his signature “just asking questions” style, quoting @L. At this point, L’s tweet—and the #MaidenGate conspiracy theory—was going viral. Eventually it spread through more than 140,000 tweets (see Figure 10).

Figure 10. Cumulative graph of the initial development of MaidenGate. The y-axis represents the total number of tweets. The x-axis is time. Individual tweets of influencers (> 20,000 followers) are plotted, sized by follower count. Tweets are colored purple if posted by @L and tan if they are mentions, retweets, or quote tweets of @L.
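The data behind a cumulative graph of this kind can be assembled by ordering tweets in time, keeping a running total, and flagging tweets from accounts above the follower threshold so they can be overlaid as sized markers. The sketch below is illustrative only: the field names `created_at` and `followers` and the toy records are assumptions, while the 20,000-follower threshold is the one stated in the caption.

```python
from datetime import datetime


def cumulative_series(tweets, min_followers=20_000):
    """Return time-ordered running totals plus influencer marker points.

    Each marker is (timestamp, cumulative count at that tweet, follower
    count); the follower count can be used to size the plotted point.
    """
    ordered = sorted(tweets, key=lambda t: t["created_at"])
    times = [t["created_at"] for t in ordered]
    totals = list(range(1, len(ordered) + 1))
    markers = [
        (t["created_at"], total, t["followers"])
        for t, total in zip(ordered, totals)
        if t["followers"] > min_followers
    ]
    return times, totals, markers


# Toy records, invented for illustration only (not real accounts or counts).
toy = [
    {"created_at": datetime(2020, 11, 10, 5, 2), "followers": 1_500_000},
    {"created_at": datetime(2020, 11, 10, 4, 24), "followers": 120_000},
    {"created_at": datetime(2020, 11, 10, 4, 30), "followers": 900},
]
times, totals, markers = cumulative_series(toy)
```

Plotting `totals` against `times` gives the cumulative curve; coloring each marker by its relationship to @L, as the figure does, would need an extra field not modeled here.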

As @L promoted her investigation on Twitter, her follower count grew—from 86,000 at the time of her first tweet to 114,000 about 13 hr later. As @L and her claims gained visibility, conservative activist and meso-influencer (150,000 followers) Ali Alexander, a founder of the Stop the Steal movement, joined @L in her efforts. At 6:53 UTC, he tweeted that he was “looking into @L’s #MaidenGate” and later confirmed via tweet that he and @L had spoken. At this point, #MaidenGate became connected to the emergent #StopTheSteal movement—both through the collaborative, but not necessarily coordinated, public sharing of related content; and through concerted organizing and shared infrastructure between influencers associated with both.

Building a Conspiracy Theory across Platforms

As the #MaidenGate conspiracy theory developed, proponents turned to other platforms—including Parler, YouTube, Facebook, Periscope, and Google Docs—to amplify and collaborate. Alexander hosted a Periscope livestream of @L’s accusations. Like other savvy influencers, he couched the claims in uncertainty while building anticipation: “We don’t know the top of this. This is either a small problem, or it’s a problem that will shake America to the core.” His broadcast got 41,000 viewers, helping to carry the #MaidenGate claims over to Alexander’s growing audience of political activists tuning into his #StopTheSteal livestreams.

Shortly afterwards, Alexander’s team created a Google Document to consolidate information around the ongoing “investigation.” The sheet offered detailed advice for filing complaints about potential voter fraud using one’s maiden name, providing links to voter registration websites in dozens of states. Its watermark read “stopthesteal.us,” demonstrating how the #MaidenGate conspiracy was able to tap into broader #StopTheSteal infrastructure formed over the preceding days. It was shared on Twitter, Parler, and Facebook.

No evidence emerged to substantiate @L’s core accusations—that someone had cast a vote in her mother’s maiden name or that there was a systematic effort to conduct voter fraud through maiden names. By the evening of 10 November UTC, Twitter announced it was monitoring the narrative and YouTube appended an information panel to #MaidenGate videos. After gaining nearly 30,000 followers (32% growth in 13 hr), @L was suspended by Twitter. Within a few days, the #StopTheSteal movement moved on to formulating other theories based on other “evidence.” #MaidenGate faded into the background.

Discussion: The Dynamics of Participatory Disinformation

We set out to explore questions about the dynamics of online disinformation—particularly whether disinformation around the 2020 US Election was primarily top-down (moving from elites to their audiences) or bottom-up (moving from audiences to elites). Previous work by Benkler and colleagues (2020) suggests that top-down dynamics, primarily from mass media on the political right, drove the spread of disinformation targeting the mail-in balloting process. Our work, which looks to the social media record to understand how specific narratives within the broader campaign developed and spread, complicates that view. In all three cases presented here, we can see both top-down and bottom-up dynamics, underscoring that disinformation around the 2020 election was participatory and took shape as collaborative efforts between political elites, hyper-partisan media, social media influencers, and online audiences.

These findings challenge the framing of disinformation campaigns as either top-down or bottom-up, mass media-driven or social media-driven. Instead, we find something more akin to Chadwick’s (2017) conceptualization of political organizing within a hybrid media system where political frames take shape and spread through elite-activist newsmaking “assemblages” across traditional and social media. Political influencers both mediate and shape these efforts, acting as “opinion leaders” (Katz & Lazarsfeld, 1955) that frame information from elites to online audiences, and promote online audiences back to the elites in a “multi-step flow” process incorporating conversation starters, active engagers, influencers, network builders, and “information bridges” (Feng, 2016). This view aligns with previous research on participatory propaganda (Wanless & Berk, 2017) and the collaborative nature of online disinformation (Starbird et al., 2019)—and provides insight into how “unwitting agents” (Bittman, 1985) become not just amplifiers but also producers of strategic narratives within disinformation campaigns.

In addition, we suggest that improvisation might be a useful framework for understanding the joint construction of conspiracy theories in the 2020 election. Neither uncoordinated nor scripted, improvisation requires shared purpose—in this case, expectations set by elites that the election was fraudulent and that evidence for that fraud can therefore be identified—but also spontaneity and adaptation.

Top-down and Bottom-Up: Setting and Fulfilling Expectations of Voter Fraud

There is no doubt that “elites” in media and politics helped seed all three of the narratives explored here—a top-down dynamic. Elites repeatedly invoked the prospect of a “rigged election,” setting an expectation of voter fraud among pro-Trump audiences, then synthesized evidence that emerged from the online audiences who rose up to fulfill that expectation. However, to enact those narratives, bottom-up participation was required: conspiracy theorizing is a collaborative endeavor, and in each of our case studies we observe groups of low-follower accounts and unverified aspirational influencers collaborating with verified meso- to mega-influencers and “media-of-one” micro-outlets to construct and propagate narratives.

In Sonoma Ballots, journalists at partisan media outlets launched the narrative based on photographs supplied by one of their readers, and all seemingly believed that they had uncovered genuine evidence of ballot fraud. In SharpieGate, after encouraging voters to visit the polls to uncover voter fraud (top-down), the Trump campaign helped to elevate (bottom-up) the particularized conspiracy theory generated from the evidence gathered. In MaidenGate, Trump supporters looking for evidence to contest the election seized upon scattered reports that some voters remained registered under previous names in other states, and—with the help of the #StopTheSteal campaign’s mobilizing infrastructure—assembled those reports into a theory of voter fraud. In each case, elites established expectations that non-elites endeavored to corroborate; when non-elites uncovered new “evidence” or wove together a new theory, elites moved quickly to amplify it. In this back-and-forth motion of evidence-gathering and consolidation of that evidence, conspiracy theories took form.

Mediating this bidirectional (top-down, bottom-up) information flow is the political influencer, a figure of liminal identity—neither traditionally “elite” nor pedestrian, capable of influencing online crowds precisely because they disclaim the elite markers that would differentiate them from those crowds. While no model can completely capture the influencer’s role, the “two-step flow” theory provides a productive lens (Katz & Lazarsfeld, 1955). That theory contends that “opinion leaders” contextualize and represent content from mass media to audiences from whom they command trust. Building upon Feng (2016), we suggest that, in the omnidirectional information flows of the social media age, influencers perform a similar mediating role: they both transmit mass media information to audiences who trust them for their authenticity, and redirect “evidence” from those audiences back to the elites whose influence they aspire to recreate. By taking claims from the periphery of a network and moving them into the center, increasing awareness first within their community, and then, via pick-ups from other influencers, they facilitate the spread of a message across communities toward mass awareness (Centola, 2021).

Improvisation and Infrastructure in Participatory Disinformation

As we look deeper into this collaborative production, especially at the role of audiences and influencers in not just amplifying but producing misleading narratives for participatory disinformation campaigns, one useful lens is that of improvisation. An improvisation can be thought of as a performance by a group of individuals around a shared goal (Crossan, 1998). Researchers have studied improvisation in a wide range of contexts, for example, among jazz performers (Crossan et al., 1996) and disaster responders (Kendra & Wachtendorf, 2007; Mendonca et al., 2001). Here, we conceptualize participatory disinformation as improvisation, through three performances that took shape around the shared goal of providing evidence to fit the “voter fraud” theory.

Improvisation requires structure (Crossan, 1998), at minimum a shared understanding of a loose set of “rules” that guides actions. Across the three performances, we see that shared understanding grow and those rules become clearer: identify potential issues with voting; gather evidence to support a view of that issue as systematic and part of a larger “voter fraud” conspiracy; draw attention from influencers who can amplify the concern to broad audiences; organize people with evidence to file affidavits; repeat. With MaidenGate, we see the performance tap into the existing infrastructure of the #StopTheSteal movement—building on top of the networks of participants and the patterns for assembling information to fit a developing conspiracy theory.

Across the cases, different sized influencers took on different roles. Low-follower and established accounts shared their own experiences. Micro-influencers amplified the experiences of others and helped move content up the chain. Meso- and macro- accounts provided the frames through which that evidence would be interpreted and assembled; many, including credentialed influencers, used well-worn rhetorical patterns such as “Big, if true” to reputationally hedge while amplifying. Mega accounts, often credentialed with visible platform-issued badges, amplified the content to vast audiences.

In the case of Matt Schlapp, a long-time political operative performed an old script—galvanizing outrage to attempt to contest an election that went against his party. But new roles can also develop through the course of a performance (Medler & Magerko, 2010), and we see that here, for example, with @L assuming the role of organizer for MaidenGate claims. As Crossan (1998) writes, “A key characteristic of improvisation is that individuals take different leads at different times” (p. 596), and this can contribute to the sense of shared ownership for the performance, and a stronger sense of belonging to the group. Beyond the reputational gains accrued as followers through the course of their participation, that sense of belonging may be an essential part of the feedback loop that motivates and sustains participatory disinformation.

Although this view of disinformation as participatory and improvisational, and especially its reliance upon the participation of “unwitting” audiences of motivated-but-sincere believers, underscores the challenge for social media companies and regulators of mitigating harmful disinformation (e.g., while protecting commitments to free speech), it also suggests a potential pathway forward in addressing the activities of micro- to mega-influencers, including credentialed (platform-verified) users, who repeatedly play a role in motivating, guiding, and amplifying those audiences.

Limitations

This work has several limitations. First, though the participatory disinformation campaign we analyze here spanned multiple platforms, our research relies heavily on a single platform, Twitter, and likely overlooks significant activity in other spaces. In addition, though the cases selected here are illustrative of different kinds of narratives that spread as part of this campaign and demonstrate its participatory dynamics, they may not be representative of the entire campaign.

Conclusion

This article provides insight into the top-down and bottom-up dynamics of the participatory disinformation campaign to undermine trust in the 2020 election—a campaign characterized less by coordination than by cultivation and improvisation. Elites in politics and partisan media (including the President himself) pushed a meta-narrative of systematic voter fraud and set the expectations of voter fraud for their audiences. With help from an array of influencers—from hyper-partisan journalists and media outlets, activists, political operatives, and members of the Trump campaign—those audiences assembled the evidence, sometimes through misinterpretations of their own experiences, to produce the false and misleading narratives that sustained this campaign. These findings contribute to a more nuanced understanding of how participatory disinformation campaigns take shape through the collaborative work of online crowds and political operatives.

Supplemental Material

Supplemental material, sj-docx-1-sms-10.1177_20563051231177943 for Influence and Improvisation: Participatory Disinformation during the 2020 US Election by Kate Starbird, Renée DiResta and Matt DeButts in Social Media + Society

Footnotes

Acknowledgements

The authors thank the many contributors to the Election Integrity Partnership at the Stanford Internet Observatory, University of Washington’s Center for an Informed Public, Graphika, and the DRFLab for their help collecting, curating, and conducting initial analysis of the data presented here.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research builds upon rapid response work conducted through the 2020 Election Integrity Partnership (EIP). At University of Washington, EIP rapid-response research was financially supported by Craig Newmark Philanthropies, the Omidyar Network, the John S. and James L. Knight Foundation, and the William and Flora Hewlett Foundation. At Stanford, EIP research was funded by Craig Newmark Philanthropies and the William and Flora Hewlett Foundation. The specific analysis presented here was also supported by a U.S. National Science Foundation CAREER Grant (grant no. 1749815) to the first author and an NSF SaTC Grant (No. 2120496). Any opinions, findings, and conclusions or recommendations expressed in this material are those of the author(s) and do not necessarily reflect the views of the National Science Foundation.

Supplemental material

Supplemental material for this article is available online.
