Abstract

Background

Software-based preoperative 3D shoulder arthroplasty implant planning is traditionally done manually and can be time-consuming. Systems like Blueprint® now include software features that suggest implant positioning and sizing. This study is a comparative evaluation of preoperative planning software, assessing user interaction efficiency by comparing the time and number of actions required to complete planning tasks with and without automated planning features.

Materials and Methods

Log files from real-world plans, extracted from the preoperative planning software, were used to compare the impact of the auto-planning suggestion feature on the time taken and the number of actions needed to perform shoulder surgical planning. Comparative analyses were performed across the two main groups (with and without auto-planning) and three subgroups defined by the surgeon's experience with the software.

Results

A total of 7021 preoperative plannings done by 1018 surgeons were included. The auto-planning group included 65 surgeons with 780 plannings and the Manual group included 953 surgeons with 6241 plannings.

The new Blueprint® preoperative auto-planning feature, marketed under the name “BP Assist”, reduced the median number of actions during the planning process by 29% compared to manual planning. Auto-planning reduced the median planning time by 25%, and by about 35% for the most complex cases (P90). The auto-planning feature used by new users resulted in preoperative planning times that were at least as fast as, and often faster than, those of high-experience users who planned manually, while also requiring fewer actions to complete the planning process.

Conclusions

The integration of an auto-planning feature into preoperative software allowed for a reduction in the number of actions and the time spent during the preoperative software-based planning process. Further research is needed to determine whether auto-planning represents a meaningful advancement in shoulder arthroplasty, with potential to support surgical efficiency and clinical decision-making.

Introduction

Historically, 3D preoperative shoulder visualizations were created manually by a surgeon and an engineer compiling CT scans. This was time-consuming and allowed for inaccuracies. 1

Preoperative planning tools assist the surgeon during the preparation of a surgery by providing 3D measurements of the patient's anatomy,2–5 and by simulating the surgical implantation of different implant configurations and sizes. 6 They can also assist the surgeon by automatically positioning the implant, which the surgeon can then adjust if necessary.1,7–10 These tools also allow the surgeon to compare different preoperative plans. 11

The use of these preoperative tools, such as automated implant positioning, raises two questions. First, could these tools introduce bias into the planning process made by surgeons? Second, do these tools offer a time-saving benefit for the surgeon under real-world conditions?

The first question has been partially addressed by several studies12,13 which compared preoperative planning software to manual planning and evaluated surgeon adherence to the software's recommendations. These studies suggest that such tools have a favorable influence on decision-making. To our knowledge, the second question has not been investigated.

With the expanding capabilities of modern preoperative planning software, newer features now provide automatic suggestions for implant positioning and sizing. Recent versions of Blueprint® preoperative software (Stryker, Tornier SAS, Montbonnot Saint Martin, France; further referred to as planning software) include this automatic suggestion feature, referred to as auto-planning (AP). AP proposes a shoulder implant configuration and size based on patient morphology and positions it as closely as possible to the placement an expert surgeon would choose. AP only handles the glenoid-side implant; the humeral-side implant must be planned from scratch by the surgeon. To enable glenoid-side predictions, a machine learning algorithm was trained on surgical plans created by a large group of expert surgeons, selected for their clinical expertise, their high surgery volume and their recognition as opinion leaders in shoulder surgery. This algorithm is integrated into proprietary, closed-source software; therefore, its technical details are not publicly available. AP enables the surgeon to make minimal adjustments and helps reduce the amount of time spent on preoperative planning. This may support low-volume shoulder arthroplasty surgeons by providing planning suggestions generated through machine learning based on the collective expertise of key opinion leaders in shoulder surgery, particularly in cases where standardized guidelines for selecting the type of arthroplasty are lacking.

This study's objective was to determine the impact of AP on the time required to plan a case in real-world conditions, further referred to as planning time. This study focused on preoperative planning for anatomic total shoulder arthroplasty (aTSA). To ensure strong statistical reliability, a large volume of real-world data from surgical cases and surgeon-generated plans was analyzed.

The primary aim of this study was to compare the planning time required by surgeons to preoperatively plan cases with AP versus without AP. The secondary aim was to analyze how the surgeon's experience with the software influenced preoperative planning time, both with and without the use of AP.

Materials and methods

Real-world user group and data acquisition

This study utilized real-world preoperative planning data generated by surgeons using Blueprint® preoperative software (Stryker, Tornier SAS, Montbonnot Saint Martin, France; further referred to as planning software) from July 2023 to August 2024. For each surgical case plan, the corresponding log file was extracted, from which two pieces of information were obtained: planning time (seconds) and the number of user actions required to complete the plan. These two measures were used to compare the impact of AP on the planning time and the number of actions during shoulder surgical planning. A user action is defined as any click on a button in the software's graphical interface. Two distinct groups of surgeons were involved in this study, with no overlap between the groups. A group of 65 surgeons (AP group) had access to the AP suggestion feature, marketed under the name “BP Assist” (Blueprint® v4.0.2 or above, with all versions using the same AP algorithm), as part of a limited user release. Inclusion in this group was based on voluntary participation, institutional access to the updated software and use of the manufacturer's implants and planning system. A second group of 953 surgeons (Manual group) did not have access to the AP feature. Consequently, they had to manually determine the positioning and sizing of the glenoid implant from scratch. AP only supports the implant on the glenoid side. On the humeral side, however, an implant that guarantees compatibility with the glenoid implant is suggested.

Within the Manual group, surgeons were further categorized into 3 subgroups based on their experience with the planning software during the year 2022. Those who completed more than 50 plans were classified as Expert users, those with 20 to 50 plans as Intermediate, and those with fewer than 20 plans as New users. These thresholds were selected to reflect meaningful differences in exposure to the software, distinguishing occasional users from those with regular or extensive planning experience.
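The subgroup thresholds described above can be sketched as a small classification function. This is only an illustration: the function name and its argument are hypothetical, and the study does not describe how the categorization was implemented.

```python
def classify_experience(plans_in_reference_year: int) -> str:
    """Map a surgeon's yearly plan count to the subgroup names used in the text.

    Hypothetical helper: the thresholds follow the description above
    (more than 50 plans, 20 to 50 plans, fewer than 20 plans).
    """
    if plans_in_reference_year > 50:
        return "Expert"
    if plans_in_reference_year >= 20:
        return "Intermediate"
    return "New"


if __name__ == "__main__":
    for count in (12, 20, 50, 51):
        print(count, classify_experience(count))
```

Under these thresholds a surgeon with exactly 50 plans is classified as Intermediate, since the Expert category requires more than 50.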

Exclusion/inclusion criteria

Cases were only included when they were planned with Blueprint® version 4.0.2 or later, involved aTSA for primary glenohumeral osteoarthritis, and had no support team intervention during the planning phase.

Two filters were applied to identify and exclude inconsistent planning data. First, an inactivity filter removed time gaps longer than 2 min and 12 s between consecutive actions, based on a threshold set at three times the 95th percentile of all action-to-action intervals in the dataset. Second, to eliminate planning times outside the acceptable range, cases with total time below the 1st percentile (1 min and 3 s) or above the 99th percentile (36 min and 13 s) were excluded.
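A minimal sketch of how these two filters might be applied is given below. It assumes each plan is available as a sequence of action timestamps in seconds, which is a hypothetical input format because the log file structure is not described. It also assumes that "removing" an inactivity gap means leaving it out of the plan's total time. The function names are illustrative.

```python
import numpy as np


def active_planning_times(sessions, multiplier=3.0):
    """Sum action-to-action gaps per plan, dropping gaps above the threshold.

    sessions: list of sequences of action timestamps (seconds), one per plan
              (assumed format, not taken from the study).
    The threshold is `multiplier` times the 95th percentile of all gaps.
    """
    gaps_per_plan = [np.diff(np.asarray(ts, dtype=float)) for ts in sessions]
    all_gaps = np.concatenate(gaps_per_plan)
    threshold = multiplier * np.percentile(all_gaps, 95)
    # Keep only gaps at or below the inactivity threshold.
    times = np.array([g[g <= threshold].sum() for g in gaps_per_plan])
    return times, threshold


def trim_by_percentile(times, low=1, high=99):
    """Keep plans whose total time lies within the [low, high] percentile range."""
    times = np.asarray(times, dtype=float)
    lower, upper = np.percentile(times, [low, high])
    return times[(times >= lower) & (times <= upper)]


if __name__ == "__main__":
    steady = list(range(0, 201, 10))   # 20 gaps of 10 s each
    paused = [0, 10, 1000, 1010]       # one long pause of 990 s
    times, threshold = active_planning_times([steady, paused])
    print(threshold, times)
```

In the toy data, the 990 s pause exceeds the threshold and is left out of the second plan's total, while the regular 10 s gaps are kept.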

Statistics

The initial analysis used statistical methods to compare the average planning time and number of user actions between the Auto Planning (AP) and Manual groups, without accounting for the level of planning software usage. For all analyses in this study, we used Python v3.12 and the SciPy v1.15 library. Planning time and the number of actions were assessed across percentiles (P10, P25, median, P75, and P90), with statistical significance evaluated using the Mann-Whitney U test. A second analysis was then conducted to break down the results according to surgeons’ experience levels with the planning software. Each Manual subgroup was compared to the AP group in terms of time savings and reduction in the number of actions required. This analysis evaluated task performance across the same percentiles, with statistical significance again assessed using the Mann-Whitney U test. Where statistical significance was observed, a Hodges–Lehmann estimator was calculated to estimate the location shift between the two distributions.
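The testing procedure described above can be illustrated with a short sketch using `scipy.stats.mannwhitneyu` and a two-sample Hodges–Lehmann estimate, computed as the median of all pairwise differences. The helper names, the two-sided alternative and the 0.05 significance level are assumptions made for illustration; the study's actual code and confidence interval method are not described.

```python
import numpy as np
from scipy.stats import mannwhitneyu


def hodges_lehmann_shift(x, y):
    """Two-sample Hodges-Lehmann estimate: median of all differences x_i - y_j."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    return float(np.median(np.subtract.outer(x, y)))


def compare_groups(ap, manual, alpha=0.05):
    """Run a Mann-Whitney U test and estimate the shift only if significant.

    alpha and the two-sided alternative are illustrative assumptions.
    """
    _, p_value = mannwhitneyu(ap, manual, alternative="two-sided")
    shift = hodges_lehmann_shift(ap, manual) if p_value < alpha else None
    return p_value, shift


if __name__ == "__main__":
    # Synthetic planning times in seconds, for illustration only.
    rng = np.random.default_rng(0)
    ap_times = rng.lognormal(mean=5.3, sigma=0.5, size=200)
    manual_times = rng.lognormal(mean=5.6, sigma=0.5, size=200)
    print(compare_groups(ap_times, manual_times))
```

A negative shift means the first sample (here, AP) tends to take lower values than the second. For a one-sided comparison, `alternative="less"` can be passed to `mannwhitneyu` instead.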

Results

Auto-planning versus manual planning

We extracted from the software data log 9044 cases of patients diagnosed with primary glenohumeral osteoarthritis, for whom an anatomic shoulder arthroplasty procedure had been planned using Blueprint version 4.0.2 (or above). Among these, 942 plans were done by surgeons in the AP group, and 8102 plans were done by the Manual group. These plans were made by 75 surgeons for the AP group and 1118 surgeons for the Manual group. Applying the exclusion criteria led to the exclusion of 162 cases for the AP group and 1081 cases for the Manual group.

In total, 7021 plans of 1018 surgeons were included. The AP planning group comprised 65 surgeons with 780 plans and the Manual group 953 surgeons with 6241 plans.

To estimate the average experience with the software of each group, the distribution of the number of plans completed was assessed (all types of pathologies and total shoulder arthroplasty). In the AP group, the 1st, 2nd (median), and 3rd quartiles were 7, 16, and 41 plans, respectively. In the Manual group, the corresponding quartiles were 5, 11, and 24 plans. While the AP group showed a higher upper quartile, the median experience was comparable for both groups.

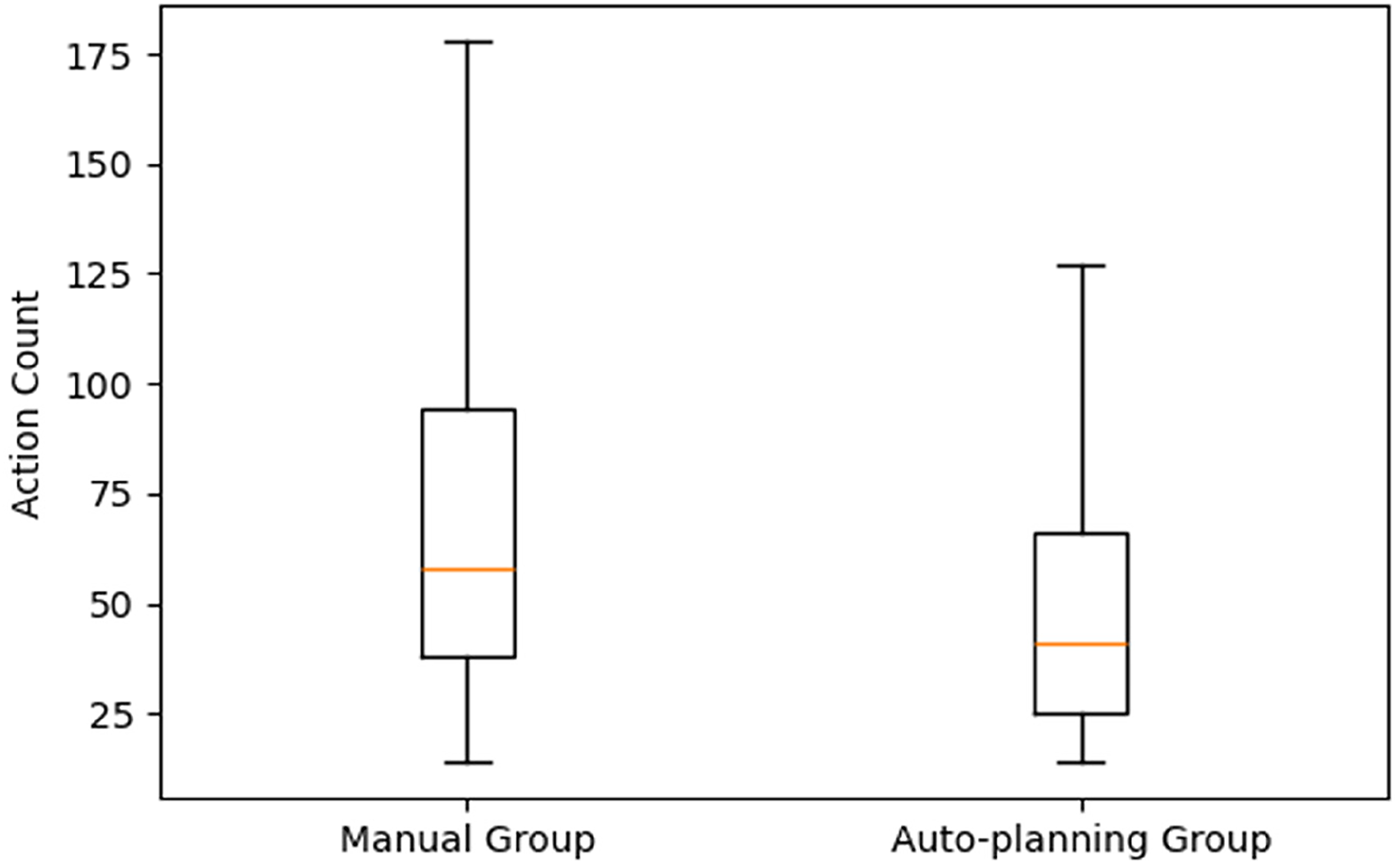

The AP group consistently required fewer actions than the Manual group. The median number of actions was 41 for the AP group versus 58 for the Manual group—a 29% reduction. This pattern held across all percentiles: at P10, the AP group required 18 actions compared to 26 in the Manual group; at P90, 110 actions versus 153 (Table 1).

Number of user actions across percentiles (P10, P25, median, P75, and P90) for each group.

The auto-planning (AP) group is compared with manual planning group. Percentage reductions represent the relative decrease in the number of actions for the AP group compared to manual group at the corresponding percentile.
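As a worked check of how the percentage reductions are derived, the short sketch below recomputes them from the action counts quoted in the text for P10, the median and P90. The function name is illustrative.

```python
def percent_reduction(manual_value: float, ap_value: float) -> float:
    """Relative decrease of the AP value with respect to the Manual value, in percent."""
    return 100.0 * (manual_value - ap_value) / manual_value


# (Manual, AP) action counts quoted in the text.
actions = {"P10": (26, 18), "median": (58, 41), "P90": (153, 110)}
reductions = {
    level: round(percent_reduction(manual, ap), 1)
    for level, (manual, ap) in actions.items()
}

if __name__ == "__main__":
    print(reductions)  # {'P10': 30.8, 'median': 29.3, 'P90': 28.1}
```

The median value of 29.3% corresponds to the 29% reduction reported above.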

Both Manual and AP cases showed statistically significant variability in the number of actions required for planning (p < 0.001).

Similarly, planning time across cases showed considerable variability (Figure 1). Due to this variability, the planning time distributions of the two groups could not be clearly separated. The analysis confirmed the hypothesis, demonstrating a statistically significant reduction in planning time for the AP group compared to the Manual group (p < 0.001). To quantify this difference, we used the Hodges–Lehmann estimator to assess the location shift between distributions. For surgeons in the AP group, the estimated reduction in the number of actions was 15 actions (95% CI: 13–17).

Boxplot of the two distributions of the number of actions of the auto-planning and manual group, where the box represents the interquartile range from the 25th percentile to 75th percentile, and the line inside the box represents the median value.

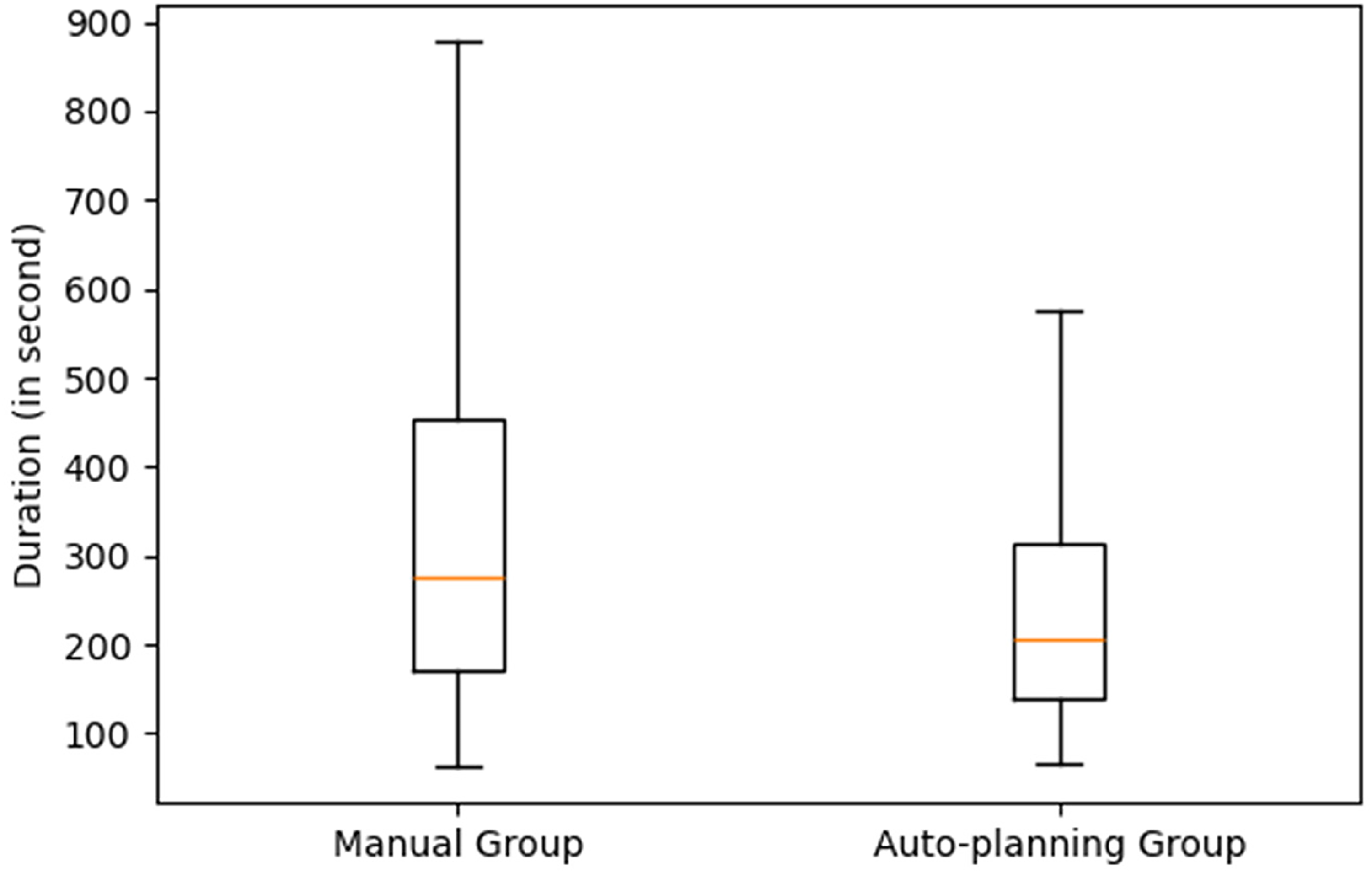

A reduction in planning time was observed across all percentiles for the AP group compared to the Manual group. The median planning time for the AP group was 207 s, compared to 277 s for the Manual group, showing a 25% reduction when using AP. For P90 (representing more complex cases), AP reduced planning time by 4 min and 20 s compared to the Manual group. The Hodges–Lehmann estimator for planning duration indicated a location shift of 57.64 s (95% CI: 47.91–66.94) in favor of the AP group, further confirming a statistically significant improvement in planning efficiency.

The time-saving benefit of AP increased with case complexity. As planning time rises across percentiles, the percentage reduction with AP becomes more pronounced. At the P90 level, representing the most time-consuming cases, AP reduced planning time to 490 s compared to 750 s for the Manual group, a 34.6% improvement (Table 2). In contrast, for the quickest 10% of cases, the difference was minimal: 108 s for AP versus 112 s for Manual.

Planning time reduction comparison between AP and manual groups in relation to the case complexity across percentiles (P10, P25, median, P75, and P90) for each group.

Percentage reductions represent the relative decrease in the planning time (seconds) for the AP group compared to manual group at the corresponding percentile.

While both methods show variability (Figure 2), the AP group demonstrates a lower median and a tighter distribution, suggesting greater efficiency—reported further in the results of the second analysis below.

Boxplot of the two distributions of the planning time of the AP and manual group, where the box represents the interquartile range from the 25th percentile to 75th percentile, and the line inside the box represents the median value.

To characterize the complexity of cases between the two groups, we assessed several anatomical criteria, including glenoid version, glenoid inclination, and glenoid orientation. The Mann–Whitney test revealed no significant differences in the distribution of these variables. Thus, the difference in case complexity between the two groups does not appear to account for the improvements associated with AP.

Auto-planning versus manual experience subgroup planning

Of the 953 surgeons, 129 were excluded due to unknown experience with the software or having completed fewer than 3 planned cases in the previous (2023) year, leaving 824 for analysis (Table 3).

Distribution of Manual group surgeons across subgroups based on their experience with the planning software.

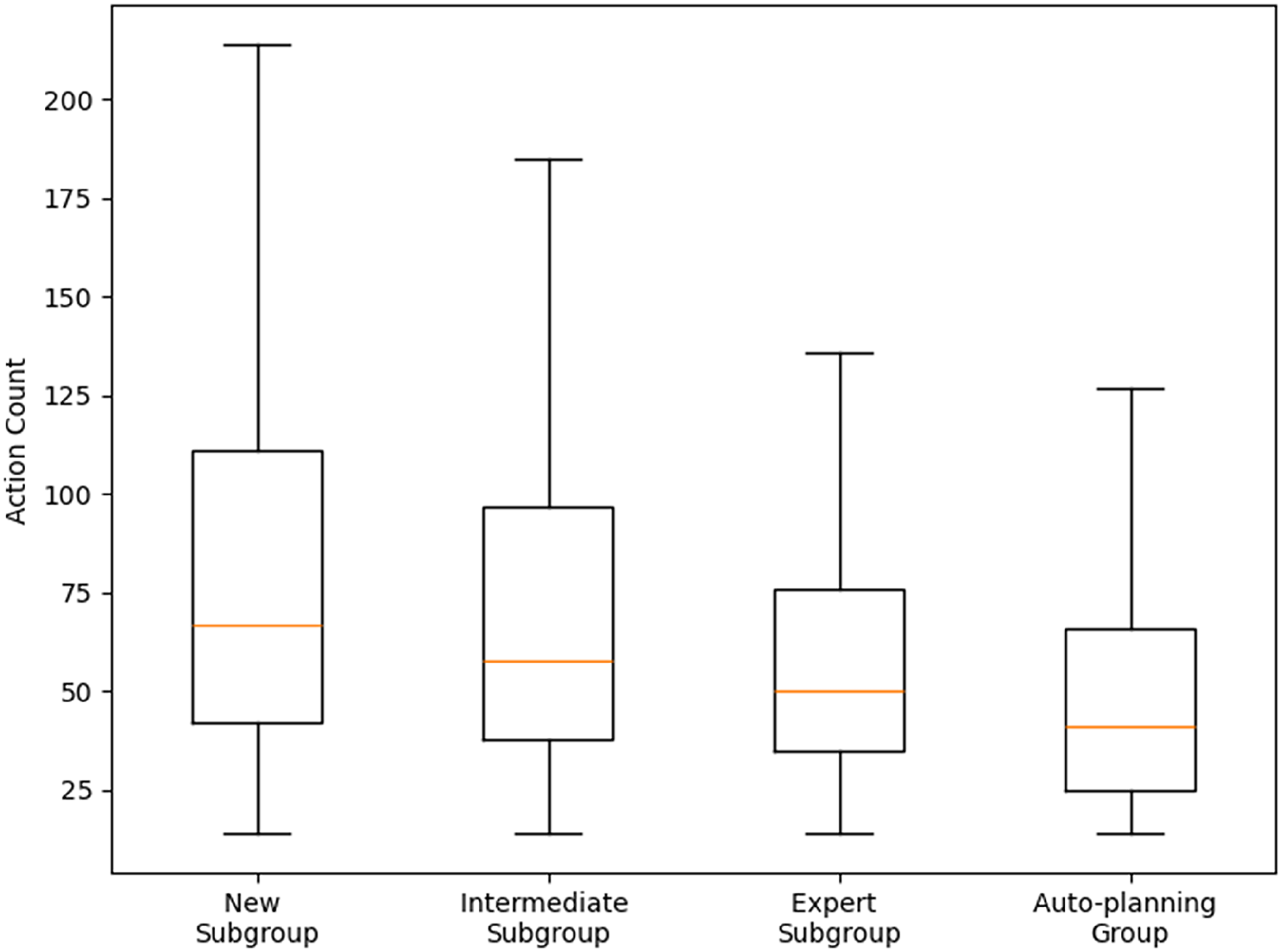

Focusing on the 3 subgroups of the Manual group, the results indicated an increase in efficiency with an increasing experience level with the planning software (Table 3). At the median usage level, new users required 67 actions, intermediate users 58 actions, and expert users 50, illustrating a progressive reduction in task effort as user experience with the planning software increased. This pattern was consistent across percentiles. For example, at P10, new users performed 29 actions compared to 26 for intermediate users and 25 for experts, while at P90, new users required 185 actions, intermediate users 156, and experts 114.

The AP group required a median of 41 actions, fewer than any of the experience-based Manual subgroups, including experts. Across percentiles, AP matched or slightly outperformed experts: at P10, it required 18 actions compared to 25 for experts; at P90, it required 110 actions compared to 114 for experts (Table 4). This indicated that AP significantly reduced both the number of actions and variability compared to all Manual subgroups (Figure 3). A Mann-Whitney U test confirmed that the distribution of action counts in the AP group was significantly lower than in each Manual subgroup, with p-values <0.001. For the New subgroup compared to the AP group, the Hodges–Lehmann estimator for action count indicated a location shift of 18 fewer actions (95% CI: 16 to 21) for the AP group. For the Intermediate subgroup, the location shift was 8 fewer actions (95% CI: 6 to 11), and for the Expert subgroup, 5 fewer actions (95% CI: 3 to 8).

Boxplot of the distributions of the number of actions of the AP and 3 manual subgroups, where the box represents the interquartile range from the 25th percentile to 75th percentile, and the line inside the box represents the median value.

Number of user actions across percentiles (P10, P25, median, P75, and P90) for each group.

The AP group is compared with manual planning subgroups based on user experience levels: new, intermediate (Inter), and expert (Exp). Percentage reductions represent the relative decrease in the number of actions for the AP group compared to each manual subgroup at the corresponding percentile.

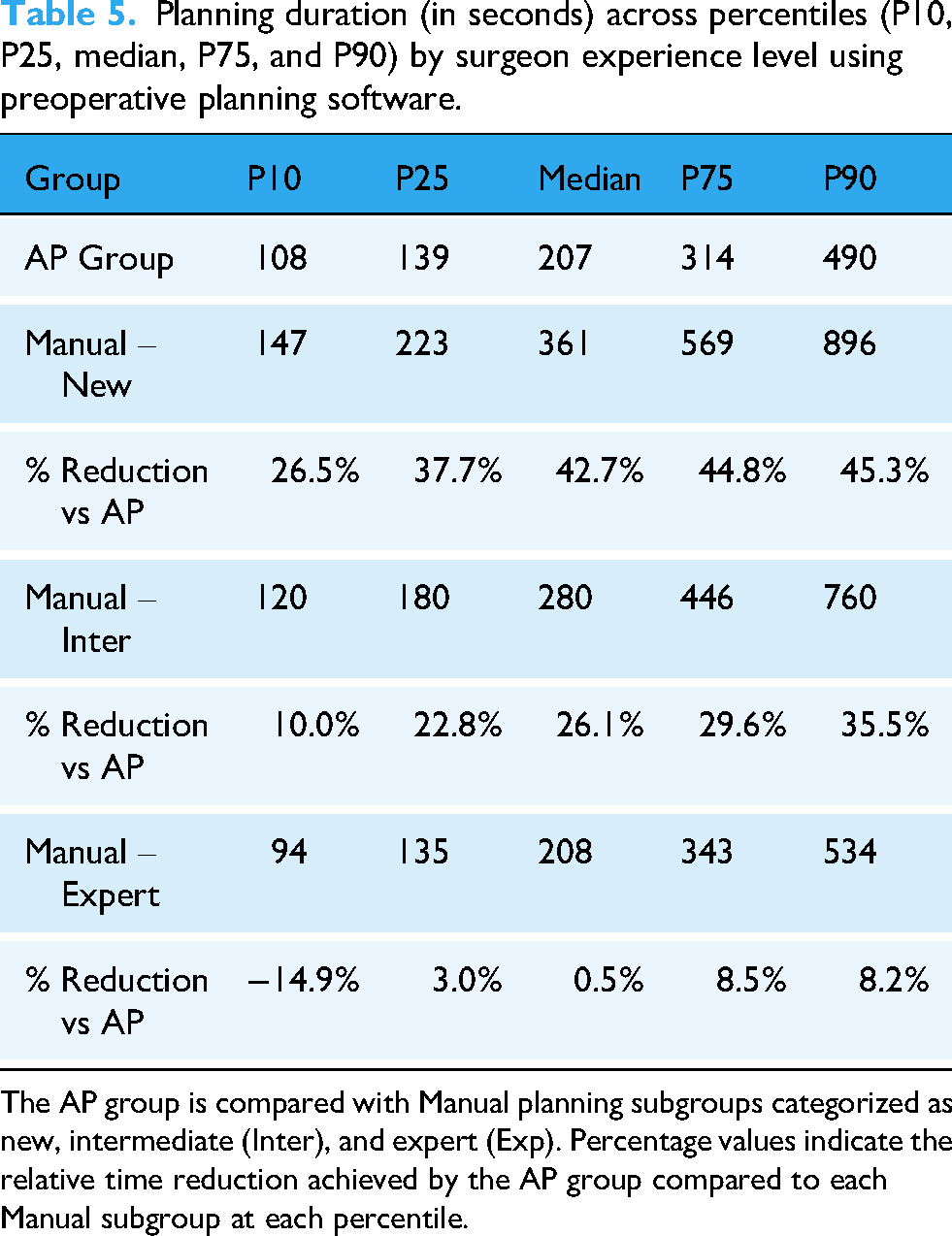

Similar trends were observed for planning time. Median planning times were 366 s for new users, 280 for intermediate, and 208 for experts, while the AP group required 207 s, which is comparable to experts. Across percentiles, new users consistently had the longest times, followed by intermediate users, with experts being the fastest. At P10, new users took 147 s, intermediates 120, and experts 94 s. The AP group, at 108 s, outperformed new and intermediate users but was slightly slower than experts (Table 5).

Planning duration (in seconds) across percentiles (P10, P25, median, P75, and P90) by surgeon experience level using preoperative planning software.

The AP group is compared with Manual planning subgroups categorized as new, intermediate (Inter), and expert (Exp). Percentage values indicate the relative time reduction achieved by the AP group compared to each Manual subgroup at each percentile.

The one-sided Mann-Whitney U test showed that the AP group's planning times were stochastically lower than those of the New and Intermediate subgroups (p < 0.001 in both comparisons), indicating superior performance. However, when comparing the AP group to the Expert subgroup, the test did not reject the null hypothesis, suggesting that both distributions were statistically similar and that the AP group achieved a level of efficiency comparable to expert users (Figure 4). For the New subgroup compared to the AP group, the Hodges–Lehmann estimator for planning duration indicated a location shift of 89.96 s (95% CI: 78.44–102.23) less for the AP group distribution. For the Intermediate subgroup, the location shift was 5.8 s (95% CI: −3.85 to 15.45). Because the comparison between the Expert subgroup and the AP group did not reject the null hypothesis in the Mann–Whitney U test, the Hodges–Lehmann estimator was not computed for this subgroup.

Boxplot of the distributions of the planning time of the AP and 3 manual subgroups, where the box represents the interquartile range from the 25th percentile to 75th percentile, and the line inside the box represents the median value.

Discussion

Interpretation of key findings

This study showed that auto-planning (AP) within the preoperative planning software significantly reduced planning time and the number of actions compared to manual planning using the same software. Compared to the Manual group with all levels of software experience combined, AP reduced both median planning time (by 25%) and median number of actions (by 29%). Although stratification by user experience level with the software was applied only to the Manual group, the AP group included a balanced distribution of users across all experience levels with the software. Interestingly, when focusing on the performance of the Manual Expert subgroup, it may appear surprising that AP reduced the number of required actions but did not lead to a corresponding reduction in planning time, particularly for the shortest planning durations, such as those at P10. Since planning time is measured as the total duration, the time required to compute the AP suggestions is included in this measurement. Currently, AP computation time ranges between 15 and 30 s, depending on the case. Based on our findings, this may indicate that, for the quickest cases, the time saved through AP implant positioning suggestions does not fully compensate for the time required to generate these suggestions. Overall, this study showed that increased familiarity with the planning software is associated with greater efficiency, as reflected by the reductions in both the number of user actions and the time required to complete the planning task. The AP group achieved performance levels comparable to those of expert users and exceeded those of intermediate and new users in both planning time and number of actions. These findings support the potential of the AP feature to enhance efficiency and streamline the preoperative workflow for shoulder arthroplasty surgeons, enabling them to allocate more time to patient interaction and other clinical activities.

Comparison with previous literature

The key findings are in line with recent literature that emphasizes the growing role of digital tools and automation in surgical planning.9,14 Previous studies show that computer-assisted planning systems can reduce intraoperative errors and improve surgical precision, 15 particularly in complex procedures such as shoulder arthroplasty. Similarly, Schoch et al. (2020) 16 highlighted that even experienced surgeons deviated from ideal positioning in 38% of cases when not using navigation, reinforcing that manual planning is subject to human variability. Moreover, the literature has addressed the trade-off between planning accuracy and operative time, where 3D planning may slightly increase planning duration at first but enhances implant selection.15,17 This study adds insight by showing that AP improves preoperative planning time and user efficiency from the outset, bypassing the learning curve usually reported. 18

The integration of AP into preoperative software planning processes offers an opportunity to enhance surgical efficiency. By reducing the time needed for preoperative planning, AP-like tools may allow surgeons to dedicate more time to patient-centered tasks, including communication and clinical decision-making. These systems can also support surgical planning by integrating data-driven insights derived from expert practice patterns. Emerging evidence suggests that digital planning and artificial intelligence–assisted tools can achieve planning accuracy comparable to traditional expert-driven methods while significantly shortening processing time. 19 As these technologies continue to evolve, they may contribute to the standardization of workflows and more efficient use of clinical resources. 19 In the longer term, their integration could influence how surgical teams allocate time and responsibilities, with possible benefits for quality of care and operational efficiency, particularly in high-volume centers or in settings with limited specialized expertise. Overall, this publication is a step towards understanding how new planning software features based on artificial intelligence or algorithms, like AP, can help all surgeons improve efficiency, aid less experienced surgeons in the planning phase, and potentially reduce costs.

Methodological considerations and limitations

This study has several limitations, including the size of the comparison groups and the difficulty in distinguishing the surgeon's surgical experience from their software experience. The differences in case volume between groups are partly attributable to the restricted user access during the limited release of the AP features. However, the median number of planned cases is 16 versus 11—a difference of only five cases, which remains relatively small. For the Manual group, experience was defined by the number of cases each surgeon had planned using the software, ensuring an overall distribution in which one-third of the plans fell into each experience category. Surgical experience itself was not taken into account. Nevertheless, the study showed a reduction in the number of actions required for surgeons using the AP software, regardless of their experience level. Surgeons can therefore position themselves within any category to assess whether these features could assist them in their daily practice.

Another limitation of this study is the inability to determine the surgeon's intent during the planning process: whether the focus was solely on completing the plan, or whether additional time and actions were spent exploring the software's features or on anything else unrelated to the planning task. Such exploratory use may have contributed to variability in the recorded planning times and action counts. Also, all users have individual accounts and passwords; therefore, only one person should be responsible for surgical planning. However, as the manufacturer, we cannot guarantee that accounts are not shared among users, and consequently, we cannot ensure that the planning was performed by a single individual.

The software includes pre-use configuration parameters allowing users to individually define parameter ranges according to their preferences and within the system's permitted limits. These surgeon-specified default settings may influence the alignment between the AP-generated plan and the surgeon's plan and could therefore affect the outcomes assessed in this study. The study design, however, did not allow for a dedicated analysis of these potential effects. Finally, the quality of the final plan and its replication in the operating room were not evaluated, mainly because access to the final plan and clinical outcomes requires clinical studies, which are subject to different requirements.

Implications and perspectives for future research

Further research on preoperative shoulder arthroplasty planning is needed to deepen our understanding of how planning software in general, and its AI-driven features like AP, benefit both surgeons and patients (clinical outcomes). There are still several unanswered questions: can AP in arthroplasty truly aid inexperienced surgeons in their surgery, improve surgical plans, or reduce costs? Future studies may be able to answer those questions and further confirm whether this software feature can improve efficiency in surgical planning.

Nonetheless, our findings provide support for the integration of AP in shoulder arthroplasty to improve preoperative planning processes.

Conclusion

The integration of the Blueprint® auto-planning function into preoperative software was associated with a 29% reduction in the median number of actions required during the planning process, compared with manual planning. In addition, the feature reduced the median planning time by 25%, and by about 35% for the most complex cases (P90).

The results suggest that the auto-planning function can achieve performance comparable to, or in some cases exceeding, that of experienced surgeons planning without automation, both in terms of planning time and the number of actions performed.

These findings indicate that incorporating auto-planning tools into preoperative planning software may enhance the efficiency of shoulder arthroplasty planning, support clinical decision-making, and facilitate a more effective use of resources across different levels of surgical experience. Further studies are warranted to confirm these results in larger and more diverse clinical settings and to link them to clinical outcomes and benefits for patients.

Supplemental Material

Supplemental material, sj-docx-1-dhj-10.1177_20552076261418902 for Auto-planning in preoperative shoulder arthroplasty software reduces implant planning time and number of actions by Pierre Mahe, Sarah Shank, Arthur de Gast and Maud Reynier in DIGITAL HEALTH

Footnotes

Ethical consideration

Institutional Review Board Statement: The study did not involve human participants or animal subjects, and therefore ethical approval from an institutional review board (IRB) was not required.

Consent to participate

Not Applicable

Consent for publication

Not Applicable

Author contributions

All authors contributed to the study conception and design. Pierre Mahe designed the research and collected and analyzed the data. Maud Reynier supervised the project and drafted the initial version of the manuscript. Sarah Shank reviewed and edited the manuscript. Arthur De Gast reviewed and edited the manuscript and provided surgical expertise. All authors read and approved the final version of the manuscript.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The conduct of the research project and the article publishing charges were financed by Stryker.


Declaration of conflicting interests

The author(s) declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: All authors are employees of Stryker and a shareholder of Stryker Corporation.

Supplemental material

Supplemental material for this article is available online.

References
