Abstract

Digital therapeutics (DTx) and other forms of therapeutic artificial intelligence (AI) are becoming increasingly embedded in healthcare systems and everyday health practices. Unlike earlier forms of medical AI, which mainly support diagnostic decision-making, therapeutic AI systems interact continuously with patients and may influence health-related behaviour over time. These characteristics raise ethical, legal, and social implications (ELSI) that extend beyond conventional concerns about safety, efficacy, and algorithmic performance. This article examines how such concerns are translated into institutional practice by reconceptualizing ELSI as governance infrastructure. Drawing on a narrative review combined with comparative institutional analysis, it analyses regulatory frameworks, policy documents, and governance arrangements in two advanced digital health jurisdictions: the European Union and Japan. The analysis identifies two distinct governance models. The European Union has developed a layered regulatory framework in which the Artificial Intelligence Act operates alongside the Medical Device Regulation, the General Data Protection Regulation, and emerging health-data governance initiatives. Japan, by contrast, governs therapeutic AI primarily through sectoral legislation centred on the Pharmaceuticals and Medical Devices Act, complemented by administrative guidance and professional mediation. These approaches illustrate different ways of embedding ELSI within digital health governance. The EU model emphasizes codified ex ante obligations and legally binding compliance mechanisms, whereas the Japanese model places greater weight on adaptive oversight and post-market learning. Effective governance of therapeutic AI, the article argues, requires institutional infrastructures capable of addressing behavioural influence, lifecycle change, and accountability across AI-enabled therapeutic systems.

1. Introduction

The central theoretical contribution of this article is to reconceptualize the ethical, legal, and social implications (ELSI) of AI-enabled digital therapeutics (DTx) and related medical AI systems as governance infrastructure rather than as applied ethics alone. Existing scholarship and policy debate on medical artificial intelligence have largely framed ELSI in terms of ethical principles such as transparency, fairness, accountability, and respect for autonomy.1–3 While these principles provide important normative orientation, they are insufficient when considered apart from the institutional and regulatory contexts in which AI-enabled digital health technologies are developed, deployed, and contested.4,5 The rapid spread of AI ethics guidelines across governments, international organizations, professional associations, and technology companies has not been matched by equally robust mechanisms for enforcement, monitoring, or accountability, giving rise to concerns about “ethics washing” in the governance of digital technologies.3,6

From the perspective advanced here, ELSI should be understood as the institutionalized processes, role allocations, and accountability mechanisms through which ethical commitments are translated into durable practice across the AI lifecycle.4,5,7 This shifts attention away from abstract principles alone and towards the organizational, regulatory, and professional arrangements that make those principles effective in practice. The point is especially important for AI-enabled DTx and related behavioral intervention technologies, where ethical challenges arise through sustained interaction between algorithmic systems and patients over time rather than through discrete clinical decisions.8,9

Artificial intelligence has become a major driver of medical digital transformation, extending well beyond its earlier role in clinical decision support towards systems capable of shaping health-related behavior in everyday life.8,9 Advances in machine learning, mobile health platforms, and real-world data analytics have enabled the rapid diffusion of AI-enabled DTx, mental health chatbots, and adaptive coaching systems designed to promote adherence, self-management, and preventive behaviors.9,10 Within the broader hierarchy of digital health technologies, DTx are generally understood as evidence-based digital interventions designed to prevent, manage, or treat medical conditions, typically subject to regulatory oversight comparable to software as a medical device.8,11 In this article, the term “therapeutic AI” is used as an analytical category referring to AI-enabled digital therapeutics (DTx) and closely related digital health interventions that make explicit therapeutic or preventive claims and are therefore likely to fall within medical-device regulatory frameworks. The term is used not as a settled regulatory category, but as a functional concept for analyzing governance problems associated with sustained algorithmic influence on patient behavior.

The scale and scope of these technologies continue to expand. AI-enabled systems for mental health, chronic disease management, and lifestyle modification are increasingly embedded in patients’ everyday environments rather than confined to episodic clinical encounters.8,9 Mental health applications alone have been estimated to number more than 10,000 across major mobile application platforms, although only a small proportion meet the regulatory requirements associated with DTx.9,12 These technologies do not simply provide information or clinical recommendations. Rather, they interact continuously with users through personalized notifications, behavioral nudges, gamification mechanisms, and adaptive feedback loops designed to shape habits and decision-making over extended periods.13,14

The COVID-19 pandemic further accelerated the adoption of digital health technologies and telehealth globally, particularly in mental health services.10,15 Telehealth use rose sharply during the early stages of the pandemic, highlighting both the possibilities and the limits of digitally mediated healthcare systems.15 At the same time, the rapid expansion of digital health exposed gaps in regulatory and governance frameworks originally designed for hardware medical devices or diagnostic software rather than for behavioral intervention systems capable of exerting sustained influence over users’ choices and routines. Existing legal regimes in both the European Union (EU) and Japan, including medical device regulation and data protection law, now face governance challenges related to algorithmic persuasion, cumulative effects on patient autonomy, and the allocation of responsibility for long-term behavioral outcomes.16–19

Against this backdrop, ethical guidelines for “trustworthy” or “responsible” AI have proliferated globally. Reviews of the emerging AI governance landscape have identified more than eighty frameworks proposing convergent ethical principles such as transparency, fairness, human agency, and accountability.1 Yet an expanding body of scholarship argues that ethical principles alone cannot secure responsible AI governance without institutional infrastructures capable of translating those principles into operational practice.3,4,20 Therapeutic AI brings this tension into particularly sharp relief. Because its effects unfold cumulatively across time and context, governance mechanisms based solely on pre-market assessment or static compliance checks may prove inadequate. A mental health chatbot that appears safe at deployment may evolve through continued learning, while behavioral coaching algorithms may produce unintended effects on patient autonomy or decision-making over time.21

The European Union and Japan provide an instructive comparative context for examining how ELSI may be institutionalized as governance infrastructure. Both jurisdictions are advanced ageing societies facing similar pressures from workforce shortages, rising healthcare costs, and growing demand for digital health innovation. Both also possess highly developed regulatory infrastructures governing pharmaceuticals and medical devices. Their institutional architectures, however, differ significantly. The European Union operates as a supranational regulatory system in which instruments such as the Artificial Intelligence Act, the Medical Device Regulation, the General Data Protection Regulation, and the European Health Data Space establish horizontal regulatory frameworks that are implemented through additional layers of Member State regulation and policy.17,18,22,23 Japan, by contrast, governs AI-enabled medical software primarily through a unitary national regulatory system centered on the Pharmaceuticals and Medical Devices Act (PMD Act), the Act on the Protection of Personal Information, and administrative guidance issued by the Ministry of Health, Labour and Welfare and the Pharmaceuticals and Medical Devices Agency (PMDA).24,25 This structural asymmetry—supranational multi-level governance in the EU versus nationally centralized sectoral regulation in Japan—shapes how ethical principles are translated into regulatory practice and how governance capacity is assembled.

Building on these observations, this article pursues two related aims. Empirically, it develops a structured comparative analysis of European and Japanese governance frameworks for therapeutic AI, focusing on regulatory philosophy, the institutional embedding of ELSI, and lifecycle governance mechanisms spanning pre-market assessment, post-market monitoring, and adaptive system revision. Conceptually, it advances the notion of ELSI as governance infrastructure, offering an analytical framework for understanding how ethical commitments can be translated into durable institutional practices for AI technologies that exert sustained behavioral influence in everyday life.

2. Methods

2.1. Study design and analytical approach

This study adopts a narrative review combined with comparative institutional analysis to examine how the ethical, legal, and social implications (ELSI) of therapeutic artificial intelligence are operationalized within digital health governance frameworks.22,23 The analysis is explicitly governance-oriented, prioritizing regulatory design, institutional arrangements, and implementation capacity rather than clinical effectiveness or algorithmic performance.22 This methodological choice reflects the article’s central concern: how ethical principles are translated into practice across different institutional settings.

Therapeutic AI sits at the intersection of technology, regulation, and care. Its governance spans multiple legal domains (including medical device regulation, data protection law, and AI-specific regulation) as well as multiple institutional levels ranging from supranational authorities to professional communities.26 Under such conditions, questions of regulatory coherence, normative trade-offs, and lifecycle oversight are not well suited to quantitative synthesis. Narrative review approaches are therefore especially useful in digital health policy research where interpretive analysis and contextual integration are required.27–29

A narrative review is appropriate here because the field is both heterogeneous and fast moving. Relevant evidence is distributed across empirical studies, legal analyses, policy documents, and institutional reports, and these different source types must be read together to understand how governance works in practice.27,28 In therapeutic AI, this heterogeneity is not incidental but constitutive of the subject itself, since regulatory development is shaped by interactions among law, professional norms, technological change, and institutional capacity.

Comparative institutional analysis complements this approach by enabling systematic comparison between governance systems with distinct regulatory traditions.26 Rather than treating legal frameworks as static texts, it examines how regulatory instruments interact with professional practices, administrative procedures, and institutional capabilities. The combined methodology therefore makes it possible to analyze not only what rules exist, but also how those rules function in practice as governance infrastructure.

Two jurisdictions—the European Union and Japan—were selected for comparative analysis because both face similar demographic pressures and digital health challenges while operating under distinct regulatory architectures. The EU represents a multi-level supranational governance system, whereas Japan represents a nationally centralized regulatory model. This contrast offers a useful basis for examining how ELSI principles become embedded in institutional governance structures.

2.2. Conceptual framework: Therapeutic AI and ELSI as governance infrastructure

The analytical framework is grounded in two core concepts: therapeutic artificial intelligence and ELSI as governance infrastructure.

Therapeutic AI is defined here as AI-enabled digital therapeutics and closely related digital health interventions designed to influence patient behavior directly for therapeutic or preventive purposes.8,30 This category includes evidence-based AI-enabled digital therapeutics (DTx), adherence-support algorithms, AI-enabled mental health interventions, and adaptive coaching systems embedded in mobile or wearable platforms.8 Its defining characteristics are continuous interaction, adaptive learning, and sustained behavioral influence beyond discrete clinical encounters.

Unlike diagnostic AI systems that generate episodic outputs for clinician interpretation, therapeutic AI systems operate directly in patients’ everyday environments and often do so without immediate professional mediation. Three application domains illustrate this pattern. Mental health technologies provide a particularly clear example, with AI-enabled chatbots delivering cognitive behavioral therapy or emotional support without direct human therapist involvement.21,31 Chronic disease management systems employ adaptive algorithms to support medication adherence, lifestyle modification, and self-monitoring. Behavioral coaching platforms use persuasive design techniques and personalized recommendations to encourage preventive health behaviors.

ELSI is conceptualized in this study not simply as a set of ethical principles, but as governance infrastructure—that is, as institutionalized processes, roles, and mechanisms that translate ethical commitments into operational practice across the AI lifecycle. Within this framework, concepts such as explainability, accountability, and participation are understood as organizational and regulatory practices rather than merely technical features or abstract normative ideals.4,7,32

This conceptualization builds on scholarship that highlights the gap between ethical principles and their practical implementation. Mittelstadt argues that principles alone cannot guarantee ethical AI because they must be institutionally embedded to function as effective constraints on behavior.4 Mazzucato and Schaake similarly emphasize that governing AI in the public interest requires institutional capacity rather than ethical aspiration alone.7 Building on these insights, this study treats ELSI as the institutional infrastructure through which ethical commitments acquire practical force in the governance of therapeutic AI.4,7,19,30,32,33

2.3. Literature search strategy

A targeted literature search was conducted to identify scholarship at the intersection of digital health, AI-enabled DTx, governance, regulation, and ELSI. The search strategy was designed to balance breadth with analytical focus.

The primary database search was conducted in PubMed because of its extensive coverage of biomedical and health policy literature. Complementary searches were performed in Google Scholar to identify interdisciplinary research in law, political science, public policy, and science and technology studies, as well as relevant grey literature and policy documents.

Search terms were organized into three conceptual groups. Digital health terms included “digital health,” “digital therapeutics (DTx),” “digital medicine,” “mobile health,” “mHealth,” “eHealth,” “telemedicine,” “wearable technology,” and “software as a medical device.” Artificial intelligence terms included “artificial intelligence,” “AI,” “machine learning,” and “deep learning.” Governance-related terms included “ethics,” “ELSI,” “governance,” “regulation,” “policy,” “oversight,” “accountability,” and “transparency.”

The search string combined these three concept groups using Boolean operators as follows: (“digital health” OR “digital therapeutics” (DTx) OR “digital medicine” OR “mobile health” OR “mHealth” OR “eHealth” OR “telemedicine” OR “wearable technology” OR “software as a medical device”) AND (“artificial intelligence” OR “AI” OR “machine learning” OR “deep learning”) AND (“ethics” OR “ELSI” OR “governance” OR “regulation” OR “policy” OR “oversight” OR “accountability” OR “transparency”). Variations of this core search string were adapted to the syntax requirements of each database.
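The three-group structure of this search string can be sketched programmatically. The following is a minimal illustration only, not the authors' actual tooling: the helper names `or_group` and `build_query` are hypothetical, the "(DTx)" annotation is omitted from the quoted term for simplicity, and the optional field tag is an assumption about how a database-specific variant (such as a PubMed title/abstract restriction) might be generated.

```python
from typing import Optional, Sequence

# Term groups copied from the search strategy described above.
DIGITAL_HEALTH = [
    "digital health", "digital therapeutics", "digital medicine",
    "mobile health", "mHealth", "eHealth", "telemedicine",
    "wearable technology", "software as a medical device",
]
AI_TERMS = ["artificial intelligence", "AI", "machine learning", "deep learning"]
GOVERNANCE = [
    "ethics", "ELSI", "governance", "regulation", "policy",
    "oversight", "accountability", "transparency",
]


def or_group(terms: Sequence[str], field_tag: Optional[str] = None) -> str:
    """Quote each term, optionally append a field tag, and join with OR."""
    suffix = f"[{field_tag}]" if field_tag else ""
    return "(" + " OR ".join(f'"{t}"{suffix}' for t in terms) + ")"


def build_query(groups: Sequence[Sequence[str]],
                field_tag: Optional[str] = None) -> str:
    """Combine the OR-groups with AND, one group per concept."""
    return " AND ".join(or_group(g, field_tag) for g in groups)


if __name__ == "__main__":
    groups = [DIGITAL_HEALTH, AI_TERMS, GOVERNANCE]
    print(build_query(groups))                     # unrestricted variant
    print(build_query(groups, field_tag="tiab"))   # title/abstract variant
```

Keeping the term lists separate from the joining logic makes it straightforward to adapt the same concept groups to the syntax of another database.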

This search strategy was refined iteratively. An initial exploratory search in PubMed without field restrictions yielded 1,246 records, a larger but less focused set. To obtain a more conceptually coherent dataset for governance-oriented analysis, the search was subsequently restricted, using the PubMed [tiab] tag, to records in which the search terms appeared in the title or abstract. This refinement resulted in 420 records, which were then subjected to title–abstract screening. After screening for relevance to governance, regulation, or ethical analysis, 87 publications were retained for full-text assessment. From this set, 34 core publications were selected for in-depth analysis because they directly addressed regulatory frameworks, institutional governance, or ELSI implementation in digital health and AI systems.22,23,27
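The record counts reported above can be tabulated as a simple selection flow. The sketch below is illustrative only: the stage labels are paraphrases, the function name `screening_flow` is hypothetical, and the retention percentages are derived arithmetic rather than figures reported by the study.

```python
# Record counts as reported in the search description above.
STAGES = [
    ("PubMed search, no field restriction", 1246),
    ("Title/abstract field restriction ([tiab])", 420),
    ("Retained for full-text assessment", 87),
    ("Core publications analysed in depth", 34),
]


def screening_flow(stages):
    """For each step, report records removed and the share retained."""
    rows = []
    for (_, prev_n), (label, n) in zip(stages, stages[1:]):
        rows.append({
            "stage": label,
            "n": n,
            "removed": prev_n - n,
            "retained_pct": round(100 * n / prev_n, 1),
        })
    return rows


if __name__ == "__main__":
    for row in screening_flow(STAGES):
        print(f'{row["stage"]}: n={row["n"]}, '
              f'removed={row["removed"]}, retained={row["retained_pct"]}%')
```

Note that the first step reflects a narrower search specification rather than screening decisions, so its "removed" count is not comparable to the exclusions at later stages.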

Inclusion criteria covered publications addressing governance, regulatory, policy, or ethical dimensions of AI in healthcare; comparative analyses of regulatory approaches across jurisdictions; conceptual contributions to ELSI in digital health; policy analyses of regulatory instruments such as the AI Act, MDR, GDPR, or PMD Act; and studies examining institutional implementation of digital health governance.1,3,14–17 Studies were excluded if they focused exclusively on clinical outcomes, algorithmic development, or model performance without relevance to governance. Publications examining purely diagnostic AI were also excluded unless they offered transferable governance insights, such as analyses of liability allocation or lifecycle monitoring of high-risk AI systems.

The search was conducted in November 2024 and iteratively updated between December 2024 and January 2026 to incorporate recent analyses of the EU AI Act, digital therapeutics regulation, and Japanese governance frameworks.

2.4. Policy and legal document analysis

In parallel with the literature review, official legal and policy documents were analyzed as primary sources. Documents were identified through targeted searches of EUR-Lex, European Commission repositories, and official Japanese government and regulatory websites, including the Cabinet Office, the Ministry of Health, Labour and Welfare (MHLW), and the Pharmaceuticals and Medical Devices Agency (PMDA).

For the European Union, the analysis focused on the Artificial Intelligence Act (Regulation (EU) 2024/1689), including annexes defining high-risk AI systems and requirements concerning transparency, risk management, and human oversight.34 The Medical Device Regulation (Regulation (EU) 2017/745) was examined for provisions governing software as a medical device.35 The General Data Protection Regulation (Regulation (EU) 2016/679) was analyzed with particular attention to provisions on automated decision-making and algorithmic accountability.36 The European Health Data Space Regulation (Regulation (EU) 2025/327) was included in order to assess emerging frameworks enabling the secondary use of health data for AI development.37

For Japan, key documents included the Pharmaceuticals and Medical Devices Act (PMD Act), which governs software as a medical device,38 and the Act on the Protection of Personal Information (APPI).39 Administrative guidance and regulatory reports issued by MHLW and PMDA were analyzed to understand implementation practices, including initiatives such as the DASH framework for adaptive post-market modification of AI-enabled medical software.40,41

All legal and policy documents were analyzed using a common template covering objectives, scope, risk categorization, oversight mechanisms, lifecycle governance provisions, and references to ELSI principles. This structured comparison made it possible to identify the regulatory philosophies and institutional mechanisms through which ethical principles are translated into operational governance practice.
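One way to picture such a common template is as a fixed record with one field per analytical dimension. The sketch below is a hypothetical illustration, not the coding instrument used in the study: the class name `DocumentRecord`, the field names, and the helper `uncoded_dimensions` are assumptions, and the example entries for the AI Act simply restate elements mentioned in this section.

```python
from dataclasses import dataclass, field, fields
from typing import List

# Identifying fields that are not analytical dimensions.
_META = ("title", "jurisdiction")


@dataclass
class DocumentRecord:
    """One coded legal or policy document (hypothetical structure)."""
    title: str
    jurisdiction: str
    objectives: List[str] = field(default_factory=list)
    scope: List[str] = field(default_factory=list)
    risk_categorization: List[str] = field(default_factory=list)
    oversight_mechanisms: List[str] = field(default_factory=list)
    lifecycle_governance: List[str] = field(default_factory=list)
    elsi_references: List[str] = field(default_factory=list)

    def uncoded_dimensions(self) -> List[str]:
        """Return template dimensions that have no entries yet."""
        return [f.name for f in fields(self)
                if f.name not in _META and not getattr(self, f.name)]


if __name__ == "__main__":
    # Partial example; entries restate items named in the text above.
    ai_act = DocumentRecord(
        title="Artificial Intelligence Act (Regulation (EU) 2024/1689)",
        jurisdiction="EU",
        risk_categorization=["annexes defining high-risk AI systems"],
        oversight_mechanisms=["human oversight"],
        elsi_references=["transparency"],
    )
    print(ai_act.uncoded_dimensions())
```

Using the same fields for every document is what makes side-by-side comparison possible, and a check for empty dimensions helps flag where a document is silent rather than simply not yet coded.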

Taken together, the documentary analysis was embedded within a comparative institutional strategy that examined regulatory philosophy, the institutional embedding of ELSI, and lifecycle governance capacity, while acknowledging the limitations of a qualitative, document-based study design.

3. Results

3.1. The European Union: Layered governance with ex ante risk management

3.1.1. High-risk classification under the AI Act and MDR

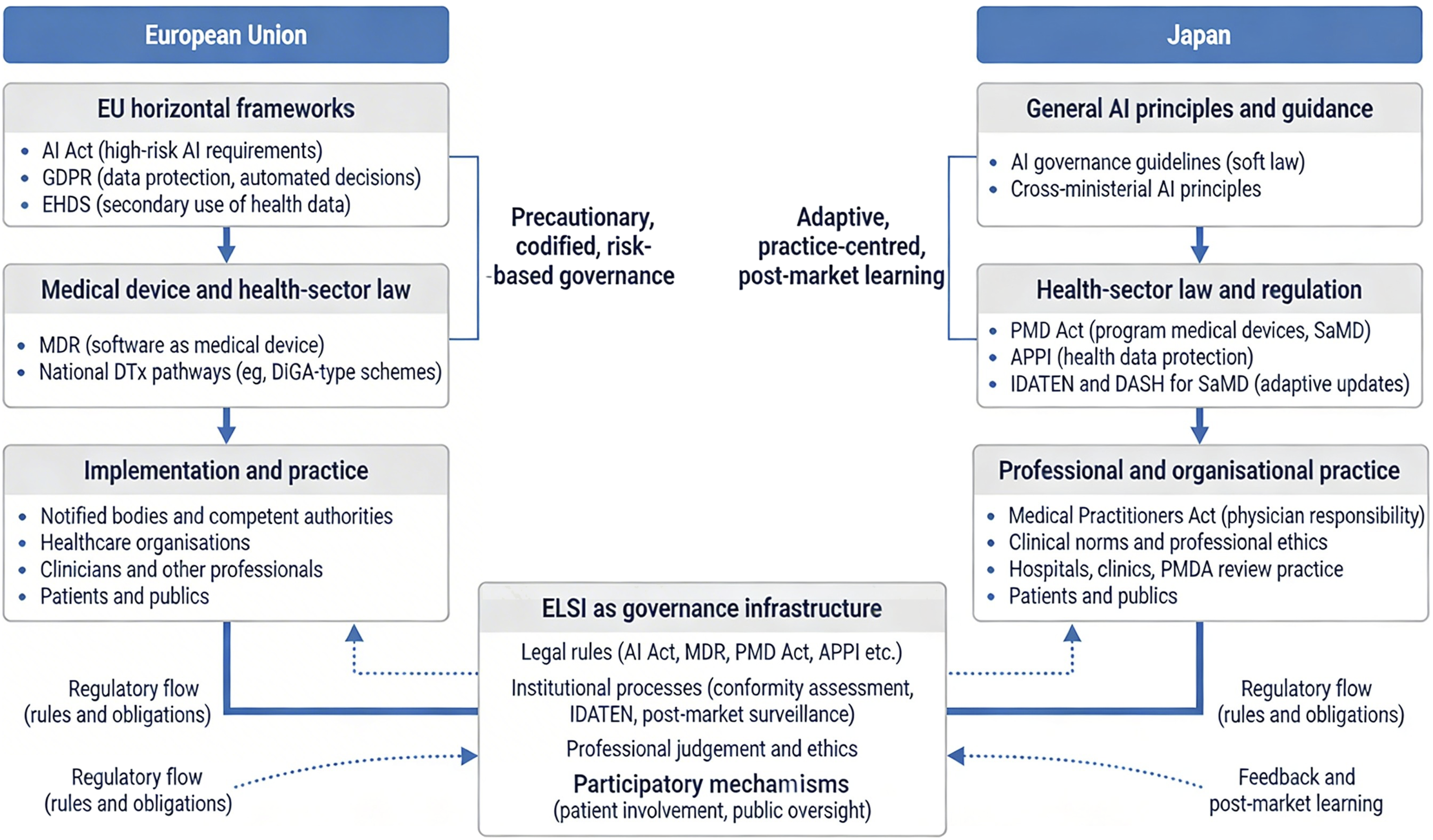

The European Union has established a layered governance architecture for therapeutic artificial intelligence by combining horizontal AI regulation with medical device law and health-data governance. Building on the conceptual governance framework developed in this study, Figure 1 first outlines how legal regulation, professional mediation, lifecycle monitoring, and participatory oversight jointly structure the governance infrastructure for therapeutic AI. Within this framework, Figure 2 illustrates how the European Union’s governance operates through the interaction of the AI Act, the Medical Device Regulation (MDR), the General Data Protection Regulation (GDPR), and the European Health Data Space (EHDS), together with implementation at Member State level.17,18,23,35–37

Figure 1. Conceptual governance framework for therapeutic AI. This figure illustrates the conceptual argument developed in this study. AI-enabled digital therapeutics increasingly shape patient behaviour in everyday life, creating governance challenges that cannot be addressed by ethical AI principles alone. The framework proposes a conceptual shift from principle-based ethics to ELSI as governance infrastructure, encompassing legal regulation, professional mediation, lifecycle monitoring, and participatory oversight. The lower section illustrates how these governance dimensions are institutionalized differently in the European Union and Japan.

Figure 2. This figure illustrates the institutional governance architectures for AI-enabled digital therapeutics in the European Union and Japan. In the European Union, governance is structured through layered horizontal regulatory frameworks, including the Artificial Intelligence Act, the General Data Protection Regulation (GDPR), and the European Health Data Space (EHDS), combined with sector-specific regulation under the Medical Device Regulation (MDR). These frameworks are implemented through national authorities, healthcare organisations, and professional actors within a precautionary, codified, risk-based regulatory model. In Japan, governance is primarily organised through sector-specific legislation and administrative practice centred on the Pharmaceuticals and Medical Devices Act (PMD Act), the Act on the Protection of Personal Information (APPI), and regulatory initiatives such as IDATEN and DASH for software as a medical device (SaMD). This system emphasises adaptive oversight, professional mediation, and post-market learning. The figure highlights how ethical, legal, and social implications (ELSI) operate as governance infrastructure through legal rules, institutional processes, professional judgement, and participatory mechanisms across both regulatory systems.

Within this framework, most therapeutic AI systems are likely to fall within the category of high-risk AI systems where they function as safety components of medical devices or are themselves regulated medical devices subject to conformity assessment.17,18,35,36 In practice, this means that AI-enabled DTx and related software intended for therapeutic intervention, patient monitoring, or clinically relevant behavioural support are typically captured by a regulatory pathway that combines medical-device classification with AI-specific obligations.17,18

Under the MDR, software intended to provide information used for diagnostic or therapeutic purposes is commonly classified as Class IIa or higher, depending on intended purpose and the severity of possible harm in the event of malfunction.36 Where such software also qualifies as an AI system under the AI Act, high-risk status triggers a set of legally binding obligations extending beyond conventional device safety requirements.34 These include lifecycle risk management, data governance, technical documentation, logging, human oversight, transparency for deployers, and requirements relating to accuracy, robustness, and cybersecurity.34,36

This layered structure is especially important for therapeutic AI because these systems do not merely support isolated decisions; they may shape patient behaviour continuously over time. For this reason, AI-enabled mental health applications, DTx and adaptive behaviour-support tools are more likely to be treated as governance-sensitive technologies when their outputs materially affect treatment, patient risk profiles, or clinically significant behavioural outcomes.8,9,17,18

3.1.2. Scope and limits of EU regulation

At the same time, the EU framework does not encompass all digital health applications equally. A regulatory distinction remains between therapeutic or clinically consequential systems and lower-risk wellness or self-care applications that do not make therapeutic claims or materially influence diagnosis or treatment.8,17–19 This distinction is especially important in the field of digital mental health, where large numbers of consumer-facing tools exist outside the formal DTx category.9,19

Overall, the EU model is characterized by a precautionary and codified form of ex ante governance in which legal obligations are specified before market entry and linked to risk classification. Figure 2 captures this pattern by showing how horizontal legal frameworks are layered over health-sector law and implementation practices to form a formalized governance infrastructure.17,18,23,34–37 This layered and risk-based approach builds on earlier EU policy commitments to trustworthy and risk-based AI governance set out in the 2018 Communication “Artificial intelligence for Europe” and the 2020 White Paper on AI.17,18,23,34–37,42,43

3.2. Japan: Adaptive governance through sectoral regulation and professional mediation

3.2.1. PMD Act-based regulation of AI-enabled medical software

In contrast to the EU’s horizontal AI-specific framework, Japan governs therapeutic AI primarily through existing sectoral legislation, especially the Pharmaceuticals and Medical Devices Act (PMD Act) and related administrative practice. As shown in Figure 2, Japan’s governance architecture is centred on health-sector regulation, professional responsibility, and post-market learning rather than on a single cross-sector AI statute.24,25,39,41,44

Under the PMD Act, software used for medical purposes is regulated as program medical devices or software as a medical device (SaMD).24,39 AI-enabled medical software is integrated into the existing device-risk classification framework rather than subjected to a separate horizontal AI law. As a result, the governance of therapeutic AI in Japan is institutionally embedded in medical-device review, clinical oversight, and administrative guidance.24,25,33,39,40

AI-based SaMD is generally classified according to intended use and potential risk to patients, with higher-risk products subject to review and oversight by the Pharmaceuticals and Medical Devices Agency (PMDA).24,39,44 Manufacturers are expected to maintain quality management systems, generate evidence appropriate to intended use, and comply with post-market safety and vigilance requirements. Although governance concerns similar to those seen in the EU—such as safety, transparency, and update control—also arise in Japan, the Japanese approach does not rely on a general AI Act to formalize these obligations.24,25

3.2.2. DASH/IDATEN and post-market learning

A distinctive feature of Japan’s governance model is its emphasis on adaptive post-market modification. PMDA and MHLW guidance, including DASH for SaMD and related frameworks, has sought to accommodate learning systems and iterative software updates through structured regulatory pathways.33,40,44 These initiatives reflect a governance philosophy in which post-market experience, expert review, and incremental adjustment play a central role.

This orientation is particularly relevant for therapeutic AI because such systems may evolve after deployment, whether through software updates, learning processes, or changing real-world use conditions. Rather than relying primarily on ex ante codification, Japan places greater weight on administrative flexibility, professional mediation, and iterative oversight.24,25,33,40 Figure 2 captures this practice-centred logic by showing the centrality of health-sector law, PMDA review practice, and professional and organizational implementation.

3.2.3. Professional mediation and clinical responsibility

Another defining feature of the Japanese model is the continuing centrality of physician and institutional responsibility in deployment. Therapeutic AI systems are not governed only as technical artefacts but as components of broader clinical practice, mediated through hospitals, clinics, and professional judgement.24,25,44 This contributes to a governance pattern in which ethical concerns are often operationalized through administrative guidance, sectoral review, and professional norms rather than through a single legally codified set of AI-specific obligations.

3.3. Comparative findings: Divergent governance philosophies

The comparison between the European Union and Japan reveals two distinct modes of embedding ELSI into governance infrastructure for therapeutic AI. Figure 1 summarises the conceptual governance framework developed in this study, highlighting how legal regulation, professional mediation, lifecycle monitoring, and participatory oversight jointly constitute governance infrastructure for therapeutic AI. Therapeutic AI creates forms of sustained behavioural influence in everyday life that cannot be governed adequately through abstract ethical principles alone, so effective governance requires institutional arrangements capable of addressing behavioural influence, lifecycle change, and accountability across AI-enabled therapeutic systems. Figure 2 then maps how this governance infrastructure is instantiated in the European Union and Japan, showing the layered supranational framework in the EU and the sectoral, practice-centred model in Japan. Together, these figures clarify both the conceptual argument and the institutional pathways through which ELSI is operationalised in the two jurisdictions.

Figure 1 thus captures the central analytical move of the article: governing therapeutic AI requires institutional infrastructure comprising legal regulation, professional mediation, lifecycle monitoring, and participatory oversight, rather than abstract ethical principles alone. The comparative implication is that effective governance of therapeutic AI requires a hybrid model combining legal safeguards with adaptive lifecycle oversight.

Figure 2 then shows how this general conceptual framework is instantiated differently across jurisdictions. In the EU, ELSI is embedded through a precautionary, codified, and risk-based model combining the AI Act, MDR, GDPR, and EHDS with implementation through Member State institutions and healthcare organizations.17,18,23,35–37 In Japan, ELSI is embedded through a more adaptive, sectoral, and practice-centered model grounded in the PMD Act, APPI, PMDA review practice, and professional mediation.24,25,33,38–41

High-risk AI regime for therapeutic AI systems under the EU AI Act and MDR.

Governance architectures for therapeutic AI in the European Union and Japan.

3.3.1. Main comparative result

Taken together, the findings indicate that the EU and Japan do not differ simply in regulatory strictness. Rather, they differ in how governance capacity is assembled (Table 2). In the EU, governance capacity is built primarily through ex ante legal codification, multilayered regulatory obligations, and harmonized institutional requirements. In Japan, governance capacity is built more through sectoral regulation, administrative adaptation, and professional mediation. These are not merely alternative legal techniques but distinct governance philosophies for managing the ethical, legal, and social implications of therapeutic AI.

4. Discussion

4.1. Beyond principle-based ethics: Therapeutic AI as a governance problem

The analysis suggests that the governance challenges posed by therapeutic artificial intelligence cannot be addressed adequately through principle-based ethics alone. Although ethical principles such as transparency, fairness, accountability, and human oversight have become central reference points in global AI governance discourse, these principles by themselves do not ensure responsible implementation in practice.1–7,16,20 The key issue is not simply whether such principles are normatively desirable, but how they are translated into institutionalized processes operating across the lifecycle of AI-enabled interventions.

This matters because therapeutic AI differs in important respects from earlier forms of medical AI. Rather than generating discrete outputs for clinician interpretation, therapeutic AI systems may operate continuously in patients’ everyday environments, shaping behavior through repeated interaction, nudging, adaptation, and longitudinal engagement.8,9,13,14,21 Governance problems therefore emerge not only at the moment of system approval or deployment, but throughout the lifecycle of the system. Concerns related to autonomy, persuasion, opacity, and accountability may develop gradually through cumulative behavioral influence rather than through a single identifiable decision point.16,19–21

The comparative results reinforce the central conceptual claim of this article: ethical, legal, and social implications (ELSI) should be understood not merely as ethical concerns, but as governance infrastructure. Ethical commitments become meaningful only when embedded in legal rules, institutional procedures, professional responsibilities, and mechanisms for monitoring and accountability.4,7,20,32,45–47

4.2. The European Union: Codified and precautionary governance

The European Union’s governance architecture illustrates the strengths of a codified and precautionary model of AI regulation. By combining the Artificial Intelligence Act with the Medical Device Regulation (MDR), the General Data Protection Regulation (GDPR), and the emerging European Health Data Space (EHDS), the EU has constructed a layered regulatory framework through which therapeutic AI systems can be governed across multiple legal domains.17,18,23,35–37

Within this framework, many therapeutic AI systems fall into the category of high-risk AI systems where they function as safety components of medical devices or are themselves regulated medical devices requiring third-party conformity assessment.17,18,34,35 In practice, this means that AI-enabled DTx and related systems are subject to a range of legally binding obligations, including lifecycle risk management, data governance requirements, documentation obligations, human oversight mechanisms, transparency and the provision of information to deployers, and standards concerning robustness and cybersecurity.34,35

One advantage of this approach is the creation of clear ex ante obligations. Developers and deployers can identify regulatory requirements before placing systems on the market, improving legal certainty and regulatory transparency.17,18,34 In addition, by embedding ethical concerns into legally enforceable duties, the EU framework translates abstract ethical principles into operational governance mechanisms.4,16–18

The EU model also has limits. The interaction of multiple legal instruments may create regulatory complexity, particularly for smaller innovators and for AI systems that evolve after deployment. Therapeutic AI systems may change through retraining, updates, or shifting patterns of real-world use, raising questions about how conformity assessment and lifecycle oversight should adapt to dynamic systems.17,18,34 The EU approach therefore provides strong formal safeguards, but may encounter difficulties when regulating continuously learning systems.

4.3. Japan: Adaptive governance through sectoral regulation and professional mediation

Japan represents a contrasting governance model centered on sectoral regulation and administrative adaptation. Rather than creating a horizontal AI-specific legal framework, Japan regulates therapeutic AI mainly through the Pharmaceuticals and Medical Devices Act (PMD Act), data protection legislation such as the Act on the Protection of Personal Information (APPI), and regulatory practice developed by the Pharmaceuticals and Medical Devices Agency (PMDA).24,25,33,38–41

Under this system, AI-enabled medical software is regulated as software as a medical device within the established medical-device classification framework. Risk classification and approval pathways are determined primarily by intended use and potential patient harm, rather than by the existence of a separate AI regulatory regime.24,39 This approach embeds therapeutic AI governance within existing medical-device oversight structures and clinical regulatory processes.

A notable feature of the Japanese approach is its emphasis on adaptive post-market governance. Regulatory initiatives such as DASH-related frameworks enable structured management of updates and modifications for AI-based medical software, reflecting a regulatory philosophy that prioritizes real-world evidence and iterative improvement.33,40,44 This allows oversight to evolve alongside technological development.

Professional mediation also plays a central role. Physicians, hospitals, and expert committees contribute to interpreting and applying regulatory frameworks in clinical contexts. Governance is therefore implemented not only through statutory obligations, but also through institutional practice and professional responsibility.24,25,44

This approach has its own limitations. Because ethical safeguards are less explicitly codified in law, governance outcomes may depend more heavily on administrative interpretation and institutional practice. This may lead to greater variability in how ELSI considerations are operationalized across different contexts.24,25

4.4. Divergent governance philosophies

Comparison of the European Union and Japan highlights two different ways of embedding ELSI within governance systems for therapeutic AI.

The EU model is characterized by precautionary, codified, and risk-based governance, in which ethical principles are translated into legally binding requirements associated with high-risk classification and lifecycle compliance obligations.16–18,35–37 Japan’s approach, by contrast, is more adaptive, sectoral, and practice-centered, relying more heavily on administrative guidance, institutional oversight, and professional mediation.24,25,33,38–41

These differences should not be reduced to simple variations in regulatory strictness. They instead reflect distinct strategies for assembling governance capacity. The EU emphasizes formal legal structures and harmonized compliance obligations, whereas Japan emphasizes flexibility, incremental learning, and sector-specific expertise.

The comparison suggests that neither model alone fully resolves the governance challenges posed by therapeutic AI. Highly codified frameworks may struggle to accommodate rapidly evolving technologies, while purely adaptive approaches may lack transparency and consistency. Effective governance may therefore require a hybrid model combining legal safeguards with adaptive lifecycle oversight mechanisms.

4.5. Implications for digital health governance

Several implications follow from these findings.

First, therapeutic AI should be governed as a category of technology that raises not only safety and performance questions, but also questions of behavioral influence and relational autonomy.8,9,19,21,42 Governance frameworks designed for static medical devices may not be sufficient where digital systems shape patient behavior continuously in everyday settings.

Second, lifecycle governance should be treated as a central component of AI regulation. Therapeutic AI systems may evolve after deployment through software updates, learning processes, or changing patterns of use. This makes post-market monitoring, revision pathways, and feedback loops essential components of governance infrastructure.17,18,33–35,40,41,44,45

Third, professional mediation remains important, but is not sufficient on its own. Clinicians and healthcare organizations play a critical role in supervising the use of therapeutic AI, but governance frameworks must also address situations in which AI systems operate with limited professional oversight, particularly in consumer-facing digital health applications.19,21,24,25

Fourth, participatory oversight mechanisms deserve greater attention. If therapeutic AI systems influence behavior in everyday life, governance frameworks should incorporate mechanisms that allow patients and publics to contribute to oversight, evaluation, and accountability processes.16,32,44,45,47

For developers, these findings imply that governance considerations need to be integrated into system design from the outset, including documentation, transparency mechanisms, update management, and monitoring capabilities. For healthcare organizations, they highlight the need for institutional governance capacity capable of evaluating, deploying, and supervising AI-enabled therapeutic systems.

4.6. Limitations and future research

This study has several limitations. First, the analysis is based on a narrative review and comparative institutional analysis rather than on a systematic review or empirical case studies. Its aim is therefore interpretive and conceptual rather than exhaustive or statistically generalizable.27–29

Second, the analysis focuses primarily on formal governance frameworks and institutional design rather than on implementation outcomes in clinical practice. Empirical research examining how therapeutic AI governance operates within healthcare organizations and real-world deployment settings remains necessary.

Third, the comparison is limited to two jurisdictions. Future research should extend comparative inquiry to additional regulatory environments, including the United States and emerging Asian digital health governance systems.

Further empirical work is also needed to examine how patients, clinicians, regulators, and developers experience governance mechanisms in practice, particularly where AI-enabled therapeutic systems operate across hybrid clinical and consumer environments.

5. Conclusion

This study examined how ethical, legal, and social implications (ELSI) surrounding therapeutic artificial intelligence are institutionalized within digital health governance frameworks. By combining narrative review with comparative institutional analysis, the study analyzed regulatory approaches to AI-enabled DTx in two advanced digital health jurisdictions: the European Union and Japan (Tables 1 and 2).

The analysis demonstrates that ELSI should not be understood solely as normative principles or ethical guidelines, but as governance infrastructure—that is, institutionalized arrangements through which ethical commitments are translated into operational practices across the lifecycle of AI systems. Therapeutic AI, particularly in the form of DTx and behavior-support technologies, introduces governance challenges that differ from those associated with traditional medical devices or diagnostic AI systems. Because these technologies interact continuously with patients and may influence health-related behavior over extended periods, governance must address not only safety and technical performance but also long-term behavioral effects, autonomy, and accountability.

The comparative findings reveal two distinct governance models. The European Union has developed a codified and precautionary governance architecture, combining the Artificial Intelligence Act, the Medical Device Regulation, the General Data Protection Regulation, and the European Health Data Space into a layered regulatory framework that embeds ethical principles into legally binding compliance obligations. Japan, in contrast, has adopted a more adaptive and sectoral governance approach, in which therapeutic AI is regulated primarily through the Pharmaceuticals and Medical Devices Act, complemented by administrative guidance, professional mediation, and post-market regulatory learning.

These contrasting approaches illustrate different strategies for operationalizing ELSI within digital health governance systems. The EU model prioritizes legal certainty and harmonized ex ante obligations, while the Japanese model emphasizes regulatory flexibility, institutional adaptation, and iterative post-market oversight. Neither model alone fully resolves the governance challenges posed by therapeutic AI. Instead, effective governance is likely to require a hybrid framework that combines legally codified safeguards with adaptive lifecycle monitoring and institutional learning.

More broadly, the study suggests that governing therapeutic AI requires a shift in analytical perspective—from viewing ethics as external guidance to understanding ELSI as an integral component of governance capacity in digitally mediated healthcare systems. As AI-enabled therapeutic technologies continue to expand in ageing societies, developing such governance infrastructures will be essential for maintaining public trust, ensuring accountability, and aligning technological innovation with social values.

Footnotes

Ethical considerations

Not applicable. This study did not involve human subjects or primary data collection.

Author contributions

Yasue Fukuda and Koji Fukuda jointly conceptualized the study. Yasue Fukuda led the manuscript drafting and coordination. Koji Fukuda contributed to theoretical framing, comparative analysis, and critical revision. Both authors approved the final version of the manuscript.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

No datasets were generated or analyzed during this study. All materials analyzed are publicly available legal and policy documents cited in the references.