Abstract

Objective:

In-person counseling is limited by timing and geographical accessibility, often causing adolescents and young adults (AYAs) to miss timely psychological support. With the advent of large language models (LLMs), the mental health care industry has increasingly focused on developing chat counseling services as supplementary tools to reduce these barriers. However, existing services have two primary limitations: a tendency toward generic advice and the absence of human-like dialogue. To overcome these limitations, this article proposes BetterMood, a human-like AI counseling service designed specifically for Korean-speaking AYAs.

Methods:

Our design for BetterMood addressed the content and the delivery of counseling dialogue separately. For content, we developed a concern-aware counseling LLM, refined through prompt-engineering with a novel prompt derived from collected counseling data. For delivery, we created a human-like AI counselor that uses a chunk-based streaming methodology to enable human-like dialogue. We then conducted a user study with 10 adolescents, 110 young adults, and 8 professional clinicians to assess feasibility and user experience across four domains: (i) interaction capability, (ii) perceived support, (iii) usability, and (iv) ethical safety.

Results:

Our user study indicates that BetterMood’s interaction capability, particularly its ability to provide contextually appropriate responses, received positive feedback from 90.0% of adolescents, 90.9% of young adults, and 75.0% of professional clinicians. Stratified analysis revealed that perceived support and usability outcomes differed across cohorts and by initial screening status. Furthermore, independent evaluations by eight professional clinicians showed moderate agreement for individual ratings but excellent reliability for the aggregated assessment.

Conclusion:

Positive user experience and high inter-rater reliability among clinicians support BetterMood’s potential as an accessible supplementary tool for initial psychological support.

Keywords

Introduction

Timely access to effective mental health care remains limited for many adolescents and young adults (AYAs).1,2 Multiple barriers, including clinician shortages, geographic inequalities, and social stigma, contribute to this problem.3–5 Meta-analyses indicate that fewer than 50% of AYAs who need psychological support use in-person mental health services, highlighting substantial gaps in access.6,7

To address these challenges, digital mental health interventions have emerged as scalable alternatives to improve accessibility.8,9 Recent studies further highlight AYAs’ distinctive digital engagement needs, with confidentiality, interactivity, and personalized support emerging as primary considerations.10–12 When interventions are tailored to these needs, AYAs’ familiarity with digital platforms can facilitate uptake.13,14 Systematic reviews report that adolescent-focused digital tools, particularly those using conversational AI, demonstrate clinical benefits and higher retention.15–17

Building on this evidence base, researchers and industry have expanded AI-enabled mental health solutions.18

Among these, large language model (LLM)-powered conversational agents (e.g. Woebot, Wysa) have gained traction for offering on-demand, stigma-reducing support.19,20 Scoping reviews identify more than 50 mental health chatbots, over 20 of which explicitly use LLMs, across academic pilots and commercial products.21

Randomized and quasi-experimental trials in AYAs demonstrate that LLM-powered conversational agents produce small-to-moderate reductions in internalizing symptoms (Hedges’

Despite these advances, leading reviews and empirical studies highlight two limitations: (a) tendency toward generic advice, and (b) absence of human-like dialogue. These limitations particularly weaken support for AYAs, who prioritize context-sensitive, emotionally attuned, interactive care. They are not minor stylistic issues; rather, theory and evidence indicate that they can diminish therapeutic impact.22–25

(a) Tendency toward generic advice. This refers to interactions that ignore users’ specific contexts and emotions22,23 and instead deliver broad, formulaic recommendations (e.g. “talk to someone you trust”). Theoretically, such genericity clashes with core counseling frameworks. Person-centered therapy,26 for example, emphasizes empathic understanding, unconditional positive regard, and genuineness.27,28 Generic advice lacks the individualized attunement that these mechanisms require. Empirically, qualitative studies with AYAs show that a lack of contextualization lowers perceived support and accelerates disengagement.23,29 Consistent with these findings, as Figure 1 illustrates, the open-source LLM-based chatbot ChatCounselor30 acknowledges the user’s frustration yet still defaults to sweeping suggestions (e.g. “seek help from teachers or classmates”).

Sample dialogue from ChatCounselor.

(b) Absence of human-like dialogue.24,25 We define human-like dialogue as (i) multimodal social cues that convey empathy and social presence, and (ii) low-latency turn-taking that sustains conversational flow and rapport.31,32 Theoretically, Social Presence Theory33 and Media Richness Theory34 posit that richer, more immediate communication channels enhance interpersonal understanding. In digital mental health, the construct of a digital therapeutic alliance extends this logic by linking perceived alliance to adherence and outcomes.35,36 Empirically, interventions that integrate multimodal, human-like feedback yield higher alliance scores and retention than text-only agents.37–39 Yet most LLM chatbots remain text-based and asynchronous, limiting nonverbal expression and real-time responsiveness. Reflecting this limitation, empirical studies show that only a minority of AYAs perceive these chatbots as emotionally attuned or supportive compared with human counselors.40–42 Likewise, prior work shows that even brief (e.g. several-second) pauses in voice-based automated agents can disrupt rapport and dampen engagement.43–45

To overcome these two limitations, we propose BetterMood, a human-like AI counseling service designed specifically for Korean-speaking AYAs, as illustrated in Figure 2. Our main contributions are twofold: (a) a concern-aware counseling LLM and (b) a human-like AI counselor. Hereafter, we refer to these simply as the counseling LLM and the AI counselor whenever there is no ambiguity. For the first contribution, the main challenge is integrating effective psychotherapeutic counseling knowledge into a general-purpose LLM so that it can move beyond generic advice. To achieve this, we collect counseling data from both real and synthetic counseling scripts. Using these data, we devise a novel prompt based on psychotherapy and counseling techniques and then perform prompt-engineering. Additionally, we implement per-client session-level management that stores contextual information and each client’s concerns, ensuring continuity across visits. For the second contribution, the main challenge is creating a seamless, human-like counseling experience. To this end, we develop an AI counselor that delivers the text responses generated by our counseling LLM through a visual feature (its on-screen appearance), voice, and gestures. To further support human-like dialogue, we introduce a chunk-based streaming methodology that minimizes latency. This approach processes the counseling LLM’s text responses sentence by sentence: once a sentence is finalized, the text-to-speech (TTS) module converts it into audio, which is then lip-synced with the AI counselor so that the response can be streamed.
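The sentence-by-sentence hand-off can be illustrated with a generator that buffers streamed LLM tokens and releases each sentence as soon as it is terminated. This is a minimal sketch, not BetterMood’s code: the function name is hypothetical, and the boundary rule (".", "!" or "?" followed by whitespace) is an assumption, since the text does not say how sentence ends are detected.

```python
import re
from typing import Iterable, Iterator

# Assumed boundary rule: ".", "!" or "?" followed by whitespace ends a sentence.
# A terminator with no whitespace after it yet (e.g. the "." in "3.5", or a
# sentence whose next token has not arrived) stays in the buffer for now.
_BOUNDARY = re.compile(r"(?<=[.!?])\s+")


def stream_sentences(tokens: Iterable[str]) -> Iterator[str]:
    """Yield each complete sentence as soon as it appears in a token stream."""
    buffer = ""
    for token in tokens:
        buffer += token
        # Everything before the last boundary is complete; the tail keeps buffering.
        *complete, buffer = _BOUNDARY.split(buffer)
        for sentence in complete:
            if sentence.strip():
                yield sentence.strip()  # would be handed to TTS immediately
    if buffer.strip():
        yield buffer.strip()  # flush the final sentence when the LLM stops


if __name__ == "__main__":
    for s in stream_sentences(["오늘 ", "힘들었", "구나. ", "괜찮아"]):
        print(s)
```

Requiring whitespace after the terminator trades a tiny delay (waiting for the next token) for not splitting decimals such as "3.5"; Korean sentences ending in "다." or "요." are handled by the same rule.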

BetterMood counseling flow.

Through this design, BetterMood integrates a human-like AI counselor with a concern-aware LLM, providing counseling services to Korean-speaking AYAs without constraints of time or location. It is expected to serve as a supplementary tool that helps users overcome psychological barriers.

Methods

As illustrated in Figure 2, each conversation cycle between the client and the AI counselor in BetterMood proceeds as follows. The session begins by capturing the client’s audio and transmitting it to the BetterMood server, where it is immediately forwarded to the speech-to-text (STT) module, which uses OpenAI’s Whisper API.46 The resulting transcript is routed to the counseling LLM, which generates a sequence of response sentences. As soon as each sentence is generated, it is passed to the TTS module, which uses the ElevenLabs API47 for audio synthesis. The synthesized audio is then fed into the visual response generation module, which renders the AI counselor’s response. Finally, this response is played back to the client as a set of short video segments, completing a single conversation cycle. Through this process, BetterMood aims to provide a counseling experience that closely simulates real human interaction.

To evaluate the practicality of BetterMood and users’ immediate perceptions of it, we conduct a user study with three cohorts: 10 adolescents, 110 young adults, and 8 professional clinicians. Based on their screening results, the adolescent and young adult participants are divided into screen positive and screen negative groups. After each counseling session, participants complete a satisfaction survey assessing various aspects of the service. The professional clinicians also take part in counseling sessions using BetterMood and provide evaluations from an expert perspective. Written informed consent is obtained from all participants before the study: participants aged 18 or older consent for themselves, whereas for participants under 18, consent is obtained from their legally authorized representatives (parents or legal guardians), together with assent from the minors.

The technical details of the concern-aware counseling LLM and the human-like AI counselor are discussed in the following sections.

Concern-aware counseling LLM

Current chat counseling services often provide general solutions immediately without properly exploring and discussing clients’ concerns. To address this problem, we collect counseling data and analyze how counseling sessions are conducted. Based on insights into counseling practice, we devise a novel prompt grounded in psychotherapy and counseling techniques. We then conduct prompt-engineering to ensure that our counseling LLM provides responses closely tailored to clients’ concerns.

Counseling data collection. Our counseling data consist of two types of scripts: (a) real counseling scripts and (b) synthetic counseling scripts. The real counseling scripts are collected from actual counseling sessions between a counselor and students. We recruit a highly credentialed Level 1 Licensed Professional Counselor employed at the Student Counseling Center of Seoul National University, as well as students who score at or above the cut-off on at least two of four mental health self-report questionnaires: STAI-X-1 (anxiety; ≥52),48–50 PHQ-9 (depression; ≥10),51,52 BIS-15 (impulsivity; ≥39),53–56 and ASRS-V1.1 Part A (ADHD; endorsement of 4 or more items).57–59 These questionnaires are selected for their relevance to prevalent mental health concerns among Korean AYAs.60,61 Prior to the study, we confirmed the usage rights for all questionnaires: the PHQ-9, BIS-15, and ASRS-V1.1 Part A are publicly available for research purposes, and for the STAI-X-1 (Korean standardized version), we obtained written permission via email from the copyright holder before data collection. Across the sessions, discussions typically focus on academic stress, career choices, familial conflicts, and interpersonal relationship difficulties.
Counseling sessions are conducted remotely via Zoom, last approximately 30 minutes, and are recorded only after participants provide written informed consent, which includes clear information on potential risks, confidentiality boundaries, and participants’ right to withdraw. Recordings are transcribed verbatim using Naver ClovaNote,62 which demonstrates high transcription accuracy for Korean speech.63 Transcripts then undergo quality control, in which an independent reviewer compares each transcript against the original audio recording and corrects any discrepancies. This procedure yields 5 high-quality real counseling scripts.

Before participation, all individuals receive a detailed explanation of the study’s goals, data handling procedures, potential risks, privacy measures, and their rights, both through written materials and verbal briefing. Recording of counseling sessions occurs only after explicit consent, with all participants reminded that they can withdraw at any time without consequence. To ensure confidentiality and ethical standards, all transcripts undergo an anonymization process: personal identifiers, sensitive references, and any information that might reveal participant identity are systematically removed. Fully de-identified transcripts are stored on encrypted institutional servers with access restricted to authorized research personnel only. No identifiable or sensitive participant information is shared outside the research team or used in any publication or tool development.

For synthetic counseling scripts, we collaborate with experts from the Department of Psychology at Korea University. Graduate psychology researchers author these scripts, explicitly basing their dialogues on established psychotherapeutic approaches such as cognitive behavioral therapy (CBT). Scripts address common psychological concerns among AYAs, including academic stress, career choices, familial conflicts, and interpersonal relationship difficulties. Each synthetic script represents a counseling dialogue of approximately 20 to 25 minutes and is systematically structured to reflect realistic therapeutic interactions. To maintain high data quality and clinical realism, we apply a two-step validation procedure. Initially, each script undergoes peer review, allowing authors to receive mutual feedback and enhance script fidelity; subsequently, a psychology professor comprehensively reviews each script, conducting practical role-play scenarios to test therapeutic appropriateness, psychological plausibility, and ethical sensitivity. Throughout script creation, explicit measures are taken to minimize biases, avoid reinforcement of stereotypes, and sensitively handle potentially distressing topics. This multi-layered approach yields 100 robust, clinically realistic synthetic counseling scripts.

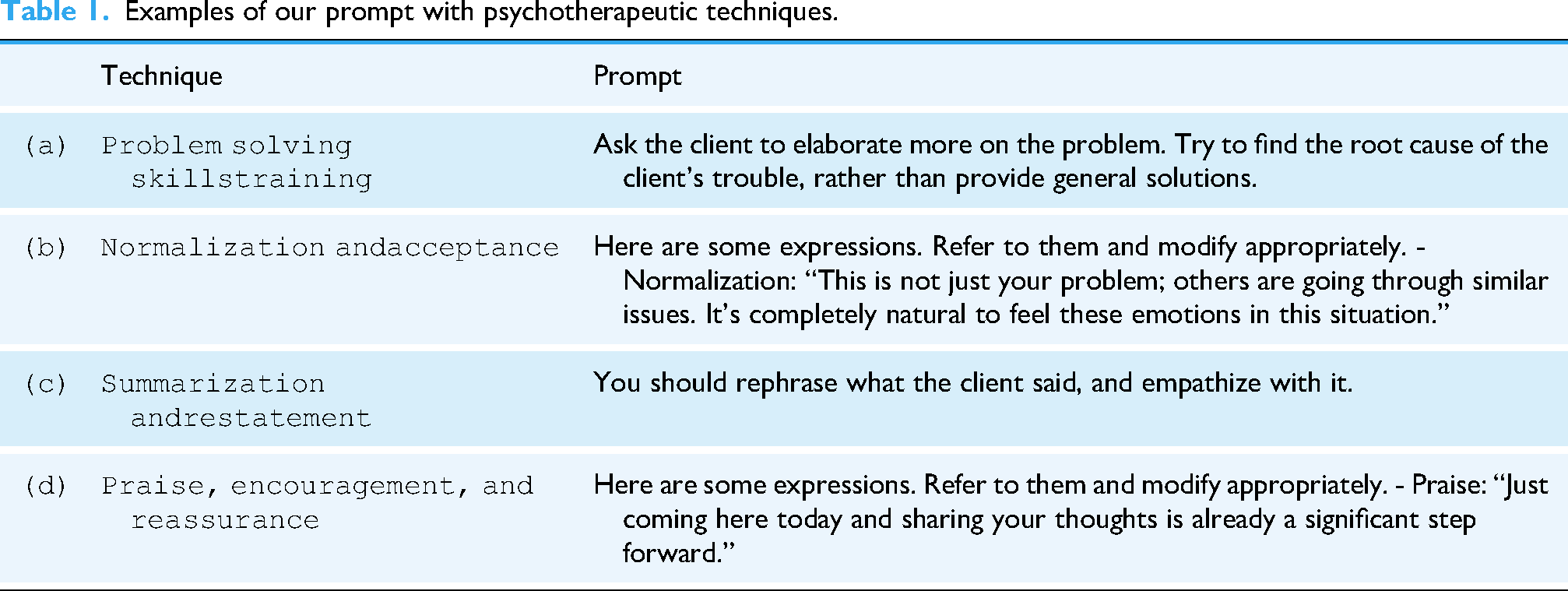

Prompt-engineering. To ensure that our counseling LLM delivers responses closely tailored to clients’ concerns, we developed a novel prompt comprising two core components: (a) psychotherapeutic techniques derived from CBT and SP, and (b) structured counseling techniques for effective interaction. For the first component, we analyze our counseling dataset and identify the four most frequently used psychotherapeutic techniques derived from CBT and SP. These are well-established counseling approaches known for their efficacy in addressing various mental health conditions.64–66 Figure 3 illustrates samples of our counseling data, highlighting these four techniques. Table 1 presents a segment of our prompt that incorporates these techniques, and the following paragraphs provide explanations of each technique and its application.

In order to provide meaningful and personalized support, it is essential to engage in a deeper conversation about the issue rather than immediately listing solutions to clients’ problems. To achieve this, our prompt is designed to ask clients for more details about their situations and difficulties while helping them identify the underlying causes of their concerns (Table 1(a)). Through collaborative discussion, clients gain clarity and explore possible solutions.64

Counseling data samples.

Examples of our prompt with psychotherapeutic techniques.

Clients may suffer from the belief that their problems are unique to them, which can lead to further distress. Normalization alleviates this by reassuring clients that others face similar challenges, reducing self-blame and feelings of abnormality.67

As shown in Table 1(b), we incorporate few-shot examples into the prompt to normalize clients’ concerns. With these examples, our counseling LLM helps clients accept their emotions as natural and valid, thereby reducing their anxiety and making the counseling session more effective.67

By summarizing or restating clients’ statements, rather than offering brief reactions such as “Yes” or “I see,” counselors demonstrate a higher level of attentiveness.68

This approach can also help clients clarify their problems. We design our prompt to rephrase clients’ concerns and express empathy regarding those issues (Table 1(c)). This enables our counseling LLM to show that it understands clients’ concerns, fostering an empathetic interaction.66

Counselors frequently offer praise to their clients, since clients can benefit greatly simply from receiving encouragement. We include examples of praise in the prompt, as shown in Table 1(d), so that the counseling LLM provides generous encouragement to clients. Such praise and support enhance clients’ self-esteem, which is crucial for successful counseling.66

For the second component, structured counseling techniques, we establish a clear counselor persona within our prompt: “You are a kind counselor for teenagers.” To maintain an appropriately conversational and empathetic tone, we include explicit instructions such as “Maintain a conversational and empathetic tone.” Additionally, we provide structured guidance for each phase of the counseling process, including initial greetings, exploring client concerns, facilitating problem-solving discussions, and concluding the session, so that our counseling LLM generates suitable, contextually relevant responses at every stage. Furthermore, we provide few-shot examples that address various client conditions, such as depression, anxiety, and extreme situations like suicidal thoughts. The prompt is refined with feedback from psychology experts to ensure that responses are appropriate.
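The prompt structure described above can be illustrated as a function that assembles one turn’s message list in the role/content format accepted by chat-completion APIs such as OpenAI’s. Only the persona and tone sentences are quoted from the text; the phase-guidance wording, the choice to place few-shot examples as earlier user/assistant turns, and all names here are hypothetical.

```python
from typing import Sequence

# Quoted from the text.
PERSONA = "You are a kind counselor for teenagers."
TONE = "Maintain a conversational and empathetic tone."

# Hypothetical wording for the per-phase guidance; the real prompt is not reproduced here.
PHASE_GUIDANCE = {
    "greeting": "Greet the client warmly and invite them to share what is on their mind.",
    "exploration": "Ask about the details of the client's situation before suggesting solutions.",
    "problem_solving": "Discuss possible solutions together with the client.",
    "closing": "Summarize the session and close with encouragement.",
}


def build_messages(
    phase: str,
    few_shot: Sequence[tuple[str, str]],
    history: Sequence[dict[str, str]],
    user_text: str,
) -> list[dict[str, str]]:
    """Assemble a role/content message list for one counseling turn."""
    system = "\n".join([PERSONA, TONE, PHASE_GUIDANCE[phase]])
    messages = [{"role": "system", "content": system}]
    # Few-shot demonstrations rendered as prior client/counselor exchanges (an assumption).
    for client_line, counselor_line in few_shot:
        messages.append({"role": "user", "content": client_line})
        messages.append({"role": "assistant", "content": counselor_line})
    messages.extend(history)  # earlier turns from this client's stored session
    messages.append({"role": "user", "content": user_text})
    return messages
```

Keeping the session history as a separate argument mirrors the per-client session-level management: the stored turns for a returning client can be passed in unchanged while the persona, tone, and phase guidance stay fixed.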

Utilizing the prompt, we develop our counseling LLM based on the GPT-4o model with

Human-like AI counselor

AI counselor construction. Building rapport between clients and AI counselors is essential for creating a comfortable and engaging experience. Research on virtual rapport shows that clients communicate more effectively when they feel connected to their conversational partners.70,71 Other research points out that familiar avatars not only enhance user comfort but also foster greater collaboration and interaction in virtual counseling environments.72 However, existing chat counseling services do not support human-like dialogue, making it difficult for clients to establish virtual rapport. To overcome this limitation, BetterMood deploys the AI counselor as the medium through which the counseling LLM speaks. The AI counselor consists of three components: visual feature, voice, and gestures.

To maximize virtual rapport, we first aim to make the AI counselor approachable for AYAs. Face-swapping technology is applied to generate a user-friendly visual feature resembling celebrities, athletes, or other friendly figures, so that clients feel comfortable and relaxed.73 The visual feature is then paired with a corresponding voice created using ElevenLabs. Lastly, we enable our AI counselor to mimic gestures that support counseling sessions. These gestures include actions indicative of careful listening and empathetic understanding, which help the client feel heard and understood.

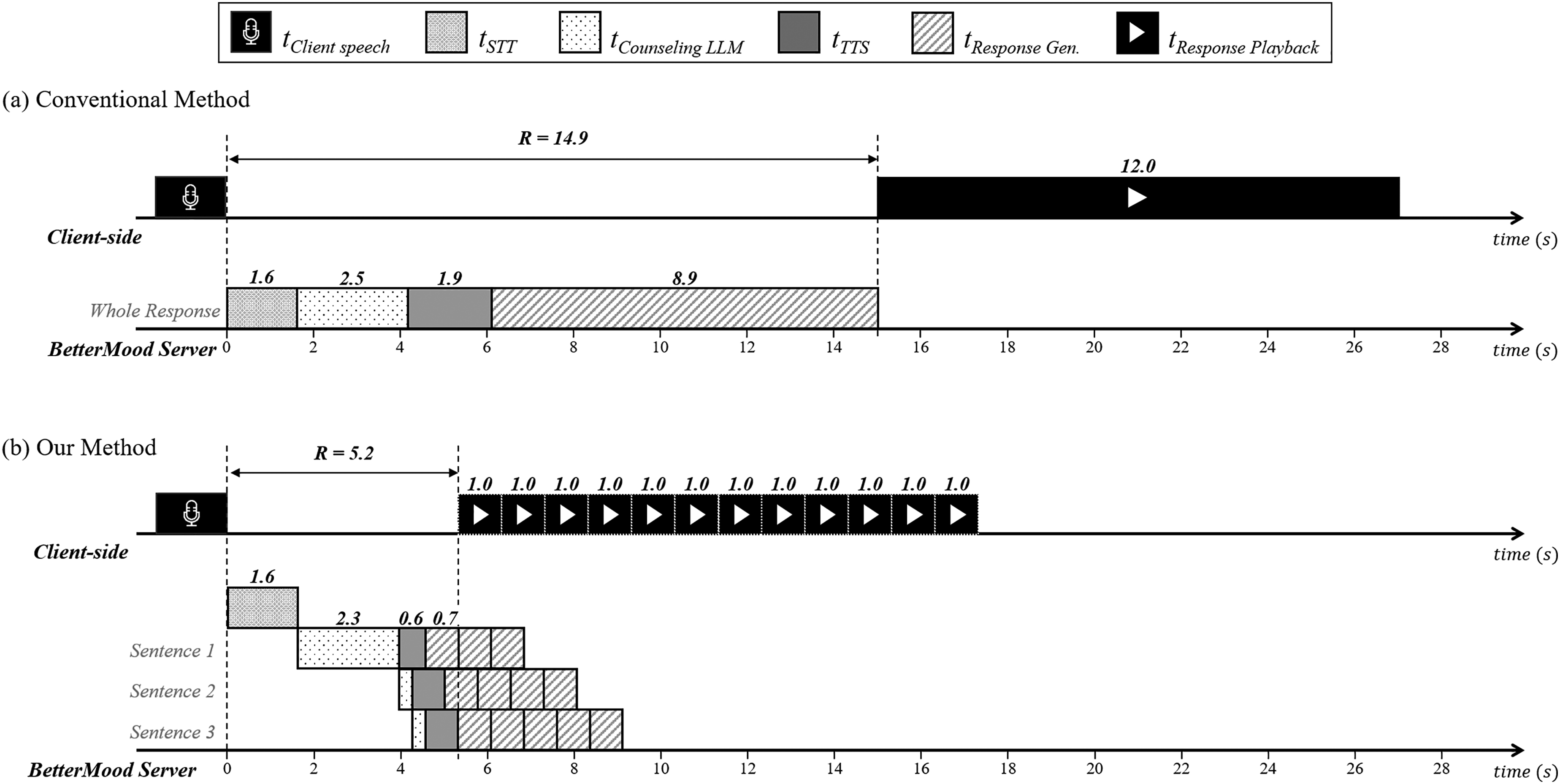

The text response generated by the counseling LLM is synthesized into audio that reflects the intended voice characteristics. BetterMood then combines this audio with the selected visual feature and gestures. MuseTalk,74 a lip-sync generation model, synchronizes the visual feature and gestures with the synthesized audio to produce the AI counselor’s response.

Human-like dialogue. As illustrated in Figure 2, delivering the AI counselor’s response to the client requires sequential execution of four modules: STT, the concern-aware counseling LLM, TTS, and visual response generation. This sequential approach, hereafter referred to as the conventional method, leads to a long wait before the client receives any response, which hinders real-time, human-like dialogue. To address this challenge, we propose a chunk-based streaming methodology that substantially reduces this wait by delivering the AI counselor’s response in 1-second chunks. Figure 4 compares the conventional method and our proposed approach, illustrating the entire process from the client’s speech to the delivery of the AI counselor’s response. For clarity, we present an example in which the AI counselor’s response consists of 3 sentences with a total audio duration of 12 seconds.

Comparison of the conventional and our methods for AI counselor response delivery.

Figure 4(a) shows the conventional method’s process of delivering the AI counselor’s response. Initially, once the client finishes speaking, the STT module transcribes the spoken audio into text. This text is then provided to the counseling LLM, which generates a complete text response. Subsequently, the generated text is passed to the TTS module, where this response is synthesized into a 12-second audio. Finally, this audio is forwarded to the visual response generation module, where it is lip-synced with the AI counselor using MuseTalk. Throughout this sequential process, the client must wait approximately 15 seconds for all stages to be completed before hearing or seeing any part of the AI counselor’s response.

In contrast, Figure 4(b) illustrates our proposed method. Since the modules involved in delivering the AI counselor’s response operate independently, we adopt a pipelining approach. Rather than waiting for the counseling LLM to generate the entire text response, each sentence is immediately forwarded to the TTS module as soon as it is generated. Furthermore, we divide the synthesized audio into 1-second chunks, effectively breaking down the large lip-sync process into smaller tasks. As a result, the client waits approximately 5 seconds before hearing or seeing the first part of the AI counselor’s response, while subsequent response chunks continue to be generated. As denoted by

Automated silence detection.

Moreover, AI counselor responses are rendered to the client immediately via MediaSource buffering,76 minimizing perceptible latency. Alongside this continuous rendering, the AI counselor performs subtle behavioral gestures, such as acknowledging nods or note-taking actions, to emulate the dynamics of human counselors. Together, these designs mask residual latency and help sustain an authentic counseling experience.
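Why streaming shortens the wait can be made concrete with a toy latency model of the two delivery strategies in Figure 4. All per-stage costs below are hypothetical placeholders, not measurements of BetterMood, and they are not tuned to reproduce the approximately 15-second and 5-second figures; the model also assumes, as the text describes, that TTS runs on a whole sentence before its audio is cut into chunks for lip-syncing.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class StageCosts:
    """Hypothetical processing times in seconds (illustrative only)."""
    stt_s: float = 1.0                # transcribe the client's utterance
    llm_per_sentence_s: float = 1.0   # generate one response sentence
    tts_per_audio_s: float = 0.25     # synthesis time per second of output audio
    lipsync_per_audio_s: float = 0.5  # lip-sync time per second of output audio


def conventional_latency(sentence_audio_s: Sequence[float], c: StageCosts) -> float:
    """Wait before anything is shown when each stage handles the whole response."""
    total_audio = sum(sentence_audio_s)
    return (c.stt_s
            + c.llm_per_sentence_s * len(sentence_audio_s)
            + c.tts_per_audio_s * total_audio
            + c.lipsync_per_audio_s * total_audio)


def streamed_first_chunk_latency(sentence_audio_s: Sequence[float], c: StageCosts,
                                 chunk_s: float = 1.0) -> float:
    """Wait before the first chunk is shown when sentences stream into TTS and
    the synthesized audio is lip-synced in fixed-length chunks."""
    first_sentence = sentence_audio_s[0]
    first_chunk = min(chunk_s, first_sentence)
    return (c.stt_s
            + c.llm_per_sentence_s                  # only the first sentence is needed
            + c.tts_per_audio_s * first_sentence    # TTS on the whole first sentence
            + c.lipsync_per_audio_s * first_chunk)  # lip-sync only the first chunk


if __name__ == "__main__":
    sentences = [4.0, 4.0, 4.0]  # 12 s of audio, assumed split evenly over 3 sentences
    costs = StageCosts()
    print(conventional_latency(sentences, costs))
    print(streamed_first_chunk_latency(sentences, costs))
```

Under these assumed costs the conventional wait is 13.0 s and the streamed wait is 3.5 s. The model captures only the initial delay: streaming avoids stalls afterwards only if each later chunk is produced faster than real-time playback (here 0.5 s of lip-sync per second of audio), and any remaining gaps are what the idle gestures and buffering are meant to mask.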

User study

This study aimed to evaluate the practicality of BetterMood and users’ initial perceptions of it. A cross-sectional user study was conducted remotely via the BetterMood platform in South Korea from September to December 2024, involving three cohorts: adolescents, young adults, and professional clinicians. The study was designed specifically to capture short-term user experience. Importantly, it does not assess clinical efficacy or sustained psychological change, but rather participants’ first impressions and satisfaction after a single session.

Adolescents and young adults. The study procedure for AYAs was as follows.

A total of 120 individuals participated in the user study, comprising 10 adolescents (aged 13–17) and 110 young adults (aged 18–24). Adolescents were recruited through online announcements posted on middle- and high-school bulletin boards within Seoul, whereas young adults were recruited via nationwide university online community boards and social media services. Prior to the study, all participants received comprehensive written guidelines.

Participants were instructed to complete four self-report questionnaires designed to assess mental health disorders: STAI-X-1 (anxiety),48–50 PHQ-9 (depression),51,52 BIS-15 (impulsivity),53–56 and ASRS-V1.1 Part A (ADHD).57–59 The following cut-off scores were applied to identify participants as screen positive: STAI-X-1 scores of 52 or higher (elevated anxiety), PHQ-9 scores of 10 or higher (moderate-to-severe depressive symptoms), BIS-15 scores of 39 or higher (significant impulsivity), and endorsement of four or more items on the ASRS-V1.1 Part A (probable ADHD). Participants who met or exceeded at least one of these cut-offs were classified as screen positive; all others were classified as screen negative.
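The screening rule reduces to comparing each questionnaire score with its cut-off and counting how many are elevated. A minimal sketch, assuming total scores (and, for the ASRS-V1.1 Part A, the number of endorsed items) are already computed; the dictionary keys and function names are hypothetical.

```python
# Cut-offs as stated in the text; a score at or above the cut-off counts as elevated.
CUTOFFS = {
    "STAI-X-1": 52,         # anxiety
    "PHQ-9": 10,            # depression
    "BIS-15": 39,           # impulsivity
    "ASRS-V1.1 Part A": 4,  # number of endorsed Part A items (ADHD)
}


def elevated_scales(scores: dict[str, int]) -> list[str]:
    """Names of the questionnaires whose score meets or exceeds its cut-off."""
    return [name for name, cut in CUTOFFS.items() if scores[name] >= cut]


def is_screen_positive(scores: dict[str, int], min_elevated: int = 1) -> bool:
    """Screen positive if at least `min_elevated` questionnaires are elevated."""
    return len(elevated_scales(scores)) >= min_elevated


if __name__ == "__main__":
    participant = {"STAI-X-1": 55, "PHQ-9": 8, "BIS-15": 30, "ASRS-V1.1 Part A": 2}
    print(elevated_scales(participant), is_screen_positive(participant))
```

With the default `min_elevated=1` this reproduces the user-study rule; `min_elevated=2` expresses the stricter criterion used when recruiting students for the real counseling scripts (at least two of the four questionnaires at or above the cut-off).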

Each participant conducted a single, free-form 10- to 15-minute counseling session on BetterMood, addressing their own psychological concerns, such as academic pressure, career decisions, family issues, and interpersonal concerns.

After the counseling session, participants responded to a satisfaction survey designed to assess various aspects of the service. The survey consisted of 9 questions: 7 closed-ended questions rated on a 4-point Likert scale and 2 open-ended questions. The closed-ended questions evaluated four aspects of BetterMood, with one or two questions per aspect: (i) interaction capability, (ii) perceived support, (iii) usability, and (iv) ethical safety.77

The open-ended questions asked participants to freely describe the strengths and limitations of BetterMood. Detailed information on the survey items is provided in Supplementary Material S4-1.

Among the 10 adolescents, 3 (30.0%) were male and 7 (70.0%) were female. Screening classified 4 adolescents (40.0%) as the screen positive group and 6 (60.0%) as the screen negative group. In the group of 110 young adults, 41 (37.3%) were male and 69 (62.7%) were female. Within this group, 49 (44.5%) were categorized as screen positive, while the remaining 61 (55.5%) were screen negative. Table 2 summarizes these demographic details, and Figure 6 illustrates the distribution of screen positive cases across anxiety, depression, impulsivity, and ADHD for each age group.

Screening results.

Demographics of adolescents and young adults.

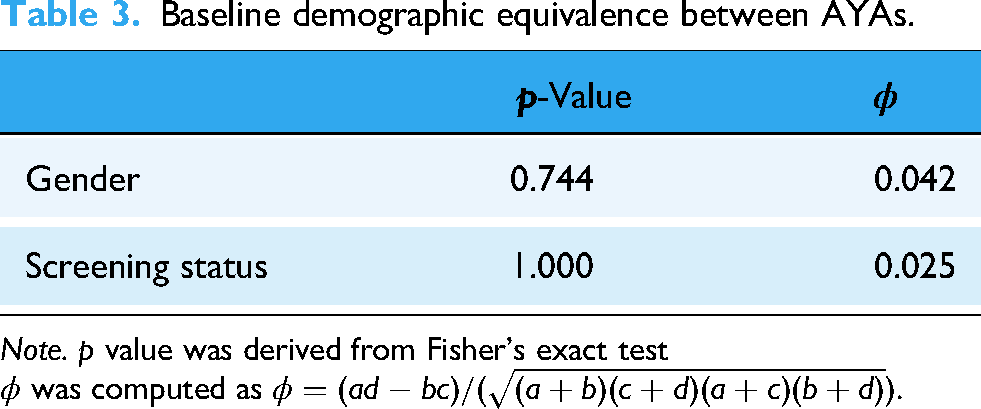

As shown in Table 3, two-sided Fisher’s exact test revealed no significant differences in either gender distribution (
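Table 3 checks baseline equivalence with a two-sided Fisher’s exact test. For reference, a dependency-free sketch of that test for a 2×2 table (the function name is hypothetical); it uses the common two-sided convention of summing the probabilities of all tables with the same margins that are no more likely than the observed one, which is also how SciPy’s `scipy.stats.fisher_exact` defines its two-sided p-value.

```python
from math import comb


def fisher_exact_two_sided(table: list[list[int]]) -> float:
    """Two-sided Fisher's exact test p-value for a 2x2 table [[a, b], [c, d]]."""
    (a, b), (c, d) = table
    row1, col1, n = a + b, a + c, a + b + c + d
    total = comb(n, row1)

    def prob(x: int) -> float:
        # Hypergeometric probability that the top-left cell equals x, margins fixed.
        return comb(col1, x) * comb(n - col1, row1 - x) / total

    p_obs = prob(a)
    lo, hi = max(0, row1 - (n - col1)), min(row1, col1)
    probs = [prob(x) for x in range(lo, hi + 1)]
    # A small relative tolerance keeps tables that tie with the observed one.
    return min(1.0, sum(p for p in probs if p <= p_obs * (1 + 1e-7)))


if __name__ == "__main__":
    # Rows: adolescents, young adults; columns: male, female (counts from Table 2).
    print(fisher_exact_two_sided([[3, 7], [41, 69]]))
```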

Professional clinicians. We recruited 8 qualified professional clinicians certified by the South Korea Ministry of Health and Welfare (MOHW) and the Korean Clinical Psychology Association (KCPA). They engaged in counseling sessions on BetterMood and completed a satisfaction survey consisting of 14 questions: 12 closed-ended questions rated on a 4-point Likert scale and 2 open-ended questions. The closed-ended questions covered the same four evaluation aspects as the AYA survey, that is, (i) interaction capability, (ii) perceived support, (iii) usability, and (iv) ethical safety. The open-ended questions asked the clinicians to freely describe the strengths and limitations of BetterMood. Detailed information on the survey items is provided in Supplementary Material S5-1.

Ethical considerations

The study was approved by the Korea University Institutional Review Board (KUIRB-2024-0458-01). Participants were provided with detailed information on the purpose and procedures of the user study, and written informed consent was obtained from all participants. For those under 18, written informed consent was obtained from legally authorized representatives (parents or legal guardians), together with assent from the minors, before the study began. The data collected during the user study, including participants’ screening results and satisfaction survey responses, were strictly de-identified and securely stored on an encrypted server.

Data analysis

Responses to the closed-ended survey items administered to AYAs were analyzed with respect to two independent variables: age group and screening status. Because direct group comparisons are challenging when both variables are considered simultaneously, we applied the Breslow–Day (BD) test for homogeneity and the Cochran–Mantel–Haenszel (CMH)
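The BD and CMH tests both operate on one 2×2 table per stratum. The following plain-Python sketch is illustrative, assuming each stratum is arranged as rows = the compared groups (e.g., screen positive vs. screen negative) and columns = positive vs. non-positive responses. Because the text does not state which variants were used, it reports the unadjusted Breslow–Day statistic (no Tarone correction) and makes the CMH continuity correction optional; statsmodels’ `StratifiedTable` offers comparable `test_equal_odds` and `test_null_odds` methods. All function names are hypothetical, and strata with empty cells or margins are not handled.

```python
import math
from typing import Sequence

Table = Sequence[Sequence[int]]  # one stratum: [[a, b], [c, d]]


def chi2_sf(x: float, df: int) -> float:
    """Upper-tail probability of a chi-square variable with integer df."""
    if x <= 0:
        return 1.0
    if df % 2 == 0:  # closed form for even df
        term = total = 1.0
        for i in range(1, df // 2):
            term *= (x / 2) / i
            total += term
        return math.exp(-x / 2) * total
    total = math.erfc(math.sqrt(x / 2))  # closed form for odd df
    term = math.sqrt(2 * x / math.pi) * math.exp(-x / 2)
    for i in range(1, (df - 1) // 2 + 1):
        total += term
        term *= x / (2 * i + 1)
    return total


def _cells(t: Table):
    (a, b), (c, d) = t
    return a, b, c, d, a + b, c + d, a + c, b + d, a + b + c + d


def mantel_haenszel_or(tables: Sequence[Table]) -> float:
    """Mantel-Haenszel pooled odds ratio across strata."""
    num = den = 0.0
    for t in tables:
        a, b, c, d, *_, n = _cells(t)
        num += a * d / n
        den += b * c / n
    return num / den


def cmh_test(tables: Sequence[Table], correction: bool = False) -> tuple[float, float]:
    """CMH chi-square (1 df) for conditional independence given the strata."""
    sum_a = sum_e = sum_v = 0.0
    for t in tables:
        a, _, _, _, n1, n2, m1, m2, n = _cells(t)
        sum_a += a
        sum_e += n1 * m1 / n                               # E[a] under independence
        sum_v += n1 * n2 * m1 * m2 / (n * n * (n - 1))     # hypergeometric variance
    diff = abs(sum_a - sum_e) - (0.5 if correction else 0.0)
    stat = max(diff, 0.0) ** 2 / sum_v
    return stat, chi2_sf(stat, 1)


def breslow_day_test(tables: Sequence[Table]) -> tuple[float, float]:
    """Breslow-Day test (K - 1 df) that all strata share the MH odds ratio."""
    psi = mantel_haenszel_or(tables)
    stat = 0.0
    for t in tables:
        a, _, _, _, n1, n2, m1, _, _ = _cells(t)
        lo, hi = max(0, m1 - n2), min(n1, m1)
        # Fitted count A for cell a under odds ratio psi solves
        # (1 - psi) A^2 + (n2 - m1 + psi (n1 + m1)) A - psi n1 m1 = 0.
        qa, qb, qc = 1.0 - psi, n2 - m1 + psi * (n1 + m1), -psi * n1 * m1
        if abs(qa) < 1e-12:
            fitted = -qc / qb
        else:
            disc = math.sqrt(qb * qb - 4 * qa * qc)
            roots = ((-qb + disc) / (2 * qa), (-qb - disc) / (2 * qa))
            fitted = next(r for r in roots if lo - 1e-9 <= r <= hi + 1e-9)
        var = 1 / (1 / fitted + 1 / (n1 - fitted) + 1 / (m1 - fitted)
                   + 1 / (n2 - m1 + fitted))
        stat += (a - fitted) ** 2 / var
    return stat, chi2_sf(stat, len(tables) - 1)
```

A non-significant BD result supports pooling the strata, after which the CMH statistic and the pooled odds ratio summarize the association; a significant BD result indicates that the effect differs between strata, so the pooled CMH estimate should be interpreted with caution.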

Results

This section presents the findings from the closed-ended items of the user study, organized into four evaluation aspects: interaction capability, perceived support, usability, and ethical safety. Interaction capability reflects the AI counselor’s ability to understand conversational context and respond appropriately. Perceived support denotes participants’ immediate, self-reported sense that their concerns were addressed and that their mood changed after a single session. Usability captures the convenience and ease of using BetterMood. Ethical safety refers to any ethical concerns or discomfort reported while interacting with the system. Tables 4 and 5 report item-level results as the proportion of positive responses (“agree” and “strongly agree”): Table 4 for AYAs and Table 5 for professional clinicians.
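The proportions in Tables 4 and 5 are top-two-box shares of the 4-point Likert responses. A minimal sketch, assuming the scale is coded 1 to 4 from “strongly disagree” to “strongly agree” (the numeric coding is not stated in the text; the names are hypothetical):

```python
from typing import Sequence

# Assumed coding: 1 = strongly disagree, 2 = disagree, 3 = agree, 4 = strongly agree.
POSITIVE_CODES = frozenset({3, 4})


def percent_positive(responses: Sequence[int]) -> float:
    """Share of "agree" or "strongly agree" answers, in percent."""
    if not responses:
        raise ValueError("no responses")
    positive = sum(1 for r in responses if r in POSITIVE_CODES)
    return 100 * positive / len(responses)
```

For example, 9 of 10, 100 of 110, and 6 of 8 positive answers correspond to 90.0%, 90.9%, and 75.0% after rounding to one decimal place. For the ethical safety items the same share is computed, but a lower value is the favorable outcome.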

Baseline demographic equivalence between AYAs.


Closed-ended results of user satisfaction survey for AYAs (“agree” and “strongly agree”).

Note. This table presents the proportion of positive responses (“agree” and “strongly agree”) among AYAs. All values are reported as percentages.

Detailed information on the survey items for AYAs is shown in Supplementary Material S4-1.

Closed-ended results of user satisfaction survey for professional clinicians (“agree” and “strongly agree”).

Note. This table presents the proportion of positive responses (“agree” and “strongly agree”) among professional clinicians.

All values are reported as percentages. Detailed information on the survey items for professional clinicians is shown in Supplementary Material S5-1.

For ethical safety, lower agreement indicates a more positive outcome, as the statements reflect potential ethical concerns.

The user satisfaction survey items were combined into a single satisfaction scale that showed good internal consistency. To evaluate this, we computed Cronbach’s
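Cronbach’s α compares the summed item variances with the variance of the total scale score, α = k/(k − 1) · (1 − Σσᵢ² / σ²_total), for k items. A minimal sketch with a hypothetical function name, assuming complete responses and that all items are scored in the same direction (ethical safety items, where lower agreement is better, would presumably need reverse-coding before being combined):

```python
from statistics import variance
from typing import Sequence


def cronbach_alpha(items: Sequence[Sequence[float]]) -> float:
    """items[i][j] is participant j's score on item i (complete responses only)."""
    k = len(items)
    if k < 2:
        raise ValueError("Cronbach's alpha needs at least two items")
    totals = [sum(scores) for scores in zip(*items)]  # each participant's scale score
    item_variance = sum(variance(item) for item in items)
    return k / (k - 1) * (1 - item_variance / variance(totals))
```

Sample variances (n − 1 denominators) are used for both the items and the total; because the same convention appears in the numerator and denominator, the choice does not change α.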

BetterMood received high ratings for interaction capability across all participant groups. Among adolescents, 90.0% agreed that the AI counselor’s responses were contextually appropriate (

Perceived support received moderate ratings, with notable differences across cohorts. Among adolescents, 90.0% reported perceived concern resolution (

Usability was rated highly positive across all cohorts. Among adolescents, 80.0% indicated willingness to engage with the AI counselor again (

Ethical safety concerns were minimal. Adolescents reported feeling comfortable during sessions (

Stratified analysis

Using age group as a stratification variable, we conducted the Breslow–Day (BD) homogeneity test and the Cochran–Mantel–Haenszel (CMH)

Age-stratified CMH and Breslow–Day tests for screening status effects (only AYAs).

Note. BD = Breslow–Day test for homogeneity of odds ratios; CMH = Cochran–Mantel–Haenszel test stratified by age group; OR = odds ratio; CI = confidence interval. All p-values are two-tailed.

Sample sizes: Adolescents

Using screening status as the stratification variable, we again applied the BD homogeneity test and the CMH

Screening status-stratified CMH and Breslow–Day tests for age effects (only AYAs).

Note. BD = Breslow–Day test for homogeneity of odds ratios; CMH = Cochran–Mantel–Haenszel test stratified by screening status; OR = odds ratio; CI = confidence interval. All p-values are two-tailed.

Sample sizes: Screen Positive

Clinician inter-rater reliability

Eight professional clinicians independently evaluated each counseling session conducted on BetterMood. Inter-rater agreement was assessed with the ICC calculated from a two-way random-effects model. The single-measure ICC (ICC (2,1)) was 0.56, indicating moderate agreement among individual raters, whereas the average-measure ICC (ICC (2,8)) was 0.90, reflecting excellent reliability when scores are aggregated across raters. Thus, while individual ratings vary to some extent, the pooled scores exhibit very high consistency. Full statistics are presented in Table 8.
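The two coefficients can be illustrated with a minimal sketch of the two-way random-effects, absolute-agreement ICCs in Shrout and Fleiss notation, computed from the usual two-way ANOVA mean squares. It assumes a complete matrix with one row per rated session and one column per clinician. The function name and example ratings are hypothetical and do not reproduce the study's data.

```python
def icc2(ratings: list[list[float]]) -> tuple[float, float]:
    """Return (ICC(2,1), ICC(2,k)) for an n-sessions x k-raters matrix.

    Two-way random-effects model, absolute agreement. Assumes no missing cells.
    """
    n, k = len(ratings), len(ratings[0])
    grand = sum(map(sum, ratings)) / (n * k)
    row_means = [sum(row) / k for row in ratings]  # one mean per rated session
    col_means = [sum(row[j] for row in ratings) / n for j in range(k)]  # per rater
    # Sums of squares for sessions (rows), raters (columns), and residual error
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((x - grand) ** 2 for row in ratings for x in row)
    ss_error = ss_total - ss_rows - ss_cols
    msr = ss_rows / (n - 1)
    msc = ss_cols / (k - 1)
    mse = ss_error / ((n - 1) * (k - 1))
    # Single-rater and k-rater-average absolute-agreement coefficients
    icc_single = (msr - mse) / (msr + (k - 1) * mse + k * (msc - mse) / n)
    icc_average = (msr - mse) / (msr + (msc - mse) / n)
    return icc_single, icc_average


# Hypothetical scores: 4 sessions rated by 3 clinicians
print(icc2([[4, 3, 5], [2, 2, 3], [5, 4, 5], [3, 1, 2]]))
```

Algebraically, ICC(2,k) equals the Spearman–Brown step-up of ICC(2,1), k·ICC(2,1)/(1 + (k − 1)·ICC(2,1)). This is why averaging across several raters turns a moderate single-rater coefficient into a high aggregate one.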

Inter-rater reliability for clinician ratings (two-way random, absolute agreement).

In summary, the primary aim of this study was to assess the AI counselor’s feasibility and user experience as a supplementary tool. Accordingly, all findings are drawn solely from participants’ immediate, self-reported impressions after a single session. The results indicate strong interactive capability, usability, and ethical safety across adolescents, young adults, and professional clinicians, although perceived support varied notably between cohorts.

Discussion

BetterMood shows promise as an accessible supplementary tool for AYAs. Because our conclusions rest solely on participants’ immediate self-reports after a single session, BetterMood should be viewed as a gateway or adjunct resource, not a replacement for professional care. Acknowledging this limitation, the remainder of the Discussion reviews our quantitative and qualitative findings (expanded in the Supplementary Materials S4-2 and S5-2) and explains how each insight informs system refinements and future research.

Design implications

Interaction capability. Approximately 90.0% of AYAs agreed that the AI counselor’s replies were contextually appropriate (

Interpretation of subgroup differences

Screening status-related difference (age-stratified). According to Table 6, after adjusting for age, the screen negative group tends to endorse likelihood of recommending (

These contrasting perspectives point to a clear difference in how the two groups perceive the AI counselor depending on screening status. The screen negative group viewed the session as a quick, low-barrier outlet for emotional expression, valuing its accessibility and expressing willingness to recommend it to peers. In contrast, the screen positive group felt that more immediate helpfulness and more tailored interaction were needed, which made them more conservative about recommending the service. Based solely on these short-term perceptions, gathered immediately after a single 10- to 15-minute interaction, the AI counselor appears most immediately helpful as an emotional outlet for the screen negative group, whereas the screen positive group may still require richer conversation or supplemental human care.

Age-related difference (screening status-stratified). After adjusting for screening status in the CMH analysis (Table 7), we identified a single significant age-related difference: perceived concern resolution (

Together, these two frameworks clarify why an empathy-first design feels curative to adolescents yet incomplete to young adults. Retaining empathic normalization for adolescents while adding goal-setting prompts, decision aids, and other solution-focused tools for young adults would tailor the chatbot to the distinct motivational priorities of each developmental stage.

Comparative analysis

This section compares BetterMood with two open-source LLM-based chatbots, ChatCounselor and MentaLLaMA. The comparison focuses on two aspects: the psychotherapeutic techniques built into our concern-aware LLM and perceived user experience.

For this comparison, each model (BetterMood, ChatCounselor, and MentaLLaMA) played the counselor role. The client agent was implemented with OpenAI GPT-4o and presented scenarios covering four key youth concerns: academic stress, career choices, familial conflicts, and interpersonal relationship difficulties. For each topic, 25 counseling sessions were conducted, yielding 100 sessions per model. Each session comprised 10 turns, each consisting of one counselor message and one client message, resulting in 1,000 dialogue turns per model.
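The session protocol can be sketched as a simple simulation harness. Agents are modeled as plain callables so that no particular model API is assumed. The names `run_session`, `run_benchmark`, and the stub agents are hypothetical, and the assumption that the client speaks first in each turn is ours, since the protocol fixes only the number of messages per turn.

```python
from typing import Callable

# A transcript is a list of (speaker, message) pairs.
Transcript = list[tuple[str, str]]
# Hypothetical agent interface: (topic, transcript so far) -> next message.
Agent = Callable[[str, Transcript], str]

TOPICS = [
    "academic stress",
    "career choices",
    "familial conflicts",
    "interpersonal relationship difficulties",
]


def run_session(counselor: Agent, client: Agent, topic: str, turns: int = 10) -> Transcript:
    """Simulate one session; a turn is one client message plus one counselor reply."""
    transcript: Transcript = []
    for _ in range(turns):
        # Assumption: the client agent speaks first in each turn.
        transcript.append(("client", client(topic, transcript)))
        transcript.append(("counselor", counselor(topic, transcript)))
    return transcript


def run_benchmark(counselor: Agent, client: Agent,
                  sessions_per_topic: int = 25, turns: int = 10) -> dict[str, list[Transcript]]:
    """Run sessions_per_topic sessions for every topic against one counselor model."""
    return {
        topic: [run_session(counselor, client, topic, turns) for _ in range(sessions_per_topic)]
        for topic in TOPICS
    }


# Stub agents stand in for real model calls (e.g., a GPT-4o client, a counselor model).
def stub_client(topic: str, transcript: Transcript) -> str:
    return f"I'm struggling with {topic}."


def stub_counselor(topic: str, transcript: Transcript) -> str:
    return "That sounds hard. Can you tell me more?"


results = run_benchmark(stub_counselor, stub_client)
n_sessions = sum(len(v) for v in results.values())
n_turns = sum(len(t) // 2 for v in results.values() for t in v)
print(n_sessions, n_turns)  # prints 100 1000
```

Passing the full transcript to each agent mirrors multi-turn prompting. A real harness would also need to handle model errors and record which model produced each session.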

Each counseling dialogue was then rated across seven core competency domains:

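Psychotherapeutic methodology: (i) Problem Solving/Skills Training, (ii) Normalization and Acceptance, (iii) Summarization and Restatement, and (iv) Praise, Encouragement, and Reassurance.

Perceived user experience: (v) Interaction Capability, (vi) Perceived Support, and (vii) Ethical Safety.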

An evaluation agent (OpenAI GPT-4o) assigned scores from 1 to 5 for each domain per dialogue. Table 9 below presents the average scores:
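The averaging step behind Table 9 is simple arithmetic, but a sketch makes the data shape explicit. It assumes the evaluation agent's output has already been parsed into one dictionary per dialogue, mapping each of the seven domains to a score from 1 to 5. The function name, data layout, and example values are hypothetical.

```python
from statistics import mean

DOMAINS = [
    "Problem Solving/Skills Training",
    "Normalization and Acceptance",
    "Summarization and Restatement",
    "Praise, Encouragement, and Reassurance",
    "Interaction Capability",
    "Perceived Support",
    "Ethical Safety",
]


def average_scores(ratings: dict[str, list[dict[str, int]]]) -> dict[str, dict[str, float]]:
    """Average per-dialogue 1-5 ratings into a model x domain table."""
    table = {}
    for model, dialogues in ratings.items():
        for scores in dialogues:
            # Reject malformed judge output: all seven domains present, each score in 1..5
            if set(scores) != set(DOMAINS) or not all(1 <= s <= 5 for s in scores.values()):
                raise ValueError(f"invalid rating for {model}: {scores}")
        table[model] = {d: mean(s[d] for s in dialogues) for d in DOMAINS}
    return table


# Two hypothetical dialogues for one model
example = {"ModelA": [{d: 4 for d in DOMAINS}, {d: 5 for d in DOMAINS}]}
print(average_scores(example)["ModelA"]["Perceived Support"])  # prints 4.5
```

Validating each parsed rating before averaging keeps a single malformed judge response from silently skewing a model's domain mean.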

Quantitative comparison results.

Note. Average ratings assigned by the GPT-4o evaluation agent (scale: 1–5) for each counseling domain.

BetterMood scored higher than the open-source models in most evaluation domains, particularly those related to emotional support and empathy, where it achieved the highest scores in normalization, perceived support, and encouragement. This advantage likely stems from targeted prompt engineering designed specifically for affective communication. BetterMood also scored higher in summarization and restatement, effectively restating and reflecting user input, which likely contributed to stronger rapport and more coherent interactions. However, all three models scored relatively low in problem solving and skills training, suggesting that current LLM-based chatbots are better at conveying empathy than at delivering structured behavioral change techniques.

Note: All results from open-source model comparisons are based on automated, multi-session agent-based simulations followed by domain-specific evaluation by OpenAI GPT-4o, and should be interpreted within the context of simulated data.

Policy implications

Recent prohibitions on AI counseling platforms in the United States highlight the need for stronger evidence to guide regulation. Our study contributes to this evidence base by providing the user perspective. While our findings do not address therapeutic outcomes, the high user satisfaction and favorable first impressions we observed suggest that these platforms hold promise and that a categorical ban could preclude a potentially valuable resource for mental health support. Based on our findings, we suggest that policymakers in South Korea and elsewhere might consider a framework that positions AI counselors as adjunctive or introductory tools rather than substitutes for licensed therapists. Policy could focus on standards for usability, safety, ethics, and responsible integration with existing healthcare. For example, regulators could require platforms to clearly label that the service is a support tool, not a substitute for therapy. Additionally, they could mandate the implementation of safety guardrails that effectively triage crisis situations and escalate them to human professionals. This approach could help de-stigmatize and broaden access to care, while ensuring these platforms operate as a safe bridge to professional clinical services.

Limitations

Before outlining the study’s specific limitations, it is worth underscoring that our aim was an early probe into BetterMood’s practicality and the immediate user perception. The results are therefore a foundation, not a final clinical assessment. Viewed in this light, the limitations that follow are not simply shortcomings; they outline the work that lies ahead.

(a) Post-session measures: Although we used self-report screening tools to group participants at the outset, no follow-up assessments with the same instruments were carried out. Therefore, we cannot speak to objective psychological change or therapeutic efficacy; the results reflect only participants’ immediate impressions after a single interaction with the AI counselor.

(b) Single, brief session: Each participant engaged with the system for only 10 to 15 minutes. Such brief, one-time encounters do not capture the broader dynamics of ongoing mental health support or sustained engagement. Accordingly, the study should be viewed as an initial feasibility and user-experience exploration rather than a clinical validation.

(c) User satisfaction questionnaire: The user satisfaction survey employed in this study was a self-developed instrument. To ensure its relevance and content validity, the questionnaire was systematically constructed. Key domains for evaluating AI counselors, including (i) interaction capability, (ii) perceived support, (iii) usability, and (iv) ethical safety, were drawn from the evaluation framework for conversational agents in health interventions proposed by Ding et al. 77 Based on these domains, specific items were drafted and subsequently reviewed for clarity and relevance by three independent experts in the field of clinical psychology. However, the instrument has not undergone formal psychometric validation. Therefore, while the items are grounded in established literature and expert consensus, the reliability and validity of the satisfaction scores have not been statistically confirmed, and the findings should be interpreted within this context.

(d) Novelty effects: Our results based on self-reported surveys may be skewed by biases and the novelty effect, since participants’ limited prior exposure to AI mental-health tools could prompt transient curiosity or optimism. Future research should use repeated or longitudinal sessions to control for these effects and capture more stable user perceptions.

(e) Sample size and composition: Although adolescents, young adults, and professional clinicians were included, subgroup sizes, particularly for adolescents and clinicians, were small. Larger and more demographically balanced samples are needed to improve generalizability.

(f) Cultural and linguistic scope: BetterMood was developed in Korean and evaluated mainly with Korean-speaking users. Because its underlying model is GPT-4o, the system can also provide counseling in English (and potentially other languages) when appropriately prompted, and we used this capability for a preliminary English-language comparative analysis. Even so, a full international rollout will still demand careful localization beyond literal translation to reflect diverse expressions, values, and mental-health frameworks. Future work should therefore test usability and perceived helpfulness across a wider range of linguistic and cultural settings to confirm the model’s cross-cultural applicability.

Conclusion

This study presents BetterMood, a human-like AI counseling service. It is designed to address two key limitations of existing AI-driven chat counseling services: (a) a tendency toward generic advice and (b) the absence of human-like dialogue. Our first contribution is the development of a concern-aware counseling LLM, which incorporates psychotherapeutic knowledge into a general-purpose LLM through targeted prompt engineering. Our second contribution is an interactive AI counselor that employs a chunk-based streaming approach, delivering responses in one-second segments synchronized with visual expressions, speech, and gestures, thus enabling more human-like interactions.

Future research needs to incorporate comparative studies with existing chat counseling services to accurately assess BetterMood’s efficacy. Additionally, long-term, multi-session evaluations are essential for understanding sustained mental health outcomes. Recruiting larger and more diverse groups of professional clinicians will further enhance insights into clinical expectations, guiding targeted improvements. Through continuous model refinement, direct comparative analysis, and comprehensive longitudinal evaluations, we aim to advance BetterMood into an effective and reliable supplementary tool for mental health care.

Supplemental Material

sj-docx-1-dhj-10.1177_20552076251392294 - Supplemental material for BetterMood: A human-like AI counseling service for adolescents and young adults

Supplemental material, sj-docx-1-dhj-10.1177_20552076251392294 for BetterMood: A human-like AI counseling service for adolescents and young adults by Do Hyung Kim, Soeun Baek, Joonsung Lee, Taehwi Lee, Soyeon Park, Beomchan You, Ji-Won Hur, Minah Kim and Chang-Gun Lee in DIGITAL HEALTH

Footnotes

Acknowledgments

We would like to thank all the participants who took part in this study. This work was supported by the National Research Foundation of Korea (NRF) grant funded by the Korea government (MSIT) (Grant No. 2023R1A2C3003007).


Ethical approval

The study was approved by the Korea University Institutional Review Board (KUIRB) and the approval number was KUIRB-2024-0458-01.

Consent to participate

Written informed consent to participate was obtained.

Contributorship

DHK and CGL conceived the presented idea. DHK, SB, and SP contributed to managing the collection of real counseling data. JWH supervised the generation of synthetic counseling data. All collected counseling data were reviewed by JWH and MK. DHK and SB developed the concern-aware counseling LLM. JL and TL created the human-like AI counselor. DHK and BY contributed to developing the chunk-based streaming methodology. DHK, SB, JL, TL, SP, and BY supervised the user study. All authors participated in drafting, revising, and approving the final manuscript for submission. CGL supervised the overall research project.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the National Research Foundation of Korea (NRF) grant funded by the Korea government (MSIT) (Grant No. 2023R1A2C3003007).

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Supplemental material

Supplemental materials for this article are available online.

References
