Abstract

Purpose

Healthcare professionals have expressed diverse opinions regarding the integration of AI in the Jordanian healthcare sector. This study aimed to explore healthcare professionals’ perceptions of artificial intelligence (AI) and how these perceptions relate to perceived barriers and potential risks associated with AI integration in clinical practice in Jordan.

Materials and Methods

A cross-sectional survey was conducted among 605 healthcare professionals selected by convenience sampling from four types of hospitals (private, teaching-affiliated, Royal Medical Services, and public) in Amman and the northern regions of Jordan.

Results

About half of the respondents were female (50.7%). Most held a bachelor's degree (60.5%), about one-third had 1–5 years of experience (33.1%), and nearly half worked in the Ministry of Health (47.4%). Healthcare professionals held modestly optimistic perceptions of AI integration in the Jordanian healthcare sector and reported moderate levels of perceived barriers and perceived risks associated with AI incorporation into the healthcare sector.

Conclusion

This study highlights the need for more transparent policies, better communication, and more robust frameworks for AI implementation in healthcare.

Introduction

Artificial Intelligence (AI) is revolutionizing the healthcare sector by analyzing complex medical data to diagnose, treat, and predict outcomes in various clinical scenarios. 1 As a branch of computer science, AI systems are human-designed software and hardware that act in the physical or digital dimension to achieve complex goals.2,3 They can use symbolic rules or learn a numeric model and adapt their behavior by analyzing previous actions. 4 AI is beneficial for a variety of tasks, including pattern recognition, language comprehension, perceiving relationships and connections, following expert-recommended decision-making algorithms, understanding concepts rather than just processing data, developing reasoning through the capacity to integrate new experiences, and self-improvement through problem-solving or task completion. 5

Healthcare delivery is changing due to advances in AI and machine learning. Healthcare organizations have collected large-scale data sets in the form of demographic data, insurance data, clinical trial data, and health records. 6 AI technologies are well suited to examining these data and finding patterns and insights that individuals could not discover on their own. Deep learning algorithms can assist healthcare organizations in making better operational and clinical choices and in raising the quality of the experiences they provide. 7

Artificial intelligence has the potential to bring numerous advantages to the healthcare sector. It can automate high-volume routine tasks such as administrative documentation, scheduling, and image analysis, thereby reducing the workload and cognitive burden on healthcare professionals. 8 Additionally, AI offers practical algorithms and machine learning techniques to extract meaningful insights from complex data, support clinical decision-making, and contribute to precision medicine by tailoring treatments to individual patient characteristics.9–11

Healthcare professionals’ perceptions of AI in healthcare are often overlooked, despite the technology's potential benefits and drawbacks. 12 Healthcare providers reported varied opinions regarding the benefits, limitations, and challenges of implementing AI in clinical settings. 13 Reliance on automation can lead to deskilling and performance disruptions, impairing physicians’ ability to draw well-informed conclusions from observable symptoms, indicators, and data.14,15 Understanding healthcare professionals’ perceptions is crucial for predicting an organization's readiness for and acceptance of new technology, supporting the healthcare workforce in adapting to the fast-evolving digital healthcare environment, and helping designers enhance the features and functionalities of AI technologies. For instance, a survey conducted by Huang et al. 16 in nine tertiary hospitals in China found that 81.61% of physicians believed AI could reduce the workload for radiologists and increase diagnostic accuracy. However, 41.74% of physicians believed that one of the biggest concerns associated with using AI as a diagnostic technique was the leakage of patients’ private information. In another online survey, Li et al. 17 examined Canadian vascular surgeons’ perceptions of AI and found that most respondents positively perceived AI and its potential to advance patient care, research, and education.

Particularly during the COVID-19 pandemic, acute care facilities quickly embraced digital health technology for tasks such as inpatient surveillance, EHR-based algorithms for pneumonia monitoring, and AI-based diagnostics such as imaging algorithms. 18 For instance, Zhao et al. 19 highlighted AI's function in high-stakes situations by describing an IT-based hospital surveillance system that notifies primary care teams about nonresponsive pneumonia cases. In addition, acute care physicians frequently perceived AI as a tool to improve diagnostic precision and lessen workload, but their worries about patient safety and reliability were more acute than in other settings. 20

A scoping review by Shinners et al. 21 reported that age, professional discipline, use of AI, and educational aspirations all have a substantial impact on how AI is perceived by healthcare professionals. AI adoption may be hampered by limited technological knowledge or insufficient training among healthcare practitioners. Many medical professionals expressed a desire for more information about how AI is used in healthcare settings, as well as its ethical implications for patient care.

On the other hand, building trust between humans and AI is crucial for advancing healthcare. 23 This requires adherence to ethical principles of accountability and autonomy, including ensuring transparency and explainability, fostering responsibility and accountability, and ensuring equity and inclusiveness. 24

Additionally, applying AI in healthcare has the potential to improve patient outcomes, processes, and medical research. 25 However, it also poses risks, including dehumanization of care, which involves overreliance on AI-driven technologies for decision-making and recommendation-making, and the reduction of healthcare professionals’ skills.26–28 AI can exacerbate biases and difficulties in upholding fairness, accountability, transparency, and trust in AI-based decisions. 29

AI can also impact healthcare professionals’ jobs and interactions with patients. While there is excitement about the potential, there are concerns that specific healthcare positions may become redundant as productivity is fueled by technology. 30 Hence, this study aims to explore the perceptions of AI held by healthcare professionals in Jordan and how these perceptions relate to perceived barriers and potential risks of AI integration in clinical practice.

Materials and methods

Design

A cross-sectional, descriptive, correlational design was used.

Participants and settings

A total of 605 healthcare professionals were recruited from hospitals in Amman and the northern regions of Jordan. These sites were chosen on the basis of accessibility, resource limitations, and logistical viability. The data were collected from a diverse workforce in public, private, military, and university-affiliated healthcare institutions.

Convenience sampling was used because of its practical suitability for the research context. Healthcare professionals were recruited directly from hospital settings during their available time, allowing efficient access to a diverse group of participants. This approach was particularly appropriate given the logistical and time constraints of conducting research within busy clinical environments. Participants included registered nurses, pharmacists, physicians, clinical laboratory technologists, radiologic technologists, medical assistants, physical therapists, occupational therapists, and other allied healthcare professionals.

Eligibility criteria required participants to hold at least a bachelor's degree in a healthcare-related field and to be currently employed in a hospital setting. Those without the relevant academic qualifications, not working in hospitals, or located outside the target regions were excluded.

The sample size was determined to ensure adequate representation across diverse professional roles, based on an estimated medium effect size, a 95% confidence level, and a power of 0.80, accounting for an anticipated response rate of around 50%. Ultimately, a 50.4% response rate was achieved, which aligns with similar studies targeting busy clinical populations and is considered acceptable for cross-sectional healthcare surveys.

The composition of the sample reflects the distribution of healthcare professionals in Jordan, where approximately 2.5 physicians and 2.4 nurses per 1000 people were reported in 2008. In comparison, OECD countries reported averages of 3.7 physicians and 9.2 nurses per 1000 people in 2021. These differences in workforce density contextualize the healthcare environment in Jordan and provide insight into the workload and experiences of the professionals included in this study.

Approximately 1.5 generalists and 1.0 specialized physicians per 1000 people were found in Jordan as of 2008. There were 1.6 professional/registered nurses for every 1000 people, and 0.8 enrolled (associate) nurses for every 1000 people. 31 The average number of physicians per 1000 people in OECD nations was 3.7 in 2021. This number fluctuated; Norway, Austria, Portugal, and Greece reported more than five doctors per 1000 people, whereas Mexico, Colombia, and Turkey recorded 2.5 or fewer doctors per 1000 people. On average, there were 9.2 nurses for every 1000 people, while there were notable differences between nations. 31 When comparing these figures, Jordan's ratio of generalist and specialist physicians combined (2.5 per 1000 population in 2008) was lower than the OECD average of 3.7 per 1000 population in 2021. Similarly, the number of professional/registered nurses in Jordan (1.6 per 1000 population) was substantially lower than the OECD average of 9.2 per 1000 population. These disparities highlight differences in healthcare workforce densities between Jordan and OECD countries.
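The comparison above can be checked with a few lines of arithmetic. The following is a minimal sketch using only the densities quoted in the text; the variable names are illustrative, and the ratios compare figures from different years (2008 for Jordan, 2021 for the OECD), so they are indicative only.

```python
# Densities per 1000 population, as quoted in the text above.
# Jordan figures are from 2008; OECD averages are from 2021.
jordan_2008 = {
    "physicians": 1.5 + 1.0,    # generalists + specialists
    "registered_nurses": 1.6,   # professional/registered nurses only
}
oecd_2021 = {
    "physicians": 3.7,
    "registered_nurses": 9.2,   # the text compares this nurse average with Jordan's 1.6
}

# Ratio of Jordan's density to the OECD average for each group.
ratios = {group: jordan_2008[group] / oecd_2021[group] for group in jordan_2008}

for group, ratio in ratios.items():
    print(f"{group}: Jordan is at about {ratio:.0%} of the OECD average")
```

Under these figures, Jordan's physician density is roughly two-thirds of the OECD average, while its registered-nurse density is less than one-fifth of it.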

Instruments

The Shinners tool (the SHAIP questionnaire) was used to explore healthcare professionals’ perceptions of AI. Unlike previous tools for facilitating human-technology interaction, this open-access questionnaire focuses specifically on healthcare professionals’ perceptions of AI. 32 The 10-item questionnaire was created to gather perspectives on AI in the context of healthcare professionals’ roles, their level of preparedness for AI, and how AI affects clinical decision-making and patient care. Cronbach's alpha was 0.804, indicating good internal consistency and suggesting that little of the variance was specific to individual items. 33 A 5-point Likert scale, where 1 = strongly disagree and 5 = strongly agree, was used to measure the healthcare professionals’ perceptions of the 10 items.

The items on healthcare professionals’ perceived barriers and potential risks were taken from the same study, with Cronbach's α coefficients of internal reliability reaching 0.85 and Kaiser–Meyer–Olkin values above 0.5, which are considered acceptable. 34 The barriers and risks sections contained 15 items rated on a 5-point Likert scale, where 1 = strongly disagree, 2 = disagree, 3 = neutral, 4 = agree, and 5 = strongly agree. A higher score indicates more perceived barriers. Permissions were requested from the authors to use these questionnaires.
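For readers unfamiliar with the reliability coefficient reported above, the following is a minimal sketch of how Cronbach's alpha can be computed from coded Likert responses. It is not the authors' procedure (the study used SPSS); the function name and the small data set are hypothetical and serve only to illustrate the standard formula α = k/(k − 1) × (1 − Σ item variances / variance of total scores).

```python
from statistics import variance

def cronbach_alpha(responses):
    """Cronbach's alpha for a list of respondents, each a list of item scores."""
    k = len(responses[0])  # number of items
    if k < 2:
        raise ValueError("Cronbach's alpha needs at least two items")
    # Sample variance of each item (column) across respondents.
    item_variances = [variance([row[i] for row in responses]) for i in range(k)]
    # Sample variance of each respondent's total score.
    total_variance = variance([sum(row) for row in responses])
    return (k / (k - 1)) * (1 - sum(item_variances) / total_variance)

# Hypothetical responses from five people to three 5-point Likert items
# (1 = strongly disagree ... 5 = strongly agree). Illustrative only.
demo = [
    [4, 5, 4],
    [2, 2, 3],
    [3, 3, 3],
    [5, 4, 5],
    [1, 2, 1],
]
alpha = cronbach_alpha(demo)
print(f"alpha = {alpha:.3f}")
```

Values closer to 1 indicate that the items vary together, which is why coefficients such as 0.804 and 0.85 are read as evidence of acceptable internal consistency.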

The original English version was first translated into Arabic using a forward–backward translation process carried out by bilingual linguists and healthcare experts to guarantee the tool's applicability and clarity in the Jordanian context. During translation, cultural adaptation was carefully taken into account, and changes were made to ensure that the language and terminology used were suitable for Jordanian healthcare environments. A total of 25 healthcare professionals from different disciplines participated in a pilot study to evaluate the items’ cultural relevance, clarity, and comprehension. Minor changes were made in response to pilot feedback to enhance item clarity and contextual appropriateness.

Regarding data analysis, while the 5-point Likert-scale items are ordinal, means and standard deviations were reported in alignment with common practice in survey-based research, where Likert-type items are treated as approximating interval data under the assumption of equal intervals between points. This approach, supported by prior applications of the Shinners tool, facilitates comparative interpretation of central tendencies across items. Nonetheless, nonparametric tests were also considered during analysis to confirm the robustness of findings and minimize the risk of misinterpretation due to scale assumptions.
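As an illustration of the reporting choice described above, the sketch below summarizes one Likert item with both interval-style statistics (mean and standard deviation) and an ordinal statistic (median). The function name and the scores are hypothetical; the study itself reports values produced in SPSS.

```python
from statistics import mean, median, stdev

def summarize_item(scores):
    """Return the mean, sample SD, and median of one Likert item's scores."""
    return {
        "mean": round(mean(scores), 2),
        "sd": round(stdev(scores), 3),   # sample standard deviation (n - 1)
        "median": median(scores),        # ordinal summary, no equal-interval assumption
    }

# Hypothetical responses to a single 5-point item (illustrative only).
demo_scores = [1, 2, 3, 3, 4, 4, 5]
summary = summarize_item(demo_scores)
print(summary)
```

Reporting the median alongside the mean is one simple way to check that conclusions do not depend on treating the scale as interval data.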

Data collection procedure

Formal letters signed by the president of the Jordan University of Science and Technology (JUST) were sent to the general directors of the Jordanian Ministry of Health (MOH) to seek approval for conducting the study in Jordanian hospitals. Upon receiving approval from the administrative departments of the involved hospitals, the researcher visited each hospital to introduce himself to the Chief Officer and explain the study's objectives, data collection methods, the required number of healthcare professionals, and the time needed to complete the questionnaire. The researcher also sought support and orientation within the hospital units and floors.

A convenience sample of healthcare professionals was selected based on their availability during the data collection period. Healthcare professionals were approached during different time slots and shifts and were asked to fill out the questionnaire by scanning a QR code leading to an online Google Forms survey. The researcher remained available in the departments to address any questions and provided contact information for further inquiries.

The data collection process was facilitated by the continuous education departments in the hospitals. Completed questionnaires were compiled via Google Forms into an Excel sheet, which was then entered into SPSS version 26 for coding and analysis.

Ethical considerations

Ethical approval was obtained from the Institutional Review Board (IRB) of JUST (Ref: 25/160/2023, date: 17/05/2023) and from the IRB of the MOH (Ref: 8409, date: 17/7/2023). For the private sector, the permission of the owner of the dental clinic or center was taken as ethical approval for data collection. Participation in this study was voluntary. Before beginning the online survey, participants were given a thorough information sheet on the first page. This contained information about the goal of the study, voluntary participation, the anticipated time needed to finish the survey, confidentiality guarantees, and the researchers’ contact details. Anonymity was guaranteed because no personally identifiable information was gathered and responses were stored securely, with only the research team having access. In line with ethical guidelines for online surveys, participants gave their informed consent by clicking the “Next” button, indicating that they had read the study details and voluntarily agreed to participate. Data collection took place from August 2023 to November 2023, after which the Google Form was deactivated.

Statistical analysis

The Statistical Package for the Social Sciences (SPSS), version 26, was used to analyze the data. Data were checked for missing values and outliers. Descriptive statistical analyses were conducted to summarize the demographic characteristics of the participants and to explore their perceptions, perceived barriers, and risk awareness regarding AI. Frequencies and percentages were used to describe categorical variables such as sex, profession, years of experience, and type of healthcare institution. Means and standard deviations were calculated for each item and subscale of the AI perception, barrier, and risk instruments. For inferential analysis, one-way analysis of variance (ANOVA) was used to compare mean perception, barrier, and risk scores across demographic groups. Before conducting ANOVA, the data were assessed for normality using the Shapiro–Wilk test and for homogeneity of variances using Levene's test. The results indicated that the assumptions of normal distribution and equal variances were sufficiently met. Therefore, the use of one-way ANOVA was deemed appropriate for comparing group differences in scores.
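To make the group comparison concrete, the following is a minimal sketch of the F statistic that a one-way ANOVA computes. It is not the SPSS procedure used in the study; the function name and the three small groups are hypothetical. The p-value is deliberately omitted because it requires the F distribution, which is not available in the Python standard library.

```python
from statistics import mean

def one_way_anova_f(groups):
    """Return (F, df_between, df_within) for a list of groups of scores."""
    k = len(groups)                          # number of groups
    n = sum(len(g) for g in groups)          # total number of observations
    grand_mean = mean([x for g in groups for x in g])
    group_means = [mean(g) for g in groups]

    # Between-group sum of squares: group means around the grand mean.
    ss_between = sum(len(g) * (m - grand_mean) ** 2
                     for g, m in zip(groups, group_means))
    # Within-group sum of squares: scores around their own group mean.
    ss_within = sum((x - m) ** 2
                    for g, m in zip(groups, group_means) for x in g)

    df_between, df_within = k - 1, n - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within

# Hypothetical mean perception scores for three groups (illustrative only).
demo_groups = [[3, 3, 4], [2, 3, 2], [4, 5, 4]]
f_stat, df_b, df_w = one_way_anova_f(demo_groups)
print(f"F({df_b}, {df_w}) = {f_stat:.2f}")
```

A large F means the group means differ by more than the spread within groups would suggest; statistical software then converts F and its degrees of freedom into the p-values reported in the tables.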

Results

Sociodemographic characteristics

Of approximately 1200 healthcare professionals invited, 605 completed the questionnaire, resulting in a response rate of 50.4%. The data are skewed toward younger age groups, indicating a youthful workforce in the hospitals. The most represented age was 26 years (n = 47, 7.8%), followed by 25 years (n = 37, 6.1%) and 24 years (n = 32, 5.3%). The sample had a virtually equal sex distribution, with females comprising (n = 307, 50.7%) and males comprising (n = 298, 49.3%).
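The response rate and sex distribution above follow directly from the reported counts. A minimal sketch of the arithmetic is shown below; the function name is illustrative, and the figure of 1200 is the approximate number given in the text.

```python
def percentage(part, whole):
    """Return part as a percentage of whole."""
    if whole <= 0:
        raise ValueError("whole must be positive")
    return 100 * part / whole

invited = 1200     # approximate number approached, as reported in the text
completed = 605    # completed questionnaires

rate = percentage(completed, invited)
female_share = percentage(307, completed)
male_share = percentage(298, completed)

print(f"Response rate: {rate:.1f}%")
print(f"Female: {female_share:.1f}%, male: {male_share:.1f}%")
```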

Most of the surveyed healthcare professionals held a bachelor's degree (n = 366, 60.5%), suggesting a workforce with a high level of education. Those with a master's degree comprised (n = 139, 23.0%) of the sample. Healthcare professionals holding a PhD (n = 41, 6.8%) or a diploma (n = 37, 6.1%) added to the variety of educational backgrounds. A minority of respondents (n = 22, 3.6%) were categorized as “others.”

Among the healthcare professionals in the study, doctors were the largest group (n = 228, 37.7%). Nurses accounted for (n = 202, 33.4%) of the sample, and pharmacists for (n = 98, 16.2%).

Of the 605 respondents, n = 200 (33.1%) had 1–5 years of experience, indicating a notable presence of relatively inexperienced professionals. Additionally, n = 85 (14.0%) had less than one year of experience. A further (n = 106, 17.5%) had 6–10 years of experience, suggesting a mix of very recent and somewhat seasoned professionals.

The data also show that (n = 81, 13.4%) had 11–15 years of experience and (n = 56, 9.3%) had 16–20 years of experience. The proportion of respondents with over 20 years of experience declined substantially, with only (n = 16, 2.6%) having 26–30 years and a mere (n = 2, 0.3%) having over 41 years of experience. The largest share of healthcare professionals (n = 287, 47.4%) were employed by the MOH, reflecting its significant role in the healthcare industry. Private hospitals accounted for (n = 125, 20.7%) and affiliated teaching hospitals for (n = 131, 21.7%). Additionally, the Jordanian Royal Medical Services accounted for (n = 62, 10.2%).

A majority of respondents (n = 369, 61.0%) had not yet used any AI applications in their clinical practice, indicating that AI integration in clinical practice remains limited among the healthcare professionals surveyed. Conversely, n = 236 (39.0%) had used AI technologies in their clinical practice, indicating that AI adoption in the healthcare industry is under way (Table 1).

Table 1. Descriptive characteristics of the study sample (N = 605).

Descriptive statistical analysis

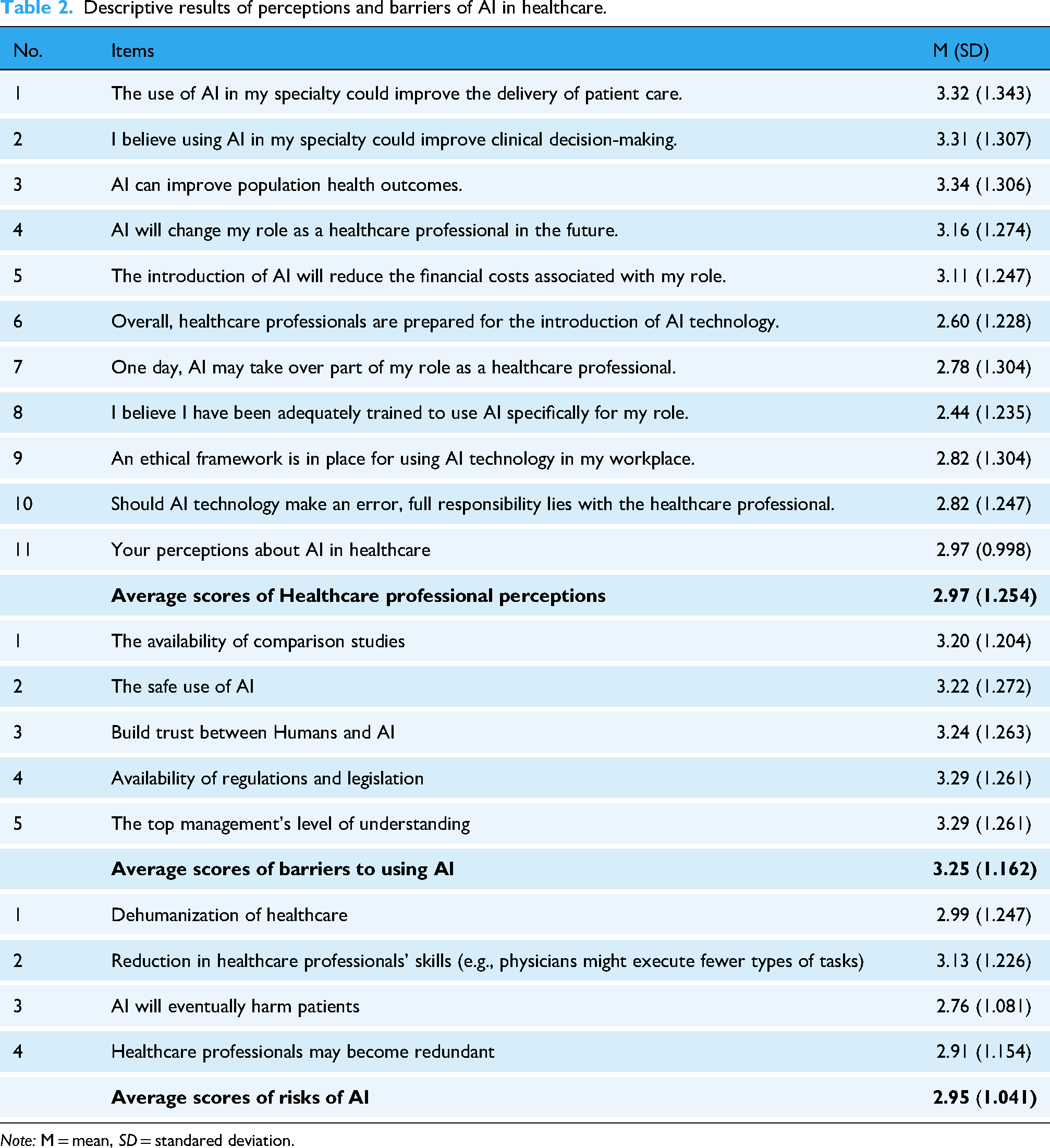

The category “Perceptions about AI in Healthcare” consists of 10 items and showed strong internal consistency, with a Cronbach's alpha of 0.928. The barriers and risks sections were also reliable, with Cronbach's alpha values of 0.960 and 0.906 for item sets of 5 and 4 items, respectively. The data suggest a modest degree of optimism about incorporating AI in healthcare. Respondents generally believed that AI could enhance patient care (M = 3.32, SD = 1.343), clinical decision-making (M = 3.31, SD = 1.307), and population health outcomes (M = 3.34, SD = 1.306), indicating a positive outlook on AI's potential benefits.

However, there were notable concerns about readiness and training for AI integration. The lowest mean scores were for the belief in having received sufficient AI-specific training (M = 2.44, SD = 1.235) and the perception that healthcare professionals are prepared for AI technology (M = 2.60, SD = 1.228). These scores reflect significant doubts about current training and preparedness. Ethical concerns and responsibility for AI errors also emerged as areas of caution, with mean scores of (M = 2.82, SD = 1.304) for both the ethical framework and responsibility for AI mistakes. This highlights the need for clearer ethical guidelines and accountability measures. Overall, the collective mean score was (M = 2.97, SD = 1.254), reflecting a neutral overall stance toward AI in healthcare.

While there is recognition of AI's transformative potential in healthcare, the findings underscore the necessity for improved education, readiness, and explicit ethical standards that address healthcare professionals’ concerns and optimize AI's benefits.

Regarding perceived barriers to adopting AI in healthcare, healthcare professionals expressed considerable concern across key areas, with mean scores of about 3.2 (SD = 1.204), indicating significant but manageable obstacles. The need for comparative studies (M = 3.20, SD = 1.272) underscores the necessity for research validating AI's effectiveness. Safety (M = 3.22, SD = 1.272) and trust establishment (M = 3.24, SD = 1.263) were also highlighted, emphasizing the importance of robust regulations and of building trust between humans and AI systems. Issues with rules and legislation (M = 3.29, SD = 1.261) and management understanding (M = 3.29, SD = 1.261) further indicate the need for comprehensive regulatory frameworks and improved AI literacy among managers. Although there is broad agreement on these obstacles, some variance in perspectives exists, likely influenced by individual experiences. Collaborative efforts in research, regulation, education, and management strategies are crucial to address these obstacles effectively and facilitate AI integration in healthcare, leading to improved patient outcomes and operational efficiency.

Regarding the risks associated with integrating AI in healthcare, mean scores ranged from 2.76 to 3.13, indicating considerable concern but not excessive worry. Concerns about the “Dehumanization of Healthcare” (M = 2.99, SD = 1.247) highlight worries about the potential loss of human connection and compassion. Apprehension was highest about AI potentially diminishing healthcare professionals’ proficiency (M = 3.13, SD = 1.226), suggesting concerns about AI's impact on practical skills and decision-making abilities. Worries about AI causing harm to patients had the lowest mean score (M = 2.76, SD = 1.081), indicating cautious optimism about AI's ability to enhance patient outcomes with careful application and oversight (Table 2).

Table 2. Descriptive results of perceptions and barriers of AI in healthcare.

Note: M = mean, SD = standard deviation.

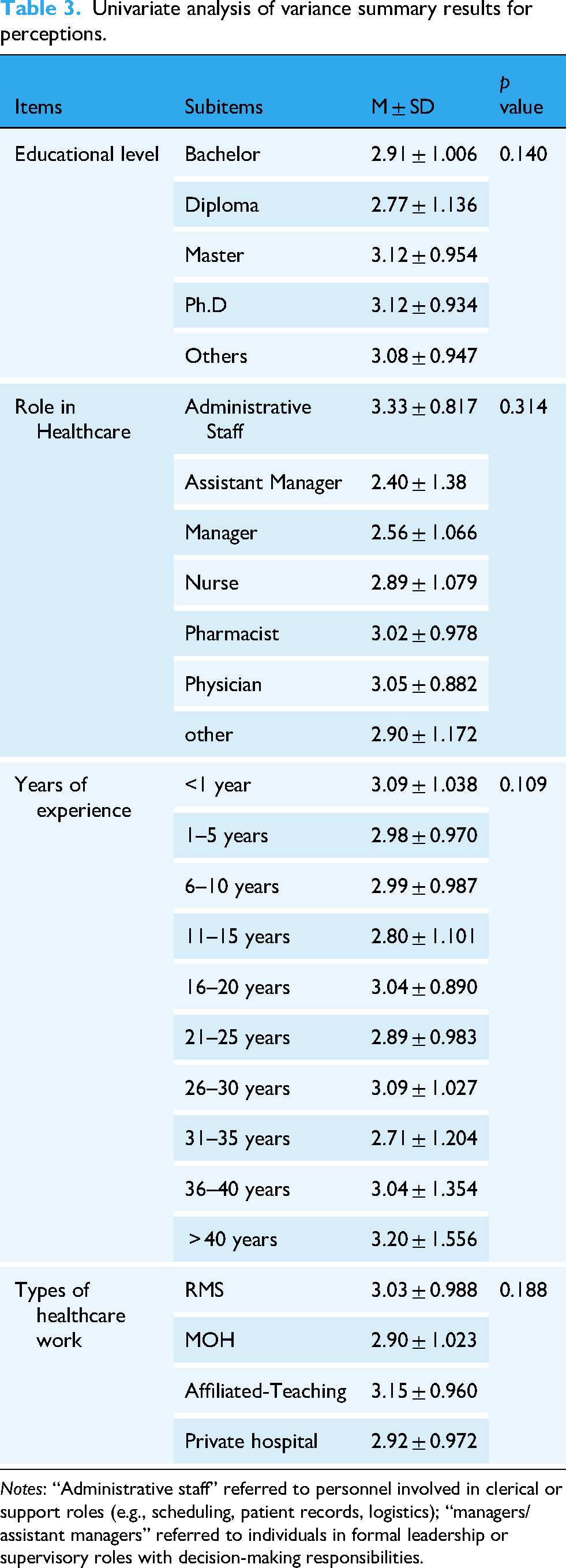

Mean differences in AI perceptions by demographic factors

The results in Table 3 highlight variations in AI-related perceptions across demographic and professional categories among healthcare professionals. Respondents with higher educational levels (master's and PhD) reported slightly more positive perceptions of AI (M = 3.12) than those with bachelor's degrees (M = 2.91) or diplomas (M = 2.77).

Table 3. Univariate analysis of variance summary results for perceptions.

Notes: “Administrative staff” referred to personnel involved in clerical or support roles (e.g., scheduling, patient records, logistics); “managers/assistant managers” referred to individuals in formal leadership or supervisory roles with decision-making responsibilities.

However, the lack of statistical significance suggests that education level alone does not strongly differentiate AI perceptions. Administrative staff reported the highest AI perception scores (M = 3.33), possibly due to their focus on system-level efficiency and AI's potential in streamlining operations, while assistant managers and managers had the lowest scores (M = 2.40 and 2.56, respectively), which might indicate concerns about AI disrupting workflows or increasing managerial complexity. Additionally, physicians (M = 3.05) and pharmacists (M = 3.02) had slightly more positive views than nurses (M = 2.89), a numerical but not statistically significant difference in AI acceptance across professions. Given the high variation, a deeper multivariate analysis could clarify whether these role-based differences persist when controlling for other factors (e.g., education, experience).

The least experienced professionals (<1 year, M = 3.09) and the most experienced professionals (>40 years, M = 3.20) had more positive AI perceptions, whereas professionals in the intermediate experience groups (11–15 years, M = 2.80; 31–35 years, M = 2.71) reported lower AI perception scores. This U-shaped trend may indicate that early-career professionals are more open to AI due to their tech-savviness, while late-career professionals may perceive AI as a valuable tool rather than a job threat. Midcareer professionals, in contrast, may have concerns about AI disrupting their established workflows. Finally, affiliated teaching hospitals had the highest AI perception scores (M = 3.15), possibly due to greater exposure to research and innovation. Ministry of Health employees (M = 2.90) and private hospital staff (M = 2.92) reported slightly lower AI perceptions, suggesting that implementation challenges in nonacademic settings might contribute to skepticism.

An analysis of variance test was run to compare perceived barriers across demographic variables. Individuals with master's and PhD degrees had the highest scores (M = 3.34 ± 1.071 and M = 3.32 ± 1.020, respectively). This may reflect greater knowledge and critical thinking skills for assessing the factors that can impede the use of AI in healthcare. However, the differences between the groups were not significant (p = 0.138). Assistant managers and administrative staff had a high mean score for barriers to AI in healthcare (M = 4.2 ± 3.73), which is perhaps not surprising given their prominent roles in operational management, implementation monitoring, risk management, and change management initiatives within healthcare organizations. The difference between professional groups was significant (p = 0.021). Respondents with fewer years of experience had higher mean scores (<1 year, M = 3.23; 1–5 years, M = 3.31) than respondents with more years of experience (36–40 years, M = 2.8; over 41 years, M = 2.9). However, the differences among these groups were not statistically significant (p = 0.826).

Respondents from the Jordanian Royal Medical Services had the highest barrier score (M = 3.49 ± 1.048) and those from private hospitals had the lowest (M = 3.11 ± 1.160), but the difference was not statistically significant (p = 0.099). Individuals who had never attended a course on AI had the highest mean score for barriers to AI in healthcare (M = 3.38 ± 1.095). These respondents may feel overwhelmed by technology due to their lack of knowledge, exposure, and understanding. Attending AI courses had a statistically significant effect on perceived barriers (p < 0.01) (Table 4).

Table 4. Univariate analysis of variance summary results for barriers.

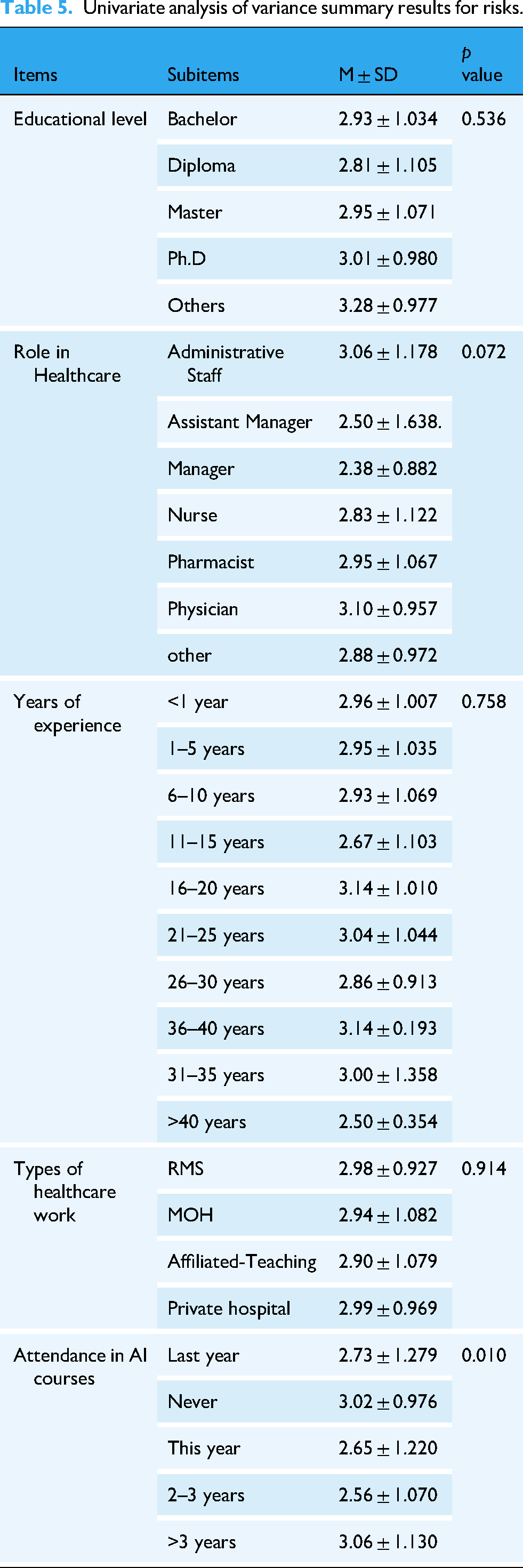

Regarding perceived risks, the ANOVA test showed that master's and PhD holders had the highest mean scores (M = 2.95 ± 1.071 and M = 3.01 ± 0.980, respectively). Among professions, physicians had the highest score (M = 3.10 ± 0.957). This suggests that physicians may perceive risks related to patient safety, including errors or incorrect information in therapy recommendations and diagnoses made by AI. They may also be concerned with the legal and ethical implications of AI, such as potential bias in algorithms, liability problems, and privacy violations. The difference between groups was not statistically significant (p = 0.072).

Respondents with 31–35 years of experience had the highest mean score (M = 3.14 ± 0.193), but the differences between the experience groups were not significant (p = 0.758). Individuals from the Jordanian Royal Medical Services and university teaching hospitals had the highest scores (M = 2.98 ± 0.927 and M = 2.99 ± 0.969, respectively) (Table 5).

Table 5. Univariate analysis of variance summary results for risks.

Discussion

The study shows that healthcare professionals in Jordan have mixed perceptions of AI integration in the medical sector, combining some optimism with concerns about potential replacement. They nonetheless believe AI can improve workflow, population health outcomes, and clinical decision-making. These findings are consistent with a study by Abdullah and Fakieh, 35 who found that overall perceptions toward AI were moderate and that most respondents were concerned that they might be replaced by AI. The current study is also consistent with a study by Catalina et al., 36 who found that respondents considered AI to be efficacious in improving workflow, population health outcomes, and clinical decision-making. These findings provide an extensive overview of the variables affecting healthcare professionals’ perceptions of the use of AI in the Jordanian healthcare system.

Additionally, this study revealed that healthcare professionals had modest optimism regarding AI's ability to reduce costs. This aligns with studies that examined AI's potential for cost savings and how it affects AI acceptance.37,38 The results also suggest that healthcare professionals think that AI will take over their jobs in the near future, consistent with a previous study by Ogolodom et al. 39 that reported respondents’ concern that their jobs would be taken over by AI.

Regarding the barriers to utilizing AI, this study showed that healthcare professionals perceived considerable barriers to using AI in their clinical practice. This level suggests that the barriers are regarded as significant but manageable. The availability of laws and regulations and the degree of understanding from senior management received the highest mean scores. This is in line with a prior study by Joshi et al. 40 that found that senior management's lack of commitment and ignorance of the legal implications of AI are the leading causes of implementation barriers. It suggests that legislative developments and senior management support are required to improve AI implementation.

Our study also brings to light trust issues among healthcare professionals, consistent with previous studies41–43 that found participants mistrusted how their personal information would be gathered and managed. Additionally, healthcare professionals have less trust in AI to protect patients’ safety while executing its task, particularly when it comes to giving correct information on uncommon disorders or unexpected events. Targeted actions are necessary to address specific barriers to the implementation of AI in healthcare. For AI to be implemented successfully, trust is critical. Creating trust requires demonstrating AI's dependability and security in delivering correct information, together with clear data privacy and security measures. Creating a culture of trust among healthcare professionals can help eliminate barriers and make AI easier to adopt in healthcare.

Additionally, this study revealed a concern that AI, by carrying out only a limited range of tasks, can reduce the performance skills and decision-making abilities of healthcare professionals. This is in line with a study by Van Rooij et al. 44 who reported that new technology may affect the development of skills by limiting the chance to practice specific procedures and lowering clinical expertise. As a result, a strong foundation for multidisciplinary collaboration and teamwork is required, in addition to significant improvements to clinical training and education. Our study also found that the dehumanization of healthcare is a relatively significant perceived risk of AI implementation. This is consistent with a study by Minerva and Giubilini 45 who argue that human connections, such as trust and empathy, are seen as fundamental components of healthcare delivery. The human element of the therapist–patient relationship would therefore be reduced with AI. This emphasizes the necessity of finding a balance between advancing technology and prioritizing patients’ needs. Our finding that professionals perceive AI as potentially harmful to patients is consistent with a previous study by Richardson et al. 46 that drew attention to the potential harm that might arise from errors made by healthcare AI developed using incorrect data. The study's conclusions cast doubt on the idea that AI's narrow scope of applications will significantly improve healthcare professionals’ performance and ability to make decisions. Instead, fears about possible adverse effects, such as dehumanization and patient harm, highlight how crucial it is to use AI technology carefully and assess it continuously in the healthcare industry. This necessitates a thorough strategy that addresses and reduces the related risks while considering the possible advantages, to ensure the ethical and beneficial integration of AI in healthcare procedures.

Demographic characteristics such as sex may influence how AI is seen and embraced. Studies have found that sex affects technology adoption, with males and females exhibiting differing degrees of comfort or skepticism toward new technologies. However, in our study, male and female healthcare professionals had comparable perceptions. This is consistent with the findings of Syed et al. 47 which showed no statistically significant difference in the respondents’ perceptions of AI. In addition, healthcare professionals with higher educational attainment, such as those with master's or doctorate degrees, may have a better grasp and, hence, a more favorable impression of AI. They may also be more aware of possible risks, as has been suggested. In our study, healthcare professionals who held a PhD had the most optimistic score. However, there were no statistically significant differences in respondents’ answers by educational level.

The study by Abdullah and Fakieh 35 suggests that different healthcare professionals have different opinions and experiences with AI, with physicians and nurses having different perspectives. Administrative professionals may be more concerned with operational issues, while physicians are more concerned with clinical risks. In our study, administrative staff had the most optimistic score, but the difference between positions was not statistically significant. Future research could complement these findings by using mixed-method approaches, behavioral data analysis, or longitudinal studies to better understand the relationship between perception and actual AI adoption in healthcare.

Strengths and limitations

This study is novel in that few studies have examined the perception of AI in the Jordanian healthcare sector. However, the study used convenience sampling and a cross-sectional design, is subject to potential response bias, and relied on self-reported data, all of which may affect the robustness of the conclusions. In addition, the data were collected from hospitals located in the northern regions of Jordan and the capital, Amman. While this sampling provided valuable insights into perceptions of AI among professionals in these areas, it may not fully capture the perspectives of practitioners in rural regions or those working in private clinics. To address this limitation, future research should aim to include a more diverse and representative sample by expanding recruitment to southern and rural areas, as well as private healthcare settings, to enhance the generalizability of the findings to all Jordanian healthcare professionals. Furthermore, this study employed an online survey due to cost and time constraints, even though mixed-method research is better suited to studies in healthcare. Finally, the demographic distribution in our sample, particularly the predominance of younger healthcare professionals, may not fully reflect the broader healthcare workforce in Jordan. While the sample includes a range of healthcare roles, the age and professional distribution may limit generalizability.

Conclusion

Healthcare professionals in Jordan generally expressed optimistic perceptions of AI integration in the healthcare sector. This indicates a growing awareness and suggests a need for more structured training and educational programs to increase exposure to, and understanding of, AI applications in healthcare. Additionally, significant perceived barriers to AI adoption were identified, including regulatory issues, a lack of trust, and concerns about the safe use of AI. These barriers highlight the need for more transparent policies, better communication, and more robust frameworks for AI implementation in healthcare. There is also an awareness of potential risks associated with AI use, such as the dehumanization of care and reduced skill sets among healthcare professionals. Mitigating these risks involves careful planning, ethical considerations, and continuous training. Deeper demographic comparisons could be a valuable direction for future research focused on representativeness or workforce dynamics.

Footnotes

Acknowledgements

The authors thank all the participants in the current study.

Ethical considerations

Ethical approval was obtained from the Institutional Review Board (IRB) of Jordan University of Science and Technology (JUST) (Ref: 25/160/2023, date: 17/05/2023) and from the IRB of the Ministry of Health (MOH) (Ref: 8409, date: 17/7/2023). In the private sector, permission from the owner of the dental clinic or center was taken as ethical approval for data collection.

Contributorship

AD: conceptualization and formal analysis; RMOM: data curation and writing the original draft; AA: interpretation of results and methodology; MAL: supervision; SBH: methodology, writing the final draft, and editing.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability

Data will be available upon request from the corresponding author.