Abstract

Objective

The integration of Artificial Intelligence (AI) in healthcare offers opportunities to transform patient care, improve efficiency, and support clinical decision-making. Yet, its implementation is hindered by technical, organizational, and collaborative challenges. This study explores the key success factors for sustainable AI adoption from the perspectives of healthcare professionals (HCPs) and AI experts, with the aim of identifying alignments and gaps that influence collaboration and sustainability.

Methods

Semi-structured interviews, guided by the Nonadoption, Abandonment, Scale-up, Spread, and Sustainability (NASSS) framework, were conducted with four HCPs and five AI experts with experience of AI technologies in healthcare. Thematic analysis was applied to examine stakeholder perspectives, focusing on the gaps and alignments between the two groups and on identifying common patterns.

Results

Transparency, as the basis for trust, was the factor most emphasized by both AI experts and HCPs. The two groups were also aligned in recognizing the importance of interorganizational cooperation, demand-side value, scope of use, usability, responsibility, and the redefinition of professional roles. Gaps included challenges in cooperation with HCPs, misunderstandings between stakeholders and the need for interdisciplinary experts, insufficient training, concerns over data quality and privacy, and limited attention to usability. Based on the identified patterns, a framework is proposed to strengthen collaboration between AI experts and HCPs, enabling effective and sustainable AI implementation in healthcare.

Conclusion

This study extends the NASSS framework to a dual-stakeholder context, offering insights into the alignments and gaps between HCPs and AI experts. The proposed framework supports improved collaboration, guiding healthcare professionals, AI developers, and managers toward effective, sustainable AI implementation and fostering mutual understanding for successful adoption.

Keywords

Introduction

Health systems globally are facing challenges such as workforce shortages in patient care, rising costs, inadequate infrastructure, limited access to services, aging populations, and the emergence of new disease strains.1–4 The COVID-19 pandemic further exposed these weaknesses, revealing resource shortages, poor information sharing, deficiencies in diagnostic testing, and excessive workloads for frontline healthcare workers. Consequently, the growing demand for high-quality care must be balanced against the ongoing shortage of personnel and resources in the healthcare sector. 2 In light of these challenges, artificial intelligence (AI) offers significant potential for the healthcare industry, particularly as a means of mitigating the shortage of healthcare professionals while accommodating a growing patient population.2,4 For example, Gilson et al. 5 found that ChatGPT, which uses natural language processing, can answer medical questions with a proficiency comparable to that of a third-year medical student in the United States.

AI has introduced a range of transformative applications that support and improve healthcare services, and its use in the sector is expected to grow as healthcare data become increasingly complex and voluminous.4,6,7 Research indicates strong demand within the healthcare sector, with 86% of healthcare providers, technology companies, and life sciences companies using AI. AI applications are increasingly used in clinical trials (22.7%), while radiology accounts for the largest share of therapeutic-area support (75%). 8

However, fully realizing the benefits of AI in healthcare requires effective implementation, which means addressing several major challenges. This process involves not only retraining the workforce and reorganizing health services but also navigating various legal, ethical, and social issues. 9 AI and digital technologies have been found to exert a moderate to substantial influence on 13 health-related sub-goals and on six other UN Sustainable Development Goals (SDGs). This underlines their pivotal role in advancing the SDGs, particularly SDG 3 on health, which aims to ensure universal health coverage and improve global well-being, with a special focus on developing countries.10–12 Implementing AI tools and ensuring their sustainable use is a complex process, as such technologies often encounter skepticism stemming from concerns over algorithmic and data bias, limited clinical involvement in product development, safety and risk issues, liability, legal and ethical considerations, and potential effects on professional roles.13–15

Therefore, the implementation of AI in healthcare demands thorough planning and structured organization to ensure quality, safety, and acceptance. 16 In addition, interdisciplinary collaboration among stakeholders, such as medical practitioners, academic instructors, computer scientists, health informatics professionals, and other relevant experts, should be promoted throughout the development and deployment of AI technologies. 17 Moreover, multiple studies have underscored the essential role of AI specialists and medical professionals in implementation efforts, stressing the importance of their collaborative engagement.1,13,18

Building on literature that underscores the significance of stakeholder collaboration and the need for supportive frameworks and regulations, this study addresses the scarcity of research on cooperation between healthcare professionals (HCPs) and AI experts. Although these groups work closely together, their differing viewpoints, especially regarding collaboration in implementing AI technologies in healthcare, remain poorly understood, which constitutes a significant research gap.

This study, an extended version of previous research, 19 addresses this gap by examining, through a qualitative research approach, how success factors align between healthcare professionals and AI experts in implementing AI within healthcare. The aim is to deliver practical insights to the healthcare sector by identifying success factors that should be preserved and pinpointing gaps that require attention. Additionally, the research aims to support the creation of a framework for managing interactions between HCPs and AI experts, with particular emphasis on Finland. The Nordic countries are widely regarded as leaders in digital health technologies, with national implementation guided for many years by sector-specific strategies, and Finland stands out as particularly ambitious in its aim to transform its health system through digitalization. 20

The study addresses one main question, divided into two sub-questions:
• What are the key success factors from the viewpoints of healthcare professionals and AI experts?
• What gaps exist between HCPs and AI experts concerning these success factors?

Answering these research questions has both theoretical and practical implications. Theoretically, the study extends the NASSS framework by applying it to collaboration between stakeholders during the implementation process, rather than examining its domains separately or analyzing stakeholders only within specific domains. Practically, answering these questions highlights the gaps between stakeholder groups that decision makers and policymakers should prioritize during the implementation of AI technologies in healthcare, as well as the areas of alignment that should be reinforced to ensure smooth implementation.

Literature

Challenges and success factors of AI implementation in healthcare

The growing number of digital health applications, rising expectations for progress in medical, social, and economic fields, and the adoption of digital health practices accelerated by COVID-19 have created a need to identify the key elements of successful AI deployment. 21 Although technologies are commonly viewed as a means to improve care by increasing safety and efficiency, in practice such projects often fail to deliver the full set of expected benefits. 22

According to the literature, the gap between the potential and actual impact of AI technologies in medical settings may be attributed to the historical focus on technology development, market entry, and commercialization. The divide between research and real-world application largely arises from the challenges of implementing AI solutions, which demand careful consideration of the specific conditions and factors necessary for effective integration into routine clinical practice.23,24

The challenges surrounding AI implementation are global in nature and have prevented AI from achieving widespread adoption in healthcare practice. 24 AI implementation in healthcare can be described as a multifaceted socio-technical initiative: its success in clinical settings depends on more than technical performance and involves diverse stakeholders, including technology creators, regulatory agencies, healthcare organizations, professionals, patients, and care providers. This complexity places AI toward the more challenging end of the spectrum compared with the traditional measures explored in implementation science.25,26

Several studies have examined the challenges and success factors linked to implementing AI technologies in the healthcare sector. One key challenge involves data-related issues, such as gaining access to large volumes of data, inconsistencies in healthcare data, and concerns regarding data privacy and confidentiality.24,27,28 Another challenge highlighted in several studies concerns legal issues and regulatory frameworks, including compliance with established guidelines, gaps in existing policy structures, regulatory questions about accountability for errors in AI-assisted decision-making, and technology-related difficulties.21,24,27–29 Furthermore, the literature highlights concerns related to stakeholders in the implementation process, including the need for active engagement of all parties involved and the varying attitudes toward adopting AI in healthcare.24,25,27 Past research has also identified technology and assessment-related challenges, including the need for seamless integration into complex clinical workflows, issues with health system infrastructure, the lack of sufficient medical and economic impact evaluations, and differing opinions on how to assess the value of AI.21,24,27,29 Some studies have also pointed out research-related challenges in this field, such as the scarcity of real-world evidence and inadequate funding mechanisms, along with the need for further studies to produce insights essential for developing implementation frameworks that can support the future deployment of AI in clinical settings.25,29

In their comprehensive literature review, Wubineh et al. 30 categorize the challenges of AI implementation in healthcare into four main themes: (1) moral and confidentiality concerns, primarily related to data usage and informed consent; (2) lack of awareness about AI applications, referring to insufficient knowledge that can lead to unrealistic expectations; (3) concerns about the reliability and credibility of AI technologies, focusing on the lack of transparency in algorithms and inaccurate predictions; and (4) healthcare professionals and responsibility, highlighting the need for training and for clarifying the responsibilities of healthcare professionals in using AI and interpreting its results. The specific barriers under each theme are illustrated in Figure 1.

Figure 1. Challenges of healthcare AI implementation reported by Wubineh et al.

NASSS framework and AI technologies in healthcare

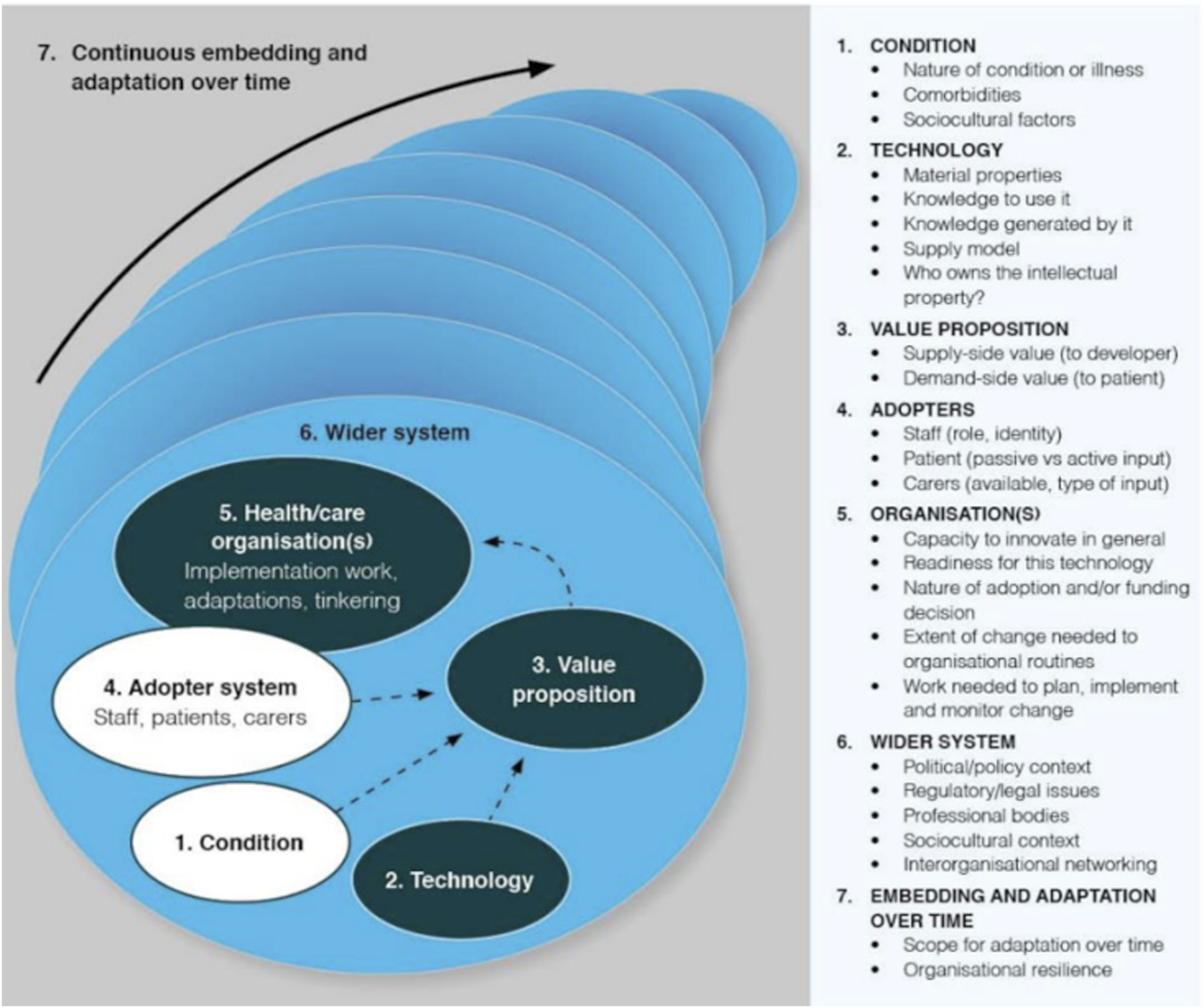

The Nonadoption, Abandonment, Scale-up, Spread, and Sustainability (NASSS) framework was proposed by Greenhalgh et al. 31 as a practical, evidence-based framework to help predict and evaluate the success of technology-supported health or social care programs. Developed through a literature review and case studies, the NASSS framework covers seven key domains: the Condition, which covers clinical, comorbidity, and sociocultural factors affecting patient suitability; Technology, which examines ease of use, reliability, data impact, and sustainability risks; Value Proposition, which weighs business viability against patient benefits; Adopter System, which focuses on acceptance by staff, patients, and caregivers and the factors influencing their support; Organization(s), which looks at readiness, resources, and coordination for adoption; Wider Context, which considers policy, regulatory, and financial influences; and Embedding and Adaptation Over Time, which stresses the importance of continuous evaluation and flexibility for long-term success. 31

Greenhalgh and Abimbola 22 expanded the 2017 version of the NASSS framework by adding new subdomains. The Condition domain gained a “Sociocultural factors” subdomain to capture cultural and societal influences on use. The Technology domain added “Who owns the intellectual property”, emphasizing issues of data and technology ownership. In the Wider Context domain, “Interorganizational networking” was introduced to address the collaborative relationships between organizations involved in implementation. The updated version of the framework is shown in Figure 2.

Figure 2. The NASSS framework, developed by Greenhalgh and Abimbola (2019), adapted from Greenhalgh et al. (2017).

The NASSS framework was systematically developed to address gaps in the literature concerning technology implementation. It focuses not only on adoption but also on nonadoption and abandonment, as well as the challenges in transitioning from a local pilot project to full-scale integration (scale-up), expanding to other settings (spread) and ensuring long-term sustainability through ongoing adaptation to the changing context.

While the NASSS framework was not originally developed for AI, it has proven useful for guiding AI implementation in healthcare, and several studies have applied it successfully in this context. Reddy 32 showed that assessing the NASSS elements reveals key barriers and facilitators to adopting generative AI and provides an effective lens for analyzing complex adaptive systems. Hogg et al. 18 also identified NASSS as the most suitable tool for synthesizing evidence in their systematic review of AI implementation. Nair et al. 33 reported that factors influencing adoption of an AI-based clinical decision support system align well with its seven domains. Strohm et al. 34 also used NASSS in a qualitative study on clinical radiology, comparing interview data with the framework and creating a modified version that retained the main domains while tailoring subdomains to the study context. Importantly, NASSS also incorporates sustainability in implementation, an increasingly critical issue as AI adoption grows, with the aim of maximizing benefits while supporting environmental preservation.10,31,35

Regarding the implementation of AI technologies in healthcare with a focus on stakeholder collaboration, the NASSS framework is better suited than other frameworks. Compared with the Technology Acceptance Model (TAM) 36 and its extension, the Unified Theory of Acceptance and Use of Technology (UTAUT), 37 NASSS considers different stakeholders in the domains of

The Practical, Robust Implementation and Sustainability Model (PRISM) 38 incorporates the RE-AIM evaluation framework. 39 PRISM integrates elements of program design, predictors of implementation and diffusion, and outcome measures, with an emphasis on sustainability and stakeholder engagement. However, unlike NASSS, it is not tailored to healthcare technologies; it covers interventions broadly and lacks technology-specific elements. The Consolidated Framework for Implementation Research (CFIR), developed by Damschroder et al., 40 also identifies factors influencing implementation in healthcare. Yet, although it includes stakeholder perspectives, it is not dedicated to healthcare technology and, like PRISM, omits technology-specific considerations.

Methodology

Theoretical framework

The theoretical framework of this research is grounded in the NASSS framework, with a focus on selecting the domains, subdomains, and themes most relevant to HCPs, AI experts, and their collaboration. This selection is primarily guided by the application of the NASSS framework in Hogg et al. 18 and their findings on factors considered important by both HCPs and AI experts, or by either group individually, complemented by insights from other relevant literature.

The study by Hogg et al. 18 was selected as the basis for this research because it offers one of the most comprehensive analyses of clinical AI implementation in recent years. Through a systematic review of 111 qualitative studies (2014–2021), it consolidated the perspectives of key stakeholders, including HCPs, patients, developers, healthcare leaders, and policymakers. The study aimed to identify factors influencing AI adoption in healthcare and to highlight evidence gaps, using the NASSS framework as its foundation. Importantly, it extended the framework by introducing two subdomains: Care pathway positioning (Technology domain), which considers tool independence, interaction timing, and response context; and Relationships (Adopters domain), which examines how AI affects trust, communication, and collaboration among HCPs and their relationship with patients.

Theoretical framework for identifying key success factors in AI implementation in healthcare.

Data collection

This study follows a qualitative research methodology, consistent with previous studies that employed semi-structured interviews with stakeholders to identify key success factors for AI implementation in healthcare.27–29,33,41 The semi-structured interviews in this study were conducted with HCPs and AI experts in Oulu, Finland, from October to December 2024.

The questions were derived from the domains and subdomains of the NASSS framework selected on the basis of the literature (Appendix 1). They were designed to be general and exploratory to avoid biasing interviewees’ responses regarding each subdomain. The questionnaire was not based on a previously validated instrument; however, it was reviewed by an AI expert with experience in healthcare AI implementation to ensure its clarity, its relevance to the roles of interviewees in the two studied groups, and its alignment with the intended subdomains and themes.

Purposive sampling was used to select participants with relevant expertise, as it targets respondents most likely to provide valuable insights. 42 The inclusion criteria were as follows: healthcare professionals had to be doctors with experience in using or implementing AI in healthcare, and AI experts had to be developers, or their supervisors, involved in creating and applying AI tools in this field.

The interviews were conducted separately, with participants answering approximately 13–15 questions tailored to their expertise and guided by the study’s theoretical framework. In addition, an open-ended question was included to capture other potentially relevant factors, allowing for the identification of overlooked subdomains or the emergence of new subdomains and themes.

In total, nine interviews were conducted with participants based in Finland: four HCPs and five AI experts. 43 Given the demanding schedules of many interviewees, particularly HCPs, both online and email formats were used. One HCP and three AI experts took part in live online interviews lasting from 30 minutes to one hour, while the others completed email interviews. The interviewer had no prior relationship with any participant; however, the email invitations included information about the interviewer’s current education and role, the research goals, and the importance of the study.

For online interviews, questions were sent in advance, and for email interviews, participants were asked to return their responses within one week. This approach allowed all interviewees, regardless of format, to reflect on the topics and provide more considered and comprehensive responses. In addition, no follow-up questions based on interviewees’ answers were asked in the online interviews, which kept the interview procedure comparable across both formats.

In online interviews, informed consent was obtained verbally at the beginning of the interview: the interviewer read the privacy notice, and the interview proceeded only if the interviewee agreed. In email interviews, the privacy notice was provided at the beginning of the interview, and the interviewee was asked to continue only if they agreed to the terms. This procedure followed the publicly available guidelines of the University of Oulu Human Sciences Ethics Committee, in line with the national TENK guidelines, 44 which emphasize informed and voluntary consent rather than a specific format and allow verbal or electronic consent.

For both online and email interviews, participants were informed in advance about the ethical considerations, including the use and analysis of data, procedures for anonymization, and data retention. Informed consent was obtained before each interview. The online interviews were audio-recorded with participants’ consent and later transcribed using Microsoft Teams’ automated tool. Field notes were taken during and shortly after each interview. These notes included the interviewer’s reflections on emerging themes and were reviewed during data analysis to assess their relevance and consistency with the interview data. All transcripts were carefully checked against the audio recordings to correct any transcription errors and ensure accuracy. Details of the participants and interviews are provided in Appendix 2.

Demographic data such as age, gender, and education were not collected. In line with the General Data Protection Regulation (GDPR), personal data must be “adequate, relevant, and limited to what is necessary in relation to the purposes for which they are processed” (Article 5(1)(c)). 45 As these demographic data were not required to address the study goals and were not included in the theoretical framework, they were excluded to protect participant anonymity and privacy. In addition, given the small sample size, collecting demographic data would not provide meaningful analytical insights. Instead, participants’ current professional roles and relevant experience were collected as background information to support the interpretation of perspectives.

Data analysis

Data collection, primary coding, and analysis were conducted by one author (ZYD), a doctoral researcher in Business Analytics with an interdisciplinary background in electrical engineering, business administration, and analytics, which may have shaped the analytical focus on the technological and organizational aspects of AI implementation. To enhance analytical rigor and reduce potential bias, the analysis was reviewed and discussed with the supervising author (TK), an associate professor at the University of Oulu specializing in business analytics and digital service business with a strong background in digital health, who provided independent oversight and critical feedback throughout the research process.

This study employed thematic analysis to examine the qualitative interview data. Recommended by Braun and Clarke 46 as a foundational qualitative method, it helps develop essential analytical skills. Thematic analysis seeks to identify meaningful patterns or themes that address research questions and provide deeper insights, going beyond description to interpret and explain the data. 47

This study applies an abductive approach to thematic analysis. Abductive methodologies, as noted by Nenonen et al., 48 enhance research quality by producing concepts that are practical, understandable, and applicable to real-world contexts. Thompson 49 further provided a structured guide for abductive thematic analysis, positioning it between inductive and deductive approaches by combining empirical data with theoretical frameworks. In this research, the NASSS framework was used to guide predefined subdomains and themes, while also allowing for the identification of new factors that could extend the framework. Following Thompson, 49 this approach uses existing theory as a lens while staying open to novel insights, thereby avoiding arbitrary conclusions and exposing gaps where current frameworks may not fully explain empirical findings.

The qualitative data in this study were analyzed using thematic analysis with the aid of MAXQDA software. The choice of this software was informed by Weinert et al., 41 who also employed it to explore stakeholder perspectives on AI implementation in hospitals. The analysis was conducted by one analyst and subsequently checked and validated by another. To identify both gaps and alignments between the two stakeholder groups, HCPs and AI experts, the analysis was first conducted separately for each group and later combined to propose a framework aimed at improving collaboration between them.

Each interview transcript was initially read in full to gain an overall understanding of the participants’ views. Transcripts were then coded in MAXQDA using predefined codes derived from the NASSS framework, while additional inductive codes emerging from the data were incorporated into the coding scheme for use in subsequent transcripts. Once coding was completed, earlier transcripts were revisited to determine whether the newly added inductive codes applied. Inductive codes generated during the process were either mapped to existing NASSS subdomains where applicable or incorporated into the framework as new themes. The final analysis for each stakeholder group was structured around the NASSS framework’s subdomains and the themes identified in this study.

In addition, memos were created for each transcript to capture key insights and participant reflections, which supported the identification of patterns in the data. These emerging patterns were then examined across all participants and integrated into a final list, which led to the design of a framework in this study.
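The two-pass coding procedure described above, in which inductive codes added during coding are applied retroactively to earlier transcripts and then either mapped to NASSS subdomains or kept as new themes, can be sketched in Python. This is a hypothetical illustration under stated assumptions, not the study's actual workflow: the coding was performed interpretively in MAXQDA, whereas the sketch substitutes simple keyword matching for the analyst's judgment, and all function names, code labels, keywords, and transcript texts are invented (the labels merely echo themes named in this paper).

```python
from dataclasses import dataclass, field

# HYPOTHETICAL sketch: real coding in the study was interpretive work by an
# analyst in MAXQDA. Keyword matching stands in for that judgment here so the
# two-pass logic can be run; all labels, keywords, and texts are invented.


@dataclass
class Codebook:
    keywords: dict = field(default_factory=dict)  # code label -> set of trigger keywords
    origin: dict = field(default_factory=dict)    # code label -> "deductive" or "inductive"

    def add(self, label, keywords, origin):
        self.keywords[label] = set(keywords)
        self.origin[label] = origin


def apply_codes(text, codebook):
    """Return every code label whose keywords occur in the (lower-cased) text."""
    lowered = text.lower()
    return {label for label, kws in codebook.keywords.items()
            if any(k in lowered for k in kws)}


def abductive_coding(transcripts, codebook, propose_inductive):
    """Pass 1 codes transcripts in order, growing the codebook with inductive codes;
    pass 2 revisits all transcripts with the final codebook."""
    first_pass = {}
    for tid, text in transcripts.items():
        for label, kws in propose_inductive(text):
            if label not in codebook.keywords:
                codebook.add(label, kws, "inductive")
        first_pass[tid] = apply_codes(text, codebook)
    final = {tid: apply_codes(text, codebook) for tid, text in transcripts.items()}
    return first_pass, final


def map_inductive(codebook, subdomain_map):
    """Map each inductive code to an existing NASSS subdomain, else flag it as a new theme."""
    return {label: subdomain_map.get(label, "new theme")
            for label, src in codebook.origin.items() if src == "inductive"}


# Usage with invented data: two predefined (deductive) codes from NASSS themes.
codebook = Codebook()
codebook.add("deciding who is responsible", {"responsib"}, "deductive")
codebook.add("agreeing the scope of use", {"scope"}, "deductive")


def toy_proposer(text):
    # Hypothetical rule: a mention of "value" suggests a new "perceived value" code.
    return [("perceived value", {"value", "benefit"})] if "value" in text.lower() else []


transcripts = {
    "T1": "The benefit must be clear to clinicians.",
    "T2": "Users must see the value of the tool.",
    "T3": "Final responsibility stays with the physician.",
}
first_pass, final = abductive_coding(transcripts, codebook, toy_proposer)
mapping = map_inductive(codebook, {"perceived value": "Demand-side value"})
print(first_pass["T1"], final["T1"], mapping)
```

In this invented example, transcript T1 receives the inductive code only in the second pass, because that code first emerged while coding T2; this mirrors why earlier transcripts were revisited once coding was complete.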

Results

The following section presents the data analysis structured around the domains and subdomains of the NASSS framework.

Domain: Condition

This domain was not strongly emphasized by AI experts in relation to their collaboration with HCPs. However, two participants noted that AI tools in healthcare require further improvement to address comorbidities, illness conditions, and socio-cultural factors. As one expert explained, “

Two HCPs mentioned challenges with AI tools regarding the subdomain of “Nature of condition or illness”, and one raised concern about “comorbidities”. “

Domain: Technology

Subdomain: Care pathway positioning

Regarding the theme “extent of tools’ independence”, most AI experts agreed that final patient-care decisions should rest with HCPs, as required by regulations. They noted that HCPs generally prefer retaining decision-making authority, with AI mainly providing support. However, one participant observed that in certain time-consuming tasks, some HCPs may prefer AI to handle the entire process: “

HCPs expressed mixed views on full automation. Two participants felt it could be acceptable in certain circumstances, while two others emphasized that final decisions should remain with clinicians. As one noted, “

Subdomain: Supply model

For the theme “quality of health data”, AI experts generally did not view data issues as a major challenge in their collaboration with healthcare providers. However, one participant noted that HCPs expressed concerns about the location of stored data, “…

Two HCPs commented on this factor. One noted that trust in AI experts to access the required data operates more at the organizational level, while another raised concerns about security and privacy. Both also highlighted challenges with training data. As one explained, “…

Subdomain: Knowledge generated by it

Regarding the theme “communicate meaning effectively”, several AI experts stressed that HCPs need transparency in how AI systems make decisions, especially in patient care. Some linked this to the “black box” problem, which they felt fosters distrust, while one expert argued that demonstrating clinical evidence of effectiveness is sufficient reassurance. As one participant explained, “

All HCPs emphasized the importance of understanding how AI systems reach specific decisions, while noting that full technical details are unnecessary. One participant highlighted the challenge of the “black box” problem in neural networks, stating, “

Subdomain: Knowledge to use it

The theme “agreeing the scope of use” was considered highly important by AI experts and was emphasized repeatedly. They noted that HCPs need clarity on the scope of use of AI tools but do not need to understand the underlying model logic. One participant stressed the importance of training HCPs on this scope, whereas another highlighted challenges in providing such training. As one AI expert explained, “

Three HCPs emphasized the importance of clearly defining the scope of use for AI tools. However, two participants pointed to insufficient training from AI experts and difficulties in educating HCPs. One noted, “

Subdomain: Material properties

In relation to the theme “usability”, several AI experts emphasized that ease of use matters more than extensive functionality, warning that overly complex tools risk failure. As one participant noted, “

While two HCPs considered ease of use and functionality equally important, two others emphasized ease of use, with one also pointing to a lack of attention from AI experts. “

Domain: Value proposition

Subdomain: Demand-side value

The theme “healthcare professionals’ requirements”, identified through inductive analysis, was assigned to the NASSS subdomain of demand-side value. AI experts stressed the need to address HCPs’ requirements, though some noted misunderstandings and limited attention to demand-side concerns. One participant highlighted the economic value of AI tools alongside functional needs: “

Regarding “healthcare professionals’ requirements”, three HCPs agreed that AI should handle routine tasks, allowing them to focus on patient care. One highlighted radiology as a key area: “

In the subdomain of “demand-side value”, the theme “perceived value” also emerged from inductive analysis. All AI experts emphasized the importance of understanding the benefits of AI, noting that such awareness supports acceptance and strengthens collaboration. One of them explained, “

Regarding “perceived value”, only one HCP raised this factor, emphasizing its role in cooperation and acceptance of change. As the participant explained,

Domain: Adopters

Subdomain: Tools redefine staff roles

Several AI experts emphasized this subdomain without linking it to a specific theme, noting that cooperation, perceived value, and usability are important for redefining the roles of HCPs without disrupting their work. As mentioned by one AI expert,

Two HCPs felt that AI tools supported their tasks without changing their roles. Another noted that while many providers are open to role reshaping, some remain protective, emphasizing the importance of communication between AI experts and HCPs, explaining, “

Domain: Organization

Subdomain: Extent of change needed to organizational routines

Regarding the theme “Fitting the tool within current practices”, three of the AI experts highlighted the importance of integrating AI tools into existing practices. They noted that smooth implementation requires involving users from the development stage onward. As stated by one AI expert,

Of the three HCPs who discussed this factor, two reported no problems in this area, noting, “

Domain: Wider context

Subdomain: Regulatory and legal issues

In this subdomain, regarding the theme “deciding who is responsible”, most AI experts emphasized that HCPs hold final responsibility for clinical decisions, as defined by regulations. Some added that this also depends on AI experts clearly defining the scope of use and training HCPs on it: well-informed HCPs are more likely to trust AI and accept accountability, whereas unclear training on the scope of use may shift responsibility back to developers. As one AI expert explained, “

Most HCPs also believed they should remain responsible for final decisions, citing limited trust in AI. As one participant noted, “I would love that the AI would do all the dirty work…we cannot yet trust what it decides…humans have brains which are much more complicated, and we can make the decision making and learn all day” (HCP, Interview 7).

Subdomain: Professional bodies

The theme “Lack of understanding between professional groups” was highlighted by all AI experts, noting that HCPs often lack awareness of AI’s capabilities, while AI experts struggle to understand healthcare needs. Interdisciplinary experts were seen as essential to bridge this gap. One participant added that insufficient understanding on the developers’ side can also foster HCP distrust: “

Two HCPs felt there was no misunderstanding with AI experts and therefore no need for an interdisciplinary role. As one explained, “

Subdomain: Interorganizational networking

The factor of interorganizational networking, identified through inductive analysis without a specific theme, aligns with the corresponding NASSS subdomain. AI experts emphasized the need for cooperation with HCPs, starting in development and continuing through implementation. Some noted HCPs’ limited availability due to heavy workloads but believed that, once convinced of a tool’s value, HCPs would engage actively. As one AI expert stated, “

All HCPs agreed on the need for close cooperation with AI experts during both development and implementation. One participant described the positive impact of early involvement: “

Domain: Embedding and adaptation over time

Subdomain: Organizational resilience

Most AI experts identified this subdomain as important, although no specific theme emerged. They stressed the need for close cooperation between HCPs and AI specialists in both adapting and developing AI tools. One participant described challenges in cooperation, noting that collaboration is closely tied to change management. As one AI expert stated, “

Most HCPs also emphasized the importance of cooperation with AI experts during the adaptation process. As one participant explained, “

Most highlighted factors

In response to the free-text question on the most important factors, two AI experts highlighted demand-side value. Other factors mentioned once included interorganizational cooperation, transparency and trust, lack of understanding between professional groups, and privacy and security.

In response to this question, two HCPs identified transparency and trust as the most important factors. Other factors mentioned once included usability, interorganizational cooperation, and demand-side value.

Patterns

Key patterns emerging from the interviews.

Discussion

Answer to research questions and critical interpretation

This study identified both alignments and gaps between AI experts and HCPs, analyzed through the NASSS framework using thematic analysis. In the Condition domain, both groups agreed that AI tools still require significant improvement to manage complex cases, incorporate socio-cultural factors, and address comorbidities. The absence of major gaps in this domain suggests that these limitations are broadly recognized; although they do not create a gap that hinders collaboration, they can still restrict AI adoption in the healthcare sector.

Transparency, identified as one of the most important factors by both AI experts and HCPs, was linked to trust in six interviews. Both AI experts and HCPs emphasized that transparent communication of AI outputs is essential for building trust. This reflects earlier findings, such as Reddy, 32 who argued that the complexity of generative AI undermines trust when transparency is lacking. Similar concerns were noted by Bhavaraju 48 and Alanazi, 28 who highlighted trust as a central barrier to adoption for healthcare providers, while Vinh Vo et al. 50 reported transparency as a top priority for stakeholders. Hogg et al. 18 further observed that the “Black Box” nature of non–rule-based AI produced mixed reactions, with some HCPs viewing responsibility as their own to improve understanding, while others placed it on developers to provide more interpretable metrics. This indicates that transparency functions as a foundation for trust and AI adoption in healthcare, primarily by influencing healthcare professionals’ willingness to engage in collaboration.

Both groups viewed interorganizational cooperation and early involvement of HCPs as essential, and as among the most important factors, for aligning AI tools with clinical needs and facilitating integration. Vinh Vo et al., 50 likewise emphasized clinician participation in the development of artificial intelligence in healthcare. Weinert et al. 41 also reported that collaboration with clinicians helps define shared goals, identify useful algorithms, and assess usability in practice, which is in line with the pattern identified in this study. Despite agreement on its importance, perspectives diverged: AI experts questioned HCPs’ willingness to engage, whereas HCPs expressed differing views on working with commercial versus academic partners, with some favoring industry collaboration and others preferring internal expertise. Similar tensions are reflected in prior studies. Laï et al. 51 noted that many HCPs see industry-developed AI as a way to save time and streamline tasks, while Hogg et al. 18 observed that effective cooperation requires both clinical and technical expertise but is often hindered by the workload demands of HCPs. This suggests that although both groups value collaboration, limited trust and the absence of clear mechanisms for effective collaboration can hinder smooth implementation.

Demand-side value, understood here as both actual and perceived value for HCPs, was one of the most important factors and was strongly emphasized by both AI experts and HCPs for developing effective AI solutions. Bhavaraju 52 similarly argued that AI development should begin with careful evaluation of use cases and a clear understanding of user needs. This study found that greater perceived value encouraged collaboration, echoing Hogg et al., 18 who reported that HCPs engage more when they recognize value in context. Petersson et al. 13 also concluded that demonstrating tangible benefits for daily tasks is crucial to enhancing HCPs’ motivation and engagement. Despite this alignment, notable gaps emerged. AI experts largely viewed AI as a means to automate routine work, whereas HCPs also expected decision-support capabilities and transparent outputs. Some experts perceived HCPs as unclear about their own needs, while HCPs criticized developers for a limited understanding of clinical processes and an excessive focus on financial returns. Similar findings appear in prior studies: Ahmed et al. 53 and Swed et al. 54 reported that only 23% and 27% of physicians, respectively, were aware of AI use in healthcare, and Laï et al. 51 noted a gap between scientific progress and the exaggerated claims promoted in the media.

Both HCPs and AI experts stressed the importance of clearly defining the scope of use to set expectations and clarify responsibilities in AI implementation. Prior studies have likewise highlighted the need for HCP training, especially on the scope of use.13,32,50,55 In this study, however, some HCPs reported insufficient support from AI experts, with training responsibilities shifted to hospitals, while one AI expert pointed to difficulties in training HCPs. This reflects gaps in cooperation and unclear role specification, which were also observed in relation to interorganizational cooperation. Similar concerns were reported by Hogg et al., 18 who observed cases where AI tools were abandoned due to limited understanding, and by Laï et al., 51 who noted that industry professionals often underestimated the importance of physicians fully understanding how AI tools operate, sometimes suggesting only superficial training.

Ease of use was highlighted by both groups as critical, since intuitive tools are more likely to be adopted in busy healthcare settings. Petersson et al. 13 similarly noted that healthcare leaders expect AI systems to be simple and require minimal training. Weinert et al. 41 also found that usability was among the most frequently identified subdomains in AI studies. In this study, some HCPs reported that usability was often overlooked during development, while one AI expert argued that functional value could compensate for limited usability. Although no AI expert in this study named ease of use among the most important factors, Hogg et al. 18 reported that developers themselves considered usability and accessibility to influence adoption more strongly than performance. These gaps suggest that limited interaction and the absence of shared goals between the groups may have contributed to differing expectations regarding ease of use.

Staff roles were not seen as a major source of resistance, as both HCPs and AI experts emphasized that changes are acceptable when supported by cooperation, usability, and value. One HCP raised concerns about replacement by AI, but most felt their primary roles remained intact and noted that adapting to change is part of their profession. This aligns with Laï et al., 51 who reported that physicians are generally open to reevaluating roles as long as they remain central to patient care, their primary goal. AI experts also stressed smooth integration to avoid disrupting clinical work, echoing Hogg et al., 18 who found that gradual, HCP-led transfers of responsibility support successful AI adoption.

On the issue of mutual understanding, most AI experts in this study pointed to a communication gap with HCPs and suggested interdisciplinary experts as a bridge. In contrast, some HCPs felt no such misunderstanding existed, highlighting divergent perceptions of collaboration. Moreover, while one AI expert named a lack of understanding as the most important factor, no HCP did the same. Thenral and Annamalai 27 also identified the shortage of interdisciplinary experts as a barrier to developing deployable AI-enabled telepsychiatry solutions. Petersson et al. 13 noted that healthcare leaders viewed communication across professional groups as a major obstacle, as these groups do not share the same professional language, and stressed the need to adapt training to prepare future HCPs for digital technologies. He et al. 24 and Vinh Vo et al. 50 also stressed the importance of investing in training and educational initiatives for HCPs, emphasizing that HCPs require education on both the benefits and limitations of AI and suggesting that curricula incorporate health informatics, computer science, and statistics.

On the issue of responsibility, both groups in this study agreed that final accountability should rest with HCPs, as they remain the ultimate decision makers, while AI experts also stressed their duty to provide adequate training on the scope of use. However, a gap appeared regarding AI autonomy: AI experts felt HCPs preferred to retain final decision-making authority in all cases, whereas some HCPs welcomed full automation in specific areas such as radiology. Laï et al. 51 similarly identified responsibility as a central concern, with most stakeholder groups (physicians, industry partners, institutions, and independent individuals) reluctant to accept liability for patient harm; physicians were willing to assume responsibility only if they had a clear understanding of how the tool reached its conclusions, which aligns with the findings of this study. This tension reflects an unresolved boundary between human oversight and automation, suggesting that acceptable levels of AI autonomy may be context-specific rather than universally defined.

On data quality and security, AI experts in this study generally did not highlight major issues, whereas HCPs raised concerns about training with external datasets, as well as data privacy and storage, which reduced trust and willingness to cooperate. Similar concerns are reflected in previous research on privacy and security risks.27,28,32,52 Petersson et al. 13 also noted challenges in defining personal data within regulatory frameworks and the risk of reidentification. The quality of training data was another recurring issue. Bhavaraju 52 emphasized that AI performance depends on careful collection, curation, and use of training data, while Laï et al. 51 reported that developers often face difficulties accessing clinical data for algorithm training. In this study, one HCP mentioned reluctance to share data due to privacy concerns, echoing Hogg et al., 18 who found that developers frequently encountered resistance from healthcare organizations and patients unwilling to grant access to sensitive data.

Finally, this study contributes to the sustainable value proposition of AI in healthcare by emphasizing responsible adoption. De Andreis et al. 56 noted that transparency, accountability, and effective data management are central to ethical and sustainable AI use, all of which were highlighted in this study. As AI adoption expands, concerns about its environmental impact also increase. 10 This underscores the need to prioritize high-impact applications that address real healthcare demands, consistent with this study’s findings on demand-side value.

Implications for the NASSS framework

This study showed that the NASSS framework is a useful tool for evaluating collaboration between different stakeholders throughout technology implementation. The framework’s comprehensive domains and subdomains support a systematic examination of how differing perspectives between collaborating groups on each factor can influence cooperation and affect implementation.

Moreover, through inductive analysis, this study added healthcare professionals’ values to the demand-side value subdomain, which in the original framework focuses on patients’ values. This highlights the importance of considering the values of all stakeholders to support collaboration, an extension that future research could examine further. In addition, perceived value emerged through inductive analysis and was added to the demand-side value subdomain as a theme. Alongside actual delivered value, perceived value was found to be an influential factor in implementation and was extensively emphasized by participants, which is in line with the TAM framework. 36 Together, these additions demonstrate the flexibility of the NASSS framework and support extending its use to evaluate collaboration between stakeholders.

Proposed framework

Rather than considering each NASSS subdomain or theme in isolation, this study identified common patterns across interviews and examined their interactions, which informed the development of the proposed framework. The framework (Figure 3) was derived from the shared patterns identified in Table 3 and aims to strengthen collaboration between HCPs and AI experts by focusing on values shared by both groups and illustrating the process of initiating and reinforcing collaboration. It highlights the importance of involving HCPs early in tool development, improving communication with AI experts, and using interdisciplinary experts to better identify demand-side value. This improves the value proposition, including HCPs’ perceived value, as HCPs come to understand the tool’s advantages and see their influence on the final product, which in turn strengthens cooperation during implementation. This close collaboration supports HCP training and clarification of the scope of use, which, together with transparent communication of results, fosters trust and further supports cooperation during adoption. The framework is grounded in the study data and requires validation with a larger sample of HCPs and AI experts.

Figure 3. Proposed framework to enhance collaboration between AI experts and healthcare professionals in the implementation of AI in healthcare.

Practical implications

The findings of this study offer several implications for the successful and sustainable implementation of AI in healthcare. Early and continuous involvement of HCPs is essential to align AI tools with clinical workflows and ensure their relevance in practice. Structured collaboration mechanisms, supported by interdisciplinary expertise, can bridge the communication gap between clinicians and AI developers and strengthen mutual trust.

The need for interdisciplinary expertise, emphasized in this study, also has implications for workforce development and education policy. Addressing it requires targeted training that equips HCPs with foundational AI literacy and gives AI experts greater exposure to clinical contexts. Possible measures to strengthen interdisciplinary expertise include incorporating interdisciplinary competencies into healthcare education, professional development programs, and organizational training strategies.

Equally important is a focus on demand-side and perceived value. AI tools should be designed to reduce routine workload while also supporting decision-making, thereby demonstrating tangible benefits for providers. Regular feedback loops between HCPs and developers can enhance usability and foster greater engagement.

Transparency and a clearly defined scope of use are critical to fostering trust. Providing interpretable outputs, clarifying responsibilities, and delivering structured training enable HCPs to use AI tools effectively and safely. At the same time, usability must be prioritized to ensure that tools integrate seamlessly into demanding clinical environments.

Finally, concerns regarding data privacy, security, and quality need to be addressed. Transparent practices, compliance with regulations, and validation on local datasets are necessary to reduce resistance and encourage data sharing. Developers should also be realistic about the current limitations of AI in managing comorbidities and complex conditions, avoiding overstated claims. Taken together, these findings provide a roadmap for managers and decision makers to foster trust, strengthen collaboration, and ensure that AI systems deliver real value in clinical practice.

Limitations and future research

This study has several limitations that should be considered when interpreting its findings. The sample size was small and limited to Finland, which may restrict the generalizability of the results and the proposed framework. While the qualitative method and careful selection of participants with substantial experience in the field provided in-depth insights, a larger and more diverse sample could have captured a broader range of perspectives. Time constraints during interviews also limited exploration of some themes, particularly more complex aspects of AI implementation. In addition, demographic data such as age, gender, and years of experience were not collected; including such information in future studies could provide a richer understanding of how personal characteristics shape perceptions of AI adoption.

Future research should address these limitations by expanding the number of participants and combining qualitative and quantitative approaches. Longer or repeated interviews, alongside focus groups, could yield more nuanced insights and facilitate dialogue between HCPs and AI experts. Further studies with larger samples are also needed to validate and refine the proposed framework across diverse healthcare settings and stakeholder groups, which would help assess its applicability and strengthen its relevance for guiding effective AI implementation.

Conclusion

This study explored the perspectives of HCPs and AI experts on the success factors for implementing AI in healthcare, applying the NASSS framework to capture both alignments and gaps. Transparency and trust, usability, HCPs’ understanding of the scope of use, early involvement of HCPs, responsibility, and the redefinition of roles emerged as shared priorities, while differences were observed in collaboration preferences, perceived lack of mutual understanding, the need for interdisciplinary experts, expectations of demand-side value, views on responsibility, insufficient training, data quality, and privacy.

The proposed framework emphasizes early collaboration, clear communication, training, and interdisciplinary support as key strategies to strengthen trust and cooperation. It also promotes the sustainability of AI integration in healthcare by highlighting transparency, accountability, data privacy, and data quality, while emphasizing the importance of understanding demand-side value to ensure that resources are directed toward applications delivering meaningful clinical impact.

Taken together, this study contributes both theoretical insights into stakeholder collaboration and practical guidance for managers and developers seeking to integrate AI responsibly and sustainably into healthcare practice.

Supplemental material

Supplemental Material - Bridging perspectives: Success factors for AI implementation in healthcare from healthcare professionals and AI experts

Supplemental Material for Bridging perspectives: Success factors for AI implementation in healthcare from healthcare professionals and AI experts by Zohreh Yousefi Dahka and Timo Koivumäki in Digital Health.

Footnotes

Acknowledgements

No other contributors were involved in this research.

Ethical considerations

The Regional Medical Research Ethics Committee of The Wellbeing services county of North Ostrobothnia (Pohde) waived the need for ethics approval, and no patient data were used in this study. The research was conducted at the University of Oulu; accordingly, the data collection process was based directly on the publicly available guidelines of the University of Oulu Human Sciences Ethics Committee, which follow the national TENK (Finnish National Board on Research Integrity) guidelines.

Consent to participate

In online interviews, ethical considerations were read aloud, while in the email interviews they were provided in written form. In both cases, participants were asked to provide consent before proceeding.

Consent for publication

No personal data was collected in this study.

Author contributions

Zohreh Yousefi Dahka: Conceptualization; Data collection; Data analysis; Literature review; Writing – original draft. Timo Koivumäki: Conceptualization; Supervision; Data collection support; Validation of data analysis; Writing – review & editing.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

In accordance with GDPR requirements, the interview data cannot be shared publicly, as participants were not informed that their data would be made openly available. However, the metadata describing the dataset has been published in Fairdata.fi and is cited within the text.

Guarantor

Zohreh Yousefi Dahka accepts full responsibility for the work, had access to the data, and controlled the decision to publish.

Supplemental material

Supplemental material for this article is available online.