Abstract

Objective

Clinical narratives provide comprehensive patient information. Achieving interoperability involves mapping relevant details to standardized medical vocabularies. Typically, natural language processing divides this task into named entity recognition (NER) and medical concept normalization (MCN). State-of-the-art results require supervised setups with abundant training data. However, the limited availability of annotated data due to sensitivity and time constraints poses challenges. This study addressed the need for unsupervised medical concept annotation (MCA) to overcome these limitations and support the creation of annotated datasets.

Method

We use an unsupervised

Result

Without training data, our unsupervised approach achieves an F1 score of 0.765 in English and 0.557 in German for MCN. Evaluation at the semantic tag level reveals that “disorder” has the highest F1 scores, 0.871 and 0.648 on English and German datasets. Furthermore, the MCA approach on the semantic tag “disorder” shows F1 scores of 0.839 and 0.696 in English and 0.685 and 0.437 in German for NER and MCN, respectively.

Conclusion

This unsupervised approach demonstrates potential for initial annotation (pre-labeling) in manual annotation tasks. While promising for certain semantic tags, challenges remain, including false positives, contextual errors, and variability of clinical language, requiring further fine-tuning.

Keywords

Introduction

Electronic health records (EHRs) store extensive health data, including patient details on diseases, risks, procedures, and medications. 1 Most of this information, crafted by healthcare professionals under time constraints, is in narrative form, often dense, filled with abbreviations, and disregarding grammar rules. This emphasizes the need to map expressions to standardized codes for effective communication.2,3 To address this need, named entity recognition (NER) and medical concept normalization (MCN), 4 also known as entity linking, a subfield of natural language processing (NLP), 5 plays an important role. As healthcare organizations increasingly adapt EHR systems, the demand for clinical terminology in real-life clinical applications is increasing rapidly. In our study, we prioritize standardization of EHR data using clinical terminology.

Conventional biomedical NER methods can be broadly classified into dictionary-based, semantic, and statistical approaches. 6 In recent years, state-of-the-art (SOTA) approaches heavily rely on deep learning (DL) algorithms,7–9,11,10 and most prominently transformer models, such as bidirectional encoder representations from transformers (BERT) 12 and its variations. 6 However, to solve medical NER, BERT requires training data, which is often very limited due to the sensitive nature of the text.

From a technological point of view, the same applies to MCN. BERT models outperform, for instance, other SOTA architectures.13,14 They also excelled in handling multilingual data and capturing contextual information, surpassing the previous SOTA for the normalization of biomedical concepts.15–18,4 A pairwise learning-to-rank with a vector space model, 15 enhanced performance of BERT, BioBERT, 19 and ClinicalBERT20,21 models across different datasets, surpassing previous methods. In the 2019 n2c2/UMass Lowell task on MCN, various methods were tested, such as dictionary matching, DL, retrieval, and rank techniques22–24 using similarity metrics such as the cosine distance. The most accurate approach used a DL structure with a pre-trained SciBERT layer. 25 Deep neural network models for generating sentence embeddings as semantic representations, also enhanced cross-lingual biomedical concept normalization. 26 Another method, utilizing target concept guidance in MCN within noisy user-generated texts, 27 effectively integrates target concept information and domain lexicon knowledge to enhance model performance.

Self-alignment pre-training for BERT (

Combining NER and MCN is a challenging task. 32 Several existing methodologies also employ a combined strategy, leveraging the strengths of both NER and MCN to achieve more comprehensive and accurate results. MetaMap, 33 maps text to UMLS Metathesaurus but faces challenges with spelling mistakes and ambiguous concepts. Bio-YODIE 34 improves extraction speed and disambiguation but requires annotated data. SemEHR 35 builds on Bio-YODIE but relies on manual rules for enhancement. cTAKES 36 utilizes existing technologies but needs plugins to handle certain challenges. ScispaCy 37 is a supervised NER model with limited linking capabilities. CLAMP 38 is a comprehensive clinical NLP tool, while BioPortal 39 offers annotation for various ontologies but may face data protection issues due to its external interface. MedCAT 40 is a flexible concept extraction tool using any terminology based vocabulary. It boasts a user-friendly interface for customization and model training, making it versatile for clinical and research tasks. However, it requires annotated data for optimal performance. Despite the progress in transformer technologies, challenges persist in solving NER and MCN. Key issues include:

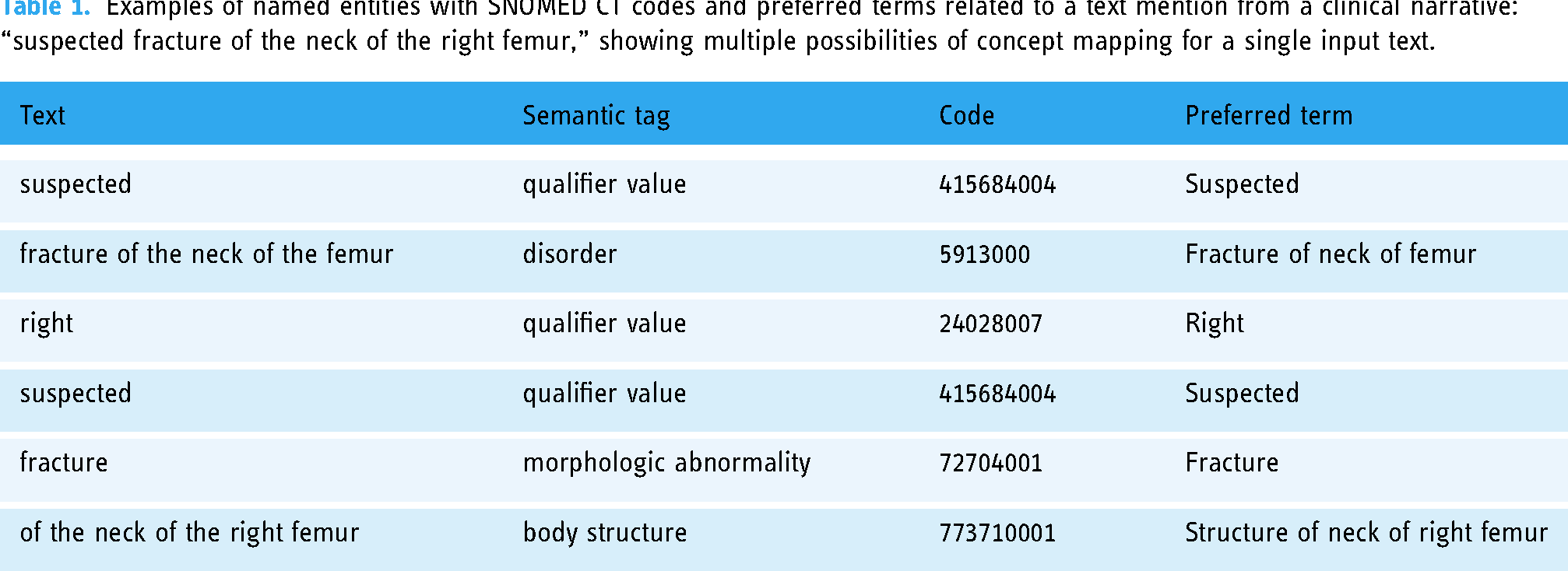

Examples of named entities with SNOMED CT codes and preferred terms related to a text mention from a clinical narrative: “suspected fracture of the neck of the right femur,” showing multiple possibilities of concept mapping for a single input text.

Medical data often contains sensitive information, which makes it difficult to share. Even in the current era of large language models such as ChatGPT, their application in medical settings poses ethical and privacy issues. 42 Concerns include patient privacy breaches, unclear responsibility in case of harm, and the need for clear rules to protect users. 43 For these reasons, it is crucial to weigh the implications and explore privacy-focused alternatives. Low-resource languages often suffer from a lack of publicly available datasets due to various factors such as small corpus sizes, different formats suited for specific tasks, and limited accessibility. 44

Clinical gold standards refer to a benchmark available under reasonable conditions. 45 However, the number of publicly available gold standards is limited, necessitating unsupervised approaches when labeled data is unavailable. 46 Different unsupervised NER approaches were reported, utilizing adversarial training 47 and contextualized word representations. 48 Nath et al. 49 focused on unsupervised specialized word embeddings and NER for clinical coding. Within unsupervised approaches for MCN, Yan et al. 50 utilized multi-instance learning for linking Chinese medical symptoms to ICD-10 classifications, surpassing the baseline by 1.72%. Tahmasebi et al. 51 demonstrated effective unsupervised anatomical phrase normalization using word embeddings in SNOMED CT. Karadeniz et al. 52 achieved precision scores of 65.9% and 68.7% for bacteria biotope entities and adverse drug reactions, respectively, using unsupervised entity linking methods with word embeddings and syntactic re-ranking.

The first general and complete unsupervised solution for NER with entity detection and classification used a noun phrase chunker with inverse document frequency for boundary detection and distributional semantics for terminology code assignment. 53 The overall classification shows good results, considering that only 39% and 19% of the entities could be found according to the datasets used. Another unsupervised framework for recognizing and linking medical entities from Chinese online medical text, namely unMERL 54 uses a combination of offline linguistic resources and online detection approaches to improve the recognition and linking performance. The results show that unMERL consistently outperforms current approaches and has good generalizability.

To address missing entities compared to the other unsupervised approaches,53,54 we employed n-gram-based entity detection and leveraged (

Material and methods

This section outlines our methodology and materials. We employ the NLTK n-gram generator 8 to extract linguistic patterns, specifically for entity mention detection. SNOMED CT, 55 described in Section “Terminologies,” is used as the reference terminology for mapping clinical entities. Details on the datasets and the proposed framework are discussed in Sections “Datasets” and “Proposed approach.”

Terminologies

The UMLS Methathesaurus is a large dataset unifying about 150 biomedical terminologies, such as MeSH, SNOMED CT, and RxNORM, and links concepts of 200 different vocabularies. 56 In this work, we are particularly interested in the subset SNOMED CT, a standardized, multilingual clinical terminology that includes more than 350,000 entities.57,55,58 It facilitates the comprehension and exchange of health information among diverse systems through the use of codes and expressions. 57

Datasets

For our experiments, we use the 2019 n2c2/UMass Lowell shared task on MCN dataset, 24 consisting of 100 discharge summaries from U.S. hospitals. In these texts, 10,919 mentions of medical problems (diagnoses), treatments, and tests were manually annotated using UMLS. 59 In this work, we consider only SNOMED CT annotations due to their global acceptance, comprehensive scope, compatibility with FHIR, widespread adoption, and broad coverage, ensuring standardized and comprehensive healthcare data representation. 58 Within the dataset, we considered the top 10 most frequent semantic tags (“procedure,” “disorder,” “qualifier value,” “finding,” “substance,” “body structure,” “morphologic abnormality,” “observable entity,” “physical object,” and “regime/therapy”), representing 95% of all SNOMED CT concepts within the dataset. Focusing on SNOMED CT candidates of the n2c2 dataset results in 6232 training mentions and 6528 test mentions.

To maintain consistency with the annotation standards used in existing datasets, we created a new German dataset in addition to the English dataset, for evaluation. The data is from an Austrian network of public hospitals and contains de-identified narratives from 10 EHRs. The texts were manually annotated using INCEpTION, 60 (see Figure 1 ) following the English annotation guidelines from n2c2. 59 The annotated set of discharge summaries resulted in 600 SNOMED CT normalized mentions that have 97% of the mentions within the aforementioned semantic tags.

Manually annotated clinical narrative in German using INCEpTION.

Proposed approach

Our work targets unsupervised MCA that combines n-gram decomposition with embedding-based similarity matching, as shown in Figure 2. Given an entity mention, classical normalization approaches would rely on the terms and their synonyms, as mentioned in the terminology, to find a corresponding entry. In this study, we rely on vector representations of those concepts modeled as embeddings. Therefore, given a candidate mention in the form of an embedding, the best vectorized SNOMED CT term needs to be found. Both steps, the vectorization of SNOMED CT and the vector search, are described in the following Section “Embedding space.” The detailed overview of our approach is provided in Section “Framework.”

Illustration of the proposed framework with (A) creating an embedding space of SNOMED CT, (B) preparation of clinical data for testing, (C) n-gram generation, entity recognition and normalization, and (D) selection and evaluation of the best n-grams.

Embedding space

Framework

In the following, we describe the unsupervised MCA framework, as shown in Figure 2.

We utilize n-grams due to their ability to capture contextual information surrounding entities and accommodate variations in token order within reference terminologies. This approach minimizes errors arising from partial matches and ensures robust entity identification across diverse text structures. By aligning textual mentions (preprocessed n-grams) with semantically similar concepts in SNOMED CT, this step enhances the accuracy and completeness of entity normalization, even amidst lexical variations and structural complexities in the text. Prioritizing mentions with high syntactic similarity ensures precision and accuracy in entity identification. While embeddings effectively capture semantic similarities and handle synonyms, relying solely on semantic similarity may introduce ambiguity and noise, especially in domains with complex terminology. Emphasizing syntactic similarity aims to prioritize entities exhibiting structural and contextual consistency with reference terms in SNOMED CT. This ensures a reliable mapping between textual mentions and corresponding concepts, minimizing the risk of misinterpretation or incorrect normalization. Therefore, incorporating syntactic similarity as a key criterion complements the strengths of embeddings, enhancing overall precision and reliability in entity identification and normalization within biomedical text analysis.

Baseline approach

Our methodology for the mapping of clinical concepts is compared with a baseline approach, using an n-gram generator to extract information from clinical texts, followed by a basic dictionary matching method to identify matches to SNOMED CT. This straightforward baseline serves as a benchmark to assess the effectiveness of our proposed methodology.

Results

Medical concept normalization (MCN)

We first evaluate our

Performance of MCN and MCA on semantic tag levels using n2c2 dataset.

MCN: medical concept normalization; MCA: medical concept annotation; NER: named entity recognition; P: precision; R: recall; F1: F1 score.

Performance of MCN and MCA on semantic tag levels using German EHRs dataset.

MCN: medical concept normalization; MCA: medical concept annotation; NER: named entity recognition; P: precision; R: recall; F1: F1 score.

Medical concept annotation (MCA)

Following the initial evaluation, we proceed to analyze the MCA method. This integrated approach combines MCN with n-gram decomposition for a comprehensive analysis of our methodology. The mentions proposed by the n-grams are utilized within the MCN, potentially deviating from the test data mentions, therefore resulting in either exact, partial, or no matches. The results at the semantic tag (MCA: NER) and concept ID level (MCA: MCN) are presented in Tables 2 and 3.

Similar to the MCN method, the analysis of the MCA approach reveals that “disorders” consistently exhibit higher performance in both evaluated cases. Performance in the semantic tag “regime/therapy,” achieving perfect precision (1.0) and a fair F1 score, showcasing its effectiveness in this particular semantic category. Comparing the results in the MCN and MCA: MCN columns of Tables 2 and 3, shows a uniform decline in MCA:MCN performance irrespective of the semantic tags, which highlight the need for better entity recognition systems.

To address computational requirements and processing times in real-world applications, we adopted a pragmatic approach, by extracting random samples of 100 lines of different lengths from the documents under consideration. By calculating the processing time for each line and deriving an average, we obtained a representative measure of the time required to process individual lines. Our findings indicate an approximate processing time of 60 s per line, which has to be optimized when applying this method for large-scale document processing. The main reason is the decomposition of the line under scrutiny into varying n-gram lengths, each of them a possible candidate that has to be processed.

Error analysis

The significant performance gap between English and German datasets prompted an investigation into their linguistic and structural differences. English’s analytic nature contrasts with German’s synthetic structure, impacting sentence comprehension due to differences in word order. German’s complex morphology poses challenges for tasks such as part-of-speech tagging, and divergent vocabulary and idiomatic expressions require tailored approaches. Additionally, variations in naming conventions and syntax offer insights into language model processing.

Upon closer examination of Tables 2 and 3, distinct groups of errors were identified, including contextual errors, granularity errors, analogy errors, similarity errors, wrong IDs, nugatory IDs, acronym errors, and spelling errors. Contextual, granularity, and analogy errors emerged as the most prevalent categories. Contextual errors primarily manifested as non-contiguous mentions, stemming from incomplete span coverage or ambiguous spans. To address these errors, efforts should focus on refining entity recognition algorithms for improved span delineation accuracy. Granularity and analogy errors, stemming from challenges in providing a singular “correct” normalization to a mention, were also significant contributors to performance degradation. In contrast, less frequently occurring errors included spelling and acronym errors. A detailed overview of different types of errors, along with examples, is provided in Appendix 1.

The performance of MCA was compared with the baseline approach of dictionary matching, see Table 4. MCA generally achieved higher precision, recall, and F1 scores for both NER and MCN compared to the baseline dictionary matching in the n2c2 dataset. However, in the German EHRs dataset, MCA showed lower P, R, and F1 scores compared to the baseline. A detailed analysis revealed that MCA outperformed the baseline in identifying exact mentions in both German and English data but exhibited a higher incidence of false positives. Addressing false positives, particularly for semantic tags such as “qualifier value” and “observable entity,” is crucial for improving overall precision. Moreover, reducing false negatives is essential to ensure a comprehensive capture of all relevant mentions, highlighting the importance of ongoing refinement for comprehensive MCN.

Comparison of NER and MCN of MCA with the baseline dictionary matching approach using n-grams on the n2c2 (en) and German EHR (de) datasets.

MCN: medical concept normalization; MCA: medical concept annotation; NER: named entity recognition; P: precision; R: recall; F1: F1 score.

Discussion

In this work, we focus exclusively on SNOMED CT and employ an unsupervised approach. Our model achieves an F1 score of 0.765 in MCN, as shown in Table 2, which indicates that it has no prior knowledge from the training set. This score can be compared to the “unseen concepts” category in the work mentioned by Xu et al., 30 which attained an accuracy of 0.691. Their findings indicate that future research on MCN should more effectively address previously unconsidered concepts, which was another motivating factor for this study. In addition to the unsupervised approach, our experiments showed promising results reaching an F1 score of 0.872, especially when leveraging additional training data within the embedding space for MCN, as shown in Appendix 2—SNOMED CT*. This outperformed the top-performing teams in the 2019 n2c2 challenge and other BERT models. This result also competes with the supervised method as investigated by Xu et al., 30 considering only SNOMED CT concepts.

In contrast to Zhang and Chen 10 and Chen et al., 11 we refrained from employing advanced preprocessing steps before NER, such as abbreviation expansion or numeral replacement, which reduce the complexity and computational overhead associated with preprocessing. Even though, MCA resulted in a higher incidence of false positives, with approximately 40% to 50% of detected entities contributing to these errors. A closer examination revealed that nearly 70% of identified mentions as “qualifier value” and “observable entity” were false positives. These stemmed from entities not present in the gold standard data, matched within the terminology. Addressing this abundance is pivotal for precision improvement. Re-evaluating “qualifier values” inclusion may enhance precision. Additionally, refining the extraction process is crucial to minimize false positives and enhance precision. While MCA outperformed the baseline in terms of false negatives, particularly in the German dataset, there remains room for improvement in this aspect. Reducing false negatives is also essential to ensure the comprehensive capture of all relevant mentions.

The consistently high performance of the “disorder” underscores method reliability in MCN. MCA demonstrates adaptability, showing significant F1 score improvements for “disorder” and the lowest false positive entry rates in both datasets, highlighting its efficacy across diverse medical contexts. This performance of “disorder” was competing with the supervised approach by Leaman et al. 67

Variations in performance across datasets imply dataset-specific challenges, warranting further exploration for optimization. A detailed analysis comparing MCA across the dictionary-matching baseline approach in the n2c2 dataset and German EHRs underscores the need for a better NER approach and reduced false positives. This fully unsupervised method can serve as a starter for pre-annotations in languages lacking publicly available datasets, such as clinical narratives, significantly reducing manual annotation time. 68 Unlike other unsupervised methods,51,69 our approach focuses on all semantic tags found in EHR narratives, potentially improving overall algorithm performance. However, it is important to acknowledge that adapting our method to new languages or terminologies may require language-specific preprocessing and domain-specific knowledge integration. Overall, our study lays the groundwork for exploring the practical applications of unsupervised MCA on real-world clinical narratives, potentially enhancing efficiency and accuracy in medical data annotation. Our approach also offers valuable insights into computational demands by estimating processing times at the line level, facilitating the understanding and targeted optimizations for enhanced system performance and resource allocation. Future research could explore techniques for automatic adaptation and scaling to diverse linguistic and medical contexts, taking into account further validation and fine-tuning to ensure seamless integration and address challenges such as false positives, contextual errors, and the idiosyncratic nature of clinical language.

System limitation

The MCA method exhibits a significant drawback in generating numerous false positives across both datasets, undermining overall recall and precision. Semantic ambiguity, where a word or phrase holds multiple interpretations, poses a complex challenge in clinical NLP. Efforts to mitigate this issue, such as employing rule-based filters, result in a performance drop. Acronyms further contribute to semantic ambiguity, complicating the analysis of mentions within their original context.

In contrast to systems that restrict semantic tags to predefined categories, our approach adopts a generalized approach, accommodating diverse medical domains. However, this flexibility may challenge precision and coverage. Dataset limitations, particularly biased tag distribution, can skew the model. The standardized content of clinical terminology systems remains a challenge, exacerbated by the scarcity of publicly available training data. Our unsupervised MCA method effectively addresses this challenge by semantic types that can lead to misclassification and decreased performance in medical concept recognition, thus impacting practical applicability in clinical settings. Nevertheless, the currently high processing time of 60 s per document line must be considered when developing optimized versions of the algorithm in the future.

Conclusion and outlook

The alignment between language expressions in clinical sociolects and standardized content of clinical terminology systems remains a challenge, exacerbated by the scarcity of publicly available training data. Our unsupervised MCA method addresses this challenge effectively, particularly in the absence of training data for supervised machine learning approaches.

Our proposed method demonstrates suitability for identifying and annotating text mentions in clinical narratives using codes from terminology systems. It holds promise as an initial annotation step to support manual annotation tasks in the future. The achieved F1 score performance of 0.765 for MCN sets a baseline, to be further explored with advanced language model techniques such as ChatGPT in future investigations.

Recognizing the importance of addressing fragmented mentions, we intend to incorporate techniques, such as context-based modeling or neural sequence labeling, in future iterations. These enhancements aim to improve the coverage and accuracy of entity recognition, thereby enhancing overall effectiveness. Future improvements for our methodology include enhanced normalization filters, improved entity recognition, and reduced false positive rates to further support coding in the context of clinical narrative data.

Footnotes

Acknowledgements

The authors have no specific acknowledgments to declare for this research.

Contributorship

AA and MK designed the project and the processing workflow with feedback from RR and SS. AA and SS annotated the dataset. MK triggered the problem motivation and AA is responsible for the core implementation. All authors read and approved the final version of the manuscript.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Ethical approval

This study was approved by the Institutional Review Board (IRB) of the Medical University of Graz (30-496 ex 17/18).

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Guarantor

Markus Kreuzthaler

Informed consent

Informed consent was waived because the data being studied was de-identified, as approved by the IRB of the Medical University of Graz (30-496 ex 17/18).

Notes

Appendix 1. Types of errors encountered along with their occurrence rate for both embedding-based MCN and MCA on the n2c2 dataset

The following types of errors were observed during error analysis for both the embedding-based MCN on the test mentions and MCA on the test documents.

Embeddings-based MCN error rate: 32.6%.

MCA error rate: 42.6% (en), 46.5% (de).

Sentence from gold standard: “There were diffuse ST segment and T-wave lightgrayabnormalities, which were nonspecific.”

Candidate term: “abnormalities.”

Gold standard target: 55930002, “ECG ST segment changes.”

Output: 263654008, “Abnormal.”

Embeddings-based MCN error rate: 14.3%.

MCA error rate: 12.7% (en), 12.8% (de).

Sentence from gold standard: “She has also had some discomfort in her lightgrayleft lower abdomen and notes diarrhoea every 4–5 days.”

Candidate term: “left lower abdomen.”

Gold standard target: 68505006, “Left lower quadrant of abdomen.”

Output: 1017212007, “Left abdominal lumbar region.”

Embeddings-based MCN error rate: 24.6%.

MCA error rate: 12.8% (en), 8.5% (de).

Sentence from gold standard: “The patient had been taking his usual medications and using his lightgraynasal oxygen at home.”

Candidate term: “nasal oxygen.”

Gold standard target: 371907003, “Oxygen administration by nasal cannula.”

Output: 71786000, “Intranasal oxygen therapy.”

Embeddings-based MCN error rate: 8.4%.

MCA error rate: 3.8% (en), 6.7% (de).

Sentence from gold standard: “lightgrayEstratab.”

Candidate term: “Estratab.”

Gold standard target: 126099009, “Esterified estrogen.”

Output: 446265008 ,“Estrilda.”

Embeddings-based MCN error rate: 7.5%.

MCA error rate: 16.0% (en), 11.1% (de).

Sentence from gold standard: “He was admitted to the Short Stay Unit, given lightgrayAncef and Gentamicin per the team for antibiotic prophylaxis and observed overnight”

Candidate term: “Ancef.”

Gold standard target: 387470007, “Cefazolin.”

Output: 81123006, “Interleukin-5.”

Embeddings-based MCN error rate: 7.3%.

MCA error rate: 7.4% (en), 4.1% (de).

Sentence from gold standard: “The patient is a 78-year-old female who has had osteoarthritis and noted the sudden onset of lightgrayleft knee pain in 09/89. ”

Candidate term: “left knee pain.”

Gold standard target: 468251000124107, “Not Valid ID.”

Output: 287047008, “Pain in left leg.”

Embeddings-based MCN error rate: 5.1%.

MCA error rate: 4.2% (en), 9.6% (de).

Sentence from gold standard: “Cholecystectomy in 1994, colonoscopy 2004, status post tonsillectomy, status post appendectomy, status post lightgrayORIF of left wrist, status post left ear surgery.”

Candidate term: “ORIF.”

Gold standard target: 133863002, “open reduction with internal fixation.”

Output: 413042008, “Immature reticulocyte fraction.”

Embeddings-based MCN error rate: 1.1%.

MCA error rate: 3.0% (en), 5.8% (de).

Sentence from gold standard: “diabetes mellitus with lightgraydiabetic renopathy, renovascular occlusive disease, with thrombosis of the right renal artery, hypertension, probably renal vascular, hypertensive cardiac disease with history of congestive heart failure.”

Candidate term: “diabetic renopathy.”

Gold standard target: 4855003,“Diabetic retinopathy.”

Output: 127013003, “Diabetic nephropathy.”