Abstract

Background

Quality patient–clinician communication is paramount to achieving safe and compassionate healthcare, but evaluating communication performance during real clinical encounters is challenging. Technology offers novel opportunities to provide clinicians with actionable feedback to enhance their communication skills.

Methods

This pilot study evaluated the acceptability and feasibility of CommSense, a novel natural language processing (NLP) application designed to record and extract key metrics of communication performance and provide real-time feedback to clinicians. Metrics of communication performance were established from a review of the literature, and their technical feasibility was verified. CommSense was deployed on a wearable (smartwatch), and participants were recruited from an academic medical center to test the technology. Participants completed a survey about their experience; results were exported to SPSS (v.28.0) for descriptive analysis.

Results

Forty (n = 40) healthcare participants (nursing students, medical students, nurses, and physicians) pilot tested CommSense. Over 90% of participants “strongly agreed” or “agreed” that CommSense could improve compassionate communication (n = 38, 95%) and help healthcare organizations deliver high-quality care (n = 39, 97.5%). Most participants (n = 37, 92.5%) “strongly agreed” or “agreed” they would be willing to use CommSense in the future; 100% (n = 40) “strongly agreed” or “agreed” they were interested in seeing information analyzed by CommSense about their communication performance. Metrics of most interest were medical jargon, interruptions, and speech dominance.

Conclusion

Participants perceived significant benefits of CommSense to track and improve communication skills. Future work will deploy CommSense in the clinical setting with a more diverse group of participants, validate data fidelity, and explore optimal ways to share data analyzed by CommSense with end-users.

Introduction

Quality communication is critical to deliver equitable, compassionate, and safe healthcare.1–3 Multiple studies have demonstrated its importance, especially during high-stakes conversations related to end-of-life care, prognosis, goals of care, and delivering difficult news.4–6 Ineffective communication negatively impacts patients and family caregivers, as well as clinicians and organizations, with significant emotional, physical, and financial consequences.7 Clinicians may receive training in how to communicate more effectively, but such workshops are generally episodic and occur in simulated contexts.8–10 It can be extremely challenging for clinicians to implement best-practice communication techniques in the less predictable clinical arena and even harder to evaluate communication performance or track progress over time. Current methods to evaluate healthcare communication performance in naturalistic settings, provide actionable feedback, and track progress longitudinally are limited, which remains a key gap in healthcare communication science.6,10,11

Another critical need is to reduce disparities and enhance equity related to healthcare communication.12 The ramifications of suboptimal health-related communication disproportionately impact minoritized and underrepresented groups, and innovative approaches and interventions are needed.13 Technology that leverages a “learning health system approach” (e.g. timely sharing of relevant data from healthcare interactions that can change behavior and improve health outcomes14,15), combined with rapid advances in natural language processing (NLP),16–20 machine learning,21 and wearable devices,22 offers novel opportunities to measure and improve clinician–patient communication and, in turn, help reduce health-related communication disparities.

The purpose of this paper is to describe the design and initial pilot testing of CommSense,23 a novel communication software application created with the overarching goals of reliably extracting key markers of communication performance, providing actionable feedback to clinicians in real time, and improving the quality of patient–clinician interactions, thus reducing disparities related to healthcare communication.

Methods

This was a prospective, descriptive study to evaluate initial acceptability and feasibility of CommSense. The overall study protocol has been reported previously.23 Briefly, CommSense is a novel wearable sensing system and associated NLP algorithms deployed on mobile devices (smartwatches) and worn by clinicians, which aims to provide feedback about patient–clinician conversations. The underlying CommSense software builds upon prior work conducted by members of our team related to algorithm development for the monitoring of health-related behaviors.24,25

Phase I: Design of the CommSense application

Establishing communication metrics

Our first step was to determine the optimal and feasible communication performance metrics to be detected and extracted by CommSense. Clinical team faculty (VL, TF, and DL), all with palliative care experience, worked closely with a health sciences librarian to create a strategy to search PubMed, Web of Science, PsycINFO, and CINAHL databases for articles related to patient–clinician communication, palliative care, and best practices (see Supplemental Data File for the full search strategy). Included articles were published in English within the past 10 years, focused on adults within the USA, and were related to communication in the context of serious illness. We selected the 10-year timeframe in consultation with our health sciences librarian, with the goal of capturing the most salient and current recommendations related to palliative care communication. We also wanted to account for a more recent perspective on communication practices given enhanced awareness of healthcare disparities within the past decade. We identified 96 articles; 18 were added based on expert opinion. Citation titles and abstracts (n = 114) were initially screened in the Zotero reference manager; 72 articles underwent full-text review, and key features were recorded using a 25-item Qualtrics survey developed by our team. All citations were reviewed by at least two members of our study team, and any discrepancies were resolved by group consensus.

From the full-text article review, we extracted specific communication recommendations and best practices relevant for CommSense. Clinical team members (VL, TF, DL, and JE) then met in-person and used a “think-aloud” thematic analysis approach to combine and collapse similar recommendations. This was a highly iterative and interactive process that resulted in a final list of seven core communication metrics (five verbal; two non-verbal) with associated strategies for operationalization. For example, for the communication best practice of “eliciting patient concerns,” we categorized this under the larger umbrella of “explore/seek understanding” and proposed a CommSense operationalization metric of detecting “open versus close-ended questions.” We shared this list with two external communication experts to ensure content validity and gather additional feedback. Our “communication best practices” list was then shared with the engineering team members to discuss technical feasibility and possible approaches to implementation. To assist in this process, the clinical team members added more specific ideas and examples of how to operationalize desired metrics (Table 1).
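To illustrate how a metric such as “open versus close-ended questions” might be operationalized, the following minimal Python sketch classifies an utterance by its opening words. The word lists and the function names (classify_question, count_question_types) are hypothetical and chosen only for illustration; this is a simple rule-based heuristic, not the NLP approach implemented in CommSense.

```python
import re
from collections import Counter

# Hypothetical starter lists for illustration only; not taken from CommSense.
OPEN_STARTERS = ("what", "how", "why", "tell me", "describe", "can you tell me")
CLOSED_STARTERS = {"do", "does", "did", "is", "are", "was", "were",
                   "have", "has", "can", "could", "will", "would"}


def classify_question(utterance):
    """Label an utterance as 'open', 'closed', or 'other' using its first words."""
    # Normalize to lowercase words separated by single spaces.
    text = " ".join(re.findall(r"[a-z']+", utterance.lower()))
    if not text:
        return "other"
    # Open prompts are checked first so "can you tell me ..." is not treated as closed.
    for phrase in OPEN_STARTERS:
        if text == phrase or text.startswith(phrase + " "):
            return "open"
    first_word = text.split()[0]
    if first_word in CLOSED_STARTERS and utterance.strip().endswith("?"):
        return "closed"
    return "other"


def count_question_types(utterances):
    """Count open and closed questions across a list of clinician utterances."""
    counts = Counter(classify_question(u) for u in utterances)
    return {"open": counts["open"], "closed": counts["closed"]}
```

A heuristic like this would misclassify many real utterances (for example, statements phrased as questions), which is one reason that contextual NLP methods and Ground Truth labeling are needed for evaluation.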

General/core principles of quality patient-clinician communication to inform design of CommSense.

Creating CommSense simulation scripts

After communication metrics were agreed upon by the clinical and engineering teams, the clinical team crafted a set of “Ground Truth” conversation scripts to be used in pilot testing. The goal of these “Ground Truth” conversation scripts was to embed our established communication metrics (Table 1) within the interactions in order to evaluate the ability of CommSense to successfully identify the desired features. The clinical team developed two sets of conversation scripts (one for physicians, one for nurses) that focused on pain management in the context of advanced cancer and discussing prognosis and goals of care (see Supplemental Data File). Each set of scripts contained a “best case” conversation—embedded with positive/desired communication metrics (e.g. empathy)—and a “worst case” conversation—embedded with negative/undesired communication metrics (e.g. inappropriate use of medical jargon and interruptions). Conversation scripts (n = 8) were drafted by clinical faculty (VL, TF, and DL) and independently labeled (“tagged”) with the desired metrics by two members of the clinical team using Microsoft Word (Figure 1); discrepancies were resolved by group discussion to reach consensus. In order to capture “negative” communication elements or missed opportunities, portions of the conversation were tagged as either “good” or “bad.” For example, “Emotion–bad” represented deflecting or dismissing patient emotion, whereas “Emotion–good” represented responding therapeutically to patient emotion (Table 1).

Excerpted examples of Ground Truth–labeled conversation scripts.

Phase II: Pilot testing of CommSense

Data collection procedures

Institutional Review Board (IRB) approval was granted prior to data collection (SBS IRB #4985), and all participants provided informed consent before data collection. Current and future healthcare providers (i.e. prelicensure nursing and medical students and licensed and practicing clinicians) were recruited from an academic medical center using a snowball sampling approach and email announcements between July and October 2022. Participants wore a commercial smartwatch (Huawei Smartwatch 2 with a microphone) programed with the CommSense application (Python programming language) and read two to four conversation scripts with a “patient” (clinical member of our study team) that aligned with their discipline (e.g. nurses and nursing students read the nurse scripts; physicians and medical students read the physician scripts). Conversation scripts focused on conveying difficult news, discussing prognosis, and managing pain and contained examples of positive (e.g. active listening) or negative (e.g. interruptions and medical jargon) communication skills. Participants were instructed to follow the script as closely as possible, and study team personnel emphasized that the goal was not to evaluate the quality of their interaction but instead to evaluate if the software would detect the desired communication features. Participants were not directed with any specific non-verbal guidance (a chair was provided, but participants were not told they needed to sit in it); some participants wore facial masks related to COVID-19, while others did not. Conversations were recorded by CommSense, as well as by Otter.ai software to provide a “Ground Truth” transcript and an audio file of the conversation. All data collection occurred in the School of Medicine Clinical Simulation Lab and took approximately 20–30 minutes for each participant. Participants received a $25 gift card as compensation for their time.

Immediately after completing the simulation, participants completed an electronic 27-item exit survey (Qualtrics, Provo, UT; survey items summarized in Table 2; see Supplemental Data File for the full survey) via a study tablet to assess their experience with CommSense and their opinions about its potential application in clinical practice. The survey consisted of basic demographic questions (n = 6) and Likert-style questions related to the following: prior experience and comfort related to communicating with seriously ill patients (4 items); prior experience with wearable devices (1 item); and general user experience with CommSense, perceived benefits and preferences for receiving communication performance feedback (12 items). Free-text questions (4 items) allowed the participant to share additional qualitative feedback.

CommSense post-simulation user experience survey items.

Survey items were informed by the health communication literature and by standardized mobile application evaluation tools (such as the SUS46,47 and MARS47). We created a customized assessment survey because many items from the standardized tools did not apply (e.g. our study participants did not need to interact with the smartwatch or application, only to wear it while it passively recorded their interaction) and because our goal was to understand, very specifically, how the CommSense technology was perceived by potential future users. The survey was internally piloted with eight members of our interdisciplinary team to assess clarity, logical flow, and completion time and was iteratively revised based on their feedback.

Data analysis

Qualtrics survey data were verified, cleaned, and exported to SPSS (v.28.0) for analysis. Items for all surveys were completed, and there were no missing data. Descriptive analysis was performed (frequencies, percentages) on all quantitative items, and free-text responses were exported to Microsoft Word and collated by question (e.g. all responses for question 1 were grouped together) to explore themes or patterns. We analyzed quantitative survey items both as the total sample and then by two separate characteristics to explore if different perceptions of CommSense may exist based on whether a participant was a student or already an experienced clinician (“trainee status”) or if the participant was affiliated with the discipline of nursing or medicine (“role”). For “role,” “nurse” included licensed nurses and nursing students and “physician” included licensed physicians and medical students. For “trainee status,” “students” included medical and nursing students and “clinicians” included licensed nurses or physicians. Crosstabs were run for all survey items by both the trainee status (student versus clinician) and the healthcare role (nurse versus physician). It is important to note that these groups were treated as separate measures in our analysis (e.g. each participant was assigned a role—either physician or nurse—and separately assigned a trainee status—either student or clinician). Deidentified CommSense recordings were off-loaded from wearable devices for post-processing analysis with NLP and other computational methods to explore the accuracy of extraction of desired communication metrics compared to Ground Truth conversation scripts; results from this aspect of analysis are the focus of a forthcoming publication.
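The descriptive analysis and crosstabs described above were run in SPSS. As a rough illustration of the same logic, the Python sketch below computes frequencies and percentages for one item and cross-tabulates it by role and by trainee status, treating the two groupings as separate measures. The records, the item name willing_to_use, and the helper functions are hypothetical toy examples and are not study data.

```python
from collections import Counter

# Toy records for illustration only; these are NOT the study data.
# Each participant carries both a role and a trainee status, assigned separately.
records = [
    {"role": "nurse", "trainee_status": "student", "willing_to_use": "strongly agree"},
    {"role": "nurse", "trainee_status": "clinician", "willing_to_use": "strongly agree"},
    {"role": "physician", "trainee_status": "student", "willing_to_use": "somewhat agree"},
    {"role": "physician", "trainee_status": "student", "willing_to_use": "strongly agree"},
]


def frequencies(rows, item):
    """Return {response: (count, percent)} for one survey item."""
    counts = Counter(row[item] for row in rows)
    n = len(rows)
    return {resp: (c, round(100 * c / n, 1)) for resp, c in counts.items()}


def crosstab(rows, group, item):
    """Return {group_value: Counter of responses} for one grouping variable."""
    table = {}
    for row in rows:
        table.setdefault(row[group], Counter())[row[item]] += 1
    return table


overall = frequencies(records, "willing_to_use")
by_role = crosstab(records, "role", "willing_to_use")
by_status = crosstab(records, "trainee_status", "willing_to_use")
```

Because role and trainee status are separate groupings, the same participant is counted once in each crosstab (e.g. a nursing student appears under “nurse” in one table and under “student” in the other).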

Results

Forty (n = 40) participants (5 clinicians, 19 nursing students, and 16 medical students) pilot tested CommSense and completed exit surveys. The majority of participants were aged 18–24 (n = 29, 72.5%), female (n = 32, 80%), and White (n = 24, 57.1%). All clinicians in the sample (n = 5, 100%) practiced in palliative care, and almost all (n = 4/5, 80%) had more than 10 years of clinical experience; one clinician (n = 1/5, 20%) had been practicing between 1 and 5 years.

Demographics

See Table 3 for additional demographic details.

Participant demographic information

Could select more than one option. SOM: School of Medicine student; SON: School of Nursing student; RN: Registered Nurse; MD: Doctor of Medicine; NP: Nurse Practitioner.

Communication comfort and experience

Overall, participants most commonly reported having “a little” experience communicating with seriously ill patients (n = 22, 55%), having “a little” formal communication training (n = 21, 52.5%), and being “somewhat uncomfortable” communicating with seriously ill patients (n = 12, 30%) (Table 4). When asked “how would you rate your clinical communication skills, overall, on a scale from 1 to 10 (1 = poor, 10 = excellent),” half of the overall sample (n = 20, 50%) rated themselves as either a 7/10 (n = 12, 30%) or an 8/10 (n = 8, 20%). All clinicians (n = 5, 100%) rated their clinical communication skills as a 9/10 (n = 2, 40%) or a 10/10 (n = 3, 60%). The distribution of student responses was wider, with students rating their clinical communication skills between 3/10 and 5/10 (n = 5, 14.3%), between 6/10 and 8/10 (n = 27, 77.1%), or as a 9/10 (n = 3, 8.6%).

Self-reported communication skills and training, shown by total sample, trainee status, and role.

Trainee status: “student” includes medical and nursing students; “clinician” includes licensed and practicing nurses and physicians. Role: “nurse” includes licensed nurses and nursing students; “physician” includes licensed physicians and medical students.

Use of wearable devices

Almost 60% of the total sample (n = 23/40, 57.5%; students, n = 20/35, 57.1%; clinicians, n = 3/5, 60.0%) reported regularly wearing a smartwatch or similar device; of those, all but one reported wearing the device daily and regularly in the clinical setting (n = 22, 95.6%), and 100% (n = 23) reported receiving some type of feedback from the device (e.g. fitness-related feedback (n = 23); message notifications (n = 22); sleep data (n = 1); and weather alerts and GPS tracking (n = 1); respondents could select more than one type of feedback). The one individual (n = 1, 4.3%) who reported not wearing their smartwatch regularly in the clinical setting reported it was because “it's distracting to me.”

Of the 17 total participants (n = 17, 42.5%) who reported not regularly wearing a smartwatch or similar device, reasons included the following (participants could check all that apply): never tried it but am open to it (n = 7); never tried it and am not interested (n = 1); too expensive (n = 7); don’t want to wear a watch (n = 1); and other (n = 6).

Perceptions of CommSense and perceived benefits

Overall, the majority of participants “somewhat agreed” or “strongly agreed” that CommSense had significant potential to increase awareness of the importance of patient–clinician communication, help healthcare organizations provide higher-quality healthcare, and facilitate more compassionate communication between clinicians and patients and their family members. Figures 2 to 4 present comparisons of responses between the total sample, by role (nursing students and licensed nurses compared to medical students and licensed physicians), and by trainee status (student nurses and medical students compared to licensed and practicing nurses and physicians). All practicing clinicians (n = 5, 100%) “strongly agreed” that CommSense could increase awareness of the importance of patient–clinician communication and help clinicians communicate more compassionately with patients and their family members.

Participant agreement with the statement: “Feedback from CommSense could increase awareness of the importance of patient–clinician communication” (strongly disagree to strongly agree), by total sample, role, and trainee status. Role: “nurse” group includes licensed nurses and nursing students; “physician” group includes licensed physicians and medical students. Trainee status: “student” group includes medical and nursing students; “clinician” group includes licensed and practicing nurses and physicians.

Participant agreement with the statement: “Feedback from CommSense could help healthcare organizations deliver higher-quality healthcare” (strongly disagree to strongly agree), by total sample, role, and trainee status. Role: “nurse” group includes licensed nurses and nursing students; “physician” group includes licensed physicians and medical students. Trainee status: “student” group includes medical and nursing students; “clinician” group includes licensed and practicing nurses and physicians.

Participant agreement with the statement: “Feedback from CommSense could help clinicians communicate more compassionately with patients and their family members” (strongly disagree to strongly agree), by total sample, role, and trainee status. Role: “nurse” group includes licensed nurses and nursing students; “physician” group includes licensed physicians and medical students. Trainee status: “student” group includes medical and nursing students; “clinician” group includes licensed and practicing nurses and physicians.

Almost 90% of participants (n = 35, 87.5%) “somewhat agreed” (n = 23, 57.5%) or “strongly agreed” (n = 12, 30%) that they were comfortable with the idea of CommSense recording their clinical conversations; only two participants (n = 2, 5.0%) “somewhat disagreed” and no participants “strongly disagreed” with this statement. Similarly, most participants “strongly agreed” (n = 15, 37.5%) or “somewhat agreed” (n = 20, 50%) that they were comfortable with CommSense assessing their communication skills; no participants disagreed with this statement.

Concerns about privacy varied. When asked if “CommSense makes me concerned about privacy/confidentiality,” six participants (n = 6, 15.0%) “strongly disagreed” and six (n = 6, 15.0%) “somewhat disagreed”; nine (n = 9, 22.5%) neither agreed nor disagreed; twelve (n = 12, 30.0%) “somewhat agreed”; and seven (n = 7, 17.5%) “strongly agreed.” Of note, despite these concerns, 92.5% (n = 37) of all respondents agreed they would be willing to use CommSense in the future (strongly agreed, n = 23, 57.5%; somewhat agreed, n = 14, 35.0%); 7.5% (n = 3) indicated they neither agreed nor disagreed, and no participants disagreed with the statement.

Feedback and data sharing preferences

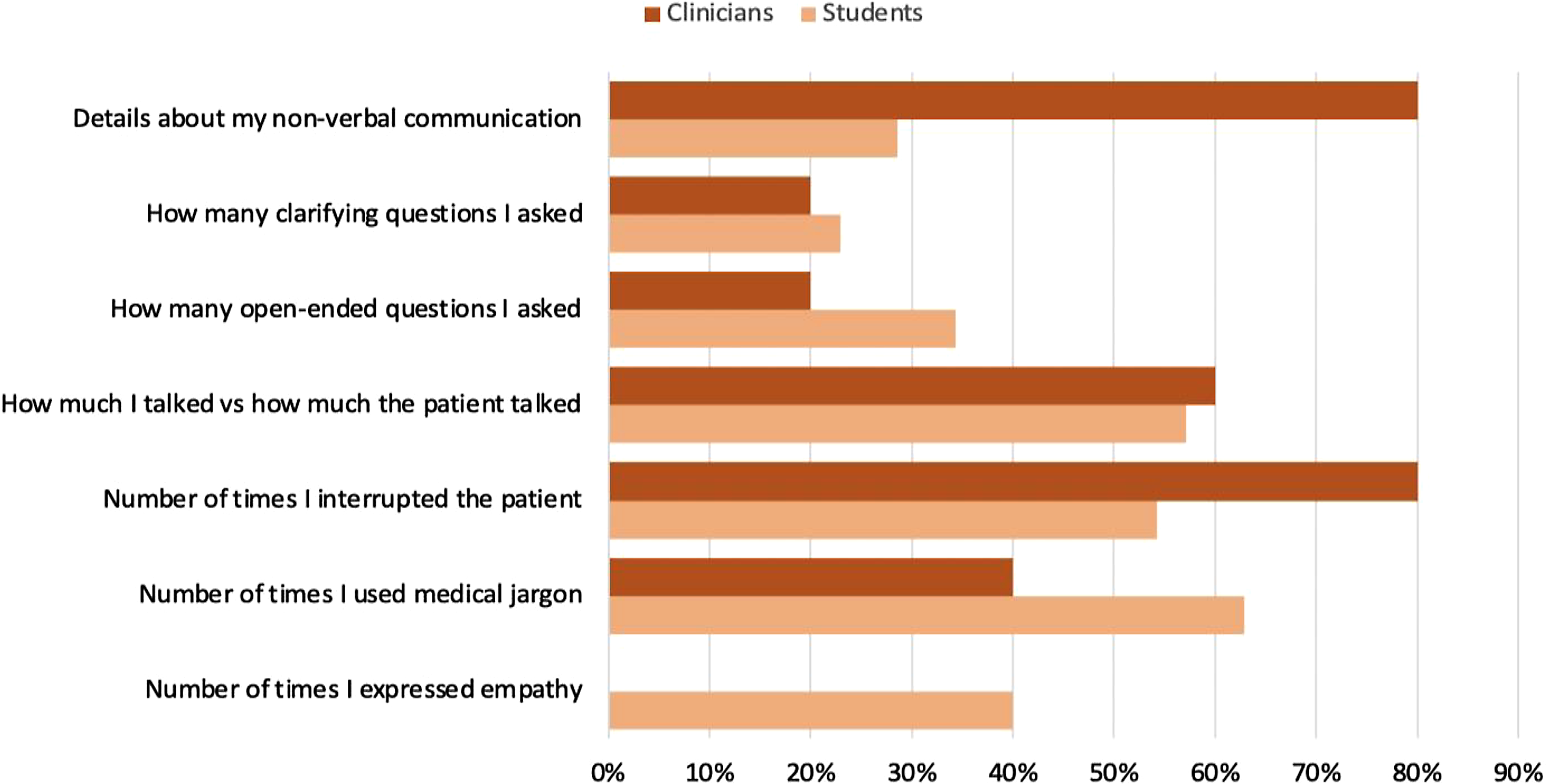

Participants were asked to select the types of feedback they would be most interested in receiving from CommSense about their interaction with a patient (could select their top three choices). For the overall sample, the most preferred feedback included use of medical jargon (60%), speech dominance (58%), and number of interruptions (58%) (Figure 5). By role (Figure 6), physicians rated number of interruptions (68%), medical jargon and speech dominance (both 58%), and number of open-ended questions (47%) as most preferred compared to nurses who rated medical jargon (62%), speech dominance (57%), and interruptions and details about non-verbal communication (both 48%) as most preferred. For clinicians (Figure 7), preferred metrics for feedback included number of interruptions and details about non-verbal communication (both 80%), speech dominance (60%), and use of medical jargon (40%); for students, the most preferred feedback included use of medical jargon (63%), speech dominance (57%), and number of interruptions (54%).

Preferred feedback from CommSense, by total sample (n = 40). Respondents could select their top three choices.

Preferred feedback from CommSense, by role: physicians (n = 19); nurses (n = 21). Respondents could select their top three choices. Physician group includes practicing physicians and medical students. Nurse group includes practicing nurses and nursing students.

Preferred feedback from CommSense, by trainee status: clinicians (n = 5); students (n = 35). Respondents could select their top three choices. Student group includes nursing and medical students. Clinician group includes practicing physicians and nurses.

When asked how they would like to receive feedback about their communication performance (participants could “select all that apply”), the most preferred method overall was post-interaction summary statistics (e.g. a composite score for “clarity” or “empathy”) (n = 38 responses, 47.5%), followed by longitudinal data to show trends in communication performance over time (e.g. improvement in using active listening over the past 3 months) (n = 31 responses, 38.8%) and, lastly, real-time feedback during the interaction (e.g. a vibration on the watch when excess medical jargon is being used) (n = 9 responses, 11.3%). Two participants selected “other” for this question and expressed a desire to have all three options (“post-interaction and longitudinal data would be most useful although having the option of real-time feedback would be helpful as well”), and another participant astutely recognized the need for customization of metrics with the comment, “the vibration for medical jargon is neat, but would be nice if this was ‘intelligent’ as sometimes patients are in the medical field or prefer the jargon.”
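Because participants could select more than one feedback method, the percentages reported above appear to be shares of all responses rather than shares of participants. The short Python sketch below reproduces that arithmetic from the counts given in the text; the variable names are illustrative, and the per-participant figures are a derived, alternative view rather than values reported in the study.

```python
# Counts as reported in the text; "other" = 2 reflects the two participants
# who selected that option.
counts = {
    "post-interaction summary": 38,
    "longitudinal trends": 31,
    "real-time feedback": 9,
    "other": 2,
}

total_responses = sum(counts.values())  # 80 selections in total
n_participants = 40

# Share of all responses (matches the reported 47.5%, and 38.8% and 11.3%
# when 38.75 and 11.25 are rounded half up).
share_of_responses = {k: 100 * v / total_responses for k, v in counts.items()}

# Alternative view (an assumption, not reported in the text): share of
# participants choosing each option, given each could pick an option once.
share_of_participants = {k: 100 * v / n_participants for k, v in counts.items()}
```

Read this way, post-interaction summary statistics account for 47.5% of responses but would have been selected by 38 of the 40 participants.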

Open-ended responses

User interaction with the actual watch application was limited for this pilot, as CommSense was simply turned on by a member of our research team and passively recorded the conversation. Reported user concerns were related to the bulkiness of the watch and the importance of having the application integrated with a user's existing wearable (i.e. not having to wear a second smartwatch). Consistent with the quantitative survey items, there was strong consensus among participants that CommSense could be helpful in improving communication. Responses focused on the advantages of receiving unbiased, objective feedback from CommSense, particularly related to speech dominance and use of medical jargon; the helpfulness of the technology in clinical rotations for students; and the potential for feedback from CommSense to improve the patient experience. While most comments were positive, participants expressed concern that feedback from CommSense could be used inappropriately for clinician evaluations or student grades; that those who may benefit most may be most resistant to using the technology; that data storage, security, and access are critically important; and that assigning “negative” or “positive” labels to communication metrics could be problematic. See Table 5 for a summary of representative responses.

Summary of representative responses to open-ended survey items.

Discussion

We are encouraged that this initial pilot work demonstrated a high level of acceptability and feasibility of the CommSense technology in a sample of currently practicing and future clinicians. We are particularly encouraged that even though participants tested CommSense in a simulated setting, they reported strong interest in and receptivity to using the technology in the actual clinical setting and perceived CommSense as very beneficial at the individual, interpersonal, and organizational levels. Our results contribute to the health communication literature by proposing a new approach to measuring communication performance and offering a strategy that could be helpful to longitudinally assess the impact of communication training programs in the clinical arena. This approach aligns with the American College of Physicians' recommendation to create real-time monitoring and feedback on communication performance for clinicians.34

We view our list of CommSense metrics not as an exhaustive or definitive list but instead as a starting point for this early-stage research. Many of the items we included (e.g. jargon and responding to emotion) are consistent with continued needs to ensure best practice in oncology and palliative care communication.5 We see significant potential for technology such as CommSense to provide critical support in helping evaluate the impact of communication skills training, another key and ongoing need.10 As machine learning and NLP continue to advance, we envision the future ability of CommSense to be adapted to detect specific, customized metrics. For example, if a healthcare provider completes communication training such as VitalTalk48 or the COMFORT8 curriculum, then their CommSense wearable could be programed with specific outcome measures relevant to these communication skills training programs.

The higher number of nursing and medical student participants skewed the age of our overall sample (younger) and likely influenced results in other ways, such as student participants reporting less clinical experience and comfort with communication; this group may also have had a greater openness to wearing a smartwatch. Due to the unbalanced sample, results that compare clinicians to students must be interpreted cautiously; we present them in this paper because we feel there are some potentially helpful signals despite the small number of clinicians. For example, all clinicians rated themselves as highly proficient in patient–clinician communication; this is helpful to know, as CommSense could potentially alter a seasoned clinician's self-perceived confidence in communication (e.g. clinicians feel they are doing a great job, but CommSense presents data that may threaten this assumption); for some, this may be motivating, for others, discouraging. Relatedly, while there was general consensus that the preferred way to receive feedback from CommSense would be as post-interaction summary statistics, some participants expressed an interest in real-time haptic feedback and emphasized they would want concrete examples of how to improve (e.g. “if I used too much medical jargon, offer alternative ways to phrase it,” or “if I’m not being empathetic to give examples of ways to offer empathy”). This underscores the importance of thoughtfully presenting feedback from CommSense in a way that is most constructive and conducive to positive change and of providing options and autonomy to users regarding how they would like to receive feedback.

There was general consensus among participants that medical jargon, number of interruptions, and speech dominance were the most important communication performance metrics on which to receive feedback. Interestingly, the number of empathic statements, open-ended or clarifying questions, and details about non-verbal communication were rated as lower feedback priorities by the overall sample, although some participants considered these metrics more important. Additionally, some participants astutely recognized and commented that assigning metrics a binary label of “good” or “bad” could be inappropriate. For example, a high use of medical jargon could be problematic in some conversations but appropriate in others, such as when the patient is also a clinician in a similar field; likewise, interrupting the patient may be necessary if an urgent medical history is required during an emergency. These examples illustrate that caution is needed in labeling communication behaviors as “positive” or “negative” and highlight the critical importance of contextualizing feedback from CommSense based upon patient characteristics and preferences, as well as specific clinical situations. Contextualization may help alleviate concerns expressed by some participants that CommSense feedback could be used inappropriately in evaluations or create discord among team members.
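To make two of these prioritized metrics concrete, the Python sketch below shows one possible way to compute speech dominance and clinician interruptions from timestamped speaker turns. The definitions used here (speech dominance as the clinician's share of total speaking time; an interruption as a clinician turn that starts before the preceding patient turn has ended), the Turn structure, and the example timings are all assumptions made for illustration; they are not the definitions or algorithms used by CommSense.

```python
from dataclasses import dataclass


@dataclass
class Turn:
    speaker: str  # "clinician" or "patient"
    start: float  # seconds from the start of the conversation
    end: float


def speech_dominance(turns, speaker="clinician"):
    """Assumed definition: speaker's share of total speaking time (0.0 to 1.0).

    Overlapping speech is counted for both speakers.
    """
    total = sum(t.end - t.start for t in turns)
    if total == 0:
        return 0.0
    own = sum(t.end - t.start for t in turns if t.speaker == speaker)
    return own / total


def count_interruptions(turns, speaker="clinician"):
    """Assumed definition: speaker starts before the other party's prior turn ends."""
    ordered = sorted(turns, key=lambda t: t.start)
    count = 0
    for prev, cur in zip(ordered, ordered[1:]):
        if cur.speaker == speaker and prev.speaker != speaker and cur.start < prev.end:
            count += 1
    return count


# Made-up example timings, for illustration only.
example = [
    Turn("patient", 0.0, 6.0),
    Turn("clinician", 5.0, 15.0),  # begins while the patient is still speaking
    Turn("patient", 15.0, 18.0),
    Turn("clinician", 18.0, 24.0),
]
```

As the participants' comments suggest, raw values like these would still need context before being labeled “good” or “bad”; an overlap in an emergency history is not equivalent to one in a goals-of-care conversation.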

While participants overall expressed enthusiasm for CommSense, recognized its potential benefit, and reported a high likelihood of using CommSense in the future (it is noteworthy that no participants said they would not be willing to use CommSense in the future), some important concerns were raised that must be considered in the scaling-up of such technology. Participants acknowledged the importance of privacy and confidentiality and the potential risk of data breaches. This is a critical point, as concerns related to patient privacy may be a leading barrier to implementation of CommSense. Clearly, any device that is recording sensitive healthcare-related conversations must adhere to stringent data security and HIPAA-compliant protocols and have built-in safeguards, such as automatically “turning off” when the interaction has ended. Research teams will need to work in close collaboration with health systems and institutional information technology teams to ensure that the use of CommSense (and related technologies) meets the highest security standards related to data capture, processing, sharing, and storage. Likewise, a plan to ensure consent of all participants involved in the interaction would be needed; this could be difficult and complex in in-patient or high-traffic clinical settings. To address concerns about cost, enhance potential for scalability, and ensure data security features, employers would ideally provide the mobile/wearable device on which CommSense runs. In many organizations, clinicians are provided with a tablet or smartphone; CommSense could be loaded onto such devices or, with the right safeguards, downloaded onto an individual's preexisting personal smartwatch, which was a preference expressed by a number of participants.

We observed some interesting findings related to conducting the conversation simulations that may be helpful to others engaged in similar research. Some participants had an overall demeanor and way of speaking that was so therapeutic that they were able to make the more overtly and purposefully “negative” elements of the conversational script actually sound therapeutic. This finding may warrant further exploration. We also observed that participants spoke faster through negative elements of the conversation scripts, and speech rapidity may be an interesting metric to consider for future conversational analysis. Additionally, most students needed help with some of the medical terminology, as they had not yet learned or used it, and we quickly realized that while interruptions happen frequently in natural speech, they are actually quite difficult to simulate. Lastly, some participants expressed that reading the less therapeutic scenarios was distressing; in hindsight, incorporating a more formal and robust post-simulation debriefing process would have been helpful.
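As an illustration only, speech rapidity could be operationalized as words per minute computed from timestamped transcript segments. The sketch below is not the CommSense implementation; the `Segment` structure, its field names, and both helper functions are hypothetical and assume that a transcript with per-segment start and end times is available.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    """One timestamped stretch of speech (hypothetical structure)."""
    speaker: str
    start_s: float  # segment start time, in seconds
    end_s: float    # segment end time, in seconds
    text: str       # transcribed words for this segment


def words_per_minute(segment: Segment) -> float:
    """Speech rate of a single segment, counting whitespace-separated words."""
    duration_min = (segment.end_s - segment.start_s) / 60.0
    if duration_min <= 0:
        raise ValueError("segment must have a positive duration")
    return len(segment.text.split()) / duration_min


def rate_by_speaker(segments: list[Segment]) -> dict[str, float]:
    """Overall words per minute for each speaker, pooled across segments."""
    totals: dict[str, tuple[int, float]] = {}
    for seg in segments:
        words, seconds = totals.get(seg.speaker, (0, 0.0))
        totals[seg.speaker] = (
            words + len(seg.text.split()),
            seconds + (seg.end_s - seg.start_s),
        )
    # Skip speakers with no recorded speaking time to avoid dividing by zero.
    return {spk: w / (s / 60.0) for spk, (w, s) in totals.items() if s > 0}
```

Comparing the rate of segments tagged as “negative” with the rate of the remaining segments for the same speaker would be one simple way to examine the pattern described above; as with the other metrics, any such value would need to be interpreted in context rather than labeled as inherently good or bad.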

Future directions

We see significant potential for CommSense and similar technologies to support clinicians, as well as other professionals and contexts where quality communication is critical (for example, perhaps in couples’ therapy). Future work will include scaling up and deploying CommSense in the real clinical arena with a more diverse population of clinicians; gathering feasibility and acceptability data from patients; continuing to evaluate technical capabilities of CommSense; exploring how to best customize metrics and link them to relevant patient-centric and organizational outcomes; and building out the mobile application to optimally share data analyzed by CommSense with end-users. We are particularly interested in evaluating the ability of CommSense to reduce disparities in healthcare-related communication and could explore testing CommSense with discordant patient and clinician dyads (race/ethnicity, educational background, etc.). Moving forward, we anticipate that establishing the metrics for CommSense will be a dynamic process, as the science of communication continues to evolve. For example, future work could include more novel and innovative metrics, such as those related to joy and connection expressed at the end of life.49 This pilot focused on face-to-face interactions, but we also see strong potential for CommSense to support remote/telehealth interactions or assist with evaluation of text messaging or other electronic documentation.

Limitations

A limitation of this study is that CommSense was tested in a simulated setting using scripted scenarios. Although the ultimate objective of this research is to deploy CommSense in the clinical setting with real patients, this initial step, compatible with the scope of the pilot study, was essential to evaluate preliminary data fidelity and user acceptability and feasibility. Future work will deploy CommSense in the clinical arena with real patients. Additionally, despite our recruitment efforts, enrolling busy clinicians and coordinating their schedules with the simulation lab and study team availability proved extremely challenging. This resulted in a sample that was unbalanced between students and clinicians. It is also important to note that our clinician sample was composed entirely of palliative care clinicians with experience and likely a particularly vested interest in quality communication. Our sample is less unbalanced when viewed by physician versus nurse participants; however, considering the sample in this way likely obscures key differences between practicing clinicians and students related to their experience with direct patient care. Our small sample size also limited our ability to test for statistical significance between the groups; however, this was not an intended aim of this pilot study. Our review of the communication literature focused on the past 10 years, as it was beyond the scope of this pilot study to conduct an exhaustive systematic review; it is possible that some communication best practices were not captured in our review. While we attempted to be as systematic and objective as possible, deciding on communication “best practices,” how to operationalize communication metrics for detection by CommSense, and how to label and tag portions of the Ground Truth logs was inherently subjective. In the end, we grouped together metrics that we felt were closely related and difficult to parse (e.g. the difference between “empathy” and “positive emotion”). It is also important to note that the initial focus of CommSense is on verbal/spoken communication; future work will incorporate more non-verbal aspects of communication, such as body gestures. Lastly, we asked participants about how they would like to receive feedback regarding their communication performance, but we did not actually provide feedback to participants in this pilot study; this is a key goal for future work.

Conclusion

Overall, participants evaluated CommSense as having significant potential to help improve patient–clinician communication and healthcare delivery, reported being willing to use the technology in clinical practice, and were eager to see data collected by CommSense. Although participants expressed a willingness to use CommSense, there were concerns regarding privacy and confidentiality; attention to data protection will be critical in future studies of CommSense with real patients. It will also be important to test CommSense with a more racially and ethnically diverse sample and with a larger number of actively practicing clinicians. Clinician–patient communication is extremely complex. Our pilot work suggests CommSense may be one valuable tool in a large and diverse toolbox to help clinicians learn about their communication patterns, track and measure progress towards communication goals, and ultimately improve patient outcomes. Additionally, appropriate metrics to evaluate communication are critical but can be challenging to operationalize. We propose a core set of evidence-based communication “best practices” that can be extracted from conversations using technology applications such as CommSense to provide real-time communication performance feedback with the goal of enhancing health equity.

Supplemental Material

Supplemental material, sj-docx-1-dhj-10.1177_20552076231184991 for Feasibility and acceptability testing of CommSense: A novel communication technology to enhance health equity in clinician–patient interactions by Virginia LeBaron, Tabor Flickinger, David Ling, Hansung Lee, James Edwards, Anant Tewari, Zhiyuan Wang and Laura E Barnes in DIGITAL HEALTH

Footnotes

Acknowledgements

The authors would like to thank Mehdi Boukhechba, Toby Campbell, Lee Ellington, S.M. Nusayer Hassan, Anna Kutcher, Daniel Wilson, Shayne Zaslow, and Kara Fitzgibbon for their assistance and guidance in this research.

Contributorship

VL, TF, DL, and LEB conceived the study. VL, TF, DL, and JE were involved in researching the literature. VL led protocol development and gained IRB approval. VL, JE, TF, DL, HL, AT, and ZW were involved in participant recruitment and data collection. VL led data analysis and wrote the first draft of the manuscript. All authors were involved in the interpretation of results and reviewed and edited the manuscript and approved the final version of the manuscript.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Ethical Approval

This study was approved by the University of Virginia Social and Behavioral Sciences Institutional Review Board (UVA SBS IRB #4985).

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the University of Virginia Engineering in Medicine Seed Pilot Program.

Guarantor

VL.

Supplemental Material

Supplemental material for this article is available online.
