Abstract

Background

ChatGPT has recently emerged as a disruptive technology with the potential to affect various societal dimensions, including pharmacy practice. In Thailand, community pharmacists are navigating a transition as patients increasingly rely on digital tools for healthcare recommendations. This study explores the attitudes of community pharmacists in Hatyai, one of Thailand's most populous cities, towards the integration of ChatGPT into pharmacy services.

Method

ChatGPT-3.5 was used to generate responses to three Thai-language questions concerning medication use in special populations. These responses were incorporated into a questionnaire and evaluated using a Likert scale from 1 to 5. Consenting community pharmacists rated the responses and participated in an in-depth interview.

Results

Most participants rated the responses favorably, with scores of 4 and 5 accounting for at least 60% of the ratings. Only a small proportion of ratings, ranging from 20% to 40%, were doubtful (score of 3) or in disagreement. Moreover, open opinions from the interviews suggested that participants viewed ChatGPT as a capable assistant, as it provided fast yet reasonably accurate information in Thai.

Conclusion

The findings indicate that community pharmacists view ChatGPT as a capable assistant, while noting the need for further refinement. The study underscores the importance of pharmacists proactively adapting to technological advancements, particularly those affecting patient safety, to enhance healthcare delivery and optimize treatment outcomes.

Introduction

The advancement of search engine technology has catalyzed many changes across human society. People in the modern world have become accustomed to searching for what they need at their fingertips via smart devices, and health information is one of the domains that individuals can explore at their discretion. However, the legitimacy of this information is not always guaranteed.1,2 Here, legitimacy refers to the degree to which information accurately reflects established pharmacotherapeutic knowledge, is relevant to the target question, covers its key points, and provides appropriate citations and acknowledgment of limitations.

The introduction of large language models (LLMs) such as ChatGPT, launched in late 2022, has stirred significant discussion and interest, including among academic researchers.3,4 ChatGPT exhibits a variety of competencies, ranging from drafting travel plans to solving computational problems and offering coding suggestions. However, there have been reports of a phenomenon known as “hallucination,” in which ChatGPT unintentionally produces fabricated information as a consequence of how its language model generates text.5,6 Moreover, because it generates text-based information, it can, if misused, produce various types of misinformation more rapidly than ever before. This represents a negative facet of the disruptive era.7

Community pharmacists in Thailand are considered primary healthcare providers. The regulation of drug dispensing in Thailand differs considerably from that in other countries. Several drugs that are prescription-only in the US or Australia, such as amoxicillin and certain nonsteroidal anti-inflammatory agents, are not classified as such in Thailand.8 Under Thailand's legislative framework for drug dispensing, these drugs may be dispensed at community pharmacies at the discretion of, and with justification from, pharmacists. Moreover, owing to Thailand's high population density and substantial differences in household income, many patients seek more affordable treatment at community pharmacies rather than at public hospitals or private clinics.9 Consequently, a large and diverse range of patients visit drugstores daily, occasionally requiring the pharmacists in charge to adopt varied management approaches. An emerging type of patient in Thailand, similar to those in other countries, relies on news shared via social media or on standard search engines to self-diagnose before seeking medication at drugstores.10 These patients may come from various educational backgrounds; hence, their information sources can range from a Google search to, in more extensive cases, academic articles. It is therefore conceivable that patients will soon use AI-assisted search tools such as ChatGPT to research pharmacotherapy-related health information before purchasing medication. However, despite gradual progress in health literacy in Thailand, significant challenges persist.
Data suggest that the elderly and individuals residing in remote areas are particularly affected by poor health outcomes due to limited health literacy.11,12 Factors such as illiteracy, socioeconomic status, and occupation significantly affect health literacy levels. This is exemplified by the widespread sharing of fake news and unreliable health tips, which individuals follow without recognizing the potential harm. These issues highlight the complexity of the emerging patient population in Thailand.

The integration of AI tools, particularly ChatGPT, into pharmacy practice has become increasingly common and a topic of recent interest. Examples include their use in medication therapy management and in identifying drug–drug interaction (DDI) problems. The free version of ChatGPT (ChatGPT-3.5) proved partially effective, particularly in identifying potential DDIs and explaining them in layman’s terms.13 In contrast, the advanced, paid model (ChatGPT-4.0) handled medication therapy management cases of varying complexity and even provided appropriate follow-up plans and alternative treatment options.14 These findings suggest that ChatGPT could be useful, at least as an alternative support tool. It could offer immediate, easy-to-comprehend information, which would be valuable when handling high case volumes in some pharmacy settings. Moreover, it could potentially assist with patient education and medication counseling, as well as facilitate routine administrative tasks.

Although ChatGPT has shown promise in handling various complex tasks, particularly in English,3,17 one notable shortcoming is its limited proficiency in non-English languages such as Thai, Turkish, and Korean.15,16 Previous preliminary studies have highlighted inconsistencies in the quality of its responses to health-related questions in Thai, although the responses were generally deemed acceptable.18 However, research on the use of ChatGPT in Thai remains scarce, particularly for specific purposes such as providing pharmacotherapy information for special populations. The current study aims to gather community pharmacists’ attitudes through a practical evaluation conducted in Thai. Using in-depth interviews, it seeks to ascertain their perspectives on the acceptability of ChatGPT’s responses and the potential for implementing it in pharmacy practice.

Methods

Study design and setting

Given the complexity of pharmacy services, a qualitative approach using in-depth interviews was considered the most suitable way to extract valuable insights from pharmacists. In-depth interviews are also appropriate when diverse responses from participants are anticipated.19 The perspectives on implementing ChatGPT derived from this sample could offer insights into the evolving direction of pharmaceutical care in Thailand.

This qualitative, cross-sectional study was conducted in Hatyai district, Songkhla province, one of the most populous cities in southern Thailand. The investigators aimed to include community pharmacists from different sub-districts of Hatyai with high and evenly distributed population density. They also aimed to recruit pharmacists with varying years of community pharmacy experience to determine whether their views on the interview topics differed with professional tenure.

The investigators developed an interview guide in Thai (Supplementary A), which was content-validated by expert panelists (Supplementary B). The topics focused on participants’ impressions of and attitudes towards the information that ChatGPT (GPT-3.5-turbo) generated in response to the input questions. Three questions written in Thai, covering drug use in special or vulnerable populations (pediatric, pregnant, and geriatric patients), were posed to ChatGPT.

To simulate the way users browse the internet for information, three responses were generated from ChatGPT for each question without modifying the question, yielding nine responses in total. The investigators compiled the questions and their corresponding responses into a single three-page document, which was presented to the participants. Participants evaluated each response and scored the legitimacy of its information in terms of accuracy, relevance, completeness, citation, and acknowledgment of limitations. The evaluation criteria were defined as follows:

Accuracy: how closely the texts generated by ChatGPT align with established pharmacotherapeutic principles and treatment guidelines, including the correctness of information about drug actions, indications, contraindications, adverse effects, and drug interactions.

Relevance: the extent to which the texts address the target question, that is, whether the information provided is pertinent to the topic and free of unnecessary details.

Completeness: how well the generated texts cover all essential aspects of the target question, including those specific to the Thai language.

Citation and acknowledgment of limitations: whether the texts provide appropriate references to support ChatGPT's claims, acknowledge the limitations of the information provided, and recommend consulting health professionals for additional guidance.

The scoring system used a Likert scale ranging from strongly disagree (1) to strongly agree (5). This process continued until all nine responses had been rated.
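The rating design can be made explicit with a short sketch. It assumes only what the Methods and Results state (three questions, three unmodified regenerations of each, five participating pharmacists, a 1 to 5 Likert scale). The response labels follow the [Q2R1]-style notation used in the Results. The function names are hypothetical, and the intermediate scale labels (2 = disagree, 4 = agree) are assumed standard anchors rather than wording taken from the questionnaire.

```python
from itertools import product

# Study design as described in the Methods (labels follow the [Q2R1] notation).
QUESTIONS = ["Q1", "Q2", "Q3"]        # three Thai-language vignettes (Table 1)
REGENERATIONS = ["R1", "R2", "R3"]    # each question submitted three times, unmodified
N_PARTICIPANTS = 5                    # pharmacists who completed the study

# Likert anchors: 1 and 5 are named in the text; 2 and 4 are assumed standard labels.
LIKERT = {
    1: "strongly disagree",
    2: "disagree",
    3: "neutral / unable to decide",
    4: "agree",
    5: "strongly agree",
}


def response_labels():
    """Return one label per generated response, e.g. 'Q2R1' (hypothetical helper)."""
    return [q + r for q, r in product(QUESTIONS, REGENERATIONS)]


def check_rating(score):
    """Reject anything outside the 1-5 Likert range (hypothetical helper)."""
    if score not in LIKERT:
        raise ValueError(f"Likert score must be 1-5, got {score!r}")
    return score


labels = response_labels()
n_evaluations = len(labels) * N_PARTICIPANTS  # 9 responses x 5 raters

print(labels)
print(len(labels), n_evaluations)  # 9 45
```

The same 3 × 3 × 5 product gives the 45 evaluations reported in the Results.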

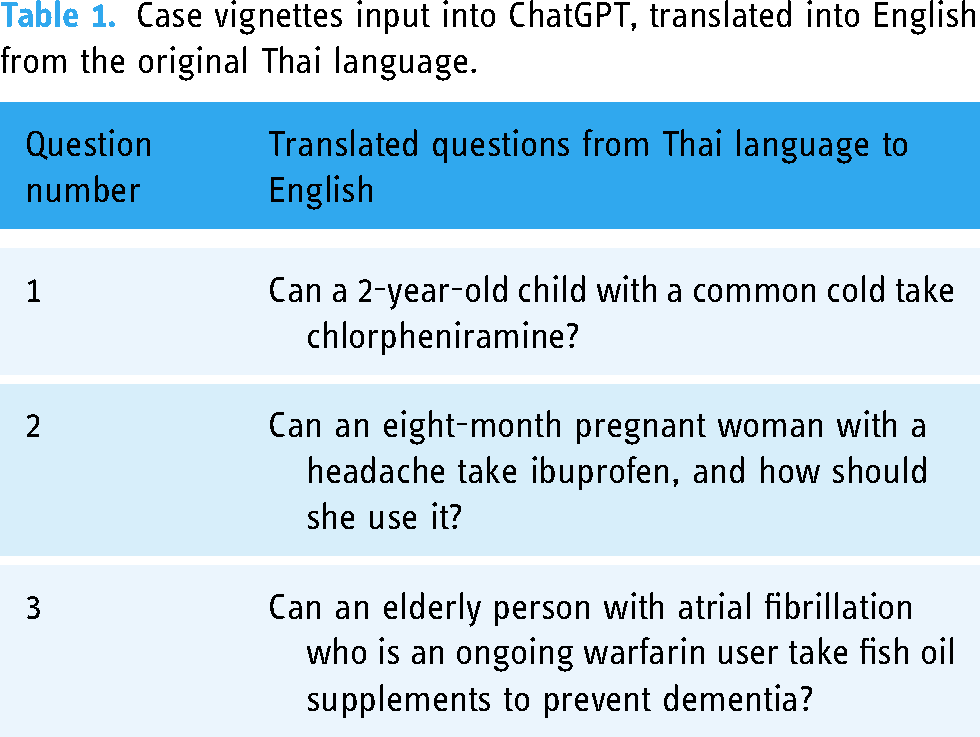

Subsequently, participants were asked to give their open opinions on the responses generated by ChatGPT, focusing specifically on the accuracy of the information and its implications as a source of healthcare information, especially in the context of Thailand and the Thai language. The interviews concluded with an open-ended discussion of the potential usefulness and threats of artificial intelligence such as ChatGPT. The case vignettes shown in Table 1 were chosen based on how commonly such cases are encountered, the prevalence of related misconceptions or doubts in Thailand, and the potential for serious health problems or consequences when drugs are used in special populations.

Case vignettes input into ChatGPT, translated into English from the original Thai language.

This study was exempted from ethical approval by the Ethical Committee of the Faculty of Pharmaceutical Sciences, Prince of Songkla University (memorandum PSU 108/66-3165, dated December 6, 2023).

Sampling and recruitment

The authors recruited community pharmacists in Hatyai district, Songkhla province, using snowball sampling guided by population density. Participants were contacted by telephone and were eligible if they (a) held a pharmacy license; (b) consented to audio recording; and (c) had worked as a community pharmacist in a store recognized as an Accredited Pharmacy by the Office of Pharmacy Accreditation (Thailand), the Pharmacy Council of Thailand. A minimum of five participants has been recommended for data collection through in-depth interviews.20

Data collection

Semi-structured interviews were conducted in person. Each participant was interviewed at their workplace in a scheduled session of approximately 1–1.5 h. All participants consented to audio recording, and the recordings were transcribed afterwards to safeguard against misrepresentation. Participants’ privacy was protected by anonymizing their identities, and all records were stored only on the investigators’ local computers. Additionally, the verbatim audio recordings were sent to all participants for review, in accordance with the Consolidated Criteria for Reporting Qualitative Research (COREQ) guidelines (Supplementary C). It was confirmed that the field investigators had no prior relationship with the participants before the interviews were conducted.

Interviews began after the research proposal had been presented and written informed consent obtained. Each interview opened with an introduction outlining its aim, the terminology to be used, and the expected duration and scope. All interviews were conducted between February and March 2024. All investigators involved in this study were certified in ICH Good Clinical Practice.

Data analysis

All transcripts were pseudonymized and then analyzed using the framework method. The data were coded in Microsoft Excel and subsequently analyzed in R with packages for data wrangling and visualization.

Thematic analysis was then performed on the interview responses from the open-opinion section (Supplementary D), in which participants were asked about various aspects of ChatGPT's impact on pharmacy practice.21 Several recurring themes were identified.

Results

Participant characteristics

In total, five participants were recruited and completed the entire process between February and March 2024. This number meets the recommended minimum for in-depth interviews.20 Participants’ experience in pharmacy practice ranged from two to thirty years. Participant characteristics are shown in Table 2. No non-participants were present during the interviews, and no repeat interviews were conducted.

Selected characteristics of community pharmacists, Hatyai, Songkhla, Thailand

Part 1: The extent to which community pharmacists agree with the data obtained from ChatGPT

Figure 1 summarizes the evaluation scores from all participants. Each panel illustrates the ratings given by community pharmacists to ChatGPT's three regenerated responses to one question. Thus, the total number of evaluations was three questions multiplied by three regenerated responses multiplied by five participants, giving 45 evaluations. When participants required direction, the field investigators provided guidance on the evaluation. This guidance was given conversationally as part of the interview and focused on three key elements: accuracy, relevance, and supporting evidence. Accuracy and relevance were assessed based on how closely the ChatGPT-generated texts aligned with established pharmacotherapeutic principles and guidelines and on their contextual appropriateness to Thailand. Because explicit supporting evidence can be difficult to obtain from ChatGPT, this element was assessed based on logical reasoning and sound principles, especially within the context of Thai society and language. These elements were categorized under the correctness and the citation and acknowledgment of limitations criteria.

Likert scores summarizing the responses of all participants in the study. Panels (A, C, and E) show the scores for items on accuracy, relevance, correctness, and citation and acknowledgement of limitations. Panels (B, D, and F) show the scores for items on participants’ willingness to rely solely on the information obtained from ChatGPT in practical situations. The Likert scale ranges from 1 (red; strongly disagree) to 5 (solid green; strongly agree).

Overall, participants tended to give favorable scores for accuracy and practical acceptability across all three questions and their regenerated responses. Scores of 4 and 5 were the most common, comprising at least 60% of ratings, while only a small proportion, ranging from 20% to 40%, fell into the doubtful (score of 3) and disagreement categories. In contrast, the scores indicating the likelihood of using ChatGPT's information in practical settings varied noticeably across questions and regenerated responses. Question 2 (Figure 1D), regarding ibuprofen use in the eighth month of pregnancy, drew varied reactions: 40% doubtful and 20% strong disagreement for response 1 [Q2R1], whereas most scores for response 3 [Q2R3] supported its practical use, with 20% doubtful. A comparable pattern was observed for Question 3, specifically for responses 1 [Q3R1] and 3 [Q3R3] (Figure 1F).
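Because each response was rated by five pharmacists, every rating contributes exactly 20% to that response's distribution, so the reported shares move in steps of 20%: “at least 60%” favorable corresponds to at least three of the five raters, and “20% to 40%” to one or two raters. The sketch below illustrates this tabulation. The ratings in it are hypothetical and are not the study data, and the function names are illustrative only.

```python
from collections import Counter


def likert_distribution(ratings):
    """Percentage of ratings at each Likert level (1-5) for one response."""
    if not ratings:
        raise ValueError("at least one rating is required")
    counts = Counter(ratings)  # missing levels count as 0
    n = len(ratings)
    return {level: 100 * counts[level] / n for level in range(1, 6)}


def favorable_share(ratings):
    """Combined percentage of scores 4 (agree) and 5 (strongly agree)."""
    dist = likert_distribution(ratings)
    return dist[4] + dist[5]


# Hypothetical ratings from five pharmacists for one response (NOT study data).
example = [5, 4, 4, 3, 2]

print(likert_distribution(example))
# {1: 0.0, 2: 20.0, 3: 20.0, 4: 40.0, 5: 20.0}
print(favorable_share(example))  # 60.0
```

With five raters, a single pharmacist changing a score shifts a response's favorable share by 20 percentage points, which is worth keeping in mind when comparing regenerated responses such as Q2R1 and Q2R3.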

To elaborate on the reasoning behind the scores, selected opinions from the interviews are presented here.

From Question 2, Response 1 [Q2R1]:

“The presented information requires more concrete supporting details before it can be used in practice.” (P01)

“The information obtained from ChatGPT lacks clarity, particularly regarding whether it is safe to use ibuprofen during the eighth month of pregnancy.” (P02)

From Question 2, Response 3 [Q2R3]:

“The response mentions fetal development and advises consulting a physician, which is considered a better approach.” (P01)

“The response was reassuring from a patient’s perspective. It also advised consulting a medical professional, or at the very least a pharmacist.” (P05)

Thus, even immediate regeneration by ChatGPT could yield substantially different responses with varying tones. For items that tended to receive less positive evaluations, such as willingness to rely solely on ChatGPT as a source (Figure 1B), participants mostly gave a neutral score (3, unable to decide). This in-between rating reflects their doubt and hesitation to trust ChatGPT fully without verification from additional credible sources, even though the accuracy and relevance scores for this question leaned toward favorable. This suggests that although AI-generated responses seem acceptable, participants, as pharmacists, still seek further confirmation to ensure patient safety and wellbeing, which is their primary commitment and a positive, responsible practice for healthcare providers.

Another notable aspect is the discrepancy between regenerated responses. Q3R1 in Figure 1F received quite different scores from the subsequent regenerated responses. This highlights the inconsistency of ChatGPT's output: the same question could yield noticeably different answers. A similar but smaller discrepancy was observed for Q2R1 in Figure 1D.

Several open opinions expressed during the evaluation section reflected a consensus on the role of ChatGPT as a supportive tool in pharmaceutical care. Participants mentioned that ChatGPT allows them to review and refresh their knowledge, assess the accuracy of information, and make preliminary judgments to address the presented scenarios swiftly. At the end of the first part of the interview, participants were asked whether they would seek additional sources of information after consulting ChatGPT. All participants recognized the need to seek further information, indicating a consensus that they would not depend solely on ChatGPT in their professional practice.

“It is beneficial that it provides reasoning behind its suggestions, but in reality, additional patient-specific information is needed to make an appropriate decision.” (P01)

“The answers were too vague and sometimes not conclusive.” (P03)

“What was provided is partially agreeable, but only to an extent.” (P05)

Part 2: To what extent would community pharmacists utilize the information from ChatGPT in real situations?

During the evaluation section, a follow-up interview question and participants’ open opinions were used to explore whether they would be willing to use the information in real situations. One obvious drawback of ChatGPT was its handling of less widely represented languages such as Thai. Participants noted that the Thai-language responses were ambiguous and confusing and gave the impression of machine translation. Overall, participants were generally satisfied with the information provided by ChatGPT but found that it lacked a seamless, human-like quality. Some, however, were impressed by the sequential presentation of information, which was easy to follow.

Most participants were willing to use ChatGPT only as one part of the information-searching process rather than relying on it alone. They were impressed by the speed and thoroughness of its responses but raised concerns about their accuracy and comprehensibility in Thai, suggesting that these issues could cause confusion and potential harm if the information were used without confirmation from healthcare providers. Therefore, most agreed that ChatGPT could be a good companion as a supporting tool alongside other methods, but not as the sole method. Nevertheless, participants would strongly prefer that its Thai-language output be refined. Selected statements from participants are presented here:

“If the information were read by patients, it could negatively affect their impression, as some irrelevant technical terms might be interpreted in different ways.” (P01)

“It is actually pretty good in terms of the order of information provided. However, it still seems to be an answer from an AI, which is somewhat confusing in Thai writing.” (P03)

“It tends to provide a lot of useful information; however, the large number of typos could change the meaning and diminish its legitimacy.” (P04)

“It is quite strange that the response, intended to be in Thai, has Chinese characters popping up.” (P04)

“It is absolutely confusing; however, upon careful reading, it is acceptable. It requires time to contemplate.” (P05)

Thematic analysis of open opinions on pharmacists’ attitudes towards ChatGPT

Usefulness of ChatGPT in providing health information

The participants generally showed a positive response towards the usefulness of ChatGPT in providing health information. They appreciated the quick retrieval of information as an immediate reference, which they found very beneficial for rechecking and addressing common queries efficiently. However, some participants also expressed the opinion that it was necessary to use multiple sources to verify the accuracy and reliability of the information provided by ChatGPT.

These responses can be grouped into the following categories:

Positive response: Some pharmacists found ChatGPT useful for quick information retrieval and as an initial reference.

“Yes, it is useful because I have never used it before. I think it can help pharmacists recheck information.” (P01)

Neutral, mixed, or negative responses: Others believed ChatGPT might be useful for general information but felt the need for more reliable and consistent references.

Representative quotes include:

“Initially, before reading the answers, I thought it might be somewhat usable for quick, rough answers before referring to other, more reliable sources. However, after looking at the answers, I felt they might not be usable. For example, generating three times gave different answers each time, in varying directions, making the answers seem scattered. It is not clear whether the references are reliable, and it seems necessary to specify where they come from.” (P02)

“I don’t really like it because using information from ChatGPT to buy medication may lack detail. Environmental factors, the patient’s condition, and diet are also important. Most pharmacists would generally not agree with it.” (P03)

Views on the validity of responses obtained from ChatGPT

When asked about the validity of ChatGPT's responses from the patients’ perspective, participants raised concerns regarding the potential risks and benefits of using ChatGPT. The following patterns of attitude were observed:

Concern about general information and detail

The participants expressed concerns about the broad and nonspecific nature of ChatGPT's responses. They suggested that follow-up professional judgment is essential to verify and supplement the AI-generated information for accurate clarification. Additionally, they emphasized the need to assess the appropriateness of ChatGPT’s responses and provide further information as necessary, whether the patient may use the information for self-diagnosis in the future or the pharmacists use it to assist their work.

Representative quotes include:

“I agree with some answers because they match what I have learned, but the general public might not know, and so they come to ask pharmacists at the pharmacy. Nowadays, people bring information from Google or Pantip (a popular Thai webboard/forum) to ask about, and pharmacists have the duty to tell them whether the information is correct.” (P01)

“The information that the public receives may be too general. It is necessary to explain the information they have obtained in more detail and make it more precise. The information from ChatGPT is not wrong, but it is too broad and not specific. If someone comes to buy medication based on information from ChatGPT, the advantage is that they have already started to understand the question and have some information. The pharmacist’s duty is to explain it in more detail and make it more credible.” (P02)

“I think ChatGPT does not specifically direct patients to treat themselves. It mostly advises seeing a doctor, pharmacist, or specialist.” (P03)

The impact of AI on the role of pharmacists: strengths, weaknesses, and challenges

Participants perceived ChatGPT in various ways with respect to their professional practice. On the positive side, they viewed AI as a beneficial addition that could reduce workload, improve information accuracy, and enhance readiness. However, some raised concerns that the complexity of information provided to the public could have detrimental effects. Generally, participants showed little concern that AI would entirely replace human roles, particularly the role of pharmacy practitioners.

Representative quotes include:

“I think it is positive and beneficial. Pharmacists can work alongside AI, which helps reduce their workload and provides more accurate information. It is more about collaboration than diminishing the role of pharmacists. It is a supportive and positive development.” (P04)

“It is unlikely to have an impact because in healthcare, AI cannot replace humans 100%. There will always need to be people working behind the scenes.” (P05)

“Yes, because if the details are too extensive, patients will tend to believe the information they get from AI, TV, or radio more than what a pharmacist says. The more detailed it becomes, the harder the job will be, because pharmacists will have to explain more.” (P05)

At the end of the interview, participants were asked for their thoughts on the strengths and weaknesses of ChatGPT. Examples are shown in Table 3, and the complete list can be found in the Supplementary data.

Example of strengths and weaknesses of ChatGPT in pharmacy practices based on participant attitudes.

Discussion

The current era, characterized by significant technological disruption, has integrated numerous advanced fields into individuals’ daily lives. Automated chatbots capable of human-like conversation have emerged as one of its focal points.22 However, most LLM-based chatbots, including ChatGPT, were trained predominantly on English-language data.23 For people whose native language is not English, uncertainty is likely to be among their first impressions of such technology. Moreover, as this technology becomes increasingly prevalent in healthcare, a question arises: how do healthcare professionals perceive “Doctor ChatGPT,” the unlikely successor to “Doctor Google”?24

This preliminary qualitative investigation examined the perspectives of community pharmacists in Thailand on the potential application of ChatGPT in their practice. A group of volunteer pharmacists assessed the reliability and legitimacy of information provided by ChatGPT. The findings revealed that while participants were generally satisfied with the promptness of ChatGPT's responses, they were somewhat disappointed by the confusing Thai-language narration. Similarly, previous studies have observed that although ChatGPT can answer highly advanced questions and could potentially pass several medical examinations,25–27 its performance in certain languages, such as Thai and Chinese, or in specific contexts is relatively suboptimal.18,28–30 In the current study, the authors identified two significant aspects of the use of ChatGPT and its implications for local community pharmacies in Thailand from the practitioners’ standpoint.

Firstly, misinformation obtained from ChatGPT may be unintentional, stemming from inherent issues in its training. ChatGPT and other LLMs were trained primarily on a variety of internet sources and other text-based data, which are predominantly in English.23,31 Recognizing this limitation, several developers have sought to build LLMs specialized for particular languages.32,33 Accordingly, great caution should be exercised when using these English-oriented LLMs with other input languages, especially for specific purposes such as health information. Additionally, research suggests that the rapid generation and dissemination of fabricated information by AI chatbots may act as a new form of digital weaponry, underscoring the need for a vigilant system to mitigate these risks and protect public health.7 To examine the potential for misinformation further, the authors asked ChatGPT about the prescribing category of amoxicillin in Thailand, the US, and Australia. In reality, amoxicillin is categorized as a dangerous drug, or Class D, under Thailand FDA regulations, which allows a licensed, registered pharmacist to prescribe this medication at their drugstore if necessary. Meanwhile, amoxicillin is classified as a prescription-only medication in both the US and Australia. ChatGPT's English response (Supplementary Figure A) was inaccurate, stating that amoxicillin is prescription-only in Thailand. In contrast, the Thai-language response to the same question (Supplementary Figure B) appeared more accurate, possibly because the question was asked after the English query, allowing ChatGPT to draw on the preceding conversation.
Additional query regarding the classification of amoxicillin in Australia and the US using English as the medium was shown in Supplementary Figure C, which depicted accurate information.

The second significant finding was the potential role of ChatGPT as a capable companion in community pharmacy practice. Community pharmacists who participated in this study expressed interest in using ChatGPT, as most of them were previously unaware of its capabilities. Participants initially assumed that it was designed to be used only in English. However, when the investigators demonstrated its capability in the Thai language, the participants became curious and surprised. Disappointingly, when the participants reviewed ChatGPT's responses to the health-related questions posed, they found that its expertise was lacking and fell short of their expectations. The responses contained typographical errors, confusing sentence structures, irrelevant information, and seemingly random word choices, all of which could mislead readers if not carefully considered. Furthermore, some responses included fragments of other languages within the intended Thai text, compromising the consistency and professionalism of the information provided. Consequently, participants regarded ChatGPT only as a "capable" companion: useful in several respects, but requiring human oversight in delivering information. This finding aligns with literature from other contexts, such as French medical examinations and Korean occupational therapy assessments, which also document the challenges of using non-English languages with ChatGPT.15,34 However, the linguistic challenges found in this and similar studies should be viewed as technical feedback for further improvement rather than as criticism of the developers' shortcomings. Developers should, for instance, focus on improving the ability of language models to understand and generate non-English languages, taking into account the nuances and complexities of each language. Fine-tuning on, or expanding, the training data in a particular language would likely enhance both accuracy and comprehensibility.

The limitations of this study relate mainly to its methodological approach. This was a pilot qualitative study that used snowball sampling. Because this sampling method is non-random, some degree of selection bias was unavoidable. However, bias mitigation strategies were employed before the research was conducted. These included selecting participants based on specific characteristics (only pharmacists working in accredited drug stores were considered), stratifying by years of experience, and considering geographical and population factors. Through these strategies, the authors aimed to capture diverse viewpoints across generational cohorts. They also aimed to cover services provided in areas with varying population densities, thereby achieving a more realistic representation. Moreover, the small sample size could raise uncertainty about data saturation. This limitation was addressed during the interviews, each of which lasted around 1–1.5 h per participant, providing ample time for a complete extraction of opinions and discussion. Following the formal thematic analysis, the authors observed no additional distinct or relevant themes beyond those presented in the Results section. They therefore concluded that data saturation was achieved with the number of participants, the questionnaire, and the approach used in this study. Nevertheless, further research with a larger number of participants should be conducted to confirm the consistency of the present findings.

Pharmacists in Thailand's local communities have observed significant shifts in how patients make health decisions. These shifts include occasional reliance on unreliable internet rumors for health information, reflecting trends seen in other countries.35–37 At some point in the future, patients will inevitably approach nearby pharmacies and request specific medicines based on information obtained from LLMs. A proactive strategy would be the preferred approach to this issue. For example, the scope of pharmacovigilance could be extended to cover LLM-derived health information. Such an extension should also include recommendations to technology companies on the safe development and use of LLMs. In addition, local medical governance bodies, such as the Pharmacy Council of Thailand, should become more aware of changing patterns of patient behavior related to digital trends in healthcare. It would be beneficial to build professional understanding of the usefulness of AI in pharmacy practice, including the potential pitfalls that must be taken into account in real-life situations. This would be particularly valuable if pharmacy students were introduced to the topic early in their studies. Additionally, workshops offered by academic institutions on the applications of AI in pharmacy could benefit pharmacists who are already working full-time in various settings.

Conclusion

According to pharmacists' evaluations, ChatGPT demonstrated acceptable capabilities in providing information on the use of medicines in the Thai language. However, it is still far from ready for seamless integration into pharmacy practice or for use as a sole primary source of information. This study underscores the importance of integrating AI into healthcare, particularly in community pharmacy settings. As digital health continues to advance globally, pharmacists must remain informed about emerging trends, regardless of the language medium, to adapt their services effectively within the community. By embracing AI tools such as ChatGPT, pharmacists can align with global trends in digital health. As these technologies continue to improve, this will enhance pharmacists' ability to provide accurate and comprehensive patient care.

Supplemental Material

sj-docx-1-dhj-10.1177_20552076241283256 - Supplemental material for Exploring community pharmacists’ attitudes in Thailand towards ChatGPT usage: A pilot qualitative investigation

Supplemental material, sj-docx-1-dhj-10.1177_20552076241283256 for Exploring community pharmacists’ attitudes in Thailand towards ChatGPT usage: A pilot qualitative investigation by Nuntapong Boonrit, Kornchanok Chaisawat, Chanakarn Phueakong, Nantitha Nootong and Warit Ruanglertboon in DIGITAL HEALTH


sj-docx-2-dhj-10.1177_20552076241283256 - Supplemental material for Exploring community pharmacists’ attitudes in Thailand towards ChatGPT usage: A pilot qualitative investigation

Supplemental material, sj-docx-2-dhj-10.1177_20552076241283256 for Exploring community pharmacists’ attitudes in Thailand towards ChatGPT usage: A pilot qualitative investigation by Nuntapong Boonrit, Kornchanok Chaisawat, Chanakarn Phueakong, Nantitha Nootong and Warit Ruanglertboon in DIGITAL HEALTH

Footnotes

Acknowledgments

This work was supported by the Faculty of Pharmaceutical Sciences, Prince of Songkla University. The authors would also like to express their gratitude to all participants involved in this study.

Contributorship

NB and WR contributed to study design, supervision, data analysis, manuscript writing, and editing. NB contributed to participant recruitment. KC, CP, and NN conducted the interviews, collected data, and performed data preprocessing and analysis. WR conducted the formal analysis, assisted with visualization, and wrote the first draft of the manuscript.

Data availability statement

The data that support the findings of this study are available from the corresponding author upon reasonable request.

Declarations of conflicting interest

The authors declare no conflict of interest. WR is a registered pharmacist with a doctoral degree in clinical pharmacology. NB is a registered pharmacist who is board-certified in Pediatric Pharmacy by the Board of Pharmacy Specialties (BPS). KC, CP, and NN were fifth-year pharmacy students in a 6-year pharmaceutical care program, supervised by WR and NB during the study.

Ethical approval

This study was granted an exemption from requiring ethical approval by the Ethical Committee of the Faculty of Pharmaceutical Sciences at Prince of Songkla University, as per the memorandum PSU 108/66-3165, dated December 6, 2023. All research collaborators have completed training in the ICH Good Clinical Practice course in human research.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Guarantor

WR


References
