Abstract

Introduction

Generative artificial intelligence (GenAI) tools such as ChatGPT-4o and Gemini are rapidly influencing public health research and communication. Their capacity to assist with drafting, summarising, and translating content offers significant potential, particularly in multilingual and resource-limited settings. This narrative review critically explored the adoption of GenAI tools in public health research and communication, focusing on their practical applications and ethical implications.

Methods

This narrative review synthesised 18 recent peer-reviewed articles and grey literature sources (2023–2025) to explore the role of GenAI in public health research and communication. A hybrid human–AI approach was used, in which colour-coded manual coding was combined with AI-supported thematic analysis. All AI-generated outputs were critically reviewed, verified, and refined by the author.

Results

Five key themes were identified: (1) supporting scientific research writing tasks; (2) enhancing language clarity and scientific tone; (3) bridging the gap between science and the public; (4) ethical concerns and quality assurance; and (5) future potential and the need for upskilling.

Discussion

GenAI can democratise and accelerate public health research publication and communication, provided it is used transparently and critically. Human oversight and contextual judgement remain essential to ensure responsible use.

Conclusion

With thoughtful implementation, GenAI can enhance human expertise in the realm of public health, academia and scientific communication. It offers an emerging opportunity to strengthen public health research and communication, particularly when supported by ethical guidelines, training, and institutional leadership.

Keywords

Introduction

Generative artificial intelligence (GenAI) refers to artificial intelligence systems that can generate new content, such as text, images, or audio, by learning patterns from existing data. 1 GenAI tools have emerged as transformative forces across disciplines, with wide-ranging implications for public health research and scientific communication. 2 Powered by large language models (LLMs) such as OpenAI's ChatGPT-4o, Google's Gemini, and Anthropic's Claude, these tools can generate human-like text, translate languages, draft structured reports, and assist in literature synthesis. Their integration into research and communication workflows is accelerating rapidly, raising excitement around improved productivity, and concerns about ethical risks such as bias, hallucinated citations, and data misuse. 3

In public health, where the rapid translation of research into action is vital, GenAI offers notable promise. The COVID-19 pandemic revealed both the urgent need for clear and accessible communication and the burden on researchers to produce and disseminate scientific content across diverse audiences. Generative AI tools have demonstrated potential to support manuscript drafting, generate summaries of complex data, and adapt health messaging to different languages and literacy levels.4,5

Studies have shown that LLMs can assist with literature review, idea generation, manuscript editing, and translation.6,7 They also play a growing role in health promotion, enabling the faster creation of natural language content for mobile apps, campaigns, and websites. 8 Public-sector agencies and universities are piloting the use of AI to enhance drafting, communication, and policy briefs.9,10

However, their use raises important concerns regarding factual accuracy, biases such as demographic underrepresentation and training data skewed toward high-income settings, data privacy, and authorship. The WHO has warned that AI models may produce authoritative but incorrect responses, which could lead to misinformation if left unchecked. 11 Questions of equity also emerge, as access to these tools is uneven across settings and the data used to train LLMs often underrepresent marginalised populations.

This review aimed to synthesise existing literature on the use of GenAI in public health research and communication, identify recurring themes, and evaluate ethical and operational implications. It further reflects on global policy responses and advocates for structured upskilling of public health professionals, researchers, and government communicators to support the responsible and inclusive adoption of GenAI, citing a framework for professional development tailored to this purpose. This comprehensive overview of GenAI's role in public health offers insights for scholars, policy-makers, practitioners and professionals in the field. To the best of my knowledge at the time of writing, no locally conducted reviews have systematically examined the dual roles of GenAI in both academic writing and public health outreach.

Methods

A narrative review design was used to synthesise rapidly evolving, interdisciplinary literature on generative AI in public health. Unlike systematic reviews, which require well-established and stable bodies of evidence, narrative reviews allow for greater contextual interpretation and flexibility, which is ideal for emerging topics that span technological, ethical, and practical domains. Hence, PRISMA guidelines were not applicable; however, transparency and reproducibility were maintained through structured search terms, iterative triangulation, and AI-assisted synthesis.

This study employed a hybrid human–AI synthesis approach using leading LLMs, specifically OpenAI's ChatGPT-4o and Google's Gemini. These tools were selected based on their accessibility, fluency in public health discourse, and prior use in academic settings. All outputs were critically reviewed, verified, and refined by the author. This approach aligns with established best practices for narrative reviews, enabling a broad yet rigorous synthesis of both peer-reviewed and grey literature published between 2023 and 2025. The method prioritised depth of insight, relevance to practice, and reflexive integration of technology without compromising scholarly integrity.

A purposive sample of 18 peer-reviewed articles and grey literature sources published between 2023 and early 2025 was selected to reflect diversity across key dimensions: application area (research vs communication), ethical framing (e.g., responsible and inclusive AI use), and geographical context.

Literature search strategy

A desk-based literature search was conducted between March and April 2025 and the review focused on literature published between January 2023 and March 2025. The selected time frame captures the period following the public release of advanced generative AI models, notably ChatGPT-4 in March 2023 and ChatGPT-4o in May 2024. These models marked a transformative shift in the mainstream adoption and capabilities of generative AI in research and health communication.

Literature was sourced using a targeted desk review strategy across academic databases (PubMed, Scopus, Google Scholar) and reputable grey literature sources (e.g., institutional reports, government initiatives, and policy guidance). Search terms included combinations such as ‘generative AI’, ‘ChatGPT’, ‘large language models’, ‘public health’, ‘scientific writing’, ‘AI literacy’, ‘AI in scientific writing’, ‘using large language models in research’, ‘public health communication’, ‘AI ethics’, ‘AI in research’, ‘AI in scientific communication’ and ‘academic research’. Boolean operators and filters (e.g., by year and language) were applied to ensure relevance and recency.
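The way such search terms can be combined with Boolean operators may be illustrated with a minimal Python sketch. The grouping of terms into a technology block and a domain block, and the helper names `or_block` and `build_query`, are assumptions made for illustration; the exact search strings used in this review are not reproduced here, and database-specific filters (year, language) are omitted.

```python
def or_block(terms):
    """Join terms into a parenthesised OR group, quoting each phrase."""
    return "(" + " OR ".join(f'"{term}"' for term in terms) + ")"


def build_query(*concept_groups):
    """Combine one OR block per concept group with AND."""
    return " AND ".join(or_block(group) for group in concept_groups)


# Hypothetical grouping of a subset of the search terms listed above.
technology_terms = ["generative AI", "ChatGPT", "large language models"]
domain_terms = ["public health", "public health communication", "scientific writing"]

query = build_query(technology_terms, domain_terms)
print(query)
```

Grouping synonyms with OR and linking concept groups with AND is a common way to keep a search both broad within a concept and specific across concepts; the resulting string would still need adapting to each database's own syntax.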

Priority was given to articles that discussed real-world applications, policy implications, or ethical issues related to GenAI. Representative examples include Lupton & Butler's position paper on GenAI in public health, empirical studies on ChatGPT's performance in academic writing, and reports on national AI strategies in Southeast Asia. Representative sources were selected based on relevance, recency, and accessibility, and are cited within the discussion to support each thematic domain (see Table 1).

Representative literature supporting thematic analysis

GenAI: generative artificial intelligence; LLM: large language model.

This review considered both peer-reviewed literature and high-quality grey sources to capture recent developments from 2023 to 2025. Studies focusing solely on clinical diagnostics or biomedical image recognition were excluded. Particular attention was paid to research papers that reported on AI use in academic research, health promotion, and public-sector communication.

Application of generative AI in the review process

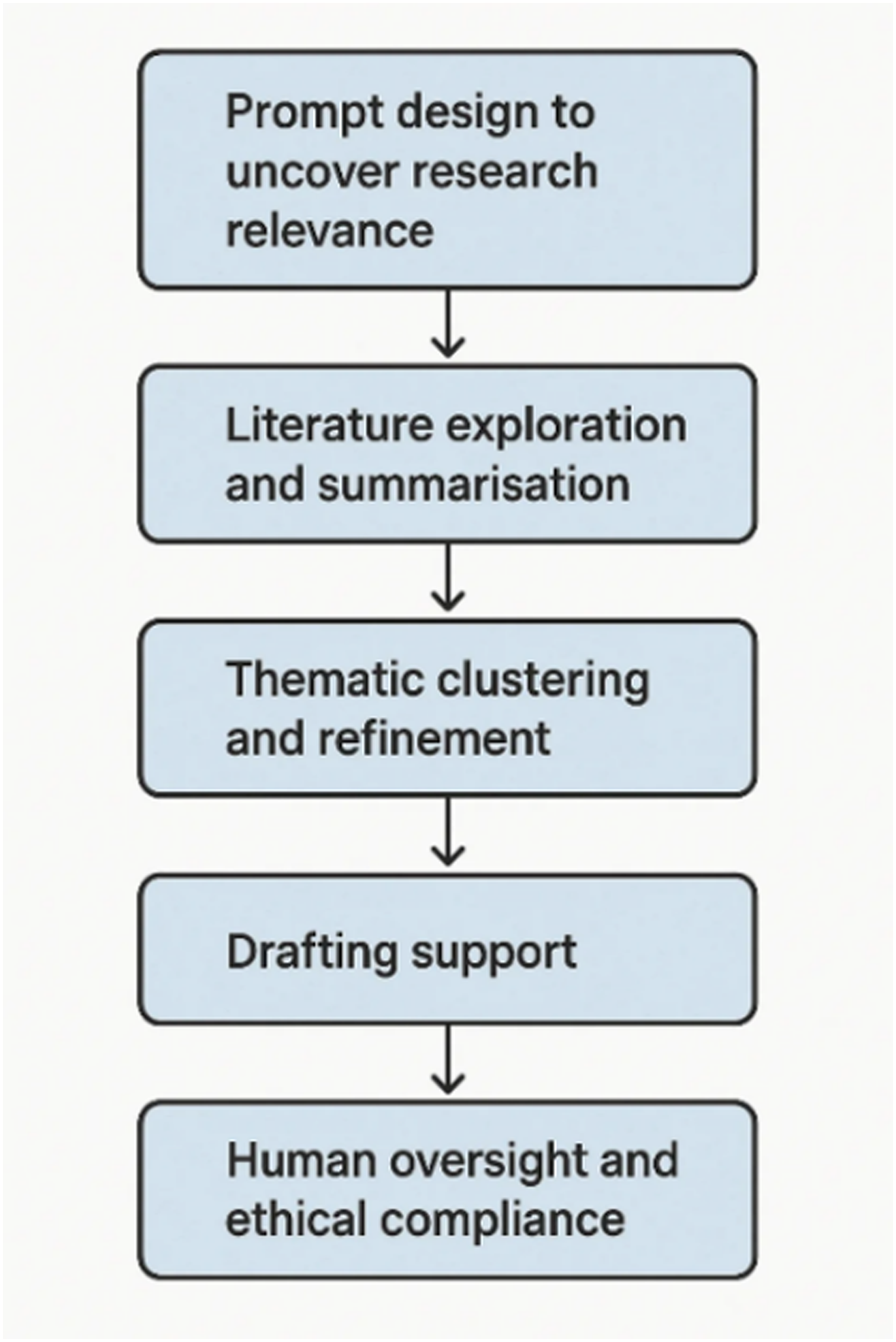

This narrative review serves as a practical demonstration of AI-assisted academic writing to explore the use of GenAI in the research workflow. As illustrated in Figure 1, the application of generative AI in the review process follows a structured iterative sequence.

Structured flow of generative AI application in the review process.

Prompt design to discover research relevance

Prompts were iteratively designed and refined to elicit targeted outputs that could contribute meaningfully to the literature synthesis and thematic structure of the review. For instance, early-stage prompts such as ‘Summarise GenAI applications in public health research’ were used to surface broad use-cases, which were then narrowed down through follow-up queries like ‘Provide examples of GenAI supporting scientific communication’ or ‘Identify risks associated with using AI in public health literature reviews’.

To structure the review's core themes, prompts such as ‘Group key findings thematically based on current literature’ or ‘Suggest relevant thematic areas emerging from research on GenAI in public health’ were used. These outputs offered a scaffold that was subsequently reviewed and validated against manually colour-coded excerpts from selected articles, ensuring conceptual coherence and alignment with the published evidence.

This iterative and interactive process allowed the AI to function as a thinking partner.

Literature exploration and summarisation

The initial exploration of the literature was led by the author through traditional scientific methods, including manual review and colour coding of key excerpts across multiple sources. This process allowed for the identification of recurring themes, emerging concepts, and nuanced language patterns. Text segments related to core topics such as ethics, equity, accuracy, and communication were highlighted and categorised, enabling a visual map of content relationships to emerge.

These manually colour-coded groupings were then cross-checked and expanded using ChatGPT-4o. The generative AI tool was prompted to synthesise insights around the highlighted categories and suggest additional thematic connections that may not have been immediately visible during manual coding. Suggested references and claims provided by the AI were all independently verified by the author to ensure validity and alignment with peer-reviewed literature. This dual approach, anchored in human-led analysis and followed by AI-enhanced synthesis, enabled both depth and breadth in theme development, combining interpretive sensitivity with computational efficiency. Only verified, retrievable, and academically sound references were included in the final review.

Thematic clustering and refinement

To support thematic analysis and synthesis, a series of structured prompts was used to guide the generative AI tool (ChatGPT-4o) in identifying patterns and clustering topics across the reviewed literature. These prompts were designed to extract relevant themes and synthesise the diverse findings from multiple studies. Examples of initial prompts included: ‘Group the following articles into major themes relevant to public health research using generative AI’, and ‘What are common use-cases of generative AI in scientific communication based on current literature?’ These were followed by more refined, layered prompts such as: ‘Can you elaborate on Theme 2 with examples from peer-reviewed sources?’

The outputs of the generative AI tool (ChatGPT-4o) were not accepted at face value. Instead, the AI-generated themes were cross-validated against the source literature and iteratively refined by the author. A manual colour coding technique was employed during document analysis. Relevant segments of literature were highlighted according to emergent categories, which allowed the author to visually track recurring patterns and compare them to the AI-suggested themes. This visual method helped to triangulate findings, uncover overlapping concepts, and identify areas of thematic divergence or nuance not captured by the AI. The integration of human-led colour coding with machine-generated thematic outlines ensured that the final thematic synthesis was both data-driven and critically curated.
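The comparison between manually coded categories and AI-suggested themes can be thought of as a simple set comparison. The following Python sketch is illustrative only: the human-coded labels borrow the core topics named earlier (ethics, equity, accuracy, communication), while the AI-suggested labels are hypothetical and do not reproduce actual ChatGPT-4o output from this review.

```python
# Categories highlighted during manual colour coding (topics named in the text).
human_coded = {"ethics", "equity", "accuracy", "communication"}

# Hypothetical AI-suggested themes, invented for illustration.
ai_suggested = {"ethics", "accuracy", "communication", "productivity"}

# Themes on which both approaches agree.
agreed = human_coded & ai_suggested

# Nuance captured by manual coding but missed by the AI.
human_only = human_coded - ai_suggested

# AI suggestions that still need checking against the source literature.
ai_only = ai_suggested - human_coded

print("Agreed:", sorted(agreed))
print("Human only:", sorted(human_only))
print("AI only:", sorted(ai_only))
```

In practice the author's judgement, not label matching, decides whether two themes are equivalent; the sketch only makes the triangulation logic explicit.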

Drafting support

Thematic sections were co-drafted in collaboration with ChatGPT-4o, which was used to support the organisation of ideas and improve linguistic clarity. The preliminary drafts were subsequently refined by the author to ensure alignment with verified data, appropriate academic tone, and contextual relevance. While the AI contributed to the drafting process as a supportive tool, all interpretation and final editorial decisions were undertaken by the author. This collaborative approach reflects a responsible integration of GenAI, where human expertise remains central to ensuring methodological rigour and scholarly integrity.

Human editing and ethical practice

All AI-assisted content was carefully reviewed by the author to ensure factual accuracy, ethical integrity, and alignment with scholarly expectations. This process included identifying and correcting non-existent references and claims, 12 clarifying ambiguous statements, and verifying that the final language was consistent with both the evidence base and the intended academic tone. The use of ChatGPT-4o was transparently disclosed in accordance with international editorial standards, including those set by Nature and the International Committee of Medical Journal Editors (ICMJE). 13 This commitment to transparency reflects broader efforts to promote responsible integration of generative AI in scholarly communication, ensuring that the final manuscript upholds the principles of trust and accountability.

Results

The thematic analysis resulted in five recurring domains, reflecting both operational and ethical dimensions of GenAI use in public health communication.

Theme 1: Supporting research initiation and drafting

This theme captures how GenAI functions as a conceptual and editorial assistant, enabling researchers to navigate the often time-consuming tasks of shaping research questions, summarising articles, and preparing working drafts. For example, Lupton and Butler observed how researchers used ChatGPT to refine manuscript abstracts and accelerate ideation during early-stage academic writing. 4 GenAI tools such as ChatGPT have been shown to assist researchers in generating structured outlines, refining language, summarising evidence, and simulating literature synthesis. In some studies, researchers have used GenAI to produce early drafts of review articles or compare study frameworks.14–17 For instance, a pilot study found that the deployment of Gemini in Malaysia's civil service reportedly reduced administrative drafting time by over three hours per week. 18 However, authors such as Banh and Strobel caution that overreliance on AI-generated drafting may obscure critical reasoning and reduce scholarly originality. 1 Although promising, these tools must be used carefully to avoid propagating inaccurate claims. Furthermore, as these tools become more sophisticated, there is a growing need for clear guidelines and transparency in their application to maintain the integrity of scholarly publications.

Theme 2: Enhancing language clarity and scientific tone

Generative AI tools are increasingly used to enhance the clarity, tone, and structure of scientific writing, particularly among early-career researchers and non-native English speakers. Several studies in the reviewed literature highlight how LLMs support more coherent sentence construction, professional tone, and accessible phrasing in public health outputs. Non-native English speakers in academia benefit significantly from GenAI tools, which they use to refine grammar, sentence structure, and tone. 19

Chetwynd highlights how GenAI is now routinely used in scientific writing for initial drafts, grammar refinement, and tone calibration. This observation is echoed by Gorraiz, who discusses the growing trend of acknowledging AI contributions in research publications, especially when tools have substantively shaped the tone or structure of academic outputs.20,21

As a result, these tools contribute to levelling the playing field in academic publishing by reducing language-related barriers that often disadvantage non-native English-speaking scholars. This increased accessibility can promote greater diversity in academic discourse and allow valuable research from underrepresented regions to gain wider recognition.20,21 While these capabilities offer new efficiencies, both authors caution against fully automated outputs. Chetwynd warns that uncritical use can result in homogenised or overly generic phrasing, while Gorraiz stresses the importance of clear disclosure and maintaining the integrity of human authorship.20,21 Therefore, while AI tools can enhance presentation, they should be used as a complement to, rather than a substitute for, strong scientific reasoning and integrity.

Theme 3: Bridging the gap between science and the public

ChatGPT and similar tools are used to create health education content, FAQs, chatbot scripts, and social media posts in multiple languages. GenAI helps translate scientific findings into accessible formats that are particularly important during health emergencies or public awareness campaigns.

Davies et al. identified that LLMs can play a critical role in tailoring culturally sensitive public health messages, especially when language, tone, and context are adjusted based on population needs¹. Similarly, Ali et al. showed that a hybrid AI–human collaboration in rewriting surgical consent forms significantly improved patient comprehension and readability. These AI tools can also generate tailored health messages for specific demographics or communities, enhancing the reach and effectiveness of public health communication.

These findings align with Gorraiz, who emphasises the communicative potential of AI-generated content beyond traditional academic publishing, particularly in settings where researchers aim to make findings accessible to lay audiences. 20 Yet, as Chetwynd cautions, generative outputs must be carefully curated to avoid misinformation, over-simplification, or loss of nuance in translation from technical to plain language. 21

The potential of GenAI to create personalised health content could significantly improve public education and engagement.23–26 This tailored approach may lead to better health outcomes and increased adherence to medical advice, ultimately transforming the dissemination and consumption of healthcare information.

Theme 4: Ethical concerns and quality assurance

Major concerns include hallucinated content, biased outputs, and misuse of generated text. The WHO warns that AI models can produce plausible yet false content. Journals now require the use of AI to be disclosed and prohibit listing AI tools as co-authors.27,28

Ethical considerations also feature prominently, with scholars calling for comprehensive frameworks to guide the responsible use of AI in research environments. A growing body of literature highlights the risk of algorithmic bias wherein AI systems may perpetuate or amplify existing inequities. Transparency remains a key priority, particularly in the form of clear disclosure and documentation of AI usage across the research lifecycle. Experts further advocate for interdisciplinary collaboration among developers, academics, publishers, and policymakers to address these multifaceted challenges. 29 Finally, the establishment of best practices and standardised protocols has been identified as essential for guiding the ethical and effective implementation of AI in scholarly communication. 30 Together, these insights point to the complex nature of integrating AI into academic workflows and the ongoing need for coordinated, evidence-informed approaches.

Theme 5: Future potential and the need for upskilling

Looking ahead, the future utility of GenAI in public health hinges on the capacity of professionals to use these tools effectively and responsibly. McKinsey & Company (2024) reports that benefits are maximised when users are equipped with the digital literacy needed to effectively leverage AI capabilities in their work. 31 A compelling example of institutional upskilling can be seen in Malaysia's national rollout of Google Gemini across the civil service, which couples access to the tool with structured training initiatives. This approach not only builds technical fluency among public-sector staff but also normalises the integration of GenAI into everyday workflows from internal communications to policy brief drafting.32 Such models underscore the importance of combining tool adoption with capacity building, positioning upskilling as a cornerstone of sustainable and equitable GenAI integration in public health contexts.

Discussion

This review found that while GenAI offered practical benefits in drafting and disseminating public health information, it also introduced new challenges related to ethics, accuracy, and authorship. The five thematic areas identified suggest that GenAI is no longer a peripheral novelty, but is steadily becoming a central part of the academic and communication toolkit in public health.

The capacity of GenAI to support researchers through the research lifecycle is particularly relevant in settings where time, technical support, and linguistic resources are limited. Public health professionals under pressure to produce and translate evidence across languages and formats may benefit significantly from the time-saving and stylistic support provided by GenAI tools.

Supporting scientific research tasks

GenAI tools such as ChatGPT-4o and Gemini are increasingly being used to streamline time-intensive tasks across the research pipeline. These include literature summarisation, manuscript drafting, conceptual framework development, and even qualitative data organisation. In contexts where time, technical support, and linguistic resources are constrained, these tools offer a substantial advantage. For instance, a pilot conducted in Malaysia demonstrated that civil servants using GenAI saved an average of 3.25 hours per week on documentation tasks while improving the clarity and structure of their outputs. 33 This finding aligns with broader trends reported by McKinsey & Company, which predict significant productivity gains across knowledge-intensive sectors through responsible GenAI adoption³¹.

However, this efficiency must be paired with caution. During the simulated literature search for this paper, ChatGPT-4o generated multiple incorrect references. Similarly, several studies have documented the risks of ‘hallucinated’ references and factually incorrect outputs from ChatGPT-4o.29,30 When challenged, ChatGPT-4o responded:

Apologies for the earlier confusion and any inaccuracies in the reference details I provided. Upon re-examination, I realize that the citation does not correspond to an actual published article.

It appears there was a miscommunication or error in generating this reference. After searching through available databases and the official websites of the mentioned authors, I couldn't locate a publication matching this description. It's possible that this reference was intended as a placeholder or was mistakenly included. To ensure the accuracy and credibility of your manuscript, I recommend the following steps:

Review Your Reference List: Double-check all the citations in your manuscript to ensure they correspond to actual, published works.

Verify Each Source: Use academic databases like PubMed, Google Scholar, or the respective journals’ official websites to confirm the existence and details of each reference.

Replace or Remove Inaccurate References: If a cited work cannot be verified, consider replacing it with a relevant, credible source or removing the citation altogether.

Consult Co-Authors or Colleagues: If you're unsure about a particular reference, discussing it with your co-authors or colleagues might provide clarity.

Such an occurrence underscores a recurring theme: GenAI tools offer acceleration, not substitution. Without human-led validation, especially in public health domains requiring interpretive nuance, the benefits may be undermined by factual inaccuracies. Therefore, it is essential that public health researchers maintain rigorous oversight and use GenAI as a co-pilot that supports productivity but does not replace human critical thinking and accountability.

Enhancing language clarity and scientific tone

One of the most significant contributions of GenAI is in enhancing the readability and professionalism of scientific writing. This is particularly impactful for non-native English-speaking researchers, who may face linguistic barriers in international publishing. In his bibliometric review, Sallam identifies linguistic clarity and structural coherence as the most cited benefits of ChatGPT in health research communication, highlighting its capacity to reduce writing barriers while improving scientific readability²². Similarly, Fui-Hoon Nah et al. noted that GenAI enhances efficiency and surface-level quality in scientific documentation by supporting language simplification, consistency in tone, and content summarisation³. These capabilities enable researchers to convey complex findings with improved precision and accessibility.

AI-driven tools can support grammar refinement, tone alignment, and structure, helping ensure that research findings are clearly and effectively communicated. While this levels the playing field in some ways, it also raises new questions around authorship and intellectual contribution. In particular, Chetwynd warns that overly polished AI-generated text may obscure weak arguments. 21

Combined, the evidence suggests that while GenAI improves linguistic accessibility and lowers structural barriers to publication, its integration must be carefully managed. GenAI should function as a co-authoring assistant to facilitate clarity and tone, without eroding the intellectual agency of the human author.

Bridging the gap between science and the public

Generative AI presents new opportunities for inclusive, multilingual communication. As noted by Ali et al., AI-assisted rewriting of surgical consent forms led to measurable improvements in patient comprehension, demonstrating GenAI's real-world value in bridging information asymmetries in clinical settings.26 This aligns with Davies et al., who found that LLMs could dynamically adapt tone and cultural references to produce locally sensitive health messages across multilingual populations. However, while both studies highlight GenAI's translational power, they adopt subtly different lenses. Ali et al. focus on augmenting comprehension in formal patient-provider interactions, whereas Davies et al. 25 emphasise GenAI's role in tailoring public health outreach campaigns. 26 Taken together, these findings illustrate a layered utility whereby GenAI tools can support both institutional communication and broader civic education.

In Asia, where linguistic diversity is high, the ability to produce materials in multiple languages can significantly broaden public health reach. However, AI-generated content must be rigorously evaluated for tone, accuracy, and cultural sensitivity. While promising, these tools are not immune to misinformation, particularly in rapidly changing health scenarios. Countries such as South Korea and Singapore have responded by issuing guidelines on the ethical use of AI in public communication, requiring human review and disclosure.34,35

Risks, ethical concerns and quality assurance

The integration of GenAI raises ethical concerns around misinformation, data privacy, and algorithmic bias. 1 The thematic analysis revealed that advanced models like GPT-4o may generate fabricated citations. A study published in the Journal of Medical Internet Research evaluated the accuracy of references generated by LLMs for systematic reviews. The hallucination rates were found to be 39.6% for GPT-3.5, 28.6% for GPT-4, and 91.4% for Bard. 36 These risks are especially pronounced in low-resource settings, where the capacity to verify AI outputs may be limited. Similarly, recent commentary has emphasised the tendency of LLMs to generate hallucinated and erroneous content when used without sufficient domain expertise or methodological safeguards. 37 Such limitations underscore the need for competent human oversight to ensure the accuracy and integrity of extracted information. While The Lancet highlights citation integrity, Milvus (n.d.) addresses a different ethical frontier: the exploitation of sensitive information through AI prompts². The risk of private health data being inadvertently shared or recycled by publicly trained models raises serious questions about consent, confidentiality, and prompt security. This concern is especially salient in public health, where prompts may include epidemiological data, contextual identifiers, or unpublished policy drafts.29,30
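To give a sense of scale, the reported hallucination rates can be translated into an expected number of fabricated references in a batch. The Python sketch below uses the three rates cited above; the batch size of 50 references is a hypothetical figure chosen for illustration, and the calculation assumes, simplistically, that the reported rate applies uniformly to every generated reference.

```python
# Hallucination rates as reported in the cited study.
reported_rates = {"GPT-3.5": 0.396, "GPT-4": 0.286, "Bard": 0.914}

# Hypothetical number of AI-generated references to be checked.
batch_size = 50


def expected_fabricated(rate, n_references):
    """Expected count of fabricated references, rounded to one decimal place."""
    return round(rate * n_references, 1)


expected = {
    model: expected_fabricated(rate, batch_size)
    for model, rate in reported_rates.items()
}

for model, count in expected.items():
    print(f"{model}: about {count} of {batch_size} references expected to be fabricated")
```

Even at the lowest reported rate, a substantial share of a reference list would need correcting, which is why every AI-suggested citation should be verified individually rather than sampled.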

Equity is also at stake, as models trained predominantly on English-language, high-income datasets may underperform when applied to marginalised populations or underrepresented topics. Indigenous knowledge systems and community-based health practices are often absent from major datasets, raising the risk of epistemic exclusion. This omission not only limits the contextual relevance of GenAI outputs in low- and middle-income country settings but also perpetuates structural inequities in global health knowledge production. Institutional policies must address these challenges through transparency, verification protocols, and capacity-building efforts. Many academic publishers have already issued clear guidance on the disclosure of GenAI usage and stipulate that tools cannot be credited as co-authors. 13 These evolving standards reinforce the principle that while AI may assist, responsibility must remain with human researchers.

Future potential and the need for upskilling

To realise the full potential of GenAI in public health, structured upskilling is essential. The value of these tools depends on the user's ability to craft effective prompts, verify outputs, and integrate content critically. A notable example of institutional upskilling can be seen in Malaysia's national ‘AI at Work 2.0’ initiative, which embedded Google's Gemini into civil servant workflows while offering practical training to foster AI literacy. This approach not only builds technical fluency among public-sector staff but also normalises the integration of GenAI into everyday work activities, from internal communications to policy brief drafting. Similarly, the United Kingdom's National Health Service (NHS) has launched an AI and Digital Workforce Capability Framework, aimed at equipping healthcare professionals with the skills to use AI responsibly in clinical and research settings. 38

Embedding GenAI tools into institutional workflows, supported by explicit policies and training, can help demystify the technology, encourage experimentation, and create a more equitable digital environment. Universities are beginning to integrate AI competencies into public health curricula, and international agencies such as UNESCO have called for inclusive AI literacy to prevent new digital divides. More empirical research is needed to assess GenAI's long-term impact on scientific outputs and health equity, especially in the Global South.39,40

However, successful integration of GenAI requires more than technical access; it also requires critical literacy. Researchers must develop the ability to evaluate AI-generated content, identify factual inaccuracies, assess tone and readability, and distinguish between automated and original thought. These skills should not be viewed as optional extras but as core competencies in modern research ecosystems.

As AI technologies continue to evolve, it is crucial that researchers adapt their skills accordingly. This includes not only mastering the use of AI tools, but also developing critical evaluation skills to ensure the integrity and quality of AI-generated content in scientific research. By fostering AI literacy and promoting responsible AI adoption, the research community can harness the potential of these technologies while mitigating potential risks and ethical concerns. 41 Taking this write-up as a case in point, the combination of literature review, AI-assisted analysis, and writing assistance may serve as a model of an innovative approach to knowledge synthesis and dissemination.

The collaborative efforts of academic institutions, publishers, and funding bodies play a vital role in shaping the future of AI integration into research. By implementing comprehensive AI education programmes, establishing clear guidelines for AI use in publications, and supporting innovative AI-supercharged research projects, stakeholders can create an environment that encourages responsible AI adoption while maintaining the highest standards of scientific rigour and ethical conduct.

Finally, there is a strategic opportunity for funders and academic publishers to incentivise GenAI adoption. Journals can play a pivotal role by encouraging transparency in manuscript submissions, developing clear guidelines for AI-assisted writing, and normalising the ethical use of generative AI in scholarly communication. Funders, meanwhile, can support innovation through grants, recognition programmes, and peer-reviewed pilot projects. Together, these efforts can help shift the field from reactive regulation to proactive innovation, ensuring that GenAI's integration is not only efficient, but also ethical, inclusive, and transformative.

Although this review offers a timely and reflective synthesis, several limitations should be acknowledged. The review is restricted to English-language sources, which may have excluded relevant literature from non-English-speaking regions. Given the emerging nature of the field, no formal quality scoring (e.g., CASP) was applied, though sources were cross-checked for credibility and recency. Additionally, the purposive selection of literature introduces potential selection bias. However, methodological rigour was maintained through transparent search strategies, critical verification of sources, and triangulation of human-led and AI-assisted analysis.

Conclusion

Generative AI is rapidly transforming the landscape of public health research and scientific communication. When used transparently and thoughtfully, it can serve as an effective co-pilot, enhancing research efficiency, improving language equity, and accelerating the translation of findings into policy-relevant outputs. This review highlights how GenAI tools are already supporting researchers, facilitating inclusive communication, and bridging gaps between science and society, while also introducing new ethical and quality assurance challenges.

To harness these benefits responsibly, governments, funders, academic institutions, and journals must invest in AI literacy, ethical governance, and capacity-building. Malaysia's national integration of GenAI into civil service workflows offers an encouraging example of how structured upskilling and access can support widespread and responsible adoption. As more countries and institutions move toward AI-assisted knowledge systems, it is essential that these tools are embedded not just efficiently, but ethically and inclusively.

However, the promise of GenAI must be balanced with vigilance. This review has shown that despite gains in productivity and clarity, risks such as citation inaccuracies, ethical opacity, and exclusion of marginalised knowledge systems remain pressing. Comparative insights from recent studies underscore that GenAI should function as an intellectual partner, augmenting rather than replacing human expertise.

With the right training, policies, and collaborative spirit, GenAI can become a transformative ally in advancing public health research and communication for the better.

Footnotes

Acknowledgements

The author would like to thank the Director General of Health, Malaysia, for his permission to publish this manuscript.

The author acknowledges the use of ChatGPT-4o to support selected stages of this manuscript's development. The tool was employed to assist with summarisation, thematic organisation, and language refinement. AI-assisted outputs were used strictly as writing and analytical aids and were critically reviewed, validated, and revised by the author to ensure coherence, accuracy, and scholarly rigour. All decisions related to content inclusion, interpretation, and synthesis were made by the author, who retains full responsibility for the final manuscript.

Ethical approval

Ethical approval was not required as the study did not involve human participants or personal data.

Author contributions

The author conceptualised, conducted, and wrote this manuscript with the assistance of generative AI (ChatGPT-4o) in the drafting and editing stages, all of which were reviewed for accuracy and appropriateness.

Funding

The author received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Guarantor

Kishwen K Yoga Ratnam is the guarantor of this study and accepts full responsibility for its content.