Abstract

Automated epileptic seizure detection from electroencephalogram (EEG) signals has attracted significant attention in health informatics. Epilepsy is a serious brain condition characterized by recurrent seizures, each typically described as a sudden change in behavior caused by a transient, excessive electrical discharge in a group of brain cells; EEG is the signal most often used to identify seizures, for example in closed-loop brain systems. The development of various deep learning (DL) algorithms for epileptic seizure diagnosis has been driven by EEG's non-invasiveness and its capacity to provide repetitive patterns of seizure-related electrophysiological information. Existing DL models struggle, however, especially in clinical contexts, because the irregular and unordered structure of physiological recordings makes them difficult to treat as a matrix; together with EEG's low amplitude and nonstationary nature, this has been a key obstacle to producing consistent and appropriate diagnostic outcomes. Graph neural networks have brought significant improvements by exploiting implicit information present in the brain's anatomical system, in which interacting nodes are connected by edges whose weights can be determined by either temporal associations or anatomical connections. Considering these aspects, we propose a novel hybrid framework for epileptic seizure detection that combines a sequential graph convolutional network (SGCN) with a deep recurrent neural network (DeepRNN). Here, DeepRNN is built by fusing a gated recurrent unit (GRU) with a traditional RNN; its key benefit is that it addresses the vanishing gradient problem and gives the hybrid framework greater modelling capacity. Line length, auto-covariance, auto-correlation, and periodogram features are extracted from the raw EEG signal, and the resulting feature matrix is grouped in the time-frequency domain as input to the SGCN for seizure classification. The model extracts both spatial and temporal information, resulting in improved accuracy, precision, and recall for seizure detection. Extensive experiments on the CHB-MIT and TUH datasets show that the SGCN-DeepRNN model outperforms other deep learning models for seizure detection, achieving an accuracy of 99.007% with high sensitivity and specificity.

Keywords

Introduction

The neurological system of the human brain is extremely complicated. The diagnosis and treatment of numerous neurological diseases, including epilepsy, Alzheimer's disease, autism, and encephalopathies, usually make use of the non-invasive technique known as electroencephalography (EEG), which records the brain's electrical activity from the patient's scalp. Despite having a lower spatial resolution than brain imaging methods such as computed tomography (CT) and magnetic resonance imaging (MRI), EEG is a popular diagnostic tool among doctors because of its superior temporal resolution, low cost, and non-invasive nature. 1

According to estimates from the World Health Organization (WHO), epileptic seizures impact about 65 million individuals globally. 2 Early detection and timely treatment are vital due to the potential mortality risk associated with epilepsy. Electroencephalogram (EEG) recordings provide immediate measurement of the brain's electrical activity, allowing for the identification of abnormal brain oscillations associated with epileptic seizures. However, manually monitoring long-term EEG data can be laborious. An automated seizure detection system can be highly valuable in diagnosing epilepsy and enabling early intervention for prompt medical assistance. Such a system serves as a real-time alerting mechanism, facilitating timely diagnosis and subsequent therapy. 3

Automatic seizure identification with deep learning (DL) is commonly a two-stage procedure. In the first stage, features are extracted from the signals’ spectral or temporal information, or both. The extracted features may then be subjected to statistical analysis before being fed into a classifier in the second stage. Although this two-stage technique produces outstanding results, it is challenging and calls for a high level of deep learning proficiency when preprocessing the raw data and extracting the most representative features from it. A straightforward yet effective methodology that combines all seizure detection phases into a single automated deep learning system is therefore preferable. 4

The majority of these deep learning-based methods were developed using models of traditional neural networks, such as the convolutional neural network (CNN), recurrent neural network (RNN), long short-term memory (LSTM), and gated recurrent unit (GRU). The ability to retrieve seizure properties from a shallow neural network is typically somewhat limited. Seizures are caused by various parts of the brain in different ways. It is necessary to distinguish between EEG data from various brain areas. As a result, our focus is on creating a deep neural network that can handle raw EEG data directly, distinguish between data on different channels, and automatically identify seizures and non-seizures. 5

Deep learning methods for graph data have recently attracted more and more attention. To handle the complexity of graph data, new generalizations and definitions of crucial operations have been rapidly developed over the past few years, driven by CNNs, RNNs, and autoencoders from deep learning. Existing machine learning algorithms face substantial difficulties as a result of the complexity of graph data. Because graphs can be asymmetric, they may contain varying numbers of unordered nodes and neighbors. For relational data between variables in these applications, graphs may be a particularly useful encoding approach because they naturally reflect relationships between entities. 6 Hence, a major area of study has been the generalization of graph neural networks (GNN) into structural and non-structural situations. Graph convolutional networks (GCNs), which have enabled the use of CNN's representation learning capabilities for irregular graph data, have advanced the theory of signal processing on graphs. Through the use of graph convolutional networks, convolution is made applicable to non-Euclidean graph data. Graph Attention Network (GAT), the important variation of the graph-based neural network, can automatically assign weights to neighbouring nodes, capturing the significance of connections between nodes within the graph. 7

Graph-based approaches translate the time-domain EEG data directly into graph representations. Because of this, they are sensitive to phase shifts between signals, which can introduce undesirable intra-class variance. To solve this issue, we propose a sequential graph convolutional network (SGCN) that first applies the Fast Fourier Transform (FFT) to the time-domain signal. Because all EEG signals have the same length and sampling rate, the outcome is an ordered list of frequency-domain properties that are completely aligned. Complex networks formed from these frequency-domain data are therefore no longer affected by time-domain phase shifts. Additionally, these frequency-domain complex networks can produce highly interpretable results because of the extensive domain knowledge about the relationship between epilepsy and EEG components at particular frequencies. 8
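The phase-shift argument can be checked on a toy signal. The sketch below is only an illustration and not the paper's pipeline: it uses a direct discrete Fourier transform in pure Python, and the 8 Hz sinusoid, 64 Hz sampling rate, and 5-sample delay are assumed values chosen for the example.

```python
import cmath
import math


def dft_magnitudes(signal):
    """Return |X[k]| for k = 0..N-1 using a direct O(N^2) DFT."""
    n = len(signal)
    mags = []
    for k in range(n):
        acc = 0j
        for t, x in enumerate(signal):
            acc += x * cmath.exp(-2j * math.pi * k * t / n)
        mags.append(abs(acc))
    return mags


# Toy 8 Hz sinusoid sampled at 64 Hz for one second (assumed values).
fs = 64
base = [math.sin(2 * math.pi * 8 * t / fs) for t in range(fs)]
# Same waveform, circularly delayed by 5 samples (a pure phase change).
shifted = base[-5:] + base[:-5]

mag_base = dft_magnitudes(base)
mag_shifted = dft_magnitudes(shifted)

# The time-domain samples differ, but the magnitude spectra coincide.
max_time_diff = max(abs(a - b) for a, b in zip(base, shifted))
max_freq_diff = max(abs(a - b) for a, b in zip(mag_base, mag_shifted))
# Index of the strongest bin below the Nyquist frequency (1 Hz per bin here).
peak_bin = max(range(fs // 2), key=lambda k: mag_base[k])
```

In this example the two signals disagree sample by sample, while their magnitude spectra agree up to floating-point error, which is the alignment property the frequency-domain representation relies on.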

Recurrent neural networks (RNNs) were created as a deep learning technique for handling sequential input. They are made up of feedforward neural networks with cyclic connections. By exploiting the temporal correlations between the data at each point in time, an RNN maps the whole history of inputs to each output. RNNs with sophisticated recurrent hidden units, such as the LSTM unit and the gated recurrent unit (GRU), have gained popularity in recent years as a method for modelling temporal sequences. 9 RNNs are popular designs for sequence analysis because they keep a hidden representation of the signal at each point in time. This hidden representation, which is continuously updated based on its previous value, combines information from nearby time points. As with CNNs, EEG data from one-second windows can be fed directly into an RNN. 10

Nonlinear functions frequently employed in conventional RNNs include the sigmoid and the hyperbolic tangent. Backpropagation through time (BPTT), which applies the backpropagation training algorithm to a recurrent neural network on sequence data such as a time series, is frequently used together with stochastic gradient descent to estimate the parameters of the RNN model. The “vanishing” and “exploding” gradient problems, which frequently occur while training RNNs using BPTT, have been addressed by two specific models, the long short-term memory (LSTM) and the gated recurrent unit (GRU). Both use the hidden state of the conventional RNN as an intermediate candidate for an internal memory cell and add it, as an element-wise weighted sum, to the previous value of the internal memory state to produce the current value of the memory cell; combining the conventional RNN with LSTM or GRU units in this way transforms it into a DeepRNN. The additive memory unit in LSTM and GRU is what addresses the “vanishing” or “exploding” gradient problem and gives the DeepRNN its characteristics. 11

Feature extraction in deep learning is the process of automatically extracting meaningful and relevant features from raw data. It transforms the input data into a more compact representation that captures the most important information required for the task at hand, which reduces the complexity of the data and increases the efficiency and accuracy of the model. By automatically extracting meaningful features, the model can focus on learning the important patterns in the data rather than being overwhelmed by irrelevant information. In deep learning, feature extraction is often performed by intermediate layers of a neural network, which extract higher-level features that are more relevant to the task. 12 In this experiment, we used line length, auto-covariance, auto-correlation, and the periodogram as feature extraction techniques. Line length is a simple feature that measures the cumulative length of the signal's waveform and can provide information on the shape and amplitude of the signal. 13 Auto-covariance and auto-correlation are statistical measures that capture the relationship between different segments of the signal, providing information on its variability and periodicity. 14 The periodogram is a spectral analysis technique that decomposes the signal into its frequency components, providing information on its frequency content. These features can be combined in various ways to capture the unique characteristics of the signal and improve the performance of deep learning models. 15

The diagnosis of seizures is an important element of addressing neurological illnesses, and deep learning models have shown encouraging results in improving its accuracy. In this paper, we present SGCN-DeepRNN, a novel technique for EEG-based seizure detection that capitalizes on the characteristics of the sequential graph convolutional network (SGCN) and deep recurrent neural network (DeepRNN) architectures. Instead of digging into technical details, we focus on the practical applications of SGCN and RNN algorithms for EEG data processing. The proposed technique employs SGCN to detect spatial correlations among features derived from EEG data. Because of the graph structure inherent in EEG, SGCN is particularly well suited for such tasks. Following SGCN processing, the data is passed to a DeepRNN, which uses its capacity to model temporal dependencies. The DeepRNN employs gated recurrent units to update its internal memory state based on the processed input and determine the presence or absence of a seizure. In the following sections, we look into particular applications and examples of SGCN and RNN algorithms and show their uses in the context of EEG analysis. By concentrating on practical applications, we hope to offer a clear understanding of how these approaches contribute to the proposed seizure detection framework.

The rest of this paper is organized as follows. Section 2 reviews previous research and gives a comparative analysis of the state of the art. Section 3 presents the proposed method and the SGCN-DeepRNN-based hybrid framework. Section 4 describes the two datasets used in this work. Section 5 provides a detailed explanation of the experiments and results, the patient-specific experiments, and the comparison with other state-of-the-art techniques. Finally, future research directions and concluding remarks are given in Section 6 and Section 7, respectively.

Related work

The detection of seizures is based on the assumption that the seizure and non-seizure phases are fundamentally different. The majority of epilepsy research presently focuses on using EEG data to detect seizures.

J. Wang et al. 16 presented a study in which a sequential graph convolutional network (SGCN) architecture is applied to the frequency-domain representation of EEG signals to preserve sequential information. This approach can reduce computational complexity without affecting classification accuracy, and the authors also present convergence results for it. Vidyaratne et al. 17 employed a deep recurrent neural network architecture for automated patient-specific seizure detection using scalp EEG. This network achieved superior performance to state-of-the-art methods, with a higher detection rate and low processing time, making it appropriate for real-time use. An end-to-end deep learning model that combines a CNN and a BiLSTM has also been proposed to efficiently detect epileptic seizures in multichannel EEG recordings, outperforming conventional feature extraction methods and state-of-the-art deep learning approaches. Craley et al. 10 developed a model that captures both short and long-term correlations in seizure presentations and demonstrates strong generalizability to new patients. Their model mimics an in-patient monitoring setting through a leave-one-patient-out cross-validation procedure, attaining an average seizure detection sensitivity of 0.91 across all patients. Golmohammadi et al. 18 compared long short-term memory (LSTM) units and gated recurrent units (GRU) for seizure detection using the TUH EEG Corpus. The results show that convolutional LSTM networks with proper initialization and regularization outperform convolutional GRU networks, achieving 30% sensitivity at 6 false alarms per 24 h. Talathi et al. 19 explored how recurrent neural networks (RNNs) can aid in the development of automated seizure detection and early seizure warning systems. Abdelhameed et al. 20 used a gated recurrent unit (GRU) RNN, which achieved an impressive overall accuracy, detecting 98% of seizure events within the first 5 s of the seizure duration. Their automatic seizure detection system works on raw EEG signals and uses a CNN-Bi-LSTM architecture, reporting high accuracy both in classifying normal and ictal cases and in the three-class normal, inter-ictal, and ictal setting, with robust evaluation via k-fold cross-validation. Aliyu et al. 21 used EEG data preprocessed with the discrete wavelet transform (DWT) to achieve good accuracy in epileptic EEG signal classification, outperforming logistic regression (LR), support vector machine (SVM), K-nearest neighbor (KNN), random forest (RF), and decision tree (DT) models. RMSprop with 0.20 dropout and four hidden layers was found to be the optimal configuration. In the first stage, the DWT is used to eliminate noise and extract 20 eigenvalue characteristics from the raw EEG data collected from patients and healthy subjects. The preprocessed data is then sent to the classifier in the second stage to identify epilepsy. Several classification techniques are modelled in the classifier, and the models are trained and tested using the data.

Jaffino et al. 22 applied grey wolf optimization (GWO) with a deep recurrent neural network (RNN) for accurate detection of epileptic seizures in brain waves. The proposed method achieved a precision of 93.4% by decomposing brain waves into sub-bands using the discrete wavelet transform, extracting features, and applying a GWO-based deep RNN for classification. Johnrose et al. 23 proposed a novel method using the rag-Rider optimization algorithm (rag-ROA) and a deep recurrent neural network (Deep RNN) for EEG seizure detection. Kumar et al. 24 focused on the automatic detection of epileptic seizures, introducing a method that uses wavelet, sample, and spectral entropy features extracted from EEG signals. Huang et al. 25 introduced an end-to-end deep neural network, an attention-based CNN-BiRNN, that uses multi-scale convolution, attention models, and multi-stream bidirectional recurrent models to automatically detect seizures with high sensitivity and specificity. Additionally, they proposed a channel dropout method for training the model and handling missing or different channels in EEG signals. Naderi et al. 26 presented a novel three-stage technique utilizing spectral analysis and recurrent neural networks. Gayatri et al. 27 developed an automated diagnostic system for epilepsy using an Elman Neural Network with approximate entropy (ApEn) as an input feature. The proposed system can detect the stage, type, and reason for epilepsy in patients, providing an efficient alternative to traditional analysis methods. Minasyan et al. 28 constructed a patient-specific method for automatic seizure detection using recurrent neural networks and scalp electroencephalogram features. The proposed pre-onset detection achieves a median time of 51 s and a low false-positive rate, suggesting its potential for non-invasive and reliable epilepsy diagnosis.

Epilepsy can be debilitating, and predicting seizures accurately is crucial to improving patients’ lives. Borhade et al. 29 proposed the Modified Atom Search Optimization-based Deep Recurrent Neural Network, which uses electroencephalogram signals to detect epileptic seizures automatically. Fukumori et al. 30 utilized a convolutional layer and a CNN or RNN model, a fully data-driven approach that achieves strong performance in spike and non-spike classification. The results of this study have important implications for early diagnosis and treatment of epilepsy in clinical settings. The authors 31 proposed a method for automatic seizure/non-seizure classification using independently recurrent neural networks (IndRNNs) with a dense structure and attention mechanism. The study also sheds light on the effect of segment length on classification accuracy. Automated EEG analysis can improve diagnosis and reduce manual errors in brain-related disorders such as epilepsy. To identify abnormal brain activity, the paper 32 proposes a novel recurrent neural network architecture called ChronoNet, which outperforms previous studies on the TUH Abnormal EEG Corpus dataset by 7.79%. ChronoNet is also shown to have domain-independent applicability, successfully classifying speech commands. Researchers 33 introduce a method that uses recurrent neural networks (RNNs) with long short-term memory (LSTM) units to automate EEG interpretation. With no need for pre-processing, this approach effectively models temporal patterns from raw EEG data while boasting low computational complexity and memory requirements. The system achieved an average validation accuracy of 95.54% and an average AUC of 0.9582, highlighting the potential of deep learning in both clinical applications and neuroscience research. The authors 34 developed a long short-term memory (LSTM) based recurrent neural network (RNN) to classify EEG signals and predict epileptic seizures. The proposed network achieved high accuracy and sensitivity in detecting ictal regions, and excellent specificity, but was unable to accurately classify pre-ictal regions.

Dataset

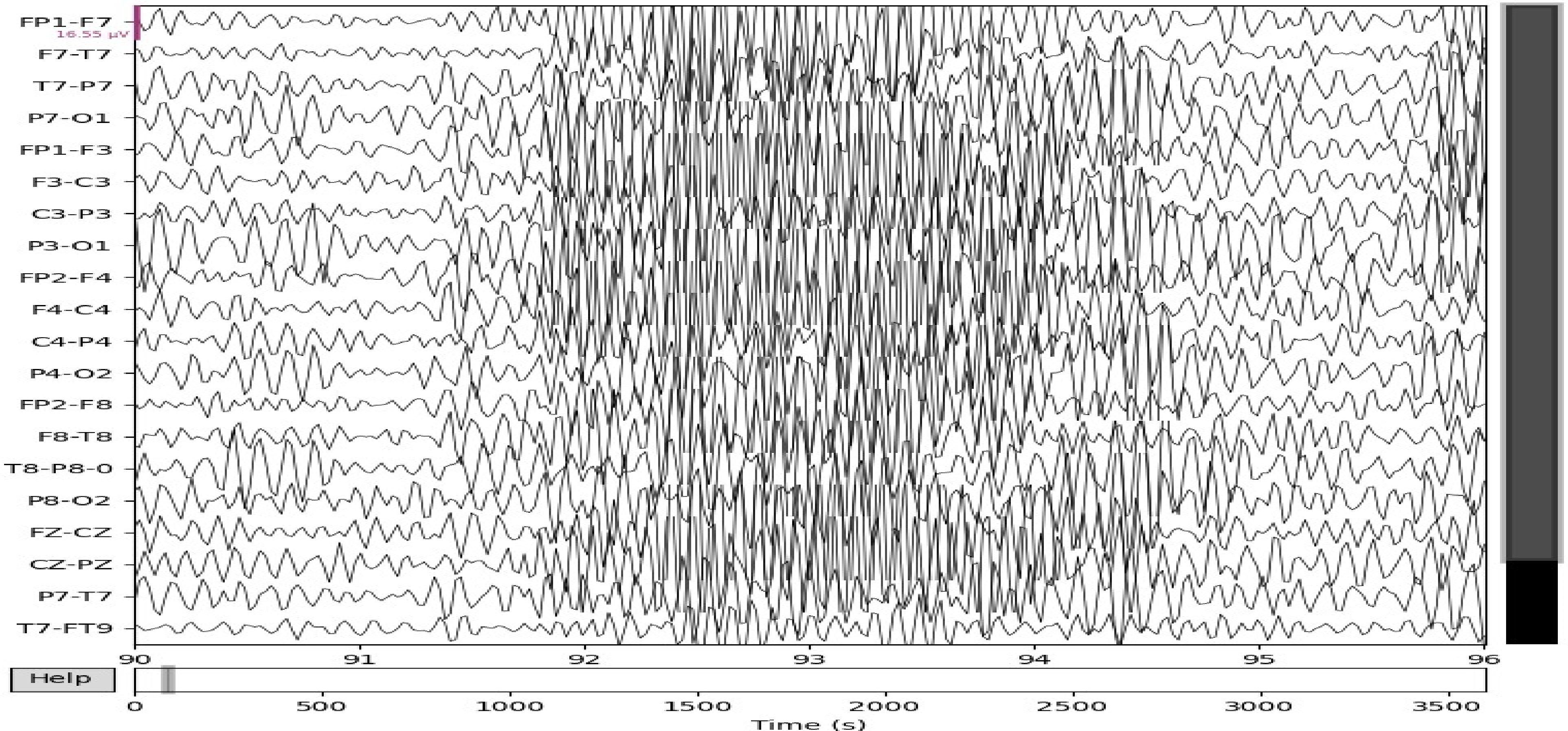

We evaluate our methods on the TUH dataset and the CHB-MIT dataset. The CHB-MIT dataset is a publicly available EEG dataset provided by Boston Children's Hospital (CHB) and the Massachusetts Institute of Technology (MIT). The subjects are 17 females, ranging in age from 1.5 to 19 years, and 5 males, ranging in age from 3 to 22 years. The sampling frequency of the signals is 256 Hz. The signals last for a total of 958 h, of which 198 h are taken up by seizures. The signals were recorded using the International 10–20 system. Our investigations make use of the common EEG signals of the 16 bipolar electrodes, including “FP1-F7,” “F7-T7,” “T7-P7,” “P7-O1,” “FP1-F3,” “F3-C3,” “C3-P3,” “P4-O2,” “FP2-F4”, “F8-T4”, “FZ-CZ,” and “CZ-PZ”. 35

The second data source is a public EEG dataset called TUH (Temple University Hospital). 36 This dataset consists of more than 30,000 EEG recordings made at TUH beginning in 2002. Patient ages, diagnoses, medications, channel setups, and sampling frequencies all differ between recordings. The TUH EEG Abnormal Corpus (TUAB) is a derived corpus made up of TUH EEG recordings that have been extensively annotated by specialists as either “normal” or “abnormal”. For this experiment, we used 16 channels. We use only the TUAB recordings annotated as “normal” and disregard those annotated as “abnormal”, which gives a total of 1385 EEGs from 1385 different patients. The TUH EEG Corpus contains data from 504 patients with epilepsy. This data includes EEG recordings from both interictal (periods between seizures) and ictal (during seizures) states. 37

Here are some of the differences in EEG patterns between normal with eyes open and pure epileptic seizures:

In addition to these changes in the frequency bands, epileptic seizures may also be characterized by other features, such as:

Figures 1 and 2 compare the EEG patterns of the normal eyes-open and eyes-closed states, respectively, with those of pure epileptic seizures.

DeepRNN classifier.

Details of the proposed SGCN-DeepRNN-based seizure detection method.

Proposed method

This paper proposes a hybrid deep learning method that automatically and efficiently detects seizures from the EEG signals of epilepsy patients. In this section, we describe all components of the model to give a clear understanding of the proposed method.

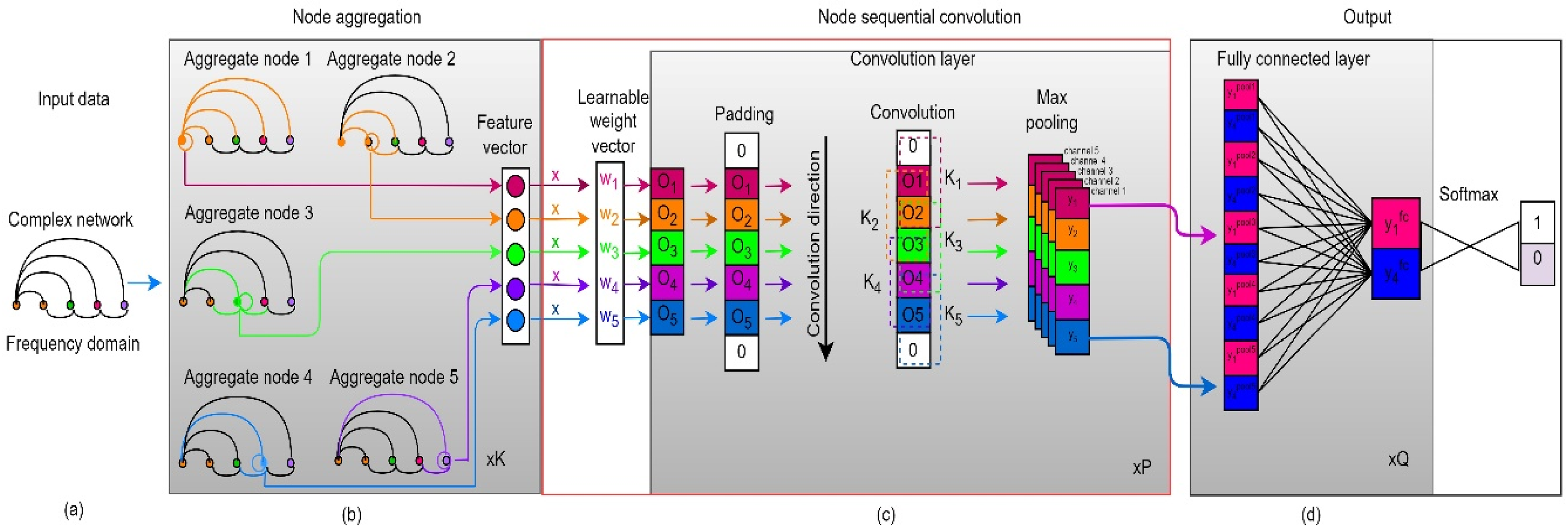

SGCN-DeepRNN based system model

The proposed hybrid deep learning framework, which combines a sequential graph convolutional network (SGCN) with a deep recurrent neural network (DeepRNN), can potentially improve the performance of seizure detection in EEG signals. The network combines the strengths of two state-of-the-art deep learning models: i) the sequential graph convolutional network (SGCN) and ii) the deep recurrent neural network (DeepRNN). The SGCN component captures the spatial relationships between electrodes and the spatial distribution of EEG activity, 16 while the DeepRNN models the temporal dynamics of the EEG signals and captures the temporal information. 38 By combining these two components, the SGCN-DeepRNN hybrid network uses both spatial and temporal information, providing a more complete understanding of the EEG signals. The final detection is made by feeding the outputs of the SGCN and DeepRNN components into a fully connected layer. Figure 3 depicts the flow diagram of the proposed approach.

Values obtained for normal with eyes open and pure epileptic seizures (TUH dataset).

Pre-processing of EEG signal

EEG signals are acquired using electrodes placed on the scalp to record electrical activity from the brain. The acquired raw EEG signals usually undergo preprocessing steps, such as filtering, artifact removal, and re-referencing, to reduce noise and improve the signal-to-noise ratio. These steps are crucial for improving the quality of the EEG signals and for ensuring the accuracy of subsequent analysis and modeling. In this work, a bandpass filtering approach is used to preprocess the EEG signals. During data segmentation, the signal is broken down into a number of distinct time periods using a sliding window with a length of 1 s and an overlap rate of 0.5. For instance, an EEG signal of 60 s in duration with a sampling frequency of 1 Hz would be represented as a vector of 60 samples. The EEG signal is filtered into the delta (1–4 Hz), theta (4–8 Hz), alpha (8–12 Hz), and beta (13–24 Hz) bands, and artifacts are removed from the EEG signal using baseline correction.
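The segmentation step can be sketched as follows. This is a minimal illustration under stated assumptions rather than the authors' code: the function name `sliding_windows` is hypothetical, the 256 Hz rate is taken from the CHB-MIT description, and the 4 s placeholder signal stands in for a real single-channel recording. Band-pass filtering and baseline correction are not shown.

```python
def sliding_windows(signal, fs, window_s=1.0, overlap=0.5):
    """Split a 1-D signal into fixed-length, overlapping windows.

    fs is the sampling frequency in Hz, window_s the window length in
    seconds, and overlap the fraction shared by consecutive windows.
    Trailing samples that do not fill a whole window are dropped.
    """
    win = int(round(window_s * fs))
    step = int(round(win * (1.0 - overlap)))
    if win <= 0 or step <= 0:
        raise ValueError("window length and step must be positive")
    return [signal[i:i + win] for i in range(0, len(signal) - win + 1, step)]


fs = 256                          # sampling frequency in Hz
signal = list(range(4 * fs))      # 4 s placeholder for one EEG channel
windows = sliding_windows(signal, fs)
```

With a 1 s window and an overlap rate of 0.5, consecutive windows start half a second apart, so each sample (except near the edges) appears in two windows.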

Feature extraction

Line length, auto-covariance, auto-correlation, and periodogram features have all been shown to be very effective for seizure identification in EEG signals. The line length feature is useful for capturing changes in signal amplitude and can provide information on the presence of spikes and sharp waveforms, which are often associated with epileptic seizures. Auto-covariance and auto-correlation features capture the similarity and regularity of a time series signal. In EEG signals, these features can provide information on the periodicity and stability of the signal, which are important indicators of epileptic activity. The periodogram feature captures the frequency content of a signal by transforming it into the frequency domain. Moreover, high-frequency oscillations, such as those linked to epileptic convulsions, may be detected using periodogram features.
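As a rough illustration of these four features, the sketch below uses common textbook definitions, which are assumptions here because the paper's own equations are not reproduced in this text: line length as the sum of absolute first differences, the biased (divide by N) sample auto-covariance, auto-correlation as auto-covariance normalised by the variance, and a periodogram computed with a direct DFT. The 4 Hz test sinusoid and 64 Hz sampling rate are toy values.

```python
import cmath
import math


def line_length(x):
    """Sum of absolute differences between consecutive samples."""
    return sum(abs(b - a) for a, b in zip(x, x[1:]))


def autocovariance(x, lag):
    """Biased sample auto-covariance at a non-negative lag."""
    n = len(x)
    mean = sum(x) / n
    return sum((x[t] - mean) * (x[t + lag] - mean) for t in range(n - lag)) / n


def autocorrelation(x, lag):
    """Auto-covariance normalised by its lag-0 value (the variance)."""
    return autocovariance(x, lag) / autocovariance(x, 0)


def periodogram(x, fs):
    """Return (frequencies, power) for bins 0..N/2, with power = |X[k]|^2 / (N * fs)."""
    n = len(x)
    freqs, power = [], []
    for k in range(n // 2 + 1):
        acc = sum(x[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
        freqs.append(k * fs / n)
        power.append(abs(acc) ** 2 / (n * fs))
    return freqs, power


# Toy example: a 4 Hz sinusoid sampled at 64 Hz for one second.
fs = 64
x = [math.sin(2 * math.pi * 4 * t / fs) for t in range(fs)]

ll = line_length(x)
ac_one_period = autocorrelation(x, lag=fs // 4)   # lag of one full period
freqs, power = periodogram(x, fs)
peak_freq = freqs[power.index(max(power))]        # dominant frequency in Hz
```

In practice each function would be applied to every window and channel, and the resulting values stacked into the feature matrix passed to the network.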

Let

Using the equation, the autocorrelation

Graph representation

Graph representation in graph neural networks (GNNs) is essential for processing and understanding graph-structured data. In GNNs, nodes and edges are represented mathematically as feature vectors and relationships, respectively. The graph representation can be represented mathematically as Y = σ

Where
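For orientation, a single graph convolution can be sketched in a few lines. The form used below, ReLU applied to the symmetrically normalised adjacency matrix (with self-loops) times the node features times a weight matrix, is the widely used graph convolutional layer and is an assumption here, since the paper's own expression is truncated in this text. The three-node path graph, the features, and the identity weights are toy values.

```python
import math


def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))]
            for i in range(len(a))]


def normalized_adjacency(adj):
    """Return D^{-1/2} (A + I) D^{-1/2} for a square, non-negative adjacency matrix."""
    n = len(adj)
    a_hat = [[adj[i][j] + (1.0 if i == j else 0.0) for j in range(n)]
             for i in range(n)]
    deg = [sum(row) for row in a_hat]
    return [[a_hat[i][j] / math.sqrt(deg[i] * deg[j]) for j in range(n)]
            for i in range(n)]


def gcn_layer(adj, features, weights):
    """One graph convolution: ReLU(A_norm @ X @ W)."""
    z = matmul(matmul(normalized_adjacency(adj), features), weights)
    return [[max(0.0, v) for v in row] for row in z]


# Toy graph: three nodes (for example, three EEG channels) connected in a path.
adj = [[0.0, 1.0, 0.0],
       [1.0, 0.0, 1.0],
       [0.0, 1.0, 0.0]]
features = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]   # one feature row per node
weights = [[1.0, 0.0], [0.0, 1.0]]                # identity, for readability

a_norm = normalized_adjacency(adj)
out = gcn_layer(adj, features, weights)
```

Each output row mixes a node's own features with those of its neighbours, weighted by the normalised edge weights; learned weights would replace the identity matrix in a trained model.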

Sequential graph convolutional network

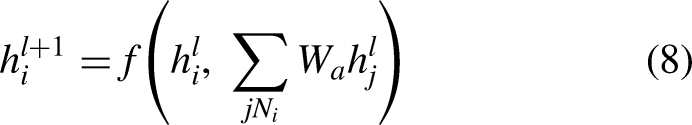

The architecture of sequential graph convolutional network (SGCN) is designed to retain sequential information within the graph neural network (GNN) framework. Most traditional GNNs consist of two main components: multi-hop aggregation of node neighbors and graph-level readout. The multi-hop aggregation updates the feature of each node by iteratively aggregating the features of its neighbors, while the graph-level readout summarizes the node features into a graph-level representation using sum or pooling operations. However, this process of readout often results in the loss of node-level sequential information, making traditional GNNs unsuitable for learning from complex networks. To overcome this limitation, the SGCN architecture incorporates an improved sequential convolution operation into the GNN framework. This operation is key to preserving sequential information, as it enables the model to capture the relationship between node features at different time steps. The working process of the sequential graph convolutional network (SGCN) involves the following steps:

The mathematical formulation of the SGCN can be represented as follows:

Let

The graph-level readout can be represented as follows:

Values obtained for normal with eyes closed and pure epileptic seizures (TUH dataset).

Deep recurrent neural network (DeepRNN)

The deep recurrent neural network (DeepRNN) is a recent neural network architecture that is reported to perform better than conventional tuning-based learning techniques. Compared with other classifiers, the deep recurrent neural network (RNN) algorithm is reported to have a high learning rate. 22

To categorize seizures, we specifically employed a DeepRNN that uses the gated recurrent unit (GRU) as its hidden unit. Recurrent neural networks (RNNs) with complex recurrent hidden units, such as the long short-term memory (LSTM) unit and the gated recurrent unit (GRU), have recently gained popularity as a method for modeling temporal sequences. 32–34 Because of the well-known vanishing or exploding gradient difficulties, standard RNNs are challenging to train. Gated recurrent network topologies, such as the gated recurrent unit (GRU), were introduced as a solution to the vanishing gradient problem.

A RNN is a discrete dynamical system with an output of

The transition function and output function of a traditional RNN are defined as

In Figure 5 we give the basic architecture of the gated recurrent unit that is adjoined to the RNN to form the DeepRNN.
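A single GRU update can be sketched as follows. The equations used are the standard GRU formulation and are an assumption here, because the paper's transition and output functions are not reproduced in this text; the gate convention (new state = (1 - z) * old state + z * candidate) is one of two equivalent conventions in the literature. The helper `make_params` and all weight values are hypothetical.

```python
import math


def sigmoid(v):
    return 1.0 / (1.0 + math.exp(-v))


def affine(w, x, u, h, b):
    """Compute W x + U h + b for list-based matrices and vectors."""
    return [
        sum(wi * xi for wi, xi in zip(w_row, x))
        + sum(ui * hi for ui, hi in zip(u_row, h))
        + bi
        for w_row, u_row, bi in zip(w, u, b)
    ]


def gru_step(x, h, p):
    """One GRU update; p holds weights W*, U* and biases b* for each gate."""
    z = [sigmoid(v) for v in affine(p["Wz"], x, p["Uz"], h, p["bz"])]  # update gate
    r = [sigmoid(v) for v in affine(p["Wr"], x, p["Ur"], h, p["br"])]  # reset gate
    rh = [ri * hi for ri, hi in zip(r, h)]
    cand = [math.tanh(v) for v in affine(p["Wh"], x, p["Uh"], rh, p["bh"])]
    # Additive interpolation between the previous state and the candidate.
    return [(1.0 - zi) * hi + zi * ci for zi, hi, ci in zip(z, h, cand)]


def make_params(input_dim, hidden_dim, value):
    """Hypothetical helper: fill every weight and bias with one constant."""
    w = [[value] * input_dim for _ in range(hidden_dim)]
    u = [[value] * hidden_dim for _ in range(hidden_dim)]
    b = [value] * hidden_dim
    return {"Wz": w, "Uz": u, "bz": b,
            "Wr": w, "Ur": u, "br": b,
            "Wh": w, "Uh": u, "bh": b}


params = make_params(input_dim=2, hidden_dim=3, value=0.1)
h = [0.0, 0.0, 0.0]
for x in [[0.5, -0.2], [0.1, 0.4], [-0.3, 0.9]]:   # toy 3-step input sequence
    h = gru_step(x, h, params)
```

The last line of `gru_step` is the additive memory update: the previous state is carried forward directly, scaled by (1 - z), which is what lets gradients pass through many time steps without repeatedly shrinking.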

SGCN-DeepRNN based system model for seizure detection.

Architecture of DeepRNN

Deep RNN is a network architecture that stacks several recurrent hidden layers in a hierarchy. The recurrent connections are located in the hidden layers. Because of this recurrent structure, the Deep RNN works effectively with the extracted features. Deep RNN is regarded as an excellent classifier among traditional deep learning strategies because it exploits the chronological pattern of the information.

The setup of Deep RNN is created by taking the input vector of

Furthermore, the arbitrary unit number and total number of units of the

To ease the process, the

In Figure 6 we outline the architecture of the Deep RNN classifier.

SGCN model.

SGCN-DeepRNN Seizure Detection Model

Figure 7 shows the detailed system flow diagram of the proposed SGCN-DeepRNN-based seizure detection method.

Basic architecture of GRU.

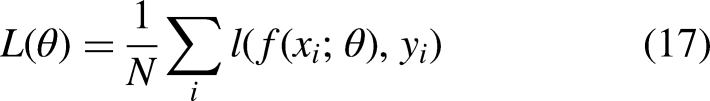

Loss function

A loss function measures the difference between the desired output and the actual output of a deep learning model. The goal of training is to minimize the loss function, which guides the adjustment of the model's parameters. Common loss functions include mean squared error (MSE), mean absolute error (MAE), categorical cross-entropy, binary cross-entropy, and negative log-likelihood; the choice depends on the task and data.

In a graph classification task, where the goal is to predict the label of the entire graph, the binary cross-entropy or the categorical cross-entropy loss can be used, depending on whether the labels are binary or categorical. Other loss functions such as mean squared error or mean absolute error can be used for regression tasks, where the goal is to predict continuous values rather than discrete labels.
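For the binary seizure versus non-seizure case, the binary cross-entropy can be written out directly. The sketch below is a generic definition, not necessarily the exact loss used for the SGCN; the clamping constant `eps` and the example probabilities and labels are assumptions made for illustration.

```python
import math


def binary_cross_entropy(probs, labels, eps=1e-12):
    """Mean binary cross-entropy between predicted probabilities and 0/1 labels."""
    total = 0.0
    for p, y in zip(probs, labels):
        p = min(max(p, eps), 1.0 - eps)   # clamp to avoid log(0)
        total += -(y * math.log(p) + (1 - y) * math.log(1.0 - p))
    return total / len(labels)


# Three windows: predicted seizure probabilities and expert labels (toy values).
probs = [0.9, 0.2, 0.7]
labels = [1, 0, 1]
loss = binary_cross_entropy(probs, labels)

# Confident, correct predictions give a loss close to zero.
near_perfect = binary_cross_entropy([1.0, 0.0], [1, 0])
```

The loss grows quickly when a confident prediction is wrong, which pushes the model toward well-separated probabilities for seizure and non-seizure windows.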

In general, the loss function for SGCN can be written as:

Experiment & result

In this section we present the details of our experiments and explain the experimental outcomes that clearly establish our proposed method.

Experimental setup

We report four experiments, all implemented in PyTorch. The computations were carried out on a server equipped with an Intel i7-12700K CPU @ 3.6 GHz, four NVIDIA Titan Xp GPUs, and 24 GB of RAM.

The parameters were varied over a range of values to find the best DeepRNN configuration for the model. Performance was tested for the dropout rate, number of layers, number of units, learning rate, and activation function. Experiment 1 had two layers with 64 units in each layer and a dropout rate of 0.2, while Experiment 4 had five layers with 512 units in each layer and a dropout rate of 0.5. From the table, we can see that increasing the number of layers and units generally improved the accuracy of the model, although there were diminishing returns beyond a certain point. The choice of activation function also had an impact on performance, with leaky ReLU achieving the highest test accuracy in Experiment 3, where the learning rate is 0.005 and the dropout rate is 0.4.

Evaluation indicators

The proposed model is evaluated epoch by epoch, by comparing the label it assigns to each epoch with the label given by the experts. Sensitivity, specificity, accuracy, AUC (area under the ROC curve), and F1-score are used as evaluation markers.

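Sensitivity: the proportion of positive (seizure) samples that are correctly classified, defined as $\text{Sensitivity} = \frac{TP}{TP + FN}$, where $TP$ and $FN$ denote the numbers of true positives and false negatives.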
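The epoch-based markers listed in this section can all be derived from confusion-matrix counts. The following is a minimal sketch using the standard textbook definitions; the function names and the example labels are illustrative assumptions, not the authors' code.

```python
def confusion_counts(y_true, y_pred):
    """Count true/false positives and negatives for 0/1 epoch labels."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    return tp, fp, tn, fn


def classification_metrics(tp, fp, tn, fn):
    """Sensitivity, specificity, accuracy, and F1 from confusion-matrix counts."""
    def ratio(num, den):
        return num / den if den else 0.0  # guard against empty classes

    sensitivity = ratio(tp, tp + fn)
    specificity = ratio(tn, tn + fp)
    accuracy = ratio(tp + tn, tp + fp + tn + fn)
    precision = ratio(tp, tp + fp)
    f1 = ratio(2 * precision * sensitivity, precision + sensitivity)
    return {
        "sensitivity": sensitivity,
        "specificity": specificity,
        "accuracy": accuracy,
        "f1": f1,
    }


# Hypothetical expert labels and model predictions for eight epochs.
expert = [1, 1, 1, 0, 0, 0, 0, 0]
model = [1, 1, 0, 0, 0, 0, 0, 1]
metrics = classification_metrics(*confusion_counts(expert, model))
```

Because seizure epochs are usually much rarer than non-seizure epochs, accuracy alone can look high even when sensitivity is poor, which is why the markers are reported together.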

Detection of seizure signal.

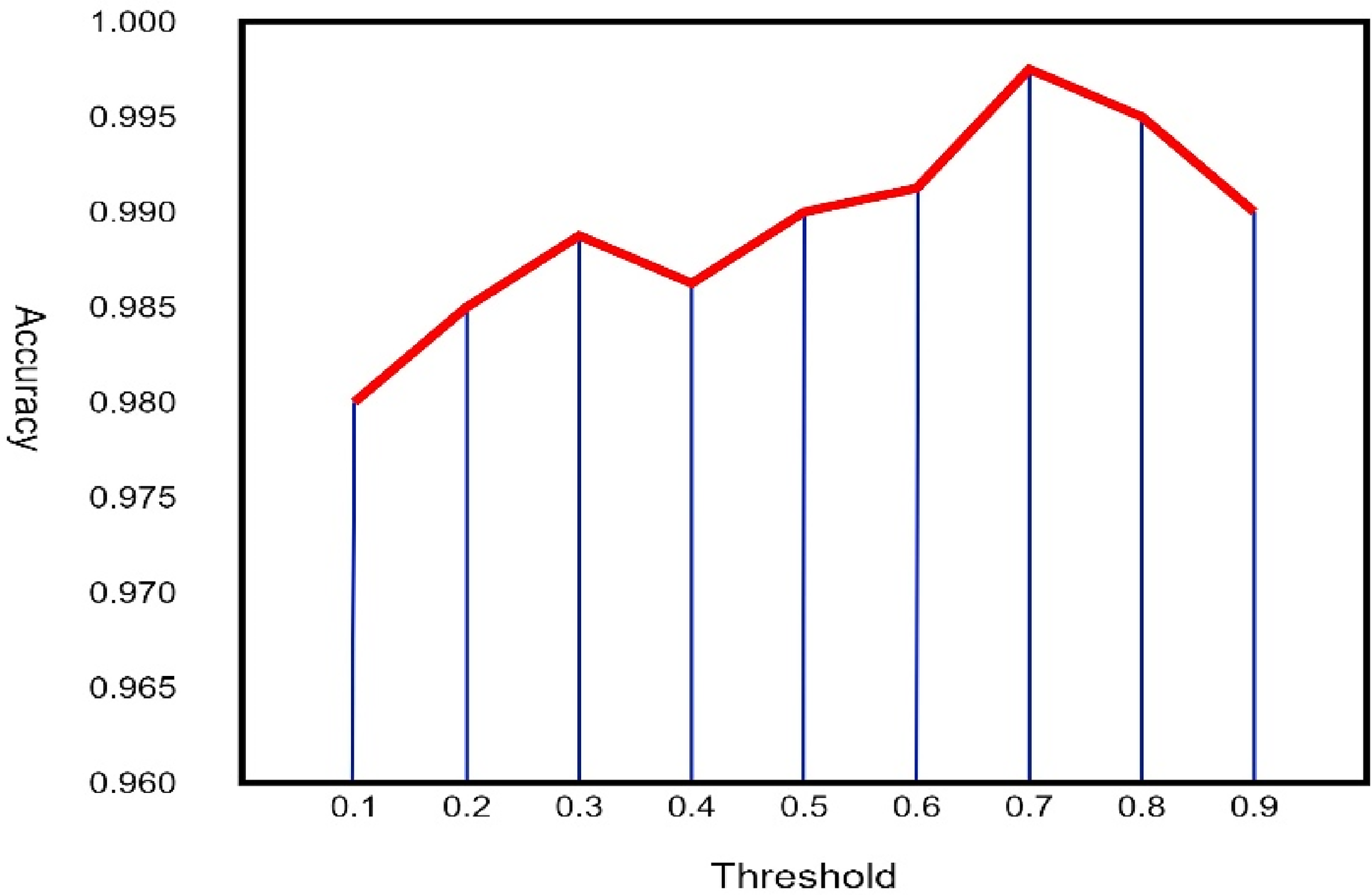

The influence of different thresholds on the performance of the SGCN-DeepRNN model on the CHB-MIT and TUH datasets is shown in Figures 9 & 10.

The influence of different thresholds on the performance of the SGCN-DeepRNN model on CHB-MIT.

The influence of different thresholds on the performance of the SGCN-DeepRNN model on TUH.
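To make the role of the decision threshold concrete, the sketch below sweeps a threshold over predicted seizure probabilities and reports sensitivity and specificity at each value. The function name `sweep_thresholds`, the example scores, and the threshold grid are hypothetical; the thresholds actually used in the figures are not stated in the text.

```python
def sweep_thresholds(y_true, y_prob, thresholds):
    """Return (threshold, sensitivity, specificity) for each decision threshold."""
    rows = []
    for th in thresholds:
        tp = fp = tn = fn = 0
        for y, p in zip(y_true, y_prob):
            pred = 1 if p >= th else 0  # label an epoch as seizure at or above th
            if y == 1 and pred == 1:
                tp += 1
            elif y == 1:
                fn += 1
            elif pred == 1:
                fp += 1
            else:
                tn += 1
        sensitivity = tp / (tp + fn) if (tp + fn) else 0.0
        specificity = tn / (tn + fp) if (tn + fp) else 0.0
        rows.append((th, sensitivity, specificity))
    return rows


# Hypothetical labels (1 = seizure) and predicted probabilities.
y_true = [1, 1, 1, 0, 0, 0]
y_prob = [0.95, 0.70, 0.40, 0.60, 0.30, 0.05]
rows = sweep_thresholds(y_true, y_prob, [0.25, 0.5, 0.75])
```

Raising the threshold trades sensitivity for specificity: fewer non-seizure epochs are flagged, but more seizure epochs are missed.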

Evaluation of hyperparameters

To understand the contribution of each component of the SGCN-DeepRNN hybrid model, we conducted separate patient-specific experiments using the SGCN model, the DeepRNN model, and the proposed hybrid SGCN-DeepRNN model. To keep the comparison fair, all models were set to the same parameters. The results, shown in Table 1, indicate that the proposed SGCN-DeepRNN model outperforms the other models on all three evaluation metrics, which demonstrates the robustness and effectiveness of our model in EEG signal processing. By using SGCN to extract spatial features and then DeepRNN to extract temporal features and classify the signals, the hybrid model exploits both the temporal and the spatial relationships between EEG channels, which significantly enhances its learning ability. As a result, the proposed SGCN-DeepRNN model offers a superior solution to epileptic seizure detection, as shown in Table 2.

Accuracy of SGCN-DeepRNN on the CHB-MIT and TUH datasets.

Experimental results of SGCN, DeepRNN, and the proposed SGCN-DeepRNN model on the two datasets.
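The spatial-then-temporal ordering described above can be illustrated with a toy sketch. It is a sketch under stated assumptions, not the authors' architecture: a single standard graph-convolution step with symmetric normalization stands in for SGCN, a scalar GRU cell stands in for DeepRNN, the channel graph is assumed to have non-negative edge weights, and all names, weights, and inputs are hypothetical.

```python
import math


def normalize_adjacency(adj):
    """Symmetric normalization D^-1/2 (A + I) D^-1/2 of a channel graph."""
    n = len(adj)
    a_hat = [[adj[i][j] + (1.0 if i == j else 0.0) for j in range(n)]
             for i in range(n)]
    deg = [sum(row) for row in a_hat]  # positive when edge weights are >= 0
    return [[a_hat[i][j] / math.sqrt(deg[i] * deg[j]) for j in range(n)]
            for i in range(n)]


def matmul(a, b):
    """Plain matrix product of two list-of-lists matrices."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]


def graph_conv(a_norm, x, w):
    """Spatial step: ReLU(A_norm X W), mixing features across connected channels."""
    h = matmul(matmul(a_norm, x), w)
    return [[max(0.0, v) for v in row] for row in h]


def sigmoid(v):
    return 1.0 / (1.0 + math.exp(-v))


def gru_step(x, h, p):
    """Temporal step: scalar GRU update of hidden state h given input x."""
    z = sigmoid(p["wz"] * x + p["uz"] * h)  # update gate
    r = sigmoid(p["wr"] * x + p["ur"] * h)  # reset gate
    candidate = math.tanh(p["wh"] * x + p["uh"] * (r * h))
    return (1.0 - z) * h + z * candidate


def spatial_then_temporal(adj, windows, w, p):
    """Apply a graph convolution to each time window, then a GRU across windows."""
    a_norm = normalize_adjacency(adj)
    h = 0.0
    for x in windows:  # x: channels x features for one time window
        nodes = graph_conv(a_norm, x, w)
        pooled = sum(sum(row) for row in nodes) / (len(nodes) * len(nodes[0]))
        h = gru_step(pooled, h, p)
    return h  # final hidden state; a classifier head would map it to a label


# Hypothetical two-channel graph, two time windows, two features per channel.
adj = [[0.0, 1.0], [1.0, 0.0]]
windows = [[[1.0, 2.0], [3.0, 4.0]], [[0.5, 0.5], [0.5, 0.5]]]
w = [[0.1], [0.2]]
params = {"wz": 0.5, "uz": 0.5, "wr": 0.5, "ur": 0.5, "wh": 1.0, "uh": 1.0}
state = spatial_then_temporal(adj, windows, w, params)
```

The design point this illustrates is the ordering: channel-to-channel structure is summarized first within each window, and only then is the sequence of window summaries modeled over time.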

Patient by patient experiments

To ensure the reliability and stability of the results, the experiments on the CHB-MIT dataset were repeated 10 times and validated using 5-fold cross-validation (Table 3). Averaged over all patients, the model achieved an accuracy of 99.007%, a sensitivity of 98.058%, and a specificity of 95.025% (Table 2). Only three patients had a specificity below 90%, which can be attributed to the fact that some patients had fewer epileptic seizures and their scalp EEG records were strongly affected by external noise. The F1-score and AUC of the proposed method were 96.671% and 97.669%, respectively, underscoring the model's accuracy and stability. Moreover, the p-values of all cases were less than 0.005. The proposed SGCN-DeepRNN architecture has demonstrated robustness and effectiveness in EEG signal processing, surpassing both the SGCN and DeepRNN models on all indicators.

Experimental results on the CHB-MIT dataset using the proposed SGCN-DeepRNN architecture.
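The repeated 5-fold protocol described above can be sketched as an index generator. This is a minimal illustration: the text does not say whether folds were formed over recordings or over epochs, so the sketch simply splits generic sample indices, and the function names, the seeding scheme, and the sample count are assumptions.

```python
import random


def k_fold_indices(n_samples, k, seed):
    """Shuffle sample indices and split them into k nearly equal folds."""
    idx = list(range(n_samples))
    random.Random(seed).shuffle(idx)
    return [idx[i::k] for i in range(k)]


def repeated_k_fold(n_samples, k=5, repeats=10):
    """Yield (repeat, fold, train_idx, test_idx) for repeated k-fold validation."""
    for r in range(repeats):
        folds = k_fold_indices(n_samples, k, seed=r)  # new shuffle per repeat
        for f in range(k):
            test_idx = folds[f]
            train_idx = [i for g, fold in enumerate(folds) if g != f
                         for i in fold]
            yield r, f, train_idx, test_idx


# Hypothetical example with 20 samples: 10 repeats x 5 folds = 50 splits.
splits = list(repeated_k_fold(n_samples=20, k=5, repeats=10))
```

Averaging a metric over all 50 train/test splits gives the kind of mean value reported per patient, and the spread across splits indicates how stable the result is.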

Table 4 shows the performance of the SGCN-DeepRNN method on the TUH dataset. Averaged over all patients, the model achieved an accuracy of 98.08%, a sensitivity of 95.13%, and a specificity of 94.99%. Only three patients had a specificity below 90%, which may be due to external noise affecting their EEG records. The F1-score and AUC of the model were 97.88% and 97.95%, respectively, demonstrating its accuracy and stability. The p-values of all cases were less than 0.005, indicating that the decision variables learned by the model are significantly different. Overall, the SGCN-DeepRNN model has demonstrated robustness and effectiveness in EEG signal processing, surpassing both the SGCN and DeepRNN models on all indicators.

Experimental results on the TUH dataset using the proposed SGCN-DeepRNN architecture.

Discussion

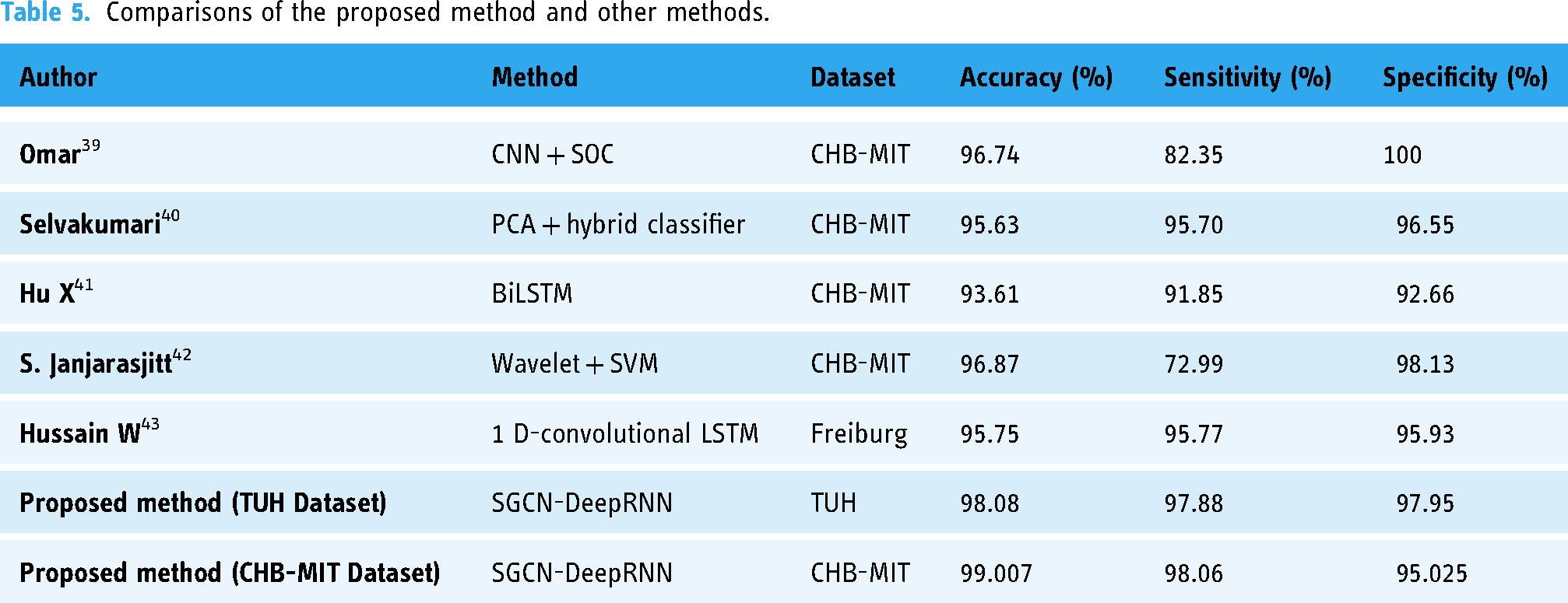

We compare the proposed SGCN-DeepRNN model with five other state-of-the-art models. On the CHB-MIT dataset, the SGCN-DeepRNN model achieved an accuracy of 99.007%, with a sensitivity of 98.06% and a specificity of 95.025%; on the TUH dataset, it achieved an accuracy of 98.08%, with a sensitivity of 95.13% and a specificity of 94.99%. This indicates that the model can accurately detect seizures in patients with epilepsy. This is further supported by the high F1-score and AUC values: 96.671% and 97.669%, respectively, on CHB-MIT, and 97.88% and 97.95%, respectively, on TUH.

The next best-performing model was the Wavelet-SVM model, which achieved an accuracy of 96.87% and a specificity of 98.13% but a much lower sensitivity of 72.99%. This suggests that the Wavelet-SVM model may struggle to accurately detect epileptic seizures in patients with mild symptoms. The 1D-convolutional-LSTM model achieved an accuracy of 95.75%, with a sensitivity of 95.77% and a specificity of 95.93%; it performed well, but not as well as the SGCN-DeepRNN model. The BiLSTM model had an accuracy of 93.61%, with a sensitivity of 91.85% and a specificity of 92.66%, indicating that it is less accurate than the SGCN-DeepRNN model. The PCA-hybrid classifier model had an accuracy of 95.63%, with a sensitivity of 95.70% and a specificity of 96.55%; again, it performed well, but not as well as the SGCN-DeepRNN model. The CNN-SOC model had an accuracy of 96.74%, with a sensitivity of 82.35% and a specificity of 100%. Its high specificity but low sensitivity indicates that it may miss epileptic seizures in some patients.

The comparison in Table 5 shows that our proposed method performs better than the other five state-of-the-art methods. Note that we applied our method to both the TUH and CHB-MIT datasets.

Comparisons of the proposed method and other methods.

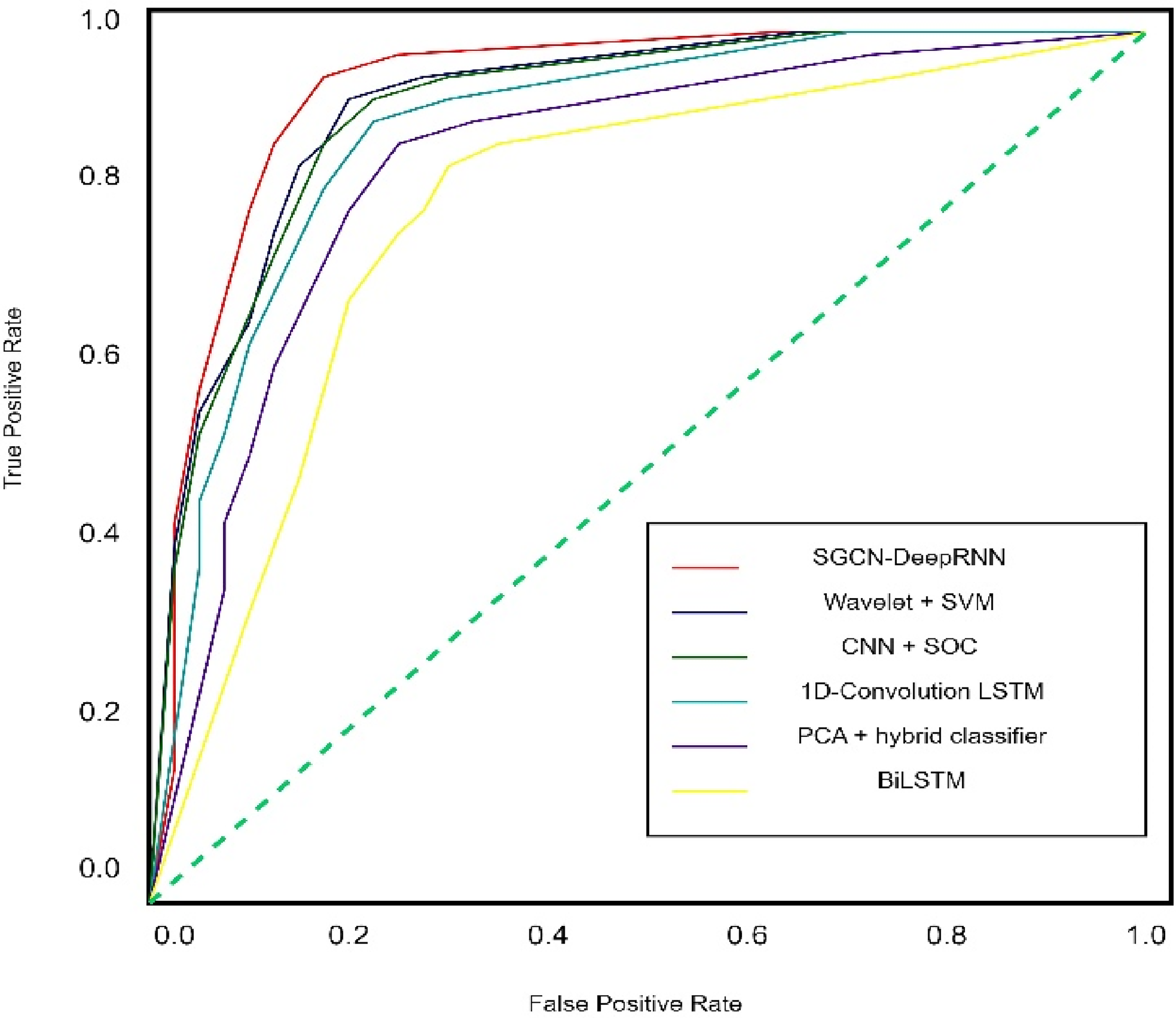

Figures 11 & 12 show the AUC and ROC curves from our experiments; each figure is described in detail in its caption.

AUC curves for six different models; SGCN + DeepRNN attains the maximum area, 97.67%.

ROC curves of the proposed models. Mean (red line), standard deviation (blue shaded area), and median (blue line) ROCs are calculated using the obtained ROC curves of each EEG recording for models based on CNN-SOC (left), BiLSTM (middle), and PCA + hybrid classifier (right).
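For reference, an ROC curve and its AUC can be computed from labels and scores as sketched below. This uses the standard threshold-sweep construction and trapezoidal integration; the function names are illustrative, and nothing here reproduces the plotted curves.

```python
def roc_points(y_true, y_score):
    """ROC points (FPR, TPR) obtained by lowering the threshold over the scores."""
    pos = sum(y_true)
    neg = len(y_true) - pos
    if pos == 0 or neg == 0:
        raise ValueError("both classes must be present")
    pairs = sorted(zip(y_score, y_true), key=lambda pair: -pair[0])
    points = [(0.0, 0.0)]
    tp = fp = 0
    i = 0
    while i < len(pairs):
        score = pairs[i][0]
        # Consume all samples tied at this score before emitting a point.
        while i < len(pairs) and pairs[i][0] == score:
            if pairs[i][1] == 1:
                tp += 1
            else:
                fp += 1
            i += 1
        points.append((fp / neg, tp / pos))
    return points


def auc_trapezoid(points):
    """Area under a piecewise-linear ROC curve via the trapezoidal rule."""
    area = 0.0
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        area += (x1 - x0) * (y0 + y1) / 2.0
    return area


# Hypothetical labels (1 = seizure) and scores for six epochs.
example_auc = auc_trapezoid(
    roc_points([1, 1, 0, 1, 0, 0], [0.9, 0.8, 0.7, 0.6, 0.4, 0.2])
)
```

An AUC of 1.0 corresponds to perfect ranking of seizure over non-seizure epochs, and 0.5 to chance-level ranking.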

Figure 13 shows the loss of the DeepRNN for different hidden batch sizes of the EEG signal. Figures 14 & 15 provide a graphical comparison of our proposed model (SGCN-DeepRNN) with five other state-of-the-art models.

Loss with regard to the DeepRNN hidden batch size.

Graphical comparison of five models with SGCN-DeepRNN (CHB-MIT).

Graphical comparison of five models with SGCN-DeepRNN (TUH).

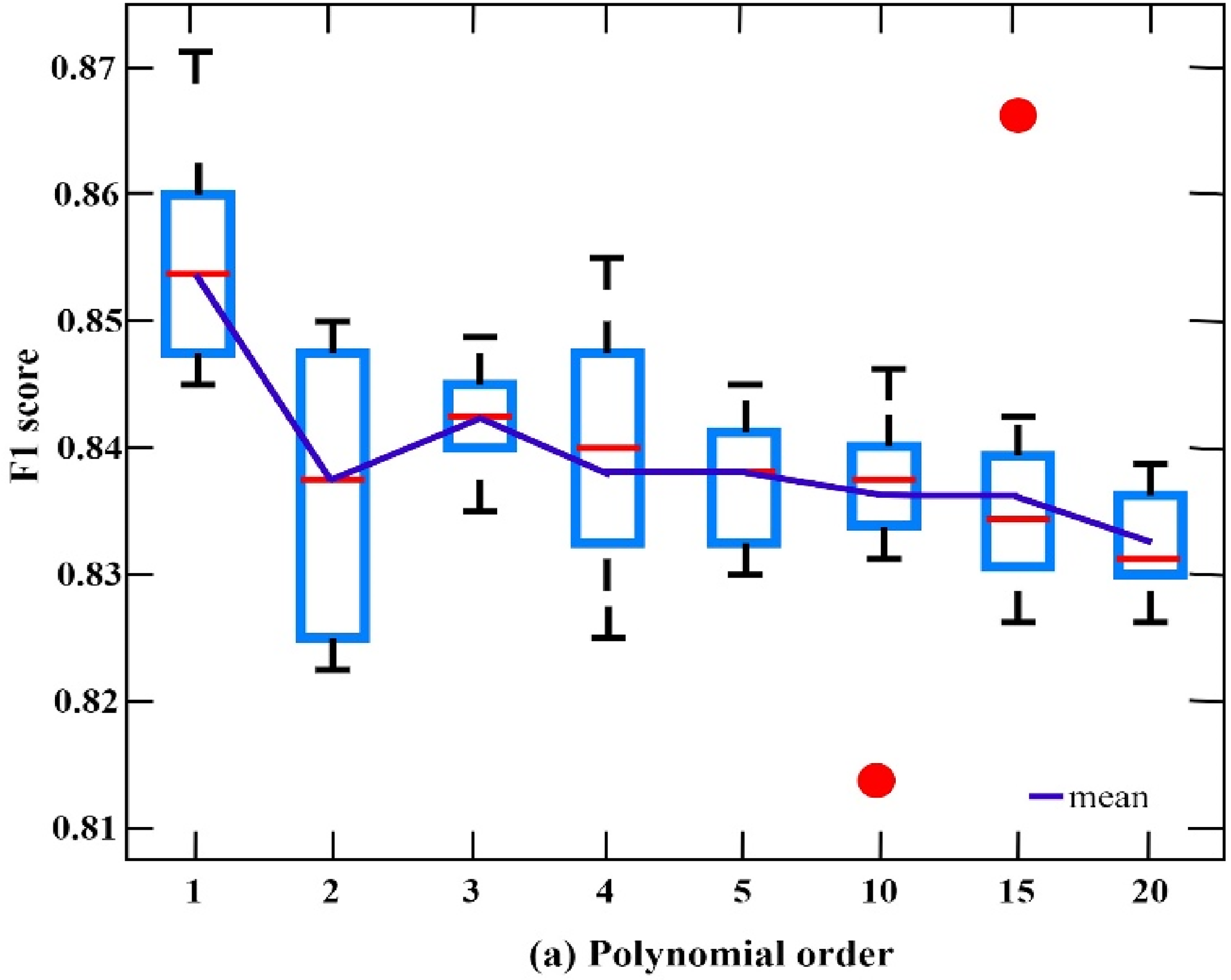

Figures 16 & 17 show the F1 scores of our proposed model (SGCN-DeepRNN) in experiments on two different datasets, CHB-MIT and the TUH EEG Seizure Corpus.

F1 scores of SGCN-DeepRNN on CHB-MIT with various polynomial orders.

F1 scores of SGCN-DeepRNN on TUH with various model capacities.

Our analysis shows that the SGCN-DeepRNN model outperforms all of the other models in terms of accuracy, sensitivity, and AUC.

Future work

The impressive results from our proposed SGCN-DeepRNN model in both ablation experiments and patient-by-patient experiments demonstrate its superiority in epileptic seizure detection. However, there is still much room for improvement in this field. Future work could focus on enhancing the model's performance by exploring new techniques for feature extraction, such as sparse graph convolutional networks or attention mechanisms. Additionally, the proposed model could be applied to other neurological disorders, such as Parkinson's disease, to investigate its effectiveness in different contexts. Furthermore, investigating the interpretability of the model could provide insight into the underlying mechanisms of epileptic seizures and aid in developing personalized treatment plans for patients.

Conclusion

In this paper, we propose a hybrid framework based on SGCN and DeepRNN for seizure detection. This approach provides excellent experimental results. The proposed SGCN-DeepRNN hybrid architecture integrates the spatial and temporal relationships between EEG channels, resulting in better accuracy and performance. The F1-score and AUC values, which reflect the model's accuracy and consistency, further reinforce its effectiveness. A strength of our method is that we conducted experiments on two popular public datasets, CHB-MIT and TUH. When compared with other cutting-edge approaches, our SGCN-DeepRNN model outperformed all others in terms of accuracy, sensitivity, and AUC. This represents a significant advance in the field of seizure detection and provides medical professionals with a valuable tool for accurately diagnosing epileptic seizures. We remain committed to advancing seizure detection through continued research and development, and we are confident that our approach will pave the way for further advancements in the field, ultimately leading to better care and outcomes for patients with epilepsy.

Footnotes

Acknowledgments

We acknowledge the Research Grant: STR-IRNGS-SET-CAPRT-01- 10 2022 from Sunway University, Malaysia.

Author's note

Ferdaus Anam Jibon is also affiliated with the Department of Computer Science and Engineering, International University of Business Agriculture and Technology (IUBAT), Uttara, Dhaka, Bangladesh. Hwang Ha Jin is also affiliated with the School of Creative Industries, Astana IT University, Astana, Kazakhstan.

Consent statement

Consent was not necessary for this study because the data used for epileptic seizure detection were extracted from the CHB-MIT dataset, which is available online. A team of investigators from Children's Hospital Boston (CHB) and the Massachusetts Institute of Technology (MIT) created this database and contributed it to PhysioNet. The dataset is publicly available and free to use in the development and evaluation of computerized approaches to the detection of seizure onset.

Credit authorship contribution statement

F.A.J., A.R.J.C., S.S., S.N., F.H.S and A.H.M.K performed the experiments, analyzed the data, and contributed to preparing the manuscript. F.A.J., A.R.J.C., S.S., S.N., F.H.S., A.H.M.K and M.H.M. contributed to the experimental design and reviewed/edited the manuscript. F.A.J., A.R.J.C., S.S., S.N., F.H.S and A.H.M.K contributed to setting up the experiment and reviewed/edited the manuscript. M.UK, M.S. and A.A.F.Y. supervised the experiments, contributed to the data analysis, prepared and reviewed/edited the manuscript.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

This research did not receive any specific grant from funding agencies in the public, commercial, or not-for-profit sectors.

Ethical approval

This research requires no approval from any ethics committee as this research uses data that is publicly available.

Data availability statement

All data are available in the manuscript.

Guarantor

Mayeen Uddin Khandaker (M.UK).