Abstract

Background. To investigate the effectiveness of upper limb rehabilitation, sound measures of upper limb function, capacity, and performance are paramount. Objectives. This systematic review investigates reliability and responsiveness of upper limb measurement tools used in pediatric neurorehabilitation. Methods. A 2-tiered search was conducted up to July 2014. The first search identified upper limb motor assessments for 1- to 18-year-old children with neuromotor disorders. The second search examined the psychometric properties of the tools. Methodological quality was rated according to COSMIN guidelines, and results for each tool were assembled in a “best evidence synthesis.” Furthermore, we delineated whether tools were unimanual or bimanual tests and whether they measured recovery or did not distinguish between physiological and compensatory movements. Results. The first search delivered 2546 hits. Of these, 110 articles on 51 upper limb assessment tools were included. The second search resulted in 58 studies on reliability, 11 on measurement error, and 10 on responsiveness. Best evidence synthesis revealed only 2 assessments with moderate positive evidence for reliability, whereas no evidence on measurement error and responsiveness was found. The Melbourne Assessment showed moderate positive evidence for interrater and limited positive evidence for intrarater reliability. The Pediatric Motor Activity Log Revised revealed moderate positive evidence for test–retest reliability. Conclusions. There is a lack of high-quality studies about psychometric properties of upper limb measurement tools in children with neuromotor disorders. To date, upper limb rehabilitation trials in children and adolescents risk being biased by insensitive measurement tools lacking reliability.

Introduction

The importance of adequate assessments is well known. They can provide information about various health care–related topics, such as the effectiveness of rehabilitation interventions; the course of a disease; the planning and adjustment of treatments; the objective reporting of the patient’s progress to health care specialists, patients, and their families; and the justification of treatments to health insurance companies. 1

While many studies established the psychometric properties of measurement tools, most of these studies focused on healthy or disabled adults. However, for those working in a pediatric setting, the results of these studies, and even the assessment tools themselves, often cannot directly be applied to children. 2 Especially in pediatrics, assessments that take a long time to complete are not well tolerated by young patients, and therapists do not have the time to familiarize themselves with many assessment tools for different patient populations.

Over the past decades, the development of assessment tools for this younger population has advanced, and studies have investigated the psychometric properties of child-friendly adaptations of existing measurement tools. Lately, a number of reviews have addressed psychometrics of assessments for children.3-5 Yet most of them focused on a specific patient and/or age group, and it remains unclear whether results can be transferred to children with other diagnoses and of other ages. Moreover, none of these reviews differentiated between assessments concentrating on the evaluation of true recovery and those allowing compensatory strategies. However, to understand rehabilitation processes and improve therapeutic decision-making, it is crucial to know the mechanisms underlying changes in motor outcomes. Finally, many reviews focused on only a single component of the International Classification of Functioning, Disability and Health (ICF), whereas for a comprehensive evaluation, measures at the ICF component levels Body Function and Activity and Participation should be investigated. 6 The latter is further divided into the ICF qualifiers Capacity, reflecting the best the child can do under ideal circumstances, and Performance, reflecting what the child actually does in his or her natural environment. 6

Therefore, the objective of this study was to systematically review the literature for all measurement tools available to assess the upper extremity at the level of the ICF component Body Function and the ICF qualifiers Capacity and Performance in children and adolescents with a wide range of central motor disorders including cerebral palsy (CP), stroke, traumatic brain injury (TBI), or myelomeningocele (MMC). We thereby provide an overview of the current level of evidence for reliability, measurement error, and responsiveness of those measures for specific diagnoses. While reliability is defined as the proportion of total variance in the measurements derived from “true” differences among patients, measurement error refers to the systematic and random error of a patient’s score that is not attributed to true changes in the construct to be measured. 7 Responsiveness is defined as the ability to detect change over time in the construct to be measured. 7
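As a hedged illustration of these definitions, reliability can be written as a variance ratio. The notation is illustrative and not taken from the cited source: $\sigma^2_p$ denotes the variance due to true differences among patients and $\sigma^2_e$ the error variance. Which variance components count as error depends on the study design.

```latex
\[
\text{Reliability} = \frac{\sigma^2_p}{\sigma^2_p + \sigma^2_e},
\qquad
\text{SEM} = \sqrt{\sigma^2_e} = SD_{\text{total}}\,\sqrt{1 - \text{Reliability}},
\quad \text{where } SD_{\text{total}}^2 = \sigma^2_p + \sigma^2_e .
\]
```

Under this reading, a reliability coefficient is a proportion that depends on how heterogeneous the sample is, whereas the standard error of measurement (SEM) is expressed in the units of the scale.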

Additionally, we differentiated whether measurement tools (a) assess only physiologically desired movements; (b) assess both physiological and compensatory movements, but account for compensation in the scoring process; or (c) do not distinguish between physiological and compensatory movements. Furthermore, we report whether task execution and scoring are performed and evaluated unimanually or bimanually. We hope this review can help clinicians and researchers decide which measurement tool is most appropriate for their therapeutic or experimental intervention.

Methods

Search Strategy

The standardized protocol consisted of a 2-tiered search 8 conducted between December 2012 and June 2013; the search was updated in July 2014.

Search 1: Identification of Measurement Tools

We comprehensively searched 6 electronic databases (CINAHL, Medline, Cochrane, OTSeeker, PEDro, Embase) to find all available upper limb assessments. The search term included keywords for (a) diagnosis, (b) age, (c) upper limb, (d) assessment tools, and (e) psychometric properties. An example of the search term for Medline is given in Appendix A.

The results were imported into a reference management system (Mendeley, Mendeley Ltd, London, UK). Duplicates were merged. Two reviewers independently screened titles, abstracts, and, if necessary, full texts for inclusion or exclusion according to predefined criteria. In case of disagreement, the reviewers discussed until consensus was reached; if no consensus could be reached, the opinion of an independent third reviewer was taken into account. A hand search of reference lists of all articles that met the inclusion criteria was then conducted.

Articles were included if they met the following criteria: (a) participants were children or adolescents (1-18 years) and (b) had diagnosed central motor disorders; (c) the body part of interest was (part of) the upper limb; (d) measurement tools assessed Body Function and/or Activity and/or Participation; (e) the evaluation of at least one psychometric property of the given tool was accomplished (irrespective of the main aim of the article); and (f) articles were peer-reviewed full texts and (g) were written in English or German.

Articles were excluded if (a) any of the participants exceeded the age of 20 years or more than 10% of the participants were between 18 and 20 years; (b) the assessment tool was invasive (eg, needle electromyography), (c) was only used for diagnosis, or (d) measured sensory function; or (e) they were case studies with fewer than 5 subjects.

Search 2: Selecting Psychometric Studies for Determining the Level of Evidence for Reliability, Measurement Error, and Responsiveness

For each measurement tool identified in the first search, a second search was conducted in the 6 databases mentioned above to find all articles investigating the psychometric properties of the tool. To this end, the name of the tool and its abbreviations and variations were added to the search term used in the first literature search. The search result of each database was exported to the reference management system, and the same 2 reviewers screened titles, abstracts, and full texts for inclusion or exclusion of articles. In case of disagreement, the reviewers discussed until consensus was reached; if no consensus could be reached, the opinion of an independent third reviewer was taken into account. In addition to the exclusion criteria of the first search, studies that only considered validity of a measurement tool, studies focusing on lower extremities, and review articles were excluded. Last, reference lists of all articles that met the inclusion criteria were screened for additional literature.

Quality Assessment

Quality assessment was administered at 2 levels.

Level 1: Quality Rating of Individual Studies

The quality of articles was rated according to the COSMIN (COnsensus-based Standards for the selection of health Measurement INstruments) checklist. 9 It is a rating scale to evaluate the methodological quality of studies on measurement properties of health status instruments. In 9 boxes with 5 to 18 items, methodological aspects, such as applied statistics or independence of test administration, are rated for each psychometric property outlined in the article. Each item can be rated as “poor,” “fair,” “good,” or “excellent.” 10

In the current review, the quality of studies on reliability, measurement error, and responsiveness was rated. Data were entered in a previously developed Microsoft Access COSMIN database, 11 which also included a Generalizability box to extract data on the characteristics of the study population and sampling procedure. 12 Two reviewers independently rated every article with the COSMIN checklist and discussed whenever ratings differed. If no consensus could be reached, the opinion of an independent third reviewer was taken into account. An overall score per box was defined by taking the lowest rating of all items in that box.

One item in each box addresses sample size. Based on the COSMIN checklist, the sample size must be at least 30 to be rated as “fair.” Consequently, many studies would be rated as “poor” even though all other items would be scored at least as “fair.” Therefore, in line with previous studies,11,13,14 we did not rate this item at the level of individual studies but accounted for sample size at the level of the best evidence synthesis, where only studies of “fair,” “good,” or “excellent” methodological quality were included.
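The lowest-rating rule, with and without the sample size item, can be sketched as follows. This is a minimal illustration rather than the COSMIN database itself: the function name, the item labels, and the example ratings are hypothetical.

```python
# Ordered from worst to best, as in the 4-point COSMIN rating scale.
RATING_ORDER = ["poor", "fair", "good", "excellent"]


def box_score(item_ratings, skip_items=()):
    """Return the lowest rating among the items of one COSMIN box.

    item_ratings maps an item label to its rating; labels listed in
    skip_items (eg, the sample size item) are left out of the minimum.
    """
    kept = [rating for item, rating in item_ratings.items()
            if item not in skip_items]
    if not kept:
        raise ValueError("no items left to rate")
    return min(kept, key=RATING_ORDER.index)


# Hypothetical reliability box of a study with fewer than 30 participants.
example_box = {
    "sample_size": "poor",
    "independent_administrations": "good",
    "time_interval": "fair",
    "statistical_method": "excellent",
}

with_sample_size = box_score(example_box)
without_sample_size = box_score(example_box, skip_items={"sample_size"})
print(with_sample_size, without_sample_size)
```

In this made-up case the study drops to “poor” only because of its sample size. Without that item its score is “fair,” so it would remain eligible for the best evidence synthesis.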

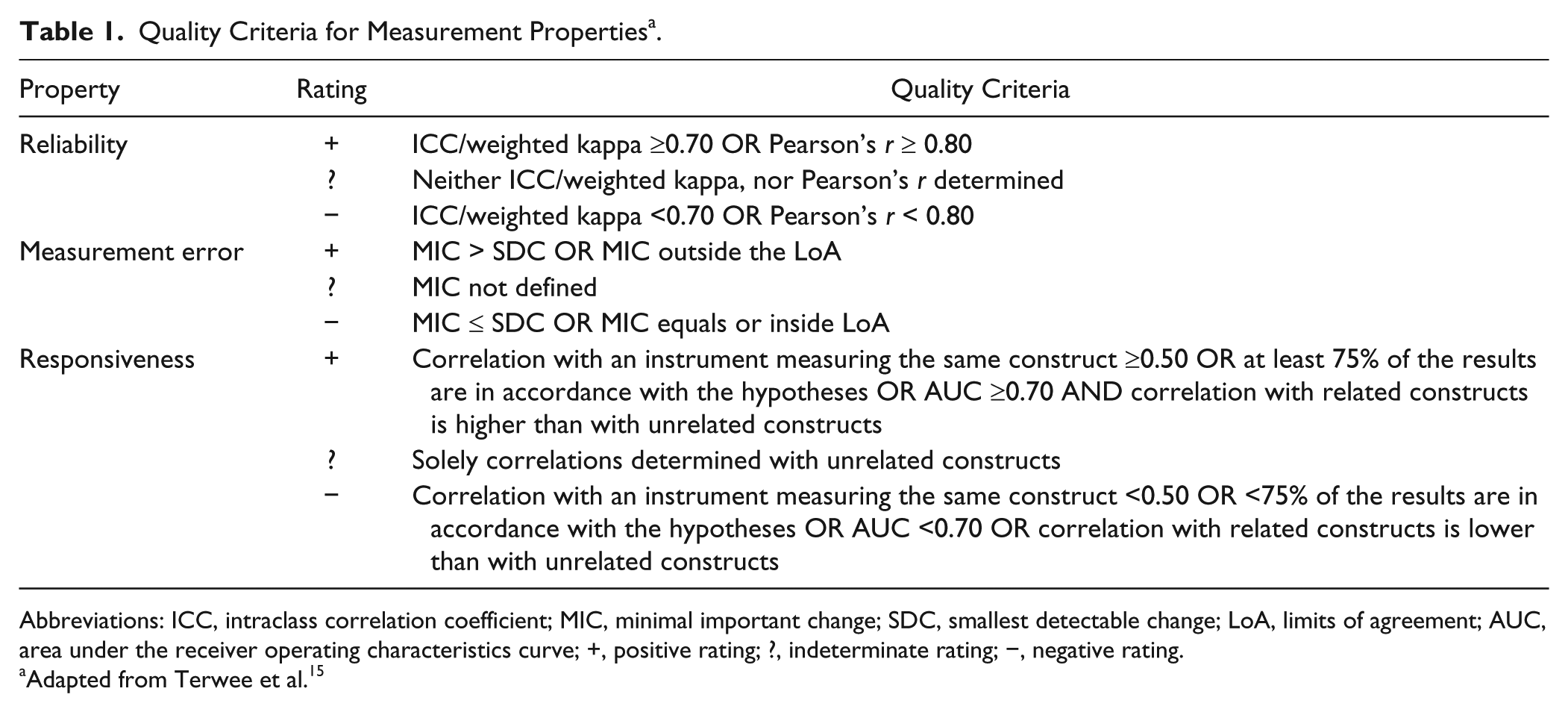

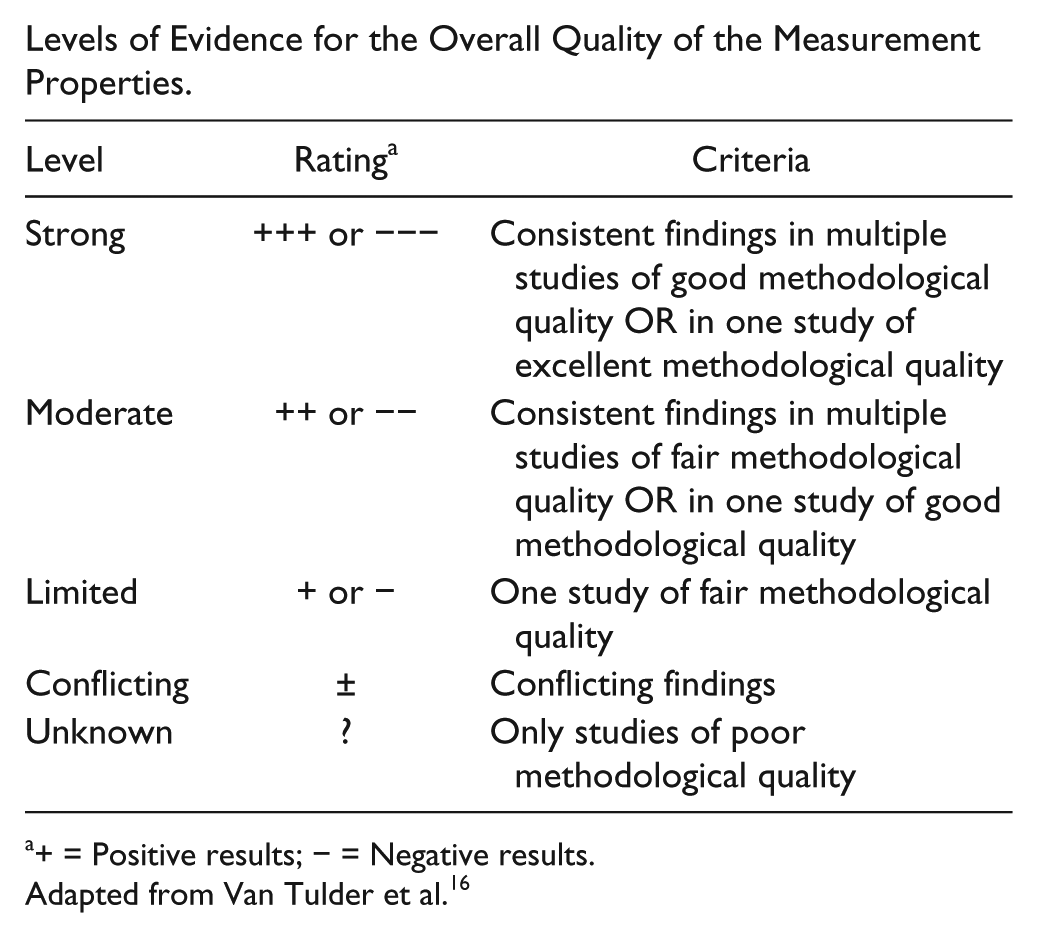

Level 2: Best Evidence Synthesis for Each Measurement Tool

Results of each study were rated as positive, indeterminate, or negative 15 (Table 1). If multiple studies on the same assessment were homogeneous enough, an overall rating was performed as proposed by van Tulder et al. 16 To facilitate the choice of an adequate assessment, a “best evidence synthesis” based on the strategy of the Cochrane Back Review Group was performed. 16 The level of overall evidence was rated as “strong” if findings were consistent in multiple studies of good OR in one study of excellent methodological quality; as “moderate” if there were consistent findings in multiple studies of fair OR in one study of good methodological quality; as “limited” if there was only one study of fair methodological quality; as “conflicting” whenever findings throughout studies were conflicting; or as “unknown” when only studies of poor methodological quality were available 16 (Appendix B). To account for sample size, the level of evidence was rated as “strong,” when total sample size of combined studies was ≥100, “moderate” for a total sample size between 50 and 99, “limited” for a total sample size between 25 and 49, and “unknown,” when sample size was less than 25.11,14
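The decision rules above can be sketched in code. This is an illustrative reading, not the procedure as implemented by the reviewers. In particular, two points are assumptions that the text does not spell out: indeterminate results are set aside, and the final level is the weaker of the quality-based and the sample-size-based level. All names are hypothetical.

```python
from dataclasses import dataclass

LEVELS = ["unknown", "limited", "moderate", "strong"]  # weakest to strongest


@dataclass
class Study:
    quality: str  # COSMIN rating without the sample size item
    result: str   # "+", "?" or "-"
    n: int        # sample size


def level_from_quality(studies):
    """Level of evidence implied by the number and quality of studies."""
    good_or_better = sum(s.quality in ("good", "excellent") for s in studies)
    excellent = sum(s.quality == "excellent" for s in studies)
    if good_or_better >= 2 or excellent >= 1:
        return "strong"
    if len(studies) >= 2 or good_or_better == 1:
        return "moderate"
    return "limited"


def level_from_sample_size(total_n):
    """Level of evidence allowed by the pooled sample size."""
    if total_n >= 100:
        return "strong"
    if total_n >= 50:
        return "moderate"
    if total_n >= 25:
        return "limited"
    return "unknown"


def best_evidence(studies):
    """Return (level, direction) for one tool and one measurement property."""
    # Assumption: poor-quality and indeterminate ("?") studies are set aside.
    usable = [s for s in studies
              if s.quality != "poor" and s.result in ("+", "-")]
    if not usable:
        return "unknown", "?"
    directions = {s.result for s in usable}
    if len(directions) > 1:
        return "conflicting", "±"
    # Assumption: the final level is the weaker of the two criteria.
    by_quality = level_from_quality(usable)
    by_size = level_from_sample_size(sum(s.n for s in usable))
    level = min(by_quality, by_size, key=LEVELS.index)
    if level == "unknown":
        return "unknown", "?"
    return level, directions.pop()


# Hypothetical inputs, not data from the included studies.
two_fair = best_evidence([Study("fair", "+", 30), Study("fair", "+", 35)])
one_good_small = best_evidence([Study("good", "+", 12)])
mixed = best_evidence([Study("fair", "+", 40), Study("fair", "-", 40)])
print(two_fair, one_good_small, mixed)
```

Under these assumptions, two consistent fair-quality studies with 65 participants in total give moderate positive evidence, whereas a single good-quality study with 12 participants stays at “unknown.”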

Table 1. Quality Criteria for Measurement Properties.

Abbreviations: ICC, intraclass correlation coefficient; MIC, minimal important change; SDC, smallest detectable change; LoA, limits of agreement; AUC, area under the receiver operating characteristics curve; +, positive rating; ?, indeterminate rating; −, negative rating.

Adapted from Terwee et al. 15

Results

Identification of Measurement Tools

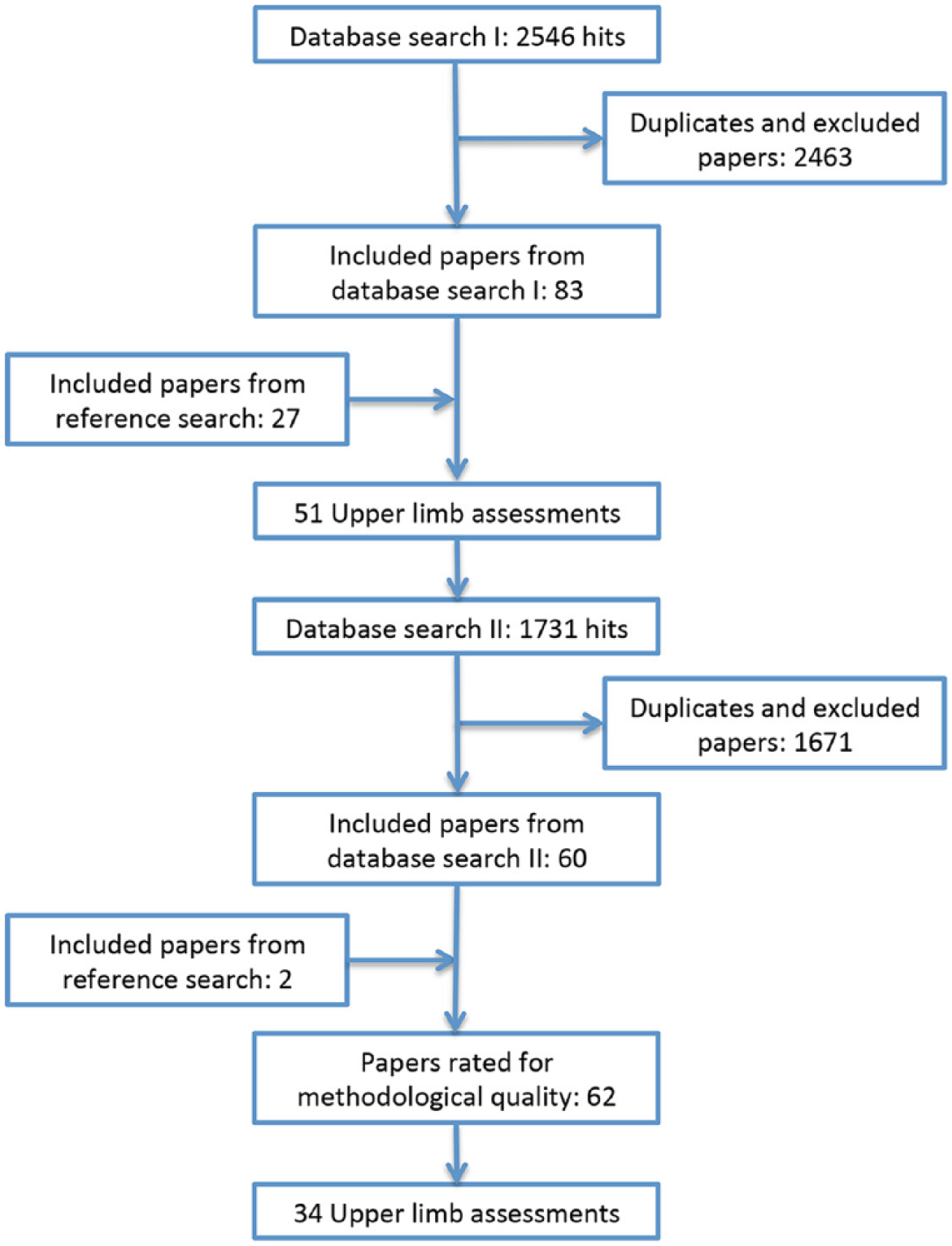

As illustrated in Figure 1, 83 articles met the inclusion criteria. After reference searching, an additional 27 articles were included. Screening of retrieved articles revealed 51 upper limb assessment tools.

Figure 1. Flowchart of the literature search and the selection of the studies.

Evidence for Reliability, Measurement Error, and Responsiveness

The search for psychometric properties of each tool resulted in 1731 articles. For the quality assessment, 62 articles covering 34 measurement tools met the inclusion criteria (see Figure 1).

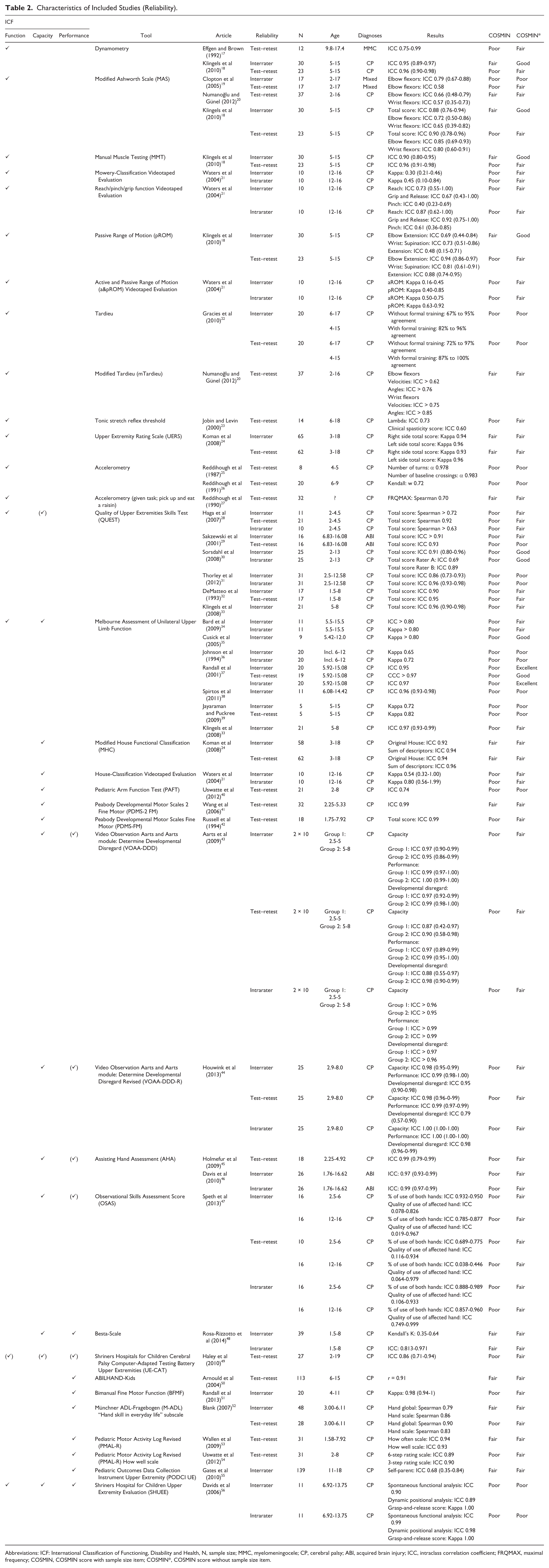

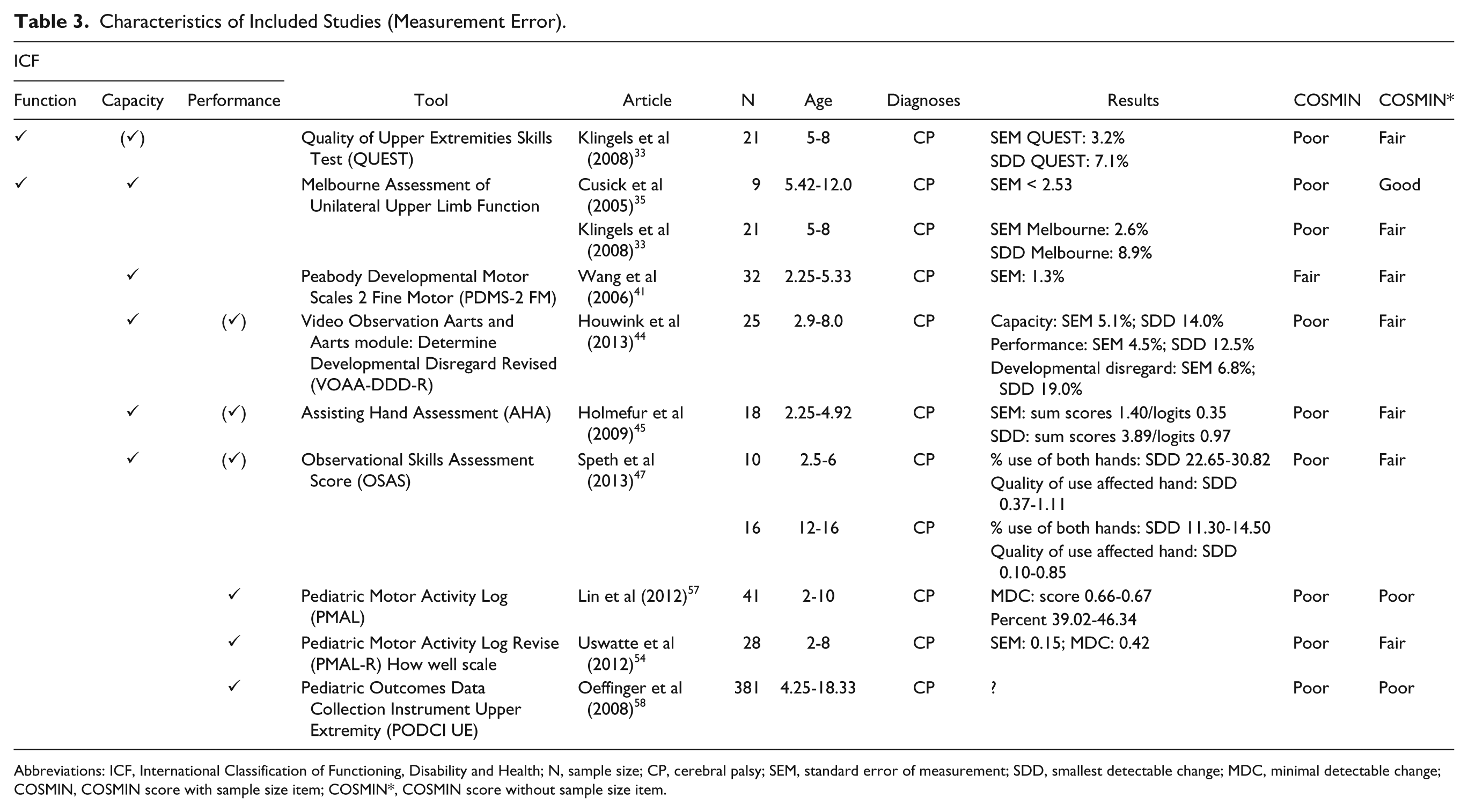

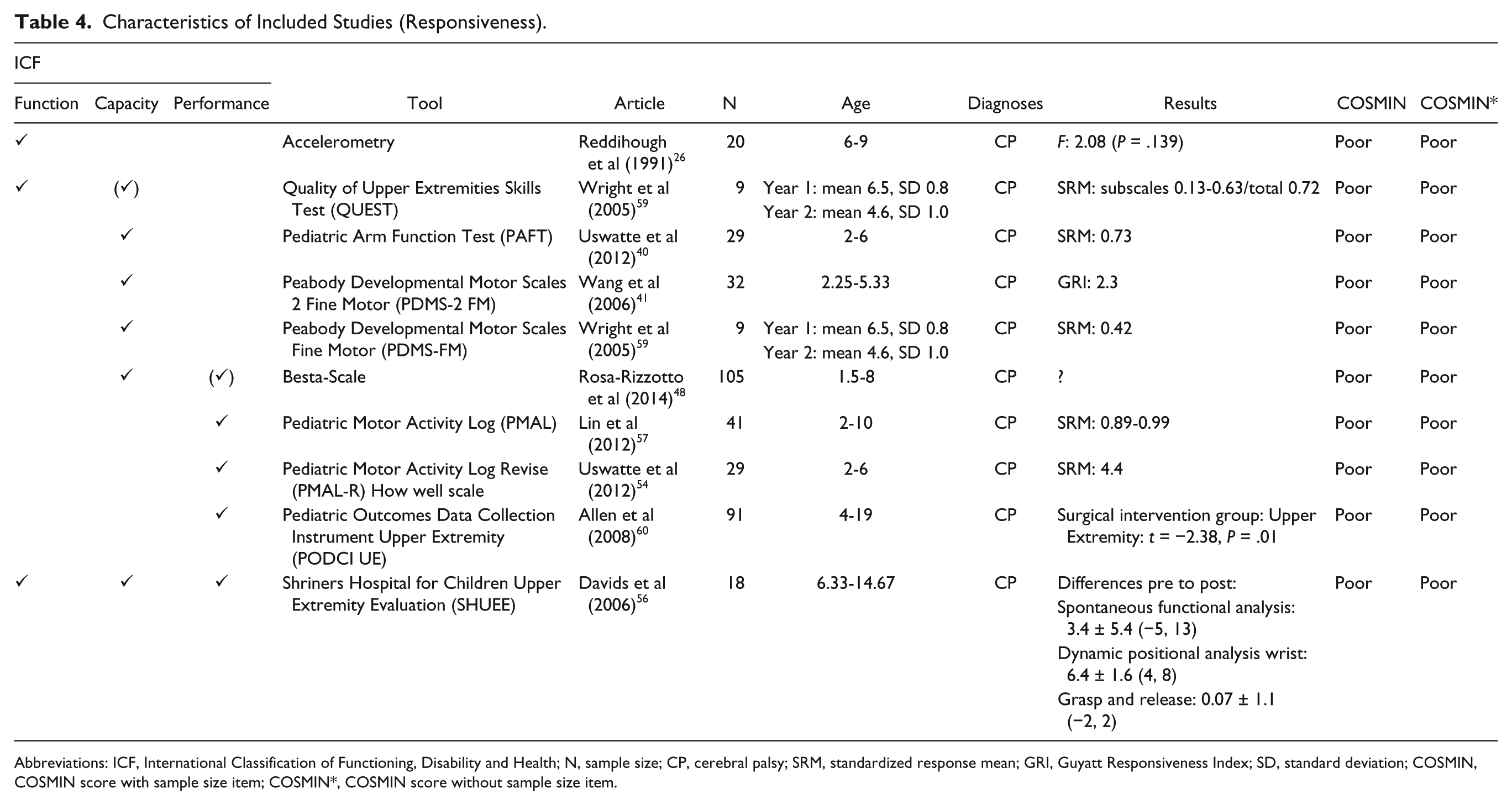

In 58 articles, data about reliability were reported (Table 2), measurement error was outlined in 11 studies (Table 3), whereas 10 studies provided information about responsiveness (Table 4). Please note that kinematic measures are not included in these tables.

Table 2. Characteristics of Included Studies (Reliability).

Abbreviations: ICF, International Classification of Functioning, Disability and Health; N, sample size; MMC, myelomeningocele; CP, cerebral palsy; ABI, acquired brain injury; ICC, intraclass correlation coefficient; FRQMAX, maximal frequency; COSMIN, COSMIN score with sample size item; COSMIN*, COSMIN score without sample size item.

Table 3. Characteristics of Included Studies (Measurement Error).

Abbreviations: ICF, International Classification of Functioning, Disability and Health; N, sample size; CP, cerebral palsy; SEM, standard error of measurement; SDD, smallest detectable difference; MDC, minimal detectable change; COSMIN, COSMIN score with sample size item; COSMIN*, COSMIN score without sample size item.

Table 4. Characteristics of Included Studies (Responsiveness).

Abbreviations: ICF, International Classification of Functioning, Disability and Health; N, sample size; CP, cerebral palsy; SRM, standardized response mean; GRI, Guyatt Responsiveness Index; SD, standard deviation; COSMIN, COSMIN score with sample size item; COSMIN*, COSMIN score without sample size item.

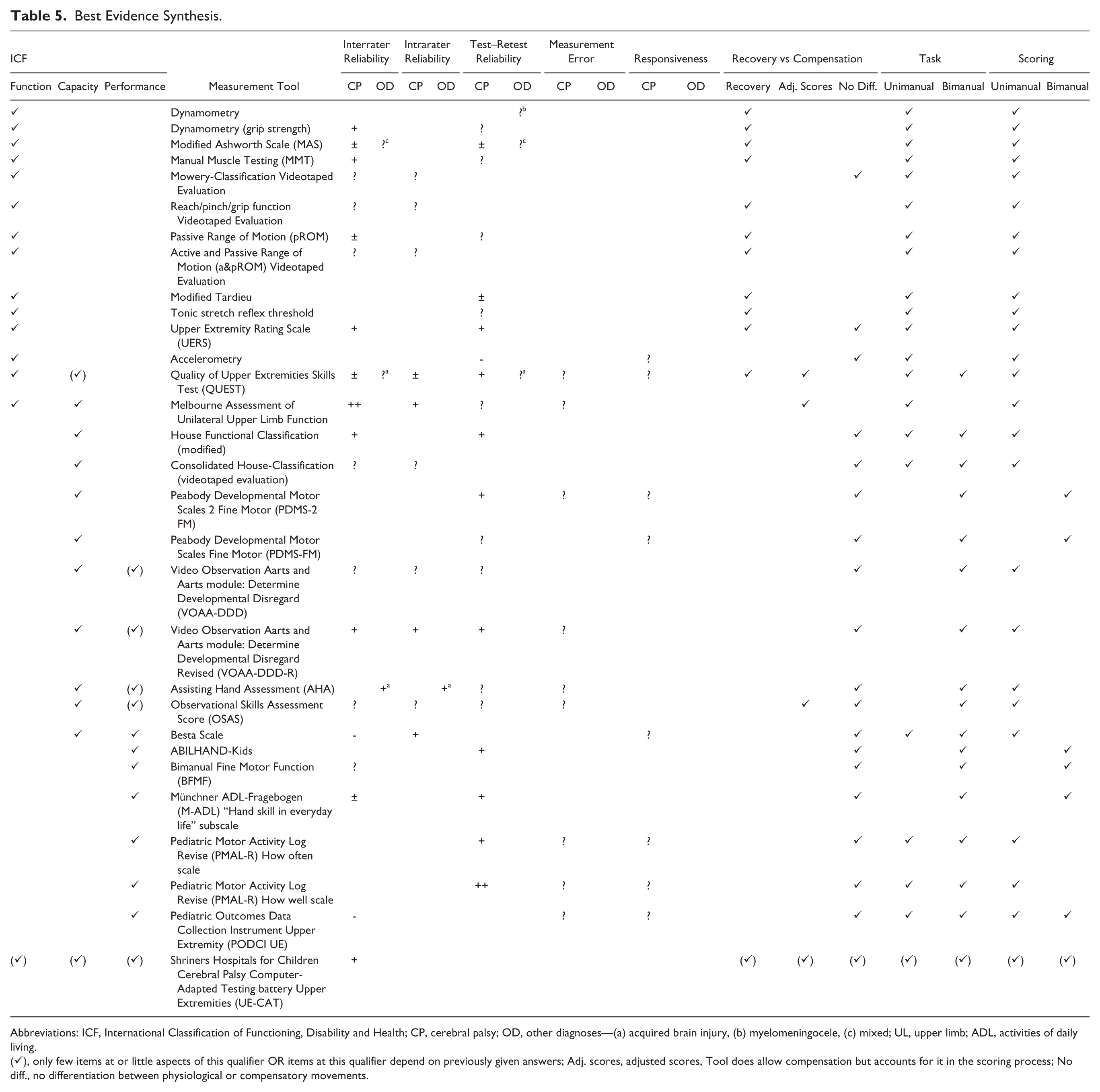

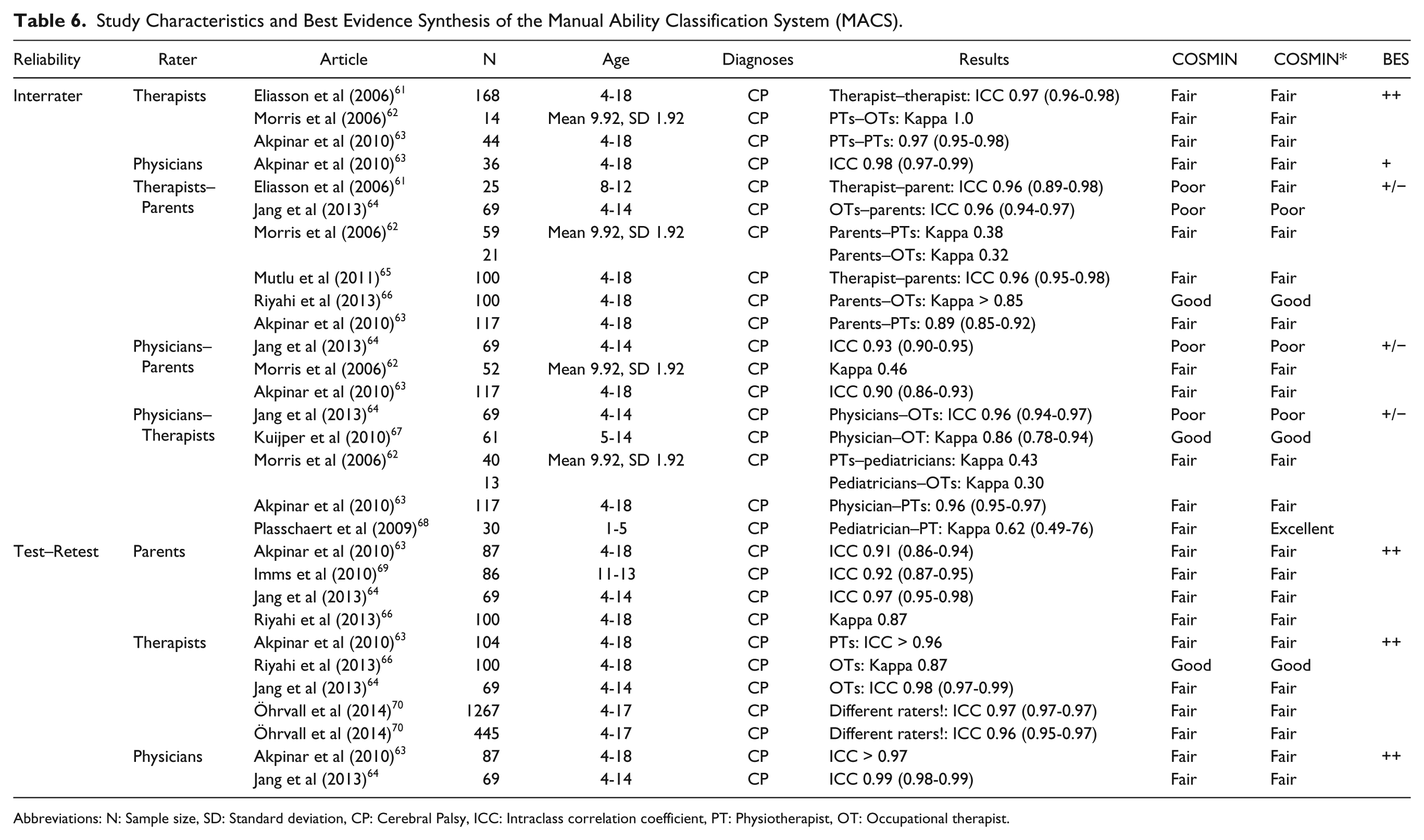

An overview of the levels of evidence for reliability, measurement error, and responsiveness of each measurement tool is shown in Table 5, where we also present (a) whether the measurement tool assesses recovery, allows compensatory strategies, or allows compensatory strategies but accounts for it in the scoring process; (b) whether task execution and scoring are performed unimanually or bimanually. Results for the Manual Ability Classification System (MACS) are tabulated separately (Table 6).

Table 5. Best Evidence Synthesis.

Abbreviations: ICF, International Classification of Functioning, Disability and Health; CP, cerebral palsy; OD, other diagnoses: (a) acquired brain injury, (b) myelomeningocele, (c) mixed; UL, upper limb; ADL, activities of daily living.

(✓), only a few items at, or few aspects of, this qualifier OR items at this qualifier depend on previously given answers; Adj. scores, adjusted scores (the tool allows compensation but accounts for it in the scoring process); No diff., no differentiation between physiological and compensatory movements.

Table 6. Study Characteristics and Best Evidence Synthesis of the Manual Ability Classification System (MACS).

Abbreviations: N, sample size; SD, standard deviation; CP, cerebral palsy; ICC, intraclass correlation coefficient; PT, physiotherapist; OT, occupational therapist.

Reliability

Of the 58 studies dealing with reliability, 32 were of fair, 3 of good, and 1 of excellent methodological quality (Table 2). Reasons for the rating of poor methodological quality in 13 articles were insufficient study description,25,26,38,56 inappropriate statistical methods,22,36,71 questionable independence of test administration,22,31,39,40,72-74 and unsuitable methodology. 26 In the remaining 9 studies, methodological quality varied between different kinds of reliability and therefore they could not be classified as a whole (Table 2). The best evidence synthesis did not reveal any tool with strong positive evidence for reliability (Table 5). For the Melbourne Assessment (Melbourne),33-39 there was moderate positive evidence for interrater reliability. The MACS61-70 as well as the Pediatric Motor Activity Log (PMAL) “how well” scale53,54 showed moderate positive evidence for test–retest reliability. Limited positive evidence for interrater reliability was found for dynamometry,17,18 Modified House Classification (MHC), 24 Manual Muscle Testing, 18 Upper Extremity Rating Scale (UERS), 24 and the Video Observation Aarts and Aarts module: Determine Developmental Disregard Revised (VOAA-DDD-R). 44 The Melbourne, the VOAA-DDD-R, the Assisting Hand Assessment (AHA),45,46 and the Besta Scale 48 showed limited positive evidence for intrarater reliability. For test–retest reliability, the MHC, Quality of Upper Extremities Skills Test (QUEST),28-33 UERS, Peabody Developmental Motor Scales-2–Fine Motor abilities (PDMS-2 FM), 41 VOAA-DDD-R, ABILHAND-Kids, 50 Münchner ADL-Fragebogen (M-ADL), 52 the PMAL “how often” scale, 53 and the Shriners Hospital for Children CP Computer-Adapted Testing battery–Upper Extremities 49 showed limited positive evidence. All other assessments (House-Classification, 21 Modified Ashworth Scale [MAS],18-20 Mowery Classification, 21 reach/pinch/grip function, 21 range of motion,18,21 [modified] Tardieu,20,22 tonic stretch reflex threshold, 23 Pediatric Arm Function Test, 40 PDMS-FM, 42 VOAA-DDD, 43 Bimanual Fine Motor Function [BFMF], 51 Pediatric Outcomes Data Collection Instrument Upper Extremity [PODCI UE], 55 Observational Skills Assessment Score [OSAS], 47 and Accelerometry25-27) were found to have unknown, conflicting, or negative levels of evidence for reliability (Table 2).

For the MACS, many different comparisons were drawn to analyze reliability (Table 6). Interrater reliability within a professional category (ie, therapists or physicians) showed a positive level of evidence, whereas analysis of interrater reliability between professional categories or with parents revealed conflicting results. For test–retest reliability, a positive level of evidence was found for parents, therapists, and physicians.

Reliability of kinematic measures was examined in 8 studies.71-78 However, very different tasks and measurement techniques were used. Therefore, a best evidence synthesis could not be performed.

Measurement Error

Six studies about measurement error were rated as being of fair and 2 of good methodological quality. Three studies were rated as being of poor methodological quality (Table 3) because independence of test administration was doubtful,58,73 the statistical analysis was inappropriate, 58 or the description of the study population was missing. 57 None of the studies dealing with measurement error defined a minimal important change. Consequently, no study about measurement error achieved positive or negative ratings as proposed by Terwee et al, 15 and the level of evidence remains unknown. Measurement error of kinematic measures was examined in 2 studies.73,75 The study of Jaspers et al 75 was of good methodological quality, but it included only 12 participants and thus the evidence for measurement error of this tool remains unknown.

Responsiveness

With one exception, the methodological quality of all articles about responsiveness was rated as poor. In 6 of 10 studies, the statistical methods used to evaluate responsiveness of the tool were not appropriate. In the remaining studies, essential methodological details were missing26,60 or no hypotheses about changes in scores were formulated a priori 59 (Table 4). Hence, no best evidence synthesis could be performed for responsiveness.

Mackey et al 79 conducted a study of fair methodological quality about the responsiveness of kinematics. However, as they only included 10 patients, the evidence for responsiveness of this measurement remains unclear.

Discussion

The objective of this systematic review was to screen peer-reviewed literature for measurement tools used to assess upper extremity motor function and activities in children with a wide range of central motor disorders. The aim was to offer clinicians as well as researchers an overview of the evidence concerning reliability, measurement error, and responsiveness of measurement tools.

In comparison with previous reviews,3-5,8,80,81 the current article covered a broader age range and several diagnoses. This ensured a comprehensive overview of the evidence for assessment tools used in everyday clinical practice. Furthermore, the COSMIN checklist 9 was applied to systematically rate the methodological quality of articles, and a best evidence synthesis made it possible to pool the information available for each measurement tool.15,16

In 62 eligible studies, reliability and/or measurement error and/or responsiveness was reported for 34 measurement tools. The methodological quality of most of these studies was rated as “fair” using the COSMIN checklist without rating sample size. All but 4 studies comprised exclusively children with CP. One study including 12 participants with MMC investigated the test–retest reliability of dynamometry. 17 Clopton et al 19 examined the interrater and test–retest reliability of the MAS in a mixed patient group. The QUEST was assessed for its interrater and test–retest reliability in a group of patients with ABI, 29 and in the same population, Davis et al 46 studied interrater and intrarater reliability for the AHA. Yet even for children with CP alone, no assessment showed at least moderate positive evidence for all psychometric properties examined in this review.

The search revealed tools that measure upper extremity Function and Activity but no tool was found at the ICF Participation level. Participation hardly ever depends solely on upper extremity motor ability but also on many other factors and therefore no Participation measure complied with our inclusion criteria.

Reliability

At the Body Function level, the Melbourne is the most comprehensively studied upper limb assessment for children. It showed moderate positive evidence for interrater and limited positive evidence for intrarater reliability.

As the Melbourne covers the component Body Function, and only partially Capacity, there is no comprehensive Capacity measurement tool with at least moderate positive evidence for reliability.

In line with a previous study of McConnell et al, 81 the MACS was found to be the tool with most published evidence. It shows moderate positive levels of evidence for interrater reliability (when only considering comparisons between therapists) and test–retest reliability. The PMAL “how well” scale likewise shows moderate positive evidence for test–retest reliability. Thus, the MACS and the PMAL seem to be the most promising tools to measure Performance with respect to the level of evidence for reliability. However, when measuring rehabilitation outcomes, it has to be considered that the MACS is a classification system and is not designed to measure changes over time. 82

Measurement Error

While reliability parameters are highly dependent on the variation in the population sample, agreement parameters, based on measurement error, are more a characteristic of the measurement instrument itself. 83 Thus, agreement parameters are more stable over different population samples. Furthermore, as measurement error is expressed on the actual scale of the measurement, clinical interpretation of a change score is straightforward. 83

Based on the quality criteria proposed by Terwee et al, 15 results for measurement error can only be rated as positive when a minimal important change (MIC) is defined and its magnitude exceeds the smallest detectable change. The MIC (also called minimally important clinical change) is defined as the minimal change that patients perceive as beneficial, 84 but there is currently no agreement on the definition of “minimal” and “important.” 85 Hence, its scientific acceptance is controversial. This might be one of the reasons why none of the included studies determined an MIC.
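The comparison between MIC and smallest detectable change (SDC) can be sketched as follows. The sketch assumes the commonly used formula SDC = 1.96 × √2 × SEM, which is not stated in this article, and it assumes that a negative rating applies whenever a defined MIC does not exceed the SDC. Function names and numbers are hypothetical.

```python
import math


def smallest_detectable_change(sem, z=1.96):
    """SDC for an individual patient: z * sqrt(2) * SEM (95% level by default)."""
    return z * math.sqrt(2) * sem


def rate_measurement_error(sem, mic=None):
    """Return "+", "-" or "?" for a measurement error result."""
    if mic is None:
        return "?"  # no MIC defined, so the result stays indeterminate
    return "+" if mic > smallest_detectable_change(sem) else "-"


# Hypothetical values on an arbitrary 0-100 scale.
sdc = smallest_detectable_change(sem=2.0)
rating_without_mic = rate_measurement_error(sem=2.0)
rating_with_mic = rate_measurement_error(sem=2.0, mic=7.0)
print(round(sdc, 2), rating_without_mic, rating_with_mic)
```

Because no included study defined an MIC, every measurement error result would follow the first branch and remain indeterminate, regardless of how small the SEM is.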

Responsiveness

According to COSMIN guidelines, the appropriate way to quantify responsiveness is to correlate changes in scores of the assessment with changes in scores of a “gold standard” or “external criterion.” As there is a lack of “gold standard” assessments in rehabilitation, an alternative would be to formulate hypotheses about the direction and magnitude of the correlation a priori. Reports about responsiveness were mostly rated as “poor” because neither a “gold standard” was available nor were hypotheses formulated. Recently, the approach to responsiveness proposed in the COSMIN guidelines has been called into question because it conflicts with traditional methods.11,86

Recovery Versus Compensation

Depending on the severity of impairment, patients might improve their independence in daily life by either using compensatory movement patterns or via the reduction in impairment through (re-)appearance of motor patterns present prior to the injury of the central nervous system (ie, recovery). 87 To avoid confusion in the interpretation of the efficacy of different treatment interventions, Levin et al 87 proposed a distinction of recovery/compensation at the Body Function/Structure and Activity levels. Consequently, motor measures should be selected in order to differentiate between desirable physiological motor patterns and compensatory strategies.

At the ICF Body Function level, the focus lies more on quality of movement than on movement outcome or task performance. Accordingly, several tools at this level measure physiologically desirable movements and thus recovery. The Mowery Classification, Accelerometry, and one UERS item do not exclude compensatory movements. Neither does the reach/pinch/grip function Videotaped Evaluation but its scoring system accounts for compensation.

As at the ICF Activity level the emphasis lies more on task accomplishment, most of the evaluations at this level do not determine how the task is completed. Accordingly, all included assessments at the Activity level permit patients to use compensatory strategies. Only the OSAS accounts for compensation.

The Melbourne and the QUEST cannot be exclusively classified as either a Body Function or Activity measure. Scoring systems of both assessments account for compensatory movements and the QUEST additionally comprises several items measuring recovery.

Compensatory strategies help patients in their activities of daily life but may also be associated with long-term problems and potentially lead to learned nonuse. 88 Therefore, therapeutic interventions should exploit the full potential of recovery, and efforts need to be made to provide the appropriate assessments. To date, there is no upper extremity tool with at least moderate positive evidence for reliability, measurement error, or responsiveness that measures recovery.

Unilateral Versus Bimanual Motor Measures

Interestingly, all measures at the Body Function level are administered and scored unilaterally, whereas all measures at the Activity level include bimanual items. This can be explained by the fact that strength, range of motion, spasticity, and so on, are Body Functions that are often measured unilaterally. In contrast, the focus of the Activity level lies on task execution, and therefore assessments at this ICF component usually comprise bimanual elements.

The original and second edition of the PDMS-FM, the ABILHAND-Kids, BFMF, M-ADL, and PODCI UE focus on general age-appropriate activities of daily living and do not differentiate between the left arm and the right arm. The 2 House Classifications, the original and revised VOAA-DDD, the AHA, OSAS, Besta Scale, and PMAL-R “how often” and “how well” scales are bimanual tests, but as they were developed for hemiplegic patients, only the affected upper extremity is scored. Even though most of these assessments have been developed for a specific patient group (eg, unilateral CP), we believe that after validation, they could also be used for other patients with similar functional deficits (eg, unilateral stroke). For instance, the AHA was originally developed for children with hemiplegic CP but recently has been used and psychometrically analyzed in children with acquired brain injuries. 46

The Melbourne and the QUEST are tested unilaterally (the latter also includes a few bimanual items), and the scoring is performed for each side separately.

Activity measures should be chosen depending on whether the focus lies on general changes of task execution or on performance alteration of the assisting hand. However, so far only the PMAL-R “how well” scale, which focuses on the weaker arm/hand, shows moderate positive evidence for one of the psychometric properties (ie, test–retest reliability) examined in this study.

Methodological Considerations

The COSMIN checklist was developed for the quality scoring of self-reported questionnaires. However, it is also suitable for other assessments. Recently, several reviews about assessments in the pediatric field used the COSMIN checklist.11,89-91 Nevertheless, despite the extensive guidelines, there were some items where subjective interpretation was inevitable. Consider the following example about the MACS, which is a 5-level classification system: When scored twice by the same therapist at an interval of approximately 2 weeks, the ratings probably are independent and the time interval can be rated as appropriate, because therapists see several children per day, which impedes recall bias. In contrast, if parents score their own child twice, 2 weeks apart, they will most likely remember their rating of time point one because the MACS is a classification system with only one single score. In this case, the time interval would have to be rated “not appropriate.”

Furthermore, studies investigated the long-term stability of the MACS69,70 and of dynamometry. 17 As long-term stability of an assessment tool is not acknowledged as a psychometric property, neither the reliability box (the intermediate time between ratings would be scored as poor) nor the box for responsiveness (most of the patients should not show improvements) can satisfactorily cope with such studies.

Another difficulty of the COSMIN scoring is the concept of responsiveness that differs from traditional methods. 86 Consequently, statistical methods are rated inappropriate in some included studies as they used the standardized response mean without formulating hypotheses about its expected size to measure responsiveness. The advised alternative—comparing improvements with improvements in other non–gold standard assessments—might also not be appropriate, as the alternative outcomes could even be less responsive.
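To make the point about the standardized response mean (SRM) concrete, the sketch below computes it as the mean change score divided by the standard deviation of the change scores and then checks it against an expected size that has to be fixed before data collection. The threshold and the scores are hypothetical, and the sketch is not a procedure prescribed by the COSMIN guidelines.

```python
from statistics import mean, stdev


def standardized_response_mean(baseline, follow_up):
    """Mean change score divided by the standard deviation of the change scores."""
    changes = [after - before for before, after in zip(baseline, follow_up)]
    return mean(changes) / stdev(changes)


def hypothesis_confirmed(srm, expected_minimum):
    """Check the SRM against an expected size that was stated a priori."""
    return srm >= expected_minimum


# Hypothetical scores of 3 children before and after an intervention.
baseline_scores = [10, 10, 10]
follow_up_scores = [12, 14, 16]

srm = standardized_response_mean(baseline_scores, follow_up_scores)
confirmed = hypothesis_confirmed(srm, expected_minimum=0.8)
print(srm, confirmed)
```

Without the a priori threshold, the SRM only describes the size of the observed change and cannot show whether the tool detected the change it was expected to detect.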

As our first aim was to obtain an overview of available assessment tools, we chose a 2-tiered search 8 rather than a validated search filter (eg, as proposed by Terwee et al 92 ). To evaluate the influence of the search strategies, we compared their results for one database (ie, Medline) of a randomly chosen year (ie, 2008). No substantial differences were found comparing the 2 strategies, and we therefore conclude that our comprehensive 2-tiered search did not miss any relevant measurement tools.

In contrast to the COSMIN guidelines, we did not rate the sample size item in the quality assessment but accounted for it in the best evidence synthesis. As sample sizes in neuropediatric studies are usually rather small, this approach allowed us to augment the available evidence by more than 60%.

For some measurement tools, the psychometric properties of different versions or subscales have been investigated separately. In these cases, a best evidence synthesis was not applicable.

Conclusion

On the one hand, due to the lack of articles about psychometric properties in the population of interest, poor-quality studies, and small sample sizes, we cannot give substantial recommendations about which upper limb measurement tools should be used. On the other hand, no tool was found to have distinct negative evidence for reliability, measurement error, or responsiveness. Thus, we cannot conclude that the existing measurement tools are unsound. Furthermore, we only evaluated reliability, measurement error, and responsiveness, whereas information about validity, clinical utility, practicability, and costs is needed as well for a thorough evaluation. The most promising assessments with respect to reliability are the Melbourne at the ICF Body Function level (with items at Capacity level) and the PMAL “how well” scale at the Performance level. The MACS appears to be the most reliable upper limb classification system (ICF Performance level).

Summing up, before investing in randomized controlled trials, researchers in the pediatric field dealing with upper extremities should work on high-quality psychometric studies. To date, trials on upper limb rehabilitation in children and adolescents risk being biased by insensitive measurement tools lacking reliability.

Footnotes

Appendix A

Appendix B

Levels of Evidence for the Overall Quality of the Measurement Properties.

| Level | Rating a | Criteria |
|---|---|---|
| Strong | +++ or −−− | Consistent findings in multiple studies of good methodological quality OR in one study of excellent methodological quality |
| Moderate | ++ or −− | Consistent findings in multiple studies of fair methodological quality OR in one study of good methodological quality |
| Limited | + or − | One study of fair methodological quality |
| Conflicting | ± | Conflicting findings |
| Unknown | ? | Only studies of poor methodological quality |

+ = Positive results; − = Negative results.

Adapted from Van Tulder et al. 16

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Clinical Research Priority Program (CRPP) Neuro-Rehabilitation of the University of Zurich (Switzerland); the Fondation Gaydoul (Zurich, Switzerland); the Swiss National Science Foundation (Grant 32003B_156646); and the Mäxi-Foundation (Zurich, Switzerland).