Abstract

Epistemological beliefs are subjective views about the nature of knowledge and knowing. A large number of research approaches are dedicated to this field. Yet, there is no research investigating the beliefs that pre-service teachers hold about educational psychology, a highly relevant domain for their prospective profession. Against this background, two studies were conducted. In the first study, epistemological beliefs about contemporary educational psychology research and their change during a research-based lecture on educational psychology were assessed (N = 82). The second study examined these epistemological beliefs during different phases of a pre-service teacher programme (N = 252). Findings indicate that students entering a programme are already partly aware of the nature of educational psychology. Results also reveal that students’ knowledge and beliefs develop during the programme, although the relevance of educational psychology as a central part of future professional practice is not recognized. Findings also imply that trust in scientific quality, in particular, and awareness of the importance of this field for teacher training and practice could be enhanced. Possible solutions include more research-oriented courses and a more deliberate integration of educational psychology within the curriculum.

Introduction

Epistemological beliefs address subjective ideas that individuals have about the nature of knowledge and knowing, concerning either general or domain-specific knowledge. Such epistemological beliefs are related to learning in schools (Hofer & Pintrich, 1997; Urhahne & Hopf, 2004) and to learning in institutions of higher education, such as universities (Hofer, 2004; King & Kitchener, 1994; Perry, 1970).

One central mission of universities can be seen in the establishment of an appropriate understanding of the nature of science. Scientifically adequate epistemological beliefs are a constituent part of scientific literacy (Urhahne & Hopf, 2004) and are basic for acquiring competences in a specific domain (Haider et al., 2009). Misconceptions may lead, for example, to an oversimplification of complex information and result in worse learning outcomes (Duell & Schommer-Aikins, 2001; Schommer, 1990). Thus, students should learn which methods are used to gain knowledge and insight in their domains, how this knowledge is developed and changes, and which limitations of science exist (Urhahne & Hopf, 2004). Nevertheless, conditions for acquiring this specific type of knowledge and skills are not always beneficial at colleges and universities. In this manuscript, we specifically address pre-service teacher students. This target group is often faced with a mixture of different disciplines, requirements, and skills. Teacher education varies strongly between different countries, even sometimes within one country among different states, different schools, and school levels. We address here the specific situation of university teacher training for pre-service secondary school teachers in Austria. Pre-service teacher studies in Austria include bachelor- and master’s-level programmes. The master’s level is required for an indefinite teacher position at school. Compared to the bachelor-level programme (covering basic knowledge and skills), the master’s programme fosters specialised problem-solving skills and critical thinking skills regarding teachers’ work and related research. The university education in Austria requires the study of two different school subjects (e.g., mathematics, German language, biology, English language, history, Latin, etc.). 
Furthermore, every pre-service teacher has to acquire competences and skills in educational sciences and has to go through a practical phase in order to gain insight into his or her profession and to develop professional skills.

Accordingly, the curriculum in teacher education includes at least four different domains with different research approaches and traditions:

(1) domain knowledge in each subject discipline for which teaching permission will be acquired; (2) methodology in each subject; (3) domain knowledge in educational sciences; and (4) practical educational and instructional skills in educational science and didactical content knowledge.

Hence, it is rather unlikely that students will thoroughly understand the epistemology of any one of these fields without additional support. Notably, the combination of two different disciplines might add to the difficulty, as approaches to research methodology and, thus, epistemology might differ considerably (e.g., between natural sciences and linguistics). Programmes developed to enhance pre-service teachers’ critical thinking and reflective judgment (e.g., Brownlee et al., 2001; Lunn Brownlee et al., 2017; Hill, 2000) have been shown to be supportive, but this remains an emerging field of research.

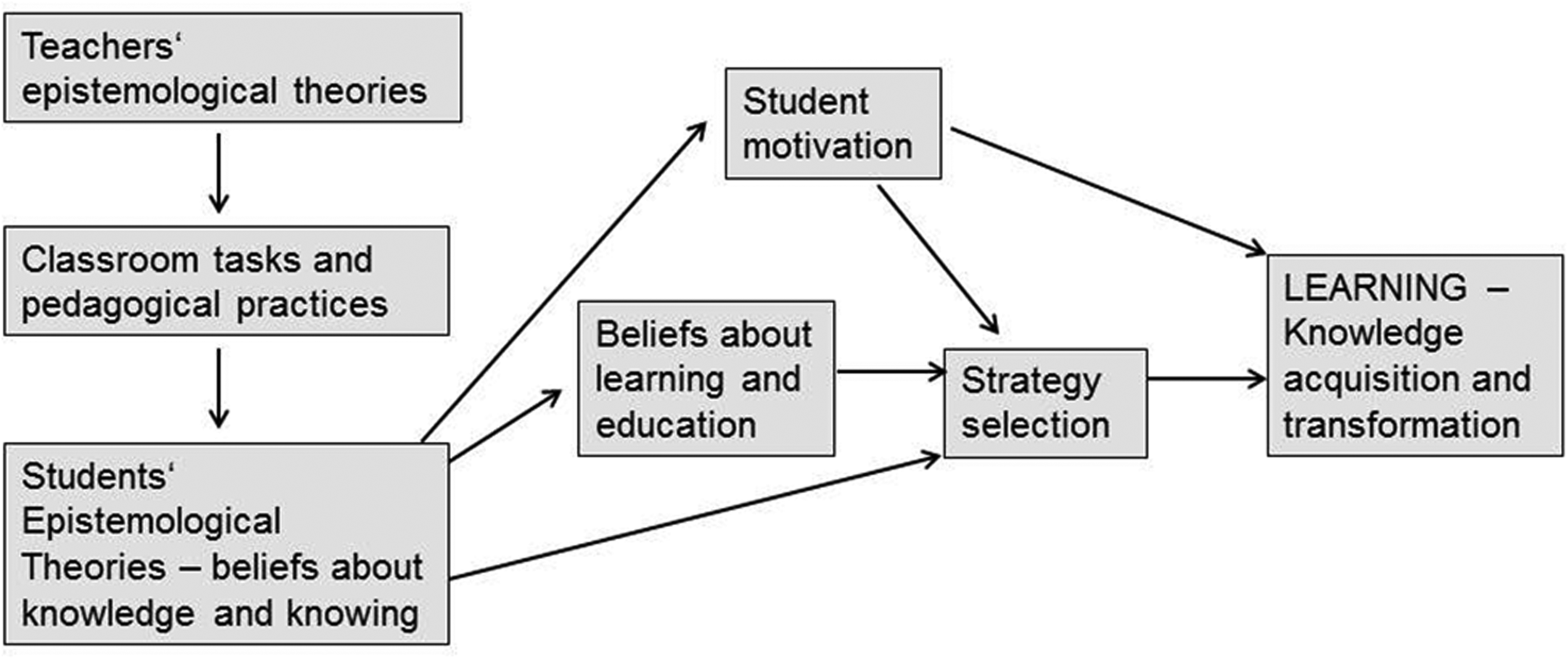

However, it is known that subjective theories of teachers have an impact on their behaviour in their work at school. Epistemological beliefs of teachers play an important role regarding teachers’ conception of teaching and learning and their perception of education-related theory and research, and consequently they have an impact on the design of learning environments (Fiechter et al., 2009; Guilfoyle et al., 2020; Tezci et al., 2016). Hofer (2001) also shows how teachers’ epistemological theories influence their pedagogical practices and the design of classroom tasks (see Figure 1). These choices again influence their students’ epistemological beliefs and, subsequently, affect their students’ knowledge acquisition and transformation. Correspondingly, Müller et al. (2008) assume a connection between teachers’ epistemological beliefs and the choice of teaching methods as, for example, ‘… an understanding of knowledge as ‘separate building blocks’ would be more likely to be expressed in the transmission of purely factual knowledge rather than in a presentation of complex, interlinked content’ (p. 1).

Figure 1. Working Model of How Epistemological Theories Influence Classroom Learning (see Hofer, 2001, p. 372).

One major task for teachers in practice is to plan and evaluate their lessons. One objective of teacher education is to base this process on theories and research contributed by educational psychology and educational sciences. The basic knowledge and competences in these domains are usually communicated, among other means, via university lectures on educational psychology and educational sciences. Although these courses represent only a minor part of the total pre-service teachers’ curriculum, they aim at fostering a deeper understanding of the culture of educational psychology and its underlying theories and research outcomes. This should help pre-service teachers derive adequate strategies and actions for their in-service practice (e.g., Haider et al., 2009).

Thus, it is critically important that students gain scientifically appropriate epistemological beliefs not only in the school subjects they intend to teach, but also in educational psychology. Although educational psychology plays a central role in knowledge transfer from university into schools, its culture is only rudimentarily communicated during teacher education (Haider et al., 2009). This might present a problem as, for example, students might challenge and reflect upon scholarly results only marginally or not at all (Wong, 1987; as cited in Mietzel, 2007). In addition, although there is a large amount of research concerning epistemological theories of teachers and some research with pre-service teachers (e.g., Brownlee et al., 2001; Chan, 2011; Fiechter et al., 2009; Müller et al., 2008; Schraw & Olafson, 2008), there is as yet little research concerning pre-service teachers’ epistemological beliefs towards educational psychology.

The first research question in this study addresses the assessment of epistemological beliefs of pre-service teachers towards educational psychology and how these change during their first semester of learning in a research-oriented educational psychology course. The second purpose of the first study is the development and validation of a scale for assessing student teachers’ beliefs about the nature of educational psychology. Here we primarily address the discriminant validity of the measures applied to educational psychology in particular and the scales for assessing epistemological beliefs in general.

Further, the second research question addresses the assessment of epistemological beliefs of pre-service teachers in different stages of their programme.

Dimensions of Epistemological Beliefs

The ideas that individuals hold about the origin and acquisition of knowledge have various denominations, such as personal epistemologies, ways of knowing, reflective judgment or intellectual development (for an overview see Hofer, 2001), but are frequently subsumed under the term epistemological beliefs (Schommer, 1990). Epistemological beliefs address theories and beliefs that individuals hold about knowledge and knowing, either concerning general or domain-specific knowledge. Epistemological beliefs can be seen as individual views and theories about the genesis, ontology, significance, justification and validity of sciences (Priemer, 2006). They typically include beliefs about the definition of knowledge, how knowledge is constructed, how knowledge is evaluated, where knowledge resides, and how knowing occurs (Hofer, 2001).

Epistemological beliefs develop during life and are fundamental for understanding scientific research and results (Bromme & Kienhues, 2007). Ways of knowing can change within a relatively short period. Conley et al. (2004) showed that students became more sophisticated in beliefs about source of knowing and certainty of knowledge during the course of a 9-week science unit. Further, Trautwein and Lüdtke (2007) as well as Päuler-Kuppinger and Jucks (2017) showed that university students gain more sophisticated epistemological beliefs during their studies, regardless of their academic field. However, there is evidence that the development of epistemological beliefs differs with regard to the different disciplines (Rosman et al., 2017).

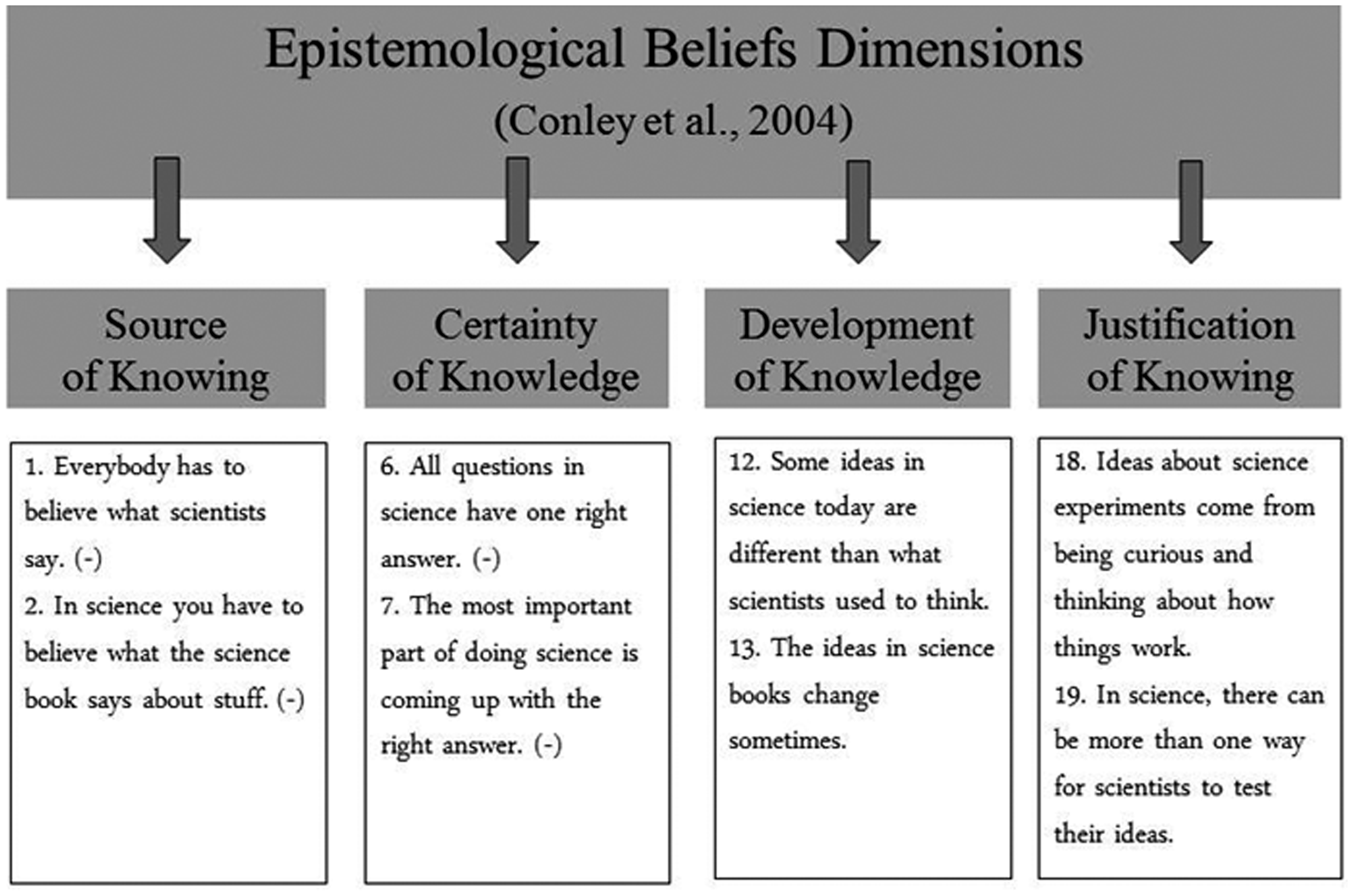

First approaches to assessing epistemological beliefs were reported by Perry (1970), who investigated the intellectual development of young adults. Initially, he found a rather dualistic comprehension of knowledge among college students. At the beginning of their studies they believed, for instance, that there is only one truth about knowledge and that experts know what is true in their domain. With growing experience, those conceptions changed towards a more relativistic view, as the students now also recognized various and sometimes contradictory alternatives. Since then, many approaches examining epistemological beliefs have been developed (for an overview see Hofer & Pintrich, 1997). Essentially, most of them share the assumption that epistemological beliefs develop during life from rather naïve views of knowledge to more sophisticated beliefs (Müller et al., 2008). While earlier theories see epistemological beliefs as one consistent, one-dimensional construct (King & Kitchener, 1994; Perry, 1970), more recent approaches regard them as a more complex and multidimensional system (Schommer, 1990; Urhahne & Hopf, 2004). Schommer (1990), for instance, identified five dimensions of beliefs: (1) source of knowledge (authority or observation/reason); (2) certainty of knowledge (unchanging or tentative); (3) organization of knowledge (isolated or integrated); (4) control of learning (fixed or improvement); and (5) speed of learning (quick or gradual). According to Schommer (1990), individuals may be more sophisticated in some beliefs but not necessarily sophisticated in other dimensions. She states ‘(…) epistemological beliefs may or may not develop in synchrony’ (Schommer-Aikins, 2004, p. 20). Nevertheless, the five-factorial structure of Schommer’s model could not be replicated in subsequent studies (Conley et al., 2004). 
Furthermore, Hofer and Pintrich (1997) argued that the dimensions control of learning and speed of learning do not really measure the nature of knowing but rather the nature of learning. Consequently, they suggested a model that comprises four slightly different dimensions: certainty of knowledge, simplicity of knowledge, source of knowing, and justification for knowing. In line with that research, Conley et al. (2004) found evidence for a four-dimensional model of epistemological beliefs. They identified source of knowing, certainty of knowledge, development of knowledge, and justification of knowing. While the source and justification dimensions reflect beliefs about the nature of knowing, the certainty and development dimensions reflect beliefs about the nature of knowledge. Beyond concerns about the nature of epistemological beliefs, there is also the question of how to assess them.

Assessment of Epistemological Beliefs

Instruments that measure epistemological beliefs are heterogeneous. Frequently, epistemological beliefs are measured by means of qualitative interviews (King & Kitchener, 1994; Perry, 1970), open-ended questionnaires (Baxter Magolda, 1992), or self-report questionnaires (Conley et al., 2004; Krettenauer, 2005; Schommer, 1990). According to Urhahne and Hopf (2004), there is a tendency that theories with a one-dimensional approach mostly use interviews while theories with multi-dimensional models mostly refer to questionnaires (see also Duell & Schommer-Aikins, 2001).

Schommer’s (1990) Epistemological Belief Questionnaire (EBQ) is one of the most commonly used instruments. It measures five proposed dimensions of epistemological beliefs by means of a questionnaire that consists of 63 items (answered via a 5-point rating scale from ‘does not apply’ to ‘does apply’). The dimensions given in the questionnaire are Omniscient Authority (i.e., beliefs about the validity of the source of knowledge), Certain Knowledge (i.e., beliefs about the reliability of knowledge), Simple Knowledge (i.e., beliefs about the structure of knowledge), Quick Learning (i.e., beliefs about the speed of learning) and Innate Ability (i.e., beliefs about capacity for learning; Brownlee, 2003). The fourth and fifth dimensions, however, address the nature of learning within a domain and do not refer to how the generation of new knowledge is characterized within a discipline. Conley and colleagues (2004; see Figure 2) focused instead on how new insights are characterized. They developed a four-dimensional model by means of a self-report questionnaire with 26 items. Items were rated on a 5-point Likert scale (from ‘strongly disagree’ to ‘strongly agree’).

Figure 2. Epistemological Beliefs Dimensions and Example Items (see Conley et al., 2004).

Application of this instrument revealed that during a 9-week course the students became more sophisticated in their beliefs about source of knowing and certainty of knowledge, but not in development of knowledge and justification of knowing (Conley et al., 2004). Furthermore, the authors found evidence that children with a low socioeconomic status and low-achieving children had less sophisticated beliefs than children with an average socioeconomic status and high-achieving children.

Using a translated version of this questionnaire, Urhahne and Hopf (2004) replicated the four-dimensional structure of epistemological beliefs for German students. Results also revealed that more sophisticated epistemological beliefs correlate positively with motivation and domain-specific self-concept in the subjects of biology and physics. Their study also showed a connection between epistemological beliefs and learning strategies: for example, children who had a stronger belief in certainty of knowledge more often used simple rote learning strategies. Regarding educational psychology, Zeuch and Souvignier (2014) used a questionnaire with 16 items concerning different attitudes towards knowledge and science, together with vignettes to assess transfer. They found, for example, that psychology students hold more sophisticated epistemological beliefs than pre-service teachers. While their questionnaire is already focused on one discipline (educational psychology), it still assesses the content of the domain rather than its epistemology. Thus, there is still no instrument that directly assesses epistemological beliefs about educational psychology regarding the domain of classroom-based learning and instruction and accompanying factors (e.g., motivation, classroom management). Taken together, results reveal that epistemological beliefs affect many important domains related to teaching and learning. Thus, this subject needs further investigation with regard to pre-service teachers’ epistemological beliefs on educational psychology.

Open Research Questions and Hypotheses

The major aims of this research are twofold. First, we wanted to adapt the rather generic questionnaires designed to assess epistemological beliefs to the specific methodology of contemporary educational psychology research. This is a conceptual top-down approach driven by the multiple facets that distinguish this methodology. Second, we wanted to apply this newly designed instrument in pre-service teacher training. In the first study, we followed a longitudinal approach and investigated students’ change in epistemological beliefs within an introductory course in educational psychology. The research question (RQ1) in this respect reads as follows: To what extent do students’ epistemological beliefs towards educational psychology change from before to after attending an introductory course in educational psychology? Based on recent findings about the nature and amount of misconceptions among psychology students (Lyddy & Hughes, 2011), we assume that pre-service teachers have little or no prior knowledge in psychology. We also assume that they have inappropriate mental models of what (educational) psychology is about and how research is conducted within this discipline. Consequently, we assume that an intervention such as a state-of-the-art introductory course might contribute to (1) a change in insights into how reliable and valid research is in this field (Hypothesis 1) and (2) the development of an appropriate representation of what educational psychology is about (Hypothesis 2).

The second study is a cross-sectional comparison of the epistemological beliefs of bachelor-level students at the beginning of their studies, before they are trained in their vocational field, and of master’s-level students, after they have gained a high amount of professional knowledge. The research question in this respect (RQ2) reads as follows: To what extent do students at bachelor level differ from students at master’s level with regard to their epistemological beliefs about the nature of educational psychology? We assume that master’s-level students have already developed an appropriate representation of the validity and reliability of the field and of educational psychology issues and thus, compared with bachelor-level students, show advanced attitudes and knowledge (Hypothesis 3).

Study 1 – Assessing Change in Epistemological Beliefs During a Research-Based Lecture

Method

Participants

A total of 82 participants (51 female, 31 male, mean age = 24.27 years; SD = 8.29) were recruited for this study. All participants were university students in the pre-service teacher course programme at a Central European university attending an introductory lecture in educational psychology. The course is part of the study orientation phase. Thus, this was the students’ first encounter with educational psychology within their studies. No reward was given for participation; students were informed that the questionnaire was designed for formative evaluation purposes in order to optimize the course. The offered course was one alternative out of three similar courses and could be attended in different semesters within the curriculum.

Procedure

In the first lecture an overview of course requirements and exam modalities was presented, followed by a short explanation of the syllabus, which had also been announced beforehand for online registration purposes. Immediately afterwards, the questionnaire was administered within the lecture time. After 15 minutes, the lecture started with its regular content. Twelve weeks later the same questionnaire was applied as post-test, also within the regular lecture time but at the end of the session. Students who did not want to participate were allowed to leave the lecture hall.

Material

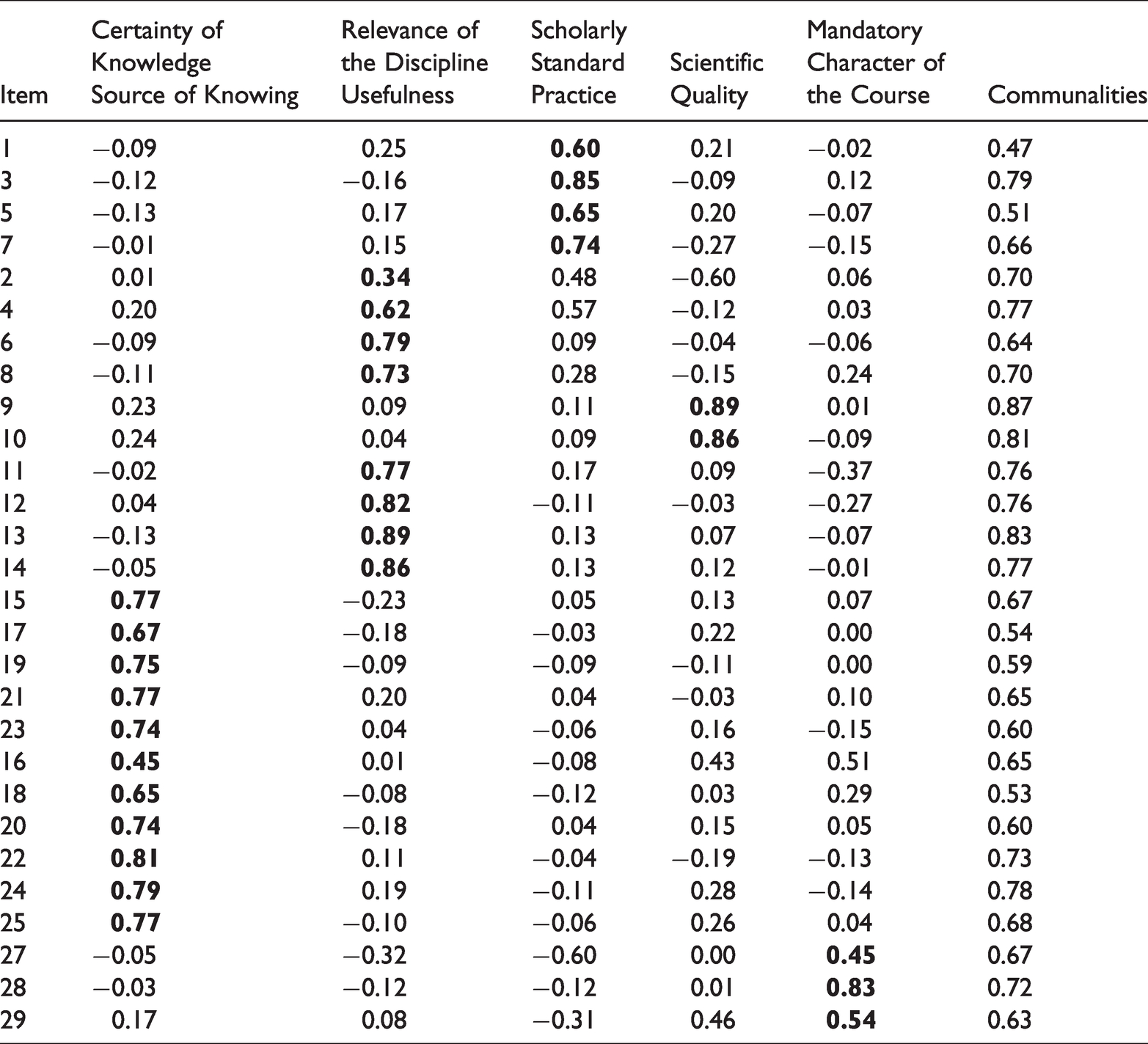

The applied questionnaire consisted of different subscales. Some of the scales were taken from different instruments to assess epistemological beliefs and were adapted to the psychological sub-discipline of educational psychology. As recommended by Trautwein and Lüdtke (2007), we included scales that measure topic-specific beliefs as well as scales that measure general epistemological beliefs. The following subscales were integrated into the instrument: Scholarly Standard Practice (4 items, e.g., ‘Results in educational psychology are based on systematically planned and analysed research data’; Cronbach’s Alpha in post-test = 0.73; pre-test = 0.66), Relevance of the Discipline for in-service practice (4 items, e.g., ‘Results from research in educational psychology may help to reduce or avoid problems in school’; Cronbach’s Alpha in post-test = 0.79; pre-test = 0.61), Scientific Quality (2 items, e.g., ‘Research in educational psychology is as properly conducted as in physics’; Cronbach’s Alpha in post-test = 0.95; pre-test = 0.79), Usefulness for in-service teaching (4 items, e.g., ‘Educational psychology helps me to plan my future instruction in school’; Cronbach’s Alpha in post-test = 0.91; pre-test = 0.82), Source of Knowing (5 items, e.g., ‘In science, you have to believe what the science books say about stuff’; Cronbach’s Alpha in post-test = 0.84; pre-test = 0.79), Certainty of Knowledge (6 items, e.g., ‘All scientific questions have one right answer’; Cronbach’s Alpha in post-test = 0.83; pre-test = 0.83), and Mandatory Character of the Course (originally 4 items, e.g., ‘This course is not relevant for teacher students’; one item (item 7.1) was excluded in the post-test; Cronbach’s Alpha for the three remaining items in post-test = 0.67; pre-test with the same three items = 0.79). All items could be answered on 5-point Likert scales (from ‘strongly disagree’ to ‘strongly agree’). 
Regarding scales that address beliefs about educational psychology (Scholarly Standard Practice, Relevance of the Discipline, Scientific Quality, Usefulness), higher values mean more preferable beliefs about the nature and content of educational psychology. For the general belief scales (Source of Knowing and Certainty of Knowledge), we recoded the values so that, again, higher values mean more preferable general scientific views. Regarding Mandatory Character of the Course: the lower the score, the more participants suggest that the course is helpful and should be mandatory. The subscales Source of Knowing and Certainty of Knowledge were taken from Urhahne and Hopf’s (2004) German translation of the questionnaire by Conley and colleagues (2004) and aimed at assessing beliefs about science in general, whereas all other scales were specifically adapted to the field of educational psychology. This approach was chosen to measure the relationship between general epistemological beliefs and attitudes towards educational psychology and its underlying methodology. To examine the assumed structure of the scales, a principal component analysis with subsequent Varimax rotation was applied to all items except item 26 (for all items see Appendix A). Following a scree plot analysis and the Kaiser criterion (Eigenvalues > 1), five factors were extracted (sequence of original Eigenvalues: 7.00, 5.62, 2.67, 2.18, and 1.94).
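The internal-consistency values reported for each subscale can be illustrated with a minimal sketch of Cronbach's Alpha and of the recoding step for the general belief scales. The function names and the ratings below are hypothetical and serve only as an illustration; they are not the study's data or the authors' analysis script.

```python
from statistics import pvariance


def recode(rating, scale_max=5):
    """Reverse a rating on a 1..scale_max Likert scale (1 <-> 5, 2 <-> 4, ...)."""
    return scale_max + 1 - rating


def cronbach_alpha(item_scores):
    """Cronbach's Alpha for one subscale.

    item_scores: list of respondents, each a list with one rating per item.
    """
    k = len(item_scores[0])
    if k < 2:
        raise ValueError("Cronbach's Alpha needs at least two items")
    # Variance of each single item across respondents.
    item_vars = [pvariance([row[i] for row in item_scores]) for i in range(k)]
    # Variance of the respondents' sum scores.
    total_var = pvariance([sum(row) for row in item_scores])
    return k / (k - 1) * (1 - sum(item_vars) / total_var)


# Hypothetical ratings of five respondents on a four-item subscale.
example_ratings = [
    [4, 5, 4, 4],
    [2, 3, 2, 3],
    [5, 5, 4, 5],
    [3, 3, 3, 2],
    [4, 4, 5, 4],
]
alpha = cronbach_alpha(example_ratings)
recoded = [[recode(r) for r in row] for row in example_ratings]
```

Recoding every item of a subscale leaves Alpha unchanged, because reversing all items changes neither the item variances nor the variance of the sum score.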

These five factors explain 66.97% of overall variance (Certainty of Knowledge and Source of Knowing: 24.15%; Relevance of the Discipline and Usefulness: 19.38%; Scholarly Standard Practice: 9.22%; Scientific Quality: 7.52%; Mandatory Character of the Course: 6.70%). The first two factors include two subscales each (see Table 1). Almost all communalities (except 5 out of 29) are higher than .60; thus, following Bühner (2006), a sample size larger than 60 justifies exploratory factor analysis (EFA). Besides the communalities, there are also other criteria supporting the assumption that an EFA can yield good-quality results. Following de Winter and colleagues (2009), such criteria include a small number of factors, high factor loadings and a high number of variables. In this study the number of factors is small (f = 5), factor loadings are high for almost all variables (see Table 1), and the number of variables (p = 29) is high, according to de Winter and colleagues. Thus, the quality of the EFA can be judged as high although the sample size is rather low.
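The relation between the reported Eigenvalues and the explained variance can be checked with a short sketch. It assumes that the principal component analysis was run on the correlation matrix of the 29 items, so that each item contributes a variance of 1 and the total variance equals the number of items; this assumption is not stated in the text.

```python
# Eigenvalues and number of items as reported in the text.
eigenvalues = [7.00, 5.62, 2.67, 2.18, 1.94]
n_items = 29


def explained_variance(eigs, p):
    """Percentage of total variance per component, assuming total variance = p."""
    return [100 * e / p for e in eigs]


# Kaiser criterion: retain components with an Eigenvalue above 1.
retained = [e for e in eigenvalues if e > 1]

shares = explained_variance(eigenvalues, n_items)
total_share = sum(shares)

for eig, share in zip(eigenvalues, shares):
    print(f"Eigenvalue {eig:.2f}: {share:.2f}% of variance")
print(f"Total: {total_share:.2f}%")
```

Because the Eigenvalues are given to two decimals only, the recomputed percentages can deviate from the reported ones in the second decimal place.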

Table 1. Factor Analysis and Factor Loadings.

In addition, a selection of different topics based on major chapters of an introductory psychology textbook (Krapp et al., 2006) was presented as Content Assessment. Participants had to judge on dichotomous scales whether or not these topics are core topics within the field of educational psychology. Overall, 26 items were presented here, with 11 topics that are original to educational psychology (e.g., ‘memory’, ‘learning processes’) and 15 that are not original to it, or are so only marginally (e.g., ‘depression’, ‘personality’; Cronbach’s Alpha = 0.91).
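Scoring of such a dichotomous Content Assessment could be sketched as follows. The four topics are the examples named above; the answer key format, the function name, and the participant's judgments are hypothetical.

```python
def score_content(judgments, key):
    """Count correct classifications of topics.

    judgments, key: dict mapping topic -> bool
    (True = core topic of educational psychology).
    Returns (correct original topics, correct non-original topics, total correct).
    """
    hits_original = sum(
        1 for topic, is_core in key.items()
        if is_core and judgments.get(topic) is True
    )
    hits_other = sum(
        1 for topic, is_core in key.items()
        if not is_core and judgments.get(topic) is False
    )
    return hits_original, hits_other, hits_original + hits_other


# Answer key for the four example topics mentioned in the text.
answer_key = {
    "memory": True,
    "learning processes": True,
    "depression": False,
    "personality": False,
}

# Hypothetical judgments of one participant.
participant = {
    "memory": True,
    "learning processes": False,
    "depression": False,
    "personality": True,
}

original_correct, other_correct, total_correct = score_content(participant, answer_key)
```

Keeping the two counts separate mirrors the later analyses, which report topics original to the field and topics not original to it separately.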

The lecture itself provided an overview of basic topics in learning research, developmental and cognitive psychology, learning in schools, universities and corporations, and education with an emphasis on the following topics: history of educational psychology, basics and foundations of educational psychology, developmental psychology, cognitive psychology and educational psychology, learning as problem-solving, motivation and emotion, education and teaching, diagnostics, counselling, instructional design, and learning with media. In order to interlink theory and practice, all topics were presented in a research-oriented manner: the lecture was based on current research results, giving students insights into the scientific work of this discipline. One full professor presented the lecture throughout the whole semester, using contemporary standard presentation techniques (following principles of direct instruction; Stockard et al., 2018). Each session lasted 90 minutes, with 14 sessions overall. Physical presence was not mandatory. For successful completion of the course, a written exam at the end of the semester had to be passed.

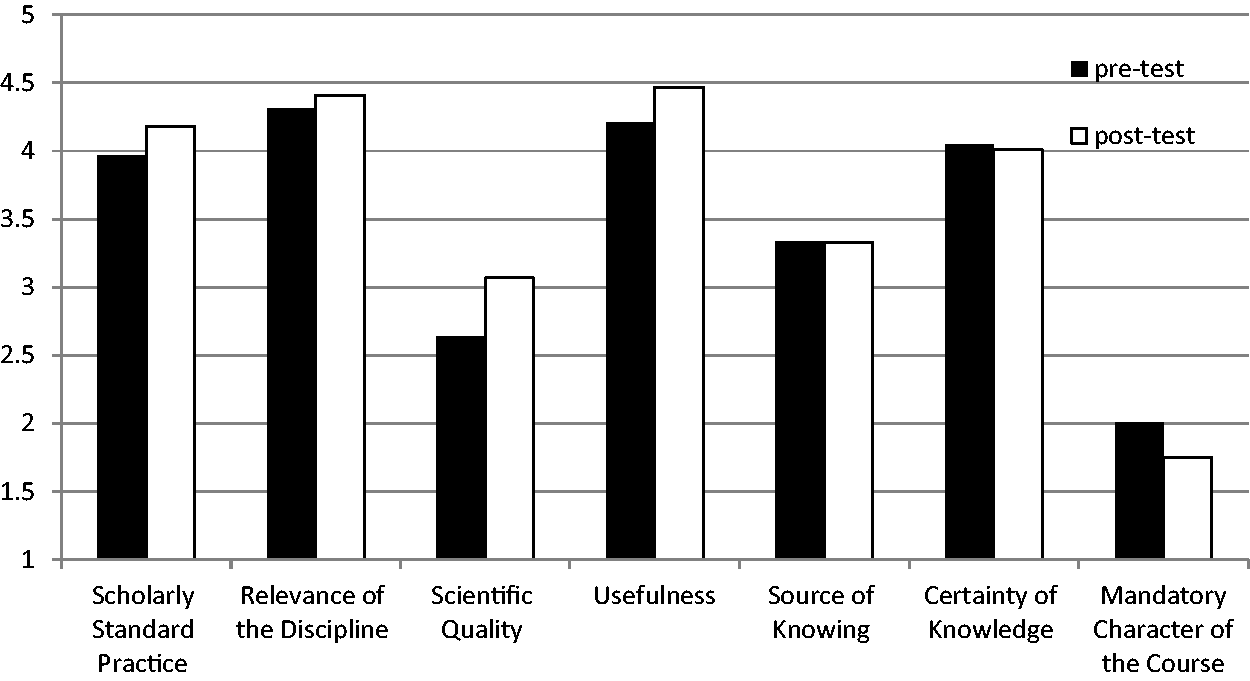

Results

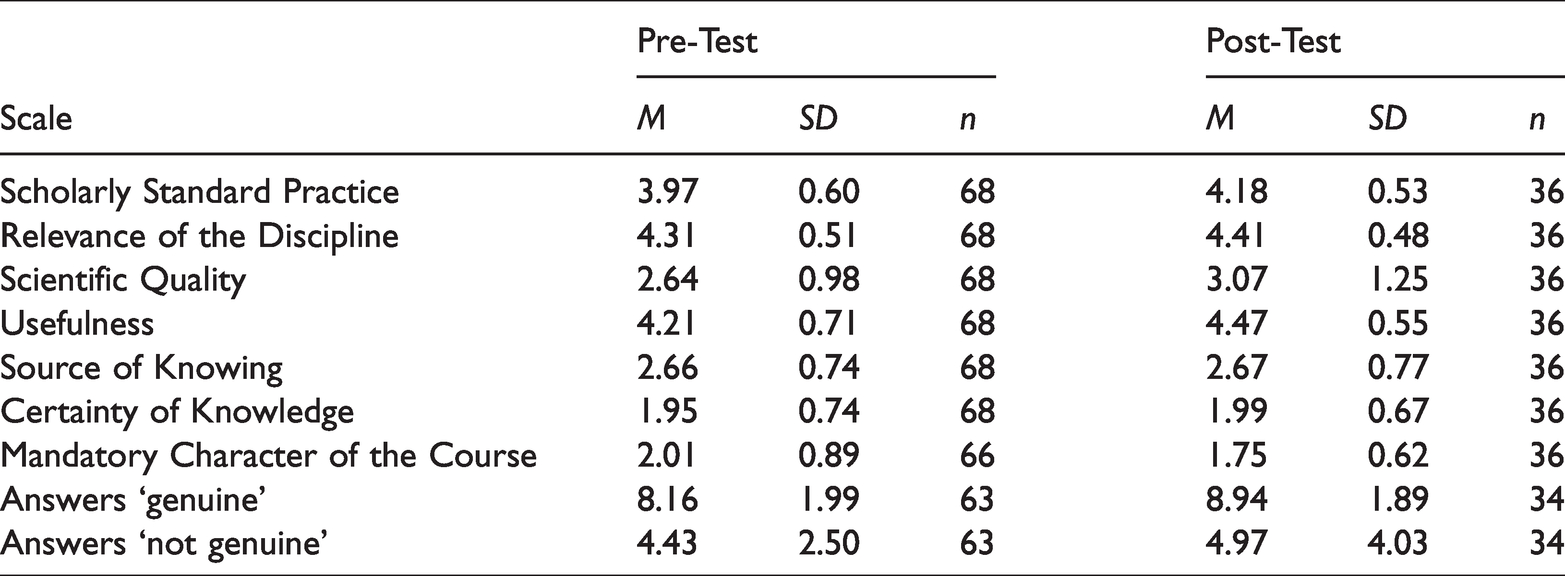

To answer RQ1 and analyse the differences between pre-test and post-test, all single items were aggregated according to their assigned scales and mean values were computed. For Content Assessment, the number of correct assignments was summed to an overall value. A major methodological problem resulted from the different sample sizes in pre-test (n = 68) and post-test (n = 36) as well as from a minimal overlap of participants taking part at both points of measurement (n = 21). The problem arose because attendance at the lecture itself was not compulsory, as is the case for university lectures in most German-speaking nations. As a consequence, students attend lectures depending on their weekly schedule, and pre-service teacher students with at least two different majors out of 21 subjects have a wide range in their weekly academic programme. Nevertheless, most students taking this lecture attend about 75% of sessions, but they might miss single ones. As a result, they receive an overall introduction but might miss single units, which they then have to prepare on their own in order to pass the exam. 
According to a Kolmogorov-Smirnov test, all scales except Scientific Quality in the pre-test and Usefulness in the post-test were normally distributed (Scholarly Standard Practice: pre D = 0.11; p = 0.42; post D = 0.22; p = 0.06; Relevance of the Discipline: pre D = 0.12; p = 0.29; post D = 0.20; p = 0.10; Scientific Quality: pre D = 0.20; p = 0.01; post D = 0.22; p = 0.07; Usefulness: pre D = 0.15; p = 0.11; post D = 0.25; p = 0.02; Source of Knowing: pre D = 0.10; p = 0.47; post D = 0.17; p = 0.24; Certainty of Knowledge: pre D = 0.15; p = 0.11; post D = 0.13; p = 0.60; Mandatory Character of the Course: pre D = 0.08; p = 0.79; post D = 0.12; p = 0.67; Correct answers: pre D = 0.16; p = 0.09; post D = 0.21; p = 0.09). Given the sample-size problem, we decided to use two different ways of significance testing: (1) an ANOVA with repeated measurement was used for within-subjects comparisons and (2) t-tests were computed for between-subjects analyses. In order to avoid the problem of multiple testing with an accumulation of the alpha error, p-values were adjusted using the Bonferroni-Holm procedure (Neter et al., 1992). Descriptive statistics reveal that all domain-specific subscales showed an increase from pre- to post-test, the general belief scales showed rather similar values, and the Mandatory Character of the Course scale showed a decrease from pre- to post-test (see Table 2 and Figure 3).
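The Bonferroni-Holm adjustment mentioned above can be sketched as a step-down procedure: the smallest p-value is multiplied by the number of tests m, the next by m − 1, and so on, with adjusted values never allowed to decrease. The function name and the example p-values are hypothetical; the text does not state whether the p-values it reports are raw or already adjusted.

```python
def holm_adjust(p_values):
    """Return Bonferroni-Holm adjusted p-values in the original order."""
    m = len(p_values)
    # Indices of the p-values, sorted from smallest to largest p.
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_max = 0.0
    for rank, idx in enumerate(order):
        # Step-down multiplier: m for the smallest p, then m - 1, ...
        candidate = min(1.0, (m - rank) * p_values[idx])
        # Enforce monotonicity so a larger raw p never gets a smaller adjusted p.
        running_max = max(running_max, candidate)
        adjusted[idx] = running_max
    return adjusted


# Hypothetical raw p-values from three tests.
raw = [0.01, 0.04, 0.03]
adjusted = holm_adjust(raw)
significant = [p < 0.05 for p in adjusted]
```

In this hypothetical example only the first test remains below the .05 level after adjustment, although all three raw p-values are below it.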

Table 2. Mean Values and Standard Deviations of Dependent Variables.

Figure 3. Mean Values in Pre- and Post-Test.

The decrease in Mandatory Character of the Course results from the reversed polarity of the scale. The lower the score, the more participants suggest that the course is helpful and should be mandatory. Regarding Content Assessment, descriptive results reveal that in the post-test more topics original to the field as well as more topics not original to it were classified correctly (see Table 2).

Results of the repeated measurement ANOVA (within-subject comparisons) revealed a non-significant overall effect from pre- to post-test (F(10,9) = 1.90; p = .113; η² = .60). Nevertheless, analyses for the subscales showed a significant effect in the subscale Scholarly Standard Practice (F(1,18) = 4.74; p = .022; η² = .21), while Scientific Quality (F(1,18) = 2.13; p = .055; η² = .14), Usefulness (F(1,18) = 2.66; p = .060; η² = .13) and Mandatory Character of the Course (F(1,18) = 2.67; p = .060; η² = .13) narrowly missed the significance level. No significant differences were found for Relevance of the Discipline (F(1,18) = 1.46; p = .121; η² = .08), Source of Knowing (F(1,18) = 1.78; p = .100; η² = .09) or Certainty of Knowledge (F(1,18) = .04; p = .427; η² = .00).

Regarding Content Assessment, results revealed no significant differences from pre- to post-test concerning topics original to educational psychology (F(1,18) = 2.34; p = .071; η² = .12) and topics that are not original to the field (F(1,18) = .84; p = .186; η² = .04).
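The effect sizes reported with the F-tests can be related to the test statistics with a short sketch. It assumes that the reported η² values are partial eta squared, computed from F and its degrees of freedom; the text does not say which variant was used. Under this assumption several of the reported pairs are reproduced, for example F(1,18) = 4.74 with η² = .21 and F(1,18) = 2.66 with η² = .13.

```python
def partial_eta_squared(f_value, df_effect, df_error):
    """Partial eta squared from an F statistic and its degrees of freedom."""
    return (f_value * df_effect) / (f_value * df_effect + df_error)


# F values and degrees of freedom taken from the subscale results in the text.
scholarly_practice = partial_eta_squared(4.74, 1, 18)
usefulness = partial_eta_squared(2.66, 1, 18)
certainty = partial_eta_squared(0.04, 1, 18)

for name, value in [
    ("Scholarly Standard Practice", scholarly_practice),
    ("Usefulness", usefulness),
    ("Certainty of Knowledge", certainty),
]:
    print(f"{name}: partial eta squared = {value:.2f}")
```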

Inferential statistical analyses (between-subjects comparisons) revealed, for two-sided testing and after Bonferroni-Holm adjustment, significant differences from pre- to post-test in the subscales Scholarly Standard Practice (t(67) = −2.85; p = .006; d = .36), Scientific Quality (t(67) = −3.60; p = .001; d = .40), Usefulness (t(67) = −3.02; p = .004; d = .40), and Mandatory Character of the Course (t(65) = 9.20; p < .001; d = .32). No significant differences were found regarding the scales Relevance of the Discipline (t(67) = −1.69; p = .096; d = .21), Source of Knowing (t(67) = −0.06; p = .956; d = .01), and Certainty of Knowledge (t(67) = −0.445; p = .658; d = .06).

Test statistics regarding Content Assessment revealed that in post-test significantly more topics original to educational psychology were identified than in the pre-test (t(62) = −3.13; p = .003; d = .40). The difference in correct identification of topics that are not original to the field was not statistically significant (t(62) = −1.72; p = .090; d = .18).

An analysis of correlations between the sub-scales specifically adapted to assess participants’ attitudes toward educational psychology and those addressing science in general revealed hardly any significant correlations. Source of Knowing does not correlate significantly with Scholarly Standard Practice (r(36) = −0.13; p = .46), Scientific Quality (r(36) = −0.32; p = .05), Usefulness (r(36) = −0.08; p = .65), Mandatory Character of the Course (r(36) = 0.12; p = .49), or Relevance of the Discipline (r(36) = −0.11; p = .52). It correlates significantly with Certainty of Knowledge (r(36) = 0.81; p < .001). Certainty of Knowledge does not correlate significantly with Scholarly Standard Practice (r(36) = −0.19; p = .27), Usefulness (r(36) = −0.09; p = .61), Mandatory Character of the Course (r(36) = −0.23; p = .18), or Relevance of the Discipline (r(36) = −0.08; p = .65). It shows a weak but significant negative correlation with Scientific Quality (r(36) = −0.34; p = .04).
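The significance of a Pearson correlation can be checked by converting r into a t statistic with the given degrees of freedom, t = r · sqrt(df / (1 − r²)). The following sketch applies this standard conversion to three of the rounded coefficients reported above. The function name `t_from_r` is hypothetical, and the two-sided critical value of about 2.028 for df = 36 at α = .05 is taken from standard t tables.

```python
import math


def t_from_r(r, df):
    """Convert a Pearson correlation r with df degrees of freedom into a t statistic."""
    return r * math.sqrt(df / (1.0 - r * r))


# Two-sided critical t value for df = 36 at alpha = .05 (from standard t tables).
T_CRIT_DF36 = 2.028

# Rounded coefficients as reported in the text (df = 36 in each case).
reported_r = {
    "Source of Knowing x Certainty of Knowledge": 0.81,
    "Source of Knowing x Scientific Quality": -0.32,
    "Certainty of Knowledge x Scientific Quality": -0.34,
}

for label, r in reported_r.items():
    t = t_from_r(r, 36)
    exceeds = abs(t) > T_CRIT_DF36
    print(f"{label}: r = {r:+.2f}, t(36) = {t:+.2f}, exceeds critical value: {exceeds}")
```

Because the coefficients are rounded to two decimals, results close to the critical value (such as r = −0.32) should be read as approximate.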

Discussion Study 1

Results regarding internal consistency revealed that all scales except one can be regarded as reliable measures. The exception was the scale ‘Mandatory Character of the Course’, which showed low internal consistency. A basic reason for this exception could be that the course was available to pre-service students at different stages of their programme. Thus, some of the students might already have had practical experience in schools, while others had not. With first insights into school practice, students might become aware that teaching is not only presenting content but also involves a diverse set of challenges regarding teaching and learning, education, communication, counselling, etc. In other words, these are challenges where educational psychology, or psychology in general, can contribute helpful, basic, and applicable knowledge and competences. The need for such competences is hard to anticipate without having experienced it directly. Nevertheless, the internal consistency of this sub-scale lies only marginally below the standard threshold of 0.70.

RQ1 addressed the question of to what extent students’ epistemological beliefs towards educational psychology change from before to after attending an introductory course in educational psychology. Here, a methodological problem came up: the drop in sample size from pre- to post-test. This might bias the remaining sample, for example regarding motivation and/or subject interest. Pre-service teacher students had the possibility either to attend the same course at a later phase of their study programme or to attend a parallel course with a slightly different focus, namely on developmental psychology. Thus, participants in the pre-test could have used the first sessions to orient themselves and then either continued with this course or left it. Nevertheless, the pre-test sample provides a base rate for the cognitive variables assessed in this study. Despite the drop in sample size, the comparison of pre- with post-test measures reflects the change in students’ experience. Unfortunately, this can only be concluded from between-subjects comparisons due to insufficient overlap between the pre-test and post-test samples. Thus, these findings have to be interpreted carefully, given the above-mentioned possible biases.

The validity of the chosen methodology can be judged from three perspectives. First, the items and scales used here show a high degree of face validity. Second, the subscales that specifically referred to educational psychology were sensitive enough to reveal significant changes in some beliefs and attitudes regarding this discipline. Students judged the Scholarly Standards of educational psychology as well as its Scientific Quality significantly higher at the end of the course, with medium to strong effect sizes. Both subscales referring to attitudes towards science in general did not differ significantly from pre- to post-test. Finally, correlation analyses of the first study indicate that the general epistemological beliefs as assessed here hardly correlate with the subject-specific scales and are located on different factors. This can be regarded as an indicator of discriminant validity of the two sets of scales, which apparently assess two different constructs. These results also underline the importance of subject-specific assessment of epistemological beliefs. A student might have a general impression of how science works but might also have a much more differentiated impression of how single disciplines lead us to new insights. Thus, it is important to assess both aspects, the general and the subject-specific ones.

The beliefs related to educational psychology as assessed here indicate that students’ views had developed by the end of the semester. Although some of these differences narrowly miss significance or are significant only in the between-subjects analysis, the results stress the importance of showing (teacher) students how this discipline works, i.e., that it is a scientific discipline with established standards such as objectivity, reliability, validity, and replicable research, among other criteria. This, however, requires that academic education is research-based or research-oriented so that students are able to comprehend how knowledge is ‘created’ (Zumbach & Moser, 2012). Although Usefulness narrowly misses the significance level in within-subjects comparisons, a significant increase was measured for the Usefulness of educational psychology for in-service practice in the between-subjects design. This might also provide a hint that psychology teaching and learning might benefit not only from creating clarity about how research is conducted and where insights come from, but also from demonstrating what this research is good for in daily practice and how it can be translated into daily (school) life.

Regarding the Relevance of the Discipline, Source of Knowing, and Certainty of Knowledge, we found significant differences neither in within-subjects comparisons nor in between-subjects comparisons. These scales include rather generic or vague items, which are unlikely to change through the intervention as applied here. Relevance of the Discipline is, like Mandatory Character of the Course, hard to judge from a pre-service point of view and might rather be affected by treatments that combine introductory courses with advanced courses and especially practical experience.

Regarding general scientific epistemological beliefs about the Source of Knowing and the Certainty of Knowledge, the high scores indicate that students already possess a rather critical attitude towards the question of how stable scientific findings are and how these findings are communicated. This is not original to educational psychology but is likely to be addressed within different disciplines and across the curriculum, fostering students’ critical thinking.

Finally, results of between-subjects comparisons in the Content Assessment measures show that students could correctly identify more topics that are original to the field of educational psychology in the post-test. The correct identification of topics that are not original to educational psychology is also better in the post-test, but only on a descriptive level. These results might indicate that changes in beliefs and attitudes are accompanied or, even more likely, caused by an increase in knowledge or a change in knowledge structures, assuming that these attitudes are not crystallized (cf. Schwarz & Bohner, 2001).

Taken together, we found significant differences for only some of the scales in Study 1; thus, Hypotheses 1 and 2 can only partially be confirmed.

Our results suggest that one possible strategy to support the development of appropriate beliefs and attitudes towards educational psychology might be to provide adequate learning environments, e.g., research-oriented lectures. These learning environments should support students in acquiring knowledge and competences about the nature of this discipline and about how it can be translated into practice. Guilfoyle and colleagues (2020) further recommend combining information on the epistemic nature of the discipline with personal research experiences in the field. Additionally, interventions that include conflicting information have been shown to be advantageous for the development of preferable epistemological beliefs in the domain of psychology (Rosman, 2016).

Aside from a longitudinal perspective that investigates students’ change in beliefs (RQ1), we were interested in a cross-sectional analysis of epistemological beliefs about educational psychology at different stages of the study programme. Thus, we conducted the following cross-sectional study, analysing different students at different phases of a pre-service teacher programme (RQ2).

Study 2 – Change of Epistemological Beliefs During the Programme

This study extends the first study by using a cross-sectional design that assesses students’ epistemological beliefs with regard to educational psychology at different stages of the curriculum (bachelor's programme versus master’s programme; RQ2). The same questionnaire as in Study 1 was applied. The purpose of this study was to determine whether the findings from Study 1 could be replicated and extended from one course (as in Study 1) to a whole programme. We assume here that, as students develop professionally and gain expertise, their epistemological beliefs also undergo a developmental process.

Method

Participants

Overall, 252 preservice teachers (179 female, 73 male, mean age = 21.42 years; SD = 3.76) participated in the second study. Again, all participants were university students in the pre-service teacher course programme at a central European university. 203 students were freshmen at bachelor level (BC) and 48 were at master level (MA). Students at bachelor level did not have prior experience with educational psychology. The lecture was part of their study orientation phase. Students at master level are assumed to have advanced knowledge, as educational psychology was part of their bachelor phase. No reward was given, and students were informed about the evaluation’s purpose.

Procedure

The questionnaire was administered in different mandatory bachelor- and master’s-level courses during their first units at the beginning of the winter term. Participants took about 15 minutes to fill in the questionnaire. Bachelor students were all freshmen in the first semester of their studies. The questionnaire was administered in their first lecture of the orientation phase. After a short introduction by the course lecturer, the students were informed about the procedure of the evaluation. Students who did not want to participate were allowed to leave the lecture rooms.

The procedure was the same within the group of master’s-level students and the evaluation also took place at the beginning of the winter term. One exception here was that the evaluation of the master’s students took place in the same course, but the course was split between three different course lecturers. Nevertheless, the evaluation took place at the beginning of the semester, so the course lecturers had not taught any of the questionnaire’s topics so far.

Material

The applied questionnaire consisted of the same subscales that are described in Study 1: Scholarly Standard Practice (4 items, Cronbach’s Alpha = 0.72), Relevance of the Discipline for in-service practice (4 items, Cronbach’s Alpha = 0.56), Scientific Quality (4 items, Cronbach’s Alpha = 0.86), Usefulness for in-service teaching (4 items, Cronbach’s Alpha = 0.83), Source of Knowing (5 items, Cronbach’s Alpha = 0.65), Certainty of Knowledge (6 items, Cronbach’s Alpha = 0.76), and Mandatory Character of the Course (originally 4 items, 3 items after excluding one item; Cronbach’s Alpha = 0.70). Similar to Study 1, all items could be answered on 5-point Likert scales (from ‘strongly disagree’ to ‘strongly agree’). Regarding scales that measure domain-specific and general epistemological views, higher values indicate more preferable views. For Mandatory Character of the Course, lower values indicate that the course is helpful and should be mandatory. Further, similar to Study 1, a selection of different topics from the field of psychology in general was presented as Content Assessment (26 items, Cronbach’s Alpha = 0.84). Participants had to judge whether or not these topics are core topics within the field of educational psychology.
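For readers less familiar with the reliability coefficient reported above, the following minimal Python sketch shows how Cronbach’s Alpha is computed from item-level responses using the standard formula α = k / (k − 1) · (1 − Σ item variances / variance of sum scores). The response data are hypothetical and serve only as an illustration; they are not taken from this study, and the function name `cronbach_alpha` is likewise an assumption.

```python
from statistics import variance


def cronbach_alpha(responses):
    """Compute Cronbach's alpha.

    responses: list of rows, one per respondent; each row is a list
    of item scores (all rows must have the same number of items).
    """
    k = len(responses[0])                      # number of items in the scale
    item_columns = list(zip(*responses))       # transpose: one tuple per item
    item_variances = [variance(col) for col in item_columns]
    total_variance = variance([sum(row) for row in responses])
    return k / (k - 1) * (1 - sum(item_variances) / total_variance)


# HYPOTHETICAL data: five respondents answering a 4-item scale
# on a 5-point Likert scale (1 = strongly disagree, 5 = strongly agree).
responses = [
    [4, 5, 4, 4],
    [2, 2, 3, 2],
    [5, 4, 5, 5],
    [3, 3, 3, 4],
    [1, 2, 1, 2],
]

alpha = cronbach_alpha(responses)
print(f"Cronbach's alpha = {alpha:.2f}")
```

The sketch uses sample variances (n − 1 in the denominator) throughout; statistical packages may differ in such details, so small deviations from their output are possible.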

All students were enrolled in the preservice teacher education programme. The underlying curriculum consists of four core parts: (1) domain knowledge in the two subject disciplines for which the teaching permission will be acquired, (2) pedagogical content knowledge in each subject, (3) pedagogical knowledge, and (4) practical educational and instructional skills. For full teaching qualification, a master’s degree is required, so that almost all students take the whole programme at the same university. While the bachelor programme (four years) covers basic knowledge within the above-mentioned parts, the master’s programme (two years) allows specialization within single fields (also from a methodological perspective in either one of the two domains or in pedagogical sciences) as well as in practical skills.

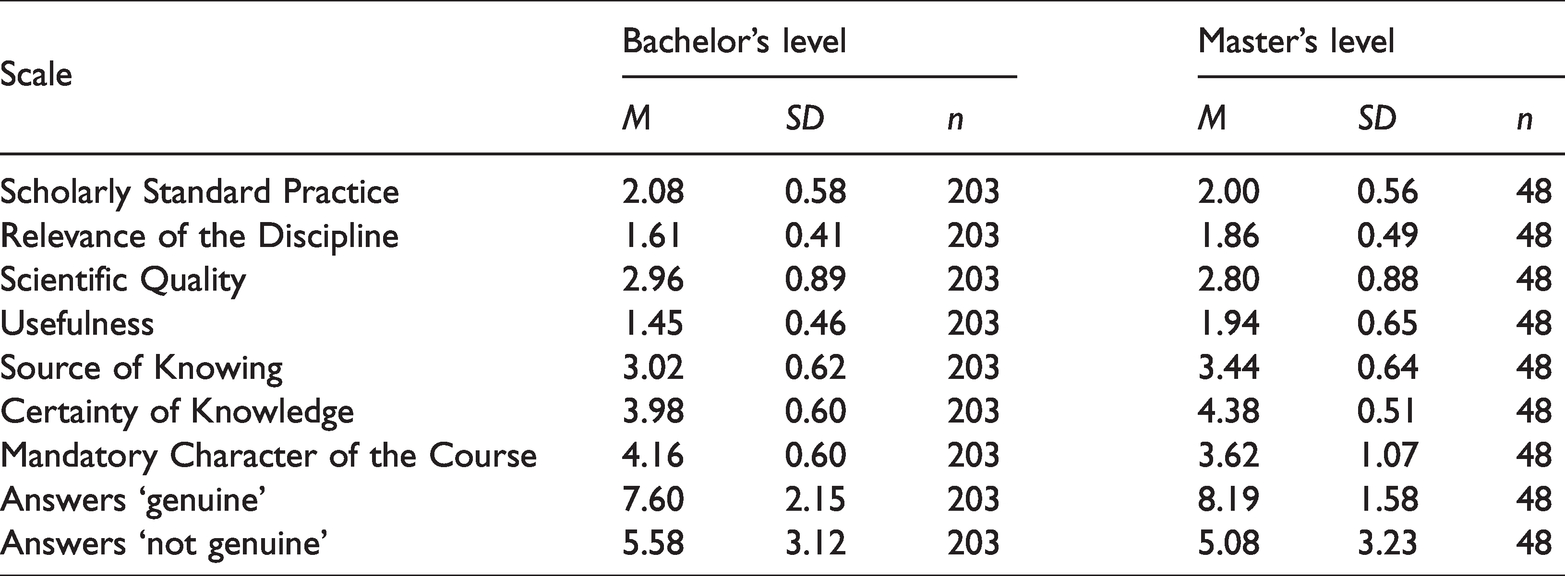

Results

To analyse the differences between bachelor- and master’s-level students, we used a MANOVA for between-subjects analyses. Stage within the programme (BC vs. MA) was used as the independent variable, and the subscales of the questionnaire were used as dependent variables.

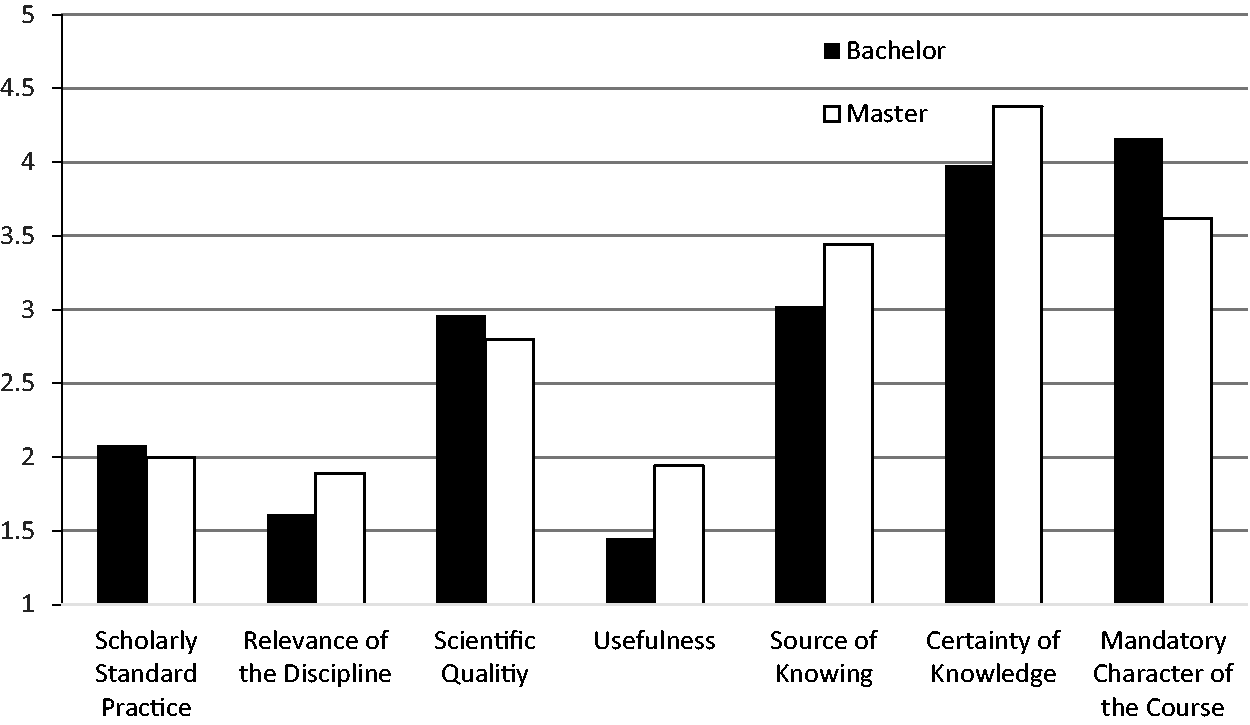

Results of the MANOVA revealed a significant overall effect (F(9,241) = 10.48; p < .001; η2 = .28). Analyses for the subscales showed significant effects for Relevance of the Discipline (F(1,249) = 13.81; p < .001; η2 = .05), Usefulness (F(1,249) = 36.57; p < .001; η2 = .13), Source of Knowing (F(1,249) = 16.87; p < .001; η2 = .06), Certainty of Knowledge (F(1,249) = 18.03; p < .001; η2 = .07), and Mandatory Character of the Course (F(1,249) = 22.00; p < .001; η2 = .08). No significant differences were found for Scholarly Standard Practice (F(1,249) = 0.70; p > .05; η2 = .00) or Scientific Quality (F(1,249) = 1.27; p > .05; η2 = .00).
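The reported effect sizes can be related to the F statistics through the standard formula for partial eta squared, η² = (F · df_effect) / (F · df_effect + df_error). The sketch below assumes that the η2 values reported above are partial eta squared, which the text does not state explicitly; the function name `partial_eta_squared` is hypothetical.

```python
def partial_eta_squared(f_value, df_effect, df_error):
    """Recover partial eta squared from an F statistic and its degrees of freedom."""
    return (f_value * df_effect) / (f_value * df_effect + df_error)


# (label, F, df_effect, df_error, eta squared as reported in the text)
reported_effects = [
    ("Overall MANOVA effect", 10.48, 9, 241, 0.28),
    ("Relevance of the Discipline", 13.81, 1, 249, 0.05),
    ("Usefulness", 36.57, 1, 249, 0.13),
    ("Source of Knowing", 16.87, 1, 249, 0.06),
    ("Certainty of Knowledge", 18.03, 1, 249, 0.07),
    ("Mandatory Character of the Course", 22.00, 1, 249, 0.08),
]

for label, f_value, df_effect, df_error, reported in reported_effects:
    computed = partial_eta_squared(f_value, df_effect, df_error)
    print(f"{label}: computed = {computed:.3f}, reported = {reported:.2f}")
```

Under this assumption, the computed values for the overall effect and the five significant subscales round to the reported figures.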

Descriptive statistics reveal that the master’s-level students show higher values on the significant scales than the bachelor's-level students, except for Mandatory Character of the Course (see Table 3 and Figure 4). The decrease in the latter results from the reversed polarity of the scale: the lower the score, the more participants suggest that the course is helpful and should be mandatory.

Table 3. Mean Values and Standard Deviations of Dependent Variables.

Figure 4. Mean Values of Bachelor's and Master’s Students.

With regard to Content Assessment, results showed only partly significant differences between bachelor's- and master’s-level students: master’s students correctly identified more topics that are original to the field than bachelor's students (see Table 3; correct: F(1,249) = 3.21; one-sided p = .037; η2 = .01). No significant differences were found for the identification of non-original topics (F(1,249) = 0.98; p > .05; η2 = .00).

Discussion Study 2

Results from the second study again reveal that the scales used here show generally acceptable values with regard to internal consistency. Only the subscale Relevance of the Discipline reveals an insufficient value. A possible explanation for this could be that students simply do not yet know, and find it difficult to judge, how basic theories and findings in educational psychology might be applied in everyday classroom settings. This assumption is supported by findings from König et al. (2020), demonstrating an increase in performance-related knowledge during teacher students’ practical phase. Further, research results from König (2013) reveal that practical teaching phases positively influence the practical pedagogical knowledge of teacher students. Additionally, research from Kleickmann et al. (2013) reveals that the length of the practical experience increases pedagogical content knowledge. Consequently, beginners of the academic programme in particular, who hardly know anything yet about the nature and the content of this discipline, might find it difficult to answer these questions homogeneously. This might also affect students at a later stage of the curriculum: although they have already had some practical experience with teaching in schools as part of their academic programme, they might still find it difficult to transfer their knowledge to daily classroom instruction.

RQ2 addressed the question of to what extent students at bachelor’s level differ from students at master’s level with regard to their epistemological beliefs about the nature of educational psychology. All significant differences we found (i.e., Relevance of the Discipline, Usefulness, Source of Knowing, Certainty of Knowledge, and Mandatory Character of the Course) indicate that master’s students have developed a more accurate picture of epistemological views in educational psychology compared to bachelor's students. Findings indicate here that students entering the programme are not really aware of the content and nature of educational psychology. Although almost all of them had to take psychology as a school subject for at least one year, their knowledge in this regard and their opinion about this field are not really developed. This is all the more astonishing as these students should be familiar with the curriculum they chose: informed students should know about the content of the curriculum of the two subjects they want to teach but also about the parts of the programme that include content didactics, education, and educational psychology. Apparently, beginners as assessed within this study are only partly informed about the programme they are enrolled in. Results also provide evidence that this knowledge develops over time, as observed in the master’s-level cohort within this sample. However, the perspective on scientific standards and quality of educational psychology does not significantly differ between bachelor's and master’s students.

Taken together, master’s-level students show more sophisticated knowledge about the nature of educational psychology and show more preferable epistemological beliefs (partly confirming Hypothesis 3). This is in line with research revealing that epistemological beliefs change during academic studies (e.g., Ferguson & Brownlee, 2018; Päuler-Kuppinger & Jucks, 2017; Trautwein & Lüdtke, 2007). Nevertheless, the reported scores leave room for further development, because most of the values are rather low. This is particularly alarming, as epistemic beliefs directly affect the processing of new information (Hofer & Pintrich, 1997), also in teacher education (Walker et al., 2012). Even the master’s students do not seem to realize the relevance and usefulness of this discipline for their future in-classroom work. A possible reason is that, although they have already experienced practical teaching phases during their programme, these practical phases are not sufficient to evaluate the programme and its disciplines from a metacognitive perspective. In this regard, research from Knight (2015) shows that students increasingly see theory as integral to their practice over the course of their studies. However, negative beliefs about pedagogical scientific content show high stability (Bleck & Lipowsky, 2020). Additionally, findings from Kleickmann et al. (2013) provide evidence that in-service teachers or student teachers with considerable practical experience show substantially higher pedagogical content knowledge compared to pre-service teacher students, either at the beginning or at a later stage of their studies.

Nevertheless, these interpretations are limited because we did not use a longitudinal research design but rather a cross-sectional one. Support for these assumptions is provided by the fact that master’s students completely finished the same bachelor's programme. Despite this fact, sampling biases cannot be excluded.

Further, students’ motivation to learn from practice has been shown to be higher than their motivation to learn from theory and related research (Bråten & Ferguson, 2015). Pre-service teachers usually tend to struggle in their practical phases (Moore, 2003), which might affect their ability to reflect on their programme adequately; in consequence, education research seems to be of little value to them. Nevertheless, it seems that the experienced usefulness of educational psychology is recognized more by students at master’s level than by bachelor's-level students, although it should be further promoted throughout their education. Another improvement should focus on teacher students’ trust in the scientific quality of educational psychology. Both could be fostered by means of (interdisciplinary) teaching approaches that foster higher-order thinking skills. Research-based courses or tasks that provide deeper insights into the methodology of educational psychology in particular would be advantageous here (see Flores, 2016 for different ways to include research into teacher education), as would practice-oriented courses like Problem-Based Learning or Service Learning (Lunn Brownlee et al., 2017). Such promotion could also increase students’ awareness of the importance of this discipline for teaching practice, because it affects almost all areas of teachers’ daily work.

Overall Discussion

One major aim of this research was to develop and apply an instrument for assessing epistemological beliefs within the field of educational psychology. Based on the assumption that epistemological beliefs directly influence learning in higher education (e.g., Hofer, 2004; King & Kitchener, 1994), the fostering of adequate beliefs within different domains could and should be promoted. Especially in the field of psychology, it appears to be evident that even students in genuine psychology programmes still have misconceptions about psychological content knowledge and/or methodological issues (Lyddy & Hughes, 2011). The situation could be more problematic in academic programmes where psychology or a psychological sub-discipline is only a minor or subsidiary subject. This is actually the case in some academic programmes in pre-service teacher education, where the main focus is rather on the school subjects that future teachers will be teaching: here, educational psychology and/or educational science are only accompanying disciplines among others. Commonly, secondary school teacher students cover not just one teaching discipline but several (e.g., in Austria and in some parts of Germany they cover two). Therefore, we assumed that it is not only difficult to acquire a deep understanding of the methodology (and epistemology) of these fields, but much harder to get an idea of how educational psychology ‘works’ and how insights are generated by which methodology. Nevertheless, there is consensus that preservice teachers need elaborated epistemic beliefs in all areas of their professional knowledge (Guilfoyle et al., 2020). Within this research, we made a first step in adapting and extending existing measures to assess different dimensions of epistemological beliefs to the domain of educational psychology. Results from both studies reveal that the instrument developed and applied here is suitable and, in most sub-scales, reliable.

Nevertheless, there were subscales that did not meet acceptable criteria of reliability. A basic reason here could be that these single measures are very specific and require at least basic experience on the part of participants to respond to the items as specified within these scales. Outcomes from both studies lead us to assume that participants without basic (practical) knowledge might simply be overwhelmed by these scales. For example, Study 1 reveals that internal consistency significantly increases from pre- to post-test. Especially with regard to the Relevance of the Discipline scale, our results lead us to assume that basic practical teaching experience, or at least a demonstration of it, is necessary to understand how educational psychology can be transferred into practice.

Results also reveal that the instrument not only shows face validity but is also sensitive enough to measure changes during different educational programmes. While the first study used a longitudinal design, although only covering one course over 4 months, the second study used a cross-sectional design comparing two different samples at different stages (bachelor vs. master’s level) of a teacher education university programme. Within both studies, improvement with regard to knowledge about the nature and domain as well as the epistemology of educational psychology could be detected. Nevertheless, these slight improvements reveal that there is an additional need to foster students’ insights into how educational psychology can contribute to applicable practical knowledge (e.g., for use in daily classrooms). Further, it has to be pointed out that research approaches and findings in educational psychology might partially differ from those in natural sciences (e.g., educational psychology might use interpretative or discursive psychological approaches). However, they are not based on simple ‘opinions’, but instead follow clear scientific standards that are not worse or better than standards within disciplines that are usually regarded as ‘hard’ (e.g., in biology or physics). In addition, there is also a need to show students the usefulness of educational research as a framework for varying contexts. The right balance between those two aspects is crucial for the acceptance and positive perception of educational research (Guilfoyle et al., 2020). This is particularly important because teachers’ classroom activities are highly dependent on their conceptions about teaching and learning, and those conceptions are influenced to a large extent by individual epistemic beliefs about knowledge and learning (Chan, 2011).

Comparing mean values between Study 1 and Study 2 shows that there are different levels on most subscales. This difference can be explained by the settings in which students participated. Participants in the first study were all students on the same course (research-based lectures on educational psychology), while in the second study the context was much broader and assessment took place in teacher education courses outside this specific discipline. Thus, the context available within the first study might have influenced answering behaviour and contributed to higher scores than the more general setting with less contextual framing in Study 2. In consequence, future studies could use a longitudinal approach and investigate a broader population of teacher students (as in the second study) and their change in epistemological beliefs over a longer time span than in the first study. Further, it would be particularly helpful to additionally measure the amount of research-related activities these students encounter during their studies, in order to draw conclusions about the influence of engagement with education research on epistemological beliefs in educational psychology. This might add to research findings related to epistemic beliefs of preservice teachers and the importance of such beliefs for the process of learning how to teach.

A major criticism of our chosen methodology (using self-reported Likert scales) is provided by Sinatra (2016), who questions the validity of such approaches. Nevertheless, a major prerequisite for using other approaches like behavioural observation, think-aloud protocols, vignettes, interviews, etc., is usually increased experience within the domain. In other words, more sophisticated methodological approaches might become applicable with increasing expertise (e.g., for reading comprehension; see Fox, 2009). A closer look at the participants in these samples reveals that especially freshmen, and even advanced students, show a rather basic level of knowledge within the field. Thus, the validity of the above-mentioned higher-level approaches is also questionable, as is the case, for example, for think-aloud protocols in usability studies (Ramey et al., 2006). Additionally, Krettenauer (2005) found high correspondence between interview and questionnaire data. Therefore, we assume that self-reported questionnaires are still a valid and economic tool for assessing epistemological beliefs for a broad range of participants who are beginning to develop their abilities within a certain field. But again, following Sinatra (2016), mixed-method approaches are superior to self-reported questionnaires where applicable (despite the fact that analysis of such qualitative data might also be problematic; cf. Mazor et al., 2008). Reflecting upon the results of both studies, such approaches do not seem to be indicated, due to low levels of expertise.

Taken together, this research shows that epistemological beliefs of preservice teacher students develop only slightly over time and across the curriculum. Even advanced students have not developed an elaborated insight into educational psychology. Educational psychology is a core discipline connecting basic with applied research that has a major impact on daily educational practice. Thus, it is crucial to develop curricula and possibilities for preservice teacher students that provide opportunities to increase their expertise within this field.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.