Abstract

This study investigates online hate speech in Finland, focusing on Twitter (now X) messages targeting people of Muslim faith and the LGBTQ+ community from 2018 to 2023. It uses a mixed-methods approach that combines quantitative text classification with a BERT model, topic modeling with BERTopic, and qualitative thematic analysis of representative and highly engaged posts. The study finds that hate speech increased over the period and was directed primarily at Muslims; topic modeling uncovered 32 hate speech topics for Muslims and 41 for the LGBTQ+ community, featuring themes of violence, cultural conflict, and challenges to traditional values. The LGBTQ+ community is depicted as undermining traditional norms, whereas Muslims are portrayed with hostility. The research underscores the need for digital platforms to employ nuanced strategies against hate speech. It advocates policies that tackle hate speech while also addressing its underlying causes, and it calls for a better understanding of the social and cultural contexts of the targeted groups to improve detection accuracy.

Introduction

In the contemporary digital era, social media platforms such as X (formerly Twitter) have significantly reshaped the landscape of political and social discourse. These social networks democratize communication by enabling interactions on a global scale, yet they simultaneously serve as conduits for disruptive phenomena like hate speech (Kettunen and Paukkeri, 2021). Despite the perception of social cohesion within Finnish society, online hate speech toward particular minorities has continued to increase, a reflection of deeper sociopolitical rifts concerning identity and prejudice (Laaksonen et al., 2020; Lehtonen, 2023; Reichelmann et al., 2021).

The rise of online hate speech in Finland is closely linked to a resurgence of far-right ideologies, facilitated by their widespread dissemination via digital platforms (Horsti and Nikunen, 2013; Horsti and Saresma, 2021; Pettersson and Nortio, 2022). These factions exploit the extensive reach of social media to disseminate their views, frequently targeting vulnerable communities, including Muslims and LGBTQ+ individuals (Horsti and Saresma, 2021; Saresma and Tulonen, 2022). Our study examines the online political activism of far-right movements, demonstrating that they use digital spaces not only as a mirror of existing societal tensions but also as active platforms for fostering and exacerbating explicit hostility toward specific groups (Horsti, 2015). This perspective underscores the critical role social networks play in enabling and intensifying such ideologies, particularly as they affect Muslim and LGBTQ+ communities.

We deliberately selected these minority groups to examine online hate speech within far-right political activism, rather than to survey hate speech victims broadly. This choice reflects the far-right’s focus on these communities and offers a lens for exploring digital technology, hate speech, and social identity theory. Previous studies show that far-right discourses on these groups are intertwined (Jantunen and Kytölä, 2022).

In Finland, Muslims and LGBTQ+ communities face rising numbers of hate crimes, both online and offline. Reported hate crimes against Muslims rose from 39 to 55 cases in 2021 and further to 60 cases in 2022. Similarly, reported hate crimes based on sexual orientation rose sharply in 2021, from 68 to 126 cases, and reached 140 cases the following year (Rauta, 2022, 2023). 1 Although no dedicated research in Finland has explicitly examined the connection between online hate speech and offline hate crimes, the existing literature points to such a link (Kotonen and Kovalainen, 2021; Lupu et al., 2023; Müller and Schwarz, 2020; Peterson, 2018; Wahlström et al., 2020).

During the study period, from 1 January 2018 to 1 January 2023, Finland experienced consistent growth in political polarization, specifically affective polarization, notably influenced by the rise of the Finns Party (Kawecki, 2022; Kekkonen and Ylä-Anttila, 2021). As the second-largest party following the 2015 and 2019 parliamentary elections and a principal opposition force, the Finns Party had a profound impact. Until 2019, the government was headed by the center-right Centre Party. Leadership then passed to the Social Democratic Party, marking a noticeable shift toward the left with the formation of a red-green coalition government that included the Greens. This political milieu was characterized by pronounced contrasts, particularly between supporters of the Greens and the Finns Party, on issues such as equality, migration, and environmental policies (Kekkonen and Ylä-Anttila, 2021). Although Finnish parties have historically shown relatively minor ideological differences, which facilitated cross-spectrum coalitions, recent electoral studies have identified two distinct blocs, a conservative bloc and a red-green liberal bloc (Westinen and Kestilä-Kekkonen, 2015), mirroring the two divergent governments of the period under review.

Noteworthy events during this period include highly publicized incidents of perceived child exploitation and accusations of rape by asylum seekers or refugees in Oulu just before Christmas 2018, which catapulted immigration to the forefront of the 2019 elections despite the relatively low number of refugees (Arter, 2020). In addition, debates on reforming the trans law and the ramifications of the COVID-19 pandemic, including intensified border controls and a surge in xenophobic, far-right rhetoric (Wondreys and Mudde, 2022), became more prominent. This context, marked by increasing anti-immigration sentiment, xenophobia, and heteronormative discourse in Finnish political speech (Unlu et al., 2024; Unlu and Kotonen, 2024), as the present study also indicates, warrants a thorough examination through the lens of Social Identity Theory (SIT), focusing on how cultural and ideological disparities are articulated and perceived.

This study analyzes hate speech on social media, particularly X, targeting Muslims and the LGBTQ+ community. It aims to understand how these platforms amplify extremist views and affect societal dynamics. This is essential for grasping the sociopolitical landscape and its influence on public opinion and political debate (Mossie and Wang, 2020). Our study examines online communication to identify the language that contributes to stigma and marginalization. This provides insights into systemic discrimination and its impact on marginalized individuals (Hazel and Kleyman, 2020; Koopmans and Olzak, 2004).

Our research makes significant contributions to the study of hate speech, both in the Finnish context and in the broader international landscape of online discourse analysis. To the best of our knowledge, this is one of the first longitudinal studies of X engagement in Finland, spanning 5 years and allowing us to monitor trends and dynamics in hate speech over time. While our study focuses on Finland, its significance extends beyond national boundaries, offering insights and methodological innovations applicable to hate speech research globally.

Our mixed-methods approach, which combines state-of-the-art machine learning methods with rigorous qualitative analysis, provides a robust framework for studying online hate speech that can be adapted to various cultural and linguistic contexts. Integrating text classification, topic modeling, and thematic analysis not only enhances the reliability and depth of our findings but also bridges computational and qualitative techniques. This addresses a critical gap in hate speech research, offering a more nuanced and contextually rich analysis than either purely computational or purely qualitative methods.

Unlike much prior research, which often focuses on general hate speech themes, specific groups (e.g., far-right), politicians, or particular platforms, our study distinguishes between hate speech directed at two distinct groups—Muslims and LGBTQ+ individuals—within the context of political discourse in Finland. This enables comparative analysis of hate speech across these communities, providing unique perspectives on the intersectionality and dynamics of online hate, which is relevant to researchers and policymakers worldwide.

By examining these trends over an extended period, our study provides insights into the evolution and persistence of hate speech in online environments, contributing to broader theoretical discussions on digital hate, social media dynamics, and the impact of sociopolitical events on online discourse. In addition, situating our findings within Finland—a welfare state known for its high levels of equality and social trust (Kestilä-Kekkonen and Söderlund, 2016; Niemi, 2016)—challenges prevailing assumptions about the relationship between societal factors and online hate speech. This aspect of our study contributes to ongoing debates about the universality of online hate and the complex interplay between offline sociopolitical contexts and online behaviors, offering a valuable comparative perspective for international research on digital hate and extremism.

As a case study of X, this research highlights the pervasiveness of online far-right discourses, which gain traction even in a country with an extensive welfare state and relatively high levels of equality and of political and social trust (Kestilä-Kekkonen and Söderlund, 2016; Koivula et al., 2021). This positioning strengthens the study’s contribution to a global understanding of hate speech dynamics.

This study aims to address two fundamental questions: How has hate speech against Muslims and the LGBTQ+ community on X changed over this period? And what does this speech reveal about how identity, biases, and structural prejudices interact in the expression of hate speech?

SIT, social media, and hate speech in Finland

SIT, as proposed by Tajfel and Turner (1979), explains how individuals define themselves through their affiliations with specific groups, based on factors such as nationality, race, religion, and other sociocultural attributes, which in turn leads to in-group favoritism and out-group bias (Ellemers and Haslam, 2012). This bias is further reinforced by processes of social categorization and comparison that favor in-groups over out-groups (Brewer, 1999; Jaspal, 2017).

Defining hate speech is a complex endeavor, with interpretations spanning legal, lexical, scientific, and practical contexts (Papcunová et al., 2023). While the literature lacks a universally accepted definition, most studies emphasize certain characteristics of hate speech, such as identifiable targets, incitement to violence or hatred, attacks on or diminishment of specific groups, implicit and explicit forms of expression, and the severity of the speech in its context (Khurana et al., 2022; Leonhard et al., 2018). Rather than delving deeply into theoretical debates, however, this study conceptualizes hate speech in line with the Council of Europe Committee of Ministers’ Recommendation No. R (97) 20 (1997), which defines it as expression that targets individuals or groups on the basis of identity aspects such as race, gender, or sexual orientation and contributes to prejudice and discrimination. Applied to hate speech, SIT suggests that in-groups tend to contrast out-groups unfavorably with themselves, which shapes perceptions and emotional responses (Ellemers and Haslam, 2012; Pettigrew, 2016). This dynamic is evident in hate speech against Muslims and LGBTQ+ communities, which is often rooted in biases of social categorization and comparison (Hewstone et al., 2002; Iyengar and Westwood, 2015).

The rise of social media has accentuated these dynamics. Political entities and their supporters often use divisive “us versus them” language, with far-right groups promoting traditional gender norms and anti-immigrant sentiments, while progressive groups advocate for gender equality and multiculturalism (Horsti, 2015).

In the context of digital mediation, social media platforms are not merely passive conduits for information but actively shape how information is produced, disseminated, and consumed (Forberg, 2022). They play a pivotal role in facilitating social categorization and sharpening in-group versus out-group distinctions (Baele et al., 2023). Previous research has underscored the transformative impact of digital technology on the formation and expression of social identity (Kim, 2019). Through their design and algorithms, these technologies not only enable but also amplify the visibility and reach of hate speech.

The phenomenon observed in Finland, where there is a marked increase in hate speech against Muslims and the LGBTQ+ community, can be analyzed through this lens. The pervasive nature of social media allows for such speech to gain prominence in the public sphere more than ever before. This visibility can be attributed to the algorithms that prioritize content that engages users, often privileging sensational or divisive content (Brady et al., 2017). Consequently, social media does not just reflect societal biases but, through these algorithmic biases, can also exacerbate them.

Moreover, the digital age has introduced a level of anonymity and disinhibition among Internet users. This anonymity can embolden individuals to express opinions or engage in behaviors they might refrain from in face-to-face interactions, leading to an increase in the production and dissemination of hate speech (Bastug et al., 2020; Mondal et al., 2017). This phenomenon suggests a correlation between the rise of social media and the visibility and acceptability of hate speech in public discourse (Wahlström et al., 2020).

In addressing the responsibility of social media in the proliferation of hate speech, it is crucial to consider the infrastructural and algorithmic effects that these platforms have on directing users toward certain types of content (Stieglitz and Dang-Xuan, 2013). The algorithms that govern the visibility of content on these platforms often create echo chambers, reinforcing existing prejudices and potentially radicalizing individuals by exposing them to more extreme content over time (Cinelli et al., 2020; Zuiderveen Borgesius et al., 2016). This infrastructural effect underlines the performative role of digital platforms in shaping social identities and public debate.

Situating this research within the existing literature shows that the dynamics of hate speech in the digital age are complex and multifaceted. Social media platforms, through their design and algorithms, have a significant impact on the production, dissemination, and consumption of hate speech (Wahlström and Törnberg, 2021). This impact is not neutral; it can magnify social divisions and reinforce the in-group and out-group biases described by SIT.

In Finland, public debate increasingly features anti-immigrant and xenophobic speech, prominently from far-right groups like the Finns Party, targeting Muslims and portraying Islam as a threat to national values and gender equality (Hatakka et al., 2017; Horsti, 2015; Mäkinen, 2016; Saarinen and Koskinen, 2022). These groups use anti-immigrant rhetoric and gender-based arguments, ostensibly advocating for the rights of women and LGBTQ+ individuals, while often concealing an objective of subjugating immigrant men (Lähdesmäki and Saresma, 2014).

Far-right hate speech themes revolve around protecting national and traditional values from perceived threats like immigration and Islam, with Muslim immigrants often depicted as monolithic and patriarchal (Lähdesmäki and Saresma, 2014; Saarinen and Koskinen, 2022). Despite rhetorical claims of supporting LGBTQ+ rights, these groups frequently target the LGBTQ+ community (Lähdesmäki and Saresma, 2014). In contrast, left-wing parties align with feminist causes, while right-wing factions like the Finns Party resist progressive gender policies and multiculturalism (Kantola and Lombardo, 2019; Nygård and Nyby, 2022).

The main actors spreading hate speech are far-right political parties like the Finns Party, their supporters, and anti-immigration activists (Backlund and Jungar, 2019; Horsti, 2015). They use social media to spread hate speech and radicalize individuals by exploiting fears about immigration and cultural identity (Hokka and Nelimarkka, 2020; Horsti, 2015). Politicians use blame deflection and justifications such as protecting the nation to downplay the harm of their rhetoric (Pettersson, 2019).

Thus, the current study contributes to the understanding of hate speech in the digital age by highlighting the central role of social media in influencing social identity dynamics and the manifestation of hate speech.

Methods

Our study adopted a threefold methodology to analyze hate speech on social media. The first phase was data collection, carried out with the R package academictwitteR (Barrie and Ho, 2021) and covering tweets posted over 5 years, from 1 January 2018 to 1 January 2023. Based on established literature (Kettunen and Paukkeri, 2021; Mäkinen, 2019; Rauta, 2023), our search used specific keywords indicative of potential hate speech. For LGBTQ+-related content, 13 search terms with 199 variants were used, while the Muslim-related corpus employed 14 keywords with 221 variants (see Table 1 in the Supplemental Appendix). These terms, adapted to Finnish linguistic nuances, yielded a dataset of 320,045 tweets from 30,360 unique X profiles.
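Because Finnish is highly inflected, a single search term appears in many surface forms, which is presumably why the search terms were expanded into variants. The Python sketch below illustrates local whole-word matching against such variant lists; it is not the academictwitteR query the study ran, and the corpus names and the handful of Finnish variants are hypothetical stand-ins for the full lists in Table 1 of the Supplemental Appendix.

```python
import re

# Hypothetical, heavily shortened variant lists. The study's real lists
# (13 LGBTQ+ terms with 199 variants, 14 Muslim terms with 221 variants)
# are in Table 1 of the Supplemental Appendix and are not reproduced here.
VARIANTS = {
    "muslim": ["muslimi", "muslimit", "muslimien", "islam", "islamin"],
    "lgbtq": ["sateenkaari", "sateenkaariperhe", "pride", "translaki"],
}


def compile_patterns(variants: dict[str, list[str]]) -> dict[str, re.Pattern]:
    """Build one case-insensitive, whole-word regex per corpus."""
    patterns = {}
    for corpus, terms in variants.items():
        alternation = "|".join(re.escape(term) for term in terms)
        patterns[corpus] = re.compile(rf"\b(?:{alternation})\b", re.IGNORECASE)
    return patterns


def assign_corpora(text: str, patterns: dict[str, re.Pattern]) -> list[str]:
    """Return the sorted names of all corpora with at least one matching variant."""
    return sorted(corpus for corpus, pattern in patterns.items() if pattern.search(text))


patterns = compile_patterns(VARIANTS)
print(assign_corpora("Muslimit ja pride-kulkue", patterns))
```

Under whole-word matching, a derived form such as `islamilainen` ("Islamic") does not match `islam`, so each relevant form has to be listed explicitly, or the patterns relaxed to prefix matches at the cost of more false positives.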

Our research, involving human subjects and their opinions, received ethical clearance from the Finnish Institute for Health and Welfare (THL). We prioritized identity protection by anonymizing and paraphrasing identifiable quotes during analysis.

Text classification

Data annotation

Classifying hate speech is a complex task, influenced by social, cultural, and linguistic factors. Our approach was multifaceted, taking into account these nuances (Gagliardone et al., 2015; Laaksonen et al., 2020). The inherent challenge lies in understanding hate speech’s context-dependency, its disguise under moral language on public platforms, and the evolving nature of social media communications, including sarcasm, emojis, and niche terminology (Berbrier, 1999; Brady et al., 2017; Fielitz and Ahmed, 2021).

We conducted a thorough literature review and adapted the hate speech classification framework that Waseem and Hovy (2016) originally developed for Twitter (now X), which is rooted in the research of McIntosh (2003). Their criteria cover 11 types of discourse: tweets that use sexist or racial slurs; attack a minority; seek to silence a minority; criticize a minority without a well-founded argument; promote, but do not directly use, hate speech or violent crime; criticize a minority using a straw man argument; overtly misrepresent the truth or seek to distort views on a minority with unfounded claims; endorse problematic hashtags; negatively stereotype a minority; defend xenophobia or sexism; or are posted under a screen name that is offensive by the preceding criteria, where the tweet itself is at best ambiguous and concerns a topic meeting any of those criteria.

To make the framework more comprehensive, we extended it to address several intricate issues. We trained our experienced annotators on various facets of hate speech, including, but not limited to, identifying target groups (De Gibert et al., 2018); deciphering humor, sarcasm, and polysemy and interpreting emojis (Fielitz and Ahmed, 2021; Vidgen et al., 2019); discerning implicit and explicit forms of hate speech (Waseem et al., 2017); recognizing offensive language (Davidson et al., 2017; Kocoń et al., 2021); distinguishing hostility, criticism, and counter-speech (Vidgen et al., 2020); and assessing the severity of the discourse (Leonhard et al., 2018; Papcunová et al., 2023). For simplicity and computational efficiency, annotators classified tweets into three hate speech categories: “hate speech” if such content was identified, “no” if none was present, and “not sure” if the determination was unclear.

We acknowledge ongoing debates in the literature regarding the scope of hate speech classification frameworks. Some studies argue for distinguishing hate speech from offensive or critical language that may not be inherently hateful (Davidson et al., 2017; Gelber, 2021; Madukwe and Gao, 2019) and propose frameworks that separate harmful intent from merely controversial expressions (Vilar-Lluch, 2023). These discussions suggest that hate speech classification should either distinguish among types of speech, such as offensive, hateful, racist, and sexist, rather than collapsing them into a binary classification (Fortuna and Nunes, 2019), or add further dimensions such as offensiveness (Roß et al., 2016) and aggressiveness (Basile et al., 2019; Poletto et al., 2017). While these conceptual distinctions are important, computational limitations often lead researchers, including ourselves, to adopt a binary classification approach (Basile et al., 2019; De Gibert et al., 2018; Gröndahl et al., 2018).

In our framework, posts labeled as “hate” specifically exhibit targeted hostility, prejudice, and potential harm toward specific groups, while the “negative sentiment” category includes posts that contain criticism or offensive language without explicit hate speech. This approach aligns with more nuanced definitions of hate speech (Khurana et al., 2022; Leonhard et al., 2018). Although we adopted Waseem and Hovy’s (2016) framework, we acknowledge that their definition may encompass certain types of “criticism” toward minority groups that, while possibly inappropriate or unjustified, do not necessarily constitute hate speech. We therefore ensured that such instances of criticism were not classified as hate speech, reserving the term “hate speech” for language explicitly conveying hostility, animosity, or prejudice. Accordingly, we define hate speech in our framework as comments that are both hostile and hateful.

Annotators also conducted sentiment analysis, classifying content into negative, neutral, and positive sentiment. The negative sentiment category was broader than hate speech, encompassing all forms of criticism, disagreement, or dislike, while positive sentiment captured tweets supportive of or admiring toward the target group. The sentiment classification aimed to capture the general attitude toward the target group, with negative sentiment encompassing both hate speech and other negative attitudes.

After training, annotators followed a structured procedure to classify each post. They first identified the target group of each tweet (Muslim, LGBTQ+, both, or neither) by determining who was the primary subject of discussion. If no specific target group was identified, the post was labeled “neither,” and the annotators moved on to the next post. If the content targeted one of these groups, the annotators then assessed the sentiment toward the identified group and finally re-evaluated the text against the hate speech categories. This stepwise procedure let annotators systematically narrow their focus to identifying hate speech. For instance, a post might exhibit negative sentiment without qualifying as hate speech (see hate speech samples in Table 6 in the Supplemental Appendix).
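The three-step annotation procedure can be encoded as a consistency check on each annotation record. The sketch below is an illustration, not the study's annotation tool: the label strings and the `Annotation` record are hypothetical, and the rule that a hate speech label implies negative sentiment is our reading of the statement that negative sentiment encompasses hate speech.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical label vocabularies mirroring the three annotation steps.
TARGETS = {"muslim", "lgbtq", "both", "neither"}
SENTIMENTS = {"negative", "neutral", "positive"}
HATE_LABELS = {"hate speech", "no", "not sure"}


@dataclass
class Annotation:
    target: str
    sentiment: Optional[str] = None
    hate: Optional[str] = None


def validate(a: Annotation) -> list[str]:
    """Return the consistency problems in one annotation (empty list if none)."""
    # Step 1: target group.
    if a.target not in TARGETS:
        return [f"unknown target: {a.target!r}"]
    if a.target == "neither":
        # Annotation stops here, so no further labels are expected.
        if a.sentiment is not None or a.hate is not None:
            return ["'neither' posts should carry no further labels"]
        return []

    problems = []
    # Step 2: sentiment toward the identified group.
    if a.sentiment not in SENTIMENTS:
        problems.append(f"missing or unknown sentiment: {a.sentiment!r}")
    # Step 3: hate speech category.
    if a.hate not in HATE_LABELS:
        problems.append(f"missing or unknown hate label: {a.hate!r}")
    # Negative sentiment is the broader category, so hate speech implies it.
    if a.hate == "hate speech" and a.sentiment != "negative":
        problems.append("hate speech should also be labeled negative")
    return problems
```

A record such as `Annotation("lgbtq", "negative", "no")` passes, matching the case of a post with negative sentiment that does not qualify as hate speech.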

For data annotation, a random subset of 4,381 tweets was extracted. During preprocessing, tweets were filtered out if they were retweets, had fewer than 30 characters, or consisted solely of a URL without accompanying text. Four native Finnish-speaking research assistants performed the manual text classification. The group had experience with previous annotation tasks but lacked expertise in hate speech. While some argue that non-expertise can be beneficial for certain tasks (Waseem, 2016), others find that expert annotators yield superior results compared to crowdsourced annotations (Mubarak et al., 2017). In addition, it has been argued that annotators who share the cultural background and language expertise of the target group produce more reliable annotations (Mossie and Wang, 2020). Annotation training consisted of 10 iterative sessions aimed at reaching majority-vote consensus, with 60 posts reviewed in each iteration and inter-rater reliability (IRR) calculated using Krippendorff’s alpha. Annotator agreement strongly correlates with the quality of hate content recognition (Kocoń et al., 2021). Despite our efforts, the complex nature of social media posts posed significant challenges, particularly the prevalence of sarcasm, slang, and culturally specific references. Annotators sometimes struggled with terminology related to LGBTQ+ issues and Islamic beliefs that appeared in the dataset. For example, references to organizations such as SETA (an NGO advocating for LGBTQ+ rights in Finland) could be misinterpreted if annotators were unfamiliar with their context or purpose.
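The exclusion rules for the annotation sample translate directly into a filter. This is a minimal sketch under stated assumptions: the retweet flag is taken as given (for example from the platform metadata), the 30-character threshold is applied to the whole text including any URL, and the URL pattern is a simple hypothetical one.

```python
import re

# Hypothetical URL pattern covering http(s) links such as t.co short links.
URL_RE = re.compile(r"https?://\S+")


def keep_for_annotation(text: str, is_retweet: bool, min_chars: int = 30) -> bool:
    """Apply the three exclusion rules described for the annotation sample."""
    if is_retweet:
        return False
    # Assumption: the length threshold counts the whole text, URLs included.
    if len(text.strip()) < min_chars:
        return False
    # Exclude posts that contain nothing but URLs.
    if not URL_RE.sub("", text).strip():
        return False
    return True
```

Whether the filter was applied before or after drawing the random sample is not stated; applying it first guarantees that every sampled tweet is annotatable.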

Furthermore, we observed that the annotators’ individual religious, cultural, and personal backgrounds influenced their judgments, particularly when interpreting humor or sarcasm, which made high reliability for the hate speech categories harder to achieve. Whereas previous annotation tasks with the same group on topics such as misinformation, political trust, and vaccine hesitancy reached good IRR scores within 5–6 sessions, this task required 10 sessions because of its additional complexity. The highest IRR scores for the target group, sentiment, and hate speech categories were 0.84, 0.67, and 0.64, respectively. 2
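Krippendorff's alpha compares the observed disagreement among annotators with the disagreement expected by chance, alpha = 1 - D_o / D_e, and tolerates missing ratings. The pure-Python sketch below computes the nominal-level version from a coincidence matrix. Treating the labels as nominal is our assumption, since the level of measurement is not stated; in practice a maintained package would normally be used.

```python
import math
from collections import Counter
from itertools import permutations


def krippendorff_alpha_nominal(units):
    """Nominal Krippendorff's alpha.

    `units` holds one list of labels per coded item; a missing rating is simply
    left out, so lists may differ in length. Items with fewer than two ratings
    cannot be paired and are ignored.
    """
    # Coincidence matrix: each ordered pair of ratings from different annotators
    # on the same item contributes 1 / (m - 1), where m is the number of ratings.
    coincidence = Counter()
    for labels in units:
        m = len(labels)
        if m < 2:
            continue
        for a, b in permutations(labels, 2):
            coincidence[(a, b)] += 1 / (m - 1)

    # Marginal totals n_c and the grand total n of pairable ratings.
    n_c = Counter()
    for (a, _), weight in coincidence.items():
        n_c[a] += weight
    n = sum(n_c.values())

    # For nominal data every mismatch counts as a disagreement of 1.
    observed = sum(w for (a, b), w in coincidence.items() if a != b)
    expected = sum(n_c[a] * n_c[b] for a in n_c for b in n_c if a != b)
    if expected == 0:
        return math.nan  # only one category ever used: alpha is undefined
    # Equivalent to 1 - D_o / D_e with D_o = observed / n, D_e = expected / (n(n-1)).
    return 1 - (n - 1) * observed / expected
```

For two annotators labeling four posts as `[a, a], [a, b], [b, b], [b, b]`, the function returns 16/30 (about 0.53), well below the raw percent agreement of 75%, because part of the agreement on the more frequent category is expected by chance.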

FinBERT text classification models

We adapted FinBERT, a state-of-the-art Finnish language model published by researchers at the University of Turku (Virtanen et al., 2019), for text classification using the annotated tweet samples. We trained five models, each tailored to a distinct task: identifying the target group of tweets, and classifying sentiment and hate speech separately for LGBTQ+- and Muslim-targeting tweets. Performance varies across tasks. FinBERT achieves 94% accuracy in target group classification, 78% and 75% in detecting sentiment toward LGBTQ+ and Muslim groups, respectively, and 83% and 71% in classifying hate speech against these two groups. Further validation details are reported in Table 2 of the Supplemental Appendix.
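Because hate speech is a minority class (24% of the classified tweets in the full corpus), accuracy alone can flatter a classifier that favors the majority label, so per-class precision, recall, and F1 are a useful complement. The sketch below computes these from parallel lists of gold and predicted labels. The function name is hypothetical, and which metrics beyond accuracy appear in Supplemental Table 2 is not stated here.

```python
from collections import Counter


def evaluate(gold, pred):
    """Accuracy, per-class (precision, recall, F1), and macro-averaged F1."""
    if not gold or len(gold) != len(pred):
        raise ValueError("gold and pred must be non-empty and of equal length")
    accuracy = sum(g == p for g, p in zip(gold, pred)) / len(gold)

    true_pos = Counter(g for g, p in zip(gold, pred) if g == p)
    gold_counts, pred_counts = Counter(gold), Counter(pred)
    per_class = {}
    for label in sorted(set(gold) | set(pred)):
        precision = true_pos[label] / pred_counts[label] if pred_counts[label] else 0.0
        recall = true_pos[label] / gold_counts[label] if gold_counts[label] else 0.0
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        per_class[label] = (precision, recall, f1)

    # Macro F1 weights each class equally, so the minority "hate speech"
    # class counts as much as the majority class.
    macro_f1 = sum(f1 for _, _, f1 in per_class.values()) / len(per_class)
    return accuracy, per_class, macro_f1
```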

The lower accuracy of the model targeting hate speech against Muslim communities may reflect greater disagreement among annotators about what constitutes hate speech under our definition. This discrepancy might stem, in part, from the annotators’ limited familiarity with Muslim culture and its various contexts (Obermaier et al., 2023). As illustrated below, several topics identified as hate speech touch upon elements intrinsic to the Islamic faith, including its belief system, practices, Quranic verses, and Sharia law. This highlights the complexity and cultural specificity of accurately identifying hate speech in this domain, which invites subjective interpretation (Khurana et al., 2022; Kshirsagar et al., 2018).

Moreover, Åkerlund’s (2020) research indicates that influential users discussing hate speech often employ sarcasm, irony, and a neutral stance, leaving much of the interpretation to the reader. This strategy facilitates engagement with less radical audiences and allows their posts to remain on the platforms, but it also complicates annotation. More generally, in subjective labeling tasks such as hate speech detection, disagreement among individual labelers can be difficult to resolve (Sang and Stanton, 2022). Nonetheless, studying these discrepancies among annotators can yield valuable insights (Fell et al., 2021).

Topic modeling

Topic modeling is a technique used to identify and analyze topics in a collection of texts. BERTopic, 3 a Python library, is a topic modeling tool that clusters documents according to the semantic similarity of their BERT-based embeddings (Grootendorst, 2022). Compared with alternative approaches, including Top2Vec, non-negative matrix factorization (NMF), and latent Dirichlet allocation (LDA), BERTopic produces not only distinct topics but also novel insights from similar texts (Egger and Yu, 2022).

We generated topic models for the negative sentiment and hate speech data related to LGBTQ+ and Muslim content. BERTopic consists of a series of customizable steps for generating topic representations of text documents: extracting document embeddings, reducing their dimensionality, clustering, tokenizing, applying a weighting scheme, and fine-tuning the topic representations. The specific training parameters of our BERTopic models for each data category are detailed in Table 3 of the Supplemental Appendix.
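The weighting-scheme step in BERTopic is class-based TF-IDF (c-TF-IDF): all documents in a topic are treated as one class, and term t in class c receives the weight W(t, c) = tf(t, c) × log(1 + A / f(t)), where tf(t, c) is the term's frequency in the class (normalized by class size), f(t) is its frequency across all classes, and A is the average number of words per class (Grootendorst, 2022). The toy sketch below follows that published formula in pure Python. It is not the library's implementation, which operates on sparse count matrices and exposes further options, and the Finnish tokens are invented.

```python
import math
from collections import Counter


def c_tf_idf(docs_per_topic):
    """Class-based TF-IDF following the formula in Grootendorst (2022).

    `docs_per_topic` maps a topic id to its tokenized documents.
    Returns {topic: {term: weight}}.
    """
    # Treat each topic as one concatenated "class document".
    tf = {topic: Counter(tok for doc in docs for tok in doc)
          for topic, docs in docs_per_topic.items()}
    # f(t): frequency of each term across all classes.
    total = Counter()
    for counts in tf.values():
        total.update(counts)
    # A: average number of words per class.
    avg_words = sum(sum(c.values()) for c in tf.values()) / len(tf)

    weights = {}
    for topic, counts in tf.items():
        n_words = sum(counts.values())
        weights[topic] = {
            term: (count / n_words) * math.log(1 + avg_words / total[term])
            for term, count in counts.items()
        }
    return weights


def top_terms(weights, topic, k=3):
    """The k highest-weighted terms of a topic, ties broken alphabetically."""
    ranked = sorted(weights[topic].items(), key=lambda kv: (-kv[1], kv[0]))
    return [term for term, _ in ranked[:k]]


weights = c_tf_idf({
    0: [["moskeija", "rukous"], ["moskeija", "sharia"]],
    1: [["pride", "kulkue"], ["pride", "moskeija"]],
})
print(top_terms(weights, 0), top_terms(weights, 1))
```

In the toy data, `moskeija` ("mosque") occurs in both topics, so in topic 1 its weight falls below that of `kulkue` ("parade"), which occurs just as rarely there but nowhere else.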

Thematic analysis

Our research utilized thematic analysis to examine the most representative and engaged tweets identified through topic modeling. Thematic analysis, a qualitative method, helped us explore patterns and meanings in tweets, focusing on sentiments and behaviors expressed online (Clarke and Hayfield, 2015). This involved coding and categorizing data to uncover consistent themes.

The analysis proceeded in two stages. First, we used the BERTopic results to select the 20 most representative tweets for each identified topic. Next, we analyzed the 20 most engaged tweets, based on likes, retweets, and comments, to understand which topics garnered the most user interaction. Because of space constraints, this phase was limited to tweets classified as hate speech.
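The two selection stages can be sketched as top-k queries. The field names (`topic`, `prob`, `label`, `likes`, `retweets`, `replies`) are hypothetical; ranking by topic probability is our assumption about how representativeness was measured; and the engagement score is an unweighted sum, since the text does not say how likes, retweets, and comments were combined. Topic `-1` is skipped because BERTopic uses it for outlier documents.

```python
import heapq
from collections import defaultdict

OUTLIER_TOPIC = -1  # BERTopic's label for documents not assigned to any topic


def representative_per_topic(tweets, k=20):
    """Stage 1: the k tweets with the highest topic probability in each topic."""
    by_topic = defaultdict(list)
    for tweet in tweets:
        if tweet["topic"] != OUTLIER_TOPIC:
            by_topic[tweet["topic"]].append(tweet)
    return {
        topic: heapq.nlargest(k, members, key=lambda t: t["prob"])
        for topic, members in by_topic.items()
    }


def engagement(tweet):
    """Hypothetical engagement score: unweighted sum of likes, retweets, and replies."""
    return tweet["likes"] + tweet["retweets"] + tweet["replies"]


def most_engaged(tweets, k=20, label="hate speech"):
    """Stage 2: the k most engaged tweets carrying the given label."""
    labeled = (t for t in tweets if t["label"] == label)
    return heapq.nlargest(k, labeled, key=engagement)
```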

The analysis culminated in grouping topics into broader thematic clusters. We note that these themes are not inherent in the data but result from our interpretive process (Clarke and Hayfield, 2015). This approach yielded insights into the nature and dynamics of hate speech within the X community.

Results

Based on the output of the text classification model, the analyzed corpus comprised 263,103 tweets from 24,185 distinct X profiles: 55,411 standalone tweets (without any mentions), 174,076 mentions (including replies, comments, and mentions), and 33,616 retweets.

Text classification

In our dataset, a significant portion of the posts, 190,705 (72%), targeted Muslims, while 72,398 posts (28%) targeted LGBTQ+ individuals. Our text classification model identified 63,067 tweets as hate speech, accounting for 24% of the total analyzed. Of these, a substantial 87% (54,606 tweets) targeted Muslims, while the remaining 13% (8,461 tweets) were directed at LGBTQ+ individuals. In addition, the model detected 161,352 tweets exhibiting negative sentiment, comprising 61% of the total. Within this category, a predominant 88% (141,476 tweets) targeted Muslims, and 12% (19,876 tweets) targeted LGBTQ+ individuals.
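The percentages above can be reproduced from the raw counts, which also separates two different ratios: the share of all hate speech that targets each group, and the rate of hate speech within each group's tweets. The second ratio is not reported in the text but follows directly from the counts: 28.6% of Muslim-targeting tweets versus 11.7% of LGBTQ+-targeting tweets were classified as hate speech, so the 87% figure reflects both the larger Muslim corpus and a higher within-group rate.

```python
# Counts as reported in the Results section.
TOTAL = 263_103
GROUP_TOTALS = {"Muslim": 190_705, "LGBTQ+": 72_398}
HATE = {"Muslim": 54_606, "LGBTQ+": 8_461}
NEGATIVE = {"Muslim": 141_476, "LGBTQ+": 19_876}


def pct(part: int, whole: int) -> float:
    """Percentage rounded to one decimal place."""
    return round(100 * part / whole, 1)


def summarize(label_counts: dict[str, int]) -> dict:
    """Three views of one label's counts: corpus share, composition, within-group rate."""
    label_total = sum(label_counts.values())
    return {
        # Share of the whole corpus carrying this label (e.g. 24% hate speech).
        "share_of_corpus": pct(label_total, TOTAL),
        # How the labeled tweets split between target groups (e.g. 87% / 13%).
        "composition": {g: pct(n, label_total) for g, n in label_counts.items()},
        # How often each group's tweets carry this label (not reported in the text).
        "rate_within_group": {g: pct(n, GROUP_TOTALS[g]) for g, n in label_counts.items()},
    }


# The two target groups partition the corpus exactly.
assert sum(GROUP_TOTALS.values()) == TOTAL

hate_summary = summarize(HATE)
negative_summary = summarize(NEGATIVE)
print(hate_summary)
print(negative_summary)
```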

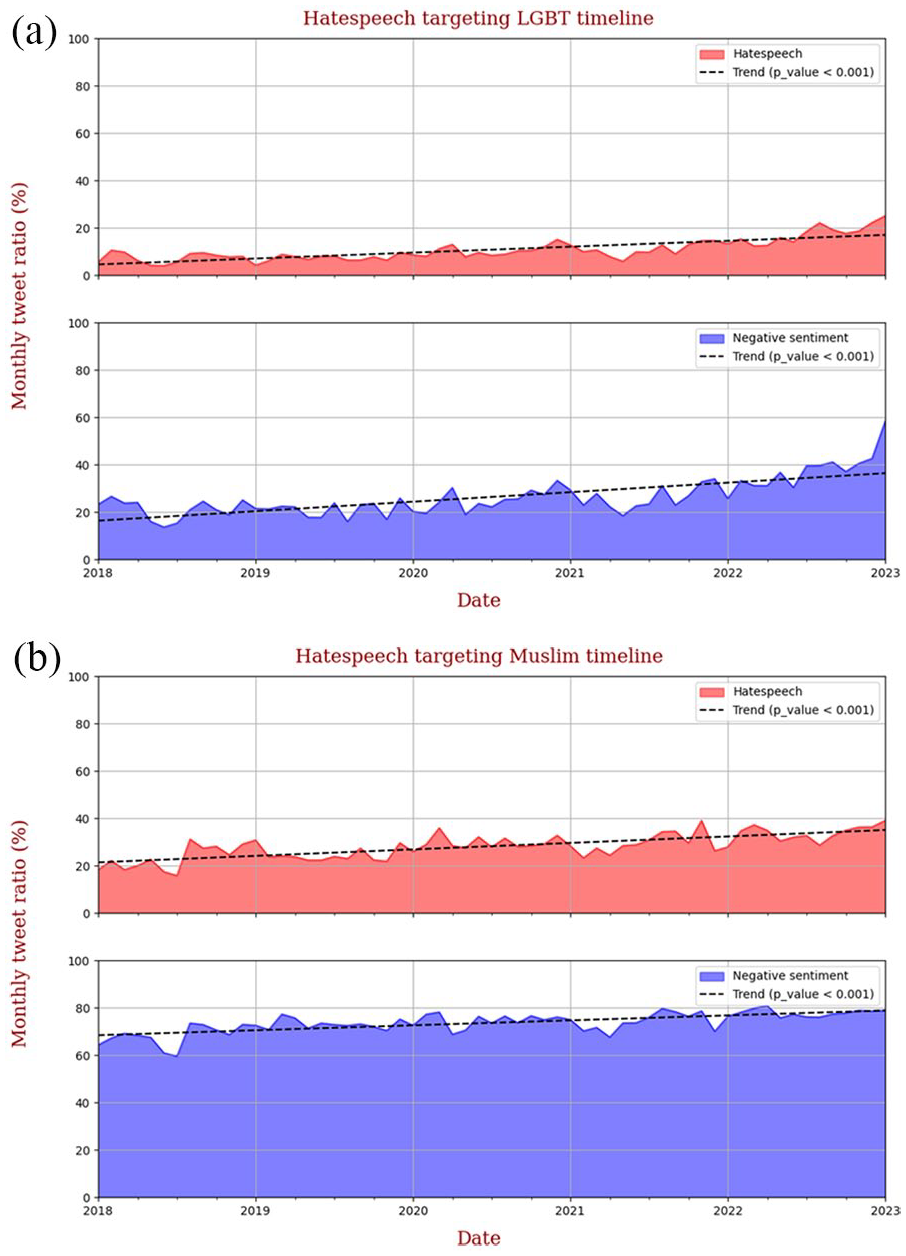

Figure 1(a) and (b) present the monthly proportions of hate speech and negative sentiment tweets targeting LGBTQ+ individuals and Muslims, respectively, as a percentage of total monthly tweet counts. Both panels share two characteristics: negative sentiment tweets are substantially more prevalent than hate speech, and both hate speech and negative sentiment against both target groups follow an overall increasing trajectory over time.

(a) Timeline of hate speech and negative attitudes toward LGBTQ+ individuals. (b) Timeline of hate speech and negative attitudes toward Muslims.

A notable rise in negative sentiment and hate speech against LGBTQ+ individuals was observed from mid-2021 to the end of 2022, an increase of up to 50% compared with the previous two-year period (2018–2020) (t-test results for the 2021–2022 comparison are given in Table 7 in the Supplemental Appendix). Moreover, in both Figure 1(a) and (b), the trend lines for hate speech and negative sentiment in the monthly tweet ratio targeting the Muslim and LGBTQ+ groups (2018–2023) have p-values below 0.001. For the Muslim target group, the proportion of negative sentiment tweets remained persistently high, above 70%, with a small increase between 2021 and 2022. Notably, the upward trend in hate speech against Muslims was more pronounced and sustained across the entire period from 2018 to 2022.

Topic modeling

Figures 1(a) and 1(b) illustrate the disparity between the proportions of negative sentiment and hate speech tweets. Roughly two and a half times as many negative sentiment tweets were detected (161,352 versus 63,067), which contributed to BERTopic discovering more topics in the negative sentiment category than in hate speech for both target groups. In tweets targeting LGBTQ+ individuals, 46 topics were identified in the negative sentiment category, compared to 41 in hate speech. For Muslims, the disparity was even more pronounced, with 94 topics emerging in negative sentiment versus only 32 in hate speech. The differing volume and diversity of content between these categories can be attributed to various factors, including event-driven discussions on X related to Muslims. Due to page constraints, the remainder of this article focuses on hate speech posts.

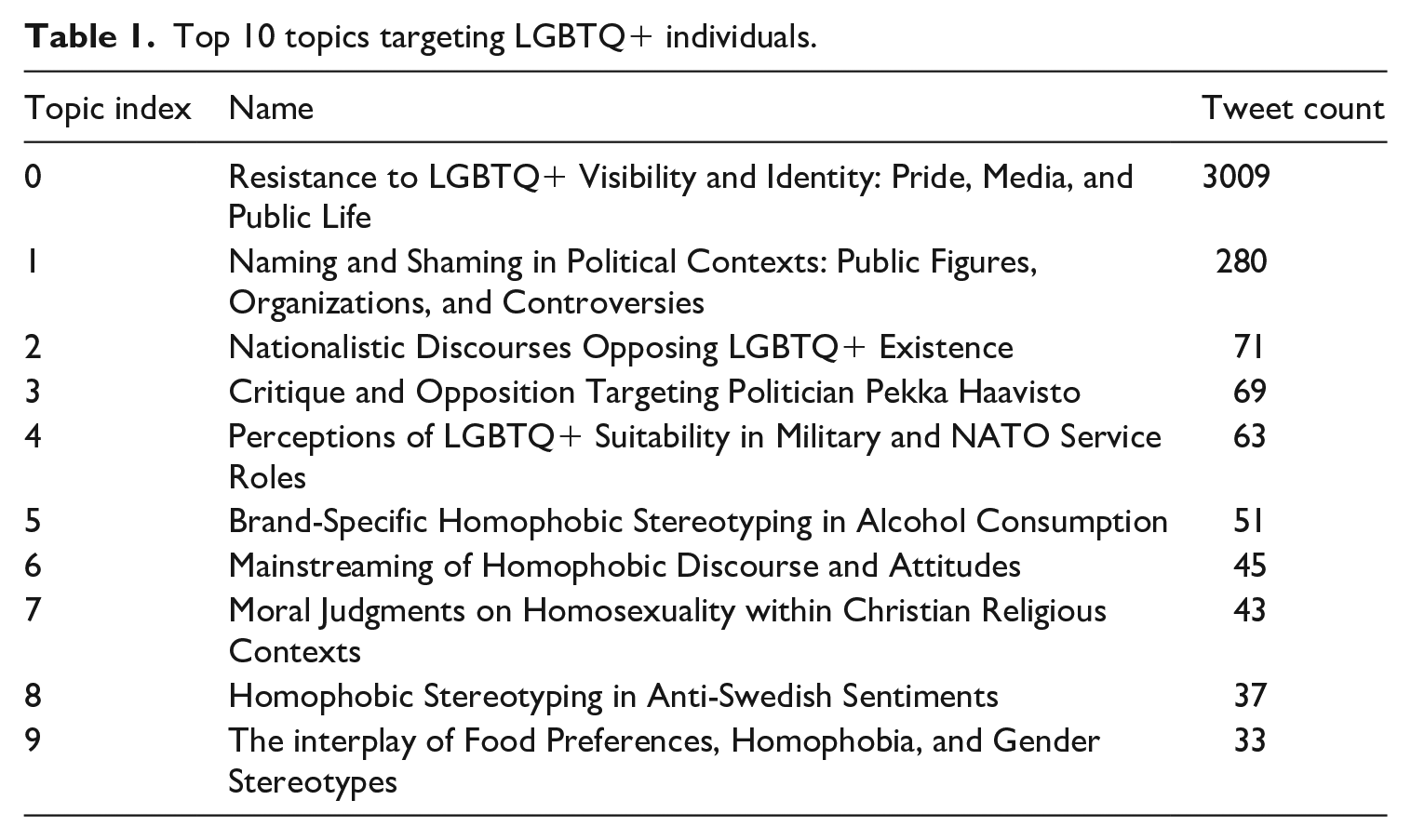

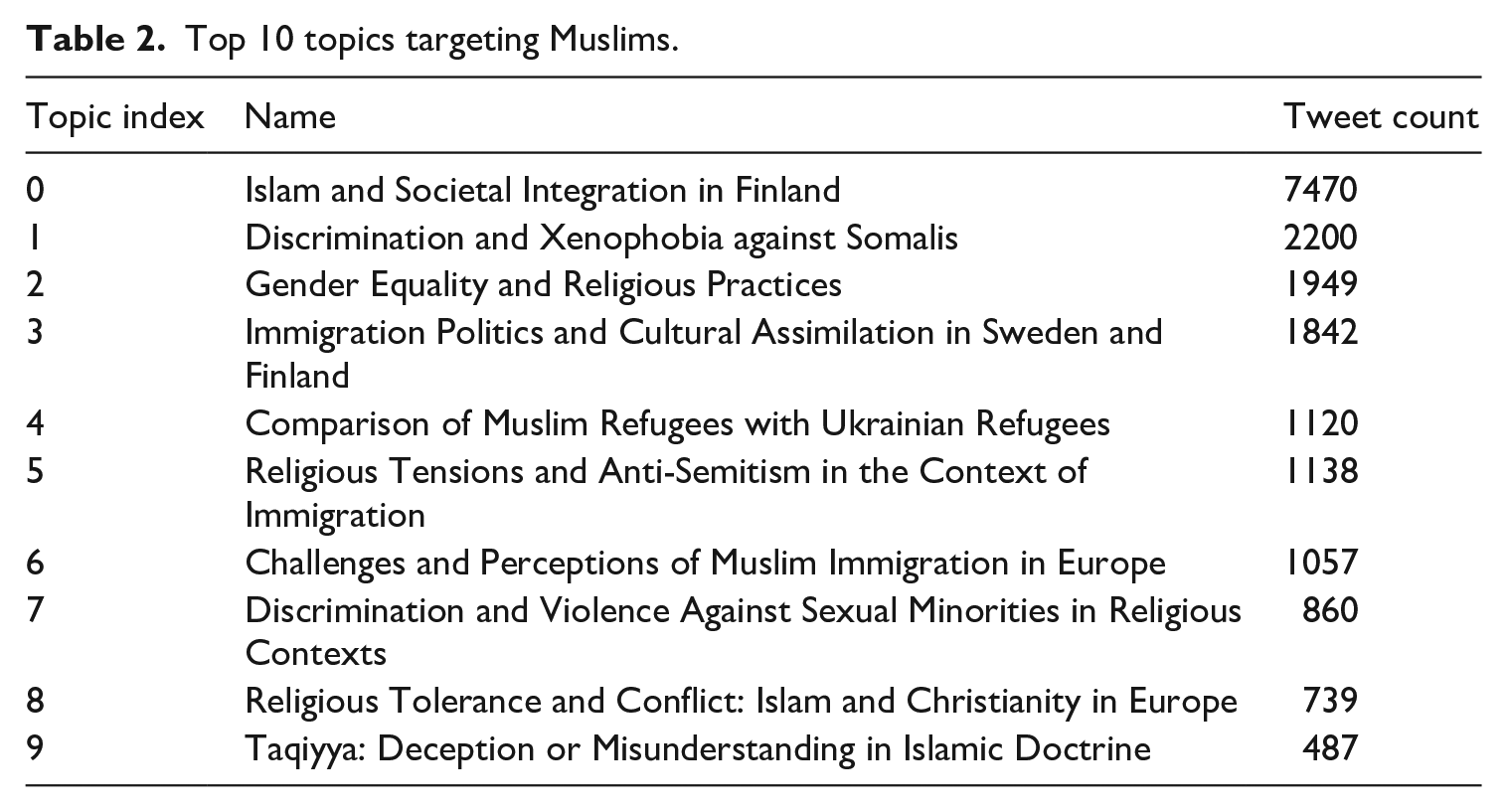

Despite their smaller volume, hate speech tweets targeting LGBTQ+ individuals span more topics than hate speech directed at Muslims. However, LGBTQ+-targeting hate speech is concentrated in a single dominant topic, whereas hate speech against Muslims is distributed across a broader range of issues. Table 1 shows that the most dominant topic among LGBTQ+ hate speech posts, “Resistance to LGBTQ+ Visibility and Identity: Pride, Media, and Public Life,” is wide-ranging, whereas smaller topic groups, such as “Critique and Opposition Targeting Politician Pekka Haavisto” and “Brand-Specific Homophobic Stereotyping in Alcohol Consumption,” are more specific and focused on particular entities. This specificity appears to contribute to the large disparity in tweet counts among LGBTQ+ hate speech topic groups. A similar pattern appears in Table 2, where the most popular hate speech topic targeting Muslims, “Islam and Societal Integration in Finland,” is more general than smaller, more specialized topic groups such as “Taqiyya: Deception or Misunderstanding in Islamic Doctrine.”

Top 10 topics targeting LGBTQ+ individuals.

Top 10 topics targeting Muslims.

LGBTQ+ community

Table 1 summarizes the 10 most dominant discourses in hate speech targeting LGBTQ+ individuals. Notably, the predominant topic, “Resistance to LGBTQ+ Visibility and Identity: Pride, Media, and Public Life,” alone accounts for 70% of the BERTopic-labeled hate speech tweets targeting this group. Key points in this topic include widespread use of derogatory language toward LGBTQ+ individuals, resentment toward their visibility in the media and at public events such as Pride, and misunderstanding or rejection of transgender and non-binary identities. Many posters portray LGBTQ+ representation as an imposition on societal norms, conflating these identities with negative stereotypes. The discussion is marked by an intolerant tone, with little empathy for LGBTQ+ rights and a perception of their visibility as a threat to traditional values. This reflects deep-rooted biases and prejudices, underscoring the need for targeted advocacy and education against homophobia and transphobia.

The topics “Naming and Shaming in Political Contexts: Figures, Organizations, and Controversies” and “Critique and Opposition Targeting Politician Pekka Haavisto” focus primarily on naming and shaming political figures and organizations. They also revolve around controversies created over the identities or stances of these entities within a political framework. Pekka Haavisto is an openly gay politician from the Greens who served as Minister for Foreign Affairs from 2019 to 2023. During his tenure, his decision to repatriate the children of Finnish ISIS fighters held in Syria stirred an especially heated debate. Meanwhile, the topics “Nationalistic Discourses Opposing LGBTQ+ Existence” and “Perceptions of LGBTQ+ Suitability in Military and NATO Service Roles” center on national identity, using exclusionary language to stigmatize LGBTQ+ individuals, particularly in contexts such as exclusion from military service. Finnish NATO membership rose to the top of the political agenda after Russia’s invasion of Ukraine in 2022, and its centrality was reflected in other topics as well, including those on LGBTQ+ individuals.

The topic “Mainstreaming of Homophobic Discourse and Attitudes” involves speech that normalizes homophobic language and attitudes. Likewise, the topics “Brand-Specific Homophobic Stereotyping in Alcohol Consumption” and “Interplay of Food Preferences, Homophobia, and Gender Stereotypes” explore how certain behaviors and characteristics are stereotypically linked to LGBTQ+ individuals. Additionally, the ninth topic focuses on homophobic stereotyping within anti-Swedish sentiment, while the topic “Moral Judgments on Homosexuality within Christian Religious Contexts” addresses the intersection of Christianity and views on homosexuality, often framing the latter as a moral issue. These discussions draw on religious texts, particularly the Bible, to justify negative views of homosexuality, labeling it “sinful” or contrary to God’s will. The topic shows a clear conflict between religious doctrine and the acceptance of LGBTQ+ rights.

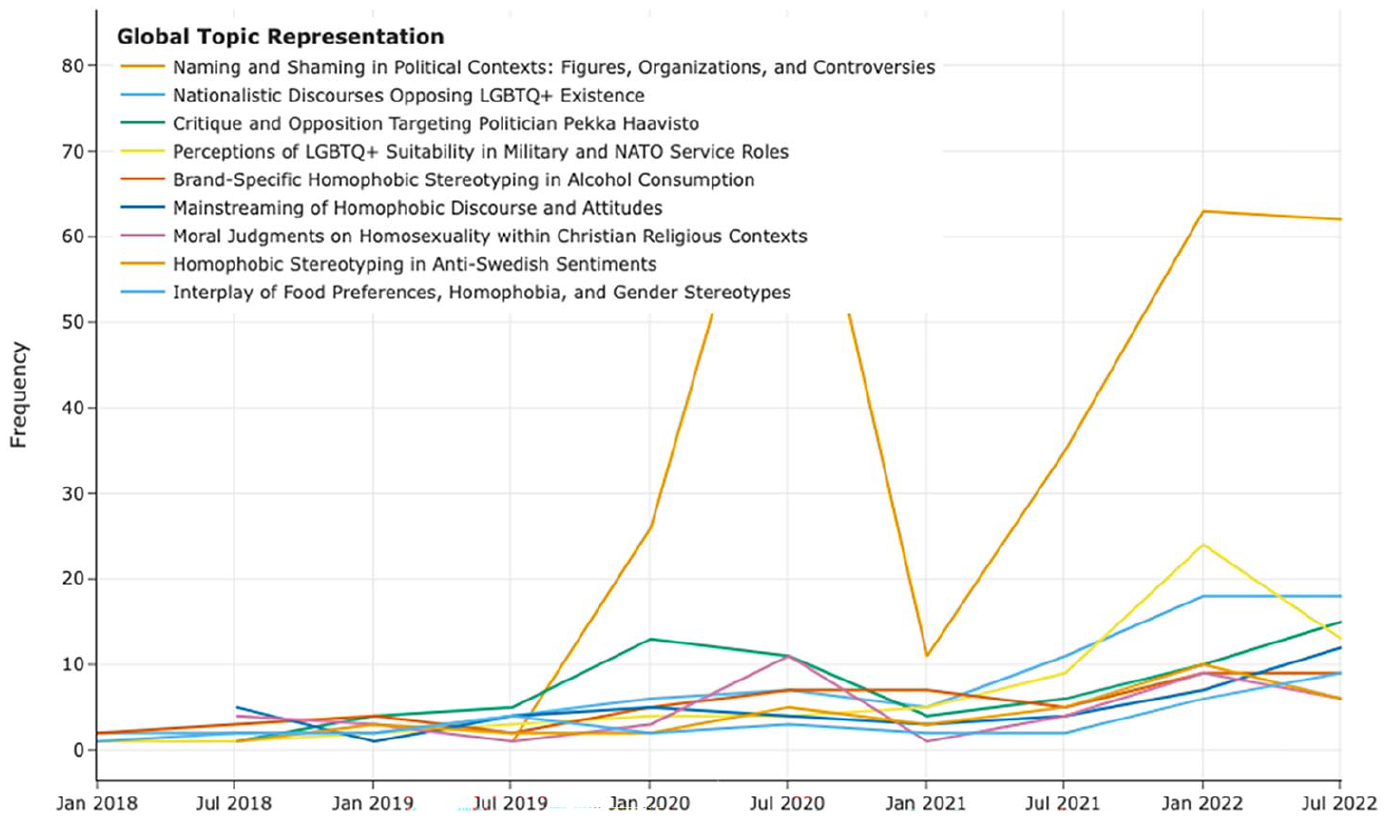

As Table 1 shows, the most prominent topic targeting LGBTQ+ individuals accounts for over 3,000 tweets; it was therefore deliberately excluded from the topic timeline in Figure 2 to allow a more nuanced examination of secondary themes. For a more comprehensive overview of hate speech targeting the LGBTQ+ community, a full list of topics (Table 4) and a timeline plot of the top 20 topics (Figure 1) are provided in the Supplemental Appendix.
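Preparing such a timeline, with the dominant topic removed before plotting, can be sketched in plain Python. The sketch assumes one (month, topic id) pair per tweet and treats topic id -1 as BERTopic's outlier topic; the function name and its parameters are hypothetical. BERTopic also provides a built-in `topics_over_time` method for binned topic frequencies, which this version does not attempt to replicate.

```python
from collections import Counter


def topic_timeline(
    assignments: list[tuple[str, int]], n_topics: int = 9, outlier: int = -1
) -> dict[int, Counter]:
    """Monthly tweet counts for the n largest topics after the single largest one.

    `assignments` holds one (month, topic_id) pair per tweet, e.g. ("2021-07", 3).
    """
    labelled = [(month, topic) for month, topic in assignments if topic != outlier]
    sizes = Counter(topic for _, topic in labelled)
    ranked = [topic for topic, _ in sizes.most_common()]  # largest topic first
    kept = ranked[1 : 1 + n_topics]  # drop the dominant topic, keep the next n
    timeline = {topic: Counter() for topic in kept}
    for month, topic in labelled:
        if topic in timeline:
            timeline[topic][month] += 1
    return timeline


# Toy assignments: topic 0 dominates, -1 marks an outlier tweet.
tweets = [("2020-01", 0)] * 5 + [("2020-01", 1), ("2020-02", 1), ("2020-02", 2), ("2020-02", -1)]
print(topic_timeline(tweets))
```

Dropping the largest topic keeps its volume from compressing the y-axis, so fluctuations in smaller topics remain visible.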

Top 9 hate speech topics against LGBTQ+ individuals over time (excluding the most popular topic).

Figure 2 shows that the volume of hate speech per topic against the LGBTQ+ community fluctuates over time, with an overall increasing trend. Notably, hateful tweets increased markedly between January and July 2020 and between July 2021 and February 2022 across multiple topic groups, including “Resistance to LGBTQ+ Visibility and Identity: Pride, Media, and Public Life,” “Naming and Shaming in Political Contexts: Figures, Organizations, and Controversies,” “Critique and Opposition Targeting Politician Pekka Haavisto,” and “Perceptions of LGBTQ+ Suitability in Military and NATO Service Roles.” These spikes were likely triggered by major events, including Pride celebrations, the 2021 municipal election and the 2022 county election, and online discourse about Finland’s application for NATO membership in early 2022, which may have amplified existing biases and animosity toward this community.

Muslims

The number of hate speech topics targeting Muslims amounts to 31, fewer than the number targeting LGBTQ+ individuals. One primary reason is that LGBTQ+-targeting hate speech often names specific persons, organizations, and political parties, which increases the variety of topics. Examples include naming and shaming politicians and criticizing institutions and political parties for either supporting LGBTQ+ individuals or failing to react strongly against them. Hate speech targeting Muslims, by contrast, tends to concentrate on a few themes: the top 10 topics account for about 79% of the BERTopic-labeled discussions, with the first topic alone encompassing 31%.
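Concentration figures of this kind (the share of the largest topic and of the top 10 topics) can be computed from per-tweet topic assignments. This minimal sketch assumes that BERTopic's outlier topic -1 is excluded from the denominator, which the text does not state explicitly; the function name is hypothetical.

```python
from collections import Counter


def topic_concentration(topics: list[int], k: int = 10, outlier: int = -1) -> tuple[float, float]:
    """Share of labelled tweets in the largest topic and in the k largest topics."""
    sizes = Counter(topic for topic in topics if topic != outlier)
    labelled = sum(sizes.values())
    if labelled == 0:
        raise ValueError("no topic-labelled tweets")
    largest = sizes.most_common(k)  # [(topic_id, count), ...], biggest first
    first_share = largest[0][1] / labelled
    top_k_share = sum(count for _, count in largest) / labelled
    return first_share, top_k_share


# Toy topic assignments, not the study's data: 10 labelled tweets plus 5 outliers.
toy = [0] * 6 + [1] * 2 + [2] + [3] + [-1] * 5
first, top2 = topic_concentration(toy, k=2)
print(f"largest topic: {first:.0%}, top 2 topics: {top2:.0%}")  # largest topic: 60%, top 2 topics: 80%
```

Whether outliers are counted changes the denominator, so shares computed with and without them are not directly comparable.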

Table 2 reports the 10 most common hate speech topics targeting Muslims. The first topic, “Islam and Societal Integration in Finland,” centers on concerns and perceptions regarding the integration of the Muslim community into Finnish society. Key points include apprehension about cultural and religious assimilation, fear of religious extremism, and prevalent negative stereotypes about Muslims. The language often reflects a perceived threat to Finnish cultural identity and social values, marked by resistance to changes in social norms attributed to the Muslim presence. A notable subset of posts takes a defensive stance against Muslim immigration, linking it to societal issues such as crime. The topic “Challenges and Perceptions of Muslim Immigration in Europe” has similar content but focuses mainly on ineffective policies in response to Muslim immigrants. Notably, the topic “Taqiyya: Deception or Misunderstanding in Islamic Doctrine” advances the argument that Islamic belief allows Muslims to deceive non-Muslims for the benefit of Islam, describing a perceived systematic practice that fosters dishonesty and conceals the true intentions of Islam, particularly in the context of violence and proselytization.

The topic “Gender Equality and Religious Practices” focuses on the contentious relationship between the treatment of women in Islam and Finnish societal norms. It includes critical views of Islamic practices deemed oppressive toward women and debates about the perceived silence of feminists on these issues. The discourse often portrays Islam as patriarchal and in conflict with Western values of gender equality. There is also a concern that political correctness prevents open critique of Islam’s impact on women’s rights. Discussions extend to fears of religious extremism and generalizations about Muslims based on extreme interpretations. Finally, there is advocacy for Finnish legal and social systems to counteract religious practices that contradict local laws and values.

The topic “Discrimination and Violence Against Sexual Minorities in Religious Contexts” centers on the fear that Islamic attitudes toward homosexuality will influence Europe through immigration. Discussions often conflate Islamic teachings with extremist actions, implying that extremist views are widespread among Muslims. The perceived clash between Islamic perspectives on homosexuality and European values of equality is a recurring theme. Criticism is directed at political movements for compromising LGBTQ+ rights to accommodate Islamic practices. Concerns about increased discrimination and violence against sexual minorities in Europe due to Muslim integration are prevalent. The debate highlights the perceived silence of liberal groups on these issues and perceived double standards in defending minority rights, and it calls for stronger policies against religious extremism. A significant worry is the potential impact of Sharia law on European norms and LGBTQ+ rights, illustrating the complexities of balancing advocacy for different minority groups.

Two topics draw on religious rhetoric. The topic “Religious Tolerance and Conflict: Islam and Christianity in Europe” discusses the perceived stark differences between Islam and Christianity. Islam is frequently portrayed as a political system focused on control, in opposition to Christianity’s emphasis on love and forgiveness, and as resistant to modern reforms, in contrast to evolving Christian doctrine. Concerns about the impact of Islamic beliefs on European culture and the decline of Christian values are prevalent. There is apprehension about the expansion of Islam in Europe, with some fearing a loss of Christian identity and an increase in intolerant Islamic practices. Criticism is directed at Christian institutions for not responding effectively to these challenges. Furthermore, the topic “Religious Tensions and Anti-Semitism in the Context of Immigration” revolves around the perceived threat Muslims pose to Jewish and Christian communities in Europe, with accusations of ingrained anti-Semitism among Muslim immigrants. Discussions involve concerns over a resurgence of anti-Semitism due to Muslim immigration, debates on religious freedom, the influence of extremist ideologies, and calls for stricter policies to address anti-Semitic behavior.

The topic “Discrimination and Xenophobia against Somalis” specifically addresses the experiences of Somali immigrants in Finland, one of the first sizable Muslim migrant groups in the country. In contrast, the topic “Immigration Politics and Cultural Assimilation in Sweden and Finland” focuses on discussions of Sweden’s Muslim-tolerant immigration policies and their perceived failure, which is claimed to have led to crime and social unrest. In addition, the topic “Comparison of Muslim Refugees with Ukrainian Refugees” offers perspectives on the suitability of these groups as refugees, considering factors such as identity, values, and cultural similarity.

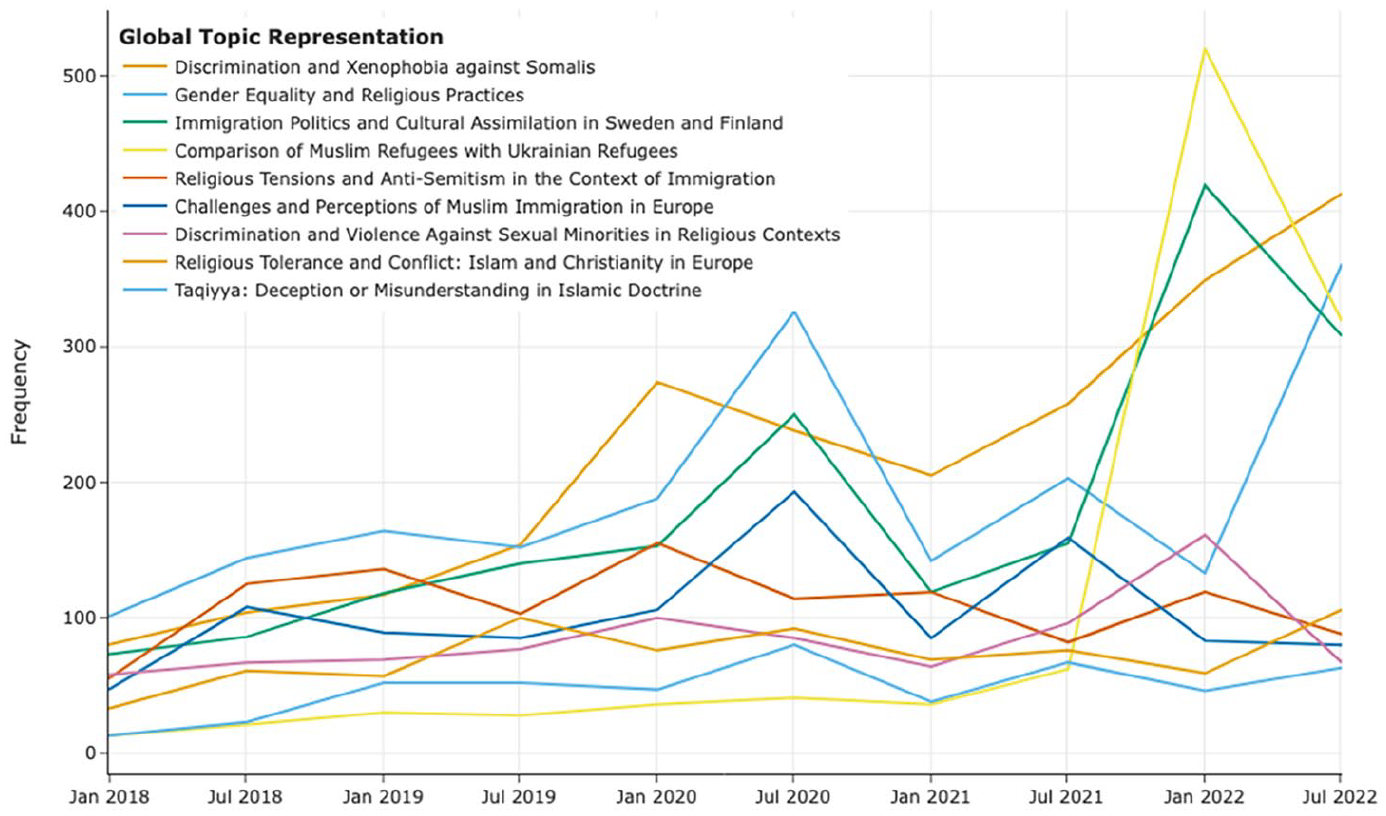

Additional information about hate speech targeting Muslims is available in the Supplemental Appendix, including a comprehensive list of topics (Table 5) and a timeline plot of the top 20 topics (Figure 2). Figure 3 presents the timeline of tweet volumes for the top 9 hate speech topics targeting Muslims, excluding the most popular topic, “Islam and Societal Integration in Finland.” As in the LGBTQ+ topic timeline, volumes fluctuate, with an overall increasing trend. Specifically, tweet volumes increased markedly between January and July 2020, and again between July 2021 and February 2022. These surges can be linked to major events such as Pride celebrations (e.g., “Gender Equality and Religious Practices”), the Russia-Ukraine war and the resulting refugee crisis in Finland (e.g., “Comparison of Muslim Refugees with Ukrainian Refugees”), and the municipal and county elections of 2021 and 2022 (e.g., “Discrimination and Xenophobia against Somalis” and “Immigration Politics and Cultural Assimilation in Sweden and Finland”).

Top 9 hate speech topics against Muslims over time (excluding the most popular topic).

Thematic analysis

In this section, we conduct a detailed qualitative analysis of selected tweets, focusing on in-group and out-group dynamics relevant to our research questions. We move beyond topics to uncover latent themes: the underlying, often implicit, messages and concepts. Whereas topics describe the subject matter, themes reveal the underlying ideas and broader opinions shaping these discussions (Braun and Clarke, 2019).

Our thematic analysis, particularly in the context of far-right discourses about Muslims and LGBTQ+ individuals, centered on how perceived threats are expressed and the worldviews underpinning them. We began by identifying posts relating to in-group and out-group definitions and manually coding the key themes linked to them. Topic modeling revealed dominant themes like threats to cultural or national identity and fear of extremism. The thematic analysis corroborated these findings and showed that themes often span multiple topics.

LGBTQ+ community as a threat to the traditional order

Prominent themes in the tweets on LGBTQ+ individuals and communities selected for the analysis are weakness and the traditional order. Sexual minorities are described as weak, pacifist, and corrupt and, particularly as portrayed in the media, as a threat to societal norms and national values.

In these tweets, the approach is relatively personalized or individualistic, and slurs are directed at individuals taking part in the discussions. In that sense, the targets are seen as close to the in-group. Such personalized messages may nevertheless reveal more general ideas behind the attitudes. For some tweeters, being part of a sexual minority is comparable to lacking the will to defend the country: “**slur**. No good for defending the fatherland.”

In tweets using common Finnish anti-Swedish slurs, a pattern also visible in the topic modeling, militant and militaristic attitudes may also be connected to weakness. Mental weakness is presented as equivalent to an inability to defend borders, both imaginary and concrete: “There is no single sane person in the Swedish defense forces, all are **slur**. Hopefully, that country will be occupied, if someone would agree to do it.”

A specific subtheme in the tweets supporting the traditional order is the relationship between the church and sexual minorities, reflecting the identified topic of moral judgments. Several tweeters argue that the church has gone astray by abandoning traditional values or the message of the Bible. Some of the most explicit statements also connect the alleged corruption of traditional values within the church with “green-left” ideology:

The reason for leaving the church is, that it does not represent anymore Christianity, but communism, gayness, pedophilia, and other green-left filth. At the churches, the leftist gays and pedophiles hang around as priests, preaching the bliss of immoralities they practice.

This also implies a perceived threat arising from the mixing of categories, in which political and sexual identities are intertwined and the traditional order is undermined by introducing “foreign” elements into it.

At a deeper level, some tweets express ideas close to conspiracy theories, arguing, for example, that “there are these fake-brothers infiltrating, having as their goal to destroy the church with gays and other perverts.” Similar ideas, such as a “fifth column” undermining the nation from within, also appear in tweets about Muslims and in the topic representations, which additionally mention the role of media and public events.

Themes of violence and naivety in tweets on Muslims

In line with the issue alignment thesis, and with a degree of thematic consistency, Muslims too are described as a threat to national identity. Violence and naivety are prominent, intertwining themes in the samples focusing on Muslims. These themes also illustrate how Western society, an in-group alongside the more specifically defined Finnish society, is viewed by those participating in the discussions. Group identities and threat scenarios are constructed by defining a violent out-group and a naïve in-group, and the actual targets of the message are often Western politicians.

The first theme can be divided into several subcategories, such as sexual violence, invasion, and, more generally, violent culture, which also appear in the topic representations. Among the most extreme expressions are explicit calls for anti-Muslim violence. Such statements, which might also meet the criteria for incitement under the Finnish penal code, are, however, rare; they represent the most radical categorization, excluding Muslims physically from the community: “Which MP dares to say first, that only brutal violence and lead will affect the raging Muslims? Concentration camps for use and immediately!”

In a similar fashion and emphasizing physical control and limits, one tweet states, using an untranslatable Finnish ethnic slur for Muslims, that “the only language” they “will understand is force. Give them any leash, and a pack of dogs will attack.”

More often, however, violence is described as targeting and threatening the in-group by crossing its physical boundaries. Islam is seen to represent a violent and invasive culture, as several comments show: “The purpose of Muslims is to conquer Europe and destroy the Christians and the Jews.” Specific forms of violence are occasionally even presented as characteristic of Islam: “The purpose of Islam is to conquer the world, and convert everyone. Who does not convert, will be taken as slaves unless their heads are cut.”

Interestingly, some posts draw a parallel between Muslims and some Russians as supporters of an expansionist culture, referring to both groups as “beasts”: “the only beast are people supporting Islam and Z-Russians.”

Different forms of sexual violence are among the most common themes, typically represented as a consequence of government policy. Rape culture is also portrayed as endemic to Islam and its culture: “Again in Oulu, in that paradise of child-raping, 3 pieces of **slur** pampered the Green-lefties, enriched the life of a Finnish child by gang-raping her, like so many others Islam-following pedophiles.”

Furthermore, biological metaphors, often referring to the rate of “breeding” among Muslims, relate to a theory of expansion by birth rate. The theme of disease, as an extreme form of othering, also implies that Muslims do not belong to Europe and are perceived as an alien element in a healthy body. The lack of resistance implies weakness when defending the borders between in-group and out-group.

The second theme, naivety, is expressed, alongside explicitly political themes, in calls to end virtue signaling, in appeals to wake up, and in the ridiculing of tolerance. In topic modeling, this theme is linked to issues such as ineffective policies in response to Muslim immigrants and “taqiyya,” as well as to discussions of gender and sexual minorities. At a deeper level, although often less explicitly pronounced, lies the idea of a Western society in crisis: a community that has lost its values and traditions and, with them, its immunity to external threats. This loss, it is often claimed, has taken place in the name of equality: “All those activities, that separate men and women are flag-waving for radical Islam. [–] This is what the fanatics want – that we crumble our value base, from inside out.”

These two major themes reflect an “orientalist” and othering view of Muslims and, by extension, of the perceived weaknesses of the liberal system. In this sense, the themes show an approach that goes beyond Islamophobia, at least in its purely negative definition (Skenderovic and Späti, 2019).

Certain discernible patterns in the tweets show a degree of thematic consistency that was already visible at the level of the topic modeling. The same arguments, although differently contextualized, are often used against both Muslims and LGBTQ+ communities. Both are seen as threats to collective in-group identity, and any border-crossing activity, whether transgressing cultural norms by mixing categories or crossing actual physical borders, is considered dangerous (Taguieff, 1993). Blame is directed almost as much at members of the in-group, notably elites and representatives of the media, who are seen as giving in to these activities. This division between “invaders” and “traitors” is also typical of extreme nationalist discourses (Bjørgo, 1995).

Discussion

Our investigation was structured around a definition of hate speech that focuses on posts targeting individuals or groups based on aspects of identity, such as race, gender, or sexual orientation, thereby contributing to prejudice and discrimination (The Council of Europe, 1997). Our annotators followed an iterative classification process: first identifying the target group, then assessing sentiment, and finally determining whether hate speech elements were present. We found that while 61% of analyzed posts showed negative attitudes toward the target groups, only 24% qualified as hate speech. This distinction is critical: not all negative speech constitutes hate speech. Negative sentiment, defined in our study as posts with a generally negative tone toward the target group, was about two and a half times as common as hate speech.
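The three-step annotation order can be expressed as a small decision function. This Python sketch is hypothetical: the field names and group labels are illustrative, and it assumes that hate speech is only assessed for posts already judged negative, so every hate speech post also counts as negative sentiment. That assumption is consistent with, though not confirmed by, the reported 24% and 61% shares.

```python
from dataclasses import dataclass
from typing import Optional

TARGET_GROUPS = {"muslims", "lgbtq"}  # illustrative group labels


@dataclass(frozen=True)
class Annotation:
    target: Optional[str]  # step 1: targeted group, or None
    negative: bool         # step 2: generally negative tone toward the target
    hate_speech: bool      # step 3: strongly matches the hate speech definition


def annotate(target: Optional[str], negative_tone: bool, meets_definition: bool) -> Annotation:
    """Apply the target -> sentiment -> hate speech steps in order."""
    if target not in TARGET_GROUPS:
        # Without a target group, sentiment and hate speech are not assessed.
        return Annotation(target=None, negative=False, hate_speech=False)
    if not negative_tone:
        return Annotation(target=target, negative=False, hate_speech=False)
    # Unclear cases with an evident negative attitude stay negative but not hateful.
    return Annotation(target=target, negative=True, hate_speech=meets_definition)


print(annotate("muslims", negative_tone=True, meets_definition=False))
```

Ordering the checks this way means a later step can never override an earlier one, for example, a post without a target group can never be labeled hate speech.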

Our annotation process involved careful categorization. Annotators labeled texts as hate speech only when they strongly aligned with our definition; posts with unclear classification but evident negative attitudes were labeled as negative sentiment. This approach underlines the complexity of discerning hate speech, especially on social media, where informal language, brevity, and the use of sarcasm, stickers, memes, and emojis make context difficult to interpret. To improve IRR among annotators, we extended our training sessions; however, we were unable to raise IRR to the desired level. We believe this was due to several factors: the cultural backgrounds of the annotators, the inherent challenges of interpreting social media posts, the lack of domain-specific expertise among lay annotators, and the definitional complexities of hate speech, even after we narrowed the definition as described in the method section.
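IRR for two annotators is often summarized with Cohen's kappa, which corrects raw agreement for the agreement expected by chance: kappa = (p_o - p_e) / (1 - p_e). The sketch below assumes exactly two annotators labeling the same posts; the study's actual IRR statistic and annotator setup are described in the method section and may differ (with more than two annotators, Fleiss' kappa or Krippendorff's alpha is more usual). scikit-learn's `sklearn.metrics.cohen_kappa_score` computes the same two-rater quantity.

```python
from collections import Counter


def cohens_kappa(rater_a: list[str], rater_b: list[str]) -> float:
    """Cohen's kappa, (p_o - p_e) / (1 - p_e), for two raters labelling the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("expected two non-empty label lists of equal length")
    n = len(rater_a)
    # p_o: observed proportion of items on which both raters agree.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # p_e: agreement expected by chance from each rater's label frequencies.
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if expected == 1.0:
        # Both raters used one identical label throughout; kappa is undefined,
        # so report full agreement by convention.
        return 1.0
    return (observed - expected) / (1 - expected)


# Hypothetical labels from two annotators on four posts.
annotator_1 = ["hate", "negative", "negative", "other"]
annotator_2 = ["hate", "negative", "other", "other"]
print(f"{cohens_kappa(annotator_1, annotator_2):.3f}")  # 0.636
```

Here the annotators agree on 75% of posts, but because chance agreement is about 31%, kappa is only about 0.64, which illustrates why raw agreement can overstate reliability.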

The corpus included basic terms relevant to Muslim communities, ranging from neutral to offensive, to capture a wide range of discourse. Our analysis revealed a significant disparity: about 74% of posts about Muslims showed a negative tone, compared to just 27% for the LGBTQ+ community. This highlights a pronounced negativity in online communication about Muslims, pointing to deep-seated biases and emphasizing the need to address hate speech in these digital spaces.

While our study does not concentrate on specific political groups or on online platforms that represent a uniform group identity, our content analysis of global social network platforms such as X indicates that our findings are consistent with research on far-right movements, populism, and Islamophobia (Ekman, 2015; Horsti and Saresma, 2021; Saresma and Tulonen, 2022). Our results show that far-right groups deploy distinct language in discussions about Muslims and LGBTQ+ individuals, underscoring a deep-rooted identity discourse. SIT highlights two main mechanisms: social categorization and social comparison. Social categorization entails grouping individuals based on common characteristics, leading to the formation of in-groups (e.g., “True Finns”) and out-groups (e.g., Muslims and LGBTQ+ individuals) (Tajfel and Turner, 1979). Social comparison, in turn, allows individuals to evaluate and appreciate the attributes of these groups through comparison and differentiation (Hogg, 2016). This process is critical for individuals to develop perceptions of their own group (in-group) in relation to others (out-groups), thus influencing their identity and sense of worth within the wider social context (Jaspal, 2017).

In our analysis, we found that comparisons involving LGBTQ+ individuals center primarily on identity markers (including gender expression, sexual orientation, and cultural practices), stereotypes and prejudices (related to personality traits, behaviors, or roles), and values and beliefs (emphasizing equality, freedom of expression, and relationships). These themes are echoed in tweets targeting LGBTQ+ individuals and communities, illustrating social categorization and identification at various levels. Specifically, at the intergroup level, these tweets draw clear distinctions between in-groups and out-groups, portraying LGBTQ+ individuals and their allies as an out-group that threatens the conventional social order and identity of the in-group. As Saresma and Tulonen (2022) underscored in their analysis of a Finnish far-right group forum, LGBTQ+ individuals are perceived as undermining traditional marriage and the established gender hierarchy and are consequently subjected to derogatory comments fueled by disgust. The language frames LGBTQ+ identities and advocacy efforts as imports of foreign, “un-Finnish” values that undermine traditional Finnish and Nordic cultural norms (Saarinen and Koskinen, 2022). Topics such as child abuse and pedophilia also concur with the findings of Saresma and Tulonen’s (2022) study (see Supplemental Appendix). These tendencies are also apparent among far-right groups in Germany, which accuse LGBTQ+ individuals of “deviance” from what they consider “healthy” norms (Ahmed and Pisoiu, 2021). This reflects SIT’s premise that groups seek to positively distinguish themselves from relevant out-groups along valued dimensions as a way to achieve a positive social identity. The tweets assert the superiority of traditional gender roles and sexuality as key markers of national and cultural identity and moral virtue.

At an intra-group level, the tweets also police the boundaries of acceptable attitudes and behaviors within the national in-group, particularly in traditional institutions such as the church and the military (Hazel and Kleyman, 2020; Schlatter and Steinback, 2010). References to “fakers” and “infiltrators” suggest that those expressing more inclusive attitudes are seen as betraying their role within trusted in-group domains (Radnitz and Mylonas, 2022). This relates to SIT’s discussion of the “black sheep effect,” in which individuals who go against in-group norms often face harsher derogation from fellow group members (Marques et al., 1988). These constructs help explain the vitriol directed at religious leaders and politicians espousing progressive views on gender and sexuality.

Overall, findings regarding LGBTQ+ individuals suggest attitudes are rooted partly in perceived threats to the positive distinctiveness and traditional identity constructs that bind the national and cultural in-group. Prejudice emerges from a motivation to demarcate the in-group from an encroaching, foreign out-group seen as introducing destabilizing and corrupting external values and agendas.

These intergroup threat perceptions likely make overcoming biases through contact and appeals to superordinate common identities more difficult. The conspiratorial language and personalized attacks also suggest a scattering of hostile subgroups who have deeply internalized these markings of out-group threat. Their boundaries for acceptable diversity in attitudes or identities may be quite rigid. Conspiracy theories often fuel anxieties about losing control or status, driven by the belief in powerful groups manipulating the public and concealing their activities. The dissemination of such theories through mass media and hyper-partisan news sites contributes to perpetuating a cycle in which online communities rely on conspiracy-driven news sources, leading to widespread exposure to these ideas (Calderón et al., 2020; Tucker et al., 2018).

The culture and nature of Islam are pivotal in the comparison between Muslims and Finns, with Islamophobia acting as a fundamental element of far-right groups’ approach to highlighting cultural distinctions. This phenomenon is not exclusive to Finland; indeed, Finnish far-right groups are part of a broader pattern observed across various European nations, including Germany, Sweden, Denmark, and France, where similar groups have emerged, indicating a shared trajectory in the rise of far-right ideologies (Ahmed and Pisoiu, 2021; Åkerlund, 2020; Derczynski et al., 2019; Froio and Ganesh, 2019).

The findings related to Muslims suggest that the themes of violence and naivety are manifestations of in-group favoritism and out-group derogation. These processes stem from perceived threats to the in-group’s identity that challenge its positive distinctiveness (Crosset et al., 2019). The language of Islamophobia often portrays Muslims as inherently dangerous and violent, attributing this to the perceived authoritarian nature of Islam. Such arguments fail to distinguish between Islam and Islamism, or between non-violent and violent strands of Islamism (Ekman, 2015). In support of these claims, selective quotations from the Koran are used to suggest that the very nature of Islam provides an explanatory framework. This results in the construction of Muslims as an invasive out-group posing a threat to the safety, values, and cultural identity of Western societies (Froio and Ganesh, 2019) or of the Finnish in-group. This rhetoric underpins the core assumption of far-right groups in various European countries. For instance, Farkas et al. (2018) uncovered fraudulent Facebook pages purporting to represent Muslims in Denmark with intentions to kill non-Muslim Danes and take over Danish society.

The explicit calls for violence and dehumanizing language serve to maximize perceived differences between groups, categorically excluding Muslims from shared moral communities or characterizations of humanity. These posts often fail to treat acts of violence as individual acts, even when an act has no link to religion beyond the perpetrator being labeled a “Muslim.” Such incidents are then presented as “evidence” that Islamic culture fosters violent behavior (Ekman, 2015), a depiction of Islam as an oppressive ideology that other studies in Finland also discuss (Sakki and Martikainen, 2021). This dehumanizing language also encompasses claims of a sexist, and frequently violent, dimension within Islam, portraying it as fundamentally oppressive and abusive toward women. In discussions spanning topics such as “Slavery in Islam,” which focuses on the enslavement of women for sexual purposes, “Gender Equality and Religious Practices,” the “Hijab,” “Women's Rights,” and “Women's Rights in Iran,” incidents of sexual assault involving Muslims are frequently cited as evidence that “hatred of women” is intrinsic to Islam (Ekman, 2015). Other studies in the Finnish context likewise emphasize how Muslim male refugees are stereotypically portrayed as predisposed to committing crimes, frequently of a sexual nature (Horsti and Saresma, 2021; Sakki and Martikainen, 2021; Saresma and Tulonen, 2022). Elsewhere, they are portrayed as hypersexual in Denmark (Farkas et al., 2018) and as violent criminals who specifically target women and children in Germany (Ahmed and Pisoiu, 2021).

Another prevalent argument among the far right is the conspiracy theory that Muslims have widely infiltrated open institutions and government bodies in Western societies. This theory holds that Muslims are secretly influencing mainstream politics, national legislation, and institutions, and that they are imposing Islamic laws and customs across all levels of society (Ekman, 2015). In exploring the topic of “Taqiyya,” we observed a widespread belief that it permits Muslims to deceive non-Muslims to Islam’s benefit. The idea that Taqiyya is employed as a strategy to expand Islamic presence and influence in Europe also recurs frequently. Similarly, discussions about Turkish President Erdogan’s migration policy express skepticism toward his political tactics, with numerous posts framing his actions as strategic efforts to promote the spread of Islam in Europe. This notion aligns with the “great replacement” theory, which claims that Europe’s cultural and ethnic composition is under threat of being replaced by non-European populations. The German far-right party AfD also uses this argument (Ahmed and Pisoiu, 2021).

The themes also reflect perceptions that the liberal values of tolerance and multiculturalism weaken the in-group, leaving it unable to mount strong defenses against the out-group’s alleged goals of conquest and destruction. Descriptors like “naïve” and “crisis” portray the in-group as threatened precisely because its liberal democratic values leave it open to attack (Farkas et al., 2018). Far-right groups in various countries often criticize mainstream media for allegedly obscuring the “facts” about Muslims committing rapes, murders, thefts, and other crimes (Åkerlund, 2020; Ekman, 2015). In Finland, however, criticism of how Muslims and Muslim culture are portrayed in state-funded broadcasting has become a more prominent issue. Similarly, politicians advocating political correctness are targeted, while left-wing and liberal politicians and elites are accused of betraying their countries by allegedly aiding or inviting Muslim immigrants (Ahmed and Pisoiu, 2021; Keskinen, 2013). This assistance is purported to facilitate a “shadow government” with global influence, ultimately leading to the colonization of their nations (Ekman, 2015). Such “inner enemy” rhetoric is also a common theme in Finland and Sweden (Sakki and Martikainen, 2021).

Together, these themes suggest that prejudicial attitudes are grounded in perceptions that the national and cultural identity of the in-group faces extinction by an invading out-group with fundamentally opposed values and goals. Muslim identity is portrayed as fundamentally incompatible with Western society, as its exact opposite and sworn enemy. This is a general theme in the Finnish context (Keskinen, 2013), and the same perception is evident in Denmark (Farkas et al., 2018) and Sweden (Åkerlund, 2020). This anti-immigration rhetoric is a transnational theme that also circulates among far-right groups in Germany, France, the United Kingdom, and Italy (Froio and Ganesh, 2019). From that defensive posture, derogation, dehumanization, and even calls for violence become psychologically rationalized means of protection (Bandura et al., 1975; Marcks and Pawelz, 2022).

The findings indicate that, given the depth of threat perception, messaging based solely on appeals to common humanity, values, and interests may have little effect. Improving intergroup relations likely depends on concrete, demonstrable evidence that counters the zero-sum discourse on diversity and immigration. Researchers and advocates should continue to document and characterize, with nuance, the variety of attitudes present.

Limitations

This study provides valuable insights into hate speech on X, but it is limited by its exclusive focus on Finnish-language X data. Although X attracts a more politically oriented user base that skews toward younger demographics, hate speech is also prevalent on other platforms favored by Finns, such as Facebook (Meta, 2022). Moreover, Elon Musk’s acquisition of Twitter led to several policy changes on the platform, including reduced content moderation, fewer restrictions on bot activity, and the reinstatement of previously banned or restricted users (Hickey et al., 2023). Recent research indicates an increase in hate speech following his takeover (Auten and Matta, 2024; Wang et al., 2024). However, because this transition began in October 2022, just 1 month before the end of our data collection period, we believe our dataset is largely unaffected by these changes. Nevertheless, our study does not investigate the potential impact of this transition on hate speech trends.

Although our findings offer insight into the nature of hate speech within the Finnish X sphere and exemplify themes common among far-right groups across Europe (Froio and Ganesh, 2019; Hokka and Nelimarkka, 2020), analyzing the motivations of individual hate speech producers is beyond the scope of this article. Consequently, we cannot evaluate how fully the opinions expressed on social media represent the mindsets of their contributors. A previous survey-based study does, however, link online hate to personal risk factors, such as impulsivity, as well as to group behavior and social identification with online groups (Kaakinen et al., 2020). Future studies might combine surveys with social media data to improve representativeness in this respect (Reiter-Haas et al., 2023).

Despite these limitations, our thematic analysis effectively captures and classifies hate speech posts, demonstrating the potential of nuanced thematic methods in hate speech research. We address the challenges of text classification in the methodology to support the robustness of our results. Future research should consider involving annotators with deeper knowledge of the social and cultural contexts of the target groups to improve hate speech detection accuracy.

Conclusion

Drawing on SIT, this study of X uncovers prevalent online hate speech against Muslims and LGBTQ+ individuals. It reveals widespread negative sentiment, especially toward Muslims, indicative of biases in Finnish online discussions. The LGBTQ+ community also experiences negativity and is viewed as disruptive to traditional norms. Muslims are often depicted as violent and as a threat to Finnish and Western values, highlighting fears of and resistance to multiculturalism in digital spaces.

These insights emphasize the need for refined strategies on digital platforms to tackle hate speech. Effective policies should address not only the speech itself but also its underlying drivers. In addition, deeper knowledge of the social and cultural contexts of the target groups is needed to improve hate speech detection accuracy and to understand the sometimes differing dynamics of different forms of hate speech. One challenge is that these categories often intertwine but do not develop entirely in tandem. Further research is also needed to explore the specific algorithmic mechanisms and design choices that facilitate the spread of hate speech and to develop strategies for mitigating its negative effects on social cohesion and public debate.

Supplemental Material

sj-docx-1-nms-10.1177_14614448241312900 – Supplemental material for From prejudice to marginalization: Tracing the forms of online hate speech targeting LGBTQ+ and Muslim communities

Supplemental material, sj-docx-1-nms-10.1177_14614448241312900 for From prejudice to marginalization: Tracing the forms of online hate speech targeting LGBTQ+ and Muslim communities by Ali Unlu, Sophie Truong, Nitin Sawhney, Tuukka Tammi and Tommi Kotonen in New Media & Society

Footnotes

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by the Nordic Research Council for Criminology (NSfK). It was conducted in collaboration with the Finnish Institute for Health and Welfare, Aalto University, and the University of Jyväskylä.

References
