Abstract

This essay explicates a middle-range theory to predict and explain the propagation and magnification of hate messages on social media. It builds upon an assumption that people post hate messages in order to garner signals of social approval from other social media users. It articulates specific propositions involving several constructs, including signals of social approval, disapproval, and sufficiency of social approval. Six derived hypotheses predict how these dynamics apply in certain contexts of social media interaction involving hate posting. It reviews empirical research that applies to these hypotheses, raises issues for future research, and reviews concurrence and distinctions between this approach and other theories.

The communication of hate messages on social media is a “major problem for every kind of online platform where user-generated content appears” (Saleem et al., 2017, n.p.). The United Nations observed that “hateful messages disseminated online are increasingly common and have elicited unprecedented attention” (Iginio et al., 2015, p. 13). The incidence of hate messaging on “online communities, gaming sites, online news and sport sites, and social networking environments” (Bliuc et al., 2018, p. 75) is growing, as is concern over its nature and effects. Research has flourished focusing on its content, incidence, and effects.

There is less research, and little in the way of theory, to explain what prompts people to propagate hate messages in social media (Castaño-Pulgarín et al., 2021). This may be because common sense (see Monge & Laurent, 2024) and many studies presume that online hate is the result of individuals’ intentions to harm the persons or groups who are targeted in the messages; the “reason for hate speech in cyber space is the desire to hurt and humiliate people” (Górka, 2019, p. 237). That presumption is reflected in numerous definitions of hate messaging that focus on its negative and harmful consequences, like cyberaggression, “the intentional infliction of harm through information communication technologies” (Zhang, 2023, p. 1) or the Jigsaw corporation’s (2023) definition of a toxic message as one that “is likely to make individuals leave a discussion.” There is no question that online hate can harm individuals’ reputation, privacy, safety, and psychological and physical well-being when it is observed by those whom it names, as it often is (for review, see Tong, 2024).

This article advances a different explanation for the propagation of online hate. It begins by questioning whether the primary purpose of hate message generation is exclusively based in harmful intent. Alternatively, a social approval theory of online hate has as its central assumption that the primary purpose of online hate messaging is to seek, and to be gratified by, the social approval it garners from other social media users. Although this assumption and its background have been raised in very brief form (Walther, 2022), the systematic articulation of constructs, propositions, and hypotheses had yet to be elaborated. The hypotheses derived from this framework are at times straightforward and at others counter-intuitive, and some seem to conflict with one another (predicting similar outcomes due to opposite conditions), but they are all tenable, plausible responses to the seeking of social approval in online settings. They are suggested to apply in concrete social media situations or in more abstract hypothetical contexts, some of which involve certain affordances that can draw other social media users’ expressions of approval toward an individual’s hate postings across time and space. The essay also discusses existing empirical research, conducted mostly without reference to the specific theory, that is nevertheless consistent with its predictions.

The work aims to provide a “middle-range” theory (Merton, 1968), that is, a set of related propositions that can organize extant facts and propel the derivation of original hypotheses that can be verified (or contested) through additional empirical inquiry. The major constructs are not entirely novel. They are adopted and adapted from other research foci. Its hypothetico-deductive qualities, however, allow for the specification of otherwise seemingly conflicting hypotheses that may nevertheless be logically and empirically valid.

The work does not focus on organized hate groups that have a well-established offline presence (see Phadke & Mitra, 2024). It focuses on “the actors in informal communities dedicated to producing harmful content, or the accounts that produce hate speech on mainstream platforms” (Siegel, 2020, p. 61), for which little “empirical work has systematically examined how these actors interact within and across platforms.” Moreover, a boundary condition of the work is its focus on hate messages that are posted publicly, where other social media users can see them and respond to them. Other studies have focused on “networked harassment” in which a social media user posts a message disparaging a specific person, and readers take it upon themselves to harass the individual target using private messaging (e.g., Lewis et al., 2021). Accessibility of, and potential interactivity with, hate messages are critical to this approach (with the exception of hate messages that are later displayed to others in specific ways to be discussed) because feedback to them by other social media users is the central factor.

Online Hate

A key construct in this work is an online hate message. Its definition is treated at length because of its centrality and the ways it differs here from some previous definitions. There is well-known dissensus in defining online hate (see Hietanen & Eddebo, 2023, for a comprehensive review of the history and problems of its definition). This essay uses the term “online hate” rather than the widely-used term, “online hate speech” deliberately, in concurrence with Jigsaw (n.d.): “Terms like ‘hate speech’ or ‘abuse’ frequently refer to specific categories of language that violate certain terms of service or laws. Different platforms or countries have different rules that govern these specific categories of speech.” It is, however, consistent with Saleem et al.’s (2017) characterization of “speech (or any form of expression) that expresses (or seeks to promote, or has the capacity to increase) hatred against a person or group of people.”

Although many definitions specify that “the speech causes harm [and] the speaker intends harm” (Hietanen & Eddebo, 2023, p. 446), an online hate message is defined here according to qualities of its content rather than by its potential consequences. Harmful consequences are excluded because of the central conceptualization that online hate is something that is posted primarily for potential admirers rather than targeted victims. Effects on or responses from targets are not a given, and consequences therefore cannot be a definitional aspect. This does not suggest that online hate has no harmful potential consequences. Clearly online hate can hurt “the feelings, self-respect, or dignity of people it purports to describe, when they are exposed to it” (emphasis added); online hate, as defined here, has the potential to do “harm indirectly, by persuading. . .people to hate another group” (Dangerous Speech Project, n.d.).

Several extant definitions fit these content-based parameters. For instance, online hate “may be defined as the use of information and communication technology (ICT) to profess attitudes that devalue others because of their religion, race, ethnicity, gender, sexual orientation, national origin, or some other characteristic that defines a group” (Hawdon et al., 2024, p. 2). It “may include derogatory comments, threats, and incitements to violence” (Oliveira et al., 2023, p. 1), language that is “‘discriminatory’ (biased, bigoted or intolerant) or ‘pejorative’ (prejudiced, contemptuous or demeaning)” including “images, cartoons, memes, objects, gestures and symbols” (United Nations, 2023) and “jokes, puns, and caricatures” (Bliuc et al., 2018, p. 83). It also includes “content that ‘praises, promotes, glorifies, or supports any hateful ideology (e.g., white supremacy, misogyny, anti-LGBTQ. . .)’” (Hietanen & Eddebo, 2023, p. 448).

Almost all definitions of online hate involve targeting some group or minority on the basis of “identity factors”: “religion, ethnicity, nationality, race, color, descent, gender. . .economic or social origin, disability, health status, or sexual orientation, among many others” (United Nations, 2023). These are frequently considered to be essential to definitions (e.g., Vilar-Lluch, 2023), based in law concerning hate crimes (Hietanen & Eddebo, 2023). The present, content-based definition, however, includes messages that target individuals on non-group characteristics, such as someone’s physical appearance (e.g., Chandrasekharan et al., 2017) or intelligence. It also includes delegitimization and dehumanization on the grounds of attributed moral or criminal corruption, such as “terrorists” or “child molesters” (see Crandall et al., 2002). Online hate also includes some forms of cyberbullying, conceived as “an aggressive, intentional act carried out by a group or individual, using electronic forms of contact, repeatedly and over time against a victim who cannot easily defend him or herself” (Smith et al., 2008, p. 376).

Social Processes Facilitate Online Hate

Almost all studies into the motivation for online hate (93% according to Bührer et al., 2024) assumed that it is the product of various personality characteristics. These traits include sadism, psychopathy, and Machiavellianism (Buckels et al., 2014), or deep-seated prejudices such as “racial hatred, xenophobia, antisemitism or other forms of hatred based on intolerance, including. . .nationalism and ethnocentrism, discrimination and hostility against minorities, migrants and people of immigrant origin” (Cinelli et al., 2021, n.p.). Alternatively, the impetus to generate and share online hate may be due primarily to social rather than individual forces. Rather than dismiss online hate posters as “bad actors” (Potter, 2021) or being driven by Schadenfreude, Quandt (2018) suggests that audience factors also facilitate hate online. Brady et al. (2021, p. 1) go so far as to untether what people post for others from their beliefs: Although individuals hold various moral positions, their expression “may be sensitive to factors that have less to do with individual moral convictions, particularly in the context of social media. . . .[and they] change their outrage expressions over time through reinforcement.” In this manner, hate posters attune their messaging toward the positive responses they seek and obtain from others. That is, from a social processes perspective, the primary audience for online hate may be other users who are liable to reward the postings, rather than ostensible victims (Walther, 2024). The primary purpose of online hate posting may be to draw admiration among haters and their admirers. Its exchange may offer salutary effects among those who traffic in it.

Several attributes frequently associated with online hate messages support the notion that the primary audience for online hate is other admirers rather than the targets to whom their messages allude. Some hashtags are so repugnant to many people that they may work as gatekeepers, for example, “#whitepower, #blackpeoplesuck, #nomuslimrefugees” (ElSherief et al., 2018, p. 44). Additionally, online hate messages often contain symbols and phrases that are interpretable only by individuals who are familiar with a community’s shared code system (for review, see Walther, 2024). It seems unlikely one would attempt to offend victims using phrases and symbols that victims themselves do not understand. Another symptom that hate is produced for appreciative audiences is its frequent insulation from those whom its messages target. Hate messaging is proportionately more prominent within so-called fringe platforms than on the mainstream platforms where targets of hate messages would be more likely to see them (see Daniels, 2017), since fringe platforms offer less content moderation such as message take-downs or account suspensions (for review, see Gillespie, 2018).

Consequently, there is an astounding degree of vulgar racism and other forms of hate in these spaces (see Jasser et al., 2023). Messages in “hate-containing sites” are often overtly hostile to minority members, who are generally loath to visit them intentionally; the majority of people who land on them do so accidentally (Reichelmann et al., 2021). Computational linguistics research found that hate messages written about particular groups or persons (compared to hate messages addressed to victims directly) are more extreme, with more references to killing, murder, and extermination (ElSherief et al., 2018).

Ironically, despite the strong appearance that hate posters hate the people about whom they post, this may not always be the case. As Siegel (2020, p. 62) observed of hate posters, “we do not know the degree to which their rhetoric represents their actual beliefs or is simply. . .attention-seeking behavior, which is quite common in these communities.” Hate posting may meet other goals, such as maintaining moral order (Marwick, 2021), sustaining communities and interpersonal bonds (Wahlström & Törnberg, 2021), or providing humor and mutual entertainment (Altahmazi, 2024; Udupa, 2019). These observations suggest that online hate messaging is performative, along the lines suggested by Goffman (1956). Performers may be like-minded ingroup members with respect to racism and other prejudicial attitudes, but it is not necessary that they are. They may engage in “insincere performances” played for one another rather than “sincere performances” based in shared animus. Insincere performances, according to Goffman, involve “staging talk” and “derisive collusion” (Goffman, 1956, chapter 5) by which participants coordinate and strategize among themselves before, during, and after collaborative behavior. This performative aspect seems especially clear in Stringhini and Blackburn’s (2024) documentation of “backstage” comments posted by Zoombombing perpetrators (who invade and disrupt others’ online Zoom meetings). Zoombombers often collude “backstage” in real time on 4chan, where they strategize before, during, and after an attack, including decisions to shout racial epithets in Zoom meetings because of their immense potential for disruption. Their goals seem to be amusing themselves rather than inflicting sincerely-held racial hatred. This is not to say that the actors and actions of online hate are harmless to others. They are not. Rather, the disruption and consternation they cause connote successful performances.

Assumptions, Propositions, and Constructs

Among the social processes that impel the expression of hate online, this theory focuses on the accrual of social approval from other online hate providers and sympathetic observers. It also specifies a potential indirect effect emanating from negative responses that, under specific circumstances, may ironically lead to more hate messaging rather than less. That is, the expression of objections and overt resistance to hate messaging by victims or bystanders may also indirectly motivate and gratify hate messaging, but only to the extent that they prompt yet others’ expression of social approval. This hypothesis is addressed more fully further below. In order to explain these relationships, the central assumption of the theory is repeated here, followed by propositions and the constructs that are incorporated in them.

Assumption

The central assumption of the theory asserts that the expression of hate online is motivated toward and gratified by the garnering of social approval signals from others online in response to one’s hate messaging. The repetition and magnification of hate messaging are subject to reinforcement mechanisms related to the securing of social approval.

Proposition 1

The first proposition derived from this assumption is that receiving relatively greater signals of social approval for one’s hate message causes an individual to generate similarly hateful messages more frequently, or more extremely hateful messages, thereafter. This proposition is consistent with operant learning theory (for review, see Burgoon et al., 1981), from the perspective of which social approval signals comprise positive reinforcement.

Signals of Social Approval

A major construct in the foregoing proposition is signals of social approval. Social approval is conventionally understood as praise, affirmation, congratulations, reciprocation, and the like. There are a variety of ways that it can be signaled in social media. Social media systems easily afford verbal and graphical replies to one’s message. Verbal replies include praise, agreement, concurrence, and extensions.

Social media also afford expressions of social approval in potent, novel forms. Various forms of Likes—graphical symbols that users can append to others’ messages, such as a thumbs-up graphic in Facebook and YouTube, hearts in Twitter/X, and upvotes in Reddit—generally signal approval, affirmation, and sociability (Hayes et al., 2016). Seeing these symbols, and the number of such symbols appended to other users’ messages, connotes popularity and value of the messages’ content (for review, see Haim et al., 2018).

The present theory is concerned with the accrual of such signals on hate producers’ own messages, however. In that context, these signals comprise a “numerical representation of social acceptance” (Rosenthal-von der Pütten et al., 2019, p. 76). An experiment simulating a social media platform found that getting likes significantly enhanced self-esteem and mood, and decreased sadness and anger (Wolf et al., 2015). Another study found that after retweeting a fake news story, the more Likes participants received, the more they believed the story they shared (Walther et al., 2022). There is even conjecture that users experience “a dopamine hit every time they get likes, comments, shares, retweets, etc.” (Stjernfelt & Lauritzen, 2020, p. 204).

Proposition 2

The second proposition may seem contradictory to the previous one. If hate posters seek approval, should they fail to achieve a level of approval that they perceive to be sufficient, they may be driven to take extraordinary steps, via more extreme hate messaging, in order to acquire approval. Therefore, a second proposition states, receiving insufficient social approval to one’s online hateful message(s) causes individuals to generate more hateful messages thereafter.

Expected Approval and Sufficiency

A critical construct implicated in this proposition is the notion that hate posters expect a level of approval of their online hate messages, as signaled by other users on the same or a different social media platform. The expected level of approval provides a threshold of sufficiency. Hate posters (and others) can experience less approval than expected, comprising a deficit or insufficiency, creating a noxious state (see Covert & Stefanone, 2020). The level of expectation fluctuates over time and varies from person to person.

Several studies about social media, unrelated to hate, suggest the existence of some level of expected or desired approval-signaling for one’s social media postings. Scissors et al. (2016) surveyed Facebook users about how many Likes made them feel that their posts were successful. Results showed a median of 8 Likes and a mode of 10. A similar study by Carr et al. (2018) found a mean of 38. Across a number of platforms, individuals notice decreases in engagements with their posts from one day to the next, and “feel indignant [when] they are not getting the social media attention they believe they deserve” (Nicholas, 2022, p. 8). These reports suggest that there is an expected level of approval-signaling and some frustration when it is lacking.

If hate messengers receive insufficient approval they may respond by increasing the intensity of their hate messaging, as predicted in Proposition 2. From a learning theory perspective, garnering of social approval to ameliorate insufficient approval operates as negative reinforcement since it alleviates a noxious state. The first and second propositions may both be true, although not for the same person at the same time.
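One way to read the relation between the first two propositions is as a comparison between the approval a poster receives and the approval that poster expects. The following Python sketch is illustrative only and is not part of the theory's formal statement. The function name, the use of Like counts as the approval signal, the reading of “relatively greater” approval as “greater than expected,” and the treatment of the exactly-at-threshold case are all assumptions made for the example.

```python
def operative_proposition(received: int, expected: int) -> str:
    """Label which proposition would apply to one poster at one point in time.

    Hypothetical helper: `received` and `expected` are counts of approval
    signals (e.g., Likes). The theory itself does not prescribe these units.
    """
    if received > expected:
        # Surplus: approval acts as positive reinforcement (Proposition 1).
        return "P1: positive reinforcement"
    if received < expected:
        # Deficit: a noxious state that later approval would relieve (Proposition 2).
        return "P2: insufficiency"
    # Assumption: meeting the threshold exactly triggers neither mechanism.
    return "neither"


print(operative_proposition(received=12, expected=8))
print(operative_proposition(received=3, expected=8))
```

Because the expected level varies across persons and over time, the same count of Likes could fall under either label for different posters, or for one poster on different occasions, which is how both propositions can hold without applying to the same person at the same time.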

Proposition 3

The third proposition states that social disapproval prompts indirect effects on the production and magnification of hate messaging, but not direct effects. Social disapproval is conceived as messages that challenge or condemn a hate posting. Social disapproval from some user(s) indirectly affects one’s hate messaging when it provokes social approval from yet other user(s) who condone and admire the hate message that provoked disapproval. That is to say that social disapproval encourages more hate messaging by an individual if it triggers social approval to a hate poster from yet other users.

Social Disapproval

If replies to hate messages contain negative content such as criticism, contradiction, mockery, or reciprocated hate, they signal social disapproval. In addition to discursive means of expressing disapproval, some social media platforms offer graphic symbols to append to others’ messages, such as thumbs-down “Dislikes” and other symbolic forms. Overt negative message responses to hate postings are not uncommon when hate messages appear where targets see them. In fact, “counterspeech” campaigns encourage people to object to online hate messages explicitly in hopes of deterring hate posting (Jones & Benesch, 2019). The literature reflects few cases in which intervention messages appear to reduce hate messaging, and in those cases, intervention messages emanated from sources other than victims (Munger, 2017; Siegel & Badaan, 2020). The present approach actually predicts that overtly negative responses to online hate may encourage more hate, when victims’ or bystanders’ protests prompt yet others to respond approvingly, as proposed above and hypothesized more fully below.

Hypotheses

Based on the assumptions, propositions, and constructs, this essay derives a number of hypotheses. Some hypotheses are more strictly theoretical, in that they do not suggest specific social media spaces where the predicted relationships may occur. Other hypotheses are more grounded in exemplary contexts, situations, affordances, and practices. Additionally, this section reviews extant, related empirical findings.

This hypothesis derives from the first proposition. As a corollary, consistent with learning theory, getting no social approval after repeated hate messages may lead to decline and possible extinction of online hate messaging.

A few extant studies suggest support for H1a. Field studies by Brady et al. (2021) found that Twitter users whose “moral outrage” messages received Likes or shares posted more such messages thereafter. Moral outrage messages include many elements common to hate, such as anger, disgust, and contempt toward people and things, “holding them responsible, or wanting to punish them” (p. 2).

Additional experiments by Brady et al. also indicate that social media users select the degree of extremity of messages to post on the basis of their assessment of what will garner social approval. Participants chose to make their initial posting the same kind of message that appeared to have gotten more Likes by others, whether the messages reflected outrage or not. Moreover, whatever the level of outrage in their initial postings was, they subsequently increased their level of outrage when they received Likes for doing so. Similarly, Shmargad et al. (2022) looked at naturalistic “toxic” messages posted on news discussion sites. Seeing users’ reception of “upvotes” for toxic messages led individuals to post more hate messages. Both Brady et al. and Shmargad et al. examined the frequency of posting the particular messages as the dependent variables in their research, leaving unanswered H1b, below.

There are few studies that examine the magnitude of hatefulness rather than frequency as a result of social approval. Jiang et al. (2023) found that when Twitter users’ xenophobic hate messages received Likes and retweets at levels that were greater than their respective average, their subsequent messages were more hateful. Frimer et al. (2023) analyzed 1,293,753 tweets from the official Twitter accounts of all U.S. congressional representatives from 2009 to 2019. The incivility of congressional tweets rose by 23% over time, and tweets that were more uncivil attracted significantly more Likes and retweets than did civil tweets. In the final analysis, the appearance of Likes and retweets significantly mediated the increase in the incivility of tweets. In other words, “uncivil tweets were apparently well received on Twitter, and this reception incentivized further incivility,” according to Frimer et al. (2023, p. 264).

This second hypothesis is abstract and not connected to any specific social media space. Its predictions, based on social approval-seeking, compete with an implicit rival hypothesis reflecting conventional assumptions, that hate posters post primarily to antagonize victims. It employs Daniels’ (2017) notion of target-rich online conversation environments: Twitter (now X.com) was a target-rich environment for white supremacists to attack victims; unlike fringe platforms that are dominated by white males, Twitter hosted a racially diverse user base, allowing racists to post hate messages in a way that the targets were likely to see them. If haters want to harm targets, a target-rich environment would be where to post more hate messages, possibly in a hashtag thread, discussion space, or comments section that is populated by a diversity of users.

If hate posters desire to garner social approval from other users, however, a primarily target-rich environment should not provide the approval that a hate poster seeks; too few individuals are present to provide social approval. Rather, individuals may post more hate in environments where there is an abundance of hate promoters and admirers, who are more likely to yield social approval. The notion of an environment with numerous haters does not necessitate that they identify with one another, or hold a common ideology. They may or may not, for it may not matter to them from whom they garner social approval, just as long as they get it (a question that surfaces again in the Discussion), being merely a matter of subjective expected utility regarding the likely accumulation of social approval signals. These dynamics may be similar to recent findings about motivations for sharing political news on Twitter: “Partisan social media users selectively share political news, whether true or false, based on anticipation of positive reactions of like-minded audiences” (Marie & Petersen, 2023, p. 1).

A mixed space would also be attractive for hate posters: A conversation space with ample targets as well as potential admirers should motivate the generation of hate messages. (Some exceptions and extensions to this general hypothesis appear in H6 below in cases where admirers are absent.)

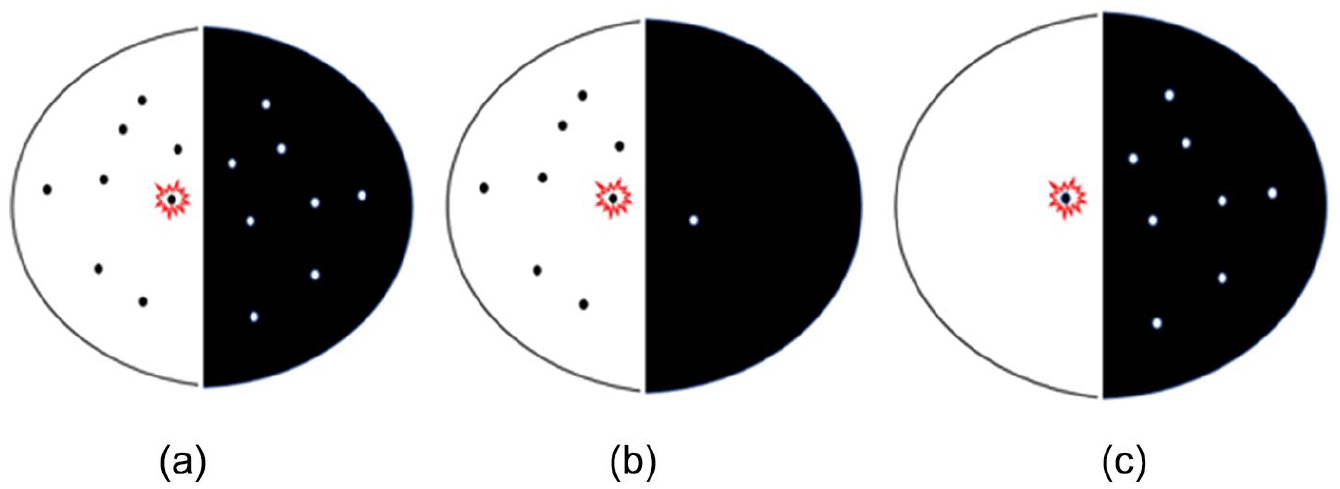

Figure 1 represents hypothetical interaction spaces with dots representing some number of hate posters on the left and some number of targets on the right. The star represents any hate poster from whom we can predict greater or lesser volume of hate, as will be witnessed by other participants on the same side as well as any participants on the other side. If online hate is motivated by its potential to harm victims, spaces A (mixed) and C (target-rich) are more likely to generate hate messages than B (hater-rich). However, if online hate is motivated by the potential for social approval from others who are likely to provide it, as hypothesized, A (mixed) and B (hater-rich) are more likely than C (target-rich) to generate more hate messages; they provide a “cost-effective” way (Zhang, 2023) to garner approval from a maximal number of probable admirers, which C does not.

Figure 1. (a) Mixed, (b) hater-rich, and (c) target-rich conversational spaces.
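The competing predictions about the three spaces in Figure 1 can be laid out as a small truth table. The Python sketch below is a hedged illustration rather than a formal model. The class name, the two boolean attributes, and the motive labels "harm" and "approval" are hypothetical names introduced here, and "abundant" is reduced to a simple yes/no for clarity.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Space:
    name: str
    admirers_present: bool  # other hate posters and likely admirers are abundant
    targets_present: bool   # likely targets are abundant


# The three hypothetical conversational spaces described for Figure 1.
SPACES = [
    Space("A (mixed)", admirers_present=True, targets_present=True),
    Space("B (hater-rich)", admirers_present=True, targets_present=False),
    Space("C (target-rich)", admirers_present=False, targets_present=True),
]


def more_hate_expected(space: Space, motive: str) -> bool:
    """Return whether a space should draw more hate posting under a given motive."""
    if motive == "harm":
        # Rival, conventional assumption: posters go where targets will see them.
        return space.targets_present
    if motive == "approval":
        # Social approval assumption: posters go where admirers can respond.
        return space.admirers_present
    raise ValueError(f"unknown motive: {motive}")


for motive in ("harm", "approval"):
    favored = [s.name for s in SPACES if more_hate_expected(s, motive)]
    print(motive, favored)
```

Space A is favored under both motives, so only the contrast between B and C discriminates between the harm-based and approval-based accounts.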

This hypothesis invokes the second proposition and the construct of individuals’ expected or sufficient levels of social approval, and the central assumption that hate posters post hate in order to acquire social approval for their hate messaging. It suggests that hate posters behave in more extremely hateful ways in response to a deficit of approval signals. Their choice of abnormally hateful messages as an approval-getting strategy is not unreasonable if they have observed that more extreme messages tend to get more approval (as demonstrated by Frimer et al., 2023).

At first glance, the hypothesis might appear to be antithetical to H1. H1 predicts that haters post more hate when they experience an abundance of social approval for their previous hate messages; H3 predicts that haters post more hate when they experience a deficit of social approval for their previous hate messages. The predictions are not mutually exclusive. Both can be true, across individuals, or even for the same person but at different times. Different kinds of people are also more sensitive to one condition or the other, a notion that is consistent with studies connecting narcissism to hate posting online, and to dissatisfaction with the number of Likes one’s Facebook postings get (Carpenter, 2012; Zell & Moeller, 2017).

The next hypothesis extends the principle of insufficient attention and approval into a specific social media phenomenon: deplatforming, or account suspension. When one’s social media postings violate a platform’s rules (as online hate often may), a platform can cancel that individual’s account on that platform, temporarily or permanently. Being banned from a platform and unable to post is certain to thwart acquisition of approval for one’s messages, with one or two exceptions, as follows:

Account suspension is one form of content moderation in social media, a topic with its own abundant literature and controversies (e.g., Chandrasekharan et al., 2017). Relevant to this hypothesis are studies showing that account suspensions actually backfire and increase hate posters’ output and extremity (Buntain et al., 2023); “content and account bans could have galvanizing effects for certain extremist actors who view the sanction as a badge of honor” (Siegel, 2020, p. 77), a badge that they display by proclaiming their martyrdom to others.

Detailed and compelling research by Mitts (forthcoming) concerning cross-platform effects of account suspension is consistent with H3. Mitts examined the content and activity from users who had accounts on both Twitter and Gab, who posted hate content, and who became banned from Twitter. Using computational message classifiers, analyses indicated that account suspension on Twitter was “associated with a significant (17%) increase in hate speech on Gab” (p. 4) compared both to their postings on Gab before their Twitter suspension, and on Twitter in the time leading up to the suspension.

Moreover, Mitts’ analyses reflect that users banned from Twitter capitalize on the banning in order to garner approval. Gab users who were suspended on Twitter commented within Gab about Twitter (often in vehemently negative terms; see Mitts, forthcoming, Table 3), more so than those who had accounts on both platforms but who had not been suspended on Twitter. The topic of Twitter was not the exclusive focus of their hate messages; banned users also posted significantly more anti-Black, anti-Jewish, anti-Muslim, anti-Latinx, anti-Asian, anti-immigrant, anti-LGBTQ+, and misogynistic comments as well.

Analyses of deplatforming discussion spaces, rather than users, show somewhat similar results. Reddit terminated several “subreddits” that were chronically hateful. Sixteen percent of their former posters left Reddit, another 16% stayed and posted in other subreddits but reduced their toxicity by 6.6%, but another 5% of users increased their toxicity by 70% (Cima et al., 2024).

The next hypothesis incorporates the construct of social disapproval. It specifies conditions under which disapproval indirectly affects a hate poster, the direction of the effect, and several critical contingencies moderating the effect.

This hypothesis is multiply-contingent on the presence of social disapproval messages as well as approval related to the exchange of hate messages and their replies. If online hate posters are affected primarily by approval messages from others, negative responses from yet other users would not be expected to affect hate posters directly. However, if there are sympathetic witnesses to one’s hate message and negative (presumably victims’ or bystanders’) response(s) to it, it is likely to stimulate epicaricacy, the overt responses from which may appear as signals of social approval and/or piling on the target, which gratifies the original hate poster. In this context, the greater the intensity and negative emotion expressed as social disapproval by critics, the greater the social approval peers may provide to the hate poster. This in turn leads the poster to replicate or magnify expressions of hate. This is, in a sense, an important hypothesis for a social approval explanation of online hate: If hate posters magnify hate in response to victims’ disapproval messages, alone, then hate messaging is trolling or sadism performed in order to disturb its victims, rather than a function of social approval messages that follow on.

Research that directly tests the effects of disapproving messages posted in response to hate messaging is rare. In one potentially related study (Munger, 2017), a message objecting to their language was sent to actual Twitter users whose tweets included the word, n***er. When that message appeared to have been sent by a white-skinned character, a long-term reduction in hate posters’ use of the word followed. However, when the same message was sent by what appeared to be a dark-skinned character (more likely to represent a victim), there was an increase in hate posters’ use of the word over several weeks. It is not discernible from Munger’s report if the effect was mediated by social approval from peers. That element is necessary to distinguish the social approval hypothesis from reactance formation. Within social approval theory, enraging victims is not a goal but rather the means to an end, the end being earning social approval from those who express enjoyment at victims’ negative expressions.

This hypothesis not only requires negative responses but also that the negative responses appear in the presence of a hate poster’s overt admirers. Sometimes hate posters can tell whether peers are present in an online space by seeing what has been written before, and what has received social approval (e.g., Shmargad et al., 2022). At other times, in a target-rich environment, for instance, they may not. Under such circumstances, should there be sufficient motivation or anticipated gratification for a hate attack to take place at all? H2 predicted there should not, but an exception may be found when hate posters use other means (including certain digital affordances) to communicate their hate messaging to audiences at another time or in an alternative channel in order to evoke social approval, as reflected in the next hypothesis.

There are at least three strategies by which hate posters can report their hate messages (and victims’ disapproval) in order to focus others on those messages and garner their approval, even when the others did not witness the conversation in which the messages originally appeared. The strategies defy time and space so to speak, in that they recreate to some extent the experience of witnessing events that may have taken place in a different thread, on another platform, and/or at another time. One way is simply by telling others what one said and what response occurred. Having “owned” one’s opponents (Hartley, 2023) is a commonplace boast, presumably uttered to attract social approval. Another strategy is available when hate messages reside on an asynchronous communication platform, the location of which a hate poster can share with a hyperlink pointing to the event (Rae et al., 2024; Stringhini & Blackburn, 2024).

When hate postings take place in real time, hate messengers have used a cross-platform strategy by which they alert others congregating on one platform about their hate activity (and victims’ responses) on another. As mentioned above, Stringhini and Blackburn (2024) describe numerous cases in which hate messengers initiate a message thread on one channel (e.g., 4chan) and organize collaborators to attack a victim elsewhere, such as a YouTube page or a Zoom meeting. Hate messengers report back on 4chan what upsetting messages they posted to the target, and targets’ aggrieved responses. This kind of showing off sometimes continues in the 4chan thread even after the attack has concluded, in what Stringhini and Blackburn call a “wrap up/celebration” phase. According to Stringhini and Blackburn (2024, p. 259), “attackers even include screenshots from the secondary platform showing their contributions to the attack itself,” that is, they take pictures of their aggression on one platform, and post them in a different platform where other users see and admire them. Similar maneuvers are reported in cyberbullying research, where a “perpetrator may get some peer rewards through sharing their abusive actions (most obviously, in picture/videoclip bullying), thus amusing others in their gang and constructing the wider audience often involved in cyberbullying” (Smith et al., 2008, p. 383). These phenomena make a strong case for social approval as a driver of online hate as opposed to its motivation primarily to distress victims.

Discussion

This essay argues that online hate is driven by the adulation one garners from other social media users. Seeking and acquiring social approval are critical in the propagation of online hate, and may be more influential than individuals’ desires to harm ostensible victims. The serious negative effects on the victims of online hate are not to be underestimated; their reality has been a proper impetus for the study of online hate and efforts to moderate its presence on social media. Notwithstanding their importance, the present work considers the deliberate instigation of harm a tragic, subordinate goal of online hate posting.

This premise leads to propositions about how the securing of approval affects hate messaging; how approval provides positive reinforcement in some contexts, and negative reinforcement in others. It predicts that some activities that are conventionally undertaken to deter online hate, through counterspeech or account suspension, may actually encourage it, when the actions garner a hate poster more signals of social approval by other observers in the immediate communication space, or through haters’ narratives and digital evidence of the misdeeds they communicate across time and platforms. Some hypotheses appear as abstractions, while others appear to be substantiated, at least indirectly, by extant empirical findings.

It is not a grand theory and it has no unique undefined terms. Its aim is to organize and relate important factors in such a way that may untangle potentially paradoxical patterns that have been and may be observed, with the potential to improve the explanation and prediction of online hate at least in part. It offers a communication, interaction-based explanation of the social dynamics of online hate production. Its factors may provide necessary and sufficient conditions with which to predict public online hate messaging, or they may covary or interact with other influences, in ways that future research can assess, along with or in place of the predominantly psychological and deviance-related approaches that have dominated the field.

Theoretical Value

One may ask how this approach aligns with existing theories that address online hate production. Research often calls on social identification theory (SIT) to explain the etiology of online hate (e.g., Oksanen et al., 2014): Those who identify with an ingroup denigrate members of an outgroup, or the entire outgroup, to make the ingroup distinctive and “evaluatively superior to an outgroup” (Goldman et al., 2014, p. 819; Tajfel & Turner, 1979). This is particularly likely if a group perceives that its status is threatened (Douglas et al., 2005), a frequent theme of “the great replacement theory” that echoes in many hate postings (Hobbs et al., 2023; Jasser et al., 2023). SIT also explains the forms of expression that become normative in online communities (Törnberg & Törnberg, 2024). Nothing in these well-established patterns is precluded in a social approval model of online hate propagation.

Neither, however, is SIT necessary or sufficient to predict the effects by which garnering signals of social approval are proposed to promote greater hate posting. Individuals may express hate about others regardless of the etiology of their attitudes toward them, whether based in group identification or not. The present model focuses on approval messages between hate posters and their audience members; SIT is silent with regard to interactions between ingroup members or their concern for one another’s good graces. Those are interpersonal concerns, beyond the boundary of SIT, which holds that individuals enhance their esteem by means of social comparisons between groups, not inter-member interactions (Tajfel & Turner, 1979).

Nevertheless, beyond the etiology of hate expressions, intergroup discrimination and denigration are so salient in many online hate contexts that advocates for SIT might consider identification to be indispensable to the social approval dynamic. It may, one might argue, matter greatly to hate posters whether they perceive the source of an affirming response to their hate posting to be an ingroup or outgroup member: If and only if an affirmation appears to be from an ingroup source is it perceived as social approval, and does it bestow reward value, encouraging further hate posting. If social identification moderates the effect of social approval that way, it would provide a critical boundary condition to the theory, and be a defining characteristic of a “hater-rich environment” proposed in H3 above. This becomes a vital question for research.

Resolving the question will necessitate careful research designs. Simply asking hate message posters (or observers) whether an affirming response to a message came from an ingroup or outgroup source is insufficient, as it will be prone to a tautological bias. Experiments involving variations in apparent group membership and affirmation signals may be stronger (e.g., Wang et al., 2009). Potential approval signals, in both normal and greater-than-normal numbers, must appear to be generated by (a) ingroup members; (b) outgroup members, for example, those who are the very targets of the original hate message (who nevertheless seem to praise an antagonistic posting); (c) socially indistinguishable individuals (neither in- nor out-group); and (d) a mixture of persons, a through c, to see if haters do not care who “likes” them, as long as they get liked by many people, regardless of who they are. The impact of these factors may also differ contextually, depending, for instance, on the perception of intergroup threat, or more generally, whether a performance is more sincere or less sincere, in Goffman’s terms.
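The design outlined above can be sketched as a set of experimental cells. The following is a minimal illustration only, assuming that the two factors (volume of approval signals and apparent source of those signals) are fully crossed; the variable names and condition labels are hypothetical and are not drawn from any cited study.

```python
from itertools import product

# Hypothetical factor labels (illustrative assumptions, not established terms).
APPROVAL_VOLUME = ["normal", "greater_than_normal"]
APPROVAL_SOURCE = [
    "ingroup",            # (a) apparent ingroup members
    "outgroup",           # (b) apparent outgroup members, e.g., the targets
    "indistinguishable",  # (c) neither in- nor out-group
    "mixed",              # (d) a mixture of a through c
]


def design_cells():
    """Return every volume-by-source condition, assuming a fully crossed design."""
    return [
        {"volume": volume, "source": source}
        for volume, source in product(APPROVAL_VOLUME, APPROVAL_SOURCE)
    ]


if __name__ == "__main__":
    # List the conditions to which participants could be randomly assigned.
    for number, cell in enumerate(design_cells(), start=1):
        print(number, cell["volume"], cell["source"])
```

Under these assumptions the design yields eight cells. Whether every cell is needed, or whether the mixed-source condition should be crossed with volume at all, is a choice for the researcher rather than something the model dictates.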

Aside from social identification, some hate-related exchanges may foster interpersonally individuated relationships, theoretically beyond the reach of SIT. In Stormfront’s white nationalist discussion boards, for instance, some members exchange individual birthday greetings (De Koster & Houtman, 2008). Interpersonal relationships offline intensify when partners discuss people they mutually dislike (Bosson et al., 2006), and online, people come to know and like each other, sometimes intensely, based on interpersonal characteristics they discover while exchanging posts within large-scale virtual communities (e.g., Baym & Ledbetter, 2009). Online Likes and affirming verbal reactions levy nearly twice the effect on social media users when they come from identifiable friends than from others (Carr et al., 2016; Carr & Foreman, 2016). Just as haters may be friends, friends lead each other to be haters (see Burston, 2024). A well-known online strategy by which white supremacists groom neophytes is first by befriending them, and then introducing hate strategies; for one young man befriended through an online gaming platform, “These people became [his] friends. They talked to him about problems he was having at school, and suggested some of his African-American classmates as scapegoats” (Kamenetz, 2018, n.p.; see also Simi & Futrell, 2015). The roles of interpersonal or hyperpersonal relations and their influences on social approval in the context of hate posting should be explored (Walther & Whitty, 2021). To discern the roles of social identification and/or interpersonal influences on the impact of social approval messages, research focusing on different sources of social approval is clearly needed.

Norms are also exemplified in the relationship between hate messaging and social approval online. Research shows how individuals emulate other users’ hate messaging in response to observing normative patterns in the Likes that other hate posters received (Shmargad et al., 2024). Recent work shows normative influences on online incivility (name-calling) both within and between two identity strata on Twitter (elected politicians and the public), indicating contagion effects and “conversational momentum” (Barbati et al., 2024). In fact, one experiment found that when otherwise unhateful individuals are randomly assigned to online conversations with high levels of trolling messages, and are in a bad mood, they post significantly more extreme messages, including profane and racist comments (Cheng et al., 2017).

Other theoretical approaches may also inform and extend the principles presented above. If the need for social approval may be considered a human drive, predictions can be made that the escalation and de-escalation of hate posting should appear cyclical over time. Hull’s (1943) approach to operant learning theory includes drive as a motivational factor that affects the likelihood that a behavior is enacted in the presence of probable reward. When one’s drive elevates (because it has not been satisfied in some time), and if one perceives the presence of probable and obtainable reward, an individual enacts behavior that has previously accrued the reward. If successful, it reduces the drive state, and the extremity of behavior recedes for some time. This approach may inform the contention that hate posters seek spaces where social approval is more abundant, that there is a motivational aspect to a state of insufficient approval, and the mechanism of social approval is reinforcement.

Hull also offers conditions that lead behaviors to intensify, however. If, at the peak of one’s drive, one’s behavior garners unusually high levels of reward, it “will evoke more rapid, more vigorous, more persistent, and more certain reactions” (Hull, 1943, p. 133). If there is a need for social approval, and one successfully clamors to satiate it, the need and the clamoring recede. If the clamoring begets more and better approval than in the past, however, one will clamor louder and longer the next time one is needful. Other recent work discusses “reinforcement learning” in response to affirmations that are conveyed via affordances of social media (Turner et al., 2024), by which digital signals of “‘social rewards’ provide the ingredients for achieving affiliation, [and] make us feel good in and of themselves” (p. 3).

Other Areas for Future Research

A social approval explanation of online hate raises research issues that may enhance the precision of its constructs and propositions, their operations, and their boundary conditions. Individual differences may affect the scope of the dynamics this work has posited, and they warrant investigation. The proposed relationships are likely to adhere within a “differential susceptibility to media effects model” (Valkenburg & Peter, 2013): Some people will never express online hate messages, and the experiences, backgrounds, upbringing, or forces that originally lead an individual to do so are likely to interact with the factors proposed in this work.

Other individual differences may also pertain. The need for social approval relates to the use of social network sites (Kalaman & Becerikli, 2021), while narcissism corresponds with efforts to get Likes online and how important it is to get them (Zell & Moeller, 2017), with online approval-seeking behavior (Bergman et al., 2011), and with the production of online hate (Carpenter, 2012). Relevant cultural differences in terms of online approval-seeking (Yue & Stefanone, 2022) may also play a role, as may variations in the social acceptability of ethnophaulisms (derogatory slurs, or references to physical or character traits; Reid, 2012).

Assessing other motivations for hate posting, and their potency in relation to social approval, should also receive attention. This theory has prioritized social approval without explicating secondary goals and influences. Sadistic intent to harm targets psychologically, entertainment, and other factors deserve examination nevertheless. The prominence of alternative goals among those who post hate is likely to vary contextually, if not universally. A beneficial outcome of future research would be to verify or to falsify the preeminence of social approval as the driver of hate messaging in relation to other factors.

Differences among various forms of social approval signals may exist (see Sumner et al., 2020) with respect to their effects on hate messaging. Recent research has shown that Likes provide the effects predicted in H1; retweets have stronger effects. Other forms of engagement had none, including “quote tweets” (in which users forward a hate message with their own comment appended) and replies (comprised entirely of a verbal response; Jiang et al., 2023). This may be because a verbal reply can negate, rather than affirm, a hate message (Metzger et al., 2021; Weigel & Gitomer, 2024). Research should investigate how alternative forms of reply confer (dis)approval of hate messages: whether they complement them via additional verbiage, compliment them via the meta-messages conferred by Likes and retweets, or negate them.

Content moderation practices that can remove hateful postings or suspend hateful users naturally affect the production of hate and its responses. Hate posters, cognizant of differences in content moderation, imbue their messages with secret codes and innuendo to evade detection. They even at times carry on conversations across multiple platforms simultaneously, to avoid restrictions that are enforced on some platforms but not on others (Rae et al., 2024). How these parallel conversations affect levels of expressed hatefulness in order to evade moderation, and the effects of social approval signaling, will comprise complex but important research.

The role of anonymity and its relationship to garnering approval for hate is also a complex issue. Many commentaries describe internet communication as anonymous and many point to anonymity as a factor in the production of hate messages (Jasser et al., 2023; United Nations, 2023). However, most social media spaces are not literally anonymous. Users’ account names or nicknames persist across time and provide identity and means to accrue social approval markers. Even when extremists’ accounts get suspended and they lose their collection of followers, they often obtain new identifiers quickly; “a variation of the previous username is created within hours . . . .The user’s first tweet is often an image of the Twitter notification of suspension, proving that they are the owner of the previous account. . .which allows them to rebuild their audience of like-minded followers” (Vidino & Hughes, 2015, p. 24). Among the few platforms offering true anonymity, 4chan also offers the ability to use nicknames, but using them or remaining anonymous makes no significant difference in hate messaging (Stringhini & Blackburn, 2024). In a sense, anonymity might be the enemy of social approval. Empirical studies that have tested it have failed to find significant effects of anonymity on hate messaging (Douglas et al., 2005; Munger, 2017; Rösner & Krämer, 2016; Trifiro et al., 2021). Douglas and McGarty’s (2001) experiment found that individuals logged in with their names used more stereotypical language to describe targets than did those who were anonymous. Additional research is needed given many variations of pseudonymity, anonymity, and identification online.

Research should explore the cumulative effects over time that the hypothesized actions may produce. If the patterns of hate escalation are cyclical, as was considered above, we may predict homeostatic levels of hate expression online over time. Alternatively, the pattern of hate, reinforcement, and magnification may lead to attitude changes among haters over time, leading to greater extremism. When “an individual’s . . . messages garner numerous Likes and reinforcing comments from others—dozens or hundreds more indications of social approval than they could garner offline—we might predict a transformative shift in the individual’s views and identity” (Walther & Whitty, 2021, p. 129). This development has been documented in several accounts of online hate (Scrivens et al., 2020; for review, see Bright et al., 2022; Tong & DeAndrea, 2023). Even if hate posting is impelled by extrinsic reward, individuals may experience self-perception effects, and bring their attitudes to match their behavior (Bem, 1972).

The social approval model raises certain possibilities to mitigate or control online hate. The model implies some utility for “shadow banning,” by which social media platforms surreptitiously hide a user’s post from others (Jaidka et al., 2023), or hide Likes (Susilawaty et al., 2023). Consequently, a hate poster receives less social approval, without being banned and migrating. Although less approval might frustrate such users’ needs leading them to exacerbate hate messages at first, a long-term reduction of approval may extinguish the behavior. Other “soft moderation strategies” can also demote the exposure a message receives (Gillespie, 2018) and throttle social approval (Cima et al., 2024). Perhaps demoting the visibility of frequent hate-Likers may be more effective than banning frequent hate-posters. Research on “counterspeech” provides paradoxical predictions. Direct negative responses to hate messages from targets or bystanders, originally conceived to deter hate posters, more often benefit the targets of hate postings, who feel protected or defended (Tong & DeAndrea, 2023; Van Houtven et al., 2024). Such findings often appear in controlled experiments where no possibility of additional responses by haters exists. It remains to be seen whether counterspeech provokes others’ responses that support a hate poster, which in turn stimulate greater hate messaging. Additional research is crucial about whether and how counterspeech, from whom, in what form, and in front of what others, promotes or inhibits the repetition and intensification of hate overall.

Methodologically, research exploring online hate frequently relies on computational applications to naturally-occurring “big data.” It is important for future research of this nature to examine hate messages and responses of all kinds—social approval signals, counter-messages and their responses, additional social approval of the hate message, and the original author’s responses to both social approval and to counter-message(s). Although analyzing hate messages has definitional and measurement problems, efforts to harness artificial intelligence for effective content analysis hold promise (Li et al., 2024).

This essay has paid little attention to the harm that online hate messages cause. Research is warranted in large part because of those very serious effects on victims’ well-being (Tong et al., 2022; for review, see Bliuc et al., 2018), and the consequential intolerance and discrimination that echo from them throughout the internet and society (Hsueh et al., 2015).

Author’s Note

The author expresses his sincere gratitude for the stimulating suggestions by and exchanges with the anonymous reviewers, and those of Communication Research editor Steve Rains.

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author received no financial support for the research, authorship, and/or publication of this article.