Abstract

This study empirically examines opinion leader effects on the implementation of a mobile clinical information technology by physicians in an American community health system, using a fixed effect regression model. The model results suggest that opinion leader effects are statistically significant during this implementation process. Quantitatively, if opinion leaders increase their technology usage by 10 percent, the physicians who work closely with those opinion leaders increase their technology usage by about 3.2 percent, after controlling for physician individual-level fixed effects, time effects, working environment, and workload. This result has policy implications: a healthcare system that wants to promote the implementation of a new information technology, or a new mobile information technology, within its organization should leverage these opinion leader effects.

Keywords

Introduction

Research shows that e-health can reduce medical errors, save costs, and improve usability and convenience.1 However, the healthcare information technology (IT) adoption rate in the United States is low at both the physician office and hospital levels. Less than 30 percent of US physicians adopt e-health in their work, and less than 10 percent of US hospitals use a robust healthcare information system.2,3 Even as of 2014, the physician office e-health adoption rate in the United States was 74 percent on average at the national level, much lower than in other developed countries such as New Zealand, the Netherlands, and the United Kingdom, where it was 89 percent or more.3,4 The US healthcare industry has already recognized its inadequacy in this area.5 However, the healthcare industry in the United States has many special characteristics, including the fact that many physicians or physician offices are independent, or practice in facilities independent from the major healthcare systems or hospitals, even when they are associated with them. As a result, health organizations have little or no power to mandate the implementation of new IT by physicians, which makes promoting IT implementation and utilization among healthcare providers more challenging in the United States.

At the same time, interest in mobile technology applications has been rising in healthcare.6–9 One study found that 62 percent of surveyed clinicians expressed interest in using apps to view electronic health records (EHR).8 Another survey shows that 68 percent of surveyed junior physicians said that using clinical apps on smartphones saved them time in clinical activities.10 However, a 2013 survey of physicians indicates that nearly 60 percent are non-users of e-health.11 Although there are some studies on mobile technology usage or acceptance at work,12,13 there are few empirical studies of physicians' mobile technology usage or implementation behavior and the factors that affect it.14,15

Since the 1980s, a large number of technology acceptance model (TAM) studies have appeared in information systems as well as in healthcare.16–21 This study, however, takes a different angle from TAM research on technology adoption and implementation. Rather than using survey questions to investigate technology acceptance by physicians, we use a secondary data set to examine, from an objective perspective, physicians' technology usage behavior in a clinical setting.

In the United Kingdom, a study found that the most important factors affecting IT implementation in healthcare are human factors, not technology factors.22 Therefore, this study examines how social influence, or opinion leader effects, affects the implementation of a new mobile IT in healthcare, using secondary panel usage data from a community health system in the United States.

Social influence and opinion leader effects have long been examined by scholars in information systems, and their importance has been noted in medical research as well.23,24 In particular, the classical innovation diffusion study by Rogers23 emphasized that innovation diffusion is a process. When a new technology is introduced, people initially perceive using it as uncertain and risky, so many do not adopt it at the beginning. Instead, they may seek out others around them who have already adopted the innovation, such as early adopters or opinion leaders, which may help to reduce their uncertainty and result in subsequent adoption. Thus, the innovation diffuses from the early adopters or opinion leaders to their circle of acquaintances over time. Conceptually, opinion leaders are respected people who possess sufficient interpersonal skills to exert influence on others' decision-making.23,24 Empirically, there are many ways to identify opinion leaders in a population. We discuss this more specifically in the "Data" section.

This study investigates opinion leader effects on a mobile technology implementation because, if social influence is established as a strong factor, it is a policy-adjustable factor that can be leveraged to promote the implementation and utilization of the new technology. This is unlike factors such as the technology design or users' gender or age, which cannot be changed once the technology has been deployed by an organization. Thus, our hypothesis is that opinion leaders have a strong impact on technology usage by the physicians around them: the more opinion leaders use the new technology, the more the physicians around them use it. If so, policy makers or health organization administrators can use opinion leader effects to promote technology adoption or implementation within their organization, and thereby improve the quality of care through the new technology.

Data

Study context

The study site is a community-based health system located in Southwestern Pennsylvania, USA. In partnership with about 300 physicians and nearly 4000 employees, the community healthcare system offers a broad range of medical, diagnostic, and surgical services at two hospital campuses with over 500 beds, and at many small clinical practices distributed across the community. In June 2006, the health system deployed a Mobile Clinical Access Portal (MCAP), a wireless personal digital assistant (PDA)-based client-server solution providing physicians with online access to clinical data and about 24 clinical functions, such as searching patient information, reviewing patient medical histories, using electronic prescribing, placing lab orders, and checking lab results. Physicians of this health system were provided PDAs free of charge and were able to use the PDA to access the MCAP anywhere, anytime, at their convenience, such as in the office, at home, or while traveling. All MCAP use was optional because the system-wide electronic medical record (EMR) resides on a desktop computer system, which is the point of entry for all major clinical data.

Data

The Chief Information Officer of this healthcare system and his technical team provided four data sets for this study. The first data set included de-identified demographic information about 250 full-time physicians, comprising a unique coded physician ID and demographic data. The second data set included the clinical group practice information, which indicates which physicians practice together and which physicians are solo practitioners. The third data set contained MCAP usage data from their system computer server’s log files, and each record represents a certain application that was being used at a given time by a given physician. The fourth data set included physician ID, patient visit date, and four types of patient visit volume for each physician: inpatient visit, outpatient visit, physician office visit, and emergency room visit. This data set is from their system’s servers as well.

After merging the four data sets, it was necessary to exclude 58 of the 250 physicians because of missing demographic information or missing patient visit information, leaving 192 physicians in the merged file for this study. Since about 23 percent (58 out of 250) of the physician records were dropped due to incomplete data, we performed four two-sample t-tests between the dropped group and the remaining group, for the age, gender, specialty area, and patient visit variables, respectively, to see whether the mean values of those variables differ between the two groups. None of the t-tests is statistically significant, which means that the mean values for the dropped group are not statistically different from those for the remaining group. We therefore concluded that dropping these records should not affect our analysis or bias our model results.
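The attrition check described above can be sketched as follows. This is a minimal illustration under stated assumptions: the text does not say whether a pooled-variance or unequal-variance test was used, so the sketch uses Welch's unequal-variance form; the function name `welch_t` and the ages are hypothetical, not study data. It returns only the test statistic and degrees of freedom; a p-value would additionally need a t-distribution routine from a statistics package.

```python
import math
from statistics import mean, variance


def welch_t(sample_a, sample_b):
    """Return (t statistic, Welch-Satterthwaite degrees of freedom)."""
    n_a, n_b = len(sample_a), len(sample_b)
    # statistics.variance is the sample variance (divides by n - 1).
    se_sq_a = variance(sample_a) / n_a
    se_sq_b = variance(sample_b) / n_b
    t_stat = (mean(sample_a) - mean(sample_b)) / math.sqrt(se_sq_a + se_sq_b)
    df = (se_sq_a + se_sq_b) ** 2 / (
        se_sq_a ** 2 / (n_a - 1) + se_sq_b ** 2 / (n_b - 1)
    )
    return t_stat, df


# Made-up ages for illustration only (NOT the study data).
dropped_ages = [41, 52, 47, 60, 55, 49]
retained_ages = [44, 50, 58, 46, 53, 51, 48]

t_stat, df = welch_t(dropped_ages, retained_ages)
print(f"t = {t_stat:.3f}, df = {df:.1f}")
```

The same function would be applied once per variable (age, gender coded 0/1, specialty category coded 0/1, and patient visits), giving the four tests mentioned in the text.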

Ideally, we would examine technology usage behavior for every specialty area separately. However, because this is a community health system of limited size, many specialty areas had only one specialist or one practice (such as Oral and Maxillofacial Surgery, Nephrology, and Psychology). Also, the MCAP features do not depend much on medical specialty, just as the online Blackboard system is not designed separately for every subject in a university. Therefore, after discussion with the health system decision makers, we divided the 30 medical specialty areas into two general categories, General Practitioners and Specialists, in order to control for how specialty areas may affect physicians' implementation of MCAP. The General Practitioner category includes internal medicine, family practice, and pediatrics, and the Specialist category includes the remaining specialty areas. Furthermore, since age is not believed to have a linear impact on technology usage behavior, we divided the physicians into three nominal age groups, as prior literature has done: 45 years and below (the youngest physician being 30 years old), 46 to 55 years, and 56 years and above.25
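The two recodings above are simple enough to state as code. The following is a minimal sketch; the function names and the label strings are illustrative assumptions, while the category membership and age cut-offs are taken from the text.

```python
# Specialty areas that the text assigns to the General Practitioner category.
GENERAL_PRACTICE = {"internal medicine", "family practice", "pediatrics"}


def specialty_category(specialty):
    """Collapse a specialty area into one of the two general categories."""
    if specialty.strip().lower() in GENERAL_PRACTICE:
        return "General Practitioner"
    return "Specialist"


def age_group(age):
    """Assign one of the three nominal age groups."""
    if age <= 45:
        return "45 and below"
    if age <= 55:
        return "46 to 55"
    return "56 and above"


print(specialty_category("Family Practice"))  # General Practitioner
print(specialty_category("Nephrology"))       # Specialist
print(age_group(30), "|", age_group(50), "|", age_group(78))
```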

As scholarly studies have discussed, there are many ways to identify opinion leaders in an empirical study, and different identification methods may affect the study results differently.23,26–28 In this study, opinion leaders for MCAP (for simplicity, we call them opinion leaders from now on, which does not imply that they are opinion leaders for everything) were identified by the health system administration based on their dynamic observations, an approach referred to as the informants' rating method.23 In our case, the health system's administrators identified the physicians who were opinion leaders for the MCAP technology. Those physicians were enthusiastic drivers who encouraged the community health system to adopt and deploy the MCAP, and they were also early users (they received the new technology in the first 2 months) and frequent users of the MCAP. The rest of the physicians in the health system received the PDA gradually over time after the third month. Such exogenous identification of opinion leaders avoids the problems that arise when users are asked to nominate their opinion leaders in a survey, as in prior studies, which provides a unique opportunity for our study.26–28

We also need to clarify the social structure of this community system in order to understand who is influenced by the opinion leaders. This is a typical American community health system, which means that many clinics are spread throughout the community, miles away from each other. Those clinics are also financially autonomous and independent entities. Physicians in the clinics are loosely associated with the hospital, and they go to the hospital only to perform surgeries or to visit their patients after surgery. Physicians rarely see physicians from other clinics because of the physical distance and their irregular visits to the hospital campus. Most of their interactions are with physicians from their own clinics. Thus, we treat the medical practices (clinics) as the basic social influence groups and assume that opinion leader effects occur within groups, not across groups. This implies that physicians are influenced only by the opinion leaders from their own group practice, not by opinion leaders from other practices.

Finally, there is no indication of a group formation endogeneity problem, because these group practices are based on medical specialties, not on interest in IT or mobile technology. Furthermore, the group practices were formed long before the MCAP was deployed. Thus, this definition of social influence groups provides an unambiguous theoretical foundation for identifying any peer effect (opinion leader effects being one type of peer effect), as discussed in the seminal paper by Manski.29

In summary, the MCAP data sets provide several advantages for our study of opinion leader effects on physicians' mobile technology implementation. First, the PDAs and MCAP system were provided to the physicians free of charge by the community health system, with no incentives for using them; therefore, there was no user acquisition cost that might confound the technology usage decision through users' heterogeneous socioeconomic situations. Second, the MCAP system was designed with a straightforward menu-click interface, so little learning was required and non-usage should not be due to difficulty of use. Third, the use of MCAP was not mandated; it was an optional way for physicians to access part of the EMR features in a mobile version. Whether physicians used this mobile technology or not would therefore not affect the formal EMR system or the physicians' daily work. Additionally, since it is a mobile technology, physicians or opinion leaders may use it as they move around within their clinics, such as when switching between exam rooms, having lunch in the kitchen, or chatting in the lounge. It is therefore reasonable to assume that physicians are more likely to see their colleagues or opinion leaders using this mobile technology than a desktop computer application, and that they may also talk about it and learn about each other's use of MCAP.

Descriptive statistics

Because this was a real-world project for an optional-use technology, there was no clear timeline or deployment plan specifying when each physician would receive a PDA. In general, the deployment was somewhat random, depending on physicians' personal interest or the technical staff's schedule. Thus, the panel data that we received are unbalanced.

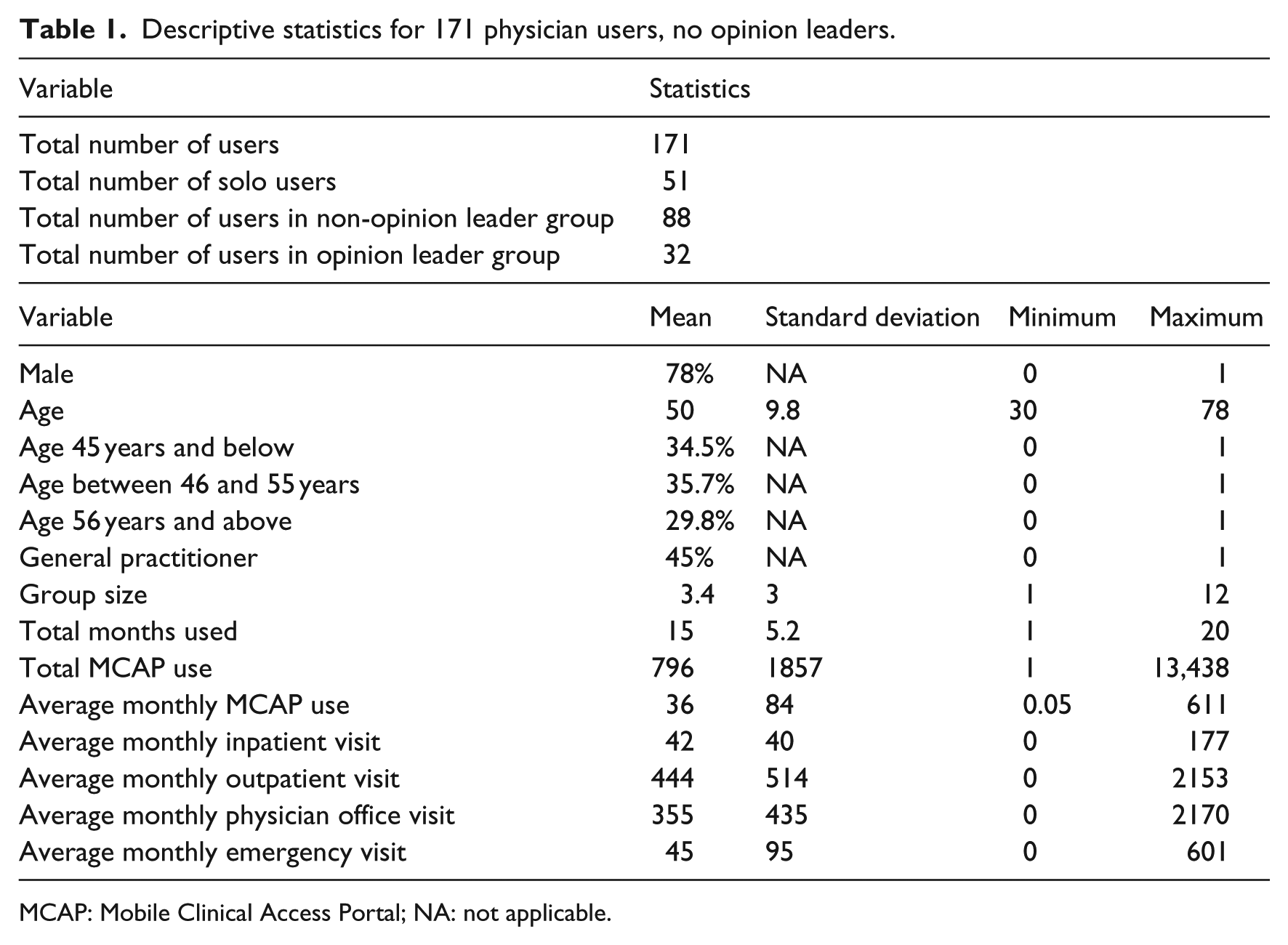

Descriptive statistics of the 171 physicians (not including the 21 physicians who are opinion leaders of MCAP) and their usage are presented in Table 1. We recognize that our data set is small, but its size is typical of a community health system in the United States. The descriptive statistics show that the majority of physicians are male (78%). Ages range from 30 to 78 years, with an average of 50 years. The three nominal age groups have approximately similar membership. There are fewer general practitioners (45%) than specialists (55%).

Table 1. Descriptive statistics for 171 physician users (excluding opinion leaders).

MCAP: Mobile Clinical Access Portal; NA: not applicable.

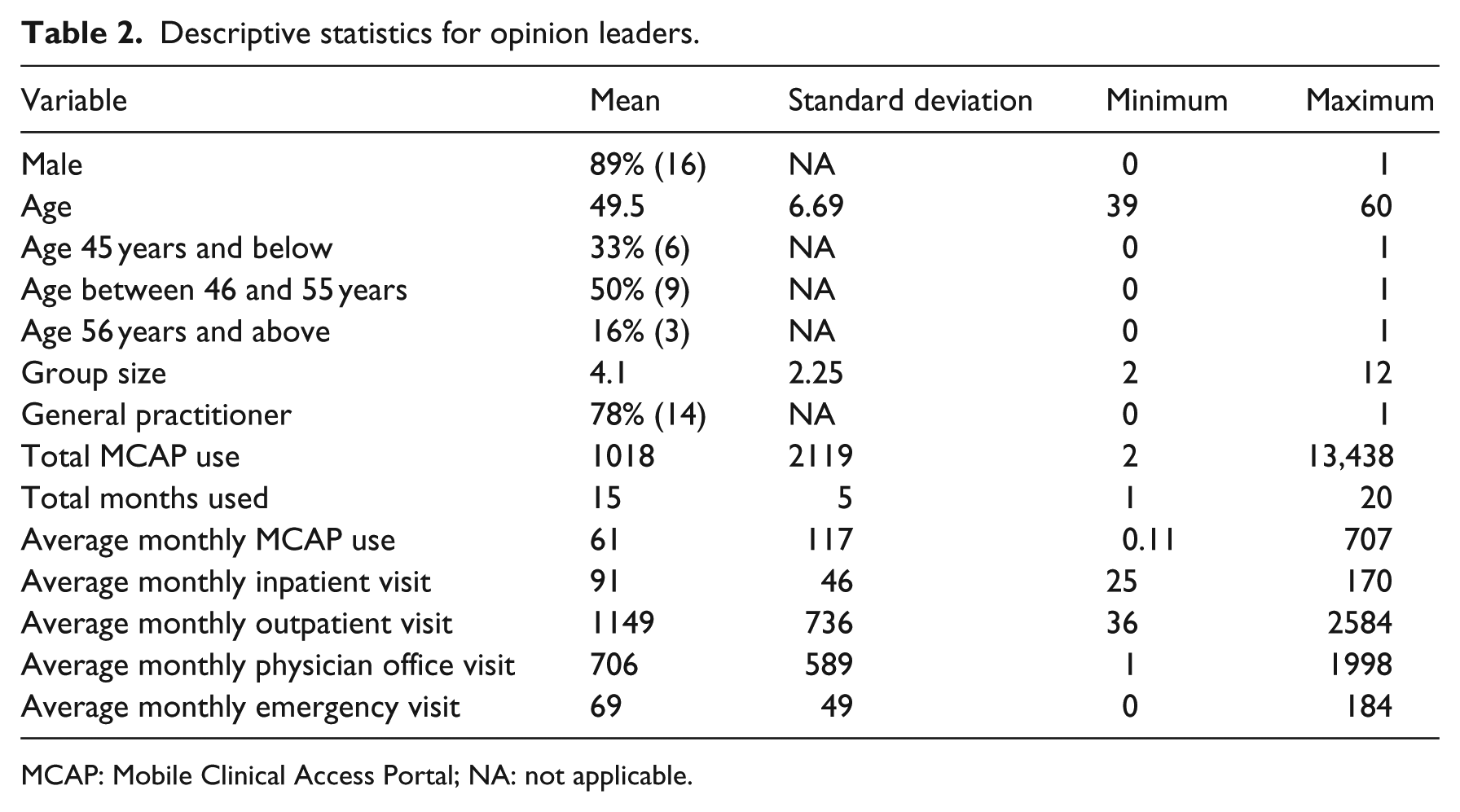

Although we have usage data at the level of individual instances, we study technology use behavior at the monthly level because the data are sparse at the daily and weekly levels. One month is one time period in this study. Therefore, we aggregate the physicians' MCAP usage data and patient visit data at the monthly level for the descriptive statistics. Average monthly MCAP usage is 36 times per month for general (non-opinion leader) physicians and 61 times per month for opinion leaders.
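The monthly aggregation can be sketched as below. This assumes that "usage" is the count of server-log records per physician per calendar month, which is consistent with usage being reported in "times per month" but is not spelled out in the text. The record layout, physician IDs, and timestamps are hypothetical.

```python
from collections import Counter
from datetime import datetime


def monthly_usage(log_records):
    """Count log events per (physician_id, 'YYYY-MM') pair.

    log_records: iterable of (physician_id, timestamp) tuples, one per
    logged application use.
    """
    counts = Counter()
    for physician_id, timestamp in log_records:
        counts[(physician_id, timestamp.strftime("%Y-%m"))] += 1
    return counts


# Hypothetical log records for illustration only.
records = [
    ("P001", datetime(2006, 6, 3, 9, 15)),
    ("P001", datetime(2006, 6, 17, 14, 2)),
    ("P001", datetime(2006, 7, 1, 8, 40)),
    ("P002", datetime(2006, 6, 20, 11, 5)),
]

usage = monthly_usage(records)
for key in sorted(usage):
    print(key, usage[key])
```

Physician-months with no log records do not appear in the result; whether such months should enter the panel as zeros depends on when each physician received a PDA, which the unbalanced deployment makes physician specific.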

The descriptive statistics for opinion leaders are presented in Table 2. There are 21 opinion leaders, 3 of whom are solo practitioners; these 3 are not discussed in this study because solo opinion leaders do not influence anybody in the health system. We also performed three two-sample t-tests between opinion leader groups and non-opinion leader groups, for the age, gender, and specialty area variables, to examine whether the means of those variables differ statistically between the two groups. None of the t-test results was statistically significant. That is, the mean values of those three variables are not statistically different between opinion leader groups and non-opinion leader groups, which indicates no bias in the demographic composition of either type of group.

Table 2. Descriptive statistics for opinion leaders.

MCAP: Mobile Clinical Access Portal; NA: not applicable.

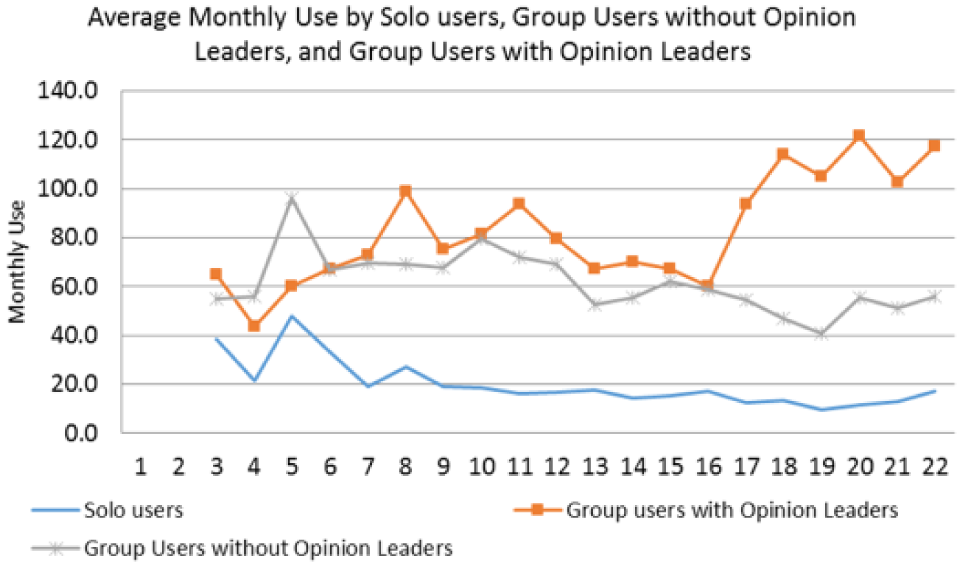

Figure 1 shows the average monthly usage over 22 months by three types of users: solo physician users, group physician users with opinion leaders, and group physician users without opinion leaders. Solo practice physicians have the lowest average monthly usage of the MCAP among the three groups, group practice physicians with opinion leaders have the highest usage most of the time, and group physicians without opinion leaders fall in between. This indicates that, besides opinion leader effects, a general peer effect may also exist.

Figure 1. Average monthly technology usage by three types of physician users.

Empirical model and results

Empirical model

Based on Figure 1 and our observation of this community healthcare system, peer effects, meaning effects from general colleague physicians rather than from opinion leader physicians, likely exist as well. Hence, besides opinion leader effects (having opinion leader physicians in the same practice), some physicians may be exposed to peer effects (having general colleague physicians in the same practice), relative to solo practice physicians. We do not want to assume that those peer physicians have no impact on the focal physician's technology usage, or that physicians who practice with general colleagues (non-opinion leaders) behave the same as physicians who practice solo. Thus, in order to estimate the opinion leader effects more accurately, we control for peer effects in our model.

Furthermore, the physicians in this health system rotated among different clinical settings: inpatient, outpatient, emergency room, and office visits. The number of patient visits each physician had in each time period also differs. Thus, the number of patient visits in each clinical setting should be controlled for in our model when examining opinion leader effects on new technology usage.

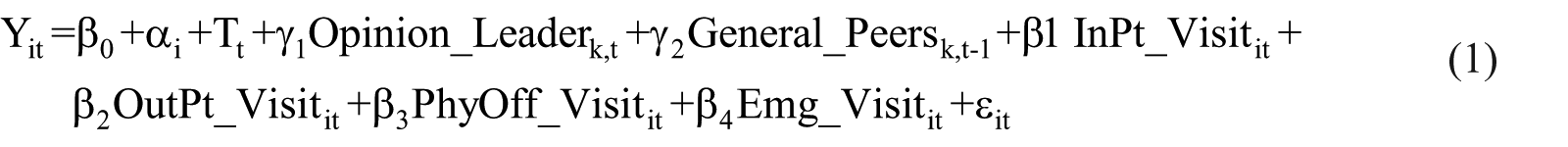

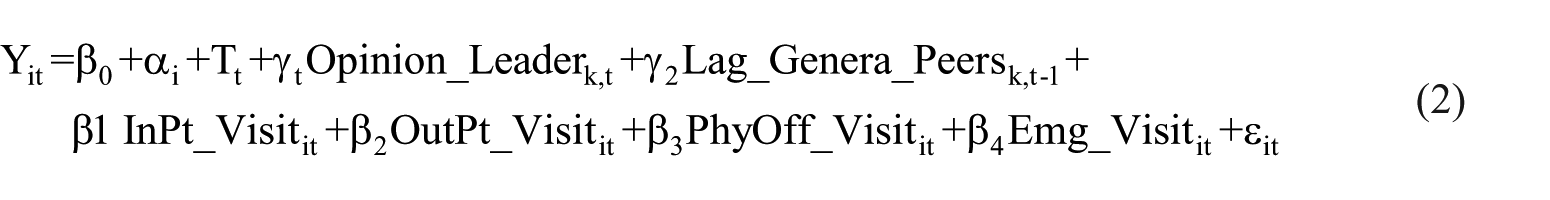

We construct the following fixed effect regression model to examine the correlation between the technology usage of physicians and that of their opinion leaders over time, controlling for general peer effects, the number of patient visits a physician had in different clinical settings, and individual-level fixed effects. The fixed effects capture all time-invariant variables, including observable characteristics (gender, age, and education) and unobservable characteristics (personal interests or technology preference).30

where Y_it is the monthly usage of physician i at time period t, β_0 is the model intercept, and α_i is the fixed effect of physician i, including both observable and unobservable individual-level time-invariant characteristics. We also include the time trend dummies, T_t, to control for time effects. ϵ_it is the model error term. Opinion_Leader_k,t represents opinion leader effects; we use the total technology usage by the opinion leaders within group k at time period t as the proxy for the opinion leader effects on physician i in group k. General_Peers_k,t represents peer effects; we use the total technology usage by the peers within group k at time period t as the proxy for the peer effects on physician i in group k. InPt_Visit_it, OutPt_Visit_it, PhyOff_Visit_it, and Emg_Visit_it are the numbers of patient visits physician i had at time period t in the inpatient, outpatient, physician office, and emergency room settings, respectively. Note that some groups have both opinion leaders and peers, and some groups have only one of the two. Some physicians practice solo, which means they are exposed to neither opinion leader effects nor peer effects.
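Written out from the definitions above, Model (1) can be sketched in LaTeX as follows. This is a reconstruction under stated assumptions, not the published equation: the coefficient labels β_1 to β_6 are introduced here for illustration, and logs are applied to all usage and visit variables because the results are reported for a model with logs taken on both sides. How zero counts were handled before taking logs is not stated.

```latex
% Requires amsmath. Coefficient labels \beta_1..\beta_6 are assumed labels,
% and logging the visit counts is an assumption of this sketch.
\begin{equation}
\begin{aligned}
\log Y_{it} ={}& \beta_0
  + \beta_1 \log \mathit{Opinion\_Leader}_{k,t}
  + \beta_2 \log \mathit{General\_Peers}_{k,t} \\
 &+ \beta_3 \log \mathit{InPt\_Visit}_{it}
  + \beta_4 \log \mathit{OutPt\_Visit}_{it}
  + \beta_5 \log \mathit{PhyOff\_Visit}_{it}
  + \beta_6 \log \mathit{Emg\_Visit}_{it} \\
 &+ \alpha_i + T_t + \epsilon_{it}
\end{aligned}
\end{equation}
```

In this form, β_1 is the opinion leader effect of interest and β_2 is the general peer effect.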

Peer effects may raise a simultaneity problem because physicians are peers to each other. To address this concern, in Model (2) we use the lagged usage by peers as the proxy.31 The only difference between Model (1) and Model (2) is that Model (2) uses peer physicians' MCAP usage in the previous time period rather than in the current time period.
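In Model (2), the peer proxy for group k at period t is therefore the peers' usage at period t − 1. A minimal sketch of building that lagged proxy is shown below, assuming time periods are numbered with consecutive integers; the function name, group labels, and usage values are hypothetical.

```python
def lag_by_one_period(group_usage):
    """Map (group, period) to the group's usage in the previous period.

    group_usage: dict keyed by (group_id, period) with integer periods.
    Group-periods whose previous period is not observed are dropped,
    since no lagged value exists for them.
    """
    return {
        (group, period): group_usage[(group, period - 1)]
        for (group, period) in group_usage
        if (group, period - 1) in group_usage
    }


# Hypothetical total peer usage per group and month index.
peer_usage = {("G1", 1): 40, ("G1", 2): 55, ("G1", 3): 62, ("G2", 2): 10}

lagged_peer_usage = lag_by_one_period(peer_usage)
print(lagged_peer_usage)  # {('G1', 2): 40, ('G1', 3): 55}
```

Each group loses its first observed period when the lag is taken, so Model (2) is estimated on slightly fewer observations than Model (1).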

Model result

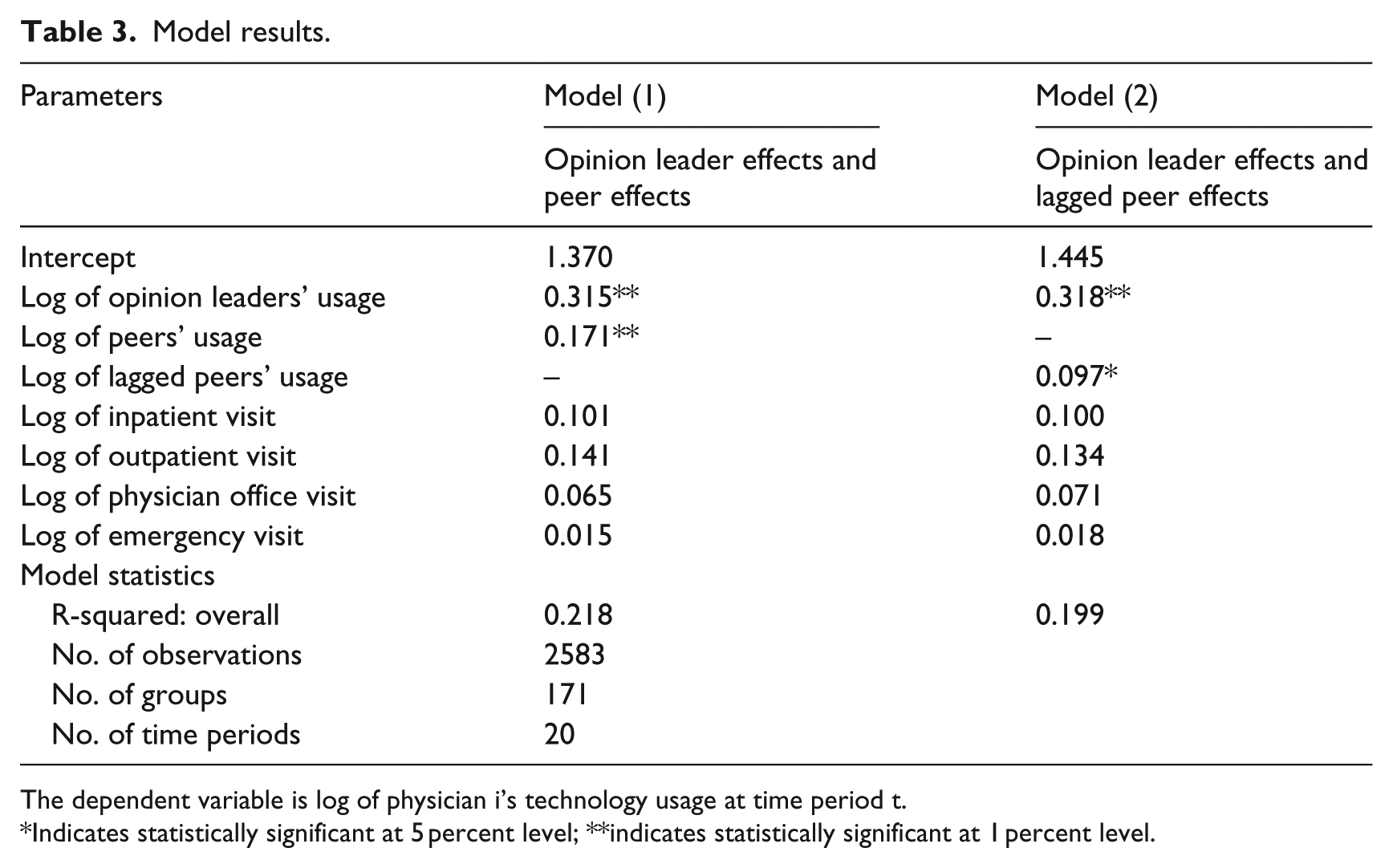

We present the results of the two fixed effect models in Table 3, covering both the lagged and the non-lagged peer effects models. The opinion leader effects are statistically significant and similar in both models, at 0.315 and 0.318, respectively. Because we took logs on both sides of the model when running the fixed effect regression (for easier interpretation of the model estimates), the result suggests that when opinion leaders increase their technology usage by 10 percent, physicians in the same group increase their technology usage by about 3.2 percent.30 This result is consistent with our expectation that opinion leader effects exist during physicians' technology implementation process: the more the opinion leaders use the technology, the more the physicians exposed to opinion leader effects use it.
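The arithmetic behind this interpretation can be checked directly. In a log-log model the coefficient is an elasticity, so multiplying it by the percentage change in the regressor gives the usual first-order approximation (0.315 × 10 ≈ 3.2 percent). The sketch below compares that approximation with the exact implied change, which is slightly smaller (roughly 3 percent). The function name is illustrative.

```python
def pct_change_in_outcome(elasticity, pct_change_in_regressor):
    """Exact percent change in Y implied by a log-log coefficient.

    If log Y = b * log X + ..., scaling X by (1 + p/100) scales Y by
    (1 + p/100) ** b, holding everything else fixed.
    """
    ratio = 1.0 + pct_change_in_regressor / 100.0
    return (ratio ** elasticity - 1.0) * 100.0


for coef in (0.315, 0.318):
    approx = coef * 10  # first-order approximation
    exact = pct_change_in_outcome(coef, 10)
    print(f"coef = {coef}: approx {approx:.2f}%, exact {exact:.2f}%")
```

The gap between the two grows with the size of the change considered, so the approximation is best read as describing modest increases in opinion leader usage.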

Table 3. Model results.

The dependent variable is log of physician i’s technology usage at time period t.

*Indicates statistically significant at 5 percent level; **indicates statistically significant at 1 percent level.

In both models, the general peer effects are statistically significant too. However, when general peers increase their technology usage by 10 percent, physicians in the same group increase their technology usage by only about 0.1 percent, which is quite small. Also, none of the patient visit volumes has a statistically significant effect on physicians' technology usage, which may indicate that this technology is simple, straightforward, and independent of clinical setting.

Conclusion and limitations

In this study, we examine opinion leader effects on the implementation of a new mobile technology by physicians in an American community health system, using a fixed effect regression model. To the authors' knowledge, this is one of the first empirical studies to examine opinion leader effects on mobile technology implementation in healthcare using a quantitative approach. The empirical results show that opinion leaders' usage has a statistically significant effect on their colleague physicians' technology usage, controlling for general peer effects, the number of patient visits, and individual-level fixed effects. Hence, without any special stimulants or financial incentives, as long as opinion leaders continue or increase their use of the new technology, the physicians under their influence will likely use it as well. This suggests a few policy implications. First, when a healthcare organization implements a new IT or a new mobile technology, the administration should train a few opinion leaders in different clinics and encourage them to implement the new technology first. Once those opinion leaders accept the new technology and incorporate it into their work, their influence will spread naturally and gradually to the people around them. Because opinion leaders are a small fraction of the entire organization, this is a more practical and easier approach than launching an organization-wide campaign that works with every physician or every employee to promote a new technology implementation. Second, peer effects are statistically significant too, although they are not as large as opinion leader effects. Peer effects indicate that a physician working with general peer colleagues, even without any opinion leaders, is relatively more likely to adopt a new technology than a physician practicing solo. Third, physicians' clinical setting or number of patient visits does not have a statistically significant impact on their technology usage behavior.

This research has some limitations. First, we do not know whether physicians learned about this new mobile technology from friends, family, or physicians outside their own clinics. They may have, but because of data limitations our model captures only the opinion leader effects and peer effects from within the same clinic. However, since this technology is highly clinical and work specific, we consider it unlikely that physicians would discuss it with family or friends, as doing so might disclose patient information. Second, we do not know whether the number of patient visits is an accurate measure of each physician's workload, because some patient visits take longer than others. This may introduce some bias into our model estimates. Third, the exact social structure of the health system, such as the professional networks within and across groups, is not known; we simplified the social structure of this community health system based on its characteristics. Future research can address these limitations by collecting more data on physicians' social networks and actual workload, to improve research on social influence in technology implementation.

Footnotes

Acknowledgements

The authors are grateful to the administrators of the community health system for providing the data used for this study and the physicians and staff for the clarification meetings and feedback and colleagues, R. Telang and B. Sun, for valuable inputs on the research.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.