Abstract

In an effort to improve patient safety and reduce adverse events, there has been a rapid growth in the utilisation of health information technology (HIT). However, little work has examined the safety of the HIT systems themselves, the methods used in their development or the potential errors they may introduce into existing systems. This article introduces the conventional safety-related systems development standard IEC 61508 to the medical domain. It is proposed that the techniques used in conventional safety-related systems development should be utilised by regulation bodies, healthcare organisations and HIT developers to provide an assurance of safety for HIT systems. In adopting the IEC 61508 methodology for HIT development and integration, inherent problems in the new systems can be identified and corrected during their development. Also, IEC 61508 should be used to develop a healthcare-specific standard to allow stakeholders to provide an assurance of a system’s safety.

Introduction

Health information technology (HIT) has been widely advocated as one of the primary strategies for improving patient safety in healthcare systems. 1 In recent years, significant investment has been made in the introduction and integration of HIT into existing healthcare services worldwide. Electronic medical records (EMR) and computerised physician order entry (CPOE) have become key elements in reducing the number of incidents and adverse events in healthcare organisations.2-4 The widespread advocacy of HIT has been driven by key reports in the area and an increase in media attention and public interest in the quality of healthcare services.1,5

The wide-scale introduction of, and investment in, HIT has resulted in an increased interest in its safe operation within healthcare organisations. This has prompted several studies examining the use of HIT at the ‘sharp-end’ of the system, identifying problems with the human-machine interface, integration issues within departments and the effects of its introduction on productivity.6-8 Much of the work to date has identified the benefits and success stories of HIT implementation, detailing significant reductions in the number of recorded adverse events.9-11 The reported failure of HIT systems appears to be largely anecdotal in nature, apart from a small number of exceptions.12-16 The interest in, and adoption of, HIT and its benefits, however, do not appear to have been accompanied by a similar interest in the methods and techniques used to develop, create and safety-assure these systems. While the functionality of HIT systems continues to grow and become more critical to successful and safe patient care, there has been significantly less consideration given to the appropriate techniques applied in their design and development.

This article introduces concepts from conventional safety-related systems development that, it is recommended, should be considered in the development and integration of HIT systems. Analogies are drawn between healthcare and conventional safety-related systems, and it is argued that the techniques applied in the development of safety systems for the transport, chemical processing and nuclear power generation industries should equally be required in the development of HIT systems. It is recommended that IEC 61508 (Functional Safety of Electrical/Electronic/Programmable Electronic Safety-Related Systems) 17 be used as a basis for the generation of a healthcare-specific HIT development standard.

HIT in a safety-related system

IEC 61508 17 defines a safety-related system as a

‘designated system that both: — implements the required safety functions necessary to achieve or maintain a safe state for the EUC [Equipment Under Control]; and — is intended to achieve, on its own or with other E/E/PE [Electrical/Electronic/Programmable Electronic] safety-related systems, other technology safety-related systems or external risk reduction facilities, the necessary safety integrity for the required safety functions.’

A safety function is intended to achieve or maintain a safe state for the system in the occurrence of a specific hazardous event, while functional safety refers to the part of the overall safety relating to the equipment and the equipment control system, which depends on the correct functioning of the system, other technology and external risk reduction facilities. 18 The topic of safety-related systems has been predominantly researched by government organisations striving to ensure the safe operation of military, power generation and transportation systems.

A safety-critical system is a safety-related system whose safety requirement implies an increased degree of criticality. 19 Healthcare exhibits similar properties to other safety-critical industries, as discussed by several authors in recent years.20-23 These properties include high risk, complexity, uncertainty and the potential for significant losses. In addition, healthcare bears further properties that make it significantly more challenging to manage and control as a system. These include, but are not limited to, tight-coupling, interdependency, ad hoc configurations, wide variability, conflicting guidelines for practices and regulation, incomplete evidence bases and the employment of inexperienced staff.23-26 Several of these additional factors are in direct opposition to conventional safety-related systems, which have been systematically designed to ensure safety through the use of Standard Operating Procedures (SOPs) and competent personnel, combined with a reduction in system variability.

While the similarities between healthcare and safety-related industries are clear, the technology that is being advocated as a primary strategy for improving patient safety (including EMR and CPOE) continues to be developed without any apparent use of the techniques that are compulsory for conventional safety-related systems. HIT systems continue to be developed using many of the same techniques that would be used for commercial IT applications, for example ad hoc configuration, little formal integration and the use of programming languages and compilers not acceptable for safety-critical systems. However, it would seem appropriate that a HIT system should be developed to standards similar to those applied to traditional safety-critical systems, with additional requirements regulating the technology used as a result of the added challenges imposed by healthcare itself. Wears and Leveson 27 highlight the problem that healthcare systems are safety-critical systems, which are dependent on IT systems that have not been designed or developed using safety-related methods.

HIT stemmed from the requirements of hospitals to better manage their business functions: billing, scheduling and the dissemination of information to patients. These functions expanded with time beyond business applications to incorporate functions throughout the healthcare service, including the following:28,29

electronic health records;

clinical alerts and reminders;

computerised decision support systems.

A recent report from the Läkemedelverkets Medical Products Agency 30 (the Swedish national authority responsible for regulation and surveillance of the development, manufacturing and sale of drugs and other medicinal products) strongly suggests that many of these HIT systems be classified as medical devices. This would require such devices to meet specified medical device directives to which manufacturers must adhere. At a very minimum, this should ensure that the manufacturers and hospitals can work with the same intentions.

The continued growth of HIT functionality has fused the delivery of modern healthcare with the proliferation of HIT use. Yet, this growth in the use of HIT has happened with little change to the methods and tools used in the systems development.

HIT developers continue to make system interfaces more ‘user-friendly’ and less complex. This simplification of the user interface merely shifts the system’s complexity out of sight, requiring increasingly complex engineering systems to enable the same system functions at the sharp end. 31 This engineered complexity is achieved through ever more intricate embedded systems and software programming, for which there is little regulation. This is in direct contrast to conventional safety-critical systems. IEC 61508-3 32 clearly specifies requirements for the software elements of safety systems, including suitable software languages, compiler types and code development. IEC 62304 33 suggests the use of IEC 61508-3 32 as a resource for good software methods, techniques and tools, while IEC 80002-1 34 is suggested for use in completing the ‘verification’ stage of software development.

Many HIT systems have developed over time with additional features and functions added to each new version of the existing system, resulting in a type of ‘system evolutionary creep’. This has led to later iterations of a system appearing fundamentally different to their initial design, often with significantly increased functionality and complexity. 35 An example of this is LANTIS™ (Siemens Medical Solutions, Concord, CA, USA), a radiation oncology-based information system, which was initially a record and verify system for radiation oncology treatments. LANTIS™ can now:

allow the tracking of patients, charts and films;

provide an interface between other Health Level (HL) 7 systems;

be used as a treatment planning system interface;

be extended to include other departments such as medical oncology or medical laboratories.

These new functions have made LANTIS™ a more powerful tool, but have also greatly increased its complexity in operation and design.

New system functionality is frequently introduced to a system to provide an increased level of safety in terms of the delivery of care and integrity (the ability of a system to detect faults in its own operation and inform a human operator). Leveson warns that increasing functionality is easy but determining whether you should do so is more difficult. 36 Adding new functionality to systems can result in a shift in the role of the system from a non-critical one (e.g. image storing) to one of high criticality (e.g. system interfacing and planning). This increased functionality and shift in the system’s role to one that is safety-related should be accompanied by more stringent development methods to provide an assurance of its safe operation, as is required in conventional safety-related systems. Spear highlights the fact that HIT developers are operating in a highly competitive market, where their own product enhancements may be incompatible with their earlier models and will most likely not conform with the products of other companies. 37

Safety-related systems theory has long considered the impact of computer systems on successful system operation prior to ‘going live’, for example the Darlington nuclear reactor. 19 However, anecdotal evidence suggests that the safety of the adoption and use of HIT is routinely considered only once the system has been bought, and frequently only as a knee-jerk reaction when an incident occurs. IEC 61508 provides a structured methodology for safety-related systems development and integration which would be of significant benefit in the development and integration of HIT.

Existing standards for medical device and HIT development

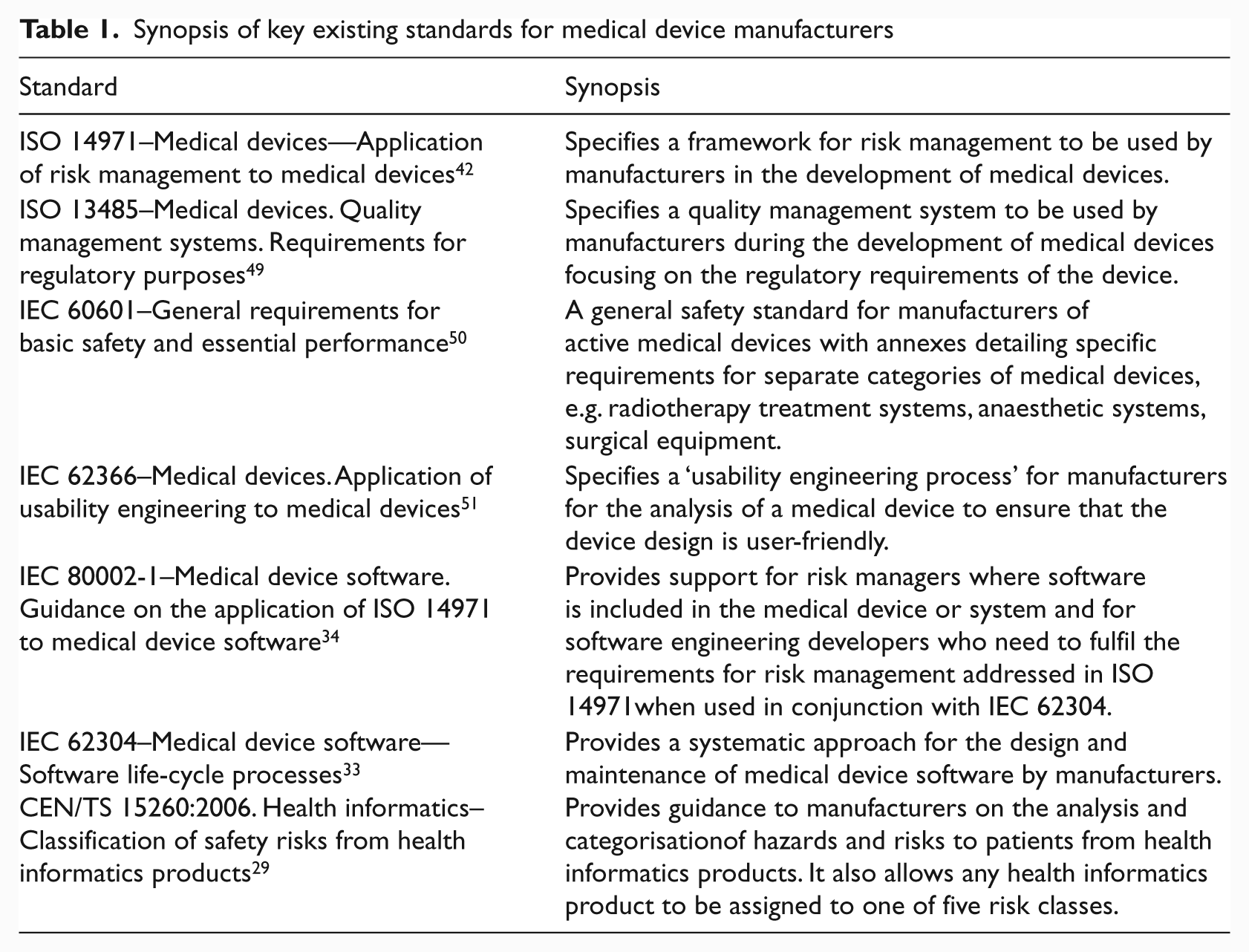

It is frequently the case that hospitals will combine multiple medical devices and network capabilities to create an integrated HIT system. A number of standards exist for medical device development to which manufacturers must conform, including those outlined in Table 1 below.

Table 1. Synopsis of key existing standards for medical device manufacturers

However, these standards ‘are addressed to the manufacturer of medical devices’ and not the healthcare organisations who integrate the devices creating the healthcare system. 38 These standards have been focused on the development of individual medical devices and not on the safety and analysis of integrated HIT systems. Significantly, as discussed by IEC 60601-1, 39 it is often the case that individual pieces of medical equipment ‘will not have been designed to work with each other’.

Wears et al. 40 argue that the United States Food and Drug Administration’s (FDA) evaluation of medical devices is intentionally limited, stating that its definition of a medical device is ‘purposely narrow’ and would exclude devices such as CPOE and integrated healthcare systems. In contrast, the Läkemedelverkets Agency 30 requires the healthcare organisation to ensure that the network configuration and changes to it are given ‘the same level of attention and monitoring regarding any residual risks as those expressed by each system manufacturer’. Furthermore, the Läkemedelverkets Agency 30 clearly states that the installation and ‘verification of installation’ of a system must be completed using a standardised methodology ‘known to both manufacturer and user’.

Unfortunately, as stated by Wears et al., 40 the ‘validation of individual device design is an insufficient basis from which to conclude that use in context will attain the design performance levels’. Currently, there are no prescribed methods to provide an assurance of safety for an integrated healthcare system, i.e. multiple medical devices connected using a hospital network with specific functionalities and procedures. To facilitate this, the healthcare provider would be required to evaluate and report to the manufacturer what the intended operating environment would be, including what connectivity there would be with other devices or systems, for example operating systems or databases. This would require detailed knowledge of the intended operating environment and systems. Equally, the healthcare organisation will require detailed information about the individual medical devices, for example, compatibility with other devices and known interfacing problems, so that it can create the required HIT system functionality.

The consequence of this is that, as healthcare providers combine different devices and network capabilities to create a healthcare system, they will be required to provide an assurance of safety of the developed integrated system. This would place the duty of care, regarding the safety assurance of a healthcare system, on each individual healthcare organisation, for which only limited guidance is provided by IEC 80001. 41

From a healthcare organisation’s perspective, the only relevant HIT standard is IEC 80001. 41 Its aim is to help healthcare organisations when connecting medical electrical equipment with software elements in the hospital’s network and has a particular emphasis on the administration of the hospital’s computer network. It details two distinct system configurations: (i) physically isolated medical devices and (ii) shared medical devices. Hospitals are solely responsible for ensuring the safety of shared system configurations.

IEC 80001-1 recommends an ISO 14971 42 approach to ensuring safety, where hazards are proactively identified and risks are determined, with risk control measures applied to ensure system safety. This is dependent on the implementing organisation comprehensively identifying all potential hazards, which can be particularly difficult when new system configurations are created and system inter-dependencies may not be fully understood. However, healthcare is a complex socio-technical system, which exhibits emergent properties that are the result of unexpected non-linear interactions occurring between the system components and the environment. These properties or hazards cannot be identified from a functional decomposition. 38 Consequently, the ISO 14971 approach to risk management may not be suitable for ensuring the safety of complex healthcare HIT systems.

HIT development using IEC 61508

IEC 61508 provides a generic approach for the safety lifecycle activities required for the development of electrical and/or electronic and/or programmable electronic components [electrical/electronic/programmable electronic systems (E/E/PESs)] that are used to perform safety functions. 17 The standard was developed as a generic guideline for safety-related industries to apply in the development of new systems and the upgrade of existing systems. It was also intended to provide industries with a starting point for the development of appropriate industry specific standards, for example IEC 61513 Nuclear power plants—Instrumentation and control for systems important to safety—General requirements for systems 43 and IEC 61511 Functional safety—Safety instrumented systems for the process industry sector. 44

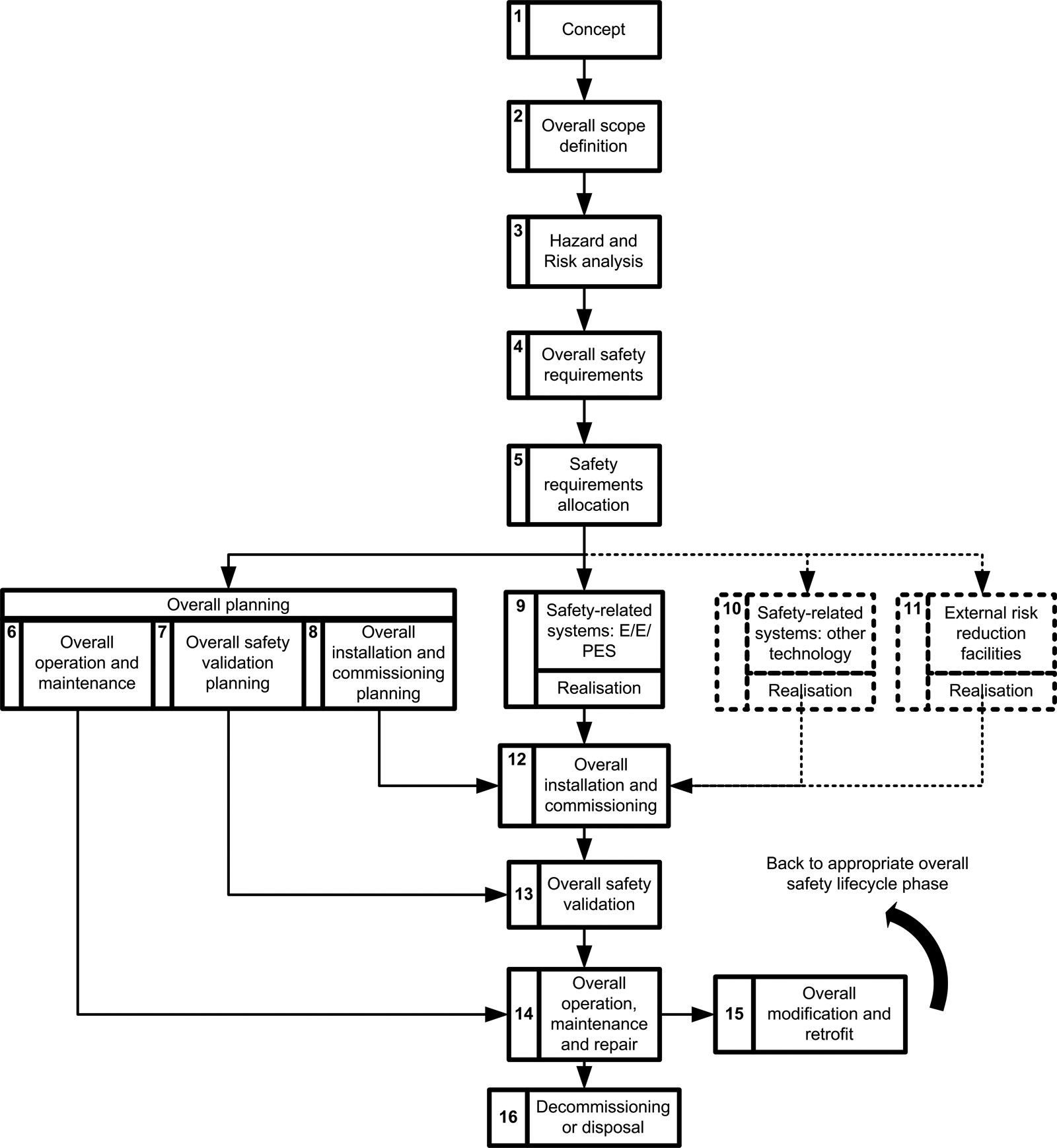

IEC 61508 17 specifies the development lifecycle model for safety-related systems, as shown in Figure 1 below. The development of a system is broken down into lifecycle stages, with specific detail given to the development of the E/E/PESs for that system. IEC 61508 requires the developer to determine the objective, scope, inputs and outputs for each lifecycle stage in Figure 1. In subsequent sections of the standard, the considerations for each lifecycle stage are described in detail.

Figure 1. Overall safety lifecycle 17

IEC 61508 specifies methods for use in the development of a safety-related system in relation to all aspects of the system and its development:

specification;

validation planning;

design and development;

integration, installation, commissioning, operation and maintenance procedures;

validation;

modification;

verification;

functional safety assessment.

The above stages are all vital elements in the successful development, integration and use of a functional safety-related system. While their importance is clear, it is beyond the scope of this work to detail each individually. The guidelines provided in IEC 61508 for each of these elements ensure that they are properly considered and taken account of in the development process. Requiring such an approach for HIT systems would provide added assurance of successful operation and integration within healthcare organisations. Adopting this approach would also potentially serve to pre-empt the problems encountered when new systems are introduced into an existing process, for example, changes to workflows, new work practices and changes in communication methods/lines. 45

It is suggested that IEC 61508 could be used as a methodological foundation on which to develop a healthcare-specific standard, which would be used by healthcare organisations to provide an assurance of the safety of an assembled healthcare system. Jordan 46 discussed the professional role of the healthcare ‘system integrator’, whose responsibilities were subsequently described in IEC 60601 39 to include:

planning the integration of any medical electrical equipment or system and non-medical equipment in accordance with the instructions provided by the various manufacturers;

performing risk management on the integrated system;

passing on any manufacturer’s instructions to the responsible organisation where these are required for the safe operation of the integrated system. These instructions should include warnings about the hazards of any change of configuration.

However, IEC 60601 39 does not provide a methodological framework to guide such a system integrator towards the safe completion of their responsibilities. This healthcare stakeholder role is similar to the ‘Medical IT-Network Risk Manager’ role identified in IEC 80001-1. These stakeholders could use, and be responsible for implementing, the healthcare version of IEC 61508, leading to an assurance of safety for the developed healthcare HIT system.

A basic example

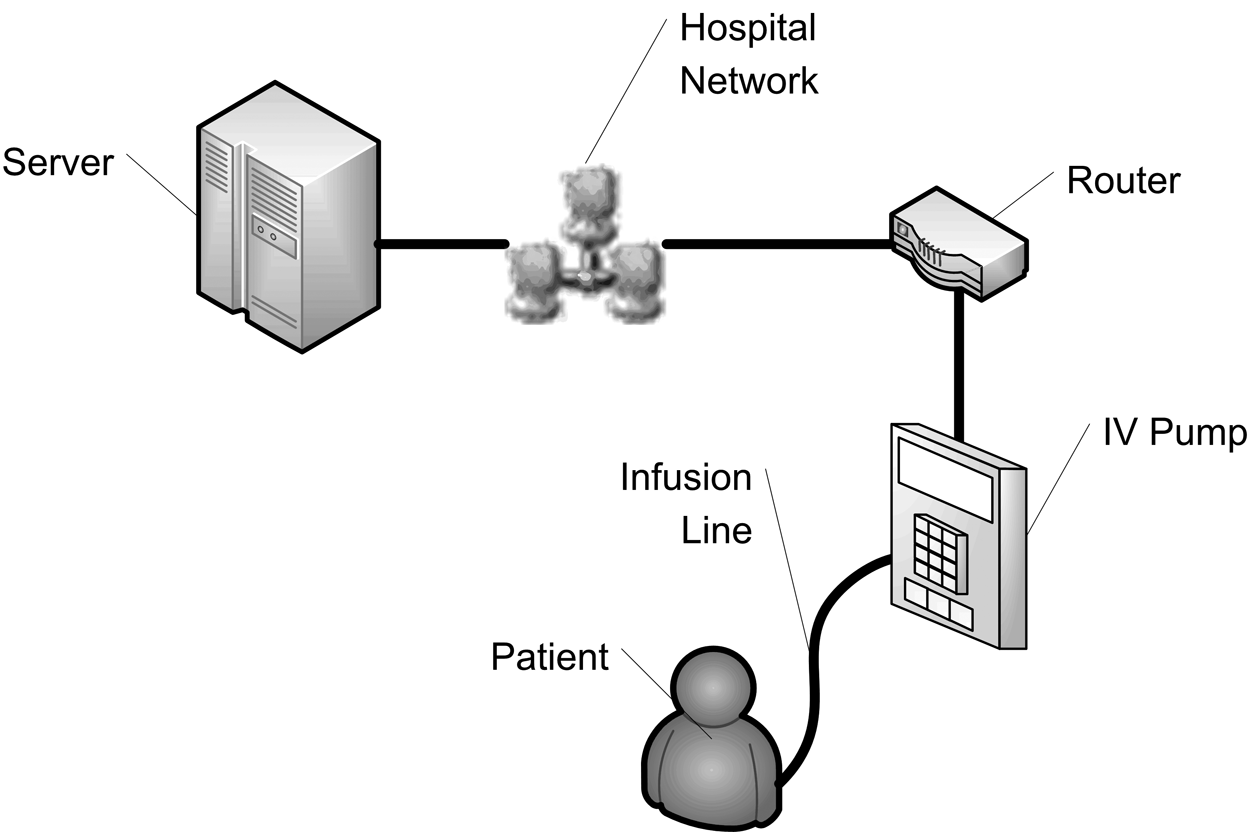

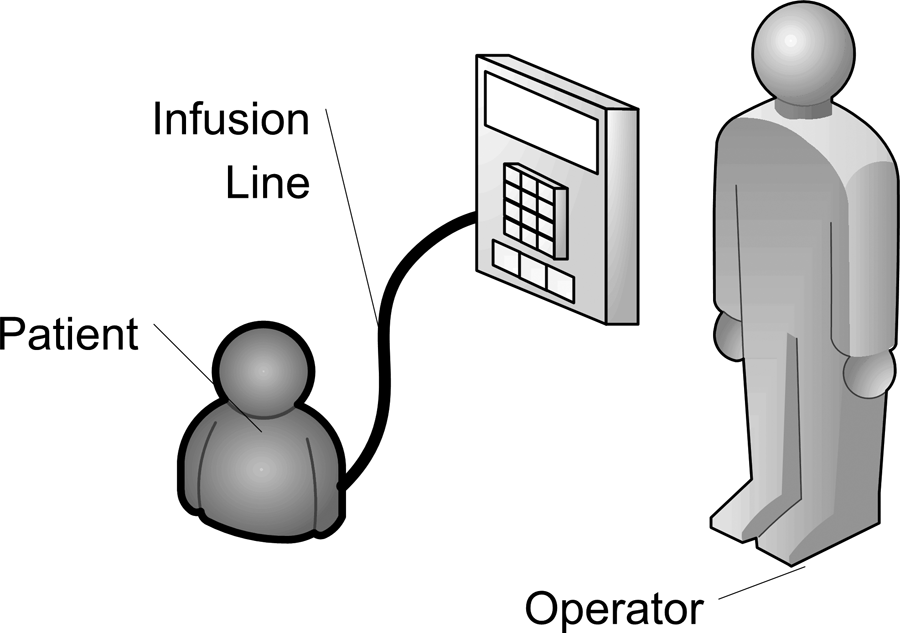

The following short example highlights the potential importance of adopting an IEC 61508-type development and assessment methodology for HIT systems. Figure 2 below shows a simplified representation of a network-controlled intravenous (IV) infusion pump.

Figure 2. Simplified network-controlled IV pump configuration

The pump is controlled by the HIT system and uses the patient’s scheduled medication administration data recorded in the EMR to control its settings. The use of a network-controlled IV pump reduces the potential for human error occurrence during the set-up of the pump. However, the safe administration of the medication becomes tightly coupled with the safety, and safety integrity, of the HIT system.

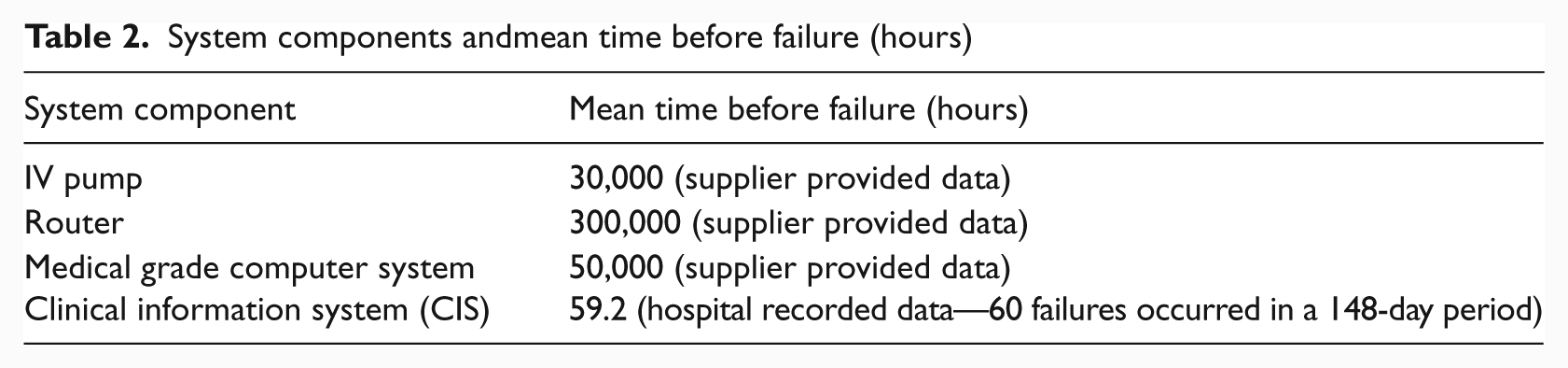

The system represents a very simplified configuration for the occurrence of an IV pump heparin medication error, and is based on several assumptions, for example, that the correct prescription was entered into the EMR and the correct drug is ready to be infused in the IV pump. The diagram uses data extracted from the Medical Physics and Bioengineering Department and IT Department maintenance logs of a large Irish public hospital, together with data provided by the equipment manufacturers, as described in Table 2.

Table 2. System components and mean time before failure (hours)

The resulting system would have a Mean Time Before Failure (MTBF) of approximately 58.9 hours. This value would be considered unacceptably low in terms of overall system safety and is highly influenced by the poor MTBF of the clinical information system (CIS). The failures involved may range from minor inconveniences for users to failures that could result in missed or excessive administration.
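The arithmetic behind a system-level figure of this kind can be sketched in a few lines of Python. The sketch assumes that every component in the chain must work for an infusion to be delivered (a series configuration), that components fail independently and that each has a constant failure rate, so the system failure rate is the sum of the component failure rates and the system MTBF is its reciprocal. The component names and MTBF values are illustrative placeholders and are not the Table 2 data; the function name `series_mtbf` is hypothetical.

```python
from typing import Mapping


def series_mtbf(mtbf_hours: Mapping[str, float]) -> float:
    """Return the MTBF (hours) of components arranged in series.

    Assumes independent components with constant failure rates, so the
    system failure rate is the sum of the component failure rates.
    """
    if not mtbf_hours:
        raise ValueError("at least one component is required")
    if any(hours <= 0 for hours in mtbf_hours.values()):
        raise ValueError("MTBF values must be positive")
    system_failure_rate = sum(1.0 / hours for hours in mtbf_hours.values())
    return 1.0 / system_failure_rate


# Placeholder values for illustration only (NOT the Table 2 data).
example_components = {
    "clinical_information_system": 100.0,
    "hospital_network": 2_000.0,
    "iv_pump": 10_000.0,
}

if __name__ == "__main__":
    print(f"System MTBF: {series_mtbf(example_components):.1f} hours")
```

Under these assumptions the MTBF of a series system with more than one component is always lower than that of its least reliable component, which is why a single weak element can dominate the overall result.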

In contrast, a standard operator-programmed IV pump would have a configuration as shown in Figure 3.

Figure 3. Operator-controlled IV pump configuration

Harder et al. 47 determined that heparin errors occur at a rate of 2.01 errors per 1000 doses. Santell 48 reported heparin errors resulting from incorrect computer entry, i.e. IV pump programming error, to occur at a rate of 14.6%, based on 17,572 records submitted to a national reporting system during the period January 2003 to December 2007. Combining these data with the IV pump failure rate used above, it is possible to determine the MTBF for an operator-controlled heparin IV pump administration to be approximately 2351 hours. There is therefore a significant difference between the two configurations, and the perception that HIT-controlled IV pump administration is safer than human operator-controlled administration is unfounded.

This very simple example demonstrates the potential consequences of poor systems integration. It also illustrates the difficulty of quantifying the performance of even simple system configurations in healthcare, a difficulty compounded by the dynamic clinical environment. Part of this difficulty is a result of the problem of quantifying HIT software errors which, in the example shown, skewed the reliability data.

A systems integrator should be able to identify the implications for system and patient safety of the network configuration outlined above using proactive hazard and risk analysis (step 3 in the IEC 61508 overall safety lifecycle shown in Figure 1). They should also be able to highlight the need to improve the safety of the CIS before such a configuration could be safely implemented.

It is important to consider IEC 61508 in conjunction with the development of health care IT networks whose risk management will be standardised with the development of IEC 80001. 41 IEC 80001 will provide a procedure for analysing the risk of a healthcare network and its devices. The IEC 61508 methodology provides a mechanism to combine the risk of the individual devices to determine an overall risk and safety for the network. Having established what the expected and desired safety standard for the network should be, it would be possible to determine whether this level had been achieved. Furthermore, it would identify what the discrepancy between the desired and actual safety standard is, allowing developers to focus their attention on the network’s weaknesses, i.e. those devices which have the greatest impact on the network’s safety. Any modifications to the network’s design, configuration and devices would require the re-evaluation of the system’s safety and dictate whether it continues to meet the specified standard and is suitable for use.
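One way to picture this gap analysis is the hedged sketch below. It assumes a simple series reliability model (independent devices, constant failure rates), compares the resulting network MTBF with a target value and ranks the devices by their share of the overall failure rate. The device names, MTBF figures and target are hypothetical placeholders, and the function names are invented for illustration; this is a sketch of the idea, not a procedure taken from IEC 61508 or IEC 80001.

```python
from typing import Dict, List, Tuple


def network_mtbf(mtbf_hours: Dict[str, float]) -> float:
    """MTBF (hours) of a series network of independent devices."""
    return 1.0 / sum(1.0 / hours for hours in mtbf_hours.values())


def failure_rate_shares(mtbf_hours: Dict[str, float]) -> List[Tuple[str, float]]:
    """Rank devices by their share of the network failure rate, largest first."""
    total_rate = sum(1.0 / hours for hours in mtbf_hours.values())
    shares = [
        (name, (1.0 / hours) / total_rate) for name, hours in mtbf_hours.items()
    ]
    return sorted(shares, key=lambda item: item[1], reverse=True)


def shortfall_hours(mtbf_hours: Dict[str, float], target_mtbf_hours: float) -> float:
    """Hours by which the network MTBF misses the target (0.0 if it is met)."""
    return max(0.0, target_mtbf_hours - network_mtbf(mtbf_hours))


# Placeholder values for illustration only; not measured data.
devices = {
    "clinical_information_system": 100.0,
    "hospital_network": 2_000.0,
    "iv_pump": 10_000.0,
}
TARGET_MTBF_HOURS = 1_000.0  # hypothetical target

if __name__ == "__main__":
    print(f"Shortfall: {shortfall_hours(devices, TARGET_MTBF_HOURS):.1f} hours")
    for name, share in failure_rate_shares(devices):
        print(f"{name}: {share:.1%} of network failure rate")
```

Re-running such a calculation after any change to the network's devices or configuration mirrors the re-evaluation step described above: the ranking shows where improvement effort would have the greatest effect.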

Regulatory implications of implementing IEC 61508

IEC 61508 represents a significant change from the current approach to systems integration in healthcare. For each hospital setting, a safety case would be required that would outline the system configuration and how the safety of the system would be assured. Sujan et al. 38 discuss the difficulty of utilising safety cases in healthcare and the challenges facing their application:

the complex and emergent properties of healthcare systems;

no one stakeholder has the responsibility for maintaining the safety of the entire system and, subsequently, the overall safety argument;

existing safety argumentation techniques have difficulty in coping with the unforeseen emergent properties of complex systems, i.e. health care.

It would not be possible to develop generic safety cases, as each setting would have different practices, technology configurations and processes. This would involve significant changes in the current regulatory practices used in healthcare, as changes to the approved system could require re-assessment of the system’s safety. For example, if an IV pump were to fail prior to (or during) use, the organisation would be required to replace it with a pump of equal or greater assurance of safety to continue meeting the regulatory requirements. If a similar, or better, pump was not available, the hospital would need to re-assess the clinical setting with the new, less safe IV pump, to ensure the required safety was maintained.

For a practical safety case to be developed, healthcare organisations would need to limit the prevalence of dynamic system configurations. They would also have to proactively consider the implications of modifying existing configurations for system and patient safety. Similar consideration would have to be given to changes in clinical stakeholders, for example, the use of agency nurses or locum clinicians. These stakeholders may require additional training or the imposition of limited privileges until they can show the required competency for their intended operating environment, for example proficiency in programming a particular type of IV pump.

The implications of this safety approach on each organisation would be significant; new hospital stakeholders, in the form of system integrators, would need to be employed within a new group responsible for the management of the organisation’s safety case, as well as the availability and credentialing of replacement equipment or stakeholders. Medical device manufacturers would be required to clearly state the safety performance of their products, the other systems they can safely integrate with without degrading their safety performance, and the systems and configurations for which the devices have not been tested.

At a regional or national level, the cost and complexity of a government-monitored, accreditation-based regulation process would be prohibitive to its adoption. A self-regulating system in which the legal obligation is placed on healthcare organisations to implement the approach would be more efficient, i.e. the organisation itself would be held liable should an adverse event occur that is found to be associated with its HIT system. However, this approach would only be effective if the penalty cost of non-compliance was greater than the cost of implementation.

Conventional safety-related industries have achieved ‘ultrasafe’ performance over several decades by implementing a proactive and systematic approach to system configuration and integration, as standardised in IEC 61508. Healthcare can make significant safety improvements in a comparatively shorter period of time by utilising the existing frameworks, methodologies and tools from these industries.

Conclusion

HIT use in healthcare is rapidly increasing, but this is resulting in non-standardised development and integration practices, which can compromise safety, as illustrated in the example discussed. The adoption of an IEC 61508 approach to HIT development should lead developers to apply standard techniques in the development and integration of HIT and ensure that the system is acceptable for use in the safety-critical healthcare environment. IEC 61508 should be used as a starting point for the development of a healthcare-specific standard for HIT system development and integration similar to those developed in other safety-related industries.

Implementing an IEC 61508 approach in healthcare would require significant changes to current regulatory and operational practices. A safety case would be required for each hospital setting as they would have different practices, technology configurations and processes. New departments of staff to manage the organisation’s safety case would be required to ensure changes to the system maintained the required safety standard. Regulation of the proposed approach at a national level would also be problematic; active regulation would be impractical given the rapidly changing and emerging properties of healthcare organisations and would require the healthcare organisations themselves to adopt the practices. However, it is not possible to achieve ultrasafe care without the assurance of safety of the systems that are underpinning and supporting the delivery of care in healthcare organisations.

Acknowledgements

The authors would like to thank Mr William Chadwick for his useful comments and suggestions on earlier drafts of this paper.

This research received no specific grant from any funding agency in the public, commercial, or not-for-profit sectors.