Abstract

Algorithmic management is used to govern digital work platforms such as Upwork or Fiverr. However, algorithmic decision-making is often non-transparent and rapidly evolving, forcing workers to constantly adapt their behavior. Extant research focuses on how workers experience algorithmic management, while often disregarding the agency that workers exert in dealing with algorithmic management. Following a sociomateriality perspective, we investigate the practices that workers develop to comply with (assumed) mechanisms of algorithmic management on digital work platforms. Based on a systematic content analysis of 12,294 scraped comments from an online community of digital freelancers, we show how workers adopt direct and indirect “anticipatory compliance practices”, such as undervaluing their own work, staying under the radar, curtailing their outreach to clients and keeping emotions in check, in order to ensure their continued participation on the platform, which takes on the role of a shadow employer. Our study contributes to research on algorithmic management by (1) showing how workers adopt practices aimed at “pacifying” the platform algorithm; (2) outlining how workers engage in extra work; (3) showing how workers co-construct the power of algorithms through their anticipatory compliance practices.

Introduction

Digital labor platforms such as “Upwork,” “Fiverr,” or “Twine” enable organizations and individuals to outsource specific tasks – such as graphic design, programming or data visualization – to an anonymous global workforce (Gandini et al., 2016; Kuhn, 2016; Taylor et al., 2017; Wood et al., 2019). On such platforms, algorithms play a key role in approving or rejecting workers from the platform, matching workers/talents with potential clients/tasks as well as rendering skill and performance levels of workers transparent over time (Kellogg et al., 2020). In governing access, visibility and reputation on the platform, algorithms shape behavior and relationships between workers and clients (Curchod et al., 2019; Orlikowski and Scott, 2015). As such, they facilitate a form of control that is distinct from the technical and bureaucratic control used by employers for the past century (Kellogg et al., 2020: 366).

The algorithmic management of workers on such platforms is characterized by an inherent opaqueness (Burrell, 2016), driven by a lack of disclosure about data sources (Orlikowski and Scott, 2014), evaluation mechanisms that operate “under the surface” (Introna, 2016: 18), and the difficulty for workers to properly interpret algorithmic outcomes (Burrell, 2016; Martin, 2019). Since work processes and outcomes change drastically under algorithmic management, a plethora of new and exciting research directions investigate workers in the context of algorithmic management (Kellogg et al., 2020). This growing research stream has illuminated the changing nature of work and encompasses, for instance, research on power asymmetries (Curchod et al., 2019; Gandini, 2019), new forms of labor in the gig economy (Barley et al., 2017; Gray and Suri, 2019), or human resource practices under algorithmic management (Leicht-Deobald et al., 2019; Meijerink and Keegan, 2019).

However, while workers are a critical part in the study of algorithmic management, as Kellogg et al. (2020) outline, the core focus so far has been on understanding algorithmic management as something experienced by workers, while often overlooking the agency that workers have in accommodating and reacting to this type of management and control. In opposing algorithmic management and the “iron cage” built by algorithms (Faraj et al., 2018), recent evidence highlights that gig workers can build workplace solidarity through collective action (Tassinari and Maccarrone, 2020), can create “invisibility practices” (Anteby and Chan, 2018), or might even engage in “algoactivism” (Kellogg et al., 2020). Yet, while these findings allude to gig workers’ agency in the face of algorithmic management, we lack a deeper understanding of the practices they develop and how these practices are entangled with the materiality of algorithmic management (Curchod et al., 2019; Leonardi, 2012; Orlikowski and Scott, 2014). Drawing on the perspective of sociomateriality (Orlikowski and Scott, 2008, 2014), we therefore investigate how and through which practices gig workers deal with algorithmic management and its opacity. Adopting this perspective, we see worker practices and conversations around algorithms as inherently intertwined with the algorithm’s materiality (Orlikowski and Scott, 2014).

We build on a systematic content analysis of 12,294 scraped comments from an online community of digital freelancers. Our findings show how workers aim to pacify the algorithm – that is, avoid algorithmic scrutiny and punishment – through four distinct anticipatory compliance practices. We find that workers aim these practices either directly (e.g. by avoiding words which may “trigger” algorithmic scrutiny) or indirectly (e.g. by undervaluing their own work in exchange for a good rating) at the algorithm. We further show that these practices prompt gig workers to perform extra work, encompassing additional cognitive, social, and emotional work that is intertwined with regular tasks. Paradoxically, as workers employ anticipatory compliance to reclaim control over their own work process, they reaffirm the algorithm’s power by internalizing its assumed decision-making mechanisms.

Our study makes three distinct contributions to the literature on digital work and algorithmic management. First, drawing on sociomateriality and workers’ social agency, we provide new insights into the ways that workers react to, accommodate, and work against algorithmic management (Anteby and Chan, 2018; Kellogg et al., 2020; Leonardi, 2012; Tassinari and Maccarrone, 2020). Second, we uncover implications of algorithmic management by drawing out how workers engage in extra work directed at pleasing the digital platform, which acts as a “shadow employer” (Gandini, 2019; Kuhn, 2016; Orlikowski and Scott, 2014). Last, our article contributes to the discussion of power and power asymmetries in the gig economy (Curchod et al., 2019) by highlighting how sociomaterial practices among workers, based upon their shared understanding of the materiality of algorithms, produce “subjectification” (Fleming and Spicer, 2014), which weakens the power of workers.

Algorithmic management in the digital economy

Algorithmic management empowers and constrains workers

Algorithmic management of workers has drawn considerable attention in recent years (Burrell, 2016; Danaher et al., 2017; D’Cruz and Noronha, 2006; Dourish, 2016; Introna, 2016; Just and Latzer, 2017; Kellogg et al., 2020; Wood et al., 2019; Zarsky, 2016; Ziewitz, 2016; Zuboff, 2015, 2019). Algorithmic management or algorithmic governance refers to the use of computerized technologies to (partially) automate processes of decision-making and control, enabled through the unprecedented speed, scale and ubiquity of surveillance technologies, data processing as well as machine learning (based primarily on: Danaher et al., 2017; Helles and Flyverbom, 2019; Just and Latzer, 2017; Kellogg et al., 2020). In algorithmic management, decision-making and control may be exerted entirely through computerized systems (humans out of the loop), may be subjected to human oversight (humans on the loop), or may be used as a means to support human decision-making and control (humans in the loop) (Danaher, 2016).

The literature surrounding algorithmic management encompasses both discussions of flexibility and autonomy as well as more critical debates on control and surveillance. Both Wood et al. (2019) and D’Cruz and Noronha (2006) find that on the one hand, working in a digital environment governed by algorithms grants high degrees of flexibility, autonomy, task variety and complexity. On the other hand, algorithmic management may create power inequalities and pressure on the worker-side as it enables clients to “potentially contract with millions of workers based anywhere in the world” (Wood et al., 2019: 10). This may lead to social isolation, irregular hours and overload of work (Just and Latzer, 2017; Shapiro, 2018).

As Kellogg et al. (2020) highlight, algorithms provide specific affordances for managerial control by relying on comprehensive information based on a variety of sources, giving instantaneous assessments of performance based on algorithmic computation, and providing interactive platforms on which multiple parties can partake in interactions. For workers, however, the most important aspect is that algorithmic management and its decision mechanisms are opaque and continuously evolving and, thus, on the user side ultimately inscrutable (Burrell, 2016; Danaher et al., 2017; Hansen and Flyverbom, 2015). As more and more key decisions are either facilitated or made entirely by algorithms, concern is growing about potentially unfair, arbitrary or discriminatory outcomes (Kim, 2018; O’Neil, 2016). This is particularly salient in the areas of recruiting, human resource management and employment (Leicht-Deobald et al., 2019). Here, retailer Amazon was recently criticized for deploying an algorithmic recruiting tool that turned out to be biased against women because it was trained on a dataset where the top candidates were always men (Gershgorn, 2018). Similarly, Martin (2019) highlights that algorithms may reproduce bias and discrimination depending on the type of data utilized and thus calls their accountability into question.

Taking a sociomaterial perspective on workers under algorithmic management

In understanding how workers deal with opaque algorithmic management, research has increasingly relied on sociomateriality (Barley, 2015; Curchod et al., 2019; Kellogg et al., 2020; Larson and DeChurch, 2020; Lehdonvirta, 2018; Orlikowski and Scott, 2008, 2014, 2015). The sociomaterial perspective is a response to the pure social constructivist approach to technology studies (Barley, 1986, 1990), which has been criticized for focusing too much on social context and practices of actors, and not enough on the technology and its use itself (Leonardi and Barley, 2010).

Here, sociomateriality aims to provide a balanced position where both the materiality of technology as well as social agency matter. Materiality refers to ways in which “physical and/or digital materials are arranged into particular forms that endure across differences in place and time” (Leonardi, 2012: 29), while social agency refers to the coordinated human intentionality formed in partial response to perceptions of a technology’s materiality (Leonardi, 2012: 42). This means that not only do individuals act with technology, which changes the form of technology (Barley, 1990), technology also acts upon individuals, changing social aspects such as perceptions and actions (Leonardi, 2012). Sociomateriality, therefore, highlights the “inherent inseparability between the technical and the social” (Orlikowski and Scott, 2008: 434).

This interplay of materiality and social agency unfolds in sociomaterial practices (in the following: practices) that gig workers adopt (Leonardi, 2012; Orlikowski, 2007). In the context of this article, we understand practices in accordance with Schatzki (2001) and Leonardi (2012) as arrays of human activity that are centrally organized around a shared practical understanding of algorithmic decision-making on a digital platform. For example, Orlikowski and Scott (2014) show that hoteliers reenact Tripadvisor’s material ranking algorithm and its valuation in their everyday practices. These practices change the relations between hoteliers and guests as the public visibility of hotel rankings turns guests into critics, who now have increased bargaining power (e.g. through the threat of a negative review) within the hotelier-guest relationship.

The material and social aspects of algorithmic management unfold in practices in several ways. Key metrics employed by platforms are ratings, rankings or success scores – mostly in the form of compound numerical representations of workers’ performance on the platform (Whelan, 2019). These performance metrics transform peer feedback (sometimes combined with other data points) into an instrument to monitor and ultimately control workers’ performance and productivity (Gandini, 2019). Previous work has found that peer feedback has a significant impact on worker behavior as it increases overall quality of service delivery (Lutz et al., 2018; Rosenblat and Stark, 2016). The “normative control” (Gandini, 2019) exercised through peer-based performance metrics works in two ways: On the one hand, workers may self-discipline as they playfully strive for high scores in a “gamified” environment (Lehdonvirta, 2018). On the other hand, workers may adjust their behavior in order to comply with the expectations of clients who are in the position to enable/hinder their future access to and success on the platform through their positive/negative feedback. Here, algorithmic management turns clients into “middle managers”, who enact performance evaluations of workers through reviews and rankings (Gandini, 2019; Meijerink and Keegan, 2019; Rosenblat and Stark, 2016). The platform in turn remains largely invisible, working in an implicit coalition with the clients (Curchod et al., 2019). Thereby, the platform takes on the role of a shadow employer – an invisible managerial figure or decision-making mechanism which determines workers’ access, visibility and reputation on the platform (Friedman, 2014; Gandini, 2019).

While the literature outlines the psychological effects of algorithmic management and its power implications for workers (Ahsan, 2020; Martin, 2019; Petriglieri et al., 2019), most of the current research has focused on the experience of workers under algorithmic management (Kellogg et al., 2020). While such research has given exciting insights into the nature of work under algorithms (Gandini, 2019; Rosenblat and Stark, 2016), it provides a passive view of workers and undervalues their ability to adapt to new forms of management. Thus, with the exception of a few recent studies (Anteby and Chan, 2018; Tassinari and Maccarrone, 2020), our understanding of gig workers’ agency and the practices they develop under algorithmic management remains limited. Such understanding is especially crucial to better outline and theorize relations between workers, clients, and the algorithm (Curchod et al., 2019; Gandini, 2019) and the power imbalances that might result from such relations (Kellogg et al., 2020; Leonardi and Barley, 2010). Therefore, building on Upwork as a research context, we investigate the practices that workers adopt under algorithmic management to ensure long-term access, visibility, and reputation on digital platforms.

Methodology

Research context: Upwork as a knowledge-based freelancing platform

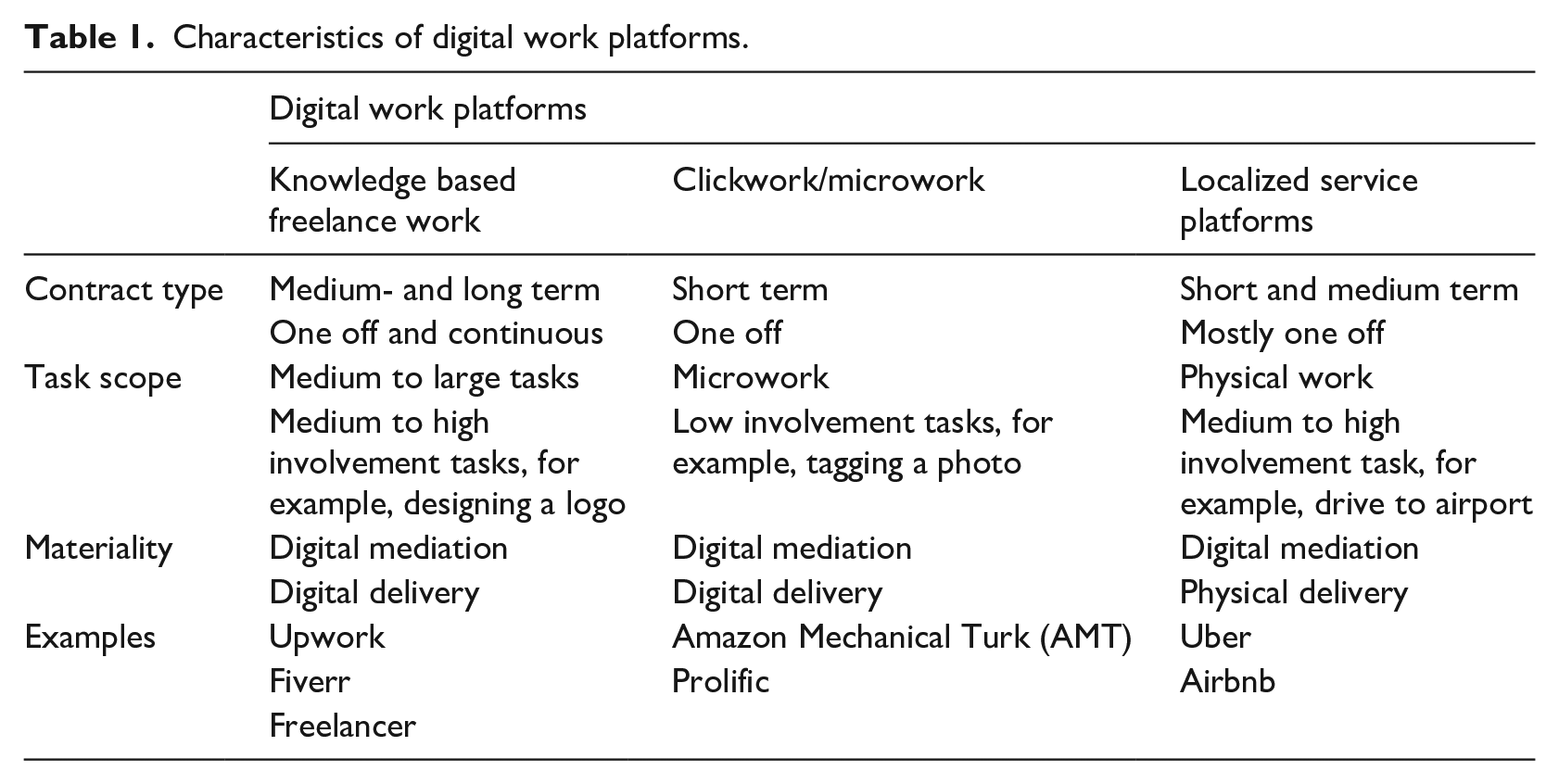

Online work platforms, also termed “remote staffing marketplaces” (Kuhn, 2016) or “freelance contracting platforms” (Fieseler et al., 2019), act as intermediaries, connecting freelance workers with clients, often on a global scale. Synthesizing recent contributions on online work platforms (Fieseler et al., 2019; Scholz, 2012; Wood et al., 2019), we outline three types of online work platforms, depending on contract type, task scope and materiality: (1) knowledge-based freelancing platforms such as Upwork, Freelancer or Fiverr, which facilitate medium to large-scale tasks, often creative jobs, which require high involvement on the worker side (e.g. designing a logo, recording a voice-over); (2) “clickwork” or “microwork” platforms such as Amazon Mechanical Turk or Prolific, which mediate small granular (micro-)tasks that require low involvement on the worker side (e.g. tagging a photo, answering a survey); and (3) localized service platforms such as Airbnb or Uber, which facilitate physical services among local actors (e.g. driving a passenger to the airport, sharing a spare bedroom). In order to gain a better understanding of how algorithmic decision-making affects workers on online work platforms, we focus on a knowledge-based freelancing platform since this type of platform relies on a more continuous and more invested relationship between platform, workers and clients (see Table 1).

Characteristics of digital work platforms.

We selected the platform Upwork – one of the largest digital knowledge-based freelancing platforms – as our context of study. Upwork (formerly Elance/oDesk) went public in 2018 while mediating freelance work in 180 countries and facilitating a total of 1.8 billion USD in gross service value (Pofeldt, 2018; Upwork, 2018a). Upwork’s business model relies on fees charged to both clients and workers. In their annual report, Upwork (2018a) emphasizes the importance of machine learning algorithms which process “detailed and dynamic information, including skills provided by freelancers, feedback and success indicators of freelancers and clients” to shape effective user experiences (Upwork, 2018a: 3). Furthermore, Upwork employs “specific pattern-matching algorithms” to either detect unusual behavior (Upwork, 2018a: 5) or to predict future behavior (Upwork, 2018a: 6) on the platform. In order to be able to “operate at scale”, Upwork has automated several core processes, such as selecting candidates: “Upon registration, our machine learning algorithms assess a freelancer’s potential to be successful on our platform based on the current supply and demand in addition to the skills in the freelancer’s profile” (Upwork, 2018a: 6).

Workers who pass this algorithmic review are granted access to the platform and will be able to bid on gigs and send out proposals. Workers who are either not selected or who did not successfully connect with enough clients will be forced to drop out of the platform, receiving an automated notification: “Unfortunately [. . .] we must part ways with freelancers whose skills are not in demand in our marketplace [. . .]” (explained for instance on: IMTips, 2017). The boundaries of algorithmic and human management are blurry, and often it is impossible for workers to discern which platform decisions are based purely on algorithmic calculation and which ones are grounded in actual human insight and judgement. Decisions that are at least in part derived through an algorithm encompass areas of access (managing hiring and people flow), visibility (proposing matches and facilitating search) as well as reputation building (calculating job success scores). These features and the central role of algorithmic decision-making throughout the platform journey (gaining access, being matched, building reputation) render Upwork an excellent context to study how workers adapt to and deal with algorithmic management.

Research design: Collecting and filtering worker conversations

Data collection: Scraping online community conversations

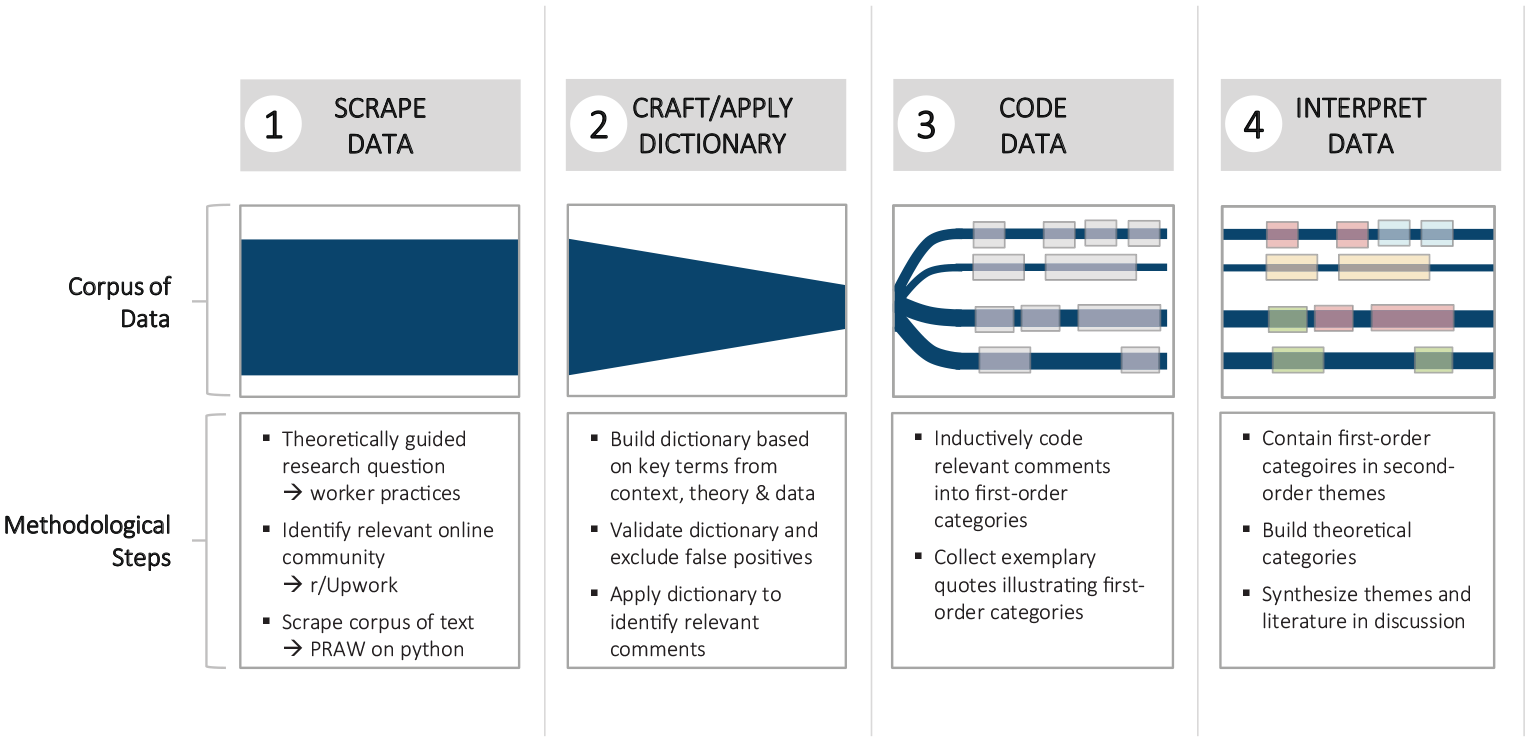

To gain an understanding of how digital workers “preemptively” adjust their behavior (anticipatory compliance practices) in light of algorithmic management, we gathered conversation data from a large online community dedicated to our case platform Upwork (“r/upwork” on Reddit) (see Figure 1 for an overview of our methodological approach). This data is particularly fitting to our purpose as it captures naturally occurring conversations without researcher interaction, thus revealing practices in their natural form (Potter, 2013; Schatzki, 2001). The online community is hosted by Reddit, a third-party platform with no ties to Upwork. Workers use this online community as a social forum where they can anonymously ask questions and share stories and heuristics surrounding their participation in Upwork. Given the official policy of Upwork to immediately “sanction and/or suspend” commenters who are “posting deliberately disruptive and negative statements about Upwork” on the official forum (Upwork, 2018b), we expect the independent online community to prompt more candid and unfiltered responses. As of September 2018, the Upwork community on Reddit had 6700 members. We used a self-developed script built on the Python Reddit API Wrapper (PRAW), a Python package that allows for simple access to Reddit’s API, to scrape the 1000 most recent discussion threads from the online community, which resulted in a total of 12,294 comments made by 948 commenters (Chandra and Varanasi, 2015; Reddit, 2018). The data collection took place in September 2018 and encompasses posts spanning about 3 months. A first breakdown of the scraped data reveals that the ten most active commenters are responsible for 33.6% of all comments, which corresponds with the typical Pareto distribution of online conversations, where a minority of contributors provides the majority of content (Barabási, 2003).
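The collection step described above can be illustrated with a short sketch. It is not the authors' script, which is not reproduced in the article; the function names `collect_comments` and `top_commenter_share`, the `ScrapedComment` record, and the `"[deleted]"` placeholder are hypothetical. The sketch assumes an authenticated `praw.Reddit` instance is passed in, and it also shows how the share of comments written by the most active commenters could be computed.

```python
from collections import Counter
from typing import Iterable, NamedTuple


class ScrapedComment(NamedTuple):
    """One flattened comment (hypothetical record layout)."""
    thread_id: str
    comment_id: str
    author: str
    body: str


def collect_comments(reddit, subreddit_name: str = "upwork",
                     thread_limit: int = 1000) -> list[ScrapedComment]:
    """Walk the most recent threads of a subreddit and flatten their comments.

    `reddit` is assumed to be an authenticated praw.Reddit instance, created
    for example with praw.Reddit(client_id=..., client_secret=..., user_agent=...).
    """
    rows = []
    for submission in reddit.subreddit(subreddit_name).new(limit=thread_limit):
        # Expand "load more comments" placeholders so the full tree is available.
        submission.comments.replace_more(limit=None)
        for comment in submission.comments.list():
            # Comments from deleted accounts carry no author object.
            author = comment.author.name if comment.author else "[deleted]"
            rows.append(ScrapedComment(submission.id, comment.id, author, comment.body))
    return rows


def top_commenter_share(rows: Iterable[ScrapedComment], k: int = 10) -> float:
    """Fraction of all comments written by the k most active commenters.

    Note: all deleted accounts are pooled under one placeholder name here,
    which is a simplification of this sketch.
    """
    counts = Counter(row.author for row in rows)
    total = sum(counts.values())
    if total == 0:
        return 0.0
    return sum(n for _, n in counts.most_common(k)) / total
```

Passing the client in as an argument keeps credentials out of the function and allows the logic to be exercised with a stand-in object before any request is sent to Reddit.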

Step-by-step description of methodology (collecting, filtering, coding, and interpreting data).

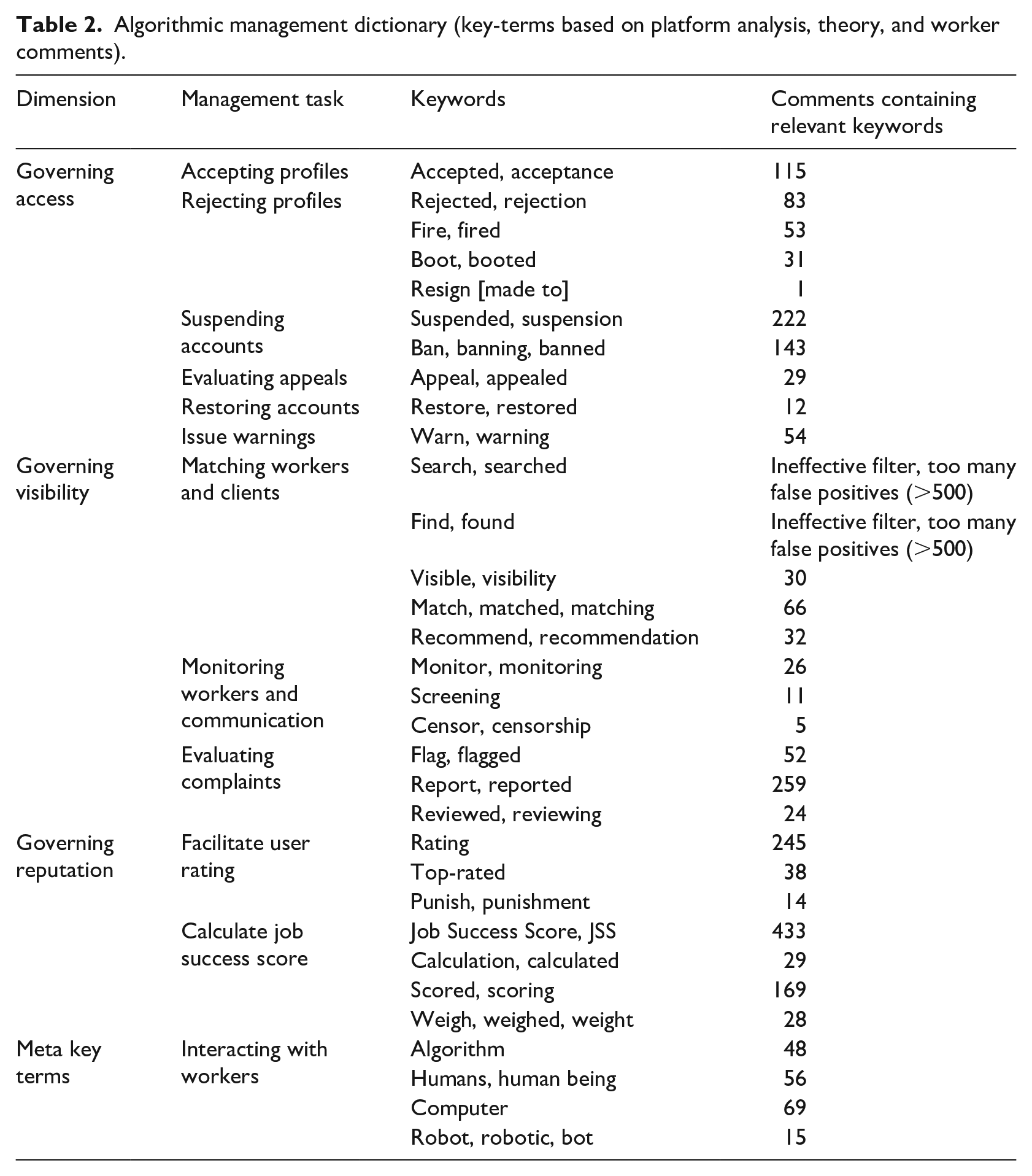

Dictionary development: Identifying algorithmic management keywords

In order to identify the subset of relevant comments about algorithmic management among the large corpus of data, we first compiled a list of key terms (in the following: dictionary) tied to algorithmic management, which we used to filter the main dataset. In the absence of a standard dictionary for algorithmic management, we created a bottom-up custom dictionary (Graham et al., 2009; Humphreys and Wang, 2018). More to the point, in order to identify tasks performed by the platform or algorithm (e.g. profile accepted, account suspended, rating calculated etc.), we followed the systematic “walk-through method” originally proposed by Light et al. (2018) for the analysis of web applications. Here, two researchers assumed a user’s position and systematically and forensically stepped through the various stages of the Upwork platform, mimicking a prototypical user flow which includes (1) registration, login, and profile setup, (2) actions of everyday use such as searching for potential clients, and finally (3) discontinuation of use or logoff. In particular, we created our own client account in order to gain an in-depth understanding of the platform processes. During the walk-through, we noted all potential algorithmic management tasks. The set of identified tasks was then translated into keywords that were likely to be found in a discussion among workers. For example, the algorithmic management task of “rejecting profiles” may be discussed using the terms “reject”, “rejection”, “rejected”, “deny,” or “denied”. In order to get a more complete and realistic list of keywords that also reflect the language and speech of the community, we did a plausibility check based on a subset of 200 comments. We carefully read through the comments to identify alternate phrasings of key terms. For example, when individuals were talking about being “rejected” by the algorithm, they sometimes used more colloquial terms such as “booted” or “fired”.
Furthermore, based on the subset of comments, we added a filter category of “meta” criteria to identify instances where users directly talk about the algorithm. These include terms like “algorithm”, “robot”, “bot”, “human”, “human being” [as opposed to algorithms], or “computer”. This process yielded a final dictionary of 32 keywords (see Table 2). Two keywords were excluded because they yielded too many matches (>500) and were thus unsuitable to meaningfully filter the data.
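To make the filtering idea concrete, the following is a minimal sketch of a dictionary filter. It uses only the example terms quoted in the text, not the full dictionary in Table 2, and it assumes case-insensitive whole-word matching; the article does not specify the matching rule, so that choice and the function names are assumptions of this sketch.

```python
import re

# Example terms quoted in the text; the complete dictionary is given in Table 2.
EXAMPLE_KEYWORDS = [
    "reject", "rejection", "rejected", "deny", "denied",
    "booted", "fired",
    "algorithm", "robot", "bot", "human", "human being", "computer",
]


def build_matcher(keywords):
    """Compile one case-insensitive pattern that matches any keyword as a whole word."""
    # Longer terms first, so multi-word terms win over their shorter prefixes.
    ordered = sorted(keywords, key=len, reverse=True)
    alternatives = "|".join(re.escape(term) for term in ordered)
    return re.compile(rf"\b(?:{alternatives})\b", re.IGNORECASE)


def matched_keywords(comment, matcher):
    """Return the distinct dictionary terms found in one comment, lowercased and sorted."""
    return sorted({match.group(0).lower() for match in matcher.finditer(comment)})


def filter_comments(comments, keywords):
    """Keep only comments with at least one keyword hit, paired with their hits."""
    matcher = build_matcher(keywords)
    kept = []
    for comment in comments:
        hits = matched_keywords(comment, matcher)
        if hits:
            kept.append((comment, hits))
    return kept
```

Whole-word matching avoids hits such as "bot" inside "bottom", but it cannot separate different senses of the same word, which is why a manual screening pass over the matches remains necessary.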

Algorithmic management dictionary (key-terms based on platform analysis, theory, and worker comments).

In filtering the entire corpus of data for all relevant comments that contain dictionary keywords, we aim to preclude central actor bias. Similar methods often focus on qualitatively analyzing comments of only the most central or most active actors (Moser et al., 2013), which may grant rich contextual insight but potentially distorts the data in favor of a few central actors. Our current method is a way to access and leverage the “long tail” of text data and include comments from less central actors. In applying the dictionary as a filter to the sample data, we reduced the 12,294 comments by 83%, resulting in 2067 relevant comments where workers specifically discussed algorithmic management (i.e. decisions which were taken presumably in an automated fashion by the platform without meaningful human participation or contribution). We further reduced the number of comments by screening for misidentified or off-topic matches. For example, while the majority of comments containing the word “monitor” did indeed pertain to the algorithmic task of monitoring (surveilling) workers, some comments pertained to computer monitors (hardware) instead. The latter were excluded from the sample. Furthermore, comments that were either about very specific use-cases or exclusively about worker-client relationships and thus not relevant to the larger scope of this contribution were also excluded from analysis. After removing these erroneous matches, we retained a set of 1889 comments surrounding algorithmic management which were subsequently coded manually for anticipatory compliance practices. The self-developed dictionary approach allows for an effective way to process large-scale text data and render them suitable for qualitative analysis. Unlike other methods that focus on capturing either breadth (structures, themes) or depth (content/meaning), we manage to provide both by first structuring the data and then coding it qualitatively (Levina and Vaast, 2015).
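The filtering funnel reported above can be checked with a few lines of arithmetic. The three counts are taken from the text; the variable names are illustrative only. The calculation confirms a reduction of roughly 83% in the dictionary step, shows that manual screening removed a further 178 comments, and indicates that about 15% of the scraped corpus was coded manually.

```python
# Counts reported in the text.
TOTAL_SCRAPED = 12_294        # all scraped comments
DICTIONARY_MATCHES = 2_067    # comments containing at least one dictionary keyword
RETAINED_FOR_CODING = 1_889   # comments left after manual screening


def share(part, whole):
    """Return part as a fraction of whole."""
    return part / whole


# Fraction of the corpus removed by the dictionary filter.
reduction_by_dictionary = 1 - share(DICTIONARY_MATCHES, TOTAL_SCRAPED)
# Number of misidentified or off-topic matches removed by hand.
removed_in_screening = DICTIONARY_MATCHES - RETAINED_FOR_CODING
# Fraction of the original corpus that was coded manually.
retained_share = share(RETAINED_FOR_CODING, TOTAL_SCRAPED)

print(f"Dictionary filter removed {reduction_by_dictionary:.1%} of comments")
print(f"Manual screening removed {removed_in_screening} further comments")
print(f"Share of corpus coded manually: {retained_share:.1%}")
```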

Data analysis – Coding for compliance practices

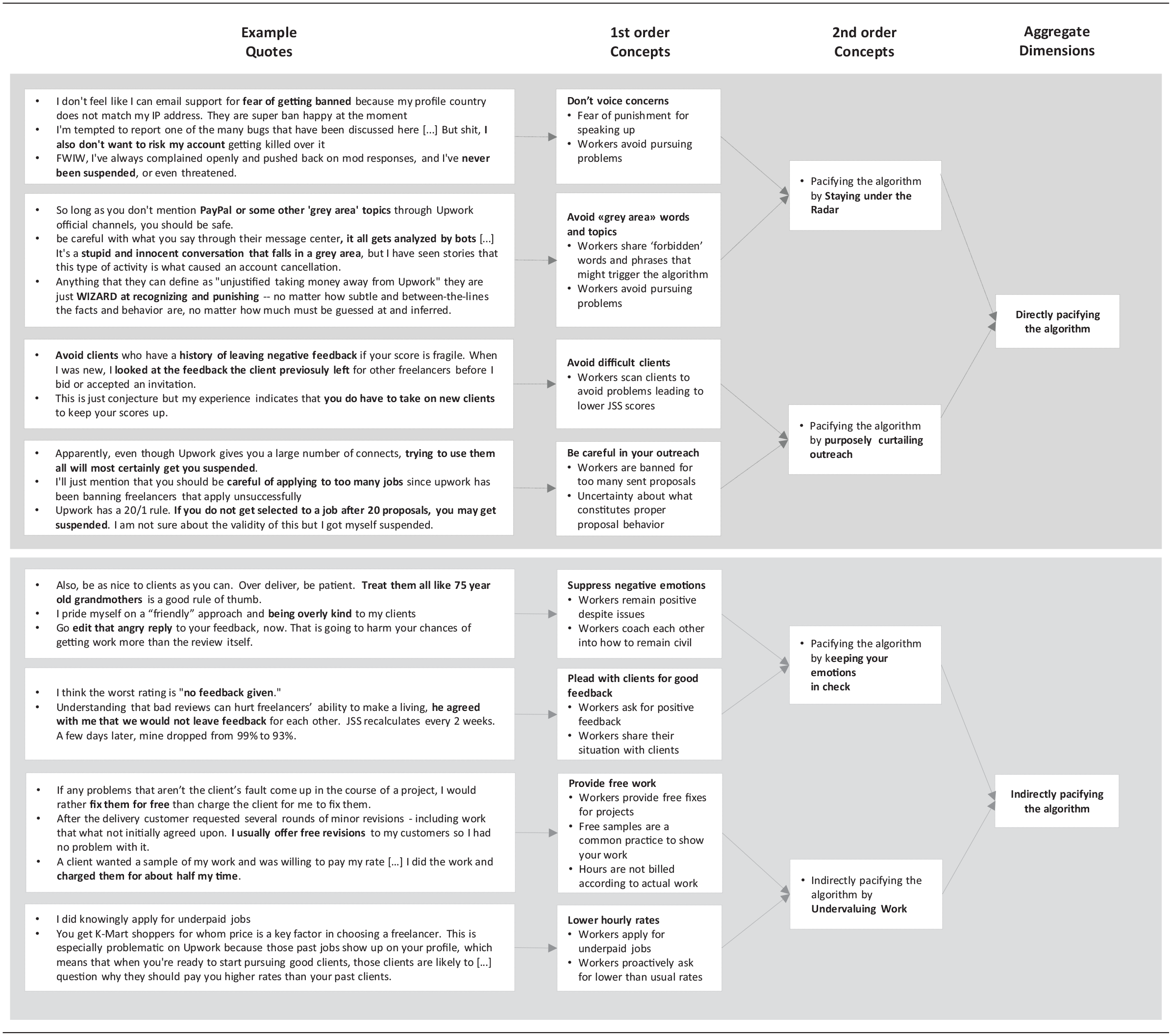

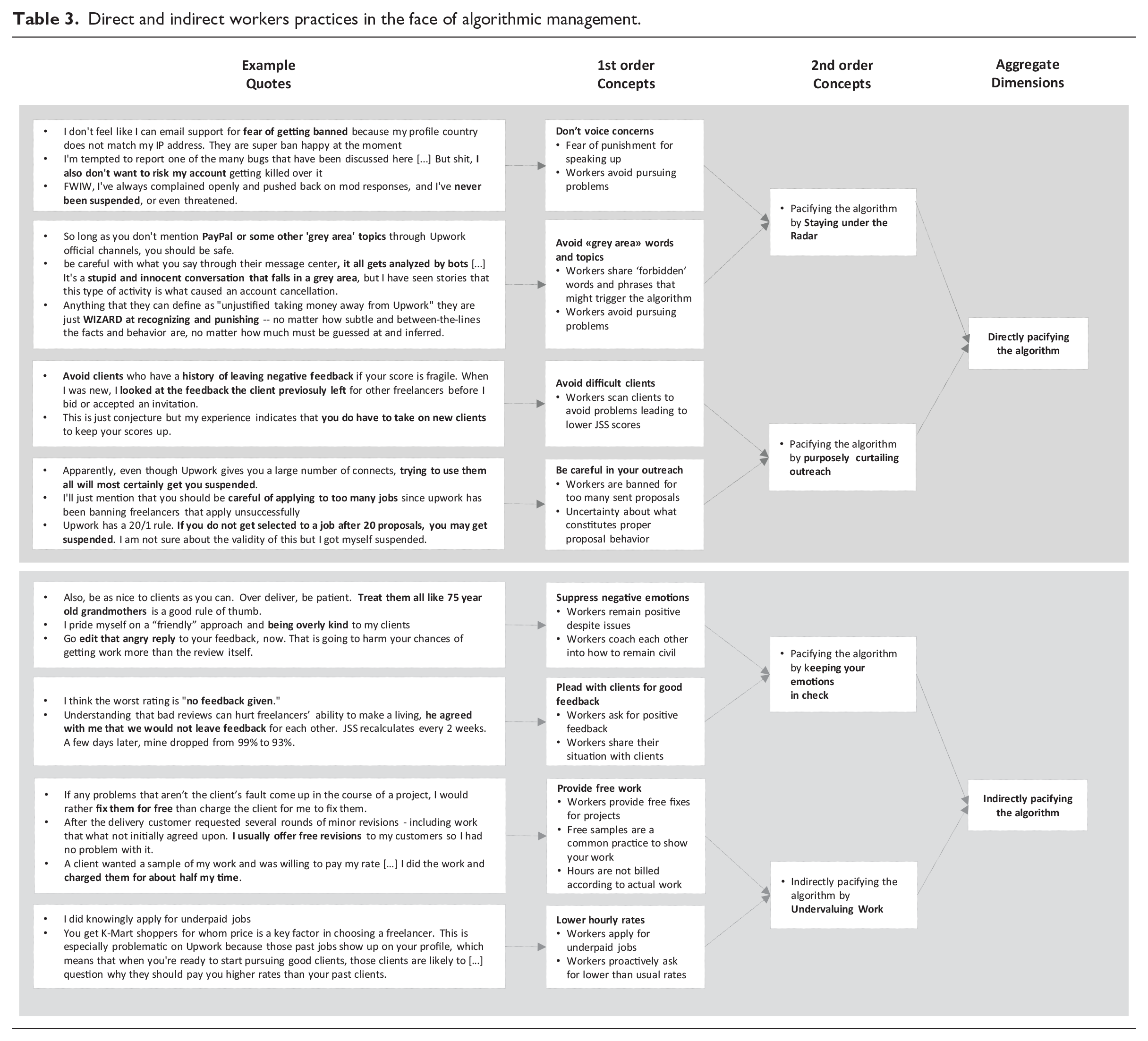

The analysis of the empirical material derived from the Upwork community (r/upwork) is based upon qualitative content analysis and follows common templates for creating theoretical categories from qualitative material (Corbin and Strauss, 1990; Hsieh and Shannon, 2005; Miles et al., 2014). We followed a three-step coding approach in the analysis: In a first step, the first two authors went through the comments independently and engaged in “open coding”, labeling worker practices. These codes remained close to the data and were usually short and descriptive, rooted in the phrases of the informants (Miles et al., 2014). For instance, “sharing forbidden phrases” was used to describe how workers share terms that the algorithm might understand as potential violations of the terms of service. In a second step, the first two authors reviewed, discussed, refined and combined the descriptive codes and unified wordings into conceptual second-order concepts. For instance, we combined “don’t voice concerns” and “avoid ‘gray area’ words and topics” into “staying under the radar of the algorithm”, which encompasses practices of abstaining from actions that could trigger the algorithm. In order to strengthen the robustness and confirmability of the analysis (Lincoln and Guba, 1985), in a third step, the third author recoded the empirical data, reviewed and compared the codes, and initiated revisions in case of a disagreement between the three authors. Table 3 provides an overview of our emerging codes and theoretical constructs, in line with best qualitative practice (Gioia et al., 2013; Langley and Abdallah, 2011). In the last step, we investigated how the anticipatory compliance practices relate to each other. To understand their interplay, we placed them in the triadic nexus of worker, algorithm and client on Upwork, proposed by Gandini (2019) as well as Meijerink and Keegan (2019).
Doing so, we were able to draw out how workers employ anticipatory compliance practices to directly and indirectly pacify the algorithm.

Direct and indirect worker practices in the face of algorithmic management.

Empirical findings – Pacifying the algorithm

Anticipatory compliance in the face of algorithmic management

With algorithms governing key moments of the digital work process, such as access to the platform, visibility toward clients or reputation building, our findings highlight that workers adapt their behavior to comply with (assumed) algorithmic materiality to ensure their continuous and successful participation in the digital work platform. More to the point, our analysis of online conversations suggests that due to the high complexity and non-transparency of the algorithmic decision-making, individuals develop anticipatory compliance practices – that is, they engage in specific practices according to the assumed (but not yet proven) material design of the algorithm – to increase their chances of gaining and maintaining access, visibility and reputation on the platform. Here, workers pursue two different paths toward pacifying the algorithm: On the one hand, they employ direct compliance practices that are aimed exclusively at the algorithm and have no significant impact on the client/worker relationship. On the other hand, workers develop indirect compliance practices that seek to prompt favorable feedback from clients, which in turn translates into a favorable rating from the algorithm.

Direct compliance practices

Staying under the radar

The first direct practice deriving from our findings pertains to workers “staying under the radar” so as not to trigger algorithmic scrutiny, which may lead to suspension or ban from the platform. On the one hand, workers are careful not to mention “gray area words” within the chat, which may alert the algorithm to potential rule violations. Here, terms related to performing transactions outside of the platform (e.g. mention of alternative communication channels or payment providers) are regarded as especially problematic. Members of the online community share a growing number of terms that have supposedly triggered warnings or bans. One worker commented on this: “[. . .] be careful with what you say through their message center, it all gets analyzed by bots and I’m certain this is what triggers an account review that can lead to a cancellation.” This uncertainty sparks not only much discussion but also a sense of paranoia that leaves workers wanting to over-adjust their behavior so as to be on the “safe side”, not triggering any algorithmic response. One user summarizes this feeling of latent paranoia by stating that the algorithmic functions “are just WIZARDS at recognizing and punishing - - no matter how subtle and between-the-lines the facts and behavior are, no matter how much must be guessed at and inferred”. Getting suspended for misbehaving on the platform’s own communication channels (e.g. the built-in chat function) can happen abruptly and with no significant means of recourse. One worker describes their own experience as follows: “I might have just barely [gone] over the edge of the ban trigger algorithm. But my problem is not even the ban itself. I realize it was justified. It is the absolute lack of any prior warning or any attempt to help or understand the freelancer that gets me.”

On the other hand, workers refrain from “voicing concerns” on the internal forums or in the communication with service agents. Even when reporting bugs or issues with the site, they are careful: “I’m tempted to report one of the many bugs that have been discussed here [. . .] But shit, I also don’t want to risk my account getting killed over it”. This is especially the case if workers suspect that there may be an issue with their account or their recent behavior that could be exposed by accident if the algorithm suddenly turns its gaze onto their account. This also applies, for example, when workers travel and therefore show a discrepancy between their profile country and their current IP address: “I don’t feel like I can email support for fear of getting banned because my profile country does not match my IP address. They are super ban happy at the moment.” While some workers appear to be exceedingly insecure and try to avoid any contact with the platform or the algorithm, others stress that they never had any issues: “[For what it’s worth], I’ve always complained openly and pushed back on mod responses, and I’ve never been suspended, or even threatened.” In the comments, there is some indication that seasoned workers and commenters with a longer post history are less likely to be scared of reaching out to the platform.

Purposely curtailing client outreach

As a second direct compliance practice, we find that workers purposely forgo opportunities for paid work – either by limiting their outreach to clients or by avoiding specific clients altogether – in order to escape algorithmic scrutiny. As an example, workers on the platform receive a number of tokens (“connects”) that they can use to send out work proposals to clients. The more tokens a worker acquires, the more proposals they are allowed to send out. However, workers often hesitate to actually use their tokens for fear of getting banned if they send out too many proposals without getting hired. While workers do not know the exact ratio of successful and unsuccessful bids that may trigger unfavorable consequences, there are many stories and hypotheses being shared within the community: “Apparently, even though Upwork gives you a large number of [tokens], trying to use them all will most certainly get you suspended.” Another worker has even developed a tentative heuristic based on their own experience: “Upwork has a 20/1 rule. If you do not get selected to a job after 20 proposals, you may get suspended. I am not sure about the validity of this but I got myself suspended.”

Another reason for purposely curtailing one’s outreach is the fear of incurring even one bad rating, which leads workers to be exceedingly careful not to enter into contracts with potentially difficult clients. Here, workers would rather forgo potential income than risk receiving “a bad feedback or, almost worse, no feedback at all”. Workers often turn to the online community for advice in vetting clients and recognizing potential “red flags” in client profiles early on. As a general rule, a more tenured worker suggests: “Avoid clients who have a history of leaving negative feedback if your score is fragile. When I was new, I looked at the feedback the client previously left for other freelancers before I bid or accepted an invitation.” The tendency of workers to be overly selective in client outreach – not because they fear that clients may default, but because they feel that even one bad rating may severely harm their chances on the platform – points toward the overall weight and significance of ratings and reviews on the platform.

Indirect compliance practices

Undervaluing work

Our results further suggest that workers continuously undervalue their labor in order to increase their chances of securing a match and gaining favorable ratings from clients, which in turn will positively impact their job success score. Here, undervaluing work includes providing free work in the form of billing fewer hours than actually worked, providing free work samples or carrying out fixes for free. Often, such unpaid work can take up a substantial amount of time. One worker provided “hours of extra work” on a very low paying gig. Having entered the contract, he felt that he had “no way out” and had to do everything possible to avoid a negative rating. In some cases, these issues arise from problems caused by workers themselves. One worker illustrates this by stating that if problems arise during the transaction, she would usually “rather fix them for free than charge the client” in order to improve the customer experience and earn a favorable rating. However, often the extra work is either part of an extension or results from additional client requests. Describing an instance where a client made endless change-requests, one worker noted that he just “sucked up the extra work for no extra pay” so as not to risk his rating.

Another emerging practice is the lowering of one’s hourly rate below what would normally be fitting for the level of expertise and experience. Even though many workers on the platform highlight the importance of asking for appropriate rates, the empirical findings show that undervaluing work is common – especially so among newer members of the platform who are still out to “prove themselves” and acquire a good job success score. A voice artist explains their reasoning: “The pay was definitely lower than I would otherwise normally charge, but I realize that I am new to the platform and need to prove myself.” Several workers further highlight that there are numerous clients who engage in tricks to keep workers’ hourly rates low. As a worker explains, “The logic goes that the more you are tied to a client the lower your pay is - the logic that is often applied to the lowly priced freelancers who are also often desperate and don’t have much success winning projects.” Thus, workers often find themselves in a vicious cycle of unpaid or poorly paid work that is hard to escape.

Keeping emotions in check

The fourth cluster of anticipatory compliance practices surrounding algorithmic management pertains to keeping one’s emotions in check to avoid conflict with clients and prompt positive ratings. One worker summarized her general attitude toward dealing with clients as follows: “be as nice to clients as you can. Over deliver, be patient. Treat them all like 75 year old grandmothers is a good rule of thumb.” In the same vein, workers remind each other not to show their anger at being treated unfairly by a client and to swallow their negative emotions both during interactions with clients, but also in replying to negative reviews as this may harm their reputation on the platform: “Go edit that angry reply to your feedback, now. That is going to harm your chances of getting work [. . .].”

Another part of this practice is negotiating directly with clients and asking them to leave positive feedback, either by pointing out how crucial their feedback is or how much a worker relies on good feedback for their livelihood. This puts workers in a position where they have to swallow their feelings and, even in the case of unsuccessful interactions, plead with the client not to rate them badly or not to leave a rating at all. One worker recounts such an interaction with a client after an unsuccessful encounter where he pleaded with the client to understand “that bad reviews can hurt freelancers’ ability to make a living.” As a result, the client agreed not to leave negative feedback. While this interaction was ultimately “successful”, it was also humiliating to the worker and likely took an additional emotional toll. One worker compared Upwork to another platform where client feedback was not factored in as strongly by algorithmic management: “on [the other platform] I just stopped caring about asking for reviews [. . .] Compare that to Upwork when every single time I get awarded a job I have to suffer hours of worry whether my JSS score will get affected or not.” By putting such an emphasis on client reviews, the algorithm influences work and working relationships on the platform.

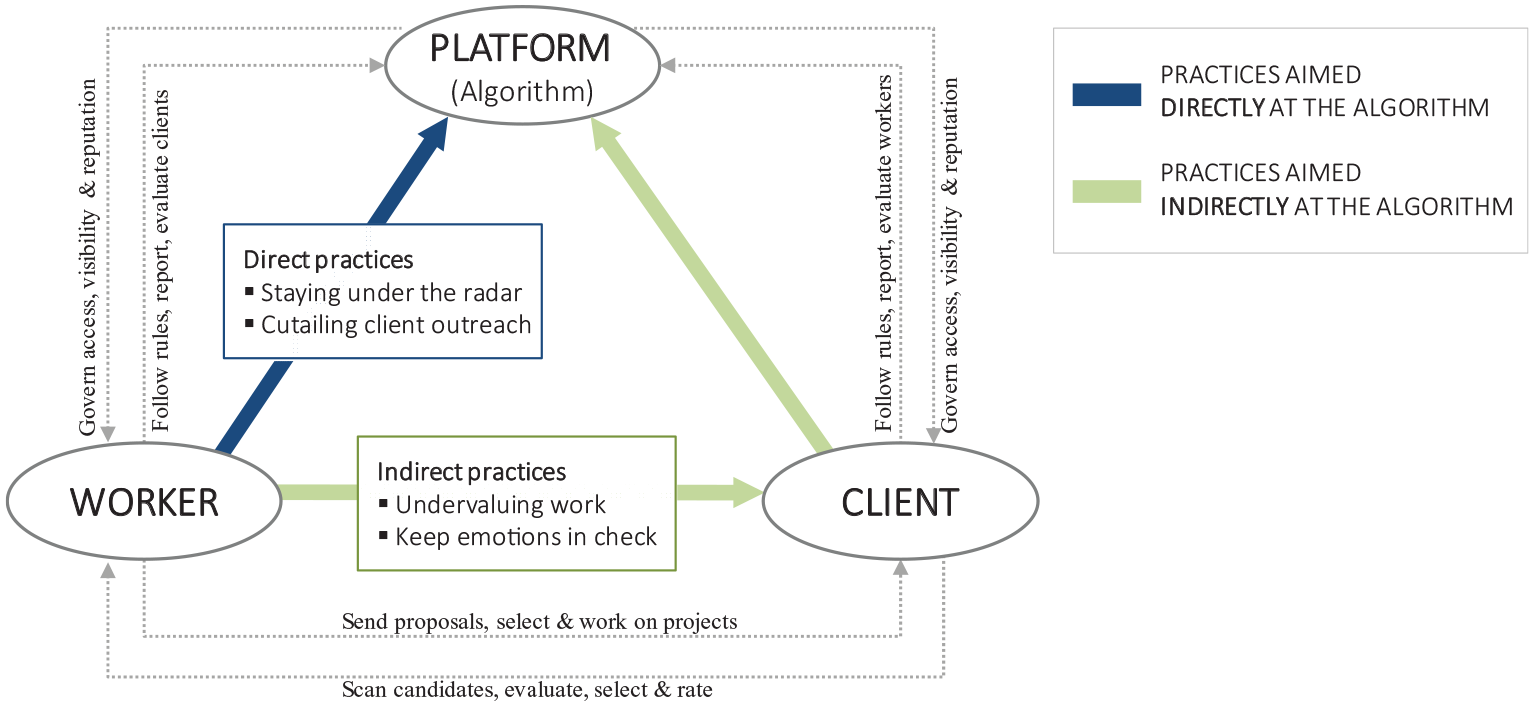

Pacifying the algorithm – Direct and indirect practices to ensure platform participation

Taken together, our empirical findings reveal how digital freelancers share, discuss and craft direct and indirect compliance practices vis-à-vis algorithmic management in order to ensure their continued participation on the platform. Figure 2 shows how these practices work in concert to ultimately “pacify” the algorithm. The platform environment is marked by a triadic relationship between algorithm, client and worker.

Figure 2. Direct and indirect compliance practices to “pacify” the algorithm.

On the one hand, workers try to influence the algorithm directly by either avoiding specific behaviors or speech that might “trigger” a ban or suspension or by purposely limiting client outreach to the point where they forgo paid work opportunities so as not to skew their success statistics or the ratio between outreach and job-completion. On the other hand, workers try to influence the algorithm indirectly by trying to prompt positive client feedback and favorable ratings through lowering their rates and providing additional services for free or by performing emotional labor. At first sight, these practices are largely directed toward clients: Just as in traditional service settings, a good worker-client relationship amounts to a higher likelihood that a contract will be renewed or extended. However, in the highly interconnected platform environment, satisfying the client – while necessary – is by no means sufficient to ensure participation. In contrast to traditional freelancing, workers need to focus primarily on receiving positive feedback and ratings from clients, which in turn translate into a positive evaluation by the algorithm that allows workers to remain on the platform. As perceived by the workers, even one bad rating or the absence of a rating could potentially harm visibility and future access to the platform. While the goals of algorithms and clients might be largely aligned, they are not entirely congruent. For example, while clients may be interested in establishing long-term relationships with good workers, the unveiled compliance practices suggest that workers are incentivized to continuously “take on new clients to keep [their] scores up”.

Our findings suggest that workers are keenly aware of the central role that the algorithm plays in the triadic relationship between workers, clients and platform and that addressing the algorithm both directly and indirectly (via the client) is key in ensuring continuous participation in the platform. While most relevant interactions take place within the constraints of the platform environment, our findings indicate that workers often turn to the community of other workers in order to vent, ask for advice and share stories. The current study highlights the importance of such community spaces – often operated independently of the platform – for the collective negotiation and refinement of practices vis-à-vis the algorithm.

Discussion and conclusion

Our findings show how workers develop anticipatory compliance practices such as staying under the radar and purposely curtailing client outreach (direct practices) as well as undervaluing work and keeping their emotions in check (indirect practices), in order to avoid de-selection or sanction by the algorithm. In the following, we present three implications of our study, each emphasizing a sociomaterial view on the intertwinement between worker and algorithm. First, we show how workers’ dependence on algorithmic decision-mechanisms drives them to perform “extra work” in general and emotional labor in particular (Orlikowski and Scott, 2014). Second, we shed light on how algorithmic management allows platforms in the gig economy to take on the role of a “shadow employer” that shapes interactions between workers and clients (Friedman, 2014; Gandini, 2019). Third, we contribute to the debate on power and technology (Leonardi and Barley, 2010) by showing how workers themselves produce and socially construct the power of algorithmic management through their practices.

Agency and extra work in the face of algorithmic management

In contrast to previous research in the sociomaterial tradition, which has put a strong focus on how algorithmic management impacts workers’ wellbeing and perceived vulnerability (Curchod et al., 2019; Kellogg et al., 2020; Orlikowski and Scott, 2014), our findings highlight workers’ social agency by focusing not just on how they experience algorithmic management but how they develop practices in response to the algorithm’s material features. More to the point, we show that algorithmic management prompts workers to perform “extra work” and emotional labor in particular in order to avoid de-selection and sanction by the algorithm. Here, we understand “extra work” as additional cognitive, social, and emotional effort that is intertwined with regular tasks and is aimed, through direct and indirect practices, at pacifying the algorithm. As our findings highlight, workers constantly engage in considerations of potential repercussions of their behavior and, thus, engage in anticipatory compliance practices. While such considerations may be common to a certain extent in traditional work settings as well (see for instance Anteby and Chan, 2018), they are likely much more profound and critical on digital platforms as interactions are comprehensively monitored, instantaneously analyzed and translated into decisions with little to no explanation or recourse options. This issue is further exacerbated through the lack of a human layer within the decision structure that might be able to explain decision rationales. Hence, digital workers have to be hyper-vigilant to how both their own actions as well as their clients’ actions (and inactions) may be interpreted by the platform. Such considerations “supercharge” regular tasks, such as contacting new clients, with “extra work,” which creates additional stress and strain for the workers.
As an example, our data encompasses frequent instances where users have to “bite their tongue,” “swallow their anger,” or “be extra friendly” toward clients for fear of retaliation – either by the client or by the algorithm that would translate bad client feedback into a lower success score. This goes so far that workers not only fear negative feedback, but also the absence of feedback, as this might negatively impact their rating on the platform as well. To obtain feedback, workers engage in relational labor with clients, even going so far as to beg them for feedback. Finally, as our findings indicate, workers spend much time in designing their communication with clients in order to avoid “gray words” that might trigger algorithmic scrutiny.

Taken together, these findings show that “extra work” is charged both with cognitive as well as social and emotional elements. The suppression of negative emotions and expressions as well as the evocation of positive emotions and expressions constitutes “emotional labor” (Ashforth and Humphrey, 1993; Grandey and Melloy, 2017; Hochschild, 1983; Morris and Feldman, 1997). Emotional labor is commonly performed by service workers in a face-to-face manner. Accordingly, previous scholarship on emotional labor has primarily focused on flight attendants, waiters or frontline staff (Ashforth and Humphrey, 1993; Leidner, 1999) as well as care-givers and nurses (Brotheridge and Grandey, 2002) or hosts/drivers of localized service platforms such as Airbnb (Bucher et al., 2018) and Uber (Rosenblat and Stark, 2016). Here, our study offers new insight by highlighting how algorithmic management may considerably amplify expectations of emotional labor – even in contexts that are fully digitally mediated with limited or absent face-to-face interaction. Emotional labor in algorithmic work environments may be exacerbated not just by high service-expectations on the client-side, but also by the general opacity and insecurity of a digital work environment marked by the continuous looming fear of being flagged, suspended or down-graded for actions (carried out by worker or client) or events (intended or unintended) that may trigger the algorithm.

Working for the shadow employer

Digital platforms change the nature of work (Barley et al., 2017; Kuhn, 2016) and especially the relations between workers and organizations (Friedman, 2014; Fuller and Smith, 1991; Gandini, 2019). Our findings illuminate the discrepancy between how digital work platforms present themselves and how workers experience and react to the platform’s algorithmic management. Platform providers such as Upwork or Fiverr refer to workers as entrepreneurs, freelance contractors, independent professionals or sellers (Fiverr, 2020; Upwork, 2019), thereby implying that digital work is primarily a transaction taking place between independent workers and clients with the platform being a mere matchmaker, marketplace or marketing channel. Our findings contradict this representation of the relationship, putting a much stronger emphasis on the role of the platform and its algorithmic decision-making as an invisible managerial figure, which fundamentally shapes the relationship and power dynamics between workers and clients (Gandini, 2019). In contrast to a “neutral” marketplace, digital platforms and their algorithms profoundly shape the behavior of workers, who engage in direct and indirect anticipatory practices in assumed compliance with algorithmic decision-making rationales. By unpacking workers’ practices and their social agency, our emerging theory provides further evidence for Gandini’s (2019) observation that digital platforms increasingly act as “shadow employers” that are “designed as organizational models that ‘invisibilize’ the managerial figure” (2019: 1051). Building on Gandini, we define a shadow employer as an “invisibilized” managerial figure or decision-making mechanism.
The term “shadow employer” may be particularly fitting as (1) algorithmic decision-making mechanisms remain largely opaque with limited feedback or recourse options and (2) platforms take on key roles of employers, such as hiring and performance management (Leicht-Deobald et al., 2019; Meijerink and Keegan, 2019). This conceptualization of digital work platforms has important implications.

First, our findings contrast with previous work that has highlighted the flexibility and autonomy found in the gig economy (Wood et al., 2019). While this is technically true and workers do have the flexibility and autonomy to craft their own schedule and choose clients, the client-worker relationship is severely constrained by the platform, as workers have to simultaneously please the client and the algorithm in order to ensure their continued participation on the platform (e.g. by sending out only a limited number of proposals, by not working with clients who have left mediocre feedback in the past or by undervaluing their own labor). Second, our notion of anticipatory compliance practices points out the precarity and stress created by workers having to deal with a shadow employer. Whereas traditional organizations provide a holding environment for workers and socialize them into norms, roles, and expectations (Petriglieri et al., 2019), such socialization is lacking on digital platforms. Workers are left to themselves in figuring out the expectations of an opaque algorithm, and as our findings indicate, it is up to the workers to proactively create a holding environment for themselves. The implications of how workers manage their own socialization and how this may affect workers’ well-being (Kowalski and Loretto, 2017) are important avenues for future research. Last, as traditional organizations are increasingly making use of algorithms to gain efficiency, for instance in their human resource management (Angrave et al., 2016), our findings point toward the hidden cost of such changes and the implications for organizational power structures (Fleming and Spicer, 2014). The efficiency gains that algorithmic management may bring come at a steep cost for workers, who must engage in constant extra work to pacify the algorithm, or risk being dropped from the platform. 
Whether, and to what extent, these costs are an unintended by-product of algorithmic management or represent an intended part of the platform’s design (Zuboff, 2019) is an important avenue for future research.

Opacity and power in the gig economy

Further, our study adds new insights into how power asymmetries are constructed in the gig economy. Advancing recent contributions on algorithmic management (Burrell, 2016; Danaher, 2016), our findings indicate that workers find it difficult to reverse engineer the rationality of the algorithm with respect to what triggers suspension and what lowers their job success score (Burrell, 2016). As such, the algorithm on the one hand gains power over workers as they come to “fear the wizards,” as some workers describe the algorithm. Here, we show that it is not through the algorithm’s ability to find and punish workers that the algorithm changes behavior, but through fear and unawareness of its material properties. We, therefore, argue that the power of the algorithm lies not solely in its materiality, but in how the perception of such materiality unfolds in the social agency of workers (Leonardi, 2012). Accordingly, the power of algorithmic management is rooted in and constructed through shared practices of workers, which may not be purely determined by the algorithmic design. On the other hand, based on an understanding of algorithmic management as constructed through materiality and social agency (Kellogg et al., 2020; Leonardi, 2012; Orlikowski and Scott, 2014), our findings – paradoxically – indicate that workers strengthen the power of algorithms through their anticipatory compliance practices. While workers adopt these practices in an attempt to “pacify” the algorithm, our findings indicate that in doing so, they ultimately shift power even further to the algorithm. As workers share their experiences and practices with each other, they involuntarily shape the perceptions of the algorithm’s materiality.

Here, an analogy can be drawn to the inmates in Foucault’s (1977) panopticon prison who are constantly surveilled by an observer who sees but is not seen. This relationship leads inmates to internalize and self-regulate, as they always feel under the gaze of power. Similarly, the workers on digital work platforms are subjected to continuous surveillance without being able to understand or reverse-engineer the nature of their algorithmic observer (Zuboff, 2019). In line with Foucault’s (1977) argument, we see that workers internalize the rationality of power, which leads them to eventually monitor and discipline their own behavior. Foucault (1977) terms the form of power described here “subjectification.” This form of power works through apparently agential qualities, such as practices. But these qualities are, in fact, self-disciplining actions that shape the subject’s sense of identity and role (Fleming and Spicer, 2014). The anticipatory compliance practices we find are examples of this. When the workers employ the practices to pacify the algorithm, they take on a role as possible transgressors similar to the inmates in Foucault’s prison. As the workers absorb the evaluative performance of algorithms and take it upon themselves to discipline their activities in anticipation, they are enforcing the directives of the platform upon themselves. This raises the question of whether such enforcement is coincidental. Without traditional employment arrangements (Meijerink and Keegan, 2019), platforms lack direct control over workers. It is therefore likely that platforms strategically seek to force workers into following their directives by relying on opaque algorithmic management. Thus, we encourage future research to investigate the role of platform design in shaping power relationships in the gig economy.

Contributions, limitations, and conclusion

This study investigates how workers develop direct and indirect anticipatory compliance practices under algorithmic management through a sociomateriality lens, thereby extending our understanding of algorithmic management and new forms of work. In particular, our study makes three contributions. First, the study builds on sociomateriality to examine how gig workers deal with algorithmic management by outlining direct and indirect practices that workers engage in. Focusing on the social agency of gig workers and their understanding of the algorithm’s materiality, our findings outline how workers react to, accommodate and work against algorithmic management. We thus extend previous insights focused on a more passive account of workers’ experiences by unearthing practices that workers develop in the face of algorithmic management (Anteby and Chan, 2018; Kellogg et al., 2020; Tassinari and Maccarrone, 2020). Second, we uncover implications of algorithmic management by introducing the concept of “extra work.” Our findings show how platforms relying on algorithmic management act as “shadow employers” and, as such, profoundly shape client-worker relationships, pushing workers to engage in cognitive, social and emotional “extra work” to ensure their continued access, visibility and reputation on the platform (Gandini, 2019; Kuhn, 2016; Orlikowski and Scott, 2014). Last, our article extends the discussion on power imbalances in the gig economy (Curchod et al., 2019) by outlining how workers’ practices and their shared understanding of the materiality of algorithms produce “subjectification” (Fleming and Spicer, 2014). This dynamic assigns power to the algorithm that goes beyond its material design and, thus, re-enacts power imbalances in everyday gig work.

While our study makes several important contributions, it is not without limitations. First, our insights are based solely on one platform, which naturally limits our claim to generalizability. Second, while we investigate the triad between algorithm, workers and client, our empirical data stems from the worker side. Thus, future research should incorporate both the design of the algorithm as well as the voice of clients. Moreover, our method, while offering a powerful way to collect, filter and code large sets of text, comes with two limitations: On the one hand, since we focus in our analysis on individual comments, we cannot make statements about relationships or conversation dynamics. On the other hand, much of the analysis – and especially the filtering of the data – depends on the quality of the self-developed dictionary. Here, it would be beneficial to apply the same dictionary to other platforms as well to test for robustness. Despite these limitations, we consider the current instrument fitting for an exploration of key themes within the large dataset. Third, as our research design does not contain a longitudinal element, we cannot make inferences about how workers have developed practices over time, nor how they may continue to develop them as the market and platform change.

These three limitations open several opportunities for future research. First, future research needs to explore whether algorithmic management differs across digital platforms. As recent evidence outlines, there are stark differences in how platforms treat their workers, with some platform providers striving to alleviate precarious and exploitative conditions and instead facilitate voice and fair work standards (Gegenhuber et al., 2020). Second, future research should explore algorithmic management in traditional organizations, which increasingly transition toward algorithmic over human management (Kellogg et al., 2020). Such contexts may allow future research to more clearly understand the differences between and possibly also intertwinement of algorithmic management and human management. Third, we encourage processual research to investigate how workers develop sociomaterial practices over time as they engage with algorithmic management and become familiar with it. For instance, to what degree do workers’ socialization and habituation on the platform enable them to cope with and resist algorithmic management? To understand this, future research needs to delve deeper into the performativity of algorithms and how workers can enact it (Curchod et al., 2019; Orlikowski and Scott, 2008). Moreover, there may be an important role for online communities in facilitating the creation of shared practices (Kellogg et al., 2020), and also for workers to engage in collective action against platforms (Tassinari and Maccarrone, 2020). We thus encourage future research to employ diverse methods and mixed-methods to improve our understanding of algorithmic management.

In conclusion, our article emphasizes the importance of taking a critical stance toward algorithmic management and its implications for workers. As we highlight, algorithms cannot be seen as neutral technological progress that merely reduces transaction costs between workers and clients. Instead, algorithms present a management tool that profoundly shapes power relations between workers, clients and platforms. While workers are able to exert agency by developing practices aimed at pacifying algorithms, such practices ultimately may serve to (re-)produce the power of algorithmic management. As more and more digital as well as traditional organizations rely on algorithmic management, it becomes important for researchers to engage critically with this phenomenon. We, therefore, hope that our study can serve as a foundation for future critical scholarship on the use of algorithmic management and its implications across contemporary organizations.

Footnotes

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was funded by the Norwegian Research Council under the project «Future ways of working in the digital economy» (275347).