Abstract

This research explores how a new relation of production, the shift from human managers to algorithmic managers on digital platforms, manufactures workplace consent. While most research has argued that the task standardization and surveillance accompanying algorithmic management will give rise to the quintessential “bad job” (Kalleberg, Reskin, and Hudson, 2000; Kalleberg, 2011), I find that, surprisingly, many workers report liking the work and finding choice in it under algorithmic management. Drawing on a seven-year qualitative study of the ride-hailing industry, the largest sector in the gig economy, I describe how workers navigate being managed by an algorithm. I begin by showing how algorithms segment the work at multiple sites of human–algorithm interaction and how this configuration of the work process allows for more frequent but narrower choices. I find that workers use two sets of tactics. In engagement tactics, individuals generally follow the algorithmic nudges and do not try to get around the system; in deviance tactics, individuals manipulate their input into the algorithmic management system. Although the behaviors associated with these tactics are practical opposites, both elicit consent, or workers’ active, enthusiastic participation in aligning their efforts with managerial interests, and both contribute to workers seeing themselves as skillful agents. However, this choice-based consent can mask the more structurally problematic elements of the work, contributing to the growing popularity of what I call the “good bad” job.

In recent years, the number of people in the United States who show up to work by turning on an app on their smartphone has dramatically increased, redefining our understanding of work, labor, and employment issues. Dubbed on-demand, platform, or gig workers, these individuals log in to digital apps on which algorithms, rather than humans, manage them, rewarding, disciplining, and evaluating them. Researchers have sounded alarms about the rise of algorithmic “tyranny” (Lehdonvirta, 2018: 27), “cruelty” (Gray and Suri, 2019: 67), and “despotism” (Griesbach et al., 2019: 1) that trap workers in an “encompassing” (Kellogg, Valentine, and Christin, 2020: 366) “invisible cage” (Rahman, 2021: 945). On-demand work, especially work on popular digital platforms such as Uber and Instacart, is often seen as the epitome of a “bad job,” i.e., one characterized by variable and low wages, unpredictable schedules, no formal job ladders, and high risk (Kalleberg, Reskin, and Hudson, 2000; Kalleberg, 2011; Ravenelle, 2019). Furthermore, because on-demand workers are classified as independent contractors, they are not covered by employment protections, such as workers’ compensation, unemployment insurance, and health insurance, which are part and parcel of what is considered the quintessential high-quality “good job” (Kalleberg, 2011; Cappelli and Eldor, 2023). Yet workers continue to opt for on-demand jobs despite the increasing availability of traditional jobs (Katz and Krueger, 2019; Kaplan et al., 2021; Garin et al., 2023) of high quality (Aeppli and Wilmers, 2022; Autor, Dube, and McGrew, 2023; Newman and Jacobs, 2023), with one-third of workers preferring algorithmic over human managers (Östergaard, 2017). These findings suggest that these individuals may not find the work as deleterious as many scholars have argued (Shapiro, 2018; Gandini, 2019; Ravenelle, 2019). Thus, a puzzle remains: why do people participate in, and even claim to like, such precarious work?

Emerging technology and algorithmic management, in particular, open up new questions about how work is organized and how it is experienced by people. In a growing stream of research about on-demand work and algorithmic management, studies often end with the punch line that workers are controlled by an all-encompassing, comprehensive management system (e.g., Rosenblat and Stark, 2016; Gandini, 2019; Griesbach et al., 2019; Rahman, 2021). This critical view of algorithmic management stems, in part, from an unnecessarily limited view of how workers can express agency within these systems. Because much of the research on algorithmic management centers on control, the limited literature that has focused on workers’ agency has categorized it as either collective action (Wood, Lehdonvirta, and Graham, 2018; Tassinari and Maccarrone, 2020; Lei, 2021) or individual resistance (Shapiro, 2018; Cameron and Rahman, 2022). Such research often treats consent as mere compliance with management’s objectives, describing workers as passive, unresisting dupes of managers’ designs (see Hodson, 1991, for an example). But consent, like resistance, can entail workers’ agency. Here, I define consent as individuals’ enthusiastic and active participation in aligning their efforts with managerial objectives and even exceeding managerial goals (Burawoy, 1979; Hodson, 1999; Padavic, 2005; Mollick and Rothbard, 2014). Through workers’ subjective well-being, i.e., their finding meaning and fulfillment in their work, workers’ and managerial interests become aligned.

A rich literature in labor process theory examines the relationship between workers’ experience and workplace consent. This research highlights how social relations with coworkers and managers make jobs tolerable under precarious, bad conditions. In the absence of managers, workers in manufacturing units encouraged one another to work faster, eschewing organizational safety procedures (Hayes and Wheelwright, 1984; Barker, 1993; Bernstein, 2012). In myriad manufacturing and service jobs, workers competed against one another, playing games to gain social status by beating the piece rate or baiting a customer for larger tips (Burawoy, 1979; Sherman, 2007; Sallaz, 2009). And when managers cloaked their authority under the guise of friendship and offered preferential treatment, individuals remained in demanding jobs, tolerated callous behavior from customers, and put up with irregular schedules (Anteby, 2008; Mears, 2015; Sallaz, 2019).

However nuanced, prior ways of understanding workplace consent are inadequate for fully understanding how consent is produced in algorithmically managed work. While early research considered the relationship between technology and consent (e.g., the role of the machine in workplace games; Burawoy, 1979), the role of technology and, particularly, algorithmic management is noticeably absent in contemporary research. Like electricity, the telephone, and the internal combustion engine, algorithms are an “infrastructural technology” (Barley, 2020: 8); they change the system of production and the labor process (Aneesh, 2009; Kellogg, Valentine, and Christin, 2020; Vallas and Schor, 2020), which has significant implications for the manufacturing of consent. Unlike work on the shop or service floor, work on digital platforms occurs through an electronically mediated exchange between the worker, the customer, and the platform’s algorithms. On-demand work is largely asocial, as workers have little or no in-person contact with managers and coworkers. And because workers are classified as independent contractors, platform companies do not have the legal obligations (e.g., duty of care, fiduciary responsibility) embedded in the employment relationship that might elicit workers’ participation, nor can they legally attempt to develop these practices (Cappelli and Keller, 2013; Cappelli and Eldor, 2023). 1 Without coworkers or managers to act as co-participants in the production of consent, this arrangement raises the question: how is consent generated in algorithmically managed work? To answer this question, we must first understand the granular relationship between workers and the technology they interact with at the point of production.

I examine this question at the “strategic” research site (Merton, 1987: 1) of the ride-hailing industry, the largest sector of the on-demand economy that relies on algorithmic management to direct workers. I draw on a seven-year study that includes participant observation (as both a driver and a rider), longitudinal interviews (n = 136), social and print media, and official company materials. I first describe how algorithms structure the work process by segmenting work into small chunks of human–algorithm interaction. Algorithmic management scaffolds a choice set from which workers can make hundreds of choices within a short period. I show that at each site of these human–algorithm interactions, workers make choices in the work process, and through this observation I identify two dominant tactics that workers use. In engagement tactics, drivers interact within the boundaries of the algorithmic management system: they decide when, where, and for which platform company to work, and they usually adhere to the algorithm’s nudges. In deviance tactics, by contrast, drivers manipulate the algorithmic management system, pushing against its boundaries, for example by declining certain rides and trying to inflate fares to obtain desired outcomes. Although the ride-hailing companies’ algorithmic managers penalized these actions when they detected them, drivers could easily counter the penalties. While the behaviors associated with these tactics of engagement and deviance are practical opposites, both tactics elicit consent and contribute to workers describing themselves as skillful agents. Last, I describe how workers can withdraw consent, either by leaving the platform or by compromising the integrity of the algorithmic management system.

While “structured antagonism” (Edwards, 1990: 126) between management and workers always exists, so do shared interests—there must be some symbiosis to beget consent. By examining workers’ granular interactions with ride-hailing apps, this study shows that at hundreds of moments at the point of production, individuals could make choices within a highly confined management system—choices that elicited consent even when workers were deviating from some of the formal guidelines. This is what I label choice-based consent. In contrast to prior literature, which emphasizes how social mechanisms elicit consent through broadly sweeping, durable social relations (e.g., Burawoy, 1979; Anteby, 2013; Mears, 2015), this study finds that algorithmic management elicits consent through a series of narrow yet frequent choices that are associated with workers’ sense of mastery. In doing so, this study elaborates and extends the consent literature, identifying new mechanisms and outcomes. Ultimately, I argue that this algorithmically mediated choice-based consent contributes to the growing appeal of the “good bad” job—work that is attractive and meaningful in ways that mask structurally problematic elements.

On-Demand Work, Algorithmic Management, and Workplace Consent

The Rise of On-Demand Work and Its Implications

The number of workers in the on-demand economy is growing, with more than two million jobs added in the U.S. alone in 2020 (Zgola, 2021; Garin et al., 2023), and online on-demand work accounts for up to 12 percent of the global labor force (Datta et al., 2023). 2 Digital platforms such as Uber, Instacart, and Amazon Flex have radically transformed the way work is organized, managed, and experienced, challenging how scholars think about work. 3 For legal scholars, on-demand work blurs the legal classifications of employee and independent contractor, thereby complicating the issue of who is entitled to labor protections (Dubal, 2017, 2020). For economists and strategy scholars, digital platforms create frictionless marketplaces in which rating systems and incentives facilitate trust between strangers (Sundararajan, 2016; Tadelis, 2016; Jacobides, Cennamo, and Gawer, 2018). For researchers of communications and information sciences, on-demand work offers an exemplary setting for examining long-standing issues regarding transparency, fairness, and ethics (Christin, 2017; Möhlmann et al., 2021; Cameron et al., 2023). For labor scholars, on-demand work, especially on closed labor platforms like Lyft and DoorDash, is the quintessential precarious, low-paid job of poor quality (Ravenelle, 2019; Wood et al., 2019) whose decentralized nature makes it challenging for individuals to organize for better working conditions (Wood, Lehdonvirta, and Graham, 2018; Mayberry, Cameron, and Rahman, 2024). For organizational psychologists, the atomized nature of this work complicates their understanding of how social processes such as identification and socialization occur (Petriglieri, Ashford, and Wrzesniewski, 2019; Anicich, 2022; Cropanzano et al., 2023). And for organizational scholars, on-demand organizations represent a significant departure from traditional organizational forms because of the former’s reliance on independent contractors and the replacement of managers by algorithms (Aneesh, 2009; Vallas and Schor, 2020; Davis, 2022). Given the widespread implications of this new form of labor, the need for a granular understanding of on-demand work has never been greater.

Algorithmic Management and Control

In the on-demand workplace, algorithms are de facto managers embedded in the work process and have responsibilities that include hiring, allocating tasks, evaluating performance, and firing. As first defined by Lee et al. (2015: 1603), algorithmic management comprises software algorithms that “assume managerial function and surrounding institutional devices that support algorithms in practice.” Because algorithmic management draws on elements of scientific management (Taylor, 1911), scholars have called it a digital version of Taylorism, pointing to its high degree of task standardization and decomposition, digital surveillance, information asymmetry, fine-grained measurement of labor, and piece-rate wages (Cherry, 2016; Faraj, Pachidi, and Sayegh, 2018; Curchod et al., 2020; Jarrahi et al., 2020; Kellogg, Valentine, and Christin, 2020; Rahman, 2021; Noponen et al., 2023). 4 As a result, much of the extant research has focused on how algorithmic management controls workers.

Matching algorithms either assign workers to tasks in closed labor market platforms (e.g., Instacart) or suggest potential workers to customers via open labor market platforms (e.g., Upwork). A worker’s refusal to accept a task can incur a penalty, such as receiving a status downgrade (Rosenblat and Stark, 2016), removal of privileged status (Cameron, Thomason, and Conzon, 2021), or the loss of access to future assignments (Rahman and Valentine, 2021). Algorithmically mediated telemetrics and customer ratings track, rank, and evaluate multiple aspects of workers’ performance (e.g., acceleration, response times, customer ratings; Wood et al., 2019; Cameron, 2022). The management system’s interactive nature enables companies to update the algorithms that manage workers by analyzing data from those same workers, thereby allowing the companies to experiment on workers at will (Möhlmann and Zalmanson, 2017; Veen, Barratt, and Goods, 2020; Rahman, Weiss, and Karunakaran, 2023). This process creates an environment rich in information asymmetry, in which “workers are given information in a piecemeal fashion, while remote company dispatchers maintain omniscient views” (Shapiro, 2018: 2959). This line of reasoning suggests that algorithmic management’s control would reach unprecedented levels, actualizing the notion of the panopticon.

The concept of consent allows scholars to think of workers as using their agency to participate in rather than refuse their work, even in exploitative conditions (Burawoy, 1979, 1985). 5 A few studies have examined how schedule flexibility, a common feature of on-demand work, affords agency (Occhiuto, 2017; Milkman et al., 2021). However, scholars argue that this flexibility is largely mythical because it severely diminishes as one’s economic dependence increases (Schor et al., 2020; Muralidhar, Bossen, and O’Neill, 2022), and most of the work on these platforms is done by people who need the work to survive (Gray and Suri, 2019). Thus, the question of how consent is generated within algorithmic management systems remains unresolved and is crucial for gaining insights into the growing appeal of this work.

Generating Consent in Manufacturing and Service Work Through Social Relations

Emerging from earlier studies on informal organization and social relations (Roy, 1952; Roethlisberger and Dickson, 2003; Mayo, 2004), a line of research has examined how consent, or workers’ active participation in aligning their efforts with managerial objectives, is manufactured through social relations in various white- and blue-collar jobs (e.g., IT professionals, machinists; Hodson, 1999; Barley and Kunda, 2004). Given that on-demand work is an amalgamation of features associated with manufacturing (e.g., piece-rate wages, technologically mediated pacing) and service work (e.g., customer interactions and ratings), the most useful literature in which to situate this study is that which examines how consent is produced in those workplaces. Labor process theorists have found that social relations between workers and other organizational members are key to securing consent. By offering leniency, praise, and friendship, managers can soften the harshness of work conditions while keeping their managerial authority intact. By looking the other way regarding rule-breaking (e.g., allowing smoking at a mine, tolerating craftsmen who use company time and materials to make souvenirs; Gouldner, 1954; Anteby, 2008), managers build relationships with workers on the managers’ own terms while also reinforcing managerial authority. Managers have urged workers to see their jobs as an opportunity to fully be themselves in order to distract them from brutal conditions, such as in heavily surveilled call center work (Fleming and Sturdy, 2009). When supervisors praised aspects of workers’ identity that were stigmatized by broader society, workers were willing to tolerate physically and emotionally demanding conditions. For example, Sallaz (2019) documented how call centers, notorious for their strange hours and abusive customers, were one of the few places where gay men and trans women in the Philippines were praised for their subcultural identity markers (e.g., English fluency, urbaneness). And in VIP lounges, brokers built such strong strategic intimacies by flirting with and offering gifts to attractive young women that these women went out night after night, unpaid and teetering on stilettos, to brokers’ events (Mears, 2015).

Relationships among coworkers are also key in generating consent. Unlike gamification, which relies on rules designed by management to improve workers’ affective experience and to boost productivity (Deterding et al., 2011; Mollick and Rothbard, 2014), workplace games result from organic interactions between workers. In the social game of “making out,” machinists synchronized their efforts with one another to beat the piece rate by an agreed-upon amount (Burawoy, 1979). Similarly, in the “tipping game,” concierges and casino dealers categorized customers, agreeing on how much emotional labor to expend to optimize the tip-to-effort ratio (Sherman, 2007; Sallaz, 2009). These games are socially constructed: workers make the rules, receive feedback from each other, and compete against one another for rewards (Sallaz, 2013). Shared norms can induce workers to work harder than they would under a manager’s gaze. Describing the change from direct managerial oversight to being on a self-managing team, one technical worker reported having dozens of eyes upon him to make sure that he arrived on the line early, ready to work: “Now the whole team is around me and the whole team is observing what I’m doing” (Barker, 1993: 408). On assembly lines, workers shielded their efforts from managers’ eyes so they could ignore safety procedures and speed up production at their coworkers’ behest (Bernstein, 2012).

When consent is manufactured, workers have a sense of psychological and social achievement in that they have done their work well (Sallaz, 2013). Accomplishing the work, either through winning a game or developing group solidarity, elicits pride and mastery. Another byproduct of consent is that it mollifies the inherent conflict between workers and management (Sallaz, 2013) by either attenuating it through the development of friendly relationships with managers (e.g., strategic intimacies; Mears, 2015) or transferring the conflict laterally to coworkers (e.g., peer monitoring; Barker, 1993).

Manufacturing Consent in the On-Demand Economy

This rich literature on the manufacturing of consent is less applicable to on-demand work, which is largely atomized and asocial. Individuals work independently with little to no direct contact with managers and coworkers, and interactions with customers are fleeting and transactional. Thus, the social mechanisms foregrounded in the workplace consent literature are largely absent. And without a cohesive organizational culture and mission statement, normative mechanisms of control, such as shared goals or purposes (e.g., Kunda, 1992), are largely unavailable. Indeed, in classifying workers as independent contractors, platform organizations explicitly tell workers that they are not organizational members, instead calling them entrepreneurs, co-creators, service providers, consumers, or partners (Ravenelle, 2019).

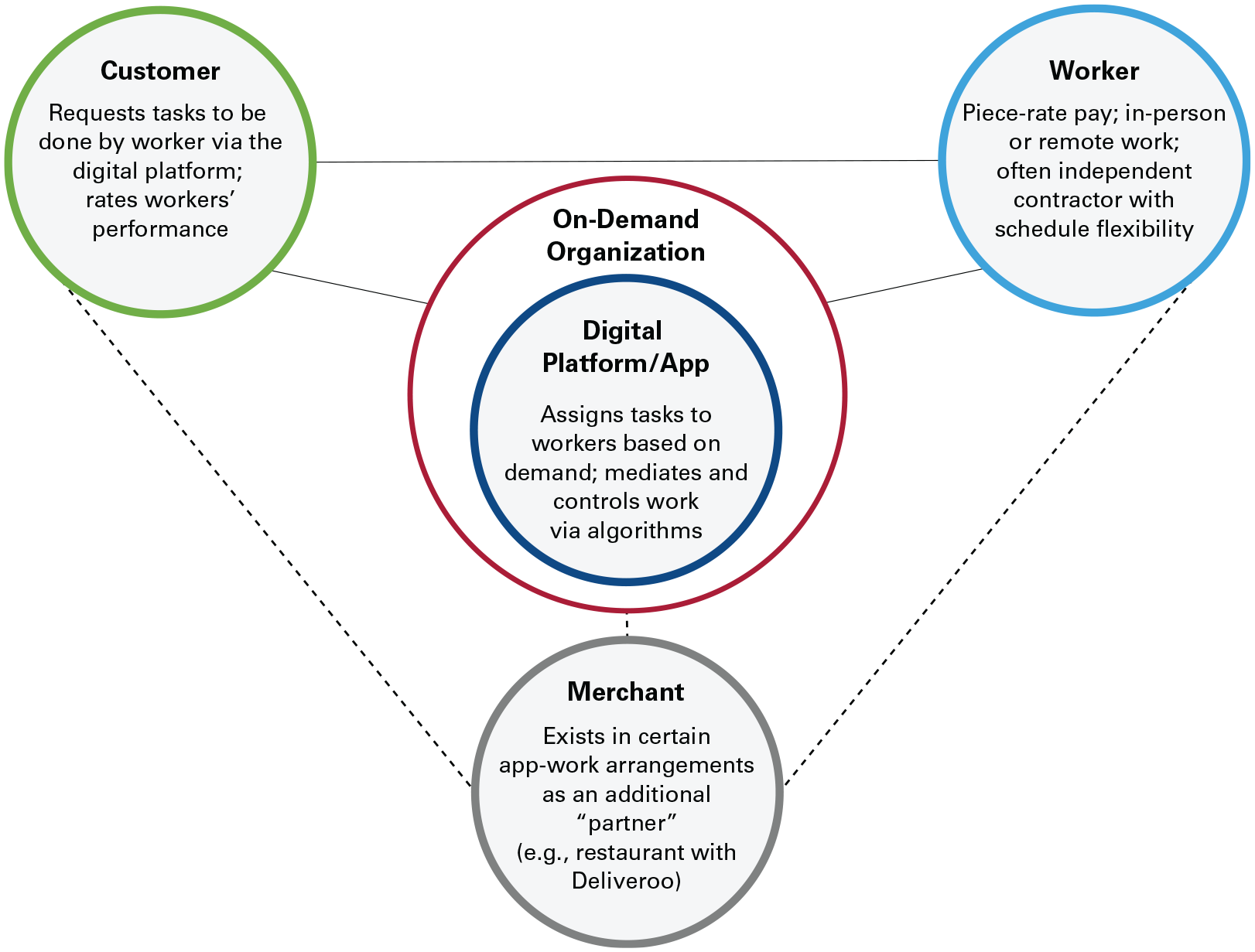

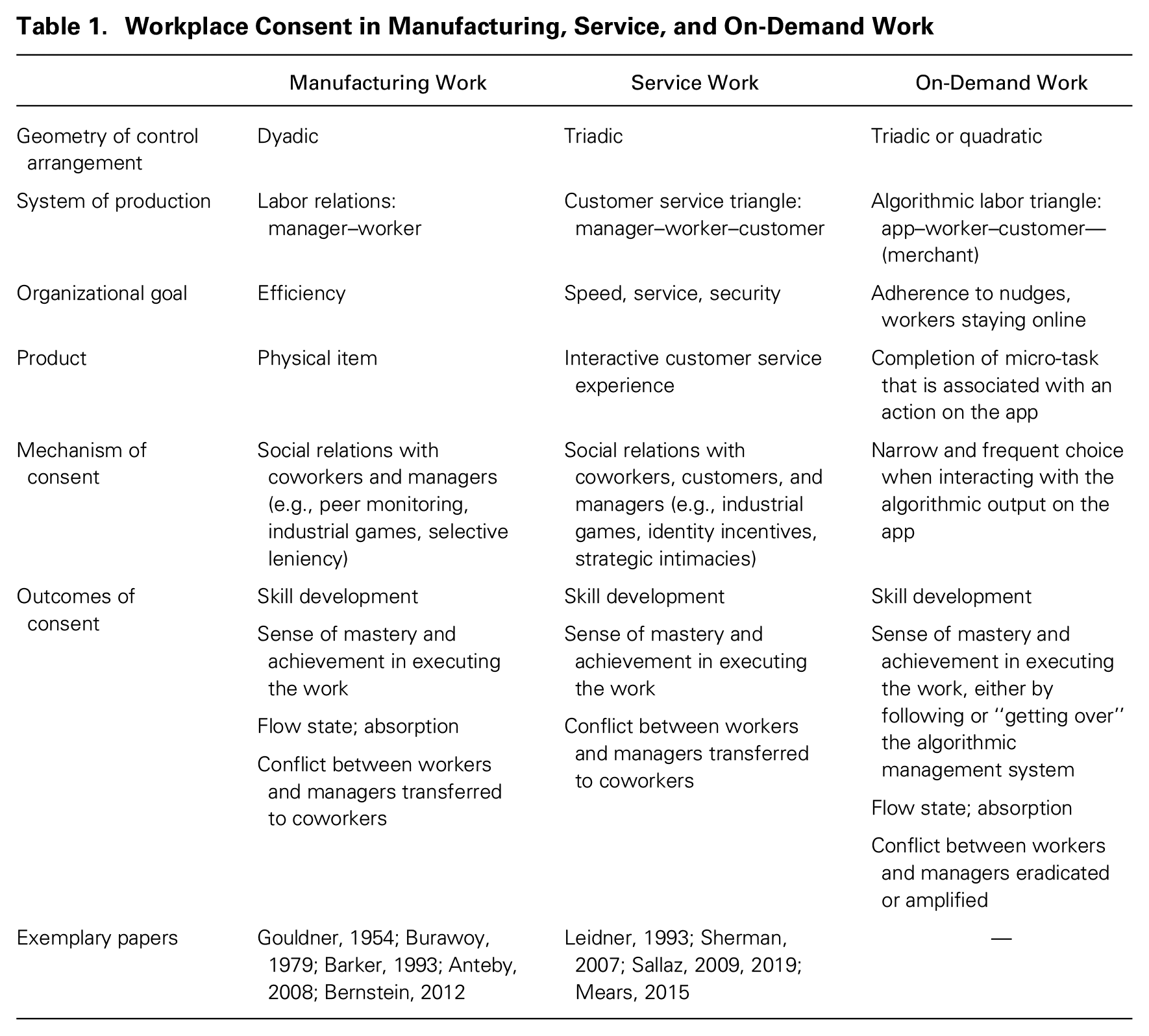

Moreover, consent is manufactured at the point of production, and on-demand work has a different point of production compared to manufacturing and service work (i.e., worker–machine and customer–worker interactions, respectively; Burawoy, 1979; Leidner, 1993). In on-demand work, the labor process is reconfigured such that the point of production involves an app and its algorithms facilitating connections among multiple actors (e.g., the restaurant, customer, worker, and app in food delivery services; see Figure 1). Hence, irrespective of where exactly the work is done (e.g., digitally by an MTurker or on the road by a Deliveroo courier), the site of the worker–app–customer interaction constitutes a clearly defined system of production at which consent is manufactured, often in the absence of managers and coworkers. Indeed, managerial intent is obscured in this arrangement, in which the app, not a supervisor, appears to organize the work process (Chai and Scully, 2019; Vallas and Schor, 2020; Schor, Tirrell, and Vallas, 2023). See Table 1 for an overview of management systems and consent production.

Figure 1. Algorithmic Labor Triangle and On-Demand Work*

Table 1. Workplace Consent in Manufacturing, Service, and On-Demand Work

Research has not yet examined how algorithmically mediated changes to the labor process may affect consent. Like the internal combustion engine, algorithms are infrastructural technologies that lie at society’s core, as they have the power to “underwrite almost every aspect of daily life” (Barley, 2020: 8). Already, algorithms are transforming how jobs are filled (Schechner, 2017; Feffer, 2023), structured (MacKenzie, 2021; Lebovitz, Lifshitz-Assaf, and Levina, 2022), monitored (Levy and Barocas, 2018; Allison, 2023), and experienced (Bellesia, Mattarelli, and Bertolotti, 2023; Möhlmann, de Lima Salge, and Marabelli, 2023). Understanding how algorithmic management reconfigures work, especially at the human–algorithm interface, is crucial for understanding workers’ experiences and the broader socio-material work ecosystem (Orlikowski and Iacono, 2001; Barley, 2020). But this kind of granular analysis is missing from the literature. Scholars have hinted at how individuals’ ability to make choices and develop workarounds can keep them working under precarious conditions (e.g., Lee et al., 2015; Shapiro, 2018), but the literature lacks a more comprehensive understanding of the relationship between algorithmic management and the manufacturing of consent. One step toward answering these questions involves closer examination of workers’ moment-by-moment interactions with the technology, to help us understand the choices workers do have and make vis-à-vis the algorithmic management system.

Research Setting, Data Collection, and Analysis

The Ride-Hailing Industry

First launched in 2011, ride-hailing services such as Uber, Lyft, and Juno have disrupted the taxicab industry. The core innovations enabling these services are digital maps and the algorithms that coordinate the work process by matching independent, distributed drivers (working either in their own or rented cars) with customers within seconds and giving block-by-block directions. Fares dynamically adjust based on consumer demand, and performance is evaluated through customers’ ratings and drivers’ acceptance and cancellation rates. Drivers have little direct contact with company employees and few ways to voice concerns; even firing (which is euphemistically called deactivation) is done online. Work requirements vary, with most companies requiring clean driving records, no moving violations in the previous three years, state vehicle inspections, and increasingly, despite industry protests in some cities, criminal background checks. Hiring can take from three days to three weeks; once hired, workers can go online and begin working. Classified as independent contractors, drivers pay their own employment taxes and are not eligible for health insurance or other social benefits (e.g., workers’ compensation, unemployment insurance). Pay is based on miles driven, plus bonuses, demand-based incentives, and tips (if any), and it is delivered weekly, although drivers can request “instant pay” daily for a small fee ($1.49 as of December 2023). Some drivers are active on multiple platforms, and algorithmic features are similar across platforms. (See Online Appendix, Image 7 for an example of a worker using two platforms at the same time.) In this article, I use the term “ride-hail(ing)” when discussing the act of driving, I refer to “RideHail” when discussing one or more of the platform companies examined in this study, and I name a specific company (e.g., Uber, Lyft, Juno) only when doing so is necessary to contextualize a comment.

On-demand platforms explicitly promise to provide customers with immediate access to labor. Thus, ride-hailing platforms have two overlapping objectives: to have workers follow algorithmic nudges and to keep workers online and available to work on demand. Nudges, such as incentives to work in busy areas, encourage workers to make decisions in alignment with fluctuating customer demand. Because drivers are classified as independent contractors, they are not contractually obligated to follow nudges; even drivers who ignore a nudge but stay online meet the platforms’ more distal second objective of having drivers available to work and offer rides to passengers.

The Work Structure

The work arrangement

RideHail classifies workers as independent contractors, allowing workers to choose their working hours and days with little human oversight, which most drivers in this study liked. Workers I interviewed planned their daily schedules around doctor appointments, preferred sleeping schedules, and child care responsibilities and could take prolonged breaks, for overseas travel or to launch a new business, for example, with no questions asked. For this reason, one participant (Thiel, Philadelphia) left their union job as a carpenter in order to work for RideHail, stating, “I can control the shift. I don’t have a boss.” Being removed from direct human oversight also meant that workers no longer had to work with managers who were sexist, racist, or xenophobic. One driver had lost two previous jobs due to race-based managerial aggression and now described himself as “freed” from the White gaze. Indeed, the reply “I don’t have a boss” was the most frequent response to the question “What do you like about driving for [RideHail]?” Compared with workers’ prior employment (primarily in retail), on-demand work offered clear advantages, allowing individuals to escape bad managers and have greater discretion over their schedules. 6

The work task

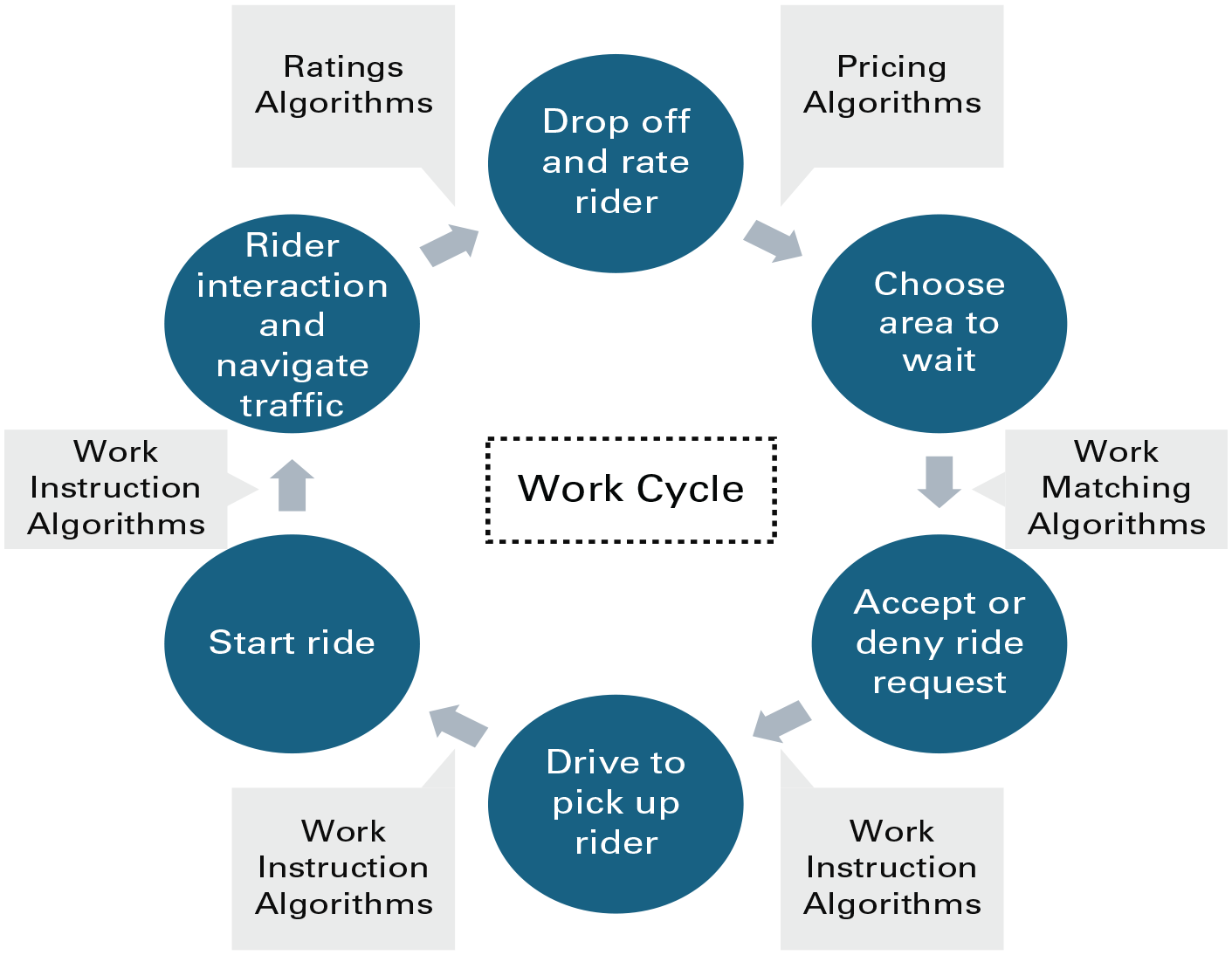

The work process of a ride-hailing driver is based on a three-way interaction between the driver, the rider, and the app. Drivers begin a shift by choosing a location to open their app and swiping right to go online. A complete ride consists of (1) the app matching the driver and rider and the driver accepting; (2) the driver getting to the rider’s location and waiting for the rider to enter the vehicle; (3) the driver swiping “start ride” on the app; (4) the driver and rider interacting; (5) the driver dropping the rider off; and (6) the driver swiping “end ride” and rating the rider (see Figure 2). Work cycles may end prematurely, such as when a rider fails to show up or the app malfunctions. After the end of a work cycle, drivers may stay online and wait to be matched again or go offline and stop working. In smaller cities, where trips tend to be shorter, drivers can complete as many as six or seven rides in an hour, while in larger locales they might complete only two or three. Fares are set locally and are based on a pickup fee, distance, time, and demand pricing (if any). Drivers may also be offered bonuses for completing a certain number of rides within a designated period, but these are not offered in all markets. Reports of gross hourly wages range from $12 to $30. 7

Figure 2. Ride-Hailing Work Cycle
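As a simplified illustration only, the six-step work cycle and the fare components described above can be sketched in Python. The step names, the assumption that demand pricing acts as a multiplier on the base fare, and all rate values (a $2.00 pickup fee, $1.00 per mile, $0.20 per minute) are hypothetical placeholders chosen for readability; they are not RideHail’s actual prices, formulas, or software.

```python
from enum import Enum
from typing import List, Optional


class Step(Enum):
    """The six steps of one ride-hailing work cycle, in order (names are hypothetical)."""
    MATCH_AND_ACCEPT = 1  # app matches driver and rider; driver accepts
    PICKUP_AND_WAIT = 2   # driver reaches the rider and waits for them to enter
    START_RIDE = 3        # driver swipes "start ride"
    INTERACT = 4          # driver and rider interact during the trip
    DROP_OFF = 5          # driver drops the rider off
    END_AND_RATE = 6      # driver swipes "end ride" and rates the rider


def run_cycle(interrupted_after: Optional[Step] = None) -> List[Step]:
    """Return the steps completed in one work cycle.

    If interrupted_after is given (e.g., the rider never shows up or the
    app malfunctions), the cycle ends prematurely after that step.
    """
    completed: List[Step] = []
    for step in Step:  # Enum iteration follows definition order
        completed.append(step)
        if step == interrupted_after:
            break
    return completed


def fare(miles: float, minutes: float, pickup_fee: float = 2.00,
         per_mile: float = 1.00, per_minute: float = 0.20,
         demand_multiplier: float = 1.0) -> float:
    """Hypothetical fare: pickup fee + distance + time, scaled by demand.

    Rates are illustrative placeholders; treating demand pricing as a
    multiplier is an assumption, not a documented platform rule.
    """
    base = pickup_fee + per_mile * miles + per_minute * minutes
    return round(base * demand_multiplier, 2)


if __name__ == "__main__":
    print(len(run_cycle()))                        # full cycle: 6 steps
    print(run_cycle(Step.PICKUP_AND_WAIT))         # rider no-show
    print(fare(5, 15))                             # 10.0
    print(fare(5, 15, demand_multiplier=1.5))      # 15.0
```

Under these placeholder rates, a 5-mile, 15-minute trip comes to $10.00, or $15.00 with a 1.5 demand multiplier, and a cycle in which the rider never shows up stops after the pickup step. Each step boundary in the sketch corresponds to one of the discrete human–algorithm touchpoints at which drivers make choices.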

Data Collection and Analysis

Given the emerging nature of on-demand work and my interest in theory development, I designed a multiple-source qualitative study and spent seven years in the field (from 2016 to 2023). I used four overlapping data sources, which I triangulated to bolster validity (Eisenhardt, 1989): participant observation (160 total hours of driving-related activity), conversational interviews (n = 112), semi-structured interviews in 23 North American cities (n = 136), and social and print media. 8

Participant observation

To understand how algorithms were deployed and experienced, I participated in the ride-hailing industry as both a driver and a rider. From 2016 to 2019, I was a driver in a major U.S. city, using both my personal car and a rental car, the latter obtained through a platform-sponsored program. I earned income from one RideHail company. I varied my driving times and routes to widen my range of experiences: I drove the weekday morning commute and the evening bar shift, timed my airport runs with international flight arrivals, visited higher- and lower-income neighborhoods, and worked on major holidays, including twice on New Year’s Eve (the busiest day of the year). I also conducted mini experiments on myself. On some days I would try to maximize my income by chasing demand-based incentives and bonuses, while at other times I purposely ignored such incentives and did not check my earnings until the day’s end. Sometimes I manipulated the app, trying to confine my trips to a certain area, while at other times I let the app “drive” to see where I ended up. To gain perspective on drivers’ experiences in different areas, I enlisted a research assistant to drive for the same company in another U.S. city. Our ethnographic notes included reflections on busyness, ratings, incentives, interactions with in-app driver support systems, accidents, car care, and road conditions. I also attended classes, organized by a local group, on defensive driving and on my legal rights as a driver. During the same period, as a rider I kept notes on nearly all rides taken (n = 112) with individuals working for several ride-hailing companies. Some of these rides were personal, and some were specifically for this study. For example, I spent afternoons taking rides around a new city. Logs included information about how I hailed the ride, the car’s condition, app malfunctions, and overall impressions of the ride, including my rating.

Semi-structured interviews

In my first round of data collection starting in 2016, I conducted 63 semi-structured interviews with drivers across North America. 9 The protocol began with grand-tour questions: “Tell me about driving” and “What’s a good day driving?” In the second round of interviews (n = 44), which were conducted 18 to 24 months after the initial interviews, I asked workers to describe any changes since our last interview (e.g., schedule changes or app updates) and their current financial situations, and I followed up on themes that had not been addressed in the first interview. 10 In the third round (n = 29), I focused on how drivers’ work lives were changing, in particular regarding the COVID-19 pandemic, and how drivers navigated and solved problems (e.g., with customers or the apps). Most interview data in this article are from the first and second rounds of data collection. All interviews except one were conducted in English, and all interviews except ten were professionally transcribed. 11 In total, I conducted 136 interviews with 63 drivers, of whom 19 (30 percent) were female. Fifty (79 percent) reported driving as their primary source of income, and all except one reported driving to meet essential household expenses such as utility bills. Twenty-four drivers (38 percent) were active on at least two apps, though not all participants lived in cities in which multiple ride-hailing companies offered services. The amount of time employed in the industry (at Round 1) ranged from two weeks (ten rides) to seven years (18,000 rides), with the average driver having approximately 14 months of experience, 1,800 trips completed, and a 4.87/5.0 rating. 12 My sampling procedure yielded participants who were more committed than most drivers to the ride-hailing industry, reflected by a longer tenure than the three- to six-month industry average (Campbell, 2018; Hall and Krueger, 2018).

I used several sampling approaches to ensure maximum variation and to protect participants’ anonymity, as news media have reported that Uber blocked riders and drivers who made grievances public (Isaac, 2019). I met roughly half my informants through ride-hailing, either as part of my everyday activities or through expeditions to an unfamiliar area. To increase participants’ anonymity, I often hailed rides from family members’ or friends’ phones. I also recruited informants by advertising (e.g., in parking lots and on web forums) and via convenience and snowball sampling. 13 As the majority of ride-hailing drivers were male and/or people of color who worked 30 or more hours per week (Campbell, 2018), I tried to interview drivers who were female, White, or worked part time, to gain minority perspectives. Interviews ranged from 35 minutes to 2.5 hours, with an average length of 65 minutes. Lastly, I collected data across multiple cities because ride-hailing and new features were introduced at various times and places. For example, shared rides, a service that matches drivers with multiple riders traveling in the same direction, were first introduced in 2015 and available only in larger cities. Interviews included drivers from areas where the industry was well established (e.g., Philadelphia), nascent (e.g., Missoula), banned (e.g., Austin), and facing pressure from unions (e.g., New York City).

Archival and social media

Unlike labor process theorists before me, I did not confine my field site to a fixed location, and I included blogs, discussion boards, YouTube videos, online articles, and company materials. 14 These documents served as useful support or allowed triangulation (Shah and Corley, 2006). I created several analytical aids from these materials, such as an industry time line and a look book of interviews with industry leaders. This unobtrusive form of data collection (Webb and Weick, 1979) provided important information about the social, legal, and political challenges in the industry as well as additional perspectives on drivers’ experiences.

Data Analysis

I analyzed data by using a grounded theory approach (Locke, 2001; Charmaz, 2006; Glaser and Strauss, 2017) with field observations, interviews, and web forum posts as primary data sources.

Stage 1: Open and focused coding

Data in the first wave were collected in four two-month rounds, with each round followed by two months of preliminary analysis. After my first round of data collection, I refined my research questions, interview schedule, and the structure of my notes. For example, I noticed that drivers often discussed pricing incentives and set earnings goals and that these themes also appeared in my field notes. Thus, in subsequent interviews, I probed to understand how drivers’ activities were shaped by incentives (e.g., ignoring overly challenging bonuses), and I paid attention to my own behavior as a driver in response to incentives. Toward the end of the first round of data collection, I began focused analysis. While my earlier analysis had informed my thinking, I put these observations aside so that I could see my data with fresh eyes as I began coding and, as Charmaz (2006: 45) termed it, “generating the bones of analysis.” Interviews and field notes were coded over five rounds. I began by open coding one-fifth of my data, which I selected for maximum variation of gender, hours worked, length of time driving, and location. Three major themes emerged: general (dis)like of work, pricing incentives, and ratings. In the next two rounds, I focused on these themes while also continuing open coding, through which two additional themes emerged: interactions with the apps (e.g., accepting a ride request) and strategies to create a good day. No new themes emerged in the last two coding rounds. I followed a similar process in the second and third waves of data collection. Throughout the process, I wrote memos, discussed ideas with colleagues, and workshopped early findings.

Stage 2: Axial coding

In the next stage, I began axial coding and iterating between the data and existing theory to build “a dense texture of relationships” (Charmaz, 2006: 60) around concepts. Based on my experience driving, I knew there was a rhythm to the work, so I began by constructing a temporal model of a routine day and a routine ride. Depending on when I worked, I drove the same streets and had nearly identical conversations with similar people (e.g., driving professionals in silence on North Capitol Street on weekday mornings, having conversations with drunk partygoers on Wisconsin Avenue on weekend evenings). Tasks were repetitive in that each ride required the same interactions with the app I used as a driver (e.g., accepting a ride, rating the rider). Using stacks of index cards as a visual model, I laid out the five thematic codes on top of the routine day and routine ride models and, as I had more data for the latter, focused my analysis there (see Figure 2).

Prior literature has described algorithms as discrete units (Orlikowski and Scott, 2014; Curchod et al., 2020), but my informants and I experienced algorithms as distinct but interlocking parts of a larger management system. Declining a ride, for example, affected ratings, which could then affect the following week’s bonus. Building on this insight, I recoded my data around each mention of an algorithmic function and identified five functions: matching, work instructions, demand-based pricing, bonus pricing, and ratings. 15 Further coding clarified the following for each algorithmic function: its purpose, how it was communicated to drivers, how it was linked to other algorithms, and whether it influenced drivers through rewards or sanctions.

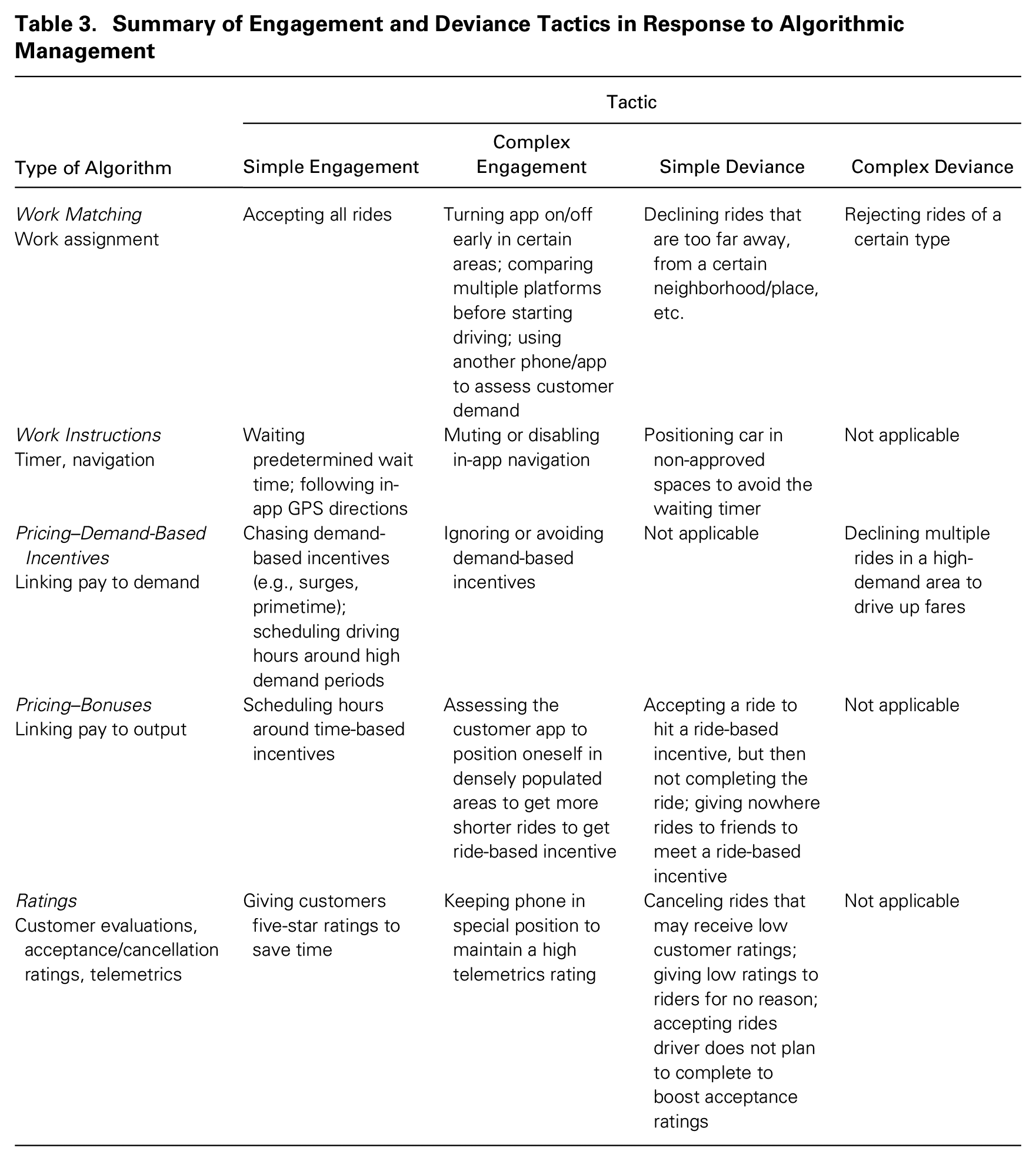

With a clear sense of the temporal work cycle and the function of each segment of the algorithmic management system, I asked myself, “What is the platform company’s algorithmic management system encouraging drivers to do? How are drivers responding to this encouragement?” In rereading the interview transcripts, I found that one of my informants had already answered these questions, describing two goals, one organizational and one personal, that were sometimes in conflict and sometimes aligned: “The company is trying to make drivers take as many rides as possible. The driver is trying to make as much money as possible” (Arthur, L.A.). With this response, everything clicked. The ride-hailing platforms structured the work process to encourage drivers to complete the maximum number of rides possible. The algorithms segmented the tasks that moved drivers through the work process, making each task simple and discrete so that workers would complete it and quickly move on to the next. These tasks can be viewed as moments of choice built into the work process. RideHail also regularly emphasized choice, framed as schedule flexibility, as a benefit of the work. Advertisements, for example, highlighted how workers drove their own cars and set their own schedules (see Image 1 in the Online Appendix). Recognizing that moments of choice were also embedded in the human–algorithm interactions, I recoded my data accordingly, noting the ways in which workers talked about choice in relation to the algorithms. I identified moments of choice embedded within two tactics that I named “engagement” and “deviance”; the first rarely resulted in sanctions, whereas the second often did. In engagement tactics, workers largely followed the algorithms’ nudges and stayed within the boundaries of the management system, while in deviance tactics, workers manipulated their input into the algorithmic management system.
Conversations with other scholars encouraged me to consider more cases in which the drivers used the output of the algorithms to make informed choices about how to interact with the work arrangement itself, i.e., when, where, and for how long to work. Because these choices were within the boundaries of the management system and not sanctioned, I folded these data into the engagement tactic.

Stage 3: Theoretical coding

In the final round of analysis, theoretical coding, I developed relationships between categories elicited in earlier stages to “weave the fractured story back together” (Charmaz, 2006: 63). Starting with the observation that workers had different choices when interacting with algorithms, I returned to the literature to help me theorize from the data. I found literature in the sociology of work to be fruitful, as it extensively documents how consent, the ways in which workers enthusiastically align their efforts to meet or exceed managerial objectives, is generated in many jobs. After identifying key features associated with consent (site of production, mechanisms, outcomes), I reanalyzed my data, paying particular attention to where the existing theory did and did not match my data. Moving between analyzing data, drawing models, and writing memos, I abstracted from these categories and relationships to identify how consent is produced through a series of constant yet confined choices. I also identified the underlying mechanisms and associated outcomes of consent, ultimately explaining how the generation of consent in algorithmic management systems differs from its production in other settings and how it affects workers’ engagement.

Findings

First, I describe the five functions that compose the algorithmic management system, which assigned, evaluated, and priced work tasks, parceling the work into small segments. I then show how the constant yet confined choices created by this segmentation elicit consent through seemingly oppositional tactics: engagement and deviance. I conclude by describing how workers could withdraw consent, either by logging off with no intention of returning or by damaging the integrity of the algorithmic management system.

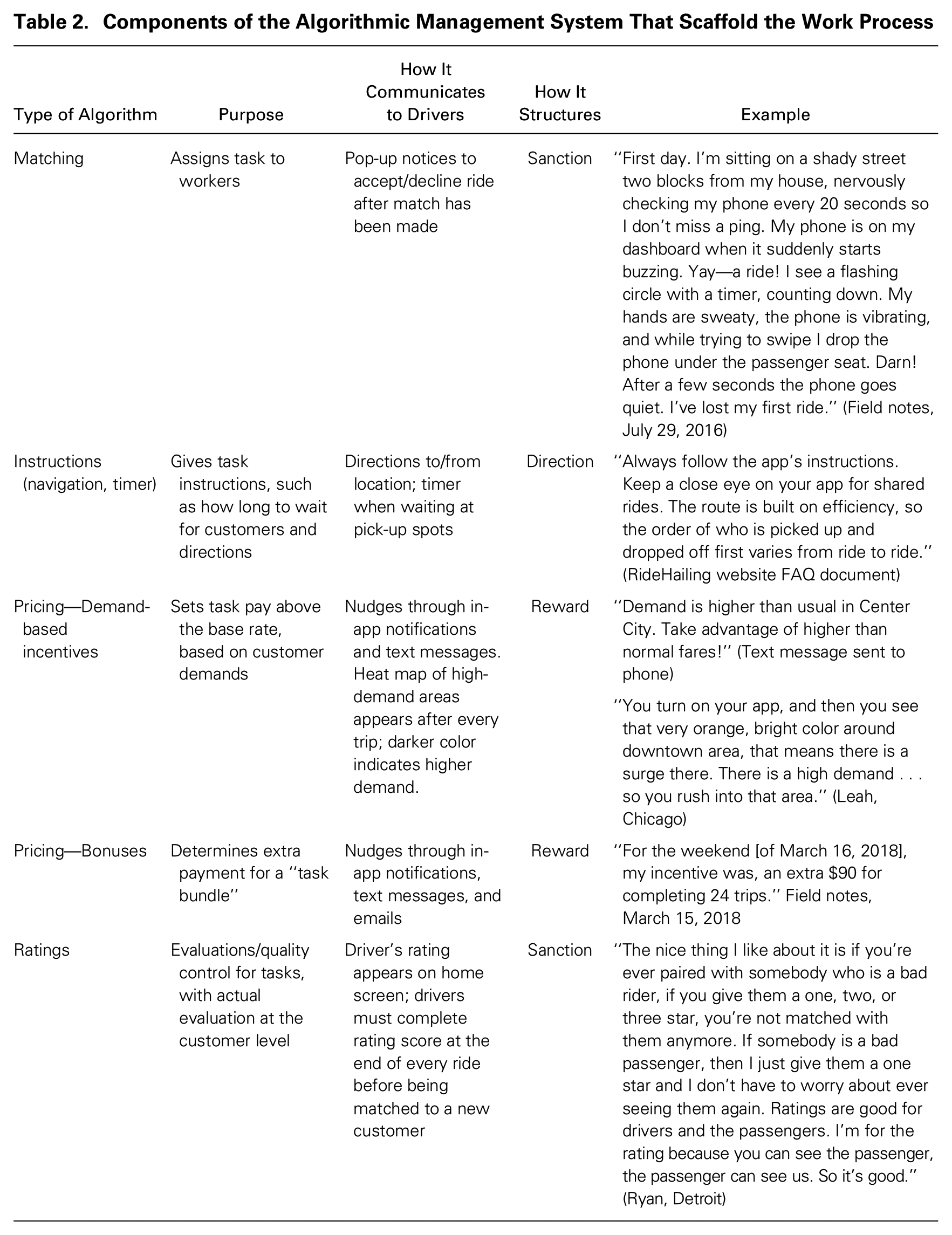

Algorithmic Management in the Ride-Hailing Industry

Algorithms scaffold the work process

To understand workers’ granular experiences of work, it is first necessary to understand how the work was structured. Unlike manufacturing and service work, in which human management helps to scaffold work, here the algorithmic management system set up the work process. In the ride-hailing industry, algorithms scaffolded the work process by (1) matching workers to riders; (2) instructing workers how to proceed through the work tasks (e.g., giving directions); (3) setting and adjusting fares dynamically (demand-based pricing); (4) offering bonuses (e.g., “Do 50 rides in the next 5 days for an extra $50”); and (5) evaluating workers’ performance via customer ratings. See Table 2 for descriptions of all five components. (Table 2A in the Online Appendix shares the same information and includes accompanying images as examples of each algorithm.) Algorithmic management systems thus used rewards, sanctions, and specific directions to manage drivers. Ultimately, the algorithmic management system was the defining feature of the work; for example, in a typical shift a driver might complete only a dozen rides but have more than 100 unique interactions with the algorithms.

Components of the Algorithmic Management System That Scaffold the Work Process

Rewards

The pricing algorithms, which comprised demand-based incentives and bonuses, rewarded drivers who coordinated their schedules in response to demand. Demand-based incentives could be predictable (e.g., rush hours) or sporadic based on local events. Texts and in-app messages alerted drivers to fare increases in busy areas with messages such as “Demand is higher than usual in Center City. Take advantage of higher-than-normal fares!”; “1.2–1.8x boost—4.30PM–7PM in downtown DC!”; and “Adele is playing at [venue] tonight! The streets will be full of people!!” (see Image 2 in the Online Appendix). In addition, heat maps that indicated high-demand areas popped up when drivers first opened an app and at the end of every ride (see Image 3 in the Online Appendix). Checking heat maps became a routine part of the workday, as drivers “turn[ed] on [the] app and [looked for] that very orange, bright color” (Leah, Chicago) and rushed to “try to go where the heat maps are surging” (Mercy, San Francisco).

Weekly bonuses offered extra money for completing an algorithmically determined number of rides. How the algorithmic management system determined bonuses was proprietary company knowledge and, at times, seemingly arbitrary; for example, a driver who met the quota one week might receive an easier or harder quota the following week. Bonuses and quotas were continuously communicated to drivers through texts and in-app alerts—while taking a mid-semester hiatus from the work, I still received biweekly texts (see Image 4 in the Online Appendix). Some bonuses encouraged commitment to a specific platform company. After driving with a competitor, George (Boston) received an enticing bonus offer from his former ride-hailing company: “They will pay me up to $500 on top of the money that I make for [the competitor] . . . and if I stay without logging out for one hour, they will pay me $40. [Laughs] That’s how they just got me back easily.” Other bonuses were predicated on workers having completed a certain number of consecutive rides without logging off, further encouraging workers to commit to a specific company (see Image 5 in the Online Appendix).

Sanctions

The matching and evaluation algorithms sanctioned workers who did not comply with regulations. When the algorithmic management system completed a match, the driver’s phone buzzed and displayed the distance to the pickup spot, rider’s details (including rating), and demand-based incentive amount (if any). Drivers had up to 15 seconds to accept, and they were sanctioned if they did not accept a certain percentage of rides. “We use acceptance rates to determine driver eligibility for certain incentives and help keep rider wait times short,” Uber’s guidelines noted. Repercussions for canceling rides included warnings, temporary suspensions, and permanent deactivation; consequently, most drivers reported accepting all rides (see Images 6 and 7 in the Online Appendix). When asked if he declined any rides, Jay (D.C.) replied, “Not really, because like I mentioned, I’m out here to work.” Laughing, Leah (Chicago) said, “You can decline—[but] how are you going to decline?” and reported that she had accepted every ride request in the past year.

High customer ratings were required for continued platform access, and drivers were punished if their evaluations fell below a certain threshold. In short, riders could fire drivers. 16 While the rate of driver deactivation due to ratings was low, the threat of poor ratings loomed large and clearly shaped drivers’ behaviors. 17 Drivers created signs that hung over back seats reminding riders that a rating of less than five stars was harmful (see Images 8 and 9 in the Online Appendix). At the end of one ride, a driver cheerily said to me, “You’ve been a five-star customer! I hope you rate me the same!” while making sure I was watching him rate me (Field notes, March 19, 2017). Drivers with high ratings received positive reinforcement, such as compliments and non-monetary badges (e.g., good conversationalist; see Images 10 and 11 in the Online Appendix). When drivers’ customer ratings dropped, they received warnings, with suggestions about how to improve or a requirement to attend a remedial course at their own expense. Other drivers could be deactivated with limited information provided by RideHail and no means of recourse, which in cities with only one ride-hailing platform was the equivalent of an industry blackball (see Images 12 and 13 in the Online Appendix).

Directing

Lastly, the work-instruction algorithms directed workers through the navigation and pacing of the work. After a driver accepted a ride, these algorithms provided routes, created queues, and set timers (see Image 14 in the Online Appendix). Instructional materials described the process: “After you accept a request, tap ‘Navigate.’ The app guide[s] you to the rider. The rider will see your car approaching on their app and your ETA. When you’re close, we’ll send them a text.” Once the driver arrived, a timer appeared, dictating how long drivers must wait (60 seconds to 10 minutes based on ride type) before drivers could select “no show” and receive a new ride (see Image 15 in the Online Appendix). Navigation instructions were critical in shared rides, in which multiple riders with different destinations shared the same vehicle. Instructions urged drivers to “Always follow the app’s instructions. The route is built on efficiency, so the order of who is picked up and dropped off first varies from ride to ride” (see Image 16 in the Online Appendix). In addition, algorithms matched drivers to their rides and sent various messages and reminders. For example, drivers were placed in virtual queues in high-traffic areas, such as the airport (see Image 17 in the Online Appendix), and the queues dictated how long they must wait for a new ride. The algorithms sent nudges at opportune times to encourage drivers to stick with it. Texts appeared when drivers had taken a break (“You haven’t driven in three days. Go out there and make some money!”), and pop-ups nudged drivers as they logged off (“You’ve only driven 8 hours today! Only $18 to go until you meet yesterday’s pay-out”). Telemetrics monitored speed, acceleration, and deceleration, offering encouragement such as “Good job keeping your braking smooth!” and warnings when drivers did not meet the telemetrics standards (see Images 18 and 19, Online Appendix). 
Through rewarding, sanctioning, and directing work activities, the five components of the algorithmic management system structured and segmented the work such that drivers were able to make a series of constant yet confined choices when conducting their work, and I found these choices to be the building blocks of manufacturing consent.

Manufacturing Consent: Engagement and Deviance Tactics Within Algorithmic Management Systems

Although most research emphasizes the all-encompassing control of algorithmic management systems, these systems do not necessarily take away workers’ ability to exercise choice—indeed, because algorithms segmented the work into discrete tasks, there were frequent opportunities for workers to exercise choice, albeit over narrow segments of work. Regardless of whether workers used engagement or deviance tactics, at hundreds of points in every work day, workers made choices within a highly constraining system—choices that elicited their consent to a platform’s algorithmic management system, even when they skirted some of the platform’s formal guidelines. While the tactics were practical opposites in terms of how workers interacted with the management system, they both elicited consent and were associated with workers’ finding meaning and seeing themselves as skillful agents; however, the tactics differed in terms of their potential for worker–management conflict.

Engagement Tactics: Playing Within the Boundaries of the Algorithmic Management System

When deploying engagement tactics, drivers worked in conjunction with the algorithmic management system, using information from the apps to make decisions about how to navigate within the boundaries of the system. In simple engagement tactics, workers followed the algorithmic nudges, such as driving toward high-demand areas. In complex engagement tactics, workers did not follow the algorithmic nudges; instead, they used information provided by the nudges to inform their navigation of the work. Below, I describe workers’ engagement with the pricing and matching algorithms.

Simple engagement tactics

Chasing demand-based incentives

The algorithms that calculated demand-based incentives were some of the most visible algorithms in the management system and were directly linked to workers’ income. As soon as workers opened an app, when they completed a ride or logged off, and even when they were not working, they received notifications about nearby increases in demand. Arthur (L.A.) described the importance of demand-based incentives: 18 [Demand-based incentives] play a critical role in whether or not you’re going to go out, because you’re dealing with economics—you don’t want to waste your time or your gas. I use it to my advantage. When it comes into your app, you want to be in those areas. [The company] pays flat rates and obviously the flat rate is not as attractive as surges.

Demand-based incentives could make the difference between breaking even and making a profit, especially as base mileage pay declined over the period of this study. 19 To chase a demand-based incentive, drivers checked heat maps, text messages, or in-app notifications before driving. George (Boston) monitored the heat map before signing on: “I’m just waiting. When the surge price is starting to go up, that’s when I put the system on. I know where to be, when, what time—every single day.” Drivers on ride-hailing forums offered elaborate suggestions for monitoring demand pricing, such as installing software that takes automated screenshots at different times over multiple days to better predict trends. Another driver on the forums described using “one phone to drive for [one company], and the other phone to zoom out to your entire market to watch the surge areas.”

Accepting all rides

Some drivers believed the best way to work in the system was to choose to accept all rides. Ignoring information like a customer’s ratings, Ryan (Detroit) said, “I want to work. I’m not here to see what you’re rated.” Similarly, Curtis (D.C.) said, “The system is: do the work or you don’t. There’s no in-between. You got to be out here.” Drivers described cranking up the music, often rap or electronic music, so they could “have fun, cruise, and just go with the flow” (Tila, Boston). Rhythmic music soothes the nervous system, lowering brain activity (Thaut, 2013), and facilitates entering into a state of absolute absorption (Csikszentmihalyi, 1990). After listening to one driver’s music bounce between three distinct beat-heavy sounds (Afrobeats, Czech house, and Bamana), I asked how he put together his playlist. He replied, “I play what I want—this cat here [an Afrobeats artist] puts me in the zone” (Field notes, July 26, 2018).

In Leland’s car (Philadelphia), we started talking about Lindsey Stirling, a dubstep artist, who was playing via his phone. In a follow-up interview, I asked about his typical day: [Every day is] pretty [much] just like every other day. . . . I basically turn on the app, hoping I get a ping soon . . . I don’t really prepare in any sort of way . . . I can stay focused. I’m always pretty good when I’m driving. If there’s someone in the car, I guess something just kicks in, that I am able to stay focused and not slack off. . . . It’s weird eating because you want to stay online and keep earning, at the same time you’re hungry. I usually just wait until afterwards . . . I’m too focused. . . . Wait, what was the original question again?

Accepting every ride, Leland got so absorbed in driving that he avoided stopping to eat or even to use the bathroom—indeed, while responding to the question, he re-entered the same flow state he was in while driving, losing his train of thought. The combination of accepting all rides and beat-filled music helped drivers “stay focused” (Kristin, New Haven) so that “time goes fast” (Thiel, Philadelphia), so much so that at the end of the day they were surprised at how much they had earned (Innocent, Detroit). Subsequent software updates made it even easier for drivers to choose to accept all rides and stay in the flow state; they could just click the “Accept All Rides” button so they did not need to swipe “yes” for each ride.

Complex engagement tactics

Ignoring and avoiding demand-based incentives

In complex engagement tactics, drivers did not necessarily follow algorithms’ nudges but did stay within the boundaries of the management system, allowing them to see themselves as skillful agents. Not all drivers chased demand-based incentives, for example, and there were no rules stating that drivers must do so. Indeed, Tamara (Denver) ignored them: “I don’t pay much attention to the surge. I start up where I start off and just go wherever I get my ping. It’s just not worth it—too much thinking.” Due to their real-time nature, demand-based incentives changed frequently or even disappeared, leaving drivers with a bitter taste. Kimbo (Seattle) said, [Demand-based incentives are] a little annoying because it’s misleading or by the time you get there it’s gone. They’ll send out a text that says, “Adele is playing tonight, the streets will be filled with people.” But there’s going to be a lot of traffic and I’m going to be driving one person. They just exaggerate—that’s a better word—you’ll make crazy cash this weekend. It’s like they’re insulting your intelligence. [Laughs]

As following demand-based incentives did not necessarily lead to more money, some drivers tempered their expectations and/or carefully chose which incentive alerts might be profitable. Others went a step further, examining the algorithmically generated heat map of demand-based incentives and then driving in the opposite direction. Eric (D.C.) said, I don’t hang around where the surge is because there are a lot of cars around. You go a little farther to get more passengers than to go where the surge is and get less passengers. For example, get one passenger in 30 minutes and make $20, but go outside the surge and get four passengers and make $50.

By monitoring demand-based notifications and making estimates to determine whether a ride was worth their time, drivers exercised choice. Though drivers might respond in seemingly contradictory ways (George turned on his app only when he saw a demand-based incentive, while Eric drove in the opposite direction), each driver was monitoring the demand-based-incentive algorithm, evaluating its information, and responding in a way that they believed would maximize their earnings. While drivers could be rewarded for chasing a demand-based incentive to a busy area, they were not penalized for ignoring or avoiding these areas.

Optimizing pickup locations

Classified as independent contractors, drivers had the flexibility to determine when and where to work, and the algorithmic management system influenced how drivers enacted this flexibility. Leland (Philadelphia) tried to get the matching algorithm “working” in his favor by turning on the app two minutes before getting to his preferred spot because he believed that “the algorithm factors in how much time you’ve been waiting. The longer I’m online, the more chances I have to get a ping [ride].” Other drivers monitored the rider app, sometimes purchasing a second phone expressly for this purpose, to scope out other cars and position themselves advantageously. Amber (Missoula) said, You open the passenger app and it will show you the eight closest drivers and then you just go where they’re not. You could count all the other drivers on any given moment. It would be the 200 block of Ryman, but there were too many cars—it was too stressful . . . it wasn’t always a sure thing. So, I would go four blocks away where there were less people but more bars. And it would work out for me.

With the dynamic pricing algorithm in mind, some drivers turned off an app when finishing a ride in a less desirable location and then turned it on again at a more desirable one (e.g., near the airport) to maximize their earnings. Two drivers described the different ways in which the pricing algorithm influenced how long they worked in a college town: I told my buddy this. Sometimes you got to turn on the app and go wherever the riders take you. If you go to Ypsilanti, leave it on. If the Ypsilanti person takes you to Detroit, leave it on. If the Detroit person takes you to Royal Oak, leave it on. Do that once and see where you go and how much you make. You’ll see that it doesn’t pay . . . staying in Ann Arbor is the best thing you can do as opposed to going outside. (David, Ann Arbor) I get out of Ann Arbor as fast as I can. They charge about 20 cents more a mile. . . . They charge more of a base rate . . . but your rides are much shorter. And if you’re going two miles in Ann Arbor, it could take you 10 to 12 minutes to get there . . . you can’t make any money. Between the hills and people walking, you can’t get anywhere fast. I’d rather take someone 20 miles on a highway, get there in 20, and make some money, than take someone three miles around Ann Arbor making an extra 20 cents a mile. (Boxton, Detroit)

Drivers’ ability to make different choices about when and where to work yet remain within the boundaries of the management system shows how the algorithmic management system allowed workers to make confined choices that could also reinforce their image of themselves as skillful workers.

Outcomes of Consent Produced by Engagement Tactics: Skill Development and the Eradication of Managerial Conflict

When deploying engagement tactics, drivers found meaning in the work by seeing themselves as skillful agents, deftly navigating the algorithmic management system and having a sense of mastery of their work. Drivers boasted that they had “special skills” (Jorge, D.C.), “knew how to make the money” (George, Boston), and could make things “work out” (Amber, Missoula) “to their advantage” (Arthur, L.A.). They worked smart (Zach, NYC), driving only at the times and places “worth” (Boxton, Detroit) their time. Emphasizing the skills and meaning she found in driving, Elizabeth (Detroit) said, “Oddly, it is fulfilling to me. I mean I’ve heard people describe it as a job that you can do if you have little skills or something like that . . . but I don’t think that’s the case for most, I really don’t.”

Another outcome associated with engagement tactics was the absence of conflict with the algorithmic manager. One reason workers did not see themselves as having any conflict with the algorithmic management system is that their behaviors were aligned with the nudges, which, in turn, were often associated with various incentives. Drivers frequently repeated that the best thing to do was to follow the system, which often worked in their favor. Thrilled at the money he earned by following the nudges, Marshall (Detroit) said, “I made $35 off some perks [incentives] and I did it by doing what I’m supposed to so . . . everything else will work itself out.” At most, drivers reported minor disputes with the algorithmic management system. Sebastian (Detroit) said, “Sometimes you do a back and forth with them about a cancellation fee or a fare adjustment, but that’s [about it].” Overall, drivers described feeling satisfied, noting that the work was “reasonable” (Betrand, D.C.), and said things such as, “I like it. I can’t complain at all. And honestly, I don’t think anyone can” (Maxwell, D.C.). Confident in their skills navigating the algorithmic management systems, workers said they could “drive forever” (Franklin, Detroit).

Each interaction with the pricing and work-matching algorithms thus presented these drivers with an opportunity to exercise choice, such as analyzing a heat map before deciding to drive toward (simple engagement) or away from (complex engagement) a high-demand area. In simple engagement tactics, workers’ behaviors fully aligned with the algorithmic nudges, exemplifying how nudges were used to steer individuals toward a specific choice. Drivers exercised greater latitude of choice in complex engagement tactics because they did not explicitly follow the nudges but instead used the information presented by the system to inform their behaviors. While behaviors such as manipulating their input to influence the algorithmic management system or using two phones to optimize their location were not prohibited, they nonetheless departed from RideHail’s stated norms and suggested practices.

Deviance Tactics: Pushing Against the Boundaries of the Algorithmic Management System

When deploying deviance tactics, drivers tried to get around the algorithmic management system’s directives by manipulating their input into the work-matching, pricing, and ratings algorithms and, if penalized, to counter any sanctions. In simple deviance tactics, drivers circumvented the blind matching algorithms by selecting and screening rides. In complex deviance tactics, drivers tried to influence their position within the algorithmic management system either by inflating surge prices or by increasing their acceptance rates or customers’ ratings. If the system detected either of these tactics, it sanctioned drivers; however, because the penalties were applied in a consistent manner, drivers could counter them. In this way, deviance tactics elicited consent because workers were able to make choices, and these choices and their counters to sanctions stayed within the boundaries of the algorithmic management system, so ultimately, drivers remained online, which was one of RideHail’s objectives.

Simple deviance tactics

Pre-selecting riders

In simple deviance tactics, drivers attempted to circumvent the blind matching algorithms by being matched with a preferred rider. I describe the three tactics in this section in order of how frequently drivers might deploy each one, from most to least frequent. Trying to make the matching algorithms assign the driver to someone already in the car was a tactic that drivers initially reported frequently, though it became less common later in my data collection. When I began data collection in 2016, drivers in many cities recounted successfully using this tactic. My field notes describe an incident in D.C.:

Marsha seemed unfazed as we navigated bumper-to-bumper rush hour traffic, but I was nauseated [by the stop and go] and frustrated with all the stops and complained after we dropped off the frat boys. “Wanna switch to a private ride?” she asked. “Sure, as long as it doesn’t hurt you.” “Naw, it’s cool.” She ended my ride and went offline. As soon as she went back online, she told me to request a new ride and—ta-da!—we were matched. As we continued to Ballston, she confessed she didn’t like shared rides as she’ll drive for half an hour and realize she’s only made $5. (Field notes, August 30, 2016)

Though against the rules, switching to a private ride was in Marsha’s interest, as it paid more and pleased me, the rider. In late 2015, when ride-hailing first launched in the city where I lived, I became friendly with an informant, whom I first met via the app and who soon became my designated airport driver. I texted him whenever I needed a ride. Once in his car, I would request a ride and we would be matched immediately. I appreciated the convenience, and he appreciated the guaranteed fare. By early 2017, it took multiple attempts to be matched even though we were in the same car, and I often resorted to paying in cash. In mid-2017, I was back to using the RideHail app in its intended fashion for airport rides; around the same time, drivers across the country reported similar experiences. In late 2017, Ashton (Detroit) reported,

[A friend asked] “I’ve got to go to the airport at 4:30 p.m. on Friday, can you take me?” Sure. It used to be that you would get in the car, and they’d request a ride, and it automatically goes to you. It’s changed dramatically. It takes maybe three or four times that [the rider] has to request a ride, cancel it, request a ride, cancel it. It gets to me.

In Ashton’s case, exercising the choice to give his friend a ride came with frustration, as he had to direct his friend to request the ride multiple times before the two were successfully matched. However, because Ashton had the rider initiate the requests rather than doing it himself, he avoided detection and potential sanctions by the algorithmic management system and was matched with his preselected rider.

Screening rides

Choosing whether to accept a ride after being assigned by the matching algorithm was another simple deviance tactic. Drivers screened rides by distance, surge amount, and customer rating or location. Arthur (L.A.) said, “I only accept rides five minutes [away] and below—especially if it’s not a surge. . . . It’s going to cost me more time and gas to get there for a three-minute ride. That is totally not worth it . . . [that’s how] I make my money.” Other drivers declined potentially problematic rides based on race and class cues. Orion (Detroit) said,

I screen out riders by their name, rating, and location. [Some] people are more problematic than average—it’s not worth it. They just harass me. I’m not traveling from 10–15 minutes away to take them to a liquor store and wait for them with their kids.

Performing a cost–benefit analysis before traveling to pick up a rider, drivers used the output of the matching algorithms to weigh potential earnings against time spent. Rejecting distant pickups, for example, allowed drivers to be available for shorter, more profitable rides.

A “Get Rides to Destination” feature allowed drivers to ask the matching algorithm to pair them with rides going in a specific direction. On discussion boards, drivers reported putting in distant destinations, hundreds of miles away, to get longer rides. Mercy (San Francisco) used it to pick up riders while commuting to her primary job. Like many drivers, I entered my home address toward the end of a shift to get another fare before stopping for the day.

I needed to be home by 3:15, so I put on “Get Rides to a Destination.” Ping—[shared ride], too much hassle, plus it’s 8 minutes away. Decline! Ping. Another [shared ride]—Decline! Can these people not give me a good ride? [Shared] rides take too long and there’s no way I’ll get home in time. Another decline and I’ll be blocked [30 seconds] from the app. Whatever. Ping. Oh good, it’s a [private] ride. Accept! I drop the lady off only a few blocks away from my house and I’m home at 3:08! (Field notes, July 29, 2018)

In this illustration, multiple choices were in play; I chose to use the destination feature and to accept only a private ride, which I thought was more likely to get me home on time. Given that I had declined only two rides, I surmised that the likelihood of sanctions was low and that any sanction applied would be minimal (i.e., a 30-second block), as drivers were able to decline a small percentage of rides without facing serious penalties. Drivers’ ability to make these multiple, though narrow, choices could contribute to workers seeing themselves as skillful—I certainly saw myself as such when I arrived home at the desired time. Thus, for the most part, drivers could choose rides more desirable to them in hopes of maximizing their earnings and faced few to no penalties, as the choices were within the boundaries of the management system.

Blanket ride rejection

In blanket ride rejections, drivers rejected all rides of a specific type. Shared rides, whereby the algorithmic management system coordinated multiple riders traveling in the same direction, were introduced in late 2015. Shared rides were offered at a lower cost, which made the service more attractive to new customers; however, most drivers, including myself, disliked shared rides due to the low pay, circuitous routes, and querulous riders frustrated by longer travel times, and many drivers systematically rejected these rides. Yet, even though they were “too damn cheap,” drivers knew that “you gonna have to start accepting them or you gonna get blocked out of the system” (Tanya, D.C.). One driver described rejecting these rides:

In the beginning they used to say [shared rides] were optional, but after a month they said it was mandatory and were sending messages saying they were going to cut me off if I kept [rejecting them]. I wrote two messages saying all the reasons I don’t like doing it. If I force straight decline the ride the app turns off, but I can take a break and turn it back on. I really don’t like having five people in the car. (Thiel, Philadelphia)

In late 2017, new incentives linked bonuses to ride quotas, making shared rides more attractive. Leland (Philadelphia) found the new bonuses motivating: “I love incentives, and [shared rides] helps when I’m trying to get multiple rides. If I’m trying to get 60 rides and I get a [shared ride], it takes 40 minutes, but I get three rides. It’s a great deal and I don’t have to worry about driving around looking for another ride.” With the new incentive, the deviance tactic of blanket ride rejection was transformed into the engagement tactic of accepting all rides, as workers’ behaviors then aligned with RideHail’s goal of real-time coordination of supply and demand. As the algorithmic management system changed the rules, deviance tactics were curtailed, and workers adapted in ways that met their income goals and aligned with RideHail’s more-proximate goals.

Complex deviance tactics

Inflating ratings

In complex deviance tactics, drivers manipulated their input to change their positions within the algorithmic management system and, if detected, could be sanctioned. Ratings, namely customer and acceptance ratings, played an important role in earnings, as they determined drivers’ access to the platforms and their eligibility for special incentives. To inflate their acceptance rates, drivers might accept a ride they did not plan to complete and then either force the customer to cancel or cancel it themselves. On a discussion board, one driver, who was looking to receive an incentive but whose acceptance rate was too low, described doing exactly that: “Because I had accepted the request, my acceptance rate now jumped above the 80% threshold and unlocked the $80 bonus for me. BOOM! . . . I got my bonus and didn’t even have to complete an additional trip.” Smith (D.C.) described an encounter in which he accepted ride requests but forced customers to cancel to maintain his perfect acceptance rating:

I had one time where I didn’t want to hurt my 100 percent [acceptance rating]—if you let that [timer] time out—so I accepted [the ride request] and I just wanted the person to cancel. It was the night of when Donald Trump was inaugurated, and it was downtown, and you couldn’t really get down there. So, people kept requesting me and I didn’t want to keep canceling. Every single time someone gave me a request, I hit it, but then I would just take a whole bunch of time and they eventually would cancel.


Drivers strove to keep their acceptance and cancellation ratings within certain thresholds to avoid penalties or the removal of rewards.

Drivers often manipulated the algorithms to inflate ratings, such as by canceling a ride prematurely so that the customer could not submit a rating. George (Boston) said, “If I make a mistake, I cancel the trip and give it to you for free, doesn’t matter where you are going. [That way] people don’t really have the chance to give me any bad rating.” Likewise, Smith (D.C.) described,

I had just turned 4.93 and I picked up a woman and her kid. The app took me to the back [of the pickup location], but she was in the front and it was really cold. She was holding the kid in her hands and she was pissed off. I said, “Ma’am, I’m just going where the app sent me.” She ran in the back, got in the car, destroyed me on the ratings, and I went from a 4.93 to a 4.90 just like that. If you lose points it’s real hard to get them back, so I’ve learned [to] make sure that a person can’t rate you, close out the ride before you let them off. The ride will cancel—it will still pay you up until that point, but it’s impossible for them to rate you. I tell them straight up too—“I’m canceling you because I don’t want you to rate me.”

While only around a 4.6 rating was required for continued access to the app he was using, Smith was so deeply invested in the algorithmic management’s rating system that he exerted considerable efforts—with both direct and indirect financial consequences—to maintain a high rating. Canceling rides increased drivers’ overall cancellation rates, putting them in jeopardy of not receiving future incentives, of not being accepted into loyalty programs, or of being deactivated. More immediately, if drivers ended a ride early, they did not receive the full fare and were not covered by the platform’s liability insurance during the gap. Even so, some drivers saw forgoing income and insurance coverage as an acceptable tradeoff to maintain high customer ratings, showcasing how one’s consent to the work could eclipse one’s own best interests. Overall, drivers exercised choices to maintain high ratings and the attendant privileges.

Inflating surges