Abstract

Background:

Artificial intelligence (AI) is increasingly applied to colposcopy to enhance the detection of cervical intraepithelial neoplasia (CIN) and cervical cancer. We conducted a systematic review to summarize the diagnostic performance achieved by AI‑based colposcopic systems.

Methods:

Following the PRISMA 2020 guidelines, the PubMed database was searched using the search terms ‘artificial intelligence’ and ‘colposcop*’ for articles published between 2019 and 2024. From the initial 43 articles retrieved, 19 studies were selected based on specific inclusion criteria: original research articles, written in the English language, and relevant to CIN or cervical cancer diagnosis. For each, we extracted the sample size, AI architecture (e.g., convolutional neural networks, U-Net/DeepLab V3+ segmentation models, multimodal fusion networks), reference standard, and reported metrics (sensitivity, specificity, accuracy, and area under the curve).

Results:

Across multiple studies, AI systems demonstrated superior diagnostic accuracy, sensitivity, and specificity, particularly for early detection of high-risk lesions and classification of cervical abnormalities. Deep-learning models, such as convolutional neural networks, consistently outperformed conventional methods by reducing diagnostic variability and offering robust performance even in low-resource settings. The review also highlights the potential of AI for real-time diagnostics and its capacity to support clinical decision-making via automated systems.

Conclusion:

AI has the potential to revolutionize cervical cancer diagnosis and management by enhancing the accuracy and efficiency of colposcopic evaluations. However, challenges remain, including the development of standardized datasets, validation in diverse populations, and ethical considerations surrounding data privacy and access to technology. Continued research and development are crucial to harness AI’s global potential to improve patient outcomes.

Introduction

Cervical cancer remains one of the leading causes of cancer-related morbidity and mortality among women worldwide, with 662 301 new cases and 348 874 deaths reported in 2022 alone. 1 It continues to pose a significant public health concern, particularly in low- and middle-income countries (LMICs), where both incidence and mortality rates remain disproportionately high. 2 Persistent infection with high-risk human papillomavirus (HPV), especially types 16 and 18, drives the pathogenesis of cervical cancer, typically manifesting as cervical intraepithelial neoplasia (CIN), a precursor lesion to invasive cervical cancer.3,4

To prevent the development of cervical cancer, early detection and timely intervention are paramount. The World Health Organization recommends regular screening using Papanicolaou (PAP) smear, liquid-based cytology, and visual inspection with acetic acid (VIA) to detect CIN and early-stage cervical cancer. 5 Although these approaches have significantly reduced cervical cancer incidence in high-income countries, they still face limitations in sensitivity, specificity, and accessibility, particularly in resource-limited settings. 6 For instance, PAP smears require experienced cytologists for accurate interpretation, potentially leading to variability in results, 7 whereas VIA is highly operator-dependent and often produces inconsistent diagnoses. 8

Colposcopy is a vital diagnostic tool for detecting cervical cancer and has been widely used for detailed visual examination of the cervix and guiding biopsies. It remains the gold standard for identifying high-grade lesions, such as CIN2 and CIN3. 9 Despite providing real-time assessments and enabling directed biopsies, 10 colposcopy’s efficacy is limited by operator skill and variability. This challenge has prompted growing interest in integrating artificial intelligence (AI) into colposcopy to enhance diagnostic accuracy and reduce dependence on human interpretation.11,12 Additionally, in LMICs with few expert colposcopists, limited access to advanced diagnostic procedures frequently results in missed diagnoses and delayed treatment. 13

In recent years, AI and deep-learning (DL) algorithms have demonstrated substantial potential to improve diagnostic accuracy in a variety of medical fields, including cervical cancer detection. By automating image analysis, AI can minimize human error and increase diagnostic consistency.14,15 In cervical cancer diagnostics, AI applications have expanded to include image classification, lesion detection, and multimodal data integration to optimize screening efficacy and risk stratification. 16 These advancements have improved the identification of high-risk patients while streamlining screening workflows.15,17

The capability of AI to provide real-time analysis of colposcopic images may significantly bolster diagnostic reliability, especially in regions lacking a sufficient number of expert colposcopists. 18 While several recent reviews have addressed technical progress in digital colposcopy, including segmentation and classification techniques,19,20 many have focused on these technological developments rather than systematically assessing clinical performance metrics or the routine integration of AI into clinical practice. This gap highlights the necessity for further investigations that consolidate clinical evidence and evaluate the impact of AI on CIN and cervical cancer diagnostics.

AI has already seen broad adoption in cervical cancer diagnostics, encompassing areas such as PAP smear image analysis,21,22 HPV genotyping-based risk stratification,23,24 and real-time microendoscopy. 25 These methods have encouraged improvements in accuracy and efficiency, notably for identifying high-risk patients.26,27 However, colposcopy’s ability to provide real-time visual assessments and facilitate guided biopsies underscores its distinct advantages for AI integration. Consequently, this review focuses on using AI in colposcopic image analysis while recognizing that parallel progress has been made in other diagnostic modalities. Building on this foundation, the present review aims to synthesize recent studies highlighting how AI-driven colposcopy can enhance cervical disease detection, classification, and management. Accordingly, this review examines various AI methods – from classification algorithms to segmentation-based approaches and multimodal data integrations – and discusses their reported performance metrics, clinical applicability, and limitations. The objective of this review is to provide a consolidated perspective on the current state of AI in colposcopy, clarify its potential impact on patient outcomes, and outline key challenges and future directions for integrating AI more fully into cervical cancer care.

Methods

Reporting guidelines and protocol

This systematic review was conducted and reported following the PRISMA 2020 Statement (see Supplementary File 1). The protocol was not prospectively registered with PROSPERO or INPLASY because the literature search had already been completed at the time registration was considered.

Search strategy

A comprehensive literature search was performed using the PubMed database to assess the utility of AI in the systematic diagnosis of cervical diseases. The search combined the MeSH term ‘artificial intelligence’ and the keyword ‘colposcop*’ in all fields, with the publication date limited to articles from 2019 to 2024. This initial query produced 48 articles.
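For readers who wish to reproduce a comparable search programmatically, the following minimal sketch builds a request URL for the NCBI E-utilities `esearch` endpoint. The literal query string, the `retmax` cap, and the function name `build_pubmed_query` are assumptions made for illustration; the exact string entered in PubMed for this review may have differed, and the hit count returned today may not match the figure reported above.

```python
from urllib.parse import urlencode

# Public NCBI E-utilities search endpoint.
ESEARCH_URL = "https://eutils.ncbi.nlm.nih.gov/entrez/eutils/esearch.fcgi"


def build_pubmed_query(start_year: int = 2019, end_year: int = 2024) -> str:
    """Return an esearch URL approximating the search described in the text."""
    # Assumed query syntax: MeSH term combined with a truncated keyword.
    term = '"artificial intelligence"[MeSH Terms] AND colposcop*[All Fields]'
    params = {
        "db": "pubmed",
        "term": term,
        "datetype": "pdat",        # restrict by publication date
        "mindate": str(start_year),
        "maxdate": str(end_year),
        "retmax": "200",           # arbitrary cap, chosen for illustration
    }
    return f"{ESEARCH_URL}?{urlencode(params)}"


# Build the URL only; no network request is made here.
url = build_pubmed_query()
print(url)
```

The URL can be pasted into a browser or fetched with any HTTP client; the response lists matching PubMed identifiers.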

Study selection

Strict inclusion and exclusion criteria were then applied. Articles focusing on diseases outside the cervix or on diagnostic modalities unrelated to colposcopy were removed. Nonoriginal research – such as review articles, commentaries, and editorials – was also excluded. Only studies published in English and original research on AI-driven colposcopy for cervical disease diagnosis were included, resulting in a shortlist of 27 articles. Subsequently, an additional screening eliminated nine studies whose primary investigations centred on other methods (e.g., Pap smear analysis, HPV genotyping) without substantial colposcopy usage, yielding 18 qualifying articles. A supplementary manual search was conducted via Google Scholar to ensure completeness, uncovering one more study that met all inclusion criteria. As a result, the final dataset consisted of 19 articles (Figure 1).
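The selection flow described in this subsection can be checked with simple arithmetic. The sketch below uses only the counts stated in the paragraph above; the variable names are illustrative.

```python
# Counts taken from the study-selection narrative in this subsection.
identified = 48         # records returned by the PubMed query
shortlisted = 27        # records remaining after the first eligibility screen
excluded_second = 9     # removed for lacking substantial colposcopy usage
manual_additions = 1    # added from the Google Scholar hand-search

excluded_first = identified - shortlisted
after_second = shortlisted - excluded_second
final_included = after_second + manual_additions

# Consistency checks against the figures stated in the text.
assert after_second == 18
assert final_included == 19

print(f"Excluded at first screen: {excluded_first}")
print(f"Included in the review:   {final_included}")
```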

Figure 1. PRISMA flow chart for study selection.

Eligible study designs

We accepted any primary, human‑subject investigation that reported diagnostic performance for an AI‑based colposcopic system, irrespective of observational design (retrospective cohort, prospective diagnostic‑accuracy study, or case‑control). Randomized trials and purely technical reports lacking a clinical reference standard were excluded.

Data extraction

For each eligible study, pertinent details were carefully extracted. These details included the author(s) and year of publication, specific evaluation metrics (such as sensitivity, specificity, accuracy, and area under the curve [AUC]), sample size, population or comparison groups (e.g., control subjects and baseline modalities), and the specific AI models employed (e.g., convolutional neural networks, segmentation networks, or multimodal fusion approaches). This systematic approach facilitated a robust comparison of AI-based diagnostic methods against conventional strategies.
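As a reminder of how the extracted metrics relate to one another, the following sketch derives sensitivity, specificity, and accuracy from a 2 × 2 confusion table using their standard definitions. The class name `ConfusionCounts` and the example counts are hypothetical and do not correspond to any included study.

```python
from dataclasses import dataclass


@dataclass
class ConfusionCounts:
    """2 x 2 table of index-test results against the reference standard."""
    tp: int  # diseased, test positive
    fp: int  # non-diseased, test positive
    fn: int  # diseased, test negative
    tn: int  # non-diseased, test negative


def sensitivity(c: ConfusionCounts) -> float:
    """Proportion of diseased cases correctly flagged."""
    return c.tp / (c.tp + c.fn)


def specificity(c: ConfusionCounts) -> float:
    """Proportion of non-diseased cases correctly cleared."""
    return c.tn / (c.tn + c.fp)


def accuracy(c: ConfusionCounts) -> float:
    """Proportion of all cases classified correctly."""
    return (c.tp + c.tn) / (c.tp + c.fp + c.fn + c.tn)


# Hypothetical counts, for illustration only.
example = ConfusionCounts(tp=90, fp=20, fn=10, tn=80)
print(sensitivity(example), specificity(example), accuracy(example))
```

Unlike these three metrics, the AUC cannot be recovered from a single 2 × 2 table because it summarizes performance across all decision thresholds.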

Two independent reviewers screened all titles and abstracts and subsequently assessed the full texts. Disagreements were resolved through discussion with a third reviewer. The same two reviewers independently extracted study data using a piloted form.

Data synthesis and analysis

Following data extraction, the studies were thematically categorized in alignment with the review’s focus areas. Those investigating AI applications for cervical intraepithelial neoplasia (CIN) were organized according to their emphasis on early lesion detection, segmentation and biopsy guidance, or prognosis and lesion severity prediction. Studies on cervical cancer were grouped by advanced lesion detection, real-time decision support, multimodal data integration, and implementations suitable for resource-limited settings. Each thematic group compared reported performance metrics (e.g., sensitivity, specificity, AUC) and methodological approaches (e.g., CNN-based architectures, segmentation algorithms, and cross-modal integrations) to ascertain clinical efficacy and scalability. Observations regarding dataset diversity, external validation requirements, and practical implementation considerations were then synthesized to inform the overarching discussion on how AI can improve colposcopic diagnostics for both CIN and invasive cervical cancer.

Because clinical tasks, outcome definitions, and reporting formats were highly heterogeneous, quantitative pooling (meta‑analysis) was not attempted. Instead, results were synthesized narratively and tabulated:

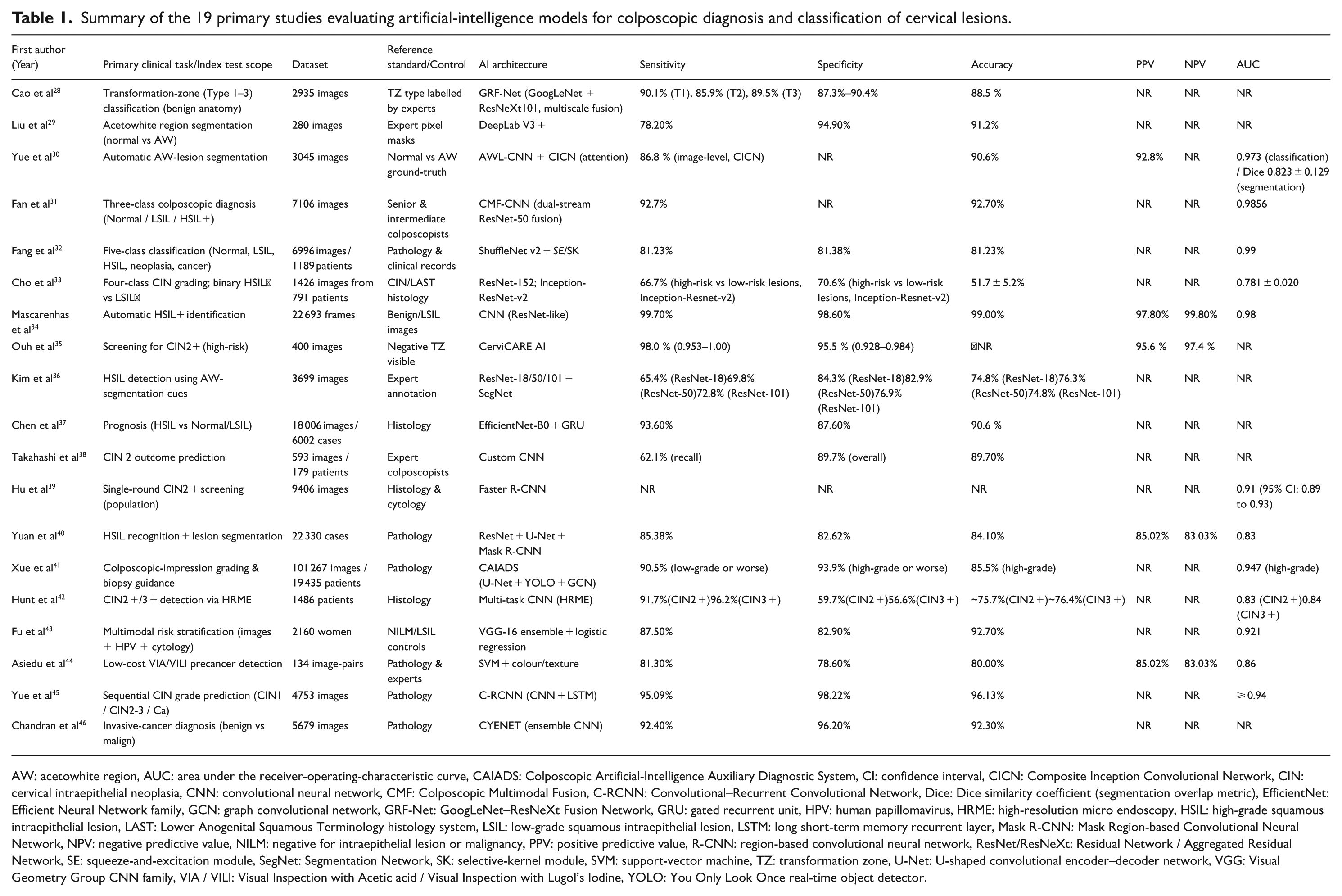

Table 1 – study‑level performance metrics ordered by disease spectrum

Supplemental Table 1 – model‑development characteristics

Supplemental Table 2 – QUADAS‑2 assessments

Summary of the 19 primary studies evaluating artificial‑intelligence models for colposcopic diagnosis and classification of cervical lesions.

AW: acetowhite region, AUC: area under the receiver‑operating‑characteristic curve, CAIADS: Colposcopic Artificial‑Intelligence Auxiliary Diagnostic System, CI: confidence interval, CICN: Composite Inception Convolutional Network, CIN: cervical intraepithelial neoplasia, CNN: convolutional neural network, CMF: Colposcopic Multimodal Fusion, C‑RCNN: Convolutional–Recurrent Convolutional Network, Dice: Dice similarity coefficient (segmentation overlap metric), EfficientNet: Efficient Neural Network family, GCN: graph convolutional network, GRF‑Net: GoogLeNet–ResNeXt Fusion Network, GRU: gated recurrent unit, HPV: human papillomavirus, HRME: high‑resolution micro endoscopy, HSIL: high‑grade squamous intraepithelial lesion, LAST: Lower Anogenital Squamous Terminology histology system, LSIL: low‑grade squamous intraepithelial lesion, LSTM: long short‑term memory recurrent layer, Mask R‑CNN: Mask Region‑based Convolutional Neural Network, NPV: negative predictive value, NILM: negative for intraepithelial lesion or malignancy, PPV: positive predictive value, R‑CNN: region‑based convolutional neural network, ResNet/ResNeXt: Residual Network / Aggregated Residual Network, SE: squeeze‑and‑excitation module, SegNet: Segmentation Network, SK: selective‑kernel module, SVM: support‑vector machine, TZ: transformation zone, U‑Net: U‑shaped convolutional encoder–decoder network, VGG: Visual Geometry Group CNN family, VIA / VILI: Visual Inspection with Acetic acid / Visual Inspection with Lugol’s Iodine, YOLO: You Only Look Once real‑time object detector.

Quality assessment

Risk of bias and applicability were appraised with QUADAS‑2. Each domain was rated low, high, or unclear by both reviewers; discrepancies were resolved by discussion. Domain‑level judgements appear in Supplemental Table 2.

Results

We ultimately included 19 primary studies that evaluated AI-based colposcopic systems (Table 1). Diagnostic tasks spanned (1) lesion classification, (2) acetowhite segmentation and biopsy guidance, (3) prognosis/severity prediction for CIN, and (4) detection or staging of invasive cervical cancer. Because outcome definitions and reporting formats differed markedly, findings are summarized narratively without pooled effect estimates.

Across all studies, reported sensitivity ranged from 62.1% to 99.7% and specificity from 56.6% to 98.6%, underscoring substantial between‑study heterogeneity. QUADAS‑2 appraisal (Supplemental Table 2) found a high risk of bias in the patient‑selection domain for 17/19 studies, mainly owing to retrospective convenience sampling (Supplemental Table 1).

AI-based diagnostic applications for CIN

Because CIN represents a precursor to invasive cervical cancer, timely and accurate diagnosis is paramount. Multiple studies demonstrated that AI, particularly deep-learning models, consistently outperforms traditional colposcopic evaluations in detecting and classifying CIN2+ lesions. CerviCARE AI and the CMF-CNN model achieved high sensitivity and specificity, thus reducing false negatives and strengthening overall screening reliability.35,31 Similarly, a CNN-based approach reported by Mascarenhas et al 34 reached sensitivity and accuracy levels exceeding 99%, highlighting the potential for AI to refine risk assessment. In these investigations, sample sizes varied greatly, ranging from 400 colposcopic images (negative findings) in Ouh et al 35 to 22 693 frames in Mascarenhas et al. 34 Where images of benign or low-grade lesions served as controls, the proposed methods often demonstrated both high sensitivity and high overall accuracy.

AI-based classification for early lesion detection

Despite encouraging results, not all studies achieved uniformly high accuracy, suggesting variability among models and datasets. For instance, Cho et al 33 employed a ResNet-152 architecture for classifying cervical neoplasia and found only 51.7% mean accuracy for CIN differentiation. Once classification was simplified to a binary task (high‑grade squamous intraepithelial lesion [HSIL]+ vs. low‑grade squamous intraepithelial lesion [LSIL]−), the model’s AUC rose to 0.781 ± 0.020, underscoring the nuanced impact of class definitions and dataset diversity on performance. Fan et al 31 also highlighted the challenge of multiple categories. Yet, their CMF-CNN model, trained on 7106 colposcopy images, achieved 92.70% accuracy and a Macro-AUC of 98.56%, demonstrating strong support for AI-based classification in early lesion detection.
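To illustrate how collapsing a multi-class problem into a binary one changes what is being measured, the sketch below maps ordinal grades to HSIL+ versus LSIL− and computes the AUC as the probability that a randomly chosen positive case scores higher than a randomly chosen negative one. The grade labels, scores, and function names are hypothetical; this is not the evaluation code of any cited study.

```python
# Grades treated as "HSIL or worse"; the label set is an assumption.
HIGH_GRADE = {"HSIL", "cancer"}


def collapse_to_binary(grades):
    """Map each grade to 1 (HSIL+) or 0 (LSIL-)."""
    return [1 if g in HIGH_GRADE else 0 for g in grades]


def auc(scores, labels):
    """Pairwise (Mann-Whitney) AUC; ties count as half a win."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative")
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Hypothetical model outputs: predicted probability of HSIL+ per image.
grades = ["normal", "LSIL", "HSIL", "cancer", "LSIL", "HSIL"]
scores = [0.10, 0.40, 0.35, 0.90, 0.20, 0.80]

labels = collapse_to_binary(grades)
binary_auc = auc(scores, labels)
print(round(binary_auc, 3))
```

In this toy example one HSIL image scores below one LSIL image, so eight of the nine positive–negative pairs are ranked correctly. Multi-class accuracy would additionally penalize confusions within each side of the binary split, which is one reason the two figures are not directly comparable.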

AI-based segmentation and biopsy guidance

Beyond classification, segmentation has proven crucial for accurate lesion delineation and guiding biopsies. Several researchers adopted approaches such as GRF-Net, 28 which achieved an 88.49% classification accuracy for cervical transformation zone types, and ShuffleNet, 32 which combined high classification accuracy with computational efficiency and reached an AUC of 0.99. These methods precisely identify acetowhite (AW) regions and reduce the likelihood of missed high-grade lesions.29,30 In Kim et al, 36 segmentation information considerably enhanced detection accuracy for HSIL, boosting classification accuracies for ResNet-18, ResNet-50, and ResNet-101 from approximately 66%–70% up to 75%–76%. Meanwhile, Liu et al 29 validated a DeepLab V3+ model on 280 colposcopic images (70 per CIN category), improving the mean accuracy to 91.2% and specificity to 94.9% compared with traditional segmentation methods.
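Segmentation quality in this literature is commonly summarized with the Dice similarity coefficient, defined as twice the overlap between the predicted and reference masks divided by the sum of their areas. The following minimal sketch computes it for small binary masks; the masks and the function name `dice` are illustrative and not drawn from any included study.

```python
def dice(pred, truth):
    """Dice similarity coefficient for two equally sized binary masks.

    Masks are nested lists of 0/1 values (rows of pixels).
    """
    flat_pred = [p for row in pred for p in row]
    flat_truth = [t for row in truth for t in row]
    if len(flat_pred) != len(flat_truth):
        raise ValueError("masks must have the same number of pixels")
    overlap = sum(p * t for p, t in zip(flat_pred, flat_truth))
    total = sum(flat_pred) + sum(flat_truth)
    # Convention: two empty masks are treated as a perfect match.
    return 1.0 if total == 0 else 2 * overlap / total


# Hypothetical 2 x 3 masks of an acetowhite region.
predicted = [[0, 1, 1],
             [0, 1, 0]]
reference = [[0, 1, 0],
             [0, 1, 1]]

score = dice(predicted, reference)
print(round(score, 3))
```

Here two pixels overlap and each mask marks three pixels, giving 2 × 2 / (3 + 3).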

AI-based prognosis and lesion severity prediction

Several investigations focused on predicting lesion severity to facilitate early clinical decision-making. For instance, Chen et al 37 evaluated 6002 patient cases encompassing normal, LSIL, and HSIL findings, reporting a binary classification sensitivity of 93.6% and an overall accuracy of around 90.61%, using EfficientNet-B0 and GRU-based deep-learning models. Takahashi et al 38 similarly achieved an 89.7% overall accuracy when applying a CNN-based system to 167 colposcopy images for CIN2 prognosis; their results aligned well with expert colposcopy assessments. Hu et al 39 also addressed large-scale screening by combining Faster R-CNN with a referral-rate management strategy, achieving a 0.91 AUC for CIN2+ detection with only an 11% referral rate, thus underscoring the method’s practicality in high-throughput settings.
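One generic way to manage the referral rate of a screening algorithm is to choose the score cut-off so that only a fixed fraction of screened cases exceeds it. The sketch below illustrates that idea under simplifying assumptions; it is not the procedure used by Hu et al, and the scores and the function name `threshold_for_referral_rate` are hypothetical.

```python
def threshold_for_referral_rate(scores, rate):
    """Return the cut-off at which roughly `rate` of cases are referred.

    Cases with a score at or above the returned value are referred.
    Tied scores at the cut-off can push the realized rate above `rate`.
    """
    if not 0 < rate <= 1:
        raise ValueError("rate must be in (0, 1]")
    ranked = sorted(scores, reverse=True)
    n_refer = max(1, round(rate * len(ranked)))
    return ranked[n_refer - 1]


# Hypothetical risk scores for 100 screened women (0.00 to 0.99).
scores = [i / 100 for i in range(100)]

cutoff = threshold_for_referral_rate(scores, rate=0.11)
referred = [s for s in scores if s >= cutoff]
print(cutoff, len(referred))
```

Fixing the referral rate in this way caps the colposcopy workload; the sensitivity achieved at that cut-off then depends on how well the scores separate diseased from non-diseased cases.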

AI-based diagnostic applications for cervical cancer

AI’s transformative impact extends equally to diagnosing and managing invasive cervical cancer. Several studies emphasized advanced lesion detection and real-time therapeutic decision support. Chandran et al 46 reported that their CYENET model reached a sensitivity of 92.4% and specificity of 96.2% on 5679 colposcopic images, outperforming a VGG19 baseline. Meanwhile, other teams, such as Hunt et al, 42 highlighted the capability of AI-driven high-resolution microendoscopy to match or exceed conventional colposcopic performance in identifying CIN2+ and CIN3+ lesions.

AI-based advanced lesion detection and real-time decision support

Recurrent convolutional neural networks (C-RCNN) have leveraged sequential colposcopic images to enhance diagnostic precision, as illustrated by Yue et al, 45 who achieved a test accuracy of 96.13% and a specificity of 98.22%. These high accuracy and specificity values indicate AI’s promise in providing clinicians with rapid and reliable real-time support, which is particularly useful for advanced cervical lesions.

AI-based multimodal data integration

Another subset of research merged different diagnostic inputs (HPV status, cytology, or molecular markers) to enhance overall model performance. Fu et al 43 showed that integrating colposcopic imaging and molecular diagnostics elevated the AUC to 0.921 across 2160 women, reflecting a comprehensive diagnostic ecosystem. Yuan et al 40 similarly fused segmentation and classification algorithms in high-resolution colposcopic images and found that such multimodal approaches substantially refined risk assessment for invasive lesions.

AI-based resource-limited settings and cost-effective solutions

Some AI systems specifically target resource-limited environments, improving accessibility and cost-effectiveness. Asiedu et al 44 developed a Pocket Colposcope algorithm relying on VIA/VILI images from 134 patients, surpassing conventional colposcopy performance (accuracy of 80.0%, AUC 0.86). Similarly, Xue et al 41 used CAIADS for targeted biopsies, enhancing histopathological concordance to 82.2% among 19 435 patients, compared with 65.9% achieved by standard colposcopic methods.

AI-based comparison between CIN and cervical cancer diagnostics

While AI-based approaches for both CIN and cervical cancer share the advantages of improving diagnostic accuracy and minimizing interobserver variability, their clinical aims differ. In CIN-focused studies, classification and segmentation are emphasized to aid early lesion detection and guide biopsies.29,35 By contrast, cancer-focused research frequently aims to identify invasive tumours, stage disease, and integrate multiple data streams for comprehensive risk assessment.43,46 Molecular diagnostics combined with AI-driven colposcopic analysis is more pronounced in invasive cancer, thus permitting tailored interventions and potentially improving patient survival outcomes.40,43

Discussion

AI and DL techniques are reshaping colposcopic practice by automating image interpretation, reducing inter‑observer variability and – when properly validated – improving diagnostic performance for both premalignant and malignant cervical disease. In this systematic review of 19 studies, convolutional neural networks (CNNs) achieved median sensitivities and specificities in the low‑to‑mid 90% range, and several models (e.g., CerviCARE‑AI, CMF‑CNN, Mascarenhas‑CNN) reported sensitivities >98% for CIN2+ detection. These figures are encouraging when compared with historical single-expert colposcopic accuracy; however, heterogeneity in study design and outcome definitions precluded a formal meta-analysis comparing AI versus human performance.

A key advantage of AI lies in its ability to effectively classify high-grade CIN (CIN2 and CIN3), lesions known to be precursors to invasive cancer. Tools such as CerviCARE AI and the CMF-CNN showed high sensitivity and specificity for CIN2+ lesions, outperforming conventional colposcopy and cytology-based approaches. This level of precision is vital for the timely recognition of high-risk lesions and subsequent early intervention, which can curtail the incidence of invasive disease. Notably, AI systems’ robust classification of CIN holds substantial promise for detecting precancerous lesions more accurately and improving patient management among those at increased risk. 47

Beyond CIN detection, recent AI-driven methods have shown strong capabilities in identifying invasive cervical cancers. Integrating AI algorithms with colposcopic imaging facilitates early detection of invasive diseases, a critical factor in optimizing patient outcomes through timely treatment. 11 Models such as CYENET and the recurrent CNN (C-RCNN) have been particularly effective, with high accuracy rates reported for detecting CIN3+ and invasive cancers. Early and precise identification of malignant lesions can markedly increase survival rates,48-50 underscoring the significant clinical value of these emerging technologies.

The importance of combining AI with multimodal data – such as HPV testing and cytology – is also becoming more evident in cervical cancer diagnostics. By merging AI-based image analysis with molecular diagnostics (e.g., HPV DNA testing), clinicians gain a more holistic view of patient risk. This is particularly beneficial in resource-limited settings where standard diagnostic tools may not be readily accessible. 51 As AI-based methods continue to evolve, they offer the potential to accelerate risk stratification and enhance screening efficacy for both CIN and invasive cancer worldwide.

Implications for practice and research

Before AI‑enabled colposcopy can be adopted routinely, prospective external validation against expert colposcopists and histology is indispensable. Future studies should report image resolution, augmentation details, and complete TP, FP, FN, and TN counts in a standardized format to facilitate meta-analysis. Implementation science must be integrated with algorithm development to address issues of workflow integration, clinician acceptance, and regulatory compliance from the outset. Ethical concerns – including data privacy, algorithmic bias, and equitable access – also require explicit discussion. Importantly, the choice of model architecture carries practical consequences: image‑only systems such as CerviCARE‑AI already achieve high sensitivity with minimal input demands, whereas multimodal fusion models like CMF‑CNN can deliver even higher macro‑AUCs but at the cost of additional HPV or cytology data, greater computational load, and more complex clinical workflows – factors that may limit their feasibility in low‑resource settings. Conversely, models optimized for higher specificity may reduce unnecessary biopsies but risk missing true positives; transparent reporting of these trade‑offs is essential for informed clinical adoption.
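One practical benefit of reporting complete TP, FP, FN, and TN counts is that readers can recompute each proportion together with its uncertainty. As a minimal sketch, the Wilson score interval below gives an approximate 95% confidence interval for a sensitivity estimate; the counts and the function name `wilson_interval` are hypothetical and chosen only for illustration.

```python
import math


def wilson_interval(successes: int, total: int, z: float = 1.96):
    """Wilson score interval for a binomial proportion.

    z = 1.96 corresponds to an approximate 95% confidence level.
    """
    if total <= 0:
        raise ValueError("total must be positive")
    p = successes / total
    denom = 1 + z ** 2 / total
    centre = (p + z ** 2 / (2 * total)) / denom
    half = z * math.sqrt(p * (1 - p) / total + z ** 2 / (4 * total ** 2)) / denom
    return centre - half, centre + half


# Hypothetical counts: 90 true positives and 10 false negatives.
tp, fn = 90, 10
sens = tp / (tp + fn)
sens_lo, sens_hi = wilson_interval(tp, tp + fn)
print(f"sensitivity {sens:.3f} (95% CI {sens_lo:.3f} to {sens_hi:.3f})")
```

The same function applies to specificity by passing TN and TN + FP. With only summary percentages and no counts, such intervals cannot be reconstructed, which is one obstacle to pooling results across studies.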

Strengths and limitations

This is the first PRISMA‑conforming synthesis dedicated solely to AI‑based colposcopy. Strengths include duplicate screening and extraction, QUADAS-2 appraisal, and comprehensive tabulation of model-development parameters. Despite the encouraging performance figures reported for AI‑enhanced colposcopy, this review has several significant limitations that temper the generalizability of its conclusions. First, many of the included studies relied on relatively small or demographically homogeneous image sets, raising concerns about external validity and the risk of overfitting. Algorithms trained on constrained datasets may not perform equally well in more diverse real-world populations. Only a minority of papers conducted independent external validation; therefore, the robustness of the high accuracies remains uncertain. Second, our literature search was restricted to PubMed and a supplementary Google Scholar hand‑search; databases such as Embase, Scopus, CNKI, and Web of Science were not queried. Consequently, relevant studies indexed exclusively in those sources could have been missed, introducing potential publication and language bias. Third, while several investigations showcased real-time or low-cost implementations, the practical translation of AI systems into routine clinical practice still must confront data-privacy regulations, algorithmic transparency, regulatory approval, and the need for clinician training and acceptance – issues that were only cursorily addressed in the primary literature. Moreover, most deep-learning models function as ‘black boxes’, offering limited interpretability compared with traditional machine-learning approaches, such as decision trees. This opacity may impede clinician trust and regulatory approval if not mitigated through explainability techniques. 
Future work should therefore prioritize the creation of large, ethnically diverse, multi‑centre datasets, undertake rigorous external validation, and explore how AI‑enabled colposcopy can be integrated with other diagnostic modalities such as HPV genotyping and cytology; addressing these challenges will be essential to realize the full clinical potential of AI for cervical‑cancer prevention and care. Finally, none of the included studies adequately referenced emerging AI-ethics frameworks, and most provided scant detail on consent procedures or algorithmic transparency, underscoring the need for more transparent ethical and regulatory reporting before the widespread clinical deployment of AI.

Conclusion

AI-driven approaches and deep-learning models have shown considerable promise in enhancing the detection and management of CIN and cervical cancer. By automating image analysis and reducing interobserver variability, these technologies can outperform traditional diagnostic methods, facilitating earlier intervention for high-risk lesions. Furthermore, integrating AI with multimodal data – such as HPV testing and cytology – broadens its diagnostic utility, especially in resource-limited settings where standard tools may be scarce.

Despite these advances, widespread clinical adoption of AI depends on overcoming key challenges, including the development of large, diverse datasets, rigorous external validation, algorithmic transparency, and the ethical handling of data privacy and equitable access. With continued research and responsible implementation, AI is poised to become a pivotal element of cervical cancer care, enabling more accurate diagnoses and ultimately improving patient outcomes on a global scale.

Supplemental Material

sj-docx-1-onc-10.1177_11795549251374908 – Supplemental material for A Systematic Review of the Application of Artificial Intelligence in Colposcopy: Diagnostic Accuracy for Cervical Intraepithelial Neoplasia and Cervical Cancer

Supplemental material, sj-docx-1-onc-10.1177_11795549251374908 for A Systematic Review of the Application of Artificial Intelligence in Colposcopy: Diagnostic Accuracy for Cervical Intraepithelial Neoplasia and Cervical Cancer by Takayuki Takahashi, Yusuke Kobayashi, Rieko Sakurai, Keiko Matsuoka, Jun Akatsuka, Iori Kisu, Takashi Iwata, Jun Takayama, Motomichi Matsuzaki, Wataru Yamagami, Kouji Banno, Yoichiro Yamamoto, Hikaru Matsuoka and Gen Tamiya in Clinical Medicine Insights: Oncology

Supplemental Material

sj-docx-2-onc-10.1177_11795549251374908 – Supplemental material for A Systematic Review of the Application of Artificial Intelligence in Colposcopy: Diagnostic Accuracy for Cervical Intraepithelial Neoplasia and Cervical Cancer

Supplemental material, sj-docx-2-onc-10.1177_11795549251374908 for A Systematic Review of the Application of Artificial Intelligence in Colposcopy: Diagnostic Accuracy for Cervical Intraepithelial Neoplasia and Cervical Cancer by Takayuki Takahashi, Yusuke Kobayashi, Rieko Sakurai, Keiko Matsuoka, Jun Akatsuka, Iori Kisu, Takashi Iwata, Jun Takayama, Motomichi Matsuzaki, Wataru Yamagami, Kouji Banno, Yoichiro Yamamoto, Hikaru Matsuoka and Gen Tamiya in Clinical Medicine Insights: Oncology

Footnotes

Ethical considerations

Not applicable, as this study is a literature review and does not involve the collection of primary data from human or animal subjects.

Consent to participate

Not applicable.

Consent for publication

Not applicable.

Author contributions

TT: Conceptualization, Methodology, Formal analysis, Investigation, Writing – original draft, Writing – review & editing, Supervision, Project administration

YK: Investigation, Resources, Writing – review & editing

RS: Data curation, Formal analysis, Visualization, Writing – original draft

KM: Investigation, Data curation, Writing – review & editing

JA: Software, Validation, Formal analysis

IK: Resources, Writing – review & editing

TI: Methodology, Supervision

JT: Software, Visualization, Formal analysis

MM: Investigation, Validation

WY: Supervision, Resources, Writing – review & editing

KB: Supervision, Project administration, Writing – review & editing

YY: Validation, Writing – review & editing

HM: Conceptualization, Methodology, Supervision, Project administration, Writing – review & editing

GT: Software, Formal analysis, Validation, Writing – review & editing

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

Not applicable. This study is a literature review and does not involve the collection of new datasets or the development of new code.

Supplemental material

Supplemental material for this article is available online.

References
