Abstract

Recently, the use of the Bayesian network as an alternative to existing tools for similarity-based virtual screening has received noticeable attention from researchers in the chemoinformatics field. The main aim of the Bayesian network model is to improve the retrieval effectiveness of similarity-based virtual screening. To this end, different models of the Bayesian network have been developed. In our previous works, the retrieval performance of the Bayesian network was observed to improve significantly when multiple reference structures or fragment weightings were used. In this article, the authors enhance the Bayesian inference network (BIN) using the relevance feedback information. In this approach, a few high-ranking structures of unknown activity were filtered from the outputs of BIN, based on a single active reference structure, to form a set of active reference structures. This set of active reference structures was used in two distinct techniques for carrying out such BIN searching: reweighting the fragments in the reference structures and group fusion techniques. Simulated virtual screening experiments with three MDL Drug Data Report data sets showed that the proposed techniques provide simple ways of enhancing the cost-effectiveness of ligand-based virtual screening searches, especially for higher diversity data sets.

Keywords

Introduction

Many virtual screening approaches have been implemented for searching chemical databases, such as the substructure search, similarity, docking, and QSAR. Similarity searching is the simplest and one of the most widely used techniques for ligand-based virtual screening in drug discovery programs. 3 There are many studies in the literature associated with the measurement of molecular similarity.1–4 However, the most common approaches are based on two-dimensional fingerprints, with the similarity between a reference structure and a database structure computed using association coefficients such as the Tanimoto coefficient. 3

The effectiveness of ligand-based virtual screening approaches can be enhanced by using data fusion. 5 Data fusion can be implemented using two different approaches.5,6 The first, similarity fusion, involves searching for a single reference structure using multiple fingerprints. The similarity scores, or ranking, for each similarity measure (fingerprint) are combined to obtain the final ranking of the compounds in the database. The second approach is group fusion, in which multiple reference structures with a single similarity measure are used to search the database. Group fusion has been found to be generally more effective than similarity fusion.

In more recent studies, the Bayesian inference network (BIN) has been introduced as promising the similarity search approach.7,8 In our previous works,9–11 the retrieval performance of the Bayesian inference network was observed to improve significantly when multiple reference structures were used or more weights were assigned to some fragments in the molecule structure. Unfortunately, such information is unlikely to be available in the early stages of a drug discovery program when just a single weak lead is available.

BIN was originally developed for text document retrieval systems. 12 Many studies in information retrieval (IR) have shown that the retrieval effectiveness of BIN can be improved by using relevance feedback technique. Relevance feedback is one of the most useful query modification techniques in IR systems. 13 It is used to improve the query formulated in the IR systems when the documents initially retrieved do not completely fulfill the user’s information need. It works in the following way: A user submits a query expressing his information need to the IR system, which then ranks the documents in decreasing order of relevance to the query. The user then inspects the high-ranking documents returned by the IR system and specifies which documents are relevant and which ones are not relevant to his information need (relevance judgment). Based on the user’s relevance judgment, the IR system updates the initial query, adds new terms to the query, and reweights the query terms. This process is repeated until the user is completely satisfied with the retrieved documents. Relevance feedback has been extensively studied in the literature13,14 and is the focus of the present article.

Relevance feedback has also been used in similarity-based virtual screening under the name of its nearest neighbors. Hert et al. 15 have described an extension of similarity searching, referred to as Turbo similarity searching (TSS), in which a single active reference structure and the information about the nearest neighbors for that reference structure in a conventional similarity search are used to search the database and then data fusion is used to combine the individual similarity searches. The information about the nearest neighbors is also used to enable the use of machine-learning techniques for virtual screening without the need for an explicit training set of known active and inactive molecules. 16 The basic idea underlying TSS is the assumption that the nearest neighbors of a reference structure are actives. These assumed active structures can be used as reference structures together with the original reference structure to construct multiple reference structures that are required for group fusion. This process is performed automatically without any additional burden on the user. The nearest neighbors information has been proven to be a very effective way to enhance the effectiveness of the ligand-based virtual screening approaches.15,16

In this article, we enhance the screening effectiveness of the BIN using the relevance feedback information. In this approach, a few high-ranking structures of unknown activity were filtered from the outputs of BIN based on a single active reference structure to form a set of active reference structures. This set of active reference structures is used in two distinct techniques of carrying out such BIN searching: reweighting the fragments in the reference structures and group fusion methods. In the group fusion method, the BIN repeats the search using the set of reference structures and then combines the similarity scores resulting from individual reference-structure similarity searches using data fusion rules.

Materials and Methods

This study compares the retrieval results obtained using three different similarity-based screening models. The first model was based on the BIN described by Abdo and Salim 9 that uses the Okapi (OKA1) weight, which was found to perform best in their experiments, which we shall refer to as the conventional BIN model. This model involves searching using a single reference structure. The second model was based on the BIN and relevance feedback information, which we shall refer to as the reweighted BIN model. It involves searching for a single reference structure and then using the feedback information to reweight the fragments in the reference. The third model was also based on the BIN and relevance feedback information, which we shall refer to as the group fusion model. It involves searching using multiple reference structures extracted from relevance feedback information and then combining the individual similarity searches using any fusion rules.

In what follows, we give a brief description of the BIN model on which this work is based. Full details of the BIN model are given by Abdo and Salim.7,9 In this work, the emphasis will be on investigating the capability and performance of BIN to use the feedback information.

Conventional BIN model

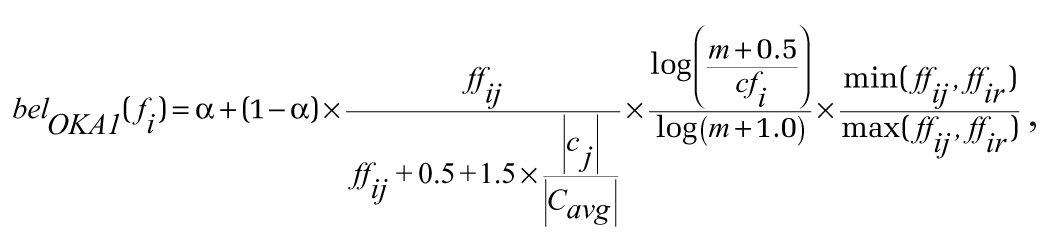

To estimate the probability associating each compound to the reference structure, we need to compute the probability in the fragment and reference nodes. Our recent work 9 showed that one particular belief function, called OKA1, 9 was the most effective of those tested, and we have hence used this function for the experiments reported below. This function was used to compute the probability in the fragment nodes and is given by

where α is a constant, and experiments using the Bayesian network show that the best value is 0.4. 7 ffij and ffir are the frequency of the ith fragment within jth compound and rreference structure, respectively; cfi is the number of compounds containing ith fragment; |cj| is the size (in terms of the number of fragments) of the jth compound; |Cavg| is the average size of all the compounds in the database; and m is the total number of compounds.

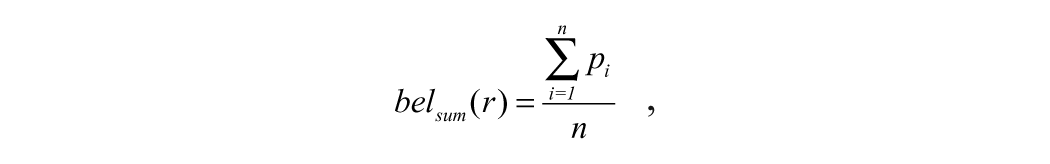

To produce a ranking of the compounds in the collection with respect to a given reference structure, a belief function from In Query, specifically the SUM operator, was used. If p1, p2,..., pn represent the beliefs at the fragment nodes (parent nodes of r), then the belief at r is given by

where n is the number of the unique fragments assigned to rreference structure, and pi is the value of the belief function bel(fi) in ith fragment node. The reader should note that in this article, we consider the use of only a single reference structure. However, the methods that we describe can be extended to multiple reference structures. This is achieved by combining the individual reference structure nodes using any of the fusion rules (e.g., MAX, SUM) as described previously for the BIN model. 10

Reweighted BIN model

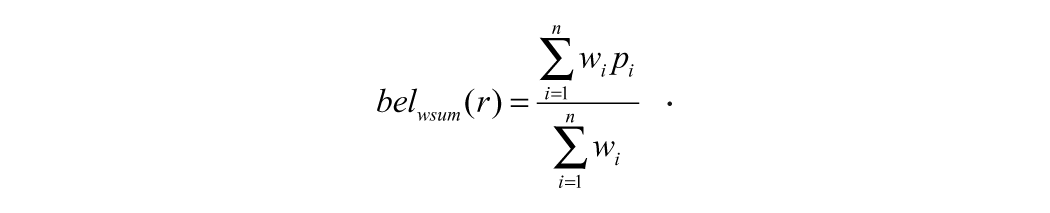

The difference between the two models (BIN and reweighted BIN) arises from the differences in the type of belief function used to produce the ranking of the compounds in the collection. In the conventional BIN model, the probability in the reference node is computed by summing up the probabilities in the fragment nodes connected to the reference node. The fragment nodes participating in the final probability are scored equally (meaning that no weight is given to any fragment node). This calculation is conducted using the SUM operator, as described above. In the reweighted BIN model, the relevance feedback information is used to assign weights to the fragment nodes associated with the reference node. Consequently, the probability value in the reference node depends on the values in the fragment nodes and the assigned weights associated to them. To illustrate how the reweighted BIN model can have different weights for fragments in the reference structure, the belief function from InQuery, the WSUM operator, has been used. If p1, p2,..., pn represent the beliefs at the fragment nodes (parent nodes of r) and w1, w2, and wn are the parent weights, then the belief at r is given by

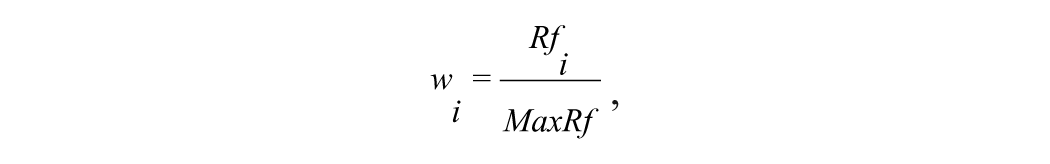

To produce the relevance feedback information, we have to start with the conventional BIN model to search the database with a single active reference structure. A few high-ranking structures of unknown activity are then chosen from the outputs of conventional BIN, based on a single active reference structure, to form a set of active reference structures (relevance feedback information). The original active reference structure, together with the set of active reference structures (feedback information), form the final set that is used to reweight the BIN model. Here, the additional weights assigned to the fragments are based on the frequency of their occurrence in the set of relevance feedback structures. The weight of the ith fragment wi is given by

where Rfi is the sum of the frequencies of occurrence for the fragment ith in the set of relevance feedback structures, and MaxRf is the maximum frequency of occurrence in the set of relevance feedback structures. Consequently, higher weight will be assigned to those fragments that occur more frequently in the relevance feedback structures.

Group fusion model

The group fusion model uses the conventional BIN model to search the database using a single active reference structure. The relevance feedback information (the nearest neighbors information) for that reference structure is also used to search the database. Data fusion rules are then used to combine the similarity scores of individual similarity searches. This model is similar to the TSS method described by Hert et al. 15 The difference is in the type of similarity-searching approach used to search the database. The basic idea underlying the reweighted BIN and group fusion model is the assumption that the relevance feedback structures (nearest neighbors) of the original reference structure are actives, as in Hert et al. These assumed active structures can be used as reference structures, together with the original reference structure, to construct the multiple reference structures that are required for the reweighted BIN and group fusion models.

Simulated virtual screening experiments

Our experiments have used the most popular chemoinformatics database: the MDL Drug Data Report (MDDR)

17

that has been used in our previous studies of Bayesian networks.

9

This database consists of 102 516 molecules. The 102 516 molecules in the database were converted to Pipeline Pilot’s (Accelrys Software, San Diego, CA) ECFC4 (extended connectivity) fingerprints and folded to a size of 1024. For the screening experiments, three data sets (DS1-DS3) were chosen from the MDDR database. The data set DS1 contains 11 activity classes, with some of the classes involving actives that are structurally homogeneous and other classes involving actives that are structurally heterogeneous (i.e., structurally diverse). The DS2 data set contains 10 homogeneous activity classes, and the DS3 data set contains 10 heterogeneous activity classes. Details of these three data sets are given in

Conventional BIN searches were carried out using 10 reference structures selected randomly from each activity class. Different numbers of nearest neighbors—10, 20, 50, and 100—were selected (as reference structures) for each reference structure in the conventional BIN searches. These sets were used by the reweighted BIN search and group fusion search: these searches will be referred to as BIN, RE, and GF, respectively. In the group fusion search, the outputs from sets of 10, 20, 50, or 100 searches were fused using the MAX fusion rule. The superiority of the MAX rule has been noted by previous works. 10 The effectiveness of similarity searches was evaluated by the recall, where the recall is the percentage of the actives retrieved in the top 1% or the top 5% of the ranked list resulting from a similarity search.

Results and Discussion

Our purpose is to identify different approaches of using relevance feedback information in the BIN model and then identify the retrieval effectiveness of using such approaches. In this study, we tested the various BIN models (conventional BIN, reweighted BIN, and group fusion) on the MDDR database using three different data sets: DS1 to DS3.

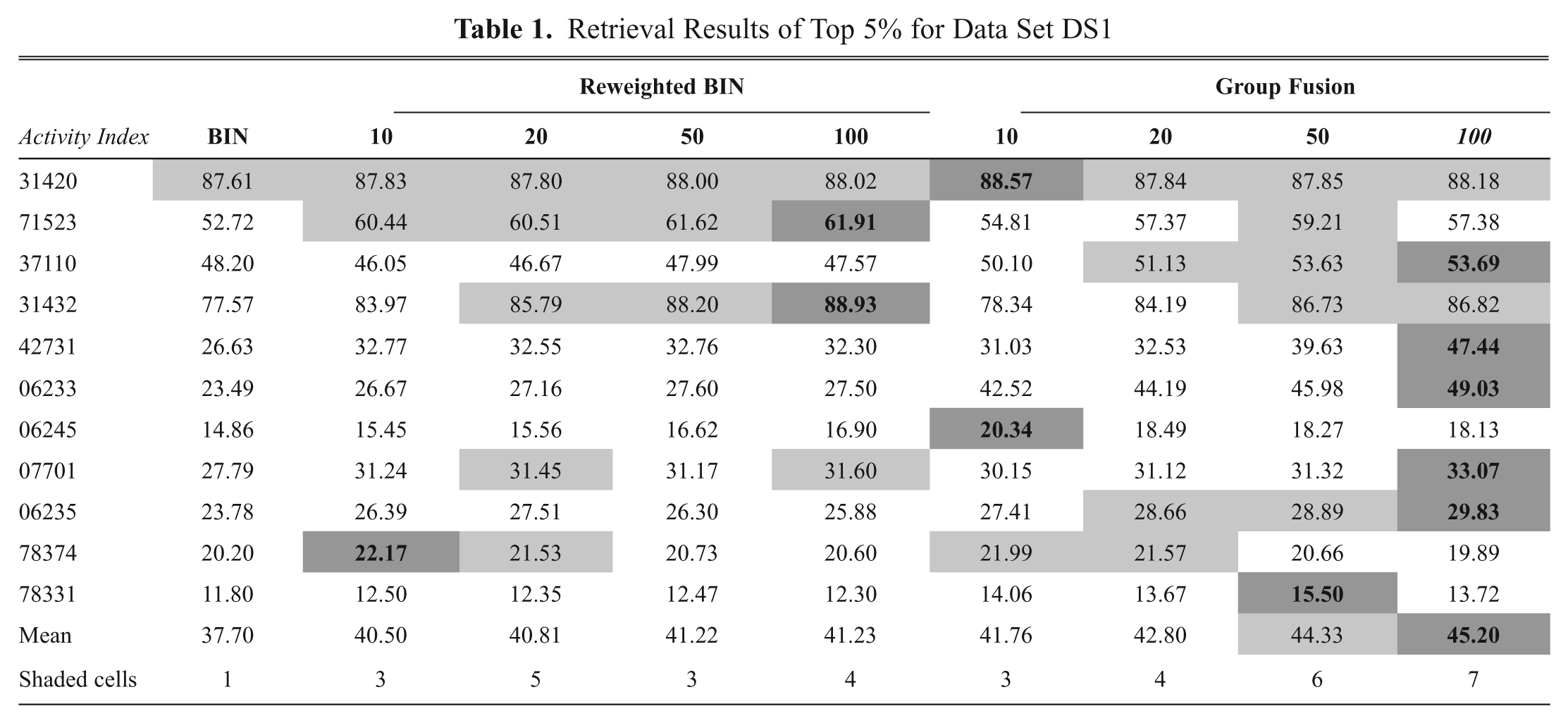

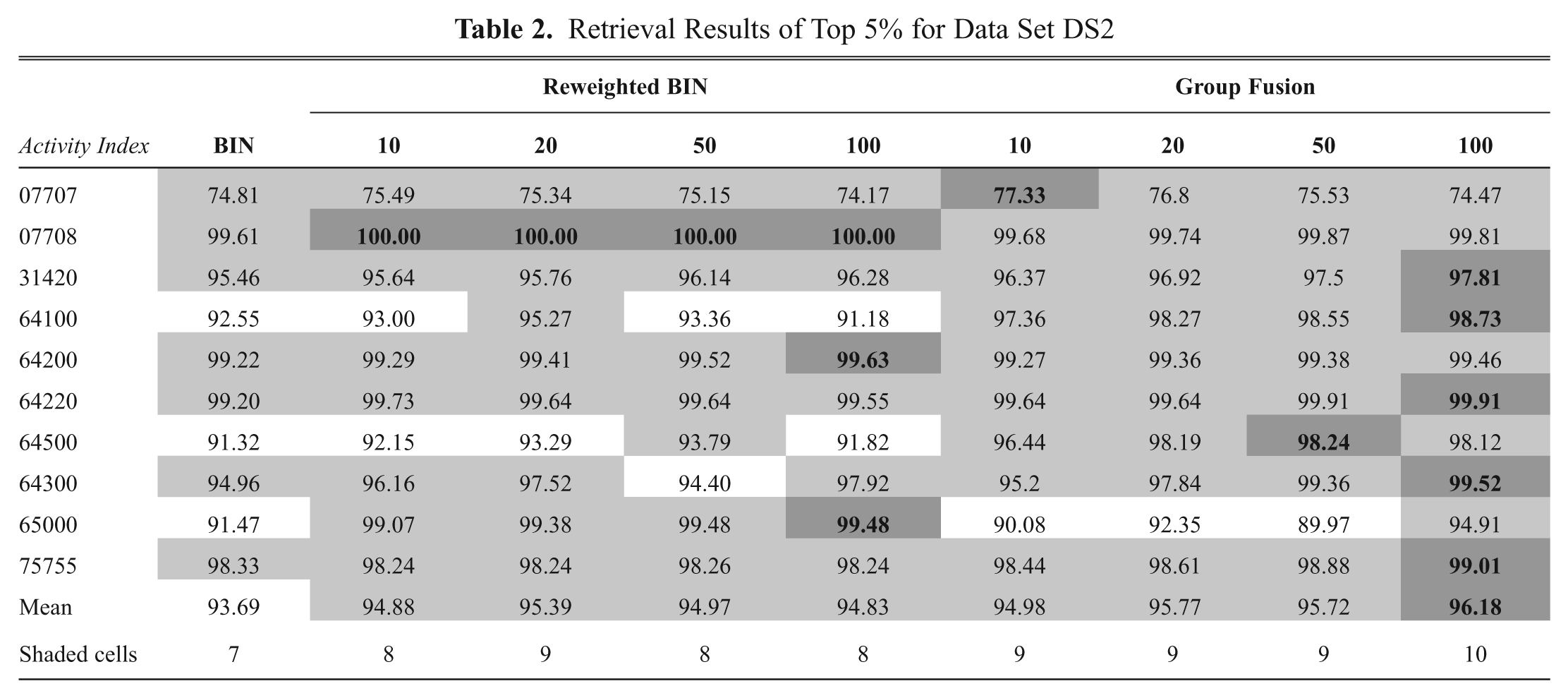

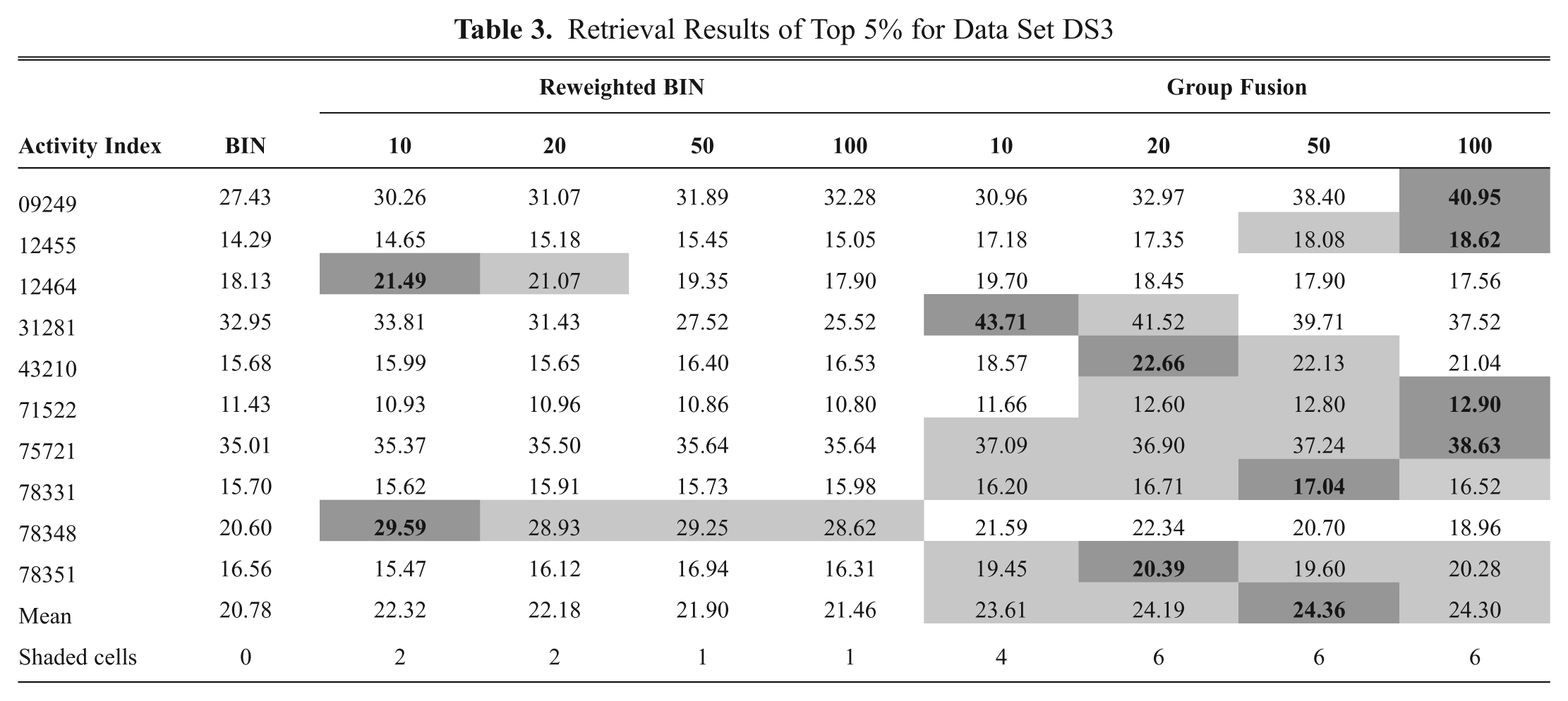

The results of the conventional BIN, the reweighted BIN, and group fusion for the searches of DS1 to DS3 are presented in Tables 1 to 3 , respectively, using a cutoff of 5%. The first column from the left of each table contains the results for the conventional BIN when a single reference structure is used, the second column of each table contains the corresponding results when different numbers of nearest neighbors (10, 20, 50, or 100) are used to reweight the reference fragments in the reweighted BIN model, and the last column of each table contains the corresponding results when different numbers of nearest neighbors (10, 20, 50, or 100) are used as reference structures in the group fusion model. Each row in a table lists the recall for the top 5% of a sorted ranking when averaged over the 10 searches for each activity class, and the penultimate row in a table corresponds to the mean value for that similarity method when averaged over all of the activity classes for a data set. The similarity method with the best recall rate in each row is strongly shaded, and the recall value is boldfaced; any similarity method with an average recall within 5% of the value for the best similarity method is shown lightly shaded. The bottom row in a table corresponds to the total number of shaded cells for each similarity method across the full set of activity classes.

Retrieval Results of Top 5% for Data Set DS1

Retrieval Results of Top 5% for Data Set DS2

Retrieval Results of Top 5% for Data Set DS3

Visual inspection of the recall values in

Tables 1

to

3

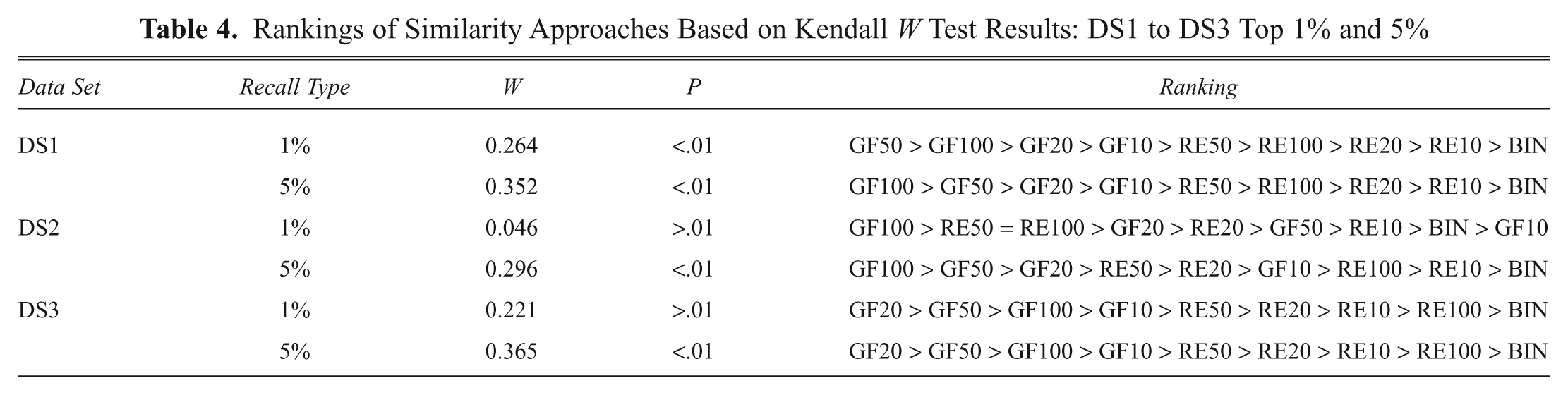

enables one to make comparisons between the effectiveness of the various search models. However, a more quantitative approach is possible using the Kendall W test of concordance.

18

This test shows whether a set of judges makes comparable judgments about the ranking of a set of objects; here, the activity classes were considered as judges and the recall rates of the various search models as objects. The output of such a test is the value of the Kendall coefficient and the associated significance level, which indicates whether this value of the coefficient could have occurred by chance. If the value is significant (for which we used cutoff values of 0.01 or 0.05), then it is possible to give an overall ranking of the objects that have been ranked. The results of the Kendall analyses (for DS1–DS3) are reported in

Rankings of Similarity Approaches Based on Kendall W Test Results: DS1 to DS3 Top 1% and 5%

Some of the activity classes may contribute disproportionally to the overall value of mean recall (e.g., low-diversity activity classes). Therefore, using the mean recall value as an evaluation criterion could be impartial to some methods but not others. To avoid this bias, the effectiveness performance of different methods has been further investigated, based on the total number of shaded cells for each method across the full set of activity classes, as shown in the bottom row of

Tables 1

to

3

. These shaded cell results are listed in

Inspection of the DS1 search in Table 1 shows that the group fusion search (GF100, GF50, GF20, or GF10) produced the highest mean value compared with the BIN and reweighted BIN. In addition, according to the total number of shaded cells in Table 1 , group fusion with 100 nearest neighbors (GF100) is the best performing search across the 11 activity classes in terms of mean recall, with GF50 also performing well. Table 4 shows that the value of the Kendall coefficient (for DS1 top 5%), 0.352, is significant at the 0.01 level of statistical significance. Given that the result is significant, we can hence conclude that the overall ranking of the different procedures is GF100 > GF50 > GF20 > GF10 > RE50 > RE100 > RE20 > RE10 > BIN. The good performance of the group fusion method is not restricted to DS1 because it also gives the best results for the top 1% and 5% for DS2 and DS3.

The results in Table 1 also show that the reweighted BIN and group fusion methods are always superior to the conventional BIN in their ability to identify active molecules for all activity classes, with performance being quite significant for the more heterogeneous activity classes. In only one instance (for the Renin inhibitor activity class), the increase in performance was marginal. The low performance with low-diversity activity classes can also be seen clearly through the DS2 search results reported in Table 2 . Results in Table 2 show that group fusion (GF100) is the best-performing search across the 10 activity classes in terms of mean recall and number of shaded cells, with reweighted BIN (RE20) also performing well. The overall ranking of the 10 different procedures based on the Kendall coefficient in Table 4 (for DS2 top 5%) is GF100 > GF50 > GF20 > RE50 > RE20 > GF10 > RE100 > RE10 > BIN.

The DS3 searches are of particular interest because they involve the most heterogeneous activity classes in the three data sets used and thus provide a tough test of the effectiveness of a screening method. Hert et al. 16 found that TSS (group fusion) was not being preferred to the conventional similarity search for the DS3 activity classes. However, when the group fusion described here, GF, is used on this data set, Tables 3 and 4 show that it (as shown by GF20, GF50, and GF100) gives the best performance of all the methods for this data set at both cutoffs.

Table 4

gives the level of statistical significance and the associated ranking for the set of results in

Tables 1

to

3

. It can be seen that there is a high degree of commonality in the rankings, with the group fusion method (e.g., GF100, GF50, and GF20) in particular providing a level of performance that is generally superior to the other similarity methods tested here. In addition, the results in

Visual inspection of the results (

Tables 1

–

4

and

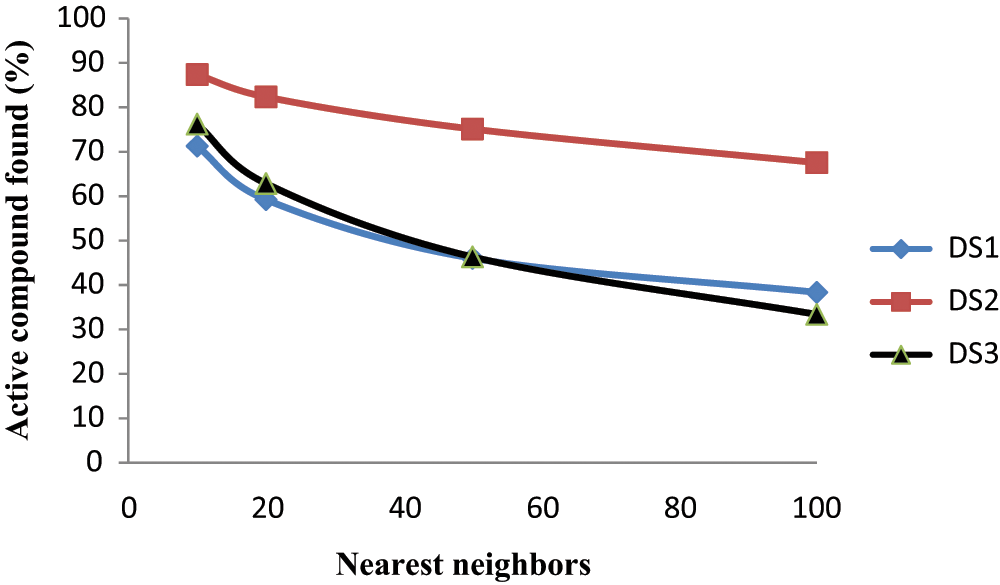

In Figure 1 , the percentage of active compounds in different data sets has been plotted as a function of its ranks (i.e., the part of the ranked list in which it would occur during a conventional BIN similarity search). This percentage was obtained by averaging the number of compounds found active at a given rank when averaged over all of the activity classes. The results are shown in Figure 1 , which provides a better insight into why the reweighted BIN and group fusion methods work and improve the recall effectiveness. Surprisingly, the best results are generally obtained when the largest number of nearest neighbors is used, because Figure 1 shows clearly that the percentage of active compounds decreases rapidly as one increases the number of the nearest neighbors. However, the reason the average recall does increase is that these molecules are providing useful information. This finding is in line with previous studies by Hert et al. 15 and Klon et al. 19 However, for the data sets studied here, we found that the use of 100 nearest neighbors produced better results at minimal computational cost than the use of 200 nearest neighbors, and we have hence included results only for the number 10, 20, 50, and 100 nearest neighbors.

Average percentage of compounds being active in different numbers of nearest neighbors.

To validate the performance of the reweighted BIN and group fusion methods, similar experiments were repeated but using the TSS

15

method, and their results are presented in

In conclusion, we have introduced two different techniques of using the relevance feedback information in ligand-based virtual screening using the BIN. The first technique is based on reference fragment reweighting, and the second is based on reference expansion (group fusion). Simulated virtual screening experiments with MDDR data sets show that the proposed techniques described here provide simple ways of enhancing the cost-effectiveness of ligand-based virtual screening in chemical databases. Our experiments also show that the increases in performance are particularly marked when the sought actives are structurally diverse. If this is not the case (low-diversity actives), then any improvement over the conventional similarity searching is likely to be marginal.

Footnotes

References

Supplementary Material

Please find the following supplemental material available below.

For Open Access articles published under a Creative Commons License, all supplemental material carries the same license as the article it is associated with.

For non-Open Access articles published, all supplemental material carries a non-exclusive license, and permission requests for re-use of supplemental material or any part of supplemental material shall be sent directly to the copyright owner as specified in the copyright notice associated with the article.