Abstract

This study investigates the impact of authorship on the convincingness and willingness to read government foresight reports. Using a preregistered online-based experimental design with 307 participants, this research examines how perceived and actual authorship (human experts vs. generative AI) influence these factors. Our findings show a significant interaction between perceived and actual authorship. Reports perceived as written by AI but actually authored by experts were less convincing, while those perceived as written by experts were more convincing. Higher perceived convincingness correlated with greater willingness to read the full text. Hence, the present research highlights biases and trust issues in AI-generated content, emphasizing the importance of aligning perceived and actual authorship to enhance government communications and public policy.

Keywords

Introduction

Governments often communicate relevant policy issues using a report format, in which relevant topics are outlined, and plausible consequences are presented. These reports include, e.g., consequences of nuclear waste management (Sjöberg, 2004) or measures during a health crisis (Hyland-Wood et al., 2021). Such topics must be communicated to citizens so that core messages are well received, i.e., perceived as relevant, including in contexts with high uncertainty (Van Der Bles et al., 2020). One such communication of uncertainty is done with foresight reports. Foresight reports aim to showcase plausible developments in a nation’s future (Jütersonke and Munro, 2024).

In recent years, Generative Artificial Intelligence (GenAI) has increasingly been used to create content, even material that can be crucial for electoral decisions (Bowers and Glenday, 2021; Fuerth and Faber, 2012). GenAI can generate headlines, text, and images (Dwivedi et al., 2023). Given the rise in interest in the field, research has begun to explore its impacts on public policy (Selten et al., 2023; Straub et al., 2023). In the public sector, studies focus on, e.g., governments putting forward policies to support AI-enabled public services (van Noordt et al., 2023), citizens’ intentions to follow recommendations from AI applications (Wang et al., 2023), or self-interest in the reception of AI (Heinrich and Witko, 2024).

Research has recognized the importance of context for the use and disclosure of GenAI in texts, e.g., in consumer services (Grigsby et al., 2025) or crisis communication (Piller, 2024). Across the world, public sectors are using GenAI in their work (Mökander and Schroeder, 2024; Nalbandian, 2022; Sun and Medaglia, 2019). Nevertheless, research shows context-specific results regarding the reaction to the disclosure of AI use and the efficiency gains from its use in government (Keppeler, 2024).

In this study, we examine the effect of disclosing AI use in government foresight reports. Previous research has mainly analyzed the direct impact of such reports on policy-making, often through process tracing (Schmertzing, 2021). This policy-centered perspective, however, overlooks another crucial dimension: citizens as stakeholders and the need to sustain their trust (Kurki, 2021). Against this background, we test how the use of GenAI affects the convincingness and relevance of government foresight reports (Criado et al., 2024). Using an experimental research design, we investigate whether the convincingness of governmental foresight reports depends on whether they are written by humans or GenAI. To address this gap, we aim to answer the following research questions:

The present study makes three contributions to public management literature. First

Further, we contribute to the practice of strategic foresight in public administrations. First, our findings provide insights into the possibility of using GenAI in the writing process of foresight reports. Second, we show that citizens’ perceptions of authorship are an influential factor in the relevance of the findings. These insights help public servants to disseminate public foresight reports while keeping in mind the relevance for citizens.

This study is structured as follows: First, we outline the theoretical background and develop the hypotheses about the effects of perceived and actual authorship on the convincingness of foresight reports. Second, we describe the experimental design, data collection, and analytical approach in depth. Third, we present the empirical results. Finally, we discuss the results in light of the existing public administration literature and the practices of strategic foresight in the public sector, and outline the limitations and potential for future research.

Theoretical background

Proactive policy orientation

Governments publish a plethora of reports on various policies. Given the high complexity of the operational environment of governments, such publications have increasingly been proactive and future-oriented (Lee, 2024). One concrete example is the publication of results of foresight projects (also known as foresight reports, e.g., the reports “We the UAE 2031”, Singapore’s “Foresight 2024”, or the UK Government Office for Science reports). There, governments publish the outcomes of foresight projects, in which experts have collaborated to develop plausible futures across various topics. To do that, they employ various methods, the most well-known being scenario planning (Ramírez and Wilkinson, 2016; Schmidthuber and Wiener, 2017; Volkery and Ribeiro, 2009). The findings are then primarily published in a classical report format and, until now, have been written by human experts working in public administration and focusing on such policy issues (Zumbrunn, 2023).

In recent years, the field of foresight and proactive policy in the public sector has received increased attention in both research and practice (Bowers and Glenday, 2021; Scholta and Lindgren, 2023). To conduct foresight, two key sources must be provided (Bolger and Wright, 2017). The first is data, which can be qualitative, quantitative, or in a mixed form. The second is access to experts who know how to work with and interpret the given data.

Foresight reports, as an output of proactive public policy, are suitable for investigating the underlying questions of this article for three reasons. Firstly, the rise of GenAI has had a profound impact on the interpretation of the second key source of foresight, which, until recently, was a purely human domain. With the development of GenAI, however, there is a possibility to replace human experts with the capabilities of AI (Noy and Zhang, 2023; West et al., 2023). These capabilities raise novel questions and challenges for governments publishing such reports.

Secondly, they tackle topics of high uncertainty and with potentially high impact on the country and its citizens. Thus, the concept of convincingness is not new in the field of foresight reports. Haasnoot et al. (2018: 277) conceptualize “convincibility” as the consistency “with perceived change or other information already available, when they trust the source as authoritative, and/or when the assessment process has been according to scientific standards (trustworthiness of source and procedures)”.

Lastly, compared to other reports, such as evaluations of previously implemented policies, they actively convey the government's perception of issues related to the future. Again, this is important for citizens from a public policy perspective, as these decisions predominantly affect what is yet to come (Birch, 2023). In this context, foresight reports are critical tools that help individuals and organizations make sense of uncertain futures and take concrete preparatory measures despite uncertainty (Piirainen and Gonzalez, 2015).

Generative artificial intelligence in government

Governments have embraced technological advancements as part of a broader process of digital government transformation and innovation (Guenduez et al., 2025; Haug et al., 2023). In this regard, Artificial Intelligence (AI) provides new opportunities across various facets of public administration, fundamentally reshaping operations, policymaking, and engagement with citizens and stakeholders (Guenduez and Mettler, 2023). While the concept of AI dates back to foundational work in 1955 (McCarthy et al., 1955), and is broadly understood as machine-based problem-solving, its applications have recently multiplied with more powerful computational tools and greater data availability (Haenlein and Kaplan, 2019).

GenAI systems are distinct from other AI systems in that they primarily learn from human-authored data and then generate text, images, and data in response to specific prompts (Blau et al., 2024). With the release of OpenAI’s ChatGPT in 2022, GenAI applications have received significant attention from both research (e.g., Cantens, 2024) and the wider public (e.g., Koonchanok et al., 2024). The rapid adoption of such novel tools has, however, also raised critical questions, such as whether the quality of answers given by GenAI is sufficient to reach the levels of sophistication necessary for public administration (Cantens, 2024). Despite growing use of GenAI, a lack of consensus persists regarding the best ways to approach its various challenges (Zuiderwijk et al., 2021).

This growing interest in AI applications in the public sector has intensified research focus on questions of trust. For general AI, publications highlight that both cognitive and emotional trust are influenced by how the AI is made available, and by the tool’s capabilities (Glikson and Woolley, 2020). Specifically for GenAI tools, trust is often defined as the reliability and credibility of the responses provided (Shin, 2021). Similar to general AI, capabilities are important for trust in GenAI, with conversational humanness identified as an essential antecedent for trust in chatbots (Hu et al., 2021). This trust is further enhanced when tools are personalized to individual users (Hyun Baek and Kim, 2023).

Despite these potential enhancements, skepticism toward AI, particularly in personal or value-laden situations, remains. Public chatbots, for instance, are seen as less trustworthy when dealing with personal rather than technical issues (Aoki, 2020). This skepticism contributes to broader concerns about automated decision-making in government, particularly when such systems lack transparency or accountability (Araujo et al., 2020), as human decision-making continues to be perceived as more trustworthy than AI decision-making (Ingrams et al., 2021). Research has also examined the familiarity and experience of public servants as key factors in the use of AI tools (Ahn and Chen, 2022).

Understanding the actual differences between AI- and human-generated texts is crucial, even with our focus on perceived authorship. Human authors uniquely impart tacit knowledge, empathy, and contextual judgment, shaping a text’s tone and resonance. AI, conversely, relies on algorithmic patterns and fundamentally lacks lived experience, direct accountability, or genuine understanding. These fundamental differences imply AI-generated content may harbor hidden biases, lack explainability, and be perceived as less trustworthy – particularly in high-stakes contexts like foresight reporting. Such cues, even when subconscious, can influence trust, credibility assessments, and citizens’ willingness to engage with policy recommendations (Lee et al., 2025; Shin, 2021).

Recent advancements demonstrate that AI, combined with multi-agent simulations, can yield predictions comparable to those of human experts (Rao and Firth-Butterfield, 2020). This capability has significant implications for public sector foresight, as it can aid scenario creation and data-driven impact analysis (Pencheva et al., 2018). Despite a broader, longer-term focus on trust in AI, questions about trust in GenAI and governmental responses have been more contentious (Cantens, 2024). Interestingly, governments have already begun adopting GenAI, and public servants attribute high trust to the tools they use (Bright et al., 2024). This trust suggests a potential for public servants to use GenAI for foresight activities (Pérez-Ortiz, 2024). However, prior research indicates that trust in government AI use must be built over time (Kleizen et al., 2023; Knowles and Richards, 2021; Robles and Mallinson, 2023).

Hypotheses development

One essential goal of government foresight reports is to persuade citizens and create a convincing and plausible vision of futures (Alqahtani, 2024). In this context, trust plays a central role. The disclosure that GenAI tools have been used in the production of government content has been shown to erode public trust (Schilke and Reimann, 2025). This erosion is particularly problematic in government communications, where institutional trust is foundational to policy acceptance and compliance (Knowles and Richards, 2021).

Prior research shows that human decision-making and authorship are generally perceived as more trustworthy than AI (Aoki, 2020; Ingrams et al., 2021; Schilke and Reimann, 2025). When government reports are perceived to have been authored by AI rather than humans, they may suffer from a credibility deficit, thereby reducing their perceived persuasive power. If citizens attribute lower trustworthiness to AI-authored content, its ability to present a compelling vision of the future may be undermined. We therefore expect that foresight reports will be seen as more convincing when participants are told they were written by human experts rather than by artificial intelligence:

H1: Participants who are informed that a government report was written by experts will tend to attribute higher convincingness to the report than those informed that it was generated by Artificial Intelligence.

However, we further argue that it is not the actual authorship that drives evaluations of convincingness, but rather the perceived authorship. Individuals often rely on mental shortcuts when forming judgments, especially under conditions of limited information or cognitive effort (Chaiken, 1980). Even if the content of a report is identical, the label of its origin (human or AI) can serve as a powerful cue, triggering pre-existing beliefs or biases about the source’s credibility and trustworthiness (Lee et al., 2025; Shin, 2021). People tend to rely on such cues, rather than solely on the detailed content, especially when cognitive resources are limited or when forming initial impressions. Even when the content remains constant, the label attached to it can influence how it is received. Consequently, we expect that the perceived source will primarily dictate the attributed convincingness, regardless of whether the report was, in fact, truly written by humans or AI. This leads to our second hypothesis:

H2: The perceived authorship will significantly influence the attributed convincingness of a government report, regardless of its actual authorship.

Foresight reports are not only tools for long-term strategic planning, but also essential instruments of government communication. Their impact depends, to a large extent, on whether recipients perceive them as relevant. In public administration, perceived relevance is increasingly recognized as a critical outcome variable that influences how citizens process, trust, and respond to government information (Grimmelikhuijsen et al., 2013; Moynihan, 2008). We conceptualize perceived report relevance as the extent to which a report fosters a personal and meaningful connection with the reader, thereby engaging their self-interest (Priniski et al., 2018; Visser et al., 2006). Perceived relevance is essential for understanding how citizens interact with public information (Battaglio and Legge, 2009; Boninger et al., 1995; Mouritzen, 1987; Zamir and Sulitzeanu-Kenan, 2018). As Moynihan (2008) argues in the context of performance management, information must be seen as relevant to close the persistent gap between knowledge and action. Similarly, while transparency can build trust, its effectiveness depends on the meaningfulness of the content (Grimmelikhuijsen et al., 2013). When a report is perceived as highly relevant, it prompts greater engagement, such as the willingness to read it in full, which, in turn, facilitates behavioral outcomes like policy compliance or civic preparedness. Building on Selten et al. (2023), who highlight that perceived relevance influences the decision to follow recommendations, we formulate our third hypothesis:

H3: Higher convincingness, as predicted by H1 and H2, leads to a higher willingness to read the full report, indicating a higher assumed relevance for citizens.

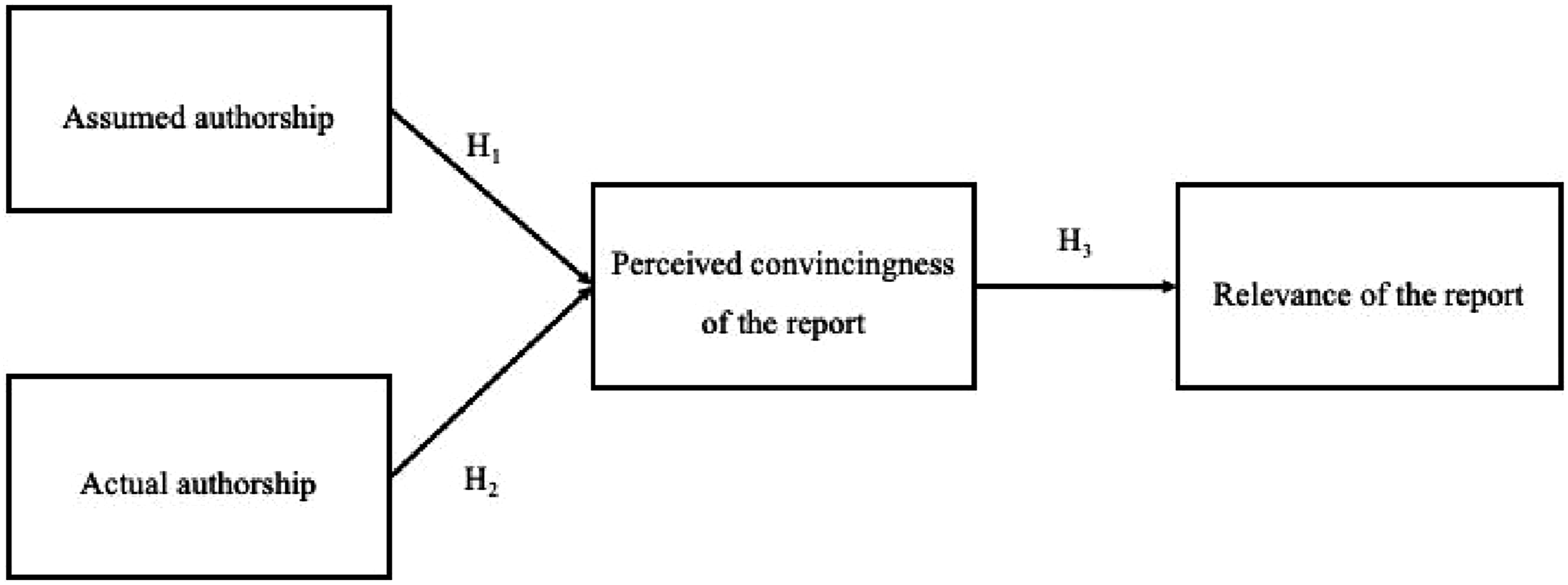

Research Model

Based on the literature review, this study proposes a conceptual framework to analyze the impact of assumed and actual authorship on the convincingness and subsequent relevance of a public policy report, concretely in the context of proactivity through foresight. As Figure 1 shows, the conceptual framework assumes direct impacts of the authorship on convincingness, which then relates to the relevance of the report to citizens. This is analyzed in the context of proactive public policy by focusing on foresight reports as the unit of analysis.

Research model.

Research method

Experimental method

This study follows a preregistered experimental design, an approach that has been shown to be valuable for capturing citizens' perceptions, including in the specific context of AI use in the public sector (Wang et al., 2023). To construct the study stimuli, we used specially designed front pages and abstracts of foresight reports, focusing on the same topic (see Appendix Figure A2). Abstracts are often a decisive factor in whether people read the full version of a text, and thus indicate the relevance of the text for the reader.

In the experiment, participants were asked to closely read the abstract of a text and answer questions about it. After voluntarily providing demographic information, they were shown one picture of the front page, with the authorship highlighted in red. This emphasis on the authorship was further supported by a short text under the image, in which the authorship was again highlighted. Further, all participants were informed that the topic of the report was the digitalization of society in a country by 2035.

The participants were then asked to read a two-paragraph abstract of the assumed implications of digitalization in 2035. When they finished reading and continued the experiment, they were prompted to answer an attention check question, namely, who the author of the report was. This step is elaborated in the section on validity. As a subsequent and final step, they had to answer questions relating to the text.

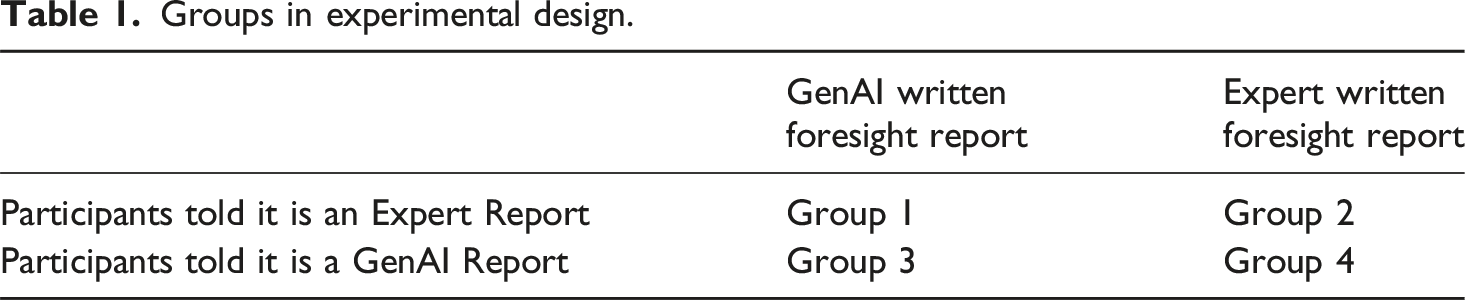

Groups in experimental design.

In groups 3 and 4, participants were furthermore provided with the concrete prompt that the AI was working with, thus underscoring the artificial origin of the text. Thereby, the participants knew the origin of the reported results and how they were produced. Of course, an exact reproduction is not possible, given the nature of GenAI outputs. Nevertheless, given that GenAI tools such as ChatGPT have passed the Turing Test (Biever, 2023), this information is assumed not to influence the perception of the quality of the text itself but rather underscores the potential and capabilities of GenAI.

To isolate the effect of perceived authorship on convincingness, we included two covariates in our analysis. First, we account for participants’ agreement with the report content, as previous research shows that individuals tend to perceive information as more convincing when it aligns with their preexisting beliefs (Jurma, 1981). Recent studies on GenAI further suggest that such agreement plays a critical role in shaping trust in AI-generated content (see, e.g., Longoni et al., 2022; Selten et al., 2023). Second, we control for general trust in government, which influences how citizens evaluate official communication and shapes acceptance of public sector messages (Chohan et al., 2021).

Data collection

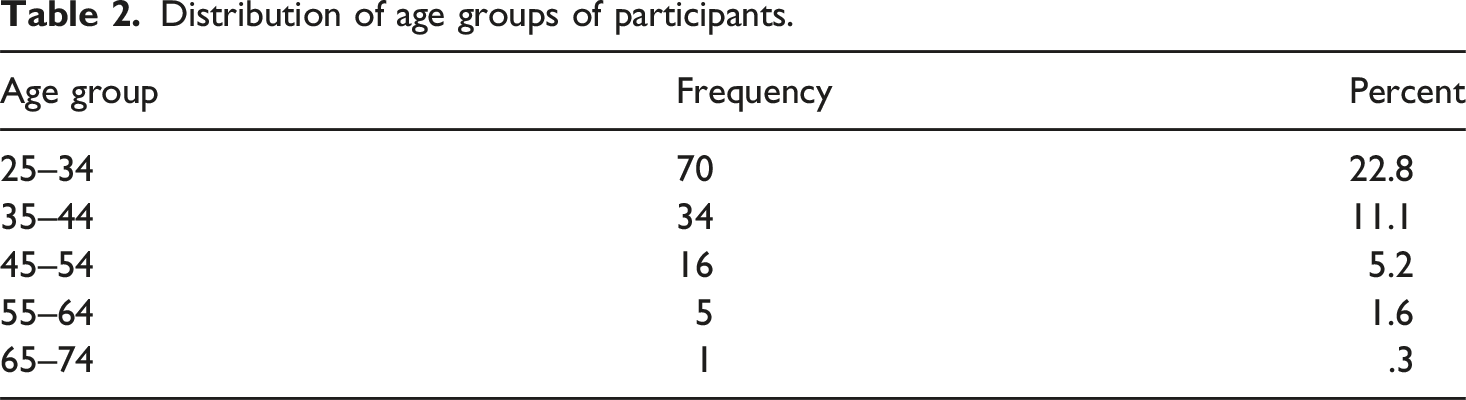

Distribution of age groups of participants.

Also, participants had to give initial consent concerning data protection at the start of the experiment and informed consent after the experiment was completed. Thirteen participants decided to withdraw their consent, resulting in N = 430.

Operationalization and analytical approach

In this study, the independent variables are operationalized by categorizing the authorship of reports into two groups: human experts and GenAI. This categorization ensures a clear differentiation between reports authored by experts and those generated by AI systems. The dependent variable, perceived convincingness, was measured using a five-point Likert scale ranging from 1 (very convincing) to 5 (not convincing). This scale allows for a nuanced assessment of how the perceived authorship influences participants' perception of convincingness.

To test the hypotheses, one- and two-way ANOVAs and a correlation analysis are used to measure the between-subject effects. H1 and H2 are analyzed individually using a one-way ANOVA. However, an interaction between the factors underlying H1 and H2 may still exist. Hence, a two-way ANOVA is additionally used, as it is best suited to analyzing the effects of two variables in between-subject designs. The ANOVA approach fits the 2 × 2 experimental design, in which the dichotomous authorship factors are related to a continuous outcome variable. For H3, a correlation analysis and, to increase reliability, a (one-way) Welch ANOVA were used for the bivariate analysis.
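The logic of the two-way ANOVA can be illustrated with a minimal sketch. It is not the analysis reported in this study: it assumes a balanced design (equal cell sizes), omits the two covariates, and uses invented toy ratings. The function name `two_way_anova_balanced` and the cell labels are hypothetical. Only F statistics and degrees of freedom are computed, since the Python standard library has no F distribution for p-values.

```python
from statistics import mean


def two_way_anova_balanced(cells):
    """F statistics for a balanced, fully crossed two-factor design.

    `cells` maps (perceived_author, actual_author) to a list of ratings.
    Every cell must contain the same number of ratings.
    """
    a_levels = sorted({a for a, _ in cells})  # levels of perceived authorship
    b_levels = sorted({b for _, b in cells})  # levels of actual authorship
    n = len(next(iter(cells.values())))
    if any(len(v) != n for v in cells.values()):
        raise ValueError("this sketch only handles balanced designs")

    cell_mean = {k: mean(v) for k, v in cells.items()}
    grand = mean(list(cell_mean.values()))  # overall mean in a balanced design
    a_mean = {a: mean([cell_mean[(a, b)] for b in b_levels]) for a in a_levels}
    b_mean = {b: mean([cell_mean[(a, b)] for a in a_levels]) for b in b_levels}

    # Sums of squares for both main effects, the interaction, and the error.
    ss_a = n * len(b_levels) * sum((a_mean[a] - grand) ** 2 for a in a_levels)
    ss_b = n * len(a_levels) * sum((b_mean[b] - grand) ** 2 for b in b_levels)
    ss_ab = n * sum(
        (cell_mean[(a, b)] - a_mean[a] - b_mean[b] + grand) ** 2
        for a in a_levels
        for b in b_levels
    )
    ss_err = sum((x - cell_mean[k]) ** 2 for k, v in cells.items() for x in v)

    df_a, df_b = len(a_levels) - 1, len(b_levels) - 1
    df_ab = df_a * df_b
    df_err = len(a_levels) * len(b_levels) * (n - 1)
    ms_err = ss_err / df_err
    return {
        "F_perceived": (ss_a / df_a) / ms_err,
        "F_actual": (ss_b / df_b) / ms_err,
        "F_interaction": (ss_ab / df_ab) / ms_err,
        "df_error": df_err,
    }


# Invented toy ratings (1 = very convincing, 5 = not convincing),
# keyed as (perceived author, actual author).
toy_cells = {
    ("experts", "experts"): [1, 2, 2, 1],
    ("experts", "genai"): [2, 3, 2, 3],
    ("genai", "experts"): [2, 3, 3, 2],
    ("genai", "genai"): [1, 2, 2, 1],
}
result = two_way_anova_balanced(toy_cells)
print(result)
```

In this toy data, both main effects vanish while the interaction term is large, which is the kind of pattern that one-way ANOVAs on each factor alone cannot detect.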

Ensuring validity in online surveys

Online surveys and experiments have been criticized for the low quality of their data (Douglas et al., 2023). One frequently noted explanation is that online participants may be more easily distracted (Necka et al., 2016). Nevertheless, despite such challenges, MTurk is still considered to be a viable source for online experiments, even in comparison to other approaches, including in-person experiments (Kees et al., 2017). MTurk has also been demonstrated to be a viable tool in public administration research and has been frequently used in this context (Stritch et al., 2024).

When using such tools, there are several methods that enable researchers to ensure the attentiveness of participants in an experiment, one of which is the task to recall essential information (Abbey and Meloy, 2017). This type of “memory recall” is frequently used to improve the validity of the data, with the ability to ensure retention and awareness.

Thus, the participants all had to pass an attention check to be included in the analysis. The attention check ensured that the participants understood the core elements of the task. In the present experiment, participants had to correctly indicate the author that was displayed on the text they read right before the attention check. This further reduced the N to 307, after participants not passing the attention check were excluded. This corresponds to 29.61% of participants not passing the attention check.
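A recall-based attention check of this kind can be sketched as a simple filter. The field names `shown_author` and `recalled_author`, the helper names, and the four toy responses are hypothetical and not taken from the study's data.

```python
def passes_attention_check(shown_author, recalled_author):
    """True if the participant recalled the author label they were shown."""
    return shown_author.strip().lower() == recalled_author.strip().lower()


def apply_attention_check(responses):
    """Return the retained responses and the share of excluded responses."""
    kept = [
        r
        for r in responses
        if passes_attention_check(r["shown_author"], r["recalled_author"])
    ]
    excluded_share = 1 - len(kept) / len(responses)
    return kept, excluded_share


# Invented toy responses; the third participant recalls the wrong author.
toy_responses = [
    {"id": 1, "shown_author": "Government Experts", "recalled_author": "Government Experts"},
    {"id": 2, "shown_author": "Generative AI", "recalled_author": "generative ai"},
    {"id": 3, "shown_author": "Generative AI", "recalled_author": "Government Experts"},
    {"id": 4, "shown_author": "Government Experts", "recalled_author": "Government Experts"},
]
kept, excluded_share = apply_attention_check(toy_responses)
print(len(kept), excluded_share)
```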

Previous research indicates that the attention of MTurk participants can go in both directions, either outperforming or underperforming compared to other control groups (Goodman et al., 2013; Hauser and Schwarz, 2016). Therefore, it is not possible to say whether the present results are indicative of systemic issues of the empirical approach. What they do underscore, however, is the importance of ensuring the quality of the results via the use of attention checks.

Results

In the following section, we discuss the results of our analysis. The table below provides an overview of the number of participants in our study. Overall, the results are based on N = 307 participants, who were successfully randomly assigned to the four groups.

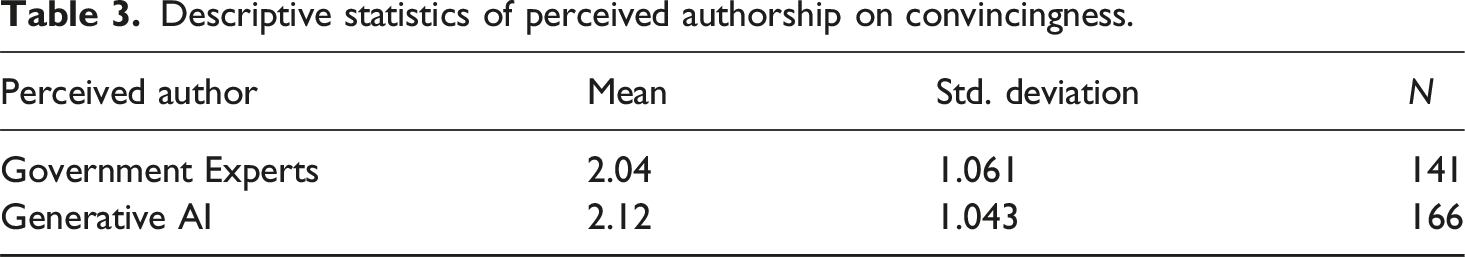

Descriptive statistics of perceived authorship on convincingness.

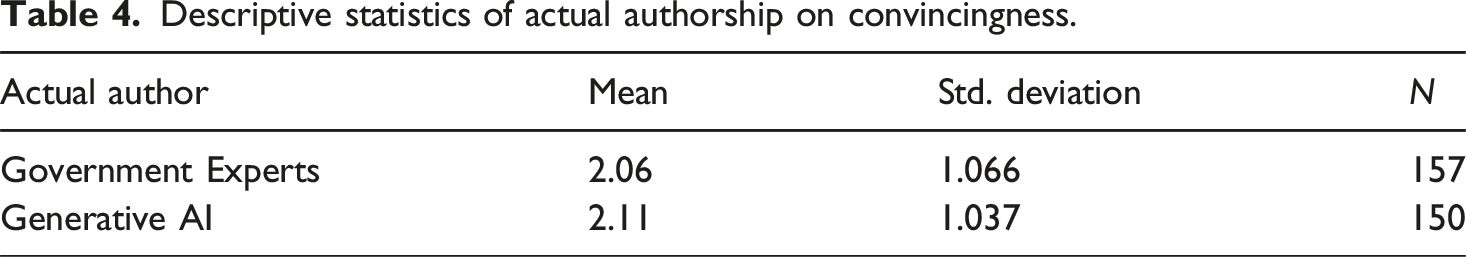

The results indicate that the perceived authorship alone does not significantly influence the convincingness (ranging from 1/very convincing to 5/not convincing) of the results presented in a foresight report (p = 0.5, see Appendix Table A1). This is true for both texts perceived to be written by “Generative AI” as well as for “Government Experts.” However, all defined covariates have a significant impact on the model. Additionally, it must be noted that texts attributed to experts were still rated as slightly more convincing than texts attributed to GenAI (mean 2.04 and 2.12, respectively; lower values indicate higher convincingness).

Descriptive statistics of actual authorship on convincingness.

Like the results for the perceived author, the results indicate that the actual author alone does not significantly influence the convincingness of the results presented in a foresight report at the conventional 0.05 level. The mean ratings of the two groups are also similarly close to each other. However, the effect comes closer to significance, meeting only the 0.1 threshold (p = .064, see Appendix Table A2).

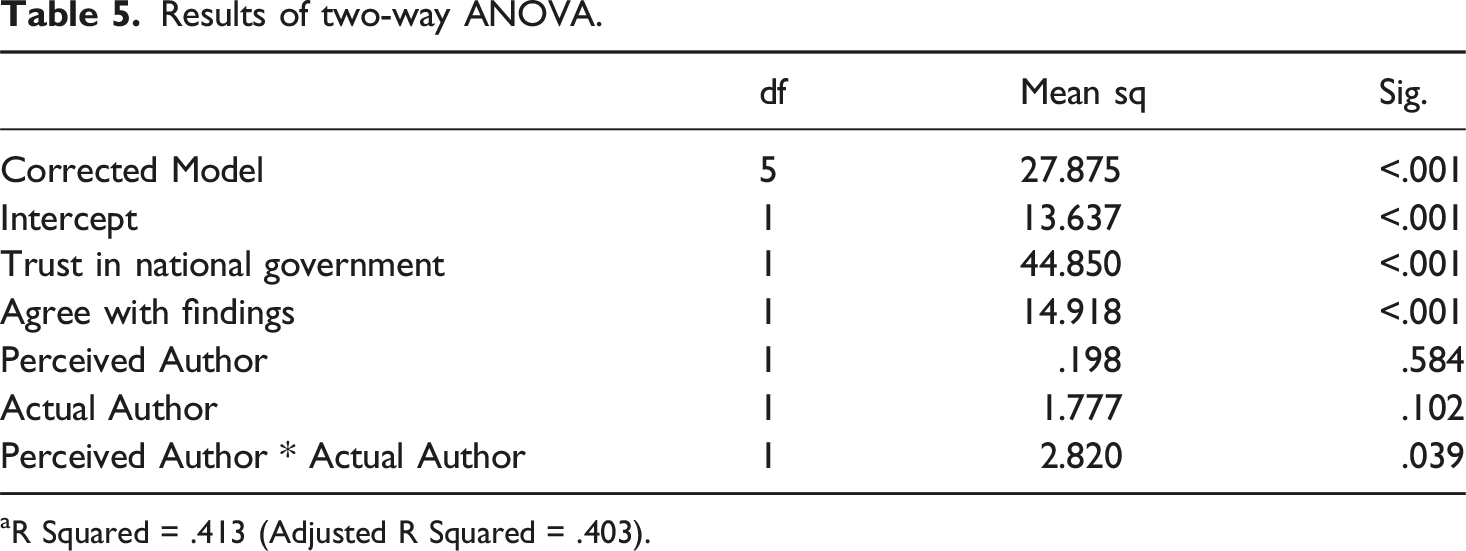

Results of two-way ANOVA.

a. R Squared = .413 (Adjusted R Squared = .403).

Overall, the ratings of the convincingness of the Foresight Reports were close to 2, regardless of the perceived author, indicating that the texts were perceived as convincing. The descriptive statistics, which can be found in Appendix Table A3, reveal that when participants believed that the text was authored by “Government Experts”, they found the results less convincing if the text was in fact written by “Generative AI” (Mean = 2.22) compared to “Government Experts” (Mean = 1.86).

Conversely, when participants believed that the text was authored by “Generative AI,” they found the results more convincing if the text was actually written by “Generative AI” (Mean = 2.00) compared to “Government Experts” (Mean = 2.23). In summary, the findings suggest that the perceived convincingness of the reports varies depending on the perceived and actual author. Participants tend to find texts written by “Generative AI” more convincing if they believe they are authored by “Generative AI,” and texts written by “Government Experts” more convincing if they think they are authored by “Government Experts.”
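The crossover pattern can be made explicit with a short worked calculation on the four cell means reported above. The sketch uses only those reported means. The unweighted marginal means assume equal cell sizes, which is an assumption rather than a reported fact, and the variable names are illustrative.

```python
# Reported cell means, keyed as (perceived author, actual author).
# Scale: 1 = very convincing, 5 = not convincing (lower is more convincing).
cell_means = {
    ("experts", "genai"): 2.22,
    ("experts", "experts"): 1.86,
    ("genai", "genai"): 2.00,
    ("genai", "experts"): 2.23,
}

# Simple effect of actual GenAI authorship within each perceived-author label.
effect_under_expert_label = (
    cell_means[("experts", "genai")] - cell_means[("experts", "experts")]
)
effect_under_genai_label = (
    cell_means[("genai", "genai")] - cell_means[("genai", "experts")]
)

# Interaction contrast: the difference between the two simple effects.
interaction_contrast = effect_under_expert_label - effect_under_genai_label

# Unweighted marginal means per perceived author (assumes equal cell sizes).
marginal_perceived_experts = (
    cell_means[("experts", "genai")] + cell_means[("experts", "experts")]
) / 2
marginal_perceived_genai = (
    cell_means[("genai", "genai")] + cell_means[("genai", "experts")]
) / 2

print(round(effect_under_expert_label, 2))   # 0.36
print(round(effect_under_genai_label, 2))    # -0.23
print(round(interaction_contrast, 2))        # 0.59
print(round(marginal_perceived_experts, 2))  # 2.04
```

The two simple effects have opposite signs: actual GenAI authorship raises the rating (less convincing) under the expert label but lowers it (more convincing) under the GenAI label. Averaged over the perceived-author label, these opposing effects largely cancel, which is in line with a weak main effect alongside a clear interaction.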

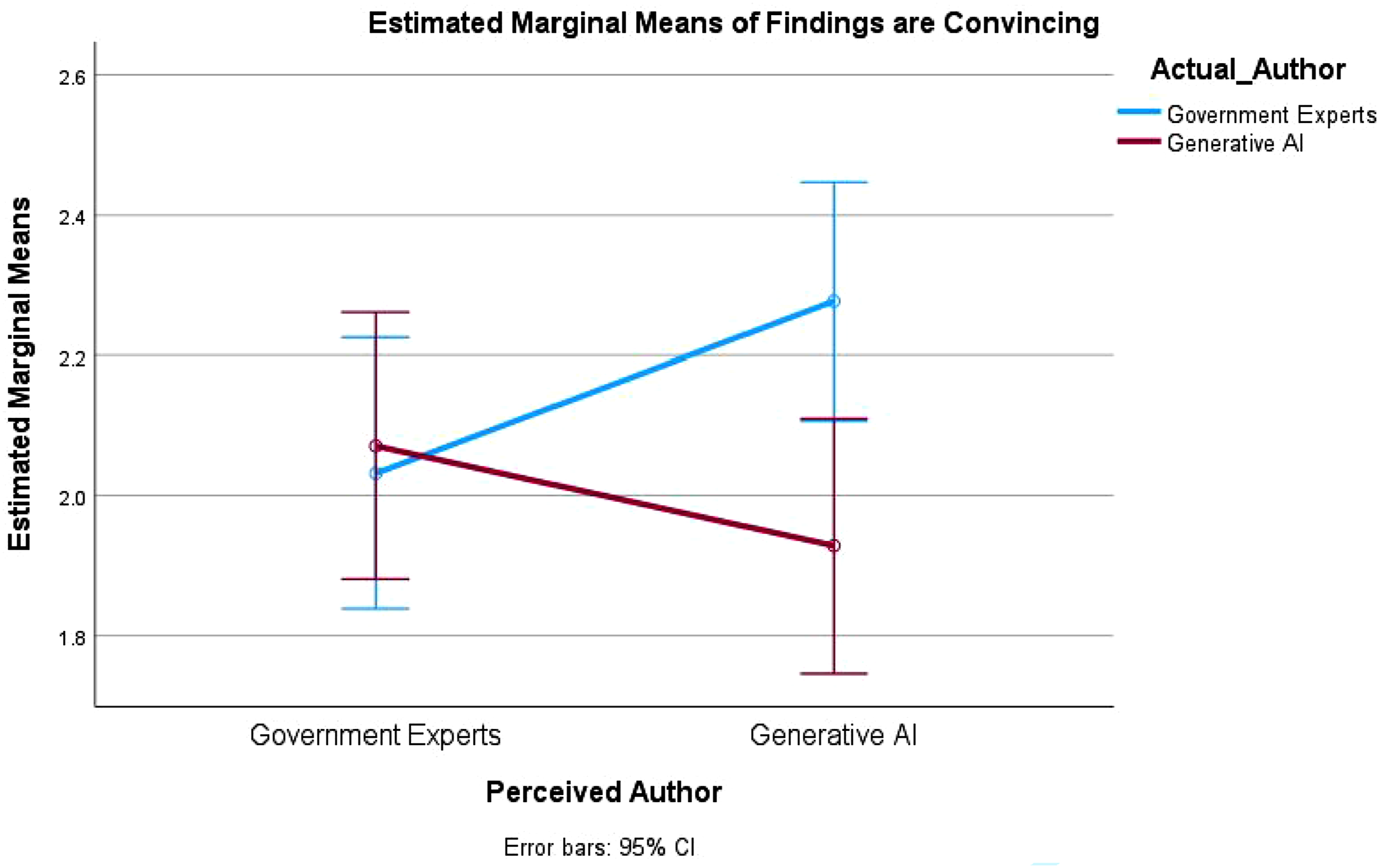

The two-way ANOVA results show that the adjusted model explains a substantial portion of the variance in the perceived convincingness of the findings (Adjusted R Sq = 0.403). Both covariates, trust in the national government and agreement with the findings, are significant predictors, while the perceived and actual authorship individually do not have significant effects. However, a significant interaction between perceived and actual author indicates an interplay between these factors in influencing perceived convincingness. Figure 2 further visualizes this interaction.

Interaction diagram of perceived and actual authorship.

The plot highlights that the convincingness of the findings is influenced by the interaction between perceived and actual authorship. Specifically, texts perceived to be written by “Generative AI” but actually authored by “Government Experts” tend to be rated as less convincing.

Overall, these results underscore the complex interplay between perception and actual authorship in shaping participants’ evaluations of the text’s convincingness. The interaction plot illustrates that the alignment between perceived and actual authorship directly impacts the perceived convincingness of the report. When the actual author aligns with the perceived author, participants rate the findings as more convincing.

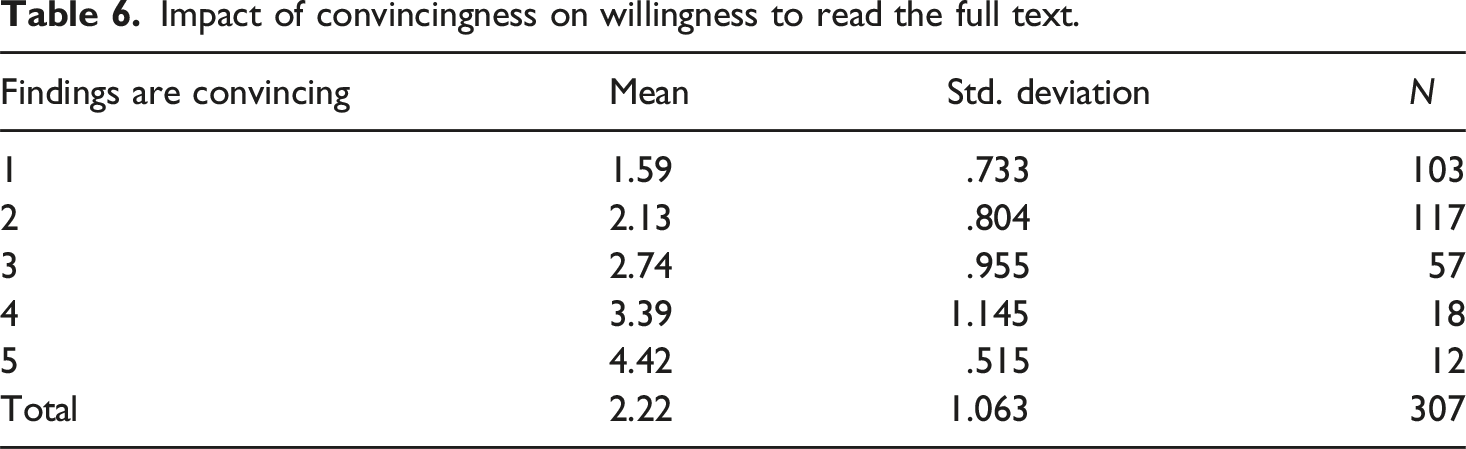

Impact of convincingness on willingness to read the full text.

The Welch ANOVA (Appendix Table A4) confirmed significant differences between groups, stressing the importance of perceived convincingness in motivating engagement with the material. Higher convincingness leads to a greater desire to read the full report, supporting H3 (descriptive analysis in Appendix Table A5).

Additionally, the results of the between-subjects effects test provide further validation. The corrected model is highly significant (p < .001), explaining a substantial portion of the variance in the willingness to read the full text (Adjusted R Sq = .395). The significant effect of the convincingness variable (p < .001) highlights that the perceived convincingness of the findings has a large and significant impact on participants’ willingness to read the full text. Thus, this indicates the high perceived relevance the participants give to the report.
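For readers unfamiliar with the Welch ANOVA, the following sketch computes its test statistic and degrees of freedom from scratch. It assumes that willingness-to-read scores are grouped by convincingness rating, which is an illustrative reading of the design rather than a documented detail. The function name `welch_anova` and the toy scores are hypothetical. A p-value would require an F distribution, which the standard library does not provide.

```python
from statistics import mean, variance


def welch_anova(groups):
    """Welch's F statistic and degrees of freedom for k independent groups.

    Unlike the classical one-way ANOVA, group means are weighted by
    n_i / s_i^2, so equal variances across groups are not assumed.
    Each group needs at least two observations and a non-zero variance.
    """
    k = len(groups)
    sizes = [len(g) for g in groups]
    means = [mean(g) for g in groups]
    weights = [n / variance(g) for n, g in zip(sizes, groups)]
    total_w = sum(weights)
    weighted_mean = sum(w * m for w, m in zip(weights, means)) / total_w

    between = sum(w * (m - weighted_mean) ** 2 for w, m in zip(weights, means))
    lam = sum((1 - w / total_w) ** 2 / (n - 1) for w, n in zip(weights, sizes))

    f_stat = (between / (k - 1)) / (1 + 2 * (k - 2) * lam / (k ** 2 - 1))
    df1 = k - 1
    df2 = (k ** 2 - 1) / (3 * lam)
    return f_stat, df1, df2


# Invented toy data: willingness-to-read scores for three rating groups.
toy_groups = [
    [1, 2, 2, 1, 2],
    [2, 3, 3, 2, 4],
    [3, 5, 4, 4, 5],
]
f_stat, df1, df2 = welch_anova(toy_groups)
print(f_stat, df1, df2)
```

With exactly two groups, the statistic reduces to the square of Welch's t statistic, and `df2` equals the Welch–Satterthwaite degrees of freedom.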

Overall, our analyses reveal nuanced findings regarding the role of authorship in shaping perceived convincingness and citizen engagement. Neither perceived authorship (p = .584) nor actual authorship (p = .102) had a statistically significant main effect, offering no support for H1 and H2. However, a significant interaction effect between perceived and actual authorship (p = .039) underscores the importance of source congruence, i.e., the alignment between perception and reality in influencing perceived credibility. The explanatory model accounted for over 40% of the variance in perceived convincingness (Adj. R² = .403), with trust in government and agreement with findings exerting strong, significant effects (both p < .001). Supporting H3, we also find that higher perceived convincingness is strongly associated with participants’ willingness to read the full report (p < .001), suggesting that credibility perceptions are crucial for fostering civic engagement. These results highlight the importance of authorship framing in AI-mediated communication, as well as the potential for misaligned expectations to undermine citizen trust in public sector foresight efforts.

Discussion

Our findings indicate that participants rated the Foresight Report as more convincing when the perceived and actual authorship aligned, particularly favoring texts that were perceived to be, and in fact were, authored by Government Experts. Conversely, when the text was perceived to be written by GenAI, it was rated as less convincing if the actual author was in fact Government Experts. This suggests that credibility suffers when participants expect an “AI-authored” report but encounter one actually written by experts, highlighting the impact of a mismatch between perceived and actual authorship. Furthermore, this aligns with the broader negative connotation associated with AI disclosure (Keppeler, 2024).

The significant interaction term in the ANOVA results (p < 0.05) highlights the necessity of considering both the perceived and actual authorship when evaluating the convincingness of government reports. This interaction indicates that the actual author’s effect on the text’s perceived convincingness depends on who the participants believe the author is. Additionally, overlapping confidence intervals when the perceived authors are Government Experts and when the perceived author is GenAI further support the significance of this interaction effect.

Specifically, the data show that when there is a mismatch between the perceived and actual authors, such as believing the text was written by GenAI when it was actually authored by Government Experts, the perceived convincingness can vary significantly. These findings suggest that convincingness and expectations play a vital role in how information is received and evaluated. This is particularly important given the questions around the disclosure of GenAI use in foresight reports.

As the context is already highly uncertain, with no guarantee of what will happen in the future and no data available on it, questions around expertise arise. This has also been extensively studied in the context of GenAI, with questions of how implementations could look in strategic foresight (Geurts et al., 2022). This convergence of expertise attribution in foresight offers ground for extending source credibility and algorithmic legitimacy frameworks. It therefore calls on scholars to reconceptualize trust and human–machine collaboration in public-sector foresight projects.

Lastly, regarding the impact of convincingness on the perceived relevance for citizens, the results indicate that convincingness is a highly significant indicator. Of course, it must be considered whether this is a case of self-selection. However, this is unlikely in the present case: of all the people who clicked on the experiment, 95.82% decided to finish the study. This suggests that the participants do not show signs of a selection bias.

Contribution to literature

The present study offers three key theoretical contributions to public administration scholarship. First, we contribute to the literature on source credibility in the public sector (e.g., James and Van Ryzin, 2016). Especially as the boundaries between human and artificially produced texts become less clear, we add to the literature by identifying the alignment between perceived and actual authorship as a critical factor shaping how citizens evaluate the credibility of government reports. We show that it is the congruence between what people believe and what is true about a report’s authorship – rather than source identity alone – that significantly influences credibility judgments.

In doing so, we complement work on the role of disseminating bodies in foresight reports (Felli and Castree, 2012) and on the disclosure effects of GenAI in the public sector (Keppeler, 2024). Additionally, the experiment advances scholarly understanding of the relevance of human expertise in citizens’ sensemaking of uncertain futures in foresight engagements, extending prior research on expert authority and citizen sensemaking under uncertainty (Bolger and Wright, 2017). This contribution is important because it explains how credibility in public communication depends less on whether a report is authored by humans or AI, and more on whether disclosure practices align citizens’ expectations with reality.

Second, we advance the understanding of trust in governments, for which prior research has identified transparency as an important factor (Grimmelikhuijsen et al., 2013). When using GenAI, it is essential to disclose its use adequately (Dasborough, 2023; Goktas, 2024), as its use raises questions about the reliability and credibility of the responses provided (Shin, 2021). Our results further underline that the correct identification of authorship has a positive overall effect on the convincingness of foresight reports. This is an important contribution, as it provides further insights into the link between citizens’ perception of AI-supported public services, i.e., the provision of a future-proofing report, and how their perceived trust influences their willingness to use the material, i.e., reading the report (Wang et al., 2023).

Third, our findings advance the emerging scholarship on GenAI and citizen trust in public sector communication (Marien and Hooghe, 2011; Simon et al., 2023). By showing that perceived convincingness strongly affects participants’ willingness to engage further with foresight reports, we demonstrate that the use of GenAI also influences the reception of government knowledge. This highlights how credibility and perceptual alignment impact citizen engagement with AI-generated content. Our results invite future research to integrate digital authorship into existing sensemaking and legitimacy frameworks, to adopt insights from other AI-mediated communication domains (Jia et al., 2024), and to examine how evolving AI competencies recalibrate the balance between expert authority and algorithmic persuasion in strategic foresight (Annapureddy et al., 2025).

Implications for practice

In addition to the theoretical contributions, this study offers important implications for practitioners of strategic foresight in public administration. First, the findings underscore the necessity of carefully managing authorship disclosure when GenAI tools are employed in the preparation of foresight reports. Since perceptions of authorship significantly influence the perceived convincingness and trustworthiness of a report, decisions regarding whether and how to disclose the use of GenAI carry practical consequences for the legitimacy of government communication. In strategic foresight, a context characterized by uncertainty and strategic complexity, this consideration becomes particularly salient for administrations aiming to maintain credibility (Kunseler et al., 2015; Van der Steen and Van der Duin, 2012).

Second, the study provides insight into how citizens evaluate the use of AI in public sector foresight. While prior research highlights the potential of GenAI to enhance scenario-based planning (Ködding et al., 2023), our findings demonstrate that perceived authorship affects how reports are received. Foresight practitioners and policymakers operating under conditions of public scrutiny can use these insights to design communication strategies that take public perception into account. This may help increase the legitimacy, accessibility, and acceptance of AI-supported outputs in government, thereby enabling the responsible integration of emerging technologies into democratic governance.

Limitations and further research

Using online survey experiments offers several advantages, but it also comes with certain limitations. Although we took the necessary steps to ensure the data quality of the responses, some inherent issues remain, such as sampling and representativeness issues or self-selection bias (Bethlehem, 2010). Therefore, generalizations from our results are tied to the background of the respondents, which has been found to be a significant and representative proxy for the U.S. population (Redmiles et al., 2019).

Further, the research design, consisting of the experimental setup and the choice of ANOVA as the analytical method, may yield different results from other approaches, for example a survey setup analyzed with structural equation modeling. In future work, such an approach could identify an additional latent-variable decision framework and further paths through which authorship influences the convincingness of foresight reports.

For further research, it would be beneficial to understand in depth the importance of the context of the report or publication. As the use of GenAI in the public sector is perceived as less trustworthy in more personal topics than in technical areas (Aoki, 2020), our findings offer limited insights into what citizens’ trust would look like if the published report were on a more technical subject. Advancing our understanding of this would also help answer the question of whether citizens consider foresight reports to be more personal or more technical.

Furthermore, given cultural and language-related differences in the understanding of time and temporality (Liang et al., 2018), including specifically in foresight projects (Andersen and Rasmussen, 2014; Cagnin and Könnölä, 2014), it would be beneficial for future research to build on these insights and deepen our understanding of how GenAI input may be trusted differently in different cultural settings.

Conclusion

Overall, our analysis underscores the critical role of perceived convincingness and relevance in government reports. Ensuring that the perceived and actual authorship align can enhance the perceived convincingness and, consequently, the willingness of citizens to read the full text. This has important implications for how information is presented in various contexts, particularly those involving GenAI and expert authorship.

First, our results highlight the critical role of both perception and reality in determining how information is received and evaluated. Understanding this interaction can provide valuable insights into how to present information more convincingly in various contexts, particularly those involving GenAI and expert authorship. In addition, the strong correlation between the convincingness and the perceived relevance of the text adds to current understanding.

Second, our findings suggest that enhancing the convincingness of findings could be a key strategy to increase citizen engagement with and attention to reports on policy issues. Ensuring that the findings are perceived as convincing may lead to greater interest in reading and engaging with the full report. This insight can be particularly valuable for researchers and practitioners aiming to disseminate their work more effectively, especially when combined with recent findings on the reported willingness to follow AI recommendations (Selten et al., 2023; Wang et al., 2023).

Third, our study emphasizes that “staying true” remains important despite very convincing results from GenAI. Correctly reporting the authorship behind a text enhances the convincingness of results. This is particularly interesting in light of recent studies presenting evidence that humans struggle to tell artificially generated texts apart from human-generated ones when not informed accordingly (Fui-Hoon Nah et al., 2023; Nov et al., 2023).

Taken together, the results highlight the critical role of both perception and reality in determining how information is received and evaluated. Hence, the research explores potential avenues for the further advancement of AI into areas that are still predominantly human domains. Given recent developments in AI-powered agents, this will demand further attention from both public management researchers and practitioners.

Footnotes

Ethical considerations

The Ethics Review Committee at the University of St Gallen approved our online survey experiment (approval: HSG-EC-20240212) on March 15, 2024. Respondents gave written consent for review before starting and informed consent after the completion of the experiment.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

All data is available upon reasonable request.