Abstract

Travel barriers can turn everyday journeys into challenges that hinder activity participation. For two decades, researchers have frequently sought to measure travel barriers through surveys. Questionnaire design shapes respondents’ cognitive processing of questions, making survey reliability, validity, and usability crucial to yielding policy-relevant insights. This study applied a system-based approach called the Problem Classification Coding Scheme (CCS) to critically review existing travel barrier survey questions to assess their consistency with best practices. We carried out a keyword search relating to equity, transportation, and surveys. After carefully considering their relevance to the study and based on the availability of the full questionnaires, we evaluated 29 questionnaires used in 34 studies using the CCS. Overall, we identified 920 problems across 1,850 questions; over 32% of the questions had at least one problem. Six issues—vague or unclear questions, unclear respondent instructions, undefined periods, rolling periods, high detail requirements, and complex mental calculations—represented over two-thirds of the identified problems. Over 58% of the problems also occurred in the “comprehension” category of problem classification. We discuss example questions from the reviewed questionnaires to explain why they are likely to cause reliability and validity issues. We also present a checklist as a practical tool to assist researchers and users of travel barrier research in designing robust instruments.

Surveys are widely used in both academic and nonacademic research to understand the travel barriers experienced by people at risk of transport-related social exclusion (TRSE) and to help policy makers and planners address these barriers. Academic researchers frequently repurpose existing household travel surveys or conduct study-specific surveys to examine travel barriers. They also often replicate specific questions and categorizations from government surveys to compare their sampled responses with a wider population. Yet, there is little understanding of the extent to which survey questions adequately and reliably capture the perceived and actualized experiences of barriers. This matters because questionnaire design can directly affect the reliability, validity, and usability of the research findings ( 1 , 2 ) that inform policy and planning interventions. Inaccurate measurement of travel barriers may lead planners to scale back efforts to remove them and/or to overpromote some solutions over others.

Travel barriers are factors that prevent or make it substantially difficult for people to travel. Such barriers may be related to the design and practical usability of the transportation mode and infrastructure, safety issues, monetary cost and travel time, unpleasant interactions with other travelers, attitudinal factors of the individual, social norms, and lack of availability and accessibility of required information to travel. These factors may have varying effects on individuals from different sociodemographic and economic groups. Different travel barriers can also interact with each other and make the actual experience of the barriers complex. Travel barriers are important in transport justice research because they can lead to transport poverty, reduce activity participation, and increase the risk of TRSE ( 3 ). Research on travel barriers can advance transport justice by helping policy makers identify interventions to empower travelers.

In this study, we critically evaluate the framing of TRSE survey questions about travel barriers using cognitive research approaches applied to survey methodology. The aim is to better understand whether these questions are consistent with best practice recommendations for questionnaire design and, in doing so, to provide guidance that helps researchers improve their survey design skills. We conducted a critical review of academic literature relating to TRSE surveys about travel barriers (peer-reviewed journal articles, book chapters, conference proceedings) published in English between January 2000 and January 2022. Our review answers the question: “To what extent are TRSE survey questionnaires used to study travel barriers consistent with the recommendations for good questionnaire design practices?”

Literature Review

Impacts of Questionnaire Design on Survey Outcomes

The science of understanding the impacts of questionnaire design on survey outcomes is extensive and complex. Answering a questionnaire involves a substantial cognitive component that researchers often overlook: respondents must process complex information relevant to each question in a relatively short time and then respond. The respondents’ own value systems, beliefs, and understanding of the world, both in relation to what is being asked and what is or is not desirable, all influence how they process this information ( 4 – 6 ). A wide range of factors shape how a respondent interprets and answers survey questions. Questionnaire design can directly influence these outcomes and, thereby, affect the reliability and validity of the responses ( 1 , 2 ).

Two key components of questionnaire design are the “survey framing effect” and the “survey mode effect.” Both can affect survey responses and, consequently, the reliability and validity of the survey outcomes ( 7 , 8 ). The survey framing effect refers to the impact of the content of the survey on the respondent and is reflected through question types, response formats, question wording, questionnaire and question length, and question order ( 6 , 7 ). To illustrate, consider an example open-ended question: “How many trips did you make in the last month by walking? Consider all work and nonwork trips over 10 min, counting each one-way trip separately.” To answer this question, respondents must consider multiple criteria simultaneously: trip type, duration, timeframe, and the distinction between one-way and round trips. This complexity can make the question feel lengthy and cognitively demanding. Furthermore, open-ended questions lack predefined range options, making accurate recall difficult, especially for frequent walkers. By contrast, offering multiple-choice responses (e.g., “10 or fewer,” “11 to 20,” “21 to 30”) could reduce the cognitive load on respondents and improve the accuracy of the data collected.
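The closed-ended alternative can be sketched as a fixed set of ranges into which an exact count would fall. This is a minimal Python illustration, not an instrument from the reviewed studies: the labels “10 or fewer,” “11 to 20,” and “21 to 30” come from the example above, while the top category “31 or more” is our assumption, added so that every possible count has an option.

```python
# Hypothetical closed-ended categories for the walking-trip question.
# The first three labels follow the example in the text; "31 or more" is an
# assumed addition so that the options are exhaustive.
WALK_TRIP_CATEGORIES = [
    (0, 10, "10 or fewer"),
    (11, 20, "11 to 20"),
    (21, 30, "21 to 30"),
    (31, None, "31 or more"),  # None marks an open upper bound
]


def categorize_trip_count(count: int) -> str:
    """Map a number of one-way walking trips to its response option."""
    if count < 0:
        raise ValueError("trip count cannot be negative")
    for low, high, label in WALK_TRIP_CATEGORIES:
        if count >= low and (high is None or count <= high):
            return label
    raise ValueError(f"no category covers {count}")  # unreachable with the list above


# A frequent walker only needs to place the total in a band, not recall it exactly.
print(categorize_trip_count(8))   # 10 or fewer
print(categorize_trip_count(24))  # 21 to 30
print(categorize_trip_count(45))  # 31 or more
```

Because the ranges are contiguous and do not overlap, each count maps to exactly one option; the respondent still has to estimate, but only to the precision of a band.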

The survey mode effect refers to the impact of the medium or instrument through which a respondent completes the survey, for example, the effects of self-administered questionnaires versus computer-assisted personal interviews, or of travel diary methods versus questionnaire methods ( 7 , 9 , 10 ). In this study, we could not examine the impact of the medium through which the questionnaire surveys were administered, owing to the lack of data on the survey medium or the use of multiple media in a single study. Therefore, we could not control for the effects of the survey mode. However, framing effects can influence survey responses regardless of the survey mode, as question characteristics directly affect the interviewer’s and respondent’s cognitive processing, influencing their behavior, their interaction, and the survey answer ( 11 ).

Furthermore, responding to survey questions involves more than an immediate understanding of the question; interpreting it requires a great deal of complex cognitive processing. Cognitive psychologists have identified four main stages of cognitive processing involved in answering survey questions: (1) perceiving and comprehending the question, (2) searching memory for relevant information, (3) assigning meaning to the underlying representation of the question and integrating the retrieved memories with it, and (4) selecting from the provided response options ( 4 , 5 , 10 , 12 ). Errors can occur at any of these stages, and questionnaire design can directly influence all of them by making questions easier or harder to comprehend, posing recall challenges, using vague, difficult, or biased wording, and offering vague and/or complex response options. Therefore, the direct interaction between a respondent’s cognitive processing and question characteristics provides a rationale for examining survey framing effects in the travel barrier literature.

Question characteristics involve several aspects, including the broad question topic (travel barriers, in our case), question type (e.g., multiple choice, Likert scale), response format and dimensions, question structure (e.g., filter and follow-up questions), question wording (e.g., length, readability, term ambiguity, double-barreled questions, and grammatical complexity), and question specifications (task instructions, definitions, examples, parenthetical statements, reference periods). System-based approaches, such as the Problem Classification Coding Scheme (CCS), Question Appraisal System (QAS), Question-Understanding Aid, or Survey Quality Predictor (SQP), use these dimensions to assess the quality of questionnaires ( 11 ). In this study, we used the CCS ( 13 , 14 ), which identifies 28 survey problems spread across the four cognitive stages of answering questions ( 4 ). This allowed us to examine how frequently problems occur during each of the four stages of responding to TRSE questionnaires on travel barriers. The Findings section presents the results of this examination. We also present a list of good question framing examples based on the CCS in Appendix B.

An Overview of the Problem Classification Coding Scheme

The CCS, developed by Rothgeb and colleagues ( 14 ), is a qualitative system designed to categorize questionnaire issues using the four-stage cognitive response model proposed by Tourangeau et al. ( 4 , 14 ). The CCS organizes codes into three hierarchical levels. The highest level, the core stage, follows Tourangeau and colleagues’ model with four stages: (1) comprehension, (2) retrieval, (3) judgment, and (4) response selection. Each core stage is further subdivided into mid-level subdivisions and lowest-level problem categories, with the lowest level drawing on the problem types identified in the QAS ( 15 ). By integrating cognitive science principles, the CCS serves as a versatile, flexible, and detailed tool for diagnosing questionnaire issues. System-based approaches such as the CCS, QAS, and SQP have been found to be effective in evaluating the quality of survey questionnaires ( 16 , 17 ). We selected the CCS over the QAS owing to its alignment with cognitive science principles, and over the SQP because the SQP is limited to specific research topics and cannot evaluate questionnaires focused on transportation equity ( 13 , 14 ).
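The three-level hierarchy can be represented as a nested mapping from core stage to mid-level subdivision to lowest-level codes. The Python sketch below is illustrative only: it lists just the lowest-level codes discussed in this paper, under informal names rather than the exact wording of Table 1, and it assigns subdivisions to core stages following the subdivision headings used in the next section.

```python
# Illustrative subset of the modified CCS. Code labels are informal names
# taken from the text, not the official wording of Table 1.
CCS = {
    "comprehension": {
        "question content": ["vague/unclear question", "complex topic",
                             "topic carried over from earlier question",
                             "duplicate question"],
        "question structure": ["unclear respondent instructions",
                               "question too long", "complex/awkward syntax",
                               "erroneous assumption", "double-barreled question"],
        "reference period": ["undefined period", "rolling period"],
    },
    "retrieval": {
        "retrieval from memory": ["high detail required", "long recall period"],
    },
    "judgment": {
        "judgment and evaluation": ["complex mental calculation",
                                    "potentially sensitive topic", "leading question"],
    },
    "response selection": {
        "response terminology": ["vague response option", "undefined response term"],
        "response units": ["multiple response dimensions", "mismatching units",
                           "unclear response options"],
        "response structure": ["overlapping categories", "missing categories",
                               "double-barreled response option"],
    },
}


def locate(code: str) -> tuple[str, str]:
    """Return (core stage, mid-level subdivision) for a lowest-level code."""
    for stage, subdivisions in CCS.items():
        for subdivision, codes in subdivisions.items():
            if code in codes:
                return stage, subdivision
    raise KeyError(f"unknown CCS code: {code!r}")


print(locate("rolling period"))  # ('comprehension', 'reference period')
```

Storing only the lowest-level codes and deriving the higher levels by lookup keeps each coded problem traceable to its cognitive stage, which is what allows counts to be rolled up by subdivision or stage.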

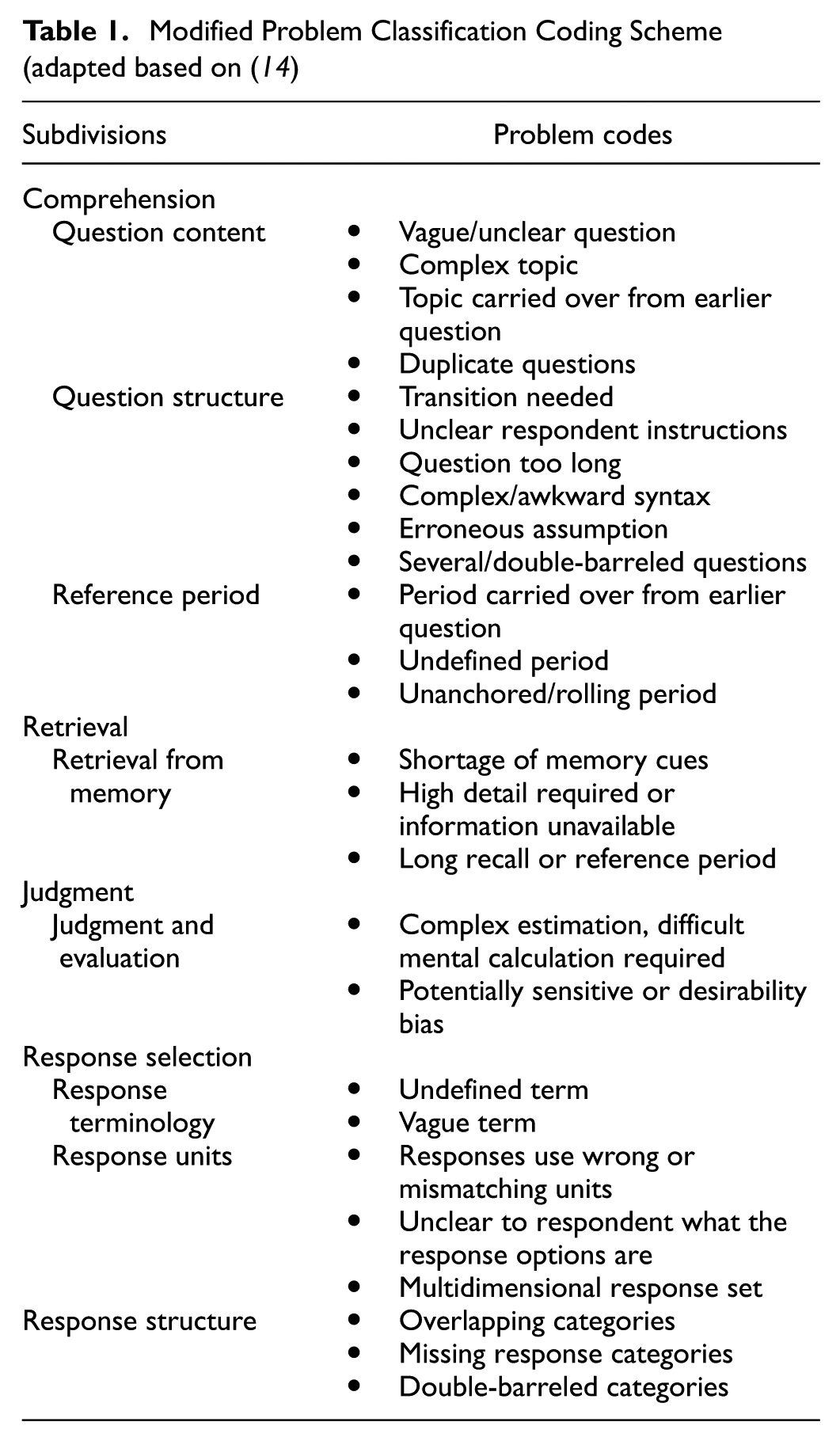

Among the 28 lowest-level problem categories in the CCS, three address interviewer-related problems, whereas the remaining 25 focus on respondent difficulties. In this paper, we concentrate on survey framing effects for the reasons explained earlier and therefore focus on respondent challenges only. We adjusted the original CCS proposed by Rothgeb et al. to reduce redundancy and address gaps ( 14 ). Specifically, we merged “vague/unclear question” and “vague term” into a single category under “comprehension,” as vague terminology essentially results in overall question ambiguity. Additionally, we introduced a category for double-barreled response options under “response selection.” Table 1 presents the modified coding scheme used in this study.

Table 1. Modified Problem Classification Coding Scheme, adapted from Rothgeb et al. ( 14 )

We now briefly explain the problems within each subdivision in relation to TRSE.

Question Content

“Vague/unclear question” refers to a question that can be interpreted in several ways, leaving it unclear what should be included or excluded ( 15 ). A question can also be vague if it contains familiar terms that are not clearly defined ( 15 ). Whereas a vague/unclear question involves a general lack of clarity in otherwise common words, “complex topic” indicates the use of technical or complex topics or terms that might be too complicated for respondents, without the question defining or explaining them ( 13 , 15 ). “Topic carried over from earlier question” can be problematic if the transition or link between questions is not clear, especially for people with literacy issues or low cognitive capacities, who are also more likely to experience travel barriers and social exclusion. “Duplicate questions” occur when multiple questions in the questionnaire cover almost the same topic, concept, or query. Duplication increases the response burden on respondents, which can lower response rates and/or increase the number of “Don’t Know” and “Refused” answers ( 28 ). Furthermore, duplicate questions can create redundancy and reduce the reliability of statistical models through overestimation or underestimation of the effects of different variables. However, researchers sometimes accept these risks and include duplicate questions as attention checks.

Question Structure

Questions should also have appropriate and smooth transitions when switching topics or introducing sensitive topics. A lack of a proper transition can create confusion for the respondents owing to the abrupt change in topic. This can also create fatigue or stress for a respondent who is answering a long questionnaire or one that includes sensitive topics. This is particularly important in TRSE surveys because respondents may have literacy issues, low cognitive capacities (e.g., older adults, people with disabilities, children), or face challenges from language barriers (i.e., responding to the questionnaires in their nonnative language).

Questions can sometimes have confusing, complex, or conflicting instructions ( 13 , 15 ). A common example is a lack of clarity about what to include or exclude. For instance, the question “What is your annual income?” does not specify whether it refers to individual or household income, or to before- or after-tax income. Such unclear instructions can lead respondents to misunderstand the question and different respondents to interpret the same question differently. Many respondents might not even know the exact figure, as low-wage workers, who frequently experience TRSE, are also often paid weekly, biweekly, or intermittently.

Questions can also be too lengthy or contain complex, awkward, or ungrammatical syntax, which can impair readability and comprehension ( 13 , 15 ). Erroneous assumptions occur when a question makes an inappropriate assumption about the respondent that affects the question’s relevance to them, or assumes constant behavior when the behavior is, in fact, variable ( 15 ).

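Double-barreled questions cover more than one implicit topic or idea but allow only one answer, for example, “How satisfied are you with the cost and reliability of your bus service?” Responses to such questions are often ambiguous because it is unclear which of the ideas the answer refers to ( 1 , 2 , 15 ).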

Reference Period and Retrieval from Memory

Reference period and retrieval from memory are closely related, so we discuss them together. The choice of reference period is crucial in any TRSE survey, including those addressing travel barriers, because not all types of travel barriers are equally severe or occur with the same frequency. For example, a high transit fare might be a pressing yet constant travel barrier for many individuals; as a result, they are less likely to remember every instance of dealing with it. On the other hand, a car breakdown or a road accident is a more salient event that is likely to occur less frequently, so individuals might recall such occurrences more easily. Patterned barriers might also be recalled easily. For example, if the bus is always late when an individual goes to work at 8 a.m., this information is more likely to be encoded in memory and retrieved easily. Therefore, the choice of reference period depends on how patterned, severe, and distinctive the behavior or barrier is ( 18 – 20 ).

While respondents might recall that an incident occurred, they might still not accurately remember when it happened, because the date of an incident is one of its least accurately remembered features and memories become less precise over time ( 18 ). This makes the choice of reference period even more crucial: even when respondents remember significant events (e.g., road accidents), they might struggle to recall accurately when they happened. Research also suggests that severe but less frequent events tend to be recalled as having taken place more recently than they actually did. This phenomenon, called “forward telescoping,” leads to overreporting ( 18 , 21 ). The opposite phenomenon, “backward telescoping,” leads to underreporting, as respondents recall recent events as having happened longer ago than they actually did ( 18 , 21 ).
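A small worked example shows how forward telescoping turns into overreporting once a fixed reference period is applied. The interview date, event dates, and recalled dates below are invented for illustration and are not data from the reviewed studies; the window is assumed to be the 30 days ending on the interview date.

```python
from datetime import date, timedelta


def count_in_window(dates, start, end):
    """Count dates falling in the reference period (start, end]."""
    return sum(start < d <= end for d in dates)


# Hypothetical interview on 2024-06-30 asking about "the past 30 days".
interview = date(2024, 6, 30)
window_start = interview - timedelta(days=30)

# (actual date, recalled date) for three salient events. The second event
# happened before the window but is remembered as more recent than it was;
# the third is also telescoped forward but still falls outside the window.
events = [
    (date(2024, 6, 20), date(2024, 6, 20)),
    (date(2024, 5, 15), date(2024, 6, 10)),
    (date(2024, 4, 2), date(2024, 4, 25)),
]

true_count = count_in_window([actual for actual, _ in events], window_start, interview)
reported_count = count_in_window([recalled for _, recalled in events], window_start, interview)
print(true_count, reported_count)  # 1 2
```

Only one event actually fell in the reference period, but the respondent reports two, because a telescoped event has been pulled across the window boundary.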

Bounded recall, or anchoring, in which specific reference points in the question define the reference period, is often recommended to minimize telescoping ( 20 – 22 ). Showing respondents a calendar with the reference period marked has also been found to improve reporting accuracy by providing an additional visual aid ( 20 , 23 ). Previous research also suggests that longer recall periods are preferable for more salient events, whereas shorter periods should be used for frequently occurring events ( 24 ).

Judgment and Evaluation

Questions can strain respondents’ working memory if they require complex mental calculations ( 13 , 15 ). To answer such questions, respondents first need to recall an array of relevant information, hold it in short-term memory, and then perform the calculation. This differs from recall error in that the primary challenge is not recalling the information but accurately processing its different pieces.
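To make this burden concrete, consider the annual income question discussed under Question Structure. A respondent paid per pay period must multiply pay by the number of periods in a year, and a respondent with intermittent earnings must recall and add up every payment. The Python sketch below spells out that arithmetic; the period counts (52 weekly, 26 biweekly, 12 monthly) are standard calendar conventions, and the amounts are hypothetical.

```python
# Pay periods per year for regular schedules (calendar conventions).
PERIODS_PER_YEAR = {"weekly": 52, "biweekly": 26, "monthly": 12}


def annual_income(schedule: str, amount_per_period: float) -> float:
    """Annualize a regular paycheck: the step a respondent must do mentally."""
    return amount_per_period * PERIODS_PER_YEAR[schedule]


def annual_income_irregular(payments: list[float]) -> float:
    """Intermittent pay: every payment in the year must be recalled and summed."""
    return sum(payments)


print(annual_income("weekly", 480))                       # 24960
print(annual_income("biweekly", 960))                     # 24960
print(annual_income_irregular([1200.0, 850.0, 2300.0]))   # 4350.0
```

Each function is one line of code but several steps of recall plus arithmetic for a respondent, which is why a question that could instead ask for pay per period, with the conversion done at analysis time, places a lighter load on working memory.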

Questions can also introduce potentially sensitive topics that might be uncomfortable for respondents. The nature of the research may often require asking about sensitive issues. However, this becomes problematic if the questions use offensive or judgmental language or create unnecessary emotional burden ( 15 ). Potentially sensitive topics should also be preceded by transition sentences so that respondents can ease into the topic gradually instead of facing the questions abruptly. Such topics include, but are not limited to, experiences of harassment during travel, mental health and well-being related to TRSE, and even income, as individuals experiencing TRSE might feel uneasy revealing their unemployment or low-income status. Furthermore, questions are sometimes framed to elicit a specific desired answer with which respondents may not actually agree ( 25 , 26 ). Such leading questions can invoke the “bandwagon fallacy,” implying that answering in a certain way or choosing a particular option is desirable and makes respondents feel smart, popular, or socially acceptable ( 15 ). The opposite can also happen if questions are framed in a way that makes respondents feel unintelligent or unpopular unless they answer in a certain way ( 26 ).

Response Terminology

Similar to a vague/unclear question, a vague response option is not well-defined and can therefore be interpreted in multiple ways. Undefined response terms are technical terms in the response categories that are not defined but should be.

Response Units

Asking questions with multiple response dimensions can pose comprehension difficulties for respondents. An example is asking about both the frequency and the intensity of a barrier within the same response category. Such questions should be broken down into separate questions ( 27 ). Another problem with response units occurs when wrong or mismatching units are used or units change between questions (e.g., asking for answers in kilometers in one question and in miles in another). Respondents are also likely to struggle if it is unclear what the response options are, for example, when a Likert scale has missing or inconsistent scale definitions or when the unit is not specified.
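Some unit problems can be screened mechanically once each item’s requested unit is recorded. The Python sketch below assumes a hypothetical item list in which each question records the quantity it measures and the unit it asks for (None when no unit is stated); it flags items with no unit and quantities asked in more than one unit, as in the kilometers-versus-miles case. Problems such as multiple response dimensions still require human judgment.

```python
from collections import defaultdict

# Hypothetical questionnaire items; "unit" is None when the question does not
# say which unit the answer should be given in.
items = [
    {"id": "Q5", "measure": "distance", "unit": "km"},
    {"id": "Q9", "measure": "distance", "unit": "miles"},
    {"id": "Q12", "measure": "distance", "unit": None},
    {"id": "Q14", "measure": "duration", "unit": "minutes"},
]


def unit_problems(items):
    """Return (items with no unit, measures requested in more than one unit)."""
    missing = [it["id"] for it in items if it["unit"] is None]
    units_by_measure = defaultdict(set)
    for it in items:
        if it["unit"] is not None:
            units_by_measure[it["measure"]].add(it["unit"])
    mixed = {m: sorted(u) for m, u in units_by_measure.items() if len(u) > 1}
    return missing, mixed


print(unit_problems(items))  # (['Q12'], {'distance': ['km', 'miles']})
```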

Response Structure

Overlapping response categories are categories that are not mutually exclusive (for example, 1 to 5 km, 5 to 10 km, 10 to 15 km, where a 5 km trip fits two categories). Missing response categories, on the other hand, occur when the provided categories do not cover all possible answers.
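Both structural problems can be checked automatically for numeric categories. The Python sketch below assumes that each category is read as inclusive at both ends (as respondents are likely to read “1 to 5 km”) and that the scale is continuous from a stated lower bound (0 here) upward with no fixed maximum. Applied to the example categories, it flags overlaps at 5 km and 10 km and, if trips can be shorter than 1 km or longer than 15 km, missing categories at both ends.

```python
import math


def check_categories(categories, lower=0.0, upper=math.inf):
    """Find overlaps and gaps among numeric response categories.

    Each category is a (low, high) pair read as inclusive at both ends. The
    scale is treated as continuous over [lower, upper].
    """
    overlaps, gaps = [], []
    covered_to = lower   # highest value covered by the categories seen so far
    widest = None        # the earlier category that reaches covered_to
    for low, high in sorted(categories):
        if widest is not None and low <= covered_to:
            overlaps.append((widest, (low, high)))  # low lies inside an earlier range
        elif low > covered_to:
            gaps.append((covered_to, low))          # values in between have no option
        if high > covered_to:
            covered_to, widest = high, (low, high)
    if covered_to < upper:
        gaps.append((covered_to, upper))            # nothing above the last category
    return overlaps, gaps


print(check_categories([(1, 5), (5, 10), (10, 15)]))
# ([((1, 5), (5, 10)), ((5, 10), (10, 15))], [(0.0, 1), (15, inf)])
```

The inclusive reading is an assumption; wording the categories as “less than 5 km” and “5 km to less than 10 km,” plus an open-ended top category, removes both the overlaps and the gaps.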

Similar to double-barreled questions, double-barreled response options cover more than one implicit subject within one option. Both double-barreled questions and response options should be broken down into multiple distinct questions or response categories that cover only one topic.

Methodology

This paper reports the results from a broad scoping review of surveys used in the TRSE literature. As such, this study is exploratory in nature and aims to better understand the landscape of the growing TRSE literature. Our preliminary scoping research led us to consider any paper that addressed at least one of the following six topics relating to TRSE: (i) suppressed travel (involuntarily unrealized travel leading to social exclusion), (ii) travel barriers (factors preventing travel or making travel substantially challenging), (iii) activity participation (low activity participation as a form of social exclusion), (iv) life outcomes (impacts of suppressed travel and travel barriers on socioeconomic and well-being outcomes), (v) travel satisfaction (the presence or absence of experienced satisfaction during travel), and (vi) mobility aspirations (travel preferences and desire to move freely and without significant barriers). This approach proved necessary because many surveys reporting on mobility barriers are framed in relation to other interrelated topics without using the keyword “barrier” in their metadata ( 28 ). Reviewing travel barrier studies under a broader TRSE umbrella therefore gave us comprehensive coverage of a literature in which concepts and terminologies are often not strictly defined, frequently overlap, or both. The more common approach of searching for exact keywords pertaining to the research problem would not have been helpful in this case.

Our review includes papers published on or after January 1, 2000, as this marks the publication date of the earliest academic literature that we identified on TRSE ( 29 ). We experimented with different keyword search configurations across various databases and visualized the keyword networks using VOSviewer software. From these tests, we decided to use the Web of Science and Scopus databases to search for relevant studies. Specifically, we focused on peer-reviewed journal articles, books, and conference proceedings published in English from January 2000 to January 2022. To be included in this initial search, a study needed to contain at least one word pertaining to equity or social exclusion, one word pertaining to transportation or travel, and one word pertaining to survey methodology in the metadata (title, keywords, or abstract) ( 28 ). The complete list of keywords is provided in Appendix A.
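The three-part inclusion rule is a conjunction of disjunctions: a record qualifies only if each keyword group contributes at least one match somewhere in its metadata. The Python sketch below uses a few placeholder terms per group in place of the actual lists in Appendix A, and simple case-insensitive substring matching in place of the databases’ own query syntax; both are assumptions for illustration.

```python
# Placeholder keyword groups; the actual search terms are listed in Appendix A.
EQUITY = {"equity", "social exclusion", "justice", "disadvantage"}
TRANSPORT = {"transport", "travel", "mobility"}
SURVEY = {"survey", "questionnaire"}


def matches_search(title: str, keywords: str, abstract: str) -> bool:
    """True if the metadata contains at least one term from every group."""
    text = " ".join([title, keywords, abstract]).lower()
    return all(any(term in text for term in group)
               for group in (EQUITY, TRANSPORT, SURVEY))


print(matches_search("Transport disadvantage among older adults", "",
                     "We analyse a household travel survey."))   # True
print(matches_search("Cycling infrastructure and travel time", "",
                     "A network analysis."))                      # False
```

Substring matching lets “transport” also catch “transportation,” roughly as a truncation wildcard would in a database query, but it can over-match, which is one reason the screened records still required manual review.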

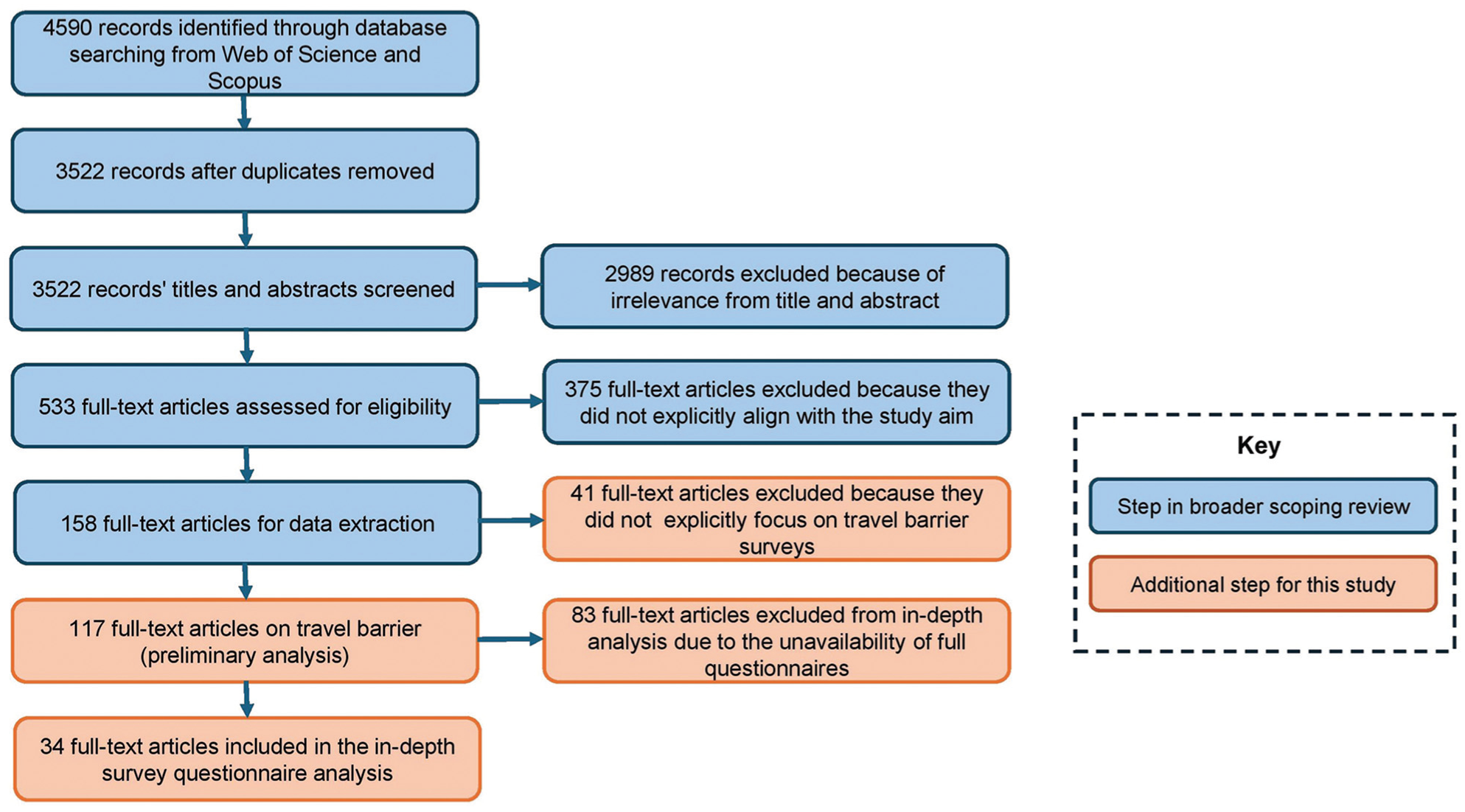

Our initial search led us to 4,590 studies. Eliminating duplicates reduced the number of studies in the review to 3,522 (Figure 1). We used the following inclusion criteria to select the studies that would be advanced to a full-text review:

The study must have examined one or more of the following concepts as the outcome variable: transport poverty, TRSE, mobility poverty, accessibility poverty, mobility justice, transportation justice, transportation equity, transportation inequity, transportation insecurity, transportation barrier, transportation disadvantage, suppressed travel, satisfaction with transport, mobility aspirations. It is essential to clarify that we focused on these underlying concepts rather than specific keywords since these concepts are often represented by similar or synonymous terms.

The study must have conducted or used a quantitative questionnaire survey.

The study must have been published in English in peer-reviewed journal articles, books, or conference proceedings between January 2000 and January 2022.

The study can include participants of any sociodemographic or economic group.

Two research assistants reviewed the 3,522 titles and abstracts for inclusion using the systematic review software Covidence. Each abstract was reviewed by both research assistants, who agreed 88% of the time. A co-author conducted an additional check to confirm that the papers identified by the research assistants met the inclusion criteria. For the remaining 12% of cases, where one assistant wanted to include an abstract and the other did not, a co-author made the final decision. At this stage, many papers did not focus on any of the six TRSE-related topics. Examples include studies that examined only the spatial patterns of inequities in accessibility without studying their social outcomes, focused on the spiritual and leisure values of travel, or described the sociodemographic profiles of specific transportation mode users without shedding light on TRSE. Furthermore, studies relating to physical mobility and rehabilitation, mobility relating to competitive or recreational sports, and e-commerce-related travel were not relevant to our study goal. We therefore excluded these papers from further analysis, reducing the number of studies to 533 ( 28 ).
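The 88% figure is simple percent agreement: the share of abstracts on which both assistants made the same include/exclude decision, with the disagreements passed to a co-author. The Python sketch below computes it on ten hypothetical decisions and, for comparison, Cohen’s kappa, a chance-corrected statistic that this study does not report.

```python
def percent_agreement(a, b):
    """Share of records on which two screeners made the same decision."""
    if len(a) != len(b) or not a:
        raise ValueError("need two non-empty decision lists of equal length")
    return sum(x == y for x, y in zip(a, b)) / len(a)


def cohens_kappa(a, b):
    """Chance-corrected agreement (Cohen's kappa); not reported in this study."""
    p_o = percent_agreement(a, b)
    n = len(a)
    # Expected agreement if both screeners decided independently at their own rates.
    p_e = sum((a.count(k) / n) * (b.count(k) / n) for k in set(a) | set(b))
    # Kappa is undefined when p_e == 1; returning 1.0 here is a convention we assume.
    return 1.0 if p_e == 1 else (p_o - p_e) / (1 - p_e)


# Hypothetical include (I) / exclude (E) decisions on ten abstracts.
ra1 = list("IIEEEEEEEI")
ra2 = list("IEEEEEEEEI")
disagreements = [i for i, (x, y) in enumerate(zip(ra1, ra2)) if x != y]

print(percent_agreement(ra1, ra2))       # 0.9
print(round(cohens_kappa(ra1, ra2), 2))  # 0.74
print(disagreements)                     # [1] -> passed to a co-author
```

When most abstracts are excluded, two screeners agree often by chance alone, so kappa is lower than raw agreement; reporting both gives readers a fuller picture of screening reliability.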

Figure 1. Flowchart of the methodology.

The authors conducted a full-text review of the 533 papers. During this review, we excluded papers that either (a) did not address one of the six dimensions of TRSE listed above or (b) did not align with our study’s goal of evaluating surveys. Excluded studies included those that focused on travel behavior or calculated accessibility indices for different sociodemographic groups while mentioning TRSE concepts only as background information or in policy recommendations, and those that used other data collection methods (e.g., interviews and focus group discussions). By the end of the review process, 158 studies remained for data extraction. The authors then extracted data from these papers, including information on geography, year, journal, referenced or analyzed modes, target populations, and which of the six TRSE topics the paper addressed. Papers could be coded as addressing multiple topics ( 28 ). The focus of this paper is surveys in the TRSE literature on travel barriers. In a parallel study, we reviewed surveys in the TRSE literature that measure suppressed travel and unmet travel needs ( 28 ).

Of the 158 TRSE studies, 117 focused on examining travel barriers. These studies examined one or more of the following barrier types: travel mode (design, engineering, and practical usability of the mode of transportation), safety, digital barriers, cost, travel time (when the amount of time spent traveling using a particular mode or non-travel-time-related pressures do not allow an individual to use that mode conveniently), services/scheduling (lack of appropriate service in places, times, and manners needed for the respondents to travel comfortably and safely), interactional (behavior of the people with whom an individual needs to directly or indirectly interact while traveling), personal/attitudinal, built environment/physical (occurs in human-made infrastructures or modified physical spaces of the environment that encompass the transportation system), cognitive/informational (lack of availability and accessibility of the information required to travel), cultural/social norms (e.g., gender norms and active travel in some communities), natural barriers (weather, terrain, natural water bodies), and other barriers not covered by the abovementioned types (e.g., pollution) ( 30 ).

Fewer than five papers included the full survey questionnaires. To gain preliminary insight into the quality of the survey questions, the authors extracted travel-barrier-related questions from papers with available questionnaires and inferred questions from the methodology and findings (e.g., tables and charts) when full questionnaires were unavailable. The authors coded the questionnaire design issues as previously discussed (Table 1). To ensure consistency, every code assigned by one author was verified by two others. Owing to the limited availability of actual questionnaires, system-based approaches could not be fully applied at this preliminary stage. Nevertheless, this initial analysis provided valuable insights into the prevalence of questionnaire design issues, including vague or unclear wording, undefined or rolling periods, long recall periods, double-barreled questions, and problematic response categories.

Our initial analysis identified potential questionnaire design issues in the travel barrier studies that merited further exploration. Accordingly, we emailed the authors of these studies to request the full questionnaires used in their research. Through this process, we collected 29 full questionnaires used in 34 studies. The remaining questionnaires could not be collected because of insufficient author contact information in the papers, lack of response from the authors, outdated email addresses (most likely caused by affiliation changes), and restrictions on sharing imposed by external collaborators or funders. We also excluded questionnaires provided by the authors that were not in English. This process gave us coverage of 29% (34 of 117) of the travel barrier studies. We then evaluated the quality of these questionnaires using the CCS, as discussed in the previous section ( 13 , 14 ). We evaluated all questions relating to travel preferences, barriers, behavior, and vehicle ownership. In line with best practices, we treated each statement or item in a matrix-type question as a separate question.
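Two pieces of bookkeeping from this step can be made explicit: the coverage figure (34 of the 117 travel barrier studies) and the expansion of matrix-type questions into separate items before coding. The Python sketch below illustrates both; the matrix question and its statements are hypothetical, and the item-ID scheme (“Q7.1,” “Q7.2”) is an assumption for illustration.

```python
def expand_matrix(question_id, stem, statements):
    """Split a matrix question into one codable item per statement."""
    return [(f"{question_id}.{i}", f"{stem} {text}")
            for i, text in enumerate(statements, start=1)]


# Hypothetical agreement matrix with two statements.
items = expand_matrix(
    "Q7",
    "How much do you agree with the following statement?",
    ["The bus stop is close to my home.", "Buses run often enough for my needs."],
)
for item_id, wording in items:
    print(item_id, wording)

# Coverage of the travel barrier studies: 34 of 117.
print(f"{34 / 117:.0%}")  # 29%
```

Counting each statement separately means a single matrix with ten statements contributes ten questions to the denominator, which keeps problem rates comparable between questionnaires that use matrices and those that ask items one at a time.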

Findings

Our preliminary analysis of 117 full-text articles indicated that travel barrier surveys frequently use vague and generalized words and phrases that different respondents can interpret differently. We also found potential issues with the temporal basis or frame of reference of the questions. Some questions had excessively long recall periods, making it unlikely that respondents could accurately remember details. Vague frequency terms such as “often,” “typical,” “regularly,” and “usually” were also widely used.

Our preliminary analysis further suggested that researchers use double-barreled questions and response options, duplicate questions and response options, and leading questions with loaded connotations that might influence respondents’ perceptions. This preliminary analysis thus pointed to certain potential questionnaire design issues in TRSE surveys on travel barriers that required further appraisal. We discuss these and other issues in more detail below, applying the CCS to the 29 full questionnaires.

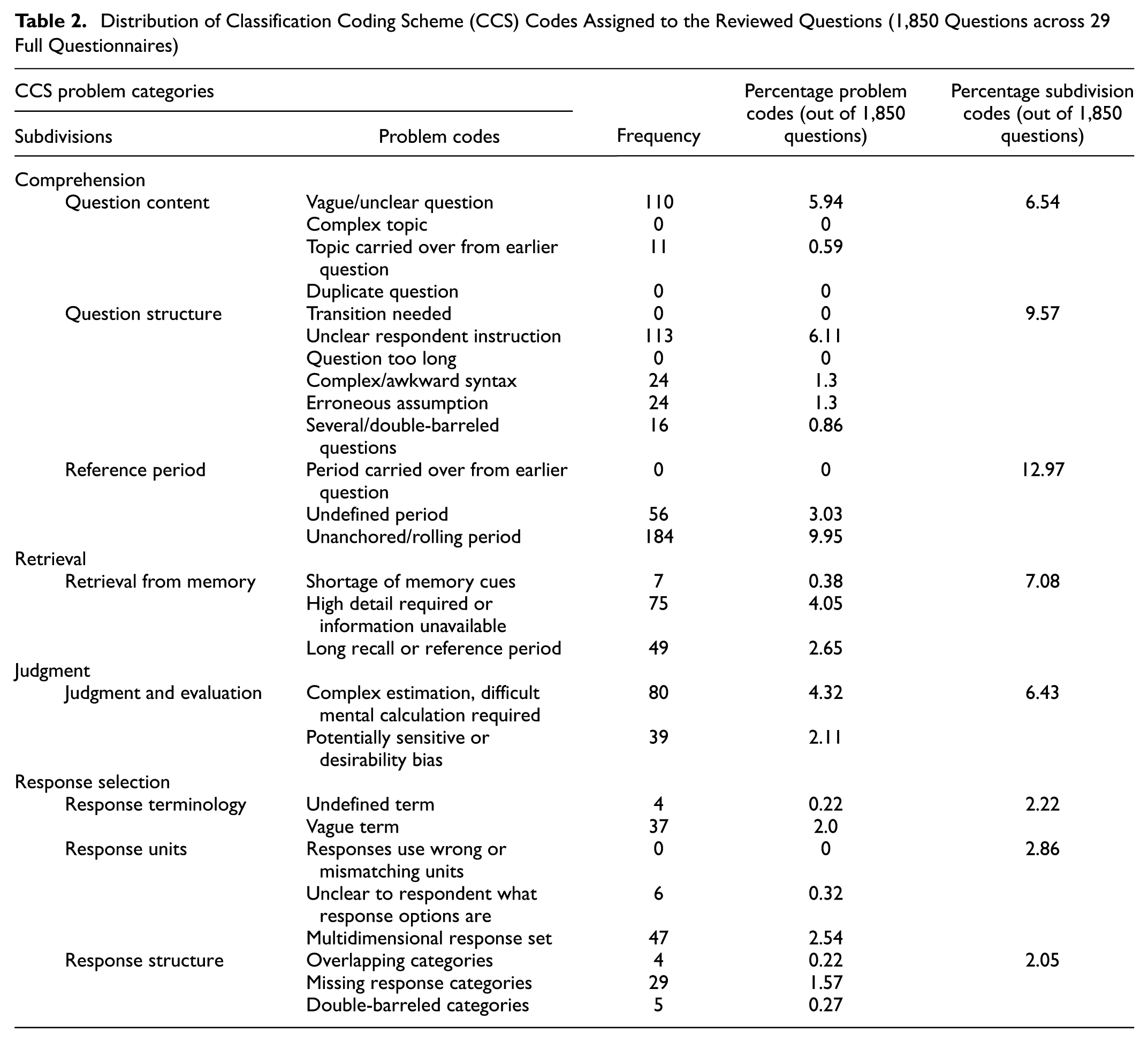

In-Depth Appraisal Using CCS: 29 Questionnaires

In this section, we discuss our findings on the 29 full questionnaires using CCS. Table 2 illustrates the frequency of the problem codes appearing in the reviewed questionnaires. Overall, we identified 920 problems across 1,850 questions with an average of 0.5 lowest-level problem codes per question. Over 32% of the questions had at least one problem.

Table 2. Distribution of Problem Classification Coding Scheme (CCS) Codes Assigned to the Reviewed Questions (1,850 Questions across 29 Full Questionnaires)

Table 2 reveals that a small number of codes accounted for most of the identified problems. Specifically, six issues (vague or unclear questions, unclear respondent instructions, undefined periods, rolling periods, high detail requirements, and complex mental calculations) represented over two-thirds of all identified problems. At the mid-level, issues relating to reference periods, question structure, and question content made up over 58% of the assigned codes. Notably, most of the lowest-level problem codes and all the mid-level subdivision codes contributing to the majority of problem codes were classified under the core “comprehension” stage. These findings suggest that the reviewed TRSE surveys on travel barriers were prone to questionnaire design issues that were likely to have hindered respondents’ ability to understand the questions accurately and easily.
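Statements such as “six issues represented over two-thirds of all problems” come from sorting code frequencies and accumulating their shares, and stage-level shares come from summing codes within each core stage. The Python sketch below shows both calculations on hypothetical frequencies; the study’s actual counts are in Table 2, and the stage mapping covers only the example codes.

```python
from collections import Counter

# Hypothetical code frequencies; the study's actual counts are in Table 2.
counts = Counter({
    "vague/unclear question": 40,
    "undefined period": 25,
    "unclear respondent instructions": 15,
    "high detail required": 10,
    "overlapping categories": 6,
    "duplicate question": 4,
})
# Core CCS stage of each example code (this mapping covers these codes only).
STAGE = {
    "vague/unclear question": "comprehension",
    "undefined period": "comprehension",
    "unclear respondent instructions": "comprehension",
    "duplicate question": "comprehension",
    "high detail required": "retrieval",
    "overlapping categories": "response selection",
}


def codes_for_share(counts, target):
    """Fewest most-frequent codes whose combined share reaches `target`."""
    total = sum(counts.values())
    chosen, running = [], 0
    for code, n in counts.most_common():
        chosen.append(code)
        running += n
        if running / total >= target:
            break
    return chosen, running / total


def share_by_stage(counts, stage_of):
    """Proportion of all assigned codes falling in each core stage."""
    total = sum(counts.values())
    by_stage = Counter()
    for code, n in counts.items():
        by_stage[stage_of[code]] += n
    return {stage: n / total for stage, n in by_stage.items()}


print(codes_for_share(counts, 2 / 3))
# (['vague/unclear question', 'undefined period', 'unclear respondent instructions'], 0.8)
print(share_by_stage(counts, STAGE))
# {'comprehension': 0.84, 'retrieval': 0.1, 'response selection': 0.06}
```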

CCS Codes in the Context of Travel Barrier Surveys

In this section, we use example questions from the full questionnaires to illustrate each type of problem and discuss in detail why their framing is problematic.

Question Content

Q1: “In a typical month, how often do you have transportation problems?” ( 31 )

Q1 is an example of a vague or ambiguous question, and it raises several issues. First, as previous literature suggests, a month can be too long a period for respondents to reliably recall frequently experienced problems, and it is unclear whether the question refers to a calendar month or to a month as a unit of time (i.e., 30 days). Second, “transportation problems” is a broad theme that might require respondents to consider all the problems they encounter while using transit, biking, walking, or driving. Finally, words like “typical” can be particularly problematic in travel behavior or barrier studies because people’s travel behavior and experiences can fluctuate throughout the year owing to work schedules, nonwork responsibilities, and seasonal changes (e.g., cycling in the winter months involves different barriers than cycling in summer, and the school season might mean different travel habits and challenges for parents than the nonschool season). There might therefore simply be no single “typical” or “usual” month. Moreover, deciding what constitutes a “typical” month across all possible transportation problems requires processing a great deal of complex information at once. Such ambiguities make it virtually impossible to determine how each respondent interpreted the question, and the resulting responses may involve significant recall bias.

Q2: “How far do you agree that you cycle because it is flexible? Completely agree/Agree/Neither agree nor disagree/Disagree/Completely disagree” ( 32 ).

The word “flexible” in this question is vague because the question does not specify what the flexibility relates to. Flexibility can encompass diverse aspects for different respondents: for some it can mean being able to make a trip whenever they want, whereas for others it might mean being able to make multiple stops on the way.

Q3: “In the past few months, how often have you traveled to or from WORK AT NIGHT? (After 9:30 p.m. on weekdays; 7 p.m. Saturdays; and 6:30 p.m. on Sundays.)” ( 33 ).

“Past few months” is not only an unanchored/rolling period; there is also no single way to define “few months.” Some respondents might consider 3 months to be “few,” whereas others may consider 5 or 6 months to be “few.” Furthermore, traveling “to or from” work is not clearly defined in this question. If a respondent travels both to and from work at night, should they count it as one trip or two?

Question Structure

Q4: “Currently, how many trips do you make in a day?” ( 34 )

This question makes an inappropriate assumption of constant behavior ( 15 ): that respondents make the same number of trips every day of the week or month.

Q5: “How many days in the past month or so (20 workdays) did you go to work by: If none, put ‘0’

a. ___ days Walking

b. ___ days Biking

c. ___ days Driving alone

d. ___ days Carpool driver

e. ___ days Carpool passenger

f. ___ days Vanpool

g. ___ days Bus

h. ___ days Taxi

i. ___ days Subway/Trolley” ( 35 ).

This question provides some form of bounded recall by specifying “20 workdays” and asks only about trips to the workplace. However, it does not provide any instructions on how to count multimodal trips. For example, if a respondent walks to a bus stop, rides the bus, and then walks to work, should they count walking twice and bus once, or only the mode used for the longest distance?
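To illustrate how much the counting rule matters, the following Python sketch applies both interpretations raised above to the same set of commute records. The records, distances, and function names (`count_every_leg`, `count_main_mode`) are hypothetical and invented for illustration; they are not drawn from the reviewed questionnaire.

```python
from collections import Counter

# Hypothetical trips to work on three workdays; each leg is (mode, distance_km).
# Mode names mirror Q5's response rows; the distances are invented.
workdays = [
    [("Walking", 0.4), ("Bus", 6.0), ("Walking", 0.3)],  # walk-bus-walk
    [("Walking", 0.4), ("Bus", 6.0), ("Walking", 0.3)],  # walk-bus-walk
    [("Driving alone", 7.5)],                            # single-mode trip
]


def count_every_leg(days):
    """Interpretation A: every leg adds one to its mode (walking counted twice)."""
    counts = Counter()
    for legs in days:
        for mode, _distance in legs:
            counts[mode] += 1
    return counts


def count_main_mode(days):
    """Interpretation B: each day adds one only to its longest-distance mode."""
    counts = Counter()
    for legs in days:
        main_mode, _distance = max(legs, key=lambda leg: leg[1])
        counts[main_mode] += 1
    return counts


if __name__ == "__main__":
    a = count_every_leg(workdays)
    b = count_main_mode(workdays)
    print("A:", dict(a), "total =", sum(a.values()))
    print("B:", dict(b), "total =", sum(b.values()))
```

Under the first interpretation, the two walk-bus-walk days produce four walking “days” in a three-day record, so reported totals can exceed the number of workdays; under the second, walking disappears entirely. Both rules are defensible, which is why the question itself needs to state one.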

Q6: “Have you ever suffered any negative incidents or have been victim of any crime during the night in Brussels?” followed by “What type? Mugging/Theft/Assault/Harassment/I never suffered/Other” ( 36 ).

Q6 does not clarify whether multiple answers are allowed. As a result, respondents who have experienced multiple negative incidents of different types cannot answer it effectively.

Q7: “When the events occurred, did you file a report with the authorities? Yes/No” ( 37 ).

Similarly, Q7 assumes that a respondent either filed a report after every incident or never filed a report after any incident.

Q8: “Have you already experienced a crash with your e-bike?” followed by “Under which circumstances did this crash occur?” ( 38 ).

Q8 does not indicate which crash is being asked about when a respondent has experienced more than one. These questions lack clarity owing to ambiguous wording, missing instructions, and incorrect assumptions. Similar problems have been discussed by Willis and Lesseler ( 15 ). As a result, they can lead to invalid responses and make it difficult to design appropriate policy and planning interventions.

Q9: “How would you rate the reliability of the train/underground/metro/light rail/tram?

1. Very reliable

2. Fairly reliable

3. Neither reliable nor unreliable

4. Fairly unreliable

5. Very unreliable

6. (No local service)

7. (Do not use)

8. (No opinion/Don’t know)” ( 39 ).

Q9 makes it difficult to rate overall reliability because it groups train, underground, metro, light rail, and tram into a single question. Although all of them are rail modes, the reliability of one might be very different from that of another, and there is no simple way to judge their combined reliability.

Q10: “Overall, how satisfied were you with your typical daily commute before the relocation to the Glen site?” ( 40 ).

Q10 is an example of an erroneous assumption: it assumes that satisfaction with the daily commute was invariant throughout the period before the relocation to the site. However, “before” could mean any time before the relocation, and a respondent might have had different travel behaviors and experiences at different times before it. The question should therefore have a more specific and explicit reference period.

Q11: “If your bike was fitted with active technology, would your risk, compared to other bicycle riders of your age and sex, of being involved in a traffic accident be … much higher/a little higher/virtually the same/a little smaller/much smaller?” ( 32 ).

Q11 has awkward syntax and is difficult to read. A better wording might be “If your bike were fitted with active technology, how would your risk of being involved in a traffic accident compare with that of other bicycle riders of your age and sex?”

Reference Period

Among reference period issues, undefined periods and unanchored or rolling periods appeared most frequently in the reviewed questionnaires. The problem of an undefined period occurs when a question is posed without any specific reference period. This absence can lead different respondents to interpret the question differently. Such questions also carry an implicit and potentially erroneous assumption of constant or invariable behaviors, attitudes, or events.

Q12: “Compared to a fixed-route bus trip (i.e., stop-to-stop without any detours), how much more time do you spend on the bus for an on-demand transit trip, and what was the frequency of these delays?” ( 33 ).

Q12 does not have a specific reference period and erroneously assumes an invariable pattern of delays. For example, an on-demand transit trip might always have taken less time than a fixed-route bus trip in the past 30 days but rarely have done so over the past 90 days. Without a defined reference period, respondents may compare the two types of trips over different self-selected timeframes, leading to inconsistent interpretations.

Q13: “Have you ever missed a clinic appointment because of transportation problems?” ( 31 ).

Q13 illustrates another type of undefined timeframe: terms like “ever” or “lifetime” imply an indefinite, continuous period. Existing literature suggests that such framing often yields unreliable responses owing to recall errors and telescoping effects. This question is particularly prone to both backward and forward telescoping, depending on factors such as how often respondents miss appointments because of transportation issues, the perceived importance of the missed appointments, and how long ago they occurred. Consequently, researchers typically advise against using phrases like “Have you ever …?” or “lifetime” as reference periods ( 18 ).

The next issue is unanchored or rolling periods.

Q14: “How many days in the past month have you walked to the following places from your home or work?” (with 10 types of work and nonwork destinations listed) ( 35 ).

Q14 is an example of an unanchored/rolling period, in which the reference period is poorly defined ( 13 , 15 ). “Past month” can be interpreted in various ways: some respondents might take it to mean the 30 days before the day of the survey, whereas others could treat it as the calendar month before the month of the survey (e.g., June 1 to 30 if the survey is completed on any day in July). The question is also prone to forward and backward telescoping, because respondents are unlikely to recall accurately how many days in a month they walked to each of 10 different types of destination from home or work.
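The two readings of “past month” can be made concrete with a short Python sketch. It assumes, for illustration only, that the rolling reading means the 30 days ending the day before the survey (a respondent could equally include the survey day); the function names are hypothetical.

```python
from datetime import date, timedelta


def rolling_30_days(survey_date):
    """Assumed rolling reading: the 30 days ending the day before the survey."""
    end = survey_date - timedelta(days=1)
    start = survey_date - timedelta(days=30)
    return start, end


def previous_calendar_month(survey_date):
    """Anchored reading: the whole calendar month before the survey month."""
    first_of_this_month = survey_date.replace(day=1)
    end = first_of_this_month - timedelta(days=1)  # last day of previous month
    start = end.replace(day=1)
    return start, end


def overlap_days(a, b):
    """Number of calendar days shared by two inclusive (start, end) windows."""
    start = max(a[0], b[0])
    end = min(a[1], b[1])
    return max((end - start).days + 1, 0)


for d in (date(2024, 7, 1), date(2024, 7, 20), date(2024, 7, 31)):
    r, c = rolling_30_days(d), previous_calendar_month(d)
    print(d, "rolling:", r, "calendar:", c, "shared days:", overlap_days(r, c))
```

Under these assumptions, a survey completed on July 20, 2024, yields windows that share only 11 days (June 20 to 30), and one completed on July 31 yields windows that do not overlap at all. Two respondents answering on the same day could therefore be reporting on entirely different periods.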

Q15: “Over the past year, how many trips did you miss or delay [to (1) Routine health checkups (2) Chronic health care visits (3) Emergency care] because you could not drive or did not have a ride?

None/1–2/3–5/6–10/11–20/More than 20” ( 41 ).

As with “past month” in Q14, different respondents could interpret “past year” in Q15 in different ways (e.g., the past 12 months or the previous calendar year). Moreover, the long recall period makes telescoping likely, especially for routine health checkups and chronic health care visits, which are likely to be more frequent than emergency visits.

Retrieval from Memory

Q16: “How long have you been riding a bicycle? (don’t count riding as a child or teenager if you’ve had a break from cycling of a year or more). Years: Months: Weeks:” ( 42 ).

Problems with retrieval from memory are closely related to long recall periods. For example, Q16 is likely to place a significant cognitive load on respondents given the high level of detail required to answer it, especially if they have been riding for a long time.

Q17: “How often have you used bike share over the past 12 months? (Select one.): Daily/More than once a week/Once a week/Once a fortnight/Once a month/Less often/Never” ( 43 ).

Similarly, accurately recalling bikeshare usage over the past 12 months in Q17 might be challenging for many respondents. Research suggests that longer recall periods lead to more backward telescoping, particularly when the event is considered normal or mundane ( 24 ). Shorter recall periods, on the other hand, lead to more forward telescoping, especially for more significant events ( 21 , 24 ). Together, these findings suggest that regular bikeshare users are more likely to underreport their usage in this question, whereas if the recall period were shortened (e.g., to 6 months), infrequent users would be more likely to overreport theirs. There is no obvious solution, but researchers could take certain steps to minimize recall errors. For example, a much shorter recall period (e.g., 1 or 2 weeks) might reduce recall errors for both frequent and infrequent users. Participants might also be asked to keep a travel diary for a week. Future research might compare travel diaries with responses obtained using a range of recall periods to identify an ideal recall period.

Q18: “Recall all of the trips that you made last Tuesday. Include all short trips and errands, for example, trips to go to lunch or coffee, or a stop at the post office on the way home. Walking to the bus stop, or to a parked car is not considered by itself a trip. Consider round trips as two separate trips. How many trips did you make last Tuesday?” ( 44 ).

Q18 asks about trips made on only one day. Although the short period may facilitate recall, accuracy is likely to vary with how many days have passed since the previous Tuesday when the respondent completes the survey. Moreover, it requires respondents to recall detailed information, including short trips and errands. Compared with longer trips or work trips, participants are more likely to forget or subconsciously ignore short trips (e.g., trips to get coffee). A better approach might have been to provide more memory cues by listing a range of possible trip purposes.

In analyzing potential recall issues, we took into account the type, severity, and frequency of the barrier or incident. For example, whereas we identified the question “How often have you used bike share over the past 12 months?” as posing potential recall issues owing to the long reference period, we do not believe the question “What was the MAIN cause of your MOST severe cycling injury in the last 12 months?” ( 42 ) would pose a similar recall issue, even though the reference period is the same. The difference in the frequency and salience of using bikeshare (more frequent, less salient) and severe cycling injury (less frequent, more salient) means the same reference period has different implications for different types of events or barriers. This distinction is made in line with previous research ( 19 , 24 ).

Judgment and Evaluation

Issues relating to judgment and evaluation occur when questions require respondents to perform complex mental calculations or involve loaded connotations that could create social desirability bias.

Q19: “The following aspects could discourage bike use; how much do you think they influence?” ( 34 ).

For instance, Q19 carries a strong presupposition and lacks a neutral, objective tone ( 4 ). The phrasing implies a preemptive assumption that the listed factors discourage bike use, which may unduly influence respondents’ perceptions of those factors. Consequently, this could affect the reliability of their responses.

Q20: “Approximately what percentage of your medical appointments is out-of-town?” ( 41 ).

Q20 is likely to require the respondent to perform a complex estimation. It requires recalling the total number of medical appointments for different health reasons, then the number of those appointments that were out of town, and then calculating the percentage. Moreover, the question lacks a reference period, which could place further cognitive load on respondents as they make estimates based on their own arbitrary reference period. As a result, the responses might lack reliability and validity. A better approach could have been to specify a reference period over which respondents are likely to remember their medical trips in and out of town, and then ask for the total numbers instead of a percentage.

Q21: “In your household, how many people (including yourself) rode a bicycle at least once a week on average over the LAST 12 MONTHS?” ( 42 ).

Q21 requires respondents to recall detailed information about every household member and then perform complex calculations to answer the question. Asking respondents to account for other people’s behaviors and cognition generally compromises accuracy. A respondent is unlikely to reliably know, recall, and calculate how many household members rode a bicycle at least once a week on average over a recall period as long as 12 months.

Response Terminology

Q22: “How often do you combine cycling with public transport? 1. Never, 2. Rarely, 3. Sometimes, 4. Often, 5. Always” ( 45 ).

Vague response categories mainly resulted from the use of ordinal measurement scales. Studies show that, depending on the content of the question, adverbs of frequency like “often,” “typical,” “regularly,” and “usually” can represent different frames of reference for different people, because these words have no fixed empirical referent. As a result, such vague quantifiers can produce noncomparable responses ( 1 , 4 , 6 , 22 , 24 ). In Q22, the absence of a temporal reference period further complicates how respondents perceive the response options: whereas one respondent might answer based on their previous month’s travel, another might consider a whole year of travel. Consequently, a frequency that one respondent considers “sometimes” may easily be “often” to another. Researchers argue that interval scales are likely to be reliable, replicable measures ( 46 ). Therefore, instead of using ordinal measures of frequency like “rarely” or “sometimes,” asking for the actual number of times or providing a range of categories (e.g., 0 times, 1 to 3 times, 4 to 6 times) is more likely to produce reliable and comparable responses ( 27 ).
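A minimal Python sketch of the range-based alternative follows. It uses the example ranges given above and adds an assumed open-ended top category (“7 or more times”) so that every non-negative count has exactly one category; it also assumes the question states an explicit reference period, such as the past 30 days. The names `FREQUENCY_BINS` and `frequency_category` are hypothetical.

```python
# Ranges from the example in the text; "7 or more times" is an assumed top
# category added so that every non-negative count falls into exactly one bin.
FREQUENCY_BINS = [
    (0, 0, "0 times"),
    (1, 3, "1 to 3 times"),
    (4, 6, "4 to 6 times"),
    (7, None, "7 or more times"),  # None = no upper bound
]


def frequency_category(count: int) -> str:
    """Map a count reported for a stated reference period to one range label."""
    if count < 0:
        raise ValueError("count must be non-negative")
    for low, high, label in FREQUENCY_BINS:
        if low <= count and (high is None or count <= high):
            return label
    raise ValueError(f"no bin covers {count}")  # unreachable with the bins above


# Hypothetical counts of trips combining cycling with public transport
# in the (assumed) past 30 days.
for n in (0, 2, 5, 12):
    print(n, "->", frequency_category(n))
```

Because each count maps to exactly one label, two respondents with the same behavior over the same reference period give the same answer, which “sometimes” and “often” cannot guarantee.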

Q23: “As an urban cyclist, you consider yourself: Experienced/Somewhat experienced/A beginner/Inexperienced” ( 44 ).

Other cases of vague response categories involved overlapping or indistinguishable categories. For example, “beginner” and “inexperienced” are two very similar categories in Q23 and respondents might struggle to differentiate between them: a beginner is very likely to be an inexperienced cyclist.

The problem of undefined response categories occurs when technical/unfamiliar terms are not defined in the questionnaire. An undefined response category is different from a vague category, as the latter refers to familiar or common terms that are not properly defined.

Q24: “For in-town medical trips, how much of a problem is each of the following with using public transportation, if available?” … “lack of door-through-door service” ( 41 ).

Q24 is an example of an undefined response category, since respondents might not be familiar with door-through-door services and could confuse them with door-to-door services.

Response Units

Q12, discussed under Reference Period (“Compared to a fixed-route bus trip (i.e., stop-to-stop without any detours), how much more time do you spend on the bus for an on-demand transit trip, and what was the frequency of these delays?” [ 33 ]), is also an example of a multidimensional response set: its response options placed occurrences in the rows and frequencies in the columns of a matrix or grid. Although such response formats might be space efficient, they can be cognitively cumbersome for respondents and should therefore be broken down into separate questions ( 27 ).

Response Structure

Q25: “It is difficult for my child to walk or cycle to school because … There is nowhere to safely leave a bike: Strongly disagree/Somewhat disagree/Somewhat agree/Strongly agree” ( 47 ).

Missing response options occur when the options provided do not cover all possible responses, which can make it difficult for some respondents to answer accurately. For example, Q25 is an opinion question that needed a “Not applicable” option, because some children may simply not bike to school. Respondents in this situation might choose “Strongly disagree,” which would conflate their answers with those of respondents who do have access to safe bike storage and for whom it is therefore not a barrier.

Q26: “For which of the following reasons could you foresee yourself using bike share?” ( 43 ).

Similarly, Q26 had 11 options but no “Not applicable” or “None of the above” option, even though some respondents might not foresee themselves using bike share at all.

Q27: “The following aspects could discourage bike use; how much do you think they influence? … Lack of bike lanes and suitable parking” ( 34 ).

Double-barreled response categories combine two separate topics or components in a single option. For example, in Q27, bike lanes and suitable parking were included under one option, which did not allow respondents to indicate whether bike lanes, parking, or both influenced their bike usage.

Q28: “How often do you use the ADA paratransit service? Almost every day/Several times a week/About once a week/Several times a month/About once a month/About once a year/Other” ( 48 ).

Another example of overlapping categories is Q28. In this question, if someone uses the paratransit service several times a week, they also use it several times a month. A better option might be removing the “Several times a month” option or using an interval scale.
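Overlaps like the one in Q28 can be caught mechanically once every option is expressed on a single unit. The Python sketch below shows one hypothetical way to do this: each category is a (label, lowest, highest) range, and neighboring ranges are compared after sorting by their lower bound. Q15’s options are used as written; the numeric bounds for Q28 are our assumed literal reading on a per-4-week unit, not values from the questionnaire.

```python
from typing import List, Optional, Tuple

# (label, lowest count, highest count) on ONE shared unit; None = no upper bound.
Category = Tuple[str, int, Optional[int]]


def overlaps_and_gaps(categories: List[Category]) -> List[str]:
    """Compare neighboring categories (sorted by lower bound) for overlaps or gaps."""
    problems = []
    ordered = sorted(categories, key=lambda c: c[1])
    for (label_a, _, high_a), (label_b, low_b, _) in zip(ordered, ordered[1:]):
        if high_a is None or high_a >= low_b:
            problems.append(f"overlap: {label_a!r} and {label_b!r}")
        elif high_a + 1 < low_b:
            problems.append(f"gap: {high_a + 1}-{low_b - 1} between {label_a!r} and {label_b!r}")
    return problems


# Q15's response options (missed or delayed trips over the past year).
q15 = [("None", 0, 0), ("1-2", 1, 2), ("3-5", 3, 5), ("6-10", 6, 10),
       ("11-20", 11, 20), ("More than 20", 21, None)]

# Two of Q28's options read literally on an ASSUMED unit of uses per 4 weeks:
# "several" taken as 2 or more, with no upper bound stated in the wording.
q28_literal = [("Several times a week", 8, None), ("Several times a month", 2, None)]

print(overlaps_and_gaps(q15))
print(overlaps_and_gaps(q28_literal))
```

Q15’s options produce no warnings, whereas the literal reading of Q28 is flagged as overlapping. Putting Q28’s labels on a numeric scale at all requires choosing bounds the questionnaire never states, which is itself an argument for an interval scale.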

Methodological Recommendations

Based on our review, we recommend that questionnaire design in future research on travel barriers be more theoretically robust, rigorously pretested, and aligned with the research aims. Doing so helps ensure that questionnaires are designed according to empirically established best practices. Transportation researchers must consider how the target population is likely to perceive and experience travel barriers in real life in order to develop questions that respondents will understand. That requires attention to, for example, the type, severity, and frequency of the barriers in question. Another critical consideration is to apply a frame of reference or recall period that is adequate to capture the travel barriers being studied. Although a short frame of reference or recall period generally yields more reliable responses, some barriers might occur too infrequently to appear within a short recall period ( 49 ); in such cases, short recall periods could underestimate the prevalence of these barriers and produce more optimistic statistics than the actual situation warrants.

To summarize, researchers need to ask questions that respondents can reliably answer ( 50 ), and provide response options that are as clear and definite as possible for all respondents. Furthermore, researchers should consider whether a questionnaire survey is the ideal tool to answer their research questions. Frequent yet irregular barriers might be more reliably captured through tools like travel diaries and mixed methods approaches such as using a combination of travel diaries and photovoice. Frequent and regular barriers, on the other hand, might be less influenced by the frame of reference ( 20 ). Pretesting the questionnaire through pilot surveys, focus groups, cognitive interviewing, and behavior coding ( 20 ) before fielding surveys can also help researchers avoid the common pitfalls discussed in this paper.

Readers, policy makers, and practitioners should keep the pitfalls described above in mind and use that knowledge to assess whether a particular paper or question is reliable enough to inform policy and planning. Furthermore, considering multiple papers on similar travel barriers and/or conducting meta-analyses could help identify barriers that are consistently reported across studies.

Based on our findings, we present a checklist that researchers could use while designing their TRSE survey questionnaires. Readers and users of existing TRSE research could also consult this list before using any travel barrier research for policy and planning practices.

Is the frame of reference/recall period reasonable for the travel barrier being assessed (e.g., frequently occurring barriers versus rare barriers, comparatively more severe barriers versus less severe barriers)? Consider whether the recall period aligns with the frequency and severity of the barrier in question.

Are there words/phrases that respondents might construe differently? Both unfamiliar/technical terms and seemingly familiar words/phrases can be interpreted in different ways by respondents. If such ambiguity exists, define the terms within the question to clarify what is included and what is excluded by a specific word/phrase in the given context. Consider using interval scales instead of ordinal scales when possible.

Are there words/phrases that might influence the respondents to answer in certain ways, causing biased responses? Questions framed with loaded connotations could influence respondents to consider certain travel behaviors or perceptions as more socially desirable. To minimize bias, consider using a neutral/objective tone.

Are there broad terms or phrases in your survey that encompass multiple transportation-related aspects or issues (e.g., “lack of infrastructure” or “transportation problems”)? If so, consider breaking them down into smaller, more specific components to enhance clarity and precision. For instance, instead of using a general term like “transportation problems,” specify distinct issues such as infrequent bus service, crowded trains, or unreliable tram schedules. This approach allows for a more detailed and actionable understanding of respondents’ perspectives.

Does the number of response categories seem reasonable, and do the categories cover all possible responses? (E.g., consider whether options like “Neutral,” “Don’t Know,” or “Not Applicable,” in addition to “Yes/No/Sometimes,” might provide more nuanced responses than “Yes/No” alone, and how this might affect the survey outcomes.)

Are there questions that ask about multiple barriers/topics/issues within a single question? If so, consider splitting them into individual, focused questions for clarity and improved response accuracy.

Are there unwarranted assumptions about constant travel behaviors or patterns, even when such behaviors are likely to vary? If so, consider narrowing down the scope of the question to the point where a constant behavior or pattern can be reasonably assumed.

Conclusion

For this study, we analyzed the 29 full questionnaires used in 34 of the 117 travel barrier papers identified in our scoping review, that is, the questionnaires behind about 29% of those papers. Although this represents a relatively small share of the travel barrier papers we reviewed, the findings are significant. To the best of our knowledge, this is the first study to critically review the survey questionnaires used in the travel barriers literature.

Although the identified problems are well known in the field of questionnaire design, our findings indicate that they persist consistently within the travel barriers literature. We believe that a major reason for this is the lack of integration of good questionnaire design practices into transportation equity and justice research. Additionally, resources on questionnaire design may not be easily available and accessible to academic researchers, government, practitioners, and community advocates. Given the popularity of questionnaire surveys as a data collection tool in transportation equity studies, this study sheds light on common pitfalls to help researchers design more robust and effective instruments in the future. Despite our best efforts, we could not obtain all of the questionnaires. Therefore, we cannot say conclusively what percentage of travel barrier questions have framing or design issues. However, our findings suggest that framing or design issues in TRSE surveys on travel barriers might be more common than is currently recognized.

Although we focused on studies addressing travel barriers, the findings can serve as valuable guidelines for designing questionnaire surveys that address any dimension of the TRSE framework. Regardless of the TRSE dimension studied, researchers need to ensure that their survey questions are clear (e.g., avoid vague adverbs of frequency), provide adequate instructions for respondents (e.g., how a “trip” is defined in the specific question), contain clearly defined reference periods with a suitable recall period, and minimize the cognitive load imposed on respondents. They also need to ensure that response categories are clearly defined and properly categorized to avoid ambiguity. We aimed to offer in-depth insights into questionnaire design issues that, according to the literature, are likely to affect the reliability and validity of TRSE surveys. We therefore believe that this study will be a valuable resource for researchers designing questionnaire surveys in transportation equity contexts.

Finally, more research is needed to understand how different travel barrier question types, response options, and frames of reference might affect survey outcomes. Such travel barrier- and transportation-specific research could provide empirical evidence on how to design travel barrier surveys that yield reliable and valid results and can therefore contribute more effectively to policy making and planning practice.

Supplemental Material

sj-docx-1-trr-10.1177_03611981251378483 – Supplemental material for Using Questionnaires to Identify Travel Barriers: How (Not) to Ask Questions

Supplemental material, sj-docx-1-trr-10.1177_03611981251378483 for Using Questionnaires to Identify Travel Barriers: How (Not) to Ask Questions by Paromita Nakshi, Matthew Palm, Elnaz Yousefzadeh Barri, Steven Farber, Michael Widener and Karen Lucas in Transportation Research Record

Footnotes

Author Contributions

The authors confirm contribution to the paper as follows: study conception and design: P. Nakshi, M. Palm, K. Lucas; data collection: P. Nakshi, M. Palm, E. Yousefzadeh Barri; analysis and interpretation of results: P. Nakshi, M. Palm, E. Yousefzadeh Barri; draft manuscript preparation: P. Nakshi, M. Palm, E. Yousefzadeh Barri, M. Widener, S. Farber, K. Lucas. All authors reviewed the results and approved the final version of the manuscript.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by the Social Sciences and Humanities Research Council of Canada.


Data Accessibility Statement

The authors do not have permission to share the data.

Supplemental Material

Supplemental material for this article is available online.

References
