Abstract

This article explores how the work of ethical assurance is understood by those involved in artificial intelligence development and deployment, and uses the findings to consider how ethical reflection might be better supported. The article presents a case study of a multi-disciplinary project developing a care support system that used machine learning in remote monitoring of people living at home with dementia. In this project engineering and clinical perspectives come together through a fractionated interdisciplinary trading zone to address goals such as remote detection of urinary infections. Ethics is done, according to project participants, in formal ethical review processes and through a shared understanding of common goals, but also in discipline-specific practices that sit outside of the trading zone. A key role is played by team members who translate concerns between discipline-based research groups and who act as proxies for the ultimate users of the systems not directly present within the trading zone. These insights into cross-disciplinary ethical work in relation to smart care lead us to recommend that infrastructural support for imaginative and transparent ethical reflection needs to be woven through the collaborations that create artificial intelligence, both across disciplines and throughout the lifetime of a project.

Introduction

In recent years there has been voluminous and vigorous debate about the need to ensure that artificial intelligence (AI) is developed and used in accordance with ethical principles. Despite considerable consensus around the desirability of these principles there is still little agreement on practical mechanisms to ensure adherence. This article contributes to ongoing concerns about the means by which ethical AI might be achieved, through a focus on the ethics of AI as the upshot of diverse practices situated within the interdisciplinary work of producing innovations. It complements work by Govia (2020), Orr and Davis (2020) and Slota et al. (2023) on how AI researchers think about ethics by looking at how these understandings play out among the different disciplines and professions involved in a single initiative, according to participants. Slota et al. (2023) are particularly inspirational in their focus on how the work of AI ethics is done and their stress on the importance of making such work visible and of rewarding it. The case study presented here contributes further insight into how such ethics work is understood within the practices of interdisciplinary collaboration that develop AI-based systems. The analysis helps us to understand how the heterogeneous work of ethics identified by Slota et al. (2023) meshes together, where tensions between different forms of ethics might arise and how these tensions might be addressed.

The focus of the case study is an initiative in the domain of healthcare, concerning development of remote monitoring systems making use of machine learning[1] to identify patterns of concern in data derived from sensors installed in the homes of people living with long-term conditions such as dementia. The case study spans the perspectives of those designing and developing the system, those delivering it and those making use of it, exploring their views on what should be done in this territory and how they understood their own responsibilities for making it so. The following section explores the purchase offered by the concept of the trading zone for understanding ethics within the development of AI infrastructures. The discussion then moves to outline a series of interviews conducted with those involved in the smart care system that forms the focus of the case study, in order to explore where such interdisciplinary work entails understandings of ethical practice. First, the diverse forms of ethical thinking presented by interviewees are analysed, through the example of the development of an algorithm to detect urinary tract infections through passive activity monitoring and physiological data. The case study is then further examined to consider how far this instance of AI development can be understood as a trading zone (Galison 1997) where different disciplines and specialisms develop shared understandings. The article explores how ethics are practiced in relation to this interdisciplinary work, asking to what extent the work entails development of a shared discourse of ethics across disciplines and whether it is inclusive of diverse ethical perspectives. The analysis leads into a depiction of AI development that operates largely as a patchwork of discrete ethical responsibilities, only to a limited degree developing a shared ethical language that resides within a trading zone and persists across the development and deployment process. The conclusion reflects on the prospects for promoting conversations about ethics within such forms of interdisciplinary work and for enhancing the visibility of ethical considerations across the time span of infrastructure development.

AI Ethics and Interdisciplinary Collaborations

According to the synthesis offered by Floridi and Cowls (2019), conversation about ethical dimensions of AI has converged on a set of key principles focused on the need to prioritise doing good (beneficence) and avoidance of harm (non-maleficence), preservation of human autonomy in decision-making, and attention to issues of fairness and justice. These principles relate closely to the established principles of biomedical ethics (Beauchamp and Childress 2019). For AI, a further principle of transparency or explainability is derived from concerns that the processes of AI can be opaque and thus lacking in accountability (Floridi and Cowls 2019). Despite the widespread acceptance of these principles, there are considerable difficulties in enacting them and governing adherence (Mittelstadt 2019; Morley et al. 2020a; Whittlestone et al. 2019). It is also important to note that these ethical principles are not comprehensive and scholars have identified needs to broaden understanding of ethics in relation to AI, particularly in relation to feminist ethics of relationships (Wagman and Parks 2021), and with attention to decolonial concerns (Mohamed, Png, and Isaac 2020). Rather than operating as an abstract view from nowhere, ethical principles may insidiously reflect prevailing power relations and exclude marginalized voices. In relation to healthcare, particular sets of tensions emerge around expertise, autonomy, privacy and equitable access to healthcare (Char, Abramoff, and Feudtner 2020; Karimian, Petelos, and Evers 2022; Morley et al. 2019; Morley et al. 2020b; Morley and Floridi 2020).

Within this existing debate about appropriate ethical principles for AI there is an unhelpful tendency to treat ethics as situated within the technology, and to take a determinist stance on the downstream consequences of these embedded ethical values (Greene, Hoffmann, and Stark 2019). Instead, it may be more useful to appreciate that AI is enacted within socio-technical assemblages that derive meaning and consequence in context (Johnson and Verdicchio 2017). AI increasingly participates in the infrastructures that underpin everyday lives, becoming at once mundane and highly consequential. Developing these infrastructures is a complex and indeterminate process in which decisions are made but also unmade and reshaped as work is distributed spatially, temporally and socially, according to distinct sets of expertise, and as infrastructures come into new contexts of operation (Karasti and Blomberg 2018; Star and Bowker 2002; Star and Ruhleder 1996). It thus becomes important to consider the full development and implementation process, and to investigate ethics not simply at the outset but across the various contexts that the technology touches upon and is embedded within. Within this framing it also becomes meaningful to think of ethics as a practice that is done, rather than an a priori set of principles that are applied (Clegg, Kornberger, and Rhodes 2007). This perspective focuses the attention on how ethics are enacted in context, and as discourses that people use to position themselves in relation to others and to make sense of the everyday decisions and dilemmas that they face (Kornberger and Brown 2007).

Viewing ethics as infrastructural practices and discourse dispersed across the socio-technical assemblages of AI development and deployment directs our attention to varied forms and sites of work and the mechanisms through which different disciplinary groups collaborate in the process. The metaphor of the trading zone (Galison 1997) has often proved helpful to conceptualize the challenges and opportunities that arise when such different sets of expertise come together. Central to the idea of the trading zone is that different disciplines may have incommensurable ways of understanding a problem—different languages in effect—and that for them to collaborate on a project some locus of cooperation or trading zone has to develop. As Collins, Evans, and Gorman (2007) outline, interaction within the trading zone may involve disparate groups collaborating via a shared boundary object (Star and Griesemer 1989) that means different things to each group, or that may entail some interactional expertise in which each group can understand the others’ concerns, to a degree. Interactional expertise implies a shared language that embeds context-specific tacit knowledge (Collins, Evans, and Gorman 2007). A ‘fractionated’ trading zone allowing for collaboration between groups that retain a sense of their separate identities can operate effectively to achieve a common goal (Collins, Evans, and Gorman 2007).

This perspective on collaborative work provides a framing for thinking about ethics as practice in the production of AI technologies. Gorman and Werhane (2010) suggest that an effective trading zone can offer participants exposure to one another's mental models and allow for the questioning of assumptions, thus enhancing ethical sensitivity. Such ethical outcomes are not, however, guaranteed by the mere existence of a trading zone, as Sismondo (2012) highlights. A combination of infrastructural support, skilled mediators and the will to engage may be needed. Mills et al. (2010) see trading zones as complex adaptive systems, and argue that values and goals need to be revisited explicitly in a form supported by an appropriate infrastructure for collaboration if trading zones are not to drift away from their original conception. Caby (2023) suggests that brokering productive interdisciplinary dialogue across a fractionated trading zone can rely on specific skills that mediators acquire in fostering and closing debate without necessarily needing interactional expertise in the disciplines themselves. Gorman, Werhane, and Swami (2009) argue that morally imaginative thinking is needed to enable people coming from different disciplinary perspectives to address divergence in ethical values. An explicit focus on developing infrastructures and skills to support imaginative ethical conversations across disciplines may therefore be valuable in AI development projects.

Viewing interdisciplinary collaborations through the metaphor of the trading zone has potential both to illuminate how ethical positions are negotiated in such spaces, and to suggest how such work might be better supported through attention to appropriate skills and infrastructural support. The case study in this article involves an interdisciplinary collaboration with potential to develop into a trading zone. Rather than assuming that this was the case, interviews with project members elicited accounts of how they worked individually and together, which were used to derive provisional insight into the extent to which a trading zone operated among the various groups involved. These insights were referred to participants themselves for further discussion, and prompted further exploration of how and where ethical work was done. This approach was taken in the spirit of co-creation: the first author is a social scientist acting as collaborator with the second author, a specialist in machine learning for healthcare. We aimed to develop insights that have participant validity as well as analytic purchase (Lincoln and Guba 1985) and to build toward a collective identification of learning points for this project and others like it.

A Case Study of Smart Care Development and Delivery

The case study explored in this article focuses on a research center[2] working in collaboration with the NHS to develop and deploy remote monitoring solutions based on the Internet of Things for people living at home with dementia, developing machine learning algorithms, personalizable to the individual, that would detect disease progression and also identify significant health events. A basic remote monitoring system was offered as a service by the NHS Trust collaborating on the project to several hundred people living with dementia or cognitive impairment, and older people living with anxiety or depression, in its catchment area in the southeast of England. This service system offered a small number of passive infrared activity sensors placed around the home and a smart plug to detect use of the kettle. Service users also took physiological measurements of pulse, blood oxygen levels and temperature and, in later stages of the research, were also offered blood pressure monitors. The service system was supported by an NHS monitoring team who responded to alerts relating to potentially concerning physiological measurements. These alerts were generated automatically based on levels personalized to the individual. In response to an alert, the monitoring team would follow an agreed clinical pathway to take action on the alert: this often involved telephoning the service users and carers to ask for a reading to be repeated and then, if still out of range, advising on escalation to other healthcare services. In parallel, the company that installed and maintained the sensors operated its own monitoring service to register alerts for lack of activity in the home over a sustained period, and the two monitoring services communicated to coordinate support. Service users had access to a tablet device for entering their data and viewing previous activity. There was also an app for smartphones. The system could provide users with an overview of their own sensor activity and physiological data over time. In addition to the service system, at the time of interviewing there were multiple strands of research ongoing at the center simultaneously, focused on a cohort of participants taking part in research to develop the system further. These research participants were given additional in-home sensors and physiological monitoring devices, and also undertook regular cognitive assessments. In some cases, they took blood tests and MRI scans. They were also supported by staff from the NHS Trust.

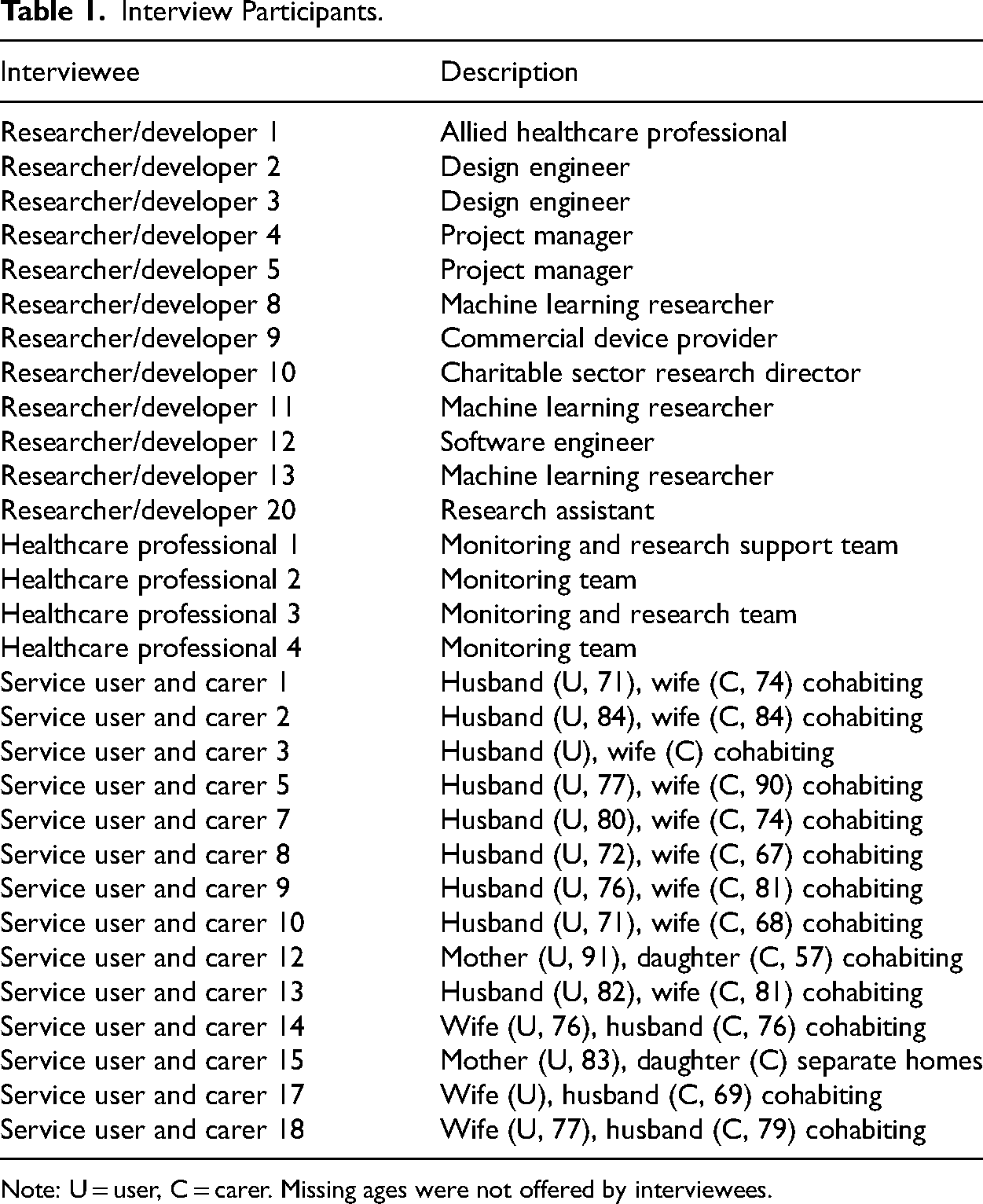

This article presents an analysis based on interviews with researchers and developers, NHS staff involved in monitoring and users of the service system, conducted in 2021 and 2022.[3] Interviews were conducted with users of the service system and not the research system, to avoid overburdening the already intensively involved research system participants. Interviews with users of the service system were conducted in pairs with the service user and a friend, family member or carer who was supporting them in their use of the system. All of the interviews followed a similar interview guide, with adaptations for accessibility to each audience. The interviews covered the experience of smart care and any challenges and dilemmas encountered, followed by a discussion about the ethics of smart care, focusing on how interviewees felt the core principles of beneficence, non-maleficence, autonomy, fairness and explainability applied to their own experiences of smart care. The two-part interview covered the interviewee's own definitions of what might count as an ethical issue, as well as their response to prevailing framings. Following an initial interview of an hour, two further short follow-up interviews were offered, at 8-week intervals for researchers and developers and healthcare professionals, and at 4-week intervals for service users and carers. This added a longitudinal element to the research, which captured issues unfolding over time while also creating occasions for participant validation, for interviewees to reconsider and revisit issues, and for the interviewer to check that discussions had not created undue burden or anxiety for service users and carers. In total 12 initial interviews were conducted with researchers and developers, 4 with members of the monitoring team and 14 with service user and carer pairs, 12 of whom were spousal couples and 2 of whom were parent and adult child. Interviews and follow-ups were conducted using Teams videoconference software for researchers and developers and healthcare professionals. Service users and carers were offered a choice of face-to-face or Zoom for the initial interviews, and 12 opted for face-to-face and were interviewed in their own homes. Follow-ups were conducted by telephone or Zoom. Table 1 summarizes the interview participants.

Table 1. Interview Participants.

Note: U = user, C = carer. Missing ages were not offered by interviewees.

All interviews were audio-recorded and transcribed, and the resulting transcripts coded using NVivo. Following identification of initial findings, researchers and developers and healthcare professionals were invited to focus groups to discuss implications for practice going forward. The analysis presented here focuses on the understanding of ethics and ethical responsibility portrayed by interviewees and focus group participants involved in development and deployment, with a particular emphasis on how participants depicted the nature of the collaboration and their responsibilities for taking action based on ethical commitments within that collaborative structure. We begin by giving an overview of the various forms of ethical thinking relating to one sub-system of the project undergoing development, before moving on to explore the extent to which a trading zone was seen to exist.

Findings

The Ethics of Remote Monitoring for Urinary Tract Infection

The entire project was governed according to the national requirements for ethical review of healthcare research. This governance committed all those involved in the research to conform to an approved protocol that covered the processes of recruitment and informed consent and the research activities that followed. A formal amendment process was required for any changes to this protocol such as new sensors or new research instruments. Project managers coordinated the ethical review process and ethical compliance across the project, with input from individual disciplinary groups of researchers according to the specific focus of an amendment. Formal review of data management and security protocols was also conducted according to institutional requirements and national standards, providing the rationale for approaches to secure data storage and transfer and anonymization as a priority wherever possible. Access to unanonymized data was only available to specific members of the project on a need-to-know basis. The participant-facing members would often also have undergone training in “Good Clinical Practice,” the international standard for conduct of clinical research.[4] Machine learning researchers tended to have access only to anonymized data and to have no direct contact with the participants whose data they analysed, but they were trained in secure data management protocols.

The forms of formal review and training described above were necessary, but do not encompass the diverse array of values-based decision-making that interviewees described as informing their daily work. The example of developing a sub-system of the remote monitoring service designed to detect urinary tract infection (UTI) serves to introduce both the disciplines involved in the work and the diversity of forms of ethical issue identified by interviewees. From a clinical perspective, detecting a UTI early is a high priority (Tal et al. 2005). It is widely understood that these infections can go undetected among those already experiencing cognitive impairment and lead to increased levels of confusion, with the possibility of falls and hospitalization that could have been averted if the UTI had been treated earlier. This can be understood both in terms of the well-being of the individual and in terms of care costs. These combined rationales were offered repeatedly in interviews by researchers and developers as well as healthcare professionals, as a reason for directing effort into developing a robust algorithm for UTI detection. For example, a machine learning researcher described this as a widely accepted rationale:

For people living with dementia a lot of the reason for hospitalizations and a lot of [reasons why] people get institutionalized at some point and a lot of the cost of dementia care overall, all tends to be adverse health condition related, but [due to] things that are predictable or could have been predicted. From the predictive side, it's basically being able to see things before they’re happening or in the early stages, and making sure that the care given is actually enough to allow people to continue a relatively independent life of good quality. I think one of the most obvious ones…to the general public is UTIs. You get a UTI that often can lead to a lot of agitation. You end up in hospital but afterward you’re just not the same person. (RandD 13)

Despite widespread acceptance that a remote monitoring system that could detect a UTI would be a positive step, a number of points were identified where it raised challenges or dilemmas, some of them identified as carrying ethical connotations. Among machine learning researchers on the project there was considerable optimism that given data from activity sensors placed around the home and from daily physiological measurements, patterns of data that indicated a UTI might be found. Yet there were many technical challenges in achieving this due to the scarcity of labelled data, noisiness of data, and high levels of missing readings, leading to the need for considerable experimentation and exploration of novel techniques. For instance, identifying whose activity was being registered by the system was not straightforward:

So…a big technical design challenge is working out who is in the house, who is attributed to which data? Especially with the machine learning and daily routines, it's really interesting. (RandD 2)

It means that we don’t use the same patient in test and train.[5] After that it is also important to have an external validation if possible. For example, we have 50 homes [where] we are collecting the data. What about if we consider only 40 of them in the first round of validation? And use the other 10 in external validation, or a separate hospital? Or if we have two separate NHS Trusts, even in the home collection. Like, if some of them are in another Trust, can we do across Trust validation? (RandD 8)
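The validation strategy that RandD 8 describes, keeping each patient's data on one side of the train/test divide and reserving some homes for external validation, can be illustrated with a minimal sketch. This is not the project's code: the function names, the `home_id` field and the synthetic records are hypothetical, and the 40/10 division simply mirrors the numbers mentioned in the interview.

```python
import random


def split_homes(home_ids, n_external, seed=0):
    """Reserve whole homes for external validation (hypothetical helper).

    Splitting by home, rather than by individual reading, means that no
    home contributes data to both the development and the external set.
    """
    ids = sorted(set(home_ids))
    rng = random.Random(seed)  # fixed seed so the split is reproducible
    rng.shuffle(ids)
    external = set(ids[:n_external])
    development = set(ids[n_external:])
    return development, external


def partition_records(records, development, external):
    """Assign each record to the set that its home belongs to."""
    dev_records = [r for r in records if r["home_id"] in development]
    ext_records = [r for r in records if r["home_id"] in external]
    return dev_records, ext_records


# Synthetic example: 50 homes with three daily records each (illustrative only).
home_ids = [f"home-{i:02d}" for i in range(50)]
records = [{"home_id": h, "day": d} for h in home_ids for d in range(3)]

development, external = split_homes(home_ids, n_external=10)
dev_records, ext_records = partition_records(records, development, external)
```

The same grouping logic would apply one level down, when the 40 development homes are divided into training and test sets, and one level up, if the held-out group were defined by NHS Trust rather than by home.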

Despite their best efforts to combat bias through careful use of existing datasets, machine learning researchers portrayed themselves as hampered by the limits of the data available. The concern about bias thus also provided a rationale for efforts to broaden the participant pool for research, work to be carried out by research assistants focused on recruitment. For those involved in recruitment, there was a balance to be struck between encouraging participation and putting pressure on people to participate. The long-term goal of detecting UTIs was widely endorsed, but in the short term it was recognized that although the system could not reliably offer that outcome, participants were still needed to provide data in order to achieve that outcome. As one project manager described, this produced an ethical dilemma:

I think it's easy to sell the project around that point and say, you know, we can detect UTI but first of all we need to say that it's not a validated method yet and there is still the issue about timing. (RandD 4)

Whereas I think if we get someone who withdraws from say…an assessment or doesn’t want to continue, people are like, “Why is their data missing?” And it is like, “Well, we are not going to force someone to continue. Would you do that if you were put in that situation?” (HCP 3 follow up 1)

Participation in research places a sometimes-considerable burden on participants and carers. Participants here needed to accept taking regular physiological measurements and having sensors installed in their homes. In order to develop an algorithm to detect UTIs it is necessary for monitoring data to be labelled according to whether the participant has a UTI at that time. Providing labelled data involved asking people to test urine frequently. In future, the rollout of a UTI detection algorithm would result in a prompt for a UTI test whenever an alert was produced. How to do this in an acceptable way became a focus of innovation, with a view to transforming the entire pathway of diagnosis and intervention:

So we’re looking at different sorts of technologies that can create diagnostic tests that could be used at home and specifically, rather than just telling you whether you have an infection or not, could be used to tell you what kind of bacteria is causing the infection. So that kind of technology, for instance, can then lead to a more bespoke specific antibiotic treatment and hopefully expedite the response and, you know, create a kind of shortened pathway between identification and treatment response. (RandD 1)

So, depending on the project we might do simulations, you know with the UTI project, we might do a simulation of the procedures they need to go through to get the test result….But is it going to be feasible to ask people to get a sample of urine, put it in a test tube, see if it changes color and report the result before we inflict the whole thing on them? (RandD 2)

Introducing a new algorithm to the service system happened only when there was confidence that the algorithm was performing at appropriate levels of accuracy. As a software engineer described, this required verification of performance and some attempts at explaining how the algorithm was working:

So our current plan is…that before we actually introduce a new algorithm to the system we run it on a weekly basis. Just offline and see what alerts it generates and then talk to the monitoring team to verify those alerts. And then if we’re happy with the results and have enough evidence, then we’ll document how it works. (RandD 12)

I choose the algorithm…and data presentation that has the smallest error function but there are ethical consequences to data representation, and that's something that people in AI ethics don’t talk much, or hardly ever, about. They generally talk about the data acquisition, the dataset, but the representation embodies values and we forget. (RandD 3)
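The weekly offline run that RandD 12 describes, in which a candidate algorithm generates alerts that the monitoring team then verifies before any rollout, might be summarized along the following lines. This is a sketch under stated assumptions rather than the center's actual tooling: the `ShadowAlert` record, its field names and the example data are all hypothetical, and the decision about what counts as "enough evidence" is deliberately left to the people involved rather than encoded as a threshold.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ShadowAlert:
    """An alert produced by an offline run (hypothetical record type)."""
    participant_id: str
    week: int
    # True/False once the monitoring team has reviewed it, None until then.
    confirmed: Optional[bool] = None


def weekly_summary(alerts):
    """Count generated, reviewed and confirmed alerts for each week."""
    by_week = defaultdict(list)
    for alert in alerts:
        by_week[alert.week].append(alert)

    summary = {}
    for week in sorted(by_week):
        reviewed = [a for a in by_week[week] if a.confirmed is not None]
        n_confirmed = sum(1 for a in reviewed if a.confirmed)
        summary[week] = {
            "generated": len(by_week[week]),
            "reviewed": len(reviewed),
            "confirmed": n_confirmed,
            # Share of reviewed alerts that the team confirmed; undefined
            # (None) when nothing has been reviewed yet.
            "confirmed_share": n_confirmed / len(reviewed) if reviewed else None,
        }
    return summary


# Illustrative data only: three alerts in week 1, one in week 2.
alerts = [
    ShadowAlert("P01", 1, True),
    ShadowAlert("P02", 1, False),
    ShadowAlert("P03", 1, None),
    ShadowAlert("P01", 2, True),
]
summary = weekly_summary(alerts)
```

A summary of this kind records only what the monitoring team concluded about each alert; it does not capture alerts the algorithm failed to raise, which would need a separate source of labelled events.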

Once an algorithm had been implemented, healthcare professionals monitored the system dashboard and followed up on alerts with participants. The decision to retain a human monitoring team as part of the system was portrayed as an important step by the monitoring team, by researchers and developers, and by service users and carers. One member of the monitoring team positioned this process as a matter of both careful validation and respect for patient autonomy:

But the sensor for the bathroom, if that's triggered so many times throughout the day, that will go off. As much as that could be, yes, they might have a UTI, it could also be if they have a party and everyone was using the bathroom. If that happens, what we would do is see if they’ve got any symptoms or say that this has been flagged up. If you would like to do a test, we can offer it. If you’re feeling at all unwell, if you think you’ve been going to the toilet more frequently and again like this pathway that we follow and they can have a test if they’d like one. (HCP 2)

It feels a bit to me like, [named colleague] said to me he assumed that what you could do with a car you could do with a human. That's how he started in this business. It was all around the oil light coming up on your dashboard. Funnily enough, we’re a bit more complicated. That oil light, unfortunately, could be any number of things. I guess my worry is that we don’t have enough context getting fed into the AI as it currently sits. (RandD 9)

Across the research and development team there was a commitment to retaining human judgment at the point when an alert had been registered.

Human involvement was also presented as ethically important by service users, many of whom expressed concern that the introduction of remote monitoring could be used as a rationale for replacing aspects of in-person care that they valued:

My only concern is that you could possibly hand over responsibility to AI and go… I think some people just forget, [remote monitoring] will look after mum or me and not actually really sort of take responsibility. (SUandC 15)

I would still like a human involvement, wouldn’t you, you wouldn’t want a machine to phone you up and say… (SUandC 18)

But we’re going to get that anyway, aren’t we, because as I understand it, this business about you ring up for an appointment and you’re triaged and somebody is looking at your responses to decide whether you’re going to have a phone call conversation or they’re going to call you in. Well, the next stage is that a computer will look at your responses and turn round and say, no, actually I think you’ve got cancer of something or other. (SUandC 14)

All this is fine as long as the doctors are going to cope with that. They might feel “not another thing,” you know… If it would help them yes, but would it help them or will it just give them more things to do. I don’t know how it works. (SUandC 7)

Ethical issues in the form of values-based reasoning were therefore identified as arising in multiple different forms across the research and innovation process and extending into delivery of the UTI detection algorithm. In this healthcare context clinical ethics dominate, but intersect with ethical perspectives on machine learning, practical ethics of day-to-day care and novel issues that arise as decisions are made about how this new technology for testing might appropriately be deployed within care settings. The next section explores how these different perspectives on ethics were handled across the interdisciplinary project.

Is There a Trading Zone?

Sismondo (2012) warns against seeing trading zones everywhere, and Galison (2010) suggests setting limits on the concept. A tentative view that there was a trading zone emerged from the initial interviews conducted for this project. Follow-up interviews explored with interviewees how deeply the metaphor of the trading zone resonated for them, discussing to what extent they considered themselves to be participants in a trading zone and, if they did, to what extent they saw it as operating effectively. Depending on their response to the metaphor, interviewees then discussed where they felt ethics sat in relation to that organization of the work. In particular, they were asked about the extent of shared understanding that developed through collaborations across specialisms and whether this involved coming to an understanding of the way that other groups defined ethical problems and approached solutions.

Some interviewees from the research and development domain enthusiastically embraced the idea of the trading zone: indeed, one research manager suggested that the very role of the research center was to create a trading zone between the specialisms and to blend their perspectives and skillsets to meet the core challenge of developing technologies to support dementia care that the center was funded to address. In contrast, others suggested that it was not realistic to expect a central trading zone because the distance between the various specialisms was so great. These interviewees highlighted instead the key people who move between groups, such as user experience specialists, design engineers and project managers. They argued that these people need to become skilled in understanding the issues from the perspective of each group, and brokering solutions that work for all. Lack of agreement would be problematic because it would become difficult to move forward with key developments, such as introducing new sensors or making innovations in identifying urinary infections. Much coordination work was needed to align the diverse array of activities needed to progress to an effective system for UTI detection but there was, across the research and development domain, a disparity of opinion about how effectively the desired degree of work organization could be achieved. According to different interviewee perspectives, the center either felt as though it contained a cohesive trading zone in which there was some degree of shared understanding, or alternatively operated a hub-and-spoke model where brokers moved between the various disciplinary groups such as machine learning researchers, synthetic biologists, sleep scientists and cognitive and behavioral scientists and developed sufficient understanding to translate between them. Across these differing perspectives on the scope and degree of understanding between disciplines it was clear that distinctive specialist disciplinary identities were retained, in the manner of a fractionated trading zone (Collins, Evans, and Gorman 2007).

Moving to a less abstract level, beyond the brokers who liaised with separate groups, the key mechanism portrayed as making coordination between different research and development activities real in participants’ experience was various forms of meetings that brought together representatives of the relevant specialisms to discuss progress. Understanding was brokered both through ad hoc meetings called to address a specific issue, and through a standing program of regular meetings. Various kinds of meetings brought different sets of people together for different purposes, providing an infrastructure for conversations that, on the face of it, could provide for development of a trading zone that contains some degree of shared language or interactional expertise. A monthly meeting, for example, brought together representatives from the research team with representatives from the NHS Trust who were in regular contact with participants. Separate meetings focused on the clinical operations of service delivery and the clinical research perspective focused on data generation. Yet the mere existence of such meetings did not guarantee mutual understanding, as one project manager explained in the context of agreeing on an approach to UTI detection: Because it was like, it is a very complicated pathway. If you look at the graph you will understand why it was complicated but no one really took the responsibility of saying okay, I’ll go through this and I’ll talk to all the other parties. Once we get agreement, I will let everybody know, so they were having these huge meetings of like 25 people in an hour, of course, you can’t get anything done and it was a very… it was a kind of hot potato. 
No one really wanted to hold it so I said okay, I’ll try but I think it was necessary that like someone neutral, you know, I didn’t have any interest in one measure or the other, you know, like okay, whatever works best and at the end after… a couple of months we had a UTI flowchart defined and that was an achievement and I think it was because someone else took ownership. (RandD 4)

In the example above, meetings were not considered sufficient to achieve a consensus across different disciplinary perspectives, because of the complexity of the issues to disentangle and the danger of groups talking past one another. Other concerns about meetings were also expressed. Some interviewees, despite being present at the meetings, questioned how far they included the various voices. A healthcare professional who had been involved in recruiting participants found, for example, that research meetings were helpful for finding out what the researchers intended from the data but did not necessarily give an impression of cohesion: I think maybe where that little bit of disparity comes is that it tends to be seen as a “this is what we’re doing” presentation, rather than a “let's all work together to work out the best way forward” kind of scenario. So it still feels like lots of mini teams I guess, all wanting to do their own projects and their own things, without this sort of one central goal. (HCP 1 follow up 1)

Working across disciplines was found to involve tensions and misunderstandings. A machine learning researcher spoke of the communication challenges of working with those who do not understand code: I thought the code was working the first time and that's it but actually they were asking [for] some modification, improvements, which makes sense because they had different needs. (RandD 11 follow up 1) Another interviewee reflected on unfamiliar technical vocabulary: I think unlike engineering, these are all words that you think you know in English but you don’t really know because they are technical terms. So, it's things like that where it's somehow even easier to miscommunicate because you kind of think you know but you don’t really whereas in engineering, [I might say]: “I just don’t know the term, please explain.” (RandD 20 follow up 1)

This statement is highly resonant of a lack of interactional expertise. In other cases there were issues of differing conceptions of the working process. A design engineer discussed feeling more affinity in this regard with some disciplines than others: So I think with the computing and software developing team, ... although designers and developers think very differently, there's a sort of a process supported by software and standards. And things where it kind of works. Task management and creating graphics, and sending them across and talking about it. It kind of works although we feel like very different tribes. (RandD 2 follow up 1)

One significant infrastructural challenge with ethical connotations concerned how and where the views of users would be represented. Those who had regular contact with service users and research participants often saw themselves acting as proxies for their interests and responsible for representing them to other groups in the research context. Their view on the importance of this activity resonates with the insights of Gorman, Werhane, and Swami (2009) regarding the role of the trading zone in enhancing the moral imagination of participants. The need for proxy representation arose because regular project meetings did not, routinely, involve direct representation from service users. In regular meetings the patient voice was represented by those who did have interaction with patients, including healthcare professionals from the NHS Trust and design engineers working across the various research and development groups. The meetings provided a forum for raising concerns about experiences of patients and highlighting the need to consider participant burden. As far as possible, participant identity was protected within meetings and limits were placed on the identifiable data available to machine learning researchers in order to protect participant confidentiality. While in accordance with conventions around good practice for data handling, this technical fix of anonymization was felt by some to prevent those in machine learning from understanding, or feeling the need to understand, the patient context—paradoxically, anonymization is a move designed to protect participants, but has consequences for the ability to visualize and respond to the patient context across the trading zone, constraining the development of moral imagination (Gorman, Werhane, and Swami 2009). 
From the perspective of a charitable funder of smart care research, the voice of those living with dementia should be included as a specialist form of expertise in its own right within the trading zone, although it would require the skilled work of translation: So I think there is perhaps in my mind a role for the translator in that trading zone. So it's kind of the United Nations, isn’t it, when you’ve got a lot of people sat around talking different languages, and I think either you need quite a lot of skill and commitment and discipline from people to be able to do that, or you need somebody who can do that translation piece. (RandD 10 follow up 1).

There were many different stakeholders including those beyond the research and development setting, and achieving a workable system that could be deployed in real world contexts under practical and economic constraints was portrayed by a project manager as an aspiration that would require a complex array of skills: I think that shows all of the different stakeholders from your academics, from ... scientists working in the lab to try and get this urinary detection component, to working with the council and the GPs so that they can actually input into what would a system look like that they could actually use. It kind of brings together all of those stakeholders to have those conversations while we’re also developing the technology at the same time. (RandD 5)

Across interviews with researchers, developers and healthcare professionals, a picture emerged of disparate disciplinary cultures and sets of expertise that were brought together into a coherent overall project through a range of infrastructural mechanisms, including scheduled meetings targeted at particular issues, the brokers who moved between groups and stakeholders and translated concerns between them, and the members who acted as proxies for the voice of service users and carers. Researchers and developers worked with and through key boundary objects (Star and Griesemer 1989) such as the data repository and the ethically approved protocol. The project operated as a fractionated trading zone: the identities of the various specialist groups were maintained, but the groups were able to coordinate and progress toward aspects of a common goal dictated by the research center's overarching grant funding. Interactional expertise across specialisms was developed in a somewhat patchy fashion and was particularly located with the brokers who moved between groups.

Discussion: Bounded Responsibilities for Ethical Smart Care

Discourses of ethics can play a role in making sense of oneself and one's peers in a working context (Kornberger and Brown 2007), and discussions in interview settings should be understood in that light rather than as a reflection of some underlying fixed ethical stance. In interviews, many research and development participants said they were interested to have these discussions about ethics that were different from those in their everyday work, and expressed concern that their ideas on the ethics of smart care might not be fully developed or suitably informed. Similarly, the AI researchers interviewed by Govia (2020) tended to treat ethics as something outside of their technical practice. As Orr and Davis (2020) found, AI researchers tended to offer quite a constrained version of their own ethical responsibilities. Having initially distanced themselves from expertise in ethics or discussion of ethics as covered in formal ethical review processes, many interviewees suggested that the project as a whole shared an overall ethical commitment to achieving better outcomes for people living with dementia. Commitment to this overarching goal was tempered by notions of the boundaries of what they could influence and what was out of their control.
This perspective was captured in a contribution by a participant in the final focus group with researchers and developers, summarizing the group discussion: I think there was a sense of “there needs to be a core set of values that we adhere to and understand and acknowledge that's agreed within the center” but I think there was then [a discussion of] empowering the individuals to translate those ethical values into their own practice and providing training and having the confidence and know-how for people to then feel like they know how that applies within their specialism and feel confident to speak up or challenge if there's something that they feel maybe doesn’t apply or doesn’t comply with those ethical values that have been set out. (RandD focus group, P1)

Participants’ ideas of their ethical responsibilities tended to be specific to a particular set of expertise and also to be positioned in a specific temporality within the overall time span of project development and deployment. For service users and carers, the system they were offered had to be taken on trust and they had little interest in what practical steps might have been taken in the past to ensure its ethical status. By contrast, researchers and developers saw themselves as having little practical responsibility for the ethical aspects of future contexts of deployment. AI systems are often designed with a focus on solving a specific problem or advancing the fundamental theory of machine learning and intelligent systems. Developers of such systems may not necessarily feel responsible for downstream implications of the technology. Specific components of ethical reasoning often formed a section within publications describing the project, but beyond these brief accounts there was no apparent infrastructure for preserving a record of decisions taken on ethical grounds across the time span of the project. Such a record could display and hold to account the various forms of values-based decisions that had been taken within disciplinary silos, and make these available to future users or to decision-makers who might be commissioning the system. Those involved in the development of smart care expressed a sense of responsibility for working according to their own disciplinary notion of ethics, but the values-based decisions that they took were often not made transparent in a systematic fashion.

Conclusion: AI Ethics and Interdisciplinary Trading Zones

Attending to how the complex interdisciplinary work of developing and deploying an AI-enabled system of smart care is achieved is crucial if we are to have any realistic prospect of making the conversations around ethics more inclusive and more effective. Schiff et al. (2021) identify the plurality of disciplines involved in AI development and the resulting lack of unified approach as key in obstructing passage from principles to practice in responsible AI. This challenge emerges here, in the patchwork of ethical responsibilities positioned across the timeframe of development and deployment that interviewees portray as only represented to a limited extent within a fractionated trading zone. Interviewees also, however, point toward solutions, highlighting the importance of key infrastructural features that enable ethical issues to be addressed effectively across a trading zone, including shared boundary objects, meetings between different specialisms, and skilled personnel adept at translating between the concerns of different groups and acting as proxies representing the perspective of stakeholders.

Slota et al. (2023) found that the work of ethics within AI development risked being invisibilized and undervalued. They also found that ethical practice took diverse and situated forms, according to both professional expertise and personal values. This observation resonates with the case study presented here, in which the role of those who can speak for the service user and carer perspective was key within the trading zone. Anonymization became a device oriented toward a set of ethical values protecting participant interests but limiting the development of certain kinds of conversation about the lived experience of service users within the trading zone. Formal ethical review legislates for considering certain kinds of ethical issues, particularly informed consent, but risks being seen as a merely bureaucratic process if not viewed as part of the trading zone, and has a minimal role in considering future implications.

By looking at ethical practice and perspectives across a single case study we can build on the work of Slota et al. (2023), arguing that ethical practice in AI is diverse and situated, and needs to be supported by an infrastructure for collaboration that is able to support ethical conversations across the specialisms involved, and offers a transparency that makes values-based decisions accountable. Stakeholder engagement is promoted as an answer to ethical challenges particularly where acceptability of AI is concerned, but proves not to be a straightforward matter in terms of who is involved, how their interests are represented, or how the issues important to them might be addressed. There are multiple constraints on meaningful and sustainable involvement of service users and carers, and on providing actionable input: efforts may be better invested in supporting development of expertise in translating their concerns and bringing these into the trading zone. Infrastructures to support ethical AI development and deployment are needed. These may include structures of anticipatory review and pre-implementation audit, but they also need to provide skilled support to encourage imaginative conversation about ethics throughout the process and across the project, in an extended version of the ongoing audit Raji et al. (2020) propose, mitigating any tendency for ethics to be invisibilized, thought of as happening elsewhere, or treated as having been once done and then forgotten.

Acknowledgments

The authors are grateful for the generous and insightful contributions made by interviewees to this study and to anonymous reviewers who have helped to sharpen and clarify the argument presented here.

Data Availability

Anonymized and redacted interview transcripts are available on request via the UK Data Archive SN 856529.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the APEX award scheme (Royal Society, British Academy, Royal Academy of Engineering, Leverhulme Trust), Medical Research Council, Alzheimer’s Society and Alzheimer’s Research UK (grant numbers APX_R1_201173, UKDRI-7002).