Abstract

Objectives

High-riding jugular bulb (HRJB) is an anatomical variation in the petrous temporal bone (PTB) that can be defined as the presence of the jugular bulb at the level of the basal turn of the cochlea in the axial plane. The presence of HRJB can increase the risk of injury during middle ear surgery and may contribute to the pathogenesis of conductive and sensorineural hearing loss. This study investigated the accuracy of a deep learning convolutional neural network (CNN) algorithm in identifying HRJB on axial PTB computed tomography (CT) scans.

Methods

Petrous bone CT scans were retrospectively obtained from consecutive patients imaged in January 2024 from an Australian tertiary hospital. Two blinded investigators – a board-certified otolaryngologist and an otolaryngology resident – labelled the images as either HRJB or normal. Training and test sets were created in a 2:1 ratio. Microsoft Azure's Custom Vision platform was utilised to devise the deep learning algorithm.

Results

2400 images were collected from left, right and flipped axial PTB CT scans of 600 patients. After exclusions, 2367 final images were used. The CNN achieved an overall accuracy of 0.948 (95% CI 0.930–0.962), with a sensitivity of 92.9% and specificity of 95.5% for HRJB identification.

Conclusion

The CNN identified HRJB on axial PTB CT scan images with a high degree of accuracy. The robustness of this approach depends on accurate labelling of datasets and rigorous cross-validation with a dedicated testing set. Future studies could explore CNNs for detecting other anatomical variations, potentially enhancing diagnostic accuracy and improving patient outcomes in otolaryngology.

Keywords

Introduction

The jugular bulb represents the confluence of multiple venous dural sinuses in the skull base, predominantly the transverse and sigmoid sinuses, and drains into the internal jugular vein. The jugular bulb is typically located within the petrous temporal bone in the floor of the middle ear. Anatomical variants include a dehiscent jugular bulb and a high-riding jugular bulb (HRJB). HRJB has been variably defined according to the position of the most cephalad portion of the jugular bulb in the axial plane: at the level of the basal turn of the cochlea 1 (as seen in Figure 1); within 2 mm of the internal acoustic canal 2 ; or above the level of the inferior portion of the tympanic annulus. 3 The respective incidences under these definitions are 38%, 63% and 11.5%.2–4

Diagram of an axial temporal bone CT scan at the basal turn of the cochlea with (A) no high-riding jugular bulb and (B) a high-riding jugular bulb.

The surgical significance of an HRJB is the increased risk of injury it poses during middle ear surgery when accessing the hypotympanum. Occasionally, it can make access to the round window challenging, such as in cochlear implant surgery. Beyond the operative challenges it poses, an HRJB has been associated with sensorineural and conductive hearing loss, vertigo (including Meniere's disease) and pulsatile tinnitus. 5 It is thought that conductive hearing loss may ensue from contact of an HRJB with the tympanic membrane, ossicles or round window, or rarely as a pseudo-conductive loss due to a third-window effect, such as in superior semicircular canal dehiscence.6,7 Sensorineural hearing loss may result from compression of the vestibular aqueduct.6,8 An HRJB may also be misdiagnosed as a vascular middle ear tumour, such as a glomus tumour. Other anatomical variants associated with an HRJB include jugular bulb dehiscence and labyrinthine dehiscence, including dehiscence of the posterior semicircular canal,4,9 and the vestibular aqueduct. 3

Due to the significance of an HRJB, especially when considering surgical approaches and risk of injury, its presence should be routinely identified and reported by radiologists to assist with surgical planning. While the surgeon is well attuned to the relevance of a high-riding jugular bulb, it is not always reported by the radiologist. Owing to the fine nature of structures within the temporal bone, it may also be variably difficult for the radiologist to identify. 10

Deep learning (DL) has emerged as a transformative tool in operative planning – optimising surgical workflow and enhancing precision and safety. 11 It is used to efficiently identify anatomical landmarks, diseases and critical areas and to automate surgical video documentation.12–16

Convolutional neural networks (CNNs) utilise ‘kernels’ or filters that are applied iteratively across an image and are adept at identifying specific features, such as edges and textures. These filters process the image as matrices, and the resulting feature maps drive the network's classification and detection capabilities. 17 Integrating CNNs with computer vision allows for analysis of various preoperative and operative investigations and media.
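To make the role of a kernel concrete, the following is a minimal sketch, not the network used in this study. It assumes NumPy and a hand-written Sobel filter (a CNN would learn its filter weights from data), and the function name `cross_correlate2d` and the toy image are illustrative only.

```python
import numpy as np

def cross_correlate2d(image: np.ndarray, kernel: np.ndarray) -> np.ndarray:
    """Slide `kernel` over `image` without padding and return the response map.

    As in most CNN libraries, the kernel is applied without flipping
    (cross-correlation rather than strict mathematical convolution).
    """
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = np.zeros((out_h, out_w), dtype=float)
    for i in range(out_h):
        for j in range(out_w):
            # Element-wise product of the kernel with the patch beneath it
            out[i, j] = np.sum(image[i:i + kh, j:j + kw] * kernel)
    return out

# Hand-written vertical-edge filter (Sobel); illustrative, not learned.
SOBEL_X = np.array([[-1, 0, 1],
                    [-2, 0, 2],
                    [-1, 0, 1]], dtype=float)

# Toy 5x6 "image": dark left half (0) and bright right half (1).
toy_image = np.zeros((5, 6))
toy_image[:, 3:] = 1.0

response = cross_correlate2d(toy_image, SOBEL_X)
print(response[0])  # strong response only where the window straddles the edge
```

The response is zero over uniform regions and non-zero only where the filter window crosses the dark-to-bright boundary, which is the sense in which a filter "detects" an edge.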

This study aims to determine the feasibility of using a deep learning CNN algorithm to classify the presence or absence of an HRJB on CT temporal bone imaging.

Methods

Institutional ethics approval was obtained from the Western Sydney Local Health District Ethics Committee (2021/PID03049), which waived the requirement for informed consent due to the use of de-identified data.

De-identified, prospectively collected adult-only (ages 18–85) axial CT petrous temporal bone scans were retrieved from a Western Sydney Local Health District tertiary hospital radiology picture archiving and communication system (PACS) between January 2024 and March 2024. All scans were captured on a CT scanner capable of fine slice image acquisition (0.3 mm). Single-slice axial images of the left and right temporal bones at the level of the basal turn of the cochlea were captured using Digital Imaging and Communications in Medicine (DICOM) software and cropped to include each unilateral temporal bone. Exclusion criteria included paediatric patients, images of poor quality (e.g. motion blur or artefacts), and a history of prior temporal bone surgery such as mastoidectomy or cochlear implantation. The dataset was augmented by flipping each image in the vertical plane.
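The flip augmentation described above can be sketched as follows. This is an illustration under assumptions: images are taken to be 2D NumPy arrays, the flip is assumed to be a left-right mirror (`np.fliplr`; `np.flipud` would be the alternative if a top-bottom flip was intended), and `augment_with_flip` is a hypothetical helper name.

```python
import numpy as np

def augment_with_flip(images):
    """Return the original images followed by a mirrored copy of each.

    Assumption: the flip is a left-right mirror of the axial slice.
    """
    originals = list(images)
    flipped = [np.fliplr(img) for img in originals]
    return originals + flipped

# Two toy 2x3 "slices" standing in for cropped unilateral temporal bone images.
slices = [np.arange(6).reshape(2, 3), np.ones((2, 3))]
augmented = augment_with_flip(slices)
print(len(slices), "->", len(augmented))  # the dataset size doubles
```

Doubling by mirroring is consistent with the image count reported in the Results, where two sides per patient become four images per patient.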

Two investigators, including a board-certified otolaryngologist (author ZH) and an otolaryngology resident (author KO), manually labelled the images as either HRJB or normal jugular bulb. HRJB was defined as the presence of any part of the dome of the jugular bulb at the axial slice corresponding to the basal turn of the cochlea. The images from the HRJB and normal datasets were split into a training and test set in a 2:1 ratio.
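A 2:1 split applied separately to each class can be sketched as below. The helper name `split_two_to_one`, the fixed seed and the rounding rule are assumptions for illustration; the exact procedure used to allocate images is not described beyond the ratio, so this sketch is not expected to reproduce the reported set sizes exactly.

```python
import random

def split_two_to_one(items, seed=0):
    """Shuffle `items` and return (training, test) lists in roughly a 2:1 ratio."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)  # seeded for reproducibility
    n_train = round(len(shuffled) * 2 / 3)
    return shuffled[:n_train], shuffled[n_train:]

# Hypothetical file names; each class is split on its own so that both
# classes appear in the training and the test set in the same proportion.
hrjb_files = [f"hrjb_{i}.png" for i in range(30)]
normal_files = [f"normal_{i}.png" for i in range(60)]

hrjb_train, hrjb_test = split_two_to_one(hrjb_files)
normal_train, normal_test = split_two_to_one(normal_files)
print(len(hrjb_train), len(hrjb_test), len(normal_train), len(normal_test))
```

Splitting per class keeps the class balance of the test set close to that of the full dataset, which matters when predictive values are reported.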

We employed Microsoft Azure's Custom Vision (Redmond, Washington) platform to train and test an algorithm's identification capabilities with two different datasets. Custom Vision is an off-the-shelf, pre-trained convolutional neural network hosted by Microsoft Azure. This platform creates machine learning (ML) algorithms that perform classification tasks on datasets where n ≥ 50 images. The training set was uploaded onto the platform and fed to the algorithm using the 1-h training mode.

Following training, a separate unseen test set was uploaded to assess the trained algorithm's performance in HRJB identification. The algorithm's classification was recorded in a Microsoft Excel (Redmond, Washington) spreadsheet and compared to the prelabelled manual responses.

Key performance metrics, including precision, recall, and mean average precision, were obtained from the initial training set. The test set was subsequently uploaded onto the Custom Vision platform to evaluate accuracy post-training, and class label probability was recorded in MedCalc. A probability threshold of >65% was set to represent positive identification. This allowed the calculation of sensitivity, specificity, positive and negative predictive values, and F1 score. A receiver operating characteristic (ROC) curve was generated using MedCalc, applying the methodology of DeLong et al. to model the algorithm's accuracy. 18 False positive and false negative images were re-examined by the investigators and qualitatively assessed to consider causes of the misclassification.
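The thresholding step and the metrics that follow from it can be sketched as below. This is an illustration using the standard definitions, not the MedCalc workflow itself; the function name `diagnostic_metrics` and the toy probabilities are hypothetical, and labels are assumed to be coded 1 for HRJB and 0 for normal.

```python
def diagnostic_metrics(probs, labels, threshold=0.65):
    """Binarise HRJB probabilities at `threshold` and compute standard metrics.

    probs  : model probability of HRJB for each test image
    labels : manual reference labels (1 = HRJB, 0 = normal)
    """
    preds = [p > threshold for p in probs]
    tp = sum(1 for pred, y in zip(preds, labels) if pred and y == 1)
    fp = sum(1 for pred, y in zip(preds, labels) if pred and y == 0)
    fn = sum(1 for pred, y in zip(preds, labels) if not pred and y == 1)
    tn = sum(1 for pred, y in zip(preds, labels) if not pred and y == 0)

    def ratio(num, den):
        # Avoid division by zero when a class or prediction is absent
        return num / den if den else float("nan")

    return {
        "tp": tp, "fp": fp, "fn": fn, "tn": tn,
        "sensitivity": ratio(tp, tp + fn),
        "specificity": ratio(tn, tn + fp),
        "ppv": ratio(tp, tp + fp),
        "npv": ratio(tn, tn + fn),
        "f1": ratio(2 * tp, 2 * tp + fp + fn),
        "accuracy": ratio(tp + tn, tp + fp + fn + tn),
    }

# Toy data: three HRJB images followed by three normal images.
toy_probs = [0.90, 0.70, 0.60, 0.20, 0.80, 0.10]
toy_labels = [1, 1, 1, 0, 0, 0]
metrics = diagnostic_metrics(toy_probs, toy_labels)
print(metrics)
```

In the toy data, the HRJB image scored at 0.60 falls below the threshold and counts as a false negative, while the normal image scored at 0.80 counts as a false positive.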

Results

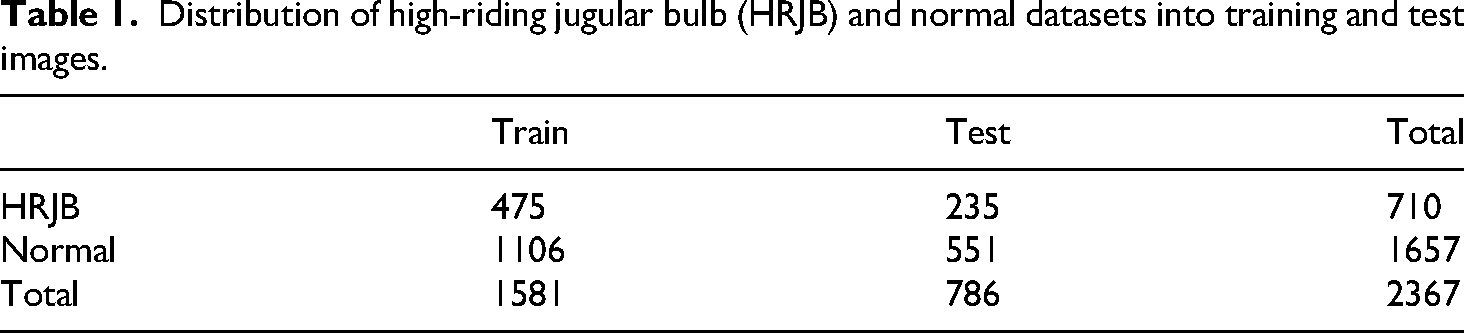

Scans from a total of 600 patients were retrieved. After left and right axial slices were identified and flipped, a total of 2400 images were obtained. Thirty-three images were excluded for poor image quality, yielding a final dataset of 2367 images. The final dataset contained 1657 normal images and 710 HRJB images. These datasets were further divided randomly in a 2:1 ratio into training and test sets as follows: HRJB set included 475 training images and 235 test images, and the normal set included 1106 training images and 551 test images, as presented in Tables 1 and 2.

Distribution of high-riding jugular bulb (HRJB) and normal datasets into training and test images.

Confusion matrix demonstrating performance of the model at a probability threshold of 65%.

The overall accuracy of the model was 0.948 (95% CI 0.930–0.962). At a probability threshold of 65% the sensitivity was 0.929 (95% CI 0.887–0.957) and the specificity was 0.955 (95% CI 0.934–0.970).

The positive predictive value was 0.897 (95% CI 0.856–0.928), and the negative predictive value was 0.969 (95% CI 0.951–0.980).
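For readers unfamiliar with how an interval around a proportion such as sensitivity or specificity is obtained, the following sketch computes a Wilson score interval. This is an assumption for illustration only: the interval method used by the statistical software is not stated here and may differ (for example, an exact binomial interval), and the counts in the example are hypothetical.

```python
import math

def wilson_interval(successes: int, total: int, z: float = 1.96):
    """Wilson score interval for a binomial proportion (z = 1.96 for ~95%)."""
    if total <= 0:
        raise ValueError("total must be positive")
    p = successes / total
    denom = 1 + z ** 2 / total
    centre = (p + z ** 2 / (2 * total)) / denom
    half_width = (z / denom) * math.sqrt(
        p * (1 - p) / total + z ** 2 / (4 * total ** 2)
    )
    return centre - half_width, centre + half_width

# Hypothetical example: 50 correct classifications out of 100 images.
low, high = wilson_interval(50, 100)
print(f"{low:.3f} to {high:.3f}")
```

The interval narrows as the number of test images grows, which is one reason a large held-out test set gives tighter estimates of sensitivity and specificity.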

The ROC curve with 95% CI is shown in Figure 2, with an area under the curve of 0.986 (95% CI 0.975–0.993; SE 0.006), indicating excellent model performance for distinguishing HRJB from its absence. This curve demonstrates how sensitivity and specificity vary as the probability threshold is adjusted.
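The area under the ROC curve has a direct interpretation: it is the probability that a randomly chosen HRJB image receives a higher score than a randomly chosen normal image. The sketch below computes that pairwise (Mann-Whitney) statistic, on which the DeLong approach is built. It is illustrative only: it does not compute the standard error or confidence interval, and the function name `roc_auc` and the toy scores are hypothetical.

```python
def roc_auc(scores_pos, scores_neg):
    """AUC as the fraction of (positive, negative) pairs ranked correctly.

    Ties between a positive and a negative score count as half a correct pair.
    """
    if not scores_pos or not scores_neg:
        raise ValueError("both classes need at least one score")
    wins = 0.0
    for p in scores_pos:
        for n in scores_neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(scores_pos) * len(scores_neg))

# Toy scores: one HRJB image (0.4) is ranked below one normal image (0.5).
auc = roc_auc([0.9, 0.8, 0.4], [0.5, 0.3, 0.1])
print(round(auc, 3))
```

Because this statistic considers every possible ranking of positive against negative images, it summarises performance across all thresholds rather than at the single 65% operating point.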

Receiver operating characteristic (ROC) curve at 95% confidence intervals (CI).

Discussion

This is the first study using deep learning to classify the presence or absence of a high-riding jugular bulb in temporal bone imaging. The results demonstrate the robust potential of DL algorithms in classifying HRJB vs normal anatomical variants in temporal bone CT scans with an overall accuracy of 94.8% and sensitivity and specificity of 92.9% and 95.5%, respectively. The high positive predictive value (89.7%), high negative predictive value (96.9%) and AUC (0.986) further validate the algorithm's efficacy and reliability. This is clinically relevant given the surgical risks associated with unrecognised HRJBs during otologic procedures, such as haemorrhage. 19

The algorithm's accuracy is comparable with other studies that have explored the use of computer vision in analysing CT scans of the temporal bone. For instance, our group previously investigated the ability of an AI algorithm to classify pneumatisation of the mastoid process, for which the model achieved an accuracy of 95.4%. 15 Further, our results compare favourably with other related studies in the head and neck region, such as a paper focusing on concha bullosa (83%), anterior ethmoidal artery (81%), sphenoid sinus (93%), and the osteomeatal complex on paranasal sinus CT scans (85%).20,21 We have also published a previous systematic review of the accuracy of AI in radiology relevant to ENT surgeons. 22

The reliability of our results is also supported by a large sample size of 2400 images post data augmentation. Artificial intelligence algorithms intrinsically risk perpetuating biases which can arise from a non-rigorous study design and sampling biases. Our study ameliorated this issue through the use of consecutive sampling, ensuring there was no selective inclusion of patients, which could skew the incidence of HRJB. A larger sample size also reduces the risk of overfitting, making the algorithm more generalisable to other datasets. 23

The prevalence of HRJB varies based on its definition. In this study, we adopted the definition of presence of the jugular bulb at the level of the basal turn in the axial plane (Figure 3) as it is a widely adopted definition and results in a higher incidence, which assists ML analysis.4,24 On a retrospective review of 229 computed tomography (CT) scans (458 ears), Irabien-Zuniga et al. found the incidence of HRJB to be 38.4% at the level of the basal turn. 25 Of note, prevalence was higher in women (44.6% vs 33.6%), and bilateral HRJB was noted in 7.9% of patients. 25 Our dataset yielded an incidence of approximately 30% (710 of 2367 images), providing a robust sample for training our AI algorithm, with a substantial number of images in both the normal (1657 images) and HRJB (710 images) sets, and for more rigorous testing through cross-validation.

Axial temporal bone CT slice of a (A) right-sided high-riding jugular bulb (HRJB) at the level of its first presence at the slice corresponding to the basal turn (BT) of the cochlea and (B) left-sided normal axial temporal bone CT.

Recently, the use of machine learning algorithms has been explored as a means to automate and improve radiology reporting.12–16 ML is a branch of artificial intelligence that uses computers to perform analyses through supervised training with structured data. Deep learning is a subset of ML, which conducts complex analyses on usually unstructured data, such as images and videos, using multi-layered ‘neural network’ architectures. This architecture is inspired by the human visual cortex and brain, 26 enabling sophisticated pattern recognition and decision-making.

There is a lack of published DL applications involving the temporal bone. Petsiou et al. provide the only comprehensive overview of AI applications in temporal bone imaging, summarising findings from 37 studies. 27 These include 15 on temporal bone image segmentation, 14 on middle ear diseases, 2 on tinnitus and balance disorders and 6 on vestibular schwannomas. The systematic review highlights a growing trend toward incorporating AI in temporal bone imaging. The prevailing method involves supervised learning, with experts manually labelling images, followed by algorithm training and cross-validation with a validation set. Among the various CNNs employed in this field, U-NET has emerged as a popular choice for segmentation, and ResNet/VGG-16 are frequently used for disease detection. 27 There is growing evidence that these AI systems can be a viable tool for clinical application, enhancing diagnostic accuracy and efficiency in medical imaging.

One other related study analysed the ability of CNNs to diagnose chronic otitis media on temporal bone computed tomography (CT). 28 The model had a high degree of diagnostic accuracy, which exceeded the ability of six trained clinicians, including two otologists, three general otolaryngologists and one radiologist. Additionally, our group previously reported applications that identified the presence or absence of mastoid pneumatisation and identified key anatomical structures in the temporal bone.14,15 Identifying anatomical variations in the temporal bone presents a challenging endeavour for even experienced specialist otolaryngologists and head and neck radiologists.

Building upon the high level of accuracy demonstrated by this model, future studies can investigate other anatomical variations of the temporal bone such as an anteriorly located sigmoid sinus, deep sinus tympani, enlarged cochlear aqueduct and an enlarged internal auditory meatus. 29 The incidences of these variations are all low, at 2.94%, 5.01%, 0.58%, and 1.76%, respectively, 29 so exploring them can test the model's effectiveness in detecting less common anatomical variations, further validating its utility in clinical settings. By integrating models trained for specific tasks, the approach could evolve to perform complex multitask segmentation, detection and classification with high precision.

Future studies can also consider testing this model on different imaging modalities such as MRI. MRI offers distinct advantages over CT in certain clinical scenarios, such as assessing marrow in the setting of skull base osteomyelitis or differentiating space-occupying lesions. 30 Additionally, DL analysis of 3D visualisations has gained prominence in recent years. Vaidyanathan et al. used a 3D U-Net model to effectively segment the inner ear with a high Dice coefficient. 31 This success indicates potential for incorporating 3D capabilities into our model to facilitate improved mapping and analysis of anatomy.

The main limitations of this study include the absence of an external validation set and the reliance on data from a single institution, which may restrict the generalisability of the findings. Another limitation is the absence of demographic information. Variations in temporal bone anatomy can occur based on factors such as ethnicity, age, and gender, so the lack of such data may further limit the model's generalisability. Future research could address this by conducting multivariate analysis to gain more detailed insights into how demographic variables affect the model's performance, potentially enhancing its accuracy and applicability across diverse populations. This is particularly important not only for this model, but for all AI algorithms aiming for practical integration into routine medical practice.

Conclusion

This study successfully applies DL to identify high-riding jugular bulbs on temporal bone CT scans, achieving a high overall accuracy of 94.8%, sensitivity of 92.9%, and specificity of 95.5%. Having validated the efficacy of our approach with this anatomical variation, the potential for extending it to other variations is promising. Future enhancements could include refining the model with different imaging modalities, incorporating 3D visualisations and including demographic information. These enhancements may ensure the model's broader applicability and assimilation into routine clinical practice.

Footnotes

Author contributions

Concept – Z.H.; design – Z.H.; supervision – F.R., N.S.; materials – Z.H., K.O.; data collection and/or processing – Z.H., K.O., F.A., S.K., M.L.; analysis and/or interpretation – Z.H., K.O., S.K., M.L.; literature search – Z.H., K.O., S.K., M.L.; writing – Z.H., K.O.; critical review – Z.H., K.O., N.S.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability

The datasets generated during and/or analysed during the current study are available from the corresponding author on reasonable request.