Abstract

Objective

In prior research, we employed artificial intelligence (AI) to distinguish different anatomical positions in the airway under bronchoscopy. Here, we aimed to leverage AI to identify different types of airway stent.

Methods

We used the Vision Transformer model (an AI architecture) for deep learning of imaging data from patients who underwent bronchoscopy for airway stent implantation from January 2010 to June 2024. Eighty percent of randomized clear images of the upper ends of stents from 662 patients were used to train the model to recognize three main types of airway stent (T-shaped silicone, silicone, and metal-covered), and to determine if the stents were Y-shaped. The remaining 20% of clear images were utilized for validation.

Results

A total of 1254 bronchoscopic images of the upper ends and interiors of stents from 662 patients with different types of stents were analyzed. These types of stents were T-shaped silicone (70 patients), Y-shaped silicone (121), non-Y-shaped silicone (196), Y-shaped metal-covered (67), and non-Y-shaped metal-covered (208). A total of 662 bronchoscopic images depicting the upper ends of stents were utilized to identify three primary types of stents: T-shaped silicone, all silicone, and all metal-covered. The mean accuracy for recognizing these three types was 98.5%, with individual accuracies of 93.3% for T-shaped silicone, 98.4% for all silicone, and 100% for all metal-covered stents. The area under the curve value for these three types was >0.99. Additionally, 592 images of stent interiors were employed for training and validation to determine if they were Y-shaped, and if they could be categorized further into Y-shaped silicone, non-Y-shaped silicone, Y-shaped metal-covered, or non-Y-shaped metal-covered stents. The accuracies for identifying Y-shaped silicone stents and Y-shaped metal-covered stents were 95.5% and 100%, respectively.

Conclusions

Artificial intelligence technology can differentiate between various types of stent utilizing bronchoscopy images. The trained model holds potential to improve quality control in future clinical applications.

Introduction

The bronchoscope was invented by Killian. 1 With the technological advancements over the past century, several novel technologies (e.g. endobronchial ultrasound [EBUS], 2 radial EBUS,3,4 electromagnetic navigation bronchoscopy, 5 virtual bronchoscopic navigation,3,6 ultrathin bronchoscopy, 7 transparenchymal nodule access, 8 and robotic bronchoscopy9,10) have been developed to assist clinicians in the diagnosis and treatment of various diseases.

Artificial intelligence (AI) is transforming lives. Few AI-associated studies using natural bronchoscopy images have been published. Matava et al. 11 conducted an AI-based bronchoscopic study to distinguish between the vocal cords and the trachea. Another study conducted by anesthesiologists aimed to help define various intubation positions. 12 We have shown that an AI method could be employed to automatically find airway anatomical positions 13 and tracheobronchopathia osteochondroplastica (a rare disease of the airways) 14 under bronchoscopy.

In the future, AI is highly likely to enable automated bronchoscopy procedures.15–17 Previously, we employed AI technology to distinguish different anatomical positions in the airway under bronchoscopy. However, we did not undertake AI recognition under bronchoscopy of airways containing various types of stents.

Herein, our study utilized an AI architecture to automatically distinguish between the primary types of stents. This strategy could facilitate automated bronchoscopy examinations by machines in the future.

Methods

The protocol for this retrospective study was approved (ES-2023-028-01) by the Ethics Committee of the First Affiliated Hospital of Guangzhou Medical University (Guangzhou, China). We de-identified all patient details. The reporting of our study conforms to STROBE guidelines. 18 We conducted our study in accordance with the Helsinki Declaration of 1975, as revised in 2024.

We focused on the application of AI for the classification of various types of stent using bronchoscopic images. Data were collected from 662 patients who underwent bronchoscopy at the First Affiliated Hospital of Guangzhou Medical University between January 2010 and December 2024. Clinical reports and bronchoscopic images confirmed the presence of a stent in the airways. Retrospective analyses were conducted on bronchoscopic images of five types of airway stent: T-shaped silicone (opaque white silicone material); Y-shaped silicone (transparent silicone material); non-Y-shaped silicone (transparent silicone material); Y-shaped metal-covered; and non-Y-shaped metal-covered. Our AI model was utilized for deep learning of bronchoscopic images to classify these types of airway stents. The processes are shown in Figure 1.

Strategies for recognizing different types of stents by using bronchoscopy images. First, we used the image at the top of the stent to distinguish between silicone stents, T-shaped silicone stents, and metal-covered stents. Second, using within-stent images, the silicone stents were divided into Y-shaped silicone stents and non-Y-shaped silicone stents, and the metal-covered stents were divided into Y-shaped metal-covered stents and non-Y-shaped metal-covered stents.
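The two-step strategy described above can be sketched as a small routing function. This is a minimal illustration only: the label strings, the function name `classify_stent`, and the idea of passing the trained models in as plain callables are assumptions made for the example, not details taken from the study.

```python
from typing import Any, Callable, Mapping

# Illustrative label names; they are not identifiers from the study.
T_SHAPED, SILICONE, METAL_COVERED = "t_shaped_silicone", "silicone", "metal_covered"

FINAL_LABELS = {
    (SILICONE, True): "Y-shaped silicone",
    (SILICONE, False): "non-Y-shaped silicone",
    (METAL_COVERED, True): "Y-shaped metal-covered",
    (METAL_COVERED, False): "non-Y-shaped metal-covered",
}


def classify_stent(
    upper_end_image: Any,
    interior_image: Any,
    material_model: Callable[[Any], str],
    y_shape_models: Mapping[str, Callable[[Any], bool]],
) -> str:
    """Map one upper-end image and one interior image to one of five stent types."""
    # Step 1: the upper-end image decides among the three primary types.
    material = material_model(upper_end_image)
    if material == T_SHAPED:
        return "T-shaped silicone"  # no Y-shape step for T-shaped stents
    if material not in y_shape_models:
        raise ValueError(f"unexpected step-1 label: {material!r}")
    # Step 2: the interior image decides Y-shaped versus non-Y-shaped,
    # using the binary model that matches the material found in step 1.
    is_y_shaped = bool(y_shape_models[material](interior_image))
    return FINAL_LABELS[(material, is_y_shaped)]
```

In this sketch, `material_model` stands in for the three-class classifier and `y_shape_models` for the two binary classifiers (one for silicone, one for metal-covered stents); any callables with those input and output types would fit.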

The T-shaped silicone stents utilized in our study were composed exclusively of opaque white silicone, because transparent T-shaped silicone stents have not been manufactured for several years. In addition, we excluded bare-metal stents from our study because of (i) the potential for tumor ingrowth and (ii) their infrequent use in our center.

Inclusion criteria

The overall inclusion criteria were (1) patients aged > 14 years; (2) patients with stents who underwent bronchoscopy which elicited clear image data; (3) bronchoscopic images of the upper end of the stents were utilized to differentiate among three primary types of stents (T-shaped silicone, all silicone, and all metal-covered); (4) bronchoscopic images of the stent interior were employed to distinguish between two subgroups: Y-shaped silicone stents and non-Y-shaped silicone stents, and Y-shaped metal-covered stents and non-Y-shaped metal-covered stents.

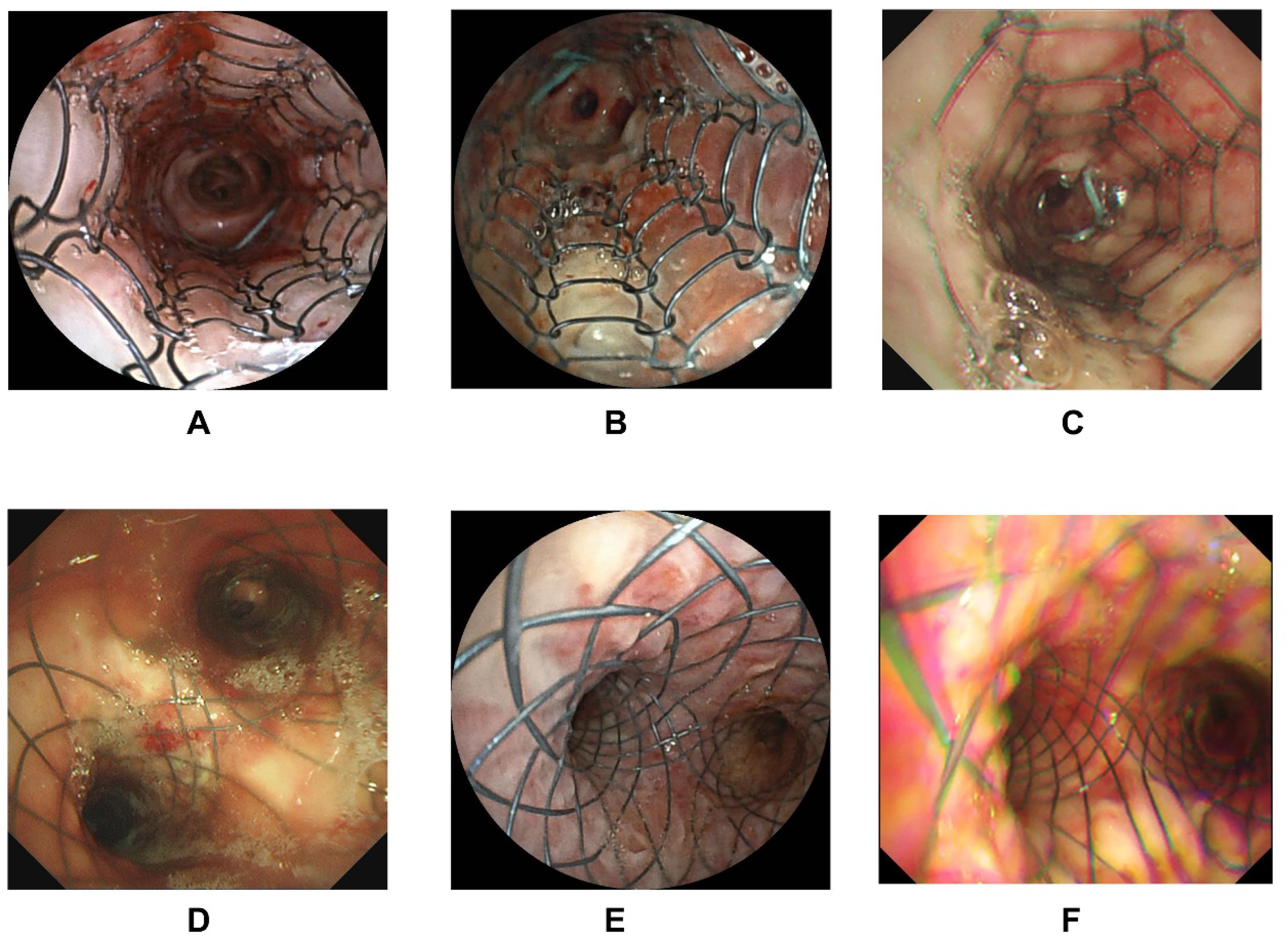

The inclusion criteria for clear images were: (1) bronchoscopic images of the upper end of the stents must include the stent edges (Figure 2 provides sample images of the upper end of stents); (2) images of the interior in Y-shaped stents must exhibit distinguishing characteristics to differentiate the shapes (Figures 3 and 4 provide sample images of stent interiors); (3) images should reveal minimal secretions to ensure that the stent characteristics can be identified accurately by AI; (4) if a patient underwent multiple examinations, only one image was included.

Bronchoscopy images of the upper end of stents. (A–C) Silicone stents, (D–F) T-shaped silicone stents, (G–I) metal-covered stents.

Bronchoscopy images within silicone stents. (A–C) Non-Y-shaped silicone stents, (D–F) Y-shaped silicone stents.

Bronchoscopy images within metal-covered stents. (A–C) Non-Y-shaped metal-covered stents, (D–F) Y-shaped metal-covered stents.

Artificial intelligence model

We used the Vision Transformer, implemented in Python 3.7, for deep learning. The Vision Transformer is a visual model based on the Transformer encoder architecture. It is pretrained in a supervised manner on the ImageNet-21k dataset (at a resolution of 224 × 224 pixels). The pretrained base model extracts features for fine-tuning, and a newly trained “classifier” layer is appended at the end to adapt the model to the tasks of a particular study. At the end of each training epoch, an independent validation set (used for tuning during training) is employed to evaluate the model and compute the current loss. Training continues until no improvement in validation loss is observed for 10 consecutive epochs, at which point the process terminates. The model state with the best validation loss up to that point is selected as the final version and applied to the test set (an independent set for evaluating final metrics) to obtain the corresponding indicators of model performance.
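The stopping rule described above (stop after 10 consecutive epochs without improvement in validation loss, then keep the best state) can be sketched as follows. This is a minimal illustration that works on a precomputed list of validation losses; the function name `select_best_epoch` and the 0-based epoch numbering are assumptions for the example, and the actual training loop of the study is not reproduced here.

```python
def select_best_epoch(val_losses, patience=10):
    """Return (best_epoch, stop_epoch) under patience-based early stopping.

    Epochs are 0-indexed. Training stops once `patience` consecutive epochs
    pass without the validation loss improving on the best value seen so far.
    """
    best_loss = float("inf")
    best_epoch = -1
    epochs_without_improvement = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            # New best validation loss: remember this epoch and reset the counter.
            best_loss, best_epoch = loss, epoch
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                return best_epoch, epoch
    # The loss history ended before the patience limit was reached.
    return best_epoch, len(val_losses) - 1
```

In a real training loop, the model weights would be checkpointed whenever `best_epoch` is updated, so that the state with the lowest validation loss can be restored for evaluation on the test set.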

The training parameters of the model were a learning rate of 5 × 10⁻⁵, a per-device training batch size of 16, and four gradient accumulation steps.
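With a per-device batch size of 16 and four gradient accumulation steps, each optimizer update is based on 16 × 4 = 64 images per device. The sketch below illustrates this on a toy one-parameter model; the function names and the toy model are assumptions for the example, and the number of devices is not stated in the text (the default of one device is a placeholder).

```python
def effective_batch_size(per_device_batch, accumulation_steps, num_devices=1):
    """Number of images contributing to each optimizer step."""
    return per_device_batch * accumulation_steps * num_devices


def mean_gradient(w, xs, ys):
    """Gradient of the mean squared error of the toy model y = w * x."""
    return sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)


def accumulated_gradient(w, xs, ys, micro_batch, accumulation_steps):
    """Average the gradients of consecutive micro-batches before one update."""
    grads = []
    for step in range(accumulation_steps):
        start = step * micro_batch
        stop = start + micro_batch
        grads.append(mean_gradient(w, xs[start:stop], ys[start:stop]))
    return sum(grads) / accumulation_steps
```

For equally sized micro-batches, the averaged gradient equals the gradient of one large batch, which is why accumulation is commonly used to emulate a larger batch when memory is limited.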

The bronchoscopic images of all anatomical positions in the airways were preprocessed by Gaussian filtering (which smooths the image and filters noise 19 ), graphic lightening, and normalization. Briefly, for each image in the training set, the Gaussian filter, a linear filter used for image enhancement, was first applied to suppress Gaussian noise and smooth the image. Next, the black edges of the collected image were removed so that only the effective information was retained. Then, the image was resized to a uniform size suitable for subsequent training. After normalization, features in different dimensions become numerically comparable, which can improve the accuracy of the classifier. Standardization also makes the search for the optimal solution smoother, so that training converges more readily. The confusion matrix and receiver operating characteristic (ROC) curves were calculated to evaluate accuracy.
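The preprocessing order described above (Gaussian filtering, black-edge removal, resizing, normalization) can be sketched in plain Python on a grayscale image stored as a list of rows. All parameter values here (kernel width, darkness threshold, nearest-neighbor resizing, mean and standard deviation of 0.5) are assumptions chosen for illustration; the text does not specify them, and the graphic lightening step is omitted. In practice an image library would normally be used instead of hand-written loops.

```python
import math


def gaussian_kernel(sigma=1.0, radius=2):
    """1-D Gaussian weights that sum to 1."""
    weights = [math.exp(-(i * i) / (2 * sigma * sigma)) for i in range(-radius, radius + 1)]
    total = sum(weights)
    return [w / total for w in weights]


def _blur_rows(img, kernel):
    """Convolve every row with the kernel, replicating edge pixels."""
    radius = len(kernel) // 2
    offsets = range(-radius, radius + 1)
    out = []
    for row in img:
        n = len(row)
        out.append([
            sum(k * row[min(max(j + d, 0), n - 1)] for d, k in zip(offsets, kernel))
            for j in range(n)
        ])
    return out


def transpose(img):
    return [list(col) for col in zip(*img)]


def gaussian_blur(img, sigma=1.0, radius=2):
    """Separable Gaussian filter: blur rows, then columns."""
    kernel = gaussian_kernel(sigma, radius)
    horizontal = _blur_rows(img, kernel)
    return transpose(_blur_rows(transpose(horizontal), kernel))


def crop_black_border(img, threshold=10):
    """Keep the bounding box of rows and columns containing non-dark pixels."""
    rows = [i for i, row in enumerate(img) if max(row) > threshold]
    cols = [j for j, col in enumerate(zip(*img)) if max(col) > threshold]
    if not rows or not cols:
        return img  # entirely dark frame: nothing sensible to crop
    return [row[cols[0]:cols[-1] + 1] for row in img[rows[0]:rows[-1] + 1]]


def resize_nearest(img, height, width):
    """Nearest-neighbor resize to a uniform size."""
    src_h, src_w = len(img), len(img[0])
    return [[img[i * src_h // height][j * src_w // width] for j in range(width)]
            for i in range(height)]


def normalize(img, mean=0.5, std=0.5):
    """Scale 0-255 intensities to [0, 1], then standardize."""
    return [[(p / 255.0 - mean) / std for p in row] for row in img]


def preprocess(img, size=224):
    blurred = gaussian_blur(img)
    cropped = crop_black_border(blurred)
    resized = resize_nearest(cropped, size, size)
    return normalize(resized)
```

Because blurring spreads some intensity into the dark border, the crop threshold interacts with the filter width; a pipeline that crops before filtering would avoid this, but the order shown follows the description in the text.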

Statistical analyses

Categorical variables are expressed as numbers and percentages. Continuous variables are expressed as the mean ± standard deviation.

Results

Baseline characteristics of patients for training and validation

Table 1 delineates the baseline characteristics of the 662 patients with clear bronchoscopic images included in our study. The dataset comprised bronchoscopic images from 374 male patients and 288 female patients. The mean age of the patient cohort was 51.85 ± 16.79 years.

Characteristics of included patients at baseline.

In total, 1254 bronchoscopic images depicting the upper end of a stent and the stent interior were utilized for training and validation. These bronchoscopic images comprised T-shaped silicone stents (upper end of stent: 70), Y-shaped silicone stents (upper end of stent: 121; stent interior: 121), non-Y-shaped silicone stents (upper end of stent: 196; stent interior: 196), Y-shaped metal-covered stents (upper end of stent: 67; stent interior: 67), and non-Y-shaped metal-covered stents (upper end of stent: 208; stent interior: 208) (Table 2).

Characteristics of the included images.

Initially, 662 bronchoscopic images depicting the upper end of a stent from patients were utilized to identify three primary types of stents: T-shaped silicone, all silicone, and all metal-covered. Furthermore, 592 images of the stent interior were employed to distinguish between Y-shaped stents and non-Y-shaped stents.

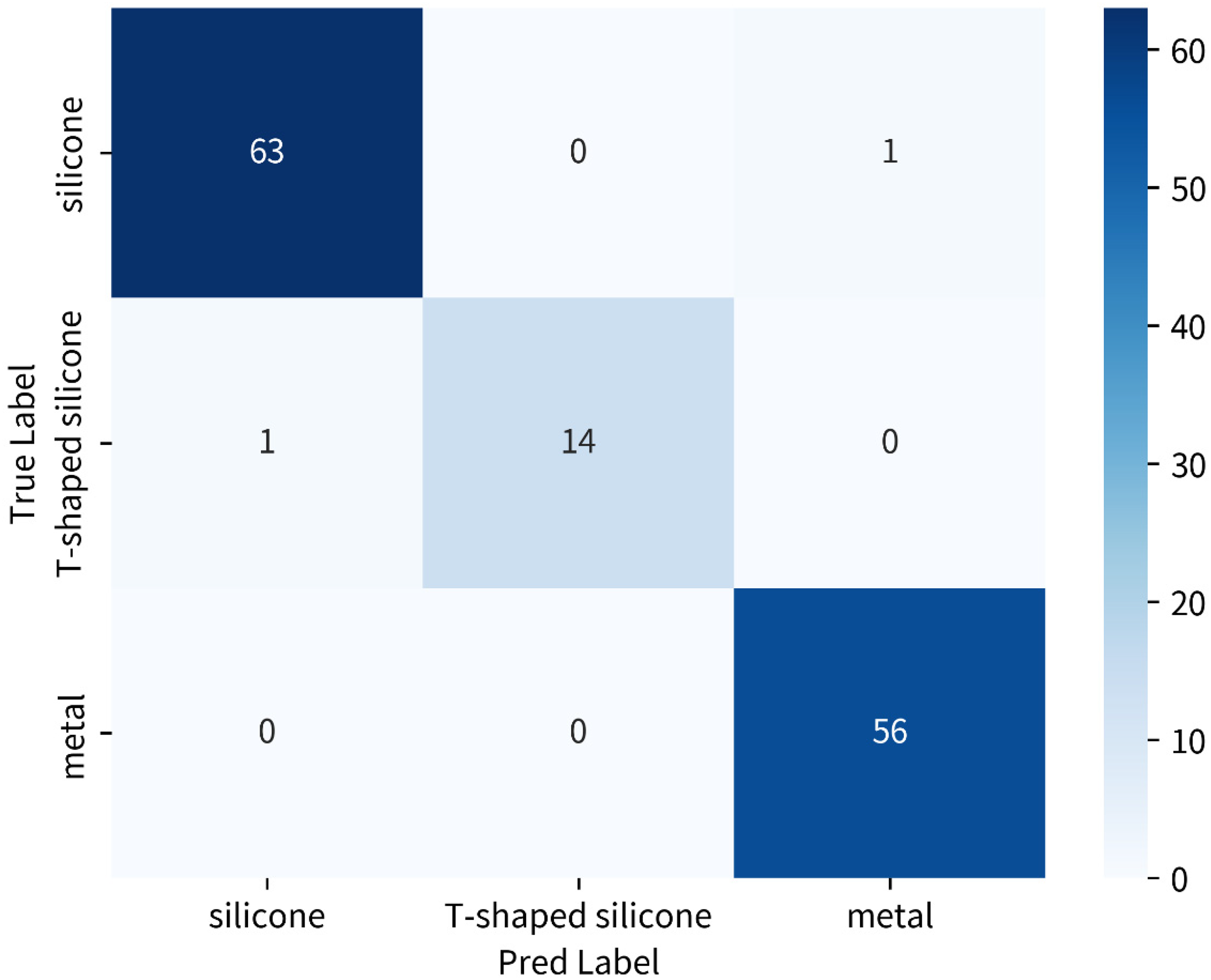

Initial recognition of three primary types of stents

The mean accuracy for recognizing the three primary types of stent was 98.5%. Individual values for accuracy were 93.3% for T-shaped silicone stents, 98.4% for all silicone stents, and 100% for all metal-covered stents. Figure 5 illustrates the confusion matrix representing the accuracy for these three primary stent types. For identifying silicone stents, the precision, recall, and F1-score were 0.98, 0.98, and 0.98, respectively. For identifying T-shaped silicone stents, the precision, recall, and F1-score were 1.00, 0.93, and 0.97, respectively. For identifying metal-covered stents, the precision, recall, and F1-score were 0.98, 1.00, and 0.99, respectively.
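Precision, recall, and F1-score follow directly from a confusion matrix whose rows are true labels and whose columns are predicted labels. The sketch below shows the calculation; the function names are assumptions for the example, and the counts used in the usage note are hypothetical numbers chosen only so that the resulting ratios resemble the reported ones. They are not the study's data, which are shown in Figure 5.

```python
def per_class_metrics(confusion, labels):
    """Precision, recall, and F1 per class.

    confusion[i][j] is the number of images whose true class is labels[i]
    and whose predicted class is labels[j].
    """
    metrics = {}
    for k, label in enumerate(labels):
        true_positive = confusion[k][k]
        predicted_as_k = sum(row[k] for row in confusion)  # column total
        actually_k = sum(confusion[k])                      # row total
        precision = true_positive / predicted_as_k if predicted_as_k else 0.0
        recall = true_positive / actually_k if actually_k else 0.0
        denom = precision + recall
        f1 = 2 * precision * recall / denom if denom else 0.0
        metrics[label] = {"precision": precision, "recall": recall, "f1": f1}
    return metrics


def overall_accuracy(confusion):
    """Share of all images that lie on the diagonal."""
    total = sum(sum(row) for row in confusion)
    correct = sum(confusion[k][k] for k in range(len(confusion)))
    return correct / total


# Hypothetical counts for illustration only (NOT the study's confusion matrix).
LABELS = ["silicone", "T-shaped silicone", "metal-covered"]
EXAMPLE = [
    [60, 0, 1],
    [1, 14, 0],
    [0, 0, 55],
]
```

Calling `per_class_metrics(EXAMPLE, LABELS)` on these hypothetical counts gives a recall of 14/15 for the T-shaped class with a precision of 1.0, which shows how a class can have perfect precision while still missing one of its own images.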

Accuracies for recognizing the three main stent types (T-shaped silicone, silicone, and metal-covered) by AI. Confusion matrix: the horizontal row represents the true classification labels, and the vertical column denotes the classification labels predicted by the AI model.

The area under the ROC curve (AUC) for all three stent types was >0.99: T-shaped silicone stents, all silicone stents, and all metal-covered stents each had an AUC of 1.00 (Figure 6).
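For a multi-class task, a per-class AUC is commonly obtained one-versus-rest: the predicted probability for one class is used as the score, images of that class are the positives, and all other images are the negatives. The sketch below computes AUC through its rank interpretation (the probability that a randomly chosen positive scores higher than a randomly chosen negative). The function name `roc_auc` is an assumption for the example, and whether the study computed AUC in exactly this way is not stated.

```python
def roc_auc(scores, is_positive):
    """AUC as the fraction of positive/negative pairs ranked correctly.

    Ties between a positive and a negative score count as half a win.
    """
    positives = [s for s, p in zip(scores, is_positive) if p]
    negatives = [s for s, p in zip(scores, is_positive) if not p]
    if not positives or not negatives:
        raise ValueError("AUC needs at least one positive and one negative example")
    wins = sum((p > n) + 0.5 * (p == n) for p in positives for n in negatives)
    return wins / (len(positives) * len(negatives))
```

An AUC of 1.00 means every image of the class received a higher score than every image of the other classes; it does not by itself imply 100% accuracy at a fixed decision threshold.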

Receiver operating characteristic (ROC) curves for recognizing the three main stents by AI.

Further recognition of Y-shaped stents

Furthermore, 592 images of the stent interior were utilized for training and validation. These images were categorized further into four groups: Y-shaped silicone; non-Y-shaped silicone; Y-shaped metal-covered; and non-Y-shaped metal-covered. The accuracy for identifying Y-shaped silicone stents (as opposed to non-Y-shaped silicone stents) was 95.5%, with an AUC of 0.97. For identifying a Y-shaped silicone stent, the precision, recall, and F1-score were 1.00, 0.95, and 0.98, respectively. For identifying a non-Y-shaped silicone stent, the precision, recall, and F1-score were 0.98, 1.00, and 0.99, respectively. Similarly, the accuracy for identifying Y-shaped metal-covered stents (as opposed to non-Y-shaped metal-covered stents) was 100%, with an AUC of 0.98. For identifying a Y-shaped metal-covered stent, the precision, recall, and F1-score were 0.79, 1.00, and 0.88, respectively. For identifying a non-Y-shaped metal-covered stent, the precision, recall, and F1-score were 1.00, 0.93, and 0.97, respectively (Figures 7 to 10).

Accuracies for recognizing two different types of silicone stents (Y-shaped or non-Y-shaped) by AI. Confusion matrix: the horizontal row represents the true classification labels, and the vertical column denotes the classification labels predicted by the AI model.

ROC curves for recognizing two different types of silicone stents (Y-shaped or non-Y-shaped) by AI.

Accuracies for recognizing two types of metal-covered stents (Y-shaped or non-Y-shaped) by AI. Confusion matrix: the horizontal row represents the true classification labels, and the vertical column denotes the classification labels predicted by the AI model.

ROC curves for recognizing two different types of metal-covered stents (Y-shaped or non-Y-shaped) by AI.

Discussion

We utilized deep-learning algorithms to analyze material characteristics from bronchoscopy images of the upper ends of stents. We demonstrated that the overall accuracy for identifying the three primary types of stent (T-shaped silicone, all silicone, and all metal-covered) exceeded 95%. In addition, we employed AI technology to determine the presence of Y-shaped stents based on bronchoscopy images taken from within the stents, achieving accuracies >95% consistently. Following the application of these two AI processes, we were able to categorize five distinct types of stent during bronchoscopy: T-shaped silicone; Y-shaped silicone; non-Y-shaped silicone; Y-shaped metal-covered; and non-Y-shaped metal-covered.

Artificial intelligence analyses have leveraged a diverse array of data related to respiratory interventions. These include images related to bronchoscopy,13,14 computed tomography, 20 CP-EBUS,21–23 radial-EBUS, 24 cytology,25,26 optical coherence tomography, 27 and pulmonary function. 28 Ongoing research and development in AI algorithms are expected to further refine and expand AI capabilities in the realm of respiratory interventions.13,14 We demonstrated that AI technology could be used to distinguish between different types of stent using bronchoscopy images. The model we developed could assist in quality control (QC) in the future.

Following the application of supervised machine learning, the training model demonstrated the capability to accurately identify different types of stent under bronchoscopy. Numerous technologies have emerged in the context of advancements in respiratory interventions using bronchoscopy facilitated by AI. Our AI-related studies indicated that AI methods could autonomously identify airway anatomical positions 13 and tracheobronchopathia osteochondroplastica 14 under bronchoscopy.

Artificial intelligence has reached a high level of maturity for QC in gastroscopy. The associated technologies can be categorized into location identification, lesion recognition, and time management. 29 The present study represents a foundational effort in applying AI for QC in bronchoscopy. High-performance computing could be leveraged for real-time QC in the future. By identifying normal anatomical structures in conjunction with our existing research, we can infer abnormal anatomical areas (e.g. airway stenosis) in patients who require stenting. Artificial intelligence could be employed to identify subtle changes in airway anatomy that may indicate the need for stenting. This capability could lead to earlier interventions, potentially improving patient outcomes. Furthermore, postoperative monitoring is crucial for assessing the efficacy of airway stenting. Through deep learning of various images of airway stents, AI systems could detect signs of complications, such as stent migration or obstruction. Early detection would allow for timely interventions, minimizing risks and enhancing patient safety.

Artificial intelligence can automate various aspects of stenting, including the analysis and documentation of images. This automation could reduce the workload on healthcare professionals, allowing them to focus on patient care rather than administrative tasks. Consequently, healthcare systems could operate more efficiently, improving overall patient throughput. Artificial intelligence recognition systems can also serve as valuable educational tools for healthcare providers. By providing real-time feedback and analyses, AI recognition systems can enhance the training of clinicians in airway management and stenting methods. Improved training can lead to better decision-making and procedural skills in real-world scenarios. When undertaking bronchoscopy, a trained AI model could identify positions in the airway. However, most AI models are trained on images of normal airway anatomy. Our data could help extend position identification to patients who have undergone stent treatment.

Our model required preprocessing of the input image data during training and testing. Such data preprocessing is based on collected data and has been evaluated through practical operations to reduce common types of noise and reduce the interference of artifacts on the prediction results of the model. Therefore, the model can maintain a certain degree of stability in classifying images with noise and artifacts.

Our study had six main limitations. First, we categorized the bronchoscopic images into five types of stent; some categories, such as non-Y-shaped silicone stents, encompassed several subtypes that were analyzed collectively as a single group. Second, our study is a follow-up investigation, utilizing bronchoscopic images to differentiate airway positions, rare airway diseases, and complex airways with stents. Third, the T-shaped silicone stents used in our study were made from opaque white silicone because transparent T-shaped silicone stents have not been produced for several years. Fourth, we excluded bare-metal stents from our study because of the potential for tumor ingrowth and because bare-metal stents are utilized infrequently in our center. Fifth, we did not undertake a sample size calculation. Sixth, because this was a single-center retrospective dataset, the generalizability of our results is limited.

Conclusions

We demonstrated that AI technology can be used to distinguish different types of stent in bronchoscopy images. The AI model we trained may help QC in the future.

Footnotes

Acknowledgements

The authors acknowledge all authors who contributed to this work.

Ethics approval and consent to participate

This retrospective study's protocol was approved by the Ethics Committee of the First Affiliated Hospital of Guangzhou Medical University (approval number: ES-2023-028-01).

Consent for publication

The requirement for informed consent for inclusion in and/or collection/use of data was waived by the ethics committee. This waiver was granted due to the use of de-identified data, in accordance with the guidelines of the Council for International Organizations of Medical Sciences (CIOMS).

Author contributions

Chongxiang Chen contributed conceptualization; data curation; investigation; methodology; and writing – original draft. Yingnan Zuo contributed formal analysis; investigation; and methodology. Jingyu Liu contributed supervision; validation; and formal analysis. Mingyue Min contributed supervision; validation; and formal analysis. Jiangtao Ren contributed supervision; validation; and formal analysis. Huiping Qiu contributed supervision; validation; and formal analysis. Wenhua Jian contributed supervision; validation; and writing – original draft. Ping Peng contributed supervision; validation; and writing – original draft. Changhao Zhong contributed supervision; validation; and writing – original draft. Shiyue Li contributed conceptualization; supervision; validation; and writing – review & editing.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by Science and Technology Projects in Guangzhou (2024A04J3536), as well as Medical Scientific Research Foundation of Guangdong Province (B2025528).

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Availability of data and materials

The datasets used and/or analyzed during the current study are available from the corresponding author upon request.