Abstract

The need to provide greater accountability to consumers of mental health services, funders and governments is a major force impacting upon service provision. The assessment of consumer outcomes has become a major strategy targeted at improving the quality and accountability of mental health services in Australia and elsewhere [1–5].

As a part of the First National Mental Health Strategy, a program to investigate appropriate methods for the routine assessment of consumer outcomes was initiated by the National Mental Health Information Strategy Committee of the Australian Health Ministers' Advisory Council (AHMAC).

In their report on Stage 1, Andrews et al. [6] identified six potentially useful measures of consumer outcomes: the Behaviour and Symptom Identification Scale (BASIS-32) [7–9], the Mental Health Inventory (MHI) [10,11], the Medical Outcomes Study 36-Item Short-Form Survey (SF-36) [12–14], the Health of the Nation Outcome Scales (HoNOS) [15], the Life Skills Profile (LSP) [16–18], and the Role Functioning Scale (RFS) [19–21].

Andrews et al. recommended further study of these instruments and suggested the following dimensions for assessing the suitability of an instrument as a measure of consumer outcomes [6, pp. 29–33]. The measure must be: (i) applicable, in that it should address dimensions that are important to consumers and service providers and should provide information that facilitates the management of services; (ii) acceptable, in that it should be brief, and its purpose, wording and interpretation should be clear; (iii) practical, where practicality relates to the burden imposed on consumers and service providers in terms of time, costs, training and the level of skill required in scoring and interpreting the data; (iv) valid; (v) reliable; and (vi) sensitive to change.

Traditionally, emphasis has been given to demonstrating that a particular instrument is suitable for a given purpose by investigating the psychometric properties of the measures such as reliability, validity and sensitivity to change. Additional weight is given if these data are available for the groups or questions under study. Pragmatic considerations such as time, length, cost and training are often included as secondary considerations. Where more than one instrument is thought to be suitable, as in Stage 1 of the project, it is necessary to consider issues such as the acceptability of the various measures.

This study investigated some aspects of the applicability and acceptability of the six selected measures of consumer outcome by analysing both quantitative and qualitative feedback received from participants after initial use of the instruments and in focus group discussions. As it is not possible to recommend an instrument without clearly understanding its purpose and the context in which it is to be used, comments from the focus groups that qualify judgements about the utility of the questions are also reported.

Method

Sample

Service providers

Sixty-five providers were recruited from three clinical settings: general practice (n = 20), private psychiatry (n = 16) and public psychiatry (n = 29).

Each service provider nominated a number of consumers who met ICD-10 primary care criteria for one of three diagnostic groupings: anxiety disorders, affective disorders or schizophrenia.

Consumers

Of the 183 consumers nominated by the service providers, 173 provided valid responses on diagnosis and to the six measures. The frequencies of consumers' practice settings and diagnostic groupings are shown in Table 1.

Table 1. Frequencies of consumers' practice settings and diagnostic groupings

Procedure

Consumers were each randomly assigned two of the three selected self-report scales (BASIS-32, MHI or SF-36), with the order of presentation of the two scales also randomised. The same process was applied independently to the service providers' scales (HoNOS, LSP and RFS) for each nominated consumer. This design was chosen to limit the demands made on respondents' time, and so to preserve their willingness to cooperate in the study, while permitting detection of, and adjustment for, effects due to order of presentation. The measures were completed at the respondent's home, at the service setting or at another location nominated by the respondent. The achieved frequencies of presentation of the measures across the entire sample are shown separately for the service providers and consumers in Table 2. The randomisation process was not constrained to produce equal numbers of all possible sequences of measures across the entire study.
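The assignment scheme described above can be illustrated with a minimal Python sketch. It is not the procedure actually used in the study; the function names and the seed are hypothetical, and the only details taken from the text are that two of three scales are drawn at random, that their order is also random, and that the provider draw is independent of the consumer draw.

```python
import random
from itertools import permutations

CONSUMER_SCALES = ("BASIS-32", "MHI", "SF-36")
PROVIDER_SCALES = ("HoNOS", "LSP", "RFS")


def assign_sequence(scales, rng):
    """Pick two of the three scales; the tuple order is the order of presentation."""
    # random.sample draws without replacement and returns the items in
    # random order, so one call randomises both selection and order.
    return tuple(rng.sample(scales, 2))


def possible_sequences(scales):
    """All ordered pairs of distinct scales (3 x 2 = 6 for three scales)."""
    return list(permutations(scales, 2))


rng = random.Random(2024)  # hypothetical seed, for reproducibility only
consumer_sequence = assign_sequence(CONSUMER_SCALES, rng)
provider_sequence = assign_sequence(PROVIDER_SCALES, rng)  # independent draw
```

Because each draw is unconstrained, the six possible sequences need not occur equally often in a finite sample, which is consistent with the unequal achieved frequencies noted in the text.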

Table 2. Achieved frequencies of presentation of measures

Following the completion of a measure, consumers and service providers were asked to complete a questionnaire which assessed their opinions of the utility of the measure. This Utility Questionnaire allowed for a rating of the perceived relevance, effectiveness and usefulness of the measure. A General Utility (GU) score was derived by summing the relevant items from this questionnaire [22, pp. 214–215, 218–219]. Following completion of both measures, participants were asked to nominate which of the two measures they preferred. A comments section for further qualitative feedback was also included.
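A summed score of this kind can be sketched as follows. The item names are hypothetical placeholders (the actual items contributing to the GU score are listed in Stedman et al. [22]), and returning no score for an incomplete questionnaire is an assumption of this sketch, not a rule stated in the source.

```python
# Hypothetical item names; the real Utility Questionnaire items are
# documented in Stedman et al. [22] and are not reproduced here.
GU_ITEMS = ("relevance", "effectiveness", "usefulness")


def general_utility(responses, items=GU_ITEMS):
    """Sum the ratings for the items that contribute to the GU score.

    Returns None when any contributing item is missing (an assumption of
    this sketch), so an incomplete questionnaire is not scored as complete.
    """
    values = [responses.get(item) for item in items]
    if any(value is None for value in values):
        return None
    return sum(values)


# Usage with made-up ratings, for illustration only.
example_score = general_utility(
    {"relevance": 4, "effectiveness": 3, "usefulness": 5}
)
```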

In addition to completing the Utility Questionnaires, all participants were invited to take part in focus groups to discuss the use of standardised measures for the routine assessment of consumer outcomes. Eight discussion groups were convened: four for service providers and four for consumers. For each practice setting represented in the study, one consumer and one service provider focus group were convened. An extra focus group for service providers in the public psychiatry setting was also convened, as was a further consumer focus group for members of local consumer support groups who were not otherwise involved with the study. Twenty-six service providers participated in one of the four provider focus groups (six general practitioners, seven service providers from the private psychiatry setting and 13 service providers from the public psychiatry setting). In addition, 20 consumers participated in one of the four consumer focus groups (seven consumers from the general practice setting, five from the private psychiatry setting, eight from the public psychiatry setting and six representatives of consumer support groups).

Results

The random effects in the design (service providers within practice settings, and consumers within service providers), the sequence of presentation, the practice setting, the diagnostic groupings and possible heterogeneity of variation among settings are modelled using mixed model regression conducted by ‘proc mixed’ in SAS 7.00 (SAS/STAT, SAS, Cary, NC, USA). This procedure permits estimation in the presence of incomplete sequences, and estimation of the intraclass correlation (ICC) for both consumers and service providers, the ICC being the correlation among the measures provided by the same individual.
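To make the ICC concrete, the sketch below computes a one-way random-effects ICC from balanced data in which every individual supplies the same number of scores. This is a simplified ANOVA-based estimator chosen for illustration; it is not the mixed model fitted in the study, which also adjusts for sequence, setting and diagnosis. The function name is hypothetical.

```python
def icc_oneway(ratings):
    """One-way random-effects ICC for balanced data.

    ratings: list of per-individual lists, each holding k >= 2 scores.
    """
    n = len(ratings)
    k = len(ratings[0]) if n else 0
    if n < 2 or k < 2 or any(len(r) != k for r in ratings):
        raise ValueError("need >= 2 individuals, each with the same k >= 2 scores")

    grand_mean = sum(sum(r) for r in ratings) / (n * k)
    means = [sum(r) / k for r in ratings]

    # Mean square between individuals and mean square within individuals.
    msb = k * sum((m - grand_mean) ** 2 for m in means) / (n - 1)
    msw = sum((x - m) ** 2 for r, m in zip(ratings, means) for x in r) / (n * (k - 1))

    denominator = msb + (k - 1) * msw
    if denominator == 0:
        raise ValueError("all scores are identical; the ICC is undefined")
    return (msb - msw) / denominator
```

A value near 1 means that an individual's scores for different measures are almost interchangeable, while a lower value means that the same individual rates the measures differently.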

The analyses described here relate to the participant's perception of the utility of the selected measures. Other aspects of the larger study have been reported in full elsewhere [22].

Also described here are the issues raised during the focus group discussions, which are integral to any discussion of the perceived utility of the six selected measures.

Perceived utility ratings of the measures completed by consumers

There are no significant interactions among the four effects: measure, practice setting, sequence of presentation and diagnostic grouping. The tests for main effects are presented in Panel A of Table 3, and the fitted means in Panel B. There is some evidence (p = 0.05) of a sequence effect, with a tendency for a higher utility score to be given to the measure presented second. Controlling for this effect, the only highly significant differences are among the three consumer measures (p < 0.001), with the contrast between the MHI and the pooled results for the BASIS-32 and SF-36 being the main source of this effect, evidencing a greater perceived utility among consumers for the MHI (F = 14.31, df = 1, 274, p < 0.001). There is no evidence of heterogeneity among settings (Panel C of Table 3), and the ICC is consistently high at approximately 0.75, perhaps reflecting an inability of consumers to distinguish differences in purpose among the three measures.

Table 3. Consumers' utility scores

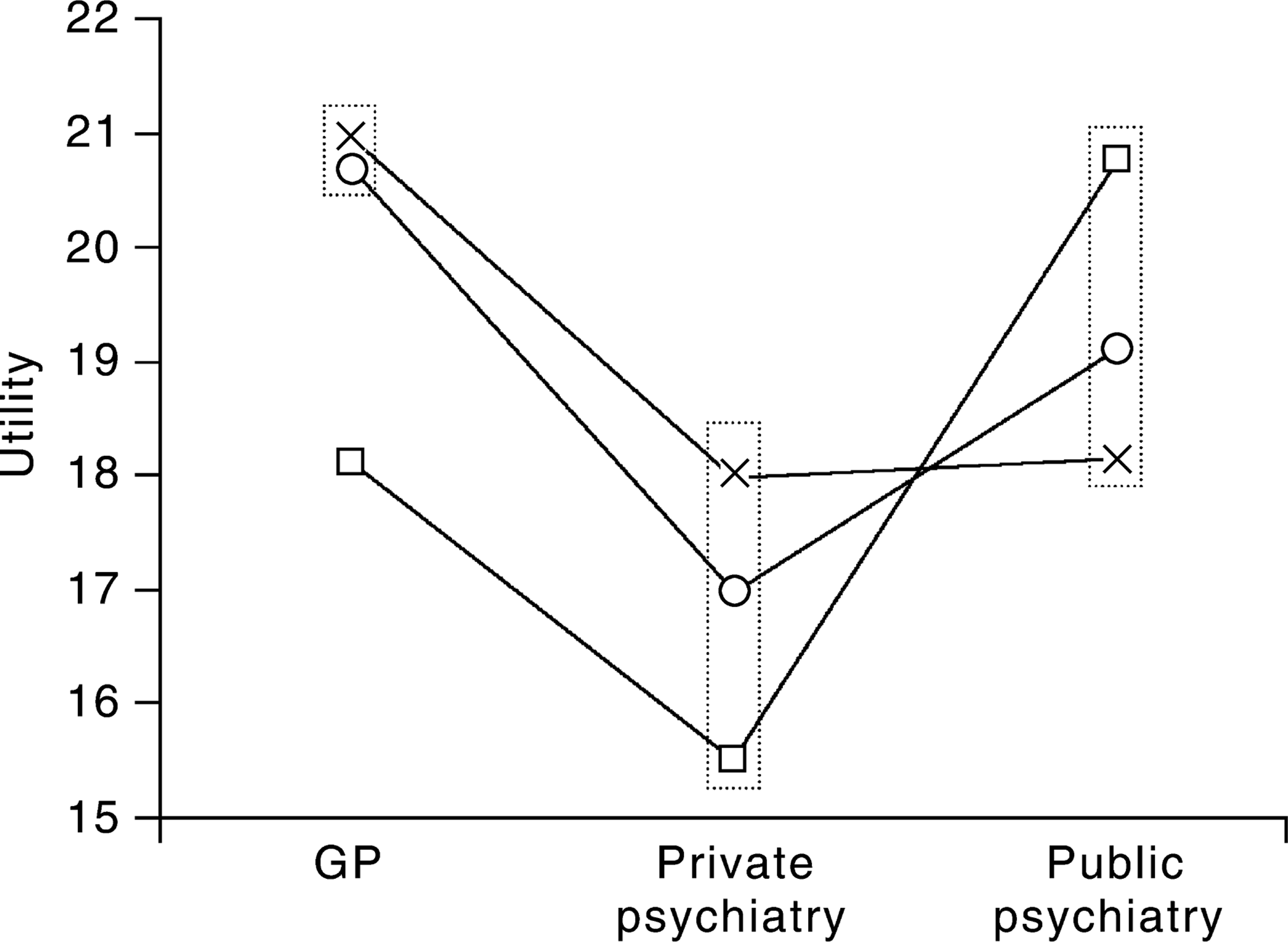

Figure 1 shows fitted means of utility questionnaire results, by practice setting and diagnostic grouping. An overview of the qualitative responses made by consumers is detailed in Stedman et al. [22, p. 119].

Figure 1. Fitted means, by practice setting and diagnostic grouping. O, affective; □, anxiety; x, schizophrenia.

Perceived utility ratings of the measures completed by service providers

The pattern of utility ratings made by service providers is markedly different from that made by consumers. There are significant interactions among the effects predicting utility scores. Panel A of Table 4 shows the significance of main effects (setting and diagnosis reaching strong levels of significance) and two-factor interactions, with all four effects appearing in at least one interaction. This indicates that an analysis of main effects alone would be misleading, as it would not take into account the interactions between effects. Panel B of Table 4 shows the two-way, and marginal, fitted means. The intra-service-provider correlations of around 0.45 (see Panel C, Table 4) suggest a consistency of response, and a professional ability to distinguish among the aims of the three provider instruments.

Table 4. Service providers' utility scores

Setting × measure

Although there is no significant main effect of service provider measure (p = 0.76), service providers in different settings have different perceptions of the utility of the measures. General practitioners hold them all in similar, relatively high regard (F = 0.97, df = 2, 255, p = 0.38) (see Table 4). Private psychiatrists hold them in much lower regard, but again rate the three measures at a similar level (F = 2.75, df = 2, 255, p = 0.07). Providers in the public setting show significant variation in their perceptions of the utility of the instruments (F = 15.6, df = 2, 255, p < 0.001) and, in contrast to their peers, perceive the LSP as having most utility.

Sequence × measure

Although there are no significant main effects of service provider measure or sequence of presentation, there are clear differences in perceived utility for some provider measures according to the sequence in which they were presented (see Table 4). The HoNOS changes from greatest perceived utility when presented first to least utility when presented second, while the LSP has greatest perceived utility when presented second.

Setting × diagnosis

The significant main effects of practice setting and diagnostic grouping must be interpreted with care, as the interaction between them is also significant and evidences differential outcomes among the three practice settings. Within both public and private psychiatry, mean utility scores do not vary among diagnoses. For general practitioners, however, significantly lower utility is attached to the measures for consumers with anxiety disorders than for consumers with either schizophrenia or affective disorders (F = 22.74, df = 1, 255, p < 0.001).

While general practitioners perceived the measures as having most utility for affective disorders and schizophrenia, providers in the public setting perceived the measures as having least utility for a diagnosis of schizophrenia and the most utility for anxiety disorders (see Table 4 and Fig. 1).

An overview of the qualitative responses made by service providers is detailed in Stedman et al. [22, p. 123].

Process issues raised in focus groups which impact on the perceived utility of the six selected measures

Both consumer and service provider groups suggested that they would need the following information to make a judgement about the utility of the given measures. Also, several participants indicated they would be reluctant to be involved if they were not satisfied with the process.

Consumer completed measures

Groups indicated a desire to know: (i) whether the measure would be completed independently or with a trained interviewer present, and whether assistance to complete a measure would be required or available; (ii) the impact that illness may have on a consumer's ability to complete a measure; and (iii) the likelihood that the process of completing a measure may cause distress to consumers.

Service provider-rated measures

Groups indicated a desire to know: (i) training requirements for the administration, analysis and interpretation of the information obtained from these measures; (ii) the extent to which the information from these measures can be used to help with clinical interventions including communication and monitoring progress; and (iii) how the information collected using these measures was to be controlled and used and how consumer confidentiality was to be ensured.

Outcome measurement issues

In addition, both consumers and service providers expressed concerns about: (i) the burden which may be placed on the clinical process through the routine introduction and use of these measures in the clinical setting; (ii) resource and service implications such as cost, time, budgets and space availability for storage of data; and (iii) the attribution of change: consumers expressed misgivings about change being automatically attributed to service interventions, and providers were concerned that they may be held responsible for changes outside of their control.

Discussion

While the majority of consumers responded that most or all of the questions contained in each of the three selected consumer measures were important and relevant to them, a modest preference was observed for the MHI in this study, which also attracted higher utility scores than either the BASIS-32 or the SF-36. While this finding should be interpreted with caution, comments elicited by the use of the MHI suggested that consumers considered that their emotional, affective and wellbeing concerns often could not be expressed in clinical settings. The MHI provides an opportunity to express these concerns, while the BASIS-32 and the SF-36 focus on the more typical concerns of clinicians.

In general, consumers reported minimal difficulty in understanding the wording of the measures, and indicated that they considered each of the measures was either reasonably or very useful as an outcomes measure.

In relation to the clinician-rated measures trialled in this study and their utility, service providers indicated that some or most of the questions on each of the selected measures were relevant to assessing outcomes for their specific consumer(s), and for people with mental illness in general. Service providers also indicated that the three measures were slightly or reasonably effective as measures of treatment outcomes.

Service providers from the three practice settings had significantly different perceptions of the utility of the three observer-rated measures trialled in this study. One explanation for this finding may be the perceptions held by service providers in the private psychiatry setting of greater potential for misuse of routine measures of consumer outcomes. The favouring of the LSP in the public psychiatry setting may have been partly due to greater familiarity of the providers in this setting with this instrument, as they are already using it as an assessment tool on a routine basis.

It is also interesting to note that service providers rated the perceived utility of the three measures differently depending upon the sequence in which they were presented. When the HoNOS appeared as the first of the two measures to be completed, providers rated it as having the highest utility. However, when the HoNOS appeared second in the sequence of the two measures to be completed, providers rated it as having the lesser utility. While no significant main effects of either sequence of presentation or measure were observed, it is interesting to note the significant interaction of these two factors, and that the perceived utility of a measure was observed to change simply on the basis of the sequence in which it was presented.

Significant main effects were observed for practice setting and diagnosis, and a significant interaction between these two factors was also evidenced. It is interesting to note that within each of the three practice settings, utility ratings were highest for the diagnostic group which appeared to be serviced least (see Table 1), that is, for schizophrenia in the general practice and private psychiatry settings, and for anxiety disorders in the public psychiatry setting.

The perceived utility of a measure is likely to influence its acceptability if it is selected to be used routinely by a service, and these results provide information that will assist in the choice, training for use and implementation of the measures.

Conclusion

The routine use of a system of outcome measurement has considerable potential to improve mental health services. However, achieving this potential will require considerable understanding and support from all participants in the process.

This study provides information about the initial responses to use of a selected range of outcome measures in a variety of settings. While a consumer's or clinician's positive view of an instrument will not ensure that information generated will be useful, it may provide a guide to acceptance of the measure as well as issues to be addressed in training and implementation.

All of the service provider measures trialled (HoNOS, RFS and LSP) elicited a generally positive response. None was considered by service providers in this study to be clearly more useful.

The consumer-rated measures (BASIS-32, SF-36 and MHI) elicited mostly positive responses, with a possible preference for the MHI.

Both consumers and service providers highlighted several issues, apart from utility, which are integral to any discussion of the routine assessment of patient outcomes.

The responses highlight that there is more to the implementation of measures of outcomes than choosing and administering questionnaires. There should be a shift towards discussion of outcome information needs as part of local and regional services; and of how data collected at the grassroots level can be meaningfully translated into information that is relevant to consumers, service providers, services and higher levels of management in mental health services.