Abstract

This paper presents the novel method of mobile robot simultaneous localization and mapping (SLAM), which is implemented by using the Rao-Blackwellised particle filter (RBPF) for monocular vision-based autonomous robot in unknown indoor environment. The particle filter is combined with unscented Kalman filter (UKF) to extending the path posterior by sampling new poses that integrate the current observation. The landmark position estimation and update is implemented through the unscented transform (UT). Furthermore, the number of resampling steps is determined adaptively, which seriously reduces the particle depletion problem. Monocular CCD camera mounted on the robot tracks the 3D natural point landmarks, which are structured with matching image feature pairs extracted through Scale Invariant Feature Transform (SIFT). The matching for multi-dimension SIFT features which are highly distinctive due to a special descriptor is implemented with a KD-Tree in the time cost of O(log2N). Experiments on the robot Pioneer3 in our real indoor environment show that our method is of high precision and stability.

Keywords

Introduction

A key prerequisite for a truly autonomous robot is that it can simultaneously localize itself and accurately map its surroundings (Kortenkamp et al, 1998), which is known as Simultaneous Localization and Mapping (SLAM). Particle filters provide an attractive approach for updating distributions of data (Doucet, 1998). Early successes of particle filters can be found in the area of robot localization (Dellaert et al, 1999). Recently, particle filters have been at the core of solutions to higher dimensional robot problems such as SLAM, which, when phrased as a state estimation problem, involves a variable number of dimensions. Murphy adopted Rao-Blackwellized particle filters (RBPF) (Murphy, 2001) as an effective way of representing alternative hypotheses on robot paths and associated maps. Montemerlo et al. (Montemerlo & Thrun, 2003) extended this method to efficient landmark-based SLAM using Gaussian representations of the landmarks and were the first to successfully implement it on real robots.

The difficulty of the SLAM depends on the robot's environment, its sensors, and the representation of map. The environment could be relatively benign indoors with flat floors. But it could also be quite subversive such as aircraft and submarines. The most common sensors in use are sonar sensors, laser range finders and video cameras.

Sonar readings are susceptible to high degrees of uncertainty especially due to angular and radial errors. Lasers are accurate while they are heavy, expensive. Sonar and lasers are primarily used for 2D map. On the other hand, cameras are light, cheap, and can provide abundant environmental information, but are difficult to work with. Popular choices for the map representation include grid-based (Schultz & Adams, 1998), topological (Choset & Nagatani, 2001) and feature based models (Chong & Kleeman, 1999). Grid-based models are easy to build and maintain while implies high data requirements and induces high computational costs. Topological maps usually have the advantage of being compact, and more tolerant to errors in the robot location. Feature based representations have been difficult to build while being significantly less complex.

We primarily focus on investigating real-time, monocular vision based SLAM for indoor environments, and constructing 3D feature map from video data. Scale invariant features are extracted through Scale Invariant Feature Transform (SIFT) (Lowe, 2004), which are used to structure 3D landmarks because they are invariant to image scale, rotation and translation as well as partially invariant to illumination changes. We presents a fast and efficient algorithm for matching features in a KD-Tree in the time cost of

Background

Consider the case of a mobile robot moving through an unknown environment consisting of a set of landmarks θ. The robot moves according to a known motion model

This can be done efficiently, since the factorization decouples the SLAM problem into a path estimation problem and individual conditional landmark location problems, and the quantity

A successful instance of the RBPF SLAM is FastSLAM, which offers many improvements over the traditional EKF-based SLAM framework: it has excellent time complexity; it does not need to linearize the robot's motion model; especially it can maintain several data association hypotheses. However, FastSLAM also has drawbacks: each particle has a different view of the map, integrating these views to obtain a single map is nontrivial, and more importantly, data association must be performed for each particle independently, which introduces a significant computational burden; FastSLAM is prone to diverge in regions where its measurements are not very informative, either due to high noise or the sparseness of landmarks.

RBPF calculates the posterior over robot paths

Moving the samples in the prior to regions of high likelihood is important if the likelihood lies in one of the tails of the prior.

Here we need to calculate the posterior over robot paths

In our methods, the

EKF approximates the distribution through the first-order Taylor-series expansion of the nonlinear observation function

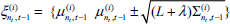

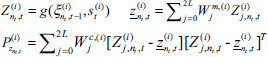

The first-order mean and covariance used in the EKF is given by Deterministically generate 2 Propagate the sigma points through the nonlinear transformation:

Compute the mean and covariance as follows:

Now we follow UKF algorithm to extend the path Calculate the sigma points according to Eq. (4):

Using motion model to predict:

Incorporating new observation Sampling new pose

In this step, we update the posterior over the landmark estimates represented by the mean

The probability Calculate the sigma points:

Using observation model to compute the mean and covariance of the observation as follows:

Under this approximation, the posterior for the location of landmark

Next, we resample from temporary particles set

Target distribution

To calculate

After the resampling, all particle weights are then reset to

SIFT Feature Extraction

The Scale Invariant Feature Transform (SIFT) was proposed in (Lowe, 2004) as a method of extracting and describing key-points, which are robustly invariant to common image transforms. The SIFT algorithm has four major stages: 1) Scale-space extrema detection. The first stage finds scale-space extrema located in Difference of Gaussians (DOG) function

Maxima and minima are detected by comparing a pixel (marked with X) to its 26 neighbors in 3 × 3 regions at the current and adjacent scales (marked with circles).

A key-point descriptor.

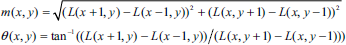

Typical extracted SIFT features with their locations represented by ‘+’. The radius of the circle represents their scales: the 320×240 pixel test image taken at (a) 1618mm; (b) 756mm; and the result is (a) 278 key-points; (b) 267 key-points.

4) Key-point descriptor. Typical key-point descriptors use 16 orientation histograms aligned in a 4 × 4 grid. Each histogram has 8 orientation bins each created over a support window of 4 × 4 pixels (Fig. 3). The resulting feature vectors are 128 elements with a total support window of 16 × 16 scaled pixels. For a more detailed discussion see (Lowe, 2004). The number of features generated is dependent on image size and content, as well as algorithm parameters. In this paper, we use the vectors with 128 elements as key-point descriptor. Fig. 4 shows an example of SIFT feature extraction for a cluttered and occluded image of size 320×240 pixels.

This section describes KD-tree algorithm for determining the matching SIFT features pairs of successive images captured at relatively close positions along the robot's path by a monocular vision system mounted on the robot. Every time the CCD camera vision system is triggered, it captures the consecutive digital images of pixels and after SIFT feature extracting, generates SIFT feature match pairs in adjacent images through KD-tree based feature matching algorithm. The match pairs are used for the landmarks' 3D structure. Given a SIFT key-points set

After constructing the KD-tree, the nearest neighbor search algorithm which is depth first is used to search the child node which contains the target. The space occupied by set

The object intersect only if the distance between

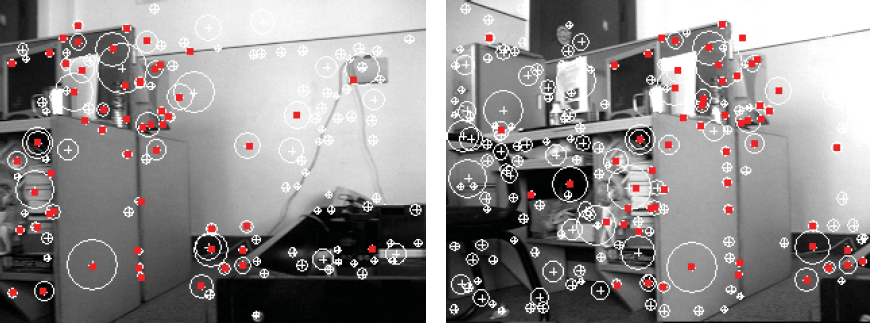

The SIFT feature matches based on KD-tree, and the matching pairs are represented by red “.”.

The key-point descriptor histograms of the matching key-point at different scale and direction.

We implement the SIFT key-points matching algorithm based on nearest neighbor algorithm in a KD-tree, and the distance of the key-points is represented using the Euclidean distance between their according 128 dimensional descriptor vector. The basic process for matching is as follows: A KD-tree is constructed using all key-points of the image

Two viewpoints geometry and the epipolar constraint.

After the SIFT feature matching, we obtain the 2D SIFT image feature matching pairs used to structure the 3D spatial landmarks, which are in a single world model. As seen from Fig. 7, According to the epipolar constraint, all the entities

The solution of three unknown variants

The experiments are performed on a Pioneer 3-DX mobile robot incorporating an 800 MHz Intel Pentium processor as shown in Fig. 8(a). Motor control is performed on the on-board computer, while a 2.6GHz PC connected to the robot by a wireless link provides the main processing power for vision processing and the SLAM software. A monocular color CCD camera mounted at the front of the robot. The test environment is a robot laboratory with limited space as shown in Fig. 8(b).

(a) Pioneer 3 robot; (b) experiment enviroment.

Frames of an image sequence with SIFT features marked: (a) 2th frame; (b) 9th frame; (c) 19th frame; (d) 70th frame; (e) 79th frame; (f) 100th frame; (g) 150th frame; (h) 163th frame; (i) 172th frame.

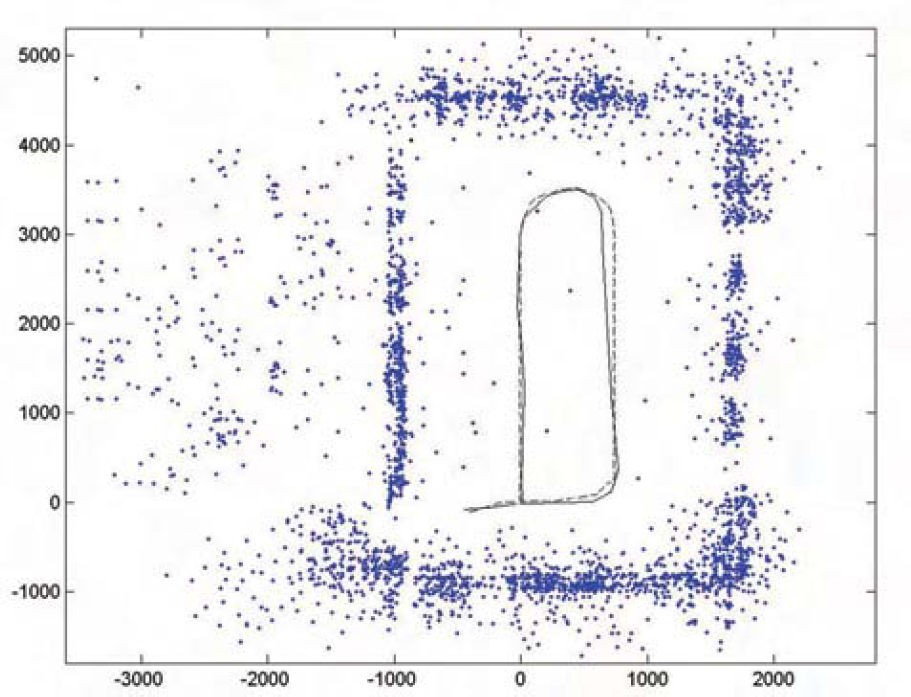

Bird's-eye view of the SIFT landmarks in the map. the dashed line indicates the estimated robot path and the solid line indicates the real robot path.

The 3D SIFT landmark database map viewed from different angles. Each landmark has appeared consistently in every view: (a) from top; (b) from left; (c) from right.

The images are captured and processed, the map is kept and updated on the fly while the robot is moving around. The robot goes around in the laboratory for one loop and to come back. Fig. 9 shows some frames of the 320 × 240 image sequence (189 frames in total) captured while the robot is moving around. A total of 4068 SIFT landmarks with 3D positions are gathered in the map. The runtime of our RBPF SLAM algorithm with different numbers landmarks is shown in Fig. 10. Other performance of our SLAM algorithm with different numbers of particles and landmarks is also shown in Fig. 12. Fig. 10 shows the bird's-eye view of all these landmarks. Fig. 11 shows three views of the 3D SIFT landmark map from different angles. Finally, we compare our method with traditional EKF method, and our method shows superior performance as shown in Fig. 13.

Performance of our RBPF SLAM algorithm: (a) robot position error and (b) landmark error with different numbers of particles; (c) runtime and (d) memory requirement with different numbers of landmarks.

Comparison of our RBPF SLAM algorithm and EKF for (a) robot position error and (b) memory requirement.

Novel RBPF is presented to implement monocular vision-based mobile robot SLAM in indoor environment. The particle filter is combined with UKF to sampling new poses integrating the current observation. The landmark position estimation and update is implemented through the UT and EKF respectively. For solving the particle depletion problem, the number of resampling steps is selected adaptively. Single camera tracks the 3D natural point landmarks, which are structured with matching feature pairs extracted through SIFT. The matching for highly distinctive SIFT features descripted with multi-dimension vector is implemented with a KD-Tree in the time cost of